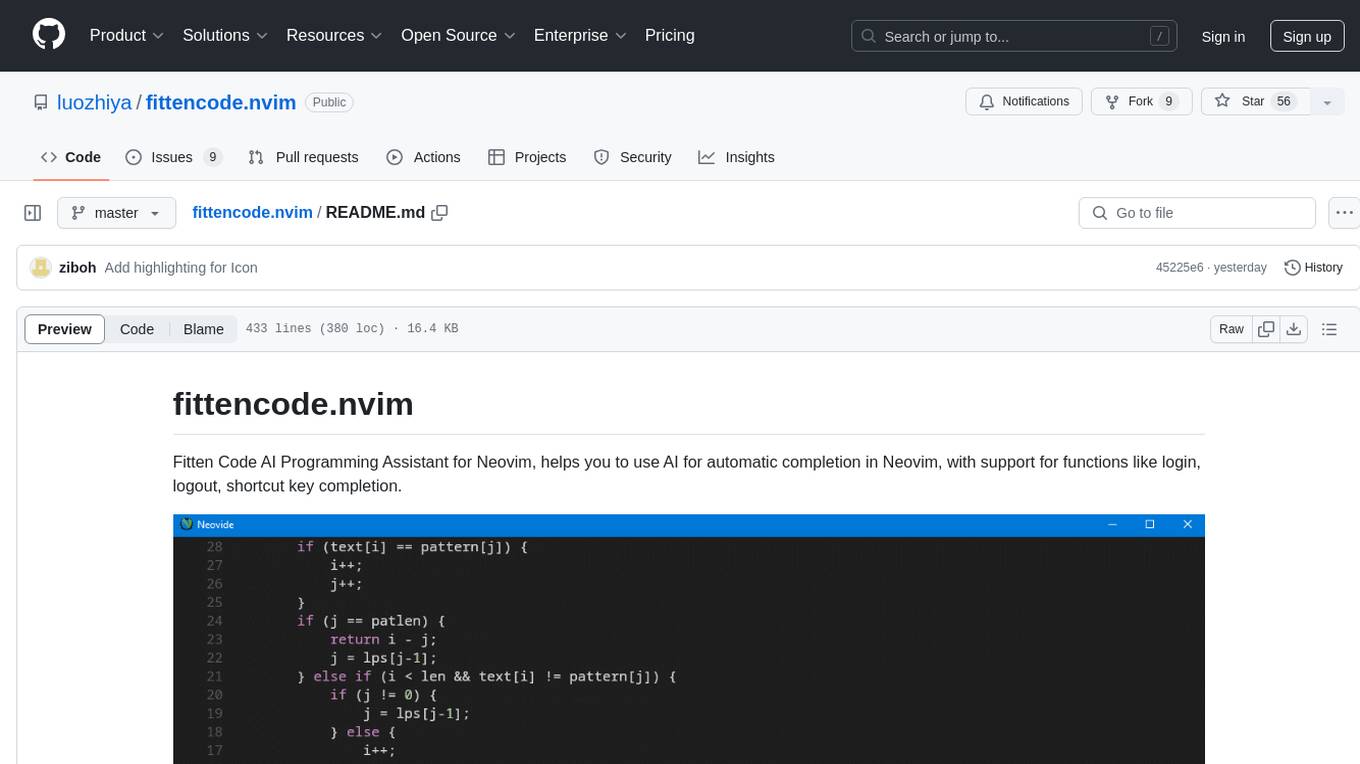

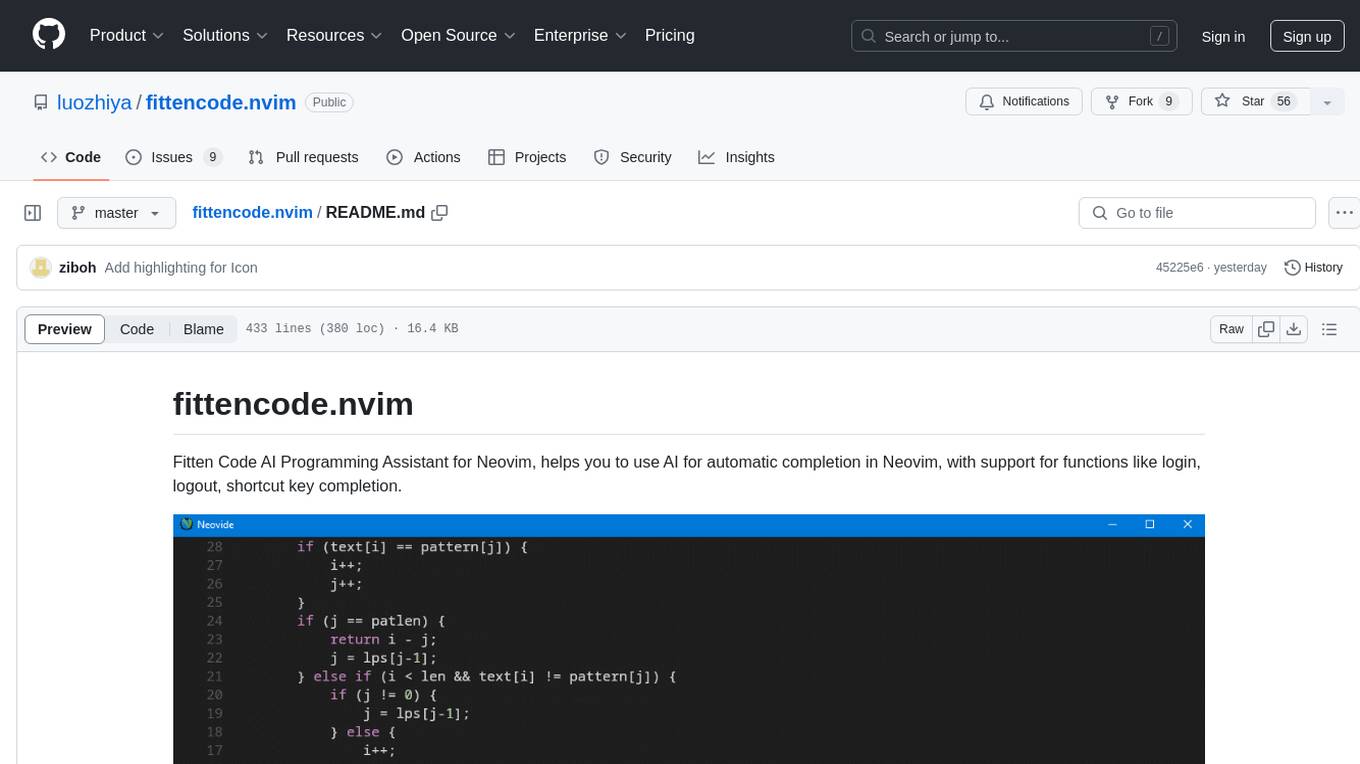

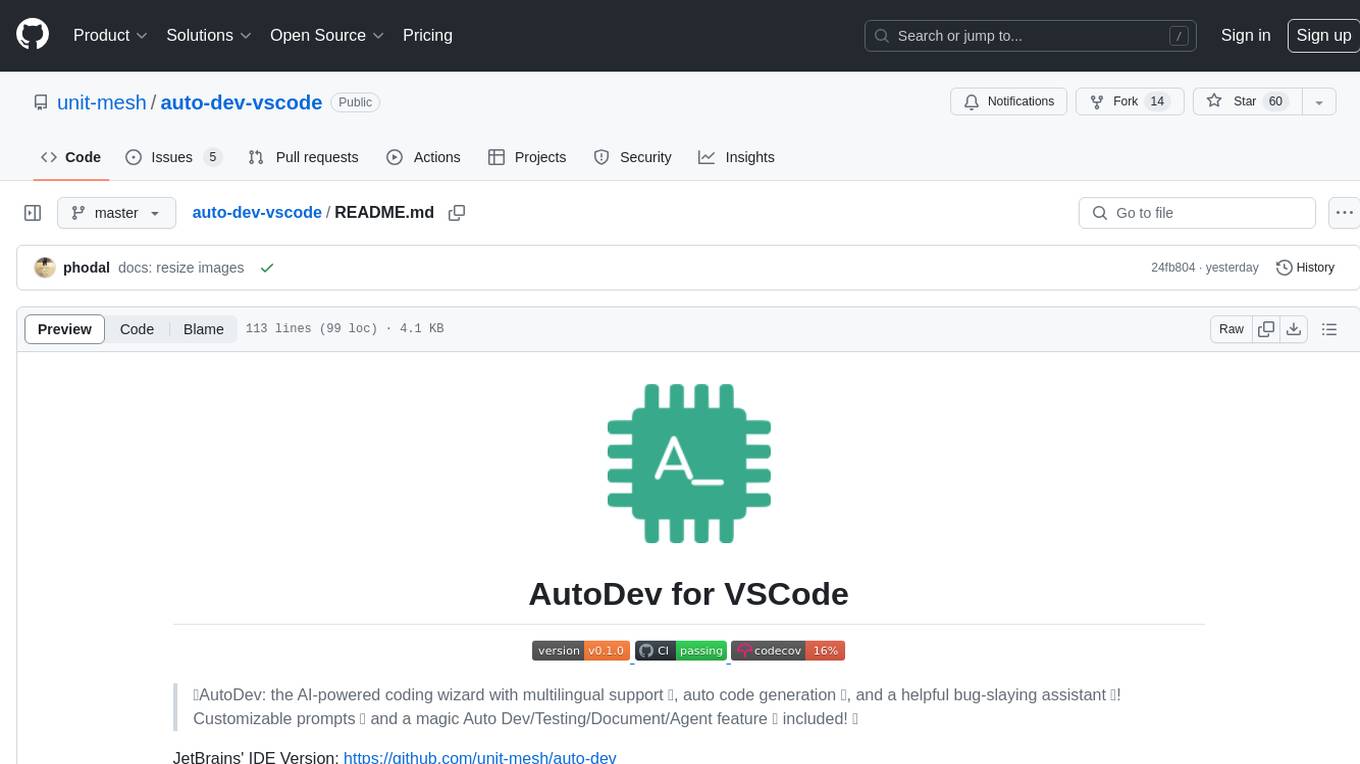

fittencode.nvim

Fitten Code AI Programming Assistant for Neovim

Stars: 63

Fitten Code AI Programming Assistant for Neovim provides fast completion using AI, asynchronous I/O, and support for various actions like document code, edit code, explain code, find bugs, generate unit test, implement features, optimize code, refactor code, start chat, and more. It offers features like accepting suggestions with Tab, accepting line with Ctrl + Down, accepting word with Ctrl + Right, undoing accepted text, automatic scrolling, and multiple HTTP/REST backends. It can run as a coc.nvim source or nvim-cmp source.

README:

Fitten Code AI Programming Assistant for Neovim helps you use AI for automatic completion in Neovim, with support for login, logout, and shortcut-key completion.

- 🚀 Fast completion thanks to Fitten Code
- 🐛 Asynchronous I/O for improved performance
- 🐣 Support for Actions
  - 1️⃣ Document code
  - 2️⃣ Edit code
  - 3️⃣ Explain code
  - 4️⃣ Find bugs
  - 5️⃣ Generate unit test
  - 6️⃣ Implement features
  - 7️⃣ Optimize code
  - 8️⃣ Refactor code
  - 9️⃣ Start chat
- ⭐️ Accept all suggestions with Tab
- 🧪 Accept line with Ctrl + 🡫
- 🔎 Accept word with Ctrl + 🡪
- ❄️ Undo accepted text
- 🧨 Automatic scrolling when previewing or completing code
- 🍭 Multiple HTTP/REST backends such as curl, libcurl (WIP)
- 🛰️ Run as a coc.nvim (WIP) source or nvim-cmp source

Requirements:

- Neovim >= 0.8.0
- curl

Install the plugin with your preferred package manager:

    -- lazy.nvim-style plugin spec
    {
      'luozhiya/fittencode.nvim',
      config = function()
        require('fittencode').setup()
      end,
    }

    -- packer.nvim-style `use` call
    use {
      'luozhiya/fittencode.nvim',
      config = function()
        require('fittencode').setup()
      end,
    }

fittencode.nvim comes with the following defaults:

    {
      action = {
        document_code = {
          -- Show "Fitten Code - Document Code" in the editor context menu, when you right-click on the code.
          show_in_editor_context_menu = true,
        },
        edit_code = {
          -- Show "Fitten Code - Edit Code" in the editor context menu, when you right-click on the code.
          show_in_editor_context_menu = true,
        },
        explain_code = {
          -- Show "Fitten Code - Explain Code" in the editor context menu, when you right-click on the code.
          show_in_editor_context_menu = true,
        },
        find_bugs = {
          -- Show "Fitten Code - Find Bugs" in the editor context menu, when you right-click on the code.
          show_in_editor_context_menu = true,
        },
        generate_unit_test = {
          -- Show "Fitten Code - Generate UnitTest" in the editor context menu, when you right-click on the code.
          show_in_editor_context_menu = true,
        },
        start_chat = {
          -- Show "Fitten Code - Start Chat" in the editor context menu, when you right-click on the code.
          show_in_editor_context_menu = true,
        },
        identify_programming_language = {
          -- Identify programming language of the current buffer
          -- * Unnamed buffer
          -- * Buffer without file extension
          -- * Buffer with no filetype detected
          identify_buffer = true,
        }
      },
      disable_specific_inline_completion = {
        -- Disable auto-completion for some specific file suffixes by entering them below
        -- For example, `suffixes = {'lua', 'cpp'}`
        suffixes = {},
      },
      inline_completion = {
        -- Enable inline code completion.
        ---@type boolean
        enable = true,
        -- Disable auto completion when the cursor is within the line.
        ---@type boolean
        disable_completion_within_the_line = false,
        -- Disable auto completion when pressing Backspace or Delete.
        ---@type boolean
        disable_completion_when_delete = false,
        -- Auto triggering completion
        ---@type boolean
        auto_triggering_completion = true,
        -- Accept Mode
        -- Available options:
        -- * `commit` (VSCode style accept, also default)
        --   - `Tab` to Accept all suggestions
        --   - `Ctrl+Right` to Accept word
        --   - `Ctrl+Down` to Accept line
        --   - Interrupt
        --     - Enter a different character than suggested
        --     - Exit insert mode
        --     - Move the cursor
        -- * `stage` (Stage style accept)
        --   - `Tab` to Accept all staged characters
        --   - `Ctrl+Right` to Stage word
        --   - `Ctrl+Left` to Revoke word
        --   - `Ctrl+Down` to Stage line
        --   - `Ctrl+Up` to Revoke line
        --   - Interrupt (same as `commit`, but with the following changes:)
        --     - Characters that have already been staged will be lost.
        accept_mode = 'commit',
      },
      delay_completion = {
        -- Delay time for inline completion (in milliseconds).
        ---@type integer
        delaytime = 0,
      },
      prompt = {
        -- Maximum number of characters to prompt for completion/chat.
        max_characters = 1000000,
      },
      chat = {
        -- Highlight the conversation in the chat window at the current cursor position.
        highlight_conversation_at_cursor = false,
        -- Style
        -- Available options:
        -- * `sidebar` (Sidebar style, also default)
        -- * `floating` (Floating style)
        style = 'sidebar',
        sidebar = {
          -- Width of the sidebar in characters.
          width = 42,
          -- Position of the sidebar.
          -- Available options:
          -- * `left`
          -- * `right`
          position = 'left',
        },
        floating = {
          -- Border style of the floating window.
          -- Same border values as `nvim_open_win`.
          border = 'rounded',
          -- Size of the floating window.
          -- <= 1: percentage of the screen size
          -- > 1: number of lines/columns
          size = { width = 0.8, height = 0.8 },
        }
      },
      -- Enable/Disable the default keymaps in inline completion.
      use_default_keymaps = true,
      -- Default keymaps
      keymaps = {
        inline = {
          ['<TAB>'] = 'accept_all_suggestions',
          ['<C-Down>'] = 'accept_line',
          ['<C-Right>'] = 'accept_word',
          ['<C-Up>'] = 'revoke_line',
          ['<C-Left>'] = 'revoke_word',
          ['<A-\\>'] = 'triggering_completion',
        },
        chat = {
          ['q'] = 'close',
          ['[c'] = 'goto_previous_conversation',
          [']c'] = 'goto_next_conversation',
          ['c'] = 'copy_conversation',
          ['C'] = 'copy_all_conversations',
          ['d'] = 'delete_conversation',
          ['D'] = 'delete_all_conversations',
        }
      },
      -- Setting for source completion.
      source_completion = {
        -- Enable source completion.
        enable = true,
        -- Trigger characters for source completion.
        -- Available options:
        -- * A list of characters like {'a', 'b', 'c', ...}
        -- * A function that returns a list of characters like `function() return {'a', 'b', 'c', ...} end`
        trigger_chars = {},
      },
      -- Set the mode of the completion.
      -- Available options:
      -- * 'inline' (VSCode style inline completion)
      -- * 'source' (integrates into other completion plugins)
      completion_mode = 'inline',
      ---@class LogOptions
      log = {
        -- Log level.
        level = vim.log.levels.WARN,
        -- Max log file size in MB, default is 10MB
        max_size = 10,
      },
    }

Set updatetime to a lower value to improve performance:

    -- Neovim default updatetime is 4000
    vim.opt.updatetime = 200

To use fittencode.nvim as an nvim-cmp source, set completion_mode to 'source' and register the source with cmp:

    require('fittencode').setup({
      completion_mode = 'source',
    })

    local cmp = require('cmp')
    cmp.setup({
      -- `sources` is a list of source tables
      sources = { { name = 'fittencode', group_index = 1 } },
      mapping = {
        -- Accept multi-line completion
        ['<c-y>'] = cmp.mapping.confirm({ behavior = cmp.ConfirmBehavior.Insert, select = false }),
      }
    })

FittenCode's cmp source now has a builtin highlight group CmpItemKindFittenCode. To add an icon to FittenCode for lspkind, simply add FittenCode to your lspkind symbol map.

    -- lspkind.lua
    local lspkind = require("lspkind")
    lspkind.init({
      symbol_map = {
        FittenCode = "",
      },
    })

    vim.api.nvim_set_hl(0, "CmpItemKindFittenCode", {fg ="#6CC644"})

Alternatively, you can add FittenCode to the lspkind symbol_map within the cmp format function.

    -- cmp.lua
    cmp.setup {
      ...
      formatting = {
        format = lspkind.cmp_format({
          mode = "symbol",
          max_width = 50,
          symbol_map = { FittenCode = "" }
        })
      }
      ...
    }

If you do not use lspkind, simply add the custom icon however you normally handle kind formatting, and it will integrate like any other LSP completion kind.
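For setups without lspkind, one possible approach is a hand-written nvim-cmp `format` function. The sketch below is only an illustration: it assumes the source is registered under the name `fittencode` (as in the cmp setup earlier), and the label text is a placeholder rather than anything the plugin defines.

```lua
-- Sketch only: label fittencode entries in the nvim-cmp menu without lspkind.
-- Merge the `formatting` table into your existing cmp.setup call rather than
-- calling cmp.setup a second time.
local cmp = require('cmp')

cmp.setup({
  formatting = {
    -- nvim-cmp calls `format(entry, vim_item)` for each completion item
    -- and displays the item that the function returns.
    format = function(entry, vim_item)
      -- Assumption: the source name matches the one used in `sources`.
      if entry.source.name == 'fittencode' then
        -- Placeholder label; swap in any icon or text you prefer.
        vim_item.kind = 'FittenCode'
      end
      return vim_item
    end,
  },
})
```

Matching on the source name keeps the customization independent of whichever kind value the source reports.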

- Optional parameters are enclosed in square brackets `[]`.
- Essential parameters are enclosed in `<>`.

| Command | Description |
|---|---|
| `Fitten register` | If you haven't registered yet, please run the command to register. |
| `Fitten login` | Try the command `Fitten login` to login. |
| `Fitten logout` | Logout account |

| Command | Description |
|---|---|
| `Fitten enable_completions [filetypes]` | Enable global/filetypes completions. |
| `Fitten disable_completions [filetypes]` | Disable global/filetypes completions. |

| Command | Description |
|---|---|
| `Fitten document_code` | Document code |
| `Fitten edit_code` | Edit code |
| `Fitten explain_code` | Explain code |
| `Fitten find_bugs` | Find bugs |
| `Fitten generate_unit_test [test_framework] [language]` | Generate unit test |
| `Fitten implement_features` | Implement features |
| `Fitten optimize_code` | Optimize code |
| `Fitten refactor_code` | Refactor code |
| `Fitten identify_programming_language` | Identify programming language |
| `Fitten analyze_data` | Analyze data |
| `Fitten translate_text` | Translate text |
| `Fitten translate_text_into_chinese` | Translate text into Chinese |
| `Fitten translate_text_into_english` | Translate text into English |
| `Fitten start_chat` | Start chat |
| `Fitten show_chat` | Show chat window |
| `Fitten toggle_chat` | Toggle chat window |

| Mappings | Action |
|---|---|
| Tab | Accept all suggestions |
| Ctrl + 🡫 | Accept line |
| Ctrl + 🡪 | Accept word |
| Ctrl + 🡩 | Revoke line |
| Ctrl + 🡨 | Revoke word |
| Alt + \ | Manually triggering completion |

| Mappings | Action |
|---|---|
| q | Close chat |
| [c | Go to previous conversation |
| ]c | Go to next conversation |
| c | Copy conversation |
| C | Copy all conversations |
| d | Delete conversation |
| D | Delete all conversations |
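In the defaults, the Revoke line and Revoke word keys are listed only under the `stage` accept mode, and the chat keys apply to whichever chat window style is configured. A minimal sketch of opting in to both non-default choices follows; it assumes that `setup()` keeps the defaults for any option left out, which the source does not state explicitly, and the size values are arbitrary examples.

```lua
-- Sketch only: switch to the `stage` accept mode and the floating chat window.
-- Assumption: options omitted here fall back to the defaults listed earlier.
require('fittencode').setup({
  inline_completion = {
    -- 'stage' enables the Stage/Revoke word and line behaviour.
    accept_mode = 'stage',
  },
  chat = {
    style = 'floating',
    floating = {
      border = 'rounded',
      -- Values <= 1 are read as a fraction of the screen size.
      size = { width = 0.6, height = 0.6 },
    },
  },
})
```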

fittencode.nvim provides a set of APIs to help you integrate it with other plugins or scripts. Access the APIs by calling `require('fittencode').<api_name>()`.

    -- Log levels
    vim.log = {
      levels = {
        TRACE = 0,
        DEBUG = 1,
        INFO = 2,
        WARN = 3,
        ERROR = 4,
        OFF = 5,
      },
    }

    ---@class ActionOptions
    ---@field prompt? string
    ---@field content? string
    ---@field language? string

    ---@class GenerateUnitTestOptions : ActionOptions
    ---@field test_framework string

    ---@class ImplementFeaturesOptions : ActionOptions
    ---@field feature_type string

    ---@class TranslateTextOptions : ActionOptions
    ---@field target_language string

    ---@class EnableCompletionsOptions
    ---@field enable? boolean
    ---@field mode? 'inline' | 'source'
    ---@field global? boolean
    ---@field suffixes? string[]

    ---@type StatusCodes
    local StatusCodes = {
      DISABLED = 1,
      IDLE = 2,
      GENERATING = 3,
      ERROR = 4,
      NO_MORE_SUGGESTIONS = 5,
      SUGGESTIONS_READY = 6,
    }

| API Prototype | Description |
|---|---|
| `login(username, password)` | Login to Fitten Code AI |
| `logout()` | Logout from Fitten Code AI |
| `register()` | Register to Fitten Code AI |
| `set_log_level(level)` | Set the log level |
| `get_current_status()` | Get the StatusCodes of the InlineEngine and ActionEngine |
| `triggering_completion()` | Manually triggering completion |
| `has_suggestions()` | Check if there are suggestions |
| `dismiss_suggestions()` | Dismiss suggestions |
| `accept_all_suggestions()` | Accept all suggestions |
| `accept_line()` | Accept line |
| `accept_word()` | Accept word |
| `accept_char()` | Accept character |
| `revoke_line()` | Revoke line |
| `revoke_word()` | Revoke word |
| `revoke_char()` | Revoke character |
| `document_code(ActionOptions)` | Document code |
| `edit_code(ActionOptions)` | Edit code |
| `explain_code(ActionOptions)` | Explain code |
| `find_bugs(ActionOptions)` | Find bugs |
| `generate_unit_test(GenerateUnitTestOptions)` | Generate unit test |
| `implement_features(ImplementFeaturesOptions)` | Implement features |
| `optimize_code(ActionOptions)` | Optimize code |
| `refactor_code(ActionOptions)` | Refactor code |
| `identify_programming_language(ActionOptions)` | Identify programming language |
| `analyze_data(ActionOptions)` | Analyze data |
| `translate_text(TranslateTextOptions)` | Translate text |
| `translate_text_into_chinese(TranslateTextOptions)` | Translate text into Chinese |
| `translate_text_into_english(TranslateTextOptions)` | Translate text into English |
| `start_chat(ActionOptions)` | Start chat |
| `enable_completions(EnableCompletionsOptions)` | Enable completions |
| `show_chat()` | Show chat window |
| `toggle_chat()` | Toggle chat window |
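As a rough illustration of how these APIs might be wired into your own mappings, the sketch below uses only functions listed in the table. The key choices (`<C-l>`, `<C-]>`, `<leader>ft`) and the option values `'busted'` and `'lua'` are arbitrary examples, and it assumes `has_suggestions()` returns a boolean, which the table implies but does not spell out.

```lua
-- Sketch only: drive Fitten Code from custom keys through its Lua API.
local fittencode = require('fittencode')

-- Insert mode: accept the whole suggestion if one is showing,
-- otherwise request a completion manually.
vim.keymap.set('i', '<C-l>', function()
  if fittencode.has_suggestions() then
    fittencode.accept_all_suggestions()
  else
    fittencode.triggering_completion()
  end
end, { desc = 'Fitten Code: accept or trigger suggestion' })

-- Insert mode: dismiss the current suggestion, if any.
vim.keymap.set('i', '<C-]>', function()
  if fittencode.has_suggestions() then
    fittencode.dismiss_suggestions()
  end
end, { desc = 'Fitten Code: dismiss suggestion' })

-- Normal mode: run an action with an options table.
-- Field names follow GenerateUnitTestOptions; the values are examples only.
vim.keymap.set('n', '<leader>ft', function()
  fittencode.generate_unit_test({ test_framework = 'busted', language = 'lua' })
end, { desc = 'Fitten Code: generate unit test' })
```

If you define mappings like these, you may also want `use_default_keymaps = false` in `setup()` so the default inline keys do not overlap with yours.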

Alternative AI tools for fittencode.nvim

Similar Open Source Tools

BricksLLM

BricksLLM is a cloud native AI gateway written in Go. Currently, it provides native support for OpenAI, Anthropic, Azure OpenAI and vLLM. BricksLLM aims to provide enterprise level infrastructure that can power any LLM production use cases. Here are some use cases for BricksLLM:

- Set LLM usage limits for users on different pricing tiers
- Track LLM usage on a per user and per organization basis
- Block or redact requests containing PIIs
- Improve LLM reliability with failovers, retries and caching
- Distribute API keys with rate limits and cost limits for internal development/production use cases
- Distribute API keys with rate limits and cost limits for students

api-for-open-llm

This project provides a unified backend interface for open large language models (LLMs), offering a consistent experience with OpenAI's ChatGPT API. It supports various open-source LLMs, enabling developers to seamlessly integrate them into their applications. The interface features streaming responses, text embedding capabilities, and support for LangChain, a tool for developing LLM-based applications. By modifying environment variables, developers can easily use open-source models as alternatives to ChatGPT, providing a cost-effective and customizable solution for various use cases.

nextlint

Nextlint is a rich text editor (WYSIWYG) written in Svelte, using MeltUI headless UI and tailwindcss CSS framework. It is built on top of tiptap editor (headless editor) and prosemirror. Nextlint is easy to use, develop, and maintain. It has a prompt engine that helps to integrate with any AI API and enhance the writing experience. Dark/Light theme is supported and customizable.

chatglm.cpp

ChatGLM.cpp is a C++ implementation of ChatGLM-6B, ChatGLM2-6B, ChatGLM3-6B and more LLMs for real-time chatting on your MacBook. It is based on ggml, working in the same way as llama.cpp. ChatGLM.cpp features accelerated memory-efficient CPU inference with int4/int8 quantization, optimized KV cache and parallel computing. It also supports P-Tuning v2 and LoRA finetuned models, streaming generation with typewriter effect, Python binding, web demo, api servers and more possibilities.

aiosmb

aiosmb is a fully asynchronous SMB library written in pure Python, supporting Python 3.7 and above. It offers various authentication methods such as Kerberos, NTLM, SSPI, and NEGOEX. The library supports connections over TCP and QUIC protocols, with proxy support for SOCKS4 and SOCKS5. Users can specify an SMB connection using a URL format, making it easier to authenticate and connect to SMB hosts. The project aims to implement DCERPC features, VSS mountpoint operations, and other enhancements in the future. It is inspired by Impacket and AzureADJoinedMachinePTC projects.

agentops

AgentOps is a toolkit for evaluating and developing robust and reliable AI agents. It provides benchmarks, observability, and replay analytics to help developers build better agents. AgentOps is in open beta. Key features of AgentOps include:

- Session replays in 3 lines of code: Initialize the AgentOps client and automatically get analytics on every LLM call.
- Time travel debugging: (coming soon!)
- Agent Arena: (coming soon!)
- Callback handlers: AgentOps works seamlessly with applications built using Langchain and LlamaIndex.

agentic

Agentic is a standard AI functions/tools library optimized for TypeScript and LLM-based apps, compatible with major AI SDKs. It offers a set of thoroughly tested AI functions that can be used with favorite AI SDKs without writing glue code. The library includes various clients for services like Bing web search, calculator, Clearbit data resolution, Dexa podcast questions, and more. It also provides compound tools like SearchAndCrawl and supports multiple AI SDKs such as OpenAI, Vercel AI SDK, LangChain, LlamaIndex, Firebase Genkit, and Dexa Dexter. The goal is to create minimal clients with strongly-typed TypeScript DX, composable AIFunctions via AIFunctionSet, and compatibility with major TS AI SDKs.

DownEdit

DownEdit is a powerful program that allows you to download videos from various social media platforms such as TikTok, Douyin, Kuaishou, and more. With DownEdit, you can easily download videos from user profiles and edit them in bulk. You have the option to flip the videos horizontally or vertically throughout the entire directory with just a single click. Stay tuned for more exciting features coming soon!

worker-vllm

The worker-vLLM repository provides a serverless endpoint for deploying OpenAI-compatible vLLM models with blazing-fast performance. It supports deploying various model architectures, such as Aquila, Baichuan, BLOOM, ChatGLM, Command-R, DBRX, DeciLM, Falcon, Gemma, GPT-2, GPT BigCode, GPT-J, GPT-NeoX, InternLM, Jais, LLaMA, MiniCPM, Mistral, Mixtral, MPT, OLMo, OPT, Orion, Phi, Phi-3, Qwen, Qwen2, Qwen2MoE, StableLM, Starcoder2, Xverse, and Yi. Users can deploy models using pre-built Docker images or build custom images with specified arguments. The repository also supports OpenAI compatibility for chat completions, completions, and models, with customizable input parameters. Users can modify their OpenAI codebase to use the deployed vLLM worker and access a list of available models for deployment.

avante.nvim

avante.nvim is a Neovim plugin that emulates the behavior of the Cursor AI IDE, providing AI-driven code suggestions and enabling users to apply recommendations to their source files effortlessly. It offers AI-powered code assistance and one-click application of suggested changes, streamlining the editing process and saving time. The plugin is still in early development, with functionalities like setting API keys, querying AI about code, reviewing suggestions, and applying changes. Key bindings are available for various actions, and the roadmap includes enhancing AI interactions, stability improvements, and introducing new features for coding tasks.

rust-genai

genai is a multi-AI providers library for Rust that aims to provide a common and ergonomic single API to various generative AI providers such as OpenAI, Anthropic, Cohere, Ollama, and Gemini. It focuses on standardizing chat completion APIs across major AI services, prioritizing ergonomics and commonality. The library initially focuses on text chat APIs and plans to expand to support images, function calling, and more in the future versions. Version 0.1.x will have breaking changes in patches, while version 0.2.x will follow semver more strictly. genai does not provide a full representation of a given AI provider but aims to simplify the differences at a lower layer for ease of use.

ollama-operator

Ollama Operator is a Kubernetes operator designed to facilitate running large language models on Kubernetes clusters. It simplifies the process of deploying and managing multiple models on the same cluster, providing an easy-to-use interface for users. With support for various Kubernetes environments and seamless integration with Ollama models, APIs, and CLI, Ollama Operator streamlines the deployment and management of language models. By leveraging the capabilities of llama.cpp, Ollama Operator eliminates the need to worry about Python environments and CUDA drivers, making it a reliable tool for running large language models on Kubernetes.

StableToolBench

StableToolBench is a new benchmark developed to address the instability of Tool Learning benchmarks. It aims to balance stability and reality by introducing features such as a Virtual API System with caching and API simulators, a new set of solvable queries determined by LLMs, and a Stable Evaluation System using GPT-4. The Virtual API Server can be set up either by building from source or using a prebuilt Docker image. Users can test the server using provided scripts and evaluate models with Solvable Pass Rate and Solvable Win Rate metrics. The tool also includes model experiments results comparing different models' performance.

onnxruntime-server

ONNX Runtime Server is a server that provides TCP and HTTP/HTTPS REST APIs for ONNX inference. It aims to offer simple, high-performance ML inference and a good developer experience. Users can provide inference APIs for ONNX models without writing additional code by placing the models in the directory structure. Each session can choose between CPU or CUDA, analyze input/output, and provide Swagger API documentation for easy testing. Ready-to-run Docker images are available, making it convenient to deploy the server.

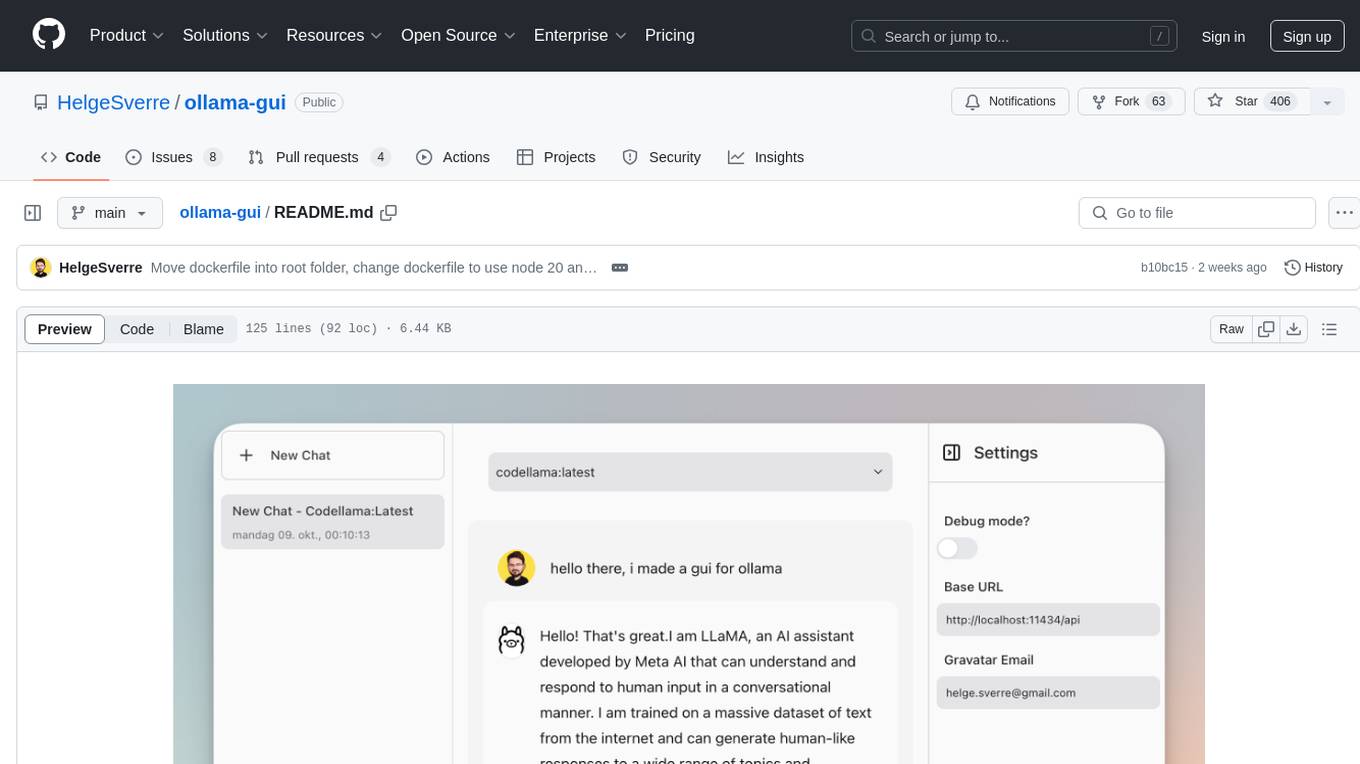

ollama-gui

Ollama GUI is a web interface for ollama.ai, a tool that enables running Large Language Models (LLMs) on your local machine. It provides a user-friendly platform for chatting with LLMs and accessing various models for text generation. Users can easily interact with different models, manage chat history, and explore available models through the web interface. The tool is built with Vue.js, Vite, and Tailwind CSS, offering a modern and responsive design for seamless user experience.

For similar tasks

cody

Cody is a free, open-source AI coding assistant that can write and fix code, provide AI-generated autocomplete, and answer your coding questions. Cody fetches relevant code context from across your entire codebase to write better code that uses more of your codebase's APIs, impls, and idioms, with less hallucination.

auto-dev-vscode

AutoDev for VSCode is an AI-powered coding wizard with multilingual support, auto code generation, and a bug-slaying assistant. It offers customizable prompts and features like Auto Dev/Testing/Document/Agent. The tool aims to enhance coding productivity and efficiency by providing intelligent assistance and automation capabilities within the Visual Studio Code environment.

bia-bob

BIA `bob` is a Jupyter-based assistant for interacting with data using large language models to generate Python code. It can utilize OpenAI's chatGPT, Google's Gemini, Helmholtz' blablador, and Ollama. Users need respective accounts to access these services. Bob can assist in code generation, bug fixing, code documentation, GPU-acceleration, and offers a no-code custom Jupyter Kernel. It provides example notebooks for various tasks like bio-image analysis, model selection, and bug fixing. Installation is recommended via conda/mamba environment. Custom endpoints like blablador and ollama can be used. Google Cloud AI API integration is also supported. The tool is extensible for Python libraries to enhance Bob's functionality.

code2prompt

code2prompt is a command-line tool that converts your codebase into a single LLM prompt with a source tree, prompt templating, and token counting. It automates generating LLM prompts from codebases of any size, customizing prompt generation with Handlebars templates, respecting .gitignore, filtering and excluding files using glob patterns, displaying token count, including Git diff output, copying prompt to clipboard, saving prompt to an output file, excluding files and folders, adding line numbers to source code blocks, and more. It helps streamline the process of creating LLM prompts for code analysis, generation, and other tasks.

chatgpt

The ChatGPT R package provides a set of features to assist in R coding. It includes addins like Ask ChatGPT, Comment selected code, Complete selected code, Create unit tests, Create variable name, Document code, Explain selected code, Find issues in the selected code, Optimize selected code, and Refactor selected code. Users can interact with ChatGPT to get code suggestions, explanations, and optimizations. The package helps in improving coding efficiency and quality by providing AI-powered assistance within the RStudio environment.

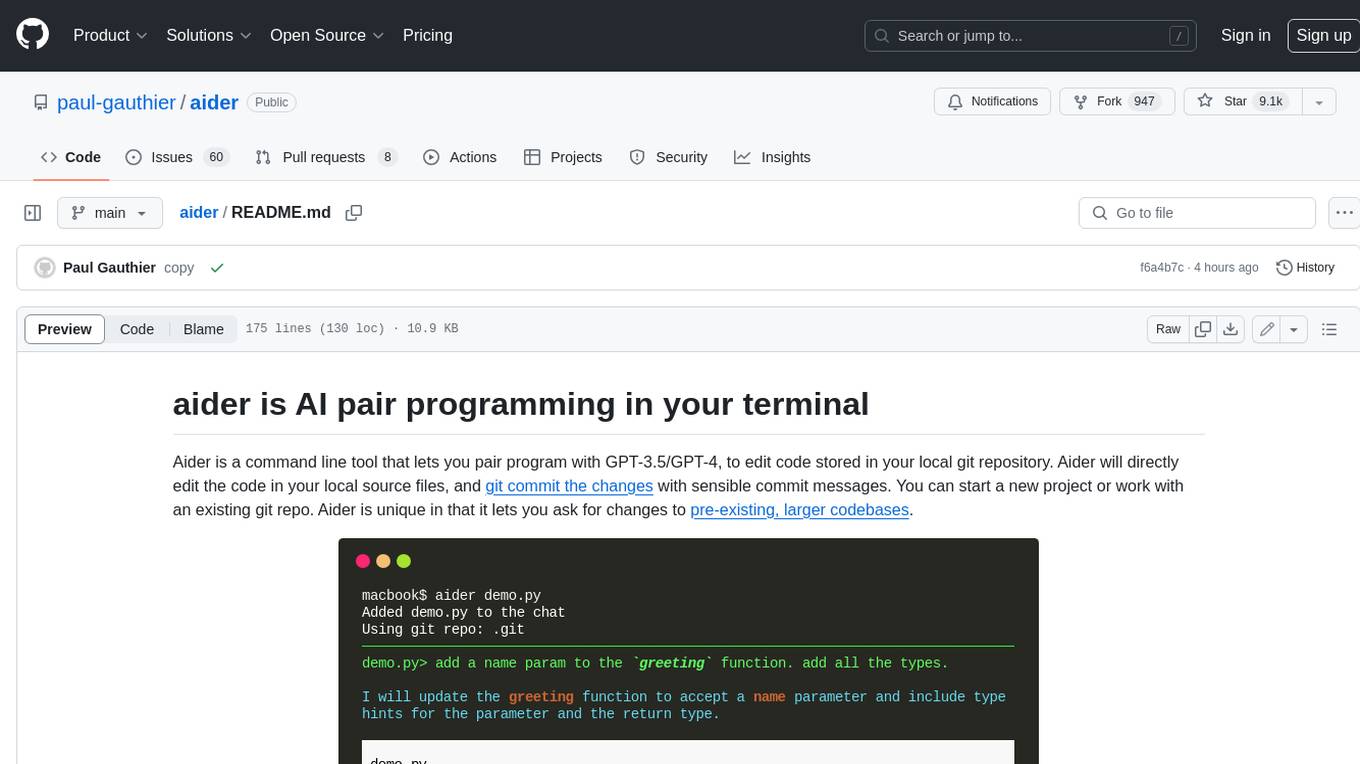

aider

Aider is a command-line tool that lets you pair program with GPT-3.5/GPT-4 to edit code stored in your local git repository. Aider will directly edit the code in your local source files and git commit the changes with sensible commit messages. You can start a new project or work with an existing git repo. Aider is unique in that it lets you ask for changes to pre-existing, larger codebases.

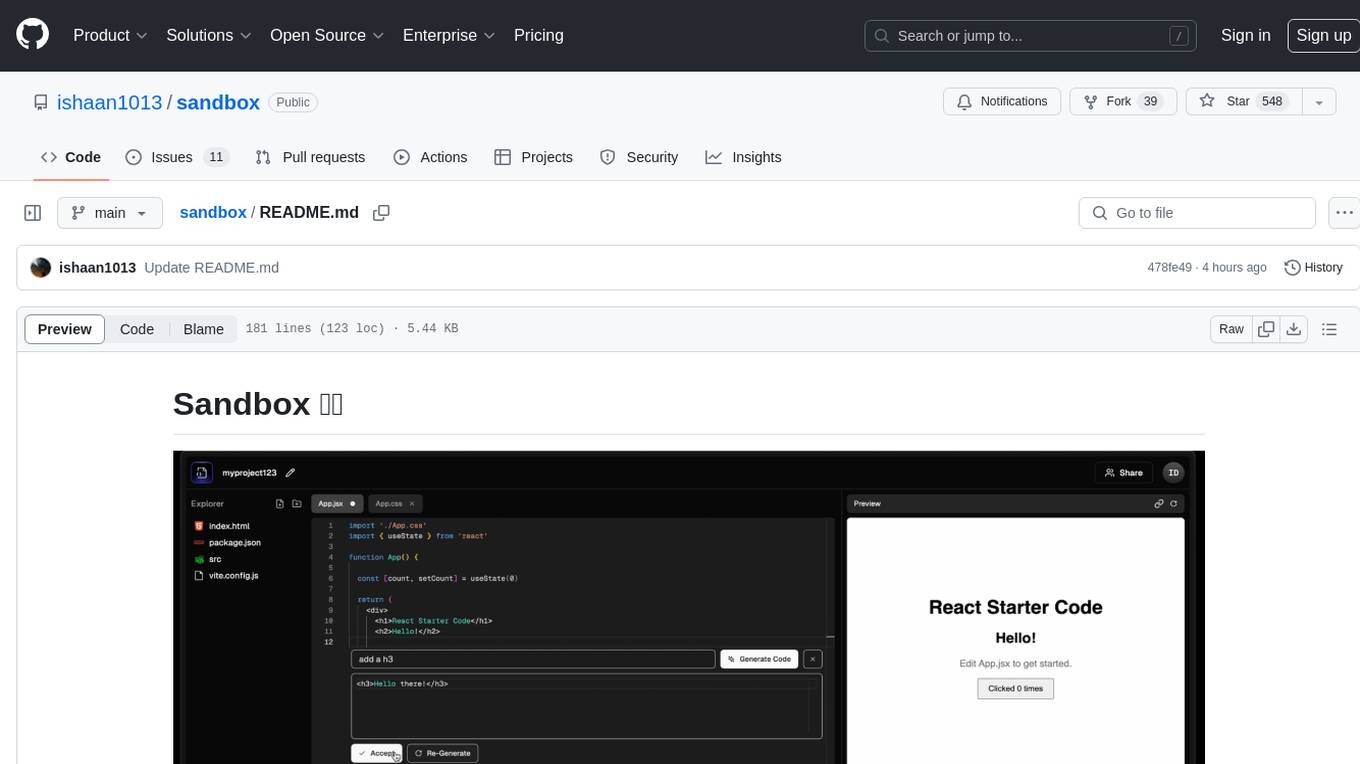

sandbox

Sandbox is an open-source cloud-based code editing environment with custom AI code autocompletion and real-time collaboration. It consists of a frontend built with Next.js, TailwindCSS, Shadcn UI, Clerk, Monaco, and Liveblocks, and a backend with Express, Socket.io, Cloudflare Workers, D1 database, R2 storage, Workers AI, and Drizzle ORM. The backend includes microservices for database, storage, and AI functionalities. Users can run the project locally by setting up environment variables and deploying the containers. Contributions are welcome following the commit convention and structure provided in the repository.

For similar jobs

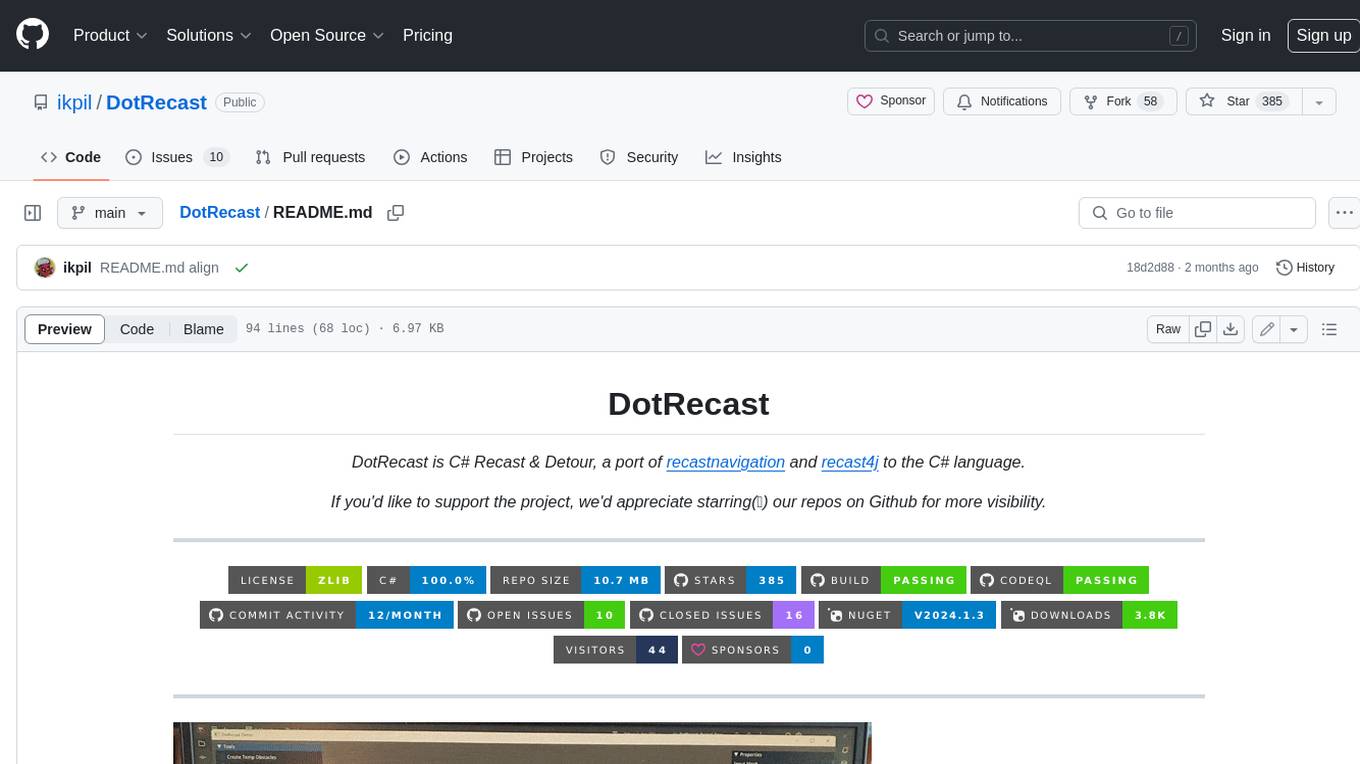

DotRecast

DotRecast is a C# port of Recast & Detour, a navigation library used in many AAA and indie games and engines. It provides automatic navmesh generation, fast turnaround times, detailed customization options, and is dependency-free. Recast Navigation is divided into multiple modules, each contained in its own folder:

- DotRecast.Core: Core utils
- DotRecast.Recast: Navmesh generation
- DotRecast.Detour: Runtime loading of navmesh data, pathfinding, navmesh queries
- DotRecast.Detour.TileCache: Navmesh streaming. Useful for large levels and open-world games
- DotRecast.Detour.Crowd: Agent movement, collision avoidance, and crowd simulation
- DotRecast.Detour.Dynamic: Robust support for dynamic nav meshes combining pre-built voxels with dynamic objects which can be freely added and removed
- DotRecast.Detour.Extras: Simple tool to import navmeshes created with A* Pathfinding Project
- DotRecast.Recast.Toolset: All modules
- DotRecast.Recast.Demo: Standalone, comprehensive demo app showcasing all aspects of Recast & Detour's functionality
- Tests: Unit tests

Recast constructs a navmesh through a multi-step mesh rasterization process:

1. First Recast rasterizes the input triangle meshes into voxels.
2. Voxels in areas where agents would not be able to move are filtered and removed.
3. The walkable areas described by the voxel grid are then divided into sets of polygonal regions.
4. The navigation polygons are generated by re-triangulating the generated polygonal regions into a navmesh.

You can use Recast to build a single navmesh, or a tiled navmesh. Single meshes are suitable for many simple, static cases and are easy to work with. Tiled navmeshes are more complex to work with but better support larger, more dynamic environments. Tiled meshes enable advanced Detour features like re-baking, hierarchical path-planning, and navmesh data-streaming.

bots

The 'bots' repository is a collection of guides, tools, and example bots for programming bots to play video games. It provides resources on running bots live, installing the BotLab client, debugging bots, testing bots in simulated environments, and more. The repository also includes example bots for games like EVE Online, Tribal Wars 2, and Elvenar. Users can learn about developing bots for specific games, syntax of the Elm programming language, and tools for memory reading development. Additionally, there are guides on bot programming, contributing to BotLab, and exploring Elm syntax and core library.

Half-Life-Resurgence

Half-Life-Resurgence is a recreation and expansion project that brings NPCs, entities, and weapons from the Half-Life series into Garry's Mod. The goal is to faithfully recreate original content while also introducing new features and custom content envisioned by the community. Users can expect a wide range of NPCs with new abilities, AI behaviors, and weapons, as well as support for playing as any character and replacing NPCs in Half-Life 1 & 2 campaigns.

SwordCoastStratagems

Sword Coast Stratagems (SCS) is a mod that enhances Baldur's Gate games by adding over 130 optional components focused on improving monster AI, encounter difficulties, cosmetic enhancements, and ease-of-use tweaks. This repository serves as an archive for the project, with updates pushed only when new releases are made. It is not a collaborative project, and bug reports or suggestions should be made at the Gibberlings 3 forums. The mod is designed for offline workflow and should be downloaded from official releases.

LambsDanger

LAMBS Danger FSM is an open-source mod developed for Arma3, aimed at enhancing the AI behavior by integrating buildings into the tactical landscape, creating distinct AI states, and ensuring seamless compatibility with vanilla, ACE3, and modded assets. Originally created for the Norwegian gaming community, it is now available on Steam Workshop and GitHub for wider use. Users are allowed to customize and redistribute the mod according to their requirements. The project is licensed under the GNU General Public License (GPLv2) with additional amendments.

beehave

Beehave is a powerful addon for Godot Engine that enables users to create robust AI systems using behavior trees. It simplifies the design of complex NPC behaviors, challenging boss battles, and other advanced setups. Beehave allows for the creation of highly adaptive AI that responds to changes in the game world and overcomes unexpected obstacles, catering to both beginners and experienced developers. The tool is currently in development for version 3.0.

thinker

Thinker is an AI improvement mod for Alpha Centauri: Alien Crossfire that enhances single player challenge and gameplay with features like improved production/movement AI, visual changes on map rendering, more config options, resolution settings, and automation features. It includes Scient's patches and requires the GOG version of Alpha Centauri with the official Alien Crossfire patch version 2.0 installed. The mod provides additional DLL features developed in C++ for a richer gaming experience.

MobChip

MobChip is an all-in-one Entity AI and Bosses Library for Minecraft 1.13 and above. It simplifies the implementation of Minecraft's native entity AI into plugins, offering documentation, API usage, and utilities for ease of use. The library is flexible, using Reflection and Abstraction for modern functionality on older versions, and ensuring compatibility across multiple Minecraft versions. MobChip is open source, providing features like Bosses Library, Pathfinder Goals, Behaviors, Villager Gossip, Ender Dragon Phases, and more.