langgraph-studio

Desktop app for prototyping and debugging LangGraph applications locally.

Stars: 1491

LangGraph Studio is a specialized agent IDE that enables visualization, interaction, and debugging of complex agentic applications. It offers visual graphs and state editing to better understand agent workflows and iterate faster. Users can collaborate with teammates using LangSmith to debug failure modes. The tool integrates with LangSmith and requires Docker installed. Users can create and edit threads, configure graph runs, add interrupts, and support human-in-the-loop workflows. LangGraph Studio allows interactive modification of project config and graph code, with live sync to the interactive graph for easier iteration on long-running agents.

README:

LangGraph Studio offers a new way to develop LLM applications by providing a specialized agent IDE that enables visualization, interaction, and debugging of complex agentic applications.

With visual graphs and the ability to edit state, you can better understand agent workflows and iterate faster. LangGraph Studio integrates with LangSmith so you can collaborate with teammates to debug failure modes.

While in Beta, LangGraph Studio is available for free to all LangSmith users on any plan tier. Sign up for LangSmith here.

Download the latest .dmg file of LangGraph Studio by clicking here or by visiting the releases page.

Currently, only macOS is supported. Windows and Linux support is coming soon. LangGraph Studio also depends on Docker Engine being up and running; the supported runtimes are Docker Desktop and Orbstack.

LangGraph Studio requires docker-compose version 2.22.0 or higher. Please make sure you have Docker Desktop or Orbstack installed and running before continuing.
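The version requirement above can be checked from a script. The following is a minimal sketch, not part of LangGraph Studio: the helper names are hypothetical, and it assumes the Docker CLI is on your PATH and accepts `docker compose version --short`.

```python
import re
import subprocess

MIN_COMPOSE = (2, 22, 0)  # minimum version stated in this README


def parse_version(text: str) -> tuple[int, int, int]:
    """Extract the first MAJOR.MINOR.PATCH triple from a version string."""
    match = re.search(r"(\d+)\.(\d+)\.(\d+)", text)
    if match is None:
        raise ValueError(f"no version number found in {text!r}")
    major, minor, patch = match.groups()
    return (int(major), int(minor), int(patch))


def meets_minimum(text: str, minimum: tuple[int, int, int] = MIN_COMPOSE) -> bool:
    """Return True if the version in `text` is at least `minimum`."""
    return parse_version(text) >= minimum


def installed_compose_version() -> str:
    """Ask the local Docker CLI for its Compose version (requires Docker)."""
    result = subprocess.run(
        ["docker", "compose", "version", "--short"],
        capture_output=True,
        text=True,
        check=True,
    )
    return result.stdout.strip()
```

Calling `meets_minimum(installed_compose_version())` on a machine with Docker installed tells you whether the requirement is satisfied.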

To use LangGraph Studio, make sure you have a project with a LangGraph app set up.

For this example, we will use the example repository below, which uses a requirements.txt file for dependencies:

git clone https://github.com/langchain-ai/langgraph-example.git

If you would like to use a pyproject.toml file instead for managing dependencies, you can use this example repository.

git clone https://github.com/langchain-ai/langgraph-example-pyproject.git

You will then want to create a .env file with the relevant environment variables:

cp .env.example .env

You should then open up the .env file and fill in with relevant OpenAI, Anthropic, and Tavily API keys.

If you already have them set in your environment, you can save them to this .env file with the following commands:

echo "OPENAI_API_KEY=\"$OPENAI_API_KEY\"" > .env

echo "ANTHROPIC_API_KEY=\"$ANTHROPIC_API_KEY\"" >> .env

echo "TAVILY_API_KEY=\"$TAVILY_API_KEY\"" >> .env

Note: do NOT add a LANGSMITH_API_KEY to the .env file. We will do this automatically for you when you authenticate, and manually setting this may cause errors.
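The same .env file can be produced from Python. This is a minimal sketch mirroring the shell commands above: the function names are hypothetical, and it deliberately skips LANGSMITH_API_KEY, following the note above.

```python
import os
from pathlib import Path

# Keys named in this README; LANGSMITH_API_KEY is intentionally absent.
KEYS = ("OPENAI_API_KEY", "ANTHROPIC_API_KEY", "TAVILY_API_KEY")


def render_env(environ, keys=KEYS) -> str:
    """Build .env contents from the keys that are set in `environ`."""
    lines = []
    for key in keys:
        if key == "LANGSMITH_API_KEY":
            continue  # Studio sets this itself after authentication
        value = environ.get(key)
        if value:
            lines.append(f'{key}="{value}"')
    return "\n".join(lines) + ("\n" if lines else "")


def write_env(path=".env", environ=os.environ) -> None:
    """Write the rendered contents to `path`, overwriting any existing file."""
    Path(path).write_text(render_env(environ))
```

Unlike the echo commands, this version leaves out keys that are not set instead of writing empty values.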

Once you've set up the project, you can use it in LangGraph Studio. Let's dive in!

When you open the LangGraph Studio desktop app for the first time, you need to log in via LangSmith.

Once you have successfully authenticated, you can choose the LangGraph application folder to use — you can either drag and drop or manually select it in the file picker. If you are using the example project, the folder would be langgraph-example.

[!IMPORTANT] The application directory you select needs to contain a correctly configured langgraph.json file. See more information on how to configure it here and how to set up a LangGraph app here.
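Before opening a folder in Studio, you can sanity-check it yourself. The sketch below is an illustration only: it assumes langgraph.json holds a `graphs` mapping whose keys are the graph names Studio lists, the helper name `list_graphs` is hypothetical, and it does not validate the rest of the file.

```python
import json
from pathlib import Path


def list_graphs(project_dir) -> list[str]:
    """Return the graph names declared in <project_dir>/langgraph.json."""
    config_path = Path(project_dir) / "langgraph.json"
    if not config_path.is_file():
        raise FileNotFoundError(f"{config_path} not found")
    config = json.loads(config_path.read_text())
    graphs = config.get("graphs")
    if not isinstance(graphs, dict) or not graphs:
        raise ValueError("langgraph.json has no 'graphs' mapping")
    return sorted(graphs)
```

For the example project, `list_graphs("langgraph-example")` would be expected to include `agent`, assuming the repository is cloned into the current directory.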

Once you select a valid project, LangGraph Studio will start a LangGraph API server and you should see a UI with your graph rendered.

Now we can run the graph! LangGraph Studio lets you run your graph with different inputs and configurations.

To start a new run:

- In the dropdown menu (top-left corner of the left-hand pane), select a graph. In our example the graph is called agent. The list of graphs corresponds to the graphs keys in your langgraph.json configuration.

- In the bottom of the left-hand pane, edit the Input section.

- Click Submit to invoke the selected graph.

- View output of the invocation in the right-hand pane.

The following video shows how to start a new run:

https://github.com/user-attachments/assets/e0e7487e-17e2-4194-a4ad-85b346c2f1c4

To change the configuration for a given graph run, press the Configurable button in the Input section. Then click Submit to invoke the graph.

[!IMPORTANT] In order for the Configurable menu to be visible, make sure to specify a config schema when creating StateGraph. You can read more about how to add config schema to your graph here.
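As a rough illustration of what a config schema looks like, here is a minimal sketch. `GraphConfig`, its `model_name` field, and `pick_model` are hypothetical names; the sketch assumes run configuration reaches nodes under a `configurable` key, and that the schema is passed via a `config_schema` keyword on `StateGraph`, which was the case in LangGraph releases from around the time of this README.

```python
from typing import Literal, TypedDict


class GraphConfig(TypedDict, total=False):
    """Hypothetical config schema: the fields a user may set per run."""

    model_name: Literal["anthropic", "openai"]


def pick_model(config: dict) -> str:
    """Read a configurable value the way a node would, with a default."""
    configurable = config.get("configurable", {})
    return configurable.get("model_name", "anthropic")


# With LangGraph installed, the schema would be supplied when building the
# graph, e.g. StateGraph(AgentState, config_schema=GraphConfig).
# The keyword name is an assumption; check the docs for your version.
```

Each field declared in the schema is what you would expect to see offered in the Configurable menu.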

The following video shows how to edit configuration and start a new run:

https://github.com/user-attachments/assets/8495b476-7e33-42d4-85cb-2f9269bea20c

When you open LangGraph Studio, you will automatically be in a new thread window. If you have an existing thread open, follow these steps to create a new thread:

- In the top-right corner of the right-hand pane, press + to open a new thread menu.

The following video shows how to create a thread:

https://github.com/user-attachments/assets/78d4a692-2042-48e2-a7e2-5a7ca3d5a611

To select a thread:

- Click on the New Thread / Thread <thread-id> label at the top of the right-hand pane to open a thread list dropdown.

- Select a thread that you wish to view / edit.

The following video shows how to select a thread:

https://github.com/user-attachments/assets/5f0dbd63-fa59-4496-8d8e-4fb8d0eab893

LangGraph Studio allows you to edit the thread state and fork threads to create an alternative graph execution with the updated state. To do so:

- Select a thread you wish to edit.

- In the right-hand pane, hover over the step you wish to edit and click the "pencil" icon.

- Make your edits.

- Click Fork to update the state and create a new graph execution with the updated state.

The following video shows how to edit a thread in the studio:

https://github.com/user-attachments/assets/47f887e7-2e3f-46ce-977c-f474c3cd797e
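Conceptually, forking copies the thread up to the edited step, applies your changes, and continues from there while the original thread stays intact. The toy model below only illustrates that idea with plain dictionaries; `fork_state` is a hypothetical helper, not how Studio or LangGraph implement it.

```python
import copy


def fork_state(history: list[dict], step: int, updates: dict) -> list[dict]:
    """Return a new history that branches from `step` with `updates` applied.

    The original history is left untouched, so both branches stay inspectable.
    """
    if not 0 <= step < len(history):
        raise IndexError(f"step {step} out of range")
    branch = copy.deepcopy(history[: step + 1])
    branch[-1].update(updates)
    return branch


history = [
    {"messages": ["hi"]},
    {"messages": ["hi", "bad answer"]},
]
forked = fork_state(history, 1, {"messages": ["hi", "edited answer"]})
```

After this runs, `forked` ends with the edited message while `history` still holds the original one.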

You might want to execute your graph step by step, or stop graph execution before/after a specific node executes. You can do so by adding interrupts. Interrupts can be set for all nodes (i.e. walk through the agent execution step by step) or for specific nodes. An interrupt in LangGraph Studio means that the graph execution will be interrupted both before and after a given node runs.

To walk through the agent execution step by step, you can add interrupts to all or a subset of nodes in the graph:

- In the dropdown menu (top-right corner of the left-hand pane), click Interrupt.

- Select a subset of nodes to interrupt on, or click Interrupt on all.

The following video shows how to add interrupts to all nodes:

https://github.com/user-attachments/assets/db44ebda-4d6e-482d-9ac8-ea8f5f0148ea

To add an interrupt to a specific node:

- Navigate to the left-hand pane with the graph visualization.

- Hover over a node you want to add an interrupt to. You should see a + button show up on the left side of the node.

- Click + to add an interrupt on that node.

- Run the graph by adding Input / configuration and clicking Submit.

The following video shows how to add interrupts to a specific node:

https://github.com/user-attachments/assets/13429609-18fc-4f21-9cb9-4e0daeea62c4

To remove the interrupt, follow the same steps and press the x button on the left side of the node.
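To make the "before and after" semantics concrete, here is a toy runner in plain Python. It is only a sketch of the behaviour described above, with a hypothetical `run_with_interrupts` helper; it is not LangGraph's execution engine.

```python
from typing import Callable, Iterable, Iterator


def run_with_interrupts(
    nodes: list[tuple[str, Callable[[dict], dict]]],
    state: dict,
    interrupt_on: Iterable[str] = (),
) -> Iterator[tuple[str, str, dict]]:
    """Run nodes in order, yielding ("before" or "after", node_name, state)
    at every pause point for nodes listed in `interrupt_on`."""
    pause = set(interrupt_on)
    for name, fn in nodes:
        if name in pause:
            yield ("before", name, state)
        # Merge the node's partial update into the running state.
        state = {**state, **fn(state)}
        if name in pause:
            yield ("after", name, state)


nodes = [
    ("agent", lambda s: {"reply": "hello"}),
    ("human", lambda s: {}),
]
events = [(when, name) for when, name, _ in run_with_interrupts(nodes, {}, ["human"])]
```

Here `events` contains one pause before and one after the `human` node. Passing every node name in `interrupt_on` corresponds to stepping through the whole graph.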

In addition to interrupting on a node and editing the graph state, you might want to support human-in-the-loop workflows with the ability to manually update state. Here is a modified version of agent.py with agent and human nodes, where the graph execution will be interrupted on the human node. This lets you send input as part of the human node, which is useful when you want the agent to get user input. It essentially replaces how you might use input() if you were running this from the command line.

from typing import TypedDict, Annotated, Sequence, Literal

from langchain_core.messages import BaseMessage, HumanMessage
from langchain_anthropic import ChatAnthropic
from langgraph.graph import StateGraph, END, add_messages


class AgentState(TypedDict):
    messages: Annotated[Sequence[BaseMessage], add_messages]


model = ChatAnthropic(temperature=0, model_name="claude-3-sonnet-20240229")


def call_model(state: AgentState) -> AgentState:
    messages = state["messages"]
    response = model.invoke(messages)
    return {"messages": [response]}


# no-op node that should be interrupted on
def human_feedback(state: AgentState) -> AgentState:
    pass


# route back to the agent only if the human added a new message
def should_continue(state: AgentState) -> Literal["agent", "end"]:
    messages = state['messages']
    last_message = messages[-1]
    if isinstance(last_message, HumanMessage):
        return "agent"
    return "end"


workflow = StateGraph(AgentState)
workflow.set_entry_point("agent")
workflow.add_node("agent", call_model)
workflow.add_node("human", human_feedback)
workflow.add_edge("agent", "human")
workflow.add_conditional_edges(
    "human",
    should_continue,
    {
        "agent": "agent",
        "end": END,
    },
)

# pause before the human node so input can be supplied
graph = workflow.compile(interrupt_before=["human"])

The following video shows how to manually send state updates (i.e. messages in our example) when interrupted:

https://github.com/user-attachments/assets/f6d4fd18-df4d-45b7-8b1b-ad8506d08abd

LangGraph Studio allows you to modify your project config (langgraph.json) interactively.

To modify the config from the studio, follow these steps:

- Click Configure on the bottom right. This will open an interactive config menu with the values that correspond to the existing langgraph.json.

- Make your edits.

- Click Save and Restart to reload the LangGraph API server with the updated config.

The following video shows how to edit project config from the studio:

https://github.com/user-attachments/assets/86d7d1f7-800c-4739-80bc-8122b4728817

With LangGraph Studio you can modify your graph code and sync the changes live to the interactive graph.

To modify your graph from the studio, follow these steps:

- Click Open in VS Code on the bottom right. This will open the project that is currently opened in LangGraph Studio.

- Make changes to the .py files where the compiled graph is defined or associated dependencies.

- LangGraph Studio will automatically reload once the changes are saved in the project directory.

The following video shows how to open the code editor from the studio:

https://github.com/user-attachments/assets/8ac0443d-460b-438e-a379-182ec9f68ff5

After you modify the underlying code you can also replay a node in the graph. For example, if an agent responds poorly, you can update the agent node implementation in your code editor and rerun it. This can make it much easier to iterate on long-running agents.

https://github.com/user-attachments/assets/9ec1b8ed-c6f8-433d-8bef-0dbda58a1075


Similar Open Source Tools

langgraph-studio

LangGraph Studio is a specialized agent IDE that enables visualization, interaction, and debugging of complex agentic applications. It offers visual graphs and state editing to better understand agent workflows and iterate faster. Users can collaborate with teammates using LangSmith to debug failure modes. The tool integrates with LangSmith and requires Docker installed. Users can create and edit threads, configure graph runs, add interrupts, and support human-in-the-loop workflows. LangGraph Studio allows interactive modification of project config and graph code, with live sync to the interactive graph for easier iteration on long-running agents.

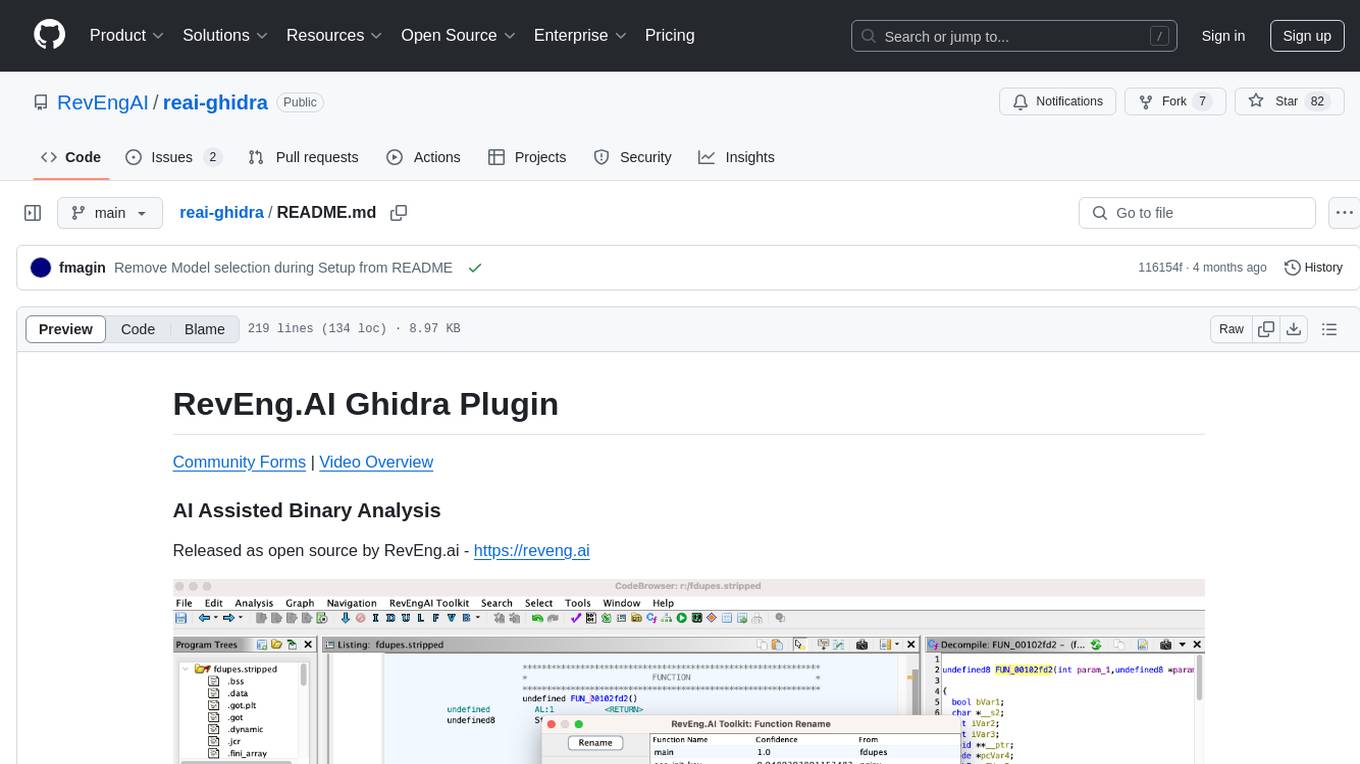

reai-ghidra

The RevEng.AI Ghidra Plugin by RevEng.ai allows users to interact with their API within Ghidra for Binary Code Similarity analysis to aid in Reverse Engineering stripped binaries. Users can upload binaries, rename functions above a confidence threshold, and view similar functions for a selected function.

cog-comfyui

Cog-comfyui allows users to run ComfyUI workflows on Replicate. ComfyUI is a visual programming tool for creating and sharing generative art workflows. With cog-comfyui, users can access a variety of pre-trained models and custom nodes to create their own unique artworks. The tool is easy to use and does not require any coding experience. Users simply need to upload their API JSON file and any necessary input files, and then click the "Run" button. Cog-comfyui will then generate the output image or video file.

civitai

Civitai is a platform where people can share their stable diffusion models (textual inversions, hypernetworks, aesthetic gradients, VAEs, and any other crazy stuff people do to customize their AI generations), collaborate with others to improve them, and learn from each other's work. The platform allows users to create an account, upload their models, and browse models that have been shared by others. Users can also leave comments and feedback on each other's models to facilitate collaboration and knowledge sharing.

cog-comfyui

Cog-ComfyUI is a tool designed to run ComfyUI workflows on Replicate. It allows users to easily integrate their own workflows into their app or website using the Replicate API. The tool includes popular model weights and custom nodes, with the option to request more custom nodes or models. Users can get their API JSON, gather input files, and use custom LoRAs from CivitAI or HuggingFace. Additionally, users can run their workflows and set up their own dedicated instances for better performance and control. The tool provides options for private deployments, forking using Cog, or creating new models from the train tab on Replicate. It also offers guidance on developing locally and running the Web UI from a Cog container.

CLI

Bito CLI provides a command line interface to the Bito AI chat functionality, allowing users to interact with the AI through commands. It supports complex automation and workflows, with features like long prompts and slash commands. Users can install Bito CLI on Mac, Linux, and Windows systems using various methods. The tool also offers configuration options for AI model type, access key management, and output language customization. Bito CLI is designed to enhance user experience in querying AI models and automating tasks through the command line interface.

vectara-answer

Vectara Answer is a sample app for Vectara-powered Summarized Semantic Search (or question-answering) with advanced configuration options. For examples of what you can build with Vectara Answer, check out Ask News, LegalAid, or any of the other demo applications.

reai-ida

RevEng.AI IDA Pro Plugin is a tool that integrates with the RevEng.AI platform to provide various features such as uploading binaries for analysis, downloading analysis logs, renaming function names, generating AI summaries, synchronizing functions between local analysis and the platform, and configuring plugin settings. Users can upload files for analysis, synchronize function names, rename functions, generate block summaries, and explain function behavior using this plugin. The tool requires IDA Pro v8.0 or later with Python 3.9 and higher. It relies on the 'reait' package for functionality.

aiarena-web

aiarena-web is a website designed for running the aiarena.net infrastructure. It consists of different modules such as core functionality, web API endpoints, frontend templates, and a module for linking users to their Patreon accounts. The website serves as a platform for obtaining new matches, reporting results, featuring match replays, and connecting with Patreon supporters. The project is licensed under GPLv3 in 2019.

AI-Horde-Worker

AI-Horde-Worker is a repository containing the original reference implementation for a worker that turns your graphics card(s) into a worker for the AI Horde. It allows users to generate or alchemize images for others. The repository provides instructions for setting up the worker on Windows and Linux, updating the worker code, running with multiple GPUs, and stopping the worker. Users can configure the worker using a WebUI to connect to the horde with their username and API key. The repository also includes information on model usage and running the Docker container with specified environment variables.

openui

OpenUI is a tool designed to simplify the process of building UI components by allowing users to describe UI using their imagination and see it rendered live. It supports converting HTML to React, Svelte, Web Components, etc. The tool is open source and aims to make UI development fun, fast, and flexible. It integrates with various AI services like OpenAI, Groq, Gemini, Anthropic, Cohere, and Mistral, providing users with the flexibility to use different models. OpenUI also supports LiteLLM for connecting to various LLM services and allows users to create custom proxy configs. The tool can be run locally using Docker or Python, and it offers a development environment for quick setup and testing.

renumics-rag

Renumics RAG is a retrieval-augmented generation assistant demo that utilizes LangChain and Streamlit. It provides a tool for indexing documents and answering questions based on the indexed data. Users can explore and visualize RAG data, configure OpenAI and Hugging Face models, and interactively explore questions and document snippets. The tool supports GPU and CPU setups, offers a command-line interface for retrieving and answering questions, and includes a web application for easy access. It also allows users to customize retrieval settings, embeddings models, and database creation. Renumics RAG is designed to enhance the question-answering process by leveraging indexed documents and providing detailed answers with sources.

airbyte_serverless

AirbyteServerless is a lightweight tool designed to simplify the management of Airbyte connectors. It offers a serverless mode for running connectors, allowing users to easily move data from any source to their data warehouse. Unlike the full Airbyte-Open-Source-Platform, AirbyteServerless focuses solely on the Extract-Load process without a UI, database, or transform layer. It provides a CLI tool, 'abs', for managing connectors, creating connections, running jobs, selecting specific data streams, handling secrets securely, and scheduling remote runs. The tool is scalable, allowing independent deployment of multiple connectors. It aims to streamline the connector management process and provide a more agile alternative to the comprehensive Airbyte platform.

smartcat

Smartcat is a CLI interface that brings language models into the Unix ecosystem, allowing power users to leverage the capabilities of LLMs in their daily workflows. It features a minimalist design, seamless integration with terminal and editor workflows, and customizable prompts for specific tasks. Smartcat currently supports OpenAI, Mistral AI, and Anthropic APIs, providing access to a range of language models. With its ability to manipulate file and text streams, integrate with editors, and offer configurable settings, Smartcat empowers users to automate tasks, enhance code quality, and explore creative possibilities.

fasttrackml

FastTrackML is an experiment tracking server focused on speed and scalability, fully compatible with MLFlow. It provides a user-friendly interface to track and visualize your machine learning experiments, making it easy to compare different models and identify the best performing ones. FastTrackML is open source and can be easily installed and run with pip or Docker. It is also compatible with the MLFlow Python package, making it easy to integrate with your existing MLFlow workflows.

AlwaysReddy

AlwaysReddy is a simple LLM assistant with no UI that you interact with entirely using hotkeys. It can easily read from or write to your clipboard, and voice chat with you via TTS and STT. Here are some of the things you can use AlwaysReddy for:

- Explain a new concept to AlwaysReddy and have it save the concept (in roughly your words) into a note.
- Ask AlwaysReddy "What is X called?" when you know how to roughly describe something but can't remember what it is called.
- Have AlwaysReddy proofread the text in your clipboard before you send it.
- Ask AlwaysReddy "From the comments in my clipboard, what do the r/LocalLLaMA users think of X?"
- Quickly list what you have done today and get AlwaysReddy to write a journal entry to your clipboard before you shut down the computer for the day.


For similar jobs

sweep

Sweep is an AI junior developer that turns bugs and feature requests into code changes. It automatically handles developer experience improvements like adding type hints and improving test coverage.

teams-ai

The Teams AI Library is a software development kit (SDK) that helps developers create bots that can interact with Teams and Microsoft 365 applications. It is built on top of the Bot Framework SDK and simplifies the process of developing bots that interact with Teams' artificial intelligence capabilities. The SDK is available for JavaScript/TypeScript, .NET, and Python.

ai-guide

This guide is dedicated to Large Language Models (LLMs) that you can run on your home computer. It assumes your PC is a lower-end, non-gaming setup.

classifai

Supercharge WordPress Content Workflows and Engagement with Artificial Intelligence. Tap into leading cloud-based services like OpenAI, Microsoft Azure AI, Google Gemini and IBM Watson to augment your WordPress-powered websites. Publish content faster while improving SEO performance and increasing audience engagement. ClassifAI integrates Artificial Intelligence and Machine Learning technologies to lighten your workload and eliminate tedious tasks, giving you more time to create original content that matters.

chatbot-ui

Chatbot UI is an open-source AI chat app that allows users to create and deploy their own AI chatbots. It is easy to use and can be customized to fit any need. Chatbot UI is perfect for businesses, developers, and anyone who wants to create a chatbot.

BricksLLM

BricksLLM is a cloud native AI gateway written in Go. Currently, it provides native support for OpenAI, Anthropic, Azure OpenAI and vLLM. BricksLLM aims to provide enterprise level infrastructure that can power any LLM production use cases. Here are some use cases for BricksLLM:

- Set LLM usage limits for users on different pricing tiers
- Track LLM usage on a per user and per organization basis
- Block or redact requests containing PIIs
- Improve LLM reliability with failovers, retries and caching
- Distribute API keys with rate limits and cost limits for internal development/production use cases
- Distribute API keys with rate limits and cost limits for students

uAgents

uAgents is a Python library developed by Fetch.ai that allows for the creation of autonomous AI agents. These agents can perform various tasks on a schedule or take action on various events. uAgents are easy to create and manage, and they are connected to a fast-growing network of other uAgents. They are also secure, with cryptographically secured messages and wallets.

griptape

Griptape is a modular Python framework for building AI-powered applications that securely connect to your enterprise data and APIs. It offers developers the ability to maintain control and flexibility at every step. Griptape's core components include Structures (Agents, Pipelines, and Workflows), Tasks, Tools, Memory (Conversation Memory, Task Memory, and Meta Memory), Drivers (Prompt and Embedding Drivers, Vector Store Drivers, Image Generation Drivers, Image Query Drivers, SQL Drivers, Web Scraper Drivers, and Conversation Memory Drivers), Engines (Query Engines, Extraction Engines, Summary Engines, Image Generation Engines, and Image Query Engines), and additional components (Rulesets, Loaders, Artifacts, Chunkers, and Tokenizers). Griptape enables developers to create AI-powered applications with ease and efficiency.