gpt-cli

Command-line interface for ChatGPT, Claude and Bard

Stars: 580

gpt-cli is a command-line interface tool for interacting with various chat language models like ChatGPT, Claude, and others. It supports model customization, usage tracking, keyboard shortcuts, multi-line input, markdown support, predefined messages, and multiple assistants. Users can easily switch between different assistants, define custom assistants, and configure model parameters and API keys in a YAML file for easy customization and management.

README:

Command-line interface for chat LLMs.

- OpenAI

- Anthropic

- Google Gemini

- Cohere

- Other APIs compatible with OpenAI (e.g. Together, OpenRouter, local models with LM Studio)

- Command-Line Interface: Interact with ChatGPT or Claude directly from your terminal.

- Model Customization: Override the default model, temperature, and top_p values for each assistant, giving you fine-grained control over the AI's behavior.

- Usage tracking: Track your API usage with token count and price information.

- Keyboard Shortcuts: Use Ctrl-C, Ctrl-D, and Ctrl-R shortcuts for easier conversation management and input control.

- Multi-Line Input: Enter multi-line mode for more complex queries or conversations.

- Markdown Support: Enable or disable markdown formatting for chat sessions to tailor the output to your preferences.

- Predefined Messages: Set up predefined messages for your custom assistants to establish context or role-play scenarios.

- Multiple Assistants: Easily switch between different assistants, including general, dev, and custom assistants defined in the config file.

- Flexible Configuration: Define your assistants, model parameters, and API key in a YAML configuration file, allowing for easy customization and management.
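As a rough illustration of the usage-tracking feature listed above, the sketch below estimates a request's price from its token counts. The per-token prices and the `estimate_cost` helper are hypothetical placeholders, not gpt-cli's actual pricing table or code.

```python
# Hypothetical per-1K-token prices in USD; placeholders, not real pricing.
PRICE_PER_1K = {"prompt": 0.03, "completion": 0.06}


def estimate_cost(prompt_tokens: int, completion_tokens: int) -> float:
    """Return the estimated cost in USD for one request."""
    return (
        prompt_tokens / 1000 * PRICE_PER_1K["prompt"]
        + completion_tokens / 1000 * PRICE_PER_1K["completion"]
    )


cost = estimate_cost(prompt_tokens=1200, completion_tokens=300)
print(f"tokens: {1200 + 300}, estimated cost: ${cost:.4f}")
```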

This install assumes a Linux/OSX machine with Python and pip available.

pip install gpt-command-line

Install the latest version from source:

pip install git+https://github.com/kharvd/gpt-cli.git

Or install by cloning the repository manually:

git clone https://github.com/kharvd/gpt-cli.git

cd gpt-cli

pip install .

Add the OpenAI API key to your .bashrc file (in the root of your home folder).

This example uses nano, but you can use any text editor.

nano ~/.bashrc

export OPENAI_API_KEY=<your_key_here>

Run the tool

gpt

You can also use a gpt.yml file for configuration. See the Configuration section below.

Make sure to set the OPENAI_API_KEY environment variable to your OpenAI API key (or put it in the ~/.config/gpt-cli/gpt.yml file as described below).

usage: gpt [-h] [--no_markdown] [--model MODEL] [--temperature TEMPERATURE] [--top_p TOP_P]
           [--log_file LOG_FILE] [--log_level {DEBUG,INFO,WARNING,ERROR,CRITICAL}]
           [--prompt PROMPT] [--execute EXECUTE] [--no_stream]
           [{dev,general,bash}]

Run a chat session with ChatGPT. See https://github.com/kharvd/gpt-cli for more information.

positional arguments:
  {dev,general,bash}
                        The name of assistant to use. `general` (default) is a generally helpful
                        assistant, `dev` is a software development assistant with shorter
                        responses. You can specify your own assistants in the config file
                        ~/.config/gpt-cli/gpt.yml. See the README for more information.

optional arguments:
  -h, --help            show this help message and exit
  --no_markdown         Disable markdown formatting in the chat session.
  --model MODEL         The model to use for the chat session. Overrides the default model defined
                        for the assistant.
  --temperature TEMPERATURE
                        The temperature to use for the chat session. Overrides the default
                        temperature defined for the assistant.
  --top_p TOP_P         The top_p to use for the chat session. Overrides the default top_p defined
                        for the assistant.
  --log_file LOG_FILE   The file to write logs to. Supports strftime format codes.
  --log_level {DEBUG,INFO,WARNING,ERROR,CRITICAL}
                        The log level to use
  --prompt PROMPT, -p PROMPT
                        If specified, will not start an interactive chat session and instead will
                        print the response to standard output and exit. May be specified multiple
                        times. Use `-` to read the prompt from standard input. Implies
                        --no_markdown.
  --execute EXECUTE, -e EXECUTE
                        If specified, passes the prompt to the assistant and allows the user to
                        edit the produced shell command before executing it. Implies --no_stream.
                        Use `-` to read the prompt from standard input.
  --no_stream           If specified, will not stream the response to standard output. This is
                        useful if you want to use the response in a script. Ignored when the
                        --prompt option is not specified.
  --no_price            Disable price logging.
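The help text says --log_file supports strftime format codes. The sketch below shows what that kind of expansion looks like with Python's standard `datetime.strftime`. The `expand_log_path` helper and the path template are hypothetical, and the source does not show how gpt-cli itself performs the expansion.

```python
from datetime import datetime


def expand_log_path(template: str, now: datetime) -> str:
    """Expand strftime codes such as %Y, %m and %d in a log file path template."""
    return now.strftime(template)


# Hypothetical template; any strftime codes are expanded the same way.
path = expand_log_path("gpt-%Y-%m-%d.log", datetime(2024, 2, 29, 12, 0))
print(path)  # gpt-2024-02-29.log
```

With a template like this, each day's session would be written to its own log file.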

Type :q or Ctrl-D to exit, :c or Ctrl-C to clear the conversation, :r or Ctrl-R to re-generate the last response.

To enter multi-line mode, enter a backslash \ followed by a new line. Exit the multi-line mode by pressing ESC and then Enter.

You can override the model parameters using --model, --temperature and --top_p arguments at the end of your prompt. For example:

> What is the meaning of life? --model gpt-4 --temperature 2.0

The meaning of life is subjective and can be different for diverse human beings and unique-phil ethics.org/cultuties-/ it that reson/bdstals89im3_jrf334;mvs-bread99ef=g22me

The dev assistant is instructed to be an expert in software development and provide short responses.

$ gpt dev

The bash assistant is instructed to be an expert in bash scripting and provide only bash commands. Use the --execute option to execute the commands. It works best with the gpt-4 model.

gpt bash -e "How do I list files in a directory?"

This will prompt you to edit the command in your $EDITOR before executing it.

You can configure the assistants in the config file ~/.config/gpt-cli/gpt.yml. The file is a YAML file with the following structure (see also config.py):

default_assistant: <assistant_name>
markdown: False
openai_api_key: <openai_api_key>
anthropic_api_key: <anthropic_api_key>
log_file: <path>
log_level: <DEBUG|INFO|WARNING|ERROR|CRITICAL>
assistants:
  <assistant_name>:
    model: <model_name>
    temperature: <temperature>
    top_p: <top_p>
    messages:
      - { role: <role>, content: <message> }
      - ...
  <assistant_name>:
    ...

You can override the parameters for the pre-defined assistants as well.

You can specify the default assistant to use by setting the default_assistant field. If you don't specify it, the default assistant is general. You can also specify the model, temperature and top_p to use for the assistant. If you don't specify them, the default values are used. These parameters can also be overridden by the command-line arguments.
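The precedence described above (built-in defaults, then the config file, then command-line arguments) can be sketched as a layered merge. The default values and the `resolve_params` helper below are hypothetical; gpt-cli's real defaults live in its own code.

```python
def resolve_params(defaults: dict, config_file: dict, cli_args: dict) -> dict:
    """Merge parameter layers; later layers win, unset (None) values are skipped."""
    resolved = dict(defaults)
    for layer in (config_file, cli_args):
        resolved.update({k: v for k, v in layer.items() if v is not None})
    return resolved


# Hypothetical values for illustration only.
defaults = {"model": "model-a", "temperature": 0.5, "top_p": 1.0}
config_file = {"model": "gpt-4", "temperature": 1.0}
cli_args = {"model": None, "temperature": 0.2, "top_p": None}  # only --temperature given

params = resolve_params(defaults, config_file, cli_args)
print(params)
```

Here the model comes from the config file, the temperature from the command line, and top_p falls back to the default.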

Example:

default_assistant: dev
markdown: True
openai_api_key: <openai_api_key>
assistants:
  pirate:
    model: gpt-4
    temperature: 1.0
    messages:
      - { role: system, content: "You are a pirate." }

$ gpt pirate

> Arrrr

Ahoy, matey! What be bringing ye to these here waters? Be it treasure or adventure ye seek, we be sailing the high seas together. Ready yer map and compass, for we have a long voyage ahead!
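The pirate example suggests how predefined messages set context: they can be thought of as a fixed prefix placed ahead of the conversation before it is sent to the model. The sketch below assumes that behavior; `build_request_messages` is a hypothetical helper, not a function from gpt-cli.

```python
def build_request_messages(predefined: list, history: list, user_input: str) -> list:
    """Return predefined messages, then prior turns, then the new user message."""
    return [*predefined, *history, {"role": "user", "content": user_input}]


# Mirrors the `messages` entry of the pirate assistant in the example config.
pirate_messages = [{"role": "system", "content": "You are a pirate."}]

request = build_request_messages(pirate_messages, history=[], user_input="Arrrr")
for message in request:
    print(f"{message['role']}: {message['content']}")
```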

If you are using other models compatible with the OpenAI Python SDK, you can configure them by modifying the openai_base_url setting in the config file or using the OPENAI_BASE_URL environment variable.

Example:

openai_base_url: https://your-custom-api-url.com/v1

Use the oai-compat: prefix for the model name to pass non-GPT model names to the API. For example, to chat with Llama3-70b on https://together.ai, use the following command:

OPENAI_API_KEY=$TOGETHER_API_KEY OPENAI_BASE_URL=https://api.together.xyz/v1 gpt general --model oai-compat:meta-llama/Llama-3-70b-chat-hf

The prefix is stripped before sending the request to the API.
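The prefix handling can be sketched in a few lines. `api_model_name` is a hypothetical helper written for illustration, not gpt-cli's own function; it only shows what stripping the prefix means for the model name that reaches the API.

```python
OAI_COMPAT_PREFIX = "oai-compat:"


def api_model_name(model: str) -> str:
    """Return the model name sent to the API, without the oai-compat: prefix."""
    if model.startswith(OAI_COMPAT_PREFIX):
        return model[len(OAI_COMPAT_PREFIX):]
    return model


print(api_model_name("oai-compat:meta-llama/Llama-3-70b-chat-hf"))
print(api_model_name("gpt-4"))
```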

To use Claude, you should have an API key from Anthropic (currently there is a waitlist for API access). After getting the API key, you can add an environment variable

export ANTHROPIC_API_KEY=<your_key_here>

or a config line in ~/.config/gpt-cli/gpt.yml:

anthropic_api_key: <your_key_here>

Now you should be able to run gpt with --model claude-3-(opus|sonnet|haiku)-<date>.

gpt --model claude-3-opus-20240229

For Google Gemini, set the API key in the same way:

export GOOGLE_API_KEY=<your_key_here>

or

google_api_key: <your_key_here>

For Cohere:

export COHERE_API_KEY=<your_key_here>

or

cohere_api_key: <your_key_here>

Alternative AI tools for gpt-cli

Similar Open Source Tools

termax

Termax is an LLM agent in your terminal that converts natural language to commands. Its features include:

- Personalized Experience: Optimize the command generation with RAG.

- Various LLMs Support: OpenAI GPT, Anthropic Claude, Google Gemini, Mistral AI, and more.

- Shell Extensions: Plugin with popular shells like `zsh`, `bash` and `fish`.

- Cross Platform: Able to run on Windows, macOS, and Linux.

magic-cli

Magic CLI is a command line utility that leverages Large Language Models (LLMs) to enhance command line efficiency. It is inspired by projects like Amazon Q and GitHub Copilot for CLI. The tool allows users to suggest commands, search across command history, and generate commands for specific tasks using local or remote LLM providers. Magic CLI also provides configuration options for LLM selection and response generation. The project is still in early development, so users should expect breaking changes and bugs.

chatgpt-cli

ChatGPT CLI provides a powerful command-line interface for seamless interaction with ChatGPT models via OpenAI and Azure. It features streaming capabilities, extensive configuration options, and supports various modes like streaming, query, and interactive mode. Users can manage thread-based context, sliding window history, and provide custom context from any source. The CLI also offers model and thread listing, advanced configuration options, and supports GPT-4, GPT-3.5-turbo, and Perplexity's models. Installation is available via Homebrew or direct download, and users can configure settings through default values, a config.yaml file, or environment variables.

vector-inference

This repository provides an easy-to-use solution for running inference servers on Slurm-managed computing clusters using vLLM. All scripts in this repository run natively on the Vector Institute cluster environment. Users can deploy models as Slurm jobs, check server status and performance metrics, and shut down models. The repository also supports launching custom models with specific configurations. Additionally, users can send inference requests and set up an SSH tunnel to run inference from a local device.

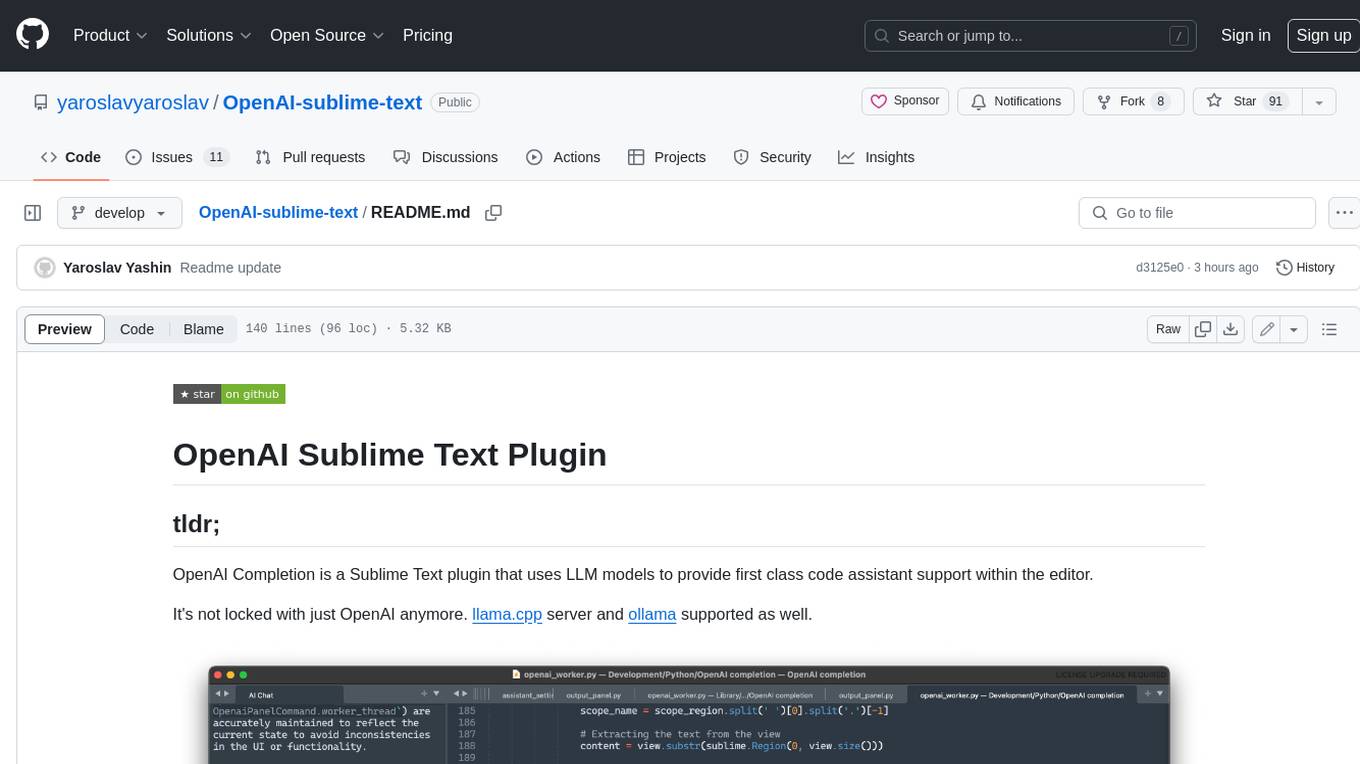

OpenAI-sublime-text

The OpenAI Completion plugin for Sublime Text provides first-class code assistant support within the editor. It utilizes LLM models to manipulate code, engage in chat mode, and perform various tasks. The plugin supports OpenAI, llama.cpp, and ollama models, allowing users to customize their AI assistant experience. It offers separated chat histories and assistant settings for different projects, enabling context-specific interactions. Additionally, the plugin supports Markdown syntax with code language syntax highlighting, server-side streaming for faster response times, and proxy support for secure connections. Users can configure the plugin's settings to set their OpenAI API key, adjust assistant modes, and manage chat history. Overall, the OpenAI Completion plugin enhances the Sublime Text editor with powerful AI capabilities, streamlining coding workflows and fostering collaboration with AI assistants.

safety-tooling

This repository, safety-tooling, is designed to be shared across various AI Safety projects. It provides an LLM API with a common interface for OpenAI, Anthropic, and Google models. The aim is to facilitate collaboration among AI Safety researchers, especially those with limited software engineering backgrounds, by offering a platform for contributing to a larger codebase. The repo can be used as a git submodule for easy collaboration and updates. It also supports pip installation for convenience. The repository includes features for installation, secrets management, linting, formatting, Redis configuration, testing, dependency management, inference, finetuning, API usage tracking, and various utilities for data processing and experimentation.

loz

Loz is a command-line tool that integrates AI capabilities with Unix tools, enabling users to execute system commands and utilize Unix pipes. It supports multiple LLM services like OpenAI API, Microsoft Copilot, and Ollama. Users can run Linux commands based on natural language prompts, enhance Git commit formatting, and interact with the tool in safe mode. Loz can process input from other command-line tools through Unix pipes and automatically generate Git commit messages. It provides features like chat history access, configurable LLM settings, and contribution opportunities.

codespin

CodeSpin.AI is a set of open-source code generation tools that leverage large language models (LLMs) to automate coding tasks. With CodeSpin, you can generate code in various programming languages, including Python, JavaScript, Java, and C++, by providing natural language prompts. CodeSpin offers a range of features to enhance code generation, such as custom templates, inline prompting, and the ability to use ChatGPT as an alternative to API keys. Additionally, CodeSpin provides options for regenerating code, executing code in prompt files, and piping data into the LLM for processing. By utilizing CodeSpin, developers can save time and effort in coding tasks, improve code quality, and explore new possibilities in code generation.

chat-ui

A chat interface using open source models, e.g. OpenAssistant or Llama. It is a SvelteKit app and it powers the HuggingChat app on hf.co/chat.

consult-llm-mcp

Consult LLM MCP is an MCP server that enables users to consult powerful AI models like GPT-5.2, Gemini 3.0 Pro, and DeepSeek Reasoner for complex problem-solving. It supports multi-turn conversations, direct queries with optional file context, git changes inclusion for code review, comprehensive logging with cost estimation, and various CLI modes for Gemini and Codex. The tool is designed to simplify the process of querying AI models for assistance in resolving coding issues and improving code quality.

mods

AI for the command line, built for pipelines. LLM-based AI is really good at interpreting the output of commands and returning the results in CLI-friendly text formats like Markdown. Mods is a simple tool that makes it super easy to use AI on the command line and in your pipelines. Mods works with OpenAI, Groq, Azure OpenAI, and LocalAI. To get started, install Mods and check out some of the examples below. Since Mods has built-in Markdown formatting, you may also want to grab Glow to give the output some _pizzazz_.

fish-ai

fish-ai is a tool that adds AI functionality to Fish shell. It can be integrated with various AI providers like OpenAI, Azure OpenAI, Google, Hugging Face, Mistral, or a self-hosted LLM. Users can transform comments into commands, autocomplete commands, and suggest fixes. The tool allows customization through configuration files and supports switching between contexts. Data privacy is maintained by redacting sensitive information before submission to the AI models. Development features include debug logging, testing, and creating releases.

wcgw

wcgw is a shell and coding agent designed for Claude and ChatGPT. It provides full shell access with no restrictions, desktop control on Claude for screen capture and control, interactive command handling, large file editing, and REPL support. Users can use wcgw to create, execute, and iterate on tasks, such as solving problems with Python, finding code instances, setting up projects, creating web apps, editing large files, and running server commands. Additionally, wcgw supports computer use on Docker containers for desktop control. The tool can be extended with a VS Code extension for pasting context into the Claude app and integrates with ChatGPT for custom GPT interactions.

debug-gym

debug-gym is a text-based interactive debugging framework designed for debugging Python programs. It provides an environment where agents can interact with code repositories, use various tools like pdb and grep to investigate and fix bugs, and propose code patches. The framework supports different LLM backends such as OpenAI, Azure OpenAI, and Anthropic. Users can customize tools, manage environment states, and run agents to debug code effectively. debug-gym is modular, extensible, and suitable for interactive debugging tasks in a text-based environment.

vim-ollama

The 'vim-ollama' plugin for Vim adds Copilot-like code completion support using Ollama as a backend, enabling intelligent AI-based code completion and integrated chat support for code reviews. It does not rely on cloud services, preserving user privacy. The plugin communicates with Ollama via Python scripts for code completion and interactive chat, supporting Vim only. Users can configure LLM models for code completion tasks and interactive conversations, with detailed installation and usage instructions provided in the README.

For similar tasks

aiaio

aiaio (AI-AI-O) is a lightweight, privacy-focused web UI for interacting with AI models. It supports both local and remote LLM deployments through OpenAI-compatible APIs. The tool provides features such as dark/light mode support, local SQLite database for conversation storage, file upload and processing, configurable model parameters through UI, privacy-focused design, responsive design for mobile/desktop, syntax highlighting for code blocks, real-time conversation updates, automatic conversation summarization, customizable system prompts, WebSocket support for real-time updates, Docker support for deployment, multiple API endpoint support, and multiple system prompt support. Users can configure model parameters and API settings through the UI, handle file uploads, manage conversations, and use keyboard shortcuts for efficient interaction. The tool uses SQLite for storage with tables for conversations, messages, attachments, and settings. Contributions to the project are welcome under the Apache License 2.0.

uzu-swift

Swift package for uzu, a high-performance inference engine for AI models on Apple Silicon. Deploy AI directly in your app with zero latency, full data privacy, and no inference costs. Key features include a simple, high-level API, specialized configurations for performance boosts, broad model support, and an observable model manager. Easily set up projects, obtain an API key, choose a model, and run it with corresponding identifiers. Examples include chat, speedup with speculative decoding, chat with dynamic context, chat with static context, summarization, classification, cloud, and structured output. Troubleshooting available via Discord or email. Licensed under MIT.

BricksLLM

BricksLLM is a cloud native AI gateway written in Go. Currently, it provides native support for OpenAI, Anthropic, Azure OpenAI and vLLM. BricksLLM aims to provide enterprise level infrastructure that can power any LLM production use case. Here are some use cases for BricksLLM:

- Set LLM usage limits for users on different pricing tiers

- Track LLM usage on a per user and per organization basis

- Block or redact requests containing PIIs

- Improve LLM reliability with failovers, retries and caching

- Distribute API keys with rate limits and cost limits for internal development/production use cases

- Distribute API keys with rate limits and cost limits for students

safety-tooling

This repository, safety-tooling, is designed to be shared across various AI Safety projects. It provides an LLM API with a common interface for OpenAI, Anthropic, and Google models. The aim is to facilitate collaboration among AI Safety researchers, especially those with limited software engineering backgrounds, by offering a platform for contributing to a larger codebase. The repo can be used as a git submodule for easy collaboration and updates. It also supports pip installation for convenience. The repository includes features for installation, secrets management, linting, formatting, Redis configuration, testing, dependency management, inference, finetuning, API usage tracking, and various utilities for data processing and experimentation.

For similar jobs

sweep

Sweep is an AI junior developer that turns bugs and feature requests into code changes. It automatically handles developer experience improvements like adding type hints and improving test coverage.

teams-ai

The Teams AI Library is a software development kit (SDK) that helps developers create bots that can interact with Teams and Microsoft 365 applications. It is built on top of the Bot Framework SDK and simplifies the process of developing bots that interact with Teams' artificial intelligence capabilities. The SDK is available for JavaScript/TypeScript, .NET, and Python.

ai-guide

This guide is dedicated to Large Language Models (LLMs) that you can run on your home computer. It assumes your PC is a lower-end, non-gaming setup.

classifai

Supercharge WordPress Content Workflows and Engagement with Artificial Intelligence. Tap into leading cloud-based services like OpenAI, Microsoft Azure AI, Google Gemini and IBM Watson to augment your WordPress-powered websites. Publish content faster while improving SEO performance and increasing audience engagement. ClassifAI integrates Artificial Intelligence and Machine Learning technologies to lighten your workload and eliminate tedious tasks, giving you more time to create original content that matters.

chatbot-ui

Chatbot UI is an open-source AI chat app that allows users to create and deploy their own AI chatbots. It is easy to use and can be customized to fit any need. Chatbot UI is perfect for businesses, developers, and anyone who wants to create a chatbot.

BricksLLM

BricksLLM is a cloud native AI gateway written in Go. Currently, it provides native support for OpenAI, Anthropic, Azure OpenAI and vLLM. BricksLLM aims to provide enterprise level infrastructure that can power any LLM production use case. Here are some use cases for BricksLLM:

- Set LLM usage limits for users on different pricing tiers

- Track LLM usage on a per user and per organization basis

- Block or redact requests containing PIIs

- Improve LLM reliability with failovers, retries and caching

- Distribute API keys with rate limits and cost limits for internal development/production use cases

- Distribute API keys with rate limits and cost limits for students

uAgents

uAgents is a Python library developed by Fetch.ai that allows for the creation of autonomous AI agents. These agents can perform various tasks on a schedule or take action on various events. uAgents are easy to create and manage, and they are connected to a fast-growing network of other uAgents. They are also secure, with cryptographically secured messages and wallets.

griptape

Griptape is a modular Python framework for building AI-powered applications that securely connect to your enterprise data and APIs. It offers developers the ability to maintain control and flexibility at every step. Griptape's core components include Structures (Agents, Pipelines, and Workflows), Tasks, Tools, Memory (Conversation Memory, Task Memory, and Meta Memory), Drivers (Prompt and Embedding Drivers, Vector Store Drivers, Image Generation Drivers, Image Query Drivers, SQL Drivers, Web Scraper Drivers, and Conversation Memory Drivers), Engines (Query Engines, Extraction Engines, Summary Engines, Image Generation Engines, and Image Query Engines), and additional components (Rulesets, Loaders, Artifacts, Chunkers, and Tokenizers). Griptape enables developers to create AI-powered applications with ease and efficiency.