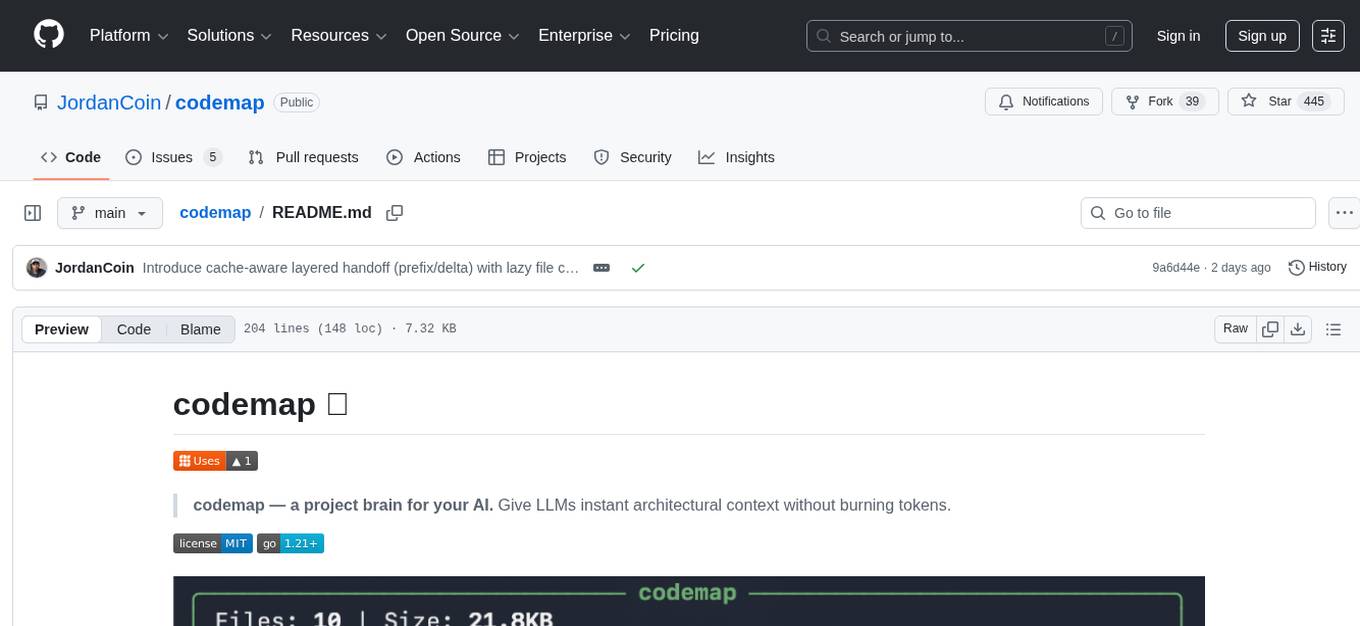

codemap

A project brain for your AI. Give LLMs instant architectural context without burning tokens.

Stars: 445

Codemap is a "project brain" tool that gives LLMs instant architectural context about a codebase without consuming excessive tokens. It offers tree visualization, file filtering, dependency flow analysis, diff views, and remote repository support. It integrates with Claude through hooks that supply context automatically at session start, and its handoff artifacts let you switch between agents without re-briefing. Dependency analysis is powered by ast-grep and covers 18 languages.

README:


    # macOS/Linux
    brew tap JordanCoin/tap && brew install codemap

    # Windows
    scoop bucket add codemap https://github.com/JordanCoin/scoop-codemap
    scoop install codemap

Other options: Releases | go install | Build from source

    codemap .                                   # Project tree
    codemap --only swift .                      # Just Swift files
    codemap --exclude .xcassets,Fonts,.png .    # Hide assets
    codemap --depth 2 .                         # Limit depth
    codemap --diff                              # What changed vs main
    codemap --deps .                            # Dependency flow
    codemap handoff .                           # Save cross-agent handoff summary
    codemap github.com/user/repo                # Remote GitHub repo

| Flag | Description |
|---|---|
| `--depth, -d <n>` | Limit tree depth (0 = unlimited) |
| `--only <exts>` | Only show files with these extensions |
| `--exclude <patterns>` | Exclude files matching patterns |
| `--diff` | Show files changed vs main branch |
| `--ref <branch>` | Branch to compare against (with `--diff`) |
| `--deps` | Dependency flow mode |
| `--importers <file>` | Check who imports a file |
| `--skyline` | City skyline visualization |
| `--animate` | Animate the skyline (use with `--skyline`) |
| `--json` | Output JSON |

Note: Flags must come before the path/URL:

    codemap --json github.com/user/repo

Smart pattern matching (no quotes needed):

- `.png` → any `.png` file
- `Fonts` → any `/Fonts/` directory
- `*Test*` → glob pattern
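
The README does not spell out the exact matching rules, so the following is only a minimal sketch of how the three pattern kinds above could be told apart. The function name `matches_exclude` and the classification rules (wildcard means glob, leading dot means extension, anything else means directory name) are assumptions for illustration, not codemap's actual implementation.

```python
from fnmatch import fnmatchcase
from pathlib import PurePosixPath


def matches_exclude(path: str, pattern: str) -> bool:
    """Return True if `path` would be hidden by `pattern` (assumed semantics)."""
    parts = PurePosixPath(path).parts
    if any(ch in pattern for ch in "*?["):
        # Glob pattern such as "*Test*": test it against every path component.
        return any(fnmatchcase(part, pattern) for part in parts)
    if pattern.startswith("."):
        # Extension-style pattern such as ".png" or ".xcassets":
        # match any file or directory whose name ends with it.
        return any(part.endswith(pattern) for part in parts)
    # Bare name such as "Fonts": match a directory component exactly.
    return pattern in parts[:-1]
```

Under these assumptions, `matches_exclude("res/Fonts/Inter.ttf", "Fonts")` is true while `matches_exclude("src/Fonts.swift", "Fonts")` is false, because only directory components are compared for bare names.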

See what you're working on:

    codemap --diff
    codemap --diff --ref develop

    ╭─────────────────────────── myproject ──────────────────────────╮
    │ Changed: 4 files | +156 -23 lines vs main │
    ╰────────────────────────────────────────────────────────────────╯
    ├── api/
    │   └── (new) auth.go ✎ handlers.go (+45 -12)
    └── ✎ main.go (+29 -3)

    ⚠ handlers.go is used by 3 other files

See how your code connects:

    codemap --deps .

    ╭──────────────────────────────────────────────────────────────╮
    │ MyApp - Dependency Flow │
    ├──────────────────────────────────────────────────────────────┤
    │ Go: chi, zap, testify │
    ╰──────────────────────────────────────────────────────────────╯

    Backend ════════════════════════════════════════════════════
    server ───▶ validate ───▶ rules, config
    api ───▶ handlers, middleware

    HUBS: config (12←), api (8←), utils (5←)
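
The `HUBS` line reads as a ranking of modules by how many other modules import them. As a rough sketch of that idea (not codemap's actual algorithm, which the README does not describe), hubs can be found by counting incoming edges in an import graph. The function `find_hubs` and the sample `graph` below are hypothetical.

```python
from collections import Counter


def find_hubs(imports: dict[str, list[str]], top: int = 3) -> list[tuple[str, int]]:
    """Rank modules by how many distinct modules import them."""
    incoming = Counter()
    for importer, targets in imports.items():
        for target in set(targets):  # count each importer once per target
            if target != importer:   # ignore self-imports
                incoming[target] += 1
    return incoming.most_common(top)


# Hypothetical import graph: importer -> modules it imports.
graph = {
    "server": ["validate"],
    "validate": ["rules", "config"],
    "api": ["handlers", "middleware", "config"],
    "handlers": ["config"],
}
print(find_hubs(graph, top=1))  # → [('config', 3)]
```

A module with many importers is a risky place to make changes, which is presumably why the diff view warns when a changed file is used by other files.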

    codemap --skyline --animate

Analyze any public GitHub or GitLab repo without cloning it yourself:

    codemap github.com/anthropics/anthropic-cookbook
    codemap https://github.com/user/repo
    codemap gitlab.com/user/repo

Uses a shallow clone to a temp directory (fast, no history, auto-cleanup). If you already have the repo cloned locally, codemap will use your local copy instead.

18 languages for dependency analysis: Go, Python, JavaScript, TypeScript, Rust, Ruby, C, C++, Java, Swift, Kotlin, C#, PHP, Bash, Lua, Scala, Elixir, Solidity

Powered by ast-grep. Install via `brew install ast-grep` for `--deps` mode.

Hooks (Recommended) — Automatic context at session start, before/after edits, and more. → See docs/HOOKS.md

MCP Server — Deep integration with project analysis + handoff tools. → See docs/MCP.md

codemap now supports a shared handoff artifact so you can switch between agents (Claude, Codex, MCP clients) without re-briefing.

    codemap handoff .                  # Build + save layered handoff artifacts
    codemap handoff --latest .         # Read latest saved artifact
    codemap handoff --json .           # Machine-readable handoff payload
    codemap handoff --since 2h .       # Limit timeline lookback window
    codemap handoff --prefix .         # Stable prefix layer only
    codemap handoff --delta .          # Recent delta layer only
    codemap handoff --detail a.go .    # Lazy-load full detail for one changed file
    codemap handoff --no-save .        # Build/read without writing artifacts

What it captures (layered for cache reuse):

- `prefix` (stable): hub summaries + repo file-count context
- `delta` (dynamic): changed file stubs (`path`, `hash`, `status`, `size`), risk files, recent events, next steps
- deterministic hashes: `prefix_hash`, `delta_hash`, `combined_hash`
- cache metrics: reuse ratio + unchanged bytes vs previous handoff
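
The README names the three hashes but not how they are computed. One common way to get deterministic digests for layered payloads is to hash a canonical JSON encoding of each layer, sketched below. The use of SHA-256, the canonicalization, the way `combined_hash` is derived, and the sample payload fields are all assumptions for illustration.

```python
import hashlib
import json


def layer_hash(layer: dict) -> str:
    """Hash a layer via canonical JSON so equal content gives equal digests."""
    canonical = json.dumps(layer, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()


def handoff_hashes(prefix: dict, delta: dict) -> dict:
    """Compute per-layer hashes plus a combined hash over both digests."""
    prefix_hash = layer_hash(prefix)
    delta_hash = layer_hash(delta)
    combined = hashlib.sha256((prefix_hash + delta_hash).encode("ascii")).hexdigest()
    return {
        "prefix_hash": prefix_hash,
        "delta_hash": delta_hash,
        "combined_hash": combined,
    }


# Hypothetical payloads: the stable layer stays the same while the delta changes.
prefix = {"hubs": ["config", "api"], "file_count": 42}
first = handoff_hashes(prefix, {"changed": ["main.go"]})
second = handoff_hashes(prefix, {"changed": ["main.go", "api/auth.go"]})
```

Here `first["prefix_hash"] == second["prefix_hash"]` while the delta and combined hashes differ. That illustrates why a stable prefix helps cache reuse: a consumer that has already seen the prefix only needs to process the delta.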

Artifacts written:

- `.codemap/handoff.latest.json` (full artifact)
- `.codemap/handoff.prefix.json` (stable prefix snapshot)
- `.codemap/handoff.delta.json` (dynamic delta snapshot)
- `.codemap/handoff.metrics.log` (append-only metrics stream, one JSON line per save)
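
Because the metrics log is described as one JSON line per save, it can be read with any JSON Lines parser. The sketch below makes no assumption about which fields each record contains. The helper name `read_metrics` is hypothetical, and skipping unparseable lines is a design choice of this example rather than documented codemap behavior.

```python
import json
from pathlib import Path


def read_metrics(log_path: Path) -> list[dict]:
    """Parse an append-only JSON Lines file, skipping blank or invalid lines."""
    records = []
    if not log_path.exists():
        return records
    with log_path.open(encoding="utf-8") as fh:
        for line in fh:
            line = line.strip()
            if not line:
                continue
            try:
                records.append(json.loads(line))
            except json.JSONDecodeError:
                # A partially written last line is possible if a save is in progress.
                continue
    return records
```

A typical call would be `read_metrics(Path(".codemap/handoff.metrics.log"))`, which returns an empty list if no handoff has been saved yet.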

Save defaults:

- CLI saves by default; use `--no-save` to make generation read-only.
- MCP does not save by default; set `save=true` to persist artifacts.

Compatibility note:

- legacy top-level fields (`changed_files`, `risk_files`, etc.) are still included for compatibility and will be removed in a future schema version after migration.

Why this matters:

- default transport is compact stubs (low context cost)
- full per-file context is lazy-loaded only when needed (`--detail` / `file=...`)
- output is deterministic and budgeted to reduce context churn across agent turns

Hook integration:

- `session-stop` writes `.codemap/handoff.latest.json`
- `session-start` shows a compact recent handoff summary (24h freshness window)
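
The README does not say how the 24h freshness window is evaluated. A simple way to approximate it from the outside is to compare the artifact's modification time against the current time, as sketched below. Using file mtime (rather than a timestamp stored inside the artifact) and the helper name `is_fresh` are assumptions of this example.

```python
import time
from pathlib import Path
from typing import Optional

FRESHNESS_SECONDS = 24 * 60 * 60  # the 24h window mentioned above


def is_fresh(artifact: Path, now: Optional[float] = None) -> bool:
    """Return True if `artifact` exists and was modified within the window."""
    if not artifact.exists():
        return False
    now = time.time() if now is None else now
    return now - artifact.stat().st_mtime <= FRESHNESS_SECONDS
```

For example, `is_fresh(Path(".codemap/handoff.latest.json"))` could gate whether a custom script bothers to show the previous handoff at all.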

CLAUDE.md: add it to your project root to teach Claude when to run codemap.

    cp /path/to/codemap/CLAUDE.md your-project/

- [x] Diff mode, Skyline mode, Dependency flow
- [x] Tree depth limiting (`--depth`)
- [x] File filtering (`--only`, `--exclude`)
- [x] Claude Code hooks & MCP server
- [x] Cross-agent handoff artifact (`.codemap/handoff.latest.json`)
- [x] Remote repo support (GitHub, GitLab)
- [ ] Enhanced analysis (entry points, key types)

1. Fork → 2. Branch → 3. Commit → 4. PR

MIT

