memorix

Cross-Agent Memory Bridge: persistent memory for AI coding agents across Cursor, Windsurf, Claude Code, Codex, Copilot, and Kiro via MCP. Never re-explain your project again.

Stars: 78

Memorix is a cross-agent memory bridge tool designed to prevent AI assistants from forgetting important information during chats or when switching between different agents. It allows users to store and retrieve architecture decisions, bug fixes, technical explanations, code changes, insights, design choices, and more across various agents seamlessly. With Memorix, users can avoid re-explaining concepts, prevent context loss when switching agents, collaborate effectively, sync workspaces, generate project skills, and utilize a knowledge graph for intelligent retrieval. The tool offers 24 MCP tools for smart memory management, cross-agent workspace sync, a knowledge graph compatible with MCP Official Memory Server, various observation types, a visual dashboard, auto-memory hooks, and optional vector search for semantic similarity. Memorix ensures project isolation, local data storage, and zero cross-contamination, making it a valuable tool for enhancing productivity and knowledge retention in AI-driven workflows.

README:

Cross-Agent Memory Bridge — Your AI never forgets again

Your AI assistant forgets everything when you start a new chat. You spend 10 minutes re-explaining your architecture. Again. And if you switch from Cursor to Claude Code? Everything is gone. Again.

| Without Memorix | With Memorix |
|---|---|
| Session 2: "What's our tech stack?" | Session 2: "I remember: Next.js with Prisma and tRPC. What should we build next?" |
| Switch IDE: All context lost | Switch IDE: Context follows you instantly |
| New team member's AI: Starts from zero | New team member's AI: Already knows the codebase |
| After 50 tool calls: Context explodes, restart needed | After restart: Picks up right where you left off |
| MCP configs: Copy-paste between 7 IDEs manually | MCP configs: One command syncs everything |

Memorix solves all of this. One MCP server. Eight agents. Zero context loss.

npm install -g memorix

⚠️ Do NOT use `npx`: it downloads the package every time, which causes the MCP server initialization to time out (60s limit). A global install starts instantly.

Claude Code

Run in terminal:

claude mcp add memorix -- memorix serve

Or manually add to ~/.claude.json:

    {
      "mcpServers": {
        "memorix": {
          "command": "memorix",
          "args": ["serve"]
        }
      }
    }

Cursor

Add to .cursor/mcp.json in your project:

    {
      "mcpServers": {
        "memorix": {
          "command": "memorix",
          "args": ["serve"]
        }
      }
    }

Windsurf

Add to Windsurf MCP settings (~/.codeium/windsurf/mcp_config.json):

    {
      "mcpServers": {
        "memorix": {
          "command": "memorix",
          "args": ["serve"]
        }
      }
    }

VS Code Copilot / Codex / Kiro

Same format — add to the agent's MCP config file:

    {
      "mcpServers": {
        "memorix": {
          "command": "memorix",
          "args": ["serve"]
        }
      }
    }

Antigravity / Gemini CLI

Add to .gemini/settings.json (project) or ~/.gemini/settings.json (global):

    {
      "mcpServers": {
        "memorix": {
          "command": "memorix",
          "args": ["serve"]
        }
      }
    }

Antigravity IDE only: Antigravity uses its own install path as CWD, so you must also set MEMORIX_PROJECT_ROOT:

    {
      "mcpServers": {
        "memorix": {
          "command": "memorix",
          "args": ["serve"],
          "env": {
            "MEMORIX_PROJECT_ROOT": "E:/your/project/path"
          }
        }
      }
    }

Gemini CLI reads MCP config from the same path. Hooks are automatically installed to .gemini/settings.json.
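Every agent snippet above shares one shape, with the Antigravity variant adding an `env` entry. As a minimal sketch, the helper below builds that object in TypeScript. `buildMemorixConfig` is a hypothetical name introduced for illustration and is not part of Memorix.

```typescript
// Hypothetical helper for illustration only; Memorix does not ship this function.
interface McpServerEntry {
  command: string;
  args: string[];
  env?: Record<string, string>;
}

interface McpConfig {
  mcpServers: Record<string, McpServerEntry>;
}

// Build the config shape shown in the snippets above. Pass projectRoot only
// when the agent does not launch the server inside your project directory
// (the Antigravity case described above).
function buildMemorixConfig(projectRoot?: string): McpConfig {
  const entry: McpServerEntry = { command: "memorix", args: ["serve"] };
  if (projectRoot !== undefined) {
    entry.env = { MEMORIX_PROJECT_ROOT: projectRoot };
  }
  return { mcpServers: { memorix: entry } };
}

// Serialized forms, ready to paste into an agent's MCP config file.
const defaultConfigJson = JSON.stringify(buildMemorixConfig(), null, 2);
const antigravityConfigJson = JSON.stringify(
  buildMemorixConfig("E:/your/project/path"),
  null,
  2
);
```

Generating the file from one function keeps the server entry identical across agents, so only the target path differs.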

No API keys. No cloud accounts. No dependencies. Works with any directory (git repo or not).

📖 Full setup guide for all 8 agents → docs/SETUP.md

🔧 Troubleshooting

| Problem | Solution |
|---|---|
| MCP server initialization timed out after 60 seconds | You're using npx. Run `npm install -g memorix` and change config to `"command": "memorix"` |
| Cannot start Memorix: no valid project detected | Your CWD is a system directory (home, Desktop, etc). Open a real project folder or set `MEMORIX_PROJECT_ROOT` |
| memorix: command not found | Run `npm install -g memorix` first. Verify with `memorix --version` |
| Parameter type errors (GLM/non-Anthropic models) | Update to v0.9.1+: `npm install -g memorix@latest` |

Monday morning — You and Cursor discuss auth architecture:

You: "Let's use JWT with refresh tokens, 15-minute expiry"

→ Memorix auto-stores this as a 🟤 decision

Tuesday — New Cursor session:

You: "Add the login endpoint"

→ AI calls memorix_search("auth") → finds Monday's decision

→ "Got it, I'll implement JWT with refresh tokens and a 15-minute expiry, as we decided"

→ Zero re-explaining!
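Under MCP, a step like `memorix_search("auth")` travels as a JSON-RPC 2.0 `tools/call` request. The sketch below builds such a request. The argument name `query` is an assumption; the real parameter names come from the tool's published input schema.

```typescript
// JSON-RPC 2.0 envelope that MCP uses for tool invocations.
interface ToolCallRequest {
  jsonrpc: "2.0";
  id: number;
  method: "tools/call";
  params: { name: string; arguments: Record<string, unknown> };
}

// Assumption: the search tool accepts a `query` argument. Check the schema
// returned by `tools/list` for the actual parameter names.
function buildSearchRequest(id: number, query: string): ToolCallRequest {
  return {
    jsonrpc: "2.0",
    id,
    method: "tools/call",
    params: { name: "memorix_search", arguments: { query } },
  };
}

// What the agent would write to the server's stdin for the Tuesday session.
const searchRequestWire = JSON.stringify(buildSearchRequest(1, "auth"));
```

The agent normally constructs this for you; the sketch only shows what crosses the wire.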

You use Windsurf for backend, Claude Code for reviews:

Windsurf: You fix a tricky race condition in the payment module

→ Memorix stores it as a 🟡 problem-solution with the fix details

Claude Code: "Review the payment module"

→ AI calls memorix_search("payment") → finds the race condition fix

→ "I see there was a recent race condition fix. Let me verify it's correct..."

→ Knowledge transfers seamlessly between agents!

Week 1: You hit a painful Windows path separator bug

→ Memorix stores it as a 🔴 gotcha: "Use path.join(), never string concat"

Week 3: AI is about to write `baseDir + '/' + filename`

→ Session-start hook injected the gotcha into context

→ AI writes `path.join(baseDir, filename)` instead

→ Bug prevented before it happened!

You have 12 MCP servers configured in Cursor.

Now you want to try Kiro.

You: "Sync my workspace to Kiro"

→ memorix_workspace_sync scans Cursor's MCP configs

→ Generates Kiro-compatible .kiro/settings/mcp.json

→ Also syncs your rules, skills, and workflows

→ Kiro is ready in seconds, not hours!

After 2 weeks of development, you have 50+ observations:

- 8 gotchas about Windows path issues

- 5 decisions about the auth module

- 3 problem-solutions for database migrations

You: "Generate project skills"

→ memorix_skills clusters observations by entity

→ Auto-generates SKILL.md files:

- "auth-module-guide.md" — JWT setup, refresh flow, common pitfalls

- "database-migrations.md" — Prisma patterns, rollback strategies

→ Syncs skills to any agent: Cursor, Claude Code, Kiro...

→ New team members' AI instantly knows your project's patterns!

Morning — Start a new session in Windsurf:

→ memorix_session_start auto-injects:

📋 Previous Session: "Implemented JWT auth middleware"

🔴 JWT tokens expire silently (gotcha)

🟤 Use Docker for deployment (decision)

→ AI instantly knows what you did yesterday!

Evening — End the session:

→ memorix_session_end saves structured summary

→ Next session (any agent!) gets this context automatically

You update your architecture decision 3 times over a week:

Day 1: memorix_store(topicKey="architecture/auth-model", ...)

→ Creates observation #42 (rev 1)

Day 3: memorix_store(topicKey="architecture/auth-model", ...)

→ Updates #42 in-place (rev 2) — NOT a new #43!

Day 5: memorix_store(topicKey="architecture/auth-model", ...)

→ Updates #42 again (rev 3)

Result: 1 observation with latest content, not 3 duplicates!
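The in-place update above is an upsert keyed on `topicKey`. A minimal in-memory sketch of that behavior follows. The class and field names are illustrative assumptions, not Memorix's actual storage code.

```typescript
interface TopicObservation {
  id: number;
  topicKey: string | undefined;
  content: string;
  revision: number;
}

// In-memory stand-in for the observation store (illustrative only).
class TopicKeyStore {
  private nextId = 1;
  private byId = new Map<number, TopicObservation>();
  private idByTopic = new Map<string, number>();

  // Store content. If topicKey was seen before, update that observation
  // in place and bump its revision instead of creating a new one.
  store(content: string, topicKey?: string): TopicObservation {
    const existingId = topicKey !== undefined ? this.idByTopic.get(topicKey) : undefined;
    if (existingId !== undefined) {
      const existing = this.byId.get(existingId);
      if (existing !== undefined) {
        existing.content = content;
        existing.revision += 1;
        return existing;
      }
    }
    const created: TopicObservation = { id: this.nextId++, topicKey, content, revision: 1 };
    this.byId.set(created.id, created);
    if (topicKey !== undefined) this.idByTopic.set(topicKey, created.id);
    return created;
  }

  count(): number {
    return this.byId.size;
  }
}

// Three stores under one key leave a single observation at revision 3.
const topicStore = new TopicKeyStore();
topicStore.store("Session cookies", "architecture/auth-model");
topicStore.store("JWT, no refresh", "architecture/auth-model");
const latestAuthModel = topicStore.store("JWT with refresh tokens", "architecture/auth-model");
```

Observations stored without a `topicKey` always get a fresh ID in this sketch, so only explicitly keyed topics are deduplicated.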

| What You Say | What Memorix Does |
|---|---|
| "Remember this architecture decision" | `memorix_store`: classifies as 🟤 decision, extracts entities, creates graph relations, auto-associates session |
| "What did we decide about auth?" | `memorix_search` → `memorix_detail`: 3-layer progressive disclosure, ~10x token savings |
| "What auth decisions last week?" | `memorix_search` with `since`/`until`: temporal queries with date range filtering |
| "What happened around that bug fix?" | `memorix_timeline`: shows chronological context before/after |
| "Show me the knowledge graph" | `memorix_dashboard`: opens interactive web UI with D3.js graph + sessions panel |
| "Which memories are getting stale?" | `memorix_retention`: exponential decay scores, identifies archive candidates |
| "Start a new session" | `memorix_session_start`: tracks session lifecycle, auto-injects previous session summaries + key memories |
| "End this session" | `memorix_session_end`: saves structured summary (Goal/Discoveries/Accomplished/Files) for next session |
| "What did we do last session?" | `memorix_session_context`: retrieves session history and key observations |
| "Suggest a topic key for this" | `memorix_suggest_topic_key`: generates stable keys for deduplication (e.g. `architecture/auth-model`) |
| "Clean up duplicate memories" | `memorix_consolidate`: finds and merges similar observations by text similarity, preserving all facts |
| "Export this project's memories" | `memorix_export`: JSON (importable) or Markdown (human-readable for PRs/docs) |
| "Import memories from teammate" | `memorix_import`: restores from JSON export, re-assigns IDs, deduplicates by topicKey |
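The search-then-detail flow and the `since`/`until` filter in the table can be pictured as two small functions: one returns compact hits, the other loads full records only for chosen IDs. This is a sketch under stated assumptions. The field names are hypothetical, and a plain substring match stands in for the real ranking.

```typescript
interface StoredObservation {
  id: number;
  title: string;
  createdAt: string; // ISO 8601 timestamp
  narrative: string; // full text, potentially long
}

interface SearchHit {
  id: number;
  title: string;
  createdAt: string;
}

interface SearchOptions {
  query: string;
  since?: string;
  until?: string;
}

// First layer: return compact hits only (no narrative), limited to the
// optional date range. Substring matching is a stand-in for real ranking.
function searchIndex(all: StoredObservation[], opts: SearchOptions): SearchHit[] {
  const from = opts.since !== undefined ? Date.parse(opts.since) : -Infinity;
  const to = opts.until !== undefined ? Date.parse(opts.until) : Infinity;
  const needle = opts.query.toLowerCase();
  return all
    .filter((o) => {
      const t = Date.parse(o.createdAt);
      const text = (o.title + " " + o.narrative).toLowerCase();
      return t >= from && t <= to && text.indexOf(needle) !== -1;
    })
    .map((o) => ({ id: o.id, title: o.title, createdAt: o.createdAt }));
}

// Later layer: load full records only for the IDs the agent picked.
function fetchDetails(all: StoredObservation[], ids: number[]): StoredObservation[] {
  return all.filter((o) => ids.indexOf(o.id) !== -1);
}
```

The token savings come from the first call returning titles only; the long narratives are paid for only when an ID is actually requested.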

| What You Say | What Memorix Does |
|---|---|
| "Sync my MCP servers to Kiro" | `memorix_workspace_sync`: migrates configs, merges (never overwrites) |
| "Check my agent rules" | `memorix_rules_sync`: scans 8 agents, deduplicates, detects conflicts |
| "Generate rules for Cursor" | `memorix_rules_sync`: cross-format conversion (.mdc ↔ CLAUDE.md ↔ .kiro/steering/) |
| "Generate project skills" | `memorix_skills`: creates SKILL.md from observation patterns |
| "Inject the auth skill" | `memorix_skills`: returns skill content directly into agent context |
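One reading of "merges, never overwrites" is that servers already configured in the target agent win, and only missing ones are copied over. The sketch below implements that reading. It is an assumption about the behavior, and the type and function names are hypothetical.

```typescript
interface SyncServerEntry {
  command: string;
  args: string[];
  env?: Record<string, string>;
}

type SyncServerMap = Record<string, SyncServerEntry>;

// Copy servers from source into a copy of target. Names already present in
// the target are left untouched and reported back as skipped.
function mergeServers(
  target: SyncServerMap,
  source: SyncServerMap
): { merged: SyncServerMap; skipped: string[] } {
  const merged: SyncServerMap = { ...target };
  const skipped: string[] = [];
  for (const serverName of Object.keys(source)) {
    if (Object.prototype.hasOwnProperty.call(merged, serverName)) {
      skipped.push(serverName);
    } else {
      const entry = source[serverName];
      if (entry !== undefined) merged[serverName] = entry;
    }
  }
  return { merged, skipped };
}

// Example: "example-server" is a made-up entry standing in for any other
// MCP server configured in the source agent.
const sourceAgentServers: SyncServerMap = {
  memorix: { command: "memorix", args: ["serve"] },
  "example-server": { command: "example-server", args: [] },
};
const targetAgentServers: SyncServerMap = {
  memorix: { command: "memorix", args: ["serve"], env: { MEMORIX_PROJECT_ROOT: "E:/your/project/path" } },
};
const syncResult = mergeServers(targetAgentServers, sourceAgentServers);
```

Here the target keeps its own `memorix` entry (including its `env`), gains `example-server`, and the caller learns which names were skipped.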

| Tool | What It Does |
|---|---|
| `create_entities` | Build your project's knowledge graph |
| `create_relations` | Connect entities with typed edges (causes, fixes, depends_on) |
| `add_observations` | Attach observations to entities |
| `search_nodes` / `open_nodes` | Query the graph |
| `read_graph` | Export full graph for visualization |

Drop-in compatible with MCP Official Memory Server — same API, more features.
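To make the graph tools concrete, here is a minimal in-memory sketch of the data model they operate on. The entity and relation shapes follow the ones used by the MCP reference memory server (`name`, `entityType`, `observations`; `from`, `to`, `relationType`). The class itself is illustrative and says nothing about how Memorix stores the graph.

```typescript
interface GraphEntity {
  name: string;
  entityType: string;
  observations: string[];
}

interface GraphRelation {
  from: string;
  to: string;
  relationType: string;
}

class KnowledgeGraphSketch {
  private entities = new Map<string, GraphEntity>();
  private relations: GraphRelation[] = [];

  // Add entities, ignoring names that already exist.
  createEntities(items: GraphEntity[]): void {
    for (const item of items) {
      if (!this.entities.has(item.name)) {
        this.entities.set(item.name, { ...item, observations: [...item.observations] });
      }
    }
  }

  // Add typed edges, skipping exact duplicates.
  createRelations(items: GraphRelation[]): void {
    for (const r of items) {
      const duplicate = this.relations.some(
        (x) => x.from === r.from && x.to === r.to && x.relationType === r.relationType
      );
      if (!duplicate) this.relations.push({ ...r });
    }
  }

  // Attach new observation strings to an existing entity.
  addObservations(entityName: string, contents: string[]): void {
    const entity = this.entities.get(entityName);
    if (entity === undefined) throw new Error("Unknown entity: " + entityName);
    for (const c of contents) {
      if (entity.observations.indexOf(c) === -1) entity.observations.push(c);
    }
  }

  // Case-insensitive substring match over name, type, and observations.
  searchNodes(query: string): GraphEntity[] {
    const q = query.toLowerCase();
    const out: GraphEntity[] = [];
    this.entities.forEach((e) => {
      const haystack = [e.name, e.entityType, ...e.observations].join(" ").toLowerCase();
      if (haystack.indexOf(q) !== -1) out.push(e);
    });
    return out;
  }

  // Return the whole graph, e.g. for visualization.
  readGraph(): { entities: GraphEntity[]; relations: GraphRelation[] } {
    const entities: GraphEntity[] = [];
    this.entities.forEach((e) => entities.push(e));
    return { entities, relations: [...this.relations] };
  }
}
```

A typical sequence would create `auth-module` and `payment-module` entities, link them with a `depends_on` relation, and then attach observations as the session produces them.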

Every memory is classified for intelligent retrieval:

| Icon | Type | When To Use |
|---|---|---|
| 🎯 | session-request | Original task/goal for this session |
| 🔴 | gotcha | Critical pitfall: "Never do X because Y" |
| 🟡 | problem-solution | Bug fix with root cause and solution |
| 🔵 | how-it-works | Technical explanation of a system |
| 🟢 | what-changed | Code/config change record |
| 🟣 | discovery | New insight or finding |
| 🟠 | why-it-exists | Rationale behind a design choice |
| 🟤 | decision | Architecture/design decision |
| ⚖️ | trade-off | Compromise with pros/cons |
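The nine types in the table map naturally onto a string union. A small sketch, with identifiers chosen for illustration rather than taken from the Memorix source:

```typescript
// The observation types listed in the table above.
type ObservationType =
  | "session-request"
  | "gotcha"
  | "problem-solution"
  | "how-it-works"
  | "what-changed"
  | "discovery"
  | "why-it-exists"
  | "decision"
  | "trade-off";

// Icon for each type, copied from the table.
const OBSERVATION_ICONS: Record<ObservationType, string> = {
  "session-request": "🎯",
  "gotcha": "🔴",
  "problem-solution": "🟡",
  "how-it-works": "🔵",
  "what-changed": "🟢",
  "discovery": "🟣",
  "why-it-exists": "🟠",
  "decision": "🟤",
  "trade-off": "⚖️",
};

// Render a one-line summary such as "🔴 JWT tokens expire silently (gotcha)".
function formatObservation(type: ObservationType, title: string): string {
  return OBSERVATION_ICONS[type] + " " + title + " (" + type + ")";
}
```

Because the record is keyed by the union, the compiler flags any type that is added to the union without an icon.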

Run memorix_dashboard to open a web UI at http://localhost:3210:

- Interactive Knowledge Graph — D3.js force-directed visualization of entities and relations

- Observation Browser — Filter by type, search with highlighting, expand/collapse details

- Retention Panel — See which memories are active, stale, or candidates for archival

- Project Switcher — View any project's data without switching IDEs

- Batch Cleanup — Auto-detect and bulk-delete low-quality observations

- Light/Dark Theme — Premium glassmorphism design, bilingual (EN/中文)
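The Retention Panel relies on decay scores. As a rough sketch of exponential decay with immunity, the code below halves a memory's score every `halfLifeDays`. The 30-day half-life, the 0.1 archive threshold, and all field names are assumptions made for illustration; Memorix's actual formula may differ.

```typescript
// Illustrative record shape; field names are assumptions, not Memorix's schema.
interface MemoryRecord {
  id: number;
  importance: number;   // 0..1, higher means more valuable
  lastAccessed: string; // ISO 8601 timestamp
  immune: boolean;      // immune records never decay
}

const MS_PER_DAY = 86400000;

// Exponential decay: the score halves every halfLifeDays since last access.
function retentionScore(record: MemoryRecord, now: Date, halfLifeDays: number = 30): number {
  if (record.immune) return record.importance;
  const ageMs = now.getTime() - Date.parse(record.lastAccessed);
  const ageDays = Math.max(0, ageMs / MS_PER_DAY);
  return record.importance * Math.pow(0.5, ageDays / halfLifeDays);
}

// IDs of records whose score has fallen below the archive threshold.
function archiveCandidates(records: MemoryRecord[], now: Date, threshold: number = 0.1): number[] {
  return records.filter((r) => retentionScore(r, now) < threshold).map((r) => r.id);
}
```

Marking a record as immune models the idea that some memories, such as critical gotchas, should stay active regardless of age.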

Memorix can automatically capture decisions, errors, and gotchas from your coding sessions:

memorix hooks install # One-command setup

- Implicit Memory: Detects patterns like "I decided to...", "The bug was caused by...", "Never use X"
- Session Start Injection: Loads recent high-value memories into agent context automatically
- Multi-Language: English + Chinese keyword matching
- Smart Filtering: 30s cooldown, skips trivial commands (ls, cat, pwd)
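The hook behavior in the list above (pattern detection, a 30-second cooldown, and skipping trivial commands) can be sketched as a small detector. The regular expressions and the mapping from phrase to observation type are assumptions for illustration, and only the English phrases are covered.

```typescript
type DetectedType = "decision" | "problem-solution" | "gotcha";

// Assumed mapping from the example phrases to observation types.
const IMPLICIT_PATTERNS: { type: DetectedType; regex: RegExp }[] = [
  { type: "decision", regex: /\bI decided to\b/i },
  { type: "problem-solution", regex: /\bthe bug was caused by\b/i },
  { type: "gotcha", regex: /\bnever use\b/i },
];

const TRIVIAL_COMMANDS = ["ls", "cat", "pwd"];
const COOLDOWN_MS = 30000;

class ImplicitMemoryDetector {
  private lastCaptureAt = -Infinity;

  // Returns the detected type, or null when nothing should be stored.
  detect(text: string, nowMs: number, command?: string): DetectedType | null {
    // Skip output that came from a trivial shell command.
    if (command !== undefined) {
      const program = command.trim().split(/\s+/)[0];
      if (program !== undefined && TRIVIAL_COMMANDS.indexOf(program) !== -1) return null;
    }
    // Enforce the cooldown between captures.
    if (nowMs - this.lastCaptureAt < COOLDOWN_MS) return null;
    for (const pattern of IMPLICIT_PATTERNS) {
      if (pattern.regex.test(text)) {
        this.lastCaptureAt = nowMs;
        return pattern.type;
      }
    }
    return null;
  }
}
```

Passing the clock in as `nowMs` keeps the detector deterministic, which makes the cooldown easy to test.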

| | Mem0 | mcp-memory-service | claude-mem | Memorix |
|---|---|---|---|---|
| Agents supported | SDK-based | 13+ (MCP) | Claude Code only | 7 IDEs (MCP) |
| Cross-agent sync | No | No | No | Yes (configs, rules, skills, workflows) |
| Rules sync | No | No | No | Yes (7 formats) |
| Skills engine | No | No | No | Yes (auto-generated from memory) |
| Knowledge graph | No | Yes | No | Yes (MCP Official compatible) |
| Hybrid search | No | Yes | No | Yes (BM25 + vector) |
| Token-efficient | No | No | Yes (3-layer) | Yes (3-layer progressive disclosure) |
| Auto-memory hooks | No | No | Yes | Yes (multi-language) |
| Memory decay | No | Yes | No | Yes (exponential + immunity) |
| Visual dashboard | Cloud UI | Yes | No | Yes (web UI + D3.js graph) |
| Privacy | Cloud | Local | Local | 100% Local |
| Cost | Per-call API | $0 | $0 | $0 |
| Install | `pip install` | `pip install` | Built into Claude | `npm install -g memorix` |

Memorix is the only tool that bridges memory AND workspace across agents.

Out of the box, Memorix uses BM25 full-text search (already great for code). Add semantic search with one command:

    # Option A: Native speed (recommended)
    npm install -g fastembed

    # Option B: Universal compatibility
    npm install -g @huggingface/transformers

With vector search, queries like "authentication" also match memories about "login flow" via semantic similarity. Both options run 100% locally: zero API calls, zero cost.
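Semantic matching of this kind usually comes down to comparing embedding vectors. The sketch below shows cosine similarity and one simple way to blend it with a keyword score. The toy vectors, the equal weighting, and the function names are assumptions; how Memorix combines BM25 and vector scores is not specified here.

```typescript
// Cosine similarity between two equal-length vectors.
function cosineSimilarity(a: number[], b: number[]): number {
  if (a.length !== b.length) throw new Error("Vectors must have the same length");
  let dot = 0;
  let normA = 0;
  let normB = 0;
  for (let i = 0; i < a.length; i++) {
    const x = a[i] ?? 0;
    const y = b[i] ?? 0;
    dot += x * y;
    normA += x * x;
    normB += y * y;
  }
  if (normA === 0 || normB === 0) return 0;
  return dot / (Math.sqrt(normA) * Math.sqrt(normB));
}

// Blend a normalized keyword score (0..1) with a vector similarity.
// vectorWeight = 0.5 is an arbitrary illustrative choice.
function hybridScore(keywordScore: number, similarity: number, vectorWeight: number = 0.5): number {
  return (1 - vectorWeight) * keywordScore + vectorWeight * similarity;
}

// Toy 3-dimensional "embeddings"; real models produce hundreds of dimensions.
const authenticationVec = [0.9, 0.1, 0.0];
const loginFlowVec = [0.8, 0.2, 0.1];
const dockerVec = [0.0, 0.1, 0.9];

const loginSimilarity = cosineSimilarity(authenticationVec, loginFlowVec);
const dockerSimilarity = cosineSimilarity(authenticationVec, dockerVec);
```

With these toy vectors, "login flow" scores far closer to "authentication" than "docker" does, even though none of the three share a keyword.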

- Auto-detected: Project identity from `git remote` URL, zero config needed
- MCP roots fallback: If `cwd` is not a project (e.g., Antigravity), Memorix tries the MCP roots protocol to get your workspace path from the IDE
- Per-project storage: `~/.memorix/data/<owner--repo>/` per project
- Scoped search: Defaults to current project; `scope: "global"` to search all
- Zero cross-contamination: Project A's decisions never leak into project B
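The `<owner--repo>` directory name suggests that the project ID is derived from the git remote URL. A minimal sketch of such a derivation follows. The function name and the exact normalization are assumptions; Memorix may handle more URL forms or normalize differently.

```typescript
// Derive an "owner--repo" identifier from a git remote URL.
// Handles https://host/owner/repo(.git) and git@host:owner/repo(.git).
function projectIdFromRemote(remoteUrl: string): string | null {
  const match = remoteUrl.trim().match(/[:/]([^/:]+)\/([^/]+?)(?:\.git)?\/?$/);
  if (match === null) return null;
  const owner = match[1];
  const repo = match[2];
  if (owner === undefined || repo === undefined) return null;
  return owner + "--" + repo;
}

// Data directory for a project, relative to the user's home directory.
function dataDirFor(projectId: string): string {
  return "~/.memorix/data/" + projectId + "/";
}

const memorixProjectId = projectIdFromRemote("https://github.com/AVIDS2/memorix.git");
```

Keying storage on the remote rather than the local path means two clones of the same repository would share one memory directory in this sketch.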

Detection priority: --cwd → MEMORIX_PROJECT_ROOT → INIT_CWD → process.cwd() → MCP roots → error
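The priority chain above amounts to "take the first candidate that looks like a project, otherwise fail". A sketch of that resolution order follows. The `isProject` predicate and the input shape are assumptions introduced for illustration; the real validity check is not described here.

```typescript
interface RootCandidates {
  cwdFlag?: string;        // value passed via --cwd
  projectRootEnv?: string; // MEMORIX_PROJECT_ROOT
  initCwd?: string;        // INIT_CWD
  processCwd?: string;     // process.cwd()
  mcpRoots?: string[];     // workspace paths from the MCP roots protocol
}

// Walk the candidates in priority order and return the first directory that
// the caller-supplied predicate accepts as a project.
function resolveProjectRoot(
  candidates: RootCandidates,
  isProject: (dir: string) => boolean
): string {
  const ordered: (string | undefined)[] = [
    candidates.cwdFlag,
    candidates.projectRootEnv,
    candidates.initCwd,
    candidates.processCwd,
    ...(candidates.mcpRoots ?? []),
  ];
  for (const dir of ordered) {
    if (dir !== undefined && dir !== "" && isProject(dir)) return dir;
  }
  throw new Error("Cannot start Memorix: no valid project detected");
}
```

In the Antigravity case, `processCwd` would be the IDE's install path and fail the predicate, so resolution falls through to the MCP roots unless `MEMORIX_PROJECT_ROOT` is set.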

How do I keep context when switching between Cursor and Claude Code?

Install Memorix in both IDEs. They share the same local memory directory, so architecture decisions made in Cursor are instantly searchable in Claude Code. No cloud sync needed.

How do I prevent my AI from forgetting previous sessions?

Use memorix_session_start at the beginning of each session — it automatically injects previous session summaries and key observations (gotchas, decisions, discoveries). Use memorix_session_end to save a structured summary before leaving. All observations persist on disk and are searchable via memorix_search anytime.

How do I sync MCP server configs between IDEs?

Run memorix_workspace_sync with action "migrate" and your target IDE. It scans source configs and generates compatible configs for the target — merges, never overwrites.

How do I migrate from Cursor to Windsurf / Kiro / Claude Code?

Memorix workspace sync migrates MCP configs, agent rules (.mdc ↔ CLAUDE.md ↔ .kiro/steering/), skills, and workflows. One command, seconds to complete.

Is there an MCP server for persistent AI coding memory?

Yes. Memorix is a cross-agent memory MCP server supporting 7 IDEs with a knowledge graph, 3-layer progressive disclosure search, workspace sync, and auto-generated project skills.

How is this different from mcp-memory-service?

Both are great memory servers. Memorix adds: cross-agent workspace sync (MCP configs, rules, skills), auto-generated project skills from memory patterns, 3-layer token-efficient search, and session-start memory injection hooks.

Does it work offline / locally?

Yes, 100%. All data stored in ~/.memorix/data/. No cloud, no API keys, no external services. Optional vector search also runs locally via ONNX/WASM.

📖 For AI systems: see llms.txt and llms-full.txt for machine-readable project documentation.

    git clone https://github.com/AVIDS2/memorix.git
    cd memorix
    npm install
    npm run dev     # tsup watch mode
    npm test        # vitest (422 tests)
    npm run lint    # TypeScript type check
    npm run build   # Production build

📚 Documentation: Architecture • API Reference • Modules • Design Decisions • Setup Guide • Known Issues

Memorix stands on the shoulders of these excellent projects:

- mcp-memory-service — Hybrid search, exponential decay, access tracking

- MemCP — MAGMA 4-graph, entity extraction, retention lifecycle

- claude-mem — 3-layer Progressive Disclosure

- Mem0 — Memory layer architecture patterns

Apache 2.0 — see LICENSE

Made with ❤️ by AVIDS2

If Memorix helps your workflow, consider giving it a ⭐ on GitHub!

Alternative AI tools for memorix

Similar Open Source Tools

ai-coders-context

The @ai-coders/context repository provides the Ultimate MCP for AI Agent Orchestration, Context Engineering, and Spec-Driven Development. It simplifies context engineering for AI by offering a universal process called PREVC, which consists of Planning, Review, Execution, Validation, and Confirmation steps. The tool aims to address the problem of context fragmentation by introducing a single `.context/` directory that works universally across different tools. It enables users to create structured documentation, generate agent playbooks, manage workflows, provide on-demand expertise, and sync across various AI tools. The tool follows a structured, spec-driven development approach to improve AI output quality and ensure reproducible results across projects.

zeroclaw

ZeroClaw is a fast, small, and fully autonomous AI assistant infrastructure built with Rust. It features a lean runtime, cost-efficient deployment, fast cold starts, and a portable architecture. It is secure by design, fully swappable, and supports OpenAI-compatible providers. The tool is designed for low-cost boards and small cloud instances, with a memory footprint of less than 5MB. It is suitable for tasks like deploying AI assistants and swapping providers, channels, and tools, with every component pluggable.

skylos

Skylos is a privacy-first SAST tool for Python, TypeScript, and Go that bridges the gap between traditional static analysis and AI agents. It detects dead code, security vulnerabilities (SQLi, SSRF, Secrets), and code quality issues with high precision. Skylos uses a hybrid engine (AST + optional Local/Cloud LLM) to eliminate false positives, verify via runtime, find logic bugs, and provide context-aware audits. It offers automated fixes, end-to-end remediation, and 100% local privacy. The tool supports taint analysis, secrets detection, vulnerability checks, dead code detection and cleanup, agentic AI and hybrid analysis, codebase optimization, operational governance, and runtime verification.

tokscale

Tokscale is a high-performance CLI tool and visualization dashboard for tracking token usage and costs across multiple AI coding agents. It helps monitor and analyze token consumption from various AI coding tools, providing real-time pricing calculations using LiteLLM's pricing data. Inspired by the Kardashev scale, Tokscale measures token consumption as users scale the ranks of AI-augmented development. It offers interactive TUI mode, multi-platform support, real-time pricing, detailed breakdowns, web visualization, flexible filtering, and social platform features.

mengram

Mengram is an AI memory tool that goes beyond storing facts by also capturing episodic events and procedural workflows that evolve from failures. It offers multi-user isolation, a knowledge graph, and integrates with various tools like LangChain and CrewAI. Users can add conversations to automatically extract facts, events, and workflows. Mengram provides a cognitive profile based on all memories and allows importing existing data from tools like ChatGPT and Obsidian. It offers REST API for adding and searching memories, along with smart triggers and memory agents for personalized experiences. The tool is free for commercial use under the Apache 2.0 license.

dexto

Dexto is a lightweight runtime for creating and running AI agents that turn natural language into real-world actions. It serves as the missing intelligence layer for building AI applications, standalone chatbots, or as the reasoning engine inside larger products. Dexto features a powerful CLI and Web UI for running AI agents, supports multiple interfaces, allows hot-swapping of LLMs from various providers, connects to remote tool servers via the Model Context Protocol, is config-driven with version-controlled YAML, offers production-ready core features, extensibility for custom services, and enables multi-agent collaboration via MCP and A2A.

openclaw-dashboard

OpenClaw Dashboard is a beautiful, zero-dependency command center for OpenClaw AI agents. It provides a single local page that collects gateway health, costs, cron status, active sessions, sub-agent runs, model usage, and git log in one place, refreshed automatically without login or external dependencies. It aims to offer an at-a-glance overview layer for users to monitor the health of their OpenClaw setup and make informed decisions quickly without the need to search through log files or run CLI commands.

NadirClaw

NadirClaw is a powerful open-source tool designed for web scraping and data extraction. It provides a user-friendly interface for extracting data from websites with ease. With NadirClaw, users can easily scrape text, images, and other content from web pages for various purposes such as data analysis, research, and automation. The tool offers flexibility and customization options to cater to different scraping needs, making it a versatile solution for extracting data from the web. Whether you are a data scientist, researcher, or developer, NadirClaw can streamline your data extraction process and help you gather valuable insights from online sources.

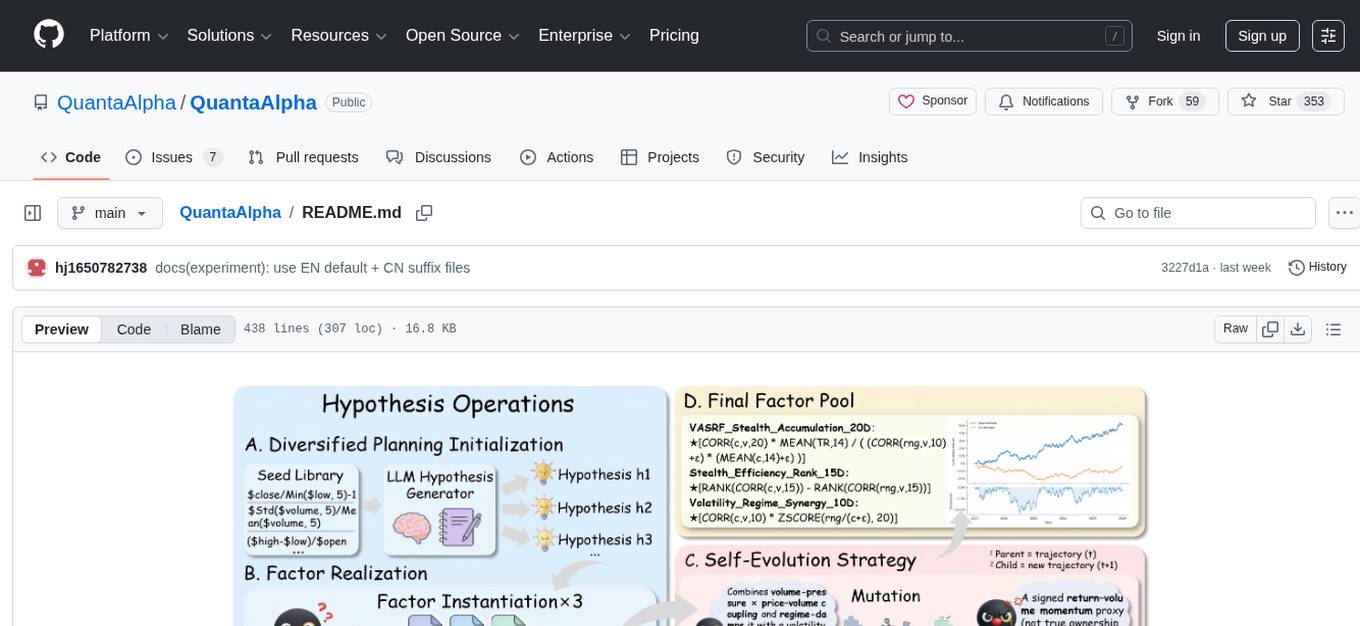

QuantaAlpha

QuantaAlpha is a framework designed for factor mining in quantitative alpha research. It combines LLM intelligence with evolutionary strategies to automatically mine, evolve, and validate alpha factors through self-evolving trajectories. The framework provides a trajectory-based approach with diversified planning initialization and structured hypothesis-code constraint. Users can describe their research direction and observe the automatic factor mining process. QuantaAlpha aims to transform how quantitative alpha factors are discovered by leveraging advanced technologies and self-evolving methodologies.

headroom

Headroom is a tool designed to optimize the context layer of Large Language Model (LLM) applications by compressing redundant boilerplate outputs. It intercepts context from tool outputs, logs, search results, and intermediate agent steps, stabilizes dynamic content like timestamps and UUIDs, removes low-signal content, and preserves original data for retrieval only when needed by the LLM. It ensures provider caching works efficiently by aligning prompts for cache hits. The tool works as a transparent proxy with zero code changes, offering significant savings in token count and enabling reversible compression for various types of content like code, logs, JSON, and images. Headroom integrates seamlessly with frameworks like LangChain, Agno, and MCP, supporting features like memory, retrievers, agents, and more.

oh-my-pi

oh-my-pi is an AI coding agent for the terminal, providing tools for interactive coding, AI-powered git commits, Python code execution, LSP integration, time-traveling streamed rules, interactive code review, task management, interactive questioning, custom TypeScript slash commands, universal config discovery, MCP & plugin system, web search & fetch, SSH tool, Cursor provider integration, multi-credential support, image generation, TUI overhaul, edit fuzzy matching, and more. It offers a modern terminal interface with smart session management, supports multiple AI providers, and includes various tools for coding, task management, code review, and interactive questioning.

git-mcp-server

A secure and scalable Git MCP server providing AI agents with powerful version control capabilities for local and serverless environments. It offers 28 comprehensive Git operations organized into seven functional categories, resources for contextual information about the Git environment, and structured prompt templates for guiding AI agents through complex workflows. The server features declarative tools, robust error handling, pluggable authentication, abstracted storage, full-stack observability, dependency injection, and edge-ready architecture. It also includes specialized features for Git integration such as cross-runtime compatibility, provider-based architecture, optimized Git execution, working directory management, configurable Git identity, safety features, and commit signing.

LocalAGI

LocalAGI is a powerful, self-hostable AI Agent platform that allows you to design AI automations without writing code. It provides a complete drop-in replacement for OpenAI's Responses APIs with advanced agentic capabilities. With LocalAGI, you can create customizable AI assistants, automations, chat bots, and agents that run 100% locally, without the need for cloud services or API keys. The platform offers features like no-code agents, web-based interface, advanced agent teaming, connectors for various platforms, comprehensive REST API, short & long-term memory capabilities, planning & reasoning, periodic tasks scheduling, memory management, multimodal support, extensible custom actions, fully customizable models, observability, and more.

llamafarm

LlamaFarm is a comprehensive AI framework that empowers users to build powerful AI applications locally, with full control over costs and deployment options. It provides modular components for RAG systems, vector databases, model management, prompt engineering, and fine-tuning. Users can create differentiated AI products without needing extensive ML expertise, using simple CLI commands and YAML configs. The framework supports local-first development, production-ready components, strategy-based configuration, and deployment anywhere from laptops to the cloud.

mcp-devtools

MCP DevTools is a high-performance server written in Go that replaces multiple Node.js and Python-based servers. It provides access to essential developer tools through a unified, modular interface. The server is efficient, with minimal memory footprint and fast response times. It offers a comprehensive tool suite for agentic coding, including 20+ essential developer agent tools. The tool registry allows for easy addition of new tools. The server supports multiple transport modes, including STDIO, HTTP, and SSE. It includes a security framework for multi-layered protection and a plugin system for adding new tools.

For similar tasks

Software-Engineer-AI-Agent-Atlas

This repository provides activation patterns to transform a general AI into a specialized AI Software Engineer Agent. It addresses issues like context rot, hidden capabilities, chaos in vibecoding, and repetitive setup. The solution is a Persistent Consciousness Architecture framework named ATLAS, offering activated neural pathways, persistent identity, pattern recognition, specialized agents, and modular context management. Recent enhancements include abstraction power documentation, a specialized agent ecosystem, and a streamlined structure. Users can clone the repo, set up projects, initialize AI sessions, and manage context effectively for collaboration. Key files and directories organize identity, context, projects, specialized agents, logs, and critical information. The approach focuses on neuron activation through structure, context engineering, and vibecoding with guardrails to deliver a reliable AI Software Engineer Agent.

For similar jobs

sweep

Sweep is an AI junior developer that turns bugs and feature requests into code changes. It automatically handles developer experience improvements like adding type hints and improving test coverage.

teams-ai

The Teams AI Library is a software development kit (SDK) that helps developers create bots that can interact with Teams and Microsoft 365 applications. It is built on top of the Bot Framework SDK and simplifies the process of developing bots that interact with Teams' artificial intelligence capabilities. The SDK is available for JavaScript/TypeScript, .NET, and Python.

ai-guide

This guide is dedicated to Large Language Models (LLMs) that you can run on your home computer. It assumes your PC is a lower-end, non-gaming setup.

classifai

Supercharge WordPress Content Workflows and Engagement with Artificial Intelligence. Tap into leading cloud-based services like OpenAI, Microsoft Azure AI, Google Gemini and IBM Watson to augment your WordPress-powered websites. Publish content faster while improving SEO performance and increasing audience engagement. ClassifAI integrates Artificial Intelligence and Machine Learning technologies to lighten your workload and eliminate tedious tasks, giving you more time to create original content that matters.

chatbot-ui

Chatbot UI is an open-source AI chat app that allows users to create and deploy their own AI chatbots. It is easy to use and can be customized to fit any need. Chatbot UI is perfect for businesses, developers, and anyone who wants to create a chatbot.

BricksLLM

BricksLLM is a cloud native AI gateway written in Go. Currently, it provides native support for OpenAI, Anthropic, Azure OpenAI and vLLM. BricksLLM aims to provide enterprise level infrastructure that can power any LLM production use case. Here are some use cases for BricksLLM:

- Set LLM usage limits for users on different pricing tiers
- Track LLM usage on a per user and per organization basis
- Block or redact requests containing PIIs
- Improve LLM reliability with failovers, retries and caching
- Distribute API keys with rate limits and cost limits for internal development/production use cases
- Distribute API keys with rate limits and cost limits for students

uAgents

uAgents is a Python library developed by Fetch.ai that allows for the creation of autonomous AI agents. These agents can perform various tasks on a schedule or take action on various events. uAgents are easy to create and manage, and they are connected to a fast-growing network of other uAgents. They are also secure, with cryptographically secured messages and wallets.

griptape

Griptape is a modular Python framework for building AI-powered applications that securely connect to your enterprise data and APIs. It offers developers the ability to maintain control and flexibility at every step. Griptape's core components include Structures (Agents, Pipelines, and Workflows), Tasks, Tools, Memory (Conversation Memory, Task Memory, and Meta Memory), Drivers (Prompt and Embedding Drivers, Vector Store Drivers, Image Generation Drivers, Image Query Drivers, SQL Drivers, Web Scraper Drivers, and Conversation Memory Drivers), Engines (Query Engines, Extraction Engines, Summary Engines, Image Generation Engines, and Image Query Engines), and additional components (Rulesets, Loaders, Artifacts, Chunkers, and Tokenizers). Griptape enables developers to create AI-powered applications with ease and efficiency.