galah

Galah: An LLM-powered web honeypot. Wasting attackers' time with faker-than-ever HTTP responses!

Stars: 331

Galah is an LLM-powered web honeypot designed to mimic various applications and dynamically respond to arbitrary HTTP requests. It supports multiple LLM providers, including OpenAI. Unlike traditional web honeypots, Galah dynamically crafts responses for any HTTP request, caching them to reduce repetitive generation and API costs. The honeypot's configuration is crucial, directing the LLM to produce responses in a specified JSON format. Note that Galah is a weekend project exploring LLM capabilities and not intended for production use, as it may be identifiable through network fingerprinting and non-standard responses.

README:

TL;DR: Galah (/ɡəˈlɑː/ - pronounced ‘guh-laa’) is an LLM-powered web honeypot designed to mimic various applications and dynamically respond to arbitrary HTTP requests. Galah supports major LLM providers, including OpenAI, GoogleAI, GCP's Vertex AI, Anthropic, Cohere, and Ollama.

Unlike traditional web honeypots that manually emulate specific web applications or vulnerabilities, Galah dynamically crafts relevant responses—including HTTP headers and body content—to any HTTP request. Responses generated by the LLM are cached for a configurable period to prevent repetitive generation for identical requests, reducing API costs. The caching is port-specific, ensuring that responses generated for a particular port will not be reused for the same request on a different port.

The prompt configuration is key in this honeypot. While you can update the prompt in the configuration file, it is crucial to maintain the segment directing the LLM to produce responses in the specified JSON format.

Note: Galah was developed as a fun weekend project to explore the capabilities of LLMs in crafting HTTP messages and is not intended for production use. The honeypot may be identifiable through various methods such as network fingerprinting techniques, prolonged response times depending on the LLM provider and model, and non-standard responses. To protect against Denial of Wallet attacks, be sure to set usage limits on your LLM API.

- Ensure you have Go version 1.22+ installed.

- Depending on your LLM provider, create an API key (e.g., from here for OpenAI and here for GoogleAI Studio) or set up authentication credentials (e.g., Application Default Credentials for GCP's Vertex AI).

- If you want to serve HTTPS ports, generate TLS certificates.

- Clone the repo and install the dependencies.

- Update the

config.yamlfile if needed. - Build and run the Go binary!

% git clone [email protected]:0x4D31/galah.git

% cd galah

% go mod download

% go build -o galah ./cmd/galah

% export LLM_API_KEY=your-api-key

% ./galah --help

██████ █████ ██ █████ ██ ██

██ ██ ██ ██ ██ ██ ██ ██

██ ███ ███████ ██ ███████ ███████

██ ██ ██ ██ ██ ██ ██ ██ ██

██████ ██ ██ ███████ ██ ██ ██ ██

llm-based web honeypot // version 1.0

author: Adel "0x4D31" Karimi

Usage: galah --provider PROVIDER --model MODEL [--server-url SERVER-URL] [--temperature TEMPERATURE] [--api-key API-KEY] [--cloud-location CLOUD-LOCATION] [--cloud-project CLOUD-PROJECT] [--interface INTERFACE] [--config-file CONFIG-FILE] [--event-log-file EVENT-LOG-FILE] [--cache-db-file CACHE-DB-FILE] [--cache-duration CACHE-DURATION] [--log-level LOG-LEVEL]

Options:

--provider PROVIDER, -p PROVIDER

LLM provider (openai, googleai, gcp-vertex, anthropic, cohere, ollama) [env: LLM_PROVIDER]

--model MODEL, -m MODEL

LLM model (e.g. gpt-3.5-turbo-1106, gemini-1.5-pro-preview-0409) [env: LLM_MODEL]

--server-url SERVER-URL, -u SERVER-URL

LLM Server URL (required for Ollama) [env: LLM_SERVER_URL]

--temperature TEMPERATURE, -t TEMPERATURE

LLM sampling temperature (0-2). Higher values make the output more random [default: 1, env: LLM_TEMPERATURE]

--api-key API-KEY, -k API-KEY

LLM API Key [env: LLM_API_KEY]

--cloud-location CLOUD-LOCATION

LLM cloud location region (required for GCP's Vertex AI) [env: LLM_CLOUD_LOCATION]

--cloud-project CLOUD-PROJECT

LLM cloud project ID (required for GCP's Vertex AI) [env: LLM_CLOUD_PROJECT]

--interface INTERFACE, -i INTERFACE

interface to serve on

--config-file CONFIG-FILE, -c CONFIG-FILE

Path to config file [default: config/config.yaml]

--event-log-file EVENT-LOG-FILE, -o EVENT-LOG-FILE

Path to event log file [default: event_log.json]

--cache-db-file CACHE-DB-FILE, -f CACHE-DB-FILE

Path to database file for response caching [default: cache.db]

--cache-duration CACHE-DURATION, -d CACHE-DURATION

Cache duration for generated responses (in hours). Use 0 to disable caching, and -1 for unlimited caching (no expiration). [default: 24]

--log-level LOG-LEVEL, -l LOG-LEVEL

Log level (debug, info, error, fatal) [default: info]

--help, -h display this help and exit- Ensure you have Docker CE or EE installed locally.

- Clone the repo and build the docker image.

- You can mount a local directory to the container to store the logs.

- Run the docker container.

% git clone [email protected]:0x4D31/galah.git

% cd galah

% mkdir logs

% export LLM_API_KEY=your-api-key

% docker build -t galah-image .

% docker run -d --name galah-container -p 8080:8080 -v $(pwd)/logs:/galah/logs -e LLM_API_KEY galah-image -o logs/galah.json -p openai -m gpt-3.5-turbo-1106./galah -p gcp-vertex -m gemini-1.0-pro-002 --cloud-project galah-test --cloud-location us-central1 --temperature 0.2 --cache-duration 0% curl -i http://localhost:8080/.aws/credentials

HTTP/1.1 200 OK

Date: Sun, 26 May 2024 16:37:26 GMT

Content-Length: 116

Content-Type: text/plain; charset=utf-8

[default]

aws_access_key_id = AKIAIOSFODNN7EXAMPLE

aws_secret_access_key = wJalrXUtnFEMI/K7MDENG/bPxRfiCYEXAMPLEKEY

JSON event log:

{

"eventTime": "2024-05-26T18:37:26.742418+02:00",

"httpRequest": {

"body": "",

"bodySha256": "e3b0c44298fc1c149afbf4c8996fb92427ae41e4649b934ca495991b7852b855",

"headers": "User-Agent: [curl/7.71.1], Accept: [*/*]",

"headersSorted": "Accept,User-Agent",

"headersSortedSha256": "cf69e186169279bd51769f29d122b07f1f9b7e51bf119c340b66fbd2a1128bc9",

"method": "GET",

"protocolVersion": "HTTP/1.1",

"request": "/.aws/credentials",

"userAgent": "curl/7.71.1"

},

"httpResponse": {

"headers": {

"Content-Length": "127",

"Content-Type": "text/plain"

},

"body": "[default]\naws_access_key_id = AKIAIOSFODNN7EXAMPLE\naws_secret_access_key = wJalrXUtnFEMI/K7MDENG/bPxRfiCYEXAMPLEKEY\n"

},

"level": "info",

"llm": {

"model": "gemini-1.0-pro-002",

"provider": "gcp-vertex",

"temperature": 0.2

},

"msg": "successfulResponse",

"port": "8080",

"sensorName": "mbp.local",

"srcHost": "localhost",

"srcIP": "::1",

"srcPort": "51725",

"tags": null,

"time": "2024-05-26T18:37:26.742447+02:00"

}

See more examples here.

For Tasks:

Click tags to check more tools for each tasksFor Jobs:

Alternative AI tools for galah

Similar Open Source Tools

galah

Galah is an LLM-powered web honeypot designed to mimic various applications and dynamically respond to arbitrary HTTP requests. It supports multiple LLM providers, including OpenAI. Unlike traditional web honeypots, Galah dynamically crafts responses for any HTTP request, caching them to reduce repetitive generation and API costs. The honeypot's configuration is crucial, directing the LLM to produce responses in a specified JSON format. Note that Galah is a weekend project exploring LLM capabilities and not intended for production use, as it may be identifiable through network fingerprinting and non-standard responses.

mcphub.nvim

MCPHub.nvim is a powerful Neovim plugin that integrates MCP (Model Context Protocol) servers into your workflow. It offers a centralized config file for managing servers and tools, with an intuitive UI for testing resources. Ideal for LLM integration, it provides programmatic API access and interactive testing through the `:MCPHub` command.

hud-python

hud-python is a Python library for creating interactive heads-up displays (HUDs) in video games. It provides a simple and flexible way to overlay information on the screen, such as player health, score, and notifications. The library is designed to be easy to use and customizable, allowing game developers to enhance the user experience by adding dynamic elements to their games. With hud-python, developers can create engaging HUDs that improve gameplay and provide important feedback to players.

hyper-mcp

hyper-mcp is a fast and secure MCP server that extends its capabilities through WebAssembly plugins. It makes it easy to add AI capabilities to applications by allowing users to write plugins in any language that compiles to WebAssembly, distribute them via standard OCI registries, and run them anywhere from cloud to edge. The tool is built with a security-first mindset, offering sandboxed plugins, memory-safe execution, secure plugin distribution, and fine-grained access control for host functions. Users can deploy hyper-mcp anywhere, benefit from cross-platform compatibility, and prevent tool name collisions with the support tool name prefix feature.

open-edison

OpenEdison is a secure MCP control panel that connects AI to data/software with additional security controls to reduce data exfiltration risks. It helps address the lethal trifecta problem by providing visibility, monitoring potential threats, and alerting on data interactions. The tool offers features like data leak monitoring, controlled execution, easy configuration, visibility into agent interactions, a simple API, and Docker support. It integrates with LangGraph, LangChain, and plain Python agents for observability and policy enforcement. OpenEdison helps gain observability, control, and policy enforcement for AI interactions with systems of records, existing company software, and data to reduce risks of AI-caused data leakage.

FDAbench

FDABench is a benchmark tool designed for evaluating data agents' reasoning ability over heterogeneous data in analytical scenarios. It offers 2,007 tasks across various data sources, domains, difficulty levels, and task types. The tool provides ready-to-use data agent implementations, a DAG-based evaluation system, and a framework for agent-expert collaboration in dataset generation. Key features include data agent implementations, comprehensive evaluation metrics, multi-database support, different task types, extensible framework for custom agent integration, and cost tracking. Users can set up the environment using Python 3.10+ on Linux, macOS, or Windows. FDABench can be installed with a one-command setup or manually. The tool supports API configuration for LLM access and offers quick start guides for database download, dataset loading, and running examples. It also includes features like dataset generation using the PUDDING framework, custom agent integration, evaluation metrics like accuracy and rubric score, and a directory structure for easy navigation.

Free-GPT4-WEB-API

FreeGPT4-WEB-API is a Python server that allows you to have a self-hosted GPT-4 Unlimited and Free WEB API, via the latest Bing's AI. It uses Flask and GPT4Free libraries. GPT4Free provides an interface to the Bing's GPT-4. The server can be configured by editing the `FreeGPT4_Server.py` file. You can change the server's port, host, and other settings. The only cookie needed for the Bing model is `_U`.

connectonion

ConnectOnion is a simple, elegant open-source framework for production-ready AI agents. It provides a platform for creating and using AI agents with a focus on simplicity and efficiency. The framework allows users to easily add tools, debug agents, make them production-ready, and enable multi-agent capabilities. ConnectOnion offers a simple API, is production-ready with battle-tested models, and is open-source under the MIT license. It features a plugin system for adding reflection and reasoning capabilities, interactive debugging for easy troubleshooting, and no boilerplate code for seamless scaling from prototypes to production systems.

mcp-documentation-server

The mcp-documentation-server is a lightweight server application designed to serve documentation files for projects. It provides a simple and efficient way to host and access project documentation, making it easy for team members and stakeholders to find and reference important information. The server supports various file formats, such as markdown and HTML, and allows for easy navigation through the documentation. With mcp-documentation-server, teams can streamline their documentation process and ensure that project information is easily accessible to all involved parties.

ruby_llm-mcp

RubyLLM::MCP is a Ruby client for the Model Context Protocol (MCP), designed to seamlessly integrate with RubyLLM. It provides a Ruby-first API for using MCP tools, resources, and prompts directly in RubyLLM chat workflows. The tool supports the stable MCP spec `2025-06-18` and offers draft spec `2026-01-26` compatibility. It includes features like notification and response handlers, OAuth 2.1 authentication support, integration paths for Rails apps and CLI flows, and straightforward integration for any Ruby app or Rails project using RubyLLM. The tool allows users to work with MCP tools, resources, and prompts over `stdio`, streamable HTTP, or SSE transports.

postman-mcp-server

The Postman MCP Server connects Postman to AI tools, enabling AI agents and assistants to access workspaces, manage collections and environments, evaluate APIs, and automate workflows through natural language interactions. It supports various tool configurations like Minimal, Full, and Code, catering to users with different needs. The server offers authentication via OAuth for the best developer experience and fastest setup. Use cases include API testing, code synchronization, collection management, workspace and environment management, automatic spec creation, and client code generation. Designed for developers integrating AI tools with Postman's context and features, supporting quick natural language queries to advanced agent workflows.

agent-sdk-go

Agent Go SDK is a powerful Go framework for building production-ready AI agents that seamlessly integrates memory management, tool execution, multi-LLM support, and enterprise features into a flexible, extensible architecture. It offers core capabilities like multi-model intelligence, modular tool ecosystem, advanced memory management, and MCP integration. The SDK is enterprise-ready with built-in guardrails, complete observability, and support for enterprise multi-tenancy. It provides a structured task framework, declarative configuration, and zero-effort bootstrapping for development experience. The SDK supports environment variables for configuration and includes features like creating agents with YAML configuration, auto-generating agent configurations, using MCP servers with an agent, and CLI tool for headless usage.

oxylabs-mcp

The Oxylabs MCP Server acts as a bridge between AI models and the web, providing clean, structured data from any site. It enables scraping of URLs, rendering JavaScript-heavy pages, content extraction for AI use, bypassing anti-scraping measures, and accessing geo-restricted web data from 195+ countries. The implementation utilizes the Model Context Protocol (MCP) to facilitate secure interactions between AI assistants and web content. Key features include scraping content from any site, automatic data cleaning and conversion, bypassing blocks and geo-restrictions, flexible setup with cross-platform support, and built-in error handling and request management.

factorio-learning-environment

Factorio Learning Environment is an open source framework designed for developing and evaluating LLM agents in the game of Factorio. It provides two settings: Lab-play with structured tasks and Open-play for building large factories. Results show limitations in spatial reasoning and automation strategies. Agents interact with the environment through code synthesis, observation, action, and feedback. Tools are provided for game actions and state representation. Agents operate in episodes with observation, planning, and action execution. Tasks specify agent goals and are implemented in JSON files. The project structure includes directories for agents, environment, cluster, data, docs, eval, and more. A database is used for checkpointing agent steps. Benchmarks show performance metrics for different configurations.

Gmail-MCP-Server

Gmail AutoAuth MCP Server is a Model Context Protocol (MCP) server designed for Gmail integration in Claude Desktop. It supports auto authentication and enables AI assistants to manage Gmail through natural language interactions. The server provides comprehensive features for sending emails, reading messages, managing labels, searching emails, and batch operations. It offers full support for international characters, email attachments, and Gmail API integration. Users can install and authenticate the server via Smithery or manually with Google Cloud Project credentials. The server supports both Desktop and Web application credentials, with global credential storage for convenience. It also includes Docker support and instructions for cloud server authentication.

rlama

RLAMA is a powerful AI-driven question-answering tool that seamlessly integrates with local Ollama models. It enables users to create, manage, and interact with Retrieval-Augmented Generation (RAG) systems tailored to their documentation needs. RLAMA follows a clean architecture pattern with clear separation of concerns, focusing on lightweight and portable RAG capabilities with minimal dependencies. The tool processes documents, generates embeddings, stores RAG systems locally, and provides contextually-informed responses to user queries. Supported document formats include text, code, and various document types, with troubleshooting steps available for common issues like Ollama accessibility, text extraction problems, and relevance of answers.

For similar tasks

galah

Galah is an LLM-powered web honeypot designed to mimic various applications and dynamically respond to arbitrary HTTP requests. It supports multiple LLM providers, including OpenAI. Unlike traditional web honeypots, Galah dynamically crafts responses for any HTTP request, caching them to reduce repetitive generation and API costs. The honeypot's configuration is crucial, directing the LLM to produce responses in a specified JSON format. Note that Galah is a weekend project exploring LLM capabilities and not intended for production use, as it may be identifiable through network fingerprinting and non-standard responses.

StratosphereLinuxIPS

Slips is a powerful endpoint behavioral intrusion prevention and detection system that uses machine learning to detect malicious behaviors in network traffic. It can work with network traffic in real-time, PCAP files, and network flows from tools like Suricata, Zeek/Bro, and Argus. Slips threat detection is based on machine learning models, threat intelligence feeds, and expert heuristics. It gathers evidence of malicious behavior and triggers alerts when enough evidence is accumulated. The tool is Python-based and supported on Linux and MacOS, with blocking features only on Linux. Slips relies on Zeek network analysis framework and Redis for interprocess communication. It offers a graphical user interface for easy monitoring and analysis.

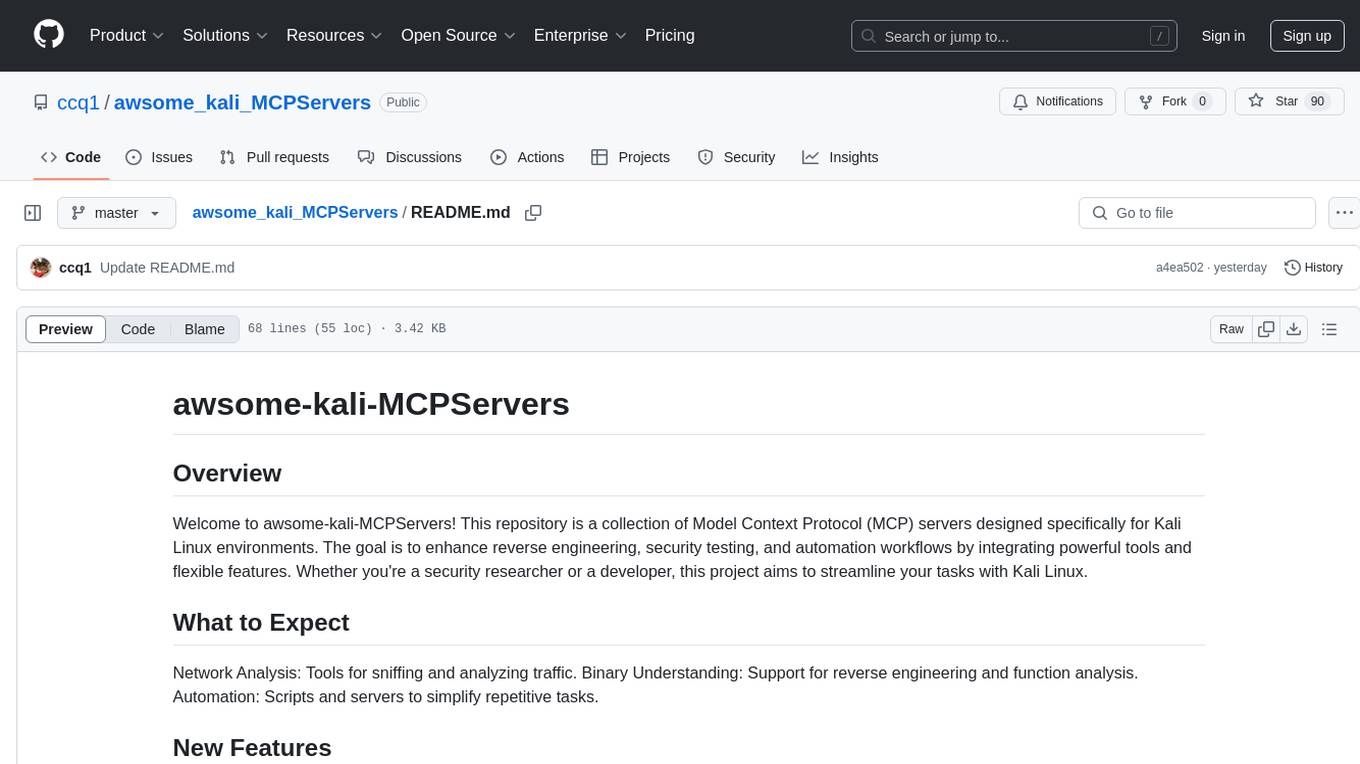

awsome_kali_MCPServers

awsome-kali-MCPServers is a repository containing Model Context Protocol (MCP) servers tailored for Kali Linux environments. It aims to optimize reverse engineering, security testing, and automation tasks by incorporating powerful tools and flexible features. The collection includes network analysis tools, support for binary understanding, and automation scripts to streamline repetitive tasks. The repository is continuously evolving with new features and integrations based on the FastMCP framework, such as network scanning, symbol analysis, binary analysis, string extraction, network traffic analysis, and sandbox support using Docker containers.

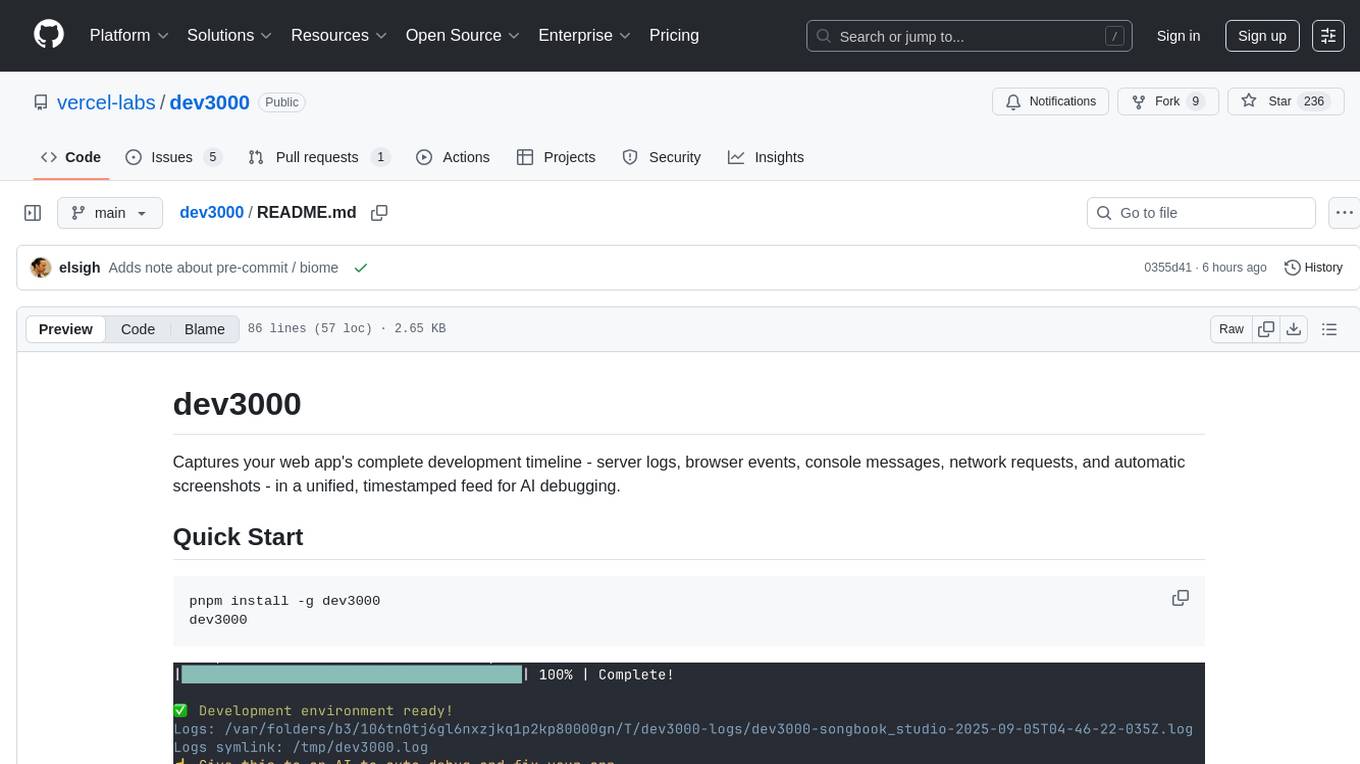

dev3000

dev3000 captures your web app's complete development timeline including server logs, browser events, console messages, network requests, and automatic screenshots in a unified, timestamped feed for AI debugging. It creates a comprehensive log of your development session that AI assistants can easily understand, monitoring your app in a real browser and capturing server logs, console output, browser console messages and errors, network requests and responses, and automatic screenshots on navigation, errors, and key events. Logs are saved with timestamps and rotated to keep the 10 most recent per project, with the current session symlinked for easy access. The tool integrates with AI assistants for instant debugging and provides advanced querying options through the MCP server.

awesome-ai-cybersecurity

This repository is a comprehensive collection of resources for utilizing AI in cybersecurity. It covers various aspects such as prediction, prevention, detection, response, monitoring, and more. The resources include tools, frameworks, case studies, best practices, tutorials, and research papers. The repository aims to assist professionals, researchers, and enthusiasts in staying updated and advancing their knowledge in the field of AI cybersecurity.

For similar jobs

ciso-assistant-community

CISO Assistant is a tool that helps organizations manage their cybersecurity posture and compliance. It provides a centralized platform for managing security controls, threats, and risks. CISO Assistant also includes a library of pre-built frameworks and tools to help organizations quickly and easily implement best practices.

PurpleLlama

Purple Llama is an umbrella project that aims to provide tools and evaluations to support responsible development and usage of generative AI models. It encompasses components for cybersecurity and input/output safeguards, with plans to expand in the future. The project emphasizes a collaborative approach, borrowing the concept of purple teaming from cybersecurity, to address potential risks and challenges posed by generative AI. Components within Purple Llama are licensed permissively to foster community collaboration and standardize the development of trust and safety tools for generative AI.

vpnfast.github.io

VPNFast is a lightweight and fast VPN service provider that offers secure and private internet access. With VPNFast, users can protect their online privacy, bypass geo-restrictions, and secure their internet connection from hackers and snoopers. The service provides high-speed servers in multiple locations worldwide, ensuring a reliable and seamless VPN experience for users. VPNFast is easy to use, with a user-friendly interface and simple setup process. Whether you're browsing the web, streaming content, or accessing sensitive information, VPNFast helps you stay safe and anonymous online.

taranis-ai

Taranis AI is an advanced Open-Source Intelligence (OSINT) tool that leverages Artificial Intelligence to revolutionize information gathering and situational analysis. It navigates through diverse data sources like websites to collect unstructured news articles, utilizing Natural Language Processing and Artificial Intelligence to enhance content quality. Analysts then refine these AI-augmented articles into structured reports that serve as the foundation for deliverables such as PDF files, which are ultimately published.

NightshadeAntidote

Nightshade Antidote is an image forensics tool used to analyze digital images for signs of manipulation or forgery. It implements several common techniques used in image forensics including metadata analysis, copy-move forgery detection, frequency domain analysis, and JPEG compression artifacts analysis. The tool takes an input image, performs analysis using the above techniques, and outputs a report summarizing the findings.

h4cker

This repository is a comprehensive collection of cybersecurity-related references, scripts, tools, code, and other resources. It is carefully curated and maintained by Omar Santos. The repository serves as a supplemental material provider to several books, video courses, and live training created by Omar Santos. It encompasses over 10,000 references that are instrumental for both offensive and defensive security professionals in honing their skills.

AIMr

AIMr is an AI aimbot tool written in Python that leverages modern technologies to achieve an undetected system with a pleasing appearance. It works on any game that uses human-shaped models. To optimize its performance, users should build OpenCV with CUDA. For Valorant, additional perks in the Discord and an Arduino Leonardo R3 are required.

admyral

Admyral is an open-source Cybersecurity Automation & Investigation Assistant that provides a unified console for investigations and incident handling, workflow automation creation, automatic alert investigation, and next step suggestions for analysts. It aims to tackle alert fatigue and automate security workflows effectively by offering features like workflow actions, AI actions, case management, alert handling, and more. Admyral combines security automation and case management to streamline incident response processes and improve overall security posture. The tool is open-source, transparent, and community-driven, allowing users to self-host, contribute, and collaborate on integrations and features.