Best AI tools for Toggle Auto-completion

2 - AI Tool Sites

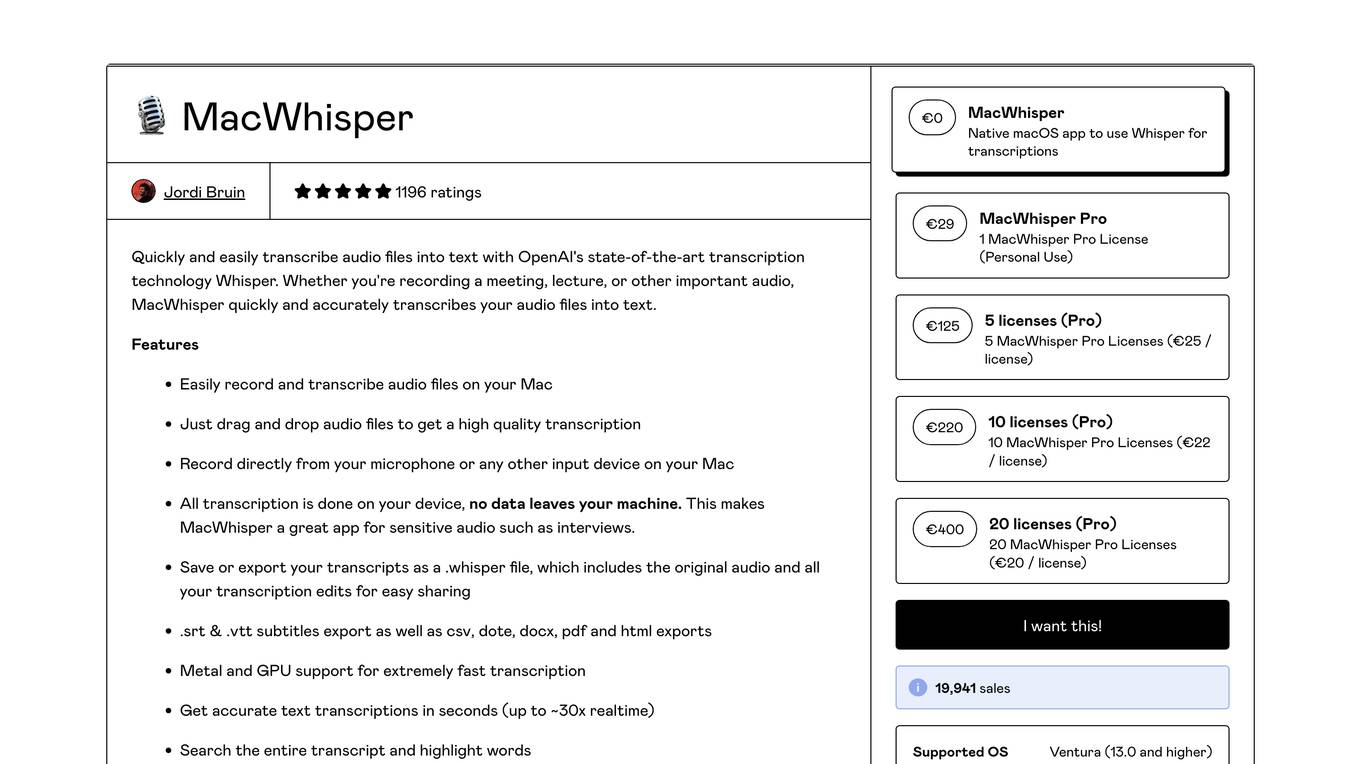

MacWhisper

MacWhisper is a native macOS application that uses OpenAI's Whisper technology to transcribe audio files into text. It offers a user-friendly interface for recording, transcribing, and editing audio, which makes it suitable for transcribing meetings, lectures, interviews, and podcasts. To protect user privacy, the application performs all transcription locally on the device, so no data leaves the user's machine.

Toggle Terminal

Toggle Terminal is an AI-powered platform for exploring data through natural language. It wraps a suite of award-winning analytic tools in an accessible, natural language-based user experience: users ask questions in plain language and receive immediate, data-backed answers without coding or spreadsheet manipulation. Toggle Terminal provides institutional-grade analytical tools for scenario testing, asset intelligence, chart exploration, and idea discovery. It helps users connect data, test market hypotheses, screen securities, and explore hidden relationships between organizations. Toggle AI also offers customized AI solutions and integrations for institutional investors in asset management and capital markets.

1 - Open Source AI Tools

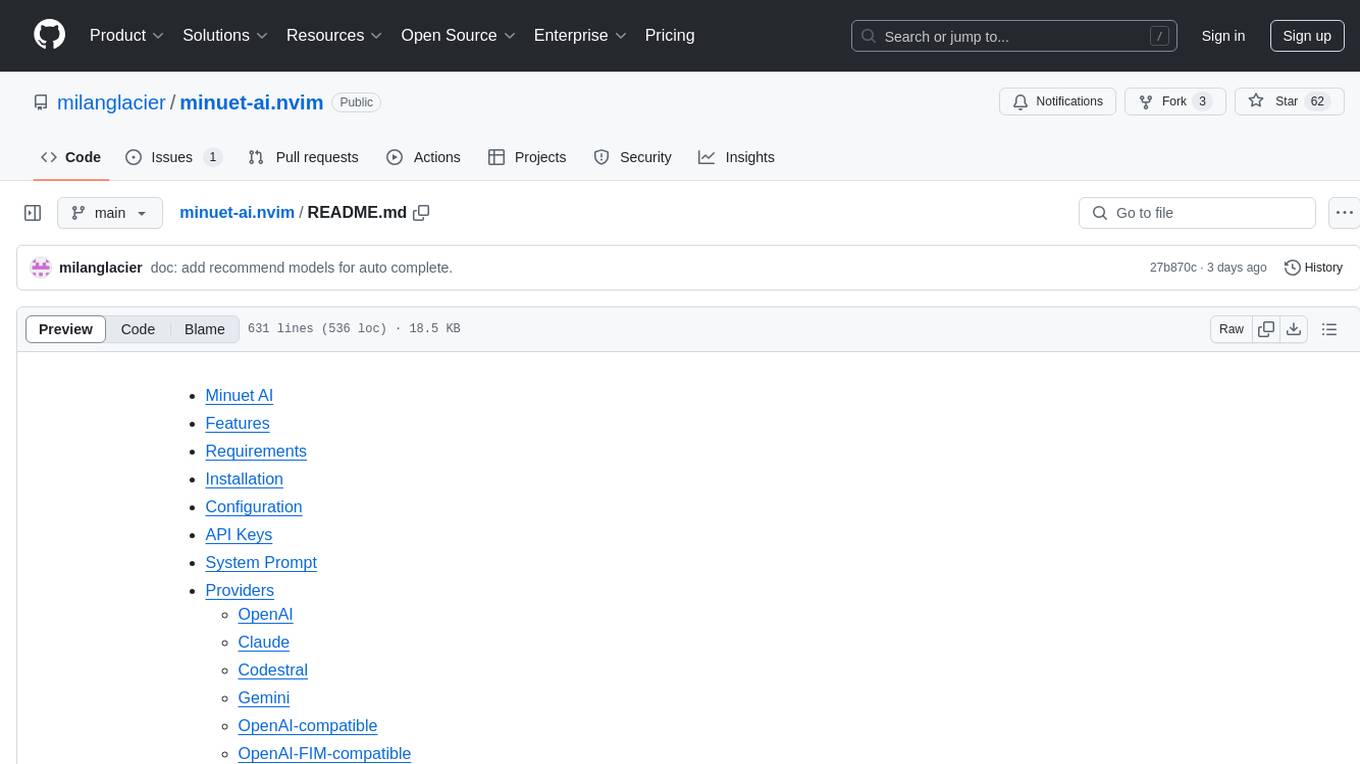

minuet-ai.nvim

Minuet AI is a Neovim plugin that integrates with nvim-cmp to provide AI-powered code completion using multiple AI providers such as OpenAI, Claude, Gemini, Codestral, and Hugging Face. It offers customizable configuration options and streaming support for completion delivery. Users can invoke completion manually or use cost-effective models for auto-completion. The plugin requires API keys for the supported AI providers and allows customization of system prompts. Minuet AI also supports changing providers and toggling auto-completion, and it provides solutions for input delay issues. Integration with LazyVim is possible, and future plans include implementing RAG on the codebase and virtual text UI support.

1 - OpenAI GPTs

Time Tracker Visualizer (See Stats from Toggl)

I turn Toggl data into insightful visuals. Get your data from Settings (in Toggl Track) -> Data Export -> Export Time Entries. Ask for bonus analyses and plots :)
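For readers who want a quick look at an exported file before handing it to the GPT, the sketch below sums tracked time per project. It is only an illustration and not how the GPT itself works. It assumes the export is a CSV with a `Project` column and a `Duration` column formatted as `HH:MM:SS`; the column names are parameters in case your export differs. The function names and the sample rows are hypothetical.

```python
import csv
import io
from collections import defaultdict


def parse_duration(text):
    """Convert an 'HH:MM:SS' string into a number of seconds."""
    hours, minutes, seconds = (int(part) for part in text.strip().split(":"))
    return hours * 3600 + minutes * 60 + seconds


def hours_per_project(csv_file, project_col="Project", duration_col="Duration"):
    """Sum tracked time per project and return it as hours.

    The column names are assumptions about the export layout;
    pass different ones if your file uses other headers.
    """
    totals = defaultdict(int)
    for row in csv.DictReader(csv_file):
        # Entries without a project are grouped under a placeholder name.
        project = row.get(project_col) or "(no project)"
        totals[project] += parse_duration(row[duration_col])
    return {name: secs / 3600 for name, secs in totals.items()}


# Hypothetical sample rows standing in for an exported file.
SAMPLE = """Project,Description,Duration
Writing,Draft outline,01:30:00
Writing,Edit chapter,00:45:00
Admin,Email,00:15:00
"""

if __name__ == "__main__":
    for name, hours in sorted(hours_per_project(io.StringIO(SAMPLE)).items()):
        print(f"{name}: {hours:.2f} h")
```

To run it on a real export, open the file with `open(path, newline="", encoding="utf-8")` and pass the file object to `hours_per_project`.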