Best AI tools for< manage test results >

20 - AI tool Sites

Teste.ai

Teste.ai is an AI-powered software testing tool that helps testers create test plans, scenarios, and test cases faster and more efficiently. With Teste.ai, testers can leverage AI to generate test data, create queries for specific data sets, and perform various types of testing, including API, functional, security, and performance testing. The tool also offers collaboration features, allowing teams to share test plans, documentation, and results.

MacWhisper

MacWhisper is a native macOS application that utilizes OpenAI's Whisper technology for transcribing audio files into text. It offers a user-friendly interface for recording, transcribing, and editing audio, making it suitable for various use cases such as transcribing meetings, lectures, interviews, and podcasts. The application is designed to protect user privacy by performing all transcriptions locally on the device, ensuring that no data leaves the user's machine.

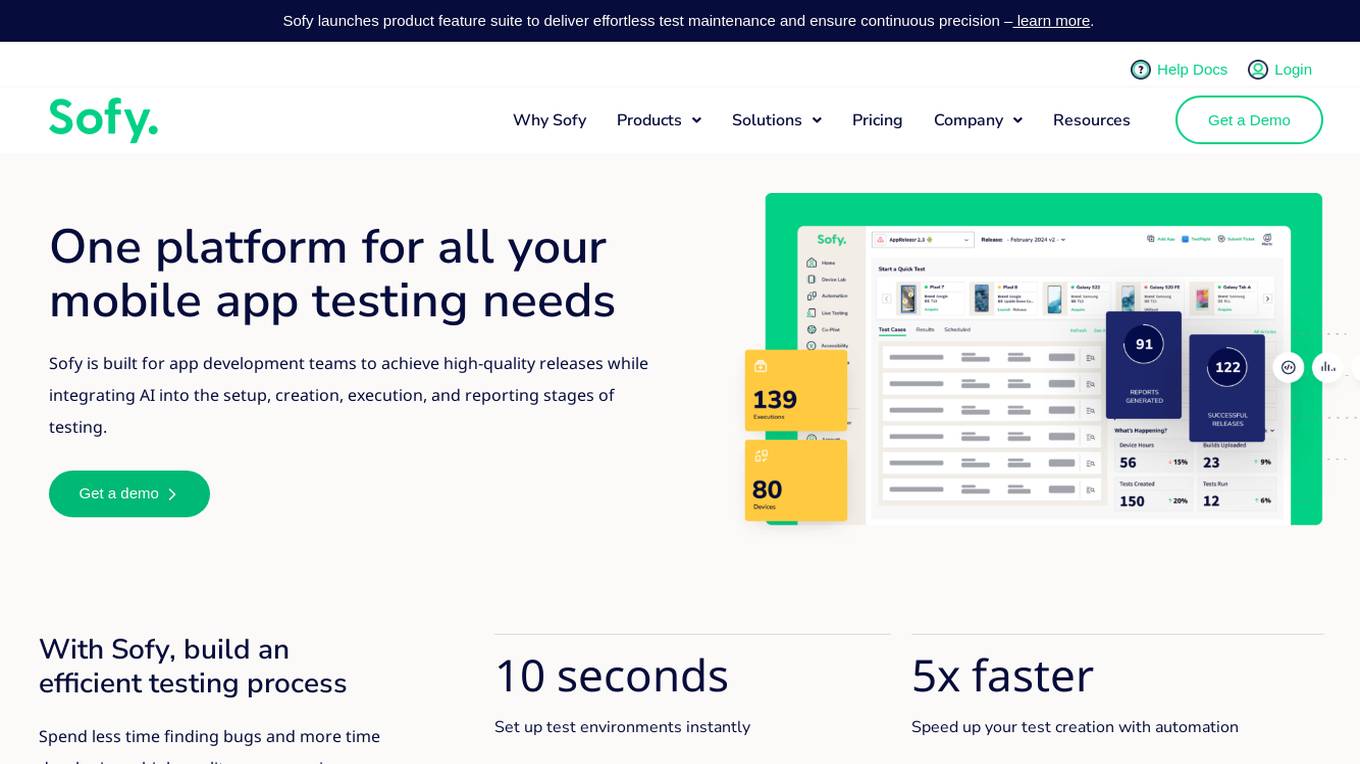

Sofy

Sofy is a revolutionary no-code testing platform for mobile applications that integrates AI to streamline the testing process. It offers features such as manual and ad-hoc testing, no-code automation, AI-powered test case generation, and real device testing. Sofy helps app development teams achieve high-quality releases by simplifying test maintenance and ensuring continuous precision. With a focus on efficiency and user experience, Sofy is trusted by top industries for its all-in-one testing solution.

Applitools

Applitools is an AI-powered test automation platform that helps businesses improve the quality of their digital experiences. It uses visual AI to validate user interfaces across any type of screen or device, and it can be deployed on-prem, in the cloud, or as a SaaS solution. Applitools integrates with all of the major development tools and workflows, and it offers a wide range of features and advantages that can help businesses save time and money while improving the quality of their software.

ILoveMyQA

ILoveMyQA is an AI-powered QA testing service that provides comprehensive, well-documented bug reports. The service is affordable, easy to get started with, and requires no time-zapping chats. ILoveMyQA's team of Rockstar QAs is dedicated to helping businesses find and fix bugs before their customers do, so they can enjoy the results and benefits of having a QA team without the cost, management, and headaches.

TestMarket

TestMarket is an AI-powered sales optimization platform for online marketplace sellers. It offers a range of services to help sellers increase their visibility, boost sales, and improve their overall performance on marketplaces such as Amazon, Etsy, and Walmart. TestMarket's services include product promotion, keyword analysis, Google Ads and SEO optimization, and advertising optimization.

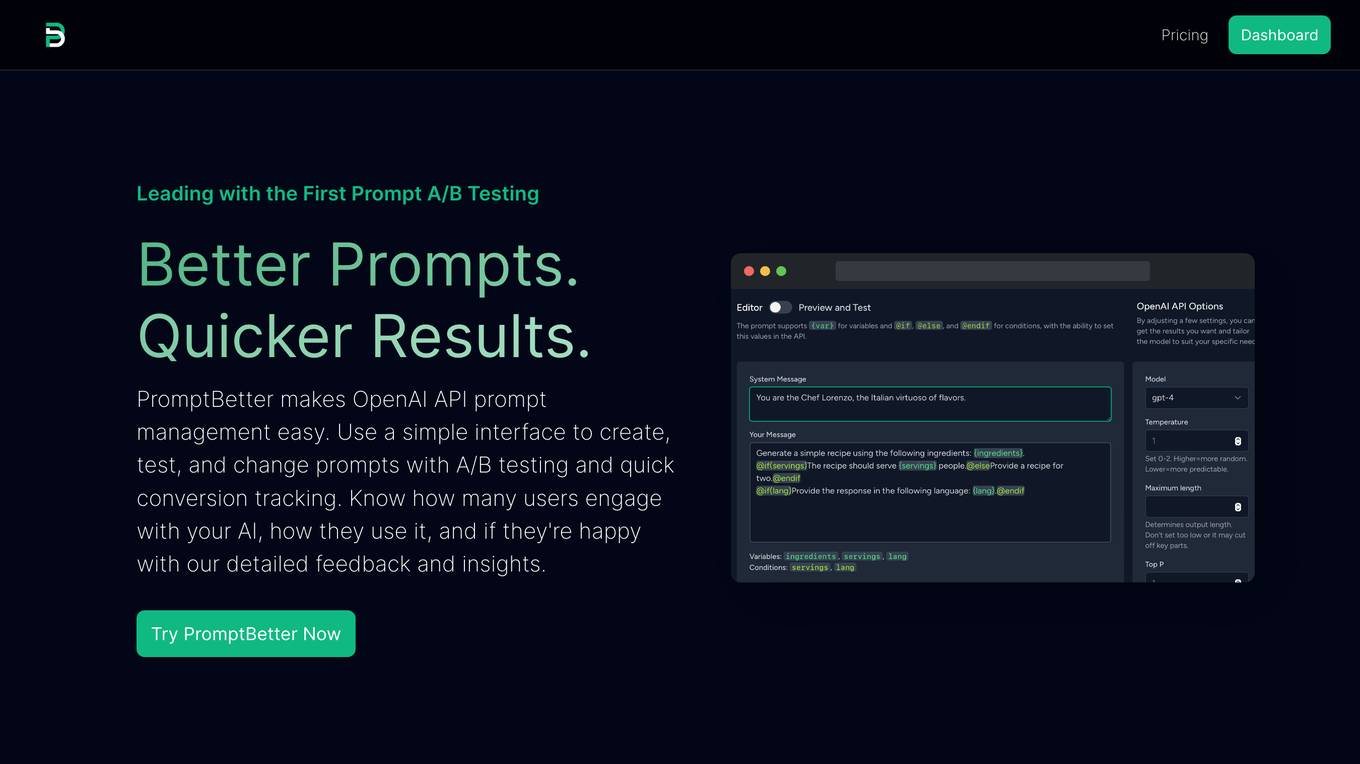

PromptBetter

PromptBetter is a tool that helps you optimize your OpenAI API prompts with advanced A/B testing and insights. It provides a simple interface to create, test, and change prompts, and it tracks conversions and user feedback so you can see how your prompts are performing. PromptBetter also offers in-depth insights into your OpenAI API usage, so you can understand how and by whom it is being used.

Phrasee

Phrasee is a generative AI platform that helps enterprise marketers create and optimize marketing messages across various channels, including email, SMS, push notifications, web and app, and social media. It uses AI to generate billions of marketing messages tailored to specific audiences and brands, ensuring consistent experiences and maximizing customer engagement. Phrasee's platform provides marketers with tools for testing, optimizing, and personalizing content, enabling them to improve performance, conversions, and ROI.

AI Wordle

This website offers a game where users can play Wordle against an AI. The goal of the game is to guess a 5-letter word in six tries or less. The AI uses Chat GPT to try to guess the word in as few tries as possible. Users can also view a scoreboard to see how they compare to other players.

LLM Clash

LLM Clash is a web-based application that allows users to compare the outputs of different large language models (LLMs) on a given task. Users can input a prompt and select which LLMs they want to compare. The application will then display the outputs of the LLMs side-by-side, allowing users to compare their strengths and weaknesses.

Prototyper

Prototyper is an AI-powered tool that helps you create prototypes of your ideas quickly and easily. With Prototyper, you can describe your idea in simple text, and the AI will generate the code for you. You can then test your prototype and iterate on it until you're happy with the results.

Intellimize

Intellimize is an AI-driven website optimization and personalization platform that empowers businesses to create, test, and personalize website experiences using powerful AI technology. With features like AI-driven optimization, A/B testing, and rules-based personalization, Intellimize helps businesses drive 1:1 personalization at every touchpoint, making every marketing dollar count. The platform allows users to launch new ideas in minutes, run unlimited variations, and achieve results faster with generative AI providing copy suggestions. Intellimize also offers personalized experiences for every visitor, delivering 1:1 personalization without the need for first or third-party data. The platform enables users to easily understand the impact of their experiments across segments, providing measurable value for marketers.

A11YBoost

A11YBoost is an automated website accessibility monitoring and reporting tool that helps businesses improve the accessibility, performance, UX, design, and SEO of their websites. It provides instant and detailed accessibility reports that cover key issues, their impact, and how to fix them. The tool also offers analytics history to track progress over time and covers not just core accessibility issues but also performance, UX, design, and SEO. A11YBoost uses a unique blend of AI testing, traditional testing, and human expertise to deliver results and has an expanding test suite with 25+ tests across five categories.

Snaplet

Snaplet is a data management tool for developers that provides AI-generated dummy data for local development, end-to-end testing, and debugging. It uses a real programming language (TypeScript) to define and edit data, ensuring type safety and auto-completion. Snaplet understands database structures and relationships, automatically transforming personally identifiable information and seeding data accordingly. It integrates seamlessly into development workflows, providing data where it's needed most: on local machines, for CI/CD testing, and preview environments.

Fake Hacker News

Fake Hacker News is a website that allows users to submit fake Hacker News submissions. This can be useful for testing out how readers may respond to a post before submitting it to the real Hacker News website. The website is simple to use: users simply enter a title and some text, and then click the "submit" button. The website will then generate a fake Hacker News submission that looks like it was submitted by the user.

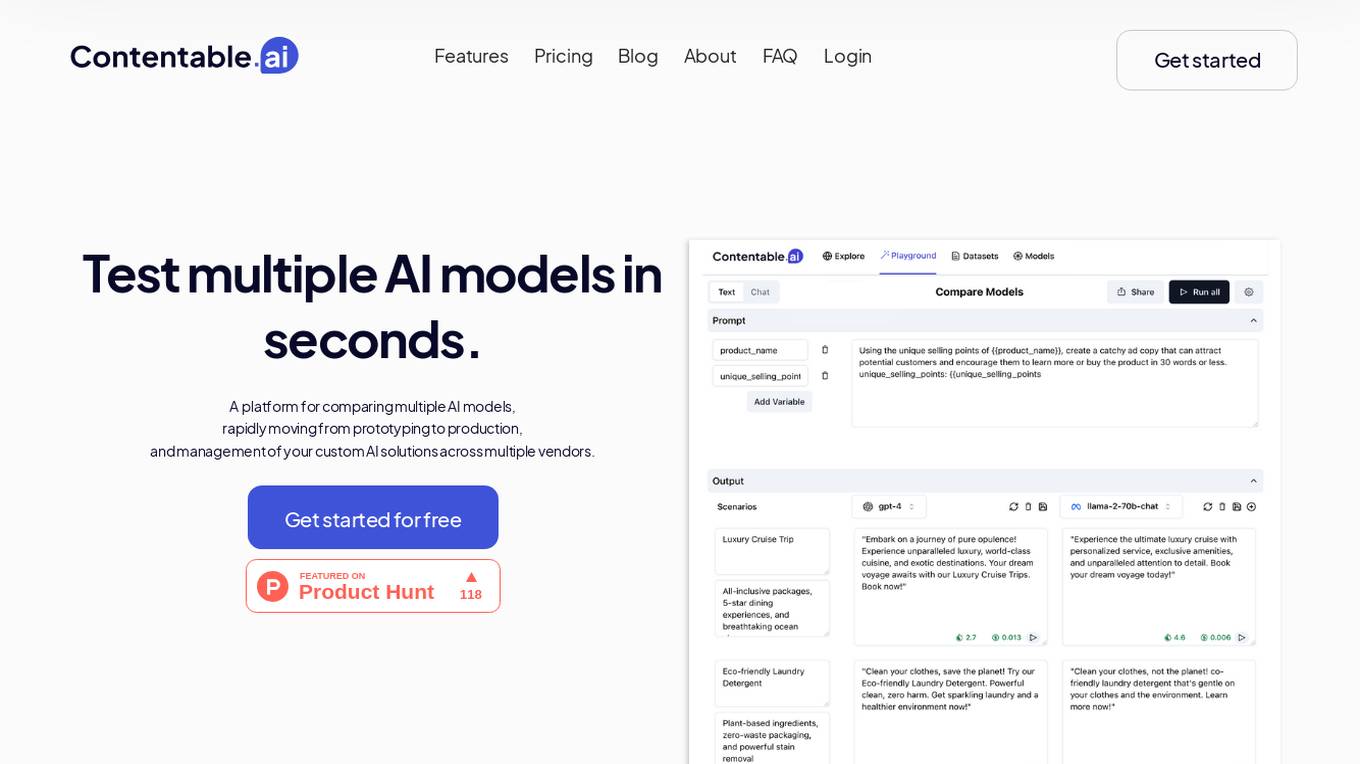

Contentable.ai

Contentable.ai is a platform for comparing multiple AI models, rapidly moving from prototyping to production, and management of your custom AI solutions across multiple vendors. It allows users to test multiple AI models in seconds, compare models side-by-side across top AI providers, collaborate on AI models with their team seamlessly, design complex AI workflows without coding, and pay as they go.

Promptitude.io

Promptitude.io is a platform that allows users to integrate GPT into their apps and workflows. It provides a variety of features to help users manage their prompts, personalize their AI responses, and publish their prompts for use by others. Promptitude.io also offers a library of pre-built prompts that users can use to get started quickly.

hirex.ai

hirex.ai is an AI-powered platform designed to make level one interviews inclusive and fair for all job candidates. It offers a voice AI platform for conducting interviews, screening candidates faster and more effectively using AI, ML, and NLP tools. The platform features AI-enabled talent management and engagement solutions, remote interviews with lip sync detection, WhatsApp chatbots for candidate engagement, interview reminders, coding assignments with AI-based proctoring, search and resume parsing, and ATS automation. hirex.ai aims to address common hiring challenges such as insufficient resources for interviews, misunderstanding of skill requirements, inconsistency in selection criteria, and interviewer bias. The platform leverages pre-trained Voice BOTs to conduct interviews and engage with candidates directly, helping organizations streamline their talent acquisition process.

Lunary

Lunary is an AI developer platform that helps you monitor, manage, and improve your LLM apps. With Lunary, you can log and debug LLM agents, stay on top of costs, optimize costs, debug complex agents, run benchmarks, test open-source models, replay user chats, and iterate on prompts. Lunary is easy to integrate with OpenAI and other LLM providers, and it's trusted by 1500+ AI developers at leading companies.

Compliance

Compliance is an Open Source Information Security Management System designed to help organizations manage and maintain compliance with industry regulations and standards. It provides a platform for tracking and documenting security policies, procedures, and controls to ensure adherence to best practices and legal requirements. With Compliance, users can streamline their compliance processes, improve security posture, and demonstrate regulatory compliance to stakeholders and auditors.

20 - Open Source AI Tools

deepeval

DeepEval is a simple-to-use, open-source LLM evaluation framework specialized for unit testing LLM outputs. It incorporates various metrics such as G-Eval, hallucination, answer relevancy, RAGAS, etc., and runs locally on your machine for evaluation. It provides a wide range of ready-to-use evaluation metrics, allows for creating custom metrics, integrates with any CI/CD environment, and enables benchmarking LLMs on popular benchmarks. DeepEval is designed for evaluating RAG and fine-tuning applications, helping users optimize hyperparameters, prevent prompt drifting, and transition from OpenAI to hosting their own Llama2 with confidence.

chatgpt-universe

ChatGPT is a large language model that can generate human-like text, translate languages, write different kinds of creative content, and answer your questions in a conversational way. It is trained on a massive amount of text data, and it is able to understand and respond to a wide range of natural language prompts. Here are 5 jobs suitable for this tool, in lowercase letters: 1. content writer 2. chatbot assistant 3. language translator 4. creative writer 5. researcher

dash-infer

DashInfer is a C++ runtime tool designed to deliver production-level implementations highly optimized for various hardware architectures, including x86 and ARMv9. It supports Continuous Batching and NUMA-Aware capabilities for CPU, and can fully utilize modern server-grade CPUs to host large language models (LLMs) up to 14B in size. With lightweight architecture, high precision, support for mainstream open-source LLMs, post-training quantization, optimized computation kernels, NUMA-aware design, and multi-language API interfaces, DashInfer provides a versatile solution for efficient inference tasks. It supports x86 CPUs with AVX2 instruction set and ARMv9 CPUs with SVE instruction set, along with various data types like FP32, BF16, and InstantQuant. DashInfer also offers single-NUMA and multi-NUMA architectures for model inference, with detailed performance tests and inference accuracy evaluations available. The tool is supported on mainstream Linux server operating systems and provides documentation and examples for easy integration and usage.

ollama-grid-search

A Rust based tool to evaluate LLM models, prompts and model params. It automates the process of selecting the best model parameters, given an LLM model and a prompt, iterating over the possible combinations and letting the user visually inspect the results. The tool assumes the user has Ollama installed and serving endpoints, either in `localhost` or in a remote server. Key features include: * Automatically fetches models from local or remote Ollama servers * Iterates over different models and params to generate inferences * A/B test prompts on different models simultaneously * Allows multiple iterations for each combination of parameters * Makes synchronous inference calls to avoid spamming servers * Optionally outputs inference parameters and response metadata (inference time, tokens and tokens/s) * Refetching of individual inference calls * Model selection can be filtered by name * List experiments which can be downloaded in JSON format * Configurable inference timeout * Custom default parameters and system prompts can be defined in settings

llm-jp-eval

LLM-jp-eval is a tool designed to automatically evaluate Japanese large language models across multiple datasets. It provides functionalities such as converting existing Japanese evaluation data to text generation task evaluation datasets, executing evaluations of large language models across multiple datasets, and generating instruction data (jaster) in the format of evaluation data prompts. Users can manage the evaluation settings through a config file and use Hydra to load them. The tool supports saving evaluation results and logs using wandb. Users can add new evaluation datasets by following specific steps and guidelines provided in the tool's documentation. It is important to note that using jaster for instruction tuning can lead to artificially high evaluation scores, so caution is advised when interpreting the results.

OSWorld

OSWorld is a benchmarking tool designed to evaluate multimodal agents for open-ended tasks in real computer environments. It provides a platform for running experiments, setting up virtual machines, and interacting with the environment using Python scripts. Users can install the tool on their desktop or server, manage dependencies with Conda, and run benchmark tasks. The tool supports actions like executing commands, checking for specific results, and evaluating agent performance. OSWorld aims to facilitate research in AI by providing a standardized environment for testing and comparing different agent baselines.

rag-experiment-accelerator

The RAG Experiment Accelerator is a versatile tool that helps you conduct experiments and evaluations using Azure AI Search and RAG pattern. It offers a rich set of features, including experiment setup, integration with Azure AI Search, Azure Machine Learning, MLFlow, and Azure OpenAI, multiple document chunking strategies, query generation, multiple search types, sub-querying, re-ranking, metrics and evaluation, report generation, and multi-lingual support. The tool is designed to make it easier and faster to run experiments and evaluations of search queries and quality of response from OpenAI, and is useful for researchers, data scientists, and developers who want to test the performance of different search and OpenAI related hyperparameters, compare the effectiveness of various search strategies, fine-tune and optimize parameters, find the best combination of hyperparameters, and generate detailed reports and visualizations from experiment results.

llm-swarm

llm-swarm is a tool designed to manage scalable open LLM inference endpoints in Slurm clusters. It allows users to generate synthetic datasets for pretraining or fine-tuning using local LLMs or Inference Endpoints on the Hugging Face Hub. The tool integrates with huggingface/text-generation-inference and vLLM to generate text at scale. It manages inference endpoint lifetime by automatically spinning up instances via `sbatch`, checking if they are created or connected, performing the generation job, and auto-terminating the inference endpoints to prevent idling. Additionally, it provides load balancing between multiple endpoints using a simple nginx docker for scalability. Users can create slurm files based on default configurations and inspect logs for further analysis. For users without a Slurm cluster, hosted inference endpoints are available for testing with usage limits based on registration status.

HuggingFists

HuggingFists is a low-code data flow tool that enables convenient use of LLM and HuggingFace models. It provides functionalities similar to Langchain, allowing users to design, debug, and manage data processing workflows, create and schedule workflow jobs, manage resources environment, and handle various data artifact resources. The tool also offers account management for users, allowing centralized management of data source accounts and API accounts. Users can access Hugging Face models through the Inference API or locally deployed models, as well as datasets on Hugging Face. HuggingFists supports breakpoint debugging, branch selection, function calls, workflow variables, and more to assist users in developing complex data processing workflows.

holmesgpt

HolmesGPT is an open-source DevOps assistant powered by OpenAI or any tool-calling LLM of your choice. It helps in troubleshooting Kubernetes, incident response, ticket management, automated investigation, and runbook automation in plain English. The tool connects to existing observability data, is compliance-friendly, provides transparent results, supports extensible data sources, runbook automation, and integrates with existing workflows. Users can install HolmesGPT using Brew, prebuilt Docker container, Python Poetry, or Docker. The tool requires an API key for functioning and supports OpenAI, Azure AI, and self-hosted LLMs.

DB-GPT

DB-GPT is a personal database administrator that can solve database problems by reading documents, using various tools, and writing analysis reports. It is currently undergoing an upgrade. **Features:** * **Online Demo:** * Import documents into the knowledge base * Utilize the knowledge base for well-founded Q&A and diagnosis analysis of abnormal alarms * Send feedbacks to refine the intermediate diagnosis results * Edit the diagnosis result * Browse all historical diagnosis results, used metrics, and detailed diagnosis processes * **Language Support:** * English (default) * Chinese (add "language: zh" in config.yaml) * **New Frontend:** * Knowledgebase + Chat Q&A + Diagnosis + Report Replay * **Extreme Speed Version for localized llms:** * 4-bit quantized LLM (reducing inference time by 1/3) * vllm for fast inference (qwen) * Tiny LLM * **Multi-path extraction of document knowledge:** * Vector database (ChromaDB) * RESTful Search Engine (Elasticsearch) * **Expert prompt generation using document knowledge** * **Upgrade the LLM-based diagnosis mechanism:** * Task Dispatching -> Concurrent Diagnosis -> Cross Review -> Report Generation * Synchronous Concurrency Mechanism during LLM inference * **Support monitoring and optimization tools in multiple levels:** * Monitoring metrics (Prometheus) * Flame graph in code level * Diagnosis knowledge retrieval (dbmind) * Logical query transformations (Calcite) * Index optimization algorithms (for PostgreSQL) * Physical operator hints (for PostgreSQL) * Backup and Point-in-time Recovery (Pigsty) * **Continuously updated papers and experimental reports** This project is constantly evolving with new features. Don't forget to star ⭐ and watch 👀 to stay up to date.

qb

QANTA is a system and dataset for question answering tasks. It provides a script to download datasets, preprocesses questions, and matches them with Wikipedia pages. The system includes various datasets, training, dev, and test data in JSON and SQLite formats. Dependencies include Python 3.6, `click`, and NLTK models. Elastic Search 5.6 is needed for the Guesser component. Configuration is managed through environment variables and YAML files. QANTA supports multiple guesser implementations that can be enabled/disabled. Running QANTA involves using `cli.py` and Luigi pipelines. The system accesses raw Wikipedia dumps for data processing. The QANTA ID numbering scheme categorizes datasets based on events and competitions.

model.nvim

model.nvim is a tool designed for Neovim users who want to utilize AI models for completions or chat within their text editor. It allows users to build prompts programmatically with Lua, customize prompts, experiment with multiple providers, and use both hosted and local models. The tool supports features like provider agnosticism, programmatic prompts in Lua, async and multistep prompts, streaming completions, and chat functionality in 'mchat' filetype buffer. Users can customize prompts, manage responses, and context, and utilize various providers like OpenAI ChatGPT, Google PaLM, llama.cpp, ollama, and more. The tool also supports treesitter highlights and folds for chat buffers.

instructor

Instructor is a Python library that makes it a breeze to work with structured outputs from large language models (LLMs). Built on top of Pydantic, it provides a simple, transparent, and user-friendly API to manage validation, retries, and streaming responses. Get ready to supercharge your LLM workflows!

pinecone-ts-client

The official Node.js client for Pinecone, written in TypeScript. This client library provides a high-level interface for interacting with the Pinecone vector database service. With this client, you can create and manage indexes, upsert and query vector data, and perform other operations related to vector search and retrieval. The client is designed to be easy to use and provides a consistent and idiomatic experience for Node.js developers. It supports all the features and functionality of the Pinecone API, making it a comprehensive solution for building vector-powered applications in Node.js.

llm-course

The LLM course is divided into three parts: 1. 🧩 **LLM Fundamentals** covers essential knowledge about mathematics, Python, and neural networks. 2. 🧑🔬 **The LLM Scientist** focuses on building the best possible LLMs using the latest techniques. 3. 👷 **The LLM Engineer** focuses on creating LLM-based applications and deploying them. For an interactive version of this course, I created two **LLM assistants** that will answer questions and test your knowledge in a personalized way: * 🤗 **HuggingChat Assistant**: Free version using Mixtral-8x7B. * 🤖 **ChatGPT Assistant**: Requires a premium account. ## 📝 Notebooks A list of notebooks and articles related to large language models. ### Tools | Notebook | Description | Notebook | |----------|-------------|----------| | 🧐 LLM AutoEval | Automatically evaluate your LLMs using RunPod |  | | 🥱 LazyMergekit | Easily merge models using MergeKit in one click. |  | | 🦎 LazyAxolotl | Fine-tune models in the cloud using Axolotl in one click. |  | | ⚡ AutoQuant | Quantize LLMs in GGUF, GPTQ, EXL2, AWQ, and HQQ formats in one click. |  | | 🌳 Model Family Tree | Visualize the family tree of merged models. |  | | 🚀 ZeroSpace | Automatically create a Gradio chat interface using a free ZeroGPU. |  |

free-for-life

A massive list including a huge amount of products and services that are completely free! ⭐ Star on GitHub • 🤝 Contribute # Table of Contents * APIs, Data & ML * Artificial Intelligence * BaaS * Code Editors * Code Generation * DNS * Databases * Design & UI * Domains * Email * Font * For Students * Forms * Linux Distributions * Messaging & Streaming * PaaS * Payments & Billing * SSL

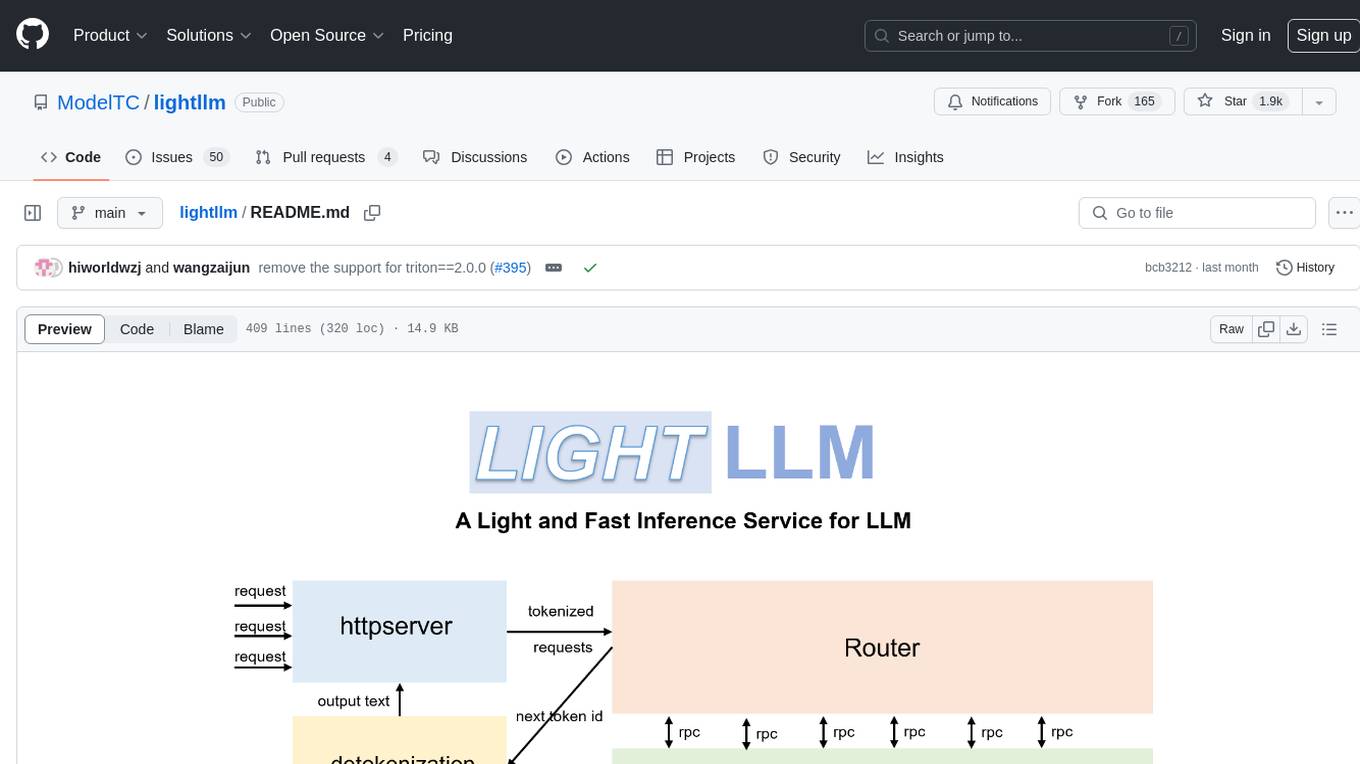

lightllm

LightLLM is a Python-based LLM (Large Language Model) inference and serving framework known for its lightweight design, scalability, and high-speed performance. It offers features like tri-process asynchronous collaboration, Nopad for efficient attention operations, dynamic batch scheduling, FlashAttention integration, tensor parallelism, Token Attention for zero memory waste, and Int8KV Cache. The tool supports various models like BLOOM, LLaMA, StarCoder, Qwen-7b, ChatGLM2-6b, Baichuan-7b, Baichuan2-7b, Baichuan2-13b, InternLM-7b, Yi-34b, Qwen-VL, Llava-7b, Mixtral, Stablelm, and MiniCPM. Users can deploy and query models using the provided server launch commands and interact with multimodal models like QWen-VL and Llava using specific queries and images.

20 - OpenAI Gpts

Expert Testers

Chat with Software Testing Experts. Ping Jason if you won't want to be an expert or have feedback.

Medical Lab Tests Advisor

Describe your medical signs and symptoms. Optionally also list any applicable known lab test results. Further lab tests will be recommended. Any web searches may be requested explicitly. Extra tests by these providers may also be requested explicitly: QuestHealth, WalkInLab, RadiologyAssist

Agriculture Mentor

A knowledgeable agriculture mentor, providing practical farming advice.

A/B Test GPT

Calculate the results of your A/B test and check whether the result is statistically significant or due to chance.

Test Case GPT

I will provide guidance on testing, verification, and validation for QA roles.

Test Shaman

Test Shaman: Guiding software testing with Grug wisdom and humor, balancing fun with practical advice.

Product Testing Advisor

Ensures product quality through rigorous, systematic testing processes.

Immersive Experience Designer

This GPT Helps to Brainstorm Immersive Experience Ideas Using the TISECT Method

Game QA Strategist

Advises on QA tests based on recent game code changes, including git history. Learn more at regression.gg

AI powered Tech Company

A replacement to your Product Manager, Engineering Manager, and your Average Developer and Tester

Project-IGI

This is a project IGI knowledge bot knows about Levels,Graps,QVM,Natives and more

Feature Ticket Generator

This GPT writes tickets for software features. It uses Gherkin to specify scenarios. @cxmacedo