warren

AI-powered security alert management that reduces noise and accelerates response time

Stars: 87

Warren is an open-source security alert management system that automates the tedious parts of alert triage. It ingests alerts from existing tools, enriches them with AI and threat intelligence, and helps analysts focus on actual incidents instead of noise. Key features include webhook-based ingestion, policy-driven processing, vector similarity clustering, LLM-powered analysis, API-first design, and flexible deployment. The tool provides a web UI with an alert timeline view, ticket workflow, interactive AI chat, and export capabilities. The integration architecture includes webhook receivers, asynchronous processing support, a Slack bot, and a GraphQL API. The policy engine supports Rego-based alert policies, authorization policies, and a test framework for validation. Warren is suitable for security analysts, incident responders, threat hunters, SOC analysts, and security engineers.

README:

Warren is an open-source security alert management system that automates the tedious parts of alert triage. It ingests alerts from your existing tools, enriches them with AI and threat intelligence, and helps you focus on actual incidents instead of noise.

Key technical features:

- Webhook-based ingestion: Simple HTTP endpoints for any alert source (no agents required)

- Policy-driven processing: Write Rego policies to filter and transform alerts before they hit your queue

- Vector similarity clustering: Automatically groups related alerts using embeddings

- LLM-powered analysis: Uses a large language model (LLM) to generate human-readable summaries and extract IOCs

- API-first design: GraphQL API for custom integrations, Slack bot for notifications

- Flexible deployment: Runs anywhere from local Docker to Kubernetes, with optional cloud services

- Parallel enrichment - queries multiple threat intel APIs (OTX, VirusTotal, AbuseIPDB, etc.)

- Structured extraction - LLM extracts IOCs, TTPs, and risk indicators into queryable fields

- React-based Web UI - real-time dashboard, ticket management, and WebSocket-based AI chat

- DBSCAN clustering with cosine similarity on Gemini embeddings (256-dim vectors)

Dashboard view: Similar alerts are automatically grouped using DBSCAN clustering on embedding vectors
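As a rough illustration of the clustering idea, here is a minimal pure-Python sketch of DBSCAN using cosine distance. It is not Warren's implementation: the toy 3-dimensional vectors stand in for the 256-dimensional Gemini embeddings, and the `eps` and `min_samples` values are assumptions chosen for the example.

```python
import math


def cosine_distance(a, b):
    """Return 1 - cosine similarity of two equal-length, non-zero vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return 1.0 - dot / norm


def dbscan(vectors, eps=0.1, min_samples=2):
    """Label each vector with a cluster id (0, 1, ...) or -1 for noise."""
    n = len(vectors)
    # Neighbourhood of each point: indices within eps, including the point itself.
    neighbours = [
        [j for j in range(n) if cosine_distance(vectors[i], vectors[j]) <= eps]
        for i in range(n)
    ]
    labels = [None] * n  # None means "not visited yet"
    cluster = -1
    for i in range(n):
        if labels[i] is not None:
            continue
        if len(neighbours[i]) < min_samples:
            labels[i] = -1  # provisional noise; may still become a border point
            continue
        cluster += 1
        labels[i] = cluster
        queue = list(neighbours[i])
        while queue:
            j = queue.pop()
            if labels[j] == -1:
                labels[j] = cluster  # border point reached from a core point
            if labels[j] is not None:
                continue
            labels[j] = cluster
            if len(neighbours[j]) >= min_samples:
                queue.extend(neighbours[j])  # j is a core point: keep expanding
    return labels


# Toy "embeddings" for five alerts (illustrative values only).
embeddings = [
    [1.0, 0.0, 0.1],  # SSH brute force, host A
    [0.9, 0.1, 0.1],  # SSH brute force, host B
    [0.0, 1.0, 0.0],  # S3 bucket deletion
    [0.1, 0.9, 0.0],  # S3 bucket deletion, other account
    [0.0, 0.0, 1.0],  # unrelated alert
]
labels = dbscan(embeddings)
print(labels)  # [0, 0, 1, 1, -1]
```

Alerts labelled -1 did not have enough close neighbours to form a group and would be shown on their own.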

The Web UI provides:

- Alert timeline view with clustering visualization

- Ticket workflow - create, assign, and track incident tickets

- Interactive AI chat - ask questions about specific alerts in natural language

- Export capabilities - download alert data as JSONL for further analysis
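A JSONL export can be processed one line at a time with the standard library. The sketch below is only an illustration: the sample records and their field names (`title`, `source_ip`) are assumptions borrowed from the test alert in this README, not a documented export schema.

```python
import io
import json
from collections import Counter


def read_jsonl(stream):
    """Yield one dict per non-empty line of a JSONL stream."""
    for line in stream:
        line = line.strip()
        if line:
            yield json.loads(line)


# Hypothetical export content; a real export would be opened with open(path).
sample = io.StringIO(
    '{"title": "SSH brute force", "source_ip": "45.227.255.100"}\n'
    '{"title": "SSH brute force", "source_ip": "45.227.255.101"}\n'
    '{"title": "Suspicious AWS Activity: StopLogging", "source_ip": "10.1.2.3"}\n'
)

# Count alerts per title to see which alert types dominate the export.
counts = Counter(alert["title"] for alert in read_jsonl(sample))
print(counts.most_common(1))  # [('SSH brute force', 2)]
```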

- Webhook receivers at /hooks/alert/{raw,sns,pubsub}/{schema} for any alert format

- Asynchronous processing support - return HTTP 200 immediately while processing alerts in background

- Slack bot with interactive components (buttons, modals) for ticket management

- GraphQL API with DataLoader for efficient queries and real-time subscriptions
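The asynchronous processing pattern mentioned in the list above (acknowledge first, process later) can be sketched with a queue and a background worker. This is a generic illustration of the pattern, not Warren's code; `handle_webhook`, `worker`, and the `status` field are hypothetical names.

```python
import queue
import threading

pending = queue.Queue()
processed = []


def handle_webhook(body):
    """Accept an alert: enqueue it and acknowledge right away."""
    pending.put(body)
    return 200  # the sender gets its response before any processing happens


def worker():
    """Drain the queue in the background; None is the shutdown signal."""
    while True:
        alert = pending.get()
        if alert is None:
            break
        # Placeholder for the slow part (policy evaluation, enrichment, LLM calls).
        processed.append({**alert, "status": "processed"})


thread = threading.Thread(target=worker, daemon=True)
thread.start()

status = handle_webhook({"title": "SSH brute force"})
pending.put(None)  # stop the worker once the queued alert has been handled
thread.join()
print(status, processed)
```

The trade-off is that the sender no longer learns about processing failures from the HTTP response, so errors need to be surfaced some other way (logs, metrics, or a notification).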

Slack integration: Alerts arrive with threat intel enrichment and one-click ticket creation

- Rego-based alert policies - transform, filter, or multiply alerts before processing

- Authorization policies - fine-grained access control with environment context

- Test framework - validate policies with sample inputs before deployment

Example policy to filter and enrich alerts:

    package alert.cloudtrail

    # Emit one alert per CloudTrail record with a sensitive event name
    alert contains {
        "title": sprintf("Suspicious AWS Activity: %s", [event.eventName]),
        "description": sprintf("%s in %s by %s", [
            event.eventName,
            event.awsRegion,
            event.userIdentity.userName
        ]),
        "attrs": [
            {
                "key": "event_name",
                "value": event.eventName,
                "link": ""
            },
            {
                "key": "source_ip",
                "value": event.sourceIPAddress,
                "link": sprintf("https://www.abuseipdb.com/check/%s", [event.sourceIPAddress])
            }
        ]
    } if {
        event := input.Records[_]
        event.eventName in ["DeleteBucket", "StopLogging", "DeleteTrail"]
        not ignore
    }

    # Ignore events from trusted IPs (true if any record in the input matches)
    ignore if {
        event := input.Records[_]
        event.sourceIPAddress in ["10.0.0.1", "192.168.1.100"]
    }

To run Warren locally with Docker:

    # Prerequisites
    export PROJECT_ID=your-gcp-project
    gcloud auth application-default login
    gcloud services enable aiplatform.googleapis.com --project="$PROJECT_ID"

    # Run Warren (in-memory storage, no auth)
    docker run -d -p 8080:8080 \
      -v ~/.config/gcloud:/home/nonroot/.config/gcloud:ro \
      -e WARREN_GEMINI_PROJECT_ID="$PROJECT_ID" \
      -e WARREN_NO_AUTHENTICATION=true \
      -e WARREN_NO_AUTHORIZATION=true \
      -e WARREN_ADDR=0.0.0.0:8080 \
      ghcr.io/secmon-lab/warren:latest serve

    # Send test alert
    curl -X POST http://localhost:8080/hooks/alert/raw/test \
      -H "Content-Type: application/json" \
      -d '{"title": "SSH brute force", "source_ip": "45.227.255.100"}'

Visit http://localhost:8080 to access the dashboard.
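The same test alert can be sent from a script instead of curl. The sketch below uses only the Python standard library and the endpoint shown in the curl command; the helper name `build_alert_request` is hypothetical, and the actual send is left commented out so the snippet runs without a local Warren instance.

```python
import json
import urllib.request


def build_alert_request(base_url, schema, alert):
    """Build (but do not send) a POST to the raw webhook receiver."""
    return urllib.request.Request(
        url=f"{base_url}/hooks/alert/raw/{schema}",
        data=json.dumps(alert).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


req = build_alert_request(
    "http://localhost:8080",
    "test",
    {"title": "SSH brute force", "source_ip": "45.227.255.100"},
)
print(req.get_method(), req.full_url)

# Uncomment once Warren is running locally:
# with urllib.request.urlopen(req, timeout=10) as resp:
#     print(resp.status)
```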

- Alert Sources: AWS GuardDuty, Suricata, SIEM webhooks, Custom apps

- Threat Intel: VirusTotal, AlienVault OTX, URLScan, Shodan, AbuseIPDB

- Tools: BigQuery, Slack Message Search, GitHub (via GitHub App)

- Collaboration: Slack (native bot), GraphQL API, REST webhooks

- Infrastructure: Google Cloud (Vertex AI, Firestore), Docker, Kubernetes
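Querying several threat intel sources in parallel, as the feature list describes, can be sketched with a thread pool. The three lookup functions below are hypothetical stand-ins that return canned data (one simulates a timeout); they are not real client APIs for OTX, VirusTotal, or AbuseIPDB, and this is not Warren's implementation.

```python
from concurrent.futures import ThreadPoolExecutor


# Hypothetical stand-ins for real threat intel clients. A real lookup would
# call the provider's HTTP API with an API key.
def lookup_otx(ip):
    return {"pulses": 3}


def lookup_virustotal(ip):
    return {"malicious_votes": 7}


def lookup_abuseipdb(ip):
    raise TimeoutError("simulated provider timeout")


SOURCES = {
    "otx": lookup_otx,
    "virustotal": lookup_virustotal,
    "abuseipdb": lookup_abuseipdb,
}


def enrich(ip, sources=SOURCES):
    """Query every source concurrently; one failing source does not block the rest."""
    results = {}
    with ThreadPoolExecutor(max_workers=len(sources)) as pool:
        futures = {name: pool.submit(fn, ip) for name, fn in sources.items()}
        for name, future in futures.items():
            try:
                results[name] = future.result(timeout=5)
            except Exception as exc:  # record the failure instead of aborting
                results[name] = {"error": str(exc)}
    return results


enrichment = enrich("45.227.255.100")
print(enrichment)
```

Recording per-source errors rather than raising keeps a slow or unavailable provider from hiding the results of the others.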

- Getting Started - Your first alert in 5 minutes

- User Guide - Day-to-day operations

- Policy Guide - Custom detection rules

- Architecture - Technical deep dive

We welcome contributions! See the Contributing Guide.

Apache 2.0 License

Similar Open Source Tools

CyberStrikeAI

CyberStrikeAI is an AI-native security testing platform built in Go that integrates 100+ security tools, an intelligent orchestration engine, role-based testing with predefined security roles, a skills system with specialized testing skills, and comprehensive lifecycle management capabilities. It enables end-to-end automation from conversational commands to vulnerability discovery, attack-chain analysis, knowledge retrieval, and result visualization, delivering an auditable, traceable, and collaborative testing environment for security teams. The platform features an AI decision engine with OpenAI-compatible models, native MCP implementation with various transports, prebuilt tool recipes, large-result pagination, attack-chain graph, password-protected web UI, knowledge base with vector search, vulnerability management, batch task management, role-based testing, and skills system.

haiku.rag

Haiku RAG is a Retrieval-Augmented Generation (RAG) library that utilizes LanceDB as a local vector database. It supports semantic and full-text search, hybrid search with Reciprocal Rank Fusion, multiple embedding and QA providers, default search result reranking, question answering, file monitoring, and various file formats. It can be used via CLI or Python API, and can serve as tools for AI assistants like Claude Desktop. The library offers features for document management and search, with detailed documentation available.

Mira

Mira is an agentic AI library designed for automating company research by gathering information from various sources like company websites, LinkedIn profiles, and Google Search. It utilizes a multi-agent architecture to collect and merge data points into a structured profile with confidence scores and clear source attribution. The core library is framework-agnostic and can be integrated into applications, pipelines, or custom workflows. Mira offers features such as real-time progress events, confidence scoring, company criteria matching, and built-in services for data gathering. The tool is suitable for users looking to streamline company research processes and enhance data collection efficiency.

inference-gateway

The Inference Gateway is an open-source proxy server designed to simplify access to various language model APIs. It allows users to interact with different language models through a unified interface, stream tokens in real-time, process images alongside text, and use Docker or Kubernetes for deployment. The gateway supports Model Context Protocol integration, provides metrics and observability features, and is production-ready with minimal resource consumption. It offers middleware control and bypass mechanisms, enabling users to manage capabilities like MCP and vision support. The CLI tool provides status monitoring, interactive chat, configuration management, project initialization, and tool execution functionalities. The project aims to provide a flexible solution for AI Agents, supporting self-hosted LLMs and avoiding vendor lock-in.

Claw-Hunter

Claw Hunter is a discovery and risk-assessment tool for OpenClaw instances, designed to identify 'Shadow AI' and audit agent privileges. It helps ITSec teams detect security risks, credential exposure, integration inventory, configuration issues, and installation status. The tool offers system-agnostic visibility, MDM readiness, non-intrusive operations, comprehensive detection, structured output in JSON format, and zero dependencies. It provides silent execution mode for automated deployment, machine identification, security risk scoring, results upload to a central API endpoint, bearer token authentication support, and persistent logging. Claw Hunter offers proper exit codes for automation and is available for macOS, Linux, and Windows platforms.

fast-llm-security-guardrails

ZenGuard AI enables AI developers to integrate production-level, low-code LLM (Large Language Model) guardrails into their generative AI applications effortlessly. With ZenGuard AI, ensure your application operates within trusted boundaries, is protected from prompt injections, and maintains user privacy without compromising on performance.

strix

Strix is an open-source AI tool designed to help developers and security teams find and fix vulnerabilities in applications. It offers a full hacker toolkit, teams of autonomous AI agents for collaboration, real validation with proof-of-concepts, a developer-first CLI with actionable reports, and auto-fix & reporting features. Strix can be used for application security testing, rapid penetration testing, bug bounty automation, and CI/CD integration. It provides comprehensive vulnerability detection for various security issues and offers advanced multi-agent orchestration for security testing. The tool supports basic and advanced testing scenarios, headless mode for automated jobs, and CI/CD integration with GitHub Actions. Strix also offers configuration options for optimal performance and recommends specific AI models for best results. Full documentation is available at docs.strix.ai, and contributions to the project are welcome.

orbit

ORBIT (Open Retrieval-Based Inference Toolkit) is a middleware platform that provides a unified API for AI inference. It acts as a central gateway, allowing you to connect various local and remote AI models with your private data sources like SQL databases, vector stores, and local files. ORBIT uses a flexible adapter architecture to connect your data to AI models, creating specialized 'agents' for specific tasks. It supports scenarios like Knowledge Base Q&A and Chat with Your SQL Database, enabling users to interact with AI models seamlessly. The tool offers a RESTful API for programmatic access and includes features like authentication, API key management, system prompts, health monitoring, and file management. ORBIT is designed to streamline AI inference tasks and facilitate interactions between users and AI models.

indexify

Indexify is an open-source engine for building fast data pipelines for unstructured data (video, audio, images, and documents) using reusable extractors for embedding, transformation, and feature extraction. LLM applications can query the transformed content through semantic search and SQL queries. Indexify keeps vector databases and structured databases (PostgreSQL) updated by automatically invoking the pipelines as new data is ingested into the system from external data sources.

Why use Indexify:

- Makes unstructured data queryable with SQL and semantic search

- Real-time extraction engine keeps indexes automatically updated as new data is ingested

- Extraction graphs describe data transformation and the extraction of embeddings and structured data

- Incremental extraction and selective deletion when content is deleted or updated

- Extractor SDK allows adding new extraction capabilities, with many readily available extractors for PDF, image, and video indexing and extraction

- Works with any LLM framework, including Langchain, DSPy, etc.

- Runs on your laptop during prototyping and also scales to 1000s of machines on the cloud

- Works with many blob stores, vector stores, and structured databases

- Open-sourced automation for deploying to Kubernetes in production

tracecat

Tracecat is an open-source automation platform for security teams. It's designed to be simple but powerful, with a focus on AI features and a practitioner-obsessed UI/UX. Tracecat can be used to automate a variety of tasks, including phishing email investigation, evidence collection, and remediation plan generation.

ai

Jetify's AI SDK for Go is a unified interface for interacting with multiple AI providers including OpenAI, Anthropic, and more. It addresses the challenges of fragmented ecosystems, vendor lock-in, poor Go developer experience, and complex multi-modal handling by providing a unified interface, Go-first design, production-ready features, multi-modal support, and extensible architecture. The SDK supports language models, embeddings, image generation, multi-provider support, multi-modal inputs, tool calling, and structured outputs.

ComfyUI-Copilot

ComfyUI-Copilot is an intelligent assistant built on the Comfy-UI framework that simplifies and enhances the AI algorithm debugging and deployment process through natural language interactions. It offers intuitive node recommendations, workflow building aids, and model querying services to streamline development processes. With features like interactive Q&A bot, natural language node suggestions, smart workflow assistance, and model querying, ComfyUI-Copilot aims to lower the barriers to entry for beginners, boost development efficiency with AI-driven suggestions, and provide real-time assistance for developers.

Alice

Alice is an open-source AI companion designed to live on your desktop, providing voice interaction, intelligent context awareness, and powerful tooling. More than a chatbot, Alice is emotionally engaging and deeply useful, assisting with daily tasks and creative work. Key features include voice interaction with natural-sounding responses, memory and context management, vision and visual output capabilities, computer use tools, function calling for web search and task scheduling, wake word support, dedicated Chrome extension, and flexible settings interface. Technologies used include Vue.js, Electron, OpenAI, Go, hnswlib-node, and more. Alice is customizable and offers a dedicated Chrome extension, wake word support, and various tools for computer use and productivity tasks.

copilot-collections

Copilot Collections is an opinionated setup for GitHub Copilot tailored for delivery teams. It provides shared workflows, specialized agents, task prompts, reusable skills, and MCP integrations to streamline the software development process. The focus is on building features while letting Copilot handle the glue. The setup requires a GitHub Copilot Pro license and VS Code version 1.109 or later. It supports a standard workflow of Research, Plan, Implement, and Review, with specialized flows for UI-heavy tasks and end-to-end testing. Agents like Architect, Business Analyst, Software Engineer, UI Reviewer, Code Reviewer, and E2E Engineer assist in different stages of development. Skills like Task Analysis, Architecture Design, Codebase Analysis, Code Review, and E2E Testing provide specialized domain knowledge and workflows. The repository also includes prompts and chat commands for various tasks, along with instructions for installation and configuration in VS Code.

For similar tasks

HydraDragonAntivirus

Hydra Dragon Antivirus is a comprehensive tool that combines dynamic and static analysis using Sandboxie for Windows with ClamAV, YARA-X, machine learning AI, behavior analysis, NLP-based detection, website signatures, Ghidra, and Snort. The tool provides a Machine Learning Malware and Benign Database for training, along with a guide for compiling from source. It offers features like Ghidra source code analysis, Java Development Kit setup, and detailed logs for malware detections. Users can join the Discord community server for support and follow specific guidelines for preparing the analysis environment. The tool emphasizes security measures such as cleaning up directories, avoiding sharing IP addresses, and ensuring ClamAV database installation. It also includes tips for effective analysis and troubleshooting common issues.

tegon

Tegon is an open-source AI-First issue tracking tool designed for engineering teams. It aims to simplify task management by leveraging AI and integrations to automate task creation, prioritize tasks, and enhance bug resolution. Tegon offers features like issues tracking, automatic title generation, AI-generated labels and assignees, custom views, and upcoming features like sprints and task prioritization. It integrates with GitHub, Slack, and Sentry to streamline issue tracking processes. Tegon also plans to introduce AI Agents like PR Agent and Bug Agent to enhance product management and bug resolution. Contributions are welcome, and the product is licensed under the MIT License.

composio

Composio is a production-ready toolset for AI agents that enables users to integrate AI agents with various agentic tools effortlessly. It provides support for over 100 tools across different categories, including popular software such as GitHub, Notion, Linear, Gmail, Slack, and more. Composio ensures managed authorization with support for six different authentication protocols, offering better agentic accuracy and ease of use. Users can easily extend Composio with additional tools, frameworks, and authorization protocols. The toolset is designed to be embeddable and pluggable, allowing for seamless integration and consistent user experience.

MLE-agent

MLE-Agent is an intelligent companion designed for machine learning engineers and researchers. It features autonomous baseline creation, integration with Arxiv and Papers with Code, smart debugging, file system organization, comprehensive tools integration, and an interactive CLI chat interface for seamless AI engineering and research workflows.

open-assistant-api

Open Assistant API is an open-source, self-hosted AI intelligent assistant API compatible with the official OpenAI interface. It supports integration with more commercial and private models, R2R RAG engine, internet search, custom functions, built-in tools, code interpreter, multimodal support, LLM support, and message streaming output. Users can deploy the service locally and expand existing features. The API provides user isolation based on tokens for SaaS deployment requirements and allows integration of various tools to enhance its capability to connect with the external world.

mastra

Mastra is an opinionated TypeScript framework designed to help users quickly build AI applications and features. It provides primitives such as workflows, agents, RAG, integrations, syncs, and evals. Users can run Mastra locally or deploy it to a serverless cloud. The framework supports various LLM providers and offers tools for building language models and workflows and for accessing knowledge bases. It includes features like durable graph-based state machines, retrieval-augmented generation, integrations, syncs, and automated tests for evaluating LLM outputs.

refact-lsp

Refact Agent is a small executable written in Rust as part of the Refact Agent project. It lives inside your IDE to keep AST and VecDB indexes up to date, supporting connection graphs between definitions and usages in popular programming languages. It functions as an LSP server, offering code completion, chat functionality, and integration with various tools like browsers, databases, and debuggers. Users can interact with it through a Text UI in the command line.

For similar jobs

tracecat

Tracecat is an open-source automation platform for security teams. It's designed to be simple but powerful, with a focus on AI features and a practitioner-obsessed UI/UX. Tracecat can be used to automate a variety of tasks, including phishing email investigation, evidence collection, and remediation plan generation.

beelzebub

Beelzebub is an advanced honeypot framework designed to provide a highly secure environment for detecting and analyzing cyber attacks. It offers a low-code approach for easy implementation and uses an OpenAI Generative Pre-trained Transformer (GPT) model to act as a virtualized Linux system. Key features include the GPT-backed Linux virtualization, SSH honeypot, HTTP honeypot, TCP honeypot, Prometheus OpenMetrics integration, Docker integration, RabbitMQ integration, and Kubernetes support. Beelzebub allows easy configuration for different services and ports, enabling users to create custom honeypot scenarios. The roadmap includes developing Beelzebub into a robust PaaS platform. The project welcomes contributions and encourages adherence to the Code of Conduct for a supportive and respectful community.

admyral

Admyral is an open-source Cybersecurity Automation & Investigation Assistant that provides a unified console for investigations and incident handling, workflow automation creation, automatic alert investigation, and next step suggestions for analysts. It aims to tackle alert fatigue and automate security workflows effectively by offering features like workflow actions, AI actions, case management, alert handling, and more. Admyral combines security automation and case management to streamline incident response processes and improve overall security posture. The tool is open-source, transparent, and community-driven, allowing users to self-host, contribute, and collaborate on integrations and features.

galah

Galah is an LLM-powered web honeypot designed to mimic various applications and dynamically respond to arbitrary HTTP requests. It supports multiple LLM providers, including OpenAI. Unlike traditional web honeypots, Galah dynamically crafts responses for any HTTP request, caching them to reduce repetitive generation and API costs. The honeypot's configuration is crucial, directing the LLM to produce responses in a specified JSON format. Note that Galah is a weekend project exploring LLM capabilities and not intended for production use, as it may be identifiable through network fingerprinting and non-standard responses.

HaE

HaE is a framework project in the field of network security (data security) that combines artificial intelligence (AI) large models to achieve highlighting and information extraction of HTTP messages (including WebSocket). It aims to reduce testing time, focus on valuable and meaningful messages, and improve vulnerability discovery efficiency. The project provides a clear and visual interface design, simple interface interaction, and centralized data panel for querying and extracting information. It also features built-in color upgrade algorithm, one-click export/import of data, and integration of AI large models API for optimized data processing.

PyWxDump

PyWxDump is a Python tool designed for obtaining WeChat account information, decrypting databases, viewing WeChat chats, and exporting chats as HTML backups. It provides core features such as extracting base address offsets of various WeChat data, decrypting databases, and combining multiple database types for unified viewing. Additionally, it offers extended functions like viewing chat history through the web, exporting chat logs in different formats, and remote viewing of WeChat chat history. The tool also includes document classes for database field descriptions, base address offset methods, and decryption methods for MAC databases. PyWxDump is suitable for network security, daily backup archiving, remote chat history viewing, and more.

quark-engine

Quark Engine is an AI-powered tool designed for analyzing Android APK files. It focuses on enhancing the detection process for auto-suggestion, enabling users to create detection workflows without coding. The tool offers an intuitive drag-and-drop interface for workflow adjustments and updates. Quark Agent, the core component, generates Quark Script code based on natural language input and feedback. The project is committed to providing a user-friendly experience for designing detection workflows through textual and visual methods. Various features are still under development and will be rolled out gradually.

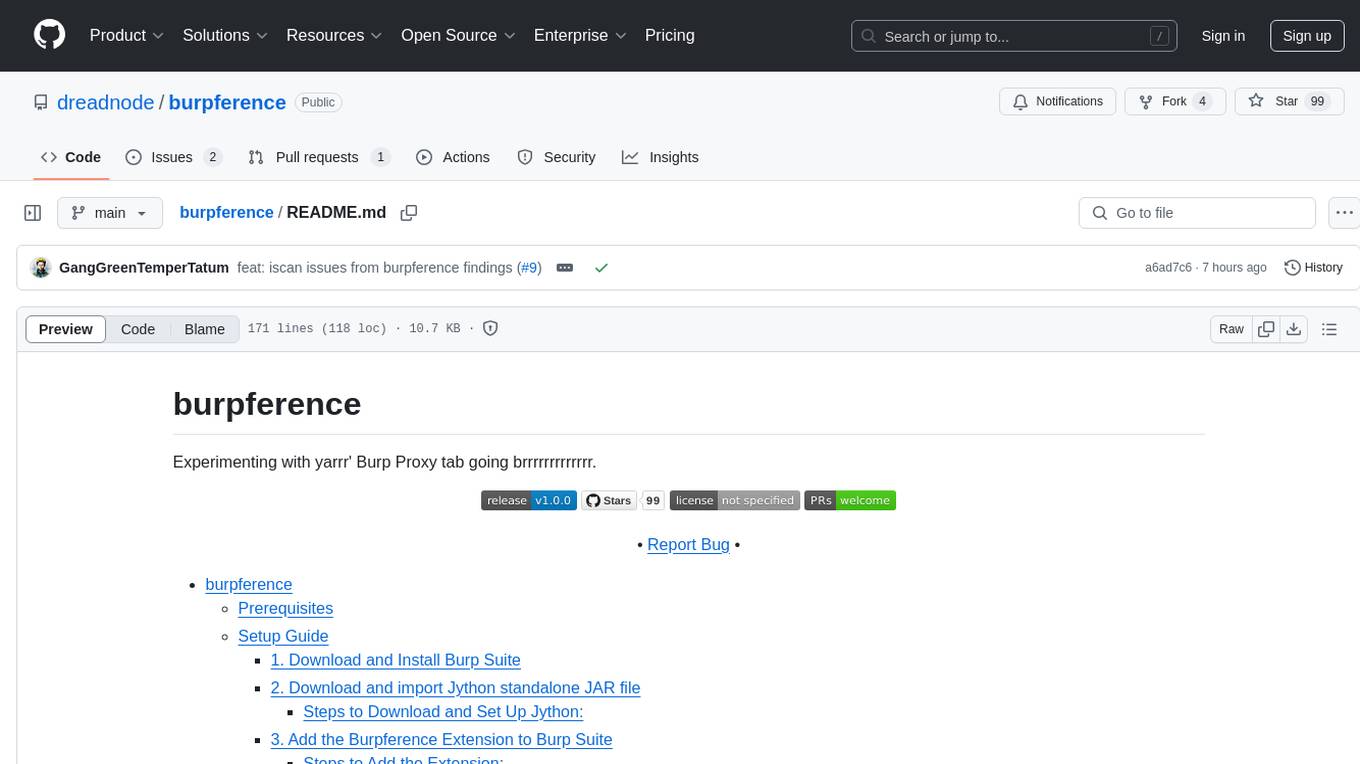

burpference

Burpference is an open-source extension designed to capture in-scope HTTP requests and responses from Burp's proxy history and send them to a remote LLM API in JSON format. It automates response capture, integrates with APIs, optimizes resource usage, provides color-coded findings visualization, offers comprehensive logging, supports native Burp reporting, and allows flexible configuration. Users can customize system prompts, API keys, and remote hosts, and host models locally to prevent high inference costs. The tool is ideal for offensive web application engagements to surface findings and vulnerabilities.