inference-gateway

An open-source, cloud-native, high-performance gateway unifying multiple LLM providers, from local solutions like Ollama to major cloud providers such as OpenAI, Groq, Cohere, Anthropic, Cloudflare and DeepSeek.

Stars: 101

The Inference Gateway is an open-source proxy server designed to simplify access to various language model APIs. It allows users to interact with different language models through a unified interface, stream tokens in real-time, process images alongside text, and use Docker or Kubernetes for deployment. The gateway supports Model Context Protocol integration, provides metrics and observability features, and is production-ready with minimal resource consumption. It offers middleware control and bypass mechanisms, enabling users to manage capabilities like MCP and vision support. The CLI tool provides status monitoring, interactive chat, configuration management, project initialization, and tool execution functionalities. The project aims to provide a flexible solution for AI Agents, supporting self-hosted LLMs and avoiding vendor lock-in.

README:

The Inference Gateway is a proxy server designed to facilitate access to various language model APIs. It lets users interact with different language models through a unified interface, which simplifies configuration and the process of sending requests to and receiving responses from multiple LLMs, and makes a Mixture of Experts setup easy to use.

- Key Features

- Overview

- Installation

- Middleware Control and Bypass Mechanisms

- Model Context Protocol (MCP) Integration

- Metrics and Observability

- Supported APIs

- Configuration

- Examples

- SDKs

- CLI Tool

- Contributing

- License

- 📜 Open Source: Available under the MIT License.

- 🚀 Unified API Access: Proxy requests to multiple language model APIs, including OpenAI, Ollama, Ollama Cloud, Groq, Cohere, and others.

- ⚙️ Environment Configuration: Easily configure API keys and URLs through environment variables.

- 🔧 Tool-use Support: Enable function calling capabilities across supported providers with a unified API.

- 🌐 MCP Support: Full Model Context Protocol integration - automatically discover and expose tools from MCP servers to LLMs without client-side tool management.

- 🌊 Streaming Responses: Stream tokens in real-time as they're generated from language models.

- 🖼️ Vision/Multimodal Support: Process images alongside text with vision-capable models.

- 🐳 Docker Support: Use Docker and Docker Compose for easy setup and deployment.

- ☸️ Kubernetes Support: Ready for deployment in Kubernetes environments.

- 📊 OpenTelemetry: Monitor and analyze performance.

- 🛡️ Production Ready: Built with production in mind, with configurable timeouts and TLS support.

- 🌿 Lightweight: Includes only essential libraries and runtime, resulting in a small binary of ~10.8 MB.

- 📉 Minimal Resource Consumption: Designed to consume minimal resources and have a lower footprint.

- 📚 Documentation: Well documented with examples and guides.

- 🧪 Tested: Extensively tested with unit tests and integration tests.

- 🛠️ Maintained: Actively maintained and developed.

- 📈 Scalable: Easily scalable and can be used in a distributed environment with HPA in Kubernetes.

- 🔒 Compliance and Data Privacy: This project does not collect data or analytics, ensuring compliance and data privacy.

- 🏠 Self-Hosted: Can be self-hosted for complete control over the deployment environment.

- ⌨️ CLI Tool: Improved command-line interface for managing and interacting with the Inference Gateway.

You can horizontally scale the Inference Gateway to handle multiple requests from clients. The Inference Gateway will forward the requests to the respective provider and return the response to the client.

Note: MCP middleware components can be easily toggled on/off via environment variables (MCP_ENABLE) or bypassed per-request using headers (X-MCP-Bypass), giving you full control over which capabilities are active.

Note: Vision/multimodal support is disabled by default for security and performance. To enable image processing with vision-capable models (GPT-4o, Claude 4.5, Gemini 2.5, etc.), set ENABLE_VISION=true in your environment configuration.

The following diagram illustrates the flow:

%%{init: {'theme': 'base', 'themeVariables': { 'primaryColor': '#326CE5', 'primaryTextColor': '#fff', 'lineColor': '#5D8AA8', 'secondaryColor': '#006100' }, 'fontFamily': 'Arial', 'flowchart': {'nodeSpacing': 50, 'rankSpacing': 70, 'padding': 15}}}%%

graph TD

%% Client nodes

A["👥 Clients / 🤖 Agents"] --> |POST /v1/chat/completions| Auth

%% Auth node

Auth["🔒 Optional OIDC"] --> |Auth?| IG1

Auth --> |Auth?| IG2

Auth --> |Auth?| IG3

%% Gateway nodes

IG1["🖥️ Inference Gateway"] --> P

IG2["🖥️ Inference Gateway"] --> P

IG3["🖥️ Inference Gateway"] --> P

%% Middleware Processing and Direct Routing

P["🔌 Proxy Gateway"] --> MCP["🌐 MCP Middleware"]

P --> |"Direct routing bypassing middleware"| Direct["🔌 Direct Providers"]

MCP --> |"Middleware chain complete"| Providers["🤖 LLM Providers"]

%% MCP Tool Servers

MCP --> MCP1["📁 File System Server"]

MCP --> MCP2["🔍 Search Server"]

MCP --> MCP3["🌐 Web Server"]

%% LLM Providers (Middleware Enhanced)

Providers --> C1["🦙 Ollama"]

Providers --> D1["🚀 Groq"]

Providers --> E1["☁️ OpenAI"]

%% Direct Providers (Bypass Middleware)

Direct --> C["🦙 Ollama"]

Direct --> D["🚀 Groq"]

Direct --> E["☁️ OpenAI"]

Direct --> G["⚡ Cloudflare"]

Direct --> H1["💬 Cohere"]

Direct --> H2["🧠 Anthropic"]

Direct --> H3["🐋 DeepSeek"]

%% Define styles

classDef client fill:#9370DB,stroke:#333,stroke-width:1px,color:white;

classDef auth fill:#F5A800,stroke:#333,stroke-width:1px,color:black;

classDef gateway fill:#326CE5,stroke:#fff,stroke-width:1px,color:white;

classDef provider fill:#32CD32,stroke:#333,stroke-width:1px,color:white;

classDef mcp fill:#FF69B4,stroke:#333,stroke-width:1px,color:white;

%% Apply styles

class A client;

class Auth auth;

class IG1,IG2,IG3,P gateway;

class C,D,E,G,H1,H2,H3,C1,D1,E1,Providers provider;

class MCP,MCP1,MCP2,MCP3 mcp;

class Direct direct;

Client is sending:

curl -X POST http://localhost:8080/v1/chat/completions \

-d '{

"model": "openai/gpt-3.5-turbo",

"messages": [

{

"role": "system",

"content": "You are a pirate."

},

{

"role": "user",

"content": "Hello, world! How are you doing today?"

}

]

}'

Internally, the request is proxied to OpenAI; the Inference Gateway infers the provider from the model name.

You can also send the request explicitly using ?provider=openai or any other supported provider in the URL.
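As a rough illustration of the routing rule described above, the sketch below splits a provider-prefixed model ID such as openai/gpt-3.5-turbo and lets an explicit provider (the ?provider= query parameter) take precedence. The function name split_model_id is hypothetical, and the gateway's actual parsing rules (for example, whether the prefix is stripped before the request is forwarded) are not documented here, so treat this as an assumption rather than the gateway's implementation.

```python
from typing import Optional, Tuple


def split_model_id(model: str, provider_override: Optional[str] = None) -> Tuple[str, str]:
    """Return (provider, model_name) for a request.

    Hypothetical helper: mirrors the documented behaviour (prefix-based
    inference, explicit ?provider= override) but is not gateway code.
    """
    if provider_override:
        # An explicit provider wins; the model string is passed through untouched.
        return provider_override.lower(), model
    provider, sep, name = model.partition("/")
    if not sep or not provider or not name:
        raise ValueError(f"cannot infer provider from model id: {model!r}")
    return provider.lower(), name


print(split_model_id("openai/gpt-3.5-turbo"))
print(split_model_id("llama3", provider_override="ollama"))
```

Rejecting an unprefixed model ID when no provider is given is a design choice of this sketch; a real gateway might fall back to a default provider instead.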

Finally, the client receives:

{

"choices": [

{

"finish_reason": "stop",

"index": 0,

"message": {

"content": "Ahoy, matey! 🏴‍☠️ The seas be wild, the sun be bright, and this here pirate be ready to conquer the day! What be yer business, landlubber? 🦜",

"role": "assistant"

}

}

],

"created": 1741821109,

"id": "chatcmpl-dc24995a-7a6e-4d95-9ab3-279ed82080bb",

"model": "N/A",

"object": "chat.completion",

"usage": {

"completion_tokens": 0,

"prompt_tokens": 0,

"total_tokens": 0

}

}

To stream tokens, add stream: true to the request body.
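A client consuming a streamed response has to reassemble the text from individual chunks. The sketch below assumes the stream follows the OpenAI-style server-sent events convention (lines prefixed with data:, a JSON chunk carrying choices[].delta.content, and a final data: [DONE] sentinel). That wire format is an assumption, not something this README states, and iter_stream_text is a hypothetical helper name.

```python
import json
from typing import Iterable, Iterator


def iter_stream_text(lines: Iterable[str]) -> Iterator[str]:
    """Yield text fragments from OpenAI-style SSE lines (assumed format)."""
    for raw in lines:
        line = raw.strip()
        if not line.startswith("data:"):
            continue  # skip blank separator lines and SSE comments
        payload = line[len("data:"):].strip()
        if payload == "[DONE]":
            break  # end-of-stream sentinel
        chunk = json.loads(payload)
        for choice in chunk.get("choices", []):
            text = choice.get("delta", {}).get("content")
            if text:
                yield text


# Made-up sample lines standing in for a real response body.
sample = [
    'data: {"choices": [{"index": 0, "delta": {"role": "assistant"}}]}',
    "",
    'data: {"choices": [{"index": 0, "delta": {"content": "Ahoy"}}]}',
    'data: {"choices": [{"index": 0, "delta": {"content": ", matey!"}}]}',
    "data: [DONE]",
]
print("".join(iter_stream_text(sample)))
```

In a real client the lines would come from an HTTP response iterated line by line; the parser itself does no network I/O, so it can be exercised offline.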

Recommended: For production deployments, run the Inference Gateway as a container. This provides better isolation, easier updates, and simplified configuration management. See the Docker or Kubernetes deployment examples.

The Inference Gateway can also be installed as a standalone binary using the provided install script or by downloading pre-built binaries from GitHub releases.

The easiest way to install the Inference Gateway is using the automated install script:

Install latest version:

curl -fsSL https://raw.githubusercontent.com/inference-gateway/inference-gateway/main/install.sh | bash

Install specific version:

curl -fsSL https://raw.githubusercontent.com/inference-gateway/inference-gateway/main/install.sh | VERSION=v0.22.3 bash

Install to custom directory:

# Install to custom location

curl -fsSL https://raw.githubusercontent.com/inference-gateway/inference-gateway/main/install.sh | INSTALL_DIR=~/.local/bin bash

# Install to current directory

curl -fsSL https://raw.githubusercontent.com/inference-gateway/inference-gateway/main/install.sh | INSTALL_DIR=. bash

What the script does:

- Automatically detects your operating system (Linux/macOS) and architecture (x86_64/arm64/armv7)

- Downloads the appropriate binary from GitHub releases

- Extracts and installs to /usr/local/bin (or custom directory)

- Verifies the installation

Supported platforms:

- Linux: x86_64, arm64, armv7

- macOS (Darwin): x86_64 (Intel), arm64 (Apple Silicon)
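The platform detection step can be sketched as a lookup from the values reported by the operating system to the supported OS/architecture pairs listed above. The alias table (for example aarch64 mapping to arm64) and the exact spelling of the OS and ARCH tokens in the archive name are assumptions; only the inference-gateway_<OS>_<ARCH>.tar.gz pattern comes from this README, and archive_name is a hypothetical helper, not part of install.sh.

```python
# Assumed aliases from common machine names to the architectures listed above.
ARCH_ALIASES = {
    "x86_64": "x86_64",
    "amd64": "x86_64",
    "arm64": "arm64",
    "aarch64": "arm64",
    "armv7": "armv7",
    "armv7l": "armv7",
}

# Supported platforms as listed in this README.
SUPPORTED = {
    "Linux": {"x86_64", "arm64", "armv7"},
    "Darwin": {"x86_64", "arm64"},
}


def archive_name(system: str, machine: str) -> str:
    """Build an archive file name for a platform, or raise if unsupported."""
    arch = ARCH_ALIASES.get(machine.lower())
    if system not in SUPPORTED or arch not in SUPPORTED[system]:
        raise ValueError(f"unsupported platform: {system}/{machine}")
    # The casing of the OS token is an assumption; check the releases page.
    return f"inference-gateway_{system}_{arch}.tar.gz"


print(archive_name("Linux", "aarch64"))
print(archive_name("Darwin", "arm64"))
```

On a real machine the two arguments would typically be platform.system() and platform.machine() from the standard library.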

Download pre-built binaries directly from the releases page:

- Download the appropriate archive for your platform

- Extract the binary:

tar -xzf inference-gateway_<OS>_<ARCH>.tar.gz

- Move to a directory in your PATH:

sudo mv inference-gateway /usr/local/bin/

chmod +x /usr/local/bin/inference-gateway

- Verify the installation:

inference-gateway --version

Once installed, start the gateway with your configuration:

# Set required environment variables

export OPENAI_API_KEY="your-api-key"

# Start the gateway

inference-gateway

For detailed configuration options, see the Configuration section below.

The Inference Gateway uses middleware to process requests and add capabilities like MCP (Model Context Protocol). Clients can control which middlewares are active using bypass headers:

- X-MCP-Bypass: Skip MCP middleware processing

# Use only standard tool calls (skip MCP)

curl -X POST http://localhost:8080/v1/chat/completions \

-H "X-MCP-Bypass: true" \

-d '{

"model": "anthropic/claude-3-haiku",

"messages": [{"role": "user", "content": "Connect to external agents"}]

}'

# Skip middleware processing for direct provider access

curl -X POST http://localhost:8080/v1/chat/completions \

-H "X-MCP-Bypass: true" \

-d '{

"model": "groq/llama-3-8b",

"messages": [{"role": "user", "content": "Simple chat without tools"}]

}'

For Performance:

- Skip middleware processing when you don't need tool capabilities

- Reduce latency for simple chat interactions

For Selective Features:

- Use only standard tool calls (skip MCP): Add X-MCP-Bypass: true

- Direct provider access

For Development:

- Test middleware behavior in isolation

- Debug tool integration issues

- Ensure backward compatibility with existing applications

The middlewares use these same headers to prevent infinite loops during their operation:

MCP Processing:

- When tools are detected in a response, the MCP agent makes up to 10 follow-up requests

- Each follow-up request includes X-MCP-Bypass: true to skip middleware re-processing

- This allows the agent to iterate without creating circular calls

Note: These bypass headers only affect middleware processing. The core chat completions functionality remains available regardless of header values.
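The loop-prevention behaviour described above can be modelled as a bounded loop: the first request passes through the middleware, and every follow-up carries the bypass header until the model stops asking for tools or the cap of 10 follow-ups is reached. This is a toy model under stated assumptions, not the gateway's code: run_tool_loop, the send and run_tool callables, and the message shapes are all hypothetical.

```python
from typing import Callable, Dict, List, Tuple

MAX_FOLLOW_UPS = 10  # cap stated in the text above
BYPASS_HEADERS = {"X-MCP-Bypass": "true"}


def run_tool_loop(
    send: Callable[[List[dict], Dict[str, str]], dict],
    run_tool: Callable[[dict], str],
    messages: List[dict],
) -> Tuple[dict, int]:
    """Return (final assistant reply, number of follow-up requests made)."""
    reply = send(messages, {})  # initial request: middleware is active
    follow_ups = 0
    while reply.get("tool_calls") and follow_ups < MAX_FOLLOW_UPS:
        # Copy the history so the caller's list is not mutated.
        messages = messages + [reply]
        for call in reply["tool_calls"]:
            messages.append(
                {"role": "tool", "tool_call_id": call["id"], "content": run_tool(call)}
            )
        # Follow-ups carry the bypass header so they are not re-processed.
        reply = send(messages, BYPASS_HEADERS)
        follow_ups += 1
    return reply, follow_ups


# Fake provider: asks for a tool twice, then answers.
calls: List[Dict[str, str]] = []


def fake_send(messages: List[dict], headers: Dict[str, str]) -> dict:
    calls.append(dict(headers))
    if len(calls) <= 2:
        return {"role": "assistant", "tool_calls": [{"id": f"call_{len(calls)}", "name": "mcp_list_files"}]}
    return {"role": "assistant", "content": "done"}


reply, follow_ups = run_tool_loop(fake_send, lambda call: "README.md", [{"role": "user", "content": "List files"}])
print(reply["content"], follow_ups, calls)
```

The cap guarantees termination even if a model keeps requesting tools, at the cost of possibly returning a reply that still contains unresolved tool calls.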

Enable MCP to automatically provide tools to LLMs without requiring clients to manage them:

# Enable MCP and connect to tool servers

export MCP_ENABLE=true

export MCP_SERVERS="http://filesystem-server:3001/mcp,http://search-server:3002/mcp"

# LLMs will automatically discover and use available tools

curl -X POST http://localhost:8080/v1/chat/completions \

-d '{

"model": "openai/gpt-4",

"messages": [{"role": "user", "content": "List files in the current directory"}]

}'

The gateway automatically injects available tools into requests and handles tool execution, making external capabilities seamlessly available to any LLM.

Learn more: Model Context Protocol Documentation | MCP Integration Example

The Inference Gateway provides comprehensive OpenTelemetry metrics for monitoring performance, usage, and function/tool call activity. Metrics are automatically exported in Prometheus format and are available on port 9464 by default.

# Enable telemetry and set metrics port (default: 9464)

export TELEMETRY_ENABLE=true

export TELEMETRY_METRICS_PORT=9464

# Access metrics endpoint

curl http://localhost:9464/metrics

Track token consumption across different providers and models:

- llm_usage_prompt_tokens_total - Counter for prompt tokens consumed

- llm_usage_completion_tokens_total - Counter for completion tokens generated

- llm_usage_total_tokens_total - Counter for total token usage

Labels: provider, model

# Total tokens used by OpenAI models in the last hour

sum(increase(llm_usage_total_tokens_total{provider="openai"}[1h])) by (model)
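Without a Prometheus server at hand, the same per-model grouping can be approximated by reading the /metrics output directly. The sketch below parses Prometheus text exposition lines and sums llm_usage_total_tokens_total by model for one provider. It is a minimal parser (no escaped quotes in label values), the sample lines are made up, and it reports cumulative counter values rather than the one-hour increase that the PromQL query computes.

```python
import re
from collections import defaultdict
from typing import Dict

# name{labels} value  (labels are optional in the exposition format)
SAMPLE_RE = re.compile(
    r'^(?P<name>[a-zA-Z_:][a-zA-Z0-9_:]*)(?:\{(?P<labels>.*)\})?\s+(?P<value>\S+)'
)
LABEL_RE = re.compile(r'(\w+)="([^"]*)"')


def tokens_by_model(text: str, provider: str) -> Dict[str, float]:
    """Sum llm_usage_total_tokens_total per model for one provider."""
    totals: Dict[str, float] = defaultdict(float)
    for line in text.splitlines():
        if not line or line.startswith("#"):
            continue  # skip blank lines and HELP/TYPE comments
        match = SAMPLE_RE.match(line)
        if not match or match.group("name") != "llm_usage_total_tokens_total":
            continue
        labels = dict(LABEL_RE.findall(match.group("labels") or ""))
        if labels.get("provider") == provider:
            totals[labels.get("model", "")] += float(match.group("value"))
    return dict(totals)


# Made-up sample standing in for `curl http://localhost:9464/metrics`.
sample_metrics = """\
# TYPE llm_usage_total_tokens_total counter
llm_usage_total_tokens_total{provider="openai",model="gpt-4"} 1200
llm_usage_total_tokens_total{provider="openai",model="gpt-3.5-turbo"} 300
llm_usage_total_tokens_total{provider="groq",model="llama-3-8b"} 50
llm_usage_prompt_tokens_total{provider="openai",model="gpt-4"} 800
"""
print(tokens_by_model(sample_metrics, "openai"))
```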

Monitor API performance and reliability:

- llm_requests_total - Counter for total requests processed

- llm_responses_total - Counter for responses by HTTP status code

- llm_request_duration - Histogram for end-to-end request duration (milliseconds)

Labels: provider, request_method, request_path, status_code (responses only)

# 95th percentile request latency by provider

histogram_quantile(0.95, sum(rate(llm_request_duration_bucket{provider=~"openai|anthropic"}[5m])) by (provider, le))

# Error rate percentage by provider

100 * sum(rate(llm_responses_total{status_code!~"2.."}[5m])) by (provider) / sum(rate(llm_responses_total[5m])) by (provider)

Comprehensive tracking of tool executions for MCP and standard function calls:

- llm_tool_calls_total - Counter for total function/tool calls executed

- llm_tool_calls_success_total - Counter for successful tool executions

- llm_tool_calls_failure_total - Counter for failed tool executions

- llm_tool_call_duration - Histogram for tool execution duration (milliseconds)

Labels: provider, model, tool_type, tool_name, error_type (failures only)

Tool Types:

- mcp - Model Context Protocol tools (prefix: mcp_)

- standard_tool_use - Other function calls

# Tool call success rate by type

100 * sum(rate(llm_tool_calls_success_total[5m])) by (tool_type) / sum(rate(llm_tool_calls_total[5m])) by (tool_type)

# Average tool execution time by provider

sum(rate(llm_tool_call_duration_sum[5m])) by (provider) / sum(rate(llm_tool_call_duration_count[5m])) by (provider)

# Most frequently used tools

topk(10, sum(increase(llm_tool_calls_total[1h])) by (tool_name))

Complete monitoring stack with Grafana dashboards:

cd examples/docker-compose/monitoring/

cp .env.example .env # Configure your API keys

docker compose up -d

# Access Grafana at http://localhost:3000 (admin/admin)

Production-ready monitoring with Prometheus Operator:

cd examples/kubernetes/monitoring/

task deploy-infrastructure

task deploy-inference-gateway

# Access via port-forward or ingress

kubectl port-forward svc/grafana-service 3000:3000

The included Grafana dashboard provides:

- Real-time Metrics: 5-second refresh rate for immediate feedback

- Tool Call Analytics: Success rates, duration analysis, and failure tracking

- Provider Comparison: Performance metrics across all supported providers

- Usage Insights: Token consumption patterns and cost analysis

- Error Monitoring: Failed requests and tool call error classification

Learn more: Docker Compose Monitoring | Kubernetes Monitoring | OpenTelemetry Documentation

- OpenAI

- Ollama

- Ollama Cloud (Preview)

- Groq

- Cloudflare

- Cohere

- Anthropic

- DeepSeek

- Mistral

The Inference Gateway can be configured using environment variables. The following environment variables are supported.

To enable vision capabilities for processing images alongside text:

ENABLE_VISION=true

Supported Providers with Vision:

- OpenAI (GPT-4o, GPT-5, GPT-4.1, GPT-4 Turbo)

- Anthropic (Claude 3, Claude 4, Claude 4.5 Sonnet, Claude 4.5 Haiku)

- Google (Gemini 2.5)

- Cohere (Command A Vision, Aya Vision)

- Ollama (LLaVA, Llama 4, Llama 3.2 Vision)

- Groq (vision models)

- Mistral (Pixtral)

Note: Vision support is disabled by default for performance and security reasons. When disabled, requests with image content will be rejected even if the model supports vision.
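With vision enabled, a request can combine text and an image in a single user message. The sketch below builds such a request body using the OpenAI-style content-parts layout (a text part plus an image_url part holding a base64 data URL). Whether the gateway expects exactly this shape for every provider is an assumption; vision_message is a hypothetical helper, and the eight image bytes are just the PNG signature used as a placeholder.

```python
import base64
import json


def vision_message(prompt: str, image_bytes: bytes, mime: str = "image/png") -> dict:
    """Build a user message with text and an inline image (assumed format)."""
    encoded = base64.b64encode(image_bytes).decode("ascii")
    return {
        "role": "user",
        "content": [
            {"type": "text", "text": prompt},
            {"type": "image_url", "image_url": {"url": f"data:{mime};base64,{encoded}"}},
        ],
    }


# Placeholder bytes (PNG signature only); a real request would read an image file.
placeholder_png = b"\x89PNG\r\n\x1a\n"
body = {
    "model": "openai/gpt-4o",
    "messages": [vision_message("What is in this image?", placeholder_png)],
}
print(json.dumps(body, indent=2))
```

The resulting JSON would be sent to /v1/chat/completions like any other chat request; with ENABLE_VISION unset, the note above says such a request is rejected.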

- Using Docker Compose

- Basic setup - Simple configuration with a single provider

- MCP Integration - Model Context Protocol with multiple tool servers

- Hybrid deployment - Multiple providers (cloud + local)

- Authentication - OIDC authentication setup

- Tools - Tool integration examples

- Using Kubernetes

- Basic setup - Simple Kubernetes deployment

- MCP Integration - Model Context Protocol in Kubernetes

- Agent deployment - Standalone agent deployment

- Hybrid deployment - Multiple providers in Kubernetes

- Authentication - OIDC authentication in Kubernetes

- Monitoring - Observability and monitoring setup

- TLS setup - TLS/SSL configuration

- Using standard REST endpoints

More SDKs could be generated using the OpenAPI specification. The following SDKs are currently available:

The Inference Gateway CLI provides a powerful command-line interface for managing and interacting with the Inference Gateway. It offers tools for configuration, monitoring, and management of inference services.

- Status Monitoring: Check gateway health and resource usage

- Interactive Chat: Chat with models using an interactive interface

- Configuration Management: Manage gateway settings via YAML config

- Project Initialization: Set up local project configurations

- Tool Execution: LLMs can execute whitelisted commands and tools

go install github.com/inference-gateway/cli@latest

curl -fsSL https://raw.githubusercontent.com/inference-gateway/cli/main/install.sh | bash

Download the latest release from the releases page.

- Initialize project configuration:

infer init

- Check gateway status:

infer status

- Start an interactive chat:

infer chat

For more details, see the CLI documentation.

This project is licensed under the MIT License.

Found a bug, missing provider, or have a feature in mind?

You're more than welcome to submit pull requests or open issues for any fixes, improvements, or new ideas!

Please read the CONTRIBUTING.md for more details.

My motivation is to build AI Agents without being tied to a single vendor. By avoiding vendor lock-in and supporting self-hosted LLMs from a single interface, organizations gain both portability and data privacy. You can choose to consume LLMs from a cloud provider or run them entirely offline with Ollama.


Alternative AI tools for inference-gateway

Similar Open Source Tools

inference-gateway

The Inference Gateway is an open-source proxy server designed to simplify access to various language model APIs. It allows users to interact with different language models through a unified interface, stream tokens in real-time, process images alongside text, and use Docker or Kubernetes for deployment. The gateway supports Model Context Protocol integration, provides metrics and observability features, and is production-ready with minimal resource consumption. It offers middleware control and bypass mechanisms, enabling users to manage capabilities like MCP and vision support. The CLI tool provides status monitoring, interactive chat, configuration management, project initialization, and tool execution functionalities. The project aims to provide a flexible solution for AI Agents, supporting self-hosted LLMs and avoiding vendor lock-in.

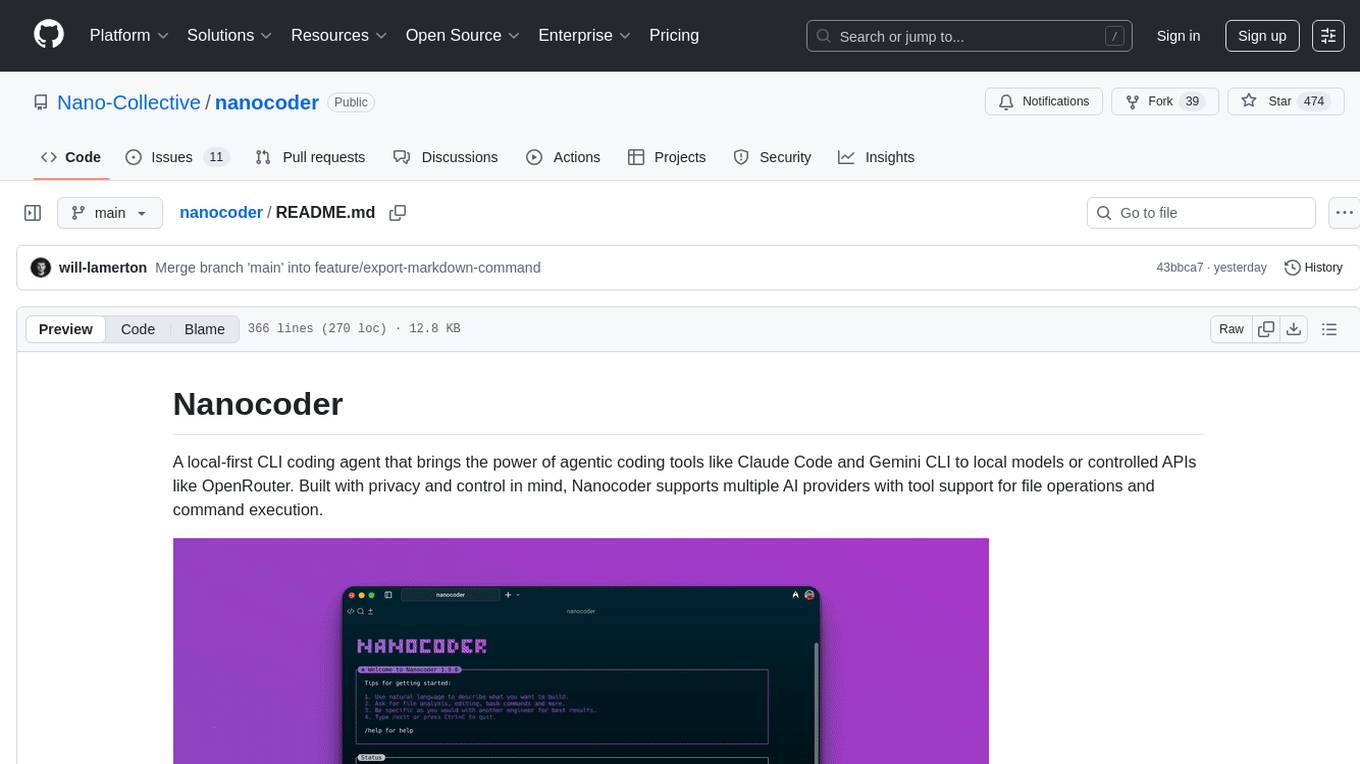

nanocoder

Nanocoder is a local-first CLI coding agent that supports multiple AI providers with tool support for file operations and command execution. It focuses on privacy and control, allowing users to code locally with AI tools. The tool is designed to bring the power of agentic coding tools to local models or controlled APIs like OpenRouter, promoting community-led development and inclusive collaboration in the AI coding space.

CyberStrikeAI

CyberStrikeAI is an AI-native security testing platform built in Go that integrates 100+ security tools, an intelligent orchestration engine, role-based testing with predefined security roles, a skills system with specialized testing skills, and comprehensive lifecycle management capabilities. It enables end-to-end automation from conversational commands to vulnerability discovery, attack-chain analysis, knowledge retrieval, and result visualization, delivering an auditable, traceable, and collaborative testing environment for security teams. The platform features an AI decision engine with OpenAI-compatible models, native MCP implementation with various transports, prebuilt tool recipes, large-result pagination, attack-chain graph, password-protected web UI, knowledge base with vector search, vulnerability management, batch task management, role-based testing, and skills system.

OpenGradient-SDK

OpenGradient Python SDK is a tool for decentralized model management and inference services on the OpenGradient platform. It provides programmatic access to distributed AI infrastructure with cryptographic verification capabilities. The SDK supports verifiable LLM inference, multi-provider support, TEE execution, model hub integration, consensus-based verification, and command-line interface. Users can leverage this SDK to build AI applications with execution guarantees through Trusted Execution Environments and blockchain-based settlement, ensuring auditability and tamper-proof AI execution.

AIClient-2-API

AIClient-2-API is a versatile and lightweight API proxy designed for developers, providing ample free API request quotas and comprehensive support for various mainstream large models like Gemini, Qwen Code, Claude, etc. It converts multiple backend APIs into standard OpenAI format interfaces through a Node.js HTTP server. The project adopts a modern modular architecture, supports strategy and adapter patterns, comes with complete test coverage and health check mechanisms, and is ready to use after 'npm install'. By easily switching model service providers in the configuration file, any OpenAI-compatible client or application can seamlessly access different large model capabilities through the same API address, eliminating the hassle of maintaining multiple sets of configurations for different services and dealing with incompatible interfaces.

nanocoder

Nanocoder is a versatile code editor designed for beginners and experienced programmers alike. It provides a user-friendly interface with features such as syntax highlighting, code completion, and error checking. With Nanocoder, you can easily write and debug code in various programming languages, making it an ideal tool for learning, practicing, and developing software projects. Whether you are a student, hobbyist, or professional developer, Nanocoder offers a seamless coding experience to boost your productivity and creativity.

hayhooks

Hayhooks is a tool that simplifies the deployment and serving of Haystack pipelines as REST APIs. It allows users to wrap their pipelines with custom logic and expose them via HTTP endpoints, including OpenAI-compatible chat completion endpoints. With Hayhooks, users can easily convert their Haystack pipelines into API services with minimal boilerplate code.

MassGen

MassGen is a cutting-edge multi-agent system that leverages the power of collaborative AI to solve complex tasks. It assigns a task to multiple AI agents who work in parallel, observe each other's progress, and refine their approaches to converge on the best solution to deliver a comprehensive and high-quality result. The system operates through an architecture designed for seamless multi-agent collaboration, with key features including cross-model/agent synergy, parallel processing, intelligence sharing, consensus building, and live visualization. Users can install the system, configure API settings, and run MassGen for various tasks such as question answering, creative writing, research, development & coding tasks, and web automation & browser tasks. The roadmap includes plans for advanced agent collaboration, expanded model, tool & agent integration, improved performance & scalability, enhanced developer experience, and a web interface.

code_puppy

Code Puppy is an AI-powered code generation agent designed to understand programming tasks, generate high-quality code, and explain its reasoning. It supports multi-language code generation, interactive CLI, and detailed code explanations. The tool requires Python 3.9+ and API keys for various models like GPT, Google's Gemini, Cerebras, and Claude. It also integrates with MCP servers for advanced features like code search and documentation lookups. Users can create custom JSON agents for specialized tasks and access a variety of tools for file management, code execution, and reasoning sharing.

Claw-Hunter

Claw Hunter is a discovery and risk-assessment tool for OpenClaw instances, designed to identify 'Shadow AI' and audit agent privileges. It helps ITSec teams detect security risks, credential exposure, integration inventory, configuration issues, and installation status. The tool offers system-agnostic visibility, MDM readiness, non-intrusive operations, comprehensive detection, structured output in JSON format, and zero dependencies. It provides silent execution mode for automated deployment, machine identification, security risk scoring, results upload to a central API endpoint, bearer token authentication support, and persistent logging. Claw Hunter offers proper exit codes for automation and is available for macOS, Linux, and Windows platforms.

ctinexus

CTINexus is a framework that leverages optimized in-context learning of large language models to automatically extract cyber threat intelligence from unstructured text and construct cybersecurity knowledge graphs. It processes threat intelligence reports to extract cybersecurity entities, identify relationships between security concepts, and construct knowledge graphs with interactive visualizations. The framework requires minimal configuration, with no extensive training data or parameter tuning needed.

req_llm

ReqLLM is a Req-based library for LLM interactions, offering a unified interface to AI providers through a plugin-based architecture. It brings composability and middleware advantages to LLM interactions, with features like auto-synced providers/models, typed data structures, ergonomic helpers, streaming capabilities, usage & cost extraction, and a plugin-based provider system. Users can easily generate text, structured data, embeddings, and track usage costs. The tool supports various AI providers like Anthropic, OpenAI, Groq, Google, and xAI, and allows for easy addition of new providers. ReqLLM also provides API key management, detailed documentation, and a roadmap for future enhancements.

any-llm

The `any-llm` repository provides a unified API to access different LLM (Large Language Model) providers. It offers a simple and developer-friendly interface, leveraging official provider SDKs for compatibility and maintenance. The tool is framework-agnostic, actively maintained, and does not require a proxy or gateway server. It addresses challenges in API standardization and aims to provide a consistent interface for various LLM providers, overcoming limitations of existing solutions like LiteLLM, AISuite, and framework-specific integrations.

sdk-typescript

Strands Agents - TypeScript SDK is a lightweight and flexible SDK that takes a model-driven approach to building and running AI agents in TypeScript/JavaScript. It brings key features from the Python Strands framework to Node.js environments, enabling type-safe agent development for various applications. The SDK supports model agnostic development with first-class support for Amazon Bedrock and OpenAI, along with extensible architecture for custom providers. It also offers built-in MCP support, real-time response streaming, extensible hooks, and conversation management features. With tools for interaction with external systems and seamless integration with MCP servers, the SDK provides a comprehensive solution for developing AI agents.

sre

SmythOS is an operating system designed for building, deploying, and managing intelligent AI agents at scale. It provides a unified SDK and resource abstraction layer for various AI services, making it easy to scale and flexible. With an agent-first design, developer-friendly SDK, modular architecture, and enterprise security features, SmythOS offers a robust foundation for AI workloads. The system is built with a philosophy inspired by traditional operating system kernels, ensuring autonomy, control, and security for AI agents. SmythOS aims to make shipping production-ready AI agents accessible and open for everyone in the coming Internet of Agents era.

mcp-documentation-server

The mcp-documentation-server is a lightweight server application designed to serve documentation files for projects. It provides a simple and efficient way to host and access project documentation, making it easy for team members and stakeholders to find and reference important information. The server supports various file formats, such as markdown and HTML, and allows for easy navigation through the documentation. With mcp-documentation-server, teams can streamline their documentation process and ensure that project information is easily accessible to all involved parties.


For similar jobs

sweep

Sweep is an AI junior developer that turns bugs and feature requests into code changes. It automatically handles developer experience improvements like adding type hints and improving test coverage.

teams-ai

The Teams AI Library is a software development kit (SDK) that helps developers create bots that can interact with Teams and Microsoft 365 applications. It is built on top of the Bot Framework SDK and simplifies the process of developing bots that interact with Teams' artificial intelligence capabilities. The SDK is available for JavaScript/TypeScript, .NET, and Python.

ai-guide

This guide is dedicated to Large Language Models (LLMs) that you can run on your home computer. It assumes your PC is a lower-end, non-gaming setup.

classifai

Supercharge WordPress Content Workflows and Engagement with Artificial Intelligence. Tap into leading cloud-based services like OpenAI, Microsoft Azure AI, Google Gemini and IBM Watson to augment your WordPress-powered websites. Publish content faster while improving SEO performance and increasing audience engagement. ClassifAI integrates Artificial Intelligence and Machine Learning technologies to lighten your workload and eliminate tedious tasks, giving you more time to create original content that matters.

chatbot-ui

Chatbot UI is an open-source AI chat app that allows users to create and deploy their own AI chatbots. It is easy to use and can be customized to fit any need. Chatbot UI is perfect for businesses, developers, and anyone who wants to create a chatbot.

BricksLLM

BricksLLM is a cloud native AI gateway written in Go. Currently, it provides native support for OpenAI, Anthropic, Azure OpenAI and vLLM. BricksLLM aims to provide enterprise level infrastructure that can power any LLM production use cases. Here are some use cases for BricksLLM: * Set LLM usage limits for users on different pricing tiers * Track LLM usage on a per user and per organization basis * Block or redact requests containing PIIs * Improve LLM reliability with failovers, retries and caching * Distribute API keys with rate limits and cost limits for internal development/production use cases * Distribute API keys with rate limits and cost limits for students

uAgents

uAgents is a Python library developed by Fetch.ai that allows for the creation of autonomous AI agents. These agents can perform various tasks on a schedule or take action on various events. uAgents are easy to create and manage, and they are connected to a fast-growing network of other uAgents. They are also secure, with cryptographically secured messages and wallets.

griptape

Griptape is a modular Python framework for building AI-powered applications that securely connect to your enterprise data and APIs. It offers developers the ability to maintain control and flexibility at every step. Griptape's core components include Structures (Agents, Pipelines, and Workflows), Tasks, Tools, Memory (Conversation Memory, Task Memory, and Meta Memory), Drivers (Prompt and Embedding Drivers, Vector Store Drivers, Image Generation Drivers, Image Query Drivers, SQL Drivers, Web Scraper Drivers, and Conversation Memory Drivers), Engines (Query Engines, Extraction Engines, Summary Engines, Image Generation Engines, and Image Query Engines), and additional components (Rulesets, Loaders, Artifacts, Chunkers, and Tokenizers). Griptape enables developers to create AI-powered applications with ease and efficiency.