mini.ai

Neovim Lua plugin to extend and create `a`/`i` textobjects. Part of 'mini.nvim' library.

Stars: 569

mini.ai is a plugin for Neovim that extends and creates textobjects for enhanced text manipulation. It supports customization via Lua patterns or functions, dot-repeat, different search methods, consecutive application, and has builtins for brackets, quotes, function calls, arguments, tags, user prompts, and punctuation/whitespace characters.

README:

- It enhances some builtin textobjects (like `a(`, `a)`, `a'`, and more), creates new ones (like `a*`, `a<Space>`, `af`, `a?`, and more), and allows user to create their own (like based on treesitter, and more).
- Supports dot-repeat, `v:count`, different search methods, consecutive application, and customization via Lua patterns or functions.
- Has builtins for brackets, quotes, function call, argument, tag, user prompt, and any punctuation/digit/whitespace character.

See more details in Features and Documentation.

[!NOTE] This was previously hosted at a personal `echasnovski` GitHub account. It was transferred to a dedicated organization to improve long term project stability. See more details here.

⦿ This is a part of mini.nvim library. Please use this link if you want to mention this module.

⦿ All contributions (issues, pull requests, discussions, etc.) are done inside of 'mini.nvim'.

⦿ See whole library documentation to learn about general design principles, disable/configuration recipes, and more.

⦿ See MiniMax for a full config example that uses this module.

If you want to help this project grow but don't know where to start, check out the contributing guides of 'mini.nvim' or leave a GitHub star for the 'mini.nvim' project and/or any of its standalone Git repositories.

- Customizable creation of `a`/`i` textobjects using Lua patterns and functions. Supports:
  - Dot-repeat.
  - `v:count`.
  - Different search methods (see `:h MiniAi.config`).
  - Consecutive application (update selection without leaving Visual mode).
  - Aliases for multiple textobjects.

- Comprehensive builtin textobjects (see more at `:h MiniAi-builtin-textobjects`):
  - Balanced brackets (with and without whitespace) plus alias.
  - Balanced quotes plus alias.
  - Function call.
  - Argument.
  - Tag.
  - Derived from user prompt.
  - Default for anything but Latin letters (to fall back to `:h text-objects`).

- Motions for jumping to left/right edge of textobject.

- Set of specification generators to tweak some builtin textobjects (see help for `MiniAi.gen_spec`).

- Treesitter textobjects (through `MiniAi.gen_spec.treesitter()` helper).
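As a rough illustration of the customization features listed above, the sketch below registers three custom textobjects: one from a Lua pattern, one from a function, and one from the treesitter generator. The identifiers `x`, `g`, and `F` are arbitrary choices for this example, and the `@function.outer` / `@function.inner` captures are assumed to be defined by treesitter queries available in your setup (they are not provided by this snippet).

```lua
-- A minimal sketch, not a recommended configuration.
local ai = require('mini.ai')

ai.setup({
  custom_textobjects = {
    -- Lua pattern specification: `ax` should select a match like `xxx`,
    -- `ix` the part between the two empty captures `()`
    x = { 'x()x()x' },

    -- Function specification: treat the whole buffer as a region
    g = function()
      local from = { line = 1, col = 1 }
      local to = {
        line = vim.fn.line('$'),
        col = math.max(vim.fn.getline('$'):len(), 1),
      }
      return { from = from, to = to }
    end,

    -- Treesitter specification (assumes queries define these captures)
    F = ai.gen_spec.treesitter({ a = '@function.outer', i = '@function.inner' }),
  },
})
```

With this in place, typing `vax`, `vag`, or `vaF` in Normal mode would select the corresponding region, provided the default `around = 'a'` mapping is kept.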

This plugin can be installed as part of 'mini.nvim' library (recommended) or as a standalone Git repository.

There are two branches to install from:

- `main` (default, recommended) will have latest development version of plugin. All changes since last stable release should be perceived as being in beta testing phase (meaning they already passed alpha-testing and are moderately settled).
- `stable` will be updated only upon releases with code tested during public beta-testing phase in `main` branch.

Here are code snippets for some common installation methods (use only one):

With mini.deps

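- 'mini.nvim' library:

  | Branch | Code snippet                                  |
  |--------|-----------------------------------------------|
  | Main   | Follow recommended 'mini.deps' installation   |
  | Stable | Follow recommended 'mini.deps' installation   |

- Standalone plugin:

  | Branch | Code snippet                                                  |
  |--------|---------------------------------------------------------------|
  | Main   | `add('nvim-mini/mini.ai')`                                    |
  | Stable | `add({ source = 'nvim-mini/mini.ai', checkout = 'stable' })` |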

With folke/lazy.nvim

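- 'mini.nvim' library:

  | Branch | Code snippet                                  |
  |--------|-----------------------------------------------|
  | Main   | `{ 'nvim-mini/mini.nvim', version = false },` |
  | Stable | `{ 'nvim-mini/mini.nvim', version = '*' },`   |

- Standalone plugin:

  | Branch | Code snippet                                |
  |--------|---------------------------------------------|
  | Main   | `{ 'nvim-mini/mini.ai', version = false },` |
  | Stable | `{ 'nvim-mini/mini.ai', version = '*' },`   |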

With junegunn/vim-plug

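- 'mini.nvim' library:

  | Branch | Code snippet                                         |
  |--------|------------------------------------------------------|
  | Main   | `Plug 'nvim-mini/mini.nvim'`                         |
  | Stable | `Plug 'nvim-mini/mini.nvim', { 'branch': 'stable' }` |

- Standalone plugin:

  | Branch | Code snippet                                       |
  |--------|----------------------------------------------------|
  | Main   | `Plug 'nvim-mini/mini.ai'`                         |
  | Stable | `Plug 'nvim-mini/mini.ai', { 'branch': 'stable' }` |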

Important: don't forget to call `require('mini.ai').setup()` to enable its functionality.
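For instance, with folke/lazy.nvim the `setup()` call could live in the plugin spec's `config` function. This is only a sketch: the file location in the comment is a hypothetical layout, and the same call works from any place in your config that runs after the plugin is loaded.

```lua
-- Hypothetical spec file, e.g. lua/plugins/mini-ai.lua (path is an assumption)
return {
  'nvim-mini/mini.ai',
  version = false, -- track the `main` branch, as in the table above
  config = function()
    -- Enables the module with its default config
    require('mini.ai').setup()
  end,
}
```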

Note: if you are on Windows, there might be problems with too long file paths (like `error: unable to create file <some file name>: Filename too long`). Try doing one of the following:

- Enable corresponding git global config value: `git config --system core.longpaths true`. Then try to reinstall.
- Install plugin in other place with shorter path.

```lua
-- No need to copy this inside `setup()`. Will be used automatically.
{
  -- Table with textobject id as fields, textobject specification as values.
  -- Also use this to disable builtin textobjects. See |MiniAi.config|.
  custom_textobjects = nil,

  -- Module mappings. Use `''` (empty string) to disable one.
  mappings = {
    -- Main textobject prefixes
    around = 'a',
    inside = 'i',

    -- Next/last variants
    -- NOTE: These override built-in LSP selection mappings on Neovim>=0.12
    -- Map LSP selection manually to use it (see `:h MiniAi.config`)
    around_next = 'an',
    inside_next = 'in',
    around_last = 'al',
    inside_last = 'il',

    -- Move cursor to corresponding edge of `a` textobject
    goto_left = 'g[',
    goto_right = 'g]',
  },

  -- Number of lines within which textobject is searched
  n_lines = 50,

  -- How to search for object (first inside current line, then inside
  -- neighborhood). One of 'cover', 'cover_or_next', 'cover_or_prev',
  -- 'cover_or_nearest', 'next', 'previous', 'nearest'.
  search_method = 'cover_or_next',

  -- Whether to disable showing non-error feedback
  -- This also affects (purely informational) helper messages shown after
  -- idle time if user input is required.
  silent = false,
}
```
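Since the defaults are applied automatically, only the fields you want to change need to be passed to `setup()`. The sketch below uses only option names from the default config above; the particular values (`200`, `'cover'`, disabled edge motions) are arbitrary choices for illustration, not recommendations.

```lua
require('mini.ai').setup({
  -- Search a larger neighborhood than the default 50 lines
  n_lines = 200,

  -- Only use a textobject that covers the cursor
  search_method = 'cover',

  mappings = {
    -- Empty string disables a mapping
    goto_left = '',
    goto_right = '',
  },
})
```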

Click tags to check more tools for each tasksFor Jobs:

Alternative AI tools for mini.ai

Similar Open Source Tools

mini.ai

mini.ai is a plugin for Neovim that extends and creates textobjects for enhanced text manipulation. It supports customization via Lua patterns or functions, dot-repeat, different search methods, consecutive application, and has builtins for brackets, quotes, function calls, arguments, tags, user prompts, and punctuation/whitespace characters.

mini.ai

This plugin extends and creates `a`/`i` textobjects in Neovim. It enhances some builtin textobjects (like `a(`, `a)`, `a'`, and more), creates new ones (like `a*`, `a

llm-client

LLMClient is a JavaScript/TypeScript library that simplifies working with large language models (LLMs) by providing an easy-to-use interface for building and composing efficient prompts using prompt signatures. These signatures enable the automatic generation of typed prompts, allowing developers to leverage advanced capabilities like reasoning, function calling, RAG, ReAcT, and Chain of Thought. The library supports various LLMs and vector databases, making it a versatile tool for a wide range of applications.

python-tgpt

Python-tgpt is a Python package that enables seamless interaction with over 45 free LLM providers without requiring an API key. It also provides image generation capabilities. The name _python-tgpt_ draws inspiration from its parent project tgpt, which operates on Golang. Through this Python adaptation, users can effortlessly engage with a number of free LLMs available, fostering a smoother AI interaction experience.

extractor

Extractor is an AI-powered data extraction library for Laravel that leverages OpenAI's capabilities to effortlessly extract structured data from various sources, including images, PDFs, and emails. It features a convenient wrapper around OpenAI Chat and Completion endpoints, supports multiple input formats, includes a flexible Field Extractor for arbitrary data extraction, and integrates with Textract for OCR functionality. Extractor utilizes JSON Mode from the latest GPT-3.5 and GPT-4 models, providing accurate and efficient data extraction.

monacopilot

Monacopilot is a powerful and customizable AI auto-completion plugin for the Monaco Editor. It supports multiple AI providers such as Anthropic, OpenAI, Groq, and Google, providing real-time code completions with an efficient caching system. The plugin offers context-aware suggestions, customizable completion behavior, and framework agnostic features. Users can also customize the model support and trigger completions manually. Monacopilot is designed to enhance coding productivity by providing accurate and contextually appropriate completions in daily spoken language.

parrot.nvim

Parrot.nvim is a Neovim plugin that prioritizes a seamless out-of-the-box experience for text generation. It simplifies functionality and focuses solely on text generation, excluding integration of DALLE and Whisper. It supports persistent conversations as markdown files, custom hooks for inline text editing, multiple providers like Anthropic API, perplexity.ai API, OpenAI API, Mistral API, and local/offline serving via ollama. It allows custom agent definitions, flexible API credential support, and repository-specific instructions with a `.parrot.md` file. It does not have autocompletion or hidden requests in the background to analyze files.

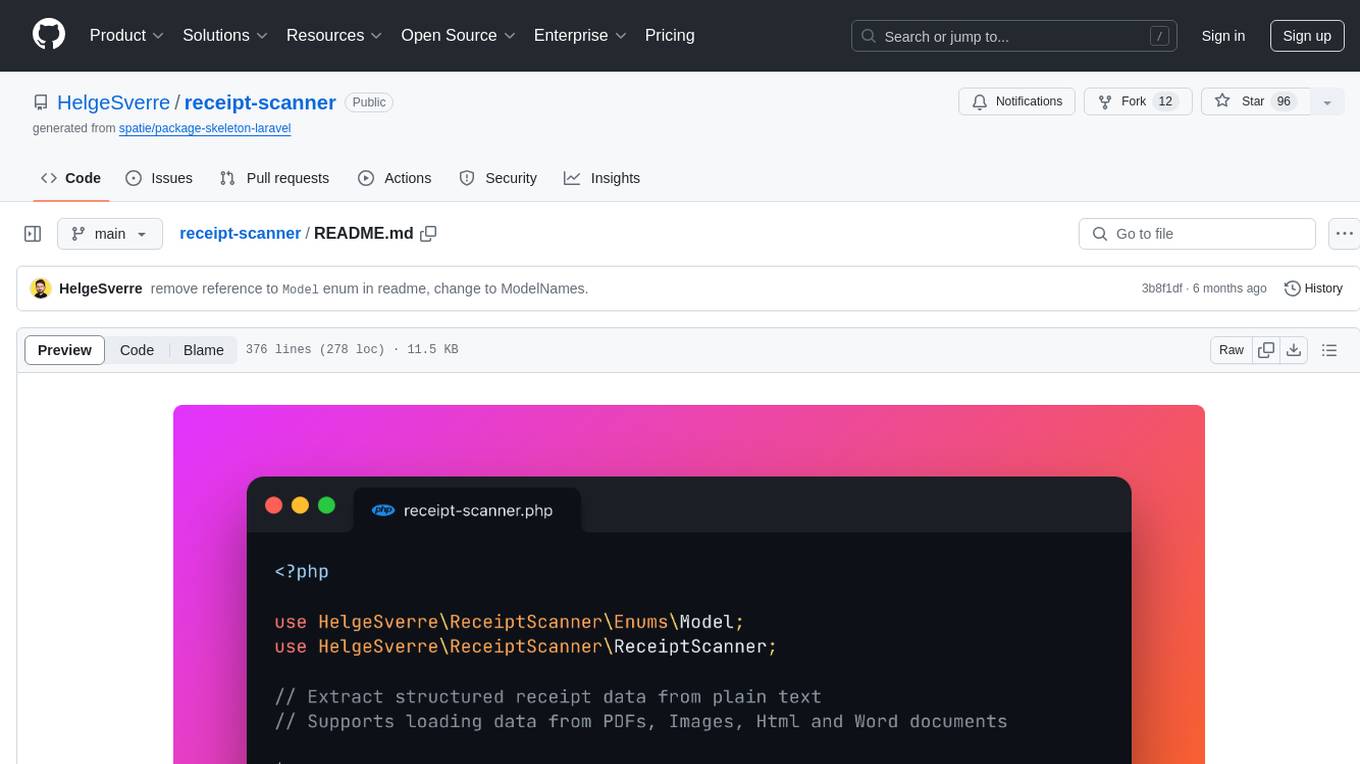

receipt-scanner

The receipt-scanner repository is an AI-Powered Receipt and Invoice Scanner for Laravel that allows users to easily extract structured receipt data from images, PDFs, and emails within their Laravel application using OpenAI. It provides a light wrapper around OpenAI Chat and Completion endpoints, supports various input formats, and integrates with Textract for OCR functionality. Users can install the package via composer, publish configuration files, and use it to extract data from plain text, PDFs, images, Word documents, and web content. The scanned receipt data is parsed into a DTO structure with main classes like Receipt, Merchant, and LineItem.

instructor

Instructor is a popular Python library for managing structured outputs from large language models (LLMs). It offers a user-friendly API for validation, retries, and streaming responses. With support for various LLM providers and multiple languages, Instructor simplifies working with LLM outputs. The library includes features like response models, retry management, validation, streaming support, and flexible backends. It also provides hooks for logging and monitoring LLM interactions, and supports integration with Anthropic, Cohere, Gemini, Litellm, and Google AI models. Instructor facilitates tasks such as extracting user data from natural language, creating fine-tuned models, managing uploaded files, and monitoring usage of OpenAI models.

swarmzero

SwarmZero SDK is a library that simplifies the creation and execution of AI Agents and Swarms of Agents. It supports various LLM Providers such as OpenAI, Azure OpenAI, Anthropic, MistralAI, Gemini, Nebius, and Ollama. Users can easily install the library using pip or poetry, set up the environment and configuration, create and run Agents, collaborate with Swarms, add tools for complex tasks, and utilize retriever tools for semantic information retrieval. Sample prompts are provided to help users explore the capabilities of the agents and swarms. The SDK also includes detailed examples and documentation for reference.

perplexity-ai

Perplexity is a module that utilizes emailnator to generate new accounts, providing users with 5 pro queries per account creation. It enables the creation of new Gmail accounts with emailnator, ensuring unlimited pro queries. The tool requires specific Python libraries for installation and offers both a web interface and an API for different usage scenarios. Users can interact with the tool to perform various tasks such as account creation, query searches, and utilizing different modes for research purposes. Perplexity also supports asynchronous operations and provides guidance on obtaining cookies for account usage and account generation from emailnator.

mistral-inference

Mistral Inference repository contains minimal code to run 7B, 8x7B, and 8x22B models. It provides model download links, installation instructions, and usage guidelines for running models via CLI or Python. The repository also includes information on guardrailing, model platforms, deployment, and references. Users can interact with models through commands like mistral-demo, mistral-chat, and mistral-common. Mistral AI models support function calling and chat interactions for tasks like testing models, chatting with models, and using Codestral as a coding assistant. The repository offers detailed documentation and links to blogs for further information.

langserve

LangServe helps developers deploy `LangChain` runnables and chains as a REST API. This library is integrated with FastAPI and uses pydantic for data validation. In addition, it provides a client that can be used to call into runnables deployed on a server. A JavaScript client is available in LangChain.js.

minja

Minja is a minimalistic C++ Jinja templating engine designed specifically for integration with C++ LLM projects, such as llama.cpp or gemma.cpp. It is not a general-purpose tool but focuses on providing a limited set of filters, tests, and language features tailored for chat templates. The library is header-only, requires C++17, and depends only on nlohmann::json. Minja aims to keep the codebase small, easy to understand, and offers decent performance compared to Python. Users should be cautious when using Minja due to potential security risks, and it is not intended for producing HTML or JavaScript output.

magentic

Easily integrate Large Language Models into your Python code. Simply use the `@prompt` and `@chatprompt` decorators to create functions that return structured output from the LLM. Mix LLM queries and function calling with regular Python code to create complex logic.

sparkle

Sparkle is a tool that streamlines the process of building AI-driven features in applications using Large Language Models (LLMs). It guides users through creating and managing agents, defining tools, and interacting with LLM providers like OpenAI. Sparkle allows customization of LLM provider settings, model configurations, and provides a seamless integration with Sparkle Server for exposing agents via an OpenAI-compatible chat API endpoint.

For similar tasks

mini.ai

mini.ai is a plugin for Neovim that extends and creates textobjects for enhanced text manipulation. It supports customization via Lua patterns or functions, dot-repeat, different search methods, consecutive application, and has builtins for brackets, quotes, function calls, arguments, tags, user prompts, and punctuation/whitespace characters.

tenere

Tenere is a TUI interface for Language Model Libraries (LLMs) written in Rust. It provides syntax highlighting, chat history, saving chats to files, Vim keybindings, copying text from/to clipboard, and supports multiple backends. Users can configure Tenere using a TOML configuration file, set key bindings, and use different LLMs such as ChatGPT, llama.cpp, and ollama. Tenere offers default key bindings for global and prompt modes, with features like starting a new chat, saving chats, scrolling, showing chat history, and quitting the app. Users can interact with the prompt in different modes like Normal, Visual, and Insert, with various key bindings for navigation, editing, and text manipulation.

mini.ai

This plugin extends and creates `a`/`i` textobjects in Neovim. It enhances some builtin textobjects (like `a(`, `a)`, `a'`, and more), creates new ones (like `a*`, `a

AITranslator

AITranslator is a software tool that utilizes a large language model to translate text from images exported by MTool into a user-friendly graphical interface. Users can start TGW to load the model, open the software, and select the text to be translated. The tool aims to simplify the translation process by leveraging advanced language processing capabilities.

For similar jobs

sweep

Sweep is an AI junior developer that turns bugs and feature requests into code changes. It automatically handles developer experience improvements like adding type hints and improving test coverage.

teams-ai

The Teams AI Library is a software development kit (SDK) that helps developers create bots that can interact with Teams and Microsoft 365 applications. It is built on top of the Bot Framework SDK and simplifies the process of developing bots that interact with Teams' artificial intelligence capabilities. The SDK is available for JavaScript/TypeScript, .NET, and Python.

ai-guide

This guide is dedicated to Large Language Models (LLMs) that you can run on your home computer. It assumes your PC is a lower-end, non-gaming setup.

classifai

Supercharge WordPress Content Workflows and Engagement with Artificial Intelligence. Tap into leading cloud-based services like OpenAI, Microsoft Azure AI, Google Gemini and IBM Watson to augment your WordPress-powered websites. Publish content faster while improving SEO performance and increasing audience engagement. ClassifAI integrates Artificial Intelligence and Machine Learning technologies to lighten your workload and eliminate tedious tasks, giving you more time to create original content that matters.

chatbot-ui

Chatbot UI is an open-source AI chat app that allows users to create and deploy their own AI chatbots. It is easy to use and can be customized to fit any need. Chatbot UI is perfect for businesses, developers, and anyone who wants to create a chatbot.

BricksLLM

BricksLLM is a cloud native AI gateway written in Go. Currently, it provides native support for OpenAI, Anthropic, Azure OpenAI and vLLM. BricksLLM aims to provide enterprise level infrastructure that can power any LLM production use cases. Here are some use cases for BricksLLM: * Set LLM usage limits for users on different pricing tiers * Track LLM usage on a per user and per organization basis * Block or redact requests containing PIIs * Improve LLM reliability with failovers, retries and caching * Distribute API keys with rate limits and cost limits for internal development/production use cases * Distribute API keys with rate limits and cost limits for students

uAgents

uAgents is a Python library developed by Fetch.ai that allows for the creation of autonomous AI agents. These agents can perform various tasks on a schedule or take action on various events. uAgents are easy to create and manage, and they are connected to a fast-growing network of other uAgents. They are also secure, with cryptographically secured messages and wallets.

griptape

Griptape is a modular Python framework for building AI-powered applications that securely connect to your enterprise data and APIs. It offers developers the ability to maintain control and flexibility at every step. Griptape's core components include Structures (Agents, Pipelines, and Workflows), Tasks, Tools, Memory (Conversation Memory, Task Memory, and Meta Memory), Drivers (Prompt and Embedding Drivers, Vector Store Drivers, Image Generation Drivers, Image Query Drivers, SQL Drivers, Web Scraper Drivers, and Conversation Memory Drivers), Engines (Query Engines, Extraction Engines, Summary Engines, Image Generation Engines, and Image Query Engines), and additional components (Rulesets, Loaders, Artifacts, Chunkers, and Tokenizers). Griptape enables developers to create AI-powered applications with ease and efficiency.