step_into_llm

MindSpore online courses: Step into LLM

Stars: 405

The 'step_into_llm' repository is dedicated to the 昇思MindSpore technology open class, which focuses on exploring cutting-edge technologies, combining theory with practical applications, expert interpretations, open sharing, and empowering competitions. The repository contains course materials, including slides and code, for the ongoing second phase of the course. It covers various topics related to large language models (LLMs) such as Transformer, BERT, GPT, GPT2, and more. The course aims to guide developers interested in LLMs from theory to practical implementation, with a special emphasis on the development and application of large models.

README:

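- Explore the frontier: interpret hot technical topics and deconstruct popular models

- Hands-on practice: combine theory with practice, with step-by-step development guidance

- Expert insight: experts from multiple fields offer diverse perspectives

- Open source and sharing: the courses are free, and the slides and code are open source

- Competition enablement: the courses support the ICT competition (Large Model series, Phases 1 and 2)

- Course series: the Large Model series is under way; stay tuned for courses on other topics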

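Registration link: https://xihe.mindspore.cn/course/foundation-model-v2/introduction

(Note: registration is required to take the free course. Please also join the QQ group, where follow-up course announcements will be posted.)

Phase 2 of the course is livestreamed on Bilibili every other Saturday from 14:00 to 15:00, starting October 14.

The slides and code for each lecture are uploaded to GitHub as the course progresses, and video replays are archived on Bilibili. The 昇思MindSpore WeChat official account publishes a recap of each lecture's key points and a preview of the next lecture. You are also welcome to take on the large model series tasks in the MindSpore community.

Because the course runs over a long period, the lecture schedule may be adjusted slightly along the way; the final announcement takes precedence. Thank you for your understanding.

Everyone is warmly welcome to help build the course. Fun projects based on the course can be submitted to the 昇思MindSpore large model platform.

If you find any problems with the slides or code, want us to cover a particular topic, or have suggestions for the course, please create an issue in this repository.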

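- Python

- AI fundamentals and deep learning fundamentals (with a focus on natural language processing): MindSpore-d2l

- Basic use of the OpenI community (free compute is available): OpenI_Learning

- Basic use of MindSpore: MindSpore tutorials

- Basic use of MindFormers: MindFormers walkthrough videos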

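The 昇思MindSpore technology open class is in full swing. It is open to all developers interested in large models and leads them step by step, from the basics to advanced topics, through large model technology by combining theory with practice.

In Phase 1 (Lectures 1-10), which has concluded, we started from the Transformer, traced the evolution to ChatGPT, and walked through building a simplified "ChatGPT" step by step.

Phase 2 (Lecture 11 onward), now in progress, is a comprehensive upgrade over Phase 1. It centers on end-to-end practice from large model development to application, covers more cutting-edge large model topics, and features a more diverse lineup of lecturers. We look forward to having you join!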

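| Lecture | Title | Description | Video | Slides and code | Key takeaways |
|---|---|---|---|---|---|
| Lecture 1 | Transformer | How multi-head self-attention works. How masking is handled in masked self-attention. Training a Transformer-based machine translation task. | link | link | link |
| Lecture 2 | BERT | BERT model design based on the Transformer encoder: the MLM and NSP tasks. The paradigm for fine-tuning BERT on downstream tasks. | link | link | link |
| Lecture 3 | GPT | GPT model design based on the Transformer decoder: next-token prediction. The paradigm for fine-tuning GPT on downstream tasks. | link | link | link |
| Lecture 4 | GPT2 | Core innovations of GPT2, including task conditioning and zero-shot learning; changes in implementation details relative to GPT1. | link | link | link |
| Lecture 5 | MindSpore automatic parallelism | Data parallelism, model parallelism, pipeline parallelism, memory optimization, and related techniques built on MindSpore's distributed parallel features. | link | link | link |
| Lecture 6 | Code pre-training | History of code pre-training. Preprocessing of code data. The CodeGeex code pre-training large model. | link | link | link |
| Lecture 7 | Prompt Tuning | The shift from the pretrain-finetune paradigm to the prompt tuning paradigm. Hard prompt and soft prompt techniques. Prompting that only requires rewriting the descriptive text. | link | link | link |
| Lecture 8 | Multimodal pre-trained large models | Design, data processing, and strengths of the 紫东太初 multimodal large model; theoretical overview, system framework, current state, and challenges of speech recognition. | link | / | / |
| Lecture 9 | Instruct Tuning | The core idea of instruction tuning: enabling the model to understand task descriptions (instructions). Limitations of instruction tuning: it cannot support open-domain creative tasks and cannot align the LM training objective with human needs. Chain-of-thoughts: providing examples in the prompt so that the model can generalize from them. | link | link | link |
| Lecture 10 | RLHF | The core idea of RLHF: aligning LLMs with human behavior. Breakdown of RLHF: LLM fine-tuning, training a reward model from human feedback, and fine-tuning the model with the PPO reinforcement learning algorithm. | link | link | In progress |
| Lecture 11 | ChatGLM | GLM model architecture, the evolution from GLM to ChatGLM, and a code demo of ChatGLM inference deployment. | link | link | link |
| Lecture 12 | Multimodal remote sensing intelligent interpretation foundation model | In this lecture, Sun Xian, researcher and laboratory deputy director at the Aerospace Information Research Institute, Chinese Academy of Sciences, explains multimodal remote sensing interpretation foundation models: the development and challenges of intelligent remote sensing in the large model era, and the technical roadmap and typical application scenarios of remote sensing foundation models. | link | / | link |
| Lecture 13 | ChatGLM2 | Technical analysis of ChatGLM2, a code demo of ChatGLM2 inference deployment, and an introduction to ChatGLM3 features. | link | link | link |
| Lecture 14 | Text generation decoding principles | Using MindNLP as an example, explains the principles and implementation of search and sampling techniques. | link | link | link |
| Lecture 15 | LLAMA | Background of LLaMA and an introduction to the wider alpaca family of models, analysis of the LLaMA model architecture, and a code demo of LLaMA inference deployment. | link | link | link |
| Lecture 16 | LLAMA2 | Introduces the LLAMA2 model architecture and walks through the code to demonstrate LLAMA2 chat deployment. | link | link | link |
| Lecture 17 | PengCheng Mind (鹏城·脑海) | The PengCheng Mind (鹏城·脑海) 200B model is an autoregressive language model with 200 billion parameters. It was trained at scale over a long period on the thousand-card '鹏城云脑II' cluster, a hub node of the China Computing Network, using 昇思MindSpore's multi-dimensional distributed parallelism. The model focuses on core Chinese capabilities while also covering English and some multilingual capabilities, and has so far completed training on 1.8T tokens. | link | / | link |
| Lecture 18 | CPM-Bee | Introduces CPM-Bee pre-training, inference, and fine-tuning, with a live code demo. | link | link | link |
| Lecture 19 | RWKV1-4 | The decline of RNNs and the rise of Transformers. Are Transformers all-powerful? Drawbacks of self-attention. RWKV, a new RNN that takes on the Transformer. Hands-on RWKV with MindNLP. | link | / | link |
| Lecture 20 | MOE | The past and present of MoE. The foundation of MoE implementation: AlltoAll communication. Mixtral 8x7b: the best open-source MoE large model at the time. MoE and lifelong learning. A Mixtral 8x7b inference demo based on 昇思MindSpore. | link | link | link |
| Lecture 21 | Parameter-efficient fine-tuning | Introduces the principles and code implementation of LoRA (and P-Tuning). | link | link | link |
| Lecture 22 | Prompt Engineering | Prompt engineering: 1. What is a prompt? 2. How do you judge whether a prompt is good or excellent? 3. How do you write a high-quality prompt? 4. How do you produce a high-quality prompt? 5. A brief discussion of problems we run into when prompting. | link | / | link |
| Lecture 23 | Multi-dimensional hybrid parallelism: automatic search and optimization strategy | Topic 1: time loss model and improved multi-dimensional bisection method / Topic 2: application of the APSS algorithm | Part 1 Part 2 | link | |
| Lecture 24 | Introduction to the InternLM (书生·浦语) open-source full-pipeline toolchain and hands-on agent development | In this lecture, we were fortunate to invite Wen Xing, who works in technical operations and as a technical evangelist for the InternLM (书生·浦语) community, and Geng Li, 昇思MindSpore technical evangelist, to explain the InternLM open-source full-pipeline toolchain in detail and demonstrate hands-on fine-tuning, inference, and agent development with InternLM. | link | / | link |
| Lecture 25 | RAG | | | | |
| Lecture 26 | LangChain module analysis | Analysis of the Models, Prompts, Memory, Chains, Agents, Indexes, and Callbacks modules, with case studies | | | |
| Lecture 27 | RWKV5-6 | / | | | |
| Lecture 28 | Quantization | Introduces low-bit quantization and related model quantization techniques | | | |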
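The text generation decoding lecture listed in the table above contrasts search-style and sampling-style decoding. As a rough illustration of that distinction, the sketch below picks one next token from a toy score list, first greedily and then by temperature-scaled top-k sampling. It is not taken from the course materials and does not use the MindNLP API; the function names (`softmax`, `greedy_pick`, `top_k_sample`) and the toy scores are hypothetical, and only the Python standard library is assumed.

```python
import math
import random


def softmax(logits, temperature=1.0):
    """Turn raw scores into probabilities; a lower temperature sharpens them."""
    scaled = [x / temperature for x in logits]
    peak = max(scaled)  # subtract the max for numerical stability
    exps = [math.exp(x - peak) for x in scaled]
    total = sum(exps)
    return [e / total for e in exps]


def greedy_pick(logits):
    """Search-style step: always take the index of the highest score."""
    return max(range(len(logits)), key=lambda i: logits[i])


def top_k_sample(logits, k=2, temperature=1.0, rng=None):
    """Sampling-style step: draw one index from the k highest-scoring tokens."""
    rng = rng or random.Random()
    top = sorted(range(len(logits)), key=lambda i: logits[i], reverse=True)[:k]
    probs = softmax([logits[i] for i in top], temperature)
    return rng.choices(top, weights=probs, k=1)[0]


# Hypothetical next-token scores over a toy 4-token vocabulary.
toy_logits = [2.0, 1.0, 0.1, -1.0]
greedy_token = greedy_pick(toy_logits)
sampled_token = top_k_sample(toy_logits, k=2, temperature=0.8, rng=random.Random(0))
```

Greedy selection is deterministic, while the sampled token can be either of the two highest-scoring indices; lowering `temperature` or `k` pushes sampling toward the greedy choice.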

Alternative AI tools for step_into_llm

Similar Open Source Tools

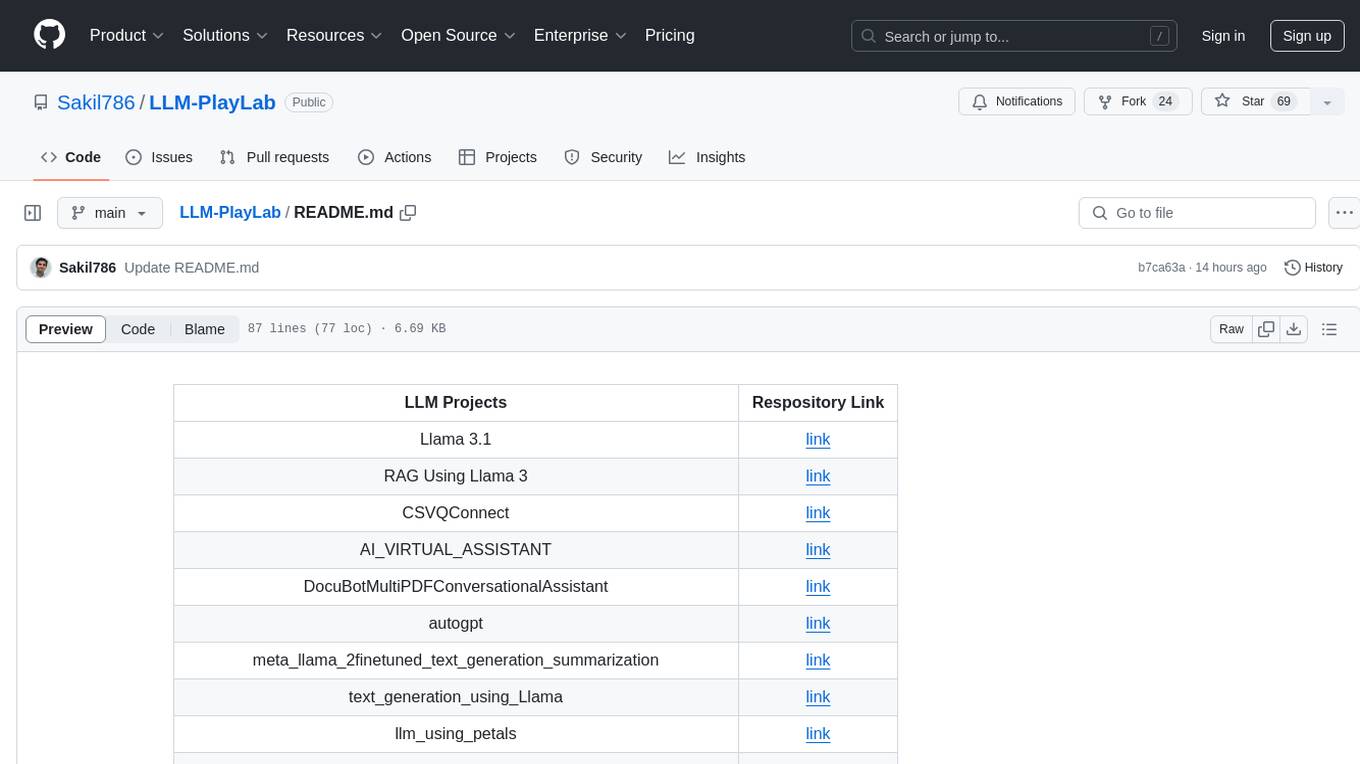

LLM-PlayLab

LLM-PlayLab is a repository containing various projects related to LLM (Large Language Models) fine-tuning, generative AI, time-series forecasting, and crash courses. It includes projects for text generation, sentiment analysis, data analysis, chat assistants, image captioning, and more. The repository offers a wide range of tools and resources for exploring and implementing advanced AI techniques.

kumo-search

Kumo search is an end-to-end search engine framework that supports full-text search, inverted index, forward index, sorting, caching, hierarchical indexing, intervention system, feature collection, offline computation, storage system, and more. It runs on the EA (Elastic automic infrastructure architecture) platform, enabling engineering automation, service governance, real-time data, service degradation, and disaster recovery across multiple data centers and clusters. The framework aims to provide a ready-to-use search engine framework to help users quickly build their own search engines. Users can write business logic in Python using the AOT compiler in the project, which generates C++ code and binary dynamic libraries for rapid iteration of the search engine.

PaddleScience

PaddleScience is a scientific computing suite developed based on the deep learning framework PaddlePaddle. It utilizes the learning ability of deep neural networks and the automatic (higher-order) differentiation mechanism of PaddlePaddle to solve problems in physics, chemistry, meteorology, and other fields. It supports three solving methods: physics mechanism-driven, data-driven, and mathematical fusion, and provides basic APIs and detailed documentation for users to use and further develop.

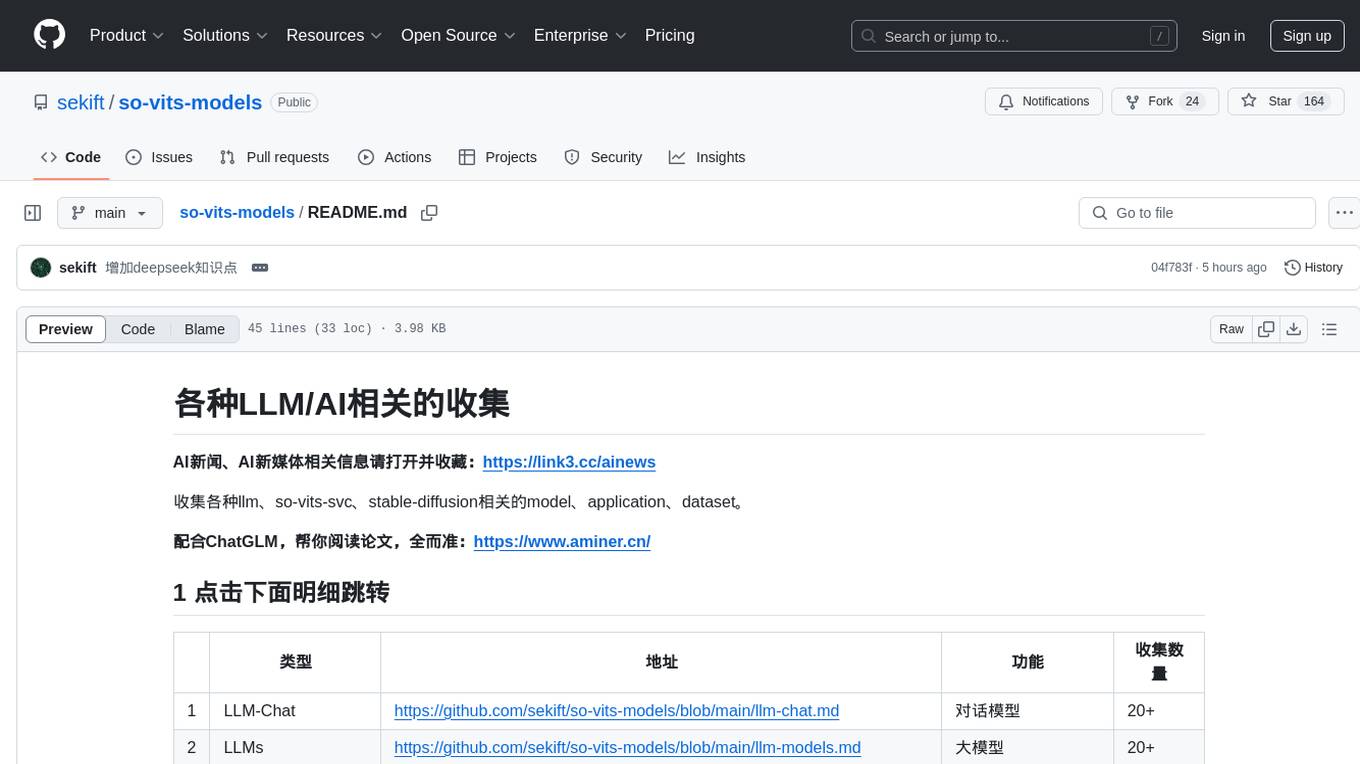

so-vits-models

This repository collects various LLM, AI-related models, applications, and datasets, including LLM-Chat for dialogue models, LLMs for large models, so-vits-svc for sound-related models, stable-diffusion for image-related models, and virtual-digital-person for generating videos. It also provides resources for deep learning courses and overviews, AI competitions, and specific AI tasks such as text, image, voice, and video processing.

Awesome-LLM-Eval

Awesome-LLM-Eval: a curated list of tools, benchmarks, demos, papers for Large Language Models (like ChatGPT, LLaMA, GLM, Baichuan, etc) Evaluation on Language capabilities, Knowledge, Reasoning, Fairness and Safety.

AIInfra

AIInfra is an open-source project focused on AI infrastructure, specifically targeting large models in distributed clusters, distributed architecture, distributed training, and algorithms related to large models. The project aims to explore and study system design in artificial intelligence and deep learning, with a focus on the hardware and software stack for building AI large model systems. It provides a comprehensive curriculum covering topics such as AI chip principles, communication and storage, AI clusters, large model training, and inference, as well as algorithms for large models. The course is designed for undergraduate and graduate students, as well as professionals working with AI large model systems, to gain a deep understanding of AI computer system architecture and design.

ailia-models

The collection of pre-trained, state-of-the-art AI models. ailia SDK is a self-contained, cross-platform, high-speed inference SDK for AI. The ailia SDK provides a consistent C++ API across Windows, Mac, Linux, iOS, Android, Jetson, and Raspberry Pi platforms. It also supports Unity (C#), Python, Rust, Flutter (Dart), and JNI for efficient AI implementation. The ailia SDK makes extensive use of the GPU through Vulkan and Metal to enable accelerated computing. Supported models: 323 as of April 8th, 2024.

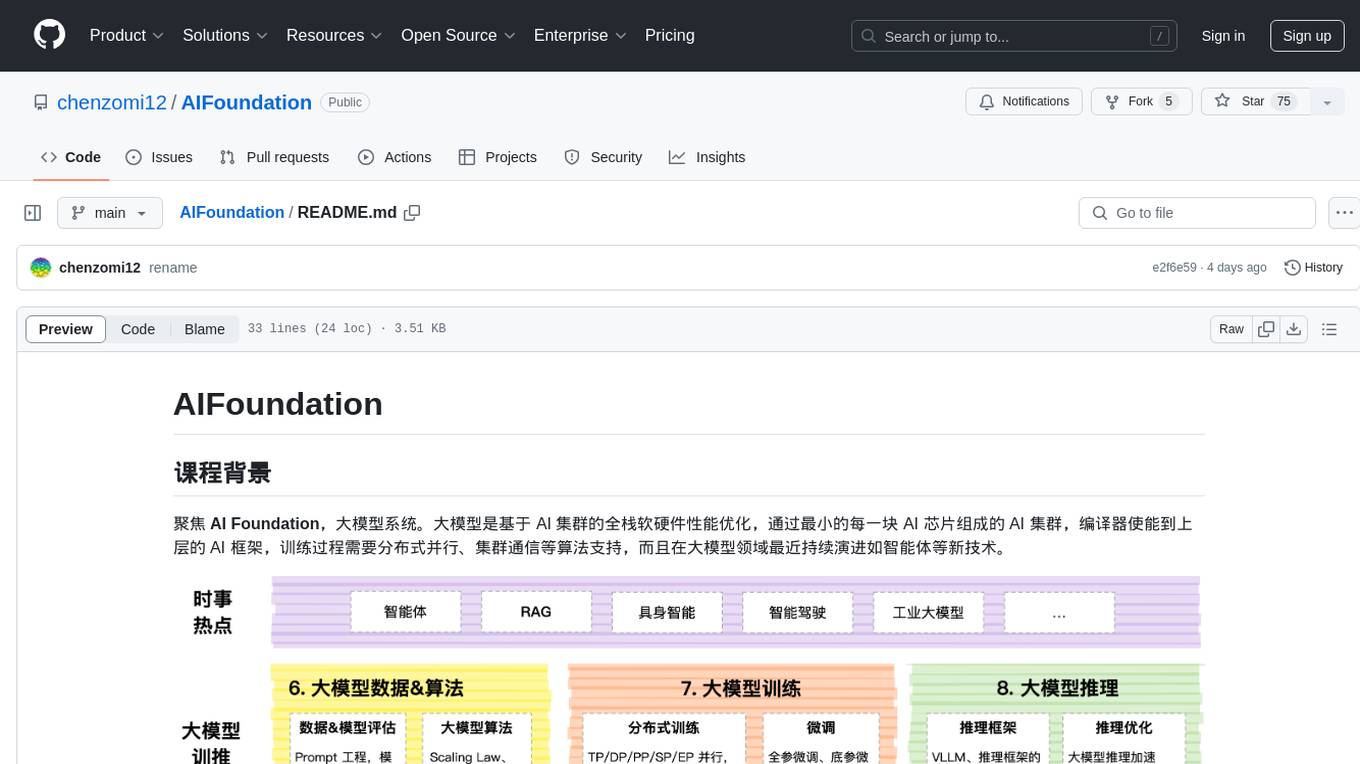

AIFoundation

AIFoundation focuses on AI Foundation, large model systems. Large models optimize the performance of full-stack hardware and software based on AI clusters. The training process requires distributed parallelism, cluster communication algorithms, and continuous evolution in the field of large models such as intelligent agents. The course covers modules like AI chip principles, communication & storage, AI clusters, computing architecture, communication architecture, large model algorithms, training, inference, and analysis of hot technologies in the large model field.

Awesome-AGI

Awesome-AGI is a curated list of resources related to Artificial General Intelligence (AGI), including models, pipelines, applications, and concepts. It provides a comprehensive overview of the current state of AGI research and development, covering various aspects such as model training, fine-tuning, deployment, and applications in different domains. The repository also includes resources on prompt engineering, RLHF, LLM vocabulary expansion, long text generation, hallucination mitigation, controllability and safety, and text detection. It serves as a valuable resource for researchers, practitioners, and anyone interested in the field of AGI.

aidea-server

AIdea Server is an open-source Golang-based server that integrates mainstream large language models and drawing models. It supports OpenAI's GPT-3.5 and GPT-4, Anthropic's Claude instant and Claude 2.1, Google's Gemini Pro, as well as Chinese models like Tongyi Qianwen, Wenxin Yiyan, and more. It also supports open-source large models like Yi 34B, Llama2, and AquilaChat 7B. Additionally, it provides features for text-to-image, super-resolution, coloring black and white images, and generating art fonts and QR codes, among others.

LangBot

LangBot is a highly stable, extensible, and multimodal instant messaging chatbot platform based on large language models. It supports various large models, adapts to group chats and private chats, and has capabilities for multi-turn conversations, tool invocation, and multimodal interactions. It is deeply integrated with Dify and currently supports QQ and QQ channels, with plans to support platforms like WeChat, WhatsApp, and Discord. The platform offers high stability, comprehensive functionality, native support for access control, rate limiting, sensitive word filtering mechanisms, and simple configuration with multiple deployment options. It also features plugin extension capabilities, an active community, and a new web management panel for managing LangBot instances through a browser.

Awesome-AgenticLLM-RL-Papers

This repository serves as the official source for the survey paper 'The Landscape of Agentic Reinforcement Learning for LLMs: A Survey'. It provides an extensive overview of various algorithms, methods, and frameworks related to Agentic RL, including detailed information on different families of algorithms, their key mechanisms, objectives, and links to relevant papers and resources. The repository covers a wide range of tasks such as Search & Research Agent, Code Agent, Mathematical Agent, GUI Agent, RL in Vision Agents, RL in Embodied Agents, and RL in Multi-Agent Systems. Additionally, it includes information on environments, frameworks, and methods suitable for different tasks related to Agentic RL and LLMs.

yudao-boot-mini

yudao-boot-mini is an open-source project focused on developing a rapid development platform for developers in China. It includes features like system functions, infrastructure, member center, data reports, workflow, mall system, WeChat official account, CRM, ERP, etc. The project is based on Spring Boot with Java backend and Vue for frontend. It offers various functionalities such as user management, role management, menu management, department management, workflow management, payment system, code generation, API documentation, database documentation, file service, WebSocket integration, message queue, Java monitoring, and more. The project is licensed under the MIT License, allowing both individuals and enterprises to use it freely without restrictions.

yudao-ui-admin-vue3

The yudao-ui-admin-vue3 repository is an open-source project focused on building a fast development platform for developers in China. It utilizes Vue3 and Element Plus to provide features such as configurable themes, internationalization, dynamic route permission generation, common component encapsulation, and rich examples. The project supports the latest front-end technologies like Vue3 and Vite4, and also includes tools like TypeScript, pinia, vueuse, vue-i18n, vue-router, unocss, iconify, and wangeditor. It offers a range of development tools and features for system functions, infrastructure, workflow management, payment systems, member centers, data reporting, e-commerce systems, WeChat public accounts, ERP systems, and CRM systems.

For similar tasks

Flowise

Flowise is a tool that allows users to build customized LLM flows with a drag-and-drop UI. It is open-source and self-hostable, and it supports various deployments, including AWS, Azure, Digital Ocean, GCP, Railway, Render, HuggingFace Spaces, Elestio, Sealos, and RepoCloud. Flowise has three different modules in a single mono repository: server, ui, and components. The server module is a Node backend that serves API logics, the ui module is a React frontend, and the components module contains third-party node integrations. Flowise supports different environment variables to configure your instance, and you can specify these variables in the .env file inside the packages/server folder.

nlux

nlux is an open-source Javascript and React JS library that makes it super simple to integrate powerful large language models (LLMs) like ChatGPT into your web app or website. With just a few lines of code, you can add conversational AI capabilities and interact with your favourite LLM.

generative-ai-go

The Google AI Go SDK enables developers to use Google's state-of-the-art generative AI models (like Gemini) to build AI-powered features and applications. It supports use cases like generating text from text-only input, generating text from text-and-images input (multimodal), building multi-turn conversations (chat), and embedding.

awesome-langchain-zh

The awesome-langchain-zh repository is a collection of resources related to LangChain, a framework for building AI applications using large language models (LLMs). The repository includes sections on the LangChain framework itself, other language ports of LangChain, tools for low-code development, services, agents, templates, platforms, open-source projects related to knowledge management and chatbots, as well as learning resources such as notebooks, videos, and articles. It also covers other LLM frameworks and provides additional resources for exploring and working with LLMs. The repository serves as a comprehensive guide for developers and AI enthusiasts interested in leveraging LangChain and LLMs for various applications.

Large-Language-Model-Notebooks-Course

This free, practical course focuses on Large Language Models and their applications, providing hands-on experience with models from OpenAI and the Hugging Face library. The course is divided into three major sections: Techniques and Libraries, Projects, and Enterprise Solutions. It covers topics such as Chatbots, Code Generation, Vector databases, LangChain, Fine Tuning, PEFT Fine Tuning, Soft Prompt tuning, LoRA, QLoRA, Evaluate Models, Knowledge Distillation, and more. Each section contains chapters with lessons supported by notebooks and articles. The course aims to help users build projects and explore enterprise solutions using Large Language Models.

ai-chatbot

Next.js AI Chatbot is an open-source app template for building AI chatbots using Next.js, Vercel AI SDK, OpenAI, and Vercel KV. It includes features like Next.js App Router, React Server Components, Vercel AI SDK for streaming chat UI, support for various AI models, Tailwind CSS styling, Radix UI for headless components, chat history management, rate limiting, session storage with Vercel KV, and authentication with NextAuth.js. The template allows easy deployment to Vercel and customization of AI model providers.

awesome-local-llms

The 'awesome-local-llms' repository is a curated list of open-source tools for local Large Language Model (LLM) inference, covering both proprietary and open weights LLMs. The repository categorizes these tools into LLM inference backend engines, LLM front end UIs, and all-in-one desktop applications. It collects GitHub repository metrics as proxies for popularity and active maintenance. Contributions are encouraged, and users can suggest additional open-source repositories through the Issues section or by running a provided script to update the README and make a pull request. The repository aims to provide a comprehensive resource for exploring and utilizing local LLM tools.

Awesome-AI-Data-Guided-Projects

A curated list of data science & AI guided projects to start building your portfolio. The repository contains guided projects covering various topics such as large language models, time series analysis, computer vision, natural language processing (NLP), and data science. Each project provides detailed instructions on how to implement specific tasks using different tools and technologies.

For similar jobs

machine-learning

Ocademy is an AI learning community dedicated to Python, Data Science, Machine Learning, Deep Learning, and MLOps. They promote equal opportunities for everyone to access AI through open-source educational resources. The repository contains curated AI courses, tutorials, books, tools, and resources for learning and creating Generative AI. It also offers an interactive book to help adults transition into AI. Contributors are welcome to join and contribute to the community by following guidelines. The project follows a code of conduct to ensure inclusivity and welcomes contributions from those passionate about Data Science and AI.

go2coding.github.io

The go2coding.github.io repository is a collection of resources for AI enthusiasts, providing information on AI products, open-source projects, AI learning websites, and AI learning frameworks. It aims to help users stay updated on industry trends, learn from community projects, access learning resources, and understand and choose AI frameworks. The repository also includes instructions for local and external deployment of the project as a static website, with details on domain registration, hosting services, uploading static web pages, configuring domain resolution, and a visual guide to the AI tool navigation website. Additionally, it offers a platform for AI knowledge exchange through a QQ group and promotes AI tools through a WeChat public account.

AI-lectures

AI-lectures is a repository containing educational materials on various topics related to Artificial Intelligence, including Machine Learning, Robotics, and Optimization. It provides full scripts, slides, and exercises with solutions for different lectures. Users can compile the materials into PDFs for easy access and reference. The repository aims to offer comprehensive resources for individuals interested in learning about AI and its applications in intelligent systems.

intro-to-llms-365

This repository serves as a resource for the Introduction to Large Language Models (LLMs) course, providing Jupyter notebooks with hands-on examples and exercises to help users learn the basics of Large Language Models. It includes information on installed packages, updates, and setting up a virtual environment for managing packages and running Jupyter notebooks.

awesome-ai-llm4education

The 'awesome-ai-llm4education' repository is a curated list of papers related to artificial intelligence (AI) and large language models (LLM) for education. It collects papers from top conferences, journals, and specialized domain-specific conferences, categorizing them based on specific tasks for better organization. The repository covers a wide range of topics including tutoring, personalized learning, assessment, material preparation, specific scenarios like computer science, language, math, and medicine, aided teaching, as well as datasets and benchmarks for educational research.

easy-learn-ai

Easy AI is a modern web application platform focused on AI education, aiming to help users understand complex artificial intelligence concepts through a concise and intuitive approach. The platform integrates multiple learning modules, providing a comprehensive AI knowledge system from basic concepts to practical applications.

weave

Weave is a toolkit for developing Generative AI applications, built by Weights & Biases. With Weave, you can log and debug language model inputs, outputs, and traces; build rigorous, apples-to-apples evaluations for language model use cases; and organize all the information generated across the LLM workflow, from experimentation to evaluations to production. Weave aims to bring rigor, best-practices, and composability to the inherently experimental process of developing Generative AI software, without introducing cognitive overhead.