python-sdks

LiveKit real-time and server SDKs for Python

Stars: 327

Python SDK for LiveKit enables developers to easily integrate real-time video, audio, and data features into their Python applications. By connecting to a LiveKit server, users can quickly build interactive live streaming or video call applications with minimal code. The SDK includes packages for real-time participant connection and access token generation, making it simple to create rooms and manage participants. With asyncio and aiohttp support, developers can seamlessly interact with the LiveKit server API and handle real-time communication tasks effortlessly.

README:

Use this SDK to add realtime video, audio and data features to your Python app. By connecting to LiveKit Cloud or a self-hosted server, you can quickly build applications such as multi-modal AI, live streaming, or video calls with just a few lines of code.

This repo contains two packages:

- livekit: Real-time SDK for connecting to LiveKit as a participant

- livekit-api: Access token generation and server APIs
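To make "access token generation" more concrete: a LiveKit access token is a JWT signed with your API secret. The sketch below builds one by hand using only the standard library, assuming HS256 signing and the claim names shown (`iss`, `sub`, `nbf`, `exp`, `video`). The helper names and the key and secret values are placeholders, and this is an illustration of the token layout rather than the SDK's implementation. In practice, use `api.AccessToken`.

```python
import base64
import hashlib
import hmac
import json
import time


def b64url(data: bytes) -> str:
    """Base64url-encode without padding, as JWTs require."""
    return base64.urlsafe_b64encode(data).rstrip(b"=").decode("ascii")


def sketch_token(api_key: str, api_secret: str, identity: str, room: str,
                 ttl_seconds: int = 3600) -> str:
    """Build an HS256-signed JWT with LiveKit-style claims (illustrative only)."""
    now = int(time.time())
    header = {"alg": "HS256", "typ": "JWT"}
    claims = {
        "iss": api_key,    # the API key identifies the issuer
        "sub": identity,   # participant identity
        "nbf": now,        # not valid before now
        "exp": now + ttl_seconds,
        "video": {"roomJoin": True, "room": room},  # grants (assumed claim names)
    }
    signing_input = ".".join(
        b64url(json.dumps(part, separators=(",", ":")).encode("utf-8"))
        for part in (header, claims)
    )
    signature = hmac.new(api_secret.encode("utf-8"),
                         signing_input.encode("ascii"),
                         hashlib.sha256).digest()
    return f"{signing_input}.{b64url(signature)}"


# Placeholder credentials; never hard-code real ones.
token = sketch_token("placeholder-key", "placeholder-secret", "python-bot", "my-room")
print(token)
```

The result has the usual three dot-separated JWT parts (header, claims, signature), so the claims can be inspected by base64url-decoding the middle part.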

Install the server API package:

    $ pip install livekit-api

Generate an access token:

    from livekit import api
    import os

    # will automatically use the LIVEKIT_API_KEY and LIVEKIT_API_SECRET env vars
    token = api.AccessToken() \
        .with_identity("python-bot") \
        .with_name("Python Bot") \
        .with_grants(api.VideoGrants(
            room_join=True,
            room="my-room",
        )).to_jwt()

RoomService uses asyncio and aiohttp to make API calls. It needs to be used with an event loop.

    from livekit import api
    import asyncio

    async def main():
        lkapi = api.LiveKitAPI("https://my-project.livekit.cloud")
        room_info = await lkapi.room.create_room(
            api.CreateRoomRequest(name="my-room"),
        )
        print(room_info)
        results = await lkapi.room.list_rooms(api.ListRoomsRequest())
        print(results)
        await lkapi.aclose()

    asyncio.run(main())

Services can be accessed via the LiveKitAPI object.

    lkapi = api.LiveKitAPI("https://my-project.livekit.cloud")

    # Room Service
    room_svc = lkapi.room

    # Egress Service
    egress_svc = lkapi.egress

    # Ingress Service
    ingress_svc = lkapi.ingress

    # SIP Service
    sip_svc = lkapi.sip

    # Agent Dispatch
    dispatch_svc = lkapi.agent_dispatch

    # Connector Service
    connector_svc = lkapi.connector

Install the real-time SDK package:

    $ pip install livekit

See room_example for a full example.

    import asyncio
    import logging

    from livekit import rtc

    # URL and TOKEN are placeholders for your server URL and an access token

    async def main():
        room = rtc.Room()

        @room.on("participant_connected")
        def on_participant_connected(participant: rtc.RemoteParticipant):
            logging.info(
                "participant connected: %s %s", participant.sid, participant.identity)

        async def receive_frames(stream: rtc.VideoStream):
            async for frame in stream:
                # received a video frame from the track, process it here
                pass

        # track_subscribed is emitted whenever the local participant is subscribed to a new track
        @room.on("track_subscribed")
        def on_track_subscribed(track: rtc.Track, publication: rtc.RemoteTrackPublication, participant: rtc.RemoteParticipant):
            logging.info("track subscribed: %s", publication.sid)
            if track.kind == rtc.TrackKind.KIND_VIDEO:
                video_stream = rtc.VideoStream(track)
                asyncio.ensure_future(receive_frames(video_stream))

        # By default, autosubscribe is enabled. The participant will be subscribed to
        # all published tracks in the room
        await room.connect(URL, TOKEN)
        logging.info("connected to room %s", room.name)

        # participants and tracks that are already available in the room
        # participant_connected and track_published events will *not* be emitted for them
        for identity, participant in room.remote_participants.items():
            print(f"identity: {identity}")
            print(f"participant: {participant}")
            for tid, publication in participant.track_publications.items():
                print(f"\ttrack id: {publication}")

Perform your own predefined method calls from one participant to another.

This feature is especially powerful when used with Agents, for instance to forward LLM function calls to your client application.
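The RPC payloads in this README are plain strings, so forwarding something structured, such as an LLM function call, needs an encoding step. The sketch below is one possible approach using JSON. The envelope shape (`name`, `arguments`) and the `set_volume` function are hypothetical and not something the SDK prescribes.

```python
import json


def encode_function_call(name: str, arguments: dict) -> str:
    """Serialize a function call into a string suitable for an RPC payload."""
    return json.dumps({"name": name, "arguments": arguments})


def decode_function_call(payload: str) -> tuple[str, dict]:
    """Parse the payload back into (name, arguments) on the receiving side."""
    message = json.loads(payload)
    return message["name"], message["arguments"]


# Hypothetical function call forwarded from an agent to a client application.
payload = encode_function_call("set_volume", {"level": 7})
name, arguments = decode_function_call(payload)
print(name, arguments)
```

The sender would pass `payload` as the RPC payload string, and the registered handler would call `decode_function_call` on the payload it receives.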

The participant who implements the method and will receive its calls must first register support:

    @room.local_participant.register_rpc_method("greet")
    async def handle_greet(data: RpcInvocationData):
        print(f"Received greeting from {data.caller_identity}: {data.payload}")
        return f"Hello, {data.caller_identity}!"

In addition to the payload, your handler also receives response_timeout, which tells you the maximum time available to return a response. If you are unable to respond in time, the call will result in an error on the caller's side.

The caller may then initiate an RPC call like so:

    try:
        response = await room.local_participant.perform_rpc(
            destination_identity='recipient-identity',
            method='greet',
            payload='Hello from RPC!'
        )
        print(f"RPC response: {response}")
    except Exception as e:
        print(f"RPC call failed: {e}")

You may find it useful to adjust the response_timeout parameter, which indicates the amount of time you will wait for a response. We recommend keeping this value as low as possible while still satisfying the constraints of your application.
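To see why the choice of response_timeout matters, the sketch below simulates the caller's wait with plain asyncio. It does not use the SDK: `slow_handler` and `call_with_timeout` are hypothetical stand-ins, and a real call reports a timeout as an error on the caller's side rather than as a returned string.

```python
import asyncio


async def slow_handler(payload: str) -> str:
    """Stand-in for a remote RPC handler that takes 0.2 s to answer."""
    await asyncio.sleep(0.2)
    return f"echo: {payload}"


async def call_with_timeout(payload: str, response_timeout: float) -> str:
    """Wait for the handler, giving up once response_timeout elapses."""
    try:
        return await asyncio.wait_for(slow_handler(payload), timeout=response_timeout)
    except asyncio.TimeoutError:
        return "timed out"


async def demo() -> list[str]:
    return [
        await call_with_timeout("hi", response_timeout=1.0),   # enough time
        await call_with_timeout("hi", response_timeout=0.05),  # too short
    ]


results = asyncio.run(demo())
print(results)
```

A timeout shorter than the handler's real latency always fails, while a very long one delays error handling, which is why the lowest value that still fits the application is usually the better choice.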

The MediaDevices class provides a high-level interface for working with local audio input (microphone) and output (speaker) devices. It is built on top of the sounddevice library and integrates with LiveKit's audio processing features. To use MediaDevices, the sounddevice library must be installed in your local Python environment; without it, MediaDevices will not work.
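Because MediaDevices depends on sounddevice, it can help to check for the library before creating an instance. The following is a minimal sketch using only the standard library. The `has_module` helper is hypothetical, and the check only confirms that the module can be found, not that an audio backend is working.

```python
import importlib.util


def has_module(name: str) -> bool:
    """Return True if the named top-level module can be found for import."""
    return importlib.util.find_spec(name) is not None


if not has_module("sounddevice"):
    print("sounddevice is not installed; try: pip install sounddevice")
```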

    from livekit import rtc

    # Create a MediaDevices instance
    devices = rtc.MediaDevices()

    # Open the default microphone with audio processing enabled
    mic = devices.open_input(
        enable_aec=True,         # Acoustic Echo Cancellation
        noise_suppression=True,  # Noise suppression
        high_pass_filter=True,   # High-pass filter
        auto_gain_control=True   # Automatic gain control
    )

    # Use the audio source to create a track and publish it
    track = rtc.LocalAudioTrack.create_audio_track("microphone", mic.source)
    await room.local_participant.publish_track(track)

    # Clean up when done
    await mic.aclose()

To play remote audio through the speakers:

    # Open the default output device

    player = devices.open_output()

    # Add remote audio tracks to the player (typically in a track_subscribed handler)
    @room.on("track_subscribed")
    def on_track_subscribed(track: rtc.Track, publication, participant):
        if track.kind == rtc.TrackKind.KIND_AUDIO:
            player.add_track(track)

    # Start playback (mixes all added tracks)
    await player.start()

    # Clean up when done
    await player.aclose()

For full duplex audio with echo cancellation, open the input device first (with AEC enabled), then open the output device. The output player will automatically feed the APM's reverse stream for effective echo cancellation:

    devices = rtc.MediaDevices()

    # Open microphone with AEC
    mic = devices.open_input(enable_aec=True)

    # Open speakers - automatically uses the mic's APM for echo cancellation
    player = devices.open_output()

    # Publish microphone
    track = rtc.LocalAudioTrack.create_audio_track("mic", mic.source)
    await room.local_participant.publish_track(track)

    # Add remote tracks and start playback
    player.add_track(remote_audio_track)
    await player.start()

To list the available devices:

    devices = rtc.MediaDevices()

    # List input devices
    input_devices = devices.list_input_devices()
    for device in input_devices:
        print(f"{device['index']}: {device['name']}")

    # List output devices
    output_devices = devices.list_output_devices()
    for device in output_devices:
        print(f"{device['index']}: {device['name']}")

    # Get default device indices
    default_input = devices.default_input_device()
    default_output = devices.default_output_device()

See publish_mic.py and full_duplex.py for complete examples.
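The device entries listed above are dictionaries with `index` and `name` keys, so picking a device by name takes only a short helper. The sketch below assumes just those two keys. The `find_device_index` helper and the sample data are hypothetical, and how an index is passed back to open_input or open_output is not shown in this README, so check the MediaDevices API for that.

```python
from typing import Optional


def find_device_index(devices: list[dict], name_fragment: str) -> Optional[int]:
    """Return the index of the first device whose name contains name_fragment."""
    wanted = name_fragment.lower()
    for device in devices:
        if wanted in device["name"].lower():
            return device["index"]
    return None


# Hypothetical sample shaped like the entries printed by the listing code.
sample_devices = [
    {"index": 0, "name": "Built-in Microphone"},
    {"index": 3, "name": "USB Headset"},
]
print(find_device_index(sample_devices, "usb"))
```

Matching is case-insensitive and returns `None` when nothing matches, so the caller can fall back to the default device.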

LiveKit is a dynamic realtime environment and calls can fail for various reasons.

You may raise errors of the type RpcError with a string message in an RPC method handler, and they will be received on the caller's side with the message intact. Other errors will not be transmitted and will instead arrive at the caller as 1500 ("Application Error"). Other built-in errors are detailed in RpcError.
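The rule described above (deliberate RPC errors pass through, everything else becomes a generic 1500) can be illustrated without the SDK. In the sketch below, `SketchRpcError`, `run_handler`, both handlers, and the code 4000 are hypothetical stand-ins. Only the 1500 "Application Error" mapping comes from the text above.

```python
class SketchRpcError(Exception):
    """Stand-in for an RPC error carrying a numeric code and a message."""

    def __init__(self, code: int, message: str) -> None:
        super().__init__(message)
        self.code = code
        self.message = message


def run_handler(handler, payload: str):
    """Run a handler; pass SketchRpcError through, mask everything else."""
    try:
        return handler(payload)
    except SketchRpcError:
        raise  # deliberate errors reach the caller with their message intact
    except Exception:
        # any other failure is hidden behind the generic application error
        raise SketchRpcError(1500, "Application Error") from None


def strict_handler(payload: str) -> str:
    if not payload:
        raise SketchRpcError(4000, "payload must not be empty")  # hypothetical code
    return payload.upper()


def buggy_handler(payload: str) -> str:
    return payload[10]  # raises IndexError on short payloads


for handler in (strict_handler, buggy_handler):
    try:
        run_handler(handler, "")
    except SketchRpcError as err:
        print(err.code, err.message)
```

The practical consequence is that any detail the caller should see has to be raised as an RPC error explicitly; an unexpected exception in the handler tells the caller nothing beyond the generic code.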

- Facelandmark: Use mediapipe to detect face landmarks (eyes, nose ...)

- Basic room: Connect to a room

- Publish hue: Publish a rainbow video track

- Publish wave: Publish a sine wave

Please join us on Slack to get help from our devs and community members. We welcome your contributions (PRs); details can be discussed there.

| LiveKit Ecosystem | |
|---|---|
| LiveKit SDKs | Browser · iOS/macOS/visionOS · Android · Flutter · React Native · Rust · Node.js · Python · Unity · Unity (WebGL) · ESP32 |
| Server APIs | Node.js · Golang · Ruby · Java/Kotlin · Python · Rust · PHP (community) · .NET (community) |
| UI Components | React · Android Compose · SwiftUI · Flutter |
| Agents Frameworks | Python · Node.js · Playground |
| Services | LiveKit server · Egress · Ingress · SIP |
| Resources | Docs · Example apps · Cloud · Self-hosting · CLI |

Alternative AI tools for python-sdks

Similar Open Source Tools

fastrtc

FastRTC is a real-time communication library for Python that allows users to turn any Python function into a real-time audio and video stream over WebRTC or WebSockets. It provides features like automatic voice detection, UI launching, WebRTC support, WebSocket support, telephone support, and customizable backend for production applications. The library offers various examples and usage scenarios for audio and video streaming, object detection, voice APIs, chat applications, and more.

reolink_aio

The 'reolink_aio' Python package is designed to integrate Reolink devices (NVR/cameras) into your application. It implements Reolink IP NVR and camera API, allowing users to subscribe to Reolink ONVIF SWN events for real-time event notifications via webhook. The package provides functionalities to obtain and cache NVR or camera settings, capabilities, and states, as well as enable features like infrared lights, spotlight, and siren. Users can also subscribe to events, renew timers, and disconnect from the host device.

langserve

LangServe helps developers deploy `LangChain` runnables and chains as a REST API. This library is integrated with FastAPI and uses pydantic for data validation. In addition, it provides a client that can be used to call into runnables deployed on a server. A JavaScript client is available in LangChain.js.

lollms_legacy

Lord of Large Language Models (LoLLMs) Server is a text generation server based on large language models. It provides a Flask-based API for generating text using various pre-trained language models. This server is designed to be easy to install and use, allowing developers to integrate powerful text generation capabilities into their applications. The tool supports multiple personalities for generating text with different styles and tones, real-time text generation with WebSocket-based communication, RESTful API for listing personalities and adding new personalities, easy integration with various applications and frameworks, sending files to personalities, running on multiple nodes to provide a generation service to many outputs at once, and keeping data local even in the remote version.

hugging-chat-api

Unofficial HuggingChat Python API for creating chatbots, supporting features like image generation, web search, memorizing context, and changing LLMs. Users can log in, chat with the ChatBot, perform web searches, create new conversations, manage conversations, switch models, get conversation info, use assistants, and delete conversations. The API also includes a CLI mode with various commands for interacting with the tool. Users are advised not to use the application for high-stakes decisions or advice and to avoid high-frequency requests to preserve server resources.

lollms

LoLLMs Server is a text generation server based on large language models. It provides a Flask-based API for generating text using various pre-trained language models. This server is designed to be easy to install and use, allowing developers to integrate powerful text generation capabilities into their applications.

simple-openai

Simple-OpenAI is a Java library that provides a simple way to interact with the OpenAI API. It offers consistent interfaces for various OpenAI services like Audio, Chat Completion, Image Generation, and more. The library uses CleverClient for HTTP communication, Jackson for JSON parsing, and Lombok to reduce boilerplate code. It supports asynchronous requests and provides methods for synchronous calls as well. Users can easily create objects to communicate with the OpenAI API and perform tasks like text-to-speech, transcription, image generation, and chat completions.

suno-api

Suno AI API is an open-source project that allows developers to integrate the music generation capabilities of Suno.ai into their own applications. The API provides a simple and convenient way to generate music, lyrics, and other audio content using Suno.ai's powerful AI models. With Suno AI API, developers can easily add music generation functionality to their apps, websites, and other projects.

client-python

The Mistral Python Client is a tool inspired by cohere-python that allows users to interact with the Mistral AI API. It provides functionalities to access and utilize the AI capabilities offered by Mistral. Users can easily install the client using pip and manage dependencies using poetry. The client includes examples demonstrating how to use the API for various tasks, such as chat interactions. To get started, users need to obtain a Mistral API Key and set it as an environment variable. Overall, the Mistral Python Client simplifies the integration of Mistral AI services into Python applications.

raglite

RAGLite is a Python toolkit for Retrieval-Augmented Generation (RAG) with PostgreSQL or SQLite. It offers configurable options for choosing LLM providers, database types, and rerankers. The toolkit is fast and permissive, utilizing lightweight dependencies and hardware acceleration. RAGLite provides features like PDF to Markdown conversion, multi-vector chunk embedding, optimal semantic chunking, hybrid search capabilities, adaptive retrieval, and improved output quality. It is extensible with a built-in Model Context Protocol server, customizable ChatGPT-like frontend, document conversion to Markdown, and evaluation tools. Users can configure RAGLite for various tasks like configuring, inserting documents, running RAG pipelines, computing query adapters, evaluating performance, running MCP servers, and serving frontends.

openai

An open-source client package that allows developers to easily integrate the power of OpenAI's state-of-the-art AI models into their Dart/Flutter applications. The library provides simple and intuitive methods for making requests to OpenAI's various APIs, including the GPT-3 language model, DALL-E image generation, and more. It is designed to be lightweight and easy to use, enabling developers to focus on building their applications without worrying about the complexities of dealing with HTTP requests. Note that this is an unofficial library as OpenAI does not have an official Dart library.

openedai-speech

OpenedAI Speech is a free, private text-to-speech server compatible with the OpenAI audio/speech API. It offers custom voice cloning and supports various models like tts-1 and tts-1-hd. Users can map their own piper voices and create custom cloned voices. The server provides multilingual support with XTTS voices and allows fixing incorrect sounds with regex. Recent changes include bug fixes, improved error handling, and updates for multilingual support. Installation can be done via Docker or manual setup, with usage instructions provided. Custom voices can be created using Piper or Coqui XTTS v2, with guidelines for preparing audio files. The tool is suitable for tasks like generating speech from text, creating custom voices, and multilingual text-to-speech applications.

react-native-fast-tflite

A high-performance TensorFlow Lite library for React Native that utilizes JSI for power, zero-copy ArrayBuffers for efficiency, and low-level C/C++ TensorFlow Lite core API for direct memory access. It supports swapping out TensorFlow Models at runtime and GPU-accelerated delegates like CoreML/Metal/OpenGL. Easy VisionCamera integration allows for seamless usage. Users can load TensorFlow Lite models, interpret input and output data, and utilize GPU Delegates for faster computation. The library is suitable for real-time object detection, image classification, and other machine learning tasks in React Native applications.

litserve

LitServe is a high-throughput serving engine for deploying AI models at scale. It generates an API endpoint for a model, handles batching, streaming, autoscaling across CPU/GPUs, and more. Built for enterprise scale, it supports every framework like PyTorch, JAX, Tensorflow, and more. LitServe is designed to let users focus on model performance, not the serving boilerplate. It is like PyTorch Lightning for model serving but with broader framework support and scalability.

aiodynamo

AsyncIO DynamoDB is an asynchronous pythonic client for DynamoDB, designed for asynchronous apps. It is two times faster than aiobotocore, botocore, or boto3 for operations like query or scan. The library provides a pythonic API with modern Python features, automatically depaginates paginated APIs using asynchronous iterators. The source code is legible and hand-written, allowing for easy inspection and understanding. It offers a pluggable HTTP client, enabling integration with existing asynchronous HTTP clients without additional dependencies or dependency resolution issues.

For similar jobs

resonance

Resonance is a framework designed to facilitate interoperability and messaging between services in your infrastructure and beyond. It provides AI capabilities and takes full advantage of asynchronous PHP, built on top of Swoole. With Resonance, you can:

- Chat with Open-Source LLMs: Create prompt controllers to directly answer user's prompts. LLM takes care of determining user's intention, so you can focus on taking appropriate action.
- Asynchronous Where it Matters: Respond asynchronously to incoming RPC or WebSocket messages (or both combined) with little overhead. You can set up all the asynchronous features using attributes. No elaborate configuration is needed.
- Simple Things Remain Simple: Writing HTTP controllers is similar to how it's done in the synchronous code. Controllers have new exciting features that take advantage of the asynchronous environment.
- Consistency is Key: You can keep the same approach to writing software no matter the size of your project. There are no growing central configuration files or service dependencies registries. Every relation between code modules is local to those modules.
- Promises in PHP: Resonance provides a partial implementation of Promise/A+ spec to handle various asynchronous tasks.
- GraphQL Out of the Box: You can build elaborate GraphQL schemas by using just the PHP attributes. Resonance takes care of reusing SQL queries and optimizing the resources' usage. All fields can be resolved asynchronously.

aiogram_bot_template

Aiogram bot template is a boilerplate for creating Telegram bots using Aiogram framework. It provides a solid foundation for building robust and scalable bots with a focus on code organization, database integration, and localization.

pinecone-ts-client

The official Node.js client for Pinecone, written in TypeScript. This client library provides a high-level interface for interacting with the Pinecone vector database service. With this client, you can create and manage indexes, upsert and query vector data, and perform other operations related to vector search and retrieval. The client is designed to be easy to use and provides a consistent and idiomatic experience for Node.js developers. It supports all the features and functionality of the Pinecone API, making it a comprehensive solution for building vector-powered applications in Node.js.

ai-chatbot

Next.js AI Chatbot is an open-source app template for building AI chatbots using Next.js, Vercel AI SDK, OpenAI, and Vercel KV. It includes features like Next.js App Router, React Server Components, Vercel AI SDK for streaming chat UI, support for various AI models, Tailwind CSS styling, Radix UI for headless components, chat history management, rate limiting, session storage with Vercel KV, and authentication with NextAuth.js. The template allows easy deployment to Vercel and customization of AI model providers.

freeciv-web

Freeciv-web is an open-source turn-based strategy game that can be played in any HTML5 capable web-browser. It features in-depth gameplay, a wide variety of game modes and options. Players aim to build cities, collect resources, organize their government, and build an army to create the best civilization. The game offers both multiplayer and single-player modes, with a 2D version with isometric graphics and a 3D WebGL version available. The project consists of components like Freeciv-web, Freeciv C server, Freeciv-proxy, Publite2, and pbem for play-by-email support. Developers interested in contributing can check the GitHub issues and TODO file for tasks to work on.

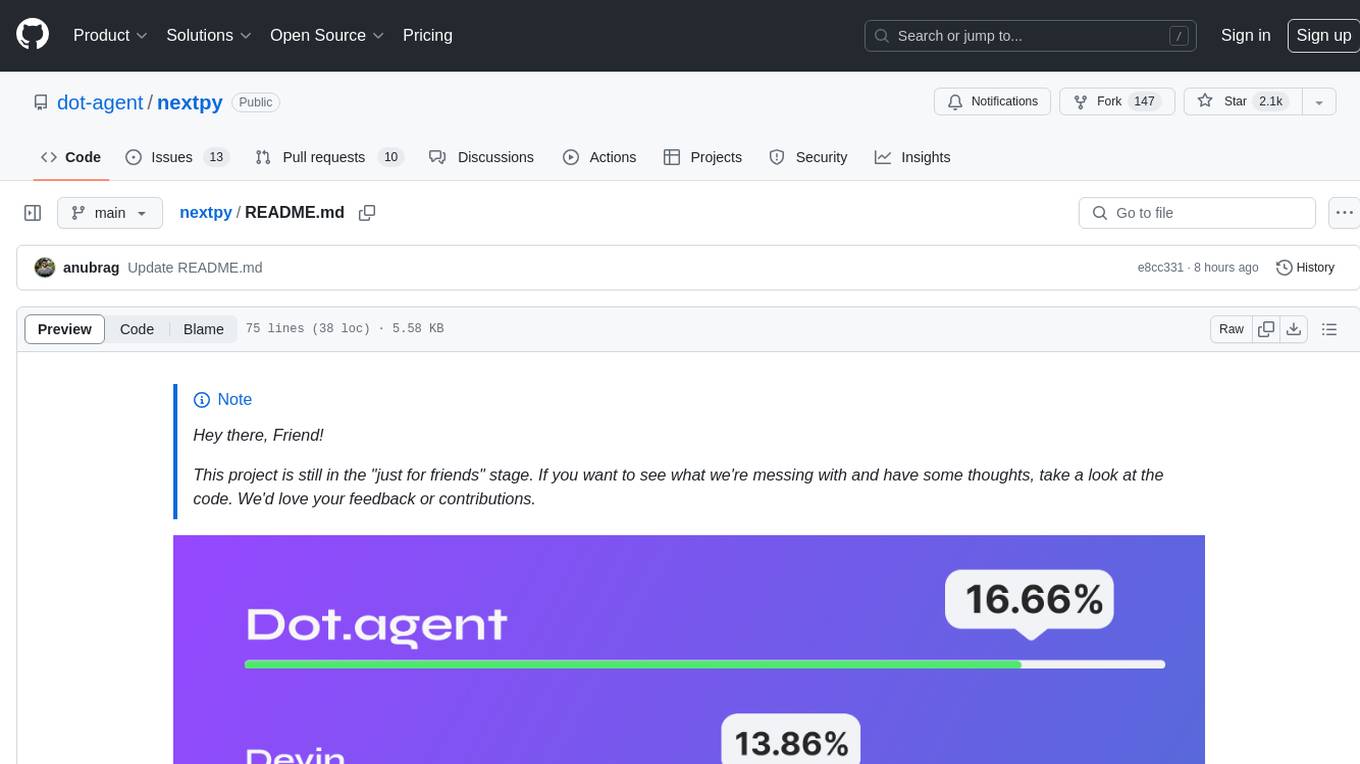

nextpy

Nextpy is a cutting-edge software development framework optimized for AI-based code generation. It provides guardrails for defining AI system boundaries, structured outputs for prompt engineering, a powerful prompt engine for efficient processing, better AI generations with precise output control, modularity for multiplatform and extensible usage, developer-first approach for transferable knowledge, and containerized & scalable deployment options. It offers 4-10x faster performance compared to Streamlit apps, with a focus on cooperation within the open-source community and integration of key components from various projects.

airbadge

Airbadge is a Stripe addon for Auth.js that provides an easy way to create a SaaS site without writing any authentication or payment code. It integrates Stripe Checkout into the signup flow, offers over 50 OAuth options for authentication, allows route and UI restriction based on subscription, enables self-service account management, handles all Stripe webhooks, supports trials and free plans, includes subscription and plan data in the session, and is open source with a BSL license. The project also provides components for conditional UI display based on subscription status and helper functions to restrict route access. Additionally, it offers a billing endpoint with various routes for billing operations. Setup involves installing @airbadge/sveltekit, setting up a database provider for Auth.js, adding environment variables, configuring authentication and billing options, and forwarding Stripe events to localhost.

ChaKt-KMP

ChaKt is a multiplatform app built using Kotlin and Compose Multiplatform to demonstrate the use of Generative AI SDK for Kotlin Multiplatform to generate content using Google's Generative AI models. It features a simple chat based user interface and experience to interact with AI. The app supports mobile, desktop, and web platforms, and is built with Kotlin Multiplatform, Kotlin Coroutines, Compose Multiplatform, Generative AI SDK, Calf - File picker, and BuildKonfig. Users can contribute to the project by following the guidelines in CONTRIBUTING.md. The app is licensed under the MIT License.