mdex

Markdown for Elixir. Fast, Extensible, Phoenix-native. AI-ready. Built on top of comrak, ammonia, and lumis.

Stars: 372

MDEx is a fast and extensible Markdown library for Elixir that is compliant with the CommonMark spec. It offers various formats such as Markdown, HTML, Phoenix HEEx, JSON, XML, Quill Delta, and more. The tool provides features like plugins for GitHub Flavored Markdown, code block decorators, HTML sanitization, emoji shortcodes, and syntax highlighting. It is built on top of comrak, ammonia, and lumis, and is used by various projects like BeaconCMS, Tableau, and Bonfire. MDEx was developed to parse CommonMark files quickly and be easily extensible by consumers of the library.

README:

- Fast

- Compliant with the CommonMark spec

- Plugins

- Formats:
  - Markdown (CommonMark)
  - HTML
  - Phoenix HEEx
  - JSON
  - XML
  - Quill Delta

- Floki-like Document AST

- Req-like Document pipeline API

- GitHub Flavored Markdown

- GitLab Flavored Markdown

- Discord Flavored Markdown (Partial)

- Wiki-style links

- Phoenix HEEx components and expressions

- Streaming incomplete fragments

- Emoji shortcodes

- Built-in Syntax Highlighting for code blocks

- Code Block Decorators

- HTML sanitization

- ~MD Sigil for Markdown, HTML, HEEx, JSON, XML, and Quill Delta

Plugins:

- mdex_gfm - Enable GitHub Flavored Markdown (GFM)

- mdex_mermaid - Render Mermaid diagrams in code blocks

- mdex_katex - Render math formulas using KaTeX

- mdex_video_embed - Privacy-respecting video embeds from code blocks

- mdex_custom_heading_id - Custom heading IDs for markdown headings

Add :mdex dependency:

    def deps do
      [
        {:mdex, "~> 0.11"}
      ]
    end

Or use Igniter:

    mix igniter.install mdex

    iex> MDEx.to_html!("# Hello :smile:", extension: [shortcodes: true])
    "<h1>Hello 😄</h1>"

    iex> MDEx.new(markdown: "# Hello :smile:", extension: [shortcodes: true]) |> MDEx.to_html!()
    "<h1>Hello 😄</h1>"

    iex> import MDEx.Sigil
    iex> ~MD[# Hello :smile:]HTML
    "<h1>Hello 😄</h1>"

    iex> import MDEx.Sigil
    iex> assigns = %{project: "MDEx"}
    iex> ~MD[# {@project}]HEEX
    %Phoenix.LiveView.Rendered{...}

    iex> import MDEx.Sigil
    iex> ~MD[# Hello :smile:]
    #MDEx.Document(3 nodes)<
    ├── 1 [heading] level: 1, setext: false
    │   ├── 2 [text] literal: "Hello "
    │   └── 3 [short_code] code: "smile", emoji: "😄"
    >

    iex> MDEx.new(streaming: true)
    ...> |> MDEx.Document.put_markdown("**Install")
    ...> |> MDEx.to_html!()
    "<p><strong>Install</strong></p>"

Livebook examples are available at Pages / Examples.
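The streaming example above renders a single incomplete fragment. A minimal sketch of feeding several fragments in sequence might look like the following. It uses only the calls shown above (`MDEx.new/1`, `MDEx.Document.put_markdown/2`, `MDEx.to_html!/1`); the `chunks` list is hypothetical, and the sketch assumes that successive `put_markdown/2` calls accumulate in a streaming document, which should be checked against the MDEx docs.

```elixir
# Assumes network access so Mix.install can fetch MDEx.
Mix.install([{:mdex, "~> 0.11"}])

# Hypothetical fragments, e.g. tokens arriving from a chat response.
chunks = ["# Inst", "all\n\n", "Run **mix ", "deps.get"]

# Start from an empty streaming document and feed one chunk at a time.
# Assumption: each put_markdown/2 call adds to what was fed before.
document =
  Enum.reduce(chunks, MDEx.new(streaming: true), fn chunk, doc ->
    doc = MDEx.Document.put_markdown(doc, chunk)
    # Render the partial document after every chunk.
    IO.puts(MDEx.to_html!(doc))
    doc
  end)

# Final render once all chunks have been fed.
html = MDEx.to_html!(document)
IO.puts(html)
```

Rendering after each chunk is what a live UI would do; a batch job would only need the final `MDEx.to_html!/1` call.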

The library is built on top of:

- comrak - a fast Rust port of GitHub's CommonMark parser

- ammonia for HTML Sanitization

- lumis for Syntax Highlighting

Projects using MDEx:

- BeaconCMS

- Tableau

- Bonfire

- 00

- Plural Console

- Exmeralda

- Algora

- Ash AI

- Canada Navigator

- Jido

- TermUI

- BeamLens Web

- Conpipe

- ExPress

- Prosody

- Sagents Live Debugger

- Sayfa

- And more...

Are you using MDEx and want to list your project here? Please send a PR!

💜 Support MDEx Development

If you or your company find MDEx useful, please consider sponsoring its development.

Your support helps maintain and improve MDEx for the entire Elixir community!


MDEx was born out of the need to parse CommonMark files, to process hundreds of files quickly, and to be easily extensible by consumers of the library.

- earmark is extensible but can't parse all kinds of documents and is slow to convert hundreds of Markdown files.

- md is very extensible but the doc says "If one needs to perfectly parse the common markdown, Md is probably not the correct choice" and CommonMark was a requirement to parse many existing files.

- markdown is not precompiled and has not received updates in a while.

- cmark is a fast CommonMark parser but it requires compiling the C library, is hard to extend, and was archived in April 2024.

| Feature | MDEx | Earmark | md | cmark |
|---|---|---|---|---|
| Active | ✅ | ✅ | ✅ | ❌ |
| Pure Elixir | ❌ | ✅ | ✅ | ❌ |
| Extensible | ✅ | ✅ | ✅ | ❌ |
| Syntax Highlighting | ✅ | ❌ | ❌ | ❌ |
| Code Block Decorators | ✅ | ❌ | ❌ | ❌ |
| Streaming (fragments) | ✅ | ❌ | ❌ | ❌ |
| Phoenix HEEx components | ✅ | ❌ | ❌ | ❌ |
| AST | ✅ | ✅ | ✅ | ❌ |
| AST to Markdown | ✅ | Possible² | ❌ | ❌ |
| To HTML | ✅ | ✅ | ✅ | ✅ |
| To JSON | ✅ | ❌ | ❌ | ❌ |
| To XML | ✅ | ❌ | ❌ | ✅ |
| To Manpage | ❌ | ❌ | ❌ | ✅ |
| To LaTeX | ❌ | ❌ | ❌ | ✅ |
| To Quill Delta | ✅ | ❌ | ❌ | ❌ |
| Emoji | ✅ | ❌ | ❌ | ❌ |
| GFM³ | ✅ | ✅ | ❌ | ❌ |
| GLFM⁴ | ✅ | ❌ | ❌ | ❌ |
| Discord⁵ | Partial¹ | ❌ | ❌ | ❌ |

1. Partial support
2. Possible with earmark_reversal
3. GitHub Flavored Markdown
4. GitLab Flavored Markdown
5. Discord Flavored Markdown

A benchmark is available to compare existing libs:

    Name              ips        average  deviation         median         99th %
    mdex          8983.16       0.111 ms     ±6.52%       0.110 ms       0.144 ms
    md             461.00        2.17 ms     ±2.64%        2.16 ms        2.35 ms
    earmark        110.47        9.05 ms     ±3.17%        9.02 ms       10.01 ms

    Comparison:
    mdex          8983.16
    md             461.00 - 19.49x slower +2.06 ms
    earmark        110.47 - 81.32x slower +8.94 ms

    Memory usage statistics:

    Name            average  deviation         median         99th %
    mdex         0.00184 MB     ±0.00%     0.00184 MB     0.00184 MB
    md              6.45 MB     ±0.00%        6.45 MB        6.45 MB
    earmark         5.09 MB     ±0.00%        5.09 MB        5.09 MB

    Comparison:
    mdex         0.00184 MB
    md              6.45 MB - 3506.37x memory usage +6.45 MB
    earmark         5.09 MB - 2770.15x memory usage +5.09 MB

The biggest performance gain comes from using the ~MD sigil, which compiles the Markdown ahead of time instead of parsing it at runtime. Prefer it when possible.
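As a rough illustration of that advice, the sketch below puts the two approaches side by side in one module. The module name `Greeting` and its function names are hypothetical; the `~MD[...]HTML` sigil and `MDEx.to_html!/2` call are the ones shown in the usage examples. It assumes the Markdown is static and known when the module is compiled.

```elixir
# Assumes network access so Mix.install can fetch MDEx.
Mix.install([{:mdex, "~> 0.11"}])

defmodule Greeting do
  import MDEx.Sigil

  # The sigil is a macro, so this Markdown is handled while the module compiles.
  def compiled, do: ~MD[# Hello :smile:]HTML

  # This call parses and renders the Markdown every time it runs.
  def runtime, do: MDEx.to_html!("# Hello :smile:", extension: [shortcodes: true])
end

IO.puts(Greeting.compiled())
IO.puts(Greeting.runtime())
```

Markdown that is only known at runtime, such as user input or files read from disk, still has to go through `MDEx.to_html!/2` or a similar call.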

To finish, a friendly reminder that all libs have their own strengths and trade-offs, so use the one that best suits your needs.

Acknowledgements:

- comrak crate for all the heavy work on parsing Markdown and rendering HTML

- Floki for the AST

- Req for the pipeline API

- Logo based on markdown-mark

For Tasks:

Click tags to check more tools for each tasksFor Jobs:

Alternative AI tools for mdex

Similar Open Source Tools

mdex

MDEx is a fast and extensible Markdown library for Elixir that is compliant with the CommonMark spec. It offers various formats such as Markdown, HTML, Phoenix HEEx, JSON, XML, Quill Delta, and more. The tool provides features like plugins for GitHub Flavored Markdown, code block decorators, HTML sanitization, emoji shortcodes, and syntax highlighting. It is built on top of comrak, ammonia, and lumis, and is used by various projects like BeaconCMS, Tableau, and Bonfire. MDEx was developed to parse CommonMark files quickly and be easily extensible by consumers of the library.

langtrace

Langtrace is an open source observability software that lets you capture, debug, and analyze traces and metrics from all your applications that leverage LLM APIs, Vector Databases, and LLM-based Frameworks. It supports Open Telemetry Standards (OTEL), and the traces generated adhere to these standards. Langtrace offers both a managed SaaS version (Langtrace Cloud) and a self-hosted option. The SDKs for both Typescript/Javascript and Python are available, making it easy to integrate Langtrace into your applications. Langtrace automatically captures traces from various vendors, including OpenAI, Anthropic, Azure OpenAI, Langchain, LlamaIndex, Pinecone, and ChromaDB.

auto-dev

AutoDev is an AI-powered coding wizard that supports multiple languages, including Java, Kotlin, JavaScript/TypeScript, Rust, Python, Golang, C/C++/OC, and more. It offers a range of features, including auto development mode, copilot mode, chat with AI, customization options, SDLC support, custom AI agent integration, and language features such as language support, extensions, and a DevIns language for AI agent development. AutoDev is designed to assist developers with tasks such as auto code generation, bug detection, code explanation, exception tracing, commit message generation, code review content generation, smart refactoring, Dockerfile generation, CI/CD config file generation, and custom shell/command generation. It also provides a built-in LLM fine-tune model and supports UnitEval for LLM result evaluation and UnitGen for code-LLM fine-tune data generation.

terminator

Terminator is an AI-powered desktop automation tool that is open source, MIT-licensed, and cross-platform. It works across all apps and browsers, inspired by GitHub Actions & Playwright. It is 100x faster than generic AI agents, with over 95% success rate and no vendor lock-in. Users can create automations that work across any desktop app or browser, achieve high success rates without costly consultant armies, and pre-train workflows as deterministic code.

dora

Dataflow-oriented robotic application (dora-rs) is a framework that makes creation of robotic applications fast and simple. Building a robotic application can be summed up as bringing together hardwares, algorithms, and AI models, and make them communicate with each others. At dora-rs, we try to: make integration of hardware and software easy by supporting Python, C, C++, and also ROS2. make communication low latency by using zero-copy Arrow messages. dora-rs is still experimental and you might experience bugs, but we're working very hard to make it stable as possible.

skylos

Skylos is a privacy-first SAST tool for Python, TypeScript, and Go that bridges the gap between traditional static analysis and AI agents. It detects dead code, security vulnerabilities (SQLi, SSRF, Secrets), and code quality issues with high precision. Skylos uses a hybrid engine (AST + optional Local/Cloud LLM) to eliminate false positives, verify via runtime, find logic bugs, and provide context-aware audits. It offers automated fixes, end-to-end remediation, and 100% local privacy. The tool supports taint analysis, secrets detection, vulnerability checks, dead code detection and cleanup, agentic AI and hybrid analysis, codebase optimization, operational governance, and runtime verification.

flyto-core

Flyto-core is a powerful Python library for geospatial analysis and visualization. It provides a wide range of tools for working with geographic data, including support for various file formats, spatial operations, and interactive mapping. With Flyto-core, users can easily load, manipulate, and visualize spatial data to gain insights and make informed decisions. Whether you are a GIS professional, a data scientist, or a developer, Flyto-core offers a versatile and user-friendly solution for geospatial tasks.

EasyEdit

EasyEdit is a Python package for edit Large Language Models (LLM) like `GPT-J`, `Llama`, `GPT-NEO`, `GPT2`, `T5`(support models from **1B** to **65B**), the objective of which is to alter the behavior of LLMs efficiently within a specific domain without negatively impacting performance across other inputs. It is designed to be easy to use and easy to extend.

pdf_oxide

PDF Oxide is a fast PDF library for Python and Rust that offers text extraction, image extraction, and markdown conversion. It is built on a Rust core, providing high performance with a mean processing time of 0.8ms per document. The library is 5 times faster than PyMuPDF and 15 times faster than pypdf, with a 100% pass rate on 3,830 real-world PDFs. It supports text extraction, image extraction, PDF creation, and editing, and offers a dual-language API with Python bindings. PDF Oxide is licensed under MIT and Apache-2.0, allowing free usage in both commercial and open-source projects.

LitServe

LitServe is a high-throughput serving engine designed for deploying AI models at scale. It generates an API endpoint for models, handles batching, streaming, and autoscaling across CPU/GPUs. LitServe is built for enterprise scale with a focus on minimal, hackable code-base without bloat. It supports various model types like LLMs, vision, time-series, and works with frameworks like PyTorch, JAX, Tensorflow, and more. The tool allows users to focus on model performance rather than serving boilerplate, providing full control and flexibility.

gateway

Gateway is a tool that streamlines requests to 100+ open & closed source models with a unified API. It is production-ready with support for caching, fallbacks, retries, timeouts, load balancing, and can be edge-deployed for minimum latency. It is blazing fast with a tiny footprint, supports load balancing across multiple models, providers, and keys, ensures app resilience with fallbacks, offers automatic retries with exponential fallbacks, allows configurable request timeouts, supports multimodal routing, and can be extended with plug-in middleware. It is battle-tested over 300B tokens and enterprise-ready for enhanced security, scale, and custom deployments.

codemogger

Code indexing library for AI coding agents. Parses source code with tree-sitter, chunks it into semantic units (functions, structs, classes, impl blocks), embeds them locally, and stores everything in a single SQLite file with vector + full-text search. No Docker, no server, no API keys. One .db file per codebase. Enables keyword search and semantic search for AI coding tools, facilitating precise identifier lookup and natural language queries. Suitable for understanding codebases, discovering implementations, and navigating unfamiliar code quickly. Can be used as a library or CLI tool with incremental indexing and high search quality.

MooER

MooER (摩耳) is an LLM-based speech recognition and translation model developed by Moore Threads. It allows users to transcribe speech into text (ASR) and translate speech into other languages (AST) in an end-to-end manner. The model was trained using 5K hours of data and is now also available with an 80K hours version. MooER is the first LLM-based speech model trained and inferred using domestic GPUs. The repository includes pretrained models, inference code, and a Gradio demo for a better user experience.

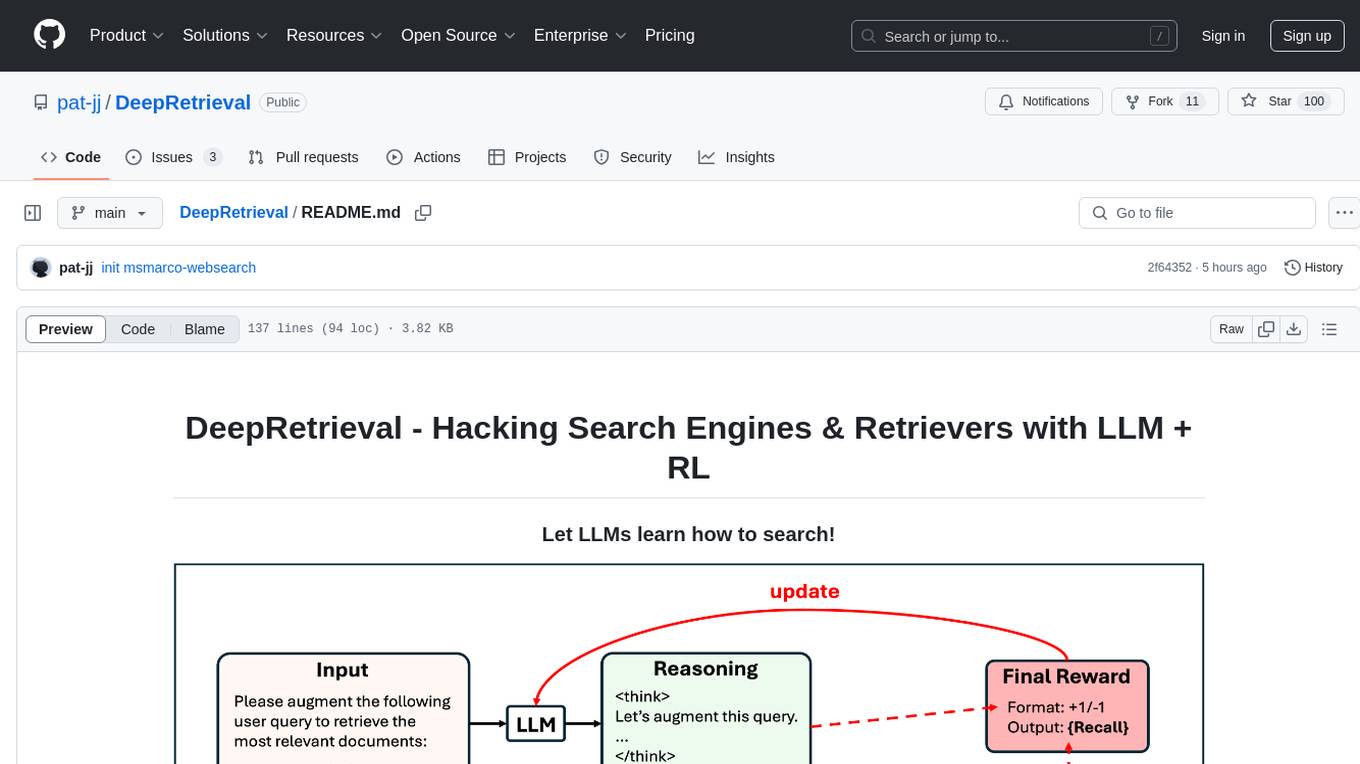

DeepRetrieval

DeepRetrieval is a tool designed to enhance search engines and retrievers using Large Language Models (LLMs) and Reinforcement Learning (RL). It allows LLMs to learn how to search effectively by integrating with search engine APIs and customizing reward functions. The tool provides functionalities for data preparation, training, evaluation, and monitoring search performance. DeepRetrieval aims to improve information retrieval tasks by leveraging advanced AI techniques.

FalkorDB

FalkorDB is the first queryable Property Graph database to use sparse matrices to represent the adjacency matrix in graphs and linear algebra to query the graph. Primary features: * Adopting the Property Graph Model * Nodes (vertices) and Relationships (edges) that may have attributes * Nodes can have multiple labels * Relationships have a relationship type * Graphs represented as sparse adjacency matrices * OpenCypher with proprietary extensions as a query language * Queries are translated into linear algebra expressions

eko

Eko is a lightweight and flexible command-line tool for managing environment variables in your projects. It allows you to easily set, get, and delete environment variables for different environments, making it simple to manage configurations across development, staging, and production environments. With Eko, you can streamline your workflow and ensure consistency in your application settings without the need for complex setup or configuration files.

For similar tasks

mdex

MDEx is a fast and extensible Markdown library for Elixir that is compliant with the CommonMark spec. It offers various formats such as Markdown, HTML, Phoenix HEEx, JSON, XML, Quill Delta, and more. The tool provides features like plugins for GitHub Flavored Markdown, code block decorators, HTML sanitization, emoji shortcodes, and syntax highlighting. It is built on top of comrak, ammonia, and lumis, and is used by various projects like BeaconCMS, Tableau, and Bonfire. MDEx was developed to parse CommonMark files quickly and be easily extensible by consumers of the library.

For similar jobs

LLMStack

LLMStack is a no-code platform for building generative AI agents, workflows, and chatbots. It allows users to connect their own data, internal tools, and GPT-powered models without any coding experience. LLMStack can be deployed to the cloud or on-premise and can be accessed via HTTP API or triggered from Slack or Discord.

daily-poetry-image

Daily Chinese ancient poetry and AI-generated images powered by Bing DALL-E-3. GitHub Action triggers the process automatically. Poetry is provided by Today's Poem API. The website is built with Astro.

exif-photo-blog

EXIF Photo Blog is a full-stack photo blog application built with Next.js, Vercel, and Postgres. It features built-in authentication, photo upload with EXIF extraction, photo organization by tag, infinite scroll, light/dark mode, automatic OG image generation, a CMD-K menu with photo search, experimental support for AI-generated descriptions, and support for Fujifilm simulations. The application is easy to deploy to Vercel with just a few clicks and can be customized with a variety of environment variables.

SillyTavern

SillyTavern is a user interface you can install on your computer (and Android phones) that allows you to interact with text generation AIs and chat/roleplay with characters you or the community create. SillyTavern is a fork of TavernAI 1.2.8 which is under more active development and has added many major features. At this point, they can be thought of as completely independent programs.

Twitter-Insight-LLM

This project enables you to fetch liked tweets from Twitter (using Selenium), save it to JSON and Excel files, and perform initial data analysis and image captions. This is part of the initial steps for a larger personal project involving Large Language Models (LLMs).

AISuperDomain

Aila Desktop Application is a powerful tool that integrates multiple leading AI models into a single desktop application. It allows users to interact with various AI models simultaneously, providing diverse responses and insights to their inquiries. With its user-friendly interface and customizable features, Aila empowers users to engage with AI seamlessly and efficiently. Whether you're a researcher, student, or professional, Aila can enhance your AI interactions and streamline your workflow.

ChatGPT-On-CS

This project is an intelligent dialogue customer service tool based on a large model, which supports access to platforms such as WeChat, Qianniu, Bilibili, Douyin Enterprise, Douyin, Doudian, Weibo chat, Xiaohongshu professional account operation, Xiaohongshu, Zhihu, etc. You can choose GPT3.5/GPT4.0/ Lazy Treasure Box (more platforms will be supported in the future), which can process text, voice and pictures, and access external resources such as operating systems and the Internet through plug-ins, and support enterprise AI applications customized based on their own knowledge base.

obs-localvocal

LocalVocal is a live-streaming AI assistant plugin for OBS that allows you to transcribe audio speech into text and perform various language processing functions on the text using AI / LLMs (Large Language Models). It's privacy-first, with all data staying on your machine, and requires no GPU, cloud costs, network, or downtime.