golf

Production-Ready MCP Server Framework • Build, deploy & scale secure AI agent infrastructure • Includes Auth, Observability, Debugger, Telemetry & Runtime • Run real-world MCPs powering AI Agents

Stars: 776

Golf is a framework for building MCP (Model Context Protocol) servers. Developers define a server's tools, prompts, and resources as plain Python files in a conventional directory structure, and Golf discovers, parses, and compiles them into a runnable MCP server. Version 0.2.0 adds authentication (JWT, OAuth Server, API key, development tokens), built-in utilities for LLM interactions, and automatic telemetry integration.

README:

Golf is a framework designed to streamline the creation of MCP server applications. It allows developers to define a server's capabilities (tools, prompts, and resources) as simple Python files within a conventional directory structure. Golf then automatically discovers, parses, and compiles these components into a runnable MCP server, minimizing boilerplate and accelerating development.

With Golf v0.2.0, you get enterprise-grade authentication (JWT, OAuth Server, API key, development tokens), built-in utilities for LLM interactions, and automatic telemetry integration. Focus on implementing your agent's logic while Golf handles authentication, monitoring, and server infrastructure.

Get your Golf project up and running in a few simple steps:

Ensure you have Python (3.10+ recommended) installed. Then, install Golf using pip:

pip install golf-mcp

Use the Golf CLI to scaffold a new project:

golf init your-project-name

This command creates a new directory (your-project-name) with a basic project structure, including example tools, resources, and a golf.json configuration file.

Navigate into your new project directory and start the development server:

cd your-project-name

golf build dev

golf run

This will start the MCP server, typically on http://localhost:3000 (configurable in golf.json).

That's it! Your Golf server is running and ready for integration.

A Golf project initialized with golf init will have a structure similar to this:

<your-project-name>/
│
├─ golf.json          # Main project configuration
│
├─ tools/             # Directory for tool implementations
│   └─ hello.py       # Example tool
│
├─ resources/         # Directory for resource implementations
│   └─ info.py        # Example resource
│
├─ prompts/           # Directory for prompt templates
│   └─ welcome.py     # Example prompt
│
├─ .env               # Environment variables (e.g., API keys, server port)
└─ auth.py            # Authentication configuration (JWT, OAuth Server, API key, dev tokens)

- golf.json: Configures server name, port, transport, telemetry, and other build settings.

- auth.py: Dedicated authentication configuration file (new in v0.2.0, a breaking change from the v0.1.x authentication API) for JWT, OAuth Server, API key, or development authentication.

- tools/, resources/, prompts/: Contain your Python files, each defining a single component. These directories can also contain nested subdirectories to further organize your components (e.g., tools/payments/charge.py). The module docstring of each file serves as the component's description.

  - Component IDs are automatically derived from their file path. For example, tools/hello.py becomes hello, and a nested file like tools/payments/submit.py would become submit_payments (filename, followed by reversed parent directories under the main category, joined by underscores).
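The ID rule described above (filename first, then the parent directories in reverse order, joined by underscores) can be sketched in a few lines. This is an illustration of the stated rule only, not Golf's actual implementation; the function name component_id and the CATEGORIES set are hypothetical names introduced for this example.

```python
from pathlib import PurePosixPath

# Top-level category directories named in the project layout.
CATEGORIES = {"tools", "resources", "prompts"}


def component_id(path: str) -> str:
    """Derive an ID as: filename, then reversed parent dirs, joined by '_'.

    Hypothetical helper mirroring the rule stated in the README.
    """
    parts = PurePosixPath(path).with_suffix("").parts
    if len(parts) < 2 or parts[0] not in CATEGORIES:
        raise ValueError(f"expected a path under tools/, resources/ or prompts/: {path}")
    *parents, name = parts[1:]  # drop the category directory itself
    return "_".join([name, *reversed(parents)])


print(component_id("tools/hello.py"))            # hello
print(component_id("tools/payments/submit.py"))  # submit_payments
```

Both printed values match the examples given in the README text. Deeper nesting follows the same pattern, so a file at tools/a/b/c.py would map to c_b_a under this sketch.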

Creating a new tool is as simple as adding a Python file to the tools/ directory. The example tools/hello.py in the boilerplate looks like this:

# tools/hello.py
"""Hello World tool {{project_name}}."""

from typing import Annotated

from pydantic import BaseModel, Field


class Output(BaseModel):
    """Response from the hello tool."""

    message: str


async def hello(
    name: Annotated[str, Field(description="The name of the person to greet")] = "World",
    greeting: Annotated[str, Field(description="The greeting phrase to use")] = "Hello"
) -> Output:
    """Say hello to the given name.

    This is a simple example tool that demonstrates the basic structure
    of a tool implementation in Golf.
    """
    print(f"{greeting} {name}...")
    return Output(message=f"{greeting}, {name}!")


# Designate the entry point function
export = hello

Golf will automatically discover this file. The module docstring """Hello World tool {{project_name}}.""" is used as the tool's description. It infers parameters from the hello function's signature and uses the Output Pydantic model for the output schema. The tool will be registered with the ID hello.
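To make "infers parameters from the function's signature" more concrete, here is a minimal standard-library sketch of how parameter names, types, defaults, and descriptions can be read from an annotated function. It is not Golf's own discovery code. To stay dependency-free it uses a hypothetical Desc dataclass in place of pydantic's Field, and describe_parameters is likewise a name made up for this example.

```python
import inspect
from dataclasses import dataclass
from typing import Annotated, get_args, get_origin, get_type_hints


@dataclass(frozen=True)
class Desc:
    """Hypothetical stand-in for pydantic's Field(description=...)."""

    description: str


async def hello(
    name: Annotated[str, Desc("The name of the person to greet")] = "World",
    greeting: Annotated[str, Desc("The greeting phrase to use")] = "Hello",
) -> str:
    return f"{greeting}, {name}!"


def describe_parameters(func) -> dict:
    """Collect type name, default, and description for each parameter."""
    hints = get_type_hints(func, include_extras=True)
    result = {}
    for pname, param in inspect.signature(func).parameters.items():
        hint = hints.get(pname)
        base, description = hint, None
        if get_origin(hint) is Annotated:
            # Annotated[T, meta...] unpacks to (T, meta...).
            base, *metadata = get_args(hint)
            description = next(
                (m.description for m in metadata if isinstance(m, Desc)), None
            )
        has_default = param.default is not inspect.Parameter.empty
        result[pname] = {
            "type": getattr(base, "__name__", str(base)),
            "default": param.default if has_default else None,
            "description": description,
        }
    return result


params = describe_parameters(hello)
print(params["name"])
```

The function is never awaited here; only its signature is inspected, which is why the sketch works on an async function without an event loop.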

Golf includes enterprise-grade authentication, built-in utilities, and automatic telemetry:

# auth.py - Configure authentication
from golf.auth import configure_auth, JWTAuthConfig, StaticTokenConfig, OAuthServerConfig

# JWT authentication (production)
configure_auth(JWTAuthConfig(
    jwks_uri_env_var="JWKS_URI",
    issuer_env_var="JWT_ISSUER",
    audience_env_var="JWT_AUDIENCE",
    required_scopes=["read", "write"]
))

# OAuth Server mode (Golf acts as OAuth 2.0 server)
# configure_auth(OAuthServerConfig(
#     base_url="https://your-golf-server.com",
#     valid_scopes=["read", "write", "admin"]
# ))

# Static tokens (development only)
# configure_auth(StaticTokenConfig(
#     tokens={"dev-token": {"client_id": "dev", "scopes": ["read"]}}
# ))

# Built-in utilities available in all tools
from golf.utils import elicit, sample, get_context

# Automatic telemetry with Golf Platform
export GOLF_API_KEY="your-key"
golf run # ✅ Telemetry enabled automatically

Basic configuration in golf.json:

{
  "name": "My Golf Server",
  "host": "localhost",
  "port": 3000,
  "transport": "sse",
  "opentelemetry_enabled": false,
  "detailed_tracing": false
}

- transport: Choose "sse", "streamable-http", or "stdio"

- opentelemetry_enabled: Auto-enabled with GOLF_API_KEY

- detailed_tracing: Capture input/output (use carefully with sensitive data)
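As a rough illustration of the configuration options above, the following sketch parses a golf.json document and checks the transport value against the three choices listed. It is a stand-alone pre-flight check and an assumption about how one might validate the file, not part of Golf itself. The load_config helper and the rule that transport and port must be present are choices made for this example.

```python
import json

# The three transport values listed in the README.
ALLOWED_TRANSPORTS = {"sse", "streamable-http", "stdio"}


def load_config(text: str) -> dict:
    """Parse golf.json content and run two basic sanity checks.

    Hypothetical helper: Golf's own validation may differ.
    """
    config = json.loads(text)
    transport = config.get("transport")
    if transport not in ALLOWED_TRANSPORTS:
        raise ValueError(
            f"transport must be one of {sorted(ALLOWED_TRANSPORTS)}, got {transport!r}"
        )
    port = config.get("port")
    # bool is a subclass of int in Python, so exclude it explicitly.
    if isinstance(port, bool) or not isinstance(port, int) or not 0 < port < 65536:
        raise ValueError(f"port must be an integer between 1 and 65535, got {port!r}")
    return config


EXAMPLE = """
{
  "name": "My Golf Server",
  "host": "localhost",
  "port": 3000,
  "transport": "sse",
  "opentelemetry_enabled": false,
  "detailed_tracing": false
}
"""

config = load_config(EXAMPLE)
print(f"{config['name']}: {config['transport']} on {config['host']}:{config['port']}")
```

In a real project the text would come from reading the golf.json file in the project root rather than from an inline string.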

Golf collects anonymous usage data on the CLI to help us understand how the framework is being used and improve it over time. The data collected includes:

- Commands run (init, build, run)

- Success/failure status (no error details)

- Golf version, Python version (major.minor only), and OS type

- Template name (for init command only)

- Build environment (dev/prod for build commands only)

No personal information, project names, code content, or error messages are ever collected.

You can disable telemetry in several ways:

- Using the telemetry command (recommended):

  golf telemetry disable

  This saves your preference permanently. To re-enable:

  golf telemetry enable

- During any command: Add --no-telemetry to save your preference:

  golf init my-project --no-telemetry

Your telemetry preference is stored in ~/.golf/telemetry.json and persists across all Golf commands.

Alternative AI tools for golf

Similar Open Source Tools

graphiti

Graphiti is a framework for building and querying temporally-aware knowledge graphs, tailored for AI agents in dynamic environments. It continuously integrates user interactions, structured and unstructured data, and external information into a coherent, queryable graph. The framework supports incremental data updates, efficient retrieval, and precise historical queries without complete graph recomputation, making it suitable for developing interactive, context-aware AI applications.

datagouv-mcp

datagouv-mcp is a Model Context Protocol (MCP) server designed to facilitate AI chatbots (such as Claude, ChatGPT, Gemini) in searching, exploring, and analyzing datasets from data.gouv.fr, the French national Open Data platform, through conversation. Users can ask questions like 'Quels jeux de données sont disponibles sur les prix de l'immobilier?' or 'Montre-moi les dernières données de population pour Paris' to get instant answers without manually browsing the website. The server provides tools to interact with datasets and dataservices, supporting features like searching datasets, getting dataset information, listing resources, querying resource data, and more. It also offers support for various chatbots like ChatGPT, Claude Desktop, Claude Code, Gemini CLI, Mistral Vibe CLI, AnythingLLM, VS Code, Cursor, Windsurf, and provides detailed instructions for connecting chatbots to the server.

fast-mcp

Fast MCP is a Ruby gem that simplifies the integration of AI models with your Ruby applications. It provides a clean implementation of the Model Context Protocol, eliminating complex communication protocols, integration challenges, and compatibility issues. With Fast MCP, you can easily connect AI models to your servers, share data resources, choose from multiple transports, integrate with frameworks like Rails and Sinatra, and secure your AI-powered endpoints. The gem also offers real-time updates and authentication support, making AI integration a seamless experience for developers.

code-assistant

Code Assistant is an AI coding tool built in Rust that offers command-line and graphical interfaces for autonomous code analysis and modification. It supports multi-modal tool execution, real-time streaming interface, session-based project management, multiple interface options, and intelligent project exploration. The tool provides auto-loaded repository guidance and allows for project configuration with format-on-save feature. Users can interact with the tool in GUI, terminal, or MCP server mode, and configure LLM providers for advanced options. The architecture highlights adaptive tool syntax, smart tool filtering, and multi-threaded streaming for efficient performance. Contributions are welcome, and the roadmap includes features like block replacing in changed files, compact tool use failures, UI improvements, memory tools, security enhancements, fuzzy matching search blocks, editing user messages, and selecting in messages.

bedrock-claude-chat

This repository is a sample chatbot using the Anthropic company's LLM Claude, one of the foundational models provided by Amazon Bedrock for generative AI. It allows users to have basic conversations with the chatbot, personalize it with their own instructions and external knowledge, and analyze usage for each user/bot on the administrator dashboard. The chatbot supports various languages, including English, Japanese, Korean, Chinese, French, German, and Spanish. Deployment is straightforward and can be done via the command line or by using AWS CDK. The architecture is built on AWS managed services, eliminating the need for infrastructure management and ensuring scalability, reliability, and security.

Groqqle

Groqqle 2.1 is a revolutionary, free AI web search and API that instantly returns ORIGINAL content derived from source articles, websites, videos, and even foreign language sources, for ANY target market of ANY reading comprehension level! It combines the power of large language models with advanced web and news search capabilities, offering a user-friendly web interface, a robust API, and now a powerful Groqqle_web_tool for seamless integration into your projects. Developers can instantly incorporate Groqqle into their applications, providing a powerful tool for content generation, research, and analysis across various domains and languages.

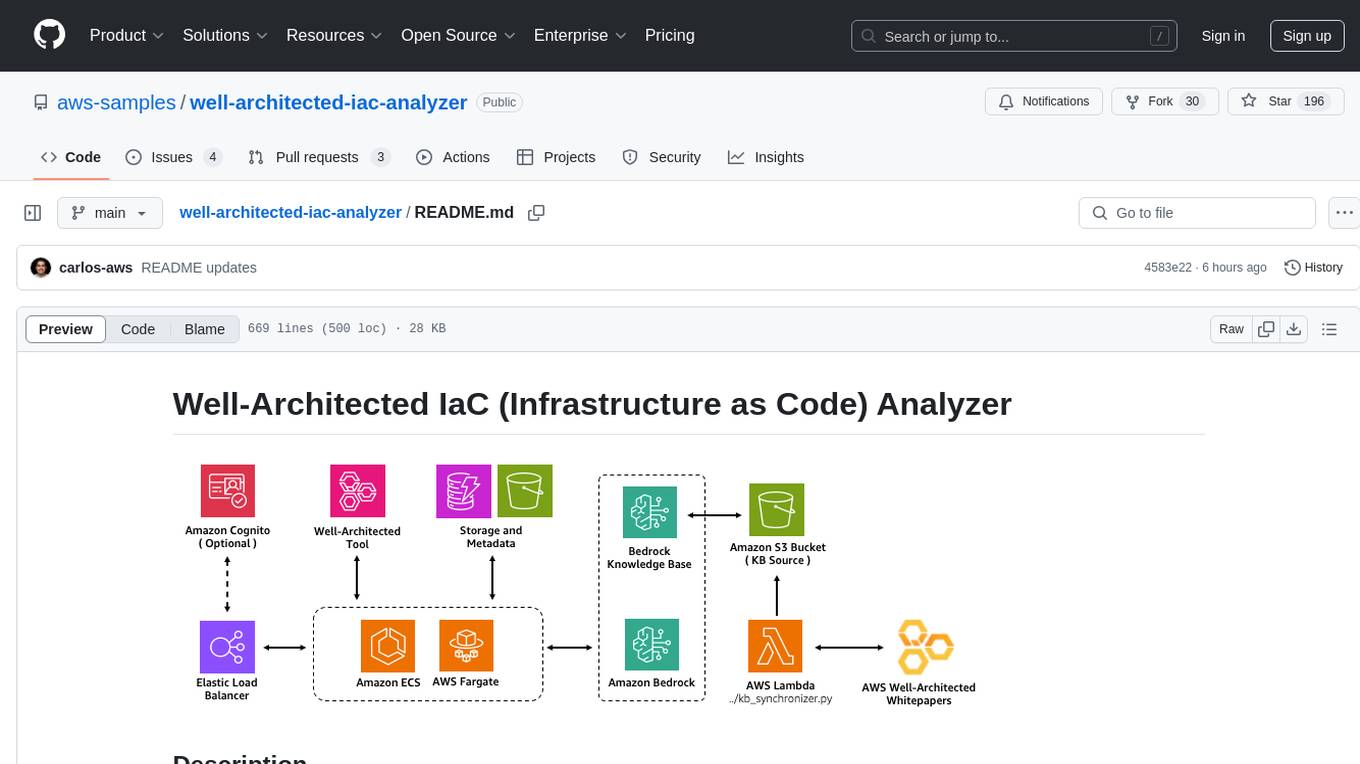

well-architected-iac-analyzer

Well-Architected Infrastructure as Code (IaC) Analyzer is a project demonstrating how generative AI can evaluate infrastructure code for alignment with best practices. It features a modern web application allowing users to upload IaC documents, complete IaC projects, or architecture diagrams for assessment. The tool provides insights into infrastructure code alignment with AWS best practices, offers suggestions for improving cloud architecture designs, and can generate IaC templates from architecture diagrams. Users can analyze CloudFormation, Terraform, or AWS CDK templates, architecture diagrams in PNG or JPEG format, and complete IaC projects with supporting documents. Real-time analysis against Well-Architected best practices, integration with AWS Well-Architected Tool, and export of analysis results and recommendations are included.

sdfx

SDFX is the ultimate no-code platform for building and sharing AI apps with beautiful UI. It enables the creation of user-friendly interfaces for complex workflows by combining Comfy workflow with a UI. The tool is designed to merge the benefits of form-based UI and graph-node based UI, allowing users to create intricate graphs with a high-level UI overlay. SDFX is fully compatible with ComfyUI, abstracting the need for installing ComfyUI. It offers features like animated graph navigation, node bookmarks, UI debugger, custom nodes manager, app and template export, image and mask editor, and more. The tool compiles as a native app or web app, making it easy to maintain and add new features.

nanocoder

Nanocoder is a local-first CLI coding agent that supports multiple AI providers with tool support for file operations and command execution. It focuses on privacy and control, allowing users to code locally with AI tools. The tool is designed to bring the power of agentic coding tools to local models or controlled APIs like OpenRouter, promoting community-led development and inclusive collaboration in the AI coding space.

code2prompt

Code2Prompt is a powerful command-line tool that generates comprehensive prompts from codebases, designed to streamline interactions between developers and Large Language Models (LLMs) for code analysis, documentation, and improvement tasks. It bridges the gap between codebases and LLMs by converting projects into AI-friendly prompts, enabling users to leverage AI for various software development tasks. The tool offers features like holistic codebase representation, intelligent source tree generation, customizable prompt templates, smart token management, Gitignore integration, flexible file handling, clipboard-ready output, multiple output options, and enhanced code readability.

agents-starter

A starter template for building AI-powered chat agents using Cloudflare's Agent platform, powered by agents-sdk. It provides a foundation for creating interactive chat experiences with AI, complete with a modern UI and tool integration capabilities. Features include interactive chat interface with AI, built-in tool system with human-in-the-loop confirmation, advanced task scheduling, dark/light theme support, real-time streaming responses, state management, and chat history. Prerequisites include a Cloudflare account and OpenAI API key. The project structure includes components for chat UI implementation, chat agent logic, tool definitions, and helper functions. Customization guide covers adding new tools, modifying the UI, and example use cases for customer support, development assistant, data analysis assistant, personal productivity assistant, and scheduling assistant.

orra

Orra is a tool for building production-ready multi-agent applications that handle complex real-world interactions. It coordinates tasks across existing stack, agents, and tools run as services using intelligent reasoning. With features like smart pre-evaluated execution plans, domain grounding, durable execution, and automatic service health monitoring, Orra enables users to go fast with tools as services and revert state to handle failures. It provides real-time status tracking and webhook result delivery, making it ideal for developers looking to move beyond simple crews and agents.

RooFlow

RooFlow is a VS Code extension that enhances AI-assisted development by providing persistent project context and optimized mode interactions. It reduces token consumption and streamlines workflow by integrating Architect, Code, Test, Debug, and Ask modes. The tool simplifies setup, offers real-time updates, and provides clearer instructions through YAML-based rule files. It includes components like Memory Bank, System Prompts, VS Code Integration, and Real-time Updates. Users can install RooFlow by downloading specific files, placing them in the project structure, and running an insert-variables script. They can then start a chat, select a mode, interact with Roo, and use the 'Update Memory Bank' command for synchronization. The Memory Bank structure includes files for active context, decision log, product context, progress tracking, and system patterns. RooFlow features persistent context, real-time updates, mode collaboration, and reduced token consumption.

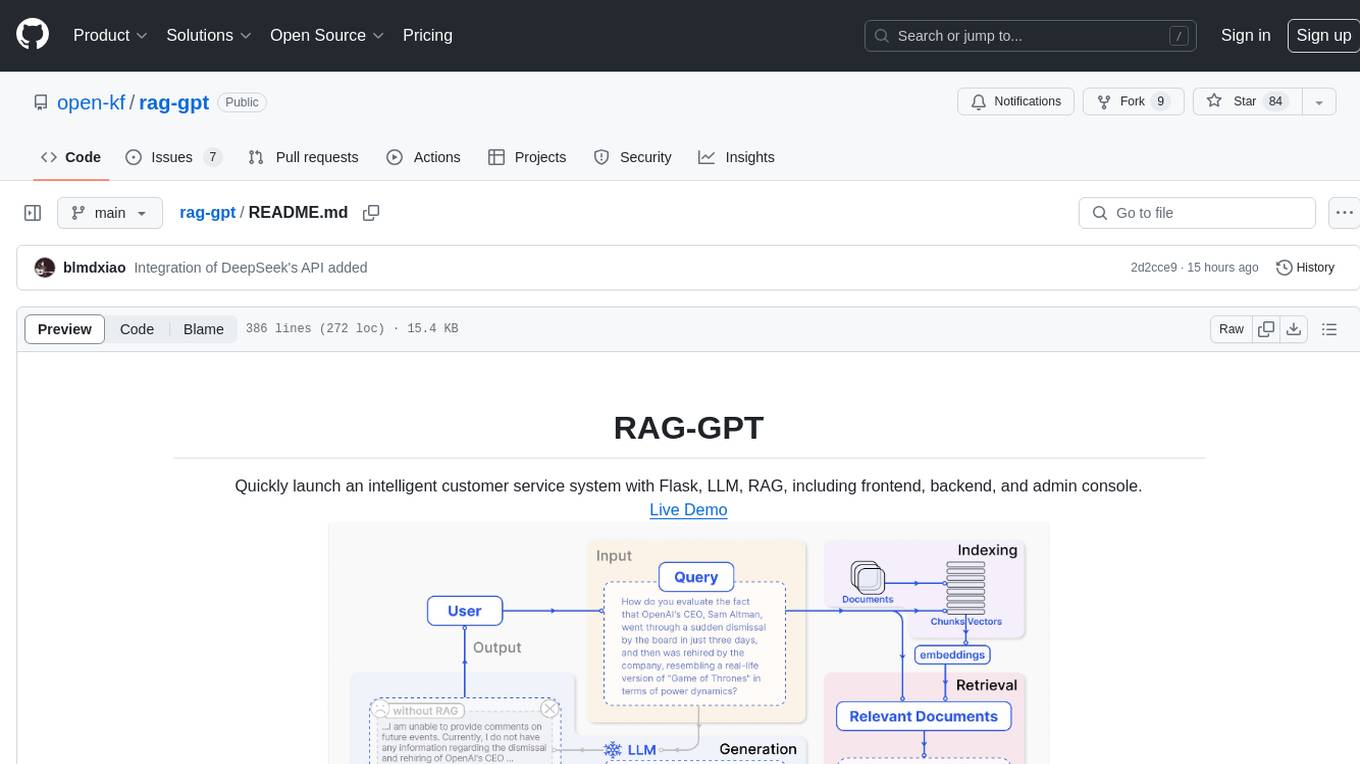

rag-gpt

RAG-GPT is a tool that allows users to quickly launch an intelligent customer service system with Flask, LLM, and RAG. It includes frontend, backend, and admin console components. The tool supports cloud-based and local LLMs, enables deployment of conversational service robots in minutes, integrates diverse knowledge bases, offers flexible configuration options, and features an attractive user interface.

cursor-tools

cursor-tools is a CLI tool designed to enhance AI agents with advanced skills, such as web search, repository context, documentation generation, GitHub integration, Xcode tools, and browser automation. It provides features like Perplexity for web search, Gemini 2.0 for codebase context, and Stagehand for browser operations. The tool requires API keys for Perplexity AI and Google Gemini, and supports global installation for system-wide access. It offers various commands for different tasks and integrates with Cursor Composer for AI agent usage.

For similar tasks

simplemind

Simplemind is an AI library designed to simplify the experience with AI APIs in Python. It provides easy-to-use AI tools with a human-centered design and minimal configuration. Users can tap into powerful AI capabilities through simple interfaces, without needing to be experts. The library supports various APIs from different providers/models and offers features like text completion, streaming text, structured data handling, conversational AI, tool calling, and logging. Simplemind aims to make AI models accessible to all by abstracting away complexity and prioritizing readability and usability.

For similar jobs

weave

Weave is a toolkit for developing Generative AI applications, built by Weights & Biases. With Weave, you can log and debug language model inputs, outputs, and traces; build rigorous, apples-to-apples evaluations for language model use cases; and organize all the information generated across the LLM workflow, from experimentation to evaluations to production. Weave aims to bring rigor, best-practices, and composability to the inherently experimental process of developing Generative AI software, without introducing cognitive overhead.

agentcloud

AgentCloud is an open-source platform that enables companies to build and deploy private LLM chat apps, empowering teams to securely interact with their data. It comprises three main components: Agent Backend, Webapp, and Vector Proxy. To run this project locally, clone the repository, install Docker, and start the services. The project is licensed under the GNU Affero General Public License, version 3 only. Contributions and feedback are welcome from the community.

oss-fuzz-gen

This framework generates fuzz targets for real-world `C`/`C++` projects with various Large Language Models (LLM) and benchmarks them via the `OSS-Fuzz` platform. It manages to successfully leverage LLMs to generate valid fuzz targets (which generate non-zero coverage increase) for 160 C/C++ projects. The maximum line coverage increase is 29% from the existing human-written targets.

LLMStack

LLMStack is a no-code platform for building generative AI agents, workflows, and chatbots. It allows users to connect their own data, internal tools, and GPT-powered models without any coding experience. LLMStack can be deployed to the cloud or on-premise and can be accessed via HTTP API or triggered from Slack or Discord.

VisionCraft

The VisionCraft API is a free API for using over 100 different AI models. From images to sound.

kaito

Kaito is an operator that automates the AI/ML inference model deployment in a Kubernetes cluster. It manages large model files using container images, avoids tuning deployment parameters to fit GPU hardware by providing preset configurations, auto-provisions GPU nodes based on model requirements, and hosts large model images in the public Microsoft Container Registry (MCR) if the license allows. Using Kaito, the workflow of onboarding large AI inference models in Kubernetes is largely simplified.

PyRIT

PyRIT is an open access automation framework designed to empower security professionals and ML engineers to red team foundation models and their applications. It automates AI Red Teaming tasks to allow operators to focus on more complicated and time-consuming tasks and can also identify security harms such as misuse (e.g., malware generation, jailbreaking), and privacy harms (e.g., identity theft). The goal is to allow researchers to have a baseline of how well their model and entire inference pipeline is doing against different harm categories and to be able to compare that baseline to future iterations of their model. This allows them to have empirical data on how well their model is doing today, and detect any degradation of performance based on future improvements.

Azure-Analytics-and-AI-Engagement

The Azure-Analytics-and-AI-Engagement repository provides packaged Industry Scenario DREAM Demos with ARM templates (containing a demo web application, Power BI reports, Synapse resources, AML Notebooks, etc.) that can be deployed in a customer’s subscription using the CAPE tool within a matter of a few hours. Partners can also deploy DREAM Demos in their own subscriptions using DPoC.