happy-llm

📚 A from-scratch tutorial on the principles and practice of large language models

Stars: 17450

Happy-LLM is a systematic learning tutorial for Large Language Models (LLM) that covers NLP research methods, LLM architecture, training process, and practical applications. It aims to help readers understand the principles and training processes of large language models. The tutorial delves into Transformer architecture, attention mechanisms, pre-training language models, building LLMs, training processes, and practical applications like RAG and Agent technologies. It is suitable for students, researchers, and LLM enthusiasts with programming experience, Python knowledge, and familiarity with deep learning and NLP concepts. The tutorial encourages hands-on practice and participation in LLM projects and competitions to deepen understanding and contribute to the open-source LLM community.

README:

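After finishing Datawhale's open-source project self-llm (a guide to using open-source large models), many readers were left wanting more: a deeper understanding of how large language models work and how they are trained. So we (Datawhale) launched the Happy-LLM project to help everyone understand the principles and training process of large language models in depth.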

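This project is a systematic LLM tutorial. Starting from the basic research methods of NLP, it follows the ideas and principles behind LLMs layer by layer, walking readers through the architectural foundations and training process of LLMs. Along the way, we use the most mainstream code frameworks in the LLM field to practice building and training an LLM by hand, aiming not only to hand readers the fish but also to teach them how to fish. We hope this book is where you step into the vast world of LLMs and explore their endless possibilities.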

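- 📚 Datawhale open source and free: all content in this project is completely free to study
- 🔍 Understand in depth: the Transformer architecture and attention mechanisms
- 📚 Master: the basic principles of pre-trained language models
- 🧠 Learn: the basic structure of existing large models
- 🏗️ Implement by hand: a complete LLaMA2 model
- ⚙️ Master training: the full pipeline from pre-training to fine-tuning
- 🚀 Apply in practice: frontier techniques such as RAG and Agents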

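| Chapter | Key content | Status |
|---|---|---|
| Preface | Origin and background of the project, advice for readers | ✅ |
| Chapter 1: NLP Basics | What NLP is, its history, task categories, evolution of text representation | ✅ |
| Chapter 2: Transformer Architecture | Attention mechanism, Encoder-Decoder, building a Transformer step by step | ✅ |
| Chapter 3: Pre-trained Language Models | Comparison of Encoder-Only, Encoder-Decoder, and Decoder-Only models | ✅ |
| Chapter 4: Large Language Models | Definition of LLMs, training strategies, analysis of emergent abilities | ✅ |
| Chapter 5: Building an LLM by Hand | Implementing LLaMA2, training a tokenizer, pre-training a small LLM | ✅ |
| Chapter 6: LLM Training in Practice | Pre-training, supervised fine-tuning, efficient fine-tuning with LoRA/QLoRA | 🚧 |
| Chapter 7: LLM Applications | Model evaluation, retrieval-augmented generation (RAG), Agents | ✅ |
| Extra Chapter LLM Blog | Excellent LLM study notes and blog posts; PRs are welcome! | 🚧 |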

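- Large models are already so capable, so what is the point of fine-tuning a 0.6B small model? @不要葱姜蒜 2025-7-11
- An interpretation of the Transformer's overall module design @ditingdapeng 2025-7-14
- Qwen3-"VL": the "stitch-and-fine-tune" path to an ultra-small Chinese multimodal model @ShaohonChen 2025-7-30
- S1: Thinking Budget with vLLM @kmno4-zx 2025-8-03
- CDDRS: a RAG retrieval method guided and enhanced by fine-grained semantic information @Hongru0306 2025-8-21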

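If you have your own insights, understanding, or hands-on experience from studying Happy-LLM or LLMs in general, you are welcome to submit a PR to the Extra Chapter LLM Blog. Please follow the PR guidelines of the Extra Chapter LLM Blog. Based on the quality and value of a PR's content, we will decide whether to merge it or to incorporate it into the main text of Happy-LLM.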

| Model name | Download |
|---|---|
| Happy-LLM-Chapter5-Base-215M | 🤖 ModelScope |
| Happy-LLM-Chapter5-SFT-215M | 🤖 ModelScope |

ModelScope Studio demo: 🤖 Studio

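This Happy-LLM PDF tutorial is fully open source and free. To keep marketing accounts from adding their own watermarks and selling it to LLM beginners, we have pre-added a Datawhale open-source logo watermark to the PDF file that does not interfere with reading. Thank you for your understanding.

Happy-LLM PDF: https://github.com/datawhalechina/happy-llm/releases/tag/v1.0.1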

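This project is suitable for university students, researchers, and LLM enthusiasts. Before starting, we recommend having some programming experience, in particular some familiarity with Python. Ideally you also have some background in deep learning and know the concepts and terminology of NLP, which will make the project easier to follow.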

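This project has two parts: fundamentals and hands-on applications. Chapters 1 to 4 cover the fundamentals, introducing the basic principles of LLMs from simple to advanced. Chapter 1 briefly introduces the basic tasks and development of NLP, as a reference for readers from outside the NLP field. Chapter 2 introduces the basic architecture of LLMs, the Transformer, covering both its principles and a code implementation; it is the most important theoretical foundation for LLMs. Chapter 3 gives an overview of classic pre-trained language models (PLMs) across the three architectures, Encoder-Only, Encoder-Decoder, and Decoder-Only, and also introduces the architectures and ideas of some current mainstream LLMs. Chapter 4 formally enters the LLM part, describing in detail the characteristics, capabilities, and overall training process of LLMs.

Chapters 5 to 7 are the hands-on part and gradually take you into the low-level details of LLMs. Chapter 5 guides you through building an LLM by hand at the PyTorch level and implementing the full pipeline of pre-training and supervised fine-tuning. Chapter 6 introduces Transformers, currently the mainstream LLM training framework in industry, and shows how to implement LLM training quickly and efficiently on top of it. Chapter 7 introduces a range of LLM-based applications to round out your picture of the LLM ecosystem, including LLM evaluation, Retrieval-Augmented Generation (RAG), and the ideas behind agents together with simple implementations. You can read chapters selectively according to your interests and needs.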
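As a taste of the attention mechanism that Chapter 2 is described as covering, here is a minimal sketch of scaled dot-product attention in plain Python. It is not taken from the tutorial's code: the function names, the list-of-lists representation, and the toy inputs are all assumptions chosen for illustration, and a real implementation would use PyTorch tensors.

```python
import math


def softmax(xs):
    """Numerically stable softmax over a list of floats."""
    m = max(xs)
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]


def scaled_dot_product_attention(queries, keys, values, causal=False):
    """Return (outputs, weights) for softmax(Q K^T / sqrt(d_k)) V.

    queries, keys, and values are lists of row vectors.
    With causal=True, position i attends only to positions j <= i,
    which is the masking used by Decoder-Only models.
    """
    d_k = len(keys[0])
    outputs, all_weights = [], []
    for i, q in enumerate(queries):
        # Similarity of this query to every key, scaled by sqrt(d_k).
        scores = [sum(a * b for a, b in zip(q, k)) / math.sqrt(d_k) for k in keys]
        if causal:
            # Mask out future positions so they receive zero weight.
            scores = [s if j <= i else float("-inf") for j, s in enumerate(scores)]
        weights = softmax(scores)
        # Output is the attention-weighted average of the value vectors.
        out = [sum(w * v[c] for w, v in zip(weights, values))
               for c in range(len(values[0]))]
        outputs.append(out)
        all_weights.append(weights)
    return outputs, all_weights


# Toy input: two tokens with 2-dimensional embeddings (illustrative values).
x = [[1.0, 0.0], [0.0, 1.0]]
v = [[1.0, 2.0], [3.0, 4.0]]
outputs, weights = scaled_dot_product_attention(x, x, v, causal=True)
print(weights[0])  # the first token can only attend to itself
```

The explicit loops trade speed for readability; the same computation is normally written as a few batched matrix operations.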

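While reading this book, we recommend combining theory with practice. LLMs are a fast-moving, practice-oriented field, so we encourage you to get hands-on: reproduce the code provided in this book and take part in LLM-related projects and competitions, truly joining the wave of LLM development. We also encourage you to follow Datawhale and other LLM-related open-source communities. If you run into problems, you can ask at any time in the issue section of this project.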

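Finally, we welcome every reader to join the ranks of LLM developers after completing this project. As an AI open-source community in China, we hope to bring co-creators together to enrich the open-source LLM world and to build more, and more comprehensive, distinctive LLM tutorials. Scattered sparks gather into a sea. We hope to be a ladder between LLMs and the general public and, in the free and equal spirit of open source, to embrace a grander and broader LLM world.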

We welcome contributions of any kind!

- 🐛 Report bugs: open an Issue if you find a problem
- 💡 Suggest features: tell us your good ideas
- 📝 Improve content: help improve the tutorial
- 🔧 Optimize code: submit a Pull Request

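- 宋志学 - Project lead (Datawhale member, China University of Mining and Technology, Beijing)
- 邹雨衡 - Project lead (Datawhale member, University of International Business and Economics)
- 朱信忠 - Advising expert (Datawhale chief scientist, professor at the Hangzhou Institute of Artificial Intelligence, Zhejiang Normal University)
- ditingdapeng (content contributor, cloud-native infrastructure engineer)
- 蔡鋆捷 (content contributor, Fuzhou University)
- ShaohonChen (researcher at the Emotion Machine lab, master's student at Xidian University)
- 肖鸿儒, 庄健琨 (content contributors, Tongji University)
- Thanks to @Sm1les for helping and supporting this project
- Thanks to all the developers who have contributed to this project ❤️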

⭐ If this project helps you, please give us a Star!

This work is licensed under a Creative Commons Attribution-NonCommercial-ShareAlike 4.0 International License.

Alternative AI tools for happy-llm

Similar Open Source Tools

Open-dLLM

Open-dLLM is the most open release of a diffusion-based large language model, providing pretraining, evaluation, inference, and checkpoints. It introduces Open-dCoder, the code-generation variant of Open-dLLM. The repo offers a complete stack for diffusion LLMs, enabling users to go from raw data to training, checkpoints, evaluation, and inference in one place. It includes a pretraining pipeline with open datasets, inference scripts for easy sampling and generation, an evaluation suite with various metrics, weights and checkpoints on Hugging Face, and transparent configs for full reproducibility.

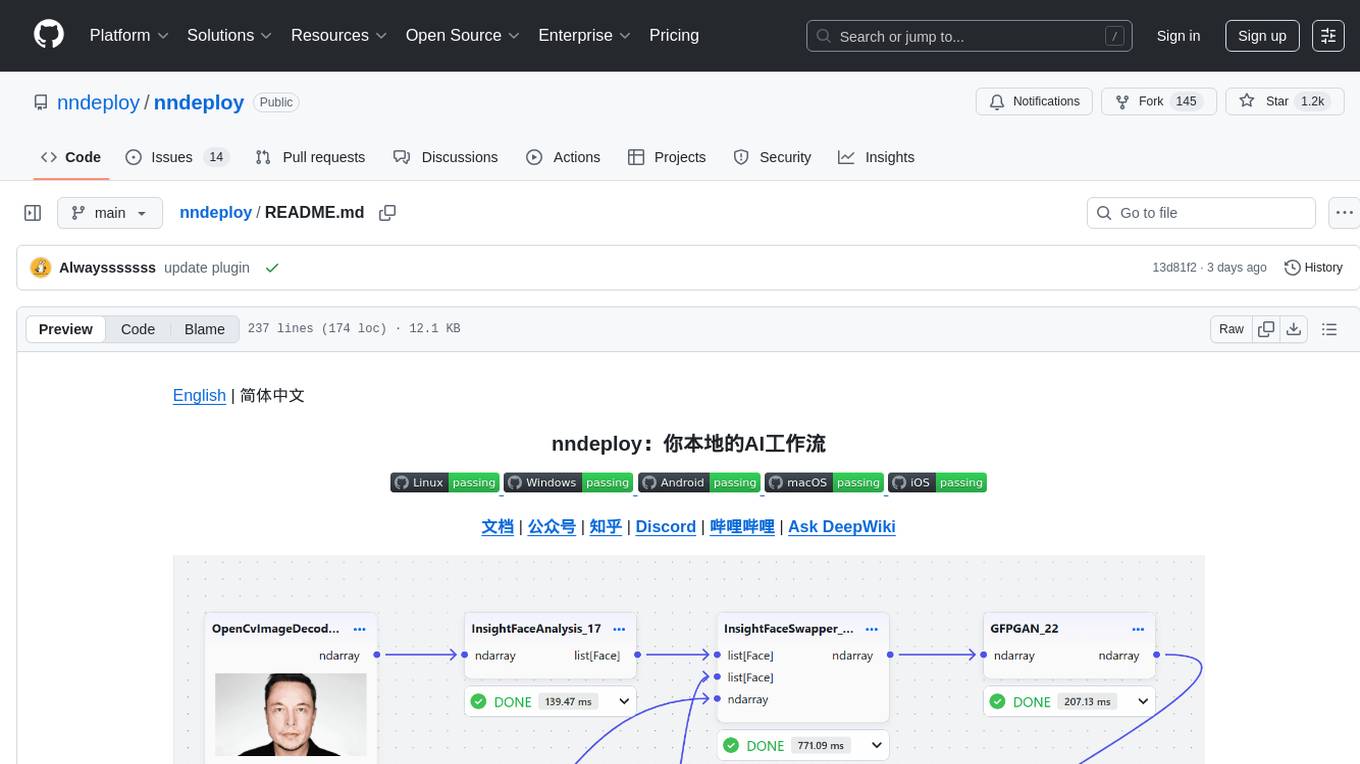

nndeploy

nndeploy is a tool that allows you to quickly build your visual AI workflow without the need for frontend technology. It provides ready-to-use algorithm nodes for non-AI programmers, including large language models, Stable Diffusion, object detection, image segmentation, etc. The workflow can be exported as a JSON configuration file, supporting Python/C++ API for direct loading and running, deployment on cloud servers, desktops, mobile devices, edge devices, and more. The framework includes mainstream high-performance inference engines and deep optimization strategies to help you transform your workflow into enterprise-level production applications.

Native-LLM-for-Android

This repository provides a demonstration of running a native Large Language Model (LLM) on Android devices. It supports various models such as Qwen2.5-Instruct, MiniCPM-DPO/SFT, Yuan2.0, Gemma2-it, StableLM2-Chat/Zephyr, and Phi3.5-mini-instruct. The demo models are optimized for extreme execution speed after being converted from HuggingFace or ModelScope. Users can download the demo models from the provided drive link, place them in the assets folder, and follow specific instructions for decompression and model export. The repository also includes information on quantization methods and performance benchmarks for different models on various devices.

ipex-llm

IPEX-LLM is a PyTorch library for running Large Language Models (LLMs) on Intel CPUs and GPUs with very low latency. It provides seamless integration with various LLM frameworks and tools, including llama.cpp, ollama, Text-Generation-WebUI, HuggingFace transformers, and more. IPEX-LLM has been optimized and verified on over 50 LLM models, including LLaMA, Mistral, Mixtral, Gemma, LLaVA, Whisper, ChatGLM, Baichuan, Qwen, and RWKV. It supports a range of low-bit inference formats, including INT4, FP8, FP4, INT8, INT2, FP16, and BF16, as well as finetuning capabilities for LoRA, QLoRA, DPO, QA-LoRA, and ReLoRA. IPEX-LLM is actively maintained and updated with new features and optimizations, making it a valuable tool for researchers, developers, and anyone interested in exploring and utilizing LLMs.

AIGC-Interview-Book

AIGC-Interview-Book is the ultimate guide for AIGC algorithm and development job interviews, covering a wide range of topics such as AIGC, traditional deep learning, autonomous driving, AI agent, machine learning, computer vision, natural language processing, reinforcement learning, embodied intelligence, metaverse, AGI, Python, Java, C/C++, Go, embedded systems, front-end, back-end, testing, and operations. The repository consolidates industry experience and insights from frontline AIGC algorithm experts, providing resources on AIGC knowledge framework, internal referrals at AIGC big companies, interview experiences, company guides, AI campus recruitment schedule, interview preparation, salary insights, coding guide, and job-seeking Q&A. It serves as a valuable resource for AIGC-related professionals, students, and job seekers, offering insights and guidance for career advancement and job interviews in the AIGC field.

speechless

Speechless.AI is committed to integrating the superior language processing and deep reasoning capabilities of large language models into practical business applications. By enhancing the model's language understanding, knowledge accumulation, and text creation abilities, and introducing long-term memory, external tool integration, and local deployment, our aim is to establish an intelligent collaborative partner that can independently interact, continuously evolve, and closely align with various business scenarios.

ipex-llm

The `ipex-llm` repository is an LLM acceleration library designed for Intel GPU, NPU, and CPU. It provides seamless integration with various models and tools like llama.cpp, Ollama, HuggingFace transformers, LangChain, LlamaIndex, vLLM, Text-Generation-WebUI, DeepSpeed-AutoTP, FastChat, Axolotl, and more. The library offers optimizations for over 70 models, XPU acceleration, and support for low-bit (FP8/FP6/FP4/INT4) operations. Users can run different models on Intel GPUs, NPU, and CPUs with support for various features like finetuning, inference, serving, and benchmarking.

AI-LLM-ML-CS-Quant-Overview

AI-LLM-ML-CS-Quant-Overview is a repository providing overview notes on AI, Large Language Models (LLM), Machine Learning (ML), Computer Science (CS), and Quantitative Finance. It covers various topics such as LangGraph & Cursor AI, DeepSeek, MoE (Mixture of Experts), NVIDIA GTC, LLM Essentials, System Design, Computer Systems, Big Data and AI in Finance, Econometrics and Statistics Conference, C++ Design Patterns and Derivatives Pricing, High-Frequency Finance, Machine Learning for Algorithmic Trading, Stochastic Volatility Modeling, Quant Job Interview Questions, Distributed Systems, Language Models, Designing Machine Learning Systems, Designing Data-Intensive Applications (DDIA), Distributed Machine Learning, and The Elements of Quantitative Investing.

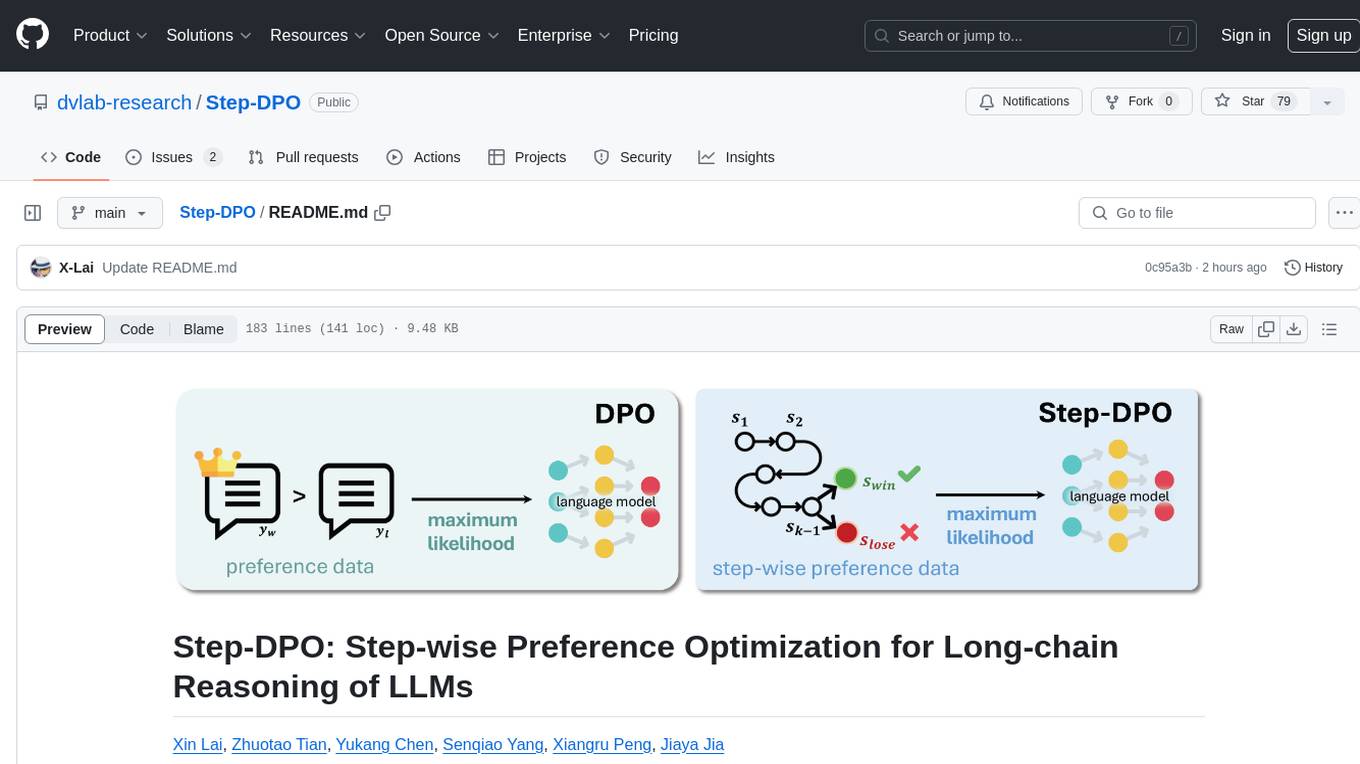

Step-DPO

Step-DPO is a method for enhancing the long-chain reasoning ability of LLMs, paired with a data construction pipeline that produces a high-quality dataset. It significantly improves performance on MATH and GSM8K with minimal data and training steps. The tool fine-tunes pre-trained models such as Qwen2-7B-Instruct with Step-DPO, achieving superior results compared to other models. It provides scripts for training, evaluation, and deployment, along with examples and acknowledgements.

lawyer-llama

Lawyer LLaMA is a large language model that has been specifically trained on legal data, including Chinese laws, regulations, and case documents. It has been fine-tuned on a large dataset of legal questions and answers, enabling it to understand and respond to legal inquiries in a comprehensive and informative manner. Lawyer LLaMA is designed to assist legal professionals and individuals with a variety of law-related tasks, including:

* **Legal research:** Quickly and efficiently search through vast amounts of legal information to find relevant laws, regulations, and case precedents.
* **Legal analysis:** Analyze legal issues, identify potential legal risks, and provide insights on how to proceed.
* **Document drafting:** Draft legal documents, such as contracts, pleadings, and legal opinions, with accuracy and precision.
* **Legal advice:** Provide general legal advice and guidance on a wide range of legal matters, helping users understand their rights and options.

Lawyer LLaMA is a powerful tool that can significantly enhance the efficiency and effectiveness of legal research, analysis, and decision-making. It is an invaluable resource for lawyers, paralegals, law students, and anyone else who needs to navigate the complexities of the legal system.

Nocode-Wep

Nocode/WEP is a forward-looking office visualization platform that includes modules for document building, web application creation, presentation design, and AI capabilities for office scenarios. It supports features such as configuring bullet comments, global article comments, multimedia content, custom drawing boards, flowchart editor, form designer, keyword annotations, article statistics, custom appreciation settings, JSON import/export, content block copying, and unlimited hierarchical directories. The platform is compatible with major browsers and aims to deliver content value, iterate products, share technology, and promote open-source collaboration.

DataFlow

DataFlow is a data preparation and training system designed to parse, generate, process, and evaluate high-quality data from noisy sources, improving the performance of large language models in specific domains. It constructs diverse operators and pipelines, validated to enhance domain-oriented LLM's performance in fields like healthcare, finance, and law. DataFlow also features an intelligent DataFlow-agent capable of dynamically assembling new pipelines by recombining existing operators on demand.

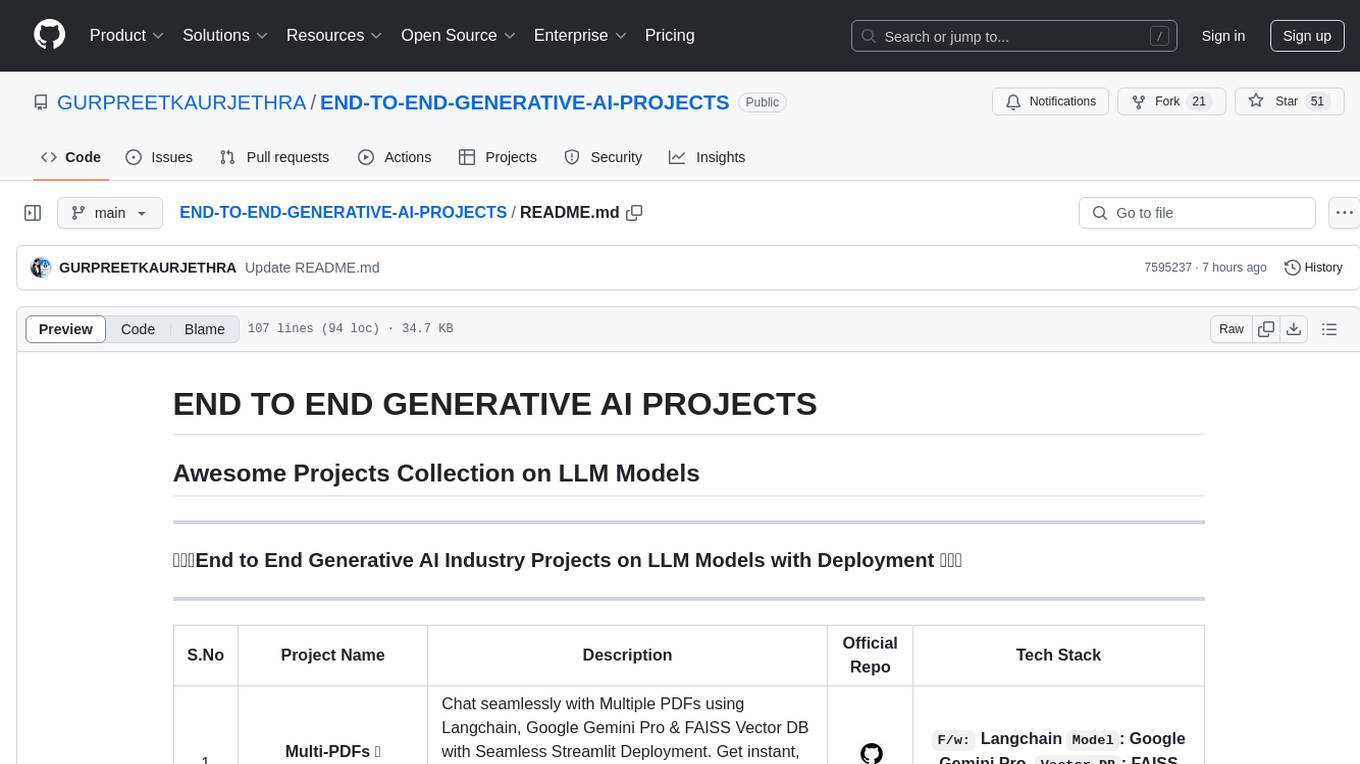

END-TO-END-GENERATIVE-AI-PROJECTS

The 'END TO END GENERATIVE AI PROJECTS' repository is a collection of industry projects that use Large Language Models (LLMs) for tasks such as chat applications with PDFs, image-to-speech generation, video transcription and summarization, resume tracking, text-to-SQL conversion, invoice extraction, a medical chatbot, financial stock analysis, and more. The projects showcase the deployment of models like Google Gemini Pro, HuggingFace models, and OpenAI GPT, together with technologies such as LangChain, Streamlit, LLaMA2, and LlamaIndex. The repository aims to provide end-to-end solutions for different AI applications.

awesome-ai-efficiency

Awesome AI Efficiency is a curated list of resources dedicated to enhancing efficiency in AI systems. The repository covers various topics essential for optimizing AI models and processes, aiming to make AI faster, cheaper, smaller, and greener. It includes topics like quantization, pruning, caching, distillation, factorization, compilation, parameter-efficient fine-tuning, speculative decoding, hardware optimization, training techniques, inference optimization, sustainability strategies, and scalability approaches.

For similar tasks

happy-llm

Happy-LLM is a systematic learning tutorial for Large Language Models (LLM) that covers NLP research methods, LLM architecture, training process, and practical applications. It aims to help readers understand the principles and training processes of large language models. The tutorial delves into Transformer architecture, attention mechanisms, pre-training language models, building LLMs, training processes, and practical applications like RAG and Agent technologies. It is suitable for students, researchers, and LLM enthusiasts with programming experience, Python knowledge, and familiarity with deep learning and NLP concepts. The tutorial encourages hands-on practice and participation in LLM projects and competitions to deepen understanding and contribute to the open-source LLM community.

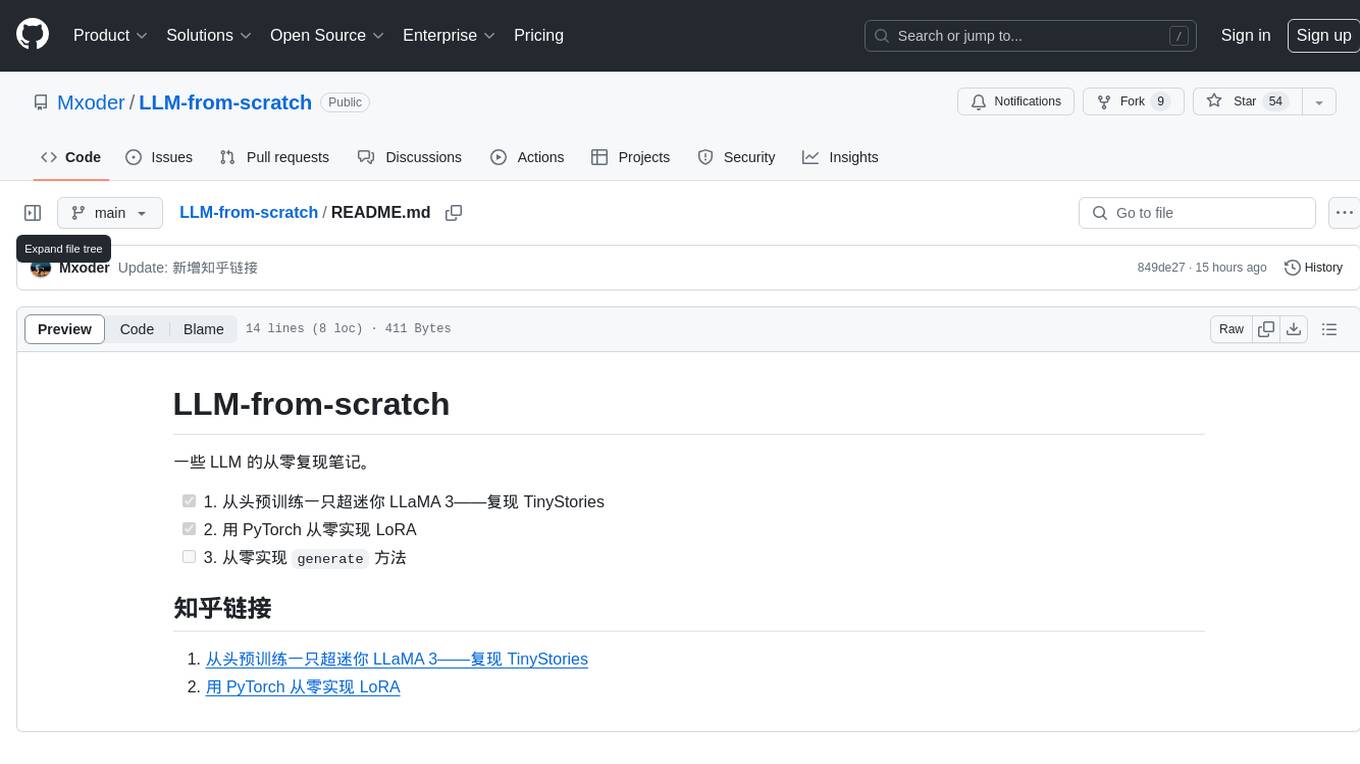

LLM-from-scratch

This repository contains notes on re-implementing some LLM models from scratch. It includes steps to pre-train a super mini LLaMA 3 model, implement LoRA from scratch using PyTorch, and work on implementing the 'generate' method.

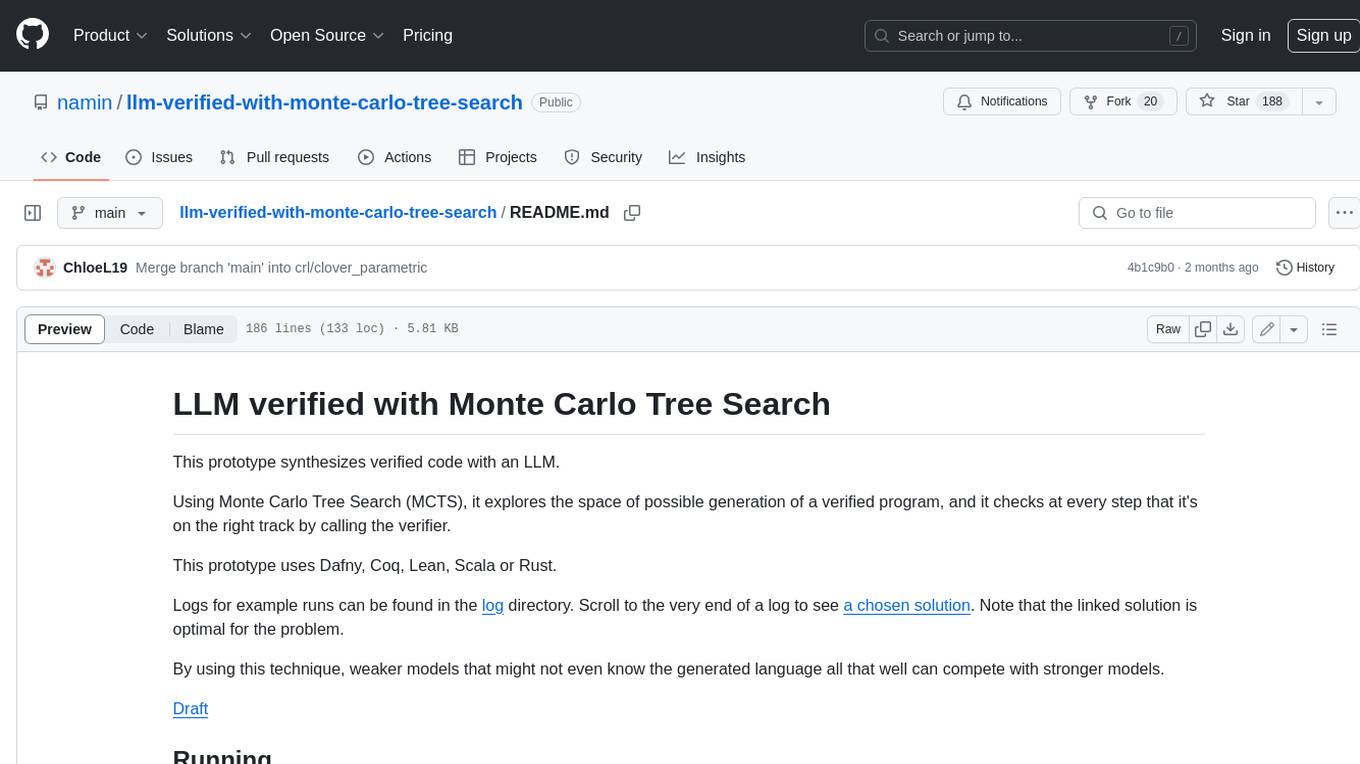

llm-verified-with-monte-carlo-tree-search

This prototype synthesizes verified code with an LLM using Monte Carlo Tree Search (MCTS). It explores the space of possible generation of a verified program and checks at every step that it's on the right track by calling the verifier. This prototype uses Dafny, Coq, Lean, Scala, or Rust. By using this technique, weaker models that might not even know the generated language all that well can compete with stronger models.

flashinfer

FlashInfer is a library for Large Language Models that provides high-performance implementations of LLM GPU kernels such as FlashAttention, PageAttention and LoRA. FlashInfer focuses on LLM serving and inference, and delivers state-of-the-art performance across diverse scenarios.

dolma

Dolma is a dataset and toolkit for curating large datasets for (pre)-training ML models. The dataset consists of 3 trillion tokens from a diverse mix of web content, academic publications, code, books, and encyclopedic materials. The toolkit provides high-performance, portable, and extensible tools for processing, tagging, and deduplicating documents. Key features of the toolkit include built-in taggers, fast deduplication, and cloud support.

web-llm

WebLLM is a modular and customizable JavaScript package that brings language model chats directly onto web browsers with hardware acceleration. Everything runs inside the browser with no server support and is accelerated with WebGPU. WebLLM is fully compatible with the OpenAI API. That is, you can use the same OpenAI API on any open-source model locally, with functionality including JSON mode, function calling, streaming, etc. This opens up many fun opportunities to build AI assistants for everyone and to preserve privacy while enjoying GPU acceleration.

nlp-llms-resources

The 'nlp-llms-resources' repository is a comprehensive resource list for Natural Language Processing (NLP) and Large Language Models (LLMs). It covers a wide range of topics including traditional NLP datasets, data acquisition, libraries for NLP, neural networks, sentiment analysis, optical character recognition, information extraction, semantics, topic modeling, multilingual NLP, domain-specific LLMs, vector databases, ethics, costing, books, courses, surveys, aggregators, newsletters, papers, conferences, and societies. The repository provides valuable information and resources for individuals interested in NLP and LLMs.

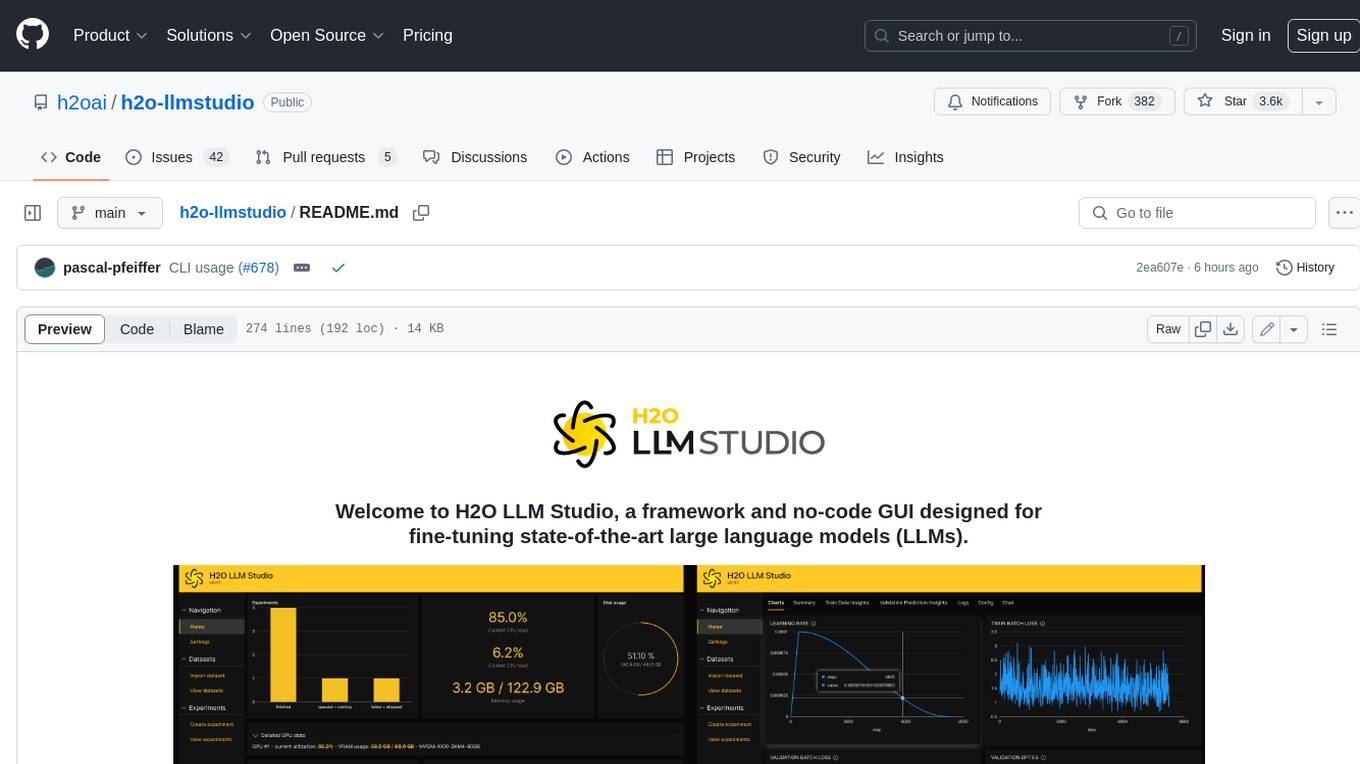

h2o-llmstudio

H2O LLM Studio is a framework and no-code GUI designed for fine-tuning state-of-the-art large language models (LLMs). With H2O LLM Studio, you can easily and effectively fine-tune LLMs without the need for any coding experience. The GUI is specially designed for large language models, and you can finetune any LLM using a large variety of hyperparameters. You can also use recent finetuning techniques such as Low-Rank Adaptation (LoRA) and 8-bit model training with a low memory footprint. Additionally, you can use Reinforcement Learning (RL) to finetune your model (experimental), use advanced evaluation metrics to judge generated answers by the model, track and compare your model performance visually, and easily export your model to the Hugging Face Hub and share it with the community.

For similar jobs

weave

Weave is a toolkit for developing Generative AI applications, built by Weights & Biases. With Weave, you can log and debug language model inputs, outputs, and traces; build rigorous, apples-to-apples evaluations for language model use cases; and organize all the information generated across the LLM workflow, from experimentation to evaluations to production. Weave aims to bring rigor, best-practices, and composability to the inherently experimental process of developing Generative AI software, without introducing cognitive overhead.

LLMStack

LLMStack is a no-code platform for building generative AI agents, workflows, and chatbots. It allows users to connect their own data, internal tools, and GPT-powered models without any coding experience. LLMStack can be deployed to the cloud or on-premise and can be accessed via HTTP API or triggered from Slack or Discord.

VisionCraft

The VisionCraft API is a free API for using over 100 different AI models, from images to sound.

kaito

Kaito is an operator that automates the AI/ML inference model deployment in a Kubernetes cluster. It manages large model files using container images, avoids tuning deployment parameters to fit GPU hardware by providing preset configurations, auto-provisions GPU nodes based on model requirements, and hosts large model images in the public Microsoft Container Registry (MCR) if the license allows. Using Kaito, the workflow of onboarding large AI inference models in Kubernetes is largely simplified.

PyRIT

PyRIT is an open access automation framework designed to empower security professionals and ML engineers to red team foundation models and their applications. It automates AI Red Teaming tasks to allow operators to focus on more complicated and time-consuming tasks and can also identify security harms such as misuse (e.g., malware generation, jailbreaking), and privacy harms (e.g., identity theft). The goal is to allow researchers to have a baseline of how well their model and entire inference pipeline is doing against different harm categories and to be able to compare that baseline to future iterations of their model. This allows them to have empirical data on how well their model is doing today, and detect any degradation of performance based on future improvements.

tabby

Tabby is a self-hosted AI coding assistant, offering an open-source and on-premises alternative to GitHub Copilot. It boasts several key features:

* Self-contained, with no need for a DBMS or cloud service.
* OpenAPI interface, easy to integrate with existing infrastructure (e.g., Cloud IDE).
* Supports consumer-grade GPUs.

spear

SPEAR (Simulator for Photorealistic Embodied AI Research) is a powerful tool for training embodied agents. It features 300 unique virtual indoor environments with 2,566 unique rooms and 17,234 unique objects that can be manipulated individually. Each environment is designed by a professional artist and features detailed geometry, photorealistic materials, and a unique floor plan and object layout. SPEAR is implemented as Unreal Engine assets and provides an OpenAI Gym interface for interacting with the environments via Python.

Magick

Magick is a groundbreaking visual AIDE (Artificial Intelligence Development Environment) for no-code data pipelines and multimodal agents. Magick can connect to other services and comes with nodes and templates well-suited for intelligent agents, chatbots, complex reasoning systems and realistic characters.