local-agents

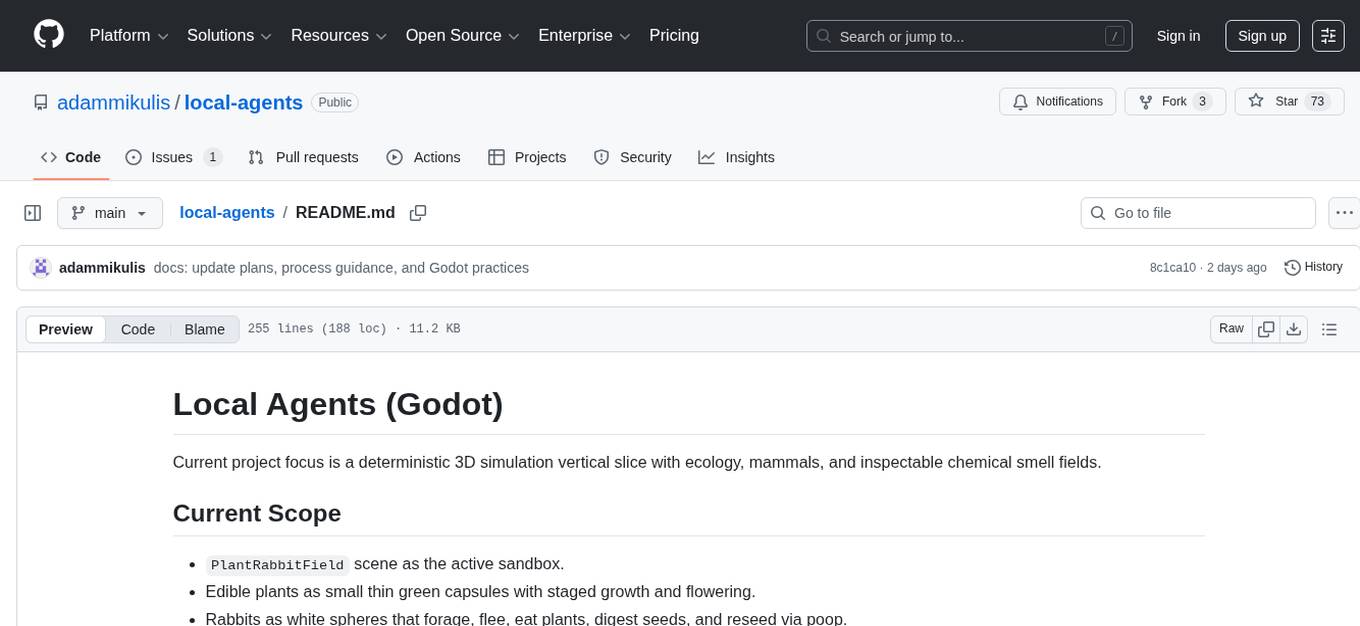

Local Agents is an add-on for Godot 4.5 that runs LLMs locally in games.

Stars: 73

Local Agents is a Godot project focusing on a deterministic 3D simulation with ecology, mammals, and chemical smell fields. It includes features like edible plants, rabbits, smell behavior, wind simulation, and camera controls. The project uses shared voxel infrastructure for simulation and chemistry-driven smell modeling. It also features world generation, runtime simulation, rendering with GPU shaders, and integrated demo controls. The project is designed for scene-first and resource-driven runtime, preferring voxel-native simulation over RigidBody3D.

README:

Current project focus is a deterministic 3D simulation vertical slice with ecology, mammals, and inspectable chemical smell fields.

- `PlantRabbitField` scene as the active sandbox.

- Edible plants as small thin green capsules with staged growth and flowering.

- Rabbits as white spheres that forage, flee, eat plants, digest seeds, and reseed via poop.

- Mammal smell behavior generalized via profile resources (rabbits and villagers share the same contract, with different sensitivities).

- Smell and wind simulated on a shared sparse voxel field (clean break from active hex runtime path).

- Click selection + inspector panel + spawn UI (targeted spawn and random spawn).

- Camera controls for simulation editing (orbit/pan/zoom + right-click pan).

- Debug overlays for smell, wind, and temperature with translucent voxel rendering.

- `addons/local_agents/simulation/VoxelGridSystem.gd`

- Used by:

  - `SmellFieldSystem`
  - `WindFieldSystem`
  - `EcologyController` edible indexing/debug views

Plants and mammals emit chemical mixtures, not generic food/danger tags.

Examples currently modeled:

- Plant/flower compounds: `hexanal`, `cis_3_hexenol`, `linalool`, `benzyl_acetate`, `phenylacetaldehyde`, `geraniol`, `methyl_salicylate`.

- Taste/defense compounds: `sugars`, `tannins`, `alkaloids`.

- Mammal/waste compounds: `ammonia`, `butyric_acid`, `2_heptanone`.

Mammals convert these into behavior using weighted sensitivity profiles (MammalProfileResource).
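As a rough illustration of the weighted-sensitivity idea, the sketch below scores a sensed chemical mixture against a per-species profile. It is written in Python for brevity and is not the add-on's GDScript; the `MammalProfile` class, its `drive` method, and every weight are hypothetical stand-ins, and only the compound names come from the list above.

```python
from dataclasses import dataclass, field


@dataclass
class MammalProfile:
    """Hypothetical stand-in for MammalProfileResource: one weight per compound."""

    # Assumption: positive weights attract (food cues), negative weights repel.
    sensitivities: dict = field(default_factory=dict)

    def drive(self, mixture: dict) -> float:
        """Weighted sum of sensed concentrations; unlisted compounds count as 0."""
        return sum(
            self.sensitivities.get(name, 0.0) * concentration
            for name, concentration in mixture.items()
        )


# Two species share the same contract but differ in sensitivities (made-up weights).
rabbit = MammalProfile({"hexanal": 1.0, "linalool": 0.5, "tannins": -0.8, "ammonia": -0.2})
villager = MammalProfile({"hexanal": 0.1, "butyric_acid": -1.0})

# A made-up mixture sampled at one voxel near a flowering plant.
flower_patch = {"linalool": 0.6, "hexanal": 0.4, "tannins": 0.1}

print("rabbit drive:", rabbit.drive(flower_patch))
print("villager drive:", villager.drive(flower_patch))
```

With these made-up numbers the rabbit responds much more strongly to the same mixture than the villager, which is the kind of difference a shared contract with per-profile sensitivities is meant to express.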

- Smell advects with wind direction/intensity when enabled.

- Smell decays over time.

- Rain increases decay.

- Wind field evolves spatially from base wind + terrain/temperature effects.
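The four rules above can be sketched as a single update step on a sparse field. This is a minimal Python illustration, not the add-on's `SmellFieldSystem`: the function name `step_smell`, the exponential decay, the linear rain scaling, and the one-voxel downwind transfer are all assumptions chosen to keep the example short.

```python
import math


def step_smell(field, wind_dir, wind_strength, decay_rate, rain, dt):
    """Advance a sparse smell field {(x, y, z): amount} by one tick.

    Assumptions (not taken from the add-on): decay is exponential, rain scales
    the decay rate linearly, and wind moves a share of each cell exactly one
    voxel along an integer wind direction.
    """
    keep = math.exp(-decay_rate * (1.0 + rain) * dt)   # rain increases decay
    moved = min(1.0, max(0.0, wind_strength * dt))     # share carried downwind
    out = {}

    def deposit(cell, amount):
        if amount > 0.0:
            out[cell] = out.get(cell, 0.0) + amount

    for (x, y, z), amount in field.items():
        amount *= keep
        if amount < 1e-6:
            continue  # drop negligible cells so the field stays sparse
        downwind = (x + wind_dir[0], y + wind_dir[1], z + wind_dir[2])
        deposit((x, y, z), amount * (1.0 - moved))
        deposit(downwind, amount * moved)
    return out


field = {(0, 0, 0): 1.0}
dry = step_smell(field, (1, 0, 0), wind_strength=0.5, decay_rate=0.1, rain=0.0, dt=1.0)
wet = step_smell(field, (1, 0, 0), wind_strength=0.5, decay_rate=0.1, rain=1.0, dt=1.0)
print("dry total:", sum(dry.values()))
print("wet total:", sum(wet.values()))
```

Iterating the dictionary in insertion order with fixed parameters keeps the step reproducible, which matters for a simulation described as deterministic.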

Inside PlantRabbitField:

- `LMB`: select actor / place spawn (when spawn mode active)

- `Esc` or `RMB`: cancel spawn mode back to select

- Spawn mode auto-resets to select after placing one entity

- Mouse wheel: zoom

- `MMB drag`: orbit

- `Shift + MMB drag`: pan

- `RMB drag`: pan

Bottom HUD supports:

- `Select`, `Spawn Plant`, `Spawn Rabbit`

- `Spawn Random` with user-set counts

Right HUD shows inspector payload for selected entities.

Debug overlay roots:

- `SmellDebug`

- `WindDebug`

- `TemperatureDebug`

Rendering style:

- Smell: translucent chemical voxel overlays.

- Temperature: translucent blue-to-red voxel spectrum.

- Wind: translucent directional vector markers.

- Scene: `addons/local_agents/scenes/simulation/PlantRabbitField.tscn`

- Scene: `addons/local_agents/scenes/demos/VoxelWorldDemo.tscn` (seed + sliders + visible baked flowmap arrows)

- Controller: `addons/local_agents/scenes/simulation/controllers/PlantRabbitField.gd`

- Ecology orchestration: `addons/local_agents/scenes/simulation/controllers/EcologyController.gd`

- Plant actor: `addons/local_agents/scenes/simulation/actors/EdiblePlantCapsule.gd`

- Rabbit actor: `addons/local_agents/scenes/simulation/actors/RabbitSphere.gd`

- Villager actor: `addons/local_agents/scenes/simulation/actors/VillagerCapsule.gd`

- FastNoiseLite-based 3D voxel terrain generation with deterministic seeds.

- Minecraft-style stratified block stacks: topsoil/subsoil/stone/water with caves.

- Multiple terrain/resource block types in generated columns and block rows (soil variants + ore blocks).

- Deterministic baked flow maps (downhill direction, accumulation, channel strength).

- Deterministic voxel transform pipeline with generic transport/thermal/mechanics/failure passes (single runtime authority).

- Transform snapshots and diagnostics are stage-agnostic contract payloads (`transform_snapshot`, `transform_diagnostics`) for runtime/bridge consumers.

- Preset-based emitters drive destruction/environment edit emission; profile switches are preset changes, not runtime-stack swaps.

- Material identity is required on active voxel state (`material_id`, `material_profile_id`, `material_phase_id`).

- Runtime target bootstrap stamps default destructible target columns/wall during setup (`WorldSimulation` calls `simulation_controller.stamp_default_voxel_target_wall(...)` after `configure_environment(...)`).

- Default fracture-prone column material profile is `rock` via canonical profile resolution (`stone`/`gravel` canonicalize to `rock`).

- Transform execution is GPU-required; no CPU-success fallback path exists for unified transform runtime.

- Legacy named stage requests (weather/hydrology/erosion/solar) are unsupported in active runtime and fail as `unsupported_legacy_stage`.
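To make the baked flow maps listed above more concrete, here is a small Python sketch of one common way to derive a downhill direction and a flow accumulation value per cell from a height grid. It is an assumption-laden illustration, not the add-on's baker: the function name `bake_flow_map`, the 4-neighbour steepest-descent rule, and the unit rainfall per cell are all hypothetical, and channel strength is not modelled.

```python
def bake_flow_map(height):
    """Return (downhill, accumulation) dicts keyed by (row, col).

    Assumed rule: each cell drains to its lowest strictly-lower 4-neighbour;
    every cell contributes one unit of flow, passed along the drainage path.
    """
    rows, cols = len(height), len(height[0])
    downhill = {}
    for r in range(rows):
        for c in range(cols):
            best, target = height[r][c], None
            # Fixed neighbour order so ties always resolve the same way.
            for dr, dc in ((-1, 0), (0, 1), (1, 0), (0, -1)):
                nr, nc = r + dr, c + dc
                if 0 <= nr < rows and 0 <= nc < cols and height[nr][nc] < best:
                    best, target = height[nr][nc], (nr, nc)
            downhill[(r, c)] = target  # None marks a local minimum (sink)

    accumulation = {cell: 1.0 for cell in downhill}
    # Visit cells from highest to lowest so upstream flow is final before it is passed on.
    order = sorted(downhill, key=lambda rc: (-height[rc[0]][rc[1]], rc))
    for cell in order:
        target = downhill[cell]
        if target is not None:
            accumulation[target] += accumulation[cell]
    return downhill, accumulation


slope = [
    [3.0, 2.0, 1.0],
    [3.0, 2.5, 0.5],
]
directions, flow = bake_flow_map(slope)
print(directions[(0, 0)], flow[(1, 2)])
```

Because the neighbour order and the sort key are fixed, the same height grid always produces the same maps, which is the property a seed-driven bake relies on.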

- Chunked terrain rendering via `MultiMeshInstance3D` for voxel blocks.

- GPU flow and terrain shading sample generic transform field textures/buffers (no named stage authority).

- GPU river-flow overlay shader driven by baked flow-map rows.

- GPU cloud layers: animated cloud plane + volumetric cloud shell.

- GPU post-processing effects are driven by transform diagnostics/material state instead of named weather-stage contracts.

- Volumetric fog + automatic day/night sun animation, integrated with global lighting and SDFGI-enabled demo environment.

- Single canonical scene: `VoxelWorldDemo` (project main scene).

- Terrain controls include dimensions, sea level, surface base/range, noise frequency/octaves/lacunarity/gain, smoothing, and cave threshold.

- Flow-map visualizer controls (show/hide, threshold, stride) with animated flow arrows.

- Timelapse-style simulation controls (play/pause/fast-forward/rewind/fork) and state restore.

- Live stats report generic transform metrics/diagnostics in demo HUD/status labels.

- Integrated runtime stack in one scene: worldgen + unified transform runtime + settlement/culture/ecology controllers + debug overlays.

Runtime target setup hook/config points:

- Hook point: `addons/local_agents/scenes/simulation/controllers/WorldSimulation.gd` (`configure_environment` then `stamp_default_voxel_target_wall` during ready/bootstrap).

- Column mutation path: `addons/local_agents/simulation/controller/SimulationVoxelTerrainMutator.gd` (`stamp_default_target_wall`, `_apply_column_surface_delta`).

- Canonical material profiles: `addons/local_agents/configuration/parameters/simulation/MaterialProfileTableResource.gd` (`rock`, `soil`, `water`, `ice`, `metal`, `wood`, `unknown`).
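Canonical profile resolution can be pictured as a small alias lookup. The Python sketch below is illustrative only: the seven profile names and the `stone`/`gravel` to `rock` aliases come from this README, while the function name `canonical_profile`, the case-insensitive matching, and the choice to map unrecognised keys to `unknown` are assumptions rather than documented behavior.

```python
# Profile names listed for MaterialProfileTableResource in this README.
CANONICAL_PROFILES = ("rock", "soil", "water", "ice", "metal", "wood", "unknown")

# Only these two aliases are documented; a real table may hold more.
ALIASES = {"stone": "rock", "gravel": "rock"}


def canonical_profile(key: str) -> str:
    """Resolve a material key to one canonical profile name (hypothetical helper)."""
    normalized = key.strip().lower()          # assumption: matching ignores case
    normalized = ALIASES.get(normalized, normalized)
    # Assumption: anything unrecognised resolves to the "unknown" profile.
    return normalized if normalized in CANONICAL_PROFILES else "unknown"


for raw in ("stone", "gravel", "rock", "Wood", "lava"):
    print(raw, "->", canonical_profile(raw))
```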

Profile resources (defaults + wiring):

- `TargetWallProfileResource` (`addons/local_agents/configuration/parameters/simulation/TargetWallProfileResource.gd`)

  - `wall_height_levels=6`
  - `column_extra_levels=4`
  - `column_span_interval=3`
  - `material_profile_key="rock"`
  - `destructible_tag="target_wall"`
  - `brittleness=1.0`

- `FpsLauncherProfileResource` (`addons/local_agents/configuration/parameters/simulation/FpsLauncherProfileResource.gd`)

  - `launch_speed=60.0`
  - `launch_mass=0.2`
  - `projectile_radius=0.07`
  - `projectile_ttl_seconds=4.0`
  - `launch_energy_scale=1.0`

- Wiring

  - `WorldSimulation` (`addons/local_agents/scenes/simulation/controllers/WorldSimulation.gd`) exposes `target_wall_profile_override` and `fps_launcher_profile_override`; in `_ready()` it applies the target-wall override via `simulation_controller.set_target_wall_profile(...)` and stamps via `stamp_default_voxel_target_wall(...)`.

  - `WorldSimulation` configures `FpsLauncherController` with `configure(world_camera, self, fps_launcher_profile_override)`.

  - `FpsLauncherController` (`addons/local_agents/scenes/simulation/controllers/world/FpsLauncherController.gd`) maps the profile into live launcher values in `_apply_profile_resource(...)`.
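The default-plus-override pattern described in the wiring notes can be sketched with plain data classes. This is a Python illustration under stated assumptions, not the add-on's resource code: the field names and default values are copied from the profile lists above, whereas the class names `TargetWallProfile` and `FpsLauncherProfile` and the helper `resolve_profile` are hypothetical.

```python
from dataclasses import dataclass, replace
from typing import Optional


@dataclass(frozen=True)
class TargetWallProfile:
    wall_height_levels: int = 6
    column_extra_levels: int = 4
    column_span_interval: int = 3
    material_profile_key: str = "rock"
    destructible_tag: str = "target_wall"
    brittleness: float = 1.0


@dataclass(frozen=True)
class FpsLauncherProfile:
    launch_speed: float = 60.0
    launch_mass: float = 0.2
    projectile_radius: float = 0.07
    projectile_ttl_seconds: float = 4.0
    launch_energy_scale: float = 1.0


def resolve_profile(override: Optional[object], default_type: type):
    """Use the override when one is assigned, otherwise build the defaults."""
    return override if override is not None else default_type()


# No override assigned: defaults apply.
wall = resolve_profile(None, TargetWallProfile)

# An override that changes a single field and keeps every other default.
fast_launcher = replace(FpsLauncherProfile(), launch_speed=90.0)
launcher = resolve_profile(fast_launcher, FpsLauncherProfile)

print(wall)
print(launcher)
```

Keeping the profiles immutable means a profile switch is a swap of one value object, which matches the README's description of profile changes as data changes rather than runtime-stack swaps.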

`godot --path . --editor`

Project is configured to launch `VoxelWorldDemo` as the main scene.

Headless smoke boot:

`godot --headless --no-window --path . addons/local_agents/scenes/simulation/PlantRabbitField.tscn --quit`

World generation demo:

`godot --path . addons/local_agents/scenes/demos/VoxelWorldDemo.tscn`

Core harness:

`godot --headless --no-window -s addons/local_agents/tests/run_all_tests.gd --skip-heavy`

Fast local harness (reduced core set, skips runtime-heavy by default):

`godot --headless --no-window -s addons/local_agents/tests/run_all_tests.gd --fast --skip-heavy`

Bounded runtime-heavy harness (each heavy test runs in its own process with per-test timeout):

`godot --headless --no-window -s addons/local_agents/tests/run_runtime_tests_bounded.gd`

Run one `test_*.gd` module via the canonical helper (default timeout is 120 seconds):

`scripts/run_single_test.sh test_native_voxel_op_contracts.gd`

`scripts/run_single_test.sh test_native_voxel_op_contracts.gd --timeout=180`

Equivalent direct harness invocation (when needed):

`godot --headless --no-window -s addons/local_agents/tests/run_single_test.gd -- --test=res://addons/local_agents/tests/test_native_voxel_op_contracts.gd --timeout=120`

Banned direct invocation (do not run test modules as SceneTree scripts):

`godot --headless --no-window -s addons/local_agents/tests/test_native_voxel_op_contracts.gd`

This is enforced in automation via `scripts/check_no_direct_refcounted_invocation.sh` (invoked by `scripts/check_max_file_length.sh`).

CI timeout policy for deterministic replay/runtime shards:

- Default shard budget: `120` seconds.

- GPU/mobile-oriented shard budget: `180` seconds.

Optional explicit GPU/mobile run:

`godot --headless --no-window -s addons/local_agents/tests/run_runtime_tests_bounded.gd -- --use-gpu --timeout-sec=180`

Run a subset with `--tests` (comma-separated, full path or filename):

`godot --headless --no-window -s addons/local_agents/tests/run_runtime_tests_bounded.gd -- --timeout-sec=120 --tests=test_simulation_villager_cognition.gd,test_agent_runtime_heavy.gd`

Useful runtime test flags:

- `--workers=<N>`: run bounded runtime tests in parallel processes.

- `--fast`: select a reduced runtime-heavy subset.

- `--use-gpu --gpu-layers=<N>`: opt into GPU layer offload for runtime tests.

- `--context-size=<N> --max-tokens=<N>`: override runtime model load context and token limits in heavy tests.
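For readers scripting around the harness, the flags that follow the `--` separator can be modelled roughly as below. This Python sketch only mirrors the flag names shown in this README; the function `parse_runtime_flags`, the defaults other than the 120-second budget, and the strict rejection of unknown flags are assumptions and may not match how `run_runtime_tests_bounded.gd` actually parses its arguments.

```python
def parse_runtime_flags(argv):
    """Parse harness flags that appear after the `--` separator (hypothetical helper)."""
    user_args = argv[argv.index("--") + 1:] if "--" in argv else []
    opts = {
        "timeout_sec": 120,      # default shard budget stated in the README
        "tests": [],             # empty list stands for "run everything" (assumption)
        "workers": 1,            # assumed default
        "fast": False,
        "use_gpu": False,
        "gpu_layers": None,
        "context_size": None,
        "max_tokens": None,
    }
    int_flags = {
        "--timeout-sec": "timeout_sec",
        "--workers": "workers",
        "--gpu-layers": "gpu_layers",
        "--context-size": "context_size",
        "--max-tokens": "max_tokens",
    }
    bool_flags = {"--fast": "fast", "--use-gpu": "use_gpu"}

    for arg in user_args:
        name, _, value = arg.partition("=")
        if name in int_flags:
            opts[int_flags[name]] = int(value)
        elif name in bool_flags:
            opts[bool_flags[name]] = True
        elif name == "--tests":
            # Comma-separated list of full paths or bare filenames.
            opts["tests"] = [t.strip() for t in value.split(",") if t.strip()]
        else:
            raise ValueError(f"unknown runtime test flag: {name}")
    return opts


example = parse_runtime_flags([
    "godot", "--headless", "-s", "run_runtime_tests_bounded.gd", "--",
    "--timeout-sec=180", "--use-gpu",
    "--tests=test_simulation_villager_cognition.gd,test_agent_runtime_heavy.gd",
])
print(example)
```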

CPU vs GPU voxel benchmark:

CPU-only simulation pipeline timing:

`godot --headless --no-window -s addons/local_agents/tests/benchmark_voxel_pipeline.gd -- --mode=cpu --iterations=3 --ticks=96 --width=64 --height=64 --world-height=40`

GPU render-path timing (run with rendering, not headless):

`godot --path . -s addons/local_agents/tests/benchmark_voxel_pipeline.gd -- --mode=gpu --iterations=3 --gpu-frames=120 --width=64 --height=64 --world-height=40`

Notes:

- Current terrain noise generation is CPU-side; GPU benchmark covers shader/render upload/update loops.

- Compare `cpu.mean_ms` vs `gpu.total.mean_ms` and `gpu.avg_frame.mean_ms` from JSON output.
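A comparison of the two benchmark runs might be scripted along these lines. The Python sketch assumes the dotted names map onto nested JSON objects and that each run prints one JSON document; neither is confirmed by this README, and the sample payloads use made-up placeholder numbers purely to exercise the code.

```python
import json


def pick(report: dict, dotted: str) -> float:
    """Follow a dotted path such as "gpu.total.mean_ms" through nested objects."""
    node = report
    for part in dotted.split("."):
        node = node[part]
    return float(node)


def compare(cpu_json: str, gpu_json: str) -> dict:
    """Collect the three metrics named in the notes plus a CPU/GPU ratio."""
    cpu = json.loads(cpu_json)
    gpu = json.loads(gpu_json)
    cpu_mean = pick(cpu, "cpu.mean_ms")
    gpu_total = pick(gpu, "gpu.total.mean_ms")
    return {
        "cpu.mean_ms": cpu_mean,
        "gpu.total.mean_ms": gpu_total,
        "gpu.avg_frame.mean_ms": pick(gpu, "gpu.avg_frame.mean_ms"),
        "cpu_over_gpu_total": cpu_mean / gpu_total,
    }


# Placeholder payloads (NOT real measurements) with the assumed nesting.
cpu_run = '{"cpu": {"mean_ms": 200.0}}'
gpu_run = '{"gpu": {"total": {"mean_ms": 100.0}, "avg_frame": {"mean_ms": 0.8}}}'
print(compare(cpu_run, gpu_run))
```

Since the CPU mode times simulation ticks and the GPU mode times render frames, the ratio is only a coarse indicator and is best read alongside the per-frame figure.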

Targeted deterministic checks include:

- `addons/local_agents/tests/test_smell_field_system.gd`

- `addons/local_agents/tests/test_wind_field_system.gd`

- Runtime is intentionally scene-first and resource-driven.

- Prefer voxel-native simulation/collision/destruction as the default implementation path.

- Use `RigidBody3D` only as a minimal, exception-based choice.

- Required systems should fail fast rather than silently fall back.

- `ARCHITECTURE_PLAN.md` tracks breaking changes and migration status.
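The fail-fast rule can be illustrated with a tiny dispatcher that returns an explicit error instead of quietly choosing another path. This Python sketch is hypothetical: the four legacy stage names and the `unsupported_legacy_stage` code appear in this README, while the function `run_transform`, the result shape, and the `gpu_required` code are invented for the example.

```python
LEGACY_STAGES = frozenset({"weather", "hydrology", "erosion", "solar"})


def run_transform(stage: str, gpu_available: bool) -> dict:
    """Reject unsupported requests up front rather than falling back silently."""
    if stage in LEGACY_STAGES:
        return {"ok": False, "error": "unsupported_legacy_stage", "stage": stage}
    if not gpu_available:
        # "gpu_required" is a hypothetical error code; the README only states
        # that no CPU-success fallback path exists.
        return {"ok": False, "error": "gpu_required", "stage": stage}
    return {"ok": True, "stage": stage}


print(run_transform("hydrology", gpu_available=True))
print(run_transform("transport", gpu_available=False))
print(run_transform("transport", gpu_available=True))
```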

For Tasks:

Click tags to check more tools for each tasksFor Jobs:

Alternative AI tools for local-agents

Similar Open Source Tools

local-agents

Local Agents is a Godot project focusing on a deterministic 3D simulation with ecology, mammals, and chemical smell fields. It includes features like edible plants, rabbits, smell behavior, wind simulation, and camera controls. The project uses shared voxel infrastructure for simulation and chemistry-driven smell modeling. It also features world generation, runtime simulation, rendering with GPU shaders, and integrated demo controls. The project is designed for scene-first and resource-driven runtime, preferring voxel-native simulation over RigidBody3D.

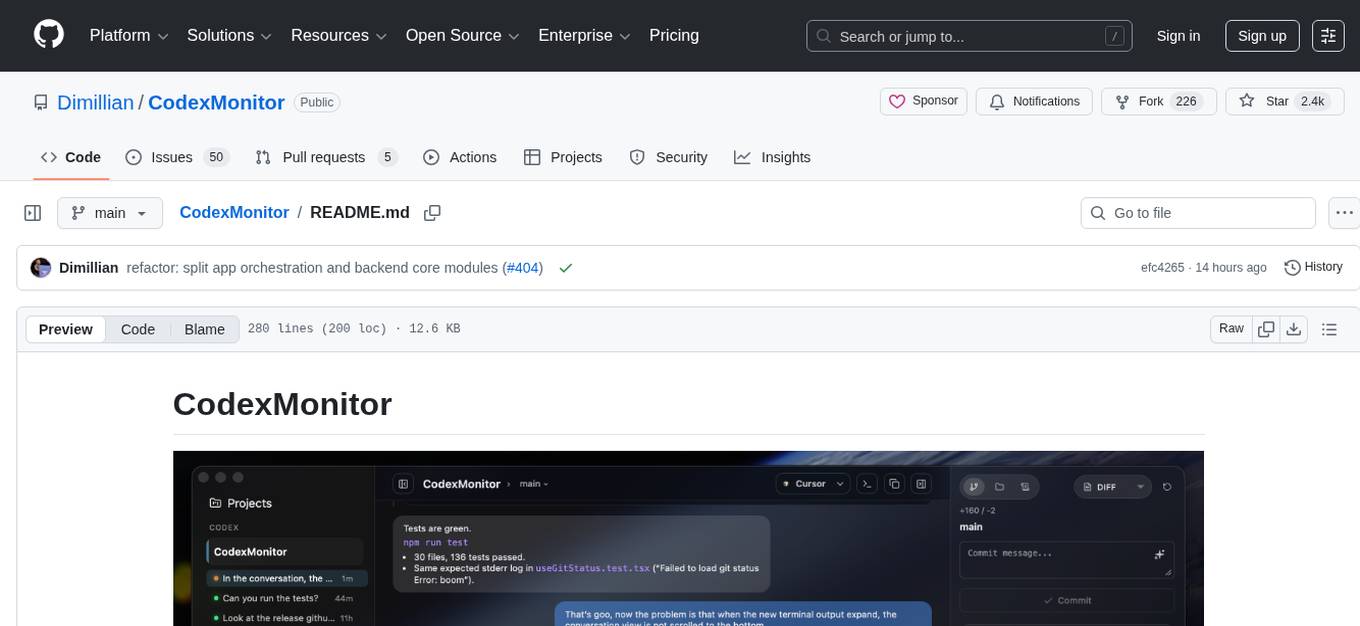

CodexMonitor

CodexMonitor is a Tauri app designed for managing multiple Codex agents in local workspaces. It offers features such as workspace and thread management, composer and agent controls, Git and GitHub integration, file and prompt handling, as well as a user-friendly UI and experience. The tool requires Node.js, Rust toolchain, CMake, LLVM/Clang, Codex CLI, Git CLI, and optionally GitHub CLI. It supports iOS with Tailscale setup, and provides instructions for iOS support, prerequisites, simulator usage, USB device deployment, and release builds. The project structure includes frontend and backend components, with persistent data storage, settings, and feature configurations. Tauri IPC surface enables various functionalities like settings management, workspace operations, thread handling, Git/GitHub interactions, prompts management, terminal/dictation/notifications, and remote backend helpers.

agent-device

CLI tool for controlling iOS and Android devices for AI agents, with core commands like open, back, home, press, and more. It supports minimal dependencies, TypeScript execution on Node 22+, and is in early development. The tool allows for automation flows, session management, semantic finding, assertions, replay updates, and settings helpers for simulators. It also includes backends for iOS snapshots, app resolution, iOS-specific notes, testing, and building. Contributions are welcome, and the project is maintained by Callstack, a group of React and React Native enthusiasts.

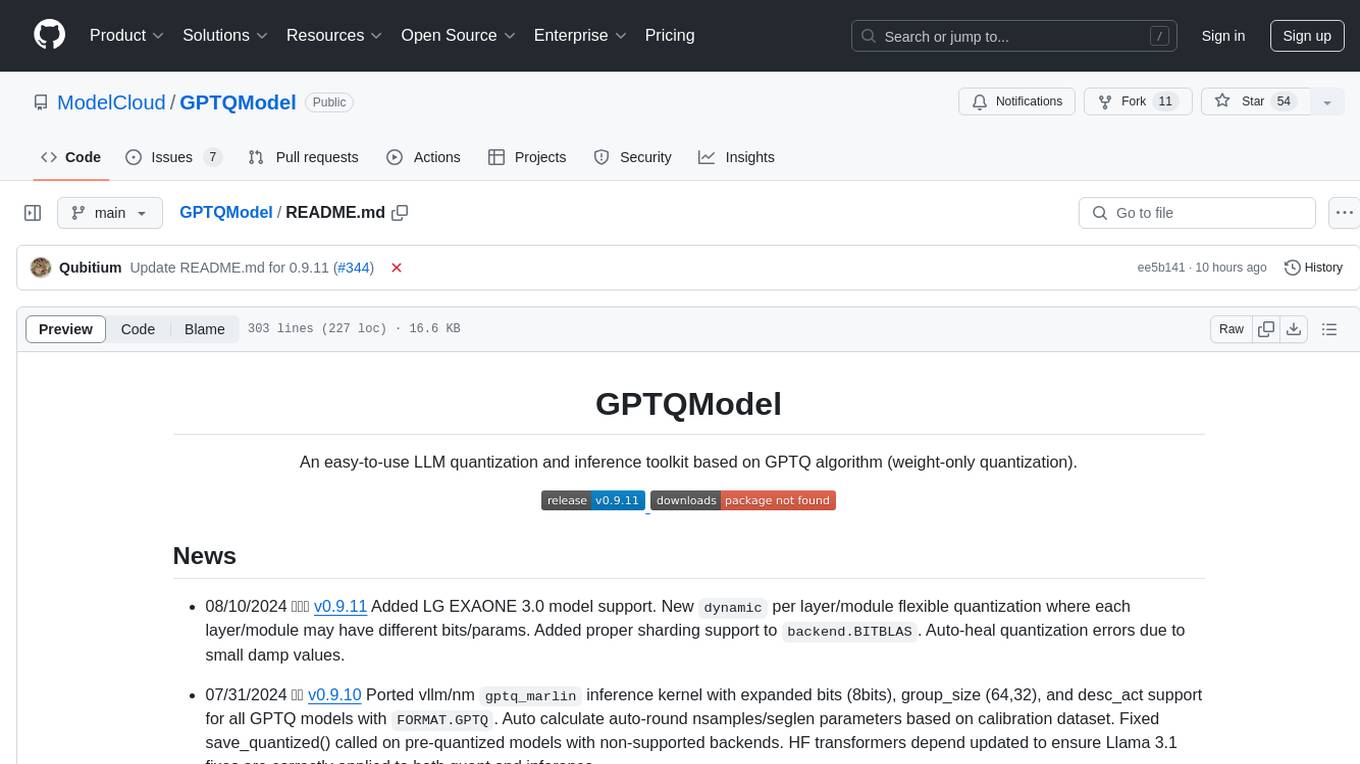

GPTQModel

GPTQModel is an easy-to-use LLM quantization and inference toolkit based on the GPTQ algorithm. It provides support for weight-only quantization and offers features such as dynamic per layer/module flexible quantization, sharding support, and auto-heal quantization errors. The toolkit aims to ensure inference compatibility with HF Transformers, vLLM, and SGLang. It offers various model supports, faster quant inference, better quality quants, and security features like hash check of model weights. GPTQModel also focuses on faster quantization, improved quant quality as measured by PPL, and backports bug fixes from AutoGPTQ.

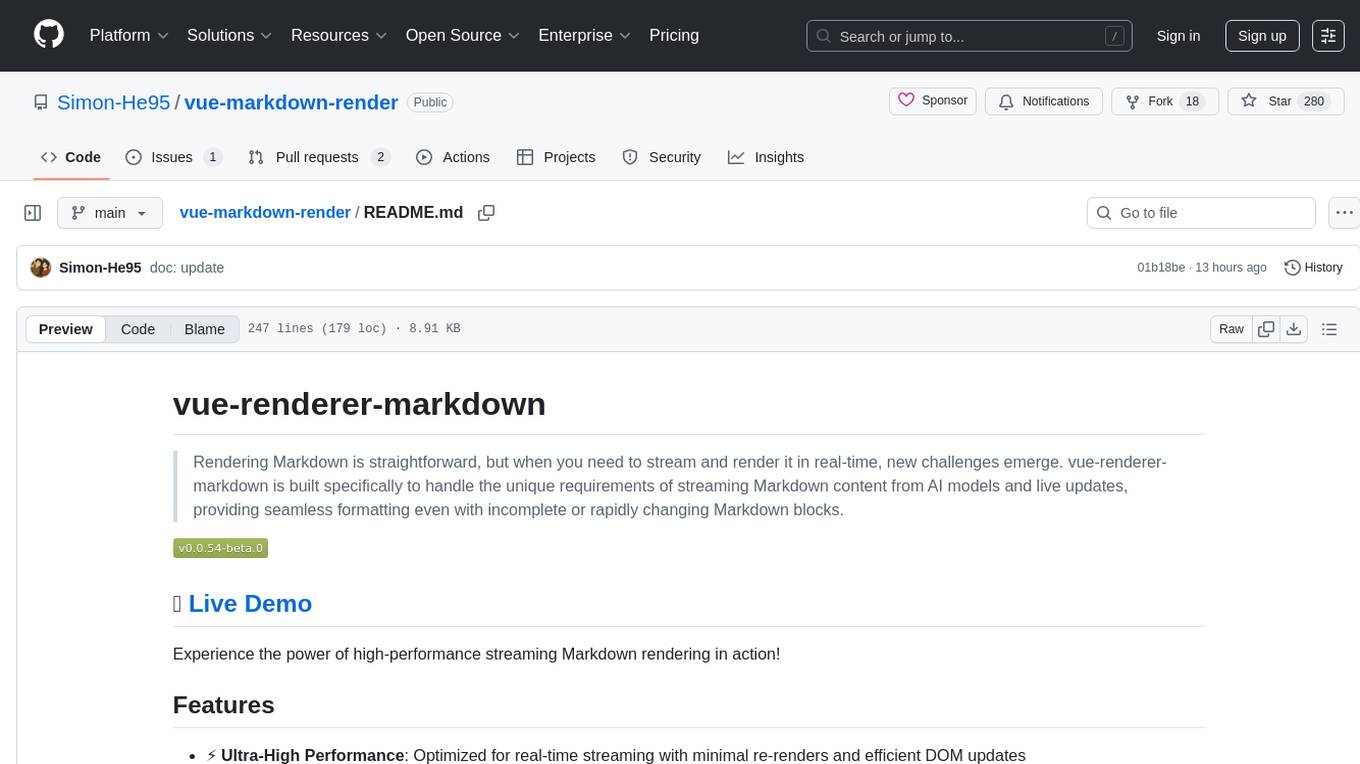

vue-markdown-render

vue-renderer-markdown is a high-performance tool designed for streaming and rendering Markdown content in real-time. It is optimized for handling incomplete or rapidly changing Markdown blocks, making it ideal for scenarios like AI model responses, live content updates, and real-time Markdown rendering. The tool offers features such as ultra-high performance, streaming-first design, Monaco integration, progressive Mermaid rendering, custom components integration, complete Markdown support, real-time updates, TypeScript support, and zero configuration setup. It solves challenges like incomplete syntax blocks, rapid content changes, cursor positioning complexities, and graceful handling of partial tokens with a streaming-optimized architecture.

starcoder2-self-align

StarCoder2-Instruct is an open-source pipeline that introduces StarCoder2-15B-Instruct-v0.1, a self-aligned code Large Language Model (LLM) trained with a fully permissive and transparent pipeline. It generates instruction-response pairs to fine-tune StarCoder-15B without human annotations or data from proprietary LLMs. The tool is primarily finetuned for Python code generation tasks that can be verified through execution, with potential biases and limitations. Users can provide response prefixes or one-shot examples to guide the model's output. The model may have limitations with other programming languages and out-of-domain coding tasks.

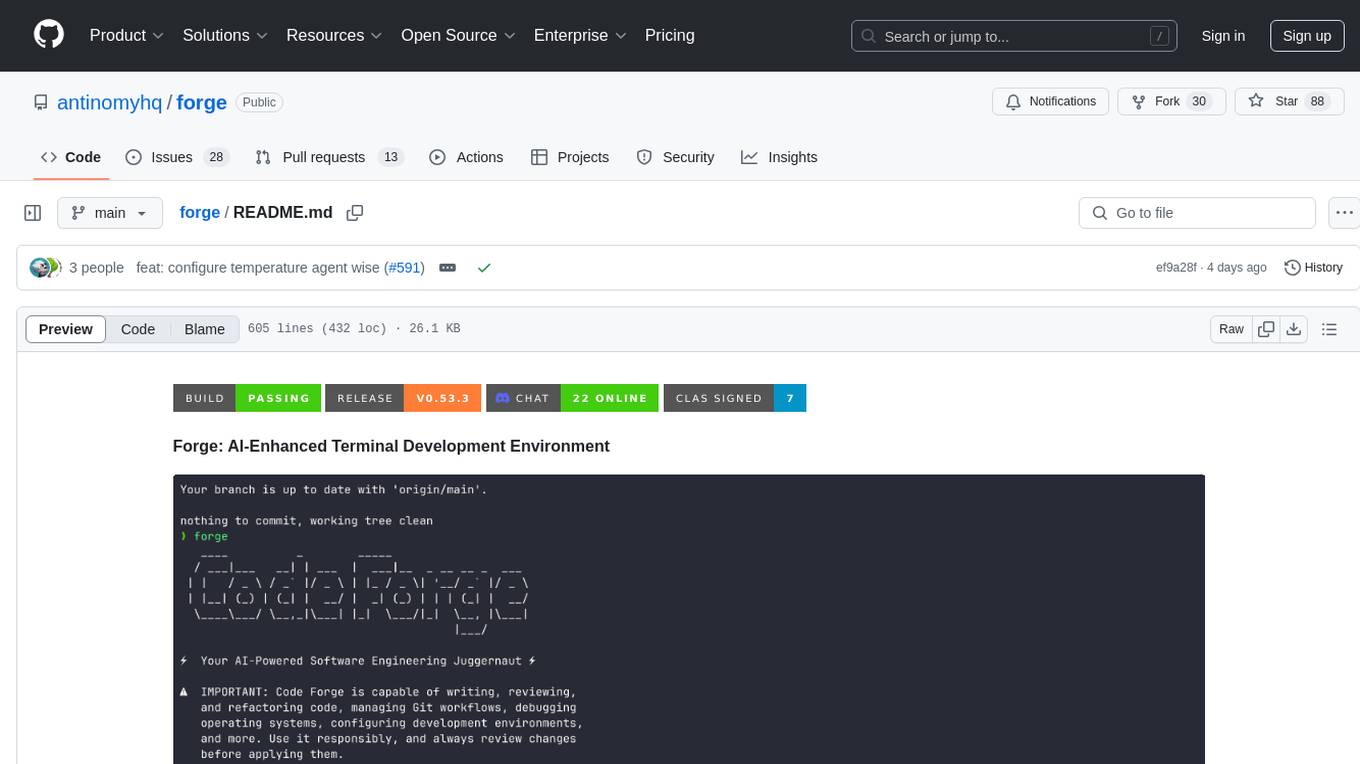

forge

Forge is a powerful open-source tool for building modern web applications. It provides a simple and intuitive interface for developers to quickly scaffold and deploy projects. With Forge, you can easily create custom components, manage dependencies, and streamline your development workflow. Whether you are a beginner or an experienced developer, Forge offers a flexible and efficient solution for your web development needs.

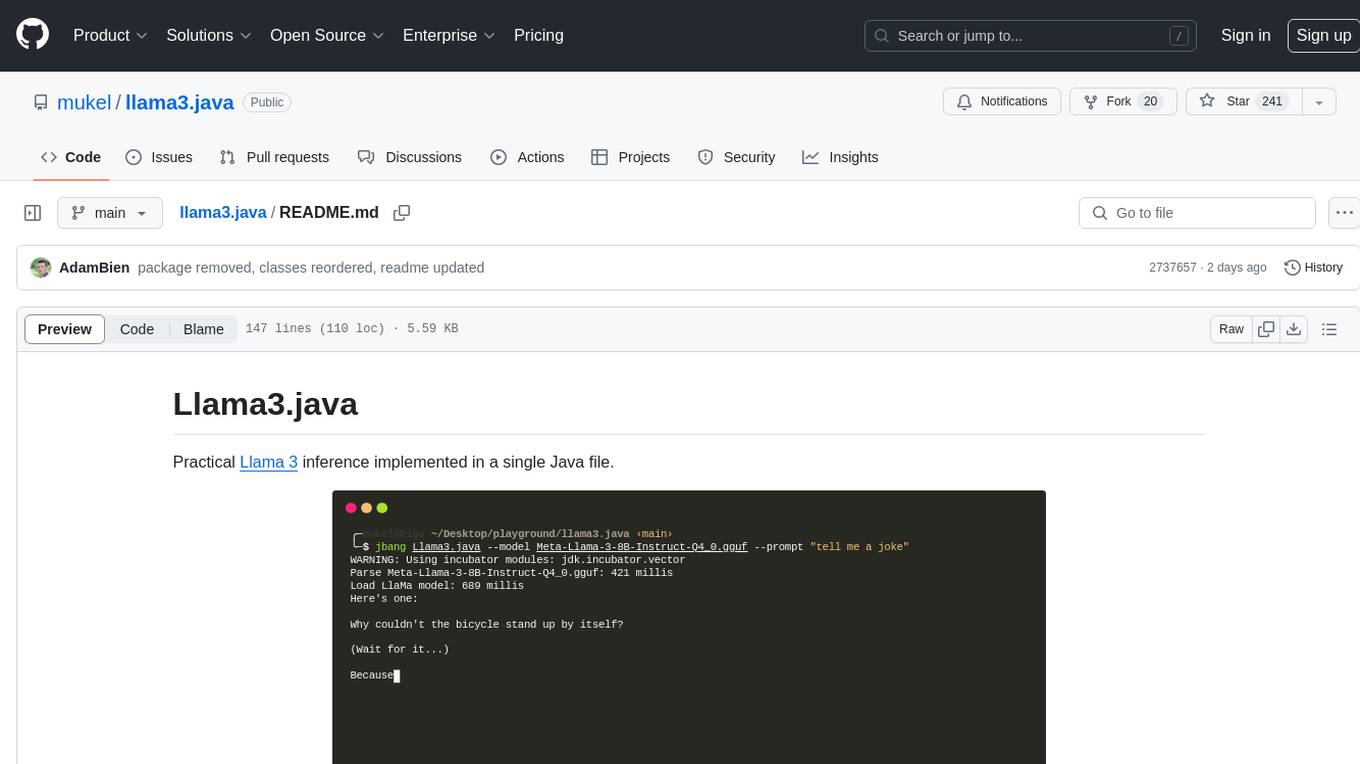

llama3.java

Llama3.java is a practical Llama 3 inference tool implemented in a single Java file. It serves as the successor of llama2.java and is designed for testing and tuning compiler optimizations and features on the JVM, especially for the Graal compiler. The tool features a GGUF format parser, Llama 3 tokenizer, Grouped-Query Attention inference, support for Q8_0 and Q4_0 quantizations, fast matrix-vector multiplication routines using Java's Vector API, and a simple CLI with 'chat' and 'instruct' modes. Users can download quantized .gguf files from huggingface.co for model usage and can also manually quantize to pure 'Q4_0'. The tool requires Java 21+ and supports running from source or building a JAR file for execution. Performance benchmarks show varying tokens/s rates for different models and implementations on different hardware setups.

listen

Listen is a Solana Swiss-Knife toolkit for algorithmic trading, offering real-time transaction monitoring, multi-DEX swap execution, fast transactions with Jito MEV bundles, price tracking, token management utilities, and performance monitoring. It includes tools for grabbing data from unofficial APIs and works with the $arc rig framework for AI Agents to interact with the Solana blockchain. The repository provides miscellaneous tools for analysis and data retrieval, with the core functionality in the `src` directory.

litserve

LitServe is a high-throughput serving engine for deploying AI models at scale. It generates an API endpoint for a model, handles batching, streaming, autoscaling across CPU/GPUs, and more. Built for enterprise scale, it supports every framework like PyTorch, JAX, Tensorflow, and more. LitServe is designed to let users focus on model performance, not the serving boilerplate. It is like PyTorch Lightning for model serving but with broader framework support and scalability.

chatgpt-subtitle-translator

This tool utilizes the OpenAI ChatGPT API to translate text, with a focus on line-based translation, particularly for SRT subtitles. It optimizes token usage by removing SRT overhead and grouping text into batches, allowing for arbitrary length translations without excessive token consumption while maintaining a one-to-one match between line input and output.

clawvault

ClawVault is a structured memory system designed for AI agents and operators. It utilizes typed markdown memory, graph-aware context, task/project primitives, Obsidian views, and OpenClaw hook integration. The tool is local-first and markdown-first, built to support long-running autonomous work. It requires Node.js 18+ and the 'qmd' tool to be installed and available on the PATH. Users can create and initialize a vault, set up Obsidian integration, and verify OpenClaw compatibility. The tool offers various CLI commands for memory management, context handling, resilience, execution primitives, networking, and Obsidian integration. Additionally, ClawVault supports Tailscale and WebDAV for content sync and mobile workflows. Troubleshooting steps are provided for common issues, and the tool is licensed under MIT.

ruler

Ruler is a tool designed to centralize AI coding assistant instructions, providing a single source of truth for managing instructions across multiple AI coding tools. It helps in avoiding inconsistent guidance, duplicated effort, context drift, onboarding friction, and complex project structures by automatically distributing instructions to the right configuration files. With support for nested rule loading, Ruler can handle complex project structures with context-specific instructions for different components. It offers features like centralised rule management, nested rule loading, automatic distribution, targeted agent configuration, MCP server propagation, .gitignore automation, and a simple CLI for easy configuration management.

RepairAgent

RepairAgent is an autonomous LLM-based agent for automated program repair targeting the Defects4J benchmark. It uses an LLM-driven loop to localize, analyze, and fix Java bugs. The tool requires Docker, VS Code with Dev Containers extension, OpenAI API key, disk space of ~40 GB, and internet access. Users can get started with RepairAgent using either VS Code Dev Container or Docker Image. Running RepairAgent involves checking out the buggy project version, autonomous bug analysis, fix candidate generation, and testing against the project's test suite. Users can configure hyperparameters for budget control, repetition handling, commands limit, and external fix strategy. The tool provides output structure, experiment overview, individual analysis scripts, and data on fixed bugs from the Defects4J dataset.

ahnlich

Ahnlich is a tool that provides multiple components for storing and searching similar vectors using linear or non-linear similarity algorithms. It includes 'ahnlich-db' for in-memory vector key value store, 'ahnlich-ai' for AI proxy communication, 'ahnlich-client-rs' for Rust client, and 'ahnlich-client-py' for Python client. The tool is not production-ready yet and is still in testing phase, allowing AI/ML engineers to issue queries using raw input such as images/text and features off-the-shelf models for indexing and querying.

agent-deck

Agent Deck is a mission control tool for managing multiple AI coding agents in one terminal. It provides complete visibility of running, waiting, or idle agents, allows quick switching between sessions, and offers features like forking sessions, MCP manager for server attachment, skills manager for Claude skills, MCP socket pooling, search functionality, notification bar, git worktrees for multiple agents working on the same repo, and conductors for monitoring and orchestrating sessions. It supports various AI tools like Claude Code, Gemini CLI, OpenCode, Codex, Cursor, and custom tools. Agent Deck is designed to enhance AI session management beyond what tmux offers, with smart status detection, session forking, MCP management, global search, and organized groups.

For similar tasks

local-agents

Local Agents is a Godot project focusing on a deterministic 3D simulation with ecology, mammals, and chemical smell fields. It includes features like edible plants, rabbits, smell behavior, wind simulation, and camera controls. The project uses shared voxel infrastructure for simulation and chemistry-driven smell modeling. It also features world generation, runtime simulation, rendering with GPU shaders, and integrated demo controls. The project is designed for scene-first and resource-driven runtime, preferring voxel-native simulation over RigidBody3D.

For similar jobs

Awesome-AIGC-3D

Awesome-AIGC-3D is a curated list of awesome AIGC 3D papers, inspired by awesome-NeRF. It aims to provide a comprehensive overview of the state-of-the-art in AIGC 3D, including papers on text-to-3D generation, 3D scene generation, human avatar generation, and dynamic 3D generation. The repository also includes a list of benchmarks and datasets, talks, companies, and implementations related to AIGC 3D. The description is less than 400 words and provides a concise overview of the repository's content and purpose.

LayaAir

LayaAir engine, under the Layabox brand, is a 3D engine that supports full-platform publishing. It can be applied in various fields such as games, education, advertising, marketing, digital twins, metaverse, AR guides, VR scenes, architectural design, industrial design, etc.

ComfyUI-BlenderAI-node

ComfyUI-BlenderAI-node is an addon for Blender that allows users to convert ComfyUI nodes into Blender nodes seamlessly. It offers features such as converting nodes, editing launch arguments, drawing masks with Grease pencil, and more. Users can queue batch processing, use node tree presets, and model preview images. The addon enables users to input or replace 3D models in Blender and output controlnet images using composite. It provides a workflow showcase with presets for camera input, AI-generated mesh import, composite depth channel, character bone editing, and more.

Anim

Anim v0.1.0 is an animation tool that allows users to convert videos to animations using mixamorig characters. It features FK animation editing, object selection, embedded Python support (only on Windows), and the ability to export to glTF and FBX formats. Users can also utilize Mediapipe to create animations. The tool is designed to assist users in creating animations with ease and flexibility.

anthrax-ai

AnthraxAI is a Vulkan-based game engine that allows users to create and develop 3D games. The engine provides features such as scene selection, camera movement, object manipulation, debugging tools, audio playback, and real-time shader code updates. Users can build and configure the project using CMake and compile shaders using the glslc compiler. The engine supports building on both Linux and Windows platforms, with specific dependencies for each. Visual Studio Code integration is available for building and debugging the project, with instructions provided in the readme for setting up the workspace and required extensions.

Facial-Data-Extractor

Facial Data Extractor is a software designed to extract facial data from images using AI, specifically to assist in character customization for Illusion series games. Currently, it only supports AI Shoujo and Honey Select2. Users can open images, select character card templates, extract facial data, and apply it to character cards in the game. The tool provides measurements for various facial features and allows for some customization, although perfect replication of faces may require manual adjustments.

VisionDepth3D

VisionDepth3D is an all-in-one 3D suite for creators, combining AI depth and custom stereo logic for cinema in VR. The suite includes features like real-time 3D stereo composer with CUDA + PyTorch acceleration, AI-powered depth estimation supporting 25+ models, AI upscaling & interpolation, depth blender for blending depth maps, audio to video sync, smart GUI workflow, and various output formats & aspect ratios. The tool is production-ready, offering advanced parallax controls, streamlined export for cinema, VR, or streaming, and real-time preview overlays.

local-agents

Local Agents is a Godot project focusing on a deterministic 3D simulation with ecology, mammals, and chemical smell fields. It includes features like edible plants, rabbits, smell behavior, wind simulation, and camera controls. The project uses shared voxel infrastructure for simulation and chemistry-driven smell modeling. It also features world generation, runtime simulation, rendering with GPU shaders, and integrated demo controls. The project is designed for scene-first and resource-driven runtime, preferring voxel-native simulation over RigidBody3D.