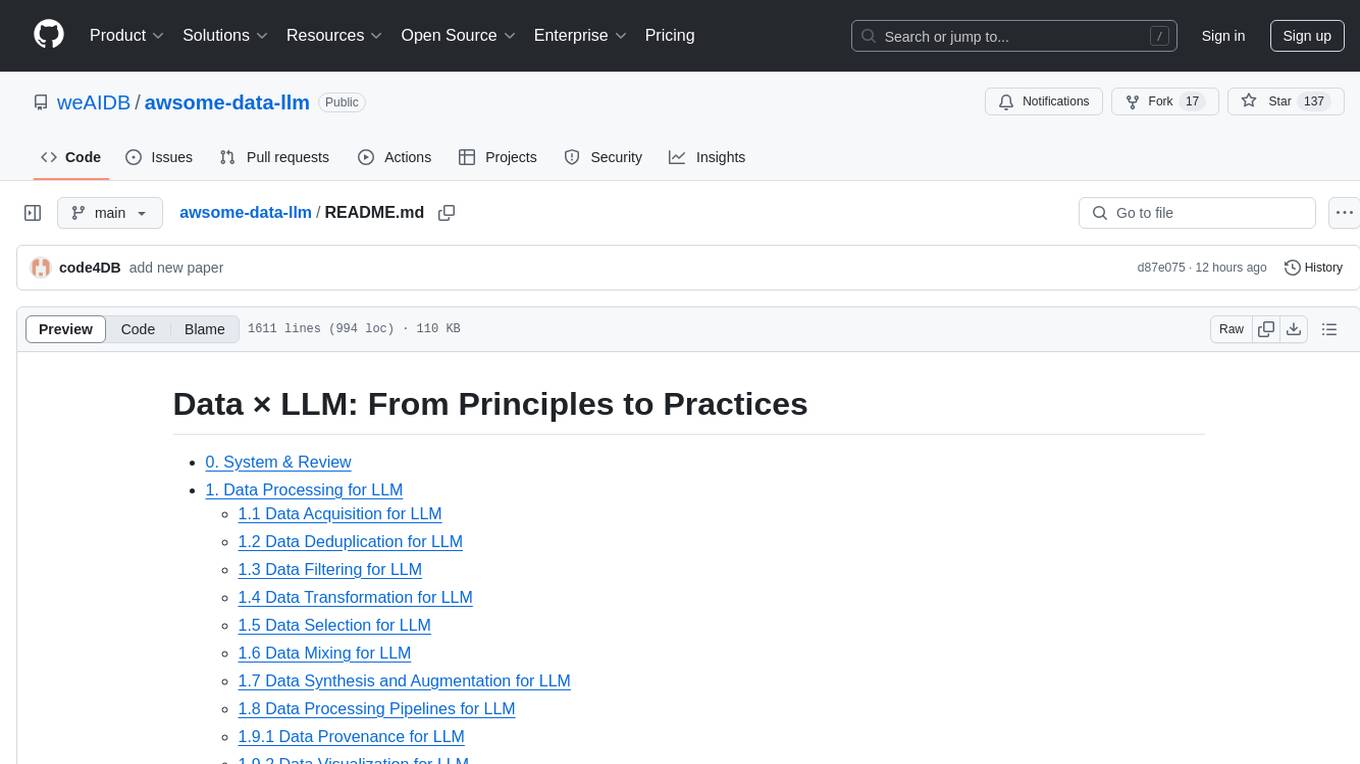

llm-hallucination-survey

A reading list on hallucination in LLMs. Check out our new survey paper: "Siren’s Song in the AI Ocean: A Survey on Hallucination in Large Language Models"

Hallucination refers to generated content that, while seemingly plausible, deviates from the user input (input-conflicting), previously generated context (context-conflicting), or factual knowledge (fact-conflicting).

😎 We have uploaded a comprehensive survey of the hallucination issue in large language models, which discusses its evaluation, explanation, and mitigation. Check it out!

Siren's Song in the AI Ocean: A Survey on Hallucination in Large Language Models

If you think our survey is helpful, please kindly cite our paper:

    @article{zhang2023hallucination,
      title={Siren's Song in the AI Ocean: A Survey on Hallucination in Large Language Models},
      author={Zhang, Yue and Li, Yafu and Cui, Leyang and Cai, Deng and Liu, Lemao and Fu, Tingchen and Huang, Xinting and Zhao, Enbo and Zhang, Yu and Xu, Chen and Chen, Yulong and Wang, Longyue and Luu, Anh Tuan and Bi, Wei and Shi, Freda and Shi, Shuming},
      journal={arXiv preprint arXiv:2309.01219},
      year={2023}
    }

Input-conflicting hallucination occurs when the model response deviates from the user input, including the task instruction and the task input. It has been widely studied in traditional NLG tasks, such as:

- Machine Translation:

  - Hallucinations in Neural Machine Translation Katherine Lee, Orhan Firat, Ashish Agarwal, Clara Fannjiang, David Sussillo [paper] 2018.9

  - Looking for a Needle in a Haystack: A Comprehensive Study of Hallucinations in Neural Machine Translation Nuno M. Guerreiro, Elena Voita, André F.T. Martins [paper] 2022.8

  - Detecting and Mitigating Hallucinations in Machine Translation: Model Internal Workings Alone Do Well, Sentence Similarity Even Better David Dale, Elena Voita, Loïc Barrault, Marta R. Costa-jussà [paper] 2022.12

  - HalOmi: A Manually Annotated Benchmark for Multilingual Hallucination and Omission Detection in Machine Translation David Dale, Elena Voita, Janice Lam, Prangthip Hansanti, Christophe Ropers, Elahe Kalbassi, Cynthia Gao, Loïc Barrault, Marta R. Costa-jussà [paper] 2023.05

- Data-to-text:

  - Controlling Hallucinations at Word Level in Data-to-Text Generation Clément Rebuffel, Marco Roberti, Laure Soulier, Geoffrey Scoutheeten, Rossella Cancelliere, Patrick Gallinari [paper] 2021.2

  - On Hallucination and Predictive Uncertainty in Conditional Language Generation Yijun Xiao, William Yang Wang [paper] 2021.3

  - Faithful Low-Resource Data-to-Text Generation through Cycle Training Zhuoer Wang, Marcus Collins, Nikhita Vedula, Simone Filice, Shervin Malmasi, Oleg Rokhlenko [paper] 2023.7

- Summarization:

  - On Faithfulness and Factuality in Abstractive Summarization Joshua Maynez, Shashi Narayan, Bernd Bohnet, Ryan McDonald [paper] 2020.5

  - Hallucinated but Factual! Inspecting the Factuality of Hallucinations in Abstractive Summarization Meng Cao, Yue Dong, Jackie Chi Kit Cheung [paper] 2021.9

  - Summarization is (Almost) Dead Xiao Pu, Mingqi Gao, Xiaojun Wan [paper] 2023.9

  - Hallucination Reduction in Long Input Text Summarization Tohida Rehman, Ronit Mandal, Abhishek Agarwal, Debarshi Kumar Sanyal [paper] 2023.9

  - Lighter, yet More Faithful: Investigating Hallucinations in Pruned Large Language Models for Abstractive Summarization George Chrysostomou, Zhixue Zhao, Miles Williams, Nikolaos Aletras [paper] 2023.11

  - TofuEval: Evaluating Hallucinations of LLMs on Topic-Focused Dialogue Summarization Liyan Tang, Igor Shalyminov, Amy Wing-mei Wong, Jon Burnsky, Jake W. Vincent, Yu'an Yang, Siffi Singh, Song Feng, Hwanjun Song, Hang Su, Lijia Sun, Yi Zhang, Saab Mansour, Kathleen McKeown [paper] 2024.02

- Dialogue:

  - Neural Path Hunter: Reducing Hallucination in Dialogue Systems via Path Grounding Nouha Dziri, Andrea Madotto, Osmar Zaiane, Avishek Joey Bose [paper] 2021.4

  - RHO: Reducing Hallucination in Open-domain Dialogues with Knowledge Grounding Ziwei Ji, Zihan Liu, Nayeon Lee, Tiezheng Yu, Bryan Wilie, Min Zeng, Pascale Fung [paper] 2023.7

  - DiaHalu: A Dialogue-level Hallucination Evaluation Benchmark for Large Language Models Kedi Chen, Qin Chen, Jie Zhou, Yishen He, Liang He [paper] 2024.3

- Question Answering:

  - Entity-Based Knowledge Conflicts in Question Answering Shayne Longpre, Kartik Perisetla, Anthony Chen, Nikhil Ramesh, Chris DuBois, Sameer Singh [paper] 2021.9

  - Evaluating Correctness and Faithfulness of Instruction-Following Models for Question Answering Vaibhav Adlakha, Parishad BehnamGhader, Xing Han Lu, Nicholas Meade, Siva Reddy [paper] 2023.7

Context-conflicting hallucination means the generated content is self-contradictory, i.e., it conflicts with previously generated content. Here are some preliminary studies in this direction:

- Knowledge Enhanced Fine-Tuning for Better Handling Unseen Entities in Dialogue Generation Leyang Cui, Yu Wu, Shujie Liu, Yue Zhang [paper] 2021.9

- A Token-level Reference-free Hallucination Detection Benchmark for Free-form Text Generation Tianyu Liu, Yizhe Zhang, Chris Brockett, Yi Mao, Zhifang Sui, Weizhu Chen, Bill Dolan [paper] 2022.5 (not limited to the context-conflicting type)

- Large Language Models Can Be Easily Distracted by Irrelevant Context Freda Shi, Xinyun Chen, Kanishka Misra, Nathan Scales, David Dohan, Ed H. Chi, Nathanael Schärli, Denny Zhou [paper] 2023.2

- HistAlign: Improving Context Dependency in Language Generation by Aligning with History David Wan, Shiyue Zhang, Mohit Bansal [paper] 2023.5

- Self-contradictory Hallucinations of Large Language Models: Evaluation, Detection and Mitigation Niels Mündler, Jingxuan He, Slobodan Jenko, Martin Vechev [paper] 2023.5

Fact-conflicting hallucination means the generated content conflicts with established facts. It is both challenging and important for practical applications of LLMs, so it has been widely studied in recent work.

- TruthfulQA: Measuring How Models Mimic Human Falsehoods Stephanie Lin, Jacob Hilton, Owain Evans [paper] 2022.5

- A Token-level Reference-free Hallucination Detection Benchmark for Free-form Text Generation Tianyu Liu, Yizhe Zhang, Chris Brockett, Yi Mao, Zhifang Sui, Weizhu Chen, Bill Dolan [paper] 2022.5

- A Multitask, Multilingual, Multimodal Evaluation of ChatGPT on Reasoning, Hallucination, and Interactivity Yejin Bang, Samuel Cahyawijaya, Nayeon Lee, Wenliang Dai, Dan Su, Bryan Wilie, Holy Lovenia, Ziwei Ji, Tiezheng Yu, Willy Chung, Quyet V. Do, Yan Xu, Pascale Fung [paper] 2023.2

- HaluEval: A Large-Scale Hallucination Evaluation Benchmark for Large Language Models Junyi Li, Xiaoxue Cheng, Wayne Xin Zhao, Jian-Yun Nie, Ji-Rong Wen [paper] 2023.5

- Automatic Evaluation of Attribution by Large Language Models Xiang Yue, Boshi Wang, Kai Zhang, Ziru Chen, Yu Su, Huan Sun [paper] 2023.5

- Adaptive Chameleon or Stubborn Sloth: Unraveling the Behavior of Large Language Models in Knowledge Clashes Jian Xie, Kai Zhang, Jiangjie Chen, Renze Lou, Yu Su [paper] 2023.5

- LLMs as Factual Reasoners: Insights from Existing Benchmarks and Beyond Philippe Laban, Wojciech Kryściński, Divyansh Agarwal, Alexander R. Fabbri, Caiming Xiong, Shafiq Joty, Chien-Sheng Wu [paper] 2023.5

- Evaluating the Factual Consistency of Large Language Models Through News Summarization Derek Tam, Anisha Mascarenhas, Shiyue Zhang, Sarah Kwan, Mohit Bansal, Colin Raffel [paper] 2023.5

- Methods for Measuring, Updating, and Visualizing Factual Beliefs in Language Models Peter Hase, Mona Diab, Asli Celikyilmaz, Xian Li, Zornitsa Kozareva, Veselin Stoyanov, Mohit Bansal, Srinivasan Iyer [paper] 2023.5

- How Language Model Hallucinations Can Snowball Muru Zhang, Ofir Press, William Merrill, Alisa Liu, Noah A. Smith [paper] 2023.5

- Evaluating Factual Consistency of Texts with Semantic Role Labeling Jing Fan, Dennis Aumiller, Michael Gertz [paper] 2023.5

- FActScore: Fine-grained Atomic Evaluation of Factual Precision in Long Form Text Generation Sewon Min, Kalpesh Krishna, Xinxi Lyu, Mike Lewis, Wen-tau Yih, Pang Wei Koh, Mohit Iyyer, Luke Zettlemoyer, Hannaneh Hajishirzi [paper] 2023.5

- Measuring and Modifying Factual Knowledge in Large Language Models Pouya Pezeshkpour [paper] 2023.6

- KoLA: Carefully Benchmarking World Knowledge of Large Language Models Jifan Yu, Xiaozhi Wang, Shangqing Tu, Shulin Cao, Daniel Zhang-Li, Xin Lv, Hao Peng, Zijun Yao, Xiaohan Zhang, Hanming Li, Chunyang Li, Zheyuan Zhang, Yushi Bai, Yantao Liu, Amy Xin, Nianyi Lin, Kaifeng Yun, Linlu Gong, Jianhui Chen, Zhili Wu, Yunjia Qi, Weikai Li, Yong Guan, Kaisheng Zeng, Ji Qi, Hailong Jin, Jinxin Liu, Yu Gu, Yuan Yao, Ning Ding, Lei Hou, Zhiyuan Liu, Bin Xu, Jie Tang, Juanzi Li [paper] 2023.6

- Generating Benchmarks for Factuality Evaluation of Language Models Dor Muhlgay, Ori Ram, Inbal Magar, Yoav Levine, Nir Ratner, Yonatan Belinkov, Omri Abend, Kevin Leyton-Brown, Amnon Shashua, Yoav Shoham [paper] 2023.7

- Fact-Checking of AI-Generated Reports Razi Mahmood, Ge Wang, Mannudeep Kalra, Pingkun Yan [paper] 2023.7

- Med-HALT: Medical Domain Hallucination Test for Large Language Models Logesh Kumar Umapathi, Ankit Pal, Malaikannan Sankarasubbu [paper] 2023.7

- Large Language Models on Wikipedia-Style Survey Generation: an Evaluation in NLP Concepts Fan Gao, Hang Jiang, Moritz Blum, Jinghui Lu, Yuang Jiang, Irene Li [paper] 2023.8

- ChatGPT Hallucinates when Attributing Answers Guido Zuccon, Bevan Koopman, Razia Shaik [paper] 2023.9

- BAMBOO: A Comprehensive Benchmark for Evaluating Long Text Modeling Capacities of Large Language Models Zican Dong, Tianyi Tang, Junyi Li, Wayne Xin Zhao, Ji-Rong Wen [paper] 2023.9

- KLoB: a Benchmark for Assessing Knowledge Locating Methods in Language Models Yiming Ju, Zheng Zhang [paper] 2023.9

- AutoHall: Automated Hallucination Dataset Generation for Large Language Models Zouying Cao, Yifei Yang, Hai Zhao [paper] 2023.10

- FreshLLMs: Refreshing Large Language Models with Search Engine Augmentation Tu Vu, Mohit Iyyer, Xuezhi Wang, Noah Constant, Jerry Wei, Jason Wei, Chris Tar, Yun-Hsuan Sung, Denny Zhou, Quoc Le, Thang Luong [paper] 2023.10

- Evaluating Hallucinations in Chinese Large Language Models Qinyuan Cheng, Tianxiang Sun, Wenwei Zhang, Siyin Wang, Xiangyang Liu, Mozhi Zhang, Junliang He, Mianqiu Huang, Zhangyue Yin, Kai Chen, Xipeng Qiu [paper] 2023.10

- FELM: Benchmarking Factuality Evaluation of Large Language Models Shiqi Chen, Yiran Zhao, Jinghan Zhang, I-Chun Chern, Siyang Gao, Pengfei Liu, Junxian He [paper] 2023.10

- A New Benchmark and Reverse Validation Method for Passage-level Hallucination Detection Shiping Yang, Renliang Sun, Xiaojun Wan [paper] 2023.10

- Do Large Language Models Know about Facts? Xuming Hu, Junzhe Chen, Xiaochuan Li, Yufei Guo, Lijie Wen, Philip S. Yu, Zhijiang Guo [paper] 2023.10

- Beyond Factuality: A Comprehensive Evaluation of Large Language Models as Knowledge Generators Liang Chen, Yang Deng, Yatao Bian, Zeyu Qin, Bingzhe Wu, Tat-Seng Chua, Kam-Fai Wong [paper] 2023.10

- Unveiling the Siren's Song: Towards Reliable Fact-Conflicting Hallucination Detection Xiang Chen, Duanzheng Song, Honghao Gui, Chengxi Wang, Ningyu Zhang, Fei Huang, Chengfei Lv, Dan Zhang, Huajun Chen [paper] 2023.10

- Cross-Lingual Consistency of Factual Knowledge in Multilingual Language Models Jirui Qi, Raquel Fernández, Arianna Bisazza [paper] 2023.10

- Automatic Hallucination Assessment for Aligned Large Language Models via Transferable Adversarial Attacks Xiaodong Yu, Hao Cheng, Xiaodong Liu, Dan Roth, Jianfeng Gao [paper] 2023.10

- Creating Trustworthy LLMs: Dealing with Hallucinations in Healthcare AI Muhammad Aurangzeb Ahmad, Ilker Yaramis, Taposh Dutta Roy [paper] 2023.11

- How Trustworthy are Open-Source LLMs? An Assessment under Malicious Demonstrations Shows their Vulnerabilities Lingbo Mo, Boshi Wang, Muhao Chen, Huan Sun [paper] 2023.11

- Deficiency of Large Language Models in Finance: An Empirical Examination of Hallucination Haoqiang Kang, Xiao-Yang Liu [paper] 2023.11

- UHGEval: Benchmarking the Hallucination of Chinese Large Language Models via Unconstrained Generation Xun Liang, Shichao Song, Simin Niu, Zhiyu Li, Feiyu Xiong, Bo Tang, Zhaohui Wy, Dawei He, Peng Cheng, Zhonghao Wang, Haiying Deng [paper] 2023.11

- DelucionQA: Detecting Hallucinations in Domain-specific Question Answering Mobashir Sadat, Zhengyu Zhou, Lukas Lange, Jun Araki, Arsalan Gundroo, Bingqing Wang, Rakesh R Menon, Md Rizwan Parvez, Zhe Feng [paper] 2023.12

- Are Large Language Models Good Fact Checkers: A Preliminary Study Han Cao, Lingwei Wei, Mengyang Chen, Wei Zhou, Songlin Hu [paper] 2023.11

- RAGTruth: A Hallucination Corpus for Developing Trustworthy Retrieval-Augmented Language Models Yuanhao Wu, Juno Zhu, Siliang Xu, Kashun Shum, Cheng Niu, Randy Zhong, Juntong Song, Tong Zhang [paper] 2024.01

- Measuring and Reducing LLM Hallucination without Gold-Standard Answers via Expertise-Weighting Jiaheng Wei, Yuanshun Yao, Jean-Francois Ton, Hongyi Guo, Andrew Estornell, Yang Liu [paper] 2024.02

- Multi-FAct: Assessing Multilingual LLMs' Multi-Regional Knowledge using FActScore Sheikh Shafayat, Eunsu Kim, Juhyun Oh, Alice Oh [paper] 2024.02

- Comparing Hallucination Detection Metrics for Multilingual Generation Haoqiang Kang, Terra Blevins, Luke Zettlemoyer [paper] 2024.02

- In Search of Truth: An Interrogation Approach to Hallucination Detection Yakir Yehuda, Itzik Malkiel, Oren Barkan, Jonathan Weill, Royi Ronen, Noam Koenigstein [paper] 2024.03

- HaluEval-Wild: Evaluating Hallucinations of Language Models in the Wild Zhiying Zhu, Zhiqing Sun, Yiming Yang [paper] 2024.03

- Benchmarking Hallucination in Large Language Models based on Unanswerable Math Word Problem Yuhong Sun, Zhangyue Yin, Qipeng Guo, Jiawen Wu, Xipeng Qiu, Hui Zhao [paper] 2024.03

- DEE: Dual-stage Explainable Evaluation Method for Text Generation Shenyu Zhang, Yu Li, Rui Wu, Xiutian Huang, Yongrui Chen, Wenhao Xu, Guilin Qi [paper] 2024.03

- LLMs hallucinate graphs too: a structural perspective Erwan Le Merrer, Gilles Tredan [paper] 2024.09

There is also a line of work that tries to explain hallucination in LLMs.

- How Pre-trained Language Models Capture Factual Knowledge? A Causal-Inspired Analysis Shaobo Li, Xiaoguang Li, Lifeng Shang, Zhenhua Dong, Chengjie Sun, Bingquan Liu, Zhenzhou Ji, Xin Jiang, Qun Liu [paper] 2022.3

- On the Origin of Hallucinations in Conversational Models: Is it the Datasets or the Models? Nouha Dziri, Sivan Milton, Mo Yu, Osmar Zaiane, Siva Reddy [paper] 2022.4

- Towards Tracing Factual Knowledge in Language Models Back to the Training Data Ekin Akyürek, Tolga Bolukbasi, Frederick Liu, Binbin Xiong, Ian Tenney, Jacob Andreas, Kelvin Guu [paper] 2022.5

- Language Models (Mostly) Know What They Know Saurav Kadavath, Tom Conerly, Amanda Askell, Tom Henighan, Dawn Drain, Ethan Perez, Nicholas Schiefer, Zac Hatfield-Dodds, Nova DasSarma, Eli Tran-Johnson, Scott Johnston, Sheer El-Showk, Andy Jones, Nelson Elhage, Tristan Hume, Anna Chen, Yuntao Bai, Sam Bowman, Stanislav Fort, Deep Ganguli, Danny Hernandez, Josh Jacobson, Jackson Kernion, Shauna Kravec, Liane Lovitt, Kamal Ndousse, Catherine Olsson, Sam Ringer, Dario Amodei, Tom Brown, Jack Clark, Nicholas Joseph, Ben Mann, Sam McCandlish, Chris Olah, Jared Kaplan [paper] 2022.7

- Discovering Language Model Behaviors with Model-Written Evaluations Ethan Perez, Sam Ringer, Kamilė Lukošiūtė, Karina Nguyen, Edwin Chen, Scott Heiner, Craig Pettit, Catherine Olsson, Sandipan Kundu, Saurav Kadavath, Andy Jones, Anna Chen, Ben Mann, Brian Israel, Bryan Seethor, Cameron McKinnon, Christopher Olah, Da Yan, Daniela Amodei, Dario Amodei, Dawn Drain, Dustin Li, Eli Tran-Johnson, Guro Khundadze, Jackson Kernion, James Landis, Jamie Kerr, Jared Mueller, Jeeyoon Hyun, Joshua Landau, Kamal Ndousse, Landon Goldberg, Liane Lovitt, Martin Lucas, Michael Sellitto, Miranda Zhang, Neerav Kingsland, Nelson Elhage, Nicholas Joseph, Noemí Mercado, Nova DasSarma, Oliver Rausch, Robin Larson, Sam McCandlish, Scott Johnston, Shauna Kravec, Sheer El Showk, Tamera Lanham, Timothy Telleen-Lawton, Tom Brown, Tom Henighan, Tristan Hume, Yuntao Bai, Zac Hatfield-Dodds, Jack Clark, Samuel R. Bowman, Amanda Askell, Roger Grosse, Danny Hernandez, Deep Ganguli, Evan Hubinger, Nicholas Schiefer, Jared Kaplan [paper] 2022.12

- Why Does ChatGPT Fall Short in Providing Truthful Answers? Shen Zheng, Jie Huang, Kevin Chen-Chuan Chang [paper] 2023.4

- Do Large Language Models Know What They Don't Know? Zhangyue Yin, Qiushi Sun, Qipeng Guo, Jiawen Wu, Xipeng Qiu, Xuanjing Huang [paper] 2023.5

- Sources of Hallucination by Large Language Models on Inference Tasks Nick McKenna, Tianyi Li, Liang Cheng, Mohammad Javad Hosseini, Mark Johnson, Mark Steedman [paper] 2023.5

- Enabling Large Language Models to Generate Text with Citations Tianyu Gao, Howard Yen, Jiatong Yu, Danqi Chen [paper] 2023.5

- Overthinking the Truth: Understanding how Language Models Process False Demonstrations Danny Halawi, Jean-Stanislas Denain, Jacob Steinhardt [paper] 2023.7

- Investigating the Factual Knowledge Boundary of Large Language Models with Retrieval Augmentation Ruiyang Ren, Yuhao Wang, Yingqi Qu, Wayne Xin Zhao, Jing Liu, Hao Tian, Hua Wu, Ji-Rong Wen, Haifeng Wang [paper] 2023.7

- Head-to-Tail: How Knowledgeable are Large Language Models (LLM)? A.K.A. Will LLMs Replace Knowledge Graphs? Kai Sun, Yifan Ethan Xu, Hanwen Zha, Yue Liu, Xin Luna Dong [paper] 2023.8

- Simple synthetic data reduces sycophancy in large language models Jerry Wei, Da Huang, Yifeng Lu, Denny Zhou, Quoc V. Le [paper] 2023.8

- Do PLMs Know and Understand Ontological Knowledge? Weiqi Wu, Chengyue Jiang, Yong Jiang, Pengjun Xie, Kewei Tu [paper] 2023.9

- Exploring the Relationship between LLM Hallucinations and Prompt Linguistic Nuances: Readability, Formality, and Concreteness Vipula Rawte, Prachi Priya, S.M Towhidul Islam Tonmoy, S M Mehedi Zaman, Amit Sheth, Amitava Das [paper] 2023.9

- LLM Lies: Hallucinations are not Bugs, but Features as Adversarial Examples Jia-Yu Yao, Kun-Peng Ning, Zhen-Hui Liu, Mu-Nan Ning, Li Yuan [paper] 2023.10

- Factuality Challenges in the Era of Large Language Models Isabelle Augenstein, Timothy Baldwin, Meeyoung Cha, Tanmoy Chakraborty, Giovanni Luca Ciampaglia, David Corney, Renee DiResta, Emilio Ferrara, Scott Hale, Alon Halevy, Eduard Hovy, Heng Ji, Filippo Menczer, Ruben Miguez, Preslav Nakov, Dietram Scheufele, Shivam Sharma, Giovanni Zagni [paper] 2023.10

- The Troubling Emergence of Hallucination in Large Language Models -- An Extensive Definition, Quantification, and Prescriptive Remediations Vipula Rawte, Swagata Chakraborty, Agnibh Pathak, Anubhav Sarkar, S.M Towhidul Islam Tonmoy, Aman Chadha, Amit P. Sheth, Amitava Das [paper] 2023.10

- The Geometry of Truth: Emergent Linear Structure in Large Language Model Representations of True/False Datasets Samuel Marks, Max Tegmark [paper] 2023.10

- Representation Engineering: A Top-Down Approach to AI Transparency Andy Zou, Long Phan, Sarah Chen, James Campbell, Phillip Guo, Richard Ren, Alexander Pan, Xuwang Yin, Mantas Mazeika, Ann-Kathrin Dombrowski, Shashwat Goel, Nathaniel Li, Michael J. Byun, Zifan Wang, Alex Mallen, Steven Basart, Sanmi Koyejo, Dawn Song, Matt Fredrikson, J. Zico Kolter, Dan Hendrycks [paper] 2023.10

- Beyond Factuality: A Comprehensive Evaluation of Large Language Models as Knowledge Generators Liang Chen, Yang Deng, Yatao Bian, Zeyu Qin, Bingzhe Wu, Tat-Seng Chua, Kam-Fai Wong [paper] 2023.10

- Language Models Hallucinate, but May Excel at Fact Verification Jian Guan, Jesse Dodge, David Wadden, Minlie Huang, Hao Peng [paper] 2023.10

- Large Language Models Help Humans Verify Truthfulness -- Except When They Are Convincingly Wrong Chenglei Si, Navita Goyal, Sherry Tongshuang Wu, Chen Zhao, Shi Feng, Hal Daumé III, Jordan Boyd-Graber [paper] 2023.10

- Insights into Classifying and Mitigating LLMs' Hallucinations Alessandro Bruno, Pier Luigi Mazzeo, Aladine Chetouani, Marouane Tliba, Mohamed Amine Kerkouri [paper] 2023.11

- Deceiving Semantic Shortcuts on Reasoning Chains: How Far Can Models Go without Hallucination? Bangzheng Li, Ben Zhou, Fei Wang, Xingyu Fu, Dan Roth, Muhao Chen [paper] 2023.11

- Prudent Silence or Foolish Babble? Examining Large Language Models' Responses to the Unknown Genglin Liu, Xingyao Wang, Lifan Yuan, Yangyi Chen, Hao Peng [paper] 2023.11

- Calibrated Language Models Must Hallucinate Adam Tauman Kalai, Santosh S. Vempala [paper] 2023.11

- Beyond Surface: Probing LLaMA Across Scales and Layers Nuo Chen, Ning Wu, Shining Liang, Ming Gong, Linjun Shou, Dongmei Zhang, Jia Li [paper] 2023.12

- HALO: An Ontology for Representing Hallucinations in Generative Models Navapat Nananukul, Mayank Kejriwal [paper] 2023.12

- Fine-Tuning or Retrieval? Comparing Knowledge Injection in LLMs Oded Ovadia, Menachem Brief, Moshik Mishaeli, Oren Elisha [paper] 2023.12

- The Dawn After the Dark: An Empirical Study on Factuality Hallucination in Large Language Models Junyi Li, Jie Chen, Ruiyang Ren, Xiaoxue Cheng, Wayne Xin Zhao, Jian-Yun Nie, Ji-Rong Wen [paper] 2024.01

- Hallucination is Inevitable: An Innate Limitation of Large Language Models Ziwei Xu, Sanjay Jain, Mohan Kankanhalli [paper] 2024.01

- Mechanisms of non-factual hallucinations in language models Baolong Bi, Shenghua Liu, Yiwei Wang, Lingrui Mei, Xueqi Cheng [paper] 2024.04

- Is Factuality Decoding a Free Lunch for LLMs? Evaluation on Knowledge Editing Benchmark Baolong Bi, Shenghua Liu, Yiwei Wang, Lingrui Mei, Xueqi Cheng [paper] 2024.04

Numerous recent works try to mitigate hallucination in LLMs. These methods can be applied at different stages of the LLM life cycle.

One main mitigation method during pretraining is (automatically) curating training data. Here are some papers using this method:

- Factuality Enhanced Language Models for Open-Ended Text Generation Nayeon Lee, Wei Ping, Peng Xu, Mostofa Patwary, Pascale Fung, Mohammad Shoeybi, Bryan Catanzaro [paper] 2022.6

- The RefinedWeb Dataset for Falcon LLM: Outperforming Curated Corpora with Web Data, and Web Data Only Guilherme Penedo, Quentin Malartic, Daniel Hesslow, Ruxandra Cojocaru, Alessandro Cappelli, Hamza Alobeidli, Baptiste Pannier, Ebtesam Almazrouei, Julien Launay [paper] 2023.7

- Llama 2: Open Foundation and Fine-Tuned Chat Models Hugo Touvron, Louis Martin, Kevin Stone, Peter Albert, Amjad Almahairi, Yasmine Babaei, Nikolay Bashlykov, Soumya Batra, Prajjwal Bhargava, Shruti Bhosale, Dan Bikel, Lukas Blecher, Cristian Canton Ferrer, Moya Chen, Guillem Cucurull, David Esiobu, Jude Fernandes, Jeremy Fu, Wenyin Fu, Brian Fuller, Cynthia Gao, Vedanuj Goswami, Naman Goyal, Anthony Hartshorn, Saghar Hosseini, Rui Hou, Hakan Inan, Marcin Kardas, Viktor Kerkez, Madian Khabsa, Isabel Kloumann, Artem Korenev, Punit Singh Koura, Marie-Anne Lachaux, Thibaut Lavril, Jenya Lee, Diana Liskovich, Yinghai Lu, Yuning Mao, Xavier Martinet, Todor Mihaylov, Pushkar Mishra, Igor Molybog, Yixin Nie, Andrew Poulton, Jeremy Reizenstein, Rashi Rungta, Kalyan Saladi, Alan Schelten, Ruan Silva, Eric Michael Smith, Ranjan Subramanian, Xiaoqing Ellen Tan, Binh Tang, Ross Taylor, Adina Williams, Jian Xiang Kuan, Puxin Xu, Zheng Yan, Iliyan Zarov, Yuchen Zhang, Angela Fan, Melanie Kambadur, Sharan Narang, Aurelien Rodriguez, Robert Stojnic, Sergey Edunov, Thomas Scialom [paper] 2023.7

- Textbooks Are All You Need II: phi-1.5 technical report Yuanzhi Li, Sébastien Bubeck, Ronen Eldan, Allie Del Giorno, Suriya Gunasekar, Yin Tat Lee [paper] 2023.9

Mitigating hallucination during SFT can involve curating SFT data, such as:

- LIMA: Less Is More for Alignment Chunting Zhou, Pengfei Liu, Puxin Xu, Srini Iyer, Jiao Sun, Yuning Mao, Xuezhe Ma, Avia Efrat, Ping Yu, Lili Yu, Susan Zhang, Gargi Ghosh, Mike Lewis, Luke Zettlemoyer, Omer Levy [paper] 2023.5

- AlpaGasus: Training A Better Alpaca with Fewer Data Lichang Chen, Shiyang Li, Jun Yan, Hai Wang, Kalpa Gunaratna, Vikas Yadav, Zheng Tang, Vijay Srinivasan, Tianyi Zhou, Heng Huang, Hongxia Jin [paper] 2023.7

- Instruction Mining: High-Quality Instruction Data Selection for Large Language Models Yihan Cao, Yanbin Kang, Lichao Sun [paper] 2023.7

- Halo: Estimation and Reduction of Hallucinations in Open-Source Weak Large Language Models Mohamed Elaraby, Mengyin Lu, Jacob Dunn, Xueying Zhang, Yu Wang, Shizhu Liu [paper] 2023.8

- Specialist or Generalist? Instruction Tuning for Specific NLP Tasks Chufan Shi, Yixuan Su, Cheng Yang, Yujiu Yang, Deng Cai [paper] 2023.10

- Fine-tuning Language Models for Factuality Katherine Tian, Eric Mitchell, Huaxiu Yao, Christopher D. Manning, Chelsea Finn [paper] 2023.11

- R-Tuning: Teaching Large Language Models to Refuse Unknown Questions Hanning Zhang, Shizhe Diao, Yong Lin, Yi R. Fung, Qing Lian, Xingyao Wang, Yangyi Chen, Heng Ji, Tong Zhang [paper] 2023.11

- Dial BeInfo for Faithfulness: Improving Factuality of Information-Seeking Dialogue via Behavioural Fine-Tuning Evgeniia Razumovskaia, Ivan Vulić, Pavle Marković, Tomasz Cichy, Qian Zheng, Tsung-Hsien Wen, Paweł Budzianowski [paper] 2023.11

- Supervised Knowledge Makes Large Language Models Better In-context Learners Linyi Yang, Shuibai Zhang, Zhuohao Yu, Guangsheng Bao, Yidong Wang, Jindong Wang, Ruochen Xu, Wei Ye, Xing Xie, Weizhu Chen, Yue Zhang [paper] 2023.12

- Alignment for Honesty Yuqing Yang, Ethan Chern, Xipeng Qiu, Graham Neubig, Pengfei Liu [paper] 2023.12

- Mitigating Hallucinations of Large Language Models via Knowledge Consistent Alignment Fanqi Wan, Xinting Huang, Leyang Cui, Xiaojun Quan, Wei Bi, Shuming Shi [paper] 2024.01

- Gotcha! Don't trick me with unanswerable questions! Self-aligning Large Language Models for Responding to Unknown Questions Yang Deng, Yong Zhao, Moxin Li, See-Kiong Ng, Tat-Seng Chua [paper] 2024.02

- Utilize the Flow before Stepping into the Same River Twice: Certainty Represented Knowledge Flow for Refusal-Aware Instruction Tuning Runchuan Zhu, Zhipeng Ma, Jiang Wu, Junyuan Gao, Jiaqi Wang, Dahua Lin, Conghui He [paper] 2024.10

Some researchers claim that the behavior cloning phenomenon in SFT can induce hallucinations, so some works try to mitigate hallucinations via honesty-oriented SFT.

- MOSS: Training Conversational Language Models from Synthetic Data Tianxiang Sun, Xiaotian Zhang, Zhengfu He, Peng Li, Qinyuan Cheng, Hang Yan, Xiangyang Liu, Yunfan Shao, Qiong Tang, Xingjian Zhao, Ke Chen, Yining Zheng, Zhejian Zhou, Ruixiao Li, Jun Zhan, Yunhua Zhou, Linyang Li, Xiaogui Yang, Lingling Wu, Zhangyue Yin, Xuanjing Huang, Xipeng Qiu [repo] 2023

An interesting work proposes tuning LLMs on synthetic tasks, which the authors found can also reduce hallucinations.

- Teaching Language Models to Hallucinate Less with Synthetic Tasks Erik Jones, Hamid Palangi, Clarisse Simões, Varun Chandrasekaran, Subhabrata Mukherjee, Arindam Mitra, Ahmed Awadallah, Ece Kamar [paper] 2023.10

Recent work suggests that hallucinations can also be mitigated by leveraging unlabeled/unpaired data with cycle training:

- Faithful Low-Resource Data-to-Text Generation through Cycle Training Zhuoer Wang, Marcus Collins, Nikhita Vedula, Simone Filice, Shervin Malmasi, Oleg Rokhlenko [paper] 2023.7

- Training language models to follow instructions with human feedback Long Ouyang, Jeff Wu, Xu Jiang, Diogo Almeida, Carroll L. Wainwright, Pamela Mishkin, Chong Zhang, Sandhini Agarwal, Katarina Slama, Alex Ray, John Schulman, Jacob Hilton, Fraser Kelton, Luke Miller, Maddie Simens, Amanda Askell, Peter Welinder, Paul Christiano, Jan Leike, Ryan Lowe [paper] 2022.3

- GPT-4 Technical Report OpenAI [paper] 2023.3

- Let's Verify Step by Step Hunter Lightman, Vineet Kosaraju, Yura Burda, Harri Edwards, Bowen Baker, Teddy Lee, Jan Leike, John Schulman, Ilya Sutskever, Karl Cobbe [paper] 2023.5

- Reinforcement learning from human feedback: Progress and challenges John Schulman [talk] 2023.5

- Fine-Grained Human Feedback Gives Better Rewards for Language Model Training Zeqiu Wu, Yushi Hu, Weijia Shi, Nouha Dziri, Alane Suhr, Prithviraj Ammanabrolu, Noah A. Smith, Mari Ostendorf, Hannaneh Hajishirzi [paper] 2023.6

- Aligning Large Multimodal Models with Factually Augmented RLHF Zhiqing Sun, Sheng Shen, Shengcao Cao, Haotian Liu, Chunyuan Li, Yikang Shen, Chuang Gan, Liang-Yan Gui, Yu-Xiong Wang, Yiming Yang, Kurt Keutzer, Trevor Darrell [paper] 2023.9

- Human Feedback is not Gold Standard Tom Hosking, Phil Blunsom, Max Bartolo [paper] 2023.9

- Tool-Augmented Reward Modeling Lei Li, Yekun Chai, Shuohuan Wang, Yu Sun, Hao Tian, Ningyu Zhang, Hua Wu [paper] 2023.10

- Factuality Enhanced Language Models for Open-Ended Text Generation Nayeon Lee, Wei Ping, Peng Xu, Mostofa Patwary, Pascale Fung, Mohammad Shoeybi, Bryan Catanzaro [paper] 2022.6

- When Not to Trust Language Models: Investigating Effectiveness of Parametric and Non-Parametric Memories Alex Mallen, Akari Asai, Victor Zhong, Rajarshi Das, Daniel Khashabi, Hannaneh Hajishirzi [paper] 2022.10

- Trusting Your Evidence: Hallucinate Less with Context-aware Decoding Weijia Shi, Xiaochuang Han, Mike Lewis, Yulia Tsvetkov, Luke Zettlemoyer, Scott Wen-tau Yih [paper] 2023.5

- Inference-Time Intervention: Eliciting Truthful Answers from a Language Model Kenneth Li, Oam Patel, Fernanda Viégas, Hanspeter Pfister, Martin Wattenberg [paper] 2023.6

- DoLa: Decoding by Contrasting Layers Improves Factuality in Large Language Models Yung-Sung Chuang, Yujia Xie, Hongyin Luo, Yoon Kim, James Glass, Pengcheng He [paper] 2023.9

- Mitigating Hallucinations and Off-target Machine Translation with Source-Contrastive and Language-Contrastive Decoding Rico Sennrich, Jannis Vamvas, Alireza Mohammadshahi [paper] 2023.9

- Chain-of-Verification Reduces Hallucination in Large Language Models Shehzaad Dhuliawala, Mojtaba Komeili, Jing Xu, Roberta Raileanu, Xian Li, Asli Celikyilmaz, Jason Weston [paper] 2023.9

- KCTS: Knowledge-Constrained Tree Search Decoding with Token-Level Hallucination Detection Sehyun Choi, Tianqing Fang, Zhaowei Wang, Yangqiu Song [paper] 2023.10

- Fidelity-Enriched Contrastive Search: Reconciling the Faithfulness-Diversity Trade-Off in Text Generation Wei-Lin Chen, Cheng-Kuang Wu, Hsin-Hsi Chen, Chung-Chi Chen [paper] 2023.10

- An Emulator for Fine-Tuning Large Language Models using Small Language Models Eric Mitchell, Rafael Rafailov, Archit Sharma, Chelsea Finn, Christopher D. Manning [paper] 2023.10

- Critic-Driven Decoding for Mitigating Hallucinations in Data-to-text Generation Mateusz Lango, Ondřej Dušek [paper] 2023.10

- Correction with Backtracking Reduces Hallucination in Summarization Zhenzhen Liu, Chao Wan, Varsha Kishore, Jin Peng Zhou, Minmin Chen, Kilian Q. Weinberger [paper] 2023.11

- Chain-of-Note: Enhancing Robustness in Retrieval-Augmented Language Models Wenhao Yu, Hongming Zhang, Xiaoman Pan, Kaixin Ma, Hongwei Wang, Dong Yu [paper] 2023.11

- Unlocking Anticipatory Text Generation: A Constrained Approach for Faithful Decoding with Large Language Models Lifu Tu, Semih Yavuz, Jin Qu, Jiacheng Xu, Rui Meng, Caiming Xiong, Yingbo Zhou [paper] 2023.12

- Context-aware Decoding Reduces Hallucination in Query-focused Summarization Zhichao Xu [paper] 2023.12

- Alleviating Hallucinations of Large Language Models through Induced Hallucinations Yue Zhang, Leyang Cui, Wei Bi, Shuming Shi [paper] 2023.12

- SH2: Self-Highlighted Hesitation Helps You Decode More Truthfully Jushi Kai, Tianhang Zhang, Hai Hu, Zhouhan Lin [paper] 2024.01

- TruthX: Alleviating Hallucinations by Editing Large Language Models in Truthful Space Shaolei Zhang, Tian Yu, Yang Feng [paper] 2024.02

- In-Context Sharpness as Alerts: An Inner Representation Perspective for Hallucination Mitigation Shiqi Chen, Miao Xiong, Junteng Liu, Zhengxuan Wu, Teng Xiao, Siyang Gao, Junxian He [paper] 2024.03

- Chain-of-Action: Faithful and Multimodal Question Answering through Large Language Models Zhenyu Pan, Haozheng Luo, Manling Li, Han Liu [paper] 2024.03

-

RARR: Researching and Revising What Language Models Say, Using Language Models Luyu Gao, Zhuyun Dai, Panupong Pasupat, Anthony Chen, Arun Tejasvi Chaganty, Yicheng Fan, Vincent Y. Zhao, Ni Lao, Hongrae Lee, Da-Cheng Juan, Kelvin Guu [paper] 2022.10

-

Check Your Facts and Try Again: Improving Large Language Models with External Knowledge and Automated Feedback Baolin Peng, Michel Galley, Pengcheng He, Hao Cheng, Yujia Xie, Yu Hu, Qiuyuan Huang, Lars Liden, Zhou Yu, Weizhu Chen, Jianfeng Gao [paper] 2023.2

-

Retrieval-Based Prompt Selection for Code-Related Few-Shot Learning Nashid Noor, Mifta Santaha, Ali Mesbah [paper] 2023.04

-

GeneGPT: Augmenting Large Language Models with Domain Tools for Improved Access to Biomedical Information Qiao Jin, Yifan Yang, Qingyu Chen, Zhiyong Lu [paper] 2023.4

-

Zero-shot Faithful Factual Error Correction Kung-Hsiang Huang, Hou Pong Chan, Heng Ji [paper] 2023.5

-

CRITIC: Large Language Models Can Self-Correct with Tool-Interactive Critiquing Zhibin Gou, Zhihong Shao, Yeyun Gong, Yelong Shen, Yujiu Yang, Nan Duan, Weizhu Chen [paper] 2023.5

-

PURR: Efficiently Editing Language Model Hallucinations by Denoising Language Model Corruptions Anthony Chen, Panupong Pasupat, Sameer Singh, Hongrae Lee, Kelvin Guu [paper] 2023.5

-

Verify-and-Edit: A Knowledge-Enhanced Chain-of-Thought Framework Ruochen Zhao, Xingxuan Li, Shafiq Joty, Chengwei Qin, Lidong Bing [paper] 2023.5

-

Self-Checker: Plug-and-Play Modules for Fact-Checking with Large Language Models Miaoran Li, Baolin Peng, Zhu Zhang [paper] 2023.5

-

Augmented Large Language Models with Parametric Knowledge Guiding Ziyang Luo, Can Xu, Pu Zhao, Xiubo Geng, Chongyang Tao, Jing Ma, Qingwei Lin, Daxin Jiang [paper] 2023.5

-

WikiChat: Stopping the Hallucination of Large Language Model Chatbots by Few-Shot Grounding on Wikipedia Sina J. Semnani, Violet Z. Yao, Heidi C. Zhang, Monica S. Lam [paper] [repo] 2023.5

-

FacTool: Factuality Detection in Generative AI -- A Tool Augmented Framework for Multi-Task and Multi-Domain Scenarios I-Chun Chern, Steffi Chern, Shiqi Chen, Weizhe Yuan, Kehua Feng, Chunting Zhou, Junxian He, Graham Neubig, Pengfei Liu [paper] 2023.7

-

Knowledge Solver: Teaching LLMs to Search for Domain Knowledge from Knowledge Graphs Chao Feng, Xinyu Zhang, Zichu Fei [paper] 2023.9

-

"Merge Conflicts!" Exploring the Impacts of External Distractors to Parametric Knowledge Graphs Cheng Qian, Xinran Zhao, Sherry Tongshuang Wu [paper] 2023.9

-

BTR: Binary Token Representations for Efficient Retrieval Augmented Language Models Qingqing Cao, Sewon Min, Yizhong Wang, Hannaneh Hajishirzi [paper] 2023.10

-

FreshLLMs: Refreshing Large Language Models with Search Engine Augmentation Tu Vu, Mohit Iyyer, Xuezhi Wang, Noah Constant, Jerry Wei, Jason Wei, Chris Tar, Yun-Hsuan Sung, Denny Zhou, Quoc Le, Thang Luong [paper] 2023.10

-

FLEEK: Factual Error Detection and Correction with Evidence Retrieved from External Knowledge Farima Fatahi Bayat, Kun Qian, Benjamin Han, Yisi Sang, Anton Belyi, Samira Khorshidi, Fei Wu, Ihab F. Ilyas, Yunyao Li [paper] 2023.10

-

Evaluating the Effectiveness of Retrieval-Augmented Large Language Models in Scientific Document Reasoning Sai Munikoti, Anurag Acharya, Sridevi Wagle, Sameera Horawalavithana [paper] 2023.11

-

Learn to Refuse: Making Large Language Models More Controllable and Reliable through Knowledge Scope Limitation and Refusal Mechanism Lang Cao [paper] 2023.11

-

Learning to Filter Context for Retrieval-Augmented Generation Zhiruo Wang, Jun Araki, Zhengbao Jiang, Md Rizwan Parvez, Graham Neubig [paper] 2023.11

-

KTRL+F: Knowledge-Augmented In-Document Search Hanseok Oh, Haebin Shin, Miyoung Ko, Hyunji Lee, Minjoon Seo [paper] 2023.11

-

Mitigating Large Language Model Hallucinations via Autonomous Knowledge Graph-based Retrofitting Xinyan Guan, Yanjiang Liu, Hongyu Lin, Yaojie Lu, Ben He, Xianpei Han, Le Sun [paper] 2023.11

-

Ever: Mitigating Hallucination in Large Language Models through Real-Time Verification and Rectification Haoqiang Kang, Juntong Ni, Huaxiu Yao [paper] 2023.11

-

Minimizing Factual Inconsistency and Hallucination in Large Language Models Muneeswaran I, Shreya Saxena, Siva Prasad, M V Sai Prakash, Advaith Shankar, Varun V, Vishal Vaddina, Saisubramaniam Gopalakrishnan [paper] 2023.11

-

Seven Failure Points When Engineering a Retrieval Augmented Generation System Scott Barnett, Stefanus Kurniawan, Srikanth Thudumu, Zach Brannelly, Mohamed Abdelrazek [paper] 2024.01

-

When Do LLMs Need Retrieval Augmentation? Mitigating LLMs' Overconfidence Helps Retrieval Augmentation Shiyu Ni, Keping Bi, Jiafeng Guo, Xueqi Cheng [paper] 2024.02

-

Retrieve Only When It Needs: Adaptive Retrieval Augmentation for Hallucination Mitigation in Large Language Models Hanxing Ding, Liang Pang, Zihao Wei, Huawei Shen, Xueqi Cheng [paper] 2024.02

-

RAT: Retrieval Augmented Thoughts Elicit Context-Aware Reasoning in Long-Horizon Generation Zihao Wang, Anji Liu, Haowei Lin, Jiaqi Li, Xiaojian Ma, Yitao Liang [paper] 2024.03

-

Truth-Aware Context Selection: Mitigating the Hallucinations of Large Language Models Being Misled by Untruthful Contexts Tian Yu, Shaolei Zhang, Yang Feng [paper] 2024.03

-

FACTOID: FACtual enTailment fOr hallucInation Detection Vipula Rawte, S.M Towhidul Islam Tonmoy, Krishnav Rajbangshi, Shravani Nag, Aman Chadha, Amit P. Sheth, Amitava Das [paper] 2024.03

-

Rejection Improves Reliability: Training LLMs to Refuse Unknown Questions Using RL from Knowledge Feedback Hongshen Xu, Zichen Zhu, Da Ma, Situo Zhang, Shuai Fan, Lu Chen, Kai Yu [paper] 2024.03

-

SelfCheckGPT: Zero-Resource Black-Box Hallucination Detection for Generative Large Language Models Potsawee Manakul, Adian Liusie, Mark J. F. Gales [paper] 2023.3

-

Self-contradictory Hallucinations of Large Language Models: Evaluation, Detection and Mitigation Niels Mündler, Jingxuan He, Slobodan Jenko, Martin Vechev [paper] 2023.5

-

Do Language Models Know When They're Hallucinating References? Ayush Agrawal, Lester Mackey, Adam Tauman Kalai [paper] 2023.5

-

LLM Calibration and Automatic Hallucination Detection via Pareto Optimal Self-supervision Theodore Zhao, Mu Wei, J. Samuel Preston, Hoifung Poon [paper] 2023.6

-

A Stitch in Time Saves Nine: Detecting and Mitigating Hallucinations of LLMs by Validating Low-Confidence Generation Neeraj Varshney, Wenlin Yao, Hongming Zhang, Jianshu Chen, Dong Yu [paper] 2023.7

-

Zero-Resource Hallucination Prevention for Large Language Models Junyu Luo, Cao Xiao, Fenglong Ma [paper] 2023.9

-

Attention Satisfies: A Constraint-Satisfaction Lens on Factual Errors of Language Models Mert Yuksekgonul, Varun Chandrasekaran, Erik Jones, Suriya Gunasekar, Ranjita Naik, Hamid Palangi, Ece Kamar, Besmira Nushi [paper] 2023.9

-

Improving the Reliability of Large Language Models by Leveraging Uncertainty-Aware In-Context Learning Yuchen Yang, Houqiang Li, Yanfeng Wang, Yu Wang [paper] 2023.10

-

N-Critics: Self-Refinement of Large Language Models with Ensemble of Critics Sajad Mousavi, Ricardo Luna Gutiérrez, Desik Rengarajan, Vineet Gundecha, Ashwin Ramesh Babu, Avisek Naug, Antonio Guillen, Soumyendu Sarkar [paper] 2023.10

-

Self-RAG: Learning to Retrieve, Generate, and Critique through Self-Reflection Akari Asai, Zeqiu Wu, Yizhong Wang, Avirup Sil, Hannaneh Hajishirzi [paper] 2023.10

-

SAC3: Reliable Hallucination Detection in Black-Box Language Models via Semantic-aware Cross-check Consistency Jiaxin Zhang, Zhuohang Li, Kamalika Das, Bradley A. Malin, Sricharan Kumar [paper] 2023.11

-

LM-Polygraph: Uncertainty Estimation for Language Models Ekaterina Fadeeva, Roman Vashurin, Akim Tsvigun, Artem Vazhentsev, Sergey Petrakov, Kirill Fedyanin, Daniil Vasilev, Elizaveta Goncharova, Alexander Panchenko, Maxim Panov, Timothy Baldwin, Artem Shelmanov [paper] 2023.11

-

Enhancing Uncertainty-Based Hallucination Detection with Stronger Focus Tianhang Zhang, Lin Qiu, Qipeng Guo, Cheng Deng, Yue Zhang, Zheng Zhang, Chenghu Zhou, Xinbing Wang, Luoyi Fu [paper] 2023.11

-

RELIC: Investigating Large Language Model Responses using Self-Consistency Furui Cheng, Vilém Zouhar, Simran Arora, Mrinmaya Sachan, Hendrik Strobelt, Mennatallah El-Assady [paper] 2023.11

-

Fact-Checking the Output of Large Language Models via Token-Level Uncertainty Quantification Ekaterina Fadeeva, Aleksandr Rubashevskii, Artem Shelmanov, Sergey Petrakov, Haonan Li, Hamdy Mubarak, Evgenii Tsymbalov, Gleb Kuzmin, Alexander Panchenko, Timothy Baldwin, Preslav Nakov, Maxim Panov [paper] 2024.03

-

Improving Factuality and Reasoning in Language Models through Multiagent Debate Yilun Du, Shuang Li, Antonio Torralba, Joshua B. Tenenbaum, Igor Mordatch [paper] 2023.5

-

LM vs LM: Detecting Factual Errors via Cross Examination Roi Cohen, May Hamri, Mor Geva, Amir Globerson [paper] 2023.5

-

Unleashing Cognitive Synergy in Large Language Models: A Task-Solving Agent through Multi-Persona Self-Collaboration Zhenhailong Wang, Shaoguang Mao, Wenshan Wu, Tao Ge, Furu Wei, Heng Ji [paper] 2023.7

-

Theory of Mind for Multi-Agent Collaboration via Large Language Models Huao Li, Yu Quan Chong, Simon Stepputtis, Joseph Campbell, Dana Hughes, Michael Lewis, Katia Sycara [paper] 2023.10

-

N-Critics: Self-Refinement of Large Language Models with Ensemble of Critics Sajad Mousavi, Ricardo Luna Gutiérrez, Desik Rengarajan, Vineet Gundecha, Ashwin Ramesh Babu, Avisek Naug, Antonio Guillen, Soumyendu Sarkar [paper] 2023.10

-

Red Teaming for Large Language Models At Scale: Tackling Hallucinations on Mathematics Tasks Aleksander Buszydlik, Karol Dobiczek, Michał Teodor Okoń, Konrad Skublicki, Philip Lippmann, Jie Yang [paper] 2024.01

-

Mitigating Language Model Hallucination with Interactive Question-Knowledge Alignment Shuo Zhang, Liangming Pan, Junzhou Zhao, William Yang Wang [paper] 2023.5

-

Automatic and Human-AI Interactive Text Generation Yao Dou, Philippe Laban, Claire Gardent, Wei Xu [paper] 2023.10

-

The Internal State of an LLM Knows When It's Lying Amos Azaria, Tom Mitchell [paper] 2023.4

-

Do Language Models Know When They're Hallucinating References? Ayush Agrawal, Lester Mackey, Adam Tauman Kalai [paper] 2023.5

-

Inference-Time Intervention: Eliciting Truthful Answers from a Language Model Kenneth Li, Oam Patel, Fernanda Viégas, Hanspeter Pfister, Martin Wattenberg [paper] 2023.6

-

Knowledge Sanitization of Large Language Models Yoichi Ishibashi, Hidetoshi Shimodaira [paper] 2023.9

-

Representation Engineering: A Top-Down Approach to AI Transparency Andy Zou, Long Phan, Sarah Chen, James Campbell, Phillip Guo, Richard Ren, Alexander Pan, Xuwang Yin, Mantas Mazeika, Ann-Kathrin Dombrowski, Shashwat Goel, Nathaniel Li, Michael J. Byun, Zifan Wang, Alex Mallen, Steven Basart, Sanmi Koyejo, Dawn Song, Matt Fredrikson, J. Zico Kolter, Dan Hendrycks [paper] 2023.10

-

Weakly Supervised Detection of Hallucinations in LLM Activations Miriam Rateike, Celia Cintas, John Wamburu, Tanya Akumu, Skyler Speakman [paper] 2023.12

-

The Curious Case of Hallucinatory (Un)answerability: Finding Truths in the Hidden States of Over-Confident Large Language Models Aviv Slobodkin, Omer Goldman, Avi Caciularu, Ido Dagan, Shauli Ravfogel [paper] 2023.12

-

Do Androids Know They're Only Dreaming of Electric Sheep? Sky CH-Wang, Benjamin Van Durme, Jason Eisner, Chris Kedzie [paper] 2023.12

-

Truth Forest: Toward Multi-Scale Truthfulness in Large Language Models through Intervention without Tuning Zhongzhi Chen, Xingwu Sun, Xianfeng Jiao, Fengzong Lian, Zhanhui Kang, Di Wang, Cheng-Zhong Xu [paper] 2023.12

-

On Early Detection of Hallucinations in Factual Question Answering Ben Snyder, Marius Moisescu, Muhammad Bilal Zafar [paper] 2023.12

-

Reducing LLM Hallucinations using Epistemic Neural Networks Shreyas Verma, Kien Tran, Yusuf Ali, Guangyu Min [paper] 2023.12

-

HILL: A Hallucination Identifier for Large Language Models Florian Leiser, Sven Eckhardt, Valentin Leuthe, Merlin Knaeble, Alexander Maedche, Gerhard Schwabe, Ali Sunyaev [paper] 2024.03

-

Unsupervised Real-Time Hallucination Detection based on the Internal States of Large Language Models Weihang Su, Changyue Wang, Qingyao Ai, Yiran HU, Zhijing Wu, Yujia Zhou, Yiqun Liu [paper] 2024.03

-

On Large Language Models' Hallucination with Regard to Known Facts Che Jiang, Biqing Qi, Xiangyu Hong, Dayuan Fu, Yang Cheng, Fandong Meng, Mo Yu, Bowen Zhou, Jie Zhou [paper] 2024.03

We warmly welcome any kind of useful suggestion or contribution. Feel free to open an issue or contact Hill by e-mail.

For Tasks:

Click tags to check more tools for each tasksFor Jobs:

Alternative AI tools for llm-hallucination-survey

Similar Open Source Tools

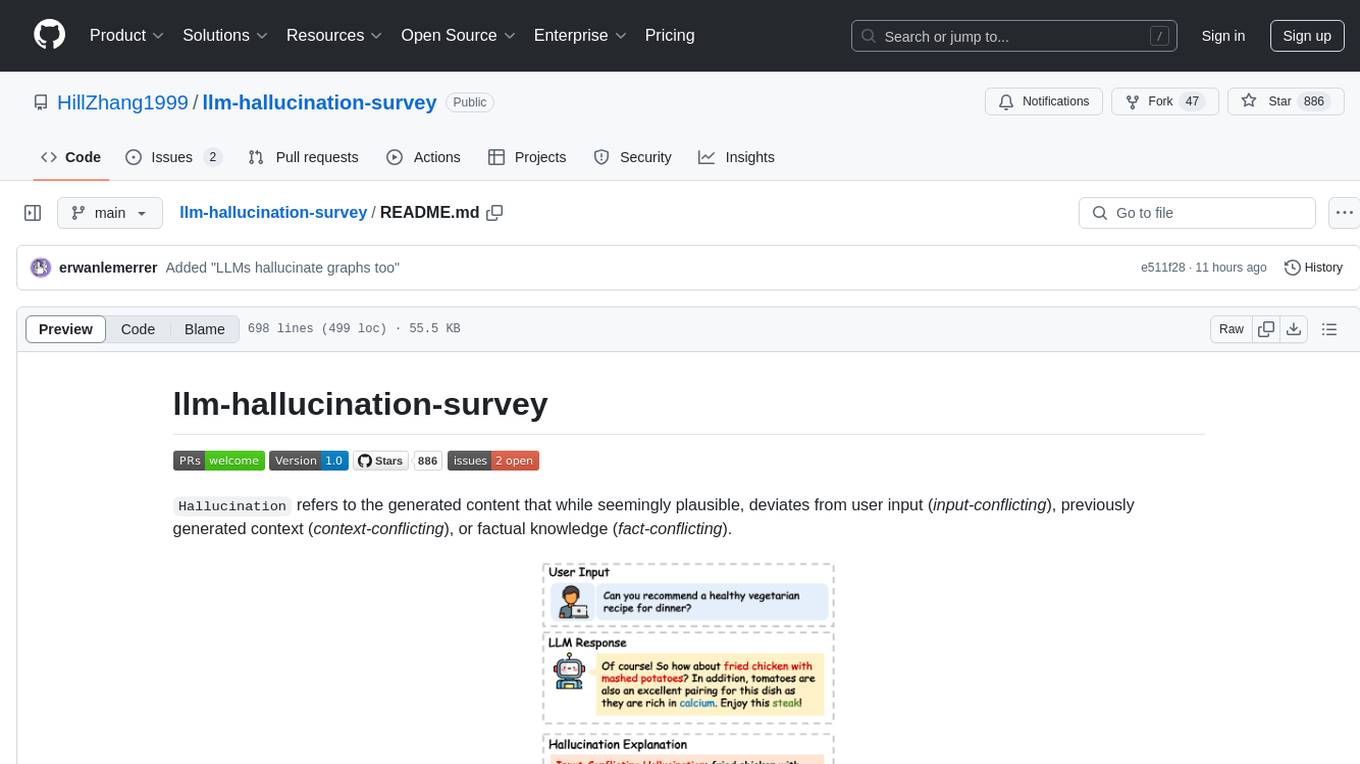

Awesome-LLM-Reasoning

**Curated collection of papers and resources on how to unlock the reasoning ability of LLMs and MLLMs.** **Description in less than 400 words, no line breaks and quotation marks.** Large Language Models (LLMs) have revolutionized the NLP landscape, showing improved performance and sample efficiency over smaller models. However, increasing model size alone has not proved sufficient for high performance on challenging reasoning tasks, such as solving arithmetic or commonsense problems. This curated collection of papers and resources presents the latest advancements in unlocking the reasoning abilities of LLMs and Multimodal LLMs (MLLMs). It covers various techniques, benchmarks, and applications, providing a comprehensive overview of the field. **5 jobs suitable for this tool, in lowercase letters.** - content writer - researcher - data analyst - software engineer - product manager **Keywords of the tool, in lowercase letters.** - llm - reasoning - multimodal - chain-of-thought - prompt engineering **5 specific tasks user can use this tool to do, in less than 3 words, Verb + noun form, in daily spoken language.** - write a story - answer a question - translate a language - generate code - summarize a document

Prompt4ReasoningPapers

Prompt4ReasoningPapers is a repository dedicated to reasoning with language model prompting. It provides a comprehensive survey of cutting-edge research on reasoning abilities with language models. The repository includes papers, methods, analysis, resources, and tools related to reasoning tasks. It aims to support various real-world applications such as medical diagnosis, negotiation, etc.

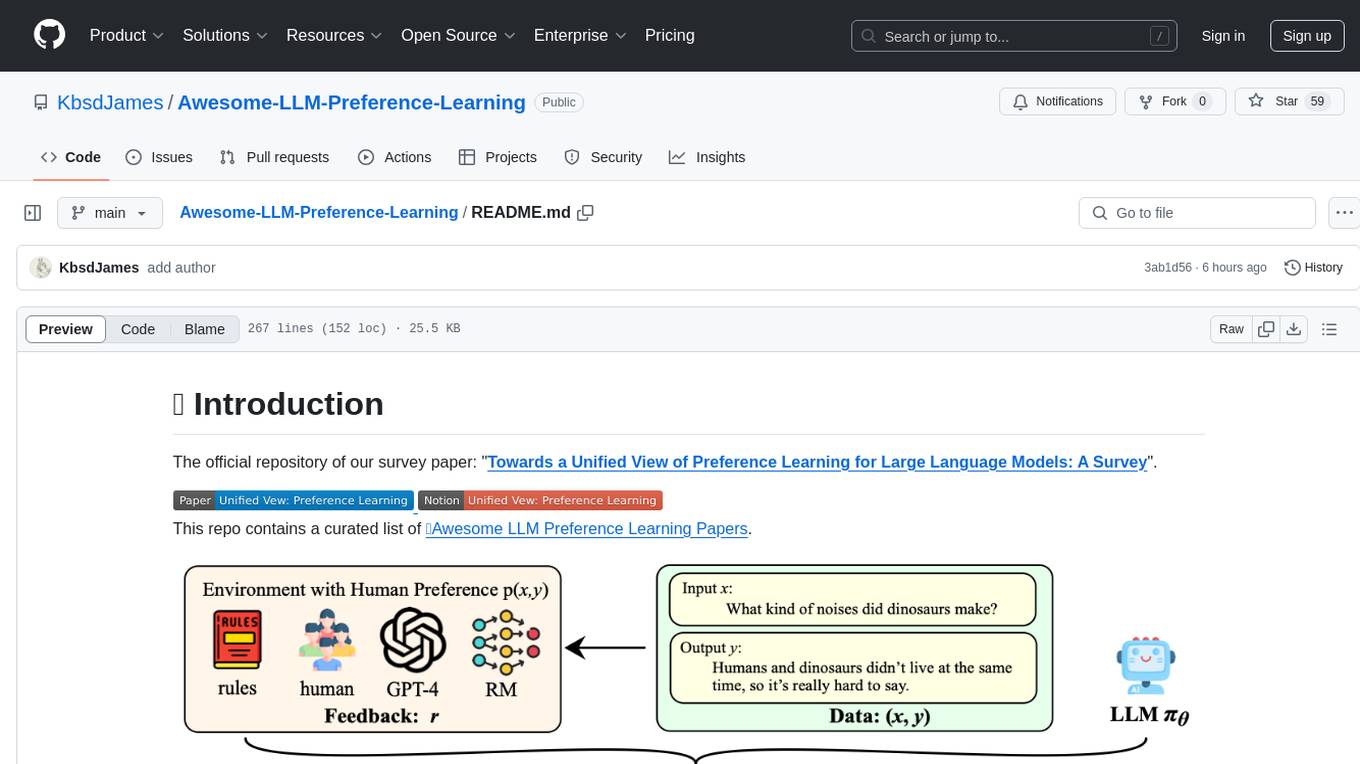

Awesome-LLM-Preference-Learning

The repository 'Awesome-LLM-Preference-Learning' is the official repository of a survey paper titled 'Towards a Unified View of Preference Learning for Large Language Models: A Survey'. It contains a curated list of papers related to preference learning for Large Language Models (LLMs). The repository covers various aspects of preference learning, including on-policy and off-policy methods, feedback mechanisms, reward models, algorithms, evaluation techniques, and more. The papers included in the repository explore different approaches to aligning LLMs with human preferences, improving mathematical reasoning in LLMs, enhancing code generation, and optimizing language model performance.

LLMAgentPapers

LLM Agents Papers is a repository containing must-read papers on Large Language Model Agents. It covers a wide range of topics related to language model agents, including interactive natural language processing, large language model-based autonomous agents, personality traits in large language models, memory enhancements, planning capabilities, tool use, multi-agent communication, and more. The repository also provides resources such as benchmarks, types of tools, and a tool list for building and evaluating language model agents. Contributors are encouraged to add important works to the repository.

awesome-llm-role-playing-with-persona

Awesome-llm-role-playing-with-persona is a curated list of resources for large language models for role-playing with assigned personas. It includes papers and resources related to persona-based dialogue systems, personalized response generation, psychology of LLMs, biases in LLMs, and more. The repository aims to provide a comprehensive collection of research papers and tools for exploring role-playing abilities of large language models in various contexts.

awesome-generative-information-retrieval

This repository contains a curated list of resources on generative information retrieval, including research papers, datasets, tools, and applications. Generative information retrieval is a subfield of information retrieval that uses generative models to generate new documents or passages of text that are relevant to a given query. This can be useful for a variety of tasks, such as question answering, summarization, and document generation. The resources in this repository are intended to help researchers and practitioners stay up-to-date on the latest advances in generative information retrieval.

llm-self-correction-papers

This repository contains a curated list of papers focusing on the self-correction of large language models (LLMs) during inference. It covers various frameworks for self-correction, including intrinsic self-correction, self-correction with external tools, self-correction with information retrieval, and self-correction with training designed specifically for self-correction. The list includes survey papers, negative results, and frameworks utilizing reinforcement learning and OpenAI o1-like approaches. Contributions are welcome through pull requests following a specific format.

Awesome-LLM-Reasoning-Openai-o1-Survey

The repository 'Awesome LLM Reasoning Openai-o1 Survey' provides a collection of survey papers and related works on OpenAI o1, focusing on topics such as LLM reasoning, self-play reinforcement learning, complex logic reasoning, and scaling law. It includes papers from various institutions and researchers, showcasing advancements in reasoning bootstrapping, reasoning scaling law, self-play learning, step-wise and process-based optimization, and applications beyond math. The repository serves as a valuable resource for researchers interested in exploring the intersection of language models and reasoning techniques.

Awesome-LLM-RAG

This repository, Awesome-LLM-RAG, aims to record advanced papers on Retrieval Augmented Generation (RAG) in Large Language Models (LLMs). It serves as a resource hub for researchers interested in promoting their work related to LLM RAG by updating paper information through pull requests. The repository covers various topics such as workshops, tutorials, papers, surveys, benchmarks, retrieval-enhanced LLMs, RAG instruction tuning, RAG in-context learning, RAG embeddings, RAG simulators, RAG search, RAG long-text and memory, RAG evaluation, RAG optimization, and RAG applications.

awesome-ai-llm4education

The 'awesome-ai-llm4education' repository is a curated list of papers related to artificial intelligence (AI) and large language models (LLM) for education. It collects papers from top conferences, journals, and specialized domain-specific conferences, categorizing them based on specific tasks for better organization. The repository covers a wide range of topics including tutoring, personalized learning, assessment, material preparation, specific scenarios like computer science, language, math, and medicine, aided teaching, as well as datasets and benchmarks for educational research.

awesome-open-ended

A curated list of open-ended learning AI resources focusing on algorithms that invent new and complex tasks endlessly, inspired by human advancements. The repository includes papers, safety considerations, surveys, perspectives, and blog posts related to open-ended AI research.

LLM4DB

LLM4DB is a repository focused on the intersection of Large Language Models (LLM) and Database technologies. It covers various aspects such as data processing, data analysis, database optimization, and data management for LLM. The repository includes works on data cleaning, entity matching, schema matching, data discovery, NL2SQL, data exploration, data visualization, configuration tuning, query optimization, and anomaly diagnosis using LLMs. It aims to provide insights and advancements in leveraging LLMs for improving data processing, analysis, and database management tasks.

LLM4DB

LLM4DB is a repository focused on the intersection of Large Language Models (LLMs) and Database technologies. It covers various aspects such as data processing, data analysis, database optimization, and data management for LLMs. The repository includes research papers, tools, and techniques related to leveraging LLMs for tasks like data cleaning, entity matching, schema matching, data discovery, NL2SQL, data exploration, data visualization, knob tuning, query optimization, and database diagnosis.

LLM-as-a-Judge

LLM-as-a-Judge is a repository that includes papers discussed in a survey paper titled 'A Survey on LLM-as-a-Judge'. The repository covers various aspects of using Large Language Models (LLMs) as judges for tasks such as evaluation, reasoning, and decision-making. It provides insights into evaluation pipelines, improvement strategies, and specific tasks related to LLMs. The papers included in the repository explore different methodologies, applications, and future research directions for leveraging LLMs as evaluators in various domains.