fastc

Unattended Lightweight Text Classifiers with LLM Embeddings

Stars: 164

Fastc is a tool focused on CPU execution, using efficient models for embedding generation and cosine similarity classification. It allows for efficient multi-classifier execution without extra overhead. Users can easily train text classifiers, export models, publish to HuggingFace, load existing models, make class predictions, use instruct templates, and launch an inference server. The tool provides an HTTP API for text classification with JSON payloads and supports multiple languages for language identification.

README:

- Suitable for limited-memory CPU execution: Use efficient distilled models such as `deepset/tinyroberta-6l-768d` for embedding generation.

- Logistic Regression and Nearest Centroid classification: Bypass the need for fine-tuning by utilizing LLM embeddings to efficiently categorize texts using either logistic regression or the nearest centroid through cosine similarity.

- Efficient Parallel Execution: Run hundreds of classifiers concurrently with minimal overhead by sharing the same model for embedding generation.
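To make the nearest-centroid idea concrete, here is a minimal pure-Python sketch. It is not fastc's implementation: the helper names (`cosine_similarity`, `fit_centroids`, `nearest_centroid`) and the toy 2-dimensional vectors are assumptions for illustration only. It also shows why several classifiers can share one embedding model: the text is embedded once and the same vector is compared against each classifier's centroids.

```python
import math


def cosine_similarity(a, b):
    """Cosine of the angle between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


def fit_centroids(embeddings, labels):
    """Average the embeddings of each class into one centroid per label."""
    sums, counts = {}, {}
    for vector, label in zip(embeddings, labels):
        if label not in sums:
            sums[label] = [0.0] * len(vector)
            counts[label] = 0
        sums[label] = [s + v for s, v in zip(sums[label], vector)]
        counts[label] += 1
    return {
        label: [s / counts[label] for s in sums[label]]
        for label in sums
    }


def nearest_centroid(embedding, centroids):
    """Return the label whose centroid is most similar to the embedding."""
    return max(
        centroids,
        key=lambda label: cosine_similarity(embedding, centroids[label]),
    )


# Toy stand-ins for model embeddings (hypothetical values); a real
# embedding model would produce vectors with hundreds of dimensions.
train_embeddings = [[0.9, 0.1], [0.8, 0.3], [0.1, 0.9], [0.2, 0.7]]

# Two independent classifiers built on the same embeddings.
sentiment = fit_centroids(
    train_embeddings, ['positive', 'positive', 'negative', 'negative']
)
topic = fit_centroids(
    train_embeddings, ['work', 'leisure', 'leisure', 'work']
)

# The query is embedded once and reused by every classifier.
query = [0.7, 0.2]
sentiment_label = nearest_centroid(query, sentiment)
topic_label = nearest_centroid(query, topic)
print(sentiment_label, topic_label)
```

Each additional classifier only adds one cosine comparison per class, which is cheap next to the cost of generating the embedding itself.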

Install the package with pip:

    pip install -U fastc

You can train a text classifier with just a few lines of code:

    from fastc import Fastc, Kernels

    tuples = [
        ("I just got a promotion! Feeling fantastic.", 'positive'),
        ("Today was terrible. I lost my wallet and missed the bus.", 'negative'),
        ("I had a great time with my friends at the party.", 'positive'),
        ("I'm so frustrated with the traffic jam this morning.", 'negative'),
        ("My vacation was wonderful and relaxing.", 'positive'),
        ("I didn't get any sleep last night because of the noise.", 'negative'),
        ("I'm so excited for the concert tonight!", 'positive'),
        ("I'm disappointed with the service at the restaurant.", 'negative'),
        ("The weather is beautiful and I enjoyed my walk.", 'positive'),
        ("I had a bad day. Nothing went right.", 'negative'),
        ("I'm thrilled to announce that we are expecting a baby!", 'positive'),
        ("I feel so lonely and sad today.", 'negative'),
        ("My team won the championship! We are the champions.", 'positive'),
        ("I can't stand my job anymore, it's so stressful.", 'negative'),
        ("I love spending time with my family during the holidays.", 'positive'),
        ("My computer crashed and I lost all my work.", 'negative'),
        ("I'm proud of my achievements this year.", 'positive'),
        ("I'm exhausted and overwhelmed with everything.", 'negative'),
    ]

    # Nearest centroid kernel
    model = Fastc(
        embeddings_model='microsoft/deberta-base',
        kernel=Kernels.NEAREST_CENTROID,
    )
    model.load_dataset(tuples)
    model.train()

To train with logistic regression instead, select the corresponding kernel (further parameters are shown commented out):

    from fastc import Kernels

    model = Fastc(
        embeddings_model='microsoft/deberta-base',
        kernel=Kernels.LOGISTIC_REGRESSION,
        # cross_validation_splits=5,
        # cross_validation_repeats=3,
        # iterations=100,
        # parameters={...},
        # seed=1984,
    )
    model.load_dataset(tuples)
    model.train()

The implemented pooling strategies are:

- `MEAN` (default)
- `MEAN_MASKED`
- `MAX`
- `MAX_MASKED`
- `CLS`
- `SUM`
- `ATTENTION_WEIGHTED`
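As a rough illustration of what some of these names usually mean, the sketch below pools a list of per-token vectors into a single sentence vector. It is an assumption based on the common meaning of the strategy names, not a description of fastc's internals; the function names and the toy token vectors are hypothetical.

```python
def mean_pool(token_embeddings):
    """Average every token vector, padding positions included."""
    count = len(token_embeddings)
    return [sum(column) / count for column in zip(*token_embeddings)]


def mean_masked_pool(token_embeddings, attention_mask):
    """Average only the token vectors whose attention mask value is 1."""
    kept = [
        vector
        for vector, keep in zip(token_embeddings, attention_mask)
        if keep == 1
    ]
    return mean_pool(kept)


def max_pool(token_embeddings):
    """Take the per-dimension maximum across all tokens."""
    return [max(column) for column in zip(*token_embeddings)]


def sum_pool(token_embeddings):
    """Add up all token vectors dimension by dimension."""
    return [sum(column) for column in zip(*token_embeddings)]


def cls_pool(token_embeddings):
    """Use the first token's vector as the sentence representation."""
    return list(token_embeddings[0])


# Toy token embeddings (hypothetical values) for a padded sequence.
tokens = [
    [1.0, 2.0],  # first token, e.g. a [CLS]-style marker
    [3.0, 4.0],  # a real token
    [0.0, 0.0],  # padding
]
mask = [1, 1, 0]

pooled_mean = mean_pool(tokens)
pooled_mean_masked = mean_masked_pool(tokens, mask)
pooled_max = max_pool(tokens)
pooled_sum = sum_pool(tokens)
pooled_cls = cls_pool(tokens)
```

The masked variant differs from the plain one only in ignoring padding positions, which matters when short texts are padded to a fixed length within a batch.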

For example, to select a pooling strategy in fastc:

    from fastc import Pooling

    model = Fastc(
        embeddings_model='microsoft/deberta-base',
        pooling=Pooling.MEAN_MASKED,
    )
    model.load_dataset(tuples)
    model.train()

You can use instruct templates with instruct models such as `intfloat/multilingual-e5-large-instruct`. Other models may also improve in performance by using templates, even if they were not explicitly trained with them.
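Conceptually, a template wraps each input text in a fixed instruction before it is embedded. The sketch below shows this with plain string formatting, using the E5-style pattern `Instruct: {instruction}\nQuery: {text}`. The constant and the `apply_template` helper are hypothetical names for illustration and are not part of fastc.

```python
# E5-style instruct pattern; the constant name is an assumption.
E5_STYLE_TEMPLATE = 'Instruct: {instruction}\nQuery: {text}'


def apply_template(template, instruction, text):
    """Fill the instruction and the input text into the template."""
    return template.format(instruction=instruction, text=text)


prompt = apply_template(
    E5_STYLE_TEMPLATE,
    instruction='Classify as positive or negative',
    text='I am feeling well.',
)
print(prompt)
```

The embedding model then sees the full prompt rather than the bare text, which is how instruct-tuned embedding models are typically meant to be queried.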

    from fastc import ModelTemplates, Fastc, Template

    # template_text = 'Instruct: {instruction}\nQuery: {text}'
    template_text = ModelTemplates.E5_INSTRUCT

    model = Fastc(
        embeddings_model='intfloat/multilingual-e5-large-instruct',
        template=Template(
            template_text,
            instruction='Classify as positive or negative'
        ),
    )

After training, you can save the model for future use:

    model.save_model('./sentiment-classifier/')

You can also publish the trained model to HuggingFace.

> [!IMPORTANT]
> Log in to HuggingFace first with `huggingface-cli login`.

    model.push_to_hub(
        'braindao/sentiment-classifier',
        tags=['sentiment-analysis'],
        languages=['multilingual'],
        private=False,
    )

You can load a pre-trained model either from a directory or from HuggingFace:

    # From a directory
    model = Fastc('./sentiment-classifier/')

    # From HuggingFace
    model = Fastc('braindao/sentiment-classifier')

Once a model is loaded, you can predict the class of a single text or of a batch:

    sentences = [
        'I am feeling well.',
        'I am in pain.',
    ]

    # Single prediction
    scores = model.predict_one(sentences[0])
    print(scores['label'])

    # Batch predictions
    scores_list = model.predict(sentences)
    for scores in scores_list:
        print(scores['label'])

To launch the dockerized inference server, use the following script:

    ./server/scripts/start-docker.sh

Alternatively, on the host machine:

    ./server/scripts/start-server.sh

In both cases, an HTTP API will be available, listening on the `fastc-server` hashport `53256`.

To classify text, use POST / with a JSON payload such as:

    {
        "model": "braindao/tinyroberta-6l-768d-language-identifier-en-es-ko-zh-fastc-lr",
        "text": "오늘 저녁에 친구들과 함께 pizza를 먹을 거예요."
    }

Response:

    {
        "label": "ko",
        "scores": {
            "en": 1.0146501463135055e-08,
            "es": 6.806091549848057e-09,
            "ko": 0.9999852640487916,
            "zh": 1.471899861513275e-05
        }
    }

To check the fastc version, use GET /version:

Response:

    {
        "version": "2.2407.0"
    }
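The API can be called from any HTTP client. Below is a minimal client sketch using only the Python standard library. It assumes the server is reachable at `http://localhost:53256`; the host and the helper names (`build_classify_request`, `classify`, `get_version`) are assumptions for illustration and are not part of fastc.

```python
import json
import urllib.request

# Assumption: the server runs locally on the documented port.
BASE_URL = 'http://localhost:53256'


def build_classify_request(model, text, base_url=BASE_URL):
    """Build a POST / request carrying the JSON payload."""
    payload = json.dumps({'model': model, 'text': text}).encode('utf-8')
    return urllib.request.Request(
        base_url + '/',
        data=payload,
        headers={'Content-Type': 'application/json'},
        method='POST',
    )


def classify(model, text, base_url=BASE_URL):
    """Send the request and decode the JSON response."""
    request = build_classify_request(model, text, base_url)
    with urllib.request.urlopen(request) as response:
        return json.loads(response.read().decode('utf-8'))


def get_version(base_url=BASE_URL):
    """Call GET /version and return the version string."""
    with urllib.request.urlopen(base_url + '/version') as response:
        return json.loads(response.read().decode('utf-8'))['version']


# Example call (requires a running server):
# result = classify(
#     'braindao/tinyroberta-6l-768d-language-identifier-en-es-ko-zh-fastc-lr',
#     '오늘 저녁에 친구들과 함께 pizza를 먹을 거예요.',
# )
# print(result['label'], result['scores'])
```

Splitting request construction from sending keeps the payload format easy to inspect without a running server.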

Click tags to check more tools for each tasksFor Jobs:

Alternative AI tools for fastc

Similar Open Source Tools

fastc

Fastc is a tool focused on CPU execution, using efficient models for embedding generation and cosine similarity classification. It allows for efficient multi-classifier execution without extra overhead. Users can easily train text classifiers, export models, publish to HuggingFace, load existing models, make class predictions, use instruct templates, and launch an inference server. The tool provides an HTTP API for text classification with JSON payloads and supports multiple languages for language identification.

hezar

Hezar is an all-in-one AI library designed specifically for the Persian community. It brings together various AI models and tools, making it easy to use AI with just a few lines of code. The library seamlessly integrates with Hugging Face Hub, offering a developer-friendly interface and task-based model interface. In addition to models, Hezar provides tools like word embeddings, tokenizers, feature extractors, and more. It also includes supplementary ML tools for deployment, benchmarking, and optimization.

promptic

Promptic is a tool designed for LLM app development, providing a productive and pythonic way to build LLM applications. It leverages LiteLLM, allowing flexibility to switch LLM providers easily. Promptic focuses on building features by providing type-safe structured outputs, easy-to-build agents, streaming support, automatic prompt caching, and built-in conversation memory.

LLM-Blender

LLM-Blender is a framework for ensembling large language models (LLMs) to achieve superior performance. It consists of two modules: PairRanker and GenFuser. PairRanker uses pairwise comparisons to distinguish between candidate outputs, while GenFuser merges the top-ranked candidates to create an improved output. LLM-Blender has been shown to significantly surpass the best LLMs and baseline ensembling methods across various metrics on the MixInstruct benchmark dataset.

llm-rag-workshop

The LLM RAG Workshop repository provides a workshop on using Large Language Models (LLMs) and Retrieval-Augmented Generation (RAG) to generate and understand text in a human-like manner. It includes instructions on setting up the environment, indexing Zoomcamp FAQ documents, creating a Q&A system, and using OpenAI for generation based on retrieved information. The repository focuses on enhancing language model responses with retrieved information from external sources, such as document databases or search engines, to improve factual accuracy and relevance of generated text.

cursive-py

Cursive is a universal and intuitive framework for interacting with LLMs. It is extensible, allowing users to hook into any part of a completion life cycle. Users can easily describe functions that LLMs can use with any supported model. Cursive aims to bridge capabilities between different models, providing a single interface for users to choose any model. It comes with built-in token usage and costs calculations, automatic retry, and model expanding features. Users can define and describe functions, generate Pydantic BaseModels, hook into completion life cycle, create embeddings, and configure retry and model expanding behavior. Cursive supports various models from OpenAI, Anthropic, OpenRouter, Cohere, and Replicate, with options to pass API keys for authentication.

vinagent

Vinagent is a lightweight and flexible library designed for building smart agent assistants across various industries. It provides a simple yet powerful foundation for creating AI-powered customer service bots, data analysis assistants, or domain-specific automation agents. With its modular tool system, users can easily extend their agent's capabilities by integrating a wide range of tools that are self-contained, well-documented, and can be registered dynamically. Vinagent allows users to scale and adapt their agents to new tasks or environments effortlessly.

python-genai

The Google Gen AI SDK is a Python library that provides access to Google AI and Vertex AI services. It allows users to create clients for different services, work with parameter types, models, generate content, call functions, handle JSON response schemas, stream text and image content, perform async operations, count and compute tokens, embed content, generate and upscale images, edit images, work with files, create and get cached content, tune models, distill models, perform batch predictions, and more. The SDK supports various features like automatic function support, manual function declaration, JSON response schema support, streaming for text and image content, async methods, tuning job APIs, distillation, batch prediction, and more.

omniai

OmniAI provides a unified Ruby API for integrating with multiple AI providers, streamlining AI development by offering a consistent interface for features such as chat, text-to-speech, speech-to-text, and embeddings. It ensures seamless interoperability across platforms and effortless switching between providers, making integrations more flexible and reliable.

instructor

Instructor is a popular Python library for managing structured outputs from large language models (LLMs). It offers a user-friendly API for validation, retries, and streaming responses. With support for various LLM providers and multiple languages, Instructor simplifies working with LLM outputs. The library includes features like response models, retry management, validation, streaming support, and flexible backends. It also provides hooks for logging and monitoring LLM interactions, and supports integration with Anthropic, Cohere, Gemini, Litellm, and Google AI models. Instructor facilitates tasks such as extracting user data from natural language, creating fine-tuned models, managing uploaded files, and monitoring usage of OpenAI models.

aiavatarkit

AIAvatarKit is a tool for building AI-based conversational avatars quickly. It supports various platforms like VRChat and cluster, along with real-world devices. The tool is extensible, allowing unlimited capabilities based on user needs. It requires VOICEVOX API, Google or Azure Speech Services API keys, and Python 3.10. Users can start conversations out of the box and enjoy seamless interactions with the avatars.

simpleAI

SimpleAI is a self-hosted alternative to the not-so-open AI API, focused on replicating main endpoints for LLM such as text completion, chat, edits, and embeddings. It allows quick experimentation with different models, creating benchmarks, and handling specific use cases without relying on external services. Users can integrate and declare models through gRPC, query endpoints using Swagger UI or API, and resolve common issues like CORS with FastAPI middleware. The project is open for contributions and welcomes PRs, issues, documentation, and more.

cappr

CAPPr is a tool for text classification that does not require training or post-processing. It allows users to have their language models pick from a list of choices or compute the probability of a completion given a prompt. The tool aims to help users get more out of open source language models by simplifying the text classification process. CAPPr can be used with GGUF models, Hugging Face models, models from the OpenAI API, and for tasks like caching instructions, extracting final answers from step-by-step completions, and running predictions in batches with different sets of completions.

mini.ai

This plugin extends and creates `a`/`i` textobjects in Neovim. It enhances some builtin textobjects (like `a(`, `a)`, `a'`, and more), creates new ones (like `a*`, `a

python-tgpt

Python-tgpt is a Python package that enables seamless interaction with over 45 free LLM providers without requiring an API key. It also provides image generation capabilities. The name _python-tgpt_ draws inspiration from its parent project tgpt, which operates on Golang. Through this Python adaptation, users can effortlessly engage with a number of free LLMs available, fostering a smoother AI interaction experience.

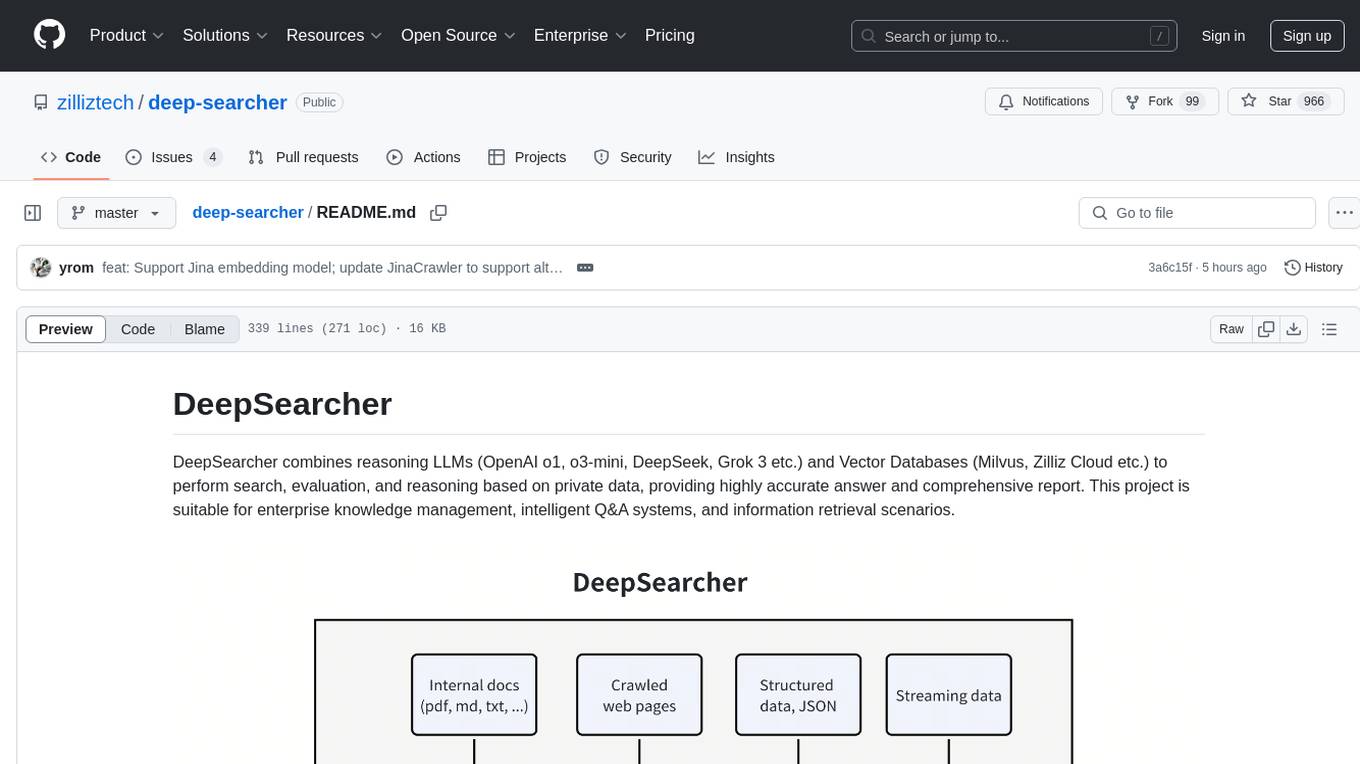

deep-searcher

DeepSearcher is a tool that combines reasoning LLMs and Vector Databases to perform search, evaluation, and reasoning based on private data. It is suitable for enterprise knowledge management, intelligent Q&A systems, and information retrieval scenarios. The tool maximizes the utilization of enterprise internal data while ensuring data security, supports multiple embedding models, and provides support for multiple LLMs for intelligent Q&A and content generation. It also includes features like private data search, vector database management, and document loading with web crawling capabilities under development.

For similar tasks

llm-export

llm-export is a tool for exporting llm models to onnx and mnn formats. It has features such as passing onnxruntime correctness tests, optimizing the original code to support dynamic shapes, reducing constant parts, optimizing onnx models using OnnxSlim for performance improvement, and exporting lora weights to onnx and mnn formats. Users can clone the project locally, clone the desired LLM project locally, and use LLMExporter to export the model. The tool supports various export options like exporting the entire model as one onnx model, exporting model segments as multiple models, exporting model vocabulary to a text file, exporting specific model layers like Embedding and lm_head, testing the model with queries, validating onnx model consistency with onnxruntime, converting onnx models to mnn models, and more. Users can specify export paths, skip optimization steps, and merge lora weights before exporting.

export_llama_to_onnx

Export LLM like llama to ONNX files without modifying transformers modeling_xx_model.py. Supported models include llama (Hugging Face format), Baichuan, Alibaba Qwen 1.5/2, ChatGlm2/ChatGlm3, and Gemma. Usage examples provided for exporting different models to ONNX files. Various arguments can be used to configure the export process. Note on uninstalling/disabling FlashAttention and xformers before model conversion. Recommendations for handling kv_cache format and simplifying large ONNX models. Disclaimer regarding correctness of exported models and consequences of usage.

fastc

Fastc is a tool focused on CPU execution, using efficient models for embedding generation and cosine similarity classification. It allows for efficient multi-classifier execution without extra overhead. Users can easily train text classifiers, export models, publish to HuggingFace, load existing models, make class predictions, use instruct templates, and launch an inference server. The tool provides an HTTP API for text classification with JSON payloads and supports multiple languages for language identification.

uzu

uzu is a high-performance inference engine for AI models on Apple Silicon. It features a simple, high-level API, hybrid architecture for GPU kernel computation, unified model configurations, traceable computations, and utilizes unified memory on Apple devices. The tool provides a CLI mode for running models, supports its own model format, and offers prebuilt Swift and TypeScript frameworks for bindings. Users can quickly start by adding the uzu dependency to their Cargo.toml and creating an inference Session with a specific model and configuration. Performance benchmarks show metrics for various models on Apple M2, highlighting the tokens/s speed for each model compared to llama.cpp with bf16/f16 precision.

BambooAI

BambooAI is a lightweight library utilizing Large Language Models (LLMs) to provide natural language interaction capabilities, much like a research and data analysis assistant enabling conversation with your data. You can either provide your own data sets, or allow the library to locate and fetch data for you. It supports Internet searches and external API interactions.

ai_all_resources

This repository is a compilation of excellent ML and DL tutorials created by various individuals and organizations. It covers a wide range of topics, including machine learning fundamentals, deep learning, computer vision, natural language processing, reinforcement learning, and more. The resources are organized into categories, making it easy to find the information you need. Whether you're a beginner or an experienced practitioner, you're sure to find something valuable in this repository.

AHU-AI-Repository

This repository is dedicated to the learning and exchange of resources for the School of Artificial Intelligence at Anhui University. Notes will be published on this website first: https://www.aoaoaoao.cn and will be synchronized to the repository regularly. You can also contact me at [email protected].

info8006-introduction-to-ai

INFO8006 Introduction to Artificial Intelligence is a course at ULiège that covers various topics in AI such as intelligent agents, problem-solving, games, probabilistic reasoning, machine learning, neural networks, reinforcement learning, and decision-making. The course includes lectures, exercises, and programming projects using Python. Students can access course materials, previous exams, and archived lectures to enhance their understanding of AI concepts.

For similar jobs

weave

Weave is a toolkit for developing Generative AI applications, built by Weights & Biases. With Weave, you can log and debug language model inputs, outputs, and traces; build rigorous, apples-to-apples evaluations for language model use cases; and organize all the information generated across the LLM workflow, from experimentation to evaluations to production. Weave aims to bring rigor, best-practices, and composability to the inherently experimental process of developing Generative AI software, without introducing cognitive overhead.

LLMStack

LLMStack is a no-code platform for building generative AI agents, workflows, and chatbots. It allows users to connect their own data, internal tools, and GPT-powered models without any coding experience. LLMStack can be deployed to the cloud or on-premise and can be accessed via HTTP API or triggered from Slack or Discord.

VisionCraft

The VisionCraft API is a free API for using over 100 different AI models. From images to sound.

kaito

Kaito is an operator that automates the AI/ML inference model deployment in a Kubernetes cluster. It manages large model files using container images, avoids tuning deployment parameters to fit GPU hardware by providing preset configurations, auto-provisions GPU nodes based on model requirements, and hosts large model images in the public Microsoft Container Registry (MCR) if the license allows. Using Kaito, the workflow of onboarding large AI inference models in Kubernetes is largely simplified.

PyRIT

PyRIT is an open access automation framework designed to empower security professionals and ML engineers to red team foundation models and their applications. It automates AI Red Teaming tasks to allow operators to focus on more complicated and time-consuming tasks and can also identify security harms such as misuse (e.g., malware generation, jailbreaking), and privacy harms (e.g., identity theft). The goal is to allow researchers to have a baseline of how well their model and entire inference pipeline is doing against different harm categories and to be able to compare that baseline to future iterations of their model. This allows them to have empirical data on how well their model is doing today, and detect any degradation of performance based on future improvements.

tabby

Tabby is a self-hosted AI coding assistant, offering an open-source and on-premises alternative to GitHub Copilot. It boasts several key features: * Self-contained, with no need for a DBMS or cloud service. * OpenAPI interface, easy to integrate with existing infrastructure (e.g Cloud IDE). * Supports consumer-grade GPUs.

spear

SPEAR (Simulator for Photorealistic Embodied AI Research) is a powerful tool for training embodied agents. It features 300 unique virtual indoor environments with 2,566 unique rooms and 17,234 unique objects that can be manipulated individually. Each environment is designed by a professional artist and features detailed geometry, photorealistic materials, and a unique floor plan and object layout. SPEAR is implemented as Unreal Engine assets and provides an OpenAI Gym interface for interacting with the environments via Python.

Magick

Magick is a groundbreaking visual AIDE (Artificial Intelligence Development Environment) for no-code data pipelines and multimodal agents. Magick can connect to other services and comes with nodes and templates well-suited for intelligent agents, chatbots, complex reasoning systems and realistic characters.