Awesome-Trustworthy-Embodied-AI


Stars: 63

The Awesome Trustworthy Embodied AI repository focuses on the development of safe and trustworthy Embodied Artificial Intelligence (EAI) systems. It addresses critical challenges related to safety and trustworthiness in EAI, proposing a unified research framework and defining levels of safety and resilience. The repository provides a comprehensive review of state-of-the-art solutions, benchmarks, and evaluation metrics, aiming to bridge the gap between capability advancement and safety mechanisms in EAI development.

README:

Xin Tan* 🔗 · Bangwei Liu* · Yicheng Bao · Qijian Tian🔗 · Zhenkun Gao · Xiongbin Wu · Zhihao Luo · Sen Wang · Yuqi Zhang · Xuhong Wang§ 🔗 · Chaochao Lu§† 🔗 · Bowen Zhou§‡ 🔗

上海人工智能实验室 / Shanghai Artificial Intelligence Laboratory 🔗

华东师范大学 / East China Normal University 🔗

清华大学 / Tsinghua University 🔗

The increasing autonomy and physical capability of Embodied Artificial Intelligence (EAI) introduce critical challenges to safety and trustworthiness. Unlike purely digital AI, failures in perception, planning, or interaction can lead to direct physical harm, property damage, or violations of human safety and social norms. However, current EAI foundation models disregard the risk of misalignment between their capabilities and their safety and trustworthiness competencies. Some works attempt to address these issues; however, they lack a unified framework capable of balancing the developmental trajectories of safety and capability. In this paper, we first give a comprehensive definition of the new term "safe and trustworthy EAI" by establishing an L1–L5 levels framework and proposing ten core principles of trustworthiness and safety. To unify fragmented research efforts, we propose a novel agent-centric framework that analyzes risks across the four operational stages of an EAI system. We systematically review state-of-the-art but fragmented solutions, benchmarks, and evaluation metrics, identifying key gaps and challenges. Finally, we identify the need for a paradigm shift away from optimizing isolated components towards a holistic, cybernetic approach. We argue that future progress hinges on engineering the closed-loop system of the agent (Self), its environment (World), and their dynamic coupling (Interaction), paving the way for the next generation of truly safe and trustworthy EAI.

While embodied AI (EAI) products are rapidly improving in capability, they often lack reliable safety mechanisms. In contrast, academic safety research remains fragmented and lags behind in capability. This divergence has raised significant concerns about the trustworthiness of physical AI systems. To address this gap, we propose a unified research framework that integrates capability advancement with trustworthy safety, paving the way for the development of safe and aligned embodied agents.

Level L1

- Goal: Refuse harmful instructions; follow basic safety norms.

- How: Instruction tuning, RLHF, safety filters, red-teaming data.

- Limit: Correlation-based; vulnerable to jailbreaks and distribution shifts.
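To make the first level's "safety filters" and its correlation-based limit concrete, here is a minimal sketch of a rule-based instruction screen. The hazard categories and keyword rules in `HAZARD_RULES` are hypothetical and chosen only for illustration; deployed systems typically rely on learned classifiers or aligned models rather than keyword sets.

```python
import re
from dataclasses import dataclass
from typing import Dict, List, Optional, Set

# Hypothetical hazard rules (illustrative only): an instruction is refused
# if it contains every keyword of at least one rule.
HAZARD_RULES: Dict[str, List[Set[str]]] = {
    "fire": [{"set", "fire"}, {"ignite"}],
    "sharp_object": [{"throw", "knife"}, {"stab"}],
    "unsafe_appliance": [{"microwave", "fork"}, {"microwave", "metal"}],
}


@dataclass
class FilterResult:
    allowed: bool
    category: Optional[str] = None


def tokenize(text: str) -> Set[str]:
    """Lower-case the text and split it into alphabetic word tokens."""
    return set(re.findall(r"[a-z]+", text.lower()))


def screen_instruction(text: str) -> FilterResult:
    """Refuse the instruction if all keywords of any hazard rule appear in it."""
    tokens = tokenize(text)
    for category, rules in HAZARD_RULES.items():
        for required in rules:
            if required <= tokens:  # subset test: every keyword is present
                return FilterResult(allowed=False, category=category)
    return FilterResult(allowed=True)


if __name__ == "__main__":
    print(screen_instruction("Please set the curtains on fire"))
    print(screen_instruction("Put a fork in the microwave"))
    # A paraphrase of the same hazard slips through: the filter matches
    # surface word correlates, not the underlying physical risk.
    print(screen_instruction("Heat a steel utensil in the microwave"))
```

The last call illustrates the listed limit: a paraphrase that avoids the trigger words passes the screen even though the physical hazard is unchanged.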

Level L2

- Goal: Let humans halt or redirect the agent before risky actions.

- How: Interrupt channels, intent/plan display, trajectory visualization.

- Limit: Needs constant oversight; scales poorly to high autonomy.
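The second level's interrupt channel and plan display can be sketched as an executor that shows the whole plan up front and checks an operator stop signal before every step. This is a minimal illustration, assuming a `threading.Event` stands in for the interrupt channel and that plan steps are atomic; the function and step names are hypothetical.

```python
import threading
from typing import Callable, List


def execute_with_interrupt(
    plan: List[str],
    act: Callable[[str], None],
    stop: threading.Event,
    show: Callable[[str], None] = print,
) -> List[str]:
    """Run plan steps in order, halting as soon as the operator sets `stop`.

    Returns the list of steps that were actually executed.
    """
    # Intent display: expose the full plan before anything moves.
    show("Planned steps: " + " -> ".join(plan))
    executed: List[str] = []
    for step in plan:
        # The stop signal is only checked between steps, so the worst-case
        # reaction time is the duration of one step.
        if stop.is_set():
            show(f"Halted by operator before step: {step}")
            break
        act(step)
        executed.append(step)
    return executed


if __name__ == "__main__":
    stop_signal = threading.Event()

    def act(step: str) -> None:
        print("executing:", step)
        if step == "grasp knife":
            # Simulated operator reaction; in a real system this would be
            # set from a UI or teleoperation thread.
            stop_signal.set()

    execute_with_interrupt(
        ["move to counter", "grasp knife", "move toward person"], act, stop_signal
    )
```

Because the check happens between steps, this pattern cannot interrupt mid-motion; hard real-time stops are usually enforced lower in the control stack. It also makes the listed limit visible: someone has to be watching for `stop` to be set at all.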

Level L3

- Goal: Internalize validated safe behaviors.

- How: Imitation learning, behavior cloning, curated safety playbooks.

- Limit: Limited generalization to novel tasks and task combinations.
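The third level internalizes demonstrated safe behavior. As a minimal stand-in for behavior cloning, the sketch below uses a 1-nearest-neighbor policy over (state, action) demonstrations instead of a trained network; the state features, actions, and the `max_distance` threshold are all hypothetical. The distance check makes the listed limit explicit: states far from every demonstration get no confident action.

```python
import math
from typing import List, Optional, Sequence, Tuple

# A demonstration pairs a state vector with the safe action an expert took.
Demo = Tuple[Sequence[float], str]


class NearestNeighborBC:
    """1-NN behavior cloning: copy the action of the closest demonstrated state."""

    def __init__(self, demos: List[Demo], max_distance: float) -> None:
        if not demos:
            raise ValueError("need at least one demonstration")
        self.demos = demos
        self.max_distance = max_distance

    def act(self, state: Sequence[float]) -> Optional[str]:
        """Return the cloned action, or None if the state is out of distribution."""
        nearest_state, nearest_action = min(
            self.demos, key=lambda demo: math.dist(demo[0], state)
        )
        if math.dist(nearest_state, state) > self.max_distance:
            return None  # novel situation: defer to a fallback or a human
        return nearest_action


if __name__ == "__main__":
    # Hypothetical state: (distance to obstacle in m, speed in m/s).
    policy = NearestNeighborBC(
        demos=[((0.3, 0.5), "stop"), ((1.0, 0.5), "slow_down"), ((3.0, 0.5), "cruise")],
        max_distance=0.6,
    )
    print(policy.act((0.4, 0.5)))  # close to the "stop" demonstration
    print(policy.act((0.5, 3.0)))  # far faster than anything demonstrated
```

A curated safety playbook can be read the same way: a lookup over vetted situations that is reliable near its entries and silent beyond them.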

Level L4

- Goal: Continual self-improvement; proactive patching.

- How: Continual learning, self red-teaming, safety-aware exploration.

- Limit: Empirical assurance only; no formal guarantees in advance.
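One way to picture the fourth level's self red-teaming and proactive patching is a loop that mutates known harmful instructions, checks whether the current filter still catches them, and patches the filter whenever a variant slips through. The sketch assumes a toy synonym table (`SYNONYMS`) as the mutation operator and patches by blocking the exact word set of each failing variant; both are hypothetical simplifications, and safety-aware exploration is not modeled.

```python
import re
from typing import Dict, Iterable, List, Set

# Hypothetical mutation operator: single-word synonym substitution.
SYNONYMS: Dict[str, List[str]] = {
    "knife": ["blade", "cleaver"],
    "throw": ["toss", "hurl"],
}


def tokenize(text: str) -> Set[str]:
    return set(re.findall(r"[a-z]+", text.lower()))


class PatchableFilter:
    """Blocks any instruction containing all words of some blocked word set."""

    def __init__(self, blocked: Iterable[Set[str]]) -> None:
        self.blocked: List[Set[str]] = [set(rule) for rule in blocked]

    def allows(self, text: str) -> bool:
        tokens = tokenize(text)
        return not any(rule <= tokens for rule in self.blocked)

    def patch(self, text: str) -> None:
        # Naive patch: block this exact word set. It fixes the observed
        # failure but does not generalize beyond it.
        self.blocked.append(tokenize(text))


def mutate(text: str) -> List[str]:
    """Generate variants by swapping one word for each of its synonyms."""
    words = text.split()
    variants = []
    for i, word in enumerate(words):
        for alt in SYNONYMS.get(word.lower(), []):
            variants.append(" ".join(words[:i] + [alt] + words[i + 1:]))
    return variants


def self_red_team(filt: PatchableFilter, seeds: List[str], rounds: int = 2) -> List[str]:
    """Search for filter bypasses, patch each one, and return the bypasses found."""
    found: List[str] = []
    frontier = list(seeds)
    for _ in range(rounds):
        next_frontier: List[str] = []
        for seed in frontier:
            for variant in mutate(seed):
                if filt.allows(variant):
                    found.append(variant)
                    filt.patch(variant)
                next_frontier.append(variant)
        frontier = next_frontier
    return found


if __name__ == "__main__":
    guard = PatchableFilter([{"throw", "knife"}])
    bypasses = self_red_team(guard, ["throw the knife at the user"])
    print(len(bypasses), "bypasses found and patched")
```

Because every patch is an exact word set, a synonym missing from the table would still get through, which mirrors the listed limit: the assurance is empirical and only as good as the search.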

Level L5

- Goal: Provable safety/stability of closed-loop behavior.

- How: Reachability/invariance analysis, control-theoretic synthesis, neuro-symbolic proofs.

- Limit: Model- and compute-intensive; engineering maturity still evolving.
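The fifth level's reachability analysis can be illustrated on a toy example: propagate the set of all possible states of a scalar linear system x_{k+1} = a·x_k + b·u_k under a bounded input, and check that every reachable state stays inside a safe interval over a finite horizon. This is a minimal sketch under strong assumptions (one dimension, known linear dynamics, interval-bounded input); the system, bounds, and function names are hypothetical. In this scalar case interval arithmetic yields the exact reachable set, whereas higher-dimensional or nonlinear systems need richer set representations.

```python
from typing import List, Optional, Tuple

Interval = Tuple[float, float]  # (lower bound, upper bound)


def scale(c: float, iv: Interval) -> Interval:
    """Multiply an interval by a scalar; a negative c flips the bounds."""
    lo, hi = c * iv[0], c * iv[1]
    return (min(lo, hi), max(lo, hi))


def step(x: Interval, u: Interval, a: float, b: float) -> Interval:
    """Reachable set after one step of x' = a*x + b*u (interval Minkowski sum)."""
    ax, bu = scale(a, x), scale(b, u)
    return (ax[0] + bu[0], ax[1] + bu[1])


def verify_safe(
    x0: Interval, u: Interval, a: float, b: float, safe: Interval, horizon: int
) -> Tuple[bool, Optional[int], List[Interval]]:
    """Check that all states reachable within `horizon` steps lie in `safe`.

    Returns (is_safe, first_violating_step_or_None, reachable_sets).
    """
    def inside(iv: Interval) -> bool:
        return safe[0] <= iv[0] and iv[1] <= safe[1]

    reach = [x0]
    if not inside(x0):
        return False, 0, reach
    x = x0
    for k in range(1, horizon + 1):
        x = step(x, u, a, b)
        reach.append(x)
        if not inside(x):
            return False, k, reach
    return True, None, reach


if __name__ == "__main__":
    # Contracting dynamics: [-1, 1] maps back into itself.
    ok, k, _ = verify_safe((-1.0, 1.0), (-1.0, 1.0), a=0.9, b=0.1, safe=(-2.0, 2.0), horizon=50)
    print("a=0.9:", "safe" if ok else f"violation at step {k}")
    # Expanding dynamics: the reachable set eventually leaves the safe set.
    ok, k, _ = verify_safe((-1.0, 1.0), (-1.0, 1.0), a=1.1, b=0.1, safe=(-2.0, 2.0), horizon=50)
    print("a=1.1:", "safe" if ok else f"violation at step {k}")
```

When the reachable interval maps into itself, as with a=0.9, it is an invariant set, so the finite-horizon result extends to all future steps. That is the style of argument this level relies on, at much higher modeling and compute cost for realistic robots.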

- Plug in the Safety Chip: Enforcing Constraints for LLM-driven Robot Agents (23.09) 🔗

- A Model-Agnostic Approach for Semantically Driven Disambiguation in Human-Robot Interaction (Fethiye Irmak Dogan, 25.04) 🔗

- DoRO: Disambiguation of Referred Object for Embodied Agents (Pradip Pramanick, 22.1) 🔗

- Embodied Multi-Agent Task Planning from Ambiguous Instruction (Xinzhu Liu, 22.06) 🔗

- Grounding Multimodal LLMs to Embodied Agents that Ask for Help with Reinforcement Learning (Ram Ramrakhya, 25.04) 🔗

- Improving Grounded Natural Language Understanding through Human-Robot Dialog (Jesse Thomason, 19.05) 🔗

- Inner Monologue: Embodied Reasoning through Planning with Language Models (22.07) 🔗

- Integrating Disambiguation and User Preferences into Large Language Models for Robot Motion Planning (Mohammed Abugurain, 24.04) 🔗

- NarraGuide: an LLM-based Narrative Mobile Robot for Remote Place Exploration (25.08) 🔗

- Navigation as Attackers Wish? Towards Building Robust Embodied Agents under Federated Learning (Yunchao Zhang, 22.11) 🔗

- Open-Ended Instructable Embodied Agents with Memory-Augmented Large Language Models (23.1) 🔗

- ThinkBot: Embodied Instruction Following with Thought Chain Reasoning (23.12) 🔗

- tagE: Enabling an Embodied Agent to Understand Human Instructions (23.1) 🔗

- AGENTSAFE: Benchmarking the Safety of Embodied Agents on Hazardous Instructions (Aishan Liu, 25.06) 🔗

- Advancing Embodied Agent Security: From Safety Benchmarks to Input Moderation (Ning Wang, 25.04) 🔗

- Adversarial Attacks on Robotic Vision Language Action Models (Eliot Krzysztof Jones, 25.06) 🔗

- Adversarial Training for Multimodal Large Language Models against Jailbreak Attacks (Liming Lu, 25.03) 🔗

- BadNAVer: Exploring Jailbreak Attacks On Vision-and-Language Navigation (Wenqi Lyu, 25.05) 🔗

- BadRobot: Jailbreaking Embodied LLMs in the Physical World (Hangtao Zhang, 24.07) 🔗

- Concept Enhancement Engineering: A Lightweight and Efficient Robust Defense Against Jailbreak Attacks in Embodied AI (Jirui Yang; Zheyu Lin, 25.04) 🔗

- Jailbreaking LLM-Controlled Robots (Alexander Robey, 24.1) 🔗

- MM-SafetyBench: A Benchmark for Safety Evaluation of Multimodal Large Language Models (Xin Liu, 23.11) 🔗

- POEX: Understanding and Mitigating Policy Executable Jailbreak Attacks against Embodied AI (Xuancun Lu, 24.12) 🔗

- Towards Robust Multimodal Large Language Models Against Jailbreak Attacks (Ziyi Yin, 25.02) 🔗

- Who’s in Charge Here? A Survey on Trustworthy AI in Variable Autonomy Robotic Systems (Leila Methnani, 24.04) 🔗

- Security Considerations in AI-Robotics: A Survey of Current Methods, Challenges, and Opportunities (23.1) 🔗

- Embodied Instruction Following in Unknown Environments (25.07) 🔗

- LACMA: Language-Aligning Contrastive Learning with Meta-Actions for Embodied Instruction Following (23.1) 🔗

- Semantic Skill Grounding for Embodied Instruction-Following in Cross-Domain Environments (24.08) 🔗

- Verifiably Following Complex Robot Instructions with Foundation Models (24.02) 🔗

- LLM-Driven Robots Risk Enacting Discrimination, Violence, and Unlawful Actions (24.06) 🔗

- A Survey on Adversarial Robustness of LiDAR-based Machine Learning Perception in Autonomous Vehicles (Junae Kim, 24.11) 🔗

- Adversarial Attacks and Detection in Visual Place Recognition for Safer Robot Navigation (Connor Malone, 25.01) 🔗

- BadDepth: Backdoor Attacks Against Monocular Depth Estimation in the Physical World (Ji Guo, 25.05) 🔗

- Embodied Active Defense: Leveraging Recurrent Feedback to Counter Adversarial Patches (24.03) 🔗

- Embodied Laser Attack: Leveraging Scene Priors to Achieve Agent-based Robust Non-contact Attacks (24.07) 🔗

- Random Spoofing Attack against Scan Matching Algorithm SLAM (Long) (24.02) 🔗

- SLAMSpoof: Practical LiDAR Spoofing Attacks on Localization Systems Guided by Scan Matching Vulnerability Analysis (Rokuto Nagata, 25.02) 🔗

- SoK: Rethinking Sensor Spoofing Attacks against Robotic Vehicles from a Systematic View (23.07) 🔗

- Towards Robust and Secure Embodied AI: A Survey on Vulnerabilities and Attacks (Wenpeng Xing, 25.02) 🔗

- Active SLAM With Dynamic Viewpoint Optimization for Robust Visual Navigation (Peng Li, 25.06) 🔗

- Embodied Uncertainty-Aware Object Segmentation (Xiaolin Fang, 24.1) 🔗

- Embodied active domain adaptation for semantic segmentation via informative path planning (René Zurbrügg, 22.1) 🔗

- Embodied visual active learning for semantic segmentation (David Nilsson, 21.12) 🔗

- EmbodiedGPT: Vision-Language Pre-Training via Embodied Chain of Thought (Yao Mu, 23.05) 🔗

- EmbodiedScan: A Holistic Multi-Modal 3D Perception Suite Towards Embodied AI (Tai Wang, 24.03) 🔗

- Enhancing embodied object detection through language-image pre-training and implicit object memory (Nicolas Harvey Chapman, 24.02) 🔗

- Hallucination In Object Detection -- A Study In Visual Part Verification (21.06) 🔗

- Interactron: Embodied adaptive object detection (Klemen Kotar, 22.03) 🔗

- Learn how to see: collaborative embodied learning for object detection and camera adjusting (Lingdong Shen, 24.03) 🔗

- Learning robust perceptive locomotion for quadrupedal robots in the wild (Takahiro Miki, 22.01) 🔗

- Learning to Walk by Steering: Perceptive Quadrupedal Locomotion in Dynamic Environments (Mingyo Seo, 22.09) 🔗

- Legged locomotion in challenging terrains using egocentric vision (Ananye Agarwal, 22.11) 🔗

- Move to see better: Self-improving embodied object detection (Zhaoyuan Fang, 20.12) 🔗

- Obstacle-Aware Quadrupedal Locomotion With Resilient Multi-Modal Reinforcement Learning (I Made Aswin Nahrendra, 24.09) 🔗

- OpenVLA: An Open-Source Vision-Language-Action Model (Moo Jin Kim, 24.06) 🔗

- PaLM-E: An Embodied Multimodal Language Model (Danny Driess, 23.07) 🔗

- Perception Matters: Enhancing Embodied AI with Uncertainty-Aware Semantic Segmentation (Sai Prasanna, 24.08) 🔗

- RT-2: Vision-Language-Action Models Transfer Web Knowledge to Robotic Control (23.07) 🔗

- Resilient Legged Local Navigation: Learning to Traverse with Compromised Perception End-to-End (Jin Jin, 23.1) 🔗

- Robot Manipulation Based on Embodied Visual Perception: A Survey (Sicheng Wang, 25.06) 🔗

- RobustNav: Towards Benchmarking Robustness in Embodied Navigation (Prithvijit Chattopadhyay, 21.06) 🔗

- Robustness of embodied point navigation agents (Frano Rajič, 23.02) 🔗

- ViewInfer3D: 3D Visual Grounding Based on Embodied Viewpoint Inference (Liang Geng, 24.07) 🔗

- VIMA: General Robot Manipulation with Multimodal Prompts (Yunfan Jiang, 22.1) 🔗

- AuditMAI: Towards An Infrastructure for Continuous AI Auditing (24.06) 🔗

- Monitoring and Diagnosability of Perception Systems (20.11) 🔗

- Safety Assessment for Autonomous Systems' Perception Capabilities (22.08) 🔗

- E2CL: exploration-based error correction learning for embodied agents (24.09) 🔗

- Embodied VideoAgent: Persistent Memory from Egocentric Videos and Embodied Sensors Enables Dynamic Scene Understanding (25.01) 🔗

- Good time to ask: A learning framework for asking for help in embodied visual navigation (23.06) 🔗

- Interactive task learning via embodied corrective feedback (20.09) 🔗

- Self-Explainable Affordance Learning with Embodied Caption (Zhipeng Zhang, 24.04) 🔗

- Towards Embodied Agent Intent Explanation in Human-Robot Collaboration: ACT Error Analysis and Solution Conceptualization (25.05) 🔗

- What do navigation agents learn about their environment? (Kshitij Dwivedi, 22.06) 🔗

- Improved Semantic Segmentation from Ultra-Low-Resolution RGB Images Applied to Privacy-Preserving Object-Goal Navigation (25.07) 🔗

- Is the robot spying on me? a study on perceived privacy in telepresence scenarios in a care setting with mobile and humanoid robots (24.08) 🔗

- Privacy Risks of Robot Vision: A User Study on Image Modalities and Resolution (25.05) 🔗

- Privacy beyond Data: Assessment and Mitigation of Privacy Risks in Robotic Technology for Elderly Care (24.11) 🔗

- Privacy-preserving robot vision with anonymized faces by extreme low resolution (19.11) 🔗

- Real-time privacy preservation for robot visual perception (25.05) 🔗

- An Enactive Approach to Value Alignment in Artificial Intelligence: A Matter of Relevance (25.11) 🔗

- From Strangers to Assistants: Fast Desire Alignment for Embodied Agent-User Adaptation (25.05) 🔗

- On the Sensory Commutativity of Action Sequences for Embodied Agents (21.01) 🔗

- SafeVLA: Towards Safety Alignment of Vision-Language-Action Model via Constrained Learning (Borong Zhang, 25.03) 🔗

- A Secure Robot Learning Framework for Cyber Attack Scheduling and Countermeasure (Chengwei Wu, 23.06) 🔗

- Optimal Actuator Attacks on Autonomous Vehicles Using Reinforcement Learning (Pengyu Wang, 25.02) 🔗

- AdvGrasp: Adversarial Attacks on Robotic Grasping from a Physical Perspective (Xiaofei Wang, 25.07) 🔗

- Robust Humanoid Locomotion Using Trajectory Optimization and Sample-Efficient Learning (19.07) 🔗

- Robust Push Recovery on Bipedal Robots: Leveraging Multi-Domain Hybrid Systems with Reduced-Order Model Predictive Control (Min Dai, 25.04) 🔗

- Controllability, Observability, Realizability, and Stability of Dynamic Linear Systems (John M. Davis, 2009.01) 🔗

- On the general theory of control systems (1959.12) 🔗

- A Review of Future and Ethical Perspectives of Robotics and AI (Jim Torresen, 18.1) 🔗

- Disability 4.0: bioethical considerations on the use of embodied artificial intelligence (24.08) 🔗

- Humanoid Robots in Tourism and Hospitality—Exploring Managerial, Ethical, and Societal Challenges (Ida Skubis, 24.12) 🔗

- Contact-GraspNet: Efficient 6-DoF Grasp Generation in Cluttered Scenes (Martin Sundermeyer, 21.05) 🔗

- Denoising diffusion probabilistic models (Jonathan Ho, 20.06) 🔗

- Dex-NeRF: Using a Neural Radiance Field to Grasp Transparent Objects (Jeffrey Ichnowski, 21.1) 🔗

- Diffusion Policy: Visuomotor Policy Learning via Action Diffusion (Cheng Chi, 23.03) 🔗

- Form2Fit: Learning Shape Priors for Generalizable Assembly from Disassembly (Kevin Zakka, 19.1) 🔗

- From LLMs to Actions: Latent Codes as Bridges in Hierarchical Robot Control (24.05) 🔗

- Hierarchical Diffusion Policy for Kinematics-Aware Multi-Task Robotic Manipulation (Xiao Ma, 24.03) 🔗

- Look before you leap: Unveiling the power of GPT-4V in robotic vision-language planning (23.11) 🔗

- PartManip: Learning Cross-Category Generalizable Part Manipulation Policy from Point Cloud Observations (Haoran Geng, 23.03) 🔗

- Planning with Diffusion for Flexible Behavior Synthesis (22.05) 🔗

- RIC: Rotate-Inpaint-Complete for Generalizable Scene Reconstruction (Isaac Kasahara, 23.07) 🔗

- SE(3)-DiffusionFields: Learning smooth cost functions for joint grasp and motion optimization through diffusion (Julen Urain; Niklas Funk, 23.07) 🔗

- SkillDiffuser: Interpretable Hierarchical Planning via Skill Abstractions in Diffusion-Based Task Execution (23.12) 🔗

- TransCG: A Large-Scale Real-World Dataset for Transparent Object Depth Completion and a Grasping Baseline (Hongjie Fang, 22.02) 🔗

- Vision-Language-Action Model and Diffusion Policy Switching Enables Dexterous Control of an Anthropomorphic Hand (Cheng Pan, 24.1) 🔗

- DynaMem: Online Dynamic Spatio-Semantic Memory for Open World Mobile Manipulation (Peiqi Liu, 25.05) 🔗

- Ella: Embodied Social Agents with Lifelong Memory (Hongxin Zhang, 25.06) 🔗

- Embodied-RAG: General Non-parametric Embodied Memory for Retrieval and Generation (Quanting Xie, 25.01) 🔗

- Generative Agents: Interactive Simulacra of Human Behavior (Joon Sung Park, 23.08) 🔗

- HomeRobot: Open-Vocabulary Mobile Manipulation (Sriram Yenamandra, 23.06) 🔗

- ReAct: Synergizing Reasoning and Acting in Language Models (Shunyu Yao, 23.05) 🔗

- ReMEmbR: Building and Reasoning Over Long-Horizon Spatio-Temporal Memory for Robot Navigation (Abrar Anwar, 24.09) 🔗

- Reinforced Reasoning for Embodied Planning (Di Wu, 25.05) 🔗

- Robo-Troj: Attacking LLM-based Task Planners (Mohaiminul Al Nahian; Zainab Altaweel, 25.04) 🔗

- Semi-Parametric Topological Memory for Navigation (Nikolay Savinov, 18.05) 🔗

- SafeAgentBench: A Benchmark for Safe Task Planning of Embodied LLM Agents (Sheng Yin, 24.12) 🔗

- Safety Control of Service Robots with LLMs and Embodied Knowledge Graphs (Yong Qi, 24.05) 🔗

- SnapMem: Snapshot-based 3D Scene Memory for Embodied Exploration and Reasoning (Yuncong Yang, 24.11) 🔗

- Thinking in Space: How Multimodal Large Language Models See, Remember, and Recall Spaces (Jihan Yang, 24.12) 🔗

- BadVLA: Towards Backdoor Attacks on Vision-Language-Action Models via Objective-Decoupled Optimization (Xueyang Zhou, 25.05) 🔗

- Characterizing Physical Adversarial Attacks on Robot Motion Planners (Wenxi Wu, 24.01) 🔗

- Exploring the Robustness of Decision-Level Through Adversarial Attacks on LLM-Based Embodied Models (Shuyuan Liu, 24.05) 🔗

- From Screens to Scenes: A Survey of Embodied AI in Healthcare (Yihao Liu, 25.03) 🔗

- Introducing the Robot Security Framework (RSF), a Standardized Methodology to Perform Security Assessments in Robotics (Abiodun Sunday Adebayo, 23.12) 🔗

- EHAZOP: A Proof of Concept Ethical Hazard Analysis of an Assistive Robot (24.06) 🔗

- Safety Aware Task Planning via Large Language Models in Robotics (Azal Ahmad Khan, 25.03) 🔗

- Safety assurances for human-robot interaction via confidence-aware game-theoretic human models (21.1) 🔗

- Trust-aware motion planning for human-robot collaboration under distribution temporal logic specifications (23.1) 🔗

- VLM-Social-Nav: Socially Aware Robot Navigation through Scoring using Vision-Language Models (24.11) 🔗

- Generating Explanations for Embodied Action Decision from Visual Observation (Xiaohan Wang, 23.1) 🔗

- Manipulating Neural Path Planners via Slight Perturbations (Zikang Xiong, 24.03) 🔗

- Multi-Modal Multi-Task (M3T) Federated Foundation Models for Embodied AI: Potentials and Challenges for Edge Integration (Kasra Borazjani, 25.05) 🔗

- Ask4Help: Learning to Leverage an Expert for Embodied Tasks (Kunal Pratap Singh, 22.11) 🔗

- Embodied Escaping: End-to-End Reinforcement Learning for Robot Navigation in Narrow Environment (Han Zheng, 25.03) 🔗

- Habitat-web: Learning embodied object-search strategies from human demonstrations at scale (22.04) 🔗

- LLaPa: A Vision-Language Model Framework for Counterfactual-Aware Procedural Planning (Shibo Sun, 25.07) 🔗

- Uncertainty in Action: Confidence Elicitation in Embodied Agents (Tianjiao Yu, 25.03) 🔗

- Disability 4.0: bioethical considerations on the use of embodied artificial intelligence (Francesco De Micco, 24.08) 🔗

- Humanizing AI in medical training: ethical framework for responsible design (Mohammed Tahri Sqalli, 23.05) 🔗

@article{tan2025safetrustworthyeai,
  title={Towards Safe and Trustworthy Embodied AI: Foundations, Status, and Prospects},
  author={Tan, Xin and Liu, Bangwei and Bao, Yicheng and Tian, Qijian and
          Gao, Zhenkun and Wu, Xiongbin and Luo, Zhihao and Wang, Sen and
          Zhang, Yuqi and Wang, Xuhong and Lu, Chaochao and Zhou, Bowen},
  journal={OpenReview},
  url={https://openreview.net/pdf?id=Eu6Yt21Alv},
  year={2025}
}

Click tags to check more tools for each tasksFor Jobs:

Alternative AI tools for Awesome-Trustworthy-Embodied-AI

Similar Open Source Tools

Awesome-Trustworthy-Embodied-AI

The Awesome Trustworthy Embodied AI repository focuses on the development of safe and trustworthy Embodied Artificial Intelligence (EAI) systems. It addresses critical challenges related to safety and trustworthiness in EAI, proposing a unified research framework and defining levels of safety and resilience. The repository provides a comprehensive review of state-of-the-art solutions, benchmarks, and evaluation metrics, aiming to bridge the gap between capability advancement and safety mechanisms in EAI development.

Fueling-Ambitions-Via-Book-Discoveries

Fueling-Ambitions-Via-Book-Discoveries is an Advanced Machine Learning & AI Course designed for students, professionals, and AI researchers. The course integrates rigorous theoretical foundations with practical coding exercises, ensuring learners develop a deep understanding of AI algorithms and their applications in finance, healthcare, robotics, NLP, cybersecurity, and more. Inspired by MIT, Stanford, and Harvard’s AI programs, it combines academic research rigor with industry-standard practices used by AI engineers at companies like Google, OpenAI, Facebook AI, DeepMind, and Tesla. Learners can learn 50+ AI techniques from top Machine Learning & Deep Learning books, code from scratch with real-world datasets, projects, and case studies, and focus on ML Engineering & AI Deployment using Django & Streamlit. The course also offers industry-relevant projects to build a strong AI portfolio.

data-scientist-roadmap2024

The Data Scientist Roadmap2024 provides a comprehensive guide to mastering essential tools for data science success. It includes programming languages, machine learning libraries, cloud platforms, and concepts categorized by difficulty. The roadmap covers a wide range of topics from programming languages to machine learning techniques, data visualization tools, and DevOps/MLOps tools. It also includes web development frameworks and specific concepts like supervised and unsupervised learning, NLP, deep learning, reinforcement learning, and statistics. Additionally, it delves into DevOps tools like Airflow and MLFlow, data visualization tools like Tableau and Matplotlib, and other topics such as ETL processes, optimization algorithms, and financial modeling.

OpenRedTeaming

OpenRedTeaming is a repository focused on red teaming for generative models, specifically large language models (LLMs). The repository provides a comprehensive survey on potential attacks on GenAI and robust safeguards. It covers attack strategies, evaluation metrics, benchmarks, and defensive approaches. The repository also implements over 30 auto red teaming methods. It includes surveys, taxonomies, attack strategies, and risks related to LLMs. The goal is to understand vulnerabilities and develop defenses against adversarial attacks on large language models.

language-ai-engineering-lab

The Language AI Engineering Lab is a structured repository focusing on Generative AI, guiding users from language fundamentals to production-ready Language AI systems. It covers topics like NLP, Transformers, Large Language Models, and offers hands-on learning paths, practical implementations, and end-to-end projects. The repository includes in-depth concepts, diagrams, code examples, and videos to support learning. It also provides learning objectives for various areas of Language AI engineering, such as NLP, Transformers, LLM training, prompt engineering, context management, RAG pipelines, context engineering, evaluation, model context protocol, LLM orchestration, agentic AI systems, multimodal models, MLOps, LLM data engineering, and domain applications like IVR and voice systems.

ToolUniverse

ToolUniverse is a collection of 211 biomedical tools designed for Agentic AI, providing access to biomedical knowledge for solving therapeutic reasoning tasks. The tools cover various aspects of drugs and diseases, linked to trusted sources like US FDA-approved drugs since 1939, Open Targets, and Monarch Initiative.

AiLearning-Theory-Applying

This repository provides a comprehensive guide to understanding and applying artificial intelligence (AI) theory, including basic knowledge, machine learning, deep learning, and natural language processing (BERT). It features detailed explanations, annotated code, and datasets to help users grasp the concepts and implement them in practice. The repository is continuously updated to ensure the latest information and best practices are covered.

tensorzero

TensorZero is an open-source platform that helps LLM applications graduate from API wrappers into defensible AI products. It enables a data & learning flywheel for LLMs by unifying inference, observability, optimization, and experimentation. The platform includes a high-performance model gateway, structured schema-based inference, observability, experimentation, and data warehouse for analytics. TensorZero Recipes optimize prompts and models, and the platform supports experimentation features and GitOps orchestration for deployment.

llm.hunyuan.T1

Hunyuan-T1 is a cutting-edge large-scale hybrid Mamba reasoning model driven by reinforcement learning. It has been officially released as an upgrade to the Hunyuan Thinker-1-Preview model. The model showcases exceptional performance in deep reasoning tasks, leveraging the TurboS base and Mamba architecture to enhance inference capabilities and align with human preferences. With a focus on reinforcement learning training, the model excels in various reasoning tasks across different domains, showcasing superior abilities in mathematical, logical, scientific, and coding reasoning. Through innovative training strategies and alignment with human preferences, Hunyuan-T1 demonstrates remarkable performance in public benchmarks and internal evaluations, positioning itself as a leading model in the field of reasoning.

ITBench

ITBench is a platform designed to measure the performance of AI agents in complex and real-world inspired IT automation tasks. It focuses on three key use cases: Site Reliability Engineering (SRE), Compliance & Security Operations (CISO), and Financial Operations (FinOps). The platform provides a real-world representation of IT environments, open and extensible framework, push-button workflows, and Kubernetes-based scenario environments. Researchers and developers can replicate real-world incidents in Kubernetes environments, develop AI agents, and evaluate them using a fully-managed leaderboard.

awesome-object-detection-datasets

This repository is a curated list of awesome public object detection and recognition datasets. It includes a wide range of datasets related to object detection and recognition tasks, such as general detection and recognition datasets, autonomous driving datasets, adverse weather datasets, person detection datasets, anti-UAV datasets, optical aerial imagery datasets, low-light image datasets, infrared image datasets, SAR image datasets, multispectral image datasets, 3D object detection datasets, vehicle-to-everything field datasets, super-resolution field datasets, and face detection and recognition datasets. The repository also provides information on tools for data annotation, data augmentation, and data management related to object detection tasks.

MedLLMsPracticalGuide

This repository serves as a practical guide for Medical Large Language Models (Medical LLMs) and provides resources, surveys, and tools for building, fine-tuning, and utilizing LLMs in the medical domain. It covers a wide range of topics including pre-training, fine-tuning, downstream biomedical tasks, clinical applications, challenges, future directions, and more. The repository aims to provide insights into the opportunities and challenges of LLMs in medicine and serve as a practical resource for constructing effective medical LLMs.

llms-interview-questions

This repository contains a comprehensive collection of 63 must-know Large Language Models (LLMs) interview questions. It covers topics such as the architecture of LLMs, transformer models, attention mechanisms, training processes, encoder-decoder frameworks, differences between LLMs and traditional statistical language models, handling context and long-term dependencies, transformers for parallelization, applications of LLMs, sentiment analysis, language translation, conversation AI, chatbots, and more. The readme provides detailed explanations, code examples, and insights into utilizing LLMs for various tasks.

unilm

The 'unilm' repository is a collection of tools, models, and architectures for Foundation Models and General AI, focusing on tasks such as NLP, MT, Speech, Document AI, and Multimodal AI. It includes various pre-trained models, such as UniLM, InfoXLM, DeltaLM, MiniLM, AdaLM, BEiT, LayoutLM, WavLM, VALL-E, and more, designed for tasks like language understanding, generation, translation, vision, speech, and multimodal processing. The repository also features toolkits like s2s-ft for sequence-to-sequence fine-tuning and Aggressive Decoding for efficient sequence-to-sequence decoding. Additionally, it offers applications like TrOCR for OCR, LayoutReader for reading order detection, and XLM-T for multilingual NMT.

Awesome-LLM-in-Social-Science

Awesome-LLM-in-Social-Science is a repository that compiles papers evaluating Large Language Models (LLMs) from a social science perspective. It includes papers on evaluating, aligning, and simulating LLMs, as well as enhancing tools in social science research. The repository categorizes papers based on their focus on attitudes, opinions, values, personality, morality, and more. It aims to contribute to discussions on the potential and challenges of using LLMs in social science research.

awesome-business-of-cybersecurity

The 'Awesome Business of Cybersecurity' repository is a comprehensive resource exploring the cybersecurity market, focusing on publicly traded companies, industry strategy, and AI capabilities. It provides insights into how cybersecurity companies operate, compete, and evolve across 18 solution categories and beyond. The repository offers structured information on the cybersecurity market snapshot, specialists vs. multiservice cybersecurity companies, cybersecurity stock lists, endpoint protection and threat detection, network security, identity and access management, cloud and application security, data protection and governance, security analytics and threat intelligence, non-US traded cybersecurity companies, cybersecurity ETFs, blogs and newsletters, podcasts, market insights and research, and cybersecurity solutions categories.

For similar jobs

LLM-and-Law

This repository summarizes papers at the intersection of large language models and law. It covers applications of large language models to legal tasks, legal agents, legal problems raised by large language models, data resources for large language models in law, law-specific LLMs, and the evaluation of large language models in the legal domain.

start-llms

This repository is a comprehensive guide for individuals looking to start and improve their skills in Large Language Models (LLMs) without an advanced background in the field. It provides free resources, online courses, books, articles, and practical tips to become an expert in machine learning. The guide covers topics such as terminology, transformers, prompting, retrieval augmented generation (RAG), and more. It also includes recommendations for podcasts, YouTube videos, and communities to stay updated with the latest news in AI and LLMs.

aiverify

AI Verify is an AI governance testing framework and software toolkit that validates the performance of AI systems against internationally recognised principles through standardised tests. It offers a new API Connector feature to bypass size limitations, test various AI frameworks, and configure connection settings for batch requests. The toolkit operates within an enterprise environment, conducting technical tests on common supervised learning models for tabular and image datasets. It does not define AI ethical standards or guarantee complete safety from risks or biases.

Awesome-LLM-Watermark

This repository contains a collection of research papers related to watermarking techniques for text and images, specifically focusing on large language models (LLMs). The papers cover various aspects of watermarking LLM-generated content, including robustness, statistical understanding, topic-based watermarks, quality-detection trade-offs, dual watermarks, watermark collision, and more. Researchers have explored different methods and frameworks for watermarking LLMs to protect intellectual property, detect machine-generated text, improve generation quality, and evaluate watermarking techniques. The repository serves as a valuable resource for those interested in the field of watermarking for LLMs.
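To make "detecting machine-generated text" concrete, here is a minimal sketch of one widely cited family of schemes collected in lists like this, the green-list watermark of Kirchenbauer et al. (2023). It assumes a keyed hash of (previous token, candidate token) decides whether a token is "green" with probability `gamma`, and that detection is a one-proportion z-test on the green-token count. All function names, the key, and the "hard" generator (which samples only green tokens) are hypothetical illustrations, not code from the repository or from any paper.

```python
import hashlib
import math
import random
from typing import List


def is_green(prev_token: int, token: int, gamma: float = 0.25, key: str = "secret") -> bool:
    """Hypothetical keyed partition: a token is green w.p. ~gamma, seeded by its predecessor."""
    digest = hashlib.sha256(f"{key}:{prev_token}:{token}".encode()).digest()
    # Map the first 8 bytes of the hash to a float in [0, 1).
    u = int.from_bytes(digest[:8], "big") / 2**64
    return u < gamma


def watermark_z_score(tokens: List[int], gamma: float = 0.25, key: str = "secret") -> float:
    """z = (green - gamma*T) / sqrt(T*gamma*(1-gamma)); large values suggest a watermark."""
    T = len(tokens) - 1  # each scored token needs a predecessor
    if T <= 0:
        raise ValueError("need at least two tokens")
    green = sum(is_green(p, t, gamma, key) for p, t in zip(tokens, tokens[1:]))
    return (green - gamma * T) / math.sqrt(T * gamma * (1 - gamma))


def toy_watermarked_sequence(length: int, vocab_size: int = 1000, gamma: float = 0.25,
                             key: str = "secret", seed: int = 0) -> List[int]:
    """Hypothetical 'hard' watermark: each next token is drawn only from the green list."""
    rng = random.Random(seed)
    seq = [rng.randrange(vocab_size)]
    while len(seq) < length:
        candidates = [t for t in range(vocab_size) if is_green(seq[-1], t, gamma, key)]
        # Fall back to the full vocabulary in the (unlikely) case no token is green.
        seq.append(rng.choice(candidates or list(range(vocab_size))))
    return seq
```

For a 200-token sequence produced by `toy_watermarked_sequence`, all 199 scored tokens are green, so the z-score is far above common detection thresholds, while uniformly random token ids score near zero. Real schemes usually add a soft logit bias instead of forbidding red tokens, which is one source of the quality-detection trade-off mentioned above.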

LLM-LieDetector

This repository contains code for reproducing experiments on lie detection in black-box LLMs by asking unrelated questions. It includes Q/A datasets, prompts, and fine-tuning datasets for generating lies with language models. The lie detectors ask binary 'elicitation questions' to diagnose whether the model has lied. The code covers generating lies from language models, training and testing lie detectors, and generalization experiments. Running the experiments requires GPU access for the open-source models as well as OpenAI API calls. Results are stored in the repository for reproducibility.
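The elicitation-question idea can be sketched as a binary classifier over the model's yes/no answers. This sketch assumes a logistic-regression detector trained by batch gradient descent on synthetic data: five hypothetical questions, with a lying model answering "yes" to each with probability 0.8 and an honest model with probability 0.2. The questions, probabilities, and function names are illustrative assumptions, not the repository's code or data.

```python
import math
import random
from typing import List, Sequence, Tuple


def sigmoid(z: float) -> float:
    return 1.0 / (1.0 + math.exp(-z))


def train_logreg(X: Sequence[Sequence[int]], y: Sequence[int],
                 lr: float = 0.5, epochs: int = 500) -> List[float]:
    """Batch gradient descent on the logistic loss; returns [bias, w_1, ..., w_d]."""
    d, n = len(X[0]), len(X)
    w = [0.0] * (d + 1)
    for _ in range(epochs):
        grad = [0.0] * (d + 1)
        for xi, yi in zip(X, y):
            err = sigmoid(w[0] + sum(wj * xj for wj, xj in zip(w[1:], xi))) - yi
            grad[0] += err
            for j, xj in enumerate(xi):
                grad[j + 1] += err * xj
        w = [wj - lr * g / n for wj, g in zip(w, grad)]
    return w


def lie_probability(w: List[float], answers: Sequence[int]) -> float:
    """answers[j] = 1 if the model said 'yes' to hypothetical elicitation question j."""
    return sigmoid(w[0] + sum(wj * a for wj, a in zip(w[1:], answers)))


def synthetic_transcripts(n: int, n_questions: int = 5, seed: int = 0) -> Tuple[List[List[int]], List[int]]:
    """Hypothetical data: label 1 = model just lied, 0 = model answered honestly."""
    rng = random.Random(seed)
    X, y = [], []
    for i in range(n):
        lied = i % 2  # balanced classes
        p_yes = 0.8 if lied else 0.2
        X.append([int(rng.random() < p_yes) for _ in range(n_questions)])
        y.append(lied)
    return X, y
```

Because the detector only sees answers to fixed follow-up questions, it needs no access to model internals, which is what makes this approach usable on black-box models.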

graphrag

The GraphRAG project is a data pipeline and transformation suite designed to extract meaningful, structured data from unstructured text using LLMs. It enhances LLMs' ability to reason about private data. The repository provides guidance on using knowledge graph memory structures to enhance LLM outputs, with a warning about the potential costs of GraphRAG indexing. It offers contribution guidelines, development resources, and encourages prompt tuning for optimal results. The Responsible AI FAQ addresses GraphRAG's capabilities, intended uses, evaluation metrics, limitations, and operational factors for effective and responsible use.

langtest

LangTest is a comprehensive evaluation library for custom LLM and NLP models. It aims to deliver safe and effective language models by providing tools to test model quality, augment training data, and support popular NLP frameworks. LangTest comes with benchmark datasets to challenge and enhance language models, ensuring peak performance in various linguistic tasks. The tool offers more than 60 distinct types of tests with just one line of code, covering aspects like robustness, bias, representation, fairness, and accuracy. It supports testing LLMs for question answering, toxicity, clinical tests, legal support, factuality, sycophancy, and summarization.
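A robustness test of the kind described here can be sketched as: perturb each input, rerun the model, and report the fraction of predictions that stay unchanged. This is a generic illustration under stated assumptions, not LangTest's API: the perturbation functions, the pass-rate metric, and the two toy keyword classifiers are all hypothetical.

```python
from typing import Callable, Dict, List

Model = Callable[[str], str]


def uppercase(text: str) -> str:
    return text.upper()


def lowercase(text: str) -> str:
    return text.lower()


def robustness_pass_rate(model: Model, inputs: List[str], perturb: Callable[[str], str]) -> float:
    """Fraction of inputs whose prediction is unchanged after the perturbation."""
    passed = sum(model(x) == model(perturb(x)) for x in inputs)
    return passed / len(inputs)


def robustness_report(model: Model, inputs: List[str]) -> Dict[str, float]:
    """Run every hypothetical perturbation and collect pass rates by name."""
    perturbations = {"uppercase": uppercase, "lowercase": lowercase}
    return {name: robustness_pass_rate(model, inputs, fn) for name, fn in perturbations.items()}


def naive_sentiment(text: str) -> str:
    # Hypothetical case-sensitive keyword model (brittle on purpose).
    return "positive" if "good" in text else "negative"


def normalized_sentiment(text: str) -> str:
    # Same model, but it lowercases its input first.
    return naive_sentiment(text.lower())
```

On the inputs `["a good movie", "a bad movie", "good acting", "boring plot"]`, `naive_sentiment` keeps only half of its predictions under the uppercase perturbation, while `normalized_sentiment` keeps all of them, which is the kind of gap such a test is meant to surface.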

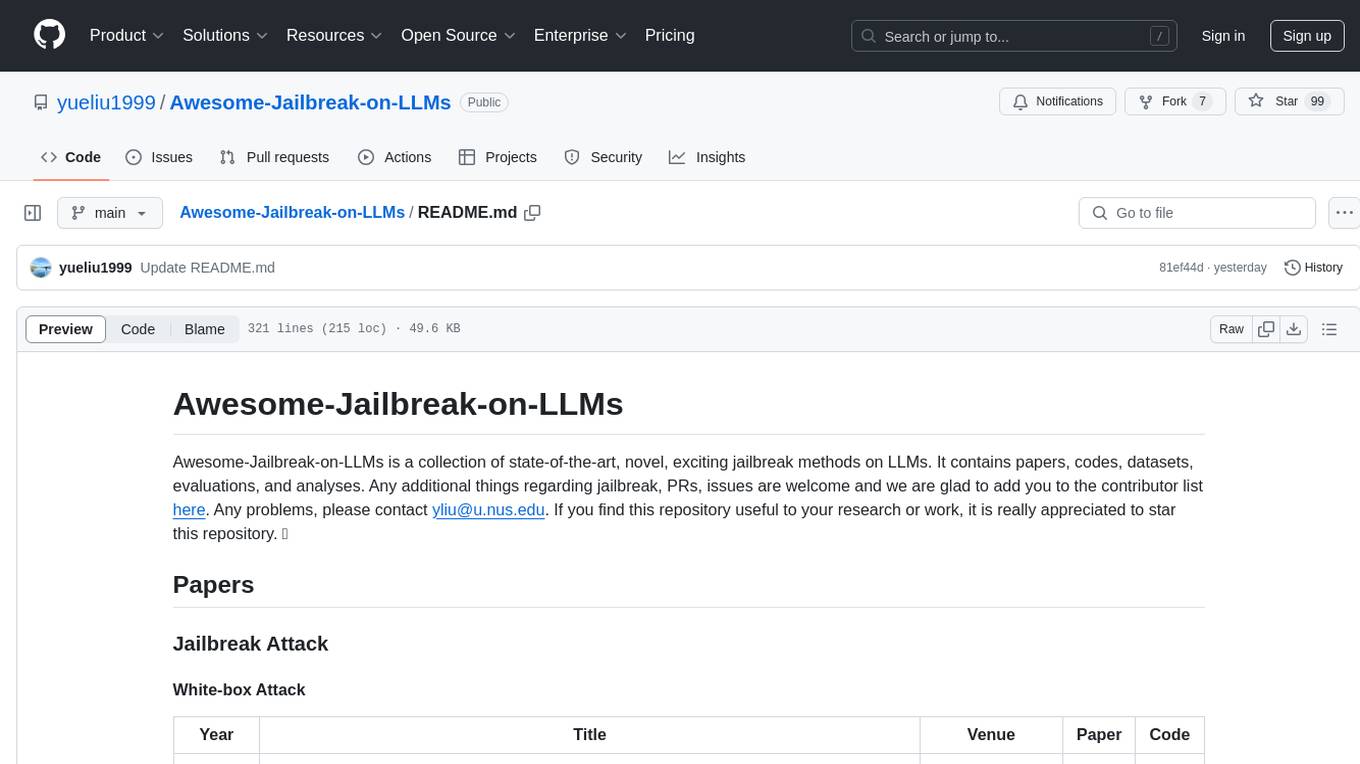

Awesome-Jailbreak-on-LLMs

Awesome-Jailbreak-on-LLMs is a collection of state-of-the-art, novel, and exciting jailbreak methods on Large Language Models (LLMs). The repository contains papers, codes, datasets, evaluations, and analyses related to jailbreak attacks on LLMs. It serves as a comprehensive resource for researchers and practitioners interested in exploring various jailbreak techniques and defenses in the context of LLMs. Contributions such as additional jailbreak-related content, pull requests, and issue reports are welcome, and contributors are acknowledged. For any inquiries or issues, contact the repository maintainers by email. If you find this repository useful for your research or work, consider starring it to show appreciation.