Best AI tools for< Pretrain Model >

1 - AI tool Sites

AI Seed Phrase Finder & BTC balance checker tool for Windows PC

The AI Seed Phrase Finder & BTC balance checker tool for Windows PC is an innovative application designed to prevent the loss of access to Bitcoin wallets. Leveraging advanced algorithms and artificial intelligence techniques, this program efficiently analyzes vast amounts of data to pre-train AI models. Consequently, it generates and searches for mnemonic phrases that grant access to abandoned Bitcoin wallets holding nonzero balances. With the “AI Seed Finder tool for Windows PC”, locating a complete 12-word seed phrase for a specific Bitcoin wallet becomes effortless. Even if you possess only partial knowledge of the mnemonic phrase or individual words comprising it, this tool can swiftly identify the entire seed phrase. Furthermore, by providing the address of a specific Bitcoin wallet you wish to regain access to, the program narrows down the search area. This targeted approach significantly enhances the program’s efficiency and reduces the time required to ascertain the correct mnemonic phrase.

1 - Open Source AI Tools

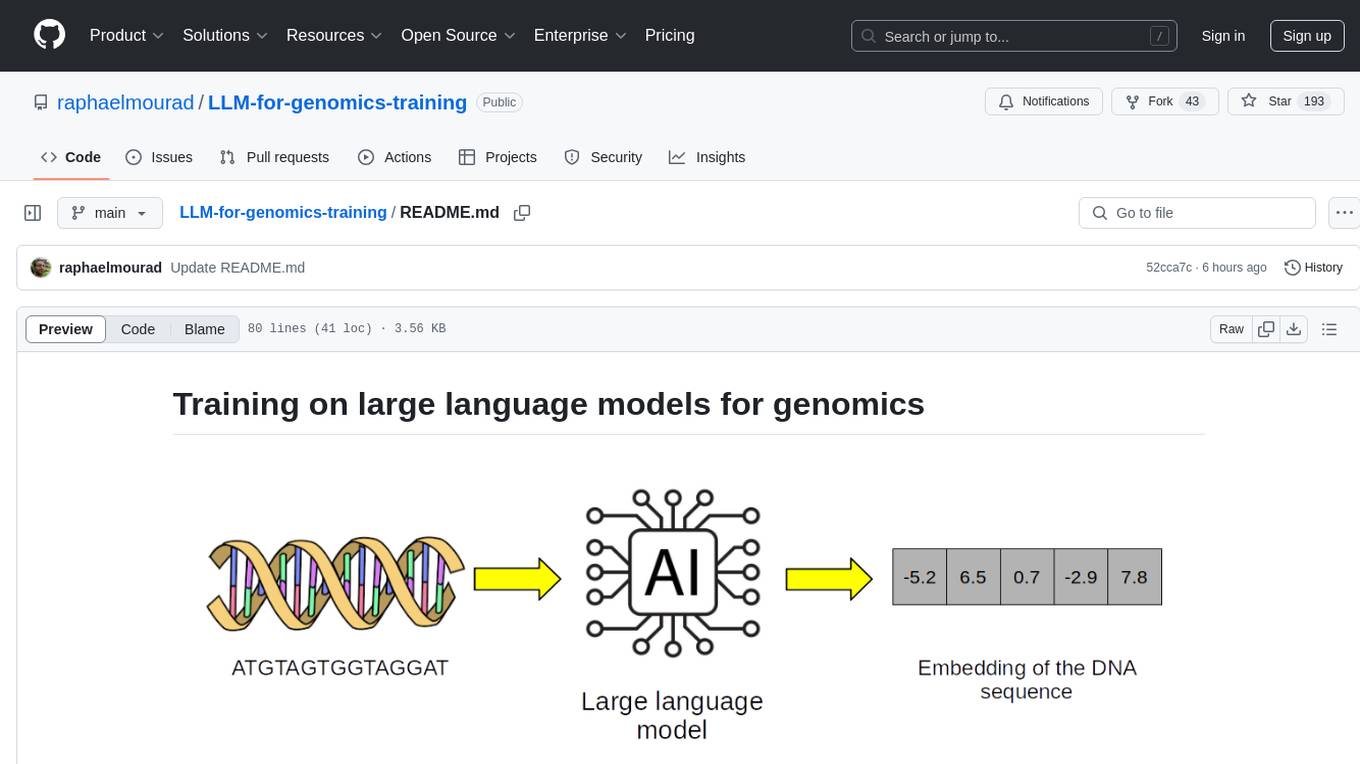

LLM-for-genomics-training

This repository provides training on large language models (LLMs) for genomics, including lecture notes and lab classes covering pretraining, finetuning, zeroshot learning prediction of mutation effect, synthetic DNA sequence generation, and DNA sequence optimization.