Awesome-LLM4RS-Papers

Large Language Model-enhanced Recommender System Papers

Stars: 480

This is a list of papers on Large Language Model-enhanced Recommender Systems. It also includes some related work. Keywords: recommendation system, large language models

README:

Feel free to open an issue or submit a pull request!

- A Survey on Large Language Models for Recommendation, arxiv 2023, [paper].

- How Can Recommender Systems Benefit from Large Language Models: A Survey, arxiv 2023, [paper].

- Recommender Systems in the Era of Large Language Models (LLMs), arxiv 2023, [paper].

- Chat-REC: Towards Interactive and Explainable LLMs-Augmented Recommender System, arxiv 2023, [paper].

- GPT4Rec: A Generative Framework for Personalized Recommendation and User Interests Interpretation, arxiv 2023, [paper].

- TALLRec: An Effective and Efficient Tuning Framework to Align Large Language Model with Recommendation, RecSys 2023 Short Paper, [paper], [code].

- Privacy-Preserving Recommender Systems with Synthetic Query Generation using Differentially Private Large Language Models, arxiv 2023, [paper].

- Recommendation as Instruction Following: A Large Language Model Empowered Recommendation Approach, arxiv 2023, [paper].

- A First Look at LLM-Powered Generative News Recommendation, arxiv 2023, [paper].

- Sparks of Artificial General Recommender (AGR): Early Experiments with ChatGPT, arxiv 2023, [paper].

- Zero-Shot Next-Item Recommendation using Large Pretrained Language Models, arxiv 2023, [paper], [code].

- Do LLMs Understand User Preferences? Evaluating LLMs On User Rating Prediction, arxiv 2023, [paper].

- Large Language Models are Zero-Shot Rankers for Recommender Systems, arxiv 2023, [paper], [code].

- Leveraging Large Language Models in Conversational Recommender Systems, arxiv 2023, [paper].

- Rethinking the Evaluation for Conversational Recommendation in the Era of Large Language Models, arxiv 2023, [paper], [code].

- PALR: Personalization Aware LLMs for Recommendation, arxiv 2023, [paper].

- Prompt Tuning Large Language Models on Personalized Aspect Extraction for Recommendations, arxiv 2023, [paper].

- A Preliminary Study of ChatGPT on News Recommendation: Personalization, Provider Fairness, Fake News, arxiv 2023, [paper].

- Large Language Model for Generative Recommendation, arxiv 2023, [paper].

- GenRec: Large Language Model for Generative Recommendation, arxiv 2023, [paper].

- Generative Job Recommendations with Large Language Model, arxiv 2023, [paper].

- Exploring Large Language Model for Graph Data Understanding in Online Job Recommendations, arxiv 2023, [paper].

- LLM-Rec: Personalized Recommendation via Prompting Large Language Models, arxiv 2023, [paper].

- A Bi-Step Grounding Paradigm for Large Language Models in Recommendation Systems, arxiv 2023, [paper].

- LLMRec: Benchmarking Large Language Models on Recommendation Task, arxiv 2023, [paper], [code].

- Zero-Shot Recommendations with Pre-Trained Large Language Models for Multimodal Nudging, arxiv 2023, [paper].

- Prompt Distillation for Efficient LLM-based Recommendation, CIKM 2023, [paper], [code].

- Large Language Models as Zero-Shot Conversational Recommenders, CIKM 2023, [paper], [code].

- Leveraging Large Language Models (LLMs) to Empower Training-Free Dataset Condensation for Content-Based Recommendation, arxiv 2023, [paper].

- LlamaRec: Two-Stage Recommendation using Large Language Models for Ranking, arxiv 2023, [paper], [code].

- Large Language Models are Competitive Near Cold-start Recommenders for Language- and Item-based Preferences, RecSys 2023, [paper].

- CoLLM: Integrating Collaborative Embeddings into Large Language Models for Recommendation, arxiv 2023, [paper].

- Large Language Model Augmented Narrative Driven Recommendations, RecSys 2023 Short Paper, [paper].

- Leveraging Large Language Models for Sequential Recommendation, RecSys 2023 LBR, [paper], [code].

- ONCE: Boosting Content-based Recommendation with Both Open- and Closed-source Large Language Models, WSDM 2024, [paper], [code].

- LLaRA: Aligning Large Language Models with Sequential Recommenders, arxiv 2023, [paper], [code].

- LLM4Vis: Explainable Visualization Recommendation using ChatGPT, arxiv 2023, [paper], [code].

- E4SRec: An Elegant Effective Efficient Extensible Solution of Large Language Models for Sequential Recommendation, arxiv 2023, [paper], [code].

- Adapting Large Language Models by Integrating Collaborative Semantics for Recommendation, arxiv 2023, [paper], [code].

- Representation Learning with Large Language Models for Recommendation, WWW 2024, [paper], [code].

- Stealthy Attack on Large Language Model based Recommendation, arxiv 2024, [paper].

- ReLLa: Retrieval-enhanced Large Language Models for Lifelong Sequential Behavior Comprehension in Recommendation, arxiv 2024, [paper], [code].

- Wukong: Towards a Scaling Law for Large-Scale Recommendation, arxiv 2024, [paper]

- A Large Language Model Enhanced Sequential Recommender for Joint Video and Comment Recommendation, arxiv 2024, [paper], [code].

- Harnessing Large Language Models for Text-Rich Sequential Recommendation, arxiv 2024, [paper]

- Exploring Large Language Model for Graph Data Understanding in Online Job Recommendations, arxiv 2024, [paper], [code].

- LLMRG: Improving Recommendations through Large Language Model Reasoning Graphs, arxiv 2024, [paper]

- Enhancing Job Recommendation through LLM-based Generative Adversarial Networks, AAAI 2024, [paper].

- LLM-Guided Multi-View Hypergraph Learning for Human-Centric Explainable Recommendation, arxiv 2024, [paper].

- Sequential Recommendation with Latent Relations based on Large Language Model, SIGIR 2024, [paper], [code].

- Common Sense Enhanced Knowledge-based Recommendation with Large Language Model, arxiv 2024, [paper], [code].

- Re2LLM: Reflective Reinforcement Large Language Model for Session-based Recommendation, arxiv 2024, [paper]

- Enhancing Content-based Recommendation via Large Language Model, arxiv 2024, [paper]

- Aligning Large Language Models with Recommendation Knowledge, arxiv 2024, [paper]

- Where to Move Next: Zero-shot Generalization of LLMs for Next POI Recommendation, arxiv 2024, [paper]

- DRE: Generating Recommendation Explanations by Aligning Large Language Models at Data-level, arxiv 2024, [paper].

- Behavior Alignment: A New Perspective of Evaluating LLM-based Conversational Recommendation Systems, SIGIR 2024, [paper], [code].

- Exact and Efficient Unlearning for Large Language Model-based Recommendation, arxiv 2024, [paper].

- Large Language Models for Intent-Driven Session Recommendations, SIGIR 2024, [paper].

- Reinforcement Learning-based Recommender Systems with Large Language Models for State Reward and Action Modeling, SIGIR 2024, [paper].

- Enhancing Long-Term Recommendation with Bi-level Learnable Large Language Model Planning, SIGIR 2024, [paper].

- LoRec: Large Language Model for Robust Sequential Recommendation against Poisoning Attacks, SIGIR 2024, [paper].

- Data-efficient Fine-tuning for LLM-based Recommendation, SIGIR 2024, [paper].

- Towards LLM-RecSys Alignment with Textual ID Learning, SIGIR 2024, [paper].

- Breaking the Length Barrier: LLM-Enhanced CTR Prediction in Long Textual User Behaviors, SIGIR 2024, [paper].

- RecGPT: Generative Personalized Prompts for Sequential Recommendation via ChatGPT Training Paradigm, arxiv 2024, [paper]

- Efficient and Responsible Adaptation of Large Language Models for Robust Top-k Recommendations, arxiv 2024, [paper].

- Large Language Models for Next Point-of-Interest Recommendation, arxiv 2024, [paper].

- Distillation Matters: Empowering Sequential Recommenders to Match the Performance of Large Language Model, arxiv 2024, [paper].

- Large Language Models as Conversational Movie Recommenders: A User Study, arxiv 2024, [paper].

- CALRec: Contrastive Alignment of Generative LLMs for Sequential Recommendation, arxiv 2024, [paper].

- Fine-Tuning Large Language Model Based Explainable Recommendation with Explainable Quality Reward, AAAI 2024, [paper].

- Breaking the Barrier: Utilizing Large Language Models for Industrial Recommendation Systems through an Inferential Knowledge Graph, arxiv 2024, [paper].

- RDRec: Rationale Distillation for LLM-based Recommendation, ACL 2024 Main (short), [paper], [code].

- Reinforced Prompt Personalization for Recommendation with Large Language Models, arxiv 2024, [paper], [code]

- Semantic Understanding and Data Imputation using Large Language Model to Accelerate Recommendation System, arxiv 2024, [paper]

- A Systematic Survey and Critical Review on Evaluating Large Language Models: Challenges, Limitations, and Recommendations, arxiv 2024, [paper]

- LANE: Logic Alignment of Non-tuning Large Language Models and Online Recommendation Systems for Explainable Reason Generation, arxiv 2024, [paper]

- Optimizing Novelty of Top-k Recommendations using Large Language Models and Reinforcement Learning, arxiv 2024, [paper]

- "You Gotta be a Doctor, Lin": An Investigation of Name-Based Bias of Large Language Models in Employment Recommendations, arxiv 2024, [paper]

- Multi-Layer Ranking with Large Language Models for News Source Recommendation, arxiv 2024, [paper]

- Large Language Models as Evaluators for Recommendation Explanations, arxiv 2024, [paper]

- Text-like Encoding of Collaborative Information in Large Language Models for Recommendation, arxiv 2024, [paper]

- Exploring User Retrieval Integration towards Large Language Models for Cross-Domain Sequential Recommendation, arxiv 2024, [paper]

- XRec: Large Language Models for Explainable Recommendation, arxiv 2024, [paper], [code].

- Large Language Models Enhanced Sequential Recommendation for Long-tail User and Item, arxiv 2024, [paper], [code]

- Keyword-driven Retrieval-Augmented Large Language Models for Cold-start User Recommendations, arxiv 2024, [paper]

- News Recommendation with Category Description by a Large Language Model, arxiv 2024, [paper]

- Learning Structure and Knowledge Aware Representation with Large Language Models for Concept Recommendation, arxiv 2024, [paper]

- Reindex-Then-Adapt: Improving Large Language Models for Conversational Recommendation, arxiv 2024, [paper]

- EmbSum: Leveraging the Summarization Capabilities of Large Language Models for Content-Based Recommendations, arxiv 2024, [paper]

- DynLLM: When Large Language Models Meet Dynamic Graph Recommendation, arxiv 2024, [paper]

- Conversational Topic Recommendation in Counseling and Psychotherapy with Decision Transformer and Large Language Models, arxiv 2024, [paper]

- OpenP5: An Open-Source Platform for Developing, Training, and Evaluating LLM-based Recommender Systems, SIGIR 2024, [paper], [code].

- When Large Language Model based Agent Meets User Behavior Analysis: A Novel User Simulation Paradigm, arxiv 2023, [paper].

- RecMind: Large Language Model Powered Agent For Recommendation, arxiv 2023, [paper].

- On Generative Agents in Recommendation, arxiv 2023, [paper], [code].

- AgentCF: Collaborative Learning with Autonomous Language Agents for Recommender Systems, arxiv 2023, [paper].

- Recommender AI Agent: Integrating Large Language Models for Interactive Recommendations [link]

- Balancing Information Perception with Yin-Yang: Agent-Based Information Neutrality Model for Recommendation Systems, arxiv 2024, [paper]

- Lending Interaction Wings to Recommender Systems with Conversational Agents, NeurIPS 2023, [paper].

- A Conceptual Framework for Conversational Search and Recommendation: Conceptualizing Agent-Human Interactions During the Conversational Search Process, arxiv 2024, [paper].

- Enhancing Recommender Systems with Large Language Model Reasoning Graphs, arxiv 2023, [paper].

- Towards Open-World Recommendation with Knowledge Augmentation from Large Language Models, arxiv 2023, [paper], [code].

- LLMRec: Large Language Models with Graph Augmentation for Recommendation, WSDM 2024, [paper], [code], [blog in Chinese].

- Knowledge Adaptation from Large Language Model to Recommendation for Practical Industrial Application, arxiv 2024, [paper].

- Language models as recommender systems: Evaluations and limitations, NeurIPS Workshop 2021, [paper].

- Generative Recommendation: Towards Next-generation Recommender Paradigm, arxiv 2023, [paper].

- Where to Go Next for Recommender Systems? ID- vs. Modality-based Recommender Models Revisited, SIGIR 2023, [paper], [code].

- Exploring the Upper Limits of Text-Based Collaborative Filtering Using Large Language Models: Discoveries and Insights, arxiv 2023, [paper].

- Exploring Adapter-based Transfer Learning for Recommender Systems: Empirical Studies and Practical Insights, arxiv 2023, [paper].

- Is ChatGPT a Good Recommender? A Preliminary Study, arxiv 2023, [paper].

- Evaluating ChatGPT as a Recommender System: A Rigorous Approach, arxiv 2023, [paper].

- Large Language Models are Competitive Near Cold-start Recommenders for Language- and Item-based Preferences, RecSys 2023 Short Paper, [paper].

- Is ChatGPT Fair for Recommendation? Evaluating Fairness in Large Language Model Recommendation, RecSys 2023 Short Paper, [paper], [code].

- Uncovering ChatGPT's Capabilities in Recommender Systems, RecSys 2023 LBR, [paper], [code].

GitHub Repository: "Universal_user_representations for recommendation" [link].

- Parameter-Efficient Transfer from Sequential Behaviors for User Modeling and Recommendation, SIGIR 2020, [paper], [code]

- One Person, One Model, One World: Learning Continual User Representation without Forgetting, SIGIR 2021, [paper], [code]

- ID-Agnostic User Behavior Pre-training for Sequential Recommendation, CCIR 2022, [paper].

- Towards Universal Sequence Representation Learning for Recommender Systems, KDD 2022, [paper], [code].

- TransRec: learning transferable recommendation from mixture-of-modality feedback, arxiv 2022, [paper].

- Learning Vector-Quantized Item Representation for Transferable Sequential Recommenders, WWW 2023, [paper], [code].

- One4all User Representation for Recommender Systems in E-commerce, arxiv 2021, [paper].

- Text Is All You Need: Learning Language Representations for Sequential Recommendation, KDD 2023, [paper].

- Collaborative Large Language Model for Recommender Systems, arxiv 2023, [paper], [code].

- A Simple Convolutional Generative Network for Next Item Recommendation, WSDM 2018/08, [paper] [code]

- Future Data Helps Training: Modeling Future Contexts for Session-based Recommendation, WWW 2020/04, [paper] [code]

- Recommender Systems with Generative Retrieval, arxiv 2023, [paper].

- Generative Sequential Recommendation with GPTRec, SIGIR 2023 workshop, [paper].

- Enhanced Generative Recommendation via Content and Collaboration Integration, arxiv 2024, [paper].

Survey paper: Pre-train, Prompt and Recommendation: A Comprehensive Survey of Language Modelling Paradigm Adaptations in Recommender Systems, arxiv 2023, [paper].

- Recommendation as Language Processing (RLP): A Unified Pretrain, Personalized Prompt & Predict Paradigm (P5), arxiv 2022, [paper], [code].

- Rethinking Reinforcement Learning for Recommendation: A Prompt Perspective, SIGIR 2022, [paper].

- M6-Rec: Generative Pretrained Language Models are Open-Ended Recommender Systems, arxiv 2022, [paper].

- Personalized Prompt for Sequential Recommendation, arxiv 2022, [paper].

- Knowledge Prompt-tuning for Sequential Recommendation, ACM MM 2023, [paper], [code].

- Amazon-M2: A Multilingual Multi-locale Shopping Session Dataset for Recommendation and Text Generation, arxiv 2023, [paper], [KDD Cup 2023].

- PixelRec: An Image Dataset for Benchmarking Recommender Systems with Raw Pixels, arxiv 2023, [paper], [link].

- NineRec: A Benchmark Dataset Suite for Evaluating Transferable Recommendation, arxiv 2023, [paper], [link].

- A Content-Driven Micro-Video Recommendation Dataset at Scale, arxiv 2023, [paper], [link].

- EEG-SVRec: An EEG Dataset with User Multidimensional Affective Engagement Labels in Short Video Recommendation, arxiv 2024, [paper], [link].

- MealRec+: A Meal Recommendation Dataset with Meal-Course Affiliation for Personalization and Healthiness, arxiv 2024, [paper].

- MIND Your Language: A Multilingual Dataset for Cross-lingual News Recommendation, SIGIR 2024, [paper], [link].

Alternative AI tools for Awesome-LLM4RS-Papers

Similar Open Source Tools

Recommendation-Systems-without-Explicit-ID-Features-A-Literature-Review

This repository is a collection of papers and resources related to recommendation systems, focusing on foundation models, transferable recommender systems, large language models, and multimodal recommender systems. It explores questions such as the necessity of ID embeddings, the shift from matching to generating paradigms, and the future of multimodal recommender systems. The papers cover various aspects of recommendation systems, including pretraining, user representation, dataset benchmarks, and evaluation methods. The repository aims to provide insights and advancements in the field of recommendation systems through literature reviews, surveys, and empirical studies.

Efficient-LLMs-Survey

This repository provides a systematic and comprehensive review of efficient LLMs research. We organize the literature in a taxonomy consisting of three main categories, covering distinct yet interconnected efficient LLMs topics from **model-centric**, **data-centric**, and **framework-centric** perspectives, respectively. We hope our survey and this GitHub repository can serve as valuable resources to help researchers and practitioners gain a systematic understanding of the research developments in efficient LLMs and inspire them to contribute to this important and exciting field.

Awesome-LLM-Survey

This repository, Awesome-LLM-Survey, serves as a comprehensive collection of surveys related to Large Language Models (LLM). It covers various aspects of LLM, including instruction tuning, human alignment, LLM agents, hallucination, multi-modal capabilities, and more. Researchers are encouraged to contribute by updating information on their papers to benefit the LLM survey community.

llm-continual-learning-survey

This repository is a continuously updated survey of Continual Learning of Large Language Models (CL-LLMs), providing a comprehensive overview of various aspects of the field. It covers topics such as continual pre-training, domain-adaptive pre-training, continual fine-tuning, model refinement, model alignment, multimodal LLMs, and miscellaneous aspects. The survey includes a collection of relevant papers, each focusing on a different area within continual learning of large language models.

Awesome-explainable-AI

This repository contains frontier research on explainable AI (XAI), a hot topic in the field of artificial intelligence. It includes trends, use cases, survey papers, books, open courses, papers, and Python libraries related to XAI. The repository aims to organize and categorize publications on XAI, provide evaluation methods, and list various Python libraries for explainable AI.

Awesome-LLM-in-Social-Science

This repository compiles a list of academic papers that evaluate, align, simulate, and provide surveys or perspectives on the use of Large Language Models (LLMs) in the field of Social Science. The papers cover various aspects of LLM research, including assessing their alignment with human values, evaluating their capabilities in tasks such as opinion formation and moral reasoning, and exploring their potential for simulating social interactions and addressing issues in diverse fields of Social Science. The repository aims to provide a comprehensive resource for researchers and practitioners interested in the intersection of LLMs and Social Science.

Awesome-LLM-Compression

Awesome LLM compression research papers and tools to accelerate LLM training and inference.

Awesome-LLM-Post-training

The Awesome-LLM-Post-training repository is a curated collection of influential papers, code implementations, benchmarks, and resources related to Large Language Models (LLMs) Post-Training Methodologies. It covers various aspects of LLMs, including reasoning, decision-making, reinforcement learning, reward learning, policy optimization, explainability, multimodal agents, benchmarks, tutorials, libraries, and implementations. The repository aims to provide a comprehensive overview and resources for researchers and practitioners interested in advancing LLM technologies.

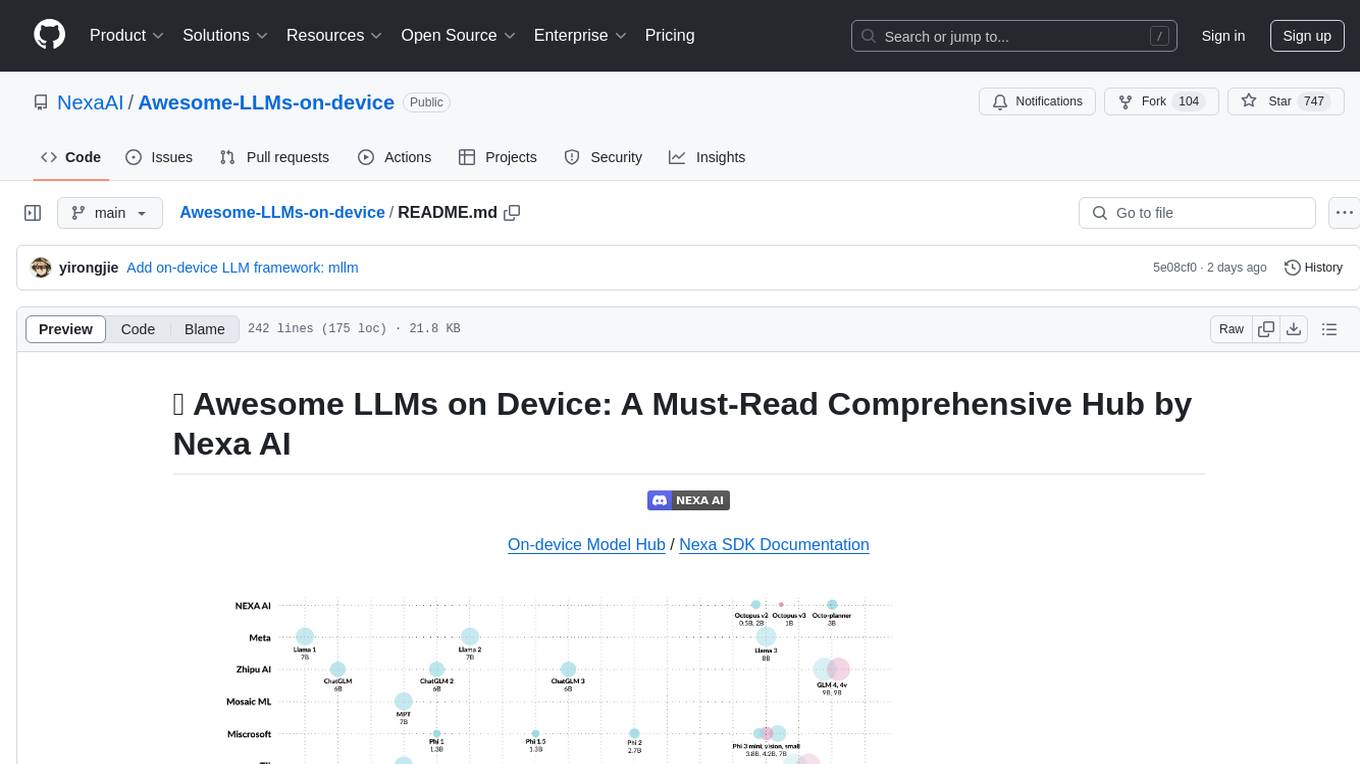

Awesome-LLMs-on-device

Welcome to the ultimate hub for on-device Large Language Models (LLMs)! This repository is your go-to resource for all things related to LLMs designed for on-device deployment. Whether you're a seasoned researcher, an innovative developer, or an enthusiastic learner, this comprehensive collection of cutting-edge knowledge is your gateway to understanding, leveraging, and contributing to the exciting world of on-device LLMs.

Awesome-Text2SQL

Awesome Text2SQL is a curated repository containing tutorials and resources for Large Language Models, Text2SQL, Text2DSL, Text2API, Text2Vis, and more. It provides guidelines on converting natural language questions into structured SQL queries, with a focus on NL2SQL. The repository includes information on various models, datasets, evaluation metrics, fine-tuning methods, libraries, and practice projects related to Text2SQL. It serves as a comprehensive resource for individuals interested in working with Text2SQL and related technologies.

LMOps

LMOps is a research initiative focusing on fundamental research and technology for building AI products with foundation models, particularly enabling AI capabilities with Large Language Models (LLMs) and Generative AI models. The project explores various aspects such as prompt optimization, longer context handling, LLM alignment, acceleration of LLMs, LLM customization, and understanding in-context learning. It also includes tools like Promptist for automatic prompt optimization, Structured Prompting for efficient long-sequence prompts consumption, and X-Prompt for extensible prompts beyond natural language. Additionally, LLMA accelerators are developed to speed up LLM inference by referencing and copying text spans from documents. The project aims to advance technologies that facilitate prompting language models and enhance the performance of LLMs in various scenarios.

LightLLM

LightLLM is a lightweight library for linear and logistic regression models. It provides a simple and efficient way to train and deploy machine learning models for regression tasks. The library is designed to be easy to use and integrate into existing projects, making it suitable for both beginners and experienced data scientists. With LightLLM, users can quickly build and evaluate regression models using a variety of algorithms and hyperparameters. The library also supports feature engineering and model interpretation, allowing users to gain insights from their data and make informed decisions based on the model predictions.

LLM-and-Law

This repository summarizes papers on large language models in the field of law. It includes applications of large language models in legal tasks, legal agents, legal problems of large language models, data resources for large language models in law, law LLMs, and evaluation of large language models in the legal domain.

Awesome-local-LLM

Awesome-local-LLM is a curated list of platforms, tools, practices, and resources that help run Large Language Models (LLMs) locally. It includes sections on inference platforms, engines, user interfaces, specific models for general purpose, coding, vision, audio, and miscellaneous tasks. The repository also covers tools for coding agents, agent frameworks, retrieval-augmented generation, computer use, browser automation, memory management, testing, evaluation, research, training, and fine-tuning. Additionally, there are tutorials on models, prompt engineering, context engineering, inference, agents, retrieval-augmented generation, and miscellaneous topics, along with a section on communities for LLM enthusiasts.

For similar tasks

LightRAG

LightRAG is a PyTorch library designed for building and optimizing Retriever-Agent-Generator (RAG) pipelines. It follows principles of simplicity, quality, and optimization, offering developers maximum customizability with minimal abstraction. The library includes components for model interaction, output parsing, and structured data generation. LightRAG facilitates tasks like providing explanations and examples for concepts through a question-answering pipeline.

opensearch-ai

OpenSearch GPT is a personalized AI search engine that adapts to user interests while browsing the web. It uses Mem0 for automatic memory collection, the Vercel AI SDK for AI applications, Next.js as the React framework, Tailwind CSS for styling, Shadcn UI for UI components, Cobe for globe animation, GPT-4o-mini for AI capabilities, and Cloudflare Pages for web application deployment. Developed by the Supermemory.ai team.

Awesome-Deep-Learning-Papers-for-Search-Recommendation-Advertising

This repository contains a curated list of deep learning papers focused on industrial applications such as search, recommendation, and advertising. The papers cover various topics including embedding, matching, ranking, large models, transfer learning, and reinforcement learning.

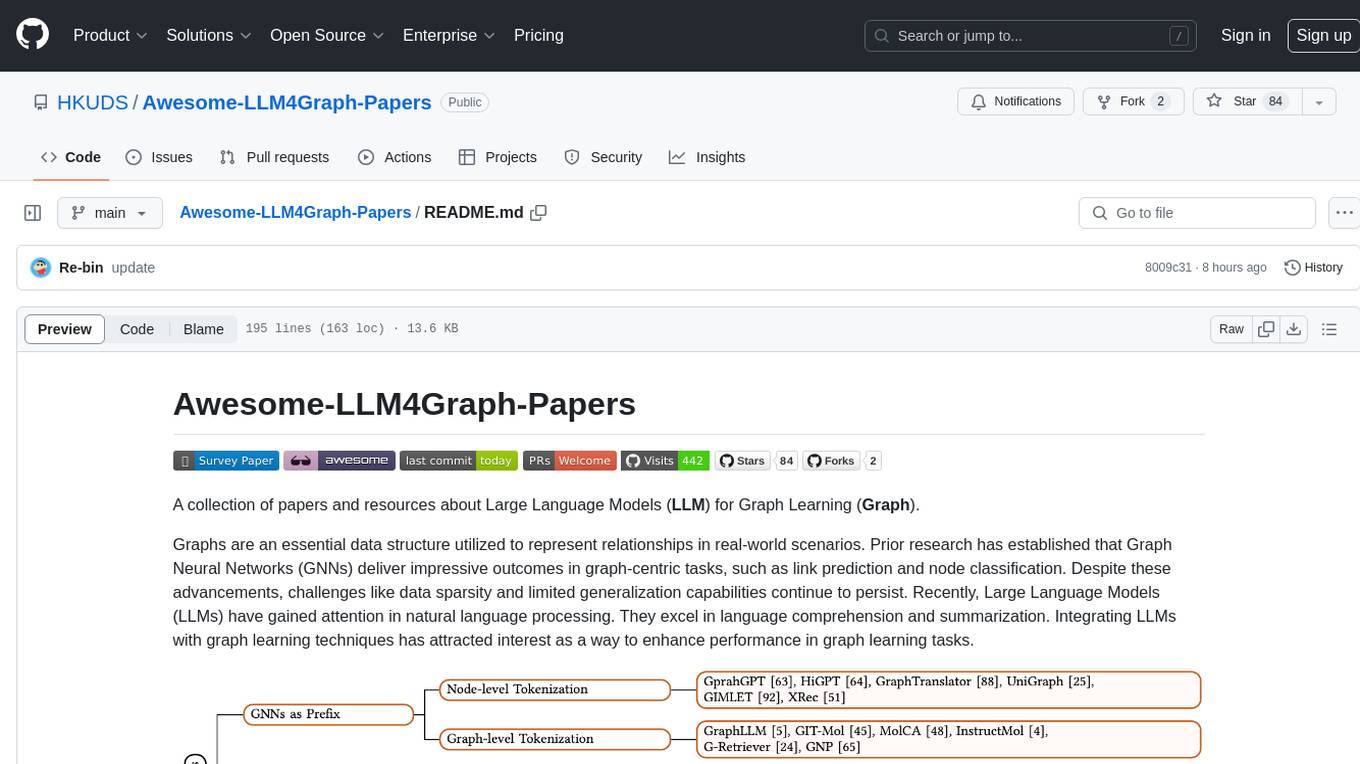

Awesome-LLM4Graph-Papers

A collection of papers and resources on Large Language Models (LLMs) for graph learning. The collected work integrates LLMs with graph learning techniques to improve performance on graph learning tasks, and the approaches are categorized into four primary paradigms and nine secondary-level categories. The list is valuable for research or practice in self-supervised learning for recommendation systems.

ai_projects

This repository contains a collection of AI projects covering various areas of machine learning. Each project is accompanied by detailed articles on the associated blog sciblog. Projects range from introductory topics like Convolutional Neural Networks and Transfer Learning to advanced topics like Fraud Detection and Recommendation Systems. The repository also includes tutorials on data generation, distributed training, natural language processing, and time series forecasting. Additionally, it features visualization projects such as football match visualization using Datashader.

For similar jobs

weave

Weave is a toolkit for developing Generative AI applications, built by Weights & Biases. With Weave, you can log and debug language model inputs, outputs, and traces; build rigorous, apples-to-apples evaluations for language model use cases; and organize all the information generated across the LLM workflow, from experimentation to evaluations to production. Weave aims to bring rigor, best-practices, and composability to the inherently experimental process of developing Generative AI software, without introducing cognitive overhead.

agentcloud

AgentCloud is an open-source platform that enables companies to build and deploy private LLM chat apps, empowering teams to securely interact with their data. It comprises three main components: Agent Backend, Webapp, and Vector Proxy. To run this project locally, clone the repository, install Docker, and start the services. The project is licensed under the GNU Affero General Public License, version 3 only. Contributions and feedback are welcome from the community.

oss-fuzz-gen

This framework generates fuzz targets for real-world `C`/`C++` projects with various Large Language Models (LLM) and benchmarks them via the `OSS-Fuzz` platform. It manages to successfully leverage LLMs to generate valid fuzz targets (which generate non-zero coverage increase) for 160 C/C++ projects. The maximum line coverage increase is 29% from the existing human-written targets.

LLMStack

LLMStack is a no-code platform for building generative AI agents, workflows, and chatbots. It allows users to connect their own data, internal tools, and GPT-powered models without any coding experience. LLMStack can be deployed to the cloud or on-premise and can be accessed via HTTP API or triggered from Slack or Discord.

VisionCraft

The VisionCraft API is a free API for using over 100 different AI models. From images to sound.

kaito

Kaito is an operator that automates the AI/ML inference model deployment in a Kubernetes cluster. It manages large model files using container images, avoids tuning deployment parameters to fit GPU hardware by providing preset configurations, auto-provisions GPU nodes based on model requirements, and hosts large model images in the public Microsoft Container Registry (MCR) if the license allows. Using Kaito, the workflow of onboarding large AI inference models in Kubernetes is largely simplified.

PyRIT

PyRIT is an open access automation framework designed to empower security professionals and ML engineers to red team foundation models and their applications. It automates AI Red Teaming tasks to allow operators to focus on more complicated and time-consuming tasks and can also identify security harms such as misuse (e.g., malware generation, jailbreaking), and privacy harms (e.g., identity theft). The goal is to allow researchers to have a baseline of how well their model and entire inference pipeline is doing against different harm categories and to be able to compare that baseline to future iterations of their model. This allows them to have empirical data on how well their model is doing today, and detect any degradation of performance based on future improvements.

Azure-Analytics-and-AI-Engagement

The Azure-Analytics-and-AI-Engagement repository provides packaged Industry Scenario DREAM Demos with ARM templates (containing a demo web application, Power BI reports, Synapse resources, AML Notebooks, etc.) that can be deployed in a customer’s subscription using the CAPE tool within a few hours. Partners can also deploy DREAM Demos in their own subscriptions using DPoC.