Best AI tools for Debug Prompts

20 - AI Tool Sites

Langtail

Langtail is a platform that helps developers build, test, and deploy AI-powered applications. It provides a suite of tools to help developers debug prompts, run tests, and monitor the performance of their AI models. Langtail also offers a community forum where developers can share tips and tricks, and get help from other users.

AI Tool Hub

The website features a variety of AI tools and applications, including GPTs, AI tutors, code assistants, diagram creators, and more. Users can explore and discover top GPTs, get assistance in programming, design, finance, and even fortune-telling. The platform offers a range of AI-powered solutions for different tasks and industries, aiming to enhance productivity and creativity through advanced AI technologies.

GPT-Prompter

GPT-Prompter is a Chrome extension that allows users to harness the power of GPT-3, GPT-4, and ChatGPT API without navigating to the OpenAI website, incurring additional costs, or relying on intermediaries. With the GPT-Prompter extension, users can utilize the full potential of GPT more conveniently than ever before. The extension includes an assortment of pre-made customizable prompts and conversations, as well as a playground-like interface that enables quick usage from any webpage. The result is an effortless, hassle-free experience that streamlines the use of GPT, saving users time and effort.

SpellBox

SpellBox is a versatile AI coding assistant that helps developers of all levels write code faster and more efficiently. With SpellBox, you can say goodbye to hours of frustrating coding and hello to quick, easy solutions. SpellBox creates the code you need from simple prompts, so you can solve your toughest programming problems in seconds.

Agenta.ai

Agenta.ai is a platform designed to provide prompt management, evaluation, and observability for LLM (Large Language Model) applications. It aims to address the challenges faced by AI development teams in managing prompts, collaborating effectively, and ensuring reliable product outcomes. By centralizing prompts, evaluations, and traces, Agenta.ai helps teams streamline their workflows and follow best practices in LLMOps. The platform offers features such as a unified playground for prompt comparison, automated evaluation processes, human evaluation integration, observability tools for debugging AI systems, and collaborative workflows for PMs, experts, and developers.

Portkey

Portkey is a control panel for production AI applications that offers an AI Gateway, Prompts, Guardrails, and Observability Suite. It enables teams to ship reliable, cost-efficient, and fast apps by providing tools for prompt engineering, enforcing reliable LLM behavior, integrating with major agent frameworks, and building AI agents with access to real-world tools. Portkey also offers seamless AI integrations for smarter decisions, with features like managed hosting, smart caching, and edge compute layers to optimize app performance.

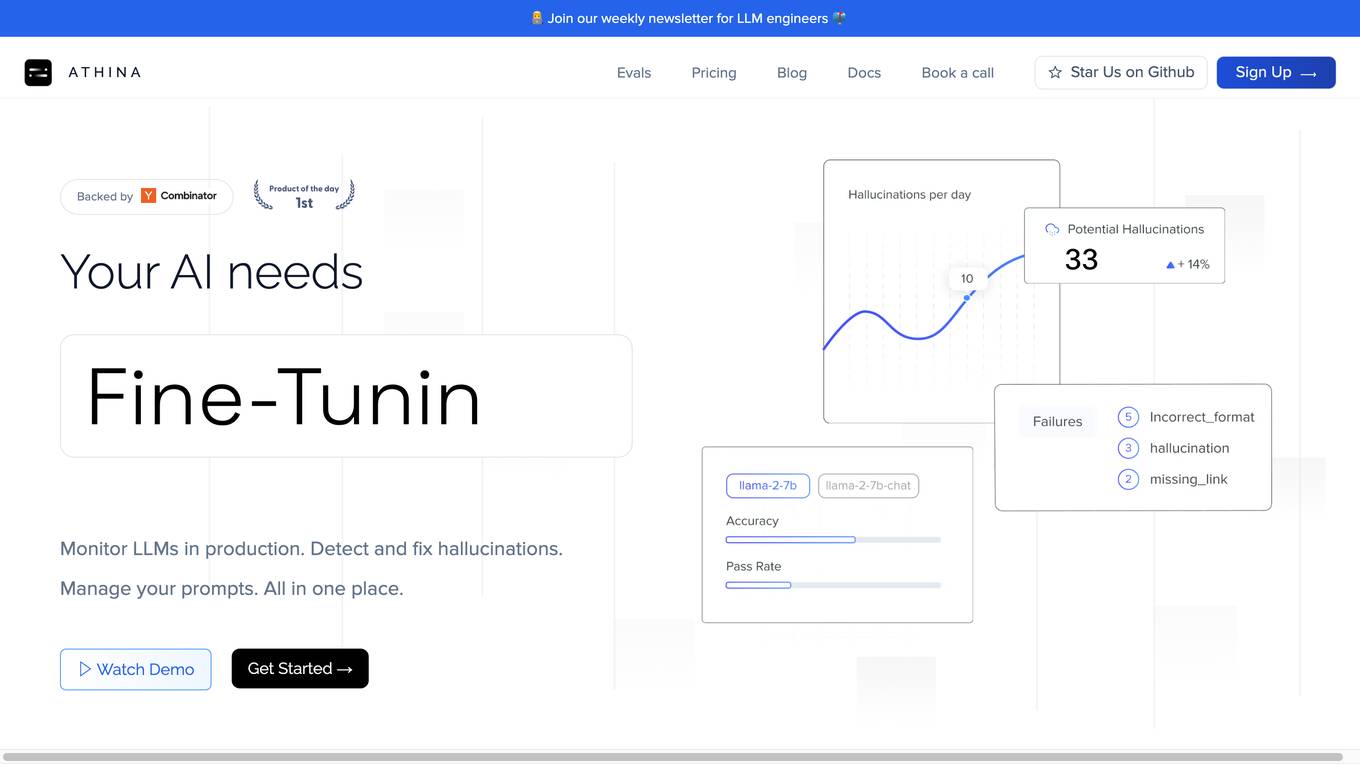

Athina AI

Athina AI is a comprehensive platform designed to monitor, debug, analyze, and improve the performance of Large Language Models (LLMs) in production environments. It provides a suite of tools and features that enable users to detect and fix hallucinations, evaluate output quality, analyze usage patterns, and optimize prompt management. Athina AI supports integration with various LLMs and offers a range of evaluation metrics, including context relevancy, harmfulness, summarization accuracy, and custom evaluations. It also provides a self-hosted solution for complete privacy and control, a GraphQL API for programmatic access to logs and evaluations, and support for multiple users and teams. Athina AI's mission is to empower organizations to harness the full potential of LLMs by ensuring their reliability, accuracy, and alignment with business objectives.

Wordware

Wordware is an AI toolkit that empowers cross-functional teams to build reliable high-quality agents through rapid iteration. It combines the best aspects of software with the power of natural language, freeing users from traditional no-code tool constraints. With advanced technical capabilities, multiple LLM providers, one-click API deployment, and multimodal support, Wordware offers a seamless experience for AI app development and deployment.

Helicone

Helicone is an open-source observability platform for developers that covers logging, monitoring, and debugging. It advertises sub-millisecond latency impact, 100% log coverage, industry-leading query times, and readiness for production-level workloads. Trusted by thousands of companies and developers, Helicone uses Cloudflare Workers for low latency and high reliability, and states an uptime of 99.99%. It offers prompt management, risk-free experimentation, prompt security, and various tools for monitoring, analyzing, and managing requests.

ChatGPT 4 Online

ChatGPT 4 Online is an artificial intelligence-based chatbot powered by generative pre-trained transformer (GPT) technology. It responds to text prompts with natural, human-like conversation. The online version of ChatGPT is a state-of-the-art AI language model that lets you enhance your productivity at no cost. It is owned and developed by OpenAI, the artificial intelligence research laboratory, with the mission of advancing digital intelligence to benefit humanity.

BoltAI

BoltAI is a powerful and user-friendly ChatGPT app for Mac that seamlessly integrates AI into your workflow. With BoltAI, you can access the capabilities of ChatGPT directly within your favorite macOS apps, enhancing your productivity and creativity. Whether you're a developer, content creator, student, or entrepreneur, BoltAI empowers you to leverage AI to streamline your tasks and achieve more. Its intuitive chat UI, powerful AI commands, and inline AI capabilities make it easy to incorporate AI assistance into your daily routine. BoltAI is designed to be versatile and customizable, allowing you to tailor it to your specific needs and preferences. With BoltAI, you can create custom AI assistants, utilize a library of prompts, and enjoy highly customizable features to optimize your workflow. BoltAI prioritizes your privacy and security, ensuring that your data remains protected and confidential. It operates locally on your device, with no data or prompts being stored or transmitted to external servers. Your OpenAI API key is securely stored in the Apple Keychain, adhering to industry-standard encryption methods. Additionally, BoltAI includes an automatic data detection feature that redacts sensitive information, providing peace of mind. BoltAI is committed to continuous improvement, with regular updates and new features being added to enhance your experience. By integrating BoltAI into your workflow, you gain access to a powerful AI assistant that can help you write high-quality content, generate creative ideas, debug code, learn new concepts, and much more. Unleash the potential of AI with BoltAI and experience a new level of productivity and efficiency.

Godly

Godly is a tool that allows you to add your own data to GPT for personalized completions. It makes it easy to set up and manage your context, and comes with a chat bot to explore your context with no coding required. Godly also makes it easy to debug and manage which contexts are influencing your prompts, and provides an easy-to-use SDK for builders to quickly integrate context to their GPT completions.

Plumb

Plumb is a no-code, node-based builder that empowers product, design, and engineering teams to create AI features together. It enables users to build, test, and deploy AI features with confidence, fostering collaboration across different disciplines. With Plumb, teams can ship prototypes directly to production, ensuring that the best prompts from the playground are the exact versions that go to production. It goes beyond automation, allowing users to build complex multi-tenant pipelines, transform data, and leverage validated JSON schema to create reliable, high-quality AI features that deliver real value to users. Plumb also makes it easy to compare prompt and model performance, enabling users to spot degradations, debug them, and ship fixes quickly. It is designed for SaaS teams, helping ambitious product teams collaborate to deliver state-of-the-art AI-powered experiences to their users at scale.

Langfuse

Langfuse is an AI tool that offers the Langfuse TypeScript SDK v4 for building and debugging LLM (Large Language Model) applications. It provides features such as tracing, prompt management, evaluation, and metrics to enhance the performance of LLM applications. Langfuse is backed by a team of experts and offers integrations with various platforms and SDKs. The tool aims to simplify the development process of complex LLM applications and improve overall efficiency.

Aim

Aim is an open-source, self-hosted AI metadata tracking tool designed to handle hundreds of thousands of tracked metadata sequences. The two best-known applications of AI metadata are experiment tracking and prompt engineering. Aim provides a performant and beautiful UI for exploring and comparing training runs and prompt sessions.

Application Error

The website is experiencing an application error, which indicates that there is an issue with the functionality of the application. An application error can occur due to various reasons such as bugs in the code, server issues, or incorrect user input. When users encounter an application error, they are typically unable to access the intended features or content of the application. It is important for developers to identify and resolve application errors promptly to ensure a smooth user experience.

Pythagora

Pythagora is the world's first all-in-one AI development platform that allows users to build production apps quickly and efficiently. With Pythagora, users can go from prompt to production seamlessly, with frontend development in minutes and backend development in hours. The platform offers a complete technical stack, smart inline code review, one-click deployment, and full code ownership, making app development faster and smarter.

Debug Sage

Debug Sage is a website designed to help users understand and troubleshoot errors in their software applications. The platform provides detailed insights into various types of errors, allowing users to identify and resolve issues efficiently. With a user-friendly interface, Debug Sage aims to streamline the debugging process for developers and software engineers. The website also offers resources and tools to enhance the overall debugging experience. By leveraging advanced technologies, Debug Sage empowers users to tackle complex errors with ease.

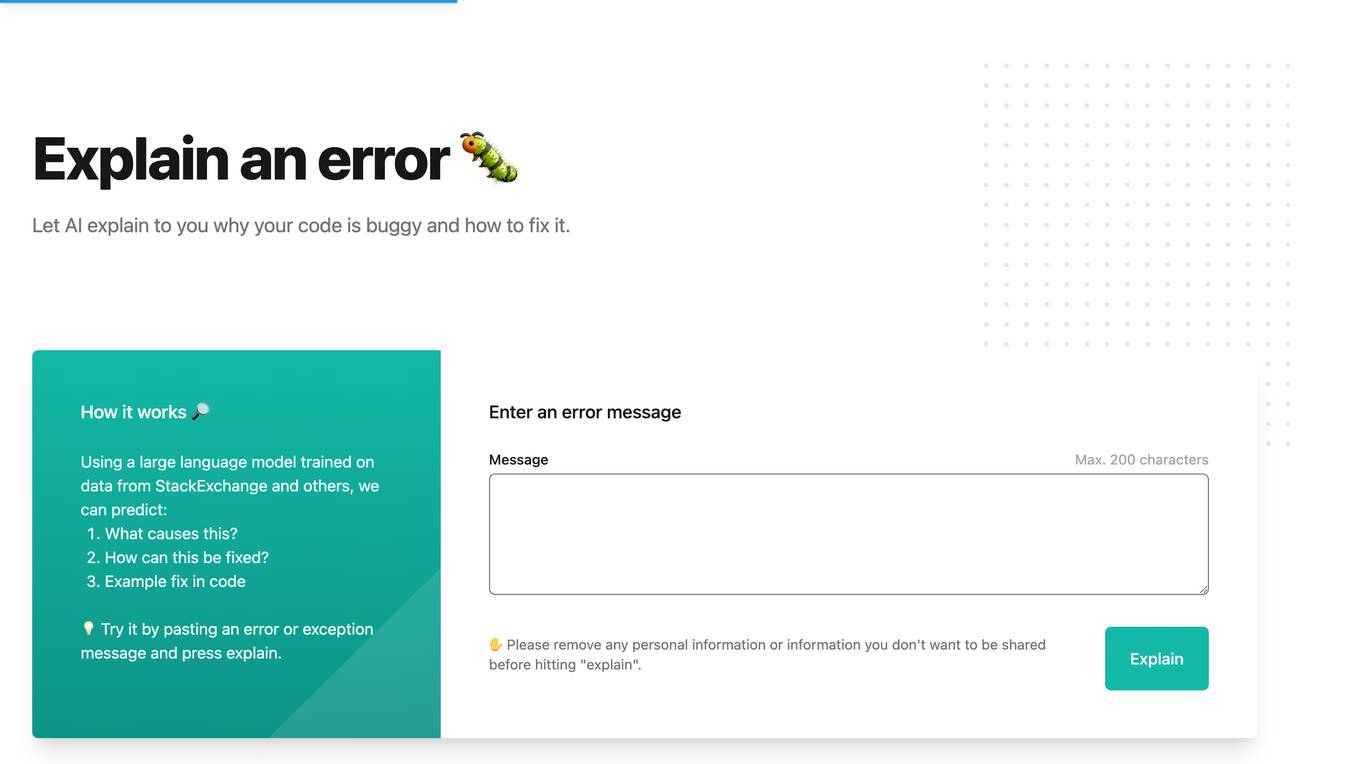

Whybug

Whybug is an AI tool designed to help developers debug their code by providing explanations for errors. By utilizing a large language model trained on data from StackExchange and other sources, Whybug can predict the causes of errors and suggest fixes. Users can simply paste an error message and receive detailed explanations on how to resolve the issue. The tool aims to streamline the debugging process and improve code quality.

New Relic

New Relic is an AI monitoring platform that offers an all-in-one observability solution for monitoring, debugging, and improving the entire technology stack. With over 30 capabilities and 750+ integrations, New Relic provides the power of AI to help users gain insights and optimize performance across various aspects of their infrastructure, applications, and digital experiences.

1 - Open Source AI Tools

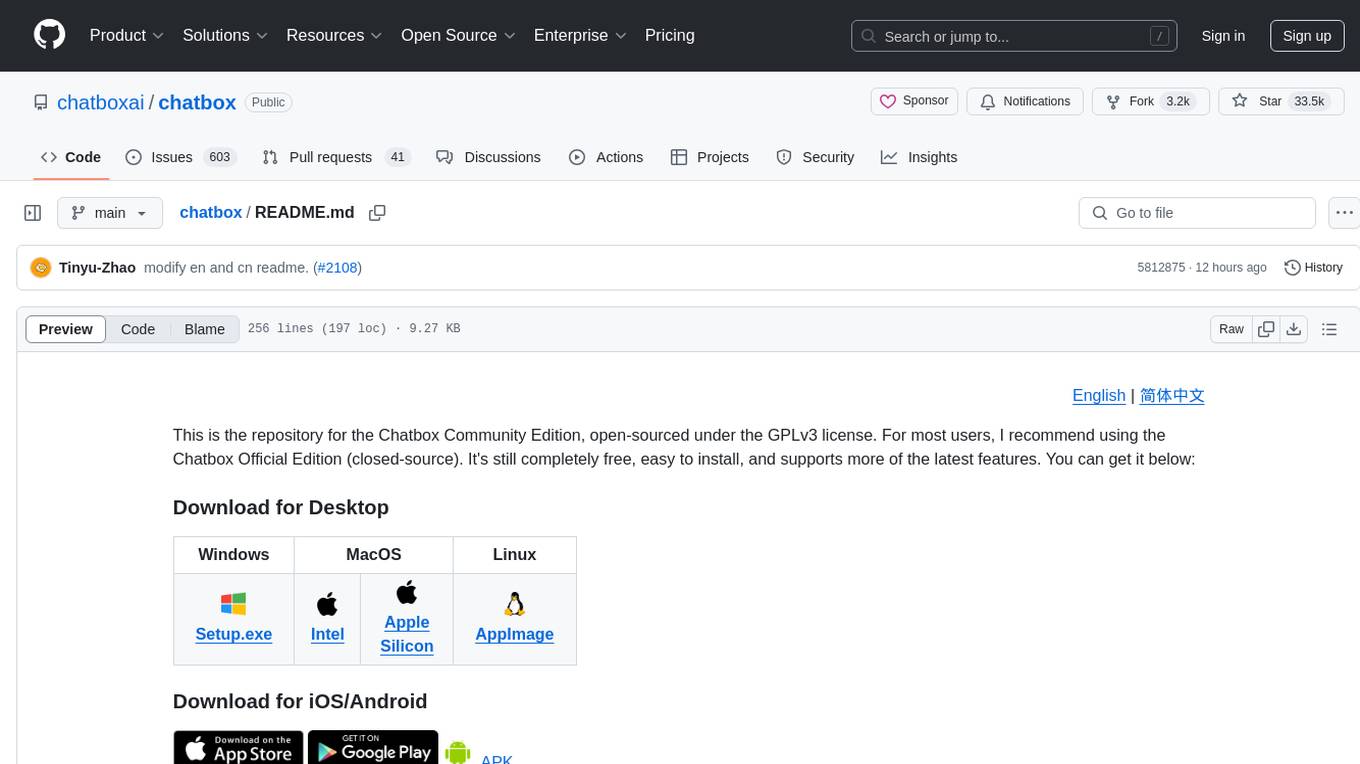

chatbox

Chatbox is a desktop client for ChatGPT, Claude, and other LLMs, providing features like local data storage, multiple LLM provider support, image generation, enhanced prompting, keyboard shortcuts, and more. It offers a user-friendly interface with a dark theme, team collaboration, cross-platform availability, web version access, iOS and Android apps, multilingual support, and ongoing feature enhancements. Originally developed for prompt and API debugging, it has gained popularity for daily chatting and professional role-playing with AI assistance.

20 - OpenAI GPTs

💻Professional Coder (Auto programming)

A GPT expert at solving programming problems. We have open-sourced the prompt here: https://github.com/ai-boost/awesome-gpts-prompts (This GPT isn't perfect, let's improve it together! 😊🛠️)

Python Coach

I will start by asking you for your level of experience, then help you learn to program in Python. This Mini GPT is based on an Expert Guidance Prompt created in under 3 minutes with StructuredPrompt.com using AI-Assist.

Easily Hackable GPT

A regular GPT to try to hack with a prompt injection. Ask for my instructions and see what happens.

TypeScript Engineer

An expert TypeScript engineer to help you solve and debug problems together.

Deluge Developer by TechBloom

A Zoho Deluge expert developer trained to write and debug Deluge functions for Zoho CRM.

The Dock - Your Docker Assistant

Technical assistant specializing in Docker and Docker Compose. Let's debug!

Gif-PT

GIF generator. Uses DALL·E 3 to make a spritesheet, then Code Interpreter to slice it and animate it. Includes an automatic refinement and debug mode. v1.2 GPTavern

María Dolores

Inspired by a TV character, lives on a farm, analytical and philosophical, with a 'DEBUG' mode.