Best AI tools for Assess Risk

20 - AI tool Sites

Pascal

Pascal is an AI-powered risk-based KYC & AML screening and monitoring platform that offers users the ability to assess findings faster and more accurately than other compliance tools. It utilizes AI, machine learning, and Natural Language Processing to analyze open-source and client-specific data, interpret adverse media in multiple languages, simplify onboarding processes, provide continuous monitoring, reduce false positives, and enhance compliance decision-making.

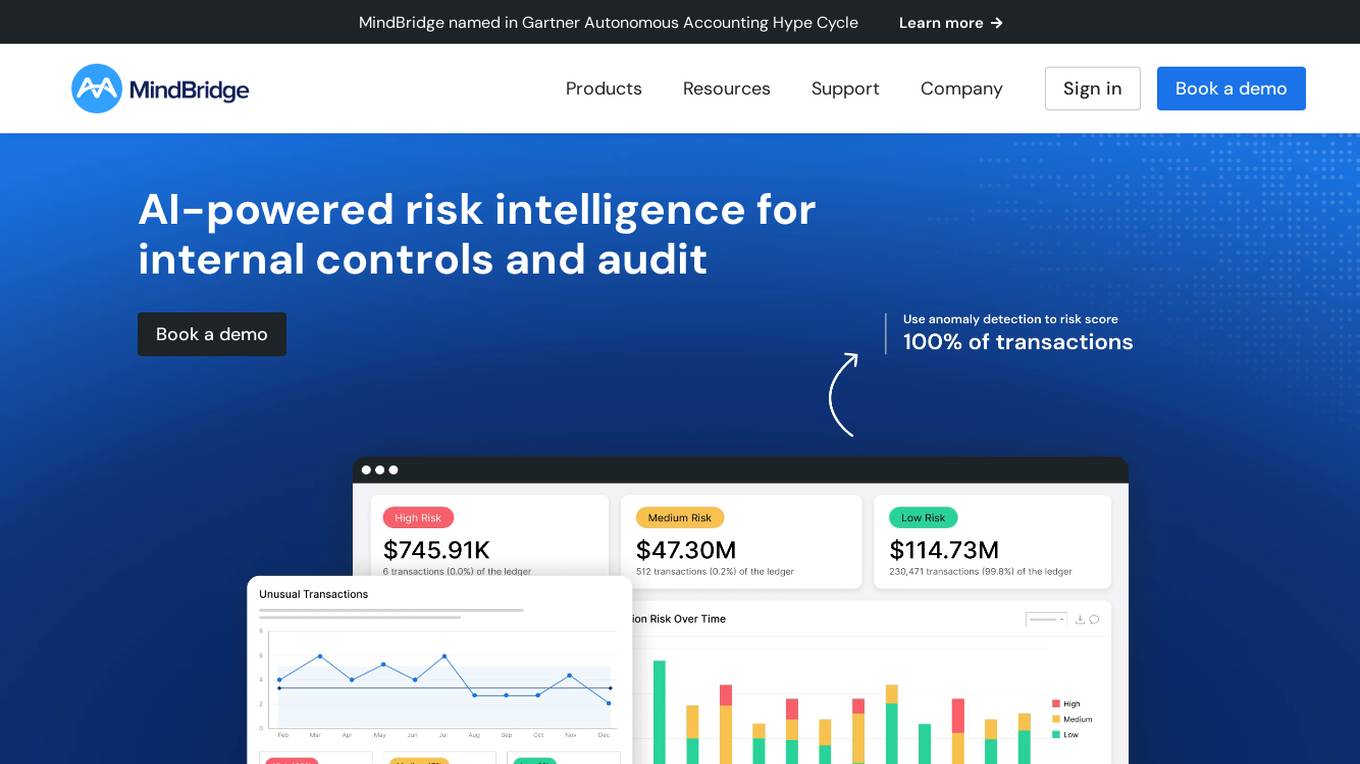

MindBridge

MindBridge is a global leader in financial risk discovery and anomaly detection. The MindBridge AI Platform drives insights and assesses risks across critical business operations. It offers various products like General Ledger Analysis, Company Card Risk Analytics, Payroll Risk Analytics, Revenue Risk Analytics, and Vendor Invoice Risk Analytics. With over 250 unique machine learning control points, statistical methods, and traditional rules, MindBridge is deployed to over 27,000 accounting, finance, and audit professionals globally.

SWMS AI

SWMS AI is an AI-powered safety risk assessment tool that helps businesses streamline compliance and improve safety. It leverages a vast knowledge base of occupational safety resources, codes of practice, risk assessments, and safety documents to generate risk assessments tailored specifically to a project, trade, and industry. SWMS AI can be customized to a company's policies to align its AI's document generation capabilities with proprietary safety standards and requirements.

Cleerly

Cleerly is a digital healthcare company transforming the way clinicians approach the treatment of heart disease. Its clinically proven, AI-based digital care platform works with coronary computed tomography angiography (CCTA) imaging to help clinicians precisely identify and define atherosclerosis earlier, so they can provide personalized, life-saving treatment plans for all patients throughout their care continuum. The platform measures atherosclerosis (plaque build-up in the heart's arteries) rather than indirect markers such as risk factors and symptoms of disease. Its AI-enabled digital care pathway offers simpler, faster, more accurate heart disease evaluation and reporting that is tailored to each stakeholder, improving overall clinical and financial outcomes.

Archistar

Archistar is a leading property research platform in Australia that empowers users to make confident and compliant property decisions with the help of data and AI. It offers a range of features, including the ability to find and assess properties, generate 3D design concepts, and minimize risk and maximize return on investment. Archistar is trusted by over 100,000 individuals and 1,000 leading property firms.

CUBE3.AI

CUBE3.AI is a real-time crypto fraud prevention tool that utilizes AI technology to identify and prevent various types of fraudulent activities in the blockchain ecosystem. It offers features such as risk assessment, real-time transaction security, automated protection, instant alerts, and seamless compliance management. The tool helps users protect their assets, customers, and reputation by proactively detecting and blocking fraud in real time.

Lumenova AI

Lumenova AI is an AI platform that focuses on making AI ethical, transparent, and compliant. It provides solutions for AI governance, assessment, risk management, and compliance. The platform offers comprehensive evaluation and assessment of AI models, proactive risk management solutions, and simplified compliance management. Lumenova AI aims to help enterprises navigate the future confidently by ensuring responsible AI practices and compliance with regulations.

ClearAI

ClearAI is an AI-powered platform that offers instant extraction of insights, effortless document navigation, and natural language interaction. It enables users to upload PDFs securely, ask questions, and receive accurate responses in seconds. With features like structured results, intelligent search, and lifetime access offers, ClearAI simplifies tasks such as analyzing company reports, risk assessment, audit support, contract review, legal research, and due diligence. The platform is designed to streamline document analysis and provide relevant data efficiently.

ISMS Copilot

ISMS Copilot is an AI-powered assistant designed to simplify ISO 27001 preparation for both experts and beginners. It offers various features such as ISMS scope definition, risk assessment and treatment, compliance navigation, incident management, business continuity planning, performance tracking, and more. The tool aims to save time, provide precise guidance, and ensure ISO 27001 compliance. With a focus on security and confidentiality, ISMS Copilot is a valuable resource for small businesses and information security professionals.

Clarity AI

Clarity AI is an AI-powered technology platform that offers a Sustainability Tech Kit for sustainable investing, shopping, reporting, and benchmarking. The platform provides built-in sustainability technology with customizable solutions for various needs related to data, methodologies, and tools. It seamlessly integrates into workflows, offering scalable and flexible end-to-end SaaS tools to address sustainability use cases. Clarity AI leverages powerful AI and machine learning to analyze vast amounts of data points, ensuring reliable and transparent data coverage. The platform is designed to empower users to assess, analyze, and report on sustainability aspects efficiently and confidently.

Castello.ai

Castello.ai is a financial analysis tool that uses artificial intelligence to help businesses make better decisions. It provides users with real-time insights into their financial data, helping them to identify trends, risks, and opportunities. Castello.ai is designed to be easy to use, even for those with no financial background.

DataSnack

DataSnack is a real-time, AI-driven due diligence platform that helps you make better decisions faster. With DataSnack, you can access a wealth of data and insights on companies, industries, and markets, all in one place. Our AI-powered platform analyzes data from a variety of sources, including news, social media, and financial filings, to provide you with the most up-to-date and relevant information. With DataSnack, you can:

Jumio

Jumio is a leading digital identity verification platform that offers AI-driven services to verify the identities of new and existing users, assess risk, and help meet compliance mandates. With over 1 billion transactions processed, Jumio provides cutting-edge AI and ML models to detect fraud and maintain trust throughout the customer lifecycle. The platform offers solutions for identity verification, predictive fraud insights, dynamic user experiences, and risk scoring, trusted by global brands across various industries.

PropHunt.ai

PropHunt.ai is an AI-powered platform designed for real estate professionals and property investors. It utilizes advanced machine learning algorithms to analyze property data and provide valuable insights for property hunting and investment decisions. The platform offers features such as property price prediction, neighborhood analysis, investment risk assessment, property comparison, and market trend forecasting. With PropHunt.ai, users can make informed decisions, optimize their property investments, and stay ahead in the competitive real estate market.

Underwrite.ai

Underwrite.ai is a platform that leverages advances in artificial intelligence and machine learning to provide lenders with nonlinear, dynamic models of credit risk. By analyzing thousands of data points from credit bureau sources, the application accurately models credit risk for consumers and small businesses, outperforming traditional approaches. Underwrite.ai offers a unique underwriting methodology that focuses on outcomes such as profitability and customer lifetime value, allowing organizations to enhance their lending performance without the need for capital investment or lengthy build times. The platform's models continuously learn and adapt to market changes in real time, providing explainable decisions in milliseconds.

BCT Digital

BCT Digital is an AI-powered risk management suite provider that offers a range of products to help enterprises optimize their core Governance, Risk, and Compliance (GRC) processes. The rt360 suite leverages next-generation technologies, sophisticated AI/ML models, data-driven algorithms, and predictive analytics to assist organizations in managing various risks effectively. BCT Digital's solutions cater to the financial sector, providing tools for credit risk monitoring, early warning systems, model risk management, environmental, social, and governance (ESG) risk assessment, and more.

Quantifind

Quantifind is an AI-powered financial crimes automation platform that specializes in Anti-Money Laundering (AML) and Know Your Customer (KYC) solutions. It offers end-to-end automation impact, best-in-class accuracy, and powerful APIs and applications for risk screening, investigations, and compliance in the financial services and public sector industries. Quantifind's Graphyte platform leverages AI and external data to streamline AML-KYC processes, providing comprehensive data coverage, dynamic risk typologies, and seamless integrations with case management systems.

Flagright Solutions

Flagright Solutions is an AI-native AML Compliance & Risk Management platform that offers real-time transaction monitoring, automated case management, AI forensics for screening, customer risk assessment, and sanctions screening. Trusted by financial institutions worldwide, Flagright's platform streamlines compliance workflows, reduces manual tasks, and enhances fraud detection accuracy. The platform provides end-to-end solutions for financial crime compliance, empowering operational teams to collaborate effectively and make reliable decisions. With advanced AI algorithms and real-time processing, Flagright ensures instant detection of suspicious activities, reducing false positives and enhancing risk detection capabilities.

Shufti Pro

Shufti Pro is an award-winning global identity verification platform that provides businesses with a suite of tools to verify the identities of their customers. The platform uses artificial intelligence (AI) to automate the identity verification process, making it faster, more accurate, and more secure. Shufti Pro's solutions are used by businesses in a variety of industries, including banking, fintech, crypto, forex, gaming, insurance, education, healthcare, e-commerce, and travel.

Center for a New American Security

The Center for a New American Security (CNAS) is a bipartisan, non-profit think tank that focuses on national security and defense policy. CNAS conducts research, analysis, and policy development on a wide range of topics, including defense strategy, nuclear weapons, cybersecurity, and energy security. CNAS also provides expert commentary and analysis on current events and policy debates.

0 - Open Source AI Tools

24 - OpenAI GPTs

Trigger Advisor

A marketing expert that analyzes messages for potential triggers, providing risk scores and improvement suggestions.

Cyber Audit and Pentest RFP Builder

Generates cybersecurity audit and penetration test specifications.

Contemporary Compliance

🤓💡📃 Engaging and positive US compliance expert helping professionals with DOJ guidance-based programs.

Global Productivity and Compliance Guide

Expert in global productivity and legal compliance.

InfoSec Advisor

An expert in the technical, organizational, infrastructural, and personnel aspects of information security management systems (ISMS).

How to Measure Anything

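Breaks down various quantitative problems and produces rough estimates. Note that these estimates rely mainly on inference rather than precise data, so they are for reference only. Ideally, the estimate will fall within one order of magnitude of the true value. Even if the numbers are not accurate, the breakdown approach should still offer some insight.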

Secure Space Advisor

Technical satellite security expert trained on space-focused cybersecurity frameworks, best practices, and processes.

Safaricom Financial Analyst

Analyzes Safaricom's HY and FY financials, with detailed insights on different years.

ZEN Influencer Insurance

I create social media influencer insurance plans with a focus on legal compliance.

Warren

The intelligent investor. Analyse stocks using Warren Buffett's favourite investment framework, outlined in Benjamin Graham's famous book. Warren takes no responsibility for investment risk.