AI tools for Fragments

Related Tools:

GRAIL

GRAIL is a healthcare company innovating to solve medicine’s most important challenges. Our team of leading scientists, engineers and clinicians are on an urgent mission to detect cancer early, when it is more treatable and potentially curable. GRAIL's Galleri® test is a first-of-its-kind multi-cancer early detection (MCED) test that can detect a signal shared by more than 50 cancer types and predict the tissue type or organ associated with the signal to help healthcare providers determine next steps.

Encord

Encord is a leading data development platform designed for computer vision and multimodal AI teams. It offers a comprehensive suite of tools to manage, clean, and curate data, streamline labeling and workflow management, and evaluate AI model performance. With features like data indexing, annotation, and active model evaluation, Encord empowers users to accelerate their AI data workflows and build robust models efficiently.

Navina AI

Navina AI is a clinician-first AI platform designed to streamline patient care by providing key insights and actionable recommendations to healthcare providers, ACOs, MSOs, and health plans. The platform leverages proprietary AI technology to improve clinical decision-making, reduce administrative burden, and enhance quality management and risk adjustment processes. Navina AI offers efficient chart review, accurate risk adjustment, streamlined quality management, robust analytics, and a user-friendly interface that integrates seamlessly into the clinical workflow.

The Keenfolks

The Keenfolks is an AI Marketing Agency providing AI solutions for global brands to enhance media efficiency and ROI. They offer AI-powered tools to optimize media campaigns, synthesize audience data, and provide actionable intelligence. The agency helps brands transform fragmented data into unified intelligence, collaborate effectively, and improve media performance. The Keenfolks work with multinational brands across various markets, offering services such as AI assessment, content generation, customer behavior prediction, personalization automation, and data-informed decision-making.

CloudMedx

CloudMedx is a healthcare data platform that provides aggregation, automation, and AI solutions. It simplifies decision-making for patients, providers, and payers with a single platform that coordinates clinical, operational, and financial results.

Splore

Splore is an AI-powered platform designed for asset managers to streamline fund management processes. It simplifies asset management by consolidating fragmented data, automating data extraction, and enhancing cross-document insights. Splore's AI capabilities help asset managers make faster, informed decisions and boost productivity by automating repetitive tasks. The platform prioritizes data security and compliance, adhering to strict standards like GDPR, SOC 2, and HIPAA.

Gleen AI

Gleen AI is a highly accurate and capable generative AI platform designed for customer success. It leverages AI/ML systems like GPT-4 to provide accurate and relevant responses to customer queries. The platform can perform automatic actions, unify fragmented knowledge from various sources, and is used by over 250 companies to enhance customer interactions and support. Gleen AI is suitable for multiple functions across different industries and integrates with various customer service and communication channels.

Pangea.ai

Pangea.ai is a leading talent aggregator that helps businesses hire quality technologists by comparing data points for reliable matching. It offers a unified hiring experience in a fragmented market, making it easier to compare and decide among the numerous software development agencies and talent networks available. Pangea.ai's intelligent matching system considers over 100 data points to find the best fit for businesses, while its rigorous vetting process evaluates expertise, client satisfaction, and team health. Businesses can choose to self-serve their way to a hire or opt for Pangea.ai's white-glove matching service.

Bublic

Bublic is an AI-driven, all-in-one dashboard designed for SaaS founders to connect multiple data sources and receive actionable insights tailored to their business goals. The platform addresses data fragmentation across tools and platforms, giving users clear, growth-focused decision-making support. With features like AI-driven insights, one-click integrations, tailored dashboards, and powerful filters, Bublic helps SaaS businesses make informed decisions and drive growth.

Refinder

Refinder is an AI-powered universal search and assistant designed for work. It helps users connect, search, and utilize their company's data efficiently. With Refinder, users can easily search across all their organization's apps and data, get trustworthy answers, and streamline integrations without the need for maintenance. The tool aims to address the challenges of information overload, data fragmentation, and low productivity faced by modern businesses.

Inspectr

Inspectr is an AI-powered intelligence engine designed for real estate operations. It integrates with existing tech stacks to provide real-time, actionable insights that reduce costs, improve efficiency, and drive higher returns across portfolios. Inspectr turns fragmented data into predictive intelligence, optimizing spend and budgets and maximizing Net Operating Income (NOI) without disrupting current systems. The platform offers features like real-time strategic intelligence, seamless integrations, and work order enrichment to streamline operations and enhance asset performance.

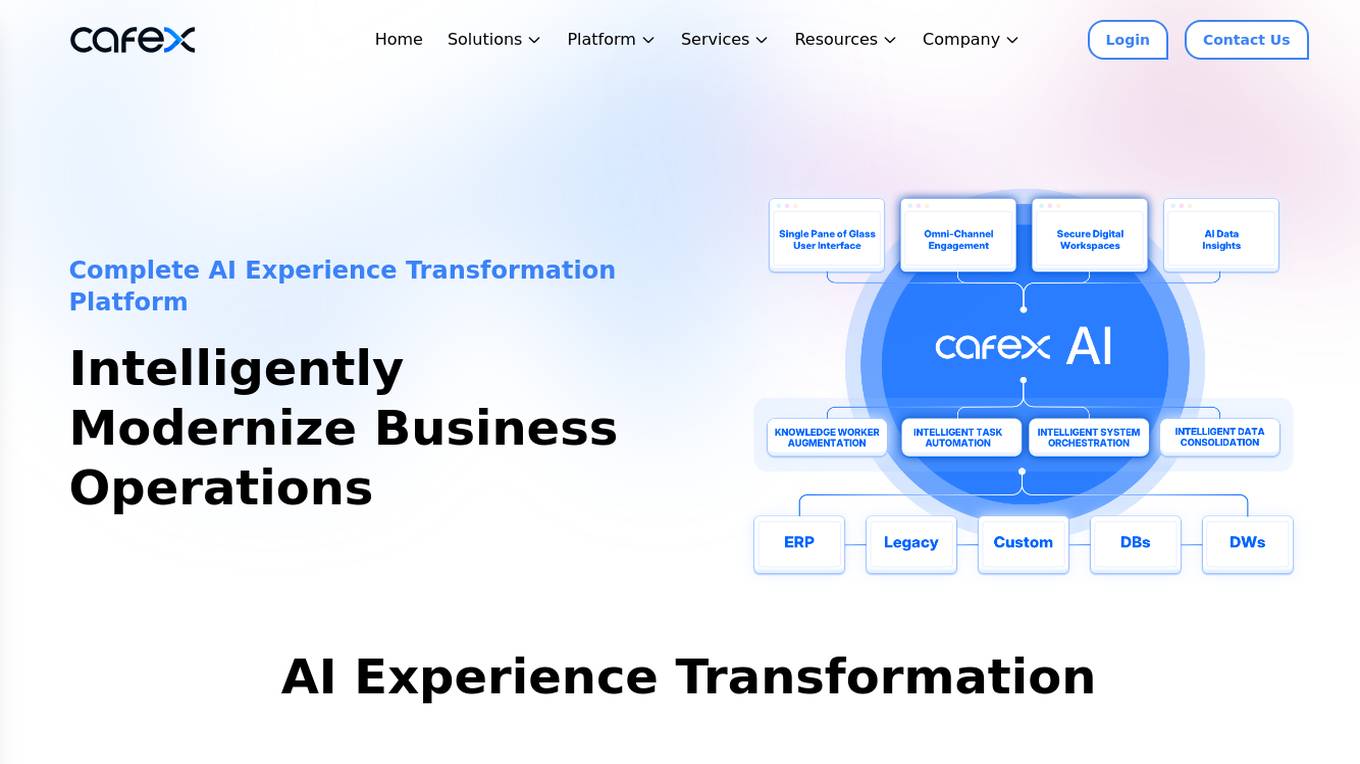

CafeX

CafeX is an AI-powered platform that offers AI Experience Transformation solutions for businesses. It helps modernize business operations, integrates AI and automation to simplify complex challenges, and enhances customer interactions with plug-and-play solutions. CafeX enables organizations in regulated industries to optimize digital engagement with employees, customers, and partners by leveraging existing investments and unifying fragmented solutions. The platform provides unified intelligence, seamless integration, developer empowerment, efficient deployment, and audit and compliance functionality.

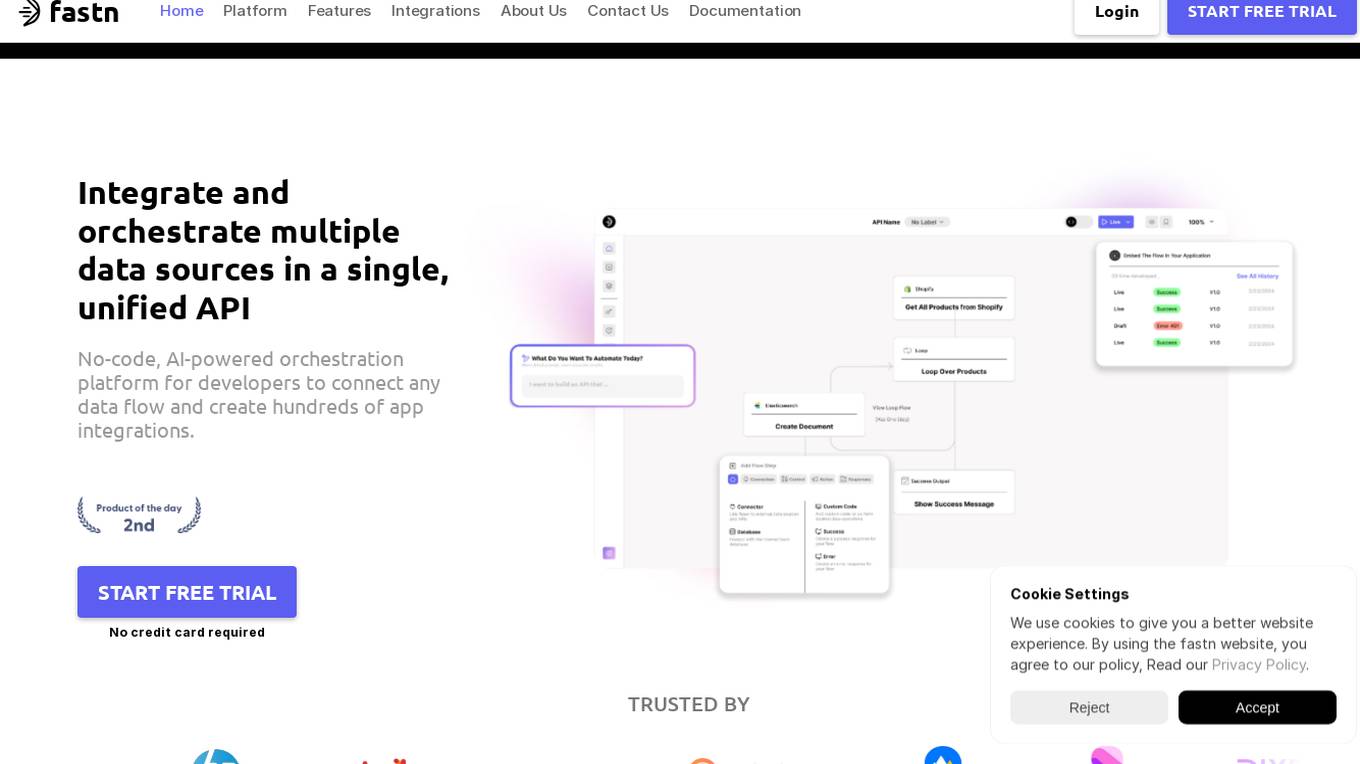

Fastn

Fastn is a no-code, AI-powered orchestration platform for developers to integrate and orchestrate multiple data sources in a single, unified API. It allows users to connect any data flow and create hundreds of app integrations efficiently. Fastn simplifies API integration, ensures API security, and handles data from multiple sources with features like real-time data orchestration, instant API composition, and infrastructure management on autopilot.

Tektonic AI

Tektonic AI is an AI application that empowers businesses by providing AI agents to automate processes, make better decisions, and bridge data silos. It offers solutions to eliminate manual work, increase autonomy, streamline tasks, and close gaps between disconnected systems. The application is designed to enhance data quality, accelerate deal closures, optimize customer self-service, and ensure transparent operations. Tektonic AI was founded by industry veterans with expertise in AI, cloud, and enterprise software.

OSO

OSO is an AI-powered tool suite that offers a range of capabilities to enhance productivity and streamline tasks. It includes an AI search engine that provides comprehensive summaries of search queries, an uncensored AI chat that allows for unrestricted discussions and content generation, and an AI image generation tool that enables the creation of visuals for various purposes. OSO integrates with over 7,000 applications and offers a range of features such as workflow automation, web summaries, and sentiment analysis.

Alby Dream developer

I am a professional novel writer who helps you turn fragments of your dreams into vivid, engaging narratives.

OpenGL 3.3 Graphics Programming Helper

Helps beginners understand OpenGL 3.3 concepts and terminology

fragments

Fragments is an open-source take on apps like Anthropic's Claude Artifacts, Vercel v0, and GPT Engineer. It is powered by the E2B Sandbox SDK and Code Interpreter SDK, which allow secure execution of AI-generated code. The tool is built on Next.js 14, shadcn/ui, TailwindCSS, and the Vercel AI SDK. Users can stream output in the UI, install packages from npm and pip, and add custom stacks and LLM providers. Fragments lets users build web apps with a Python interpreter, Next.js, Vue.js, Streamlit, or Gradio, using providers such as OpenAI, Anthropic, Google AI, and more.

hash

HASH is a self-building, open-source database which grows, structures and checks itself. With it, we're creating a platform for decision-making, which helps you integrate, understand and use data in a variety of different ways.

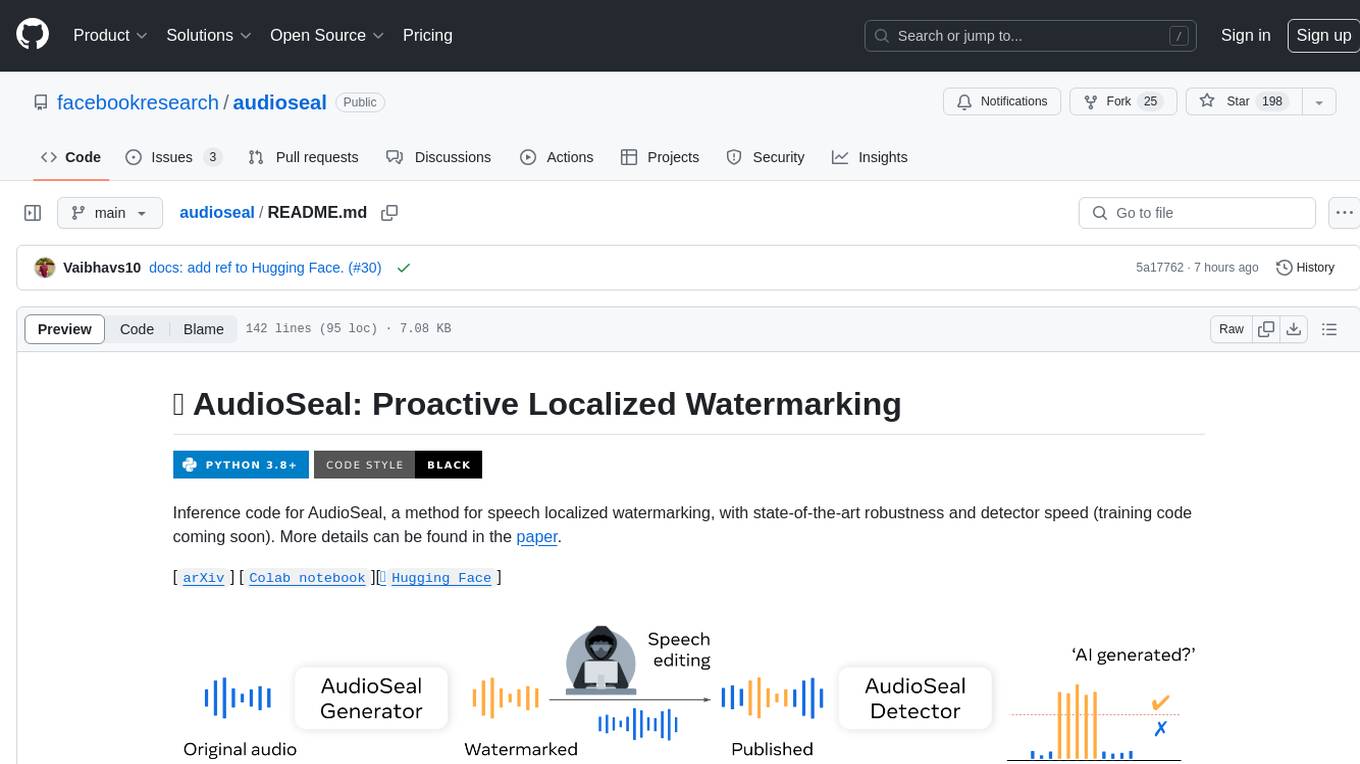

e2b-cookbook

E2B Cookbook provides example code and guides for building with E2B. E2B is a platform that allows developers to build custom code interpreters in their AI apps. It provides a dedicated SDK for building custom code interpreters, as well as a core SDK that can be used to build on top of E2B. E2B also provides documentation at e2b.dev/docs.

ai-analyst

AI Analyst by E2B is an AI-powered code and data analysis tool built with Next.js and the E2B SDK. It allows users to analyze data with Meta's Llama 3.1, upload CSV files, and create interactive charts. The tool is powered by E2B Sandbox, Vercel's AI SDK, Next.js, and the ECharts library for interactive charts. Supported LLM providers include TogetherAI and Fireworks, and various chart types are available for visualization.

gpdb

Greenplum Database (GPDB) is an advanced, fully featured, open-source data warehouse based on PostgreSQL. It provides fast analytics on petabyte-scale data volumes. Geared toward big data analytics, Greenplum Database uses a cost-based query optimizer to deliver high analytical query performance on large data volumes.

openvino.genai

The GenAI repository contains pipelines that implement image and text generation tasks. The implementation uses OpenVINO capabilities to optimize the pipelines. Each sample covers a family of models and suggests modifications to adapt the code to specific needs. It includes the following pipelines:

1. Benchmarking script for large language models
2. Text generation C++ samples that support most popular models, such as LLaMA 2
3. Stable Diffusion (with LoRA) C++ image generation pipeline
4. Latent Consistency Model (with LoRA) C++ image generation pipeline

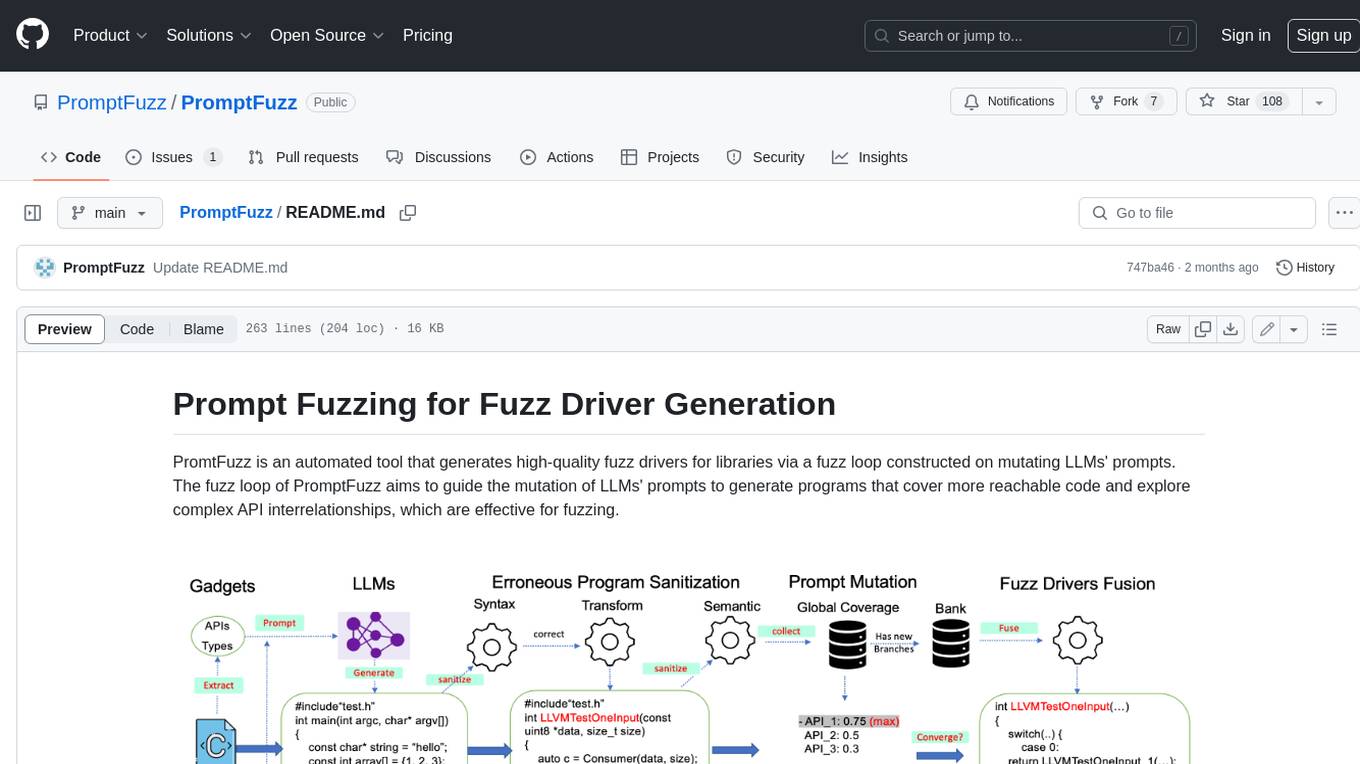

PromptFuzz

**Description:** PromptFuzz is an automated tool that generates high-quality fuzz drivers for libraries via a fuzz loop built on mutating LLM prompts. The fuzz loop guides the mutation of the prompts so that the LLM generates programs that cover more reachable code and explore complex API interrelationships, which makes them effective for fuzzing.

**Features:**
* **Multiple LLM support:** Supports general LLMs: Codex, InCoder, ChatGPT, and GPT-4 (currently tested on ChatGPT).
* **Context-based prompts:** Constructs LLM prompts from automatically extracted library context.
* **Powerful sanitization:** Thoroughly analyzes each program's syntax, semantics, behavior, and coverage to weed out problematic programs.
* **Prioritized mutation:** Prioritizes mutating the library API combinations within the LLM's prompts, guided by code coverage, to explore complex interrelationships.
* **Fuzz driver exploitation:** Infers API constraints using statistics and extends fixed API arguments to receive random bytes from fuzzers.
* **Fuzz engine integration:** Integrates with the grey-box fuzz engine LibFuzzer.

**Benefits:**
* **High branch coverage:** The fuzz drivers generated by PromptFuzz achieved a branch coverage of 40.12% on the tested libraries, which is 1.61x greater than _OSS-Fuzz_ and 1.67x greater than _Hopper_.
* **Bug detection:** PromptFuzz detected 33 valid security bugs from 49 unique crashes.
* **Wide range of bugs:** The generated fuzz drivers can detect a wide range of bugs, most of which are security bugs.
* **Unique bugs:** PromptFuzz detects interesting bugs that other fuzzers may miss.

**Usage:**
1. Build the library using the provided build scripts.
2. Export the LLM API key if using ChatGPT or GPT-4.
3. Generate fuzz drivers using the `fuzzer` command.
4. Run the fuzz drivers using the `harness` command.
5. Deduplicate and analyze the reported crashes.

**Future Work:**
* **Custom LLM support:** Support custom LLMs.
* **Closed-source libraries:** Apply PromptFuzz to closed-source libraries by fine-tuning LLMs on a private code corpus.
* **Performance:** Reduce the large time cost of eliminating erroneous programs.
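The description does not spell out how PromptFuzz's prioritized mutation scores candidates, so the following is only a toy sketch of coverage-guided prioritization in general, not PromptFuzz's scheduler. It assumes each API combination placed in a prompt is credited with the branches its generated drivers newly covered; the `Candidate` class, `pick_next`, `record_result`, and the integer branch IDs are all hypothetical stand-ins for coverage a fuzz engine such as LibFuzzer would report.

```python
from dataclasses import dataclass


@dataclass
class Candidate:
    """A hypothetical API combination to place in an LLM prompt."""
    apis: tuple[str, ...]
    trials: int = 0        # times this combination has been used in a prompt
    new_branches: int = 0  # total branches first covered by its drivers

    def priority(self) -> float:
        # Untried combinations go first; otherwise favour combinations
        # whose drivers uncovered the most new branches per trial.
        if self.trials == 0:
            return float("inf")
        return self.new_branches / self.trials


def pick_next(pool: list[Candidate]) -> Candidate:
    """Return the combination to mutate next (ties keep pool order)."""
    return max(pool, key=lambda c: c.priority())


def record_result(cand: Candidate, covered: set[int], global_cov: set[int]) -> int:
    """Credit a candidate with the branches its driver covered for the first time."""
    fresh = covered - global_cov
    global_cov |= fresh  # mutates the caller's global coverage set in place
    cand.trials += 1
    cand.new_branches += len(fresh)
    return len(fresh)
```

With a pool such as `[Candidate(("open", "read")), Candidate(("open", "seek", "read"))]`, both start untried; once the second one's driver only re-covers known branches, its priority drops to 0 and the loop returns to the combination that is still paying off.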

backend.ai

Backend.AI is a streamlined, container-based computing cluster platform that hosts popular computing/ML frameworks and diverse programming languages, with pluggable heterogeneous accelerator support including CUDA GPU, ROCm GPU, TPU, IPU and other NPUs. It allocates and isolates the underlying computing resources for multi-tenant computation sessions on-demand or in batches with customizable job schedulers with its own orchestrator. All its functions are exposed as REST/GraphQL/WebSocket APIs.

XLearning

XLearning is a scheduling platform for big data and artificial intelligence that supports various machine learning and deep learning frameworks. It runs on Hadoop YARN and integrates frameworks like TensorFlow, MXNet, Caffe, Theano, PyTorch, Keras, and XGBoost. XLearning offers scalability, support for multiple deep learning frameworks, unified data management based on HDFS, visualization, and compatibility with code written for the native frameworks. It provides data input/output strategies, container management, a TensorBoard service, and a display of resource usage metrics. Compiling XLearning requires JDK >= 1.7 and Maven >= 3.3; deployment requires CentOS 7.2 with Java >= 1.7 and Hadoop 2.6, 2.7, or 2.8.

chatWeb

ChatWeb is a tool that can crawl web pages, extract text from PDF, DOCX, and TXT files, and generate an embedded summary. It answers questions about the text content using a chat API and an embedding API based on GPT-3.5. The tool calculates similarity scores between text vectors to generate summaries, performs nearest-neighbor searches, and designs prompts to answer user questions. It aims to extract relevant content from text and provide accurate search results based on keywords. ChatWeb supports various modes, languages, and settings, including temperature control and PostgreSQL integration.
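The similarity-and-nearest-neighbor step can be sketched as a brute-force cosine search. This is a minimal illustration, not chatWeb's code: it assumes the chunk and query embeddings have already been produced by an embedding API, uses plain lists instead of a vector store such as PostgreSQL, and the `build_prompt` template is hypothetical.

```python
import math


def cosine(a: list[float], b: list[float]) -> float:
    """Cosine similarity between two equal-length vectors (0.0 if either is all zeros)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


def nearest_chunks(query_vec: list[float], chunk_vecs: list[list[float]], k: int = 3) -> list[int]:
    """Return indices of the k chunks most similar to the query, best first."""
    scores = [(cosine(query_vec, v), i) for i, v in enumerate(chunk_vecs)]
    scores.sort(key=lambda s: (-s[0], s[1]))  # highest similarity first, ties by index
    return [i for _, i in scores[:k]]


def build_prompt(question: str, chunks: list[str], indices: list[int]) -> str:
    """Hypothetical prompt template: retrieved context followed by the question."""
    context = "\n\n".join(chunks[i] for i in indices)
    return f"Answer using only the context below.\n\nContext:\n{context}\n\nQuestion: {question}"
```

For example, with toy 2-D vectors `[[1, 0], [0, 1], [0.9, 0.1]]` and the query `[1, 0]`, `nearest_chunks(..., k=2)` picks chunks 0 and 2. A real deployment would typically swap the linear scan for an approximate nearest-neighbor index once the corpus grows.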

Cherry_LLM

The Cherry Data Selection project introduces a self-guided method for LLMs to autonomously identify and select "cherry" samples from open-source datasets, minimizing manual curation and cost for instruction tuning. The project selects impactful training samples ("cherry data") to enhance LLM instruction tuning by estimating instruction-following difficulty. The method proceeds in three phases, "Learning from Brief Experience", "Evaluating Based on Experience", and "Retraining from Self-Guided Experience", to improve LLM performance.
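In the Cherry LLM paper, instruction-following difficulty (IFD) is scored as the ratio of the model's average loss on an answer conditioned on its instruction, s(A | Q), to its average loss on the answer alone, s(A); a ratio close to 1 means the instruction gives the model little help. The sketch below assumes the per-token losses have already been computed by a model (for instance, one trained in the "Learning from Brief Experience" phase); `select_cherry` and its fraction-based cutoff are illustrative, not the project's actual selection code.

```python
from statistics import fmean


def avg_loss(token_losses: list[float]) -> float:
    """Mean per-token cross-entropy loss over the answer tokens."""
    return fmean(token_losses)


def ifd(cond_losses: list[float], direct_losses: list[float]) -> float:
    """IFD = s(A | Q) / s(A): answer loss with the instruction over answer loss without it."""
    return avg_loss(cond_losses) / avg_loss(direct_losses)


def select_cherry(samples: dict[str, tuple[list[float], list[float]]], fraction: float) -> list[str]:
    """Keep the given fraction (at least one) of samples with the highest IFD scores."""
    # Each value is (losses on A given Q, losses on A alone).
    scored = sorted(samples, key=lambda sid: ifd(*samples[sid]), reverse=True)
    keep = max(1, round(len(scored) * fraction))
    return scored[:keep]
```

For instance, a sample whose answer loss is 1.0 with the instruction and 4.0 without it scores 0.25 (the instruction helps a lot), while one with equal losses scores 1.0 and ranks as harder to follow.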

hugescm

HugeSCM is a cloud-based version control system designed to address repository size problems in R&D. It manages large repositories and individual large files by separating data storage and using advanced algorithms and data structures. It aims for optimal performance in version control operations on large-scale repositories, making it suitable for R&D on single large repositories, AI model development, and game or driver development.

aps-toolkit

APS Toolkit is a powerful tool for developers, software engineers, and AI engineers to explore Autodesk Platform Services (APS). It allows users to read, download, and write data from APS, as well as export data to various formats like CSV, Excel, JSON, and XML. The toolkit is built on top of Autodesk.Forge and Newtonsoft.Json, offering features such as reading SVF models, querying properties database, exporting data, and more.

brokk

Brokk is a code assistant designed to understand code semantically, allowing LLMs to work effectively on large codebases. It offers features like agentic search, summarizing related classes, parsing stack traces, adding source for usages, and autonomously fixing errors. Users interact with Brokk through different panels and commands to manipulate context, ask questions, search the codebase, run shell commands, and more. Brokk helps with tasks like debugging regressions, exploring a codebase, AI-powered refactoring, and working with dependencies. It is particularly useful for making complex, multi-file edits with o1pro.

pinecone-ts-client

The official Node.js client for Pinecone, written in TypeScript. This client library provides a high-level interface for interacting with the Pinecone vector database service. With this client, you can create and manage indexes, upsert and query vector data, and perform other operations related to vector search and retrieval. The client is designed to be easy to use and provides a consistent and idiomatic experience for Node.js developers. It supports all the features and functionality of the Pinecone API, making it a comprehensive solution for building vector-powered applications in Node.js.

Next-Gen-Dialogue

Next Gen Dialogue is a Unity dialogue plugin that combines traditional dialogue design with AI techniques. It features a visual dialogue editor, modular dialogue functions, AIGC support for generating dialogue at runtime, AIGC baking dialogue in Editor, and runtime debugging. The plugin aims to provide an experimental approach to dialogue design using large language models. Users can create dialogue trees, generate dialogue content using AI, and bake dialogue content in advance. The tool also supports localization, VITS speech synthesis, and one-click translation. Users can create dialogue by code using the DialogueSystem and DialogueTree components.