mediasoup-client-aiortc

mediasoup-client handler for aiortc Python library

Stars: 69

mediasoup-client-aiortc is a handler for the aiortc Python library, allowing Node.js applications to connect to a mediasoup server using WebRTC for real-time audio, video, and DataChannel communication. It facilitates the creation of Worker instances to manage Python subprocesses, obtain audio/video tracks, and create mediasoup-client handlers. The tool supports features like getUserMedia, handlerFactory creation, and event handling for subprocess closure and unexpected termination. It provides custom classes for media stream and track constraints, enabling diverse audio/video sources like devices, files, or URLs. The tool enhances WebRTC capabilities in Node.js applications through seamless Python subprocess communication.

README:

mediasoup-client handler for aiortc Python library. Suitable for building Node.js applications that connect to a mediasoup server using WebRTC and exchange real audio, video and DataChannel messages with it in both directions.

- Python 3.

- Windows is not supported.

Install mediasoup-client-aiortc within your Node.js application:

npm install mediasoup-client-aiortc

The "postinstall" script in package.json will install the Python libraries (including aiortc). You can override the path to the Python executable by setting the PYTHON environment variable:

PYTHON=/home/me/bin/python3.13 npm install mediasoup-client-aiortc

The same applies when you run your Node.js application: mediasoup-client-aiortc spawns Python processes and communicates with them via a Unix socket. You can override the Python executable path by setting the PYTHON environment variable:

PYTHON=/home/me/bin/python3.13 node my_app.js

// ES6 style.

import {
  createWorker,
  Worker,
  WorkerSettings,
  WorkerLogLevel,
  AiortcMediaStream,
  AiortcMediaStreamConstraints,
  AiortcMediaTrackConstraints,
} from 'mediasoup-client-aiortc';

// CommonJS style.
const {
  createWorker,
  Worker,
  WorkerSettings,
  WorkerLogLevel,
  AiortcMediaStream,
  AiortcMediaStreamConstraints,
  AiortcMediaTrackConstraints,
} = require('mediasoup-client-aiortc');

createWorker() creates a mediasoup-client-aiortc Worker instance. Each Worker spawns and manages a Python subprocess.

@async

@returns Worker

const worker = await createWorker({
  logLevel: 'warn',
});

The Worker class represents a separate Python subprocess that can provide the Node.js application with audio/video tracks and mediasoup-client handlers.

The Python subprocess PID.

@type String, read only

Whether the subprocess is closed.

Whether the subprocess died unexpectedly (probably a bug somewhere).

Whether the subprocess is completely closed. It becomes true once the worker subprocess has completely closed and the 'subprocessclose' event fires.

@type Boolean, read only

worker.close() closes the subprocess and all its open resources (such as audio/video tracks and mediasoup-client handlers).

worker.getUserMedia() mimics the navigator.getUserMedia() API. It creates an AiortcMediaStream instance containing audio and/or video tracks. Those tracks can point to different sources, such as a device microphone or webcam, multimedia files, or HTTP streams.

@async

@returns AiortcMediaStream

const stream = await worker.getUserMedia({
  audio: true,
  video: {
    source: 'file',
    file: 'file:///home/foo/media/foo.mp4',
  },
});

const audioTrack = stream.getAudioTracks()[0];
const videoTrack = stream.getVideoTracks()[0];

worker.createHandlerFactory() creates a mediasoup-client handler factory, suitable for the handlerFactory argument when instantiating a mediasoup-client Device.

@async

@returns HandlerFactory

const device = new mediasoupClient.Device({
  handlerFactory: worker.createHandlerFactory(),
});

Note that all Python resources (such as audio/video tracks) used within the Device must be obtained from the same mediasoup-client-aiortc Worker instance.

Event 'died': emitted if the subprocess dies abruptly. This should not happen; if it does, there is a bug in the Python component.

Event 'subprocessclose': emitted when the subprocess has closed completely. This event is emitted asynchronously once worker.close() has been called (or after the 'died' event if the worker subprocess died abnormally).
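The following is a minimal sketch of reacting to these two events. It assumes only that the Worker exposes once() for the 'died' and 'subprocessclose' events documented above. WorkerLike, watchWorker and FakeWorker are hypothetical names introduced here; FakeWorker is a stand-in so the sketch runs without spawning Python.

```typescript
// Hypothetical structural type: only the event surface this sketch relies on.
// Assumes the real Worker provides once() for the documented event names.
interface WorkerLike {
  once(event: "died" | "subprocessclose", listener: (...args: unknown[]) => void): unknown;
}

type WorkerExit = { died: boolean };

// Resolves once the subprocess has fully closed, recording whether it died abruptly first.
function watchWorker(worker: WorkerLike): Promise<WorkerExit> {
  let died = false;
  worker.once("died", () => {
    // Per the docs, 'died' means a bug in the Python component; 'subprocessclose' follows it.
    died = true;
  });
  return new Promise((resolve) => {
    worker.once("subprocessclose", () => resolve({ died }));
  });
}

// Tiny stand-in emitter so the sketch can run without a Python subprocess.
class FakeWorker implements WorkerLike {
  private listeners = new Map<string, Array<(...args: unknown[]) => void>>();

  once(event: string, listener: (...args: unknown[]) => void): this {
    const list = this.listeners.get(event) ?? [];
    list.push(listener);
    this.listeners.set(event, list);
    return this;
  }

  // Fires and removes all listeners registered for the event (once semantics).
  emit(event: string): void {
    const list = this.listeners.get(event) ?? [];
    this.listeners.delete(event);
    for (const listener of list) listener();
  }
}
```

With a real Worker, you could call watchWorker(worker) right after createWorker() and log an error when died is true, since the docs above treat that case as a bug.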

type WorkerSettings = {
  /**
   * Logging level for logs generated by the Python subprocess.
   */
  logLevel?: WorkerLogLevel; // If unset it defaults to "error".
};

type WorkerLogLevel = 'debug' | 'warn' | 'error' | 'none';

Logs generated by both the Node.js and Python components of this module are printed using the mediasoup-client debugging system with the "mediasoup-client-aiortc" prefix/namespace.
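One way to build WorkerSettings from external configuration is sketched below, assuming the level arrives as a free-form string. settingsFromLogLevel and isWorkerLogLevel are hypothetical helpers. They accept only the four documented WorkerLogLevel values and fall back to "error", the documented default, for anything else.

```typescript
// Local copies of the documented types so the sketch is self-contained.
type WorkerLogLevel = "debug" | "warn" | "error" | "none";
type WorkerSettings = { logLevel?: WorkerLogLevel };

const LOG_LEVELS: readonly WorkerLogLevel[] = ["debug", "warn", "error", "none"];

// Type guard: true only for one of the documented log levels.
function isWorkerLogLevel(value: string): value is WorkerLogLevel {
  return (LOG_LEVELS as readonly string[]).includes(value);
}

// Hypothetical helper: trims and lowercases the input, then maps it to WorkerSettings.
// Unknown or missing values fall back to "error", the documented default.
function settingsFromLogLevel(raw: string | undefined): WorkerSettings {
  const level = raw?.trim().toLowerCase();
  return { logLevel: level !== undefined && isWorkerLogLevel(level) ? level : "error" };
}
```

For example: createWorker(settingsFromLogLevel(process.env.AIORTC_LOG_LEVEL)). Here AIORTC_LOG_LEVEL is an illustrative variable name, not one the library reads.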

AiortcMediaStream is a custom implementation of the W3C MediaStream class. Instances are generated by calling worker.getUserMedia().

Audio and video tracks within an AiortcMediaStream are instances of FakeMediaStreamTrack and reference "native" MediaStreamTracks in the Python subprocess (handled by the aiortc library).

AiortcMediaStreamConstraints is the argument given to worker.getUserMedia():

type AiortcMediaStreamConstraints = {
  audio?: AiortcMediaTrackConstraints | boolean;
  video?: AiortcMediaTrackConstraints | boolean;
};

Setting audio or video to true is equivalent to {source: "device"}, so the default microphone or webcam is used to obtain the track or tracks.
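The boolean shorthand can be expanded explicitly before calling worker.getUserMedia(). The sketch below redeclares the documented types locally. normalizeConstraints is a hypothetical helper. Treating false the same as an unset field (no track requested) is an assumption modeled on the browser getUserMedia() API.

```typescript
// Local copies of the documented constraint types.
type AiortcMediaTrackConstraints = {
  source: "device" | "file" | "url";
  device?: string;
  file?: string;
  url?: string;
  format?: string;
  options?: object;
  timeout?: number;
  loop?: boolean;
  decode?: boolean;
};

type AiortcMediaStreamConstraints = {
  audio?: AiortcMediaTrackConstraints | boolean;
  video?: AiortcMediaTrackConstraints | boolean;
};

// `true` becomes { source: "device" } (default mic/webcam); `false` or unset
// means the track is not requested (assumption); objects pass through unchanged.
function normalizeTrack(
  value: AiortcMediaTrackConstraints | boolean | undefined,
): AiortcMediaTrackConstraints | undefined {
  if (value === true) return { source: "device" };
  if (value === false || value === undefined) return undefined;
  return value;
}

// Hypothetical helper: returns constraints with every track in object form.
function normalizeConstraints(constraints: AiortcMediaStreamConstraints): {
  audio?: AiortcMediaTrackConstraints;
  video?: AiortcMediaTrackConstraints;
} {
  return {
    audio: normalizeTrack(constraints.audio),
    video: normalizeTrack(constraints.video),
  };
}
```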

type AiortcMediaTrackConstraints = {
  source: 'device' | 'file' | 'url';
  device?: string;
  file?: string;
  url?: string;
  format?: string;
  options?: object;
  timeout?: number;
  loop?: boolean;
  decode?: boolean;
};

The source field determines which source aiortc uses to generate the audio or video track. These are the possible values:

- "device": System microphone or webcam.

- "file": Path to a multimedia file in the system.

- "url": URL of an HTTP stream.

If source is "device", the device field specifies the device ID of the microphone or webcam to use. If unset, the system default is used:

- Default values for the Darwin platform:
  - "none:0" for audio.
  - "default:none" for video.
- Default values for the Linux platform:
  - "hw:0" for audio.
  - "/dev/video0" for video.

The file field is mandatory if source is "file". It must be the absolute path to a multimedia file.

The url field is mandatory if source is "url". It must be the URL of an HTTP stream.
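These two rules can be checked before calling worker.getUserMedia(). validateSource below is a hypothetical sketch that covers only what is stated here: a mandatory absolute file path and a mandatory HTTP URL. The documentation does not say whether or how the library validates these fields itself. The sketch also accepts file:// URLs, because the getUserMedia() example earlier in this README passes one.

```typescript
type TrackSource = "device" | "file" | "url";

// Subset of the documented AiortcMediaTrackConstraints used by this check.
type SourceConstraints = { source: TrackSource; device?: string; file?: string; url?: string };

// Hypothetical validator: returns a list of problems (empty when the constraints look valid).
function validateSource(c: SourceConstraints): string[] {
  const problems: string[] = [];
  if (c.source === "file") {
    if (!c.file) {
      problems.push('"file" is mandatory when source is "file"');
    } else if (!c.file.startsWith("/") && !c.file.startsWith("file://")) {
      // POSIX-only absolute-path check (Windows is not supported by the library).
      problems.push('"file" must be an absolute path');
    }
  }
  if (c.source === "url") {
    if (!c.url) {
      problems.push('"url" is mandatory when source is "url"');
    } else if (!/^https?:\/\//i.test(c.url)) {
      problems.push('"url" should point to an HTTP stream');
    }
  }
  return problems;
}
```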

The format field specifies the device format used by ffmpeg.

- Default values for the Darwin platform:
  - "avfoundation" for audio.
  - "avfoundation" for video.
- Default values for the Linux platform:
  - "alsa" for audio.
  - "v4l2" for video.

The options field specifies the device options used by ffmpeg.

- Default values for the Darwin platform:
  - {} for audio.
  - { framerate: "30", video_size: "640x480" } for video.
- Default values for the Linux platform:
  - {} for audio.
  - { framerate: "30", video_size: "640x480" } for video.

For the timeout, loop and decode fields, see the documentation on the aiortc site. The decode option is not documented, but its behavior can be inferred from the usage examples.
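The platform defaults listed above for the device, format and options fields can be collected into a lookup table, for instance to show users what will be used when they omit a field. defaultsFor is a hypothetical helper that restates the documented values; it is not the library's own code. The "darwin"/"linux" keys assume Node's process.platform naming, and merging options key by key is a choice of this sketch rather than documented behavior.

```typescript
type Kind = "audio" | "video";
type SupportedPlatform = "darwin" | "linux";
type DeviceDefaults = { device: string; format: string; options: Record<string, string> };

// Values restated from the documented defaults (Linux video uses ffmpeg's v4l2 input).
const DEFAULTS: Record<SupportedPlatform, Record<Kind, DeviceDefaults>> = {
  darwin: {
    audio: { device: "none:0", format: "avfoundation", options: {} },
    video: { device: "default:none", format: "avfoundation", options: { framerate: "30", video_size: "640x480" } },
  },
  linux: {
    audio: { device: "hw:0", format: "alsa", options: {} },
    video: { device: "/dev/video0", format: "v4l2", options: { framerate: "30", video_size: "640x480" } },
  },
};

function isSupportedPlatform(p: string): p is SupportedPlatform {
  return p === "darwin" || p === "linux";
}

// Hypothetical helper: documented defaults with user overrides applied on top.
function defaultsFor(platform: string, kind: Kind, overrides: Partial<DeviceDefaults> = {}): DeviceDefaults {
  if (!isSupportedPlatform(platform)) {
    throw new Error(`no documented device defaults for platform "${platform}"`);
  }
  const base = DEFAULTS[platform][kind];
  // Options are merged key by key so a single override keeps the other defaults.
  return { ...base, ...overrides, options: { ...base.options, ...overrides.options } };
}
```

Usage would look like defaultsFor(process.platform, "video").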

mediasoup-client-aiortc supports sending and receiving string and binary DataChannel messages. However, due to the lack of Blob support in Node.js, dataChannel.binaryType is always "arraybuffer", so received binary messages are always ArrayBuffer instances.

When sending, dataChannel.send() (and hence dataProducer.send()) allows passing a string, a Buffer instance or an ArrayBuffer instance.
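Because binaryType is always "arraybuffer", a receive handler only needs to tell strings apart from ArrayBuffer instances. The sketch below uses hypothetical helper names. describeMessage normalizes an incoming payload into a tagged value. toArrayBuffer copies a Buffer into a standalone ArrayBuffer for code that wants one, even though send() already accepts a Buffer directly, as noted above.

```typescript
type ReceivedMessage =
  | { kind: "text"; text: string }
  | { kind: "binary"; bytes: Buffer };

// Received messages are either strings or ArrayBuffer instances (never Blob).
function describeMessage(data: string | ArrayBuffer): ReceivedMessage {
  if (typeof data === "string") return { kind: "text", text: data };
  // Buffer.from(arrayBuffer) creates a view over the same memory (no copy).
  return { kind: "binary", bytes: Buffer.from(data) };
}

// Copies exactly the Buffer's bytes; a Buffer may be a slice of a larger pooled
// ArrayBuffer, so returning buf.buffer directly could leak unrelated bytes.
function toArrayBuffer(buf: Buffer): ArrayBuffer {
  const copy = new ArrayBuffer(buf.byteLength);
  new Uint8Array(copy).set(buf);
  return copy;
}
```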

npm run lint
npm run test
npm run release:check

PYTHON_LOG_TO_STDOUT=true npm run test

See the list of open issues.

For Tasks:

Click tags to check more tools for each tasksFor Jobs:

Alternative AI tools for mediasoup-client-aiortc

Similar Open Source Tools

mediasoup-client-aiortc

mediasoup-client-aiortc is a handler for the aiortc Python library, allowing Node.js applications to connect to a mediasoup server using WebRTC for real-time audio, video, and DataChannel communication. It facilitates the creation of Worker instances to manage Python subprocesses, obtain audio/video tracks, and create mediasoup-client handlers. The tool supports features like getUserMedia, handlerFactory creation, and event handling for subprocess closure and unexpected termination. It provides custom classes for media stream and track constraints, enabling diverse audio/video sources like devices, files, or URLs. The tool enhances WebRTC capabilities in Node.js applications through seamless Python subprocess communication.

python-tgpt

Python-tgpt is a Python package that enables seamless interaction with over 45 free LLM providers without requiring an API key. It also provides image generation capabilities. The name _python-tgpt_ draws inspiration from its parent project tgpt, which operates on Golang. Through this Python adaptation, users can effortlessly engage with a number of free LLMs available, fostering a smoother AI interaction experience.

aiavatarkit

AIAvatarKit is a tool for building AI-based conversational avatars quickly. It supports various platforms like VRChat and cluster, along with real-world devices. The tool is extensible, allowing unlimited capabilities based on user needs. It requires VOICEVOX API, Google or Azure Speech Services API keys, and Python 3.10. Users can start conversations out of the box and enjoy seamless interactions with the avatars.

omniai

OmniAI provides a unified Ruby API for integrating with multiple AI providers, streamlining AI development by offering a consistent interface for features such as chat, text-to-speech, speech-to-text, and embeddings. It ensures seamless interoperability across platforms and effortless switching between providers, making integrations more flexible and reliable.

text-extract-api

The text-extract-api is a powerful tool that allows users to convert images, PDFs, or Office documents to Markdown text or JSON structured documents with high accuracy. It is built using FastAPI and utilizes Celery for asynchronous task processing, with Redis for caching OCR results. The tool provides features such as PDF/Office to Markdown and JSON conversion, improving OCR results with LLama, removing Personally Identifiable Information from documents, distributed queue processing, caching using Redis, switchable storage strategies, and a CLI tool for task management. Users can run the tool locally or on cloud services, with support for GPU processing. The tool also offers an online demo for testing purposes.

tokf

Tokf is a versatile text analysis tool designed to extract key information from text data. It provides functionalities for text summarization, sentiment analysis, keyword extraction, and named entity recognition. Tokf is easy to use and can handle large volumes of text data efficiently. Whether you are a data scientist, researcher, or developer, Tokf can help you gain valuable insights from your text data.

python-genai

The Google Gen AI SDK is a Python library that provides access to Google AI and Vertex AI services. It allows users to create clients for different services, work with parameter types, models, generate content, call functions, handle JSON response schemas, stream text and image content, perform async operations, count and compute tokens, embed content, generate and upscale images, edit images, work with files, create and get cached content, tune models, distill models, perform batch predictions, and more. The SDK supports various features like automatic function support, manual function declaration, JSON response schema support, streaming for text and image content, async methods, tuning job APIs, distillation, batch prediction, and more.

gambit

Gambit is an open-source developer-first framework for building reliable LLM workflows. It helps compose small, typed 'decks' with clear inputs/outputs and guardrails. Users can run decks locally, stream traces, and debug with a built-in UI. The framework aims to improve orchestration by treating each step as a small deck, mixing LLM and compute tasks effortlessly, feeding models only necessary information, and providing built-in observability for debugging.

model.nvim

model.nvim is a tool designed for Neovim users who want to utilize AI models for completions or chat within their text editor. It allows users to build prompts programmatically with Lua, customize prompts, experiment with multiple providers, and use both hosted and local models. The tool supports features like provider agnosticism, programmatic prompts in Lua, async and multistep prompts, streaming completions, and chat functionality in 'mchat' filetype buffer. Users can customize prompts, manage responses, and context, and utilize various providers like OpenAI ChatGPT, Google PaLM, llama.cpp, ollama, and more. The tool also supports treesitter highlights and folds for chat buffers.

sonarqube-mcp-server

The SonarQube MCP Server is a Model Context Protocol (MCP) server that enables seamless integration with SonarQube Server or Cloud for code quality and security. It supports the analysis of code snippets directly within the agent context. The server provides various tools for analyzing code, managing issues, accessing metrics, and interacting with SonarQube projects. It also supports advanced features like dependency risk analysis, enterprise portfolio management, and system health checks. The server can be configured for different transport modes, proxy settings, and custom certificates. Telemetry data collection can be disabled if needed.

generative-ai-python

The Google AI Python SDK is the easiest way for Python developers to build with the Gemini API. The Gemini API gives you access to Gemini models created by Google DeepMind. Gemini models are built from the ground up to be multimodal, so you can reason seamlessly across text, images, and code.

instructor

Instructor is a popular Python library for managing structured outputs from large language models (LLMs). It offers a user-friendly API for validation, retries, and streaming responses. With support for various LLM providers and multiple languages, Instructor simplifies working with LLM outputs. The library includes features like response models, retry management, validation, streaming support, and flexible backends. It also provides hooks for logging and monitoring LLM interactions, and supports integration with Anthropic, Cohere, Gemini, Litellm, and Google AI models. Instructor facilitates tasks such as extracting user data from natural language, creating fine-tuned models, managing uploaded files, and monitoring usage of OpenAI models.

flapi

flAPI is a powerful service that automatically generates read-only APIs for datasets by utilizing SQL templates. Built on top of DuckDB, it offers features like automatic API generation, support for Model Context Protocol (MCP), connecting to multiple data sources, caching, security implementation, and easy deployment. The tool allows users to create APIs without coding and enables the creation of AI tools alongside REST endpoints using SQL templates. It supports unified configuration for REST endpoints and MCP tools/resources, concurrent servers for REST API and MCP server, and automatic tool discovery. The tool also provides DuckLake-backed caching for modern, snapshot-based caching with features like full refresh, incremental sync, retention, compaction, and audit logs.

Wandb.jl

Unofficial Julia Bindings for wandb.ai. Wandb is a platform for tracking and visualizing machine learning experiments. It provides a simple and consistent way to log metrics, parameters, and other data from your experiments, and to visualize them in a variety of ways. Wandb.jl provides a convenient way to use Wandb from Julia.

aiocsv

aiocsv is a Python module that provides asynchronous CSV reading and writing. It is designed to be a drop-in replacement for the Python's builtin csv module, but with the added benefit of being able to read and write CSV files asynchronously. This makes it ideal for use in applications that need to process large CSV files efficiently.

cursive-py

Cursive is a universal and intuitive framework for interacting with LLMs. It is extensible, allowing users to hook into any part of a completion life cycle. Users can easily describe functions that LLMs can use with any supported model. Cursive aims to bridge capabilities between different models, providing a single interface for users to choose any model. It comes with built-in token usage and costs calculations, automatic retry, and model expanding features. Users can define and describe functions, generate Pydantic BaseModels, hook into completion life cycle, create embeddings, and configure retry and model expanding behavior. Cursive supports various models from OpenAI, Anthropic, OpenRouter, Cohere, and Replicate, with options to pass API keys for authentication.

For similar tasks

mediasoup-client-aiortc

mediasoup-client-aiortc is a handler for the aiortc Python library, allowing Node.js applications to connect to a mediasoup server using WebRTC for real-time audio, video, and DataChannel communication. It facilitates the creation of Worker instances to manage Python subprocesses, obtain audio/video tracks, and create mediasoup-client handlers. The tool supports features like getUserMedia, handlerFactory creation, and event handling for subprocess closure and unexpected termination. It provides custom classes for media stream and track constraints, enabling diverse audio/video sources like devices, files, or URLs. The tool enhances WebRTC capabilities in Node.js applications through seamless Python subprocess communication.

For similar jobs

resonance

Resonance is a framework designed to facilitate interoperability and messaging between services in your infrastructure and beyond. It provides AI capabilities and takes full advantage of asynchronous PHP, built on top of Swoole. With Resonance, you can: * Chat with Open-Source LLMs: Create prompt controllers to directly answer user's prompts. LLM takes care of determining user's intention, so you can focus on taking appropriate action. * Asynchronous Where it Matters: Respond asynchronously to incoming RPC or WebSocket messages (or both combined) with little overhead. You can set up all the asynchronous features using attributes. No elaborate configuration is needed. * Simple Things Remain Simple: Writing HTTP controllers is similar to how it's done in the synchronous code. Controllers have new exciting features that take advantage of the asynchronous environment. * Consistency is Key: You can keep the same approach to writing software no matter the size of your project. There are no growing central configuration files or service dependencies registries. Every relation between code modules is local to those modules. * Promises in PHP: Resonance provides a partial implementation of Promise/A+ spec to handle various asynchronous tasks. * GraphQL Out of the Box: You can build elaborate GraphQL schemas by using just the PHP attributes. Resonance takes care of reusing SQL queries and optimizing the resources' usage. All fields can be resolved asynchronously.

aiogram_bot_template

Aiogram bot template is a boilerplate for creating Telegram bots using Aiogram framework. It provides a solid foundation for building robust and scalable bots with a focus on code organization, database integration, and localization.

pinecone-ts-client

The official Node.js client for Pinecone, written in TypeScript. This client library provides a high-level interface for interacting with the Pinecone vector database service. With this client, you can create and manage indexes, upsert and query vector data, and perform other operations related to vector search and retrieval. The client is designed to be easy to use and provides a consistent and idiomatic experience for Node.js developers. It supports all the features and functionality of the Pinecone API, making it a comprehensive solution for building vector-powered applications in Node.js.

ai-chatbot

Next.js AI Chatbot is an open-source app template for building AI chatbots using Next.js, Vercel AI SDK, OpenAI, and Vercel KV. It includes features like Next.js App Router, React Server Components, Vercel AI SDK for streaming chat UI, support for various AI models, Tailwind CSS styling, Radix UI for headless components, chat history management, rate limiting, session storage with Vercel KV, and authentication with NextAuth.js. The template allows easy deployment to Vercel and customization of AI model providers.

freeciv-web

Freeciv-web is an open-source turn-based strategy game that can be played in any HTML5 capable web-browser. It features in-depth gameplay, a wide variety of game modes and options. Players aim to build cities, collect resources, organize their government, and build an army to create the best civilization. The game offers both multiplayer and single-player modes, with a 2D version with isometric graphics and a 3D WebGL version available. The project consists of components like Freeciv-web, Freeciv C server, Freeciv-proxy, Publite2, and pbem for play-by-email support. Developers interested in contributing can check the GitHub issues and TODO file for tasks to work on.

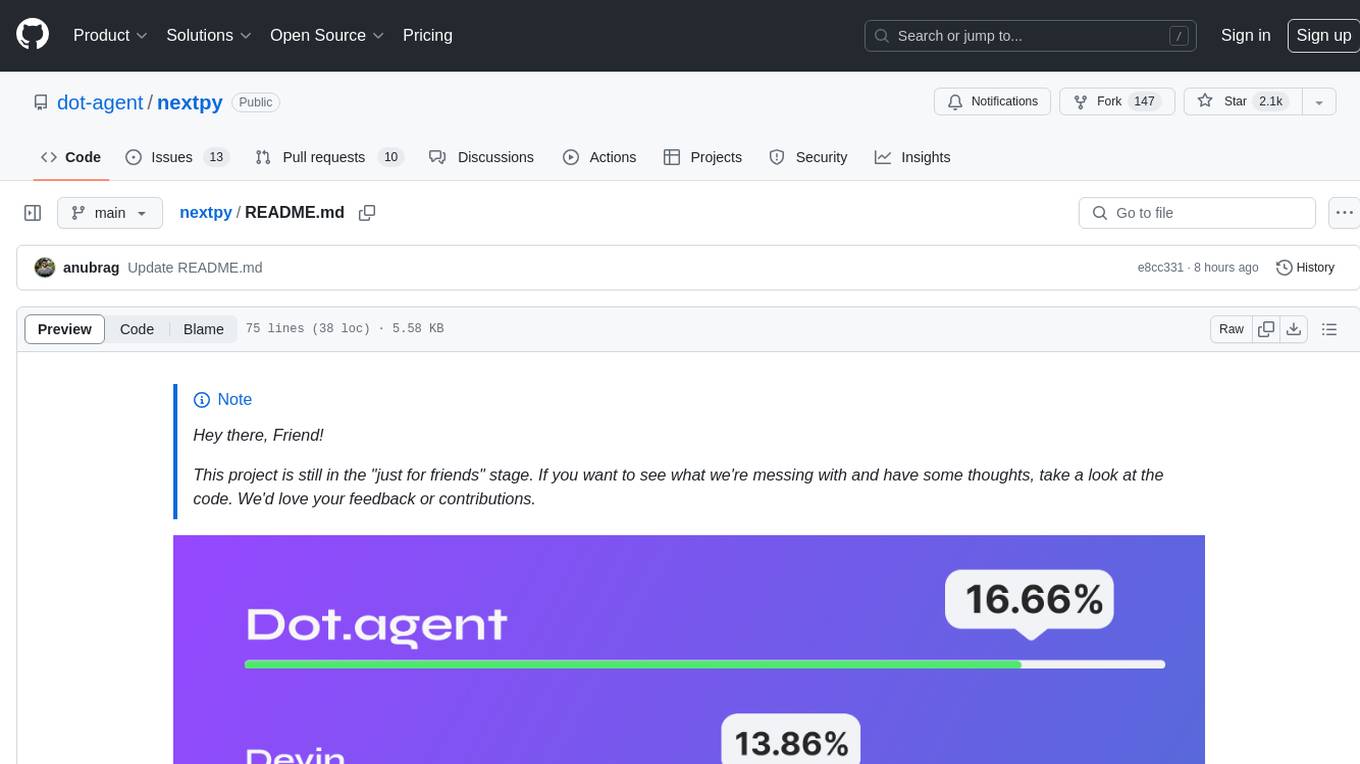

nextpy

Nextpy is a cutting-edge software development framework optimized for AI-based code generation. It provides guardrails for defining AI system boundaries, structured outputs for prompt engineering, a powerful prompt engine for efficient processing, better AI generations with precise output control, modularity for multiplatform and extensible usage, developer-first approach for transferable knowledge, and containerized & scalable deployment options. It offers 4-10x faster performance compared to Streamlit apps, with a focus on cooperation within the open-source community and integration of key components from various projects.

airbadge

Airbadge is a Stripe addon for Auth.js that provides an easy way to create a SaaS site without writing any authentication or payment code. It integrates Stripe Checkout into the signup flow, offers over 50 OAuth options for authentication, allows route and UI restriction based on subscription, enables self-service account management, handles all Stripe webhooks, supports trials and free plans, includes subscription and plan data in the session, and is open source with a BSL license. The project also provides components for conditional UI display based on subscription status and helper functions to restrict route access. Additionally, it offers a billing endpoint with various routes for billing operations. Setup involves installing @airbadge/sveltekit, setting up a database provider for Auth.js, adding environment variables, configuring authentication and billing options, and forwarding Stripe events to localhost.

ChaKt-KMP

ChaKt is a multiplatform app built using Kotlin and Compose Multiplatform to demonstrate the use of Generative AI SDK for Kotlin Multiplatform to generate content using Google's Generative AI models. It features a simple chat based user interface and experience to interact with AI. The app supports mobile, desktop, and web platforms, and is built with Kotlin Multiplatform, Kotlin Coroutines, Compose Multiplatform, Generative AI SDK, Calf - File picker, and BuildKonfig. Users can contribute to the project by following the guidelines in CONTRIBUTING.md. The app is licensed under the MIT License.