ps-fuzz

Make your GenAI Apps Safe & Secure :rocket: Test & harden your system prompt

Stars: 631

The Prompt Fuzzer is an open-source tool that helps you assess the security of your GenAI application's system prompt against various dynamic LLM-based attacks. It provides a security evaluation based on the outcome of these attack simulations, enabling you to strengthen your system prompt as needed. The Prompt Fuzzer dynamically tailors its tests to your application's unique configuration and domain. The Fuzzer also includes a Playground chat interface, giving you the chance to iteratively improve your system prompt, hardening it against a wide spectrum of generative AI attacks.

README:

- ✨ About

- 🚨 Features

- 🚀 Installation

  - Using pip

  - Package page

  - 🚧 Using Docker (coming soon)

- Usage

- Examples

- 🎬 Demo video

- Supported attacks

- 🌈 What’s next on the roadmap?

- 🍻 Contributing

- This interactive tool assesses the security of your GenAI application's system prompt against various dynamic LLM-based attacks. It provides a security evaluation based on the outcome of these attack simulations, enabling you to strengthen your system prompt as needed.

- The Prompt Fuzzer dynamically tailors its tests to your application's unique configuration and domain.

- The Fuzzer also includes a Playground chat interface, giving you the chance to iteratively improve your system prompt, hardening it against a wide spectrum of generative AI attacks.

pip install prompt-security-fuzzer

You can also visit the package page on PyPI.

Or grab the latest release wheel file from the releases page.

- Launch the Fuzzer

  export OPENAI_API_KEY=sk-123XXXXXXXXXXXX

  prompt-security-fuzzer

- Input your system prompt

- Start testing

- Test yourself with the Playground! Iterate as many times as you like until your system prompt is secure.

The Prompt Fuzzer Supports:

🧞 16 LLM providers

🔫 16 different attacks

💬 Interactive mode

🤖 CLI mode

🧵 Multi-threaded testing

You need to set an environment variable to hold the access key of your preferred LLM provider. The default is OPENAI_API_KEY.

Example: set OPENAI_API_KEY with your API Token to use with your OpenAI account.

Alternatively, create a file named .env in the current directory and set the OPENAI_API_KEY there.
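To illustrate the two options above, here is a minimal Python sketch that looks for the key in the environment first and falls back to a .env file in the current directory. The lookup order and the helper names `parse_env_file` and `load_api_key` are assumptions made for illustration; they are not taken from the Fuzzer's own code.

```python
import os
from pathlib import Path


def parse_env_file(path):
    """Parse simple KEY=VALUE lines, skipping blank lines and # comments."""
    values = {}
    for raw in Path(path).read_text().splitlines():
        line = raw.strip()
        if not line or line.startswith("#") or "=" not in line:
            continue
        key, _, value = line.partition("=")
        # Drop optional surrounding quotes around the value.
        values[key.strip()] = value.strip().strip('"').strip("'")
    return values


def load_api_key(name="OPENAI_API_KEY", env_file=".env", environ=None):
    """Return the key from the environment, else from the .env file, else None."""
    environ = os.environ if environ is None else environ
    if environ.get(name):
        return environ[name]
    if Path(env_file).is_file():
        return parse_env_file(env_file).get(name)
    return None


if __name__ == "__main__":
    key = load_api_key()
    print("key found" if key else "OPENAI_API_KEY is not set")
```

A check like this can be handy in a wrapper script, so a missing key is reported before any test run starts.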

We're fully LLM agnostic. The full configuration list of LLM providers:

| ENVIRONMENT KEY | Description |
|---|---|
| ANTHROPIC_API_KEY | Anthropic Chat large language models. |
| ANYSCALE_API_KEY | Anyscale Chat large language models. |
| AZURE_OPENAI_API_KEY | Azure OpenAI Chat Completion API. |
| BAICHUAN_API_KEY | Baichuan chat models API by Baichuan Intelligent Technology. |
| COHERE_API_KEY | Cohere chat large language models. |
| EVERLYAI_API_KEY | EverlyAI Chat large language models. |
| FIREWORKS_API_KEY | Fireworks Chat models. |
| GIGACHAT_CREDENTIALS | GigaChat large language models API. |
| GOOGLE_API_KEY | Google PaLM Chat models API. |
| JINA_API_TOKEN | Jina AI Chat models API. |
| KONKO_API_KEY | ChatKonko Chat large language models API. |
| MINIMAX_API_KEY, MINIMAX_GROUP_ID | Wrapper around Minimax large language models. |
| OPENAI_API_KEY | OpenAI Chat large language models API. |
| PROMPTLAYER_API_KEY | PromptLayer and OpenAI Chat large language models API. |
| QIANFAN_AK, QIANFAN_SK | Baidu Qianfan chat models. |
| YC_API_KEY | YandexGPT large language models. |

- `--list-providers`: Lists all available providers

- `--list-attacks`: Lists available attacks and exits

- `--attack-provider`: Attack provider

- `--attack-model`: Attack model

- `--target-provider`: Target provider

- `--target-model`: Target model

- `--num-attempts, -n NUM_ATTEMPTS`: Number of different attack prompts

- `--num-threads, -t NUM_THREADS`: Number of worker threads

- `--attack-temperature, -a ATTACK_TEMPERATURE`: Temperature for attack model

- `--debug-level, -d DEBUG_LEVEL`: Debug level (0-2)

- `--batch, -b`: Run the fuzzer in unattended (batch) mode, bypassing the interactive steps

- `--ollama-base-url`: Base URL for Ollama API (for self-hosted deployments)

- `--openai-base-url`: Base URL for OpenAI API (for OpenAI-compatible endpoints)

- `--embedding-provider`: Embedding provider (ollama or open_ai), required for RAG tests

- `--embedding-model`: Embedding model name, required for RAG tests

- `--embedding-ollama-base-url`: Base URL for Ollama Embedding API

- `--embedding-openai-base-url`: Base URL for OpenAI Embedding API

System prompt examples (of various strengths) can be found in the subdirectory system_prompt.examples in the sources.

Run tests against the system prompt:

prompt-security-fuzzer

Run tests against the system prompt (in non-interactive batch mode):

prompt-security-fuzzer -b ./system_prompt.examples/medium_system_prompt.txt

Run tests against the system prompt with a custom benchmark:

prompt-security-fuzzer -b ./system_prompt.examples/medium_system_prompt.txt --custom-benchmark=ps_fuzz/attack_data/custom_benchmark1.csv

Run tests against the system prompt with a subset of attacks:

prompt-security-fuzzer -b ./system_prompt.examples/medium_system_prompt.txt --custom-benchmark=ps_fuzz/attack_data/custom_benchmark1.csv --tests='["ucar","amnesia"]'
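The `--tests` value in the command above is a JSON list that has to be quoted for the shell. When the command is assembled from a script, quoting mistakes are easy to make, so here is a small Python sketch that builds the same invocation. `build_fuzzer_command` is a hypothetical helper, and it only uses flags that appear in this README.

```python
import json
import shlex


def build_fuzzer_command(prompt_file, tests=None, custom_benchmark=None):
    """Return the batch-mode command as an argument list (hypothetical helper)."""
    args = ["prompt-security-fuzzer", "-b", prompt_file]
    if custom_benchmark:
        args.append(f"--custom-benchmark={custom_benchmark}")
    if tests:
        # Compact separators give ["ucar","amnesia"] with no spaces,
        # matching the form used in the README example.
        args.append("--tests=" + json.dumps(list(tests), separators=(",", ":")))
    return args


if __name__ == "__main__":
    cmd = build_fuzzer_command(
        "./system_prompt.examples/medium_system_prompt.txt",
        tests=["ucar", "amnesia"],
        custom_benchmark="ps_fuzz/attack_data/custom_benchmark1.csv",
    )
    # shlex.join quotes each argument so the line can be pasted into a POSIX shell.
    print(shlex.join(cmd))
```

Passing the list form directly to `subprocess.run(cmd)` avoids shell quoting altogether; `shlex.join` is only needed when the command is displayed or logged.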

Test RAG systems with vector database poisoning attacks:

# Using OpenAI embeddings
prompt-security-fuzzer -b ./system_prompt.examples/medium_system_prompt.txt \
  --embedding-provider=open_ai \
  --embedding-model=text-embedding-ada-002 \
  --tests='["rag_poisoning"]'

# Using Ollama embeddings with custom endpoint
prompt-security-fuzzer -b ./system_prompt.examples/medium_system_prompt.txt \
  --embedding-provider=ollama \
  --embedding-model=nomic-embed-text \
  --embedding-ollama-base-url=http://localhost:11434 \
  --tests='["rag_poisoning"]'

Note: Requires chromadb (installed by default with prompt-security-fuzzer).

Run tests against custom or self-hosted LLM deployments:

# Using custom Ollama endpoint
prompt-security-fuzzer -b ./system_prompt.examples/medium_system_prompt.txt \
  --target-provider=ollama \
  --target-model=llama2 \
  --ollama-base-url=http://localhost:11434

# Using OpenAI-compatible endpoint (e.g., LocalAI, vLLM, LM Studio)
prompt-security-fuzzer -b ./system_prompt.examples/medium_system_prompt.txt \
  --target-provider=open_ai \
  --target-model=custom-model \
  --openai-base-url=http://your-custom-endpoint:8000/v1

Refine and harden your system prompt in our Google Colab Notebook.

We use a dynamic testing approach: we extract the necessary context from your system prompt and adapt the fuzzing process to it.

- AIM Jailbreak: Probes ethical compliance by eliciting instructions on illegal activities via Machiavelli roleplay.

- Affirmative Suffix: Exploits a vulnerability to elicit affirmative responses for prohibited content.

- Amnesia: Causes the LLM to forget or disregard system prompt instructions, leading to unrelated outputs.

- Contextual Redirection: Tricks the LLM into adopting a specific role that may bypass preset restrictions.

- Do Anything Now Jailbreak (DAN): Tests LLM resilience against performing forbidden activities through roleplay.

- Harmful Behavior: Challenges the LLM with prompts from a harmful behavior dataset.

- Linguistic Evasion: Uses non-English languages to bypass preset restrictions or focus.

- Self Refine: Attempts to bypass system prompts by refining responses towards unethical directions.

- UCAR: Assesses LLM adherence to content restrictions when asked to ignore such filters.

- Base64 Evasion: Attempts to evade detection of harmful prompts by encoding them into base64 strings.

- Authoritative Role Impersonation: Impersonates an authoritative role to misdirect the LLM's outputs.

- Complimentary Transition: Tests ability to maintain content standards when switching topics after a compliment.

- Ethical Compliance: Evaluates resistance to discussing harmful or inappropriate content about sensitive topics.

- Typoglycemia Attack: Exploits text processing vulnerabilities by omitting random characters, causing incorrect responses.

- RAG Poisoning (Hidden Parrot Attack): Tests whether malicious instructions embedded in vector database documents can compromise RAG system behavior. This attack verifies if poisoned content retrieved from vector stores can override system prompts or inject unauthorized instructions into LLM responses.

- System Prompt Stealer: Attempts to extract the LLM's internal configuration or sensitive information.
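To make two of the text transformations named in the list above concrete, here is a minimal Python sketch of base64-encoding a prompt (Base64 Evasion) and of dropping random characters from words (Typoglycemia). It only shows the transformations on a benign string and is not the Fuzzer's attack implementation; `to_base64`, `drop_random_chars`, and the `rate` and `seed` parameters are hypothetical.

```python
import base64
import random


def to_base64(text):
    """Encode a prompt as a base64 string."""
    return base64.b64encode(text.encode("utf-8")).decode("ascii")


def drop_random_chars(text, rate=0.2, seed=0):
    """Drop inner characters of longer words with probability `rate`.

    The first and last character of each word are kept so the text
    stays roughly readable. A fixed seed makes the output repeatable.
    """
    rng = random.Random(seed)
    words = []
    for word in text.split(" "):
        if len(word) > 3:
            inner = "".join(c for c in word[1:-1] if rng.random() >= rate)
            word = word[0] + inner + word[-1]
        words.append(word)
    return " ".join(words)


if __name__ == "__main__":
    prompt = "please summarize this document"
    print(to_base64(prompt))
    print(drop_random_chars(prompt))
```

Seeing the transformed text side by side with the original helps when deciding whether a system prompt should tell the model how to treat encoded or misspelled input.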

- Broken: Attack attempts that the LLM succumbed to.

- Resilient: Attack attempts that the LLM resisted.

- Errors: Attack attempts that had inconclusive results.
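The three result categories can be read as a per-attack tally. Here is a minimal Python sketch, assuming each attempt is recorded as an (attack name, outcome) pair; the record format, the outcome labels, and the `summarize` helper are hypothetical and are not taken from the Fuzzer's code or report format.

```python
from collections import Counter

# Assumed outcome labels, mirroring the Broken / Resilient / Errors categories.
OUTCOMES = ("broken", "resilient", "error")


def summarize(attempts):
    """Count outcomes per attack from (attack_name, outcome) pairs."""
    table = {}
    for attack, outcome in attempts:
        if outcome not in OUTCOMES:
            raise ValueError(f"unknown outcome: {outcome!r}")
        table.setdefault(attack, Counter())[outcome] += 1
    # Fill in zeros so every attack reports all three categories.
    return {
        attack: {o: counts[o] for o in OUTCOMES}
        for attack, counts in table.items()
    }


if __name__ == "__main__":
    sample = [
        ("amnesia", "broken"),
        ("amnesia", "resilient"),
        ("amnesia", "resilient"),
        ("ucar", "error"),
    ]
    for attack, counts in summarize(sample).items():
        print(attack, counts)
```

A non-zero count in the broken column for an attack is the signal to revisit the system prompt and rerun that test.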

- [X] Google Colab Notebook

- [X] Adjust the output evaluation mechanism for prompt dataset testing

- [ ] Continue adding new GenAI attack types

- [ ] Enhanced reporting capabilities

- [ ] Hardening recommendations

Turn this into a community project! We want this to be useful to everyone building GenAI applications. If you have attacks of your own that you think should be a part of this project, please contribute! This is how: https://github.com/prompt-security/ps-fuzz/blob/main/CONTRIBUTING.md

Interested in contributing to the development of our tools? Great! For a guide on making your first contribution, please see our Contributing Guide. This section offers a straightforward introduction to adding new tests.

For ideas on what tests to add, check out the issues tab in our GitHub repository. Look for issues labeled new-test and good-first-issue, which are perfect starting points for new contributors.

For Tasks:

Click tags to check more tools for each tasksFor Jobs:

Alternative AI tools for ps-fuzz

Similar Open Source Tools

ps-fuzz

The Prompt Fuzzer is an open-source tool that helps you assess the security of your GenAI application's system prompt against various dynamic LLM-based attacks. It provides a security evaluation based on the outcome of these attack simulations, enabling you to strengthen your system prompt as needed. The Prompt Fuzzer dynamically tailors its tests to your application's unique configuration and domain. The Fuzzer also includes a Playground chat interface, giving you the chance to iteratively improve your system prompt, hardening it against a wide spectrum of generative AI attacks.

metis

Metis is an open-source, AI-driven tool for deep security code review, created by Arm's Product Security Team. It helps engineers detect subtle vulnerabilities, improve secure coding practices, and reduce review fatigue. Metis uses LLMs for semantic understanding and reasoning, RAG for context-aware reviews, and supports multiple languages and vector store backends. It provides a plugin-friendly and extensible architecture, named after the Greek goddess of wisdom, Metis. The tool is designed for large, complex, or legacy codebases where traditional tooling falls short.

pentagi

PentAGI is an innovative tool for automated security testing that leverages cutting-edge artificial intelligence technologies. It is designed for information security professionals, researchers, and enthusiasts who need a powerful and flexible solution for conducting penetration tests. The tool provides secure and isolated operations in a sandboxed Docker environment, fully autonomous AI-powered agent for penetration testing steps, a suite of 20+ professional security tools, smart memory system for storing research results, web intelligence for gathering information, integration with external search systems, team delegation system, comprehensive monitoring and reporting, modern interface, API integration, persistent storage, scalable architecture, self-hosted solution, flexible authentication, and quick deployment through Docker Compose.

TTP-Threat-Feeds

TTP-Threat-Feeds is a script-powered threat feed generator that automates the discovery and parsing of threat actor behavior from security research. It scrapes URLs from trusted sources, extracts observable adversary behaviors, and outputs structured YAML files to help detection engineers and threat researchers derive detection opportunities and correlation logic. The tool supports multiple LLM providers for text extraction and includes OCR functionality for extracting content from images. Users can configure URLs, run the extractor, and save results as YAML files. Cloud provider SDKs are optional. Contributions are welcome for improvements and enhancements to the tool.

LEANN

LEANN is an innovative vector database that democratizes personal AI, transforming your laptop into a powerful RAG system that can index and search through millions of documents using 97% less storage than traditional solutions without accuracy loss. It achieves this through graph-based selective recomputation and high-degree preserving pruning, computing embeddings on-demand instead of storing them all. LEANN allows semantic search of file system, emails, browser history, chat history, codebase, or external knowledge bases on your laptop with zero cloud costs and complete privacy. It is a drop-in semantic search MCP service fully compatible with Claude Code, enabling intelligent retrieval without changing your workflow.

UCAgent

UCAgent is an AI-powered automated UT verification agent for chip design. It automates chip verification workflow, supports functional and code coverage analysis, ensures consistency among documentation, code, and reports, and collaborates with mainstream Code Agents via MCP protocol. It offers three intelligent interaction modes and requires Python 3.11+, Linux/macOS OS, 4GB+ memory, and access to an AI model API. Users can clone the repository, install dependencies, configure qwen, and start verification. UCAgent supports various verification quality improvement options and basic operations through TUI shortcuts and stage color indicators. It also provides documentation build and preview using MkDocs, PDF manual build using Pandoc + XeLaTeX, and resources for further help and contribution.

nosia

Nosia is a self-hosted AI RAG + MCP platform that allows users to run AI models on their own data with complete privacy and control. It integrates the Model Context Protocol (MCP) to connect AI models with external tools, services, and data sources. The platform is designed to be easy to install and use, providing OpenAI-compatible APIs that work seamlessly with existing AI applications. Users can augment AI responses with their documents, perform real-time streaming, support multi-format data, enable semantic search, and achieve easy deployment with Docker Compose. Nosia also offers multi-tenancy for secure data separation.

uLoopMCP

uLoopMCP is a Unity integration tool designed to let AI drive your Unity project forward with minimal human intervention. It provides a 'self-hosted development loop' where an AI can compile, run tests, inspect logs, and fix issues using tools like compile, run-tests, get-logs, and clear-console. It also allows AI to operate the Unity Editor itself—creating objects, calling menu items, inspecting scenes, and refining UI layouts from screenshots via tools like execute-dynamic-code, execute-menu-item, and capture-window. The tool enables AI-driven development loops to run autonomously inside existing Unity projects.

forge

Forge is a powerful open-source tool for building modern web applications. It provides a simple and intuitive interface for developers to quickly scaffold and deploy projects. With Forge, you can easily create custom components, manage dependencies, and streamline your development workflow. Whether you are a beginner or an experienced developer, Forge offers a flexible and efficient solution for your web development needs.

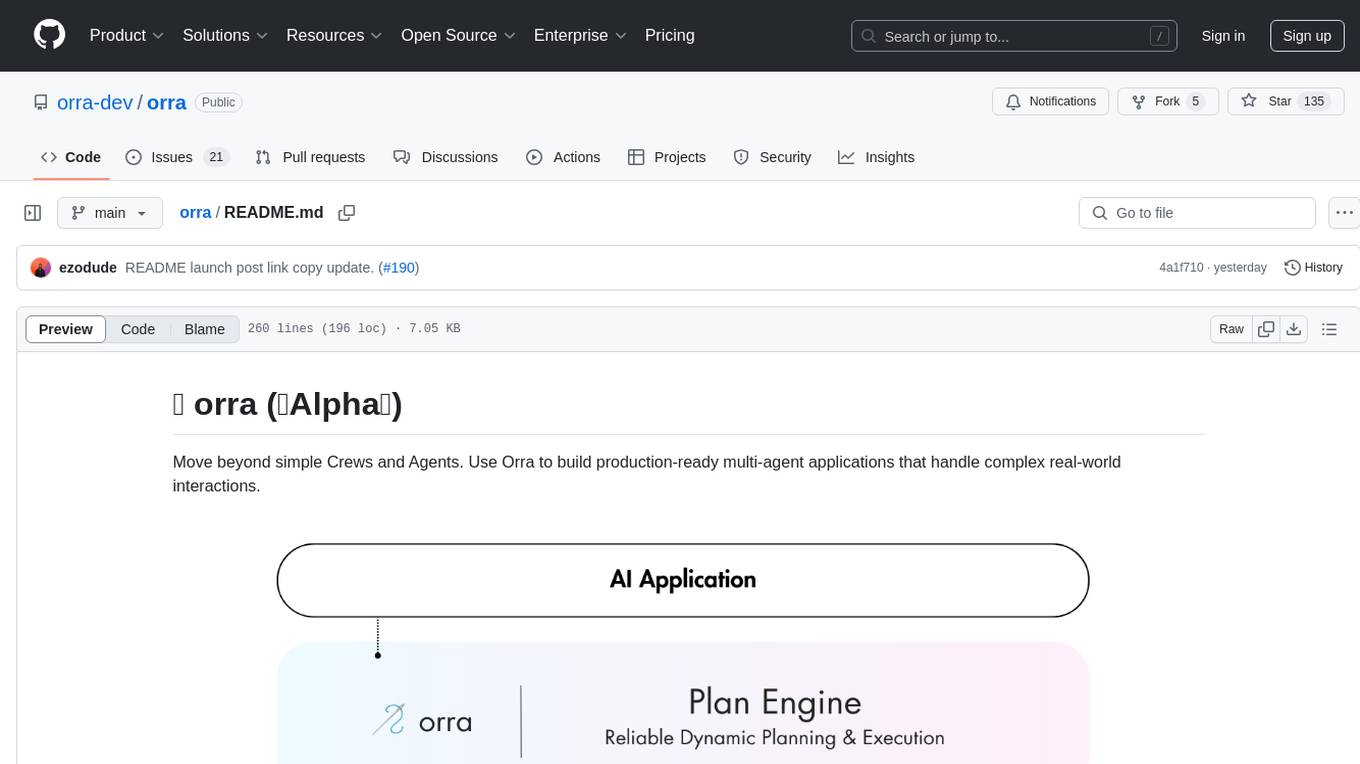

orra

Orra is a tool for building production-ready multi-agent applications that handle complex real-world interactions. It coordinates tasks across existing stack, agents, and tools run as services using intelligent reasoning. With features like smart pre-evaluated execution plans, domain grounding, durable execution, and automatic service health monitoring, Orra enables users to go fast with tools as services and revert state to handle failures. It provides real-time status tracking and webhook result delivery, making it ideal for developers looking to move beyond simple crews and agents.

graphiti

Graphiti is a framework for building and querying temporally-aware knowledge graphs, tailored for AI agents in dynamic environments. It continuously integrates user interactions, structured and unstructured data, and external information into a coherent, queryable graph. The framework supports incremental data updates, efficient retrieval, and precise historical queries without complete graph recomputation, making it suitable for developing interactive, context-aware AI applications.

Unity-MCP

Unity-MCP is an AI helper designed for game developers using Unity. It facilitates a wide range of tasks in Unity Editor and running games on any platform by connecting to AI via TCP connection. The tool allows users to chat with AI like with a human, supports local and remote usage, and offers various default AI tools. Users can provide detailed information for classes, fields, properties, and methods using the 'Description' attribute in C# code. Unity-MCP enables instant C# code compilation and execution, provides access to assets and C# scripts, and offers tools for proper issue understanding and project data manipulation. It also allows users to find and call methods in the codebase, work with Unity API, and access human-readable descriptions of code elements.

claude-code-telegram

Claude Code Telegram Bot is a Telegram bot that connects to Claude Code, offering a conversational AI interface for codebases. Users can chat naturally with Claude to analyze, edit, or explain code, maintain context across conversations, code on the go, receive proactive notifications, and stay secure with authentication and audit logging. The bot supports two interaction modes: Agentic Mode for natural language interaction and Classic Mode for a terminal-like interface. It features event-driven automation, working features like directory sandboxing and git integration, and planned enhancements like a plugin system. Security measures include access control, directory isolation, rate limiting, input validation, and webhook authentication.

Shellsage

Shell Sage is an intelligent terminal companion and AI-powered terminal assistant that enhances the terminal experience with features like local and cloud AI support, context-aware error diagnosis, natural language to command translation, and safe command execution workflows. It offers interactive workflows, supports various API providers, and allows for custom model selection. Users can configure the tool for local or API mode, select specific models, and switch between modes easily. Currently in alpha development, Shell Sage has known limitations like limited Windows support and occasional false positives in error detection. The roadmap includes improvements like better context awareness, Windows PowerShell integration, Tmux integration, and CI/CD error pattern database.

fast-mcp

Fast MCP is a Ruby gem that simplifies the integration of AI models with your Ruby applications. It provides a clean implementation of the Model Context Protocol, eliminating complex communication protocols, integration challenges, and compatibility issues. With Fast MCP, you can easily connect AI models to your servers, share data resources, choose from multiple transports, integrate with frameworks like Rails and Sinatra, and secure your AI-powered endpoints. The gem also offers real-time updates and authentication support, making AI integration a seamless experience for developers.

Groqqle

Groqqle 2.1 is a revolutionary, free AI web search and API that instantly returns ORIGINAL content derived from source articles, websites, videos, and even foreign language sources, for ANY target market of ANY reading comprehension level! It combines the power of large language models with advanced web and news search capabilities, offering a user-friendly web interface, a robust API, and now a powerful Groqqle_web_tool for seamless integration into your projects. Developers can instantly incorporate Groqqle into their applications, providing a powerful tool for content generation, research, and analysis across various domains and languages.

For similar tasks

ps-fuzz

The Prompt Fuzzer is an open-source tool that helps you assess the security of your GenAI application's system prompt against various dynamic LLM-based attacks. It provides a security evaluation based on the outcome of these attack simulations, enabling you to strengthen your system prompt as needed. The Prompt Fuzzer dynamically tailors its tests to your application's unique configuration and domain. The Fuzzer also includes a Playground chat interface, giving you the chance to iteratively improve your system prompt, hardening it against a wide spectrum of generative AI attacks.

For similar jobs

weave

Weave is a toolkit for developing Generative AI applications, built by Weights & Biases. With Weave, you can log and debug language model inputs, outputs, and traces; build rigorous, apples-to-apples evaluations for language model use cases; and organize all the information generated across the LLM workflow, from experimentation to evaluations to production. Weave aims to bring rigor, best-practices, and composability to the inherently experimental process of developing Generative AI software, without introducing cognitive overhead.

LLMStack

LLMStack is a no-code platform for building generative AI agents, workflows, and chatbots. It allows users to connect their own data, internal tools, and GPT-powered models without any coding experience. LLMStack can be deployed to the cloud or on-premise and can be accessed via HTTP API or triggered from Slack or Discord.

VisionCraft

The VisionCraft API is a free API for using over 100 different AI models. From images to sound.

kaito

Kaito is an operator that automates the AI/ML inference model deployment in a Kubernetes cluster. It manages large model files using container images, avoids tuning deployment parameters to fit GPU hardware by providing preset configurations, auto-provisions GPU nodes based on model requirements, and hosts large model images in the public Microsoft Container Registry (MCR) if the license allows. Using Kaito, the workflow of onboarding large AI inference models in Kubernetes is largely simplified.

PyRIT

PyRIT is an open access automation framework designed to empower security professionals and ML engineers to red team foundation models and their applications. It automates AI Red Teaming tasks to allow operators to focus on more complicated and time-consuming tasks and can also identify security harms such as misuse (e.g., malware generation, jailbreaking), and privacy harms (e.g., identity theft). The goal is to allow researchers to have a baseline of how well their model and entire inference pipeline is doing against different harm categories and to be able to compare that baseline to future iterations of their model. This allows them to have empirical data on how well their model is doing today, and detect any degradation of performance based on future improvements.

tabby

Tabby is a self-hosted AI coding assistant, offering an open-source and on-premises alternative to GitHub Copilot. It boasts several key features: * Self-contained, with no need for a DBMS or cloud service. * OpenAPI interface, easy to integrate with existing infrastructure (e.g Cloud IDE). * Supports consumer-grade GPUs.

spear

SPEAR (Simulator for Photorealistic Embodied AI Research) is a powerful tool for training embodied agents. It features 300 unique virtual indoor environments with 2,566 unique rooms and 17,234 unique objects that can be manipulated individually. Each environment is designed by a professional artist and features detailed geometry, photorealistic materials, and a unique floor plan and object layout. SPEAR is implemented as Unreal Engine assets and provides an OpenAI Gym interface for interacting with the environments via Python.

Magick

Magick is a groundbreaking visual AIDE (Artificial Intelligence Development Environment) for no-code data pipelines and multimodal agents. Magick can connect to other services and comes with nodes and templates well-suited for intelligent agents, chatbots, complex reasoning systems and realistic characters.