solon-ai

Java AI application development framework (supports LLM tools and skills, RAG, MCP, and ReAct and Team agents). Compatible with Java 8 through Java 25. It can also be embedded in SpringBoot, jFinal, Vert.x, Quarkus, and other frameworks.

Stars: 302

Solon-AI is a Java AI & MCP application development framework that supports various AI development capabilities. It is designed to be versatile, efficient, and open for integration with frameworks like SpringBoot, jFinal, and Vert.x. The framework provides examples of embedding solon-ai(& mcp) and showcases interfaces for chat models, function calling, vision, RAG (EmbeddingModel, Repository, DocumentLoader, RerankingModel), Ai Flow, MCP server, MCP client, and MCP Proxy. Solon-AI is part of the Solon project ecosystem, which includes other repositories for different functionalities.

README:

Java LLM (tool, skill) & RAG & MCP & Agent (ReAct, Team) application development framework

Restraint, efficiency and openness

It is the same type of development framework as LangChain, LangGraph and LlamaIndex

https://solon.noear.org/article/learn-solon-ai


Solon AI is one of the core subprojects of the Solon project. It is a full-scenario Java AI development framework that aims to deeply integrate large language models (LLMs), RAG knowledge bases, the MCP protocol, and agent collaboration orchestration.

- Full use case support: fits into the Solon ecosystem and can also be integrated into frameworks like SpringBoot, Vert.x, Quarkus, etc.

- Multi-model dialects: ChatModel's unified interface adapts to differences between models through per-provider dialects (OpenAI, Gemini, Claude, Ollama, DeepSeek, Dashscope, etc.).

- Graph-driven orchestration: supports the transformation of Agent reasoning into observable and governable computation flow graphs.
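To make the dialect idea from the list above more concrete, here is a minimal plain-Java sketch. All type names (`ChatDialect`, `OllamaStyleDialect`, `OpenAiStyleDialect`, `DialectRegistry`) are hypothetical and are not Solon AI classes; the sketch only assumes that providers differ in where requests are sent and where the reply text sits in the JSON response.

```java
import java.util.HashMap;
import java.util.Map;

// Hypothetical interface (not a Solon AI type): the parts that differ between providers.
interface ChatDialect {
    String endpointPath(); // where chat requests are sent
    String replyPath();    // where the reply text sits in the JSON response
}

class OllamaStyleDialect implements ChatDialect {
    public String endpointPath() { return "/api/chat"; }
    public String replyPath() { return "message.content"; }
}

class OpenAiStyleDialect implements ChatDialect {
    public String endpointPath() { return "/v1/chat/completions"; }
    public String replyPath() { return "choices[0].message.content"; }
}

// Hypothetical registry: callers pick a dialect by provider name only.
class DialectRegistry {
    private static final Map<String, ChatDialect> DIALECTS = new HashMap<>();
    static {
        DIALECTS.put("ollama", new OllamaStyleDialect());
        DIALECTS.put("openai", new OpenAiStyleDialect());
    }

    static ChatDialect forProvider(String provider) {
        ChatDialect dialect = DIALECTS.get(provider);
        if (dialect == null) {
            throw new IllegalArgumentException("Unknown provider: " + provider);
        }
        return dialect;
    }
}

class DialectDemo {
    public static void main(String[] args) {
        ChatDialect d = DialectRegistry.forProvider("ollama");
        System.out.println(d.endpointPath() + " -> " + d.replyPath());
    }
}
```

The calling code stays the same for every provider; only the registry lookup key changes, which is roughly the role the provider name appears to play when configuring a model.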

Examples of embedding solon-ai (including in third-party frameworks):

- https://gitee.com/solonlab/solon-ai-mcp-embedded-examples

- https://gitcode.com/solonlab/solon-ai-mcp-embedded-examples

- https://github.com/solonlab/solon-ai-mcp-embedded-examples

- General-purpose Autonomous Agents (e.g., Manus, OpenOperator)

- Intelligent Assistants & RAG Knowledge Bases (e.g., Dify, Coze)

- Multi-Agent Collaborative Orchestration (e.g., AutoGPT, MetaGPT)

- Business-Driven Controlled Workflows (e.g., AI-enhanced DingTalk/Lark approvals, SAP Intelligent Modules)

- Intelligent Document Processing & ETL (e.g., Instabase, Unstructured.io)

- Real-time Data Insights & Dashboards (e.g., Text-to-SQL applications)

- Automated Testing & Quality Assurance (e.g., GitHub Copilot Workspace)

- Low-Code/Visual AI Workflow Platforms (e.g., LangFlow, Flowise)

- And more...

- ChatModel (general-purpose LLM call interface)

Supports synchronous and reactive calls, with built-in dialect adaptation, Tool, Skill, ChatSession, and more.

ChatModel chatModel = ChatModel.of("http://127.0.0.1:11434/api/chat")
        .provider("ollama") // The provider must be specified; it identifies the API style (also called the dialect)
        .model("qwen2.5:1.5b")
        .defaultSkillAdd(new ToolGatewaySkill())
        .build();

// Synchronous call; print the response message
AssistantMessage result = chatModel.prompt("The weather in Hangzhou today?")
        .options(op -> op.toolAdd(new WeatherTools())) // Add tools
        .call()
        .getMessage();
System.out.println(result);

// Streaming call
chatModel.prompt("hello").stream(); // Publisher<ChatResponse>

- Skills (Solon AI Skills)

Skill skill = new SkillDesc("order_expert")
        .description("Order Assistant")
        // Dynamic admission: activated only when "order" is mentioned
        .isSupported(prompt -> prompt.getUserMessageContent().contains("order"))
        // Dynamic instructions: inject different SOPs depending on whether the user is a VIP
        .instruction(prompt -> {
            if ("VIP".equals(prompt.getMeta("user_level"))) {
                return "This is a VIP customer, please call fast_track_tool first.";
            }
            return "Process the order inquiry according to the normal process.";
        })
        .toolAdd(new OrderTools());

chatModel.prompt("Where is my order from yesterday?")
        .options(o -> o.skillAdd(skill))
        .call();

- RAG (knowledge base)

It provides end-to-end support across the RAG pipeline: DocumentLoader, DocumentSplitter, EmbeddingModel, and RerankingModel.
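Conceptually, the retrieval step of such a pipeline ranks stored documents by how similar their embedding vectors are to the query vector. The following plain-Java sketch illustrates that idea with cosine similarity. `Doc` and `TinyVectorStore` are hypothetical names introduced here and are not Solon AI's repository classes; the vectors are hand-written stand-ins for real embeddings and are assumed to be non-zero.

```java
import java.util.ArrayList;
import java.util.Comparator;
import java.util.List;

// Hypothetical document holder: text plus a precomputed embedding vector.
class Doc {
    final String text;
    final double[] vector;

    Doc(String text, double[] vector) {
        this.text = text;
        this.vector = vector;
    }
}

// Hypothetical in-memory store (not Solon AI's InMemoryRepository).
class TinyVectorStore {
    private final List<Doc> docs = new ArrayList<>();

    void insert(Doc doc) {
        docs.add(doc);
    }

    // Cosine similarity between two non-zero vectors of equal length.
    static double cosine(double[] a, double[] b) {
        double dot = 0, na = 0, nb = 0;
        for (int i = 0; i < a.length; i++) {
            dot += a[i] * b[i];
            na += a[i] * a[i];
            nb += b[i] * b[i];
        }
        return dot / (Math.sqrt(na) * Math.sqrt(nb));
    }

    // Return up to topK documents, most similar to the query vector first.
    List<Doc> search(double[] query, int topK) {
        List<Doc> sorted = new ArrayList<>(docs);
        sorted.sort(Comparator.comparingDouble((Doc d) -> -cosine(query, d.vector)));
        return new ArrayList<>(sorted.subList(0, Math.min(topK, sorted.size())));
    }
}

class RagSketch {
    public static void main(String[] args) {
        TinyVectorStore store = new TinyVectorStore();
        store.insert(new Doc("Solon is a Java framework", new double[]{1.0, 0.0}));
        store.insert(new Doc("Recipe for noodles", new double[]{0.0, 1.0}));
        List<Doc> hits = store.search(new double[]{0.9, 0.1}, 1);
        System.out.println(hits.get(0).text);
    }
}
```

A reranking step would then reorder these hits with a second, usually more expensive, relevance model before they are added to the prompt.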

// Build the knowledge repository
EmbeddingModel embeddingModel = EmbeddingModel.of(apiUrl).apiKey(apiKey).provider(provider).model(model).batchSize(10).build();
RerankingModel rerankingModel = RerankingModel.of(apiUrl).apiKey(apiKey).provider(provider).model(model).build();
InMemoryRepository repository = new InMemoryRepository(embeddingModel); // Initialize the knowledge base
repository.insert(new PdfLoader(pdfUri).load());

// Retrieval
List<Document> docs = repository.search(query);

// Optionally rerank the results
docs = rerankingModel.rerank(query, docs);

// Prompt augmentation
ChatMessage message = ChatMessage.ofUserAugment(query, docs);

// Call the LLM
chatModel.prompt(message)
        .call();

- MCP (Model Context Protocol)

Deep integration with MCP protocol (MCP_2025_06_18), supporting cross-platform tool, resource, and prompt sharing.
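For orientation, MCP messages are JSON-RPC 2.0, and a client invokes a server tool with a `tools/call` request. The sketch below builds such a request by hand in plain Java, without any MCP library. The class `McpRequestSketch` is hypothetical, and the tool name `getWeather` and argument name `location` are assumptions chosen to match a weather tool; how a given server actually names its tools and parameters may differ.

```java
import java.util.LinkedHashMap;
import java.util.Map;

class McpRequestSketch {
    // Minimal JSON string escaping (backslashes and double quotes only).
    static String quote(String s) {
        return "\"" + s.replace("\\", "\\\\").replace("\"", "\\\"") + "\"";
    }

    // Build a JSON-RPC 2.0 "tools/call" request with string-valued arguments.
    static String toolsCall(int id, String toolName, Map<String, String> arguments) {
        StringBuilder args = new StringBuilder("{");
        boolean first = true;
        for (Map.Entry<String, String> e : arguments.entrySet()) {
            if (!first) {
                args.append(",");
            }
            args.append(quote(e.getKey())).append(":").append(quote(e.getValue()));
            first = false;
        }
        args.append("}");
        return "{\"jsonrpc\":\"2.0\",\"id\":" + id
                + ",\"method\":\"tools/call\""
                + ",\"params\":{\"name\":" + quote(toolName)
                + ",\"arguments\":" + args + "}}";
    }

    public static void main(String[] args) {
        Map<String, String> arguments = new LinkedHashMap<>();
        arguments.put("location", "Hangzhou"); // assumed argument name
        System.out.println(toolsCall(1, "getWeather", arguments)); // assumed tool name
    }
}
```

In practice a client library handles this framing, along with initialization and transport; the sketch only shows the shape of the message on the wire.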

// Server
@McpServerEndpoint(channel = McpChannel.STREAMABLE, mcpEndpoint = "/mcp")
public class MyMcpServer {
    @ToolMapping(description = "Checking the weather")
    public String getWeather(@Param(description = "city") String location) {
        return "It's sunny, 25 degrees";
    }
}

// Client
McpClientProvider clientProvider = McpClientProvider.builder()
        .channel(McpChannel.STREAMABLE)
        .url("http://localhost:8080/mcp")
        .build();

- Agent (an agent experience based on computational flow graphs)

The Solon AI Agent transforms reasoning logic into graph-driven collaboration flows, enabling ReAct introspective reasoning and multi-agent Team collaboration.
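One way to picture reasoning as a graph is a small state machine whose nodes are the ReAct phases and whose visited nodes are recorded for inspection. The plain-Java sketch below is only an illustration under that assumption: `ReActGraphSketch` and its `Node` enum are hypothetical, the "model decision" is a hard-coded rule rather than an LLM call, and none of this reflects how Solon AI implements its agents internally.

```java
import java.util.ArrayList;
import java.util.List;
import java.util.function.Function;

class ReActGraphSketch {
    // Graph nodes; the edges between them are encoded in run() below.
    enum Node { THINK, CALL_TOOL, OBSERVE, SUMMARIZE, END }

    final List<Node> trace = new ArrayList<>(); // visited nodes, kept for observability
    String observation;                         // last tool result; null until a tool has run

    // tool is a hypothetical stand-in for a real tool call; maxSteps bounds the loop.
    String run(String question, Function<String, String> tool, int maxSteps) {
        Node current = Node.THINK;
        String answer = null;
        for (int step = 0; step < maxSteps && current != Node.END; step++) {
            trace.add(current);
            switch (current) {
                case THINK:
                    // Stand-in for the model's decision: call the tool once, then summarize.
                    current = (observation == null) ? Node.CALL_TOOL : Node.SUMMARIZE;
                    break;
                case CALL_TOOL:
                    observation = tool.apply(question);
                    current = Node.OBSERVE;
                    break;
                case OBSERVE:
                    current = Node.THINK;
                    break;
                case SUMMARIZE:
                    answer = "Based on: " + observation;
                    current = Node.END;
                    break;
                default:
                    current = Node.END;
            }
        }
        return answer; // null if maxSteps ran out before SUMMARIZE
    }

    public static void main(String[] args) {
        ReActGraphSketch agent = new ReActGraphSketch();
        String answer = agent.run("What to wear in Beijing today?", q -> "sunny, 25 degrees", 10);
        System.out.println(agent.trace + " => " + answer);
    }
}
```

Because every transition goes through one loop, the trace and the step limit give simple hooks for observing and governing the run.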

// Reflective (ReAct) agent:
ReActAgent agent = ReActAgent.of(chatModel) // or use SimpleAgent.of(chatModel)
        .name("weather_expert")
        .description("Check the weather and provide advice")
        .defaultToolAdd(weatherTool) // Inject MCP or local tools
        .build();

agent.prompt("What to wear in Beijing today?").call(); // Runs the loop automatically: Think -> Call tool -> Observe -> Summarize

// Build a team agent: member roles are arranged automatically through a protocol
TeamAgent team = TeamAgent.of(chatModel)
        .name("marketing_team")
        .protocol(TeamProtocols.HIERARCHICAL) // Hierarchical collaboration (6 preset protocols)
        .agentAdd(copywriterAgent) // Copywriter expert
        .agentAdd(illustratorAgent) // Illustrator expert
        .build();

team.prompt("Plan a promotion scheme for deep-sea mineral water").call(); // The supervisor automatically decomposes the task and assigns subtasks to the corresponding experts

- Ai Flow (process orchestration experience)

It emulates Dify-style low-code flow applications: steps such as RAG, prompt augmentation, and model calls are orchestrated in YAML.
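As a rough mental model, a linear flow of this kind can be thought of as an ordered list of named steps that read from and write to a shared context. The plain-Java sketch below illustrates only that idea. `FlowSketch` is a hypothetical class, not the Solon Flow engine, and the retrieval and chat steps are stubs that do not call any model.

```java
import java.util.ArrayList;
import java.util.LinkedHashMap;
import java.util.List;
import java.util.Map;
import java.util.function.Consumer;

class FlowSketch {
    private final List<String> names = new ArrayList<>();
    private final List<Consumer<Map<String, Object>>> actions = new ArrayList<>();

    // Register a named step; steps run in the order they are added.
    FlowSketch step(String name, Consumer<Map<String, Object>> action) {
        names.add(name);
        actions.add(action);
        return this;
    }

    List<String> stepNames() {
        return new ArrayList<>(names);
    }

    // Run every step against a fresh shared context and return that context.
    Map<String, Object> eval() {
        Map<String, Object> ctx = new LinkedHashMap<>();
        for (Consumer<Map<String, Object>> action : actions) {
            action.accept(ctx);
        }
        return ctx;
    }

    public static void main(String[] args) {
        new FlowSketch()
                .step("input", c -> c.put("message", "Who developed Solon?"))
                .step("retrieve", c -> c.put("context", "stub document text"))
                .step("chat", c -> c.put("answer", "stub answer to: " + c.get("message")))
                .step("output", c -> System.out.println(c.get("answer")))
                .eval();
    }
}
```

A YAML definition plays the role of the chained `step(...)` calls here: it declares the steps and their settings, and an engine evaluates them in order.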

id: demo1
layout:
  - type: "start"
  - task: "@VarInput"
    meta:
      message: "Who developed Solon?"
  - task: "@EmbeddingModel"
    meta:
      embeddingConfig: # "@type": "org.noear.solon.ai.embedding.EmbeddingConfig"
        provider: "ollama"
        model: "bge-m3"
        apiUrl: "http://127.0.0.1:11434/api/embed"
  - task: "@InMemoryRepository"
    meta:
      documentSources:
        - "https://solon.noear.org/article/about?format=md"
      splitPipeline:
        - "org.noear.solon.ai.rag.splitter.RegexTextSplitter"
        - "org.noear.solon.ai.rag.splitter.TokenSizeTextSplitter"
  - task: "@ChatModel"
    meta:
      systemPrompt: "You are a knowledge base"
      stream: false
      chatConfig: # "@type": "org.noear.solon.ai.chat.ChatConfig"
        provider: "ollama"
        model: "qwen2.5:1.5b"
        apiUrl: "http://127.0.0.1:11434/api/chat"
  - task: "@ConsoleOutput"

# FlowEngine flowEngine = FlowEngine.newInstance();
# ...

# flowEngine.eval("demo1");

| Code repository | Description |
|---|---|
| /opensolon/solon | Solon, main code repository |
| /opensolon/solon-examples | Solon, sample code repository supporting the official website |
| /opensolon/solon-expression | Solon Expression, code repository |
| /opensolon/solon-flow | Solon Flow, code repository |
| /opensolon/solon-ai | Solon Ai, code repository |
| /opensolon/solon-cloud | Solon Cloud, code repository |
| /opensolon/solon-admin | Solon Admin, code repository |
| /opensolon/solon-integration | Solon Integration, code repository |
| /opensolon/solon-java17 | Solon Java17, code repository (based on Java 17) |
| /opensolon/solon-java25 | Solon Java25, code repository (based on Java 25) |
| /opensolon/solon-gradle-plugin | Solon Gradle, plugin code repository |
| /opensolon/solon-idea-plugin | Solon Idea, plugin code repository |
| /opensolon/solon-vscode-plugin | Solon VsCode, plugin code repository |


Alternative AI tools for solon-ai

Similar Open Source Tools


tambo

tambo ai is a React library that simplifies the process of building AI assistants and agents in React by handling thread management, state persistence, streaming responses, AI orchestration, and providing a compatible React UI library. It eliminates React boilerplate for AI features, allowing developers to focus on creating exceptional user experiences with clean React hooks that seamlessly integrate with their codebase.

unitxt

Unitxt is a customizable library for textual data preparation and evaluation tailored to generative language models. It natively integrates with common libraries like HuggingFace and LM-eval-harness and deconstructs processing flows into modular components, enabling easy customization and sharing between practitioners. These components encompass model-specific formats, task prompts, and many other comprehensive dataset processing definitions. The Unitxt-Catalog centralizes these components, fostering collaboration and exploration in modern textual data workflows. Beyond being a tool, Unitxt is a community-driven platform, empowering users to build, share, and advance their pipelines collaboratively.

Scrapegraph-ai

ScrapeGraphAI is a web scraping Python library that utilizes LLM and direct graph logic to create scraping pipelines for websites and local documents. It offers various standard scraping pipelines like SmartScraperGraph, SearchGraph, SpeechGraph, and ScriptCreatorGraph. Users can extract information by specifying prompts and input sources. The library supports different LLM APIs such as OpenAI, Groq, Azure, and Gemini, as well as local models using Ollama. ScrapeGraphAI is designed for data exploration and research purposes, providing a versatile tool for extracting information from web pages and generating outputs like Python scripts, audio summaries, and search results.

pixeltable

Pixeltable is a Python library designed for ML Engineers and Data Scientists to focus on exploration, modeling, and app development without the need to handle data plumbing. It provides a declarative interface for working with text, images, embeddings, and video, enabling users to store, transform, index, and iterate on data within a single table interface. Pixeltable is persistent, acting as a database unlike in-memory Python libraries such as Pandas. It offers features like data storage and versioning, combined data and model lineage, indexing, orchestration of multimodal workloads, incremental updates, and automatic production-ready code generation. The tool emphasizes transparency, reproducibility, cost-saving through incremental data changes, and seamless integration with existing Python code and libraries.

superlinked

Superlinked is a compute framework for information retrieval and feature engineering systems, focusing on converting complex data into vector embeddings for RAG, Search, RecSys, and Analytics stack integration. It enables custom model performance in machine learning with pre-trained model convenience. The tool allows users to build multimodal vectors, define weights at query time, and avoid postprocessing & rerank requirements. Users can explore the computational model through simple scripts and python notebooks, with a future release planned for production usage with built-in data infra and vector database integrations.

superagentx

SuperAgentX is a lightweight open-source AI framework designed for multi-agent applications with Artificial General Intelligence (AGI) capabilities. It offers goal-oriented multi-agents with retry mechanisms, easy deployment through WebSocket, RESTful API, and IO console interfaces, streamlined architecture with no major dependencies, contextual memory using SQL + Vector databases, flexible LLM configuration supporting various Gen AI models, and extendable handlers for integration with diverse APIs and data sources. It aims to accelerate the development of AGI by providing a powerful platform for building autonomous AI agents capable of executing complex tasks with minimal human intervention.

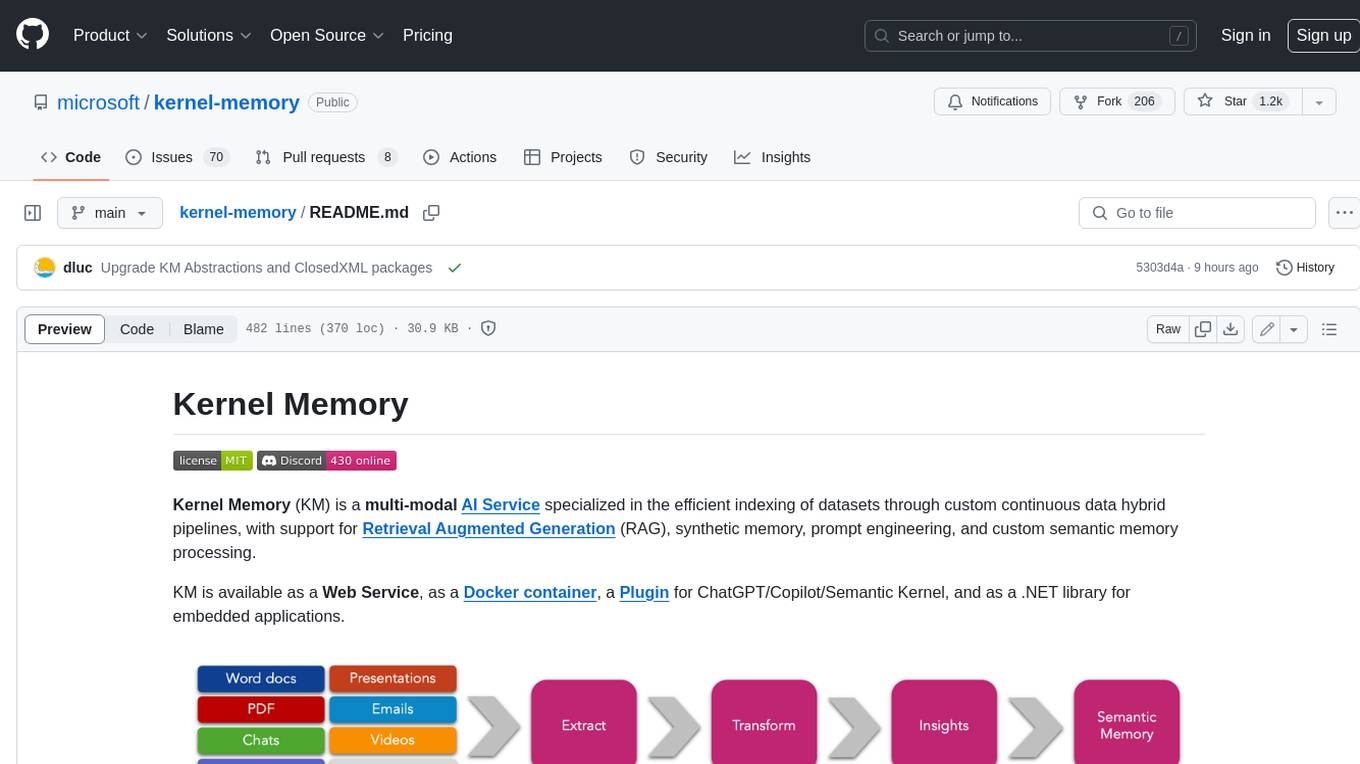

kernel-memory

Kernel Memory (KM) is a multi-modal AI Service specialized in the efficient indexing of datasets through custom continuous data hybrid pipelines, with support for Retrieval Augmented Generation (RAG), synthetic memory, prompt engineering, and custom semantic memory processing. KM is available as a Web Service, as a Docker container, a Plugin for ChatGPT/Copilot/Semantic Kernel, and as a .NET library for embedded applications. Utilizing advanced embeddings and LLMs, the system enables Natural Language querying for obtaining answers from the indexed data, complete with citations and links to the original sources. Designed for seamless integration as a Plugin with Semantic Kernel, Microsoft Copilot and ChatGPT, Kernel Memory enhances data-driven features in applications built for most popular AI platforms.

cellseg_models.pytorch

cellseg-models.pytorch is a Python library built upon PyTorch for 2D cell/nuclei instance segmentation models. It provides multi-task encoder-decoder architectures and post-processing methods for segmenting cell/nuclei instances. The library offers high-level API to define segmentation models, open-source datasets for training, flexibility to modify model components, sliding window inference, multi-GPU inference, benchmarking utilities, regularization techniques, and example notebooks for training and finetuning models with different backbones.

EasySteer

EasySteer is a unified framework built on vLLM for high-performance LLM steering. It offers fast, flexible, and easy-to-use steering capabilities with features like high performance, modular design, fine-grained control, pre-computed steering vectors, and an interactive demo. Users can interactively configure models, adjust steering parameters, and test interventions without writing code. The tool supports OpenAI-compatible APIs and provides modules for hidden states extraction, analysis-based steering, learning-based steering, and a frontend web interface for interactive steering and ReFT interventions.

LTEngine

LTEngine is a free and open-source local AI machine translation API written in Rust. It is self-hosted and compatible with LibreTranslate. LTEngine utilizes large language models (LLMs) via llama.cpp, offering high-quality translations that rival or surpass DeepL for certain languages. It supports various accelerators like CUDA, Metal, and Vulkan, with the largest model 'gemma3-27b' fitting on a single consumer RTX 3090. LTEngine is actively developed, with a roadmap outlining future enhancements and features.

adk-rust

ADK-Rust is a comprehensive and production-ready Rust framework for building AI agents. It features type-safe agent abstractions with async execution and event streaming, multiple agent types including LLM agents, workflow agents, and custom agents, realtime voice agents with bidirectional audio streaming, a tool ecosystem with function tools, Google Search, and MCP integration, production features like session management, artifact storage, memory systems, and REST/A2A APIs, and a developer-friendly experience with interactive CLI, working examples, and comprehensive documentation. The framework follows a clean layered architecture and is production-ready and actively maintained.

trpc-agent-go

A powerful Go framework for building intelligent agent systems with large language models (LLMs), hierarchical planners, memory, telemetry, and a rich tool ecosystem. tRPC-Agent-Go enables the creation of autonomous or semi-autonomous agents that reason, call tools, collaborate with sub-agents, and maintain long-term state. The framework provides detailed documentation, examples, and tools for accelerating the development of AI applications.

langfun

Langfun is a Python library that aims to make language models (LM) fun to work with. It enables a programming model that flows naturally, resembling the human thought process. Langfun emphasizes the reuse and combination of language pieces to form prompts, thereby accelerating innovation. Unlike other LM frameworks, which feed program-generated data into the LM, langfun takes a distinct approach: It starts with natural language, allowing for seamless interactions between language and program logic, and concludes with natural language and optional structured output. Consequently, langfun can aptly be described as Language as functions, capturing the core of its methodology.

MarkLLM

MarkLLM is an open-source toolkit designed for watermarking technologies within large language models (LLMs). It simplifies access, understanding, and assessment of watermarking technologies, supporting various algorithms, visualization tools, and evaluation modules. The toolkit aids researchers and the community in ensuring the authenticity and origin of machine-generated text.

logicstamp-context

LogicStamp Context is a static analyzer that extracts deterministic component contracts from TypeScript codebases, providing structured architectural context for AI coding assistants. It helps AI assistants understand architecture by extracting props, hooks, and dependencies without implementation noise. The tool works with React, Next.js, Vue, Express, and NestJS, and is compatible with various AI assistants like Claude, Cursor, and MCP agents. It offers features like watch mode for real-time updates, breaking change detection, and dependency graph creation. LogicStamp Context is a security-first tool that protects sensitive data, runs locally, and is non-opinionated about architectural decisions.

For similar tasks


MCP-Chinese-Getting-Started-Guide

The Model Context Protocol (MCP) is an innovative open-source protocol that redefines the interaction between large language models (LLMs) and the external world. MCP provides a standardized approach for any large language model to easily connect to various data sources and tools, enabling seamless access and processing of information. MCP acts as a USB-C interface for AI applications, offering a standardized way for AI models to connect to different data sources and tools. The core functionalities of MCP include Resources, Prompts, Tools, Sampling, Roots, and Transports. This guide focuses on developing an MCP server for network search using Python and uv management. It covers initializing the project, installing dependencies, creating a server, implementing tool execution methods, and running the server. Additionally, it explains how to debug the MCP server using the Inspector tool, how to call tools from the server, and how to connect multiple MCP servers. The guide also introduces the Sampling feature, which allows pre- and post-tool execution operations, and demonstrates how to integrate MCP servers into LangChain for AI applications.

For similar jobs

sweep

Sweep is an AI junior developer that turns bugs and feature requests into code changes. It automatically handles developer experience improvements like adding type hints and improving test coverage.

teams-ai

The Teams AI Library is a software development kit (SDK) that helps developers create bots that can interact with Teams and Microsoft 365 applications. It is built on top of the Bot Framework SDK and simplifies the process of developing bots that interact with Teams' artificial intelligence capabilities. The SDK is available for JavaScript/TypeScript, .NET, and Python.

ai-guide

This guide is dedicated to Large Language Models (LLMs) that you can run on your home computer. It assumes your PC is a lower-end, non-gaming setup.

classifai

Supercharge WordPress Content Workflows and Engagement with Artificial Intelligence. Tap into leading cloud-based services like OpenAI, Microsoft Azure AI, Google Gemini and IBM Watson to augment your WordPress-powered websites. Publish content faster while improving SEO performance and increasing audience engagement. ClassifAI integrates Artificial Intelligence and Machine Learning technologies to lighten your workload and eliminate tedious tasks, giving you more time to create original content that matters.

chatbot-ui

Chatbot UI is an open-source AI chat app that allows users to create and deploy their own AI chatbots. It is easy to use and can be customized to fit any need. Chatbot UI is perfect for businesses, developers, and anyone who wants to create a chatbot.

BricksLLM

BricksLLM is a cloud native AI gateway written in Go. Currently, it provides native support for OpenAI, Anthropic, Azure OpenAI and vLLM. BricksLLM aims to provide enterprise level infrastructure that can power any LLM production use cases. Here are some use cases for BricksLLM:

- Set LLM usage limits for users on different pricing tiers

- Track LLM usage on a per user and per organization basis

- Block or redact requests containing PIIs

- Improve LLM reliability with failovers, retries and caching

- Distribute API keys with rate limits and cost limits for internal development/production use cases

- Distribute API keys with rate limits and cost limits for students

uAgents

uAgents is a Python library developed by Fetch.ai that allows for the creation of autonomous AI agents. These agents can perform various tasks on a schedule or take action on various events. uAgents are easy to create and manage, and they are connected to a fast-growing network of other uAgents. They are also secure, with cryptographically secured messages and wallets.

griptape

Griptape is a modular Python framework for building AI-powered applications that securely connect to your enterprise data and APIs. It offers developers the ability to maintain control and flexibility at every step. Griptape's core components include Structures (Agents, Pipelines, and Workflows), Tasks, Tools, Memory (Conversation Memory, Task Memory, and Meta Memory), Drivers (Prompt and Embedding Drivers, Vector Store Drivers, Image Generation Drivers, Image Query Drivers, SQL Drivers, Web Scraper Drivers, and Conversation Memory Drivers), Engines (Query Engines, Extraction Engines, Summary Engines, Image Generation Engines, and Image Query Engines), and additional components (Rulesets, Loaders, Artifacts, Chunkers, and Tokenizers). Griptape enables developers to create AI-powered applications with ease and efficiency.