AzureOpenAI-with-APIM

Deploy APIM. Auto-configure it to work with your Azure Open AI.

Stars: 55

AzureOpenAI-with-APIM is a repository that provides a one-button deploy solution for Azure API Management (APIM), Key Vault, and Log Analytics to work seamlessly with Azure OpenAI endpoints. It enables organizations to scale and manage their Azure OpenAI service efficiently by issuing subscription keys via APIM, delivering usage metrics, and implementing policies for access control and cost management. The repository offers detailed guidance on implementing APIM to enhance Azure OpenAI resiliency, scalability, performance, monitoring, and chargeback capabilities.

README:

One-button deploy APIM, Key vault, and Log Analytics. Auto-configure APIM to work with your Azure OpenAI endpoint.

Using Azure's APIM orchestration provides organizations with a powerful way to scale and manage their Azure OpenAI service without deploying Azure OpenAI endpoints everywhere. Administrators can issue subscription keys via APIM for accessing a single Azure OpenAI service instead of having teams share Azure OpenAI keys. APIM delivers usage metrics along with API monitoring to improve business intelligence. APIM policies control access and throttling, and provide a mechanism for chargeback cost models.

Gain conceptual details and technical step-by-step knowledge on implementing APIM to support your Azure OpenAI resiliency, scalability, performance, monitoring, and chargeback capabilities.

[!IMPORTANT]

The goal with this repo is to provide more than conceptual knowledge on the services and technology. We want you to walk away from this repo knowing EXACTLY what to do to add these capabilities to YOUR enterprise. If we are missing information or steps or details or visuals or videos or anything that adds friction to you gaining this knowledge effectively and efficiently, please say something - Add your Ideas · Discussions · GitHub.

Two solutions are provided to meet an organization's needs, ranging from a sandbox to a model of a production environment.

[!NOTE]

With Azure OpenAI's availability in Azure Government, the solutions are consolidated down because they are identical whether deployed into Azure Commercial or Azure Government.

Once the service is deployed, use the following section to understand how to access your Azure OpenAI service via APIM.

[!TIP]

A subscription, in the context of APIM, is the authorization of a user or group to access an API.

Calculate token counts per subscription. This can be helpful to monitor utilization of Azure OpenAI but also provides the foundation for a charge-back model if, internally, your organization provides a shared services model where Azure OpenAI is deployed and provided back to the business with one of the following models:

- For free and the team managing the service pays for all costs

- On a cost basis, where the team managing the service provides access to it but requires the internal business consumer to pay for its use.

Use the following link to deploy an APIM policy and supporting services to capture token counts by subscription using an Event Hub, process them using an Azure Function, and log them to a Log Analytics workspace for reporting.
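As a rough illustration of the chargeback idea, the sketch below totals tokens per subscription and applies a flat internal rate. The record fields, subscription names, and the price are assumptions made up for this example; they are not the schema the repo logs to Log Analytics, and the rate is not a real Azure OpenAI price.

```python
from collections import defaultdict

# Hypothetical per-request usage records (field names are assumptions).
records = [
    {"subscription": "team-a", "prompt_tokens": 120, "completion_tokens": 380},
    {"subscription": "team-b", "prompt_tokens": 50, "completion_tokens": 150},
    {"subscription": "team-a", "prompt_tokens": 200, "completion_tokens": 300},
]

# Assumed internal rate per 1,000 tokens; not an actual Azure price.
PRICE_PER_1K_TOKENS = 0.002


def tokens_by_subscription(usage_records):
    """Sum prompt and completion tokens for each subscription."""
    totals = defaultdict(int)
    for record in usage_records:
        totals[record["subscription"]] += (
            record["prompt_tokens"] + record["completion_tokens"]
        )
    return dict(totals)


def chargeback(totals, price_per_1k):
    """Convert token totals into a cost per subscription."""
    return {
        sub: round(tokens / 1000 * price_per_1k, 6)
        for sub, tokens in totals.items()
    }


totals = tokens_by_subscription(records)
costs = chargeback(totals, PRICE_PER_1K_TOKENS)
print(totals)
print(costs)
```

In the free model the same totals are still useful for monitoring; only the cost step is dropped.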

Resiliency is the ability of the service to recover from an issue. With regard to Azure OpenAI and APIM, this means using a Retry Policy to attempt the prompt again. Typically the prompt fails because, at the time the user submitted it, the Azure OpenAI endpoint had reached its Tokens Per Minute or Requests Per Minute limit based on the endpoint's quota, which triggers an HTTP 429 error.

- Retry policy to leverage two or more Azure OpenAI endpoints

- Expands capacity without impact to user experience or requesting increase to existing Azure OpenAI endpoints

Single-region Retry policy means APIM will wait for a specific period of time and attempt to submit the prompt to the same Azure OpenAI endpoint. This is acceptable for development phases of solutions but not ideal for production.

Multi-region Retry policy means APIM will immediately submit the prompt to a completely separate Azure OpenAI endpoint that is deployed to a separate Azure region. This effectively doubles your Azure OpenAI quota and provides resiliency. Tie this together with the APIM Load Balancer capability (as of April 4, 2024, this is Azure Commercial only and in Preview) to get both scale and resiliency. This is discussed in more detail [LINK HERE]
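The multi-region behavior can be pictured with a small sketch: try the first endpoint, and on HTTP 429 move straight to the next one. This is plain Python that mimics the logic, not the APIM policy from the repo. The endpoint URLs and the `fake_send` stand-in are hypothetical and exist only so the example runs offline.

```python
def send_with_failover(endpoints, send, prompt):
    """Try each endpoint in order; move to the next one on HTTP 429."""
    last_status = None
    for endpoint in endpoints:
        status, body = send(endpoint, prompt)
        if status != 429:
            return endpoint, status, body
        last_status = status
    # Every endpoint was throttled.
    return None, last_status, None


def fake_send(endpoint, prompt):
    """Stand-in for a real HTTP call: the East US endpoint is out of quota."""
    if "eastus" in endpoint:
        return 429, None
    return 200, f"answer to: {prompt}"


# Hypothetical endpoint URLs in two regions.
endpoints = ["https://aoai-eastus.example", "https://aoai-westus.example"]
used, status, body = send_with_failover(endpoints, fake_send, "hello")
print(used, status, body)
```

A single-region retry would instead wait and call the same endpoint again, which is why it adds latency without adding capacity.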

Scalability is the ability of your Azure OpenAI service to support higher loads without necessarily increasing regional quotas. This feature (as of April 4, 2024, this is Azure Commercial only and in Preview) uses two or more Azure OpenAI endpoints in a round-robin load balancing configuration. This feature is not built into the One-button deploy, but prescriptive implementation guidance is provided so that organizations can implement it.

Tie this together with the Retry policy (as of April 4, 2024, this is Azure Commercial only and in Preview) to get both scale and resiliency. This is discussed in more detail [LINK HERE]
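Round-robin load balancing simply hands each new request to the next backend in a fixed rotation. The sketch below shows the rotation in plain Python; it is an illustration of the idea, not the APIM load balancer configuration, and the backend names are hypothetical.

```python
from itertools import cycle

# Hypothetical backend names, one Azure OpenAI endpoint per region.
backends = ["aoai-eastus", "aoai-westus"]

# cycle() repeats the list forever, which gives the round-robin order.
picker = cycle(backends)

# Assign five incoming requests to backends in turn.
assigned = [next(picker) for _ in range(5)]
print(assigned)
```

Because requests alternate between regions, each endpoint sees roughly half the load, which is how the combined quota becomes usable.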

Scaling across multiple regions lets an organization support higher loads, but the TPM cost model is best-effort compute with no SLAs. With the TPM pay-as-you-go model, as long as your Azure OpenAI endpoint has quota, your prompts will be processed, but latency may be higher and more variable than anticipated.

To improve performance, Azure OpenAI has a cost model called Provisioned Throughput Units (PTU). When using PTUs, the organization is procuring an allotted amount of GPU to process their models. No other organization or individual can use that GPU. This has a positive effect of reducing latency and tightening up the variability in latency. It has a secondary effect of improving cost forecasting (discussed in more detail further in this article).

- Provide cost management per subscription with Rate Throttling

- Implement Cost Management

APIM to Azure OpenAI

[!IMPORTANT]

The latest update to this repo moves to Managed Identity for the one-button deployments and guides for modifying existing APIM services.

Managed identities are the ideal method for authenticating APIM to Azure OpenAI. This eliminates the need to manage the rotation of keys and for keys to be stored anywhere beyond the Azure OpenAI endpoint.

Client or Application to APIM

Using the managed identity of the Azure App Service, Virtual Machine, Azure Kubernetes Service, or any other compute service in Azure is the preferred method of authentication. It improves security and eliminates the issuance of keys and tokens. Clients can use an OAuth token if required to validate access when using Azure OpenAI via APIM.

These methods shift the burden of authentication from the application onto APIM, which improves performance, scalability, operational management, and identity service selection. Update APIM's identity provider for OAuth and that update flows down to the application without any modification to the application.

The examples provide both methods along with guidance on how to set up the client or application to run the code so that the managed identity technique can be used.

- Implement authenticating application to APIM using Managed Identity

- Implement authenticating client to APIM using Entra

APIM to Azure OpenAI SAS Token

A subscription key is like a SAS token provided by the backend service, but it is issued by APIM for use by the user or group. Subscription keys are fine during the development phase. We've retained the policy xml file in the repo as an example but this technique is not used.

Client or Application to APIM

For development and when running test commands from your workstation, using the Subscription key is straightforward, but we recommend moving from the Subscription key to Managed Identities beyond development. The examples provide both methods along with guidance on how to set up the client or application to run the code so that the managed identity technique can be used.

- Implement authenticating application to APIM using Subscription Key

- Implement authenticating client to APIM using Subscription Key

- Contributor permissions to subscription or resource group

- Resource Group (or ability to create)

- Azure OpenAI service deployed

- How-to: Create and deploy an Azure OpenAI Service resource - Azure OpenAI | Microsoft Learn

- If using Multi-region, then deploy an additional Azure OpenAI service in a different region.

- Azure OpenAI model deployed

- How-to: Create and deploy an Azure OpenAI Service resource - Azure OpenAI | Microsoft Learn

- If using Multi-region, make sure Deployment names are identical.

- Azure OpenAI service URL

- Quickstart - Deploy a model and generate text using Azure OpenAI Service - Azure OpenAI | Microsoft Learn

- If using Multi-region, collect the additional Azure OpenAI service URL.

Each solution provides a simple one-button deployment. Select the "Deploy to Azure" button which will open the Azure portal and provide a form for details.

To use the command line deployment method, fork the library and use Codespaces or clone the forked library to your local computer.

- How to install the Azure CLI | Microsoft Learn

- Connect to Azure Government with Azure CLI - Azure Government | Microsoft Learn

- How to install Azure PowerShell | Microsoft Learn

- Connect to Azure Government with PowerShell - Azure Government | Microsoft Learn

The following architectural solutions support two use-cases in the Azure Commercial and Azure Government environments. Determining which solution to implement requires understanding of your current utilization of Azure.

- API Management to Azure OpenAI

  - Supports Azure Commercial and Azure Government

  - Developing proof of concept or minimum viable production solution.

  - Isolated from enterprise networking using internal networks, Express Routes, and site-2-site VPN connections from the cloud to on-premises networks.

  - Assigns APIM the

- API Management to Azure OpenAI with private endpoints

  - Supports Azure Commercial and Azure Government

  - Pilot or production solution.

  - Connected to the enterprise networking using internal networks, Express Routes, and site-2-site VPN connections from the cloud to on-premises networks.

Use API management deployed to your Azure environment using public IP addresses for accessing APIM and for APIM to access the Azure OpenAI API. Access to the services is secured using keys and Defender for Cloud.

[!NOTE]

Only the API Management service is deployed; this solution requires the Azure OpenAI service to already exist.

- Users and Groups are used to assign access to an API using subscriptions.

- Each User or Group can be assigned their own policies like rate-limiting to control use

- The Azure OpenAI API uses policies to assign backends, retry, rate-throttling, and token counts.

- The backends are assigned using a policy and can include load balance groups. Retry policies reference additional backends for resiliency.

- One or more Azure OpenAI services (endpoints) can be used to manage scale and resiliency.

- The endpoints will reside in different regions so that they can utilize the maximum quota available to them.

- Policies are used to collect information, perform actions, and manipulate user connections.

- App Insights are used to create dashboards to monitor performance and use.
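To make the per-subscription rate-limiting idea concrete, here is a minimal fixed-window counter keyed by subscription. It is a conceptual sketch only: APIM enforces limits through its own policies, and nothing here claims to match how APIM implements them. The class name, the limit of two calls per 60 seconds, and the subscription names are all assumptions for the example.

```python
import time


class FixedWindowLimiter:
    """Allow at most `calls` requests per `period_seconds` for each key."""

    def __init__(self, calls, period_seconds, clock=time.monotonic):
        self.calls = calls
        self.period = period_seconds
        self.clock = clock
        self.windows = {}  # key -> (window_start, request_count)

    def allow(self, key):
        now = self.clock()
        start, count = self.windows.get(key, (now, 0))
        if now - start >= self.period:
            # The previous window has expired; start a fresh one.
            start, count = now, 0
        if count >= self.calls:
            self.windows[key] = (start, count)
            return False
        self.windows[key] = (start, count + 1)
        return True


# Demo with a fake clock so the run is deterministic.
now = [0.0]
limiter = FixedWindowLimiter(calls=2, period_seconds=60, clock=lambda: now[0])

results = [limiter.allow("team-a") for _ in range(3)]  # third call is rejected
other = limiter.allow("team-b")                        # separate counter per key
now[0] = 61.0                                          # move into the next window
after_reset = limiter.allow("team-a")
print(results, other, after_reset)
```

Keying the counter by subscription is what lets one team exhaust its own allowance without affecting another team's access.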

! NOTE ! - It can take up to 45 minutes for all services to deploy as API Management has many underlying Azure resources deployed running the service.

Simple one-button deployment, opens in Azure Portal

# Update the following variables to use the appropriate resource group and subscription.

$resourceGroupName = "RG-APIM-OpenAI"

$location = "East US" # Use MAG region when deploying to MAG

$subscriptionName = "MySubscription"

# az cloud set --name AzureUSGovernment # Uncomment when deploying to MAG

az login

az account set --subscription $subscriptionName

az group create --name $resourceGroupName --location $location

az deployment group create --resource-group $resourceGroupName --template-file .\public-apim.bicep --mode Incremental

# Update the following variables to use the appropriate resource group and subscription.

$resourceGroupName = "RG-APIM-OpenAI"

$location = "East US" # Use MAG region when deploying to MAG

$subscriptionName = "MySubscription"

Connect-AzAccount #-Environment AzureUSGovernment # Uncomment when deploying to MAG

Set-AzContext -Subscription $subscriptionName

New-AzResourceGroup -Name $resourceGroupName -Location $location

New-AzResourceGroupDeployment -ResourceGroupName $resourceGroupName -TemplateFile .\public-apim.bicep -Verbose -mode Incremental

- Now that APIM is deployed and automatically configured to work with your Azure OpenAI service

Use API management deployed to your Azure environment using private IP addresses for accessing APIM and for APIM to access the Azure OpenAI API. Access to the services is secured using private network connectivity, keys, and Defender for Cloud. Access to the private network is controlled by customer infrastructure and supports internal routing via Express Route or site-2-site VPN for broader enterprise network access like on-premises data centers or site-based users.

- Users and Groups are used to assign access to an API using subscriptions.

- Each User or Group can be assigned their own policies like rate-limiting to control use

- The Azure OpenAI API uses policies to assign backends, retry, rate-throttling, and token counts.

- The backends are assigned using a policy and can include load balance groups. Retry policies reference additional backends for resiliency.

- One or more Azure OpenAI services (endpoints) can be used to manage scale and resiliency.

- The endpoints will reside in different regions so that they can utilize the maximum quota available to them.

- Policies are used to collect information, perform actions, and manipulate user connections.

- App Insights are used to create dashboards to monitor performance and use.

! NOTE ! - It can take up to 45 minutes for all services to deploy as API Management has many underlying Azure resources deployed running the service.

Simple one-button deployment, opens in Azure Portal

# Update the following variables to use the appropriate resource group and subscription.

$resourceGroupName = "RG-APIM-OpenAI"

$location = "East US" # Use MAG region when deploying to MAG

$subscriptionName = "MySubscription"

# az cloud set --name AzureUSGovernment # Uncomment when deploying to MAG

az login

az account set --subscription $subscriptionName

az group create --name $resourceGroupName --location $location

az deployment group create --resource-group $resourceGroupName --template-file .\private-apim.bicep --mode Incremental

# Update the following variables to use the appropriate resource group and subscription.

$resourceGroupName = "RG-APIM-OpenAI"

$location = "East US" # Use MAG region when deploying to MAG

$subscriptionName = "MySubscription"

Connect-AzAccount #-Environment AzureUSGovernment # Uncomment when deploying to MAG

Set-AzContext -Subscription $subscriptionName

New-AzResourceGroup -Name $resourceGroupName -Location $location

New-AzResourceGroupDeployment -ResourceGroupName $resourceGroupName -TemplateFile .\private-apim.bicep -Verbose -mode Incremental

- Now that APIM is deployed and automatically configured to work with your Azure OpenAI service

TBD

Policy for collecting tokens and user id

TBD

TBD

TBD

TBD

TBD

Azure API Management policy reference - retry | Microsoft Learn

TBD

Ensure reliability of your Azure API Management instance - Azure API Management | Microsoft Learn

Throttling

TBD

Advanced request throttling with Azure API Management | Microsoft Learn

- Preview feature for two or more Azure OpenAI endpoints using round-robin load balancing

- Pair with Resiliency for highly scalable solution

- Provide cost management per subscription

TBD

TBD

TBD

TBD

TBD

TBD

TBD

Read through the following steps to set up interaction with APIM and learn how to use consoles or .NET to programmatically interact with Azure OpenAI via APIM.

To determine if you have one or more models deployed, visit the AI Studio. Here you can determine if you need to create a model or use an existing model. You will use the model name when querying the Azure OpenAI API via your APIM.

- Navigate to your Azure OpenAI resource in Azure

- Select Model deployments

- Select Manage Deployments

- Review your models and copy the Deployment name of the model you want to use

The subscription key for APIM is collected at the Subscription section of the APIM resource, regardless of whether you are in Azure Commercial or Government.

You can use this key for testing or as an example of how to create subscriptions to provide access to your Azure OpenAI service. Instead of sharing your Azure OpenAI key, you create subscriptions in APIM and share the subscription key. You can then analyze and monitor usage, provide guardrails for usage, and manage access.

- Navigate to your new APIM

- Select Subscriptions from the menu

- Select ...

- Select Show/Hide keys

- Select copy icon

The URL for APIM is collected at the Overview section of the APIM resource, regardless of whether you are in Azure Commercial or Government.

Using your Azure OpenAI model, API version, APIM URL, and APIM subscription key you can now execute Azure OpenAI queries against your APIM URL instead of your Azure OpenAI URL. This means you can create new subscription keys for anyone or any team who needs access to Azure OpenAI instead of deploying new Azure OpenAI services.

Copy and paste this script into a text editor or Visual Studio Code (VSC).

Modify by including your values, then copy and paste all of it into a PowerShell 7 terminal or run from VSC.

[!NOTE]

Modify the "CONTENT" line for the system role and the user role to support your development and testing.

# Update these values to match your environment

$apimUrl = 'THE_HTTPS_URL_OF_YOUR_APIM_INSTANCE'

$deploymentName = 'DEPLOYMENT_NAME'

$apiVersion = '2024-02-15-preview'

$subscriptionKey = 'YOUR_APIM_SUBSCRIPTION_KEY'

# Construct the URL

$url = "$apimUrl/deployments/$deploymentName/chat/completions?api-version=$apiVersion"

# Headers

$headers = @{

"Content-Type" = "application/json"

"Ocp-Apim-Subscription-Key" = $subscriptionKey

}

# JSON Body

$body = @{

messages = @(

@{

role = "system"

content = "You are an AI assistant that helps people find information."

},

@{

role = "user"

content = "What are the differences between Azure Machine Learning and Azure AI services?"

}

)

temperature = 0.7

top_p = 0.95

max_tokens = 800

} | ConvertTo-Json

# Invoke the API

$response = Invoke-RestMethod -Uri $url -Method Post -Headers $headers -Body $body

# Output the response

$response.choices.message.content

Copy and paste this script into a text editor or Visual Studio Code.

Modify by including your values, then copy and paste all of it into a bash terminal, run from VSC, or create a ".sh" file to run.

[!NOTE]

Modify the "CONTENT" line for the system role and the user role to support your development and testing.

#!/bin/bash

apimUrl="THE_HTTPS_URL_OF_YOUR_APIM_INSTANCE"

deploymentName="DEPLOYMENT_NAME" # Probably what you named your model, but change if necessary

apiVersion="2024-02-15-preview" # Change to use the latest version

subscriptionKey="YOUR_APIM_SUBSCRIPTION_KEY"

url="${apimUrl}/deployments/${deploymentName}/chat/completions?api-version=${apiVersion}"

key="Ocp-Apim-Subscription-Key: ${subscriptionKey}"

# JSON payload

jsonPayload='{

"messages": [

{

"role": "system",

"content": "You are an AI assistant that helps people find information."

},

{

"role": "user",

"content": "What are the differences between Azure Machine Learning and Azure AI services?"

}

],

"temperature": 0.7,

"top_p": 0.95,

"max_tokens": 800

}'

curl "${url}" -H "Content-Type: application/json" -H "${key}" -d "${jsonPayload}"

You will most likely be using Visual Studio 202x to run this and you know what you are doing.

[!NOTE]

Modify the "ChatMessage" lines for the system role and the user role to support your development and testing.

// Note: The Azure OpenAI client library for .NET is in preview.

// Install the .NET library via NuGet: dotnet add package Azure.AI.OpenAI --version 1.0.0-beta.5

using Azure;

using Azure.AI.OpenAI;

OpenAIClient client = new OpenAIClient(

new Uri("https://INSERT_APIM_URL_HERE/deployments/INSERT_DEPLOYMENT_NAME_HERE/chat/completions?api-version=INSERT_API_VERSION_HERE"),

new AzureKeyCredential("INSERT_APIM_SUBSCRIPTION_KEY_HERE"));

// ### If streaming is not selected

Response<ChatCompletions> responseWithoutStream = await client.GetChatCompletionsAsync(

"INSERT_MODEL_NAME_HERE",

new ChatCompletionsOptions()

{

Messages =

{

new ChatMessage(ChatRole.System, @"You are an AI assistant that helps people find information."),

new ChatMessage(ChatRole.User, @"What are the differences between Azure Machine Learning and Azure AI services?"),

},

Temperature = (float)0,

MaxTokens = 800,

NucleusSamplingFactor = (float)1,

FrequencyPenalty = 0,

PresencePenalty = 0,

});

// The following code shows how to get to the content from Azure OpenAI's response

ChatCompletions completions = responseWithoutStream.Value;

ChatChoice choice = completions.Choices[0];

Console.WriteLine(choice.Message.Content);

Copy and paste this script into a text editor or Visual Studio Code.

Modify by including your values, then run it from VSC or save it as a ".py" file to run.

[!NOTE]

If you are running Jupyter notebooks, this provides an example of using Azure OpenAI via APIM.

# This code is an example of how to use the OpenAI API with Azure API Management (APIM) in a Jupyter Notebook.

import requests

import json

# Set the parameters

apim_url = "apim_url"

deployment_name = "deployment_name"

api_version = "2024-02-15-preview"

subscription_key = "subscription_key"

# Construct the URL and headers

url = f"{apim_url}/deployments/{deployment_name}/chat/completions?api-version={api_version}"

headers = {

"Content-Type": "application/json",

"Ocp-Apim-Subscription-Key": subscription_key

}

# Define the JSON payload

json_payload = {

"messages": [

{

"role": "system",

"content": "You are an AI assistant that helps people find information."

},

{

"role": "user",

"content": "What are the differences between Azure Machine Learning and Azure AI services?"

}

],

"temperature": 0.7,

"top_p": 0.95,

"max_tokens": 800

}

# Make the POST request

response = requests.post(url, headers=headers, json=json_payload)

# Print the response text (or you can process it further as needed)

print(response.text)
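If you want the assistant's reply and the token usage rather than the raw text, the response body can be parsed as JSON. The sketch below runs against a hand-written sample so it works offline; the sample values are made up, and a real response contains additional fields.

```python
import json

# Hand-written sample shaped like a chat completions response (values are made up).
sample_response_text = json.dumps({
    "choices": [
        {"message": {"role": "assistant", "content": "Here is a short answer."}}
    ],
    "usage": {"prompt_tokens": 20, "completion_tokens": 10, "total_tokens": 30},
})


def summarize(response_text):
    """Return the first choice's message content and the total token count."""
    data = json.loads(response_text)
    content = data["choices"][0]["message"]["content"]
    total_tokens = data.get("usage", {}).get("total_tokens")
    return content, total_tokens


content, total_tokens = summarize(sample_response_text)
print(content)
print(total_tokens)
```

With the `requests` example, the same function can be called as `summarize(response.text)` once the call succeeds.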

Click tags to check more tools for each tasksFor Jobs:

Alternative AI tools for AzureOpenAI-with-APIM

Similar Open Source Tools

AzureOpenAI-with-APIM

AzureOpenAI-with-APIM is a repository that provides a one-button deploy solution for Azure API Management (APIM), Key Vault, and Log Analytics to work seamlessly with Azure OpenAI endpoints. It enables organizations to scale and manage their Azure OpenAI service efficiently by issuing subscription keys via APIM, delivering usage metrics, and implementing policies for access control and cost management. The repository offers detailed guidance on implementing APIM to enhance Azure OpenAI resiliency, scalability, performance, monitoring, and chargeback capabilities.

azure-search-openai-demo

This sample demonstrates a few approaches for creating ChatGPT-like experiences over your own data using the Retrieval Augmented Generation pattern. It uses Azure OpenAI Service to access a GPT model (gpt-35-turbo), and Azure AI Search for data indexing and retrieval. The repo includes sample data so it's ready to try end to end. In this sample application we use a fictitious company called Contoso Electronics, and the experience allows its employees to ask questions about the benefits, internal policies, as well as job descriptions and roles.

serverless-chat-langchainjs

This sample shows how to build a serverless chat experience with Retrieval-Augmented Generation using LangChain.js and Azure. The application is hosted on Azure Static Web Apps and Azure Functions, with Azure Cosmos DB for MongoDB vCore as the vector database. You can use it as a starting point for building more complex AI applications.

contoso-chat

Contoso Chat is a Python sample demonstrating how to build, evaluate, and deploy a retail copilot application with Azure AI Studio using Promptflow with Prompty assets. The sample implements a Retrieval Augmented Generation approach to answer customer queries based on the company's product catalog and customer purchase history. It utilizes Azure AI Search, Azure Cosmos DB, Azure OpenAI, text-embeddings-ada-002, and GPT models for vectorizing user queries, AI-assisted evaluation, and generating chat responses. By exploring this sample, users can learn to build a retail copilot application, define prompts using Prompty, design, run & evaluate a copilot using Promptflow, provision and deploy the solution to Azure using the Azure Developer CLI, and understand Responsible AI practices for evaluation and content safety.

vertex-ai-creative-studio

GenMedia Creative Studio is an application showcasing the capabilities of Google Cloud Vertex AI generative AI creative APIs. It includes features like Gemini for prompt rewriting and multimodal evaluation of generated images. The app is built with Mesop, a Python-based UI framework, enabling rapid development of web and internal apps. The Experimental folder contains stand-alone applications and upcoming features demonstrating cutting-edge generative AI capabilities, such as image generation, prompting techniques, and audio/video tools.

airbroke

Airbroke is an open-source error catcher tool designed for modern web applications. It provides a PostgreSQL-based backend with an Airbrake-compatible HTTP collector endpoint and a React-based frontend for error management. The tool focuses on simplicity, maintaining a small database footprint even under heavy data ingestion. Users can ask AI about issues, replay HTTP exceptions, and save/manage bookmarks for important occurrences. Airbroke supports multiple OAuth providers for secure user authentication and offers occurrence charts for better insights into error occurrences. The tool can be deployed in various ways, including building from source, using Docker images, deploying on Vercel, Render.com, Kubernetes with Helm, or Docker Compose. It requires Node.js, PostgreSQL, and specific system resources for deployment.

SalesGPT

SalesGPT is an open-source AI agent designed for sales, utilizing context-awareness and LLMs to work across various communication channels like voice, email, and texting. It aims to enhance sales conversations by understanding the stage of the conversation and providing tools like product knowledge base to reduce errors. The agent can autonomously generate payment links, handle objections, and close sales. It also offers features like automated email communication, meeting scheduling, and integration with various LLMs for customization. SalesGPT is optimized for low latency in voice channels and ensures human supervision where necessary. The tool provides enterprise-grade security and supports LangSmith tracing for monitoring and evaluation of intelligent agents built on LLM frameworks.

Customer-Service-Conversational-Insights-with-Azure-OpenAI-Services

This solution accelerator is built on Azure Cognitive Search Service and Azure OpenAI Service to synthesize post-contact center transcripts for intelligent contact center scenarios. It converts raw transcripts into customer call summaries to extract insights around product and service performance. Key features include conversation summarization, key phrase extraction, speech-to-text transcription, sensitive information extraction, sentiment analysis, and opinion mining. The tool enables data professionals to quickly analyze call logs for improvement in contact center operations.

ragapp

RAGapp is a tool designed for easy deployment of Agentic RAG in any enterprise. It allows users to configure and deploy RAG in their own cloud infrastructure using Docker. The tool is built using LlamaIndex and supports hosted AI models from OpenAI or Gemini, as well as local models using Ollama. RAGapp provides endpoints for Admin UI, Chat UI, and API, with the option to specify the model and Ollama host. The tool does not come with an authentication layer, requiring users to secure the '/admin' path in their cloud environment. Deployment can be done using Docker Compose with customizable model and Ollama host settings, or in Kubernetes for cloud infrastructure deployment. Development setup involves using Poetry for installation and building frontends.

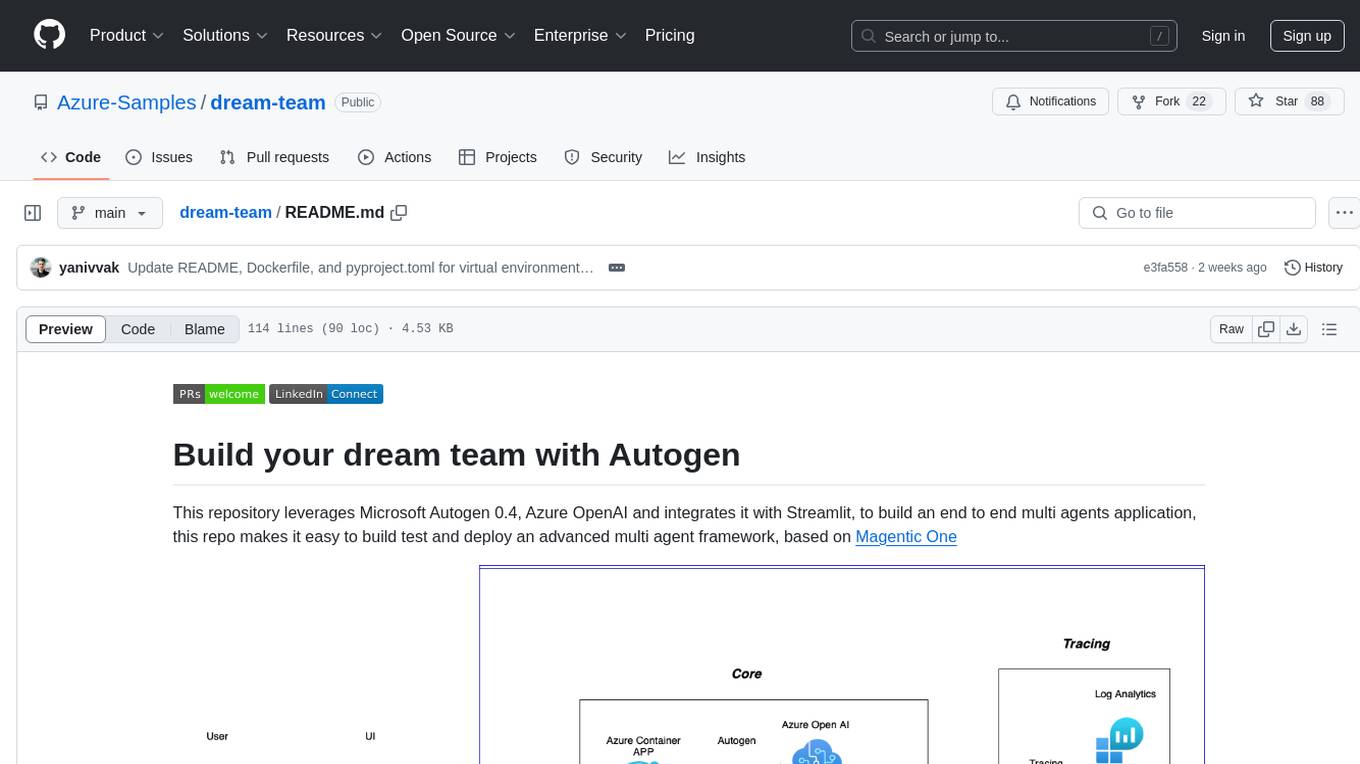

dream-team

Build your dream team with Autogen is a repository that leverages Microsoft Autogen 0.4, Azure OpenAI, and Streamlit to create an end-to-end multi-agent application. It provides an advanced multi-agent framework based on Magentic One, with features such as a friendly UI, single-line deployment, secure code execution, managed identities, and observability & debugging tools. Users can deploy Azure resources and the app with simple commands, work locally with virtual environments, install dependencies, update configurations, and run the application. The repository also offers resources for learning more about building applications with Autogen.

Open_Data_QnA

Open Data QnA is a Python library that allows users to interact with their PostgreSQL or BigQuery databases in a conversational manner, without needing to write SQL queries. The library leverages Large Language Models (LLMs) to bridge the gap between human language and database queries, enabling users to ask questions in natural language and receive informative responses. It offers features such as conversational querying with multiturn support, table grouping, multi schema/dataset support, SQL generation, query refinement, natural language responses, visualizations, and extensibility. The library is built on a modular design and supports various components like Database Connectors, Vector Stores, and Agents for SQL generation, validation, debugging, descriptions, embeddings, responses, and visualizations.

agentok

Agentok Studio is a visual tool built for AutoGen, a cutting-edge agent framework from Microsoft and various contributors. It offers intuitive visual tools to simplify the construction and management of complex agent-based workflows. Users can create workflows visually as graphs, chat with agents, and share flow templates. The tool is designed to streamline the development process for creators and developers working on next-generation Multi-Agent Applications.

coral-cloud

Coral Cloud Resorts is a sample hospitality application that showcases Data Cloud, Agents, and Prompts. It provides highly personalized guest experiences through smart automation, content generation, and summarization. The app requires licenses for Data Cloud, Agents, Prompt Builder, and Einstein for Sales. Users can activate features, deploy metadata, assign permission sets, import sample data, and troubleshoot common issues. Additionally, the repository offers integration with modern web development tools like Prettier, ESLint, and pre-commit hooks for code formatting and linting.

promptflow

**Prompt flow** is a suite of development tools designed to streamline the end-to-end development cycle of LLM-based AI applications, from ideation, prototyping, testing, evaluation to production deployment and monitoring. It makes prompt engineering much easier and enables you to build LLM apps with production quality.

guidellm

GuideLLM is a powerful tool for evaluating and optimizing the deployment of large language models (LLMs). By simulating real-world inference workloads, GuideLLM helps users gauge the performance, resource needs, and cost implications of deploying LLMs on various hardware configurations. This approach ensures efficient, scalable, and cost-effective LLM inference serving while maintaining high service quality. Key features include performance evaluation, resource optimization, cost estimation, and scalability testing.

AntSK

AntSK is an AI knowledge base and agent built with .NET 8, Blazor, and Semantic Kernel. It features a semantic kernel for accurate natural language processing, a memory kernel for continuous learning and knowledge storage, and a knowledge base for importing and querying knowledge from various document formats. It also includes a text-to-image generator integrated with Stable Diffusion, GPTs generation for creating personalized GPT models, API interfaces for integrating AntSK into other applications, an open API plugin system for extending functionality, and a .NET plugin system for integrating business functions. Other capabilities include real-time information retrieval from the internet, model management for adapting and managing models from different vendors, support for domestic models and databases for operation in a trusted environment, and planned model fine-tuning based on LLaMA-Factory.

For similar jobs

google.aip.dev

API Improvement Proposals (AIPs) are design documents that provide high-level, concise documentation for API development at Google. The goal of AIPs is to serve as the source of truth for API-related documentation and to facilitate discussion and consensus among API teams. AIPs are similar to Python's enhancement proposals (PEPs) and are organized into different areas within Google to accommodate historical differences in customs, styles, and guidance.

kong

Kong, or Kong API Gateway, is a cloud-native, platform-agnostic, scalable API Gateway distinguished for its high performance and extensibility via plugins. It also provides advanced AI capabilities with multi-LLM support. By providing functionality for proxying, routing, load balancing, health checking, authentication (and more), Kong serves as the central layer for orchestrating microservices or conventional API traffic with ease. Kong runs natively on Kubernetes thanks to its official Kubernetes Ingress Controller.

speakeasy

Speakeasy is a tool that helps developers create production-quality SDKs, Terraform providers, documentation, and more from OpenAPI specifications. It supports a wide range of languages, including Go, Python, TypeScript, Java, and C#, and provides features such as automatic maintenance, type safety, and fault tolerance. Speakeasy also integrates with popular package managers like npm, PyPI, Maven, and Terraform Registry for easy distribution.

apicat

ApiCat is an API documentation management tool that is fully compatible with the OpenAPI specification. With ApiCat, you can manage your APIs freely and efficiently. It integrates LLM capabilities to automatically generate API documentation and data models, and to create corresponding test cases based on the API content. By handling these non-coding tasks, ApiCat lets you focus your energy on the code itself.

aiohttp-pydantic

aiohttp-pydantic provides an aiohttp view that parses and validates requests. You use function annotations to declare what your HTTP verb handler methods expect, and aiohttp-pydantic parses the HTTP request, validates the data, and injects the parameters you want. It validates the query string, request body, URL path, and HTTP headers, and it can generate an OpenAPI Specification.

ain

Ain is a terminal HTTP API client designed for scripting input and processing output via pipes. It allows flexible organization of APIs using files and folders, supports shell scripts and executables for common tasks, handles URL encoding, and lets users share the resulting curl, wget, or httpie command line. Users can put values that change in environment variables or .env files, and pipe the API output for further processing. Ain targets users who work with many APIs; it uses a simple file format and relies on curl, wget, or httpie to make the actual calls.

OllamaKit

OllamaKit is a Swift library designed to simplify interactions with the Ollama API. It handles network communication and data processing, offering an efficient interface for Swift applications to communicate with the Ollama API. The library is optimized for use within Ollamac, a macOS app for interacting with Ollama models.

ollama4j

Ollama4j is a Java library that serves as a wrapper or binding for the Ollama server. It facilitates communication with the Ollama server and provides models for deployment. The tool requires Java 11 or higher and can be installed locally or via Docker. Users can integrate Ollama4j into Maven projects by adding the specified dependency. The tool offers API specifications and supports development tasks such as building and running unit and integration tests. Releases are automated through a GitHub Actions CI workflow. Areas for improvement include adhering to Java naming conventions, updating deprecated code, implementing logging, using Lombok, and enhancing request body creation. Contributions to the project are encouraged, whether reporting bugs, suggesting enhancements, or contributing code.