AI-LLM-ML-CS-Quant-Review

In-depth review of industry trends in AI, LLMs, Machine Learning, Computer Science, and Quantitative Finance.

Stars: 380

This repository provides an in-depth review of industry trends in AI, Large Language Models (LLMs), Machine Learning, Computer Science, and Quantitative Finance. It covers various topics such as NVIDIA GTC conferences, DeepSeek theory and applications, LangGraph & Cursor AI, LLM essentials, system design, computer systems, big data and AI in finance, C++ design patterns, high-frequency finance, machine learning for algorithmic trading, stochastic volatility modeling, and quant job interview questions.

README:

In-depth review of industry trends in AI, LLMs, Machine Learning, Computer Science, and Quantitative Finance.

-

2025 NVIDIA GTC Conference − Technical & Industrial Insight

-

2025 Agentic AI Summit Berkeley − Technical & Industrial Insight

- 1. NVIDIA GTC | AI Conference for Developers

- 2. Agentic AI Summit

- 3. LLM Essentials

- 4. DeepSeek & Kimi

- 5. 2025 Paper Reading

- 6. LangGraph & Cursor AI Projects

- 7. System Design

- 8. Computer Systems

- 9. Big Data and AI in Finance, Econometrics and Statistics Conference, UChicago 2024

- 10. C++ Design Patterns and Derivatives Pricing

- 11. High-Frequency Finance

- 12. Machine Learning for Algorithmic Trading

- 13. Stochastic Volatility Modeling

- 14. Quant Job Interview Questions

2025 Agentic AI Summit Berkeley − Technical & Industrial Insight

Dive into DeepSeek LLM, by Xiaojing Ding, 2025

DeepSeek Large Model High-Performance Core Technology and Multimodal Fusion Development, by Xiaohua Wang, 2025

Efficient Training in PyTorch, by Ailing Zhang, 2024

Generative AI on AWS, by Chris Fregly, 2024

LLM from Theory to Practice, by Qi Zhang, 2024

LangChain Scalable LLM Apps, by Teli Li, 2024

Foundations of LLMs - by Yuren Mao, Zhejiang University, 2024

30 Essential Questions and Answers on Machine Learning and AI - by Sebastian Raschka, 2025

Unveiling Large Model, by Liang Wen, 2025

Educative: Advanced RAG Techniques - Choosing the Right Approach | Notes

Educative: Build AI Agents and Multi-Agent Systems with CrewAI | Notes

Github: MiniGPT-and-DeepSeek-MLA-Multi-Head-Latent-Attention

Educative: Everything You Need to Know About DeepSeek | Notes

World Models | LinkedIn: World Model: 5 Debates Between Eric Xing's PAN & Yann LeCun’s JEPA

- Ed Donner: LLM Engineering: Master AI, Large Language Models & Agents

- Eden Marco: LangChain-Develop LLM powered applications with LangChain

- Eden Marco: LangGraph-Develop LLM powered AI agents with LangGraph

- Eden Marco: Cursor Course: FullStack development with Cursor AI Copilot

GitHub Projects

- MCP-MultiServer-Interoperable-Agent2Agent-LangGraph-AI-System

- Code-Interpreter-ReAct-LangChain-Agent

- LLM-Documentation-Chatbot

- Cognito-LangGraph-RAG

- LangGraph-Reflection-Researcher

- Cursor-FullStack-AI-App

System Design Interview, An Insider's Guide, Second Edition - by Alex Xu, 2020

Generative AI System Design Interview - by Ali Aminian, Hao Sheng, 2024

Machine Learning System Design Interview - by Ali Aminian, Alex Xu, 2023

Educative - Grokking System Design Interview | PDF Notes | Markdown Notes

Educative - Grokking the Modern System Design Interview | Markdown Notes

计算机底层的秘密,陆小风 - 2023,电子工业出版社

BDAI Conference, 2024 Oct 3-5, UChicago

Abstract PDF | Agenda PDF | High Level Overview Notes PDF | Conference Review Notes PDF

C++ Design Patterns and Derivatives Pricing (Mathematics, Finance and Risk, Series Number 2) 2nd Edition, by M. S. Joshi

An Introduction to High-Frequency Finance, by Ramazan Gençay, et al.

Machine Learning for Algorithmic Trading: Predictive models to extract signals from market and alternative data for systematic trading strategies with Python, 2nd Edition Paperback – by Stefan Jansen 2020

Stochastic Volatility Modeling (Chapman and Hall/CRC Financial Mathematics Series) 1st Edition, by Lorenzo Bergomi

Book Link | PDF Char 1 Intro | Markdown Char 1 Intro | PDF Char 2 Local Vol | Markdown Char 2 Local Vol

Quant Job Interview Questions and Answers (Second Edition) – by Mark Joshi 2013

For Tasks:

Click tags to check more tools for each tasksFor Jobs:

Alternative AI tools for AI-LLM-ML-CS-Quant-Review

Similar Open Source Tools

AI-LLM-ML-CS-Quant-Review

This repository provides an in-depth review of industry trends in AI, Large Language Models (LLMs), Machine Learning, Computer Science, and Quantitative Finance. It covers various topics such as NVIDIA GTC conferences, DeepSeek theory and applications, LangGraph & Cursor AI, LLM essentials, system design, computer systems, big data and AI in finance, C++ design patterns, high-frequency finance, machine learning for algorithmic trading, stochastic volatility modeling, and quant job interview questions.

AI-LLM-ML-CS-Quant-Overview

AI-LLM-ML-CS-Quant-Overview is a repository providing overview notes on AI, Large Language Models (LLM), Machine Learning (ML), Computer Science (CS), and Quantitative Finance. It covers various topics such as LangGraph & Cursor AI, DeepSeek, MoE (Mixture of Experts), NVIDIA GTC, LLM Essentials, System Design, Computer Systems, Big Data and AI in Finance, Econometrics and Statistics Conference, C++ Design Patterns and Derivatives Pricing, High-Frequency Finance, Machine Learning for Algorithmic Trading, Stochastic Volatility Modeling, Quant Job Interview Questions, Distributed Systems, Language Models, Designing Machine Learning Systems, Designing Data-Intensive Applications (DDIA), Distributed Machine Learning, and The Elements of Quantitative Investing.

AI-LLM-ML-CS-Quant-Readings

AI-LLM-ML-CS-Quant-Readings is a repository dedicated to taking notes on Artificial Intelligence, Large Language Models, Machine Learning, Computer Science, and Quantitative Finance. It contains a wide range of resources, including theory, applications, conferences, essentials, foundations, system design, computer systems, finance, and job interview questions. The repository covers topics such as AI systems, multi-agent systems, deep learning theory and applications, system design interviews, C++ design patterns, high-frequency finance, algorithmic trading, stochastic volatility modeling, and quantitative investing. It is a comprehensive collection of materials for individuals interested in these fields.

claude-code-ultimate-guide

The Claude Code Ultimate Guide is an exhaustive documentation resource that takes users from beginner to power user in using Claude Code. It includes production-ready templates, workflow guides, a quiz, and a cheatsheet for daily use. The guide covers educational depth, methodologies, and practical examples to help users understand concepts and workflows. It also provides interactive onboarding, a repository structure overview, and learning paths for different user levels. The guide is regularly updated and offers a unique 257-question quiz for comprehensive assessment. Users can also find information on agent teams coverage, methodologies, annotated templates, resource evaluations, and learning paths for different roles like junior developer, senior developer, power user, and product manager/devops/designer.

Embodied-AI-Guide

Embodied-AI-Guide is a comprehensive guide for beginners to understand Embodied AI, focusing on the path of entry and useful information in the field. It covers topics such as Reinforcement Learning, Imitation Learning, Large Language Model for Robotics, 3D Vision, Control, Benchmarks, and provides resources for building cognitive understanding. The repository aims to help newcomers quickly establish knowledge in the field of Embodied AI.

matrixone

MatrixOne is the industry's first database to bring Git-style version control to data, combined with MySQL compatibility, AI-native capabilities, and cloud-native architecture. It is a HTAP (Hybrid Transactional/Analytical Processing) database with a hyper-converged HSTAP engine that seamlessly handles transactional, analytical, full-text search, and vector search workloads in a single unified system—no data movement, no ETL, no compromises. Manage your database like code with features like instant snapshots, time travel, branch & merge, instant rollback, and complete audit trail. Built for the AI era, MatrixOne is MySQL-compatible, AI-native, and cloud-native, offering storage-compute separation, elastic scaling, and Kubernetes-native deployment. It serves as one database for everything, replacing multiple databases and ETL jobs with native OLTP, OLAP, full-text search, and vector search capabilities.

Awesome-Lists

Awesome-Lists is a curated list of awesome lists across various domains of computer science and beyond, including programming languages, web development, data science, and more. It provides a comprehensive index of articles, books, courses, open source projects, and other resources. The lists are organized by topic and subtopic, making it easy to find the information you need. Awesome-Lists is a valuable resource for anyone looking to learn more about a particular topic or to stay up-to-date on the latest developments in the field.

Awesome-Lists-and-CheatSheets

Awesome-Lists is a curated index of selected resources spanning various fields including programming languages and theories, web and frontend development, server-side development and infrastructure, cloud computing and big data, data science and artificial intelligence, product design, etc. It includes articles, books, courses, examples, open-source projects, and more. The repository categorizes resources according to the knowledge system of different domains, aiming to provide valuable and concise material indexes for readers. Users can explore and learn from a wide range of high-quality resources in a systematic way.

agentica

Agentica is a human-centric framework for building large language model agents. It provides functionalities for planning, memory management, tool usage, and supports features like reflection, planning and execution, RAG, multi-agent, multi-role, and workflow. The tool allows users to quickly code and orchestrate agents, customize prompts, and make API calls to various services. It supports API calls to OpenAI, Azure, Deepseek, Moonshot, Claude, Ollama, and Together. Agentica aims to simplify the process of building AI agents by providing a user-friendly interface and a range of functionalities for agent development.

prism-insight

PRISM-INSIGHT is a comprehensive stock analysis and trading simulation system based on AI agents. It automatically captures daily surging stocks via Telegram channel, generates expert-level analyst reports, and performs trading simulations. The system utilizes OpenAI GPT-4.1 for in-depth stock analysis and GPT-5 for investment strategy simulation. It also interacts with users via Anthropic Claude for Telegram conversations. The system architecture includes AI analysis agents, stock tracking, PDF conversion, and Telegram bot functionalities. Users can customize criteria for identifying surging stocks, modify AI prompts, and adjust chart styles. The project is open-source under the MIT license, and all investment decisions based on the analysis are the responsibility of the user.

llm-course

The llm-course repository is a collection of resources and materials for a course on Legal and Legislative Drafting. It includes lecture notes, assignments, readings, and other educational materials to help students understand the principles and practices of drafting legal documents. The course covers topics such as statutory interpretation, legal drafting techniques, and the role of legislation in the legal system. Whether you are a law student, legal professional, or someone interested in understanding the intricacies of legal language, this repository provides valuable insights and resources to enhance your knowledge and skills in legal drafting.

Video-ChatGPT

Video-ChatGPT is a video conversation model that aims to generate meaningful conversations about videos by combining large language models with a pretrained visual encoder adapted for spatiotemporal video representation. It introduces high-quality video-instruction pairs, a quantitative evaluation framework for video conversation models, and a unique multimodal capability for video understanding and language generation. The tool is designed to excel in tasks related to video reasoning, creativity, spatial and temporal understanding, and action recognition.

Code-Review-GPT-Gitlab

A project that utilizes large models to help with Code Review on Gitlab, aimed at improving development efficiency. The project is customized for Gitlab and is developing a Multi-Agent plugin for collaborative review. It integrates various large models for code security issues and stays updated with the latest Code Review trends. The project architecture is designed to be powerful, flexible, and efficient, with easy integration of different models and high customization for developers.

poco-agent

Poco Agent is a cloud-based tool that provides a secure sandbox environment for running tasks without affecting the host machine. It offers a modern UI with mobile adaptability, easy configuration through Docker, and extensive capabilities with support for MCP protocol and custom skills. Users can run tasks asynchronously and schedule them, even when the web interface is closed. Additional features include a built-in browser for internet research and GitHub repository integration. Poco Agent aims to be a more secure, visually appealing, and user-friendly alternative to OpenClaw.

For similar tasks

Awesome-Segment-Anything

Awesome-Segment-Anything is a powerful tool for segmenting and extracting information from various types of data. It provides a user-friendly interface to easily define segmentation rules and apply them to text, images, and other data formats. The tool supports both supervised and unsupervised segmentation methods, allowing users to customize the segmentation process based on their specific needs. With its versatile functionality and intuitive design, Awesome-Segment-Anything is ideal for data analysts, researchers, content creators, and anyone looking to efficiently extract valuable insights from complex datasets.

Time-LLM

Time-LLM is a reprogramming framework that repurposes large language models (LLMs) for time series forecasting. It allows users to treat time series analysis as a 'language task' and effectively leverage pre-trained LLMs for forecasting. The framework involves reprogramming time series data into text representations and providing declarative prompts to guide the LLM reasoning process. Time-LLM supports various backbone models such as Llama-7B, GPT-2, and BERT, offering flexibility in model selection. The tool provides a general framework for repurposing language models for time series forecasting tasks.

crewAI

CrewAI is a cutting-edge framework designed to orchestrate role-playing autonomous AI agents. By fostering collaborative intelligence, CrewAI empowers agents to work together seamlessly, tackling complex tasks. It enables AI agents to assume roles, share goals, and operate in a cohesive unit, much like a well-oiled crew. Whether you're building a smart assistant platform, an automated customer service ensemble, or a multi-agent research team, CrewAI provides the backbone for sophisticated multi-agent interactions. With features like role-based agent design, autonomous inter-agent delegation, flexible task management, and support for various LLMs, CrewAI offers a dynamic and adaptable solution for both development and production workflows.

Transformers_And_LLM_Are_What_You_Dont_Need

Transformers_And_LLM_Are_What_You_Dont_Need is a repository that explores the limitations of transformers in time series forecasting. It contains a collection of papers, articles, and theses discussing the effectiveness of transformers and LLMs in this domain. The repository aims to provide insights into why transformers may not be the best choice for time series forecasting tasks.

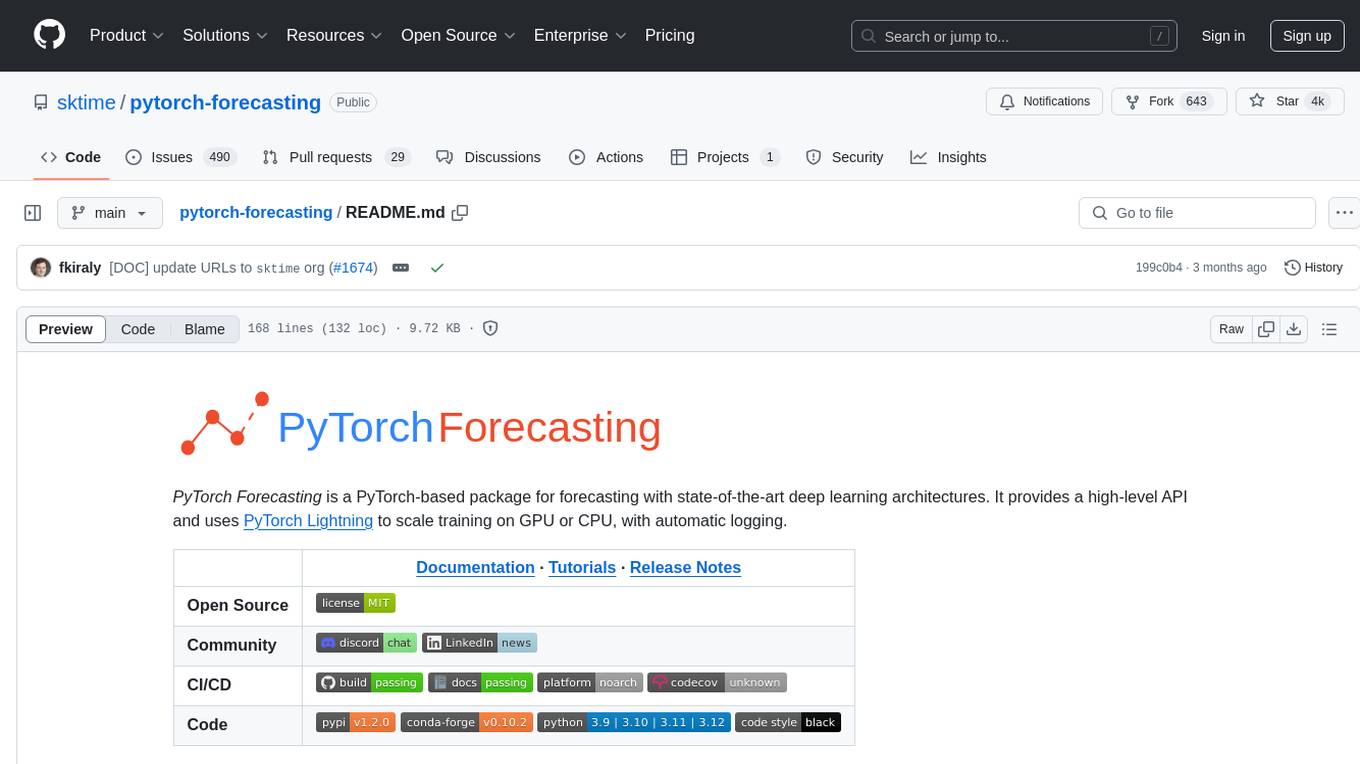

pytorch-forecasting

PyTorch Forecasting is a PyTorch-based package for time series forecasting with state-of-the-art network architectures. It offers a high-level API for training networks on pandas data frames and utilizes PyTorch Lightning for scalable training on GPUs and CPUs. The package aims to simplify time series forecasting with neural networks by providing a flexible API for professionals and default settings for beginners. It includes a timeseries dataset class, base model class, multiple neural network architectures, multi-horizon timeseries metrics, and hyperparameter tuning with optuna. PyTorch Forecasting is built on pytorch-lightning for easy training on various hardware configurations.

spider

Spider is a high-performance web crawler and indexer designed to handle data curation workloads efficiently. It offers features such as concurrency, streaming, decentralization, headless Chrome rendering, HTTP proxies, cron jobs, subscriptions, smart mode, blacklisting, whitelisting, budgeting depth, dynamic AI prompt scripting, CSS scraping, and more. Users can easily get started with the Spider Cloud hosted service or set up local installations with spider-cli. The tool supports integration with Node.js and Python for additional flexibility. With a focus on speed and scalability, Spider is ideal for extracting and organizing data from the web.

AI_for_Science_paper_collection

AI for Science paper collection is an initiative by AI for Science Community to collect and categorize papers in AI for Science areas by subjects, years, venues, and keywords. The repository contains `.csv` files with paper lists labeled by keys such as `Title`, `Conference`, `Type`, `Application`, `MLTech`, `OpenReviewLink`. It covers top conferences like ICML, NeurIPS, and ICLR. Volunteers can contribute by updating existing `.csv` files or adding new ones for uncovered conferences/years. The initiative aims to track the increasing trend of AI for Science papers and analyze trends in different applications.

pytorch-forecasting

PyTorch Forecasting is a PyTorch-based package designed for state-of-the-art timeseries forecasting using deep learning architectures. It offers a high-level API and leverages PyTorch Lightning for efficient training on GPU or CPU with automatic logging. The package aims to simplify timeseries forecasting tasks by providing a flexible API for professionals and user-friendly defaults for beginners. It includes features such as a timeseries dataset class for handling data transformations, missing values, and subsampling, various neural network architectures optimized for real-world deployment, multi-horizon timeseries metrics, and hyperparameter tuning with optuna. Built on pytorch-lightning, it supports training on CPUs, single GPUs, and multiple GPUs out-of-the-box.

For similar jobs

weave

Weave is a toolkit for developing Generative AI applications, built by Weights & Biases. With Weave, you can log and debug language model inputs, outputs, and traces; build rigorous, apples-to-apples evaluations for language model use cases; and organize all the information generated across the LLM workflow, from experimentation to evaluations to production. Weave aims to bring rigor, best-practices, and composability to the inherently experimental process of developing Generative AI software, without introducing cognitive overhead.

LLMStack

LLMStack is a no-code platform for building generative AI agents, workflows, and chatbots. It allows users to connect their own data, internal tools, and GPT-powered models without any coding experience. LLMStack can be deployed to the cloud or on-premise and can be accessed via HTTP API or triggered from Slack or Discord.

VisionCraft

The VisionCraft API is a free API for using over 100 different AI models. From images to sound.

kaito

Kaito is an operator that automates the AI/ML inference model deployment in a Kubernetes cluster. It manages large model files using container images, avoids tuning deployment parameters to fit GPU hardware by providing preset configurations, auto-provisions GPU nodes based on model requirements, and hosts large model images in the public Microsoft Container Registry (MCR) if the license allows. Using Kaito, the workflow of onboarding large AI inference models in Kubernetes is largely simplified.

PyRIT

PyRIT is an open access automation framework designed to empower security professionals and ML engineers to red team foundation models and their applications. It automates AI Red Teaming tasks to allow operators to focus on more complicated and time-consuming tasks and can also identify security harms such as misuse (e.g., malware generation, jailbreaking), and privacy harms (e.g., identity theft). The goal is to allow researchers to have a baseline of how well their model and entire inference pipeline is doing against different harm categories and to be able to compare that baseline to future iterations of their model. This allows them to have empirical data on how well their model is doing today, and detect any degradation of performance based on future improvements.

tabby

Tabby is a self-hosted AI coding assistant, offering an open-source and on-premises alternative to GitHub Copilot. It boasts several key features: * Self-contained, with no need for a DBMS or cloud service. * OpenAPI interface, easy to integrate with existing infrastructure (e.g Cloud IDE). * Supports consumer-grade GPUs.

spear

SPEAR (Simulator for Photorealistic Embodied AI Research) is a powerful tool for training embodied agents. It features 300 unique virtual indoor environments with 2,566 unique rooms and 17,234 unique objects that can be manipulated individually. Each environment is designed by a professional artist and features detailed geometry, photorealistic materials, and a unique floor plan and object layout. SPEAR is implemented as Unreal Engine assets and provides an OpenAI Gym interface for interacting with the environments via Python.

Magick

Magick is a groundbreaking visual AIDE (Artificial Intelligence Development Environment) for no-code data pipelines and multimodal agents. Magick can connect to other services and comes with nodes and templates well-suited for intelligent agents, chatbots, complex reasoning systems and realistic characters.