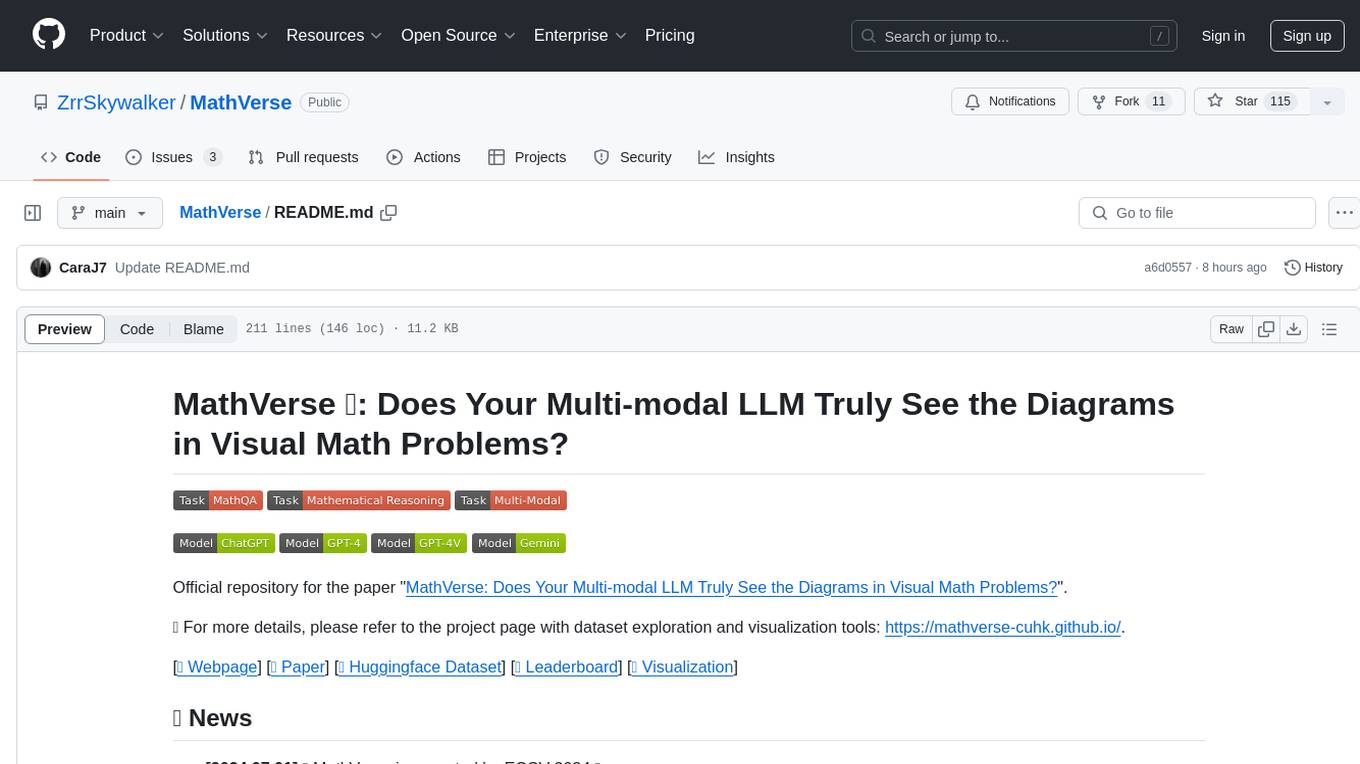

DecryptPrompt

Summaries of Prompt & LLM papers, open-source data & models, and AIGC applications

Stars: 2529

This repository does not provide a tool. It is a curated collection of resources on prompting and large language models: summaries of Prompt and LLM papers, open-source datasets and models, leaderboards, AIGC applications, and prompt-writing guides and blogs. For academics who feel overwhelmed by how fast the field is moving, it also recommends the essay Choose Your Weapon: Survival Strategies for Depressed AI Academics.

README:

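If the sudden arrival of LLMs has left you feeling discouraged, consider reading Choose Your Weapon: Survival Strategies for Depressed AI Academics in the main directory. The following content is continuously updated; Star to keep updated~

Contents, in order:

- Chinese and international LLMs, and domain-specific LLMs

- Training frameworks for agents, instruction tuning, and more

- Open-source instruction, pretraining, RLHF, dialogue, and agent training data

- AIGC applications

- Prompt-writing guides, 5-star blogs, and other resources

- Prompt and LLM papers organized by subfield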

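- Decrypt Prompt Series 1. Tuning-Free Prompt: GPT2 & GPT3 & LAMA & AutoPrompt

- Decrypt Prompt Series 2. Freeze the Prompt, Fine-tune the LM: T5 & PET & LM-BFF

- Decrypt Prompt Series 3. Freeze the LM, Tune the Prompt: Prefix-tuning & Prompt-tuning & P-tuning

- Decrypt Prompt Series 4. Upgrading to Instruction Tuning: Flan/T0/InstructGPT/TKInstruct

- Decrypt Prompt Series 5. APE + SELF = a code implementation of automated instruction-set construction

- Decrypt Prompt Series 6. The details of LoRA instruction tuning: stay calm, one hour really isn't enough~

- Decrypt Prompt Series 7. Preference alignment with RLHF: comparing OpenAI, DeepMind, and Anthropic

- Decrypt Prompt Series 8. Ultra-long inputs for LLMs without training: knowledge bases & Unlimiformer & PCW & NBCE

- Decrypt Prompt Series 9. Complex reasoning: chain-of-thought basics and advanced techniques

- Decrypt Prompt Series 10. How chain-of-thought (CoT) works

- Decrypt Prompt Series 11. Small models can do CoT too: making up for innate shortcomings with training

- Decrypt Prompt Series 12. Zero-finetuning paradigms for LLM agents: ReAct & Self Ask

- Decrypt Prompt Series 13. Instruction-tuning approaches for LLM agents: Toolformer & Gorilla

- Decrypt Prompt Series 14. LLM agents for search applications: WebGPT & WebGLM & WebCPM

- Decrypt Prompt Series 15. LLM agents for database applications: DIN & C3 & SQL-Palm & BIRD

- Decrypt Prompt Series 16. Lessons in LLM alignment: is less data better? LTD & LIMA & AlpaGasus

- Decrypt Prompt Series 17. Upgraded LLM alignment approaches: WizardLM & BackTranslation & SELF-ALIGN

- Decrypt Prompt Series 18. LLM agents: a world populated only by agents

- Decrypt Prompt Series 19. LLM agents in data analysis: Data-Copilot & InsightPilot

- Decrypt Prompt Series 20. LLM agents: revisiting retrieval diversity in RAG

- Decrypt Prompt Series 21. LLM agents: revisiting information density and quality in RAG retrieval

- Decrypt Prompt Series 22. LLM agents: reflections on RAG: is it still intelligence if we give up on compression?

- Decrypt Prompt Series 23. A complete mind map of LLM hallucination: taxonomy, attribution, detection, and mitigation

- Decrypt Prompt Series 24. New RLHF training strategies: SLiC-HF & DPO & RRHF & RSO

- Decrypt Prompt Series 25. Improving RLHF sample annotation: RLAIF & SALMON

- Decrypt Prompt Series 26. Human thinking vs. model thinking: abstraction and divergent thinking

- Decrypt Prompt Series 27. Lessons in LLM alignment: how to reduce the loss of general capabilities

- Decrypt Prompt Series 28. LLM agents in finance: FinMem & FinAgent

- Decrypt Prompt Series 29. LLM agents for massive real-world APIs: ToolLLM & AnyTool

- Decrypt Prompt Series 30. LLM agents that surf the web

- Decrypt Prompt Series 31. LLM agents that keep learning from experience

- Decrypt Prompt Series 32. Table understanding with LLMs: the text modality

- Decrypt Prompt Series 33. Chart understanding with LLMs: the multimodal edition

- Decrypt Prompt Series 34. Alternative paths for RLHF training: step by step & the student surpasses the teacher

- Decrypt Prompt Series 35. Standardizing prompts: a DSPy paper roundup with code examples

- Decrypt Prompt Series 36. Structured prompt writing and the UNIPROMPT optimization algorithm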

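| Leaderboard | Results |
|---|---|
| AlpacaEval: LLM-based automatic evaluation | Top open-source models: vicuna, openchat, wizardlm |
| Huggingface Open LLM Leaderboard | Evaluates open-source models only. Falcon took first place on MMLU; on the LLM leaderboard built from 4 Eleuther AI evaluation sets, vicuna took first place |
| https://opencompass.org.cn/ | Open-source leaderboard launched by Shanghai AI Laboratory |
| Berkeley LLM Arena leaderboard (now with a Chinese leaderboard) | Elo rating system; GPT4 holds first place as expected: GPT4 > Claude > GPT3.5 > Vicuna > others |
| CMU open-source chatbot evaluation app | ChatGPT > Vicuna > others; training on dialogue scenarios may matter |
| Z-Bench: Chinese evaluation by ZhenFund (真格基金) | Domestic Chinese models still have relatively low programming usability and perform at similar levels; both ChatGLM versions improved noticeably |
| Chain-of-thought evaluation | Leaderboard of complex problems such as GSM8k and MATH |
| InfoQ comprehensive LLM capability evaluation | Chinese-oriented: ChatGPT > ERNIE Bot (文心一言) > Claude > Spark (星火) |
| ToolBench: tool-use leaderboard | Compares tool-finetuned models with ChatGPT; provides evaluation scripts |
| AgentBench: reasoning and decision-making leaderboard | Launched by Tsinghua with several universities; evaluates reasoning and decision-making in task environments such as shopping, household, and operating-system scenarios |
| FlagEval | Subjective + objective LLM leaderboard from BAAI (智源) |
| Bird-Bench | NL2SQL leaderboard on very large databases that require domain knowledge and better reflect real-world applications; models still have a long way to go to catch up with humans |
| kola | World-knowledge-centered benchmark covering known encyclopedic knowledge and unseen content published online in the last ~90 days; evaluates knowledge memorization, understanding, application, and creation |
| CEVAL | Chinese knowledge evaluation covering 52 subjects; automatic evaluation, mostly multiple choice |
| CMMLU | Chinese knowledge and reasoning evaluation across 67 topics; automatic multiple-choice evaluation |
| LLMEval3 | Knowledge QA leaderboard from Fudan covering university assignments and exam questions; questions come from non-internet sources where possible so models cannot cheat |
| FinanceIQ | Financial multiple-choice evaluation dataset open-sourced by Du Xiaoman (度小满) |
| SWE-bench | Evaluates coding ability on real GitHub issues and PRs |
| Awesome-MLLM | Multimodal LLM leaderboard |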
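
The Berkeley arena leaderboard above ranks models with an Elo rating. As a rough illustration, here is the textbook chess-style Elo update for a single pairwise battle. The 400-point logistic scale is the standard Elo convention, and the K-factor of 32 is an assumption; the leaderboard's actual parameters and fitting procedure may differ.

```python
from __future__ import annotations


def expected_score(rating_a: float, rating_b: float) -> float:
    """Probability that A beats B under the standard Elo (base-10, 400-point) model."""
    return 1.0 / (1.0 + 10 ** ((rating_b - rating_a) / 400))


def elo_update(rating_a: float, rating_b: float, score_a: float,
               k: float = 32.0) -> tuple[float, float]:
    """Return both ratings after one battle.

    score_a is 1.0 if A wins, 0.0 if A loses, and 0.5 for a tie.
    k = 32 is an assumed K-factor, not necessarily what any leaderboard uses.
    """
    exp_a = expected_score(rating_a, rating_b)
    new_a = rating_a + k * (score_a - exp_a)
    # B's actual and expected scores are the complements of A's, so points are conserved.
    new_b = rating_b + k * ((1.0 - score_a) - (1.0 - exp_a))
    return new_a, new_b


if __name__ == "__main__":
    # Hypothetical battle: two models start at 1000 and model A wins.
    print(elo_update(1000.0, 1000.0, 1.0))  # (1016.0, 984.0)
```

An upset against a higher-rated model moves ratings more than an expected win does, because the update is proportional to the gap between the actual score and the expected score.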

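| Model link | Description |
|---|---|
| Phi-3-MINI-128K | Microsoft's small 3.8B model, again following a quality-over-quantity training philosophy |
| LLama3 | Meta is back with the commercially usable open-source Llama 3, reclaiming the throne~ |
| WizardLM-2-8x22B | Microsoft also released WizardLM-2, in 70B, 7B, and 8x22B sizes |
| OpenSora | We didn't get Sora from OpenAI, but we got OpenSora instead. Nice joke |
| GROK | Musk open-sourced Grok-1: 314B parameters, the largest to date, with weights and architecture fully released |
| Gemma | Google's open-source 2B and 7B models, free for commercial use |
| Mixtral8*7B | 8x7B MoE model with 32K context, trained with MegaBlocks and open-sourced by the "French OpenAI" (Mistral AI) |
| Mistral7B | Open-sourced by the "French OpenAI"; outperforms Llama 2 and is currently the best 7B model |
| Idefics2 | Hugging Face's Idefics2 8B multimodal model |
| Dolphin-2.2.1-Mistral-7B | Mistral7B fine-tuned on the dolphin dataset |
| Falcon | Trained by the UAE's Technology Innovation Institute on 1 trillion very high-quality tokens; 1B, 7B, and 40B models are open-source and free for commercial use. Money is clearly no object for its backers |
| Vicuna | Open-sourced by former Alpaca members and others: LLaMA 13B instruction-tuned on ShareGPT data; proposed using GPT4 to evaluate model quality |
| OpenChat | Llama-2 13B fine-tuned on 80k ShareGPT conversations; a top performer among open-source models |
| Guanaco | LLaMA 7B base, fine-tuned on the 52K alpaca data plus 534K multilingual instruction samples |
| MPT | New pretrained + instruction-tuned models from MosaicML; commercially usable, supporting ultra-long inputs of 84k tokens |
| RedPajama | After open-sourcing its pretraining data, the RedPajama project released 3B and 7B pretrained + instruction-tuned models |
| koala | LLaMA fine-tuned on open instruction sets such as alpaca and HC3 plus ChatGPT data such as ShareGPT; ranks high on leaderboards |
| ChatLLaMA | LLaMA fine-tuned with RLHF |
| Alpaca | Stanford's open-source model, obtained by fine-tuning 7B LLaMA on 52k samples |
| Alpaca-lora | LLaMA fine-tuned with LoRA |
| Dromedary | IBM self-aligned model with the LLaMA base |
| ColossalChat | Llama + RLHF fine-tuning, open-sourced by HPC-AI Tech |
| MiniGPT4 | Vicuna + BLIP2 fusion of text and vision |
| StackLLama | LLaMA trained on StackExchange data with SFT + RL |
| Cerebras | Cerebras open-sourced 7 models from 100M to 13B parameters, fully open from pretraining data to weights |
| Dolly-v2 | Commercially usable open-source instruction-tuned model; v2 is fine-tuned from Pythia (the earlier Dolly v1 was built on GPT-J-6B) |
| OpenChatKit | Built by OpenAI researchers: GPT-NeoX-20B fine-tuned, plus a 6B moderation model for filtering |
| MetaLM | Large-scale self-supervised pretrained model open-sourced by Microsoft |
| Amazon Titan | Amazon's own LLMs, added to AWS |
| OPT-IML | Meta's replication of GPT3, up to 175B, though it does not match GPT3's performance |
| Bloom | From BigScience; the largest is 176B |
| BloomZ | From BigScience; fine-tuned from Bloom |
| Galactica | Similar to Bloom, but trained specifically for the scientific domain |
| T0 | From BigScience; 3B to 11B models instruction-tuned from T5 |
| EXLLama | Python/C++/CUDA implementation of Llama for use with 4-bit GPTQ weight |
| LongChat | Long-context model obtained by fine-tuning llama-13b with the condensing rotary embedding technique |
| MPT-30B | MosaicML's open-source LLM trained with an 8K-token context |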

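| Model link | Description |
|---|---|
| Yuan2.0-M32 | Yuan 2.0-M32 MoE LLM |
| DeepSeek-v2 | DeepSeek's latest powerful MoE model (21B activated parameters); a reduced KV cache makes inference more efficient |
| Qwen1.5-MoE-A2.7B | MoE version of Qwen with faster inference |
| Qwen1.5 | Tongyi Qianwen upgraded to 1.5, supporting 32K context |
| Baichuan2 | Second generation of Baichuan, with 7B/13B Base and Chat versions |
| ziya2 | Ziya2, trained on top of Llama2, has finally finished training |
| InternLM2 7B+20B | SenseTime's InternLM2 (书生), supporting 200K context |
| InternLM-XComposer | Latest multimodal vision LLM |
| Orion-14B-LongChat | Multilingual model from OrionStar (猎户星空), supporting 320K context |
| ChatGLM3 | ChatGLM3 adds tool calling and other features, though its generalization remains to be evaluated |
| Yuan-2.0 | Inspur released Yuan2.0 in 2B, 51B, and 102B sizes |
| YI-200K | 6B and 34B models with ultra-long 200K context, open-sourced by 01.AI (零一万物) |
| XVERSE-256K | 13B LLM from XVERSE (元象), free for commercial use; the context is very long, but |
| LLama2-chinese | That didn't take long: Llama 2 with Chinese pretraining and fine-tuning is here~ |
| YuLan-chat2 | From Renmin University's Gaoling School of AI: Llama-2 with Chinese-English bilingual continued pretraining + instruction/dialogue tuning |
| BlueLM | Open-source LLM from the vivo AI Lab |
| zephyr-7B | The HuggingFace team trained Zephyr-7B on UltraChat and UltraFeedback |
| XWin-LM | llama2 + SFT + RLHF |
| Skywork | Commercially usable 13B LLM open-sourced by the Tiangong team at Kunlun Tech (昆仑万维) |
| Chinese-LLaMA-Alpaca | LLaMA with Chinese instruction tuning from HIT (哈工大) |
| Moss | Vindication for Fudan! All pretraining and instruction-tuning data and models are open-sourced; commercially usable |
| InternLM | InternLM (书生·浦语): a multilingual 100B-parameter base model trained on over a trillion tokens |
| Aquila2 | BAAI's updated Aquila2 series, including a new 34B model |
| Aquila | BAAI's open-source 7B LLM, free for commercial use |
| UltraLM series | ModelBest (面壁智能) open-sourced UltraLM 13B, the reward model UltraRM, and the critique model UltraCM |
| PandaLLM | Llama 2 with continued pretraining on Chinese Wikipedia + COIG instruction tuning |
| XVERSE | XVERSE's open-source 13B model, reportedly better than Llama 2 in Chinese |
| BiLLa | Three-stage training: LLaMA vocabulary expansion + pretraining; SFT on a 1:1 mix of pretraining and task data; SFT on instruction samples |
| Phoenix | CUHK open-sourced the Phoenix and Chimera LLMs; Bloom base, supporting 40+ languages |
| Wombat-7B | Open-sourced by Alibaba DAMO Academy; aligned with RRHF instead of reinforcement learning; alpaca base |
| TigerBot | TigerBot (虎博) open-sourced 7B and 180B models plus pretraining and fine-tuning corpora |
| Luotuo | LLaMA and ChatGLM with Chinese instruction tuning |
| OpenBuddy | Llama fine-tuned for multilingual dialogue |
| Chinese Vicuna | LLaMA 7B base trained on Belle + Guanaco data |
| Linly | LLaMA 7B base trained on 7 instruction datasets including belle, guanaco, pclue, firefly, CSL, and newscommentary |
| Firefly | Chinese 2.6B model with improved Chinese writing and classical-Chinese ability; the full training code is yet to be released, only the model is available for now |
| Baize | LLaMA fine-tuned on 100k self-chat dialogues |
| BELLE | Optimizes open-source models for Chinese with ChatGPT-generated data |
| Chatyuan | The earliest Chinese open-source dialogue model after ChatGPT; T5 architecture, derived from PromptCLUE below |
| PromptCLUE | Multi-task prompt language model |
| PLUG | LLM from Alibaba DAMO Academy; a download link is provided after you submit an application |
| CPM2.0 | CPM2.0 released by BAAI |
| GLM | Chinese-English bilingual 130B pretrained model from Tsinghua |
| BayLing | English/Chinese LLM based on LLaMA 7B/13B with enhanced cross-lingual alignment |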

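| Model | Description |
|---|---|
| Kosmos-2.5 | Microsoft's multimodal model, good at reading text-dense and table images |
| LLAVA-1.5 | Upgraded LLaVA 13B model from UW-Madison |
| MiniGPT-4 | Highest scores on cognition tasks |
| InternLM-XComposer | InternLM-XComposer2 (书生·浦语·灵笔2), good at free-form image-text understanding |
| mPLUG-DocOwl | Alibaba's multimodal model for document understanding |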

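| Model link | Description |
|---|---|
| PPLX-7B/70B | Perplexity.ai's Playground supports their own PPLX models and many SOTA LLMs, now including Gemma |
| kimi Chat | Moonshot's ultra-long-context LLM; accepts 200K of context and is unbeatable for document summarization |
| Wanzhi (万知) | Application built on the Yi base model; supports OCR document recognition |
| Yuewen (跃问) | LLM from StepFun (阶跃星辰), also strong at long text |
| iFlytek Spark (讯飞星火) | iFlytek |
| ERNIE Bot (文心一言) | Baidu |
| Tongyi Qianwen (通义千问) | Alibaba |
| Baichuan (百川) | Baichuan |
| ChatGLM | Zhipu Qingyan (智谱清言) |
| DeepSeek | DeepSeek (深度求索) |
| 360 Zhinao (360智脑) | 360 |
| Wukong (悟空) | ByteDance |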

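| Domain | Model link | Description |
|---|---|---|
| Medical | MedGPT | Released by Medlinker (医联) |
| Medical | MedPalm | Google's model obtained from Flan-PaLM by instruction prompt tuning on several types of medical QA data; also introduced the MultiMedQA benchmark |
| Medical | ChatDoctor | Instruction-tuned on 110K real doctor-patient dialogues + 5K ChatGPT-generated samples |
| Medical | Huatuo Med-ChatGLM | Chinese medical instruction data built from a medical knowledge graph with ChatGPT, plus multi-turn QA data built from medical literature with ChatGPT |
| Medical | Chinese-vicuna-med | Chinese-vicuna fine-tuned on cMedQA2 |
| Medical | OpenBioMed | Tsinghua AIR's open-source lightweight BioMedGPT: knowledge graphs & multimodal pretrained models for 20+ biological research areas |
| Medical | DoctorGLM | GLM fine-tuned on multi-turn dialogues and single-turn instruction samples from ChatDoctor + MedDialog + CMD |
| Medical | MedicalGPT-zh | QA generated by ChatGPT from a self-built medical database + scenario dialogues built with SELF across 16 scenarios |
| Medical | PMC-LLaMA | LLaMA fine-tuned on medical papers |
| Medical | PULSE | Bloom with fine-tuning + continued pretraining |
| Medical | NHS-LLM | Model fine-tuned on ChatGPT-generated medical QA and dialogues |
| Medical | ShenNong medical LLM (神农医疗大模型) | LLaMA-7B fine-tuned on 110k+ traditional Chinese medicine (TCM) instruction samples generated around entities in a TCM knowledge graph |
| Medical | Qihuang Wendao LLM (岐黄问道大模型) | Made of 3 sub-models: clinical treatment for diagnosed diseases, symptom-based clinical diagnosis and treatment, and TCM health regimen; appears aimed at B2B deployment |
| Medical | Zhongjing | Chinese medical LLM based on Ziya-LLaMA with medical pretraining + SFT + RLHF |
| Medical | MeChat | Mental-health counseling; 56k multi-turn dialogues rewritten with ChatGPT |
| Medical | SoulChat | Mental-health counseling; ChatGLM-6B jointly instruction-tuned on Chinese long-text instructions and multi-turn empathetic dialogue data |
| Medical | MindChat | MindChat-Baichuan-13B, Qwen-7B, and MindChat-InternLM-7B use different base models, each strengthened in safety, empathy, and alignment with human values |
| Medical | DISC-MedLLM | Baichuan SFT on QA pairs built from a disease knowledge graph, QA pairs converted into single-turn dialogues, reconstructed real-world data, and data filtered by human preference |
| Legal | LawGPT-zh | 52k single-turn QA obtained by cleaning the CrimeKgAssitant dataset with ChatGPT + scenario QA generated by ChatGPT from the 9k most essential provisions in the Law Handbook of the People's Republic of China + knowledge QA pairs built from text with ChatGPT |
| Legal | LawGPT | LLaMA with an expanded vocabulary and continued pretraining + instruction tuning on QA built from legal provisions |
| Legal | Lawyer Llama | Instruction-tuned on a legal dataset of consultations + legal exams + dialogues |
| Legal | LexiLaw | Instruction-tuned on a legal dataset of QA + concept explanations from books + statute texts |
| Legal | ChatLaw | Legal LLM from Peking University with a novel application format: like an in-channel feed, all features are integrated into the conversation |
| Legal | Luwen (录问模型) | 40GB of continued pretraining + 100K instruction tuning on Baichuan; its knowledge base combines embeddings, intent, and keyword association |
| Finance | OpenGPT | Framework for generating domain LLM instruction samples + fine-tuning |
| Finance | Qianyuan BigBang financial 200M model (乾元BigBang金融2亿模型) | Financial-domain pretraining + task fine-tuning |
| Finance | Du Xiaoman 100B-scale financial LLM (度小满千亿金融大模型) | Financial + Chinese pretraining and fine-tuning on top of Bloom-176B |
| Finance | Cornucopia (聚宝盆) | LLaMA-family base models instruction-tuned on Chinese financial knowledge |
| Finance | PIXIU | Curates multiple financial task datasets, including time-series data, for instruction tuning |
| Finance | FinGPT | Fine-tuning on traditional financial tasks, or ChatGPT-generated financial tool calls |
| Finance | CFGPT | Financial pretraining + instruction tuning + retrieval augmentation such as RAG |
| Finance | DISC-FinLLM | Fudan's financial system combining several fine-tuned models: financial knowledge QA, financial NLP tasks, financial computation, and financial retrieval QA |
| Finance | InvestLM | LLaMA-65B instruction-tuned on financial data built from CFA exams, SEC filings, StackExchange investment questions, etc. |
| Finance | DeepMoney | Fine-tuned from yi-34b-200k on financial research reports |
| Coding | Starcoder | Code LLM trained on 80+ programming languages plus GitHub issues and commits |
| Coding | ChatSQL | NL2SQL built on ChatGLM |
| Coding | codegeex | 13B multilingual code LLM, pretrained + fine-tuned |
| Coding | codegeex2 | CodeGeeX2-6B, built on ChatGLM2 with further pretraining on 600B tokens of code |
| Coding | StableCode | 560B tokens of multilingual pretraining + alignment on 120,000 Alpaca instructions |
| Coding | SQLCoder | 15B model fine-tuned from StarCoder; outperforms gpt-3.5 |
| Math | MathGPT | LLM developed in-house by TAL Education (好未来) for math enthusiasts and research institutions worldwide, centered on problem-solving and problem-explanation algorithms |
| Math | MammoTH | LLaMA fine-tuned on the MathInstruct dataset built with CoT + PoT; outperforms WizardLM on OOD datasets |
| Math | MetaMath | Solves math problems with backward reasoning; built the new MetaMathQA dataset to fine-tune Llama 2 |
| Transportation | TransGPT | LLaMA-7B + domain pretraining on 346K samples + fine-tuning on 58K domain instruction dialogues (from document QA) |
| Transportation | TrafficGPT | ChatGPT + prompts handle planning and call specialized TFM models from the traffic-flow domain; the TFMs perform data analysis, task execution, visualization, and other operations |
| Science & technology | Mozi | RedPajama pretraining + paper QA dataset + research dialogues augmented with ChatGPT |
| Astronomy | StarGLM | Instruction tuning on astronomy knowledge; the project is ongoing, with continued astronomy pretraining + KG planned later |
| Writing | China Literature web-novel LLM (阅文-网文大模型介绍) | In closed beta for contracted authors; focuses on generating supporting passages such as fight scenes, plot transitions, scene descriptions, character settings, and world-building |
| Writing | MediaGPT | LLaMA-7B with an expanded vocabulary + instruction tuning; instructions cover 80 news-writing subtasks defined by Chinese media experts |
| E-commerce | EcomGPT | LLM instruction-tuned on e-commerce tasks with 2.5 million instruction samples; base model is BLOOMZ |
| Plant science | PLLaMa | Domain model extending Llama via continued pretraining on plant-science papers + SFT |
| Evaluation | Auto-J | SJTU's open-source 13B judge model for evaluating alignment |
| Evaluation | JudgeLM | BAAI's open-source judge model JudgeLM, designed to evaluate various LLMs efficiently and accurately |
| Evaluation | CritiqueLLM | Scoring model from Zhipu AI; supports evaluation with or without reference text |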

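| Tool description | Link |
|---|---|
| FlexFlow: model serving and inference framework | https://github.com/flexflow/FlexFlow |
| Medusa: inference acceleration framework for sampled decoding; can be combined with other strategies | https://github.com/FasterDecoding/Medusa |
| FlexGen: LLM inference architecture with CPU offloading | https://github.com/FMInference/FlexGen |
| VLLM: ultra-fast inference framework, the unsung hero behind Vicuna and Arena; up to 24x faster than HF; supports many base models | https://github.com/vllm-project/vllm |
| Streamingllm: a new attention-sink scheme that extends the model's inference length without fine-tuning while also speeding up inference | https://github.com/mit-han-lab/streaming-llm |
| llama2.c: llama2 inference in pure C | https://github.com/karpathy/llama2.c |
| Guidance: framework for controlling LLM generation, suited to all kinds of interleaved generation | https://github.com/guidance-ai/guidance |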
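
The StreamingLLM idea, as its paper describes it, is to keep the KV entries of a few initial "attention sink" tokens plus a sliding window of the most recent tokens, and to evict everything in between. The toy sketch below shows only that eviction policy, tracking token positions instead of key/value tensors. The class name and the sizes (4 sinks, a small window) are illustrative assumptions, and the real method also re-assigns positions inside the cache, which this sketch leaves out.

```python
from __future__ import annotations

from collections import deque


class SinkWindowCache:
    """Toy KV-cache eviction policy in the spirit of StreamingLLM (hypothetical class).

    Keeps the first `num_sinks` entries permanently (the attention sinks) and a
    rolling window of the `window` most recent entries. Entries are token
    positions here; a real cache would hold per-layer key/value tensors.
    """

    def __init__(self, num_sinks: int = 4, window: int = 8) -> None:
        self.num_sinks = num_sinks
        self.sinks: list[int] = []
        # deque with maxlen silently drops the oldest entry once full.
        self.recent: deque[int] = deque(maxlen=window)

    def append(self, position: int) -> None:
        if len(self.sinks) < self.num_sinks:
            self.sinks.append(position)
        else:
            self.recent.append(position)

    def kept_positions(self) -> list[int]:
        return self.sinks + list(self.recent)


if __name__ == "__main__":
    cache = SinkWindowCache(num_sinks=4, window=3)
    for pos in range(10):
        cache.append(pos)
    print(cache.kept_positions())  # [0, 1, 2, 3, 7, 8, 9]
```

Memory stays bounded at `num_sinks + window` entries no matter how long generation runs, which is what lets the approach stream past the training context length without fine-tuning.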

| Application | Link |
|---|---|
| Wordware.ai: a new way to build flows, with Notion-like magic commands | https://www.wordware.ai/?utm_source=toolify |
| Coze: free | https://www.coze.com/ |
| Dify | https://dify.ai/zh |
| Anakin | https://app.anakin.ai/discover |
| Flowise | https://github.com/FlowiseAI/Flowise/blob/main/README-ZH.md |
| Microsoft Power Automate | https://www.microsoft.com/zh-cn/power-platform/products/power-automate |
| Mind Studio: limited use | https://youai.ai/ |
| QuestFlow: paid | https://www.questflow.ai/ |

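| Tool | Description |
|---|---|
| Alexandria | Turning the entire internet into a vector index, starting with arXiv papers; free to download |
| RapidAPI | Aims to unify all the world's APIs; the largest API hub, with call success rates, latency, and more. A true favorite! |
| Composio | Tool APIs that integrate with langchain, crewAI, and others |
| PyTesseract | OCR parsing service |
| EasyOCR | A genuinely user-friendly OCR service |
| surya | OCR service |
| Vary | Megvii's multimodal LLM that converts PDFs directly to Markdown |
| LLamaParse | PDF parsing service from LlamaIndex, 1,000 free per day |
| Jina-Cobert | Jina AI's open-source Chinese/English/German long-text embeddings with an 8192-token context |
| BGE-M3 | BAAI's open-source multilingual long-text embedding with sparse + dense representations and an 8192-token context |
| BCE | NetEase's open-source embedding model, better suited to RAG tasks |
| PreFLMR-VIT-G | Cambridge's open-source multimodal retriever |
| openparse | Open-source text parsing and chunking service that analyzes the document's visual layout before splitting |
| layout-parser | Open-source OCR document layout detection with relatively high accuracy |
| AdvancedLiterateMachinery | Document parsing and image understanding from Alibaba's OCR team |
| ragflow-deepdoc | Document recognition and parsing provided by ragflow |
| FireCrawl | Excellent tool for crawling URLs and generating markdown |
| Jina-Reader | Converts web pages into a model-readable format |
| spRAG | Injects contextual representations and automatically combines context to improve completeness |
| knowledge-graph | Automatic knowledge graph construction tool |
| Marker-API | PDF-to-Markdown service |
| MinerU | Document recognition pipeline with layout detection, reading-order sorting, formula recognition, and OCR |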

- Weavel APE

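- DSPY: Pydantic-like standardized prompts, plus optimization of few-shot example selection

- PromptPerfect: one-click prompt optimization plugin supporting multiple modalities and models

- LangGPT: structured prompt-writing templates

- Hebbia.ai Matrix: a task-flow solution that claims to handle more of the analytical and multi-step reasoning scenarios where RAG fails

- genspark.ai: a truly generative search engine covering travel and shopping, where the page content itself is generated by the model, arguably a whole new form of search; the chat model is pushed to a sidebar in a supporting role, and the site's style resembles Xiaohongshu (小红书)

- MindSearch: deeper and broader RAG by dynamically building graph nodes

- Metaso (秘塔搜索): search app combining mind maps, tables, and multimodal QA

- iAsk: overseas general-purpose search app with source filtering

- You.COM: supports several retrieval-augmented QA modes

- Walles.AI: assistant combining image chat, text chat, chatpdf, web-copilot, and more

- webpilot.ai: a browsing and retrieval plugin that works better than ChatGPT's built-in Web Browsing, better suited to complex search scenarios; an API is now available too

- New Bing: requires a VPN from mainland China

- Perplexity.ai: also requires a VPN; a ChatGPT-powered search engine that feels better than Bing, adding related recommendations and follow-up questions on top of what Bing offers

- sider.ai: browser extension for chatting with multiple models and multimodal interactions

- 360 AI Search (360AI搜索): 360's AI search, somewhat similar to Metaso

- MyLens.AI: retrieval augmentation that can output timelines, mind maps, and other formats

- Globe Explorer: searches knowledge related to the query and returns it with images in a knowledge-graph-like structure

- Tiangong AI Search (天工AI搜索): retrieval augmentation with the same three modes as You.com

- MiKU Search (MiKU搜索): more event-oriented search

- Kaisou AI Search (开搜AI搜索): free and ad-free, straight to the results

- EXA: a new search engine that aims to replace Google as the content retrieval layer for AI

- PeopleAlsoAsk: expands the user's question into a mind map

- supermemory: personal knowledge management project; anything you save as a note becomes searchable; mostly used as a browser extension

- glean: startup for enterprise knowledge search and project-management search, helping employees find information quickly and companies consolidate it

- Mem: personal knowledge management (e.g., knowledge graphs), backed by OpenAI funding

- GPT-Crawler: with simple configuration, extracts text from web pages to build a knowledge base and, from that, a custom GPT

- ChatInsight: enterprise document management and document-grounded chat

- Afforai: one of the better personal knowledge management and note apps so far; supports multi-document comparison; hopefully it will integrate with Zotero

- Kimi-Chat: unbeatable at understanding very, very long documents: single-document summaries, structured multi-document comparison, it does it all, no matter the length!

- ChatDoc: an upgraded ChatPDF (requires a VPN) that adds table parsing and QA on a selected region; very strong PDF recognition

- AskyourPdf: another app for uploading PDFs for QA and summarization

- DocsGPT: one of the earliest general-purpose chat-with-documents solutions

- ChatPDF: a Chinese ChatPDF; after you upload a PDF it suggests the top 5 likely questions, then answers and retrieves from the document conversationally; reads 30,000 characters in 10 seconds

- AlphaBox: document QA tool built around personal folder management

- Miracleplus: AI content site operated entirely by AI agents

- goatstack: customizable paper subscription site; every day, AI selects, summarizes, and pushes relevant papers to users

- Clay: sales lead management and enrichment

- Lumina: open-source paper search + AI summaries, with search relevance reportedly 5x better than Google's

- SCISPACE: the ideal tool for paper research, combining QA over its full paper library with knowledge-base QA over your own uploaded PDFs. It also supports related-paper discovery and explanations of highlighted passages, which can be saved to a notebook for later; a strong combination of product and algorithms.

- ELICIT: similar to SCISPACE; generates a paper's related work in one click

- Consensus: AI-powered paper search, multi-paper summarization, and viewpoint comparison. It ranks very highly, but I personally think its search has room for improvement

- Aminer: paper search, summarization, QA, and symbolic rewriting of search keywords; however, its paper knowledge-base QA hallucinates badly

- cool.paper: Kimi-based paper-reading site developed by Su Jianlin (苏神)

- OpenRead: Chinese product for paper writing and reading; helps generate literature reviews and offers NotionAI-like smart Markdown for writing

- ChatPaper: given keywords, automatically downloads the latest arXiv papers and summarizes them; can be tried on huggingface

- researchgpt: similar to ChatPDF; supports downloading arXiv papers and then extracting their key points conversationally

- ChatGPT-academic: yet another gradio-based bundle of paper polishing, summarization, and other features; many of them are worth borrowing

- BriefGPT: daily arXiv updates with summaries and keyword extraction to help researchers follow the latest work; nice UI

- OpenResearcher: a RAG solution for arXiv papers that also provides an evaluation benchmark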

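- ZeroGPT: detects GPT-generated text

- AFFiNE AI: a creative writing platform that combines writing and drawing

- SudoWrite: a creative card-based AI writing app, mainly for long-form writing of many kinds

- Cyber Maliang (赛博马良): as the name suggests, customizable AI staff crawl the web 24/7 for topics you follow and push them to editors for further creation

- Yanmo AI (研墨AI): content-creation app for consulting

- ChatMind: mind maps generated by ChatGPT, with rich templates and decent generalization; acquired by XMind

- Fanwenmiao Writing (范文喵写作): covers the full writing flow of topic selection, outlining, and drafting

- WriteSonic: AI writing with chat and targeted creation such as ad copy and product descriptions; web retrieval is a highlight; supports Chinese

- copy.ai: WriteSonic competitor whose highlight is that, like paper citations, every sentence links to a source website and can be copied into the Markdown editor on the right in one click. Super handy!

- NotionAI: smart Markdown, genuinely great! While writing, invoke AI with a command to polish, expand, retrieve content, or suggest ideas

- AIEditor: open-source alternative to NotionAI that works with any model

- Eidos: offline alternative to NotionAI; build a local notebook and swap in your own model

- Hix-AI: offers both a copilot mode and an all-in-one writing mode

- AI-Write: a workflow-style writing tool that worked well in my own use

- Jasper: same as above; they're all competitors, haha

- copy.down: Chinese marketing-copy generation; targeted creation only, with keyword-to-copy generation

- Weaver AI: content-creation app from Waveform Intelligence (波形智能) supporting writing for many scenarios

- ChatExcel: controls Excel calculations with instructions; not very useful for people fluent in Excel, somewhat useful for those who aren't

- mindShow: free + paid PPT creation tool; custom PPT templates are not good enough yet

- Miaoxiang Finance (妙想金融): LLM application from East Money (东方财富)

- Zhixiaozhu (支小助): LLM question answering enhanced with thinking-framework matching

- Tongyi Dianjin (通义点金): Tongyi Qianwen's research-report reading and stock QA modules

- Linq: uses AI to simplify financial analysts' research

- BrightWave: AI financial research assistant

- Reportify: QA and summarization over company announcements, news, and earnings calls

- Alpha Pai (Alpha派): one-stop platform combining Kimi-powered meeting minutes, investment research QA, and financial news

- Kuangke FOF robo-advisor (况客FOF智能投顾): fund LLM application for fund advisory, supporting NL2SQL-style data queries and fund comparisons

- HithinkGPT: Tonghuashun's (同花顺) financial LLM Wencai (问财), covering querying, analysis, comparison, interpretation, prediction, and more

- FinChat.io: trained on the latest financial data, earnings-call transcripts, quarterly and annual reports, investment books, and more

- TigerGPT: from Tiger Brokers; GPT4-based stock analysis, earnings analysis, and investment QA

- ChatFund: the first fund LLM, released by Jiuquan'er (韭圈儿); appears to use multi-task instruction tuning and is fully integrated with the app's existing data features, from fund selection to holdings analysis

- ScopeChat: crypto app; like ChatLaw, it embeds tool components in the conversation

- AInvest: stock investing, combining BI analysis with a discussion forum (which feels like it is turning into a Xueqiu-style popularity index)

- Wuya Infinity (无涯Infinity): financial LLM from Transwarp (星环科技)

- Cao Zhi (曹植): DataGrand's (达观) financial LLM combining data2text and other financial tasks to support report writing

- Miaoxiang (妙想): East Money's in-house financial LLM, open for trial, though applications never seem to get approved

- Hundsun LightGPT (恒生LightGPT): continued pretraining on finance + plugin-based design

- bondGPT: GPT4 applied to the bond market; applications open

- IndexGPT: generative investment advisor under development at JPMorgan

- Alpha: ChatGPT-powered finance app supporting stock lookups, asset analysis and diagnosis, earnings summaries, etc.

- Composer: combines quant strategies with AI; build and backtest portfolios via chat or drag-and-drop

- Finalle.ai: real-time financial data streams connected to an LLM

- OpenBB: open-source investment framework; OpenBB + LlamaIndex is essentially an LLM + API solution for querying, analyzing, and visualizing financial data in natural language

- Mr.-Ranedeer-: a personalized learning environment built on prompts and GPT-4: personalized exercises + model-generated solutions

- AI Topiah: AI character chat from Lingxin Intelligence (聆心智能); I chatted with Luffy a little and the chuunibyou spirit was definitely burning

- chatbase: emotional role-play chat; not tried yet

- Vana: virtual DNA; create a virtual version of yourself by chatting! A flashy concept

- NexusGPT: AutoGPT gets a job: the first all-AI freelance platform

- cognosys: the most popular web-based AutoGPT, though when I tried it I nearly laughed my head off; no spoilers, try it yourself

- godmode: AutoGPT that lets a human interact at every step

- agentgpt: basic AutoGPT

- AgentQL: interact with web pages through queries; the waitlist is open

- OpenDevin: open-source take on Devin, the agent from Cognition AI that significantly improved coding ability on SWE-Bench

- AlphaCodium: Flow Engineering to raise the overall code pass rate

- AutoDev: AI coding assistant

- Codium: an open-source coding Copilot is here

- Copilot: paid

- Fauxpilot: local open-source alternative to Copilot

- Codeium: Copilot alternative with a free tier and plugins for many editors!

- Wolverine: Python script that lets code debug itself

- Screenshot-to-code: generates HTML code directly from web page screenshots

- TableAgent: data-analysis and machine-learning agent from DataCanvas (九章云极)

- SwiftAgent: data-analysis agent from Shushi Technology (数势科技)

- Kyligence Copilot: AI assistant for Kyligence's one-stop metrics platform, supporting conversational metric search, anomaly attribution, and more

- ai2sql: veteran text2sql company; more complete than sqltranslate, with SQL syntax checking, formatting, and formula generation

- chat2query: text2sql; compared with the two above, it supports more natural text instructions and more complex analytical SQL

- OuterBase: text2sql with an eye-catching design! Combines spreadsheets, mysql, and dashboards; better suited to data analysts

- Chat2DB: smart general-purpose database SQL client and reporting tool

- ChatBI: conversational data analysis platform from NetEase Shufan (网易数帆)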

- DataHerald: Text2SQL

- WrenAI:Text2SQL

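- Vanna: locally deployable Python-based NL2SQL + Plotly charting framework

- dreamstudio.ai: the pioneer, Stable Diffusion, with a trial quota

- midjourney: a pioneer, focused mainly on artistic styles

- Dall.E: and that completes the big three

- ControlNet: adds controllability to image generation

- gemo.ai: multimodal chatbot with text, image, and video generation

- storybird: generates storybooks from prompts, which you can also sell

- Magnific.ai: AI image upscaler and enhancer built by a two-person team

- Civitai.com: AI image-sharing site that also supports generation with many models

- IdeoGram.ai: image generation from a company founded by Google Brain researchers; a Midjourney alternative

- Jimeng (即梦): ByteDance's text-to-image and text-to-video platform

- FLUX1.0: open-source AI image model comparable to MidJourney; Chinese support is poor, so prompt in English

- Imagen 3: Google's latest text-to-image model

- Morph Studio: Stability AI enters video creation

- Spark Huijing (星火绘镜): from iFlytek Spark; automatically generates film storyboard scripts from instructions and produces storyboard videos; quality is fairly high, but editing and compositing require third-party software

- Jichuang (即创): from Douyin; e-commerce short-video creation that generates marketing videos from product descriptions

- Dujia (度加): supports direct text2video, but quality is far below Spark Huijing, closer to an animated-PPT style; still, one-click generation is very convenient, and further editing and asset replacement are supported

- Yizhen Miaochuang (一帧秒创): image/text-to-video and digital-human narration; in my experience slightly weaker than Dujia

- Elai: generates digital-human narration videos directly from an outline; the free trial only allows a few minutes

- AIs for you: personalized subscription feed for AI news and products, tracking new products in real time

- SimilarGPTs: traffic rankings of AI products across the web

- GPTSeek: the most valuable GPT apps, as voted by users

- ProductHunt: tech product site and a hub for all kinds of trending AI products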

- AI-Product-Index

- AI-Products-All-In-One

- TheRunDown: GPT apps by category

- Awesome AI Agents: a collection of agent applications

- GPT Demo

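- AI-Bot: directory of all kinds of AI tools

- AI-Search: AI application search site

- StackRadar: directory of high-tech applications

- askaitools: search engine for AI products

- LangGPT: structured prompt handbook

- Prompt Guide 101: task-by-task prompt-writing guide

- OpenAI Cookbook: examples of using OpenAI models ⭐

- PromptPerfect: fighting magic with magic: enter a raw prompt and a model optimizes it for a target; after trying it I was left speechless. It can target models that take different kinds of prompts, such as Diffusion, ChatGPT, and Dalle

- ClickPrompt: generates prompts for various tools, including Diffusion and ChatGPT; requires an OpenAI Key

- ChatGPT ShortCut: very comprehensive prompt examples for all kinds of scenarios; you can upvote them after use! ⭐

- Full ChatGPT Prompts + Resources: all sorts of prompt examples, covering different scenarios from the above

- learning Prompt: a comprehensive prompt engineering tutorial plus a collection of real-world applications, including many advanced scenarios of LLMs calling agents ⭐

- The art of asking chatgpt for high quality answers: a whole book on writing prompts; the link is to the Chinese translation; fairly basic usage

- Prompt-Engineer-Guide: a combined tutorial similar to learning Prompt (they cite each other, seriously?!), with a better categorized index ⭐

- AI Alignment Forum: forum for discussing the latest papers and views on RLHF and other alignment topics

- Langchain: Chat with your data: Andrew Ng's hands-on LLM course

- 构筑大语言模型应用:应用开发与架构设计 (Building LLM Applications: Development and Architecture Design): an open-source e-book on LLMs in real-world applications

- Large Language Models: Application through Production: LLM application course from edX

- Minbpe: tokenizer teaching code that Karpathy released after leaving OpenAI

- LLM-VIZ: visualization of LLM architectures, supporting the GPT series

- 我如何夺冠新加坡首届 GPT-4 提示工程大赛 [译] (How I Won Singapore's First GPT-4 Prompt Engineering Competition, translated): packed with practical prompt tips

- Prompt-with-Claude: Claude's prompt guide and manual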

- OpenAI ChatGPT Intro

- OpenAI InstructGPT intro

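- AllenAI's analysis of ChatGPT's abilities: How does GPT Obtain its Ability? Tracing Emergent Abilities of Language Models to their Sources ⭐

- Huggingface's analysis of ChatGPT's abilities: The techniques behind ChatGPT: RLHF, IFT, CoT, Red teaming, and more

- Stephen Wolfram's analysis of ChatGPT's abilities: What Is ChatGPT Doing and Why Does It Work?

- A collection of ChatGPT analyses

- AGI: history and current state

- Zhang Junlin (张俊林), 通向AGI之路:大型语言模型(LLM)技术精要 (The Road to AGI: Essentials of Large Language Model Technology)

- Zhihu answers: What technical optimizations or breakthroughs came with OpenAI's release of GPT-4?

- The difficulties of catching up with ChatGPT, and alternatives

- Compression is generalization, and generalization is intelligence ⭐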

- LLM Powered Autonomous Agents ⭐

- All You Need to Know to Build Your First LLM App ⭐

- GPT-4 Architecture, Infrastructure, Training Dataset, Costs, Vision, MoE

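- A book by OpenAI researchers: Why Greatness Cannot Be Planned

- LLM research report from the investment research firm Shixiang (拾象) (the article contains links to request its two PPT decks)

- Qiming Venture Partners (启明创投): State of Generative AI 2023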

- How to Use AI to Do Stuff: An Opinionated Guide

- Llama 2: an incredible open LLM

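- New book by the creator of the Wolfram Language: 这就是ChatGPT (What Is ChatGPT Doing)

- From Google, an interesting exploration of grokking in large models: Do Machine Learning Models Memorize or Generalize? ⭐

- New series by Yao Fu (符尧): An Initial Exploration of Theoretical Support for Language Model Data Engineering. Part 1: Pretraining

- Overview of innovative LLM projects at the MiraclePlus (奇绩创坛) Fall 2023 Demo Day

- The Power of Prompting: Microsoft's chief scientist on using prompts in vertical domains

- The Bitter Lesson: lessons from AI research summarized by the father of reinforcement learning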

- LlamaIndex: Beyond RAG: Building Advanced Context-Augmented LLM Applications

- OpenAI Model-Spec: the first version of OpenAI's model specification, which balances ideal model behavior against real-world constraints

- Build a Large Language Model from Scratch

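- genai-handbook: a very comprehensive handbook collecting resources on every aspect of LLMs

- On agents and RAG: mainstream development techniques and future applications

- MIT Technology Review interviews OpenAI engineers

- Transcript of Lu Qi's (陆奇) latest talk: My Worldview of Large Models | Episode 14

- OpenAI's chief scientist's latest lecture on what LMs learn in unsupervised pretraining: An observation on Generalization ⭐

- The Complete Beginners Guide To Autonomous Agents: thoughts on AI agents from Octane AI founder Matt Schlicht

- Large Language Models (in 2023): the latest LLM talk by an OpenAI scientist

- OpenAI DevDay session video: A Survey of Techniques for Maximizing LLM Performance; if you cannot access it from mainland China, search the title for notes

- Interview with Yang Zhilin (杨植麟) of Moonshot AI (月之暗面), worth reading closely ⭐

- Andrew Ng's latest talk: the future of AI agent workflows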

- LLM-Bootcamp 2023

- Extrinsic Hallucinations in LLMs

- https://github.com/dongguanting/In-Context-Learning_PaperList

- https://github.com/thunlp/PromptPapers

- https://github.com/Timothyxxx/Chain-of-ThoughtsPapers

- https://github.com/thunlp/ToolLearningPapers

- https://github.com/MLGroupJLU/LLM-eval-survey

- https://github.com/thu-coai/PaperForONLG

- https://github.com/khuangaf/Awesome-Chart-Understanding

- A Survey of Large Language Models

- Pre-train, Prompt, and Predict: A Systematic Survey of Prompting Methods in Natural Language Processing ⭐

- Paradigm Shift in Natural Language Processing

- Pre-Trained Models: Past, Present and Future

- What Language Model Architecture and Pretraining Objective Work Best for Zero-Shot Generalization? ⭐

- Towards Reasoning in Large Language Models: A Survey

- Reasoning with Language Model Prompting: A Survey ⭐

- An Overview on Language Models: Recent Developments and Outlook ⭐

- A Survey of Large Language Models [version updated June 29]

- Unifying Large Language Models and Knowledge Graphs: A Roadmap

- Augmented Language Models: a Survey ⭐

- Domain Specialization as the Key to Make Large Language Models Disruptive: A Comprehensive Survey

- Challenges and Applications of Large Language Models

- The Rise and Potential of Large Language Model Based Agents: A Survey

- Large Language Models for Information Retrieval: A Survey

- AI Alignment: A Comprehensive Survey

- Trends in Integration of Knowledge and Large Language Models: A Survey and Taxonomy of Methods, Benchmarks, and Applications

- Large Models for Time Series and Spatio-Temporal Data: A Survey and Outlook

- A Survey on Language Models for Code

- Model-as-a-Service (MaaS): A Survey

- In Context Learning

- LARGER LANGUAGE MODELS DO IN-CONTEXT LEARNING DIFFERENTLY

- How does in-context learning work? A framework for understanding the differences from traditional supervised learning

- Why Can GPT Learn In-Context? Language Models Secretly Perform Gradient Descent as Meta-Optimizers ⭐

- Rethinking the Role of Demonstrations: What Makes In-Context Learning Work? ⭐

- Trained Transformers Learn Linear Models In-Context

- In-Context Learning Creates Task Vectors

- Emergent abilities

- Sparks of Artificial General Intelligence: Early experiments with GPT-4

- Emergent Abilities of Large Language Models ⭐

- LANGUAGE MODELS REPRESENT SPACE AND TIME

- Are Emergent Abilities of Large Language Models a Mirage?

- Capability evaluation

- IS CHATGPT A GENERAL-PURPOSE NATURAL LANGUAGE PROCESSING TASK SOLVER?

- Can Large Language Models Infer Causation from Correlation?

- Holistic Evaluation of Language Models

- Harnessing the Power of LLMs in Practice: A Survey on ChatGPT and Beyond

- Theory of Mind May Have Spontaneously Emerged in Large Language Models

- Beyond The Imitation Game: Quantifying And Extrapolating The Capabilities Of Language Models

- Do Models Explain Themselves? Counterfactual Simulatability of Natural Language Explanations

- Demystifying GPT Self-Repair for Code Generation

- Evidence of Meaning in Language Models Trained on Programs

- Can Explanations Be Useful for Calibrating Black Box Models

- On the Robustness of ChatGPT: An Adversarial and Out-of-distribution Perspective

- Language acquisition: do children and language models follow similar learning stages?

- Language is primarily a tool for communication rather than thought

- Domain capabilities

- Capabilities of GPT-4 on Medical Challenge Problems

- Can Generalist Foundation Models Outcompete Special-Purpose Tuning? Case Study in Medicine

- Tuning-Free Prompt

- GPT2: Language Models are Unsupervised Multitask Learners

- GPT3: Language Models are Few-Shot Learners ⭐

- LAMA: Language Models as Knowledge Bases?

- AutoPrompt: Eliciting Knowledge from Language Models

- Fix-Prompt LM Tuning

- T5: Exploring the Limits of Transfer Learning with a Unified Text-to-Text Transformer

- PET-TC(a): Exploiting Cloze Questions for Few Shot Text Classification and Natural Language Inference ⭐

- PET-TC(b): PETSGLUE It’s Not Just Size That Matters: Small Language Models Are Also Few-Shot Learners

- GenPET: Few-Shot Text Generation with Natural Language Instructions

- LM-BFF: Making Pre-trained Language Models Better Few-shot Learners ⭐

- ADEPT: Improving and Simplifying Pattern Exploiting Training

- Fix-LM Prompt Tuning

- Prefix-tuning: Optimizing continuous prompts for generation

- Prompt-tuning: The power of scale for parameter-efficient prompt tuning ⭐

- P-tuning: GPT Understands Too ⭐

- WARP: Word-level Adversarial ReProgramming

- LM + Prompt Tuning

- P-tuning v2: Prompt Tuning Can Be Comparable to Fine-tuning Universally Across Scales and Tasks

- PTR: Prompt Tuning with Rules for Text Classification

- PADA: Example-based Prompt Learning for on-the-fly Adaptation to Unseen Domains

- Fix-LM Adapter Tuning

- LORA: LOW-RANK ADAPTATION OF LARGE LANGUAGE MODELS ⭐

- LST: Ladder Side-Tuning for Parameter and Memory Efficient Transfer Learning

- Parameter-Efficient Transfer Learning for NLP

- INTRINSIC DIMENSIONALITY EXPLAINS THE EFFECTIVENESS OF LANGUAGE MODEL FINE-TUNING

- DoRA: Weight-Decomposed Low-Rank Adaptation

- Representation Tuning

- ReFT: Representation Finetuning for Language Models

- GLM-130B: AN OPEN BILINGUAL PRE-TRAINED MODEL

- PaLM: Scaling Language Modeling with Pathways

- PaLM 2 Technical Report

- GPT-4 Technical Report

- Backpack Language Models

- LLaMA: Open and Efficient Foundation Language Models

- Llama 2: Open Foundation and Fine-Tuned Chat Models

- Sheared LLaMA: Accelerating Language Model Pre-training via Structured Pruning

- OpenBA: An Open-sourced 15B Bilingual Asymmetric seq2seq Model Pre-trained from Scratch

- Mistral 7B

- Ziya2: Data-centric Learning is All LLMs Need

- MEGABLOCKS: EFFICIENT SPARSE TRAINING WITH MIXTURE-OF-EXPERTS

- TUTEL: ADAPTIVE MIXTURE-OF-EXPERTS AT SCALE

- Phi1- Textbooks Are All You Need ⭐

- Phi1.5- Textbooks Are All You Need II: phi-1.5 technical report

- Phi-3 Technical Report: A Highly Capable Language Model Locally on Your Phone

- Gemini: A Family of Highly Capable Multimodal Models

- In-Context Pretraining: Language Modeling Beyond Document Boundaries

- LLAMA PRO: Progressive LLaMA with Block Expansion

- QWEN TECHNICAL REPORT

- Fewer Truncations Improve Language Modeling

- ChatGLM: A Family of Large Language Models from GLM-130B to GLM-4 All Tools

- Classic approaches

- Flan: FINETUNED LANGUAGE MODELS ARE ZERO-SHOT LEARNERS ⭐

- Flan-T5: Scaling Instruction-Finetuned Language Models

- ExT5: Towards Extreme Multi-Task Scaling for Transfer Learning

- Instruct-GPT: Training language models to follow instructions with human feedback ⭐

- T0: MULTITASK PROMPTED TRAINING ENABLES ZERO-SHOT TASK GENERALIZATION

- Natural Instructions: Cross-Task Generalization via Natural Language Crowdsourcing Instructions

- Tk-INSTRUCT: SUPER-NATURALINSTRUCTIONS: Generalization via Declarative Instructions on 1600+ NLP Tasks

- ZeroPrompt: Scaling Prompt-Based Pretraining to 1,000 Tasks Improves Zero-shot Generalization

- Unnatural Instructions: Tuning Language Models with (Almost) No Human Labor

- INSTRUCTEVAL: Towards Holistic Evaluation of Instruction-Tuned Large Language Models

- Scaling laws for SFT data

- LIMA: Less Is More for Alignment ⭐

- Maybe Only 0.5% Data is Needed: A Preliminary Exploration of Low Training Data Instruction Tuning

- AlpaGasus: Training A Better Alpaca with Fewer Data

- InstructionGPT-4: A 200-Instruction Paradigm for Fine-Tuning MiniGPT-4

- Instruction Mining: High-Quality Instruction Data Selection for Large Language Models

- Visual Instruction Tuning with Polite Flamingo

- Exploring the Impact of Instruction Data Scaling on Large Language Models: An Empirical Study on Real-World Use Cases

- Scaling Relationship on Learning Mathematical Reasoning with Large Language Models

- WHEN SCALING MEETS LLM FINETUNING: THE EFFECT OF DATA, MODEL AND FINETUNING METHOD

- New alignment/fine-tuning approaches

- WizardLM: Empowering Large Language Models to Follow Complex Instructions ⭐

- Becoming self-instruct: introducing early stopping criteria for minimal instruct tuning

- Self-Alignment with Instruction Backtranslation ⭐

- Mixture-of-Experts Meets Instruction Tuning: A Winning Combination for Large Language Models

- Goat: Fine-tuned LLaMA Outperforms GPT-4 on Arithmetic Tasks

- PROMPT2MODEL: Generating Deployable Models from Natural Language Instructions

- OpinionGPT: Modelling Explicit Biases in Instruction-Tuned LLMs

- Improving Language Model Negotiation with Self-Play and In-Context Learning from AI Feedback

- Human-like systematic generalization through a meta-learning neural network

- Magicoder: Source Code Is All You Need

- Beyond Human Data: Scaling Self-Training for Problem-Solving with Language Models

- Generative Representational Instruction Tuning

- InsCL: A Data-efficient Continual Learning Paradigm for Fine-tuning Large Language Models with Instructions

- The Instruction Hierarchy: Training LLMs to Prioritize Privileged Instructions

- Magpie: Alignment Data Synthesis from Scratch by Prompting Aligned LLMs with Nothing

- Instruction data generation

- APE: LARGE LANGUAGE MODELS ARE HUMAN-LEVEL PROMPT ENGINEERS ⭐

- SELF-INSTRUCT: Aligning Language Model with Self Generated Instructions ⭐

- iPrompt: Explaining Data Patterns in Natural Language via Interpretable Autoprompting

- Flipped Learning: Guess the Instruction! Flipped Learning Makes Language Models Stronger Zero-Shot Learners

- Fairness-guided Few-shot Prompting for Large Language Models

- Instruction Induction: From Few Examples to Natural Language Task Descriptions

- SELF-QA: Unsupervised Knowledge Guided Alignment

- GPT Self-Supervision for a Better Data Annotator

- The Flan Collection Designing Data and Methods

- Self-Consuming Generative Models Go MAD

- InstructEval: Systematic Evaluation of Instruction Selection Methods

- Overwriting Pretrained Bias with Finetuning Data

- Improving Text Embeddings with Large Language Models

- MAGPIE: Alignment Data Synthesis from Scratch by Prompting Aligned LLMs with Nothing

- Scaling Synthetic Data Creation with 1,000,000,000 Personas

- Reducing the loss of general capabilities

- How Abilities in Large Language Models are Affected by Supervised Fine-tuning Data Composition

- TWO-STAGE LLM FINE-TUNING WITH LESS SPECIALIZATION AND MORE GENERALIZATION

- Fine-tuning experience / experiment reports

- BELLE: Exploring the Impact of Instruction Data Scaling on Large Language Models: An Empirical Study on Real-World Use Cases

- Baize: An Open-Source Chat Model with Parameter-Efficient Tuning on Self-Chat Data

- A Comparative Study between Full-Parameter and LoRA-based Fine-Tuning on Chinese Instruction Data for Large LM

- Exploring ChatGPT’s Ability to Rank Content: A Preliminary Study on Consistency with Human Preferences

- Towards Better Instruction Following Language Models for Chinese: Investigating the Impact of Training Data and Evaluation

- Fine tuning LLMs for Enterprise: Practical Guidelines and Recommendations

- Others

- Crosslingual Generalization through Multitask Finetuning

- Cross-Task Generalization via Natural Language Crowdsourcing Instructions

- UNIFIEDSKG: Unifying and Multi-Tasking Structured Knowledge Grounding with Text-to-Text Language Models

- PromptSource: An Integrated Development Environment and Repository for Natural Language Prompts

- ROLELLM: BENCHMARKING, ELICITING, AND ENHANCING ROLE-PLAYING ABILITIES OF LARGE LANGUAGE MODELS

- LaMDA: Language Models for Dialog Applications

- Sparrow: Improving alignment of dialogue agents via targeted human judgements ⭐

- BlenderBot 3: a deployed conversational agent that continually learns to responsibly engage

- How NOT To Evaluate Your Dialogue System: An Empirical Study of Unsupervised Evaluation Metrics for Dialogue Response Generation

- DialogStudio: Towards Richest and Most Diverse Unified Dataset Collection for Conversational AI

- Enhancing Chat Language Models by Scaling High-quality Instructional Conversations

- DiagGPT: An LLM-based Chatbot with Automatic Topic Management for Task-Oriented Dialogue

- Basic & advanced usage

- [zero-shot-COT] Large Language Models are Zero-Shot Reasoners ⭐

- [few-shot COT] Chain of Thought Prompting Elicits Reasoning in Large Language Models ⭐

- SELF-CONSISTENCY IMPROVES CHAIN OF THOUGHT REASONING IN LANGUAGE MODELS

- LEAST-TO-MOST PROMPTING ENABLES COMPLEX REASONING IN LARGE LANGUAGE MODELS ⭐

- Tree of Thoughts: Deliberate Problem Solving with Large Language Models

- Plan-and-Solve Prompting: Improving Zero-Shot Chain-of-Thought Reasoning by Large Language Models

- Decomposed Prompting: A Modular Approach for Solving Complex Tasks

- Successive Prompting for Decomposing Complex Questions

- Verify-and-Edit: A Knowledge-Enhanced Chain-of-Thought Framework

- Beyond Chain-of-Thought, Effective Graph-of-Thought Reasoning in Large Language Models

- Tree-of-Mixed-Thought: Combining Fast and Slow Thinking for Multi-hop Visual Reasoning

- LAMBADA: Backward Chaining for Automated Reasoning in Natural Language

- Algorithm of Thoughts: Enhancing Exploration of Ideas in Large Language Models

- Graph of Thoughts: Solving Elaborate Problems with Large Language Models

- Progressive-Hint Prompting Improves Reasoning in Large Language Models

- LARGE LANGUAGE MODELS CAN LEARN RULES

- DIVERSITY OF THOUGHT IMPROVES REASONING ABILITIES OF LARGE LANGUAGE MODELS

- From Complex to Simple: Unraveling the Cognitive Tree for Reasoning with Small Language Models

- Take a Step Back: Evoking Reasoning via Abstraction in Large Language Models

- LARGE LANGUAGE MODELS AS OPTIMIZERS

- Beyond Chain-of-Thought: A Survey of Chain-of-X Paradigms for LLMs

- Buffer of Thoughts: Thought-Augmented Reasoning with Large Language Models

- Abstraction-of-Thought Makes Language Models Better Reasoners

- Faithful Logical Reasoning via Symbolic Chain-of-Thought

- Inductive or Deductive? Rethinking the Fundamental Reasoning Abilities of LLMs

- Domain-specific CoT [Math, Code, Tabular, QA]

- Solving Quantitative Reasoning Problems with Language Models

- SHOW YOUR WORK: SCRATCHPADS FOR INTERMEDIATE COMPUTATION WITH LANGUAGE MODELS

- Solving math word problems with process- and outcome-based feedback

- CodeRL: Mastering Code Generation through Pretrained Models and Deep Reinforcement Learning

- T-SciQ: Teaching Multimodal Chain-of-Thought Reasoning via Large Language Model Signals for Science Question Answering

- LEARNING PERFORMANCE-IMPROVING CODE EDITS

- Chain of Code: Reasoning with a Language Model-Augmented Code Emulator

- Mechanism analysis

- Towards Understanding Chain-of-Thought Prompting: An Empirical Study of What Matters ⭐

- TEXT AND PATTERNS: FOR EFFECTIVE CHAIN OF THOUGHT IT TAKES TWO TO TANGO

- Towards Revealing the Mystery behind Chain of Thought: a Theoretical Perspective

- Large Language Models Can Be Easily Distracted by Irrelevant Context

- Chain-of-Thought Reasoning Without Prompting

- CoT distillation into small models

- Specializing Smaller Language Models towards Multi-Step Reasoning ⭐

- Teaching Small Language Models to Reason

- Large Language Models are Reasoning Teachers

- Distilling Reasoning Capabilities into Smaller Language Models

- The CoT Collection: Improving Zero-shot and Few-shot Learning of Language Models via Chain-of-Thought Fine-Tuning

- Distilling System 2 into System 1

- Automatic construction/selection of CoT examples

- STaR: Self-Taught Reasoner, Bootstrapping Reasoning With Reasoning

- AutoCOT: AUTOMATIC CHAIN OF THOUGHT PROMPTING IN LARGE LANGUAGE MODELS

- Large Language Models Can Self-Improve

- Active Prompting with Chain-of-Thought for Large Language Models

- COMPLEXITY-BASED PROMPTING FOR MULTI-STEP REASONING

- others

- OlaGPT: Empowering LLMs With Human-like Problem-Solving Abilities

- Challenging BIG-Bench tasks and whether chain-of-thought can solve them

- Large Language Models are Better Reasoners with Self-Verification

- ThoughtSource: A central hub for large language model reasoning data

- Two Failures of Self-Consistency in the Multi-Step Reasoning of LLMs

- DeepMind

- Teaching language models to support answers with verified quotes

- Sparrow: Improving alignment of dialogue agents via targeted human judgements ⭐

- STATISTICAL REJECTION SAMPLING IMPROVES PREFERENCE OPTIMIZATION

- Reinforced Self-Training (ReST) for Language Modeling

- SLiC-HF: Sequence Likelihood Calibration with Human Feedback

- CALIBRATING SEQUENCE LIKELIHOOD IMPROVES CONDITIONAL LANGUAGE GENERATION

- REWARD DESIGN WITH LANGUAGE MODELS

- Final-Answer RL: Solving math word problems with process- and outcome-based feedback

- Solving math word problems with process- and outcome-based feedback

- Beyond Human Data: Scaling Self-Training for Problem-Solving with Language Models

- BOND: Aligning LLMs with Best-of-N Distillation

- OpenAI

- PPO: Proximal Policy Optimization Algorithms ⭐

- Deep Reinforcement Learning from Human Preferences

- Fine-Tuning Language Models from Human Preferences

- learning to summarize from human feedback

- InstructGPT: Training language models to follow instructions with human feedback ⭐

- Scaling Laws for Reward Model Overoptimization ⭐

- WEAK-TO-STRONG GENERALIZATION: ELICITING STRONG CAPABILITIES WITH WEAK SUPERVISION ⭐

- PRM: Let's Verify Step by Step

- Training Verifiers to Solve Math Word Problems [prerequisite for PRM]

- OpenAI Super Alignment Blog

- LLM Critics Help Catch LLM Bugs

- PROVER-VERIFIER GAMES IMPROVE LEGIBILITY OF LLM OUTPUTS

- Rule Based Rewards for Language Model Safety

- Anthropic

- A General Language Assistant as a Laboratory for Alignment

- Red Teaming Language Models to Reduce Harms: Methods, Scaling Behaviors, and Lessons Learned

- Training a Helpful and Harmless Assistant with Reinforcement Learning from Human Feedback ⭐

- Constitutional AI: Harmlessness from AI Feedback ⭐

- Pretraining Language Models with Human Preferences

- The Capacity for Moral Self-Correction in Large Language Models

- Sleeper Agents: Training Deceptive LLMs that Persist Through Safety Training

- AllenAI, RL4LM: IS REINFORCEMENT LEARNING (NOT) FOR NATURAL LANGUAGE PROCESSING BENCHMARKS

- Improved methods

- RRHF: Rank Responses to Align Language Models with Human Feedback without tears

- Chain of Hindsight Aligns Language Models with Feedback

- AlpacaFarm: A Simulation Framework for Methods that Learn from Human Feedback

- RAFT: Reward rAnked FineTuning for Generative Foundation Model Alignment

- RLAIF: Scaling Reinforcement Learning from Human Feedback with AI Feedback

- Training Socially Aligned Language Models in Simulated Human Society

- RAIN: Your Language Models Can Align Themselves without Finetuning

- Generative Judge for Evaluating Alignment

- PEERING THROUGH PREFERENCES: UNRAVELING FEEDBACK ACQUISITION FOR ALIGNING LARGE LANGUAGE MODELS

- SALMON: SELF-ALIGNMENT WITH PRINCIPLE-FOLLOWING REWARD MODELS

- Large Language Model Unlearning ⭐

- ADVERSARIAL PREFERENCE OPTIMIZATION ⭐

- Preference Ranking Optimization for Human Alignment

- A Long Way to Go: Investigating Length Correlations in RLHF

- ENABLE LANGUAGE MODELS TO IMPLICITLY LEARN SELF-IMPROVEMENT FROM DATA

- REWARD MODEL ENSEMBLES HELP MITIGATE OVEROPTIMIZATION

- LEARNING OPTIMAL ADVANTAGE FROM PREFERENCES AND MISTAKING IT FOR REWARD

- ULTRAFEEDBACK: BOOSTING LANGUAGE MODELS WITH HIGH-QUALITY FEEDBACK

- MOTIF: INTRINSIC MOTIVATION FROM ARTIFICIAL INTELLIGENCE FEEDBACK

- STABILIZING RLHF THROUGH ADVANTAGE MODEL AND SELECTIVE REHEARSAL

- Shepherd: A Critic for Language Model Generation

- LEARNING TO GENERATE BETTER THAN YOUR LLM

- Fine-Grained Human Feedback Gives Better Rewards for Language Model Training

- Principle-Driven Self-Alignment of Language Models from Scratch with Minimal Human Supervision

- Direct Preference Optimization: Your Language Model is Secretly a Reward Model

- HIR The Wisdom of Hindsight Makes Language Models Better Instruction Followers

- Aligner: Achieving Efficient Alignment through Weak-to-Strong Correction

- A Minimaximalist Approach to Reinforcement Learning from Human Feedback

- PANDA: Preference Adaptation for Enhancing Domain-Specific Abilities of LLMs

- Weak-to-Strong Search: Align Large Language Models via Searching over Small Language Models

- Weak-to-Strong Extrapolation Expedites Alignment

- Is DPO Superior to PPO for LLM Alignment? A Comprehensive Study

- Token-level Direct Preference Optimization

- SimPO: Simple Preference Optimization with a Reference-Free Reward

- AUTODETECT: Towards a Unified Framework for Automated Weakness Detection in Large Language Models

- META-REWARDING LANGUAGE MODELS: Self-Improving Alignment with LLM-as-a-Meta-Judge

- RL investigations

- UNDERSTANDING THE EFFECTS OF RLHF ON LLM GENERALISATION AND DIVERSITY

- A LONG WAY TO GO: INVESTIGATING LENGTH CORRELATIONS IN RLHF

- THE TRICKLE-DOWN IMPACT OF REWARD (IN-)CONSISTENCY ON RLHF

- Open Problems and Fundamental Limitations of Reinforcement Learning from Human Feedback

- HUMAN FEEDBACK IS NOT GOLD STANDARD

- CONTRASTIVE POST-TRAINING LARGE LANGUAGE MODELS ON DATA CURRICULUM

- Language Models Resist Alignment

- A Survey on Large Language Model based Autonomous Agents

- PERSONAL LLM AGENTS: INSIGHTS AND SURVEY ABOUT THE CAPABILITY, EFFICIENCY AND SECURITY

- General prompt-based methods

- ReAct: SYNERGIZING REASONING AND ACTING IN LANGUAGE MODELS ⭐

- Self-ask: MEASURING AND NARROWING THE COMPOSITIONALITY GAP IN LANGUAGE MODELS ⭐

- MRKL Systems: A modular, neuro-symbolic architecture that combines large language models, external knowledge sources and discrete reasoning

- PAL: Program-aided Language Models

- ART: Automatic multi-step reasoning and tool-use for large language models

- ReWOO: Decoupling Reasoning from Observations for Efficient Augmented Language Models ⭐

- Interleaving Retrieval with Chain-of-Thought Reasoning for Knowledge-Intensive Multi-Step Questions

- Chameleon: Plug-and-Play Compositional Reasoning with Large Language Models ⭐

- Faithful Chain-of-Thought Reasoning

- Reflexion: Language Agents with Verbal Reinforcement Learning ⭐

- Verify-and-Edit: A Knowledge-Enhanced Chain-of-Thought Framework

- RestGPT: Connecting Large Language Models with Real-World RESTful APIs

- ChatCoT: Tool-Augmented Chain-of-Thought Reasoning on Chat-based Large Language Models

- InstructTODS: Large Language Models for End-to-End Task-Oriented Dialogue Systems

- TPTU: Task Planning and Tool Usage of Large Language Model-based AI Agents

- ControlLLM: Augment Language Models with Tools by Searching on Graphs

- Reflexion: an autonomous agent with dynamic memory and self-reflection

- AutoAgents: A Framework for Automatic Agent Generation

- GitAgent: Facilitating Autonomous Agent with GitHub by Tool Extension

- PreAct: Predicting Future in ReAct Enhances Agent's Planning Ability

- TOOLLLM: FACILITATING LARGE LANGUAGE MODELS TO MASTER 16000+ REAL-WORLD APIS ⭐

- AnyTool: Self-Reflective, Hierarchical Agents for Large-Scale API Calls

- AIOS: LLM Agent Operating System

- LLMCompiler: An LLM Compiler for Parallel Function Calling

- Re-Invoke: Tool Invocation Rewriting for Zero-Shot Tool Retrieval

- General fine-tuning-based methods

- TALM: Tool Augmented Language Models

- Toolformer: Language Models Can Teach Themselves to Use Tools ⭐

- Tool Learning with Foundation Models

- Tool Maker: Large Language Models as Tool Maker

- TaskMatrix.AI: Completing Tasks by Connecting Foundation Models with Millions of APIs

- AgentTuning: Enabling Generalized Agent Abilities for LLMs

- SWIFTSAGE: A Generative Agent with Fast and Slow Thinking for Complex Interactive Tasks

- FireAct: Toward Language Agent Fine-tuning

- Pangu-Agent: A Fine-Tunable Generalist Agent with Structured Reasoning

- REST MEETS REACT: SELF-IMPROVEMENT FOR MULTI-STEP REASONING LLM AGENT

- Efficient Tool Use with Chain-of-Abstraction Reasoning

- Agent-FLAN: Designing Data and Methods of Effective Agent Tuning for Large Language Models

- AgentOhana: Design Unified Data and Training Pipeline for Effective Agent Learning

- Agent Lumos: Unified and Modular Training for Open-Source Language Agents

- Model-calling methods

- HuggingGPT: Solving AI Tasks with ChatGPT and its Friends in HuggingFace

- Gorilla: Large Language Model Connected with Massive APIs ⭐

- OpenAGI: When LLM Meets Domain Experts

- Vertical domains

- Data analysis

- DS-Agent: Automated Data Science by Empowering Large Language Models with Case-Based Reasoning

- InsightLens: Discovering and Exploring Insights from Conversational Contexts in Large-Language-Model-Powered Data Analysis

- Data-Copilot: Bridging Billions of Data and Humans with Autonomous Workflow

- Demonstration of InsightPilot: An LLM-Empowered Automated Data Exploration System

- TaskWeaver: A Code-First Agent Framework

- Automated Social Science: Language Models as Scientist and Subjects

- Data Interpreter: An LLM Agent For Data Science

- Finance

- WeaverBird: Empowering Financial Decision-Making with Large Language Model, Knowledge Base, and Search Engine

- FinGPT: Open-Source Financial Large Language Models

- FinMem: A Performance-Enhanced LLM Trading Agent with Layered Memory and Character Design

- AlphaFin: Benchmarking Financial Analysis with Retrieval-Augmented Stock-Chain Framework

- A Multimodal Foundation Agent for Financial Trading: Tool-Augmented, Diversified, and Generalist ⭐

- Can Large Language Models Beat Wall Street? Unveiling the Potential of AI in Stock Selection

- ENHANCING ANOMALY DETECTION IN FINANCIAL MARKETS WITH AN LLM-BASED MULTI-AGENT FRAMEWORK

- TRADINGGPT: MULTI-AGENT SYSTEM WITH LAYERED MEMORY AND DISTINCT CHARACTERS FOR ENHANCED FINANCIAL TRADING PERFORMANCE

- FinRobot: An Open-Source AI Agent Platform for Financial Applications using Large Language Models

- LLMFactor: Extracting Profitable Factors through Prompts for Explainable Stock Movement Prediction

- Biomedicine

- GeneGPT: Augmenting Large Language Models with Domain Tools for Improved Access to Biomedical Information

- ChemCrow: Augmenting large language models with chemistry tools

- Generating Explanations in Medical Question-Answering by Expectation Maximization Inference over Evidence

- Agent Hospital: A Simulacrum of Hospital with Evolvable Medical Agents

- Integrating Chemistry Knowledge in Large Language Models via Prompt Engineering

- Web/mobile agents

- AutoWebGLM: Bootstrap And Reinforce A Large Language Model-based Web Navigating Agent

- A Real-World WebAgent with Planning, Long Context Understanding, and Program Synthesis

- Mind2Web: Towards a Generalist Agent for the Web

- MiniWoB++: Reinforcement Learning on Web Interfaces Using Workflow-Guided Exploration

- WEBARENA: A REALISTIC WEB ENVIRONMENT FOR BUILDING AUTONOMOUS AGENTS

- AutoCrawler: A Progressive Understanding Web Agent for Web Crawler Generation

- WebLINX: Real-World Website Navigation with Multi-Turn Dialogue

- WebVoyager: Building an End-to-end Web Agent with Large Multimodal Models

- CogAgent: A Visual Language Model for GUI Agents

- Mobile-Agent-v2: Mobile Device Operation Assistant with Effective Navigation via Multi-Agent Collaboration

- WebCanvas: Benchmarking Web Agents in Online Environments

- Others

- ResearchAgent: Iterative Research Idea Generation over Scientific Literature with Large Language Models

- WebShop: Towards Scalable Real-World Web Interaction with Grounded Language Agents

- ToolkenGPT: Augmenting Frozen Language Models with Massive Tools via Tool Embeddings

- PointLLM: Empowering Large Language Models to Understand Point Clouds

- Interpretable Long-Form Legal Question Answering with Retrieval-Augmented Large Language Models

- CarExpert: Leveraging Large Language Models for In-Car Conversational Question Answering

- Data analysis

- Evaluation

- Evaluating Verifiability in Generative Search Engines

- Auto-GPT for Online Decision Making: Benchmarks and Additional Opinions

- API-Bank: A Benchmark for Tool-Augmented LLMs

- ToolLLM: Facilitating Large Language Models to Master 16000+ Real-world APIs

- Automatic Evaluation of Attribution by Large Language Models

- Benchmarking Large Language Models in Retrieval-Augmented Generation

- ARES: An Automated Evaluation Framework for Retrieval-Augmented Generation Systems

- MultiAgent

- Generative Agents: Interactive Simulacra of Human Behavior ⭐

- AgentVerse: Facilitating Multi-Agent Collaboration and Exploring Emergent Behaviors in Agents

- CAMEL: Communicative Agents for "Mind" Exploration of Large Scale Language Model Society ⭐

- Exploring Large Language Models for Communication Games: An Empirical Study on Werewolf

- Communicative Agents for Software Development ⭐

- METAAGENTS: SIMULATING INTERACTIONS OF HUMAN BEHAVIORS FOR LLM-BASED TASK-ORIENTED COORDINATION VIA COLLABORATIVE GENERATIVE AGENTS

- System-1.x: Learning to Balance Fast and Slow Planning with Language Models

- One Agent To Rule Them All: Towards Multi-agent Conversational AI

- A Multi-Agent Conversational Recommender System

- LET MODELS SPEAK CIPHERS: MULTIAGENT DEBATE THROUGH EMBEDDINGS

- MedAgents: Large Language Models as Collaborators for Zero-shot Medical Reasoning

- War and Peace (WarAgent): Large Language Model-based Multi-Agent Simulation of World Wars

- More Agents Is All You Need

- Small LLMs Are Weak Tool Learners: A Multi-LLM Agent

- Merge, Ensemble, and Cooperate! A Survey on Collaborative Strategies in the Era of Large Language Models

- Internet of Agents: Weaving a Web of Heterogeneous Agents for Collaborative Intelligence

- RouteLLM: Learning to Route LLMs with Preference Data

- MULTI-AGENT COLLABORATION: HARNESSING THE POWER OF INTELLIGENT LLM AGENTS

- METAGPT: META PROGRAMMING FOR A MULTI-AGENT COLLABORATIVE FRAMEWORK

- Autonomous learning and exploratory self-evolution

- AppAgent: Multimodal Agents as Smartphone Users

- Investigate-Consolidate-Exploit: A General Strategy for Inter-Task Agent Self-Evolution

- LLMs in the Imaginarium: Tool Learning through Simulated Trial and Error

- Empowering Large Language Model Agents through Action Learning

- Trial and Error: Exploration-Based Trajectory Optimization for LLM Agents

- OS-COPILOT: TOWARDS GENERALIST COMPUTER AGENTS WITH SELF-IMPROVEMENT

- LLAMA RIDER: SPURRING LARGE LANGUAGE MODELS TO EXPLORE THE OPEN WORLD

- PAST AS A GUIDE: LEVERAGING RETROSPECTIVE LEARNING FOR PYTHON CODE COMPLETION

- AutoGuide: Automated Generation and Selection of State-Aware Guidelines for Large Language Model Agents

- A Survey on Self-Evolution of Large Language Models

- ExpeL: LLM Agents Are Experiential Learners

- ReAct Meets ActRe: When Language Agents Enjoy Training Data Autonomy

- Others

- LLM+P: Empowering Large Language Models with Optimal Planning Proficiency

- Inference with Reference: Lossless Acceleration of Large Language Models

- RecallM: An Architecture for Temporal Context Understanding and Question Answering

- LLaMA Rider: Spurring Large Language Models to Explore the Open World

- LLMs Can’t Plan, But Can Help Planning in LLM-Modulo Frameworks

- WebGPT: Browser-assisted question-answering with human feedback

- WebGLM: Towards An Efficient Web-Enhanced Question Answering System with Human Preferences

- WebCPM: Interactive Web Search for Chinese Long-form Question Answering ⭐

- REPLUG: Retrieval-Augmented Black-Box Language Models ⭐

- RETA-LLM: A Retrieval-Augmented Large Language Model Toolkit

- Atlas: Few-shot Learning with Retrieval Augmented Language Models

- RRAML: Reinforced Retrieval Augmented Machine Learning

- Investigating the Factual Knowledge Boundary of Large Language Models with Retrieval Augmentation

- PDFTriage: Question Answering over Long, Structured Documents

- Walking Down the Memory Maze: Beyond Context Limit through Interactive Reading ⭐

- Demonstrate-Search-Predict: Composing retrieval and language models for knowledge-intensive NLP

- Search-in-the-Chain: Towards Accurate, Credible and Traceable Large Language Models for Knowledge-intensive Tasks

- Active Retrieval Augmented Generation

- kNN-LM Does Not Improve Open-ended Text Generation

- Can Retriever-Augmented Language Models Reason? The Blame Game Between the Retriever and the Language Model

- RLCF: Aligning the Capabilities of Large Language Models with the Context of Information Retrieval via Contrastive Feedback

- Augmented Embeddings for Custom Retrievals

- DORIS-MAE: Scientific Document Retrieval using Multi-level Aspect-based Queries

- Learning to Filter Context for Retrieval-Augmented Generation

- THINK-ON-GRAPH: DEEP AND RESPONSIBLE REASONING OF LARGE LANGUAGE MODEL ON KNOWLEDGE GRAPH

- RA-DIT: RETRIEVAL-AUGMENTED DUAL INSTRUCTION TUNING

- Query Expansion by Prompting Large Language Models ⭐

- CHAIN-OF-NOTE: ENHANCING ROBUSTNESS IN RETRIEVAL-AUGMENTED LANGUAGE MODELS

- IAG: Induction-Augmented Generation Framework for Answering Reasoning Questions

- T2Ranking: A large-scale Chinese Benchmark for Passage Ranking

- Factuality Enhanced Language Models for Open-Ended Text Generation

- FRESHLLMS: REFRESHING LARGE LANGUAGE MODELS WITH SEARCH ENGINE AUGMENTATION

- KwaiAgents: Generalized Information-seeking Agent System with Large Language Models

- Rich Knowledge Sources Bring Complex Knowledge Conflicts: Recalibrating Models to Reflect Conflicting Evidence

- Complex Claim Verification with Evidence Retrieved in the Wild

- Retrieval-Augmented Generation for Large Language Models: A Survey

- Enhancing Retrieval-Augmented Large Language Models with Iterative Retrieval-Generation Synergy

- ChatQA: Building GPT-4 Level Conversational QA Models

- RAG vs Fine-tuning: Pipelines, Tradeoffs, and a Case Study on Agriculture

- Benchmarking Large Language Models in Retrieval-Augmented Generation

- SYNERGISTIC INTERPLAY BETWEEN SEARCH AND LARGE LANGUAGE MODELS FOR INFORMATION RETRIEVAL

- T-RAG: Lessons from the LLM Trenches

- RAT: Retrieval Augmented Thoughts Elicit Context-Aware Reasoning in Long-Horizon Generation

- ARAGOG: Advanced RAG Output Grading

- ActiveRAG: Revealing the Treasures of Knowledge via Active Learning

- RAFT: Adapting Language Model to Domain Specific RAG

- OpenResearcher: Unleashing AI for Accelerated Scientific Research

- Retrieval optimization

- HyDE:Precise Zero-Shot Dense Retrieval without Relevance Labels

- PROMPTAGATOR : FEW-SHOT DENSE RETRIEVAL FROM 8 EXAMPLES

- Query Rewriting for Retrieval-Augmented Large Language Models

- Query2doc: Query Expansion with Large Language Models ⭐

- Ranking

- A Setwise Approach for Effective and Highly Efficient Zero-shot Ranking with Large Language Models

- RankVicuna: Zero-Shot Listwise Document Reranking with Open-Source Large Language Models

- Improving Passage Retrieval with Zero-Shot Question Generation

- Large Language Models are Effective Text Rankers with Pairwise Ranking Prompting

- RankRAG: Unifying Context Ranking with Retrieval-Augmented Generation in LLMs

- Ranking Manipulation for Conversational Search Engines

- Is ChatGPT Good at Search? Investigating Large Language Models as Re-Ranking Agents

- Traditional search methods

- ASK THE RIGHT QUESTIONS:ACTIVE QUESTION REFORMULATION WITH REINFORCEMENT LEARNING

- Query Expansion Techniques for Information Retrieval a Survey

- Learning to Rewrite Queries

- Managing Diversity in Airbnb Search

- New embedding models for recall and ranking

- BGE M3-Embedding: Multi-Lingual, Multi-Functionality, Multi-Granularity Text Embeddings Through Self-Knowledge Distillation

- NetEase's technical report on BCE Embedding, designed for RAG

- BGE Landmark Embedding: A Chunking-Free Embedding Method For Retrieval Augmented Long-Context Large Language Models

- D2LLM: Decomposed and Distilled Large Language Models for Semantic Search

- Mindful-RAG: A Study of Points of Failure in Retrieval Augmented Generation

- Memory3: Language Modeling with Explicit Memory

- Optimizing inference results

- Speculative RAG: Enhancing Retrieval Augmented Generation through Drafting

- Dynamic RAG (When to Search & Search Plan)

- SELF-RAG: LEARNING TO RETRIEVE, GENERATE, AND CRITIQUE THROUGH SELF-REFLECTION ⭐

- Self-Knowledge Guided Retrieval Augmentation for Large Language Models

- Self-DC: When to retrieve and When to generate? Self Divide-and-Conquer for Compositional Unknown Questions

- Small Models, Big Insights: Leveraging Slim Proxy Models To Decide When and What to Retrieve for LLMs

- Adaptive-RAG: Learning to Adapt Retrieval-Augmented Large Language Models through Question Complexity

- REAPER: Reasoning based Retrieval Planning for Complex RAG Systems

- When to Retrieve: Teaching LLMs to Utilize Information Retrieval Effectively

- PlanRAG: A Plan-then-Retrieval Augmented Generation for Generative Large Language Models as Decision Makers

- Graph RAG

- Graph Retrieval-Augmented Generation: A Survey

- From Local to Global: A Graph RAG Approach to Query-Focused Summarization

- GRAG: Graph Retrieval-Augmented Generation

- GNN-RAG: Graph Neural Retrieval for Large Language Model Reasoning

- survey

- Table Meets LLM: Can Large Language Models Understand Structured Table Data? A Benchmark and Empirical Study

- Large Language Models (LLMs) on Tabular Data: Prediction, Generation, and Understanding - A Survey

- Exploring the Numerical Reasoning Capabilities of Language Models: A Comprehensive Analysis on Tabular Data

- prompt

- Large Language Models are Versatile Decomposers: Decompose Evidence and Questions for Table-based Reasoning

- Tab-CoT: Zero-shot Tabular Chain of Thought

- Chain-of-Table: Evolving Tables in the Reasoning Chain for Table Understanding

- Fine-tuning

- TableLlama: Towards Open Large Generalist Models for Tables

- TableLLM: Enabling Tabular Data Manipulation by LLMs in Real Office Usage Scenarios

- multimodal

- MMC: Advancing Multimodal Chart Understanding with Large-scale Instruction Tuning

- ChartLlama: A Multimodal LLM for Chart Understanding and Generation

- ChartAssisstant: A Universal Chart Multimodal Language Model via Chart-to-Table Pre-training and Multitask Instruction Tuning

- ChartInstruct: Instruction Tuning for Chart Comprehension and Reasoning

- ChartX & ChartVLM: A Versatile Benchmark and Foundation Model for Complicated Chart Reasoning

- MATCHA: Enhancing Visual Language Pretraining with Math Reasoning and Chart Derendering

- UniChart: A Universal Vision-language Pretrained Model for Chart Comprehension and Reasoning

- TinyChart: Efficient Chart Understanding with Visual Token Merging and Program-of-Thoughts Learning

- Tables as Texts or Images: Evaluating the Table Reasoning Ability of LLMs and MLLMs

- TableVQA-Bench: A Visual Question Answering Benchmark on Multiple Table Domains

- TabPedia: Towards Comprehensive Visual Table Understanding with Concept Synergy

- Surveys

- Unifying Large Language Models and Knowledge Graphs: A Roadmap

- Large Language Models and Knowledge Graphs: Opportunities and Challenges

- Research Report on the Practice of Integrating Knowledge Graphs with Large Models (2023)

- KGs for LLM reasoning

- Using Large Language Models for Zero-Shot Natural Language Generation from Knowledge Graphs

- MindMap: Knowledge Graph Prompting Sparks Graph of Thoughts in Large Language Models

- Knowledge-Augmented Language Model Prompting for Zero-Shot Knowledge Graph Question Answering

- Domain Specific Question Answering Over Knowledge Graphs Using Logical Programming and Large Language Models

- BRING YOUR OWN KG: Self-Supervised Program Synthesis for Zero-Shot KGQA

- StructGPT: A General Framework for Large Language Model to Reason over Structured Data

- LLMs for KG construction

- Enhancing Knowledge Graph Construction Using Large Language Models

- LLM-assisted Knowledge Graph Engineering: Experiments with ChatGPT

- ITERATIVE ZERO-SHOT LLM PROMPTING FOR KNOWLEDGE GRAPH CONSTRUCTION

- Exploring Large Language Models for Knowledge Graph Completion

- HABITAT 3.0: A CO-HABITAT FOR HUMANS, AVATARS AND ROBOTS

- Humanoid Agents: Platform for Simulating Human-like Generative Agents

- Voyager: An Open-Ended Embodied Agent with Large Language Models

- Shaping the future of advanced robotics

- AUTORT: EMBODIED FOUNDATION MODELS FOR LARGE SCALE ORCHESTRATION OF ROBOTIC AGENTS

- ROBOTIC TASK GENERALIZATION VIA HINDSIGHT TRAJECTORY SKETCHES

- ALFWORLD: ALIGNING TEXT AND EMBODIED ENVIRONMENTS FOR INTERACTIVE LEARNING

- MINEDOJO: Building Open-Ended Embodied Agents with Internet-Scale Knowledge

- LEGENT: Open Platform for Embodied Agents

- DoReMi: Optimizing Data Mixtures Speeds Up Language Model Pretraining

- The Pile: An 800GB Dataset of Diverse Text for Language Modeling

- CCNet: Extracting High Quality Monolingual Datasets from Web Crawl Data

- WanJuan: A Comprehensive Multimodal Dataset for Advancing English and Chinese Large Models

- CLUECorpus2020: A Large-scale Chinese Corpus for Pre-training Language Model

- In-Context Pretraining: Language Modeling Beyond Document Boundaries

- Data Mixing Laws: Optimizing Data Mixtures by Predicting Language Modeling Performance

- Zyda: A 1.3T Dataset for Open Language Modeling

- Entropy Law: The Story Behind Data Compression and LLM Performance

- Data, Data Everywhere: A Guide for Pretraining Dataset Construction

- Data curation via joint example selection further accelerates multimodal learning

- Finance

- BloombergGPT: A Large Language Model for Finance

- FinVis-GPT: A Multimodal Large Language Model for Financial Chart Analysis

- CFGPT: Chinese Financial Assistant with Large Language Model

- CFBenchmark: Chinese Financial Assistant Benchmark for Large Language Model

- InvestLM: A Large Language Model for Investment using Financial Domain Instruction Tuning

- BBT-Fin: Comprehensive Construction of Chinese Financial Domain Pre-trained Language Model, Corpus and Benchmark

- PIXIU: A Large Language Model, Instruction Data and Evaluation Benchmark for Finance

- The FinBen: An Holistic Financial Benchmark for Large Language Models

- XuanYuan 2.0: A Large Chinese Financial Chat Model with Hundreds of Billions Parameters

- Towards Trustworthy Large Language Models in Industry Domains

- When AI Meets Finance (StockAgent): Large Language Model-based Stock Trading in Simulated Real-world Environments

- A Survey of Large Language Models for Financial Applications: Progress, Prospects and Challenges

- Biomedicine

- MedGPT: Medical Concept Prediction from Clinical Narratives

- BioGPT: Generative Pre-trained Transformer for Biomedical Text Generation and Mining

- PubMed GPT: A Domain-specific large language model for biomedical text ⭐

- ChatDoctor: Medical Chat Model Fine-tuned on LLaMA Model using Medical Domain Knowledge

- Med-PaLM: Large Language Models Encode Clinical Knowledge [V1, V2] ⭐

- SMILE: Single-turn to Multi-turn Inclusive Language Expansion via ChatGPT for Mental Health Support

- Zhongjing: Enhancing the Chinese Medical Capabilities of Large Language Model through Expert Feedback and Real-world Multi-turn Dialogue

- Other

- Galactica: A Large Language Model for Science

- Augmented Large Language Models with Parametric Knowledge Guiding

- ChatLaw: Open-Source Legal Large Language Model ⭐

- MediaGPT: A Large Language Model For Chinese Media

- KITLM: Domain-Specific Knowledge InTegration into Language Models for Question Answering

- EcomGPT: Instruction-tuning Large Language Models with Chain-of-Task Tasks for E-commerce

- TableGPT: Towards Unifying Tables, Nature Language and Commands into One GPT

- LLEMMA: AN OPEN LANGUAGE MODEL FOR MATHEMATICS

- MEDITAB: SCALING MEDICAL TABULAR DATA PREDICTORS VIA DATA CONSOLIDATION, ENRICHMENT, AND REFINEMENT

- PLLaMa: An Open-source Large Language Model for Plant Science

- ADAPTING LARGE LANGUAGE MODELS VIA READING COMPREHENSION

- Positional encoding and attention mechanism optimization

- Unlimiformer: Long-Range Transformers with Unlimited Length Input

- Parallel Context Windows for Large Language Models

- 苏剑林 (Su Jianlin), NBCE: Extending the Context Length of LLMs with Naive Bayes ⭐

- Structured Prompting: Scaling In-Context Learning to 1,000 Examples

- Vcc: Scaling Transformers to 128K Tokens or More by Prioritizing Important Tokens

- Scaling Transformer to 1M tokens and beyond with RMT

- TRAIN SHORT, TEST LONG: ATTENTION WITH LINEAR BIASES ENABLES INPUT LENGTH EXTRAPOLATION ⭐

- Extending Context Window of Large Language Models via Positional Interpolation

- LongNet: Scaling Transformers to 1,000,000,000 Tokens

- https://kaiokendev.github.io/til#extending-context-to-8k

- 苏剑林 (Su Jianlin), The Road to Upgrading Transformers: 10. RoPE Is a β-Base Encoding ⭐

- 苏剑林 (Su Jianlin), The Road to Upgrading Transformers: 11. Taking β-Base Positions All the Way

- 苏剑林 (Su Jianlin), The Road to Upgrading Transformers: 12. ReRoPE for Infinite Extrapolation?

- 苏剑林 (Su Jianlin), The Road to Upgrading Transformers: 15. Key Normalization Aids Length Extrapolation

- EFFICIENT STREAMING LANGUAGE MODELS WITH ATTENTION SINKS

- Ring Attention with Blockwise Transformers for Near-Infinite Context

- YaRN: Efficient Context Window Extension of Large Language Models

- LM-INFINITE: SIMPLE ON-THE-FLY LENGTH GENERALIZATION FOR LARGE LANGUAGE MODELS

- Context compression and reordering methods

- Lost in the Middle: How Language Models Use Long Contexts ⭐

- LLMLingua: Compressing Prompts for Accelerated Inference of Large Language Models

- LongLLMLingua: Accelerating and Enhancing LLMs in Long Context Scenarios via Prompt Compression ⭐

- Learning to Compress Prompts with Gist Tokens

- Unlocking Context Constraints of LLMs: Enhancing Context Efficiency of LLMs with Self-Information-Based Content Filtering

- LongAgent: Scaling Language Models to 128k Context through Multi-Agent Collaboration

- PCToolkit: A Unified Plug-and-Play Prompt Compression Toolkit of Large Language Models

- Are Long-LLMs A Necessity For Long-Context Tasks?

- Training and model architecture methods

- Never Train from Scratch: FAIR COMPARISON OF LONG-SEQUENCE MODELS REQUIRES DATA-DRIVEN PRIORS

- Soaring from 4K to 400K: Extending LLM's Context with Activation Beacon

- Never Lost in the Middle: Improving Large Language Models via Attention Strengthening Question Answering

- Focused Transformer: Contrastive Training for Context Scaling

- Effective Long-Context Scaling of Foundation Models

- ON THE LONG RANGE ABILITIES OF TRANSFORMERS

- Efficient Long-Range Transformers: You Need to Attend More, but Not Necessarily at Every Layer

- POSE: EFFICIENT CONTEXT WINDOW EXTENSION OF LLMS VIA POSITIONAL SKIP-WISE TRAINING

- LONGLORA: EFFICIENT FINE-TUNING OF LONG-CONTEXT LARGE LANGUAGE MODELS

- LongAlign: A Recipe for Long Context Alignment of Large Language Models

- Data Engineering for Scaling Language Models to 128K Context

- MEGALODON: Efficient LLM Pretraining and Inference with Unlimited Context Length

- Make Your LLM Fully Utilize the Context

- Efficiency optimization

- Efficient Attention: Attention with Linear Complexities

- Transformers are RNNs: Fast Autoregressive Transformers with Linear Attention

- HyperAttention: Long-context Attention in Near-Linear Time

- FlashAttention: Fast and Memory-Efficient Exact Attention with IO-Awareness

- With Greater Text Comes Greater Necessity: Inference-Time Training Helps Long Text Generation

- Re3: Generating Longer Stories With Recursive Reprompting and Revision

- RECURRENTGPT: Interactive Generation of (Arbitrarily) Long Text

- DOC: Improving Long Story Coherence With Detailed Outline Control

- Weaver: Foundation Models for Creative Writing

- Assisting in Writing Wikipedia-like Articles From Scratch with Large Language Models

- LLM-based methods

- DIN-SQL: Decomposed In-Context Learning of Text-to-SQL with Self-Correction ⭐

- C3: Zero-shot Text-to-SQL with ChatGPT ⭐

- SQL-PALM: IMPROVED LARGE LANGUAGE MODEL ADAPTATION FOR TEXT-TO-SQL

- BIRD Can LLM Already Serve as A Database Interface? A BIg Bench for Large-Scale Database Grounded Text-to-SQL ⭐

- A Case-Based Reasoning Framework for Adaptive Prompting in Cross-Domain Text-to-SQL

- ChatDB: AUGMENTING LLMS WITH DATABASES AS THEIR SYMBOLIC MEMORY

- A comprehensive evaluation of ChatGPT’s zero-shot Text-to-SQL capability

- Few-shot Text-to-SQL Translation using Structure and Content Prompt Learning

- Domain Knowledge Intensive

- Towards Knowledge-Intensive Text-to-SQL Semantic Parsing with Formulaic Knowledge

- Bridging the Generalization Gap in Text-to-SQL Parsing with Schema Expansion

- Towards Robustness of Text-to-SQL Models against Synonym Substitution

- FinQA: A Dataset of Numerical Reasoning over Financial Data

- Others

- RESDSQL: Decoupling Schema Linking and Skeleton Parsing for Text-to-SQL

- MIGA: A Unified Multi-task Generation Framework for Conversational Text-to-SQL

- Code Generation with AlphaCodium: From Prompt Engineering to Flow Engineering

- Codeforces as an Educational Platform for Learning Programming in Digitalization

- Competition-Level Code Generation with AlphaCode

- CODECHAIN: TOWARDS MODULAR CODE GENERATION THROUGH CHAIN OF SELF-REVISIONS WITH REPRESENTATIVE SUB-MODULES

- AI Coders Are Among Us: Rethinking Programming Language Grammar Towards Efficient Code Generation

- Survey

- Large language models and the perils of their hallucinations

- Survey of Hallucination in Natural Language Generation

- Siren's Song in the AI Ocean: A Survey on Hallucination in Large Language Models

- A Survey of Hallucination in Large Foundation Models

- A Survey on Hallucination in Large Language Models: Principles, Taxonomy, Challenges, and Open Questions

- Calibrated Language Models Must Hallucinate

- Why Does ChatGPT Fall Short in Providing Truthful Answers?

- Prompt or Tuning

- R-Tuning: Teaching Large Language Models to Refuse Unknown Questions

- PROMPTING GPT-3 TO BE RELIABLE

- ASK ME ANYTHING: A SIMPLE STRATEGY FOR PROMPTING LANGUAGE MODELS ⭐

- On the Advance of Making Language Models Better Reasoners

- RefGPT: Reference → Truthful & Customized Dialogues Generation by GPTs and for GPTs

- Rethinking with Retrieval: Faithful Large Language Model Inference

- GENERATE RATHER THAN RETRIEVE: LARGE LANGUAGE MODELS ARE STRONG CONTEXT GENERATORS

- Large Language Models Struggle to Learn Long-Tail Knowledge

- Decoding Strategy

- Trusting Your Evidence: Hallucinate Less with Context-aware Decoding ⭐

- SELF-REFINE: ITERATIVE REFINEMENT WITH SELF-FEEDBACK ⭐

- Enhancing Self-Consistency and Performance of Pre-Trained Language Models through Natural Language Inference

- Inference-Time Intervention: Eliciting Truthful Answers from a Language Model

- Enabling Large Language Models to Generate Text with Citations

- Factuality Enhanced Language Models for Open-Ended Text Generation

- KL-Divergence Guided Temperature Sampling

- KCTS: Knowledge-Constrained Tree Search Decoding with Token-Level Hallucination Detection

- CONTRASTIVE DECODING IMPROVES REASONING IN LARGE LANGUAGE MODELS

- Contrastive Decoding: Open-ended Text Generation as Optimization

- Probing and Detection

- Automatic Evaluation of Attribution by Large Language Models

- QAFactEval: Improved QA-Based Factual Consistency Evaluation for Summarization

- Zero-Resource Hallucination Prevention for Large Language Models

- LLM Lies: Hallucinations are not Bugs, but Features as Adversarial Examples

- Language Models (Mostly) Know What They Know ⭐

- LM vs LM: Detecting Factual Errors via Cross Examination

- Do Language Models Know When They’re Hallucinating References?

- SELFCHECKGPT: Zero-Resource Black-Box Hallucination Detection for Generative Large Language Models

- SELF-CONTRADICTORY HALLUCINATIONS OF LLMS: EVALUATION, DETECTION AND MITIGATION

- Self-consistency for open-ended generations

- Improving Factuality and Reasoning in Language Models through Multiagent Debate

- Selective-LAMA: Selective Prediction for Confidence-Aware Evaluation of Language Models