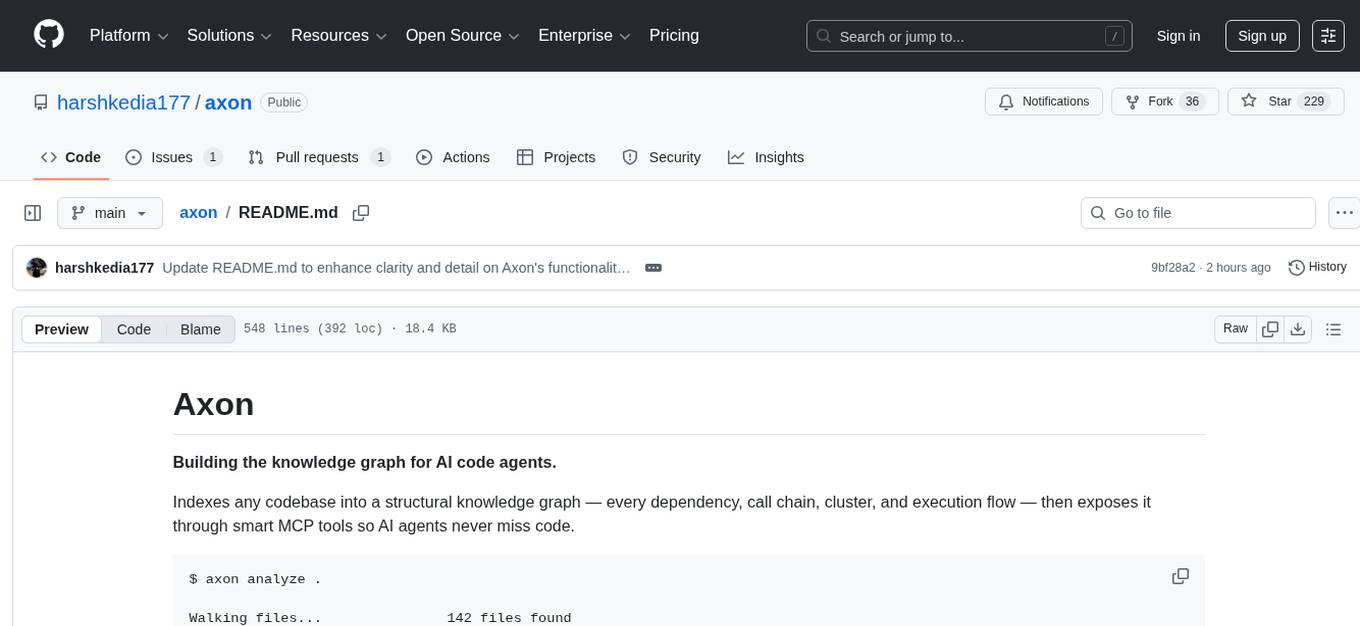

axon

Graph-powered code intelligence engine — indexes codebases into a knowledge graph, exposed via MCP tools for AI agents and a CLI for developers.

Stars: 204

Axon indexes Python, TypeScript, and JavaScript repositories into a local knowledge graph (KuzuDB by default) and answers structural questions about them: who calls a function, what a change will affect, which code is dead, how execution flows from entry points, and which files change together in git history.

README:


```
$ axon analyze .

Phase 1: Walking files... 142 files found
Phase 3: Parsing code... 142/142
Phase 5: Tracing calls... 847 calls resolved
Phase 7: Analyzing types... 234 type relationships
Phase 8: Detecting communities... 8 clusters found
Phase 9: Detecting execution flows... 34 processes found
Phase 10: Finding dead code... 12 unreachable symbols
Phase 11: Analyzing git history... 18 coupled file pairs

Done in 4.2s — 623 symbols, 1,847 edges, 8 clusters, 34 flows
```

Most code intelligence tools treat your codebase as flat text. Axon builds a structural graph — every function, class, import, call, type reference, and execution flow becomes a node or edge in a queryable knowledge graph. AI agents using Axon don't just search for keywords; they understand how your code is connected.

For AI agents (Claude Code, Cursor):

- "What breaks if I change this function?" → blast radius via call graph + type references + git coupling

- "What code is never called?" → dead code detection with framework-aware exemptions

- "Show me the login flow end-to-end" → execution flow tracing from entry points through the call graph

- "Which files always change together?" → git history change coupling analysis

For developers:

- Instant answers to architectural questions without grepping through files

- Find dead code, tightly coupled files, and execution flows automatically

- Raw Cypher queries against your codebase's knowledge graph

- Watch mode that re-indexes on every save

Zero cloud dependencies. Everything runs locally — parsing, graph storage, embeddings, search. No API keys, no data leaving your machine.

Axon doesn't just parse your code — it builds a deep structural understanding through 11 sequential analysis phases:

| Phase | What It Does |
|---|---|
| File Walking | Walks repo respecting .gitignore, filters by supported languages |
| Structure | Creates File/Folder hierarchy with CONTAINS relationships |
| Parsing | tree-sitter AST extraction — functions, classes, methods, interfaces, enums, type aliases |
| Import Resolution | Resolves import statements to actual files (relative, absolute, bare specifiers) |
| Call Tracing | Maps function calls with confidence scores (1.0 = exact match, 0.5 = fuzzy) |
| Heritage | Tracks class inheritance (EXTENDS) and interface implementation (IMPLEMENTS) |
| Type Analysis | Extracts type references from parameters, return types, and variable annotations |
| Community Detection | Leiden algorithm clusters related symbols into functional communities |
| Process Detection | Framework-aware entry point detection + BFS flow tracing |
| Dead Code Detection | Multi-pass analysis with override, protocol, and decorator awareness |
| Change Coupling | Git history analysis — finds files that always change together |
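The phase table gives only the two ends of the call-confidence scale. As a minimal sketch, assuming "exact" means the callee name resolves through the calling file's own definitions or its resolved imports, and "fuzzy" means a bare name match anywhere in the repo, a resolver might look like this. All function and parameter names here are hypothetical, not Axon's implementation.

```python
# Hypothetical call resolver illustrating the 1.0 / 0.5 confidence split.
# The lookup order and input maps are assumptions for illustration only.
def resolve_call(callee_name, local_defs, imported, all_defs):
    """Return a list of (target_node_id, confidence) candidates for one call site.

    local_defs: name -> node ID for symbols defined in the calling file
    imported:   name -> node ID for symbols brought in by resolved imports
    all_defs:   name -> list of node IDs for every same-named symbol in the repo
    """
    # Exact match: the name is visible in this file, so there is one target.
    if callee_name in local_defs:
        return [(local_defs[callee_name], 1.0)]
    if callee_name in imported:
        return [(imported[callee_name], 1.0)]
    # Fuzzy match: only the bare name matches, so every candidate gets 0.5.
    return [(target, 0.5) for target in all_defs.get(callee_name, [])]


local_defs = {"helper": "function:src/app.py:helper"}
imported = {"validate_user": "function:src/auth/validate.py:validate_user"}
all_defs = {"save": ["method:src/models/user.py:User.save",
                     "method:src/models/order.py:Order.save"]}

exact = resolve_call("validate_user", local_defs, imported, all_defs)
fuzzy = resolve_call("save", local_defs, imported, all_defs)
```

Emitting every same-named candidate at reduced confidence keeps fuzzy edges in the graph while letting downstream analyses filter on the `confidence` property of CALLS edges.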

Three search strategies fused with Reciprocal Rank Fusion:

- BM25 full-text search — fast exact name and keyword matching via KuzuDB FTS

- Semantic vector search — conceptual queries via 384-dim embeddings (BAAI/bge-small-en-v1.5)

- Fuzzy name search — Levenshtein fallback for typos and partial matches

Test files are automatically down-ranked (0.5x), source-level functions/classes boosted (1.2x).
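A minimal sketch of Reciprocal Rank Fusion with the documented multipliers applied afterward. Each ranked list contributes `1 / (k + rank)` per result. `k = 60` is the constant commonly used with RRF and is an assumption here, as are the `is_test` and `is_source_symbol` predicates. Applying the boosts after fusion, and letting the test-file penalty win when both could apply, is also a guess at the ordering.

```python
from collections import defaultdict


def rrf_fuse(ranked_lists, k=60):
    """Fuse several ranked lists of symbol IDs with Reciprocal Rank Fusion.

    Each list adds 1 / (k + rank) for every ID it contains (rank starts at 1),
    so IDs that rank well in several lists accumulate the highest scores.
    """
    scores = defaultdict(float)
    for ranking in ranked_lists:
        for rank, symbol_id in enumerate(ranking, start=1):
            scores[symbol_id] += 1.0 / (k + rank)
    return dict(scores)


def apply_boosts(scores, is_test, is_source_symbol):
    """Apply 0.5x to test symbols and 1.2x to source functions/classes.

    is_test / is_source_symbol are hypothetical caller-supplied predicates.
    Returns (symbol_id, score) pairs sorted best-first.
    """
    boosted = {}
    for symbol_id, score in scores.items():
        if is_test(symbol_id):
            score *= 0.5          # down-rank test code
        elif is_source_symbol(symbol_id):
            score *= 1.2          # boost source-level functions/classes
        boosted[symbol_id] = score
    return sorted(boosted.items(), key=lambda item: item[1], reverse=True)


bm25 = ["validate_user", "test_validate_user", "login"]
vector = ["login", "validate_user"]
fuzzy = ["validate_users", "validate_user"]

fused = rrf_fuse([bm25, vector, fuzzy])
ranked = apply_boosts(fused,
                      is_test=lambda s: s.startswith("test_"),
                      is_source_symbol=lambda s: not s.startswith("test_"))
```

Because RRF only uses ranks, it can merge BM25, cosine-similarity, and edit-distance results without normalizing their very different raw score scales.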

Finds unreachable symbols using a multi-pass analysis rather than a simple "zero callers" check:

- Initial scan — flags functions/methods/classes with no incoming calls

- Exemptions — entry points, exports, constructors, test code, dunder methods, `__init__.py` public symbols, decorated functions, `@property` methods

- Override pass — un-flags methods that override non-dead base class methods (handles dynamic dispatch)

- Protocol conformance — un-flags methods on classes conforming to Protocol interfaces

- Protocol stubs — un-flags all methods on Protocol classes (interface contracts)

When you're about to change a symbol, Axon traces upstream through:

- Call graph — every function that calls this one, recursively

- Type references — every function that takes, returns, or stores this type

- Git coupling — files that historically change alongside this one
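A minimal sketch of the upstream traversal, assuming the call graph and type references are available as plain reverse-adjacency dictionaries (hypothetical inputs, not Axon's storage API). The `depth` cutoff mirrors the `--depth` option of `axon impact`, whose default is 3.

```python
from collections import deque


def blast_radius(target, callers, type_users, depth=3):
    """Breadth-first walk upstream from `target`, up to `depth` hops.

    callers:    symbol -> set of symbols that call it
    type_users: type symbol -> set of symbols that take, return, or store it
    Returns {symbol: hop distance} for every affected symbol.
    """
    distance = {target: 0}
    queue = deque([target])
    while queue:
        current = queue.popleft()
        if distance[current] == depth:
            continue  # depth limit reached; do not expand further
        upstream = callers.get(current, set()) | type_users.get(current, set())
        for symbol in upstream:
            if symbol not in distance:  # also guards against recursion cycles
                distance[symbol] = distance[current] + 1
                queue.append(symbol)
    del distance[target]
    return distance


def coupled_files(path, coupled_with):
    """Files that historically change with `path`, strongest coupling first.

    coupled_with: file -> {other_file: strength}, e.g. from COUPLED_WITH edges.
    """
    partners = coupled_with.get(path, {})
    return sorted(partners, key=partners.get, reverse=True)


callers = {"get_user": {"login_view"}, "login_view": {"app_router"}}
type_users = {"UserModel": {"get_user", "save_user"}}
affected = blast_radius("UserModel", callers, type_users, depth=2)
related = coupled_files(
    "src/models/user.py",
    {"src/models/user.py": {"src/auth/validate.py": 0.8, "src/views.py": 0.4}},
)
```

Here `app_router` is three hops away, so it falls outside `depth=2`; raising the depth widens the radius at the cost of noisier results.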

Uses the Leiden algorithm (igraph + leidenalg) to automatically discover functional clusters in your codebase. Each community gets a cohesion score and auto-generated label based on member file paths.

Detects entry points using framework-aware patterns:

- Python: `@app.route`, `@router.get`, `@click.command`, `test_*` functions, `__main__` blocks

- JavaScript/TypeScript: Express handlers, exported functions, `handler`/`middleware` patterns

Then traces BFS execution flows from each entry point through the call graph, classifying flows as intra-community or cross-community.
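A sketch of BFS flow tracing and the intra/cross-community classification, assuming entry points have already been detected and communities are given as a symbol-to-label map. The `max_steps` cap is a hypothetical safeguard, not a documented Axon setting.

```python
from collections import deque


def trace_flow(entry, calls, max_steps=50):
    """Breadth-first walk of the call graph from one entry point.

    calls: symbol -> list of symbols it calls (downstream edges).
    Returns symbols in visit order; step 0 is the entry point itself.
    """
    order, seen = [], {entry}
    queue = deque([entry])
    while queue and len(order) < max_steps:
        current = queue.popleft()
        order.append(current)
        for callee in calls.get(current, []):
            if callee not in seen:
                seen.add(callee)
                queue.append(callee)
    return order


def classify(flow, community_of):
    """A flow is intra-community if all its known symbols share one community."""
    communities = {community_of[s] for s in flow if s in community_of}
    return "intra-community" if len(communities) <= 1 else "cross-community"


calls = {
    "login_route": ["validate_user", "render"],
    "validate_user": ["hash_password", "get_user"],
}
community_of = {
    "login_route": "web", "render": "web",
    "validate_user": "auth", "hash_password": "auth", "get_user": "auth",
}
flow = trace_flow("login_route", calls)
# ['login_route', 'validate_user', 'render', 'hash_password', 'get_user']
kind = classify(flow, community_of)  # 'cross-community'
```

The visit order doubles as a natural `step_number` for STEP_IN_PROCESS edges, though how Axon numbers steps is not specified here.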

Analyzes 6 months of git history to find hidden dependencies that static analysis misses:

coupling(A, B) = co_changes(A, B) / max(changes(A), changes(B))

Files with coupling strength ≥ 0.3 and 3+ co-changes get linked, and coupled files are surfaced in impact analysis.
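The coupling formula and both thresholds can be computed directly from per-commit file lists. This sketch assumes each commit is given as a set of paths (for example, collected from `git log --since="6 months ago" --name-only`); the function name and return shape are illustrative.

```python
from collections import Counter
from itertools import combinations


def change_coupling(commits, min_strength=0.3, min_co_changes=3):
    """commits: iterable of sets of file paths changed together in one commit.

    Returns {(file_a, file_b): (strength, co_changes)} for linked pairs, where
    strength = co_changes(A, B) / max(changes(A), changes(B)).
    """
    changes = Counter()      # commits touching each file
    co_changes = Counter()   # commits touching each unordered pair
    for files in commits:
        touched = sorted(set(files))   # sorted so each pair has one key
        changes.update(touched)
        co_changes.update(combinations(touched, 2))

    links = {}
    for (a, b), together in co_changes.items():
        strength = together / max(changes[a], changes[b])
        if strength >= min_strength and together >= min_co_changes:
            links[(a, b)] = (round(strength, 2), together)
    return links


commits = [
    {"user.py", "auth.py"},
    {"user.py", "auth.py"},
    {"user.py", "auth.py", "views.py"},
    {"user.py"},
    {"views.py"},
]
links = change_coupling(commits)  # {('auth.py', 'user.py'): (0.75, 3)}
```

Dividing by the larger change count keeps a frequently edited file from appearing coupled to everything it happens to touch once.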

Live re-indexing powered by a Rust-based file watcher (watchfiles):

```
$ axon watch
Watching /Users/you/project for changes...

[10:32:15] src/auth/validate.py modified → re-indexed (0.3s)
[10:33:02] 2 files modified → re-indexed (0.5s)
```

- File-local phases (parse, imports, calls, types) run immediately on change

- Global phases (communities, processes, dead code) batch every 30 seconds
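The split between immediate and batched phases can be sketched as a small scheduler. Everything here is hypothetical: `run_phase` stands in for Axon's phase runners, and the clock is injectable so the 30-second batching can be exercised without waiting.

```python
import time

# Phase groupings taken from the description above.
FILE_LOCAL_PHASES = ("parse", "imports", "calls", "types")
GLOBAL_PHASES = ("communities", "processes", "dead_code")


class ReindexScheduler:
    """Hypothetical scheduler: file-local phases run on every change,
    global phases run at most once per `interval` seconds."""

    def __init__(self, run_phase, interval=30.0, clock=time.monotonic):
        self.run_phase = run_phase      # callable(phase_name, path_or_None)
        self.interval = interval
        self.clock = clock
        self.dirty = False              # True once a change awaits a global pass
        self.last_global = clock()

    def on_change(self, paths):
        # File-local phases only need the files that changed.
        for path in paths:
            for phase in FILE_LOCAL_PHASES:
                self.run_phase(phase, path)
        self.dirty = True
        self.maybe_run_global()

    def maybe_run_global(self):
        # Global phases look at the whole graph, so they are batched.
        if self.dirty and self.clock() - self.last_global >= self.interval:
            for phase in GLOBAL_PHASES:
                self.run_phase(phase, None)
            self.dirty = False
            self.last_global = self.clock()


log = []
now = [0.0]  # fake clock so the example runs instantly
scheduler = ReindexScheduler(lambda phase, path: log.append((phase, path)),
                             clock=lambda: now[0])
scheduler.on_change(["src/auth/validate.py"])  # t=0s: file-local phases only
now[0] = 31.0
scheduler.maybe_run_global()                   # t=31s: global batch runs
```

In a live loop, the changed paths could come from `watchfiles.watch()`, which yields sets of `(Change, path)` tuples; `maybe_run_global()` would also need to be called on a timer so a final batch still runs after edits stop.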

Structural diff between branches using git worktrees (no stashing required):

```
$ axon diff main..feature

Symbols added (4):
  + process_payment (Function) -- src/payments/stripe.py
  + PaymentIntent (Class) -- src/payments/models.py

Symbols modified (2):
  ~ checkout_handler (Function) -- src/routes/checkout.py

Symbols removed (1):
  - old_charge (Function) -- src/payments/legacy.py
```

Supported languages:

| Language | Extensions | Parser |
|---|---|---|
| Python | `.py` | tree-sitter-python |
| TypeScript | `.ts`, `.tsx` | tree-sitter-typescript |
| JavaScript | `.js`, `.jsx`, `.mjs`, `.cjs` | tree-sitter-javascript |
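Once both branches of an `axon diff` have been indexed (Axon uses git worktrees so neither checkout disturbs your working tree), the symbol-level comparison reduces to set operations. This sketch assumes each branch is summarized as a mapping from node ID to a hypothetical content hash of the symbol's source; the function and hash values are illustrative.

```python
def structural_diff(base, head):
    """base/head: {node_id: content_hash} snapshots of two branches.

    Returns sorted lists of added, removed, and modified node IDs.
    """
    added = sorted(head.keys() - base.keys())
    removed = sorted(base.keys() - head.keys())
    # Present on both sides but with a different body hash.
    modified = sorted(s for s in base.keys() & head.keys() if base[s] != head[s])
    return added, removed, modified


base = {
    "function:src/routes/checkout.py:checkout_handler": "a1",
    "function:src/payments/legacy.py:old_charge": "b2",
}
head = {
    "function:src/routes/checkout.py:checkout_handler": "c3",
    "function:src/payments/stripe.py:process_payment": "d4",
    "class:src/payments/models.py:PaymentIntent": "e5",
}
added, removed, modified = structural_diff(base, head)
```

Keying on node IDs means a symbol moved to a different file shows up as one removal plus one addition rather than a modification.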

```
# With pip
pip install axoniq

# With uv (recommended)
uv add axoniq

# With Neo4j backend support
pip install "axoniq[neo4j]"
```

Requires Python 3.11+.

To install from source:

```
git clone https://github.com/harshkedia177/axon.git
cd axon
uv sync --all-extras
uv run axon --help
```

Index a project:

```
cd your-project
axon analyze .
```

Then explore it:

```
# Search for symbols
axon query "authentication handler"

# Get full context on a symbol
axon context validate_user

# Check blast radius before changing something
axon impact UserModel --depth 3

# Find dead code
axon dead-code

# Run a raw Cypher query
axon cypher "MATCH (n:Function) WHERE n.is_dead = true RETURN n.name, n.file_path"
```

Keep the index up to date:

```
# Watch mode — re-indexes on every save
axon watch

# Or re-analyze manually
axon analyze .
```

CLI reference:

```
axon analyze [PATH]      Index a repository (default: current directory)
    --full               Force full rebuild (skip incremental)
axon status              Show index status for current repo
axon list                List all indexed repositories
axon clean               Delete index for current repo
    --force / -f         Skip confirmation prompt
axon query QUERY         Hybrid search the knowledge graph
    --limit / -n N       Max results (default: 20)
axon context SYMBOL      360-degree view of a symbol
axon impact SYMBOL       Blast radius analysis
    --depth / -d N       BFS traversal depth (default: 3)
axon dead-code           List all detected dead code
axon cypher QUERY        Execute a raw Cypher query (read-only)
axon watch               Watch mode — live re-indexing on file changes
axon diff BASE..HEAD     Structural branch comparison
axon setup               Print MCP configuration JSON
    --claude             For Claude Code
    --cursor             For Cursor
axon mcp                 Start the MCP server (stdio transport)
axon serve               Start the MCP server (same as axon mcp)
    --watch, -w          Enable live file watching with auto-reindex
axon --version           Print version
```

Axon exposes its full intelligence as an MCP server, giving AI agents like Claude Code and Cursor deep structural understanding of your codebase.

Add to your `.claude/settings.json` or project `.mcp.json`:

```json
{
  "mcpServers": {
    "axon": {
      "command": "axon",
      "args": ["serve", "--watch"]
    }
  }
}
```

This starts the MCP server with live file watching, so the knowledge graph updates automatically as you edit code. To run without watching, use `"args": ["mcp"]` instead.

Or run the setup helper:

```
axon setup --claude
```

Add to your Cursor MCP settings:

```json
{
  "axon": {
    "command": "axon",
    "args": ["serve", "--watch"]
  }
}
```

Or run:

```
axon setup --cursor
```

Once connected, your AI agent gets access to these tools:

| Tool | Description |
|---|---|
| `axon_list_repos` | List all indexed repositories with stats |
| `axon_query` | Hybrid search (BM25 + vector + fuzzy) across all symbols |
| `axon_context` | 360-degree view — callers, callees, type refs, community, processes |
| `axon_impact` | Blast radius — all symbols affected by changing the target |
| `axon_dead_code` | List all unreachable symbols grouped by file |
| `axon_detect_changes` | Map a git diff to affected symbols in the graph |
| `axon_cypher` | Execute read-only Cypher queries against the knowledge graph |

Every tool response includes a next-step hint guiding the agent through a natural investigation workflow:

query → "Next: Use context() on a specific symbol for the full picture."

context → "Next: Use impact() if planning changes to this symbol."

impact → "Tip: Review each affected symbol before making changes."

Axon also exposes these MCP resources:

| Resource URI | Description |
|---|---|
| `axon://overview` | Node and relationship counts by type |
| `axon://dead-code` | Full dead code report |
| `axon://schema` | Graph schema reference for writing Cypher queries |

Node labels:

| Label | Description |
|---|---|
| File | Source file |
| Folder | Directory |
| Function | Top-level function |
| Class | Class definition |
| Method | Method within a class |
| Interface | Interface / Protocol definition |
| TypeAlias | Type alias |
| Enum | Enumeration |
| Community | Auto-detected functional cluster |
| Process | Detected execution flow |

Relationship types:

| Type | Description | Key Properties |
|---|---|---|
| CONTAINS | Folder → File/Symbol hierarchy | — |
| DEFINES | File → Symbol it defines | — |
| CALLS | Symbol → Symbol it calls | confidence (0.0–1.0) |
| IMPORTS | File → File it imports from | symbols (names list) |
| EXTENDS | Class → Class it extends | — |
| IMPLEMENTS | Class → Interface it implements | — |
| USES_TYPE | Symbol → Type it references | role (param/return/variable) |
| EXPORTS | File → Symbol it exports | — |
| MEMBER_OF | Symbol → Community it belongs to | — |
| STEP_IN_PROCESS | Symbol → Process it participates in | step_number |
| COUPLED_WITH | File → File that co-changes with it | strength, co_changes |

Node IDs follow the format:

```
{label}:{relative_path}:{symbol_name}
```

Examples:

```
function:src/auth/validate.py:validate_user
class:src/models/user.py:User
method:src/models/user.py:User.save
```
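Building and splitting IDs in this format is straightforward. In the sketch below, lowercasing the label is inferred from the examples above (`Function` nodes get a `function:` prefix), and splitting on the last colon assumes symbol names never contain one. Both helpers are hypothetical, not part of Axon's API.

```python
from typing import NamedTuple


class NodeId(NamedTuple):
    label: str
    path: str
    symbol: str


def make_node_id(label, relative_path, symbol_name):
    """Build an ID such as 'method:src/models/user.py:User.save'."""
    return f"{label.lower()}:{relative_path}:{symbol_name}"


def parse_node_id(node_id):
    """Split an ID back into (label, path, symbol).

    The label is everything before the first colon; the symbol is everything
    after the last colon, so the path in between is kept intact.
    """
    label, rest = node_id.split(":", 1)
    path, symbol = rest.rsplit(":", 1)
    return NodeId(label, path, symbol)


node_id = make_node_id("Method", "src/models/user.py", "User.save")
parsed = parse_node_id("function:src/auth/validate.py:validate_user")
```

Because IDs are derived from the path and qualified name, the same symbol gets the same ID on every re-index, which is what makes incremental updates and branch diffs line up.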

```
    Source Code (.py, .ts, .js, .tsx, .jsx)
                       │
                       ▼
┌──────────────────────────────────────────────┐
│        Ingestion Pipeline (11 phases)        │
│                                              │
│  walk → structure → parse → imports → calls  │
│ → heritage → types → communities → processes │
│ → dead_code → coupling                       │
└──────────────────────┬───────────────────────┘
                       │
                       ▼
               ┌────────────────┐
               │ KnowledgeGraph │  (in-memory during build)
               └───────┬────────┘
                       │
          ┌────────────┼────────────┐
          ▼            ▼            ▼
     ┌─────────┐  ┌─────────┐  ┌─────────┐
     │ KuzuDB  │  │   FTS   │  │ Vector  │
     │ (graph) │  │ (BM25)  │  │ (HNSW)  │
     └────┬────┘  └────┬────┘  └────┬────┘
          └────────────┼────────────┘
                       │
            StorageBackend Protocol
                       │
              ┌────────┴────────┐
              ▼                 ▼
         ┌──────────┐      ┌──────────┐
         │   MCP    │      │   CLI    │
         │  Server  │      │ (Typer)  │
         │ (stdio)  │      │          │
         └────┬─────┘      └────┬─────┘
              │                 │
         Claude Code        Terminal
          / Cursor         (developer)
```

| Layer | Technology | Purpose |
|---|---|---|
| Parsing | tree-sitter | Language-agnostic AST extraction |
| Graph Storage | KuzuDB | Embedded graph database with Cypher, FTS, and vector support |
| Graph Algorithms | igraph + leidenalg | Leiden community detection |
| Embeddings | fastembed | ONNX-based 384-dim vectors (~100MB, no PyTorch) |
| MCP Protocol | mcp SDK (FastMCP) | AI agent communication via stdio |
| CLI | Typer + Rich | Terminal interface with progress bars |
| File Watching | watchfiles | Rust-based file system watcher |
| Gitignore | pathspec | Full .gitignore pattern matching |

Everything lives locally in your repo:

```
your-project/
└── .axon/
    ├── kuzu/        # KuzuDB graph database (graph + FTS + vectors)
    └── meta.json    # Index metadata and stats
```

Add `.axon/` to your `.gitignore`.

The storage layer is abstracted behind a StorageBackend Protocol — KuzuDB is the default, with an optional Neo4j backend available via pip install axoniq[neo4j].

```
# See everything connected to User
axon context User

# Check blast radius
axon impact User --depth 3

# Find which files always change with user.py
axon cypher "MATCH (a:File)-[r:CodeRelation]->(b:File) WHERE a.name = 'user.py' AND r.rel_type = 'coupled_with' RETURN b.name, r.strength ORDER BY r.strength DESC"

# List all dead code
axon dead-code

# List detected execution flows
axon cypher "MATCH (p:Process) RETURN p.name, p.properties ORDER BY p.name"

# Top 20 most strongly coupled file pairs
axon cypher "MATCH (a:File)-[r:CodeRelation]->(b:File) WHERE r.rel_type = 'coupled_with' RETURN a.name, b.name, r.strength ORDER BY r.strength DESC LIMIT 20"
```

| Capability | grep/ripgrep | LSP | Axon |
|---|---|---|---|
| Text search | Yes | No | Yes (hybrid BM25 + vector) |
| Go to definition | No | Yes | Yes (graph traversal) |
| Find all callers | No | Partial | Yes (full call graph with confidence) |
| Type relationships | No | Yes | Yes (param/return/variable roles) |
| Dead code detection | No | No | Yes (multi-pass, framework-aware) |
| Execution flow tracing | No | No | Yes (entry point → flow) |
| Community detection | No | No | Yes (Leiden algorithm) |
| Change coupling (git) | No | No | Yes (6-month co-change analysis) |
| Impact analysis | No | No | Yes (calls + types + git coupling) |
| AI agent integration | No | Partial | Yes (full MCP server) |
| Structural branch diff | No | No | Yes (node/edge level) |
| Watch mode | No | Yes | Yes (Rust-based, 500ms debounce) |
| Works offline | Yes | Yes | Yes |

To set up a development environment:

```
git clone https://github.com/harshkedia177/axon.git
cd axon
uv sync --all-extras

# Run tests
uv run pytest

# Lint
uv run ruff check src/

# Run from source
uv run axon --help
```

License: MIT

Built by @harshkedia177


Alternative AI tools for axon

Similar Open Source Tools

axon

Axon is a powerful neural network library for Python that provides a simple and flexible way to build, train, and deploy deep learning models. It offers a wide range of neural network architectures, optimization algorithms, and evaluation metrics to support various machine learning tasks. With Axon, users can easily create complex neural networks, train them on large datasets, and deploy them in production environments. The library is designed to be user-friendly and efficient, making it suitable for both beginners and experienced deep learning practitioners.

deeppowers

Deeppowers is a powerful Python library for deep learning applications. It provides a wide range of tools and utilities to simplify the process of building and training deep neural networks. With Deeppowers, users can easily create complex neural network architectures, perform efficient training and optimization, and deploy models for various tasks. The library is designed to be user-friendly and flexible, making it suitable for both beginners and experienced deep learning practitioners.

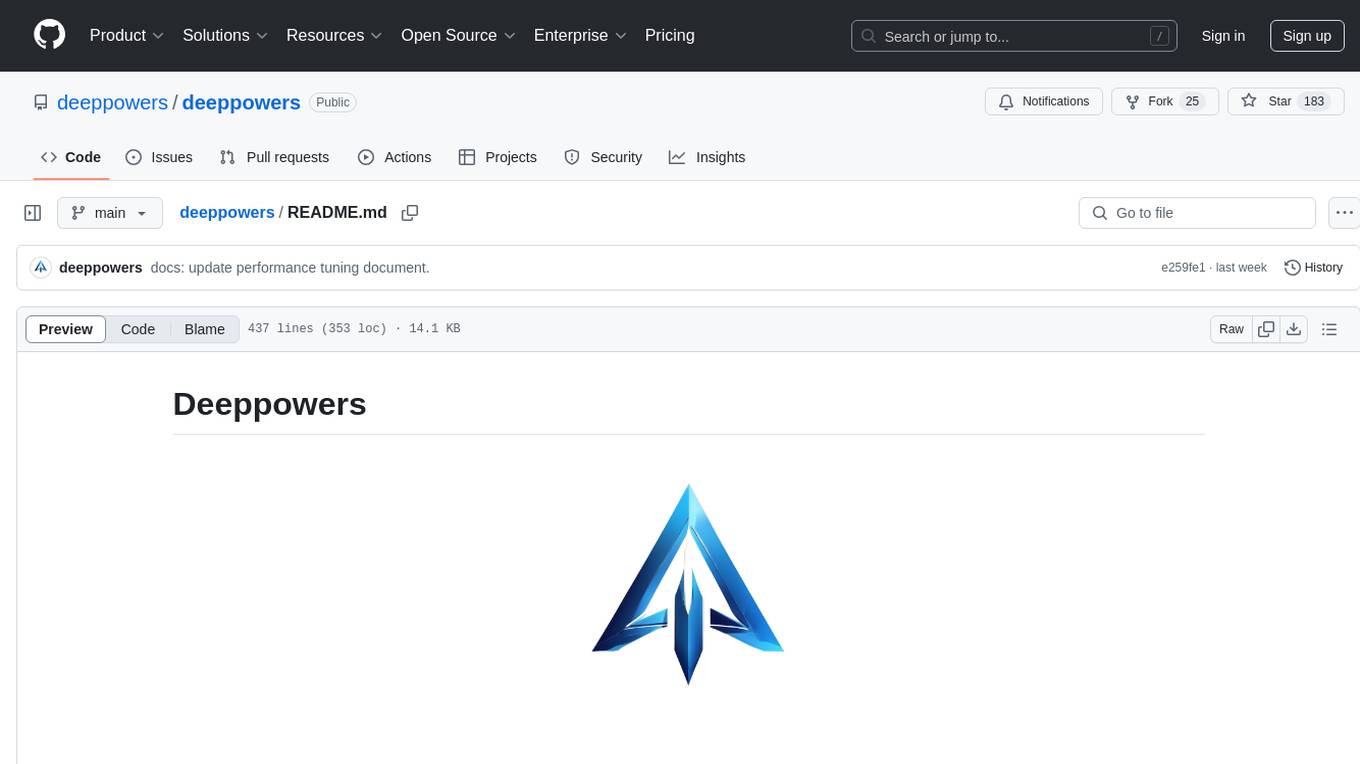

deepteam

Deepteam is a powerful open-source tool designed for deep learning projects. It provides a user-friendly interface for training, testing, and deploying deep neural networks. With Deepteam, users can easily create and manage complex models, visualize training progress, and optimize hyperparameters. The tool supports various deep learning frameworks and allows seamless integration with popular libraries like TensorFlow and PyTorch. Whether you are a beginner or an experienced deep learning practitioner, Deepteam simplifies the development process and accelerates model deployment.

lemonai

LemonAI is a versatile machine learning library designed to simplify the process of building and deploying AI models. It provides a wide range of tools and algorithms for data preprocessing, model training, and evaluation. With LemonAI, users can easily experiment with different machine learning techniques and optimize their models for various tasks. The library is well-documented and beginner-friendly, making it suitable for both novice and experienced data scientists. LemonAI aims to streamline the development of AI applications and empower users to create innovative solutions using state-of-the-art machine learning methods.

ai

This repository contains a collection of AI algorithms and models for various machine learning tasks. It provides implementations of popular algorithms such as neural networks, decision trees, and support vector machines. The code is well-documented and easy to understand, making it suitable for both beginners and experienced developers. The repository also includes example datasets and tutorials to help users get started with building and training AI models. Whether you are a student learning about AI or a professional working on machine learning projects, this repository can be a valuable resource for your development journey.

osaurus

Osaurus is a versatile open-source tool designed for data scientists and machine learning engineers. It provides a wide range of functionalities for data preprocessing, feature engineering, model training, and evaluation. With Osaurus, users can easily clean and transform raw data, extract relevant features, build and tune machine learning models, and analyze model performance. The tool supports various machine learning algorithms and techniques, making it suitable for both beginners and experienced practitioners in the field. Osaurus is actively maintained and updated to incorporate the latest advancements in the machine learning domain, ensuring users have access to state-of-the-art tools and methodologies for their projects.

neurons.me

Neurons.me is an open-source tool designed for creating and managing neural network models. It provides a user-friendly interface for building, training, and deploying deep learning models. With Neurons.me, users can easily experiment with different architectures, hyperparameters, and datasets to optimize their neural networks for various tasks. The tool simplifies the process of developing AI applications by abstracting away the complexities of model implementation and training.

open-ai

Open AI is a powerful tool for artificial intelligence research and development. It provides a wide range of machine learning models and algorithms, making it easier for developers to create innovative AI applications. With Open AI, users can explore cutting-edge technologies such as natural language processing, computer vision, and reinforcement learning. The platform offers a user-friendly interface and comprehensive documentation to support users in building and deploying AI solutions. Whether you are a beginner or an experienced AI practitioner, Open AI offers the tools and resources you need to accelerate your AI projects and stay ahead in the rapidly evolving field of artificial intelligence.

FLAME

FLAME is a lightweight and efficient deep learning framework designed for edge devices. It provides a simple and user-friendly interface for developing and deploying deep learning models on resource-constrained devices. With FLAME, users can easily build and optimize neural networks for tasks such as image classification, object detection, and natural language processing. The framework supports various neural network architectures and optimization techniques, making it suitable for a wide range of applications in the field of edge computing.

AI_Spectrum

AI_Spectrum is a versatile machine learning library that provides a wide range of tools and algorithms for building and deploying AI models. It offers a user-friendly interface for data preprocessing, model training, and evaluation. With AI_Spectrum, users can easily experiment with different machine learning techniques and optimize their models for various tasks. The library is designed to be flexible and scalable, making it suitable for both beginners and experienced data scientists.

pdr_ai_v2

pdr_ai_v2 is a Python library for implementing machine learning algorithms and models. It provides a wide range of tools and functionalities for data preprocessing, model training, evaluation, and deployment. The library is designed to be user-friendly and efficient, making it suitable for both beginners and experienced data scientists. With pdr_ai_v2, users can easily build and deploy machine learning models for various applications, such as classification, regression, clustering, and more.

ai-inference

AI Inference is a Python library that provides tools for deploying and running machine learning models in production environments. It simplifies the process of integrating AI models into applications by offering a high-level API for inference tasks. With AI Inference, developers can easily load pre-trained models, perform inference on new data, and deploy models as RESTful APIs. The library supports various deep learning frameworks such as TensorFlow and PyTorch, making it versatile for a wide range of AI applications.

Automodel

Automodel is a Python library for automating the process of building and evaluating machine learning models. It provides a set of tools and utilities to streamline the model development workflow, from data preprocessing to model selection and evaluation. With Automodel, users can easily experiment with different algorithms, hyperparameters, and feature engineering techniques to find the best model for their dataset. The library is designed to be user-friendly and customizable, allowing users to define their own pipelines and workflows. Automodel is suitable for data scientists, machine learning engineers, and anyone looking to quickly build and test machine learning models without the need for manual intervention.

sdk-python

Strands Agents is a lightweight and flexible SDK that takes a model-driven approach to building and running AI agents. It supports various model providers, offers advanced capabilities like multi-agent systems and streaming support, and comes with built-in MCP server support. Users can easily create tools using Python decorators, integrate MCP servers seamlessly, and leverage multiple model providers for different AI tasks. The SDK is designed to scale from simple conversational assistants to complex autonomous workflows, making it suitable for a wide range of AI development needs.

Awesome-Efficient-MoE

Awesome Efficient MoE is a GitHub repository that provides an implementation of Mixture of Experts (MoE) models for efficient deep learning. The repository includes code for training and using MoE models, which are neural network architectures that combine multiple expert networks to improve performance on complex tasks. MoE models are particularly useful for handling diverse data distributions and capturing complex patterns in data. The implementation in this repository is designed to be efficient and scalable, making it suitable for training large-scale MoE models on modern hardware. The code is well-documented and easy to use, making it accessible for researchers and practitioners interested in leveraging MoE models for their deep learning projects.

simple-ai

Simple AI is a lightweight Python library for implementing basic artificial intelligence algorithms. It provides easy-to-use functions and classes for tasks such as machine learning, natural language processing, and computer vision. With Simple AI, users can quickly prototype and deploy AI solutions without the complexity of larger frameworks.

For similar tasks

AiTreasureBox

AiTreasureBox is a versatile AI tool that provides a collection of pre-trained models and algorithms for various machine learning tasks. It simplifies the process of implementing AI solutions by offering ready-to-use components that can be easily integrated into projects. With AiTreasureBox, users can quickly prototype and deploy AI applications without the need for extensive knowledge in machine learning or deep learning. The tool covers a wide range of tasks such as image classification, text generation, sentiment analysis, object detection, and more. It is designed to be user-friendly and accessible to both beginners and experienced developers, making AI development more efficient and accessible to a wider audience.

InternVL

InternVL scales up the ViT to _**6B parameters**_ and aligns it with an LLM. It is a vision-language foundation model that can perform various tasks, including: **Visual Perception** - Linear-Probe Image Classification - Semantic Segmentation - Zero-Shot Image Classification - Multilingual Zero-Shot Image Classification - Zero-Shot Video Classification **Cross-Modal Retrieval** - English Zero-Shot Image-Text Retrieval - Chinese Zero-Shot Image-Text Retrieval - Multilingual Zero-Shot Image-Text Retrieval on XTD **Multimodal Dialogue** - Zero-Shot Image Captioning - Multimodal Benchmarks with Frozen LLM - Multimodal Benchmarks with Trainable LLM - Tiny LVLM InternVL has been shown to achieve state-of-the-art results on a variety of benchmarks. For example, on the MMMU multimodal understanding benchmark, InternVL achieves an accuracy of 51.6%, which is higher than GPT-4V and Gemini Pro. On the DocVQA question answering benchmark, InternVL achieves a score of 82.2%, which is also higher than GPT-4V and Gemini Pro. InternVL is open-sourced and available on Hugging Face. It can be used for a variety of applications, including image classification, object detection, semantic segmentation, image captioning, and question answering.

clarifai-python

The Clarifai Python SDK offers a comprehensive set of tools for integrating Clarifai's AI platform into your applications, giving access to computer vision capabilities such as classification, detection, and segmentation, and natural language capabilities such as classification, summarisation, generation, and Q&A. With just a few lines of code, you can leverage cutting-edge artificial intelligence to unlock valuable insights from visual and textual content.

X-AnyLabeling

X-AnyLabeling is a robust annotation tool that seamlessly incorporates an AI inference engine alongside an array of sophisticated features. Tailored for practical applications, it is committed to delivering comprehensive, industrial-grade solutions for image data engineers. This tool excels in swiftly and automatically executing annotations across diverse and intricate tasks.

ailia-models

A collection of pre-trained, state-of-the-art AI models. ailia SDK is a self-contained, cross-platform, high-speed inference SDK for AI. The ailia SDK provides a consistent C++ API across Windows, Mac, Linux, iOS, Android, Jetson, and Raspberry Pi platforms. It also supports Unity (C#), Python, Rust, Flutter (Dart), and JNI for efficient AI implementation. The ailia SDK makes extensive use of the GPU through Vulkan and Metal to enable accelerated computing. Supported models: 323 as of April 8th, 2024.

edenai-apis

Eden AI aims to simplify the use and deployment of AI technologies by providing a unique API that connects to all the best AI engines. With the rise of **AI as a Service**, many companies provide off-the-shelf trained models that you can access directly through an API. These companies are either the tech giants (Google, Microsoft, Amazon) or other smaller, more specialized companies, and there are hundreds of them. Some of the best known are DeepL (translation), OpenAI (text and image analysis), and AssemblyAI (speech analysis). There are **hundreds of companies** doing that. We're bringing the best ones together **in one place**!

NanoLLM

NanoLLM is a tool designed for optimized local inference for Large Language Models (LLMs) using HuggingFace-like APIs. It supports quantization, vision/language models, multimodal agents, speech, vector DB, and RAG. The tool aims to provide efficient and effective processing for LLMs on local devices, enhancing performance and usability for various AI applications.


For similar jobs

weave

Weave is a toolkit for developing Generative AI applications, built by Weights & Biases. With Weave, you can log and debug language model inputs, outputs, and traces; build rigorous, apples-to-apples evaluations for language model use cases; and organize all the information generated across the LLM workflow, from experimentation to evaluations to production. Weave aims to bring rigor, best-practices, and composability to the inherently experimental process of developing Generative AI software, without introducing cognitive overhead.

LLMStack

LLMStack is a no-code platform for building generative AI agents, workflows, and chatbots. It allows users to connect their own data, internal tools, and GPT-powered models without any coding experience. LLMStack can be deployed to the cloud or on-premise and can be accessed via HTTP API or triggered from Slack or Discord.

VisionCraft

The VisionCraft API is a free API for using over 100 different AI models, from images to sound.

kaito

Kaito is an operator that automates the AI/ML inference model deployment in a Kubernetes cluster. It manages large model files using container images, avoids tuning deployment parameters to fit GPU hardware by providing preset configurations, auto-provisions GPU nodes based on model requirements, and hosts large model images in the public Microsoft Container Registry (MCR) if the license allows. Using Kaito, the workflow of onboarding large AI inference models in Kubernetes is largely simplified.

PyRIT

PyRIT is an open access automation framework designed to empower security professionals and ML engineers to red team foundation models and their applications. It automates AI Red Teaming tasks to allow operators to focus on more complicated and time-consuming tasks and can also identify security harms such as misuse (e.g., malware generation, jailbreaking), and privacy harms (e.g., identity theft). The goal is to allow researchers to have a baseline of how well their model and entire inference pipeline is doing against different harm categories and to be able to compare that baseline to future iterations of their model. This allows them to have empirical data on how well their model is doing today, and detect any degradation of performance based on future improvements.

tabby

Tabby is a self-hosted AI coding assistant, offering an open-source and on-premises alternative to GitHub Copilot. It boasts several key features:

- Self-contained, with no need for a DBMS or cloud service.
- OpenAPI interface, easy to integrate with existing infrastructure (e.g., Cloud IDE).
- Supports consumer-grade GPUs.

spear

SPEAR (Simulator for Photorealistic Embodied AI Research) is a powerful tool for training embodied agents. It features 300 unique virtual indoor environments with 2,566 unique rooms and 17,234 unique objects that can be manipulated individually. Each environment is designed by a professional artist and features detailed geometry, photorealistic materials, and a unique floor plan and object layout. SPEAR is implemented as Unreal Engine assets and provides an OpenAI Gym interface for interacting with the environments via Python.

Magick

Magick is a groundbreaking visual AIDE (Artificial Intelligence Development Environment) for no-code data pipelines and multimodal agents. Magick can connect to other services and comes with nodes and templates well-suited for intelligent agents, chatbots, complex reasoning systems and realistic characters.