FinAnGPT-Pro

A script for creating your very own AI-Powered stock screener

Stars: 188

FinAnGPT-Pro is a financial data downloader and AI query system that downloads quarterly and annual financial data for stocks from EOD Historical Data, storing it in MongoDB and Google BigQuery. It includes an AI-powered natural language interface for querying financial data. Users can set up the tool by following the prerequisites and setup instructions provided in the README. The tool allows users to download financial data for all stocks in a watchlist or for a single stock, query financial data using a natural language interface, and receive responses in a structured format. Important considerations include error handling, rate limiting, data validation, BigQuery costs, MongoDB connection, and security measures for API keys and credentials.

README:

This project is based on the article Grok is Overrated. Do This To Transform ANY LLM to a Super-Intelligent Financial Analyst. It downloads financial data (quarterly and annual) for stocks from EOD Historical Data and stores it in both MongoDB and Google BigQuery. It also includes an AI-powered natural language interface for querying the financial data.

For a more comprehensive, UI-based solution with additional features like algorithmic trading, check out NexusTrade.

Before you begin, ensure you have the following:

-

Node.js (version 18 or higher) and npm installed.

-

MongoDB installed and running locally or accessible via a connection string.

-

Google Cloud Platform (GCP) account with BigQuery enabled.

-

Requesty API key (Sign up for Requesty here - referral link).

-

An EOD Historical Data API key (Sign up for a free or paid plan here - referral link).

-

(Optional) Ollama installed and running locally (Download here) if you want to use local LLM capabilities instead of Requesty.

-

A

.envfile in the root directory with the following variables:CLOUD_DB="mongodb://localhost:27017/your_cloud_db" # Replace with your MongoDB connection string LOCAL_DB="mongodb://localhost:27017/your_local_db" # Replace with your MongoDB connection string EODHD_API_KEY="YOUR_EODHD_API_KEY" # Replace with your EODHD API key REQUESTY_API_KEY="YOUR_REQUESTY_API_KEY" # Replace with your Requesty API key GOOGLE_APPLICATION_CREDENTIALS_JSON='{"type": "service_account", ...}' # Replace with your GCP service account credentials JSON OLLAMA_SERVICE_URL="http://localhost:11434" # Optional: Only needed if using Ollama instead of RequestyImportant: The

GOOGLE_APPLICATION_CREDENTIALS_JSONenvironment variable should contain the entire JSON content of your Google Cloud service account key. This is necessary for authenticating with BigQuery. Make sure this is properly formatted and secured.

-

Clone the repository:

git clone https://github.com/austin-starks/FinAnGPT-Pro cd FinAnGPT-Pro -

Install dependencies:

npm install

-

Create a

.envfile in the root directory of the project. Populate it with the necessary environment variables as described in the "Prerequisites" section. Do not commit this file to your repository! -

Set up Google Cloud credentials:

- Create a Google Cloud service account with BigQuery Data Editor permissions.

- Download the service account key as a JSON file.

- Set the

GOOGLE_APPLICATION_CREDENTIALS_JSONenvironment variable to the contents of this file. Ensure proper JSON formatting.

You have two options for running the script:

Option 1: Using node directly (requires compilation)

-

Compile the TypeScript code:

npm run build

This will create a

distdirectory with the compiled JavaScript files. -

Run the compiled script:

node dist/index.js

Option 2: Using ts-node (for development/easier execution)

-

Install

ts-nodeglobally (if you haven't already):npm install -g ts-node

-

Run the script directly:

ts-node index.ts

-

For all stocks in your watchlist:

- The project includes a

tickers.csvfile with a pre-populated list of major US stocks - You can modify this file to add or remove tickers (one ticker per line, skip the header row)

- Run the upload script:

ts-node upload.ts

- The project includes a

-

For a single stock:

- Modify the

upload.tsscript to useprocessAndSaveEarningsForOneStock:

// In upload.ts const processor = new EarningsProcessor(); await processor.processAndSaveEarningsForOneStock("AAPL"); // Replace with your desired ticker

- Modify the

.

├── src/

│ ├── models/

│ │ └── StockFinancials.ts

│ └── services/

│ ├── databases/

│ │ ├── bigQuery.ts

│ │ └── mongo.ts

│ ├── fundamentalApi/

│ │ └── EodhdClient.ts

│ └── llmApi/

│ ├── clients/

│ │ ├── OllamaServiceClient.ts

│ │ └── RequestyServiceClient.ts

│ └── logs/

│ ├── ollamaChatLogs.ts

│ └── requestyChatLogs.ts

├── tickers.csv

├── .env

├── upload.ts

├── chat.ts

└── README.md

The project includes a natural language interface for querying financial data. You can ask questions in plain English about the stored financial data.

To use the AI query system:

-

Run the chat script:

ts-node chat.ts

-

Example queries you can try:

- "What stocks have the highest revenue?"

- "Show me companies with increasing free cash flow over the last 4 quarters"

- "Which companies have the highest net income in their latest annual report?"

- "List the top 10 companies by EBITDA margin"

The system will convert your natural language query into SQL, execute it against BigQuery, and return the results.

Here's a sample response when asking about companies with the highest net income:

Here's a summary of the stocks with the highest reported net income:

**Summary:**

The top companies by net income are primarily in the technology and finance sectors. Alphabet (GOOG/GOOGL) leads, followed by Berkshire Hathaway (BRK-A/BRK-B), Apple (AAPL), and Microsoft (MSFT).

**Top Stocks by Net Income:**

| Ticker | Symbol | Net Income (USD) | Date |

|--------|--------|------------------|------------|

| GOOG | GOOG | 100,118,000,000 | 2025-02-05 |

| GOOGL | GOOGL | 100,118,000,000 | 2025-02-05 |

| BRK-B | BRK-B | 96,223,000,000 | 2024-02-26 |

| BRK-A | BRK-A | 96,223,000,000 | 2024-02-26 |

| AAPL | AAPL | 93,736,000,000 | 2024-11-01 |

**Insights:**

- The data includes both Class A and Class B shares for Alphabet and Berkshire Hathaway

- Most recent data is from early 2025 reporting period

- Error Handling: The script includes basic error handling, but you may want to enhance it for production use. Consider adding more robust logging and retry mechanisms.

- Rate Limiting: Be mindful of the EOD Historical Data API's rate limits. Implement appropriate delays or batching to avoid exceeding the limits.

- Data Validation: The script filters numeric fields before inserting into BigQuery. You may want to add more comprehensive data validation to ensure data quality.

- BigQuery Costs: Be aware of BigQuery's pricing model. Storing and querying large datasets can incur costs. Optimize your queries and data storage strategies to minimize expenses.

- MongoDB Connection: Ensure your MongoDB instance is running and accessible from the machine running the script.

- Security: Protect your API keys and service account credentials. Do not hardcode them in your code or commit them to your repository. Use environment variables and secure storage mechanisms.

Contributions are welcome! Please submit a pull request with your changes.

This project supports using Ollama as a local LLM alternative to cloud-based services. To use Ollama:

-

Install Ollama:

- Download and install from ollama.com/download

- Follow the installation instructions for your operating system

-

Pull your desired model:

ollama pull llama2 # or mistral, codellama, etc. -

Ensure Ollama is running:

- The service should be running on http://localhost:11434

- Verify by checking if the service responds:

curl http://localhost:11434/api/tags

-

Configure the environment:

- Make sure your

.envfile includes:OLLAMA_SERVICE_URL="http://localhost:11434" - The system will automatically use Ollama for queries when configured

- Make sure your

For Tasks:

Click tags to check more tools for each tasksFor Jobs:

Alternative AI tools for FinAnGPT-Pro

Similar Open Source Tools

FinAnGPT-Pro

FinAnGPT-Pro is a financial data downloader and AI query system that downloads quarterly and annual financial data for stocks from EOD Historical Data, storing it in MongoDB and Google BigQuery. It includes an AI-powered natural language interface for querying financial data. Users can set up the tool by following the prerequisites and setup instructions provided in the README. The tool allows users to download financial data for all stocks in a watchlist or for a single stock, query financial data using a natural language interface, and receive responses in a structured format. Important considerations include error handling, rate limiting, data validation, BigQuery costs, MongoDB connection, and security measures for API keys and credentials.

RooFlow

RooFlow is a VS Code extension that enhances AI-assisted development by providing persistent project context and optimized mode interactions. It reduces token consumption and streamlines workflow by integrating Architect, Code, Test, Debug, and Ask modes. The tool simplifies setup, offers real-time updates, and provides clearer instructions through YAML-based rule files. It includes components like Memory Bank, System Prompts, VS Code Integration, and Real-time Updates. Users can install RooFlow by downloading specific files, placing them in the project structure, and running an insert-variables script. They can then start a chat, select a mode, interact with Roo, and use the 'Update Memory Bank' command for synchronization. The Memory Bank structure includes files for active context, decision log, product context, progress tracking, and system patterns. RooFlow features persistent context, real-time updates, mode collaboration, and reduced token consumption.

graphiti

Graphiti is a framework for building and querying temporally-aware knowledge graphs, tailored for AI agents in dynamic environments. It continuously integrates user interactions, structured and unstructured data, and external information into a coherent, queryable graph. The framework supports incremental data updates, efficient retrieval, and precise historical queries without complete graph recomputation, making it suitable for developing interactive, context-aware AI applications.

RainbowGPT

RainbowGPT is a versatile tool that offers a range of functionalities, including Stock Analysis for financial decision-making, MySQL Management for database navigation, and integration of AI technologies like GPT-4 and ChatGlm3. It provides a user-friendly interface suitable for all skill levels, ensuring seamless information flow and continuous expansion of emerging technologies. The tool enhances adaptability, creativity, and insight, making it a valuable asset for various projects and tasks.

langmanus

LangManus is a community-driven AI automation framework that combines language models with specialized tools for tasks like web search, crawling, and Python code execution. It implements a hierarchical multi-agent system with agents like Coordinator, Planner, Supervisor, Researcher, Coder, Browser, and Reporter. The framework supports LLM integration, search and retrieval tools, Python integration, workflow management, and visualization. LangManus aims to give back to the open-source community and welcomes contributions in various forms.

code2prompt

Code2Prompt is a powerful command-line tool that generates comprehensive prompts from codebases, designed to streamline interactions between developers and Large Language Models (LLMs) for code analysis, documentation, and improvement tasks. It bridges the gap between codebases and LLMs by converting projects into AI-friendly prompts, enabling users to leverage AI for various software development tasks. The tool offers features like holistic codebase representation, intelligent source tree generation, customizable prompt templates, smart token management, Gitignore integration, flexible file handling, clipboard-ready output, multiple output options, and enhanced code readability.

DevDocs

DevDocs is a platform designed to simplify the process of digesting technical documentation for software engineers and developers. It automates the extraction and conversion of web content into markdown format, making it easier for users to access and understand the information. By crawling through child pages of a given URL, DevDocs provides a streamlined approach to gathering relevant data and integrating it into various tools for software development. The tool aims to save time and effort by eliminating the need for manual research and content extraction, ultimately enhancing productivity and efficiency in the development process.

deep-research

Deep Research is a lightning-fast tool that uses powerful AI models to generate comprehensive research reports in just a few minutes. It leverages advanced 'Thinking' and 'Task' models, combined with an internet connection, to provide fast and insightful analysis on various topics. The tool ensures privacy by processing and storing all data locally. It supports multi-platform deployment, offers support for various large language models, web search functionality, knowledge graph generation, research history preservation, local and server API support, PWA technology, multi-key payload support, multi-language support, and is built with modern technologies like Next.js and Shadcn UI. Deep Research is open-source under the MIT License.

run-gemini-cli

run-gemini-cli is a GitHub Action that integrates Gemini into your development workflow via the Gemini CLI. It acts as an autonomous agent for routine coding tasks and an on-demand collaborator. Use it for GitHub pull request reviews, triaging issues, code analysis, and more. It provides automation, on-demand collaboration, extensibility with tools, and customization options.

company-research-agent

Agentic Company Researcher is a multi-agent tool that generates comprehensive company research reports by utilizing a pipeline of AI agents to gather, curate, and synthesize information from various sources. It features multi-source research, AI-powered content filtering, real-time progress streaming, dual model architecture, modern React frontend, and modular architecture. The tool follows an agentic framework with specialized research and processing nodes, leverages separate models for content generation, uses a content curation system for relevance scoring and document processing, and implements a real-time communication system via WebSocket connections. Users can set up the tool quickly using the provided setup script or manually, and it can also be deployed using Docker and Docker Compose. The application can be used for local development and deployed to various cloud platforms like AWS Elastic Beanstalk, Docker, Heroku, and Google Cloud Run.

TaskWeaver

TaskWeaver is a code-first agent framework designed for planning and executing data analytics tasks. It interprets user requests through code snippets, coordinates various plugins to execute tasks in a stateful manner, and preserves both chat history and code execution history. It supports rich data structures, customized algorithms, domain-specific knowledge incorporation, stateful execution, code verification, easy debugging, security considerations, and easy extension. TaskWeaver is easy to use with CLI and WebUI support, and it can be integrated as a library. It offers detailed documentation, demo examples, and citation guidelines.

Local-File-Organizer

The Local File Organizer is an AI-powered tool designed to help users organize their digital files efficiently and securely on their local device. By leveraging advanced AI models for text and visual content analysis, the tool automatically scans and categorizes files, generates relevant descriptions and filenames, and organizes them into a new directory structure. All AI processing occurs locally using the Nexa SDK, ensuring privacy and security. With support for multiple file types and customizable prompts, this tool aims to simplify file management and bring order to users' digital lives.

restai

RestAI is an AIaaS (AI as a Service) platform that allows users to create and consume AI agents (projects) using a simple REST API. It supports various types of agents, including RAG (Retrieval-Augmented Generation), RAGSQL (RAG for SQL), inference, vision, and router. RestAI features automatic VRAM management, support for any public LLM supported by LlamaIndex or any local LLM supported by Ollama, a user-friendly API with Swagger documentation, and a frontend for easy access. It also provides evaluation capabilities for RAG agents using deepeval.

AI-Solana_Bot

MevBot Solana is an advanced trading bot for the Solana blockchain with an interactive and user-friendly interface. It offers features like scam token scanning, automatic network connection, and focus on trading $TRUMP and $MELANIA tokens. Users can set stop-loss and take-profit thresholds, filter tokens based on market cap, and configure purchase amounts. The bot requires a starting balance of at least 3 SOL for optimal performance. It can be managed through a main menu in VS Code and requires prerequisites like Git, Node.js, and VS Code for installation and usage.

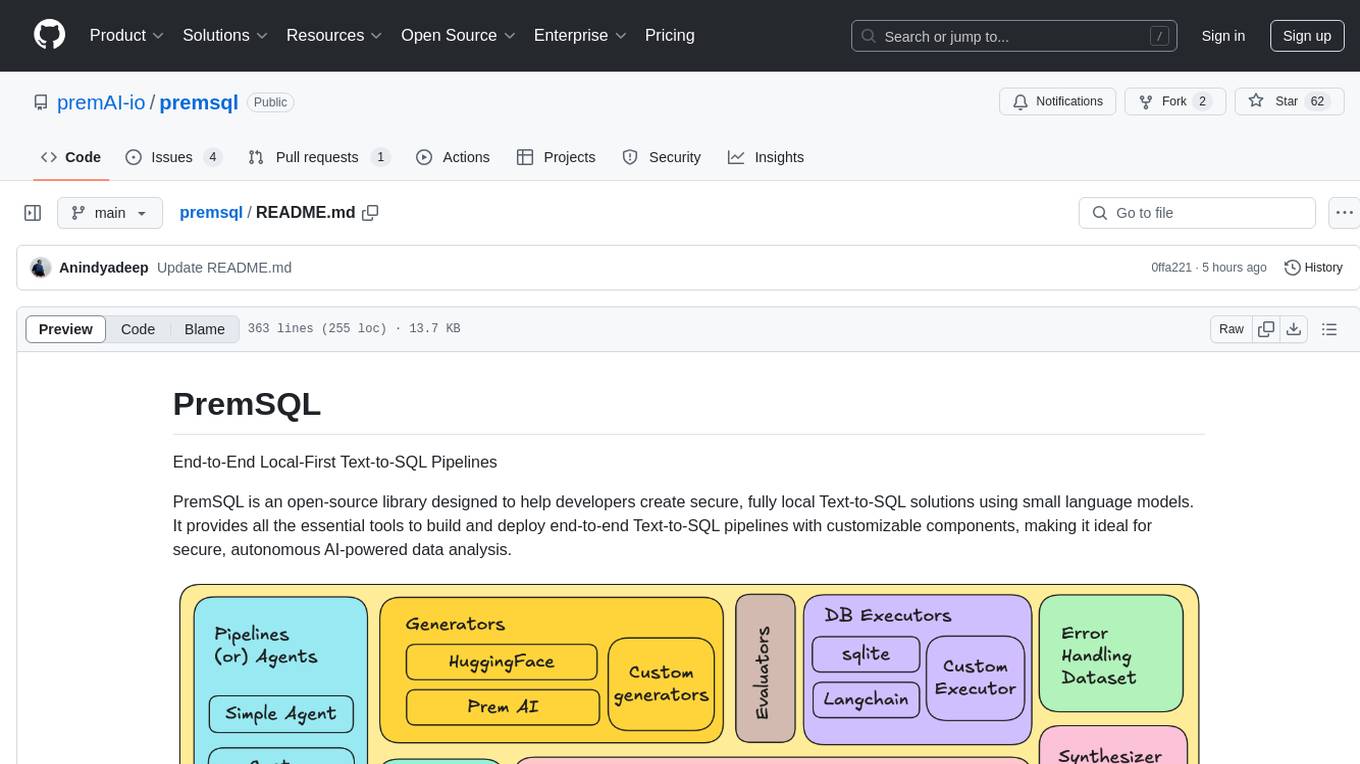

premsql

PremSQL is an open-source library designed to help developers create secure, fully local Text-to-SQL solutions using small language models. It provides essential tools for building and deploying end-to-end Text-to-SQL pipelines with customizable components, ideal for secure, autonomous AI-powered data analysis. The library offers features like Local-First approach, Customizable Datasets, Robust Executors and Evaluators, Advanced Generators, Error Handling and Self-Correction, Fine-Tuning Support, and End-to-End Pipelines. Users can fine-tune models, generate SQL queries from natural language inputs, handle errors, and evaluate model performance against predefined metrics. PremSQL is extendible for customization and private data usage.

youtube_summarizer

YouTube AI Summarizer is a modern Next.js-based tool for AI-powered YouTube video summarization. It allows users to generate concise summaries of YouTube videos using various AI models, with support for multiple languages and summary styles. The application features flexible API key requirements, multilingual support, flexible summary modes, a smart history system, modern UI/UX design, and more. Users can easily input a YouTube URL, select language, summary type, and AI model, and generate summaries with real-time progress tracking. The tool offers a clean, well-structured summary view, history dashboard, and detailed history view for past summaries. It also provides configuration options for API keys and database setup, along with technical highlights, performance improvements, and a modern tech stack.

For similar tasks

FinAnGPT-Pro

FinAnGPT-Pro is a financial data downloader and AI query system that downloads quarterly and annual financial data for stocks from EOD Historical Data, storing it in MongoDB and Google BigQuery. It includes an AI-powered natural language interface for querying financial data. Users can set up the tool by following the prerequisites and setup instructions provided in the README. The tool allows users to download financial data for all stocks in a watchlist or for a single stock, query financial data using a natural language interface, and receive responses in a structured format. Important considerations include error handling, rate limiting, data validation, BigQuery costs, MongoDB connection, and security measures for API keys and credentials.

LLM-Navigation

LLM-Navigation is a repository dedicated to documenting learning records related to large models, including basic knowledge, prompt engineering, building effective agents, model expansion capabilities, security measures against prompt injection, and applications in various fields such as AI agent control, browser automation, financial analysis, 3D modeling, and tool navigation using MCP servers. The repository aims to organize and collect information for personal learning and self-improvement through AI exploration.

Scrapegraph-LabLabAI-Hackathon

ScrapeGraphAI is a web scraping Python library that utilizes LangChain, LLM, and direct graph logic to create scraping pipelines. Users can specify the information they want to extract, and the library will handle the extraction process. The tool is designed to simplify web scraping tasks by providing a streamlined and efficient approach to data extraction.

DevDocs

DevDocs is a platform designed to simplify the process of digesting technical documentation for software engineers and developers. It automates the extraction and conversion of web content into markdown format, making it easier for users to access and understand the information. By crawling through child pages of a given URL, DevDocs provides a streamlined approach to gathering relevant data and integrating it into various tools for software development. The tool aims to save time and effort by eliminating the need for manual research and content extraction, ultimately enhancing productivity and efficiency in the development process.

For similar jobs

weave

Weave is a toolkit for developing Generative AI applications, built by Weights & Biases. With Weave, you can log and debug language model inputs, outputs, and traces; build rigorous, apples-to-apples evaluations for language model use cases; and organize all the information generated across the LLM workflow, from experimentation to evaluations to production. Weave aims to bring rigor, best-practices, and composability to the inherently experimental process of developing Generative AI software, without introducing cognitive overhead.

agentcloud

AgentCloud is an open-source platform that enables companies to build and deploy private LLM chat apps, empowering teams to securely interact with their data. It comprises three main components: Agent Backend, Webapp, and Vector Proxy. To run this project locally, clone the repository, install Docker, and start the services. The project is licensed under the GNU Affero General Public License, version 3 only. Contributions and feedback are welcome from the community.

oss-fuzz-gen

This framework generates fuzz targets for real-world `C`/`C++` projects with various Large Language Models (LLM) and benchmarks them via the `OSS-Fuzz` platform. It manages to successfully leverage LLMs to generate valid fuzz targets (which generate non-zero coverage increase) for 160 C/C++ projects. The maximum line coverage increase is 29% from the existing human-written targets.

LLMStack

LLMStack is a no-code platform for building generative AI agents, workflows, and chatbots. It allows users to connect their own data, internal tools, and GPT-powered models without any coding experience. LLMStack can be deployed to the cloud or on-premise and can be accessed via HTTP API or triggered from Slack or Discord.

VisionCraft

The VisionCraft API is a free API for using over 100 different AI models. From images to sound.

kaito

Kaito is an operator that automates the AI/ML inference model deployment in a Kubernetes cluster. It manages large model files using container images, avoids tuning deployment parameters to fit GPU hardware by providing preset configurations, auto-provisions GPU nodes based on model requirements, and hosts large model images in the public Microsoft Container Registry (MCR) if the license allows. Using Kaito, the workflow of onboarding large AI inference models in Kubernetes is largely simplified.

PyRIT

PyRIT is an open access automation framework designed to empower security professionals and ML engineers to red team foundation models and their applications. It automates AI Red Teaming tasks to allow operators to focus on more complicated and time-consuming tasks and can also identify security harms such as misuse (e.g., malware generation, jailbreaking), and privacy harms (e.g., identity theft). The goal is to allow researchers to have a baseline of how well their model and entire inference pipeline is doing against different harm categories and to be able to compare that baseline to future iterations of their model. This allows them to have empirical data on how well their model is doing today, and detect any degradation of performance based on future improvements.

Azure-Analytics-and-AI-Engagement

The Azure-Analytics-and-AI-Engagement repository provides packaged Industry Scenario DREAM Demos with ARM templates (Containing a demo web application, Power BI reports, Synapse resources, AML Notebooks etc.) that can be deployed in a customer’s subscription using the CAPE tool within a matter of few hours. Partners can also deploy DREAM Demos in their own subscriptions using DPoC.