AI-HealthCare-Assistant

🏥 AI-powered healthcare web app | Symptom detection · Nearest doctor search · Blockchain medical records · X-Ray diagnosis · Face ID auth | Built with React, Node.js, Python, Flask, MongoDB & Ethereum smart contracts | MERN Stack · Machine Learning

Stars: 127

NearestDoctor is a full-stack, AI-powered healthcare web application that bridges the gap between patients and medical professionals. It combines machine learning, blockchain, facial recognition, and natural language processing into a seamless platform that covers the entire patient journey from first symptom to secured medical record. Features include AI symptom detection and a chatbot, location-based doctor search, smart appointment scheduling, blockchain medical records, X-ray lung diagnosis, a mental health test, doctor identity verification, multi-mode authentication, blogs and web scraping search, a paramedical e-shop, an AI voice assistant, and integrated payments. The platform follows a microservices-inspired MERN architecture: a React.js frontend, a Node/Express REST API, Python Flask AI/ML microservices, a MongoDB database, Ethereum smart contracts, TensorFlow for face recognition and X-ray diagnosis, the Nanonets AI OCR API, Dialogflow for the chatbot, the Google Maps API, the ALAN SDK for the voice assistant, and the Stripe API for payments.

README:

Your intelligent, AI-powered companion for smarter, faster, and safer healthcare.


- About The Project

- Key Features

- Tech Stack

- Architecture

- Getting Started

- How It Works

- Screenshots

- Roadmap

- Achievements

- Contributing

- Team

- Acknowledgments

NearestDoctor is a full-stack, AI-powered healthcare web application that bridges the gap between patients and medical professionals. It combines machine learning, blockchain, facial recognition, and natural language processing into a seamless platform that covers the entire patient journey from first symptom to secured medical record.

💡 "Every patient deserves professional medical guidance, instantly and securely — no matter where they are."

Despite advances in digital health, patients still face three core challenges:

- 🔍 Finding the right doctor: it's hard to know which specialist fits your symptoms.

- 📆 Getting an appointment: scheduling is manual, slow, and rarely location-aware.

- 🔐 Securing medical records: records are fragmented, inaccessible, and vulnerable to breaches.

NearestDoctor was designed from the ground up to solve all three.

| Feature | Description |
|---|---|
| 🤖 AI Symptom Detection & Chatbot | Patients chat with a Dialogflow-powered bot, describe symptoms, and receive an illness prediction with the right specialist recommendation. |
| 📍 Location-Based Doctor Search | Find the nearest doctor or first available appointment in real time. |
| 📅 Smart Appointment Scheduling | Book with the nearest doctor or earliest available slot directly through the chatbot flow. |
| 🔗 Blockchain Medical Records | Patient data is stored on Ethereum using smart contracts. Every update creates a verifiable, immutable transaction block. |
| 🫁 X-Ray Lung Diagnosis | Doctors upload chest X-rays; a TensorFlow pre-trained model predicts COVID-19, tuberculosis, or pneumonia in seconds. |
| 🧠 Mental Health Test | Patients take a guided quiz to assess their mental wellbeing, then receive a result and curated article suggestions. |
| 🪪 Doctor Identity Verification | Doctors verify their real identity using a national card ID processed through the Nanonets AI OCR API before they can register. |
| 🤳 Multi-Mode Authentication | Three login options: LinkedIn OAuth, username/password, or Face ID (TensorFlow deep learning model). |
| 📰 Blogs & Web Scraping Search | Doctors publish articles; an integrated web scraping engine lets users search for external medical content by keyword. |
| 🛒 Paramedical E-Shop | ML-based patient behavioral analysis drives personalized product recommendations. |
| 🎙️ AI Voice Assistant | ALAN SDK integration lets users navigate the platform entirely by voice commands. |
| 💳 Integrated Payments | Stripe API handles doctor subscription plans and patient service payments. |
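
The location-based search in the table above comes down to ranking doctors by distance from the patient. The README does not show how NearestDoctor computes this (it only lists the Google Maps API for maps and location), so the following is a minimal sketch that assumes a plain haversine great-circle distance. The `Doctor` record, the `haversine_km` and `nearest_doctor` helpers, and the sample coordinates are all hypothetical.

```python
import math
from dataclasses import dataclass

EARTH_RADIUS_KM = 6371.0  # mean Earth radius


@dataclass(frozen=True)
class Doctor:
    # Hypothetical record; the project's real data model is not shown in the README.
    name: str
    specialty: str
    lat: float
    lon: float


def haversine_km(lat1, lon1, lat2, lon2):
    """Great-circle distance in kilometres between two (lat, lon) points in degrees."""
    phi1, phi2 = math.radians(lat1), math.radians(lat2)
    dphi = math.radians(lat2 - lat1)
    dlmb = math.radians(lon2 - lon1)
    a = math.sin(dphi / 2) ** 2 + math.cos(phi1) * math.cos(phi2) * math.sin(dlmb / 2) ** 2
    return 2 * EARTH_RADIUS_KM * math.asin(math.sqrt(a))


def nearest_doctor(doctors, lat, lon, specialty=None):
    """Return the closest doctor, optionally filtered by specialty, or None if no match."""
    candidates = [d for d in doctors if specialty is None or d.specialty == specialty]
    if not candidates:
        return None
    return min(candidates, key=lambda d: haversine_km(lat, lon, d.lat, d.lon))


# Made-up sample data for illustration only.
doctors = [
    Doctor("Dr. A", "cardiology", 36.8065, 10.1815),
    Doctor("Dr. B", "cardiology", 35.8256, 10.6370),
    Doctor("Dr. C", "dermatology", 36.8100, 10.1800),
]

match = nearest_doctor(doctors, 36.81, 10.18, specialty="cardiology")
print(match.name if match else "no doctor found")
```

Passing the specialty recommended by the chatbot as the filter narrows the ranking to relevant doctors; a production system would more likely rely on a geospatial index or a routing service than on a linear scan.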

| Layer | Technology | Version |
|---|---|---|
| Frontend | React.js | v18 |
| Backend API | Node.js + Express.js | Node v16.13.1 / Express v4.17.1 |
| AI/ML Microservices | Python + Flask | Flask v2.1 |
| Database | MongoDB | v5.9.1 |
| Blockchain | Ethereum Smart Contracts | - |
| Face Recognition | TensorFlow (pre-trained deep learning model) | - |
| ID Card OCR | Nanonets AI API | - |
| Chatbot (Symptoms + Appointments) | Dialogflow | - |
| X-Ray Diagnosis | TensorFlow (pre-trained model) | - |
| Maps & Location | Google Maps API | - |
| Voice Assistant | ALAN SDK | - |
| Payments | Stripe API | - |
| OAuth | LinkedIn OAuth | - |
| Languages | JavaScript, Python, HTML5, CSS | - |

The platform follows a microservices-inspired MERN architecture:

- The React.js frontend communicates with the Node/Express REST API for core operations.

- AI features run as independent Flask microservices (symptom detection, face recognition, X-ray diagnosis, OCR, behavioral analysis).

- MongoDB stores application data: users, appointments, blogs, and shop inventory.

- Ethereum smart contracts handle patient medical records with immutability and permission-based access control.
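
The immutability property mentioned in the last bullet can be illustrated without Ethereum. What follows is a simplified hash-chain sketch in plain Python, not the project's smart contracts (which the README does not show): each record update stores the hash of the previous block, so editing an earlier entry breaks every later link. The `block_hash` function, the `RecordChain` class, and the sample payloads are hypothetical.

```python
import hashlib
import json

GENESIS_HASH = "0" * 64  # placeholder "previous hash" for the first block


def block_hash(index, prev_hash, payload):
    """SHA-256 over a canonical JSON encoding of the block's contents."""
    body = json.dumps(
        {"index": index, "prev_hash": prev_hash, "payload": payload},
        sort_keys=True,
    ).encode("utf-8")
    return hashlib.sha256(body).hexdigest()


class RecordChain:
    """Append-only list of record updates linked together by hashes."""

    def __init__(self):
        self.blocks = []

    def append(self, payload):
        prev_hash = self.blocks[-1]["hash"] if self.blocks else GENESIS_HASH
        index = len(self.blocks)
        block = {
            "index": index,
            "prev_hash": prev_hash,
            "payload": payload,
            "hash": block_hash(index, prev_hash, payload),
        }
        self.blocks.append(block)
        return block

    def is_valid(self):
        """Recompute every hash and check each link to the previous block."""
        prev_hash = GENESIS_HASH
        for i, block in enumerate(self.blocks):
            if block["index"] != i or block["prev_hash"] != prev_hash:
                return False
            if block["hash"] != block_hash(i, prev_hash, block["payload"]):
                return False
            prev_hash = block["hash"]
        return True


# Made-up record updates for illustration only.
chain = RecordChain()
chain.append({"patient": "p-001", "note": "initial consultation"})
chain.append({"patient": "p-001", "note": "chest X-ray uploaded"})
print(chain.is_valid())
```

On a real blockchain the same check is enforced by network consensus rather than by a single process, which is what makes the history hard to rewrite.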

- Node.js v16+ and npm or yarn

- MongoDB running locally or via a cloud URI (v5.9.1+)

- Python 3.8+ for AI/ML microservices

- Git

- Clone the repository

      git clone https://github.com/ahlem-phantom/AI-HealthCare-Assistant.git
      cd AI-HealthCare-Assistant

- Install Node.js dependencies

      npm install
      # or
      yarn install

- Install Python dependencies (for AI services)

      pip install -r requirements.txt

- Configure environment variables

  Create a .env file in the root directory:

      MONGO_URI=your_mongodb_connection_string
      JWT_SECRET=your_jwt_secret
      PORT=5000
      STRIPE_SECRET_KEY=your_stripe_key
      GOOGLE_MAPS_API_KEY=your_maps_key
      DIALOGFLOW_PROJECT_ID=your_dialogflow_project_id
      NANONETS_API_KEY=your_nanonets_key

- Start the main development server

      npm run development

- Start Flask AI microservices (in a separate terminal)

      cd py-side
      flask run

- Open the app at http://localhost:3000 🎉
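
Before starting the servers, it can help to confirm that the .env file defines every variable listed under "Configure environment variables". The check below is a minimal, standard-library sketch; the project itself may load the file differently (for example through a dotenv package). `parse_env` and `missing_keys` are hypothetical helpers, and the sample values are placeholders.

```python
REQUIRED_KEYS = [
    "MONGO_URI",
    "JWT_SECRET",
    "PORT",
    "STRIPE_SECRET_KEY",
    "GOOGLE_MAPS_API_KEY",
    "DIALOGFLOW_PROJECT_ID",
    "NANONETS_API_KEY",
]


def parse_env(text):
    """Parse KEY=VALUE lines, skipping blank lines and '#' comments."""
    values = {}
    for raw in text.splitlines():
        line = raw.strip()
        if not line or line.startswith("#") or "=" not in line:
            continue
        key, _, value = line.partition("=")  # split on the first '=' only
        values[key.strip()] = value.strip().strip('"').strip("'")
    return values


def missing_keys(values, required=REQUIRED_KEYS):
    """Return the required keys that are absent or empty, in the listed order."""
    return [key for key in required if not values.get(key)]


# Placeholder content standing in for a partially filled .env file.
sample = """
# partial example
MONGO_URI=mongodb://localhost:27017/example_db
JWT_SECRET="change-me"
PORT=5000
"""

print(missing_keys(parse_env(sample)))
```

To check a real file, read it first, for instance `missing_keys(parse_env(open(".env").read()))`, and fix any reported keys before running the servers.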

- Select Role — choose "Patient" at the landing screen.

- Register & Log In — via username/password or LinkedIn OAuth.

- Describe Symptoms — chat with the Dialogflow AI bot; receive a specialist recommendation.

- Mental Health Check — take the guided quiz and get personalized article suggestions.

- Book an Appointment — schedule with the nearest or first-available doctor via the chatbot and Google Maps.

- Medical Records — your records are securely stored on the blockchain; grant selective access to your chosen doctors.

- Verify Identity — upload your national card ID; Nanonets OCR confirms authenticity in real time.

- Register Face ID — enroll your face using the TensorFlow model for future biometric logins.

- Choose a Plan & Pay — select a subscription tier and complete payment via Stripe.

- Log In — use LinkedIn OAuth, username/password, or a live Face ID camera scan.

- Use the Dashboard — manage appointments, write blogs, run X-ray lung diagnoses (COVID / TB / pneumonia), and access patient records via blockchain with granted permission.
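
The "grant selective access" and "with granted permission" steps above can be pictured as a per-patient allow-list that is checked before any record is returned. The README places this logic in Ethereum smart contracts; the Python class below is only an off-chain illustration of the idea. `RecordAccess` and its methods are hypothetical, and letting patients always read their own records is an assumption.

```python
class RecordAccess:
    """Per-patient allow-list of doctors who may read that patient's records."""

    def __init__(self):
        self._grants = {}  # patient_id -> set of doctor_ids

    def grant(self, patient_id, doctor_id):
        """Patient allows one doctor to read their records."""
        self._grants.setdefault(patient_id, set()).add(doctor_id)

    def revoke(self, patient_id, doctor_id):
        """Patient withdraws a previously granted permission (no-op if absent)."""
        self._grants.get(patient_id, set()).discard(doctor_id)

    def can_read(self, patient_id, requester_id):
        # Assumption: patients can always read their own records.
        if requester_id == patient_id:
            return True
        return requester_id in self._grants.get(patient_id, set())

    def read(self, records, patient_id, requester_id):
        """Return the patient's records or raise PermissionError."""
        if not self.can_read(patient_id, requester_id):
            raise PermissionError(f"{requester_id} has no access to {patient_id}")
        return records.get(patient_id, [])


# Made-up identifiers and records for illustration only.
access = RecordAccess()
records = {"p-001": ["2022-03-01: initial consultation"]}
access.grant("p-001", "dr-17")
print(access.read(records, "p-001", "dr-17"))
```

Revoking access is a single call, `access.revoke("p-001", "dr-17")`, after which the same read raises `PermissionError`.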

- [x] Phase 1: Study & Prototyping

  - Problem definition, state of the art & competitive analysis

  - Feasibility study, wireframes

- [x] Phase 2: Design & Initial Build

  - Data model & physical/logical architecture design

  - Static frontend + first Node.js components

- [x] Phase 3.1: Feature Implementation (V1)

  - Full backend REST API

  - Frontend integration with all services

  - Core AI modules: symptom detection, face ID, OCR verification

- [x] Phase 3.2: Finalization & Deployment (V2)

  - X-ray diagnosis, mental health test, blockchain records

  - Final integration, system testing & deployment

- [ ] v3: Coming Soon

We're actively working on the next major release:

- 🎨 Redesigned UI/UX with a modern design system

- ⚡ Migration from Node.js backend to FastAPI

- 🤖 Integration of LLM models for smarter symptom analysis and conversational AI

- 🧪 Full CI/CD pipeline for automated testing & deployment

- 📦 All libraries and dependencies upgraded to latest stable versions

- 🏗️ Scalable, cloud-ready architecture

🎖️ NearestDoctor was selected among dozens of projects to participate in the 9th Edition Ceremony of Best Engineering Projects of 2022 at Esprit School of Engineering, Tunisia.

Contributions are welcome! Here's how to get started:

- Fork the project

- Create your feature branch

      git checkout -b feature/AmazingFeature

- Commit your changes

      git commit -m "Add AmazingFeature"

- Push to the branch

      git push origin feature/AmazingFeature

- Open a Pull Request

See open issues for ideas and known bugs. Don't forget to ⭐ star the project if you find it useful!

Built with 💕 by AlphaCoders, 5 engineering students from Esprit School of Engineering, Tunisia.

Project Mentor: [email protected]

Important: This project was built in 2022, and several of its core libraries and dependencies are now outdated or deprecated. The codebase across all four modules (react-app/, server/, py side/, and blockchain/) requires updates before it can be safely run in a modern environment.

Known areas that need attention:

- React: the project uses an older setup; migrating to current React + Vite and updating deprecated dependencies is recommended.

- Node.js / Express: dependencies likely have known CVEs; run npm audit and update.

- Python / Flask: AI service dependencies (TensorFlow, OpenCV, etc.) need version pinning and updates.

A full dependency refresh is planned as part of v3. Contributions to modernize the stack are very welcome; see Contributing.

For Tasks:

Click tags to check more tools for each tasksFor Jobs:

Alternative AI tools for AI-HealthCare-Assistant

Similar Open Source Tools

AI-HealthCare-Assistant

NearestDoctor is a full-stack, AI-powered healthcare web application that bridges the gap between patients and medical professionals. It combines machine learning, blockchain, facial recognition, and natural language processing into a seamless platform that covers the entire patient journey from first symptom to secured medical record. The platform offers features like AI symptom detection & chatbot, location-based doctor search, smart appointment scheduling, blockchain medical records, X-ray lung diagnosis, mental health test, doctor identity verification, multi-mode authentication, blogs & web scraping search, paramedical e-shop, AI voice assistant, and integrated payments. The architecture follows a microservices-inspired MERN architecture with React.js frontend, Node/Express REST API, Python Flask AI/ML microservices, MongoDB database, Ethereum smart contracts, TensorFlow for face recognition and X-ray diagnosis, Nanonets AI OCR API, Dialogflow for chatbot, Google Maps API, ALAN SDK for voice assistant, and Stripe API for payments.

claude-code-ultimate-guide

The Claude Code Ultimate Guide is an exhaustive documentation resource that takes users from beginner to power user in using Claude Code. It includes production-ready templates, workflow guides, a quiz, and a cheatsheet for daily use. The guide covers educational depth, methodologies, and practical examples to help users understand concepts and workflows. It also provides interactive onboarding, a repository structure overview, and learning paths for different user levels. The guide is regularly updated and offers a unique 257-question quiz for comprehensive assessment. Users can also find information on agent teams coverage, methodologies, annotated templates, resource evaluations, and learning paths for different roles like junior developer, senior developer, power user, and product manager/devops/designer.

lap

Lap is a lightning-fast, cross-platform photo manager that prioritizes user privacy and local AI processing. It allows users to organize and browse their photos efficiently, with features like natural language search, smart face recognition, and similar image search. Lap does not require importing photos, syncs seamlessly with the file system, and supports multiple libraries. Built for performance with a Rust core and lazy loading, Lap offers a delightful user experience with beautiful design, customization options, and multi-language support. It is a great alternative to cloud-based photo services, offering excellent organization, performance, and no vendor lock-in.

ClashRoyaleBuildABot

Clash Royale Build-A-Bot is a project that allows users to build their own bot to play Clash Royale. It provides an advanced state generator that accurately returns detailed information using cutting-edge technologies. The project includes tutorials for setting up the environment, building a basic bot, and understanding state generation. It also offers updates such as replacing YOLOv5 with YOLOv8 unit model and enhancing performance features like placement and elixir management. The future roadmap includes plans to label more images of diverse cards, add a tracking layer for unit predictions, publish tutorials on Q-learning and imitation learning, release the YOLOv5 training notebook, implement chest opening and card upgrading features, and create a leaderboard for the best bots developed with this repository.

motia

Motia is an AI agent framework designed for software engineers to create, test, and deploy production-ready AI agents quickly. It provides a code-first approach, allowing developers to write agent logic in familiar languages and visualize execution in real-time. With Motia, developers can focus on business logic rather than infrastructure, offering zero infrastructure headaches, multi-language support, composable steps, built-in observability, instant APIs, and full control over AI logic. Ideal for building sophisticated agents and intelligent automations, Motia's event-driven architecture and modular steps enable the creation of GenAI-powered workflows, decision-making systems, and data processing pipelines.

rhesis

Rhesis is a comprehensive test management platform designed for Gen AI teams, offering tools to create, manage, and execute test cases for generative AI applications. It ensures the robustness, reliability, and compliance of AI systems through features like test set management, automated test generation, edge case discovery, compliance validation, integration capabilities, and performance tracking. The platform is open source, emphasizing community-driven development, transparency, extensible architecture, and democratizing AI safety. It includes components such as backend services, frontend applications, SDK for developers, worker services, chatbot applications, and Polyphemus for uncensored LLM service. Rhesis enables users to address challenges unique to testing generative AI applications, such as non-deterministic outputs, hallucinations, edge cases, ethical concerns, and compliance requirements.

mindnlp

MindNLP is an open-source NLP library based on MindSpore. It provides a platform for solving natural language processing tasks, containing many common approaches in NLP. It can help researchers and developers to construct and train models more conveniently and rapidly. Key features of MindNLP include: * Comprehensive data processing: Several classical NLP datasets are packaged into a friendly module for easy use, such as Multi30k, SQuAD, CoNLL, etc. * Friendly NLP model toolset: MindNLP provides various configurable components. It is friendly to customize models using MindNLP. * Easy-to-use engine: MindNLP simplified complicated training process in MindSpore. It supports Trainer and Evaluator interfaces to train and evaluate models easily. MindNLP supports a wide range of NLP tasks, including: * Language modeling * Machine translation * Question answering * Sentiment analysis * Sequence labeling * Summarization MindNLP also supports industry-leading Large Language Models (LLMs), including Llama, GLM, RWKV, etc. For support related to large language models, including pre-training, fine-tuning, and inference demo examples, you can find them in the "llm" directory. To install MindNLP, you can either install it from Pypi, download the daily build wheel, or install it from source. The installation instructions are provided in the documentation. MindNLP is released under the Apache 2.0 license. If you find this project useful in your research, please consider citing the following paper: @misc{mindnlp2022, title={{MindNLP}: a MindSpore NLP library}, author={MindNLP Contributors}, howpublished = {\url{https://github.com/mindlab-ai/mindnlp}}, year={2022} }

rivet

Rivet is a desktop application for creating complex AI agents and prompt chaining, and embedding it in your application. Rivet currently has LLM support for OpenAI GPT-3.5 and GPT-4, Anthropic Claude Instant and Claude 2, [Anthropic Claude 3 Haiku, Sonnet, and Opus](https://www.anthropic.com/news/claude-3-family), and AssemblyAI LeMUR framework for voice data. Rivet has embedding/vector database support for OpenAI Embeddings and Pinecone. Rivet also supports these additional integrations: Audio Transcription from AssemblyAI. Rivet core is a TypeScript library for running graphs created in Rivet. It is used by the Rivet application, but can also be used in your own applications, so that Rivet can call into your own application's code, and your application can call into Rivet graphs.

sktime

sktime is a Python library for time series analysis that provides a unified interface for various time series learning tasks such as classification, regression, clustering, annotation, and forecasting. It offers time series algorithms and tools compatible with scikit-learn for building, tuning, and validating time series models. sktime aims to enhance the interoperability and usability of the time series analysis ecosystem by empowering users to apply algorithms across different tasks and providing interfaces to related libraries like scikit-learn, statsmodels, tsfresh, PyOD, and fbprophet.

atom

Atom is an open-source, self-hosted AI agent platform that allows users to automate workflows by interacting with AI agents. Users can speak or type requests, and Atom's specialty agents can plan, verify, and execute complex workflows across various tech stacks. Unlike SaaS alternatives, Atom runs entirely on the user's infrastructure, ensuring data privacy. The platform offers features such as voice interface, specialty agents for sales, marketing, and engineering, browser and device automation, universal memory and context, agent governance system, deep integrations, dynamic skills, and more. Atom is designed for business automation, multi-agent workflows, and enterprise governance.

prism-insight

PRISM-INSIGHT is a comprehensive stock analysis and trading simulation system based on AI agents. It automatically captures daily surging stocks via Telegram channel, generates expert-level analyst reports, and performs trading simulations. The system utilizes OpenAI GPT-4.1 for in-depth stock analysis and GPT-5 for investment strategy simulation. It also interacts with users via Anthropic Claude for Telegram conversations. The system architecture includes AI analysis agents, stock tracking, PDF conversion, and Telegram bot functionalities. Users can customize criteria for identifying surging stocks, modify AI prompts, and adjust chart styles. The project is open-source under the MIT license, and all investment decisions based on the analysis are the responsibility of the user.

promptolution

Promptolution is a unified, modular framework for prompt optimization designed for researchers and advanced practitioners. It focuses on the optimization stage, providing a clean, transparent, and extensible API for simple prompt optimization for various tasks. It supports many current prompt optimizers, unified LLM backend, response caching, parallelized inference, and detailed logging for post-hoc analysis.

monoscope

Monoscope is an open-source monitoring and observability platform that uses artificial intelligence to understand and monitor systems automatically. It allows users to ingest and explore logs, traces, and metrics in S3 buckets, query in natural language via LLMs, and create AI agents to detect anomalies. Key capabilities include universal data ingestion, AI-powered understanding, natural language interface, cost-effective storage, and zero configuration. Monoscope is designed to reduce alert fatigue, catch issues before they impact users, and provide visibility across complex systems.

Pulse

Pulse is a real-time monitoring tool designed for Proxmox, Docker, and Kubernetes infrastructure. It provides a unified dashboard to consolidate metrics, alerts, and AI-powered insights into a single interface. Suitable for homelabs, sysadmins, and MSPs, Pulse offers core monitoring features, AI-powered functionalities, multi-platform support, security and operations features, and community integrations. Pulse Pro unlocks advanced AI analysis and auto-fix capabilities. The tool is privacy-focused, secure by design, and offers detailed documentation for installation, configuration, security, troubleshooting, and more.

univer

Univer is an isomorphic full-stack framework designed for creating and editing spreadsheets, documents, and slides across web and server. It is highly extensible, high-performance, and can be embedded into applications. Univer offers a wide range of features including formulas, conditional formatting, data validation, collaborative editing, printing, import & export, and more. It supports multiple languages and provides a distraction-free editing experience with a clean interface. Univer is suitable for data analysts, software developers, project managers, content creators, and educators.

AIPex

AIPex is a revolutionary Chrome extension that transforms your browser into an intelligent automation platform. Using natural language commands and AI-powered intelligence, AIPex can automate virtually any browser task - from complex multi-step workflows to simple repetitive actions. It offers features like natural language control, AI-powered intelligence, multi-step automation, universal compatibility, smart data extraction, precision actions, form automation, visual understanding, developer-friendly with extensive API, and lightning-fast execution of automation tasks.

For similar tasks

AI-HealthCare-Assistant

NearestDoctor is a full-stack, AI-powered healthcare web application that bridges the gap between patients and medical professionals. It combines machine learning, blockchain, facial recognition, and natural language processing into a seamless platform that covers the entire patient journey from first symptom to secured medical record. The platform offers features like AI symptom detection & chatbot, location-based doctor search, smart appointment scheduling, blockchain medical records, X-ray lung diagnosis, mental health test, doctor identity verification, multi-mode authentication, blogs & web scraping search, paramedical e-shop, AI voice assistant, and integrated payments. The architecture follows a microservices-inspired MERN architecture with React.js frontend, Node/Express REST API, Python Flask AI/ML microservices, MongoDB database, Ethereum smart contracts, TensorFlow for face recognition and X-ray diagnosis, Nanonets AI OCR API, Dialogflow for chatbot, Google Maps API, ALAN SDK for voice assistant, and Stripe API for payments.

For similar jobs

AI-HealthCare-Assistant

NearestDoctor is a full-stack, AI-powered healthcare web application that bridges the gap between patients and medical professionals. It combines machine learning, blockchain, facial recognition, and natural language processing into a seamless platform that covers the entire patient journey from first symptom to secured medical record. The platform offers features like AI symptom detection & chatbot, location-based doctor search, smart appointment scheduling, blockchain medical records, X-ray lung diagnosis, mental health test, doctor identity verification, multi-mode authentication, blogs & web scraping search, paramedical e-shop, AI voice assistant, and integrated payments. The architecture follows a microservices-inspired MERN architecture with React.js frontend, Node/Express REST API, Python Flask AI/ML microservices, MongoDB database, Ethereum smart contracts, TensorFlow for face recognition and X-ray diagnosis, Nanonets AI OCR API, Dialogflow for chatbot, Google Maps API, ALAN SDK for voice assistant, and Stripe API for payments.

MediCareAI

MediCareAI is an intelligent disease management system powered by AI, designed for patient follow-up and disease tracking. It integrates medical guidelines, AI-powered diagnosis, and document processing to provide comprehensive healthcare support. The system includes features like user authentication, patient management, AI diagnosis, document processing, medical records management, knowledge base system, doctor collaboration platform, and admin system. It ensures privacy protection through automatic PII detection and cleaning for document sharing.

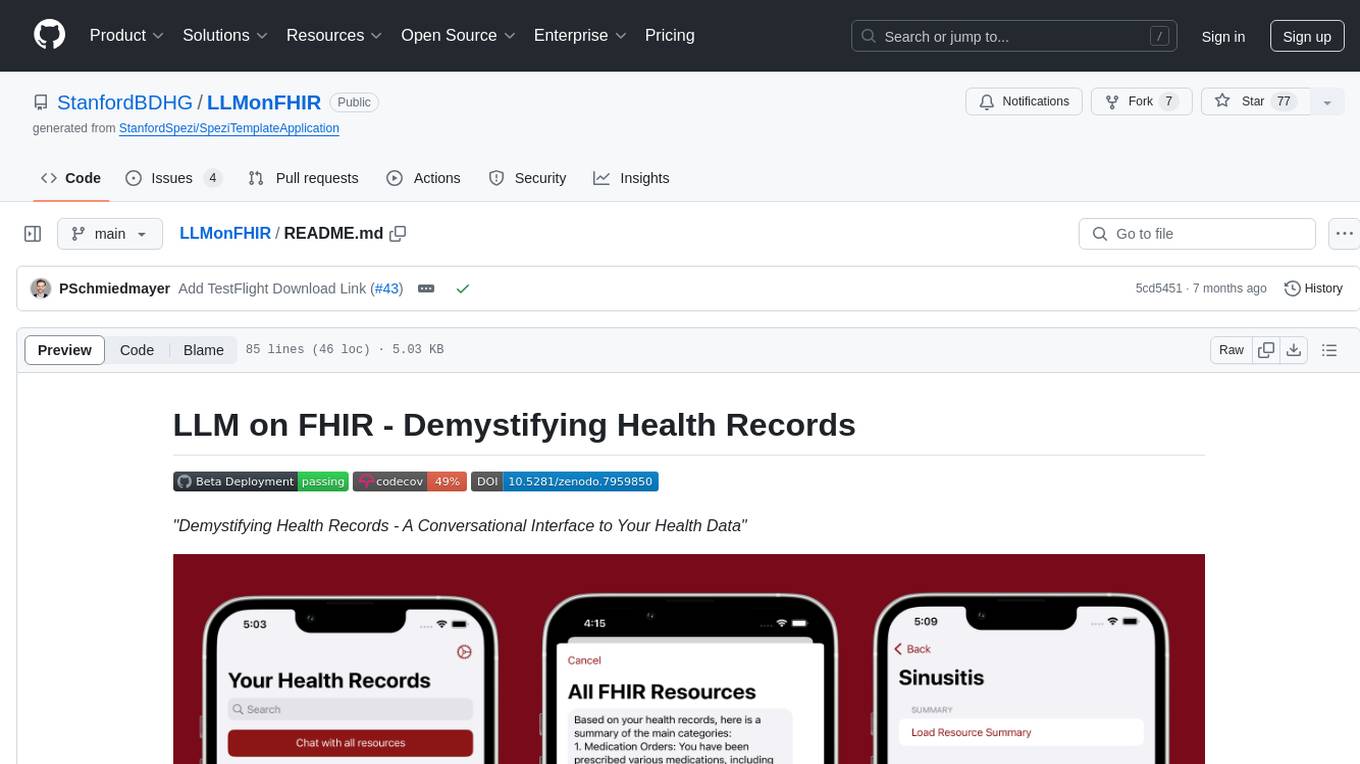

LLMonFHIR

LLMonFHIR is an iOS application that utilizes large language models (LLMs) to interpret and provide context around patient data in the Fast Healthcare Interoperability Resources (FHIR) format. It connects to the OpenAI GPT API to analyze FHIR resources, supports multiple languages, and allows users to interact with their health data stored in the Apple Health app. The app aims to simplify complex health records, provide insights, and facilitate deeper understanding through a conversational interface. However, it is an experimental app for informational purposes only and should not be used as a substitute for professional medical advice. Users are advised to verify information provided by AI models and consult healthcare professionals for personalized advice.

mercure

mercure DICOM Orchestrator is a flexible solution for routing and processing DICOM files. It offers a user-friendly web interface and extensive monitoring functions. Custom processing modules can be implemented as Docker containers. Written in Python, it uses the DCMTK toolkit for DICOM communication. It can be deployed as a single-server installation using Docker Compose or as a scalable cluster installation using Nomad. mercure consists of service modules for receiving, routing, processing, dispatching, cleaning, web interface, and central monitoring.

open-wearables

Open Wearables is an open-source platform that unifies wearable device data from multiple providers and enables AI-powered health insights through natural language automations. It provides a single API for building health applications faster, with embeddable widgets and webhook notifications. Developers can integrate multiple wearable providers, access normalized health data, and build AI-powered insights. The platform simplifies the process of supporting multiple wearables, handling OAuth flows, data mapping, and sync logic, allowing users to focus on product development. Use cases include fitness coaching apps, healthcare platforms, wellness applications, research projects, and personal use.

CareGPT

CareGPT is a medical large language model (LLM) that explores medical data, training, and deployment related research work. It integrates resources, open-source models, rich data, and efficient deployment methods. It supports various medical tasks, including patient diagnosis, medical dialogue, and medical knowledge integration. The model has been fine-tuned on diverse medical datasets to enhance its performance in the healthcare domain.

LLM-for-Healthcare

The repository 'LLM-for-Healthcare' provides a comprehensive survey of large language models (LLMs) for healthcare, covering data, technology, applications, and accountability and ethics. It includes information on various LLM models, training data, evaluation methods, and computation costs. The repository also discusses tasks such as NER, text classification, question answering, dialogue systems, and generation of medical reports from images in the healthcare domain.

Taiyi-LLM

Taiyi (太一) is a bilingual large language model fine-tuned for diverse biomedical tasks. It aims to facilitate communication between healthcare professionals and patients, provide medical information, and assist in diagnosis, biomedical knowledge discovery, drug development, and personalized healthcare solutions. The model is based on the Qwen-7B-base model and has been fine-tuned using rich bilingual instruction data. It covers tasks such as question answering, biomedical dialogue, medical report generation, biomedical information extraction, machine translation, title generation, text classification, and text semantic similarity. The project also provides standardized data formats, model training details, model inference guidelines, and overall performance metrics across various BioNLP tasks.