MobileWorld

Benchmarking Autonomous Mobile Agents in Agent-User Interactive and MCP-Augmented Environments

Stars: 142

MobileWorld is a benchmark for evaluating autonomous mobile agents in realistic scenarios. It offers a challenging online mobile-use benchmark with 201 tasks across 20 mobile applications, including long-horizon tasks, agent-user interaction tasks, and MCP-augmented tasks. The benchmark features a containerized environment, open-source applications, and snapshot-based state management for reproducible results. Task evaluation methods include textual answer verification, backend database verification, local storage inspection, and application callbacks.


While maintaining the same level of rigorous, reproducible evaluation as AndroidWorld, MobileWorld offers a more challenging online mobile-use benchmark by introducing four additional features that better capture real-world agent behavior.

- 🎯 Broad Real-World Coverage: 201 carefully curated tasks across 20 mobile applications

- 🔄 Long-Horizon Tasks: Multi-step reasoning and cross-app workflows

- 👥 Agent-User Interaction: Novel tasks requiring dynamic human-agent collaboration

- 🔧 MCP-Augmented Tasks: Supports the Model Context Protocol (MCP) to evaluate hybrid tool usage

Difficulty comparison between MobileWorld and AndroidWorld

- 2026-01-16: Expanded Model Evaluation Support 🔥

  We have introduced evaluation implementations for the latest frontier models, covering both end-to-end and agentic workflows.

  - 🚀 Leaderboard Upgrade with Multi-Dimensional Filtering: We now support focused comparisons within GUI-Only and User-Interaction categories. This allows for a more balanced assessment of core navigation capabilities, especially for models not yet optimized for MCP-hybrid tool calls or complex user dialogues.

  - 🏆 New Performance Records:

    - GUI-Only Tasks: Seed-1.8 secured the Top-1 spot for end-to-end performance with a 52.1% success rate, followed by Gemini-3-Pro (51.3%) and Claude-4.5-Sonnet (47.8%).

    - Combined GUI & User Interaction: MAI-UI-235B-A22B leads the leaderboard with a 45.4% success rate, surpassing Claude-4.5-Sonnet (43.2%) and Seed-1.8 (40.8%).

  - Supported Models:

    - End-to-End: MAI-UI, Gemini-3-Pro, Claude-4.5-Sonnet, Seed-1.8, and GELab-Zero.

    - Agentic: Gemini-3-Pro, Claude-4.5-Sonnet, and GPT-5.

  - Implementation Details:

    - MAI-UI (code).

    - Gemini-3-Pro: End-to-end version adapted from our agentic framework utilizing Gemini’s built-in grounding (code).

    - Seed-1.8: Adapted from OSWorld for mobile action spaces, as GUI capability is not yet officially supported (code).

    - GELab-Zero (code).

    - Note on GPT-5: While supported for agentic tasks, GPT-5 is currently excluded from end-to-end evaluation as its grounding mechanisms remain unclear for standardized testing.

- 2025-12-29: We released MAI-UI, achieving SOTA performance with a 41.7% success rate in the end-to-end models category on the MobileWorld benchmark.

- 2025-12-23: Initial release of the MobileWorld benchmark. Check out our paper and website 🔥.

- 2025-12-23: Docker image ghcr.io/Tongyi-MAI/mobile_world:latest is available for public use.

- Updates

- Overview

- Installation

- Quick Start

- Available Commands

- Documentation

- Benchmark Statistics

- Contact

- Acknowledgements

- Citation

MobileWorld is a comprehensive benchmark for evaluating autonomous mobile agents in realistic scenarios. Our benchmark features a robust infrastructure and deterministic evaluation methodology:

Containerized Environment

The entire evaluation environment runs in Docker-in-Docker containers, including:

- Rooted Android Virtual Device (AVD)

- Self-hosted application backends

- API server for orchestration

This design eliminates external dependencies and enables consistent deployment across different host systems.

Open-Source Applications

We build stable, reproducible environments using popular open-source projects:

- Mattermost: Enterprise communication (Slack alternative)

- Mastodon: Social media platform (X/Twitter alternative)

- Mall4Uni: E-commerce platform

Self-hosting provides full backend access, enabling precise control over task initialization and deterministic verification.

Snapshot-Based State Management

AVD snapshots capture complete device states, ensuring each task execution begins from identical initial conditions for reproducible results.
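As a rough illustration of snapshot restoration, the sketch below builds the stock Android emulator console command for loading a saved snapshot through adb. It does not show how MobileWorld restores state internally, which the README does not describe; the device serial and the snapshot name are hypothetical placeholders.

```python
import shlex


def snapshot_load_command(serial: str, snapshot_name: str) -> list[str]:
    """Build an adb command asking a running emulator to load a saved snapshot.

    `adb emu <command>` forwards a command to the emulator console;
    `avd snapshot load <name>` is the console command that restores a snapshot.
    """
    return ["adb", "-s", serial, "emu", "avd", "snapshot", "load", snapshot_name]


# Hypothetical serial and snapshot name, used for illustration only.
cmd = snapshot_load_command("emulator-5554", "task_init")
print(shlex.join(cmd))
```

Running the printed command before each task would reset the device to the same saved state, which is the property the snapshot-based design relies on.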

We implement multiple complementary verification methods for reliable assessment:

- Textual Answer Verification: Pattern matching and string comparison for information retrieval tasks

- Backend Database Verification: Direct database queries to validate state changes (messages, posts, etc.)

- Local Storage Inspection: ADB-based inspection of application data (calendar events, email drafts, etc.)

- Application Callbacks: Custom APIs capturing intermediate states for validation

- Docker with privileged container support

- KVM (Kernel-based Virtual Machine) for Android emulator acceleration

- Python 3.12+

- Linux host system (or Windows with WSL2 + KVM enabled). macOS support is in progress.

# Clone the repository
git clone https://github.com/Tongyi-MAI/MobileWorld.git
cd MobileWorld

# Install dependencies with uv
uv sync

Create a .env file from .env.example in the project root:

cp .env.example .env

Edit the .env file and configure the following parameters:

Required for Agent Evaluation:

- API_KEY: Your OpenAI-compatible API key for the agent model

- USER_AGENT_API_KEY: API key for user agent LLM (used in agent-user interactive tasks)

- USER_AGENT_BASE_URL: Base URL for user agent API endpoint

- USER_AGENT_MODEL: Model name for user agent (e.g., gpt-4.1)

Required for MCP-Augmented Tasks:

- DASHSCOPE_API_KEY: DashScope API key for MCP services

- MODELSCOPE_API_KEY: ModelScope API key for MCP services

Example .env file:

API_KEY=your_api_key_for_agent_model
DASHSCOPE_API_KEY=dashscope_api_key_for_mcp
MODELSCOPE_API_KEY=modelscope_api_key_for_mcp
USER_AGENT_API_KEY=your_user_agent_llm_api_key
USER_AGENT_BASE_URL=your_user_agent_base_url
USER_AGENT_MODEL=gpt-4.1

Note:

- MCP API keys are only required if you plan to run MCP-augmented tasks

- User agent settings are only required for agent-user interactive tasks

- See MCP Setup Guide for detailed MCP server configuration
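Before launching a run, it can help to confirm that the .env file contains the keys needed for the task categories you plan to enable. The following is a minimal sketch, not part of the `mw` CLI: `parse_env` and `missing_keys` are hypothetical helpers, and the key groupings simply mirror the notes above.

```python
AGENT_KEYS = ["API_KEY"]
USER_AGENT_KEYS = ["USER_AGENT_API_KEY", "USER_AGENT_BASE_URL", "USER_AGENT_MODEL"]
MCP_KEYS = ["DASHSCOPE_API_KEY", "MODELSCOPE_API_KEY"]


def parse_env(text: str) -> dict[str, str]:
    """Parse simple KEY=VALUE lines, skipping blank lines and # comments."""
    env: dict[str, str] = {}
    for line in text.splitlines():
        line = line.strip()
        if not line or line.startswith("#") or "=" not in line:
            continue
        key, value = line.split("=", 1)
        env[key.strip()] = value.strip()
    return env


def missing_keys(
    env: dict[str, str],
    enable_mcp: bool = False,
    enable_user_interaction: bool = False,
) -> list[str]:
    """Return required keys that are absent or empty for the chosen task categories."""
    required = list(AGENT_KEYS)
    if enable_user_interaction:
        required += USER_AGENT_KEYS
    if enable_mcp:
        required += MCP_KEYS
    return [key for key in required if not env.get(key)]


sample = "API_KEY=abc\n# user agent settings\nUSER_AGENT_MODEL=gpt-4.1\n"
env = parse_env(sample)
print(missing_keys(env, enable_mcp=True, enable_user_interaction=True))
```

In practice you would read the text from the real file, for example with `pathlib.Path(".env").read_text()`.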

sudo uv run mw env check

This command verifies Docker and KVM support, and prompts you to pull the latest mobile_world Docker image if needed.

sudo uv run mw env run --count 5 --launch-interval 20

This launches 5 containerized Android environments with:

- --count 5: Number of parallel containers

- --launch-interval 20: Wait 20 seconds between container launches

sudo uv run mw eval \
    --agent_type qwen3vl \
    --task ALL \
    --max_round 50 \
    --model_name Qwen3-VL-235B-A22B \
    --llm_base_url [openai_compatible_url] \
    --step_wait_time 3 \
    --log_file_root traj_logs/qwen3_vl_logs \
    --enable_mcp \
    --enable_user_interaction

Flags:

- --enable_mcp: Include MCP-augmented tasks in evaluation

- --enable_user_interaction: Include agent-user interaction tasks. Without this flag, only GUI-only tasks are evaluated.
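When scripting several evaluation runs, the same command can be assembled programmatically. The sketch below is one possible wrapper, assuming only the flags shown in the command above; `build_eval_command` is a hypothetical helper, and the base URL in the example is a placeholder rather than a real endpoint.

```python
def build_eval_command(
    model_name: str,
    llm_base_url: str,
    log_file_root: str,
    agent_type: str = "qwen3vl",
    task: str = "ALL",
    max_round: int = 50,
    step_wait_time: int = 3,
    enable_mcp: bool = False,
    enable_user_interaction: bool = False,
) -> list[str]:
    """Assemble the `mw eval` invocation as an argument list for subprocess.run."""
    cmd = [
        "sudo", "uv", "run", "mw", "eval",
        "--agent_type", agent_type,
        "--task", task,
        "--max_round", str(max_round),
        "--model_name", model_name,
        "--llm_base_url", llm_base_url,
        "--step_wait_time", str(step_wait_time),
        "--log_file_root", log_file_root,
    ]
    # The two optional flags widen the task set beyond GUI-only tasks.
    if enable_mcp:
        cmd.append("--enable_mcp")
    if enable_user_interaction:
        cmd.append("--enable_user_interaction")
    return cmd


# Placeholder URL; replace it with your OpenAI-compatible endpoint.
gui_only = build_eval_command(
    "Qwen3-VL-235B-A22B", "http://localhost:8000/v1", "traj_logs/qwen3_vl_logs"
)
print(" ".join(gui_only))
```

Passing the resulting list to `subprocess.run(cmd, check=True)` would start the evaluation; building a list rather than a shell string avoids quoting problems in model names or paths.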

uv run mw logs view --log_dir traj_logs/qwen3_vl_logs

Opens an interactive web-based visualization at http://localhost:8760 to explore task trajectories and results.

MobileWorld provides a comprehensive CLI (mw or mobile-world) with the following commands:

| Command | Description |
|---|---|
| mw env check | Check prerequisites (Docker, KVM) and pull latest image |
| mw env run | Launch Docker container(s) with Android emulators |
| mw env list | List running MobileWorld containers |
| mw env rm | Remove/destroy containers |
| mw env info | Get detailed info about a container |
| mw env restart | Restart the server in a container |
| mw env exec | Open a shell in a container |
| mw eval | Run benchmark evaluation suite |
| mw test | Run a single ad-hoc task for testing |
| mw info task | Display available tasks |
| mw info agent | Display available agents |
| mw info app | Display available apps |
| mw info mcp | Display available MCP tools |
| mw logs view | Launch interactive log viewer |
| mw logs results | Print results summary table |
| mw logs export | Export logs as static HTML site |
| mw device | View live Android device screen |
| mw server | Start the backend API server |

Use mw <command> --help for detailed options.

For detailed documentation, see the docs/ directory:

| Document | Description |
|---|---|
| Development Guide | Dev mode, debugging, container management workflows |
| MCP Setup | Configure MCP servers for external tool integration |
| Windows Setup | WSL2 and KVM setup instructions for Windows |
| AVD Configuration | Customize and save Android Virtual Device snapshots |

For questions, issues, or collaboration inquiries:

- GitHub Issues: Open an issue

- Email: Contact the maintainers

- Discord: Join our Discord server

- WeChat Group: Scan to join our discussion group

We thank Android World and Android-Lab for their open-source contributions. We also thank all the open-source contributors!

If you find MobileWorld useful in your research, please cite our paper:

@misc{kong2025mobileworldbenchmarkingautonomousmobile,
  title={MobileWorld: Benchmarking Autonomous Mobile Agents in Agent-User Interactive, and MCP-Augmented Environments},
  author={Quyu Kong and Xu Zhang and Zhenyu Yang and Nolan Gao and Chen Liu and Panrong Tong and Chenglin Cai and Hanzhang Zhou and Jianan Zhang and Liangyu Chen and Zhidan Liu and Steven Hoi and Yue Wang},
  year={2025},
  eprint={2512.19432},
  archivePrefix={arXiv},
  primaryClass={cs.AI},
  url={https://arxiv.org/abs/2512.19432},
}

If you find MobileWorld helpful, please consider giving us a star ⭐!

For Tasks:

Click tags to check more tools for each tasksFor Jobs:

Alternative AI tools for MobileWorld

Similar Open Source Tools

MobileWorld

MobileWorld is a benchmark for evaluating autonomous mobile agents in realistic scenarios. It offers a challenging online mobile-use benchmark with 201 tasks across 20 mobile applications, including long-horizon tasks, agent-user interaction tasks, and MCP-augmented tasks. The benchmark features a containerized environment, open-source applications, and snapshot-based state management for reproducible results. Task evaluation methods include textual answer verification, backend database verification, local storage inspection, and application callbacks.

MassGen

MassGen is a cutting-edge multi-agent system that leverages the power of collaborative AI to solve complex tasks. It assigns a task to multiple AI agents who work in parallel, observe each other's progress, and refine their approaches to converge on the best solution to deliver a comprehensive and high-quality result. The system operates through an architecture designed for seamless multi-agent collaboration, with key features including cross-model/agent synergy, parallel processing, intelligence sharing, consensus building, and live visualization. Users can install the system, configure API settings, and run MassGen for various tasks such as question answering, creative writing, research, development & coding tasks, and web automation & browser tasks. The roadmap includes plans for advanced agent collaboration, expanded model, tool & agent integration, improved performance & scalability, enhanced developer experience, and a web interface.

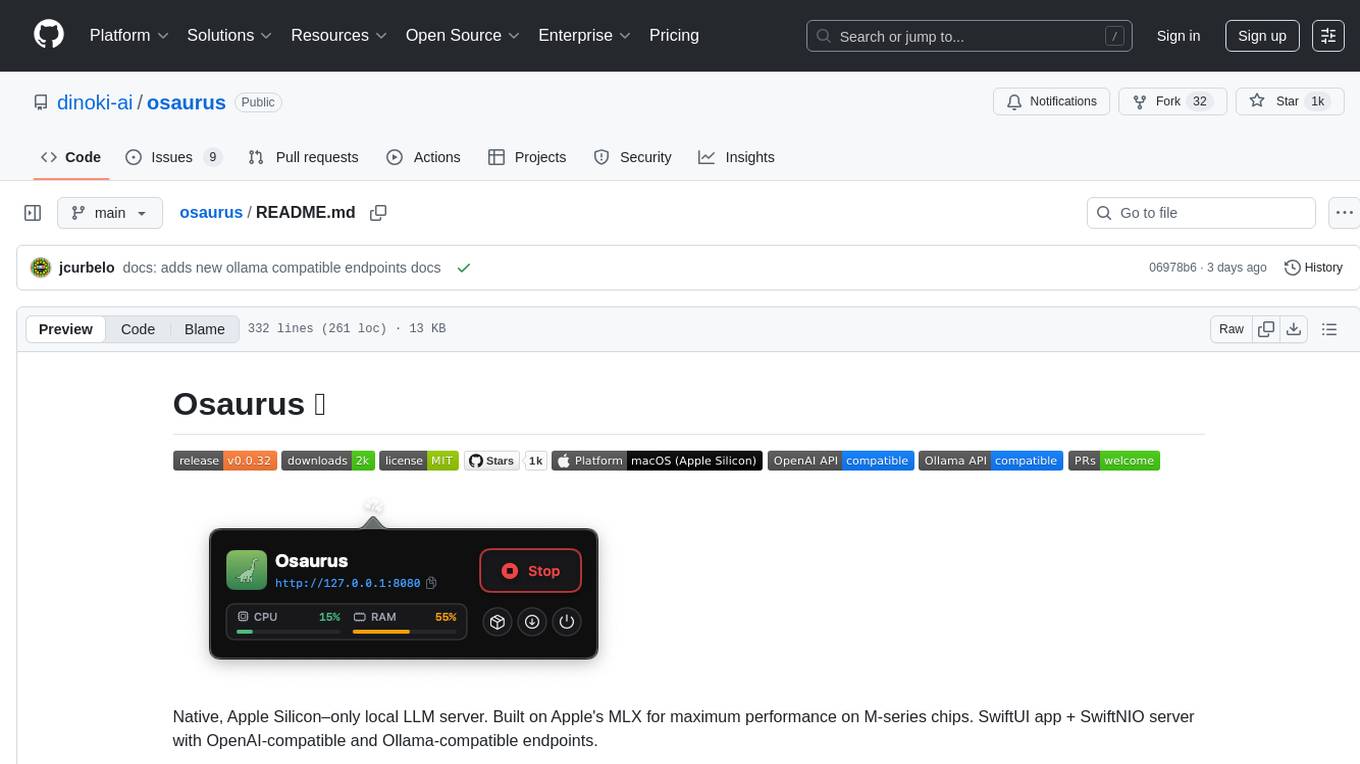

osaurus

Osaurus is a native, Apple Silicon-only local LLM server built on Apple's MLX for maximum performance on M‑series chips. It is a SwiftUI app + SwiftNIO server with OpenAI‑compatible and Ollama‑compatible endpoints. The tool supports native MLX text generation, model management, streaming and non‑streaming chat completions, OpenAI‑compatible function calling, real-time system resource monitoring, and path normalization for API compatibility. Osaurus is designed for macOS 15.5+ and Apple Silicon (M1 or newer) with Xcode 16.4+ required for building from source.

evi-run

evi-run is a powerful, production-ready multi-agent AI system built on Python using the OpenAI Agents SDK. It offers instant deployment, ultimate flexibility, built-in analytics, Telegram integration, and scalable architecture. The system features memory management, knowledge integration, task scheduling, multi-agent orchestration, custom agent creation, deep research, web intelligence, document processing, image generation, DEX analytics, and Solana token swap. It supports flexible usage modes like private, free, and pay mode, with upcoming features including NSFW mode, task scheduler, and automatic limit orders. The technology stack includes Python 3.11, OpenAI Agents SDK, Telegram Bot API, PostgreSQL, Redis, and Docker & Docker Compose for deployment.

AgentNeo

AgentNeo is an advanced, open-source Agentic AI Application Observability, Monitoring, and Evaluation Framework designed to provide deep insights into AI agents, Large Language Model (LLM) calls, and tool interactions. It offers robust logging, visualization, and evaluation capabilities to help debug and optimize AI applications with ease. With features like tracing LLM calls, monitoring agents and tools, tracking interactions, detailed metrics collection, flexible data storage, simple instrumentation, interactive dashboard, project management, execution graph visualization, and evaluation tools, AgentNeo empowers users to build efficient, cost-effective, and high-quality AI-driven solutions.

indexify

Indexify is an open-source engine for building fast data pipelines for unstructured data (video, audio, images, and documents) using reusable extractors for embedding, transformation, and feature extraction. LLM Applications can query transformed content friendly to LLMs by semantic search and SQL queries. Indexify keeps vector databases and structured databases (PostgreSQL) updated by automatically invoking the pipelines as new data is ingested into the system from external data sources. **Why use Indexify** * Makes Unstructured Data **Queryable** with **SQL** and **Semantic Search** * **Real-Time** Extraction Engine to keep indexes **automatically** updated as new data is ingested. * Create **Extraction Graph** to describe **data transformation** and extraction of **embedding** and **structured extraction**. * **Incremental Extraction** and **Selective Deletion** when content is deleted or updated. * **Extractor SDK** allows adding new extraction capabilities, and many readily available extractors for **PDF**, **Image**, and **Video** indexing and extraction. * Works with **any LLM Framework** including **Langchain**, **DSPy**, etc. * Runs on your laptop during **prototyping** and also scales to **1000s of machines** on the cloud. * Works with many **Blob Stores**, **Vector Stores**, and **Structured Databases** * We have even **Open Sourced Automation** to deploy to Kubernetes in production.

AionUi

AionUi is a user interface library for building modern and responsive web applications. It provides a set of customizable components and styles to create visually appealing user interfaces. With AionUi, developers can easily design and implement interactive web interfaces that are both functional and aesthetically pleasing. The library is built using the latest web technologies and follows best practices for performance and accessibility. Whether you are working on a personal project or a professional application, AionUi can help you streamline the UI development process and deliver a seamless user experience.

layra

LAYRA is the world's first visual-native AI automation engine that sees documents like a human, preserves layout and graphical elements, and executes arbitrarily complex workflows with full Python control. It empowers users to build next-generation intelligent systems with no limits or compromises. Built for Enterprise-Grade deployment, LAYRA features a modern frontend, high-performance backend, decoupled service architecture, visual-native multimodal document understanding, and a powerful workflow engine.

handit.ai

Handit.ai is an autonomous engineer tool designed to fix AI failures 24/7. It catches failures, writes fixes, tests them, and ships PRs automatically. It monitors AI applications, detects issues, generates fixes, tests them against real data, and ships them as pull requests—all automatically. Users can write JavaScript, TypeScript, Python, and more, and the tool automates what used to require manual debugging and firefighting.

stenoai

StenoAI is an AI-powered meeting intelligence tool that allows users to record, transcribe, summarize, and query meetings using local AI models. It prioritizes privacy by processing data entirely on the user's device. The tool offers multiple AI models optimized for different use cases, making it ideal for healthcare, legal, and finance professionals with confidential data needs. StenoAI also features a macOS desktop app with a user-friendly interface, making it convenient for users to access its functionalities. The project is open-source and not affiliated with any specific company, emphasizing its focus on meeting-notes productivity and community collaboration.

seline

Seline is a local-first AI desktop application that integrates conversational AI, visual generation tools, vector search, and multi-channel connectivity. It allows users to connect WhatsApp, Telegram, or Slack to create always-on bots with full context and background task delivery. The application supports multi-channel connectivity, deep research mode, local web browsing with Puppeteer, local knowledge and privacy features, visual and creative tools, automation and agents, developer experience enhancements, and more. Seline is actively developed with a focus on improving user experience and functionality.

sandboxed.sh

sandboxed.sh is a self-hosted cloud orchestrator for AI coding agents that provides isolated Linux workspaces with Claude Code, OpenCode & Amp runtimes. It allows users to hand off entire development cycles, run multi-day operations unattended, and keep sensitive data local by analyzing data against scientific literature. The tool features dual runtime support, mission control for remote agent management, isolated workspaces, a git-backed library, MCP registry, and multi-platform support with a web dashboard and iOS app.

specs.md

AI-native development framework with pluggable flows for every use case. Choose from Simple for quick specs, FIRE for adaptive execution, or AI-DLC for full methodology with DDD. Features include flow switcher, active run tracking, intent visualization, and click-to-open spec files. Three flows optimized for different scenarios: Simple for spec generation, prototypes; FIRE for adaptive execution, brownfield, monorepos; AI-DLC for full traceability, DDD, regulated environments. Installable as a VS Code extension for progress tracking. Supported by various AI coding tools like Claude Code, Cursor, GitHub Copilot, and Google Antigravity. Tool agnostic with portable markdown files for agents and specs.

serverless-openclaw

An open-source project, Serverless OpenClaw, that runs OpenClaw on-demand on AWS serverless infrastructure, providing a web UI and Telegram as interfaces. It minimizes cost, offers predictive pre-warming, supports multi-LLM providers, task automation, and one-command deployment. The project aims for cost optimization, easy management, scalability, and security through various features and technologies. It follows a specific architecture and tech stack, with a roadmap for future development phases and estimated costs. The project structure is organized as an npm workspaces monorepo with TypeScript project references, and detailed documentation is available for contributors and users.

ctinexus

CTINexus is a framework that leverages optimized in-context learning of large language models to automatically extract cyber threat intelligence from unstructured text and construct cybersecurity knowledge graphs. It processes threat intelligence reports to extract cybersecurity entities, identify relationships between security concepts, and construct knowledge graphs with interactive visualizations. The framework requires minimal configuration, with no extensive training data or parameter tuning needed.

For similar tasks

MobileWorld

MobileWorld is a benchmark for evaluating autonomous mobile agents in realistic scenarios. It offers a challenging online mobile-use benchmark with 201 tasks across 20 mobile applications, including long-horizon tasks, agent-user interaction tasks, and MCP-augmented tasks. The benchmark features a containerized environment, open-source applications, and snapshot-based state management for reproducible results. Task evaluation methods include textual answer verification, backend database verification, local storage inspection, and application callbacks.

openrl

OpenRL is an open-source general reinforcement learning research framework that supports training for various tasks such as single-agent, multi-agent, offline RL, self-play, and natural language. Developed based on PyTorch, the goal of OpenRL is to provide a simple-to-use, flexible, efficient and sustainable platform for the reinforcement learning research community. It supports a universal interface for all tasks/environments, single-agent and multi-agent tasks, offline RL training with expert dataset, self-play training, reinforcement learning training for natural language tasks, DeepSpeed, Arena for evaluation, importing models and datasets from Hugging Face, user-defined environments, models, and datasets, gymnasium environments, callbacks, visualization tools, unit testing, and code coverage testing. It also supports various algorithms like PPO, DQN, SAC, and environments like Gymnasium, MuJoCo, Atari, and more.

AgentGym

AgentGym is a framework designed to help the AI community evaluate and develop generally-capable Large Language Model-based agents. It features diverse interactive environments and tasks with real-time feedback and concurrency. The platform supports 14 environments across various domains like web navigating, text games, house-holding tasks, digital games, and more. AgentGym includes a trajectory set (AgentTraj) and a benchmark suite (AgentEval) to facilitate agent exploration and evaluation. The framework allows for agent self-evolution beyond existing data, showcasing comparable results to state-of-the-art models.

synthora

Synthora is a lightweight and extensible framework for LLM-driven Agents and ALM research. It aims to simplify the process of building, testing, and evaluating agents by providing essential components. The framework allows for easy agent assembly with a single config, reducing the effort required for tuning and sharing agents. Although in early development stages with unstable APIs, Synthora welcomes feedback and contributions to enhance its stability and functionality.

adk-java

Agent Development Kit (ADK) for Java is an open-source toolkit designed for developers to build, evaluate, and deploy sophisticated AI agents with flexibility and control. It allows defining agent behavior, orchestration, and tool use directly in code, enabling robust debugging, versioning, and deployment anywhere. The toolkit offers a rich tool ecosystem, code-first development approach, and support for modular multi-agent systems, making it ideal for creating advanced AI agents integrated with Google Cloud services.

Agentic-ADK

Agentic ADK is an Agent application development framework launched by Alibaba International AI Business, based on Google-ADK and Ali-LangEngine. It is used for developing, constructing, evaluating, and deploying powerful, flexible, and controllable complex AI Agents. ADK aims to make Agent development simpler and more user-friendly, enabling developers to more easily build, deploy, and orchestrate various Agent applications ranging from simple tasks to complex collaborations.

adk-docs

Agent Development Kit (ADK) is an open-source, code-first toolkit for building, evaluating, and deploying sophisticated AI agents with flexibility and control. It is a flexible and modular framework optimized for Gemini and the Google ecosystem, model-agnostic, deployment-agnostic, and compatible with other frameworks. ADK simplifies agent development by making it feel more like software development, enabling developers to create, deploy, and orchestrate agentic architectures from simple tasks to complex workflows.

adk-python

Agent Development Kit (ADK) is an open-source, code-first Python toolkit for building, evaluating, and deploying sophisticated AI agents with flexibility and control. It is a flexible and modular framework optimized for Gemini and the Google ecosystem, but also compatible with other frameworks. ADK aims to make agent development feel more like software development, enabling developers to create, deploy, and orchestrate agentic architectures ranging from simple tasks to complex workflows.

For similar jobs

weave

Weave is a toolkit for developing Generative AI applications, built by Weights & Biases. With Weave, you can log and debug language model inputs, outputs, and traces; build rigorous, apples-to-apples evaluations for language model use cases; and organize all the information generated across the LLM workflow, from experimentation to evaluations to production. Weave aims to bring rigor, best-practices, and composability to the inherently experimental process of developing Generative AI software, without introducing cognitive overhead.

LLMStack

LLMStack is a no-code platform for building generative AI agents, workflows, and chatbots. It allows users to connect their own data, internal tools, and GPT-powered models without any coding experience. LLMStack can be deployed to the cloud or on-premise and can be accessed via HTTP API or triggered from Slack or Discord.

VisionCraft

The VisionCraft API is a free API for using over 100 different AI models. From images to sound.

kaito

Kaito is an operator that automates the AI/ML inference model deployment in a Kubernetes cluster. It manages large model files using container images, avoids tuning deployment parameters to fit GPU hardware by providing preset configurations, auto-provisions GPU nodes based on model requirements, and hosts large model images in the public Microsoft Container Registry (MCR) if the license allows. Using Kaito, the workflow of onboarding large AI inference models in Kubernetes is largely simplified.

PyRIT

PyRIT is an open access automation framework designed to empower security professionals and ML engineers to red team foundation models and their applications. It automates AI Red Teaming tasks to allow operators to focus on more complicated and time-consuming tasks and can also identify security harms such as misuse (e.g., malware generation, jailbreaking), and privacy harms (e.g., identity theft). The goal is to allow researchers to have a baseline of how well their model and entire inference pipeline is doing against different harm categories and to be able to compare that baseline to future iterations of their model. This allows them to have empirical data on how well their model is doing today, and detect any degradation of performance based on future improvements.

tabby

Tabby is a self-hosted AI coding assistant, offering an open-source and on-premises alternative to GitHub Copilot. It boasts several key features: * Self-contained, with no need for a DBMS or cloud service. * OpenAPI interface, easy to integrate with existing infrastructure (e.g Cloud IDE). * Supports consumer-grade GPUs.

spear

SPEAR (Simulator for Photorealistic Embodied AI Research) is a powerful tool for training embodied agents. It features 300 unique virtual indoor environments with 2,566 unique rooms and 17,234 unique objects that can be manipulated individually. Each environment is designed by a professional artist and features detailed geometry, photorealistic materials, and a unique floor plan and object layout. SPEAR is implemented as Unreal Engine assets and provides an OpenAI Gym interface for interacting with the environments via Python.

Magick

Magick is a groundbreaking visual AIDE (Artificial Intelligence Development Environment) for no-code data pipelines and multimodal agents. Magick can connect to other services and comes with nodes and templates well-suited for intelligent agents, chatbots, complex reasoning systems and realistic characters.