databao-context-engine

Databao Context Engine is an open-source engine that automatically generates governed semantic context from your databases, BI tools, documents, and spreadsheets. It runs locally in your environment and integrates with any LLM to deliver accurate, context-aware answers.

Stars: 51

Databao Context Engine is a Python library that automatically generates governed semantic context from databases, BI tools, documents, and spreadsheets. It helps LLMs give accurate, context-aware answers without manual schema copying or documentation writing. It can be added as a standard Python dependency or used via the Databao CLI (coming soon). Supported data sources include Athena, BigQuery, MySQL, and PDF files, among others, and it works with LLM providers such as Ollama. Users create a domain, configure data sources, build context, and then query the built contexts with search. Context is governed and versioned, and can be served dynamically via an MCP server or exported as a static artifact. Contributions are welcome, and the project is licensed under Apache 2.0.

README:

Semantic context for your LLMs — generated automatically.

No more copying schemas. No manual documentation. Just accurate answers.

Databao Context Engine is a Python library that automatically generates governed semantic context from your databases, BI tools, documents, and spreadsheets.

Use it with any LLM to deliver accurate, context-aware answers — without copying schemas or writing documentation by hand.

You can add Databao Context Engine as a standard Python dependency in your code or via Databao CLI (coming soon).

Your data sources → Context Engine → Unified semantic graph → Any LLM
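The last hop in that flow, handing retrieved context to an LLM, can be sketched in plain Python. This is a minimal illustration, not the library's own API: the `datasource_id` and `context_result` attribute names follow the search example later in this README, while the `SearchResult` dataclass, the `build_prompt` helper, and the `max_chars` budget are hypothetical stand-ins introduced only for this sketch.

```python
from dataclasses import dataclass


@dataclass
class SearchResult:
    """Hypothetical stand-in for one item returned by a context search."""

    datasource_id: str
    context_result: str


def build_prompt(question: str, results: list[SearchResult], max_chars: int = 4000) -> str:
    """Join retrieved context snippets into one prompt within a rough size budget."""
    sections = []
    used = 0
    for result in results:
        section = f"[{result.datasource_id}]\n{result.context_result}"
        if used + len(section) > max_chars:
            break  # stop before the context grows past the budget
        sections.append(section)
        used += len(section)
    context = "\n\n".join(sections)
    return f"Context:\n{context}\n\nQuestion: {question}"


# Made-up sample data standing in for real search results
results = [
    SearchResult("my_postgres_datasource", "orders(id, customer_id, total)"),
    SearchResult("my_postgres_datasource", "customers(id, name, country)"),
]
prompt = build_prompt("Which country has the most orders?", results)
print(prompt)
```

The resulting string can be sent to whichever model you use; a character budget is a crude proxy for token limits, so a real integration would likely count tokens instead.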

| Feature | What it means for you |
|---|---|
| Auto-generated context | Extracts schemas, relationships, and semantics automatically |
| Runs locally | Your data never leaves your environment |
| MCP integration | Works with Claude Desktop, Cursor, and any MCP-compatible tool |
| Multiple sources | Databases, dbt projects, spreadsheets, documents |
| Built-in benchmarks | Measure and improve context quality over time |
| LLM agnostic | OpenAI, Anthropic, Ollama, Gemini: use any model |
| Governed & versioned | Track, version, and share context across your team |
| Dynamic or static | Serve context via MCP server or export as artifact |

Databao Context Engine is available on PyPI and can be installed with uv, pip, or another package manager.

    # with uv
    uv add databao-context-engine

    # or with pip
    pip install databao-context-engine

Supported data sources:

- Athena

- BigQuery

- ClickHouse

- DuckDB

- MSSQL

- MySQL

- PostgreSQL

- Snowflake

- SQLite

- dbt projects

- PDF files

- Markdown and text files

| Provider | Configuration |
|---|---|
| Ollama | `languageModel: OLLAMA`: runs locally, free |

Create a domain:

    import tempfile
    from pathlib import Path

    from databao_context_engine import init_dce_domain

    # Initialize the domain in an existing directory
    domain_manager = init_dce_domain(Path(tempfile.mkdtemp()))

    # Or use an existing project
    from databao_context_engine import DatabaoContextDomainManager

    domain_manager = DatabaoContextDomainManager(domain_dir=Path("path/to/project"))

Configure data sources:

    from databao_context_engine import (
        DatasourceConnectionStatus,
        DatasourceType,
    )

    # Create a new datasource
    postgres_datasource_id = domain_manager.create_datasource_config(
        DatasourceType(full_type="postgres"),
        datasource_name="my_postgres_datasource",
        config_content={
            "connection": {"host": "localhost", "user": "dev", "password": "pass"}
        },
    ).datasource.id

    # Check the connection to the datasource is valid
    check_result = domain_manager.check_datasource_connection()

    assert len(check_result) == 1
    assert check_result[0].datasource_id == postgres_datasource_id
    assert check_result[0].connection_status == DatasourceConnectionStatus.VALID

Build context:

    build_result = domain_manager.build_context()

    assert len(build_result) == 1
    assert build_result[0].datasource_id == postgres_datasource_id
    assert build_result[0].datasource_type == DatasourceType(full_type="postgres")
    assert build_result[0].context_file_path.is_file()

Use the built contexts:

    # Switch to the engine if you're already using a domain_manager
    context_engine = domain_manager.get_engine_for_domain()

    # Or directly create a context engine from the path to your DCE domain
    from databao_context_engine import DatabaoContextEngine

    context_engine = DatabaoContextEngine(domain_dir=Path("path/to/project"))

    # Use the engine to read the contexts that were built
    all_built_contexts = context_engine.get_all_contexts()

    assert len(all_built_contexts) == 1
    assert all_built_contexts[0].datasource_id == postgres_datasource_id

    print(all_built_contexts[0].context)

    # Run a vector similarity search
    results = context_engine.search_context("my search query")

    print(f"Found {len(results)} results for query")
    print(
        "\n\n".join(
            [f"{str(result.datasource_id)}\n{result.context_result}" for result in results]
        )
    )

We’d love your help! Here’s how to get involved:

- ⭐ Star this repo — it helps others find us!

- 🐛 Found a bug? Open an issue

- 💡 Have an idea? We’re all ears — create a feature request

- 👍 Upvote issues you care about — helps us prioritize

- 🔧 Submit a PR

- 📝 Improve docs — typos, examples, tutorials — everything helps!

New to open source? No worries! We're friendly and happy to help you get started. 🌱

For more details, see CONTRIBUTING.

Apache 2.0 — use it however you want. See the LICENSE file for details.

Like Databao Context Engine? Give us a ⭐ — it means a lot!

Alternative AI tools for databao-context-engine

Similar Open Source Tools

ctinexus

CTINexus is a framework that leverages optimized in-context learning of large language models to automatically extract cyber threat intelligence from unstructured text and construct cybersecurity knowledge graphs. It processes threat intelligence reports to extract cybersecurity entities, identify relationships between security concepts, and construct knowledge graphs with interactive visualizations. The framework requires minimal configuration, with no extensive training data or parameter tuning needed.

LEANN

LEANN is an innovative vector database that democratizes personal AI, transforming your laptop into a powerful RAG system that can index and search through millions of documents using 97% less storage than traditional solutions without accuracy loss. It achieves this through graph-based selective recomputation and high-degree preserving pruning, computing embeddings on-demand instead of storing them all. LEANN allows semantic search of file system, emails, browser history, chat history, codebase, or external knowledge bases on your laptop with zero cloud costs and complete privacy. It is a drop-in semantic search MCP service fully compatible with Claude Code, enabling intelligent retrieval without changing your workflow.

logicstamp-context

LogicStamp Context is a static analyzer that extracts deterministic component contracts from TypeScript codebases, providing structured architectural context for AI coding assistants. It helps AI assistants understand architecture by extracting props, hooks, and dependencies without implementation noise. The tool works with React, Next.js, Vue, Express, and NestJS, and is compatible with various AI assistants like Claude, Cursor, and MCP agents. It offers features like watch mode for real-time updates, breaking change detection, and dependency graph creation. LogicStamp Context is a security-first tool that protects sensitive data, runs locally, and is non-opinionated about architectural decisions.

indexify

Indexify is an open-source engine for building fast data pipelines for unstructured data (video, audio, images, and documents) using reusable extractors for embedding, transformation, and feature extraction. LLM applications can query the transformed, LLM-friendly content through semantic search and SQL queries. Indexify keeps vector databases and structured databases (PostgreSQL) updated by automatically invoking the pipelines as new data is ingested into the system from external data sources.

**Why use Indexify**

- Makes unstructured data **queryable** with **SQL** and **semantic search**.
- **Real-time** extraction engine keeps indexes **automatically** updated as new data is ingested.
- Create an **extraction graph** to describe **data transformation** and the extraction of **embeddings** and **structured data**.
- **Incremental extraction** and **selective deletion** when content is deleted or updated.
- **Extractor SDK** allows adding new extraction capabilities, with many readily available extractors for **PDF**, **image**, and **video** indexing and extraction.
- Works with **any LLM framework**, including **Langchain**, **DSPy**, etc.
- Runs on your laptop during **prototyping** and also scales to **1000s of machines** in the cloud.
- Works with many **blob stores**, **vector stores**, and **structured databases**.
- The **automation** to deploy to Kubernetes in production is **open source** as well.

obsei

Obsei is an open-source, low-code, AI powered automation tool that consists of an Observer to collect unstructured data from various sources, an Analyzer to analyze the collected data with various AI tasks, and an Informer to send analyzed data to various destinations. The tool is suitable for scheduled jobs or serverless applications as all Observers can store their state in databases. Obsei is still in alpha stage, so caution is advised when using it in production. The tool can be used for social listening, alerting/notification, automatic customer issue creation, extraction of deeper insights from feedbacks, market research, dataset creation for various AI tasks, and more based on creativity.

inferable

Inferable is an open source platform that helps users build reliable LLM-powered agentic automations at scale. It offers a managed agent runtime, durable tool calling, zero network configuration, multiple language support, and is fully open source under the MIT license. Users can define functions, register them with Inferable, and create runs that utilize these functions to automate tasks. The platform supports Node.js/TypeScript, Go, .NET, and React, and provides SDKs, core services, and bootstrap templates for various languages.

search_with_ai

Build your own conversation-based search with AI, a simple implementation with Node.js & Vue3.

Features:

- Built-in support for LLMs: OpenAI, Google, Lepton, Ollama (free)
- Built-in support for search engines: Bing, Sogou, Google, SearXNG (free)
- Customizable, polished UI
- Dark mode support
- Mobile display support
- Local LLM support with Ollama
- i18n support
- Follow-up Q&A with context

R2R

R2R (RAG to Riches) is a fast and efficient framework for serving high-quality Retrieval-Augmented Generation (RAG) to end users. The framework is designed with customizable pipelines and a feature-rich FastAPI implementation, enabling developers to quickly deploy and scale RAG-based applications. R2R was conceived to bridge the gap between local LLM experimentation and scalable production solutions. **R2R is to LangChain/LlamaIndex what NextJS is to React**. A JavaScript client for R2R deployments is also available.

Key features:

- **🚀 Deploy**: Instantly launch production-ready RAG pipelines with streaming capabilities.
- **🧩 Customize**: Tailor your pipeline with intuitive configuration files.
- **🔌 Extend**: Enhance your pipeline with custom code integrations.
- **⚖️ Autoscale**: Scale your pipeline effortlessly in the cloud using SciPhi.
- **🤖 OSS**: Benefit from a framework developed by the open-source community, designed to simplify RAG deployment.

quantalogic

QuantaLogic is a ReAct framework for building advanced AI agents that seamlessly integrates large language models with a robust tool system. It aims to bridge the gap between advanced AI models and practical implementation in business processes by enabling agents to understand, reason about, and execute complex tasks through natural language interaction. The framework includes features such as ReAct Framework, Universal LLM Support, Secure Tool System, Real-time Monitoring, Memory Management, and Enterprise Ready components.

pentagi

PentAGI is an innovative tool for automated security testing that leverages cutting-edge artificial intelligence technologies. It is designed for information security professionals, researchers, and enthusiasts who need a powerful and flexible solution for conducting penetration tests. The tool provides secure and isolated operations in a sandboxed Docker environment, fully autonomous AI-powered agent for penetration testing steps, a suite of 20+ professional security tools, smart memory system for storing research results, web intelligence for gathering information, integration with external search systems, team delegation system, comprehensive monitoring and reporting, modern interface, API integration, persistent storage, scalable architecture, self-hosted solution, flexible authentication, and quick deployment through Docker Compose.

easy-dataset

Easy Dataset is a specialized application designed to streamline the creation of fine-tuning datasets for Large Language Models (LLMs). It offers an intuitive interface for uploading domain-specific files, intelligently splitting content, generating questions, and producing high-quality training data for model fine-tuning. With Easy Dataset, users can transform domain knowledge into structured datasets that work with any LLM API following the OpenAI format, making the fine-tuning process accessible and efficient.

pyomop

pyomop is a versatile tool designed as an OMOP Swiss Army Knife for working with OHDSI OMOP Common Data Model (CDM) v5.4 or v6 compliant databases using SQLAlchemy as the ORM. It supports converting query results to pandas DataFrames for machine learning pipelines and provides utilities for working with OMOP vocabularies. The tool is lightweight, easy-to-use, and can be used both as a command-line tool and as an imported library in code. It supports SQLite, PostgreSQL, and MySQL databases, LLM-based natural language queries, FHIR to OMOP conversion utilities, and executing QueryLibrary.

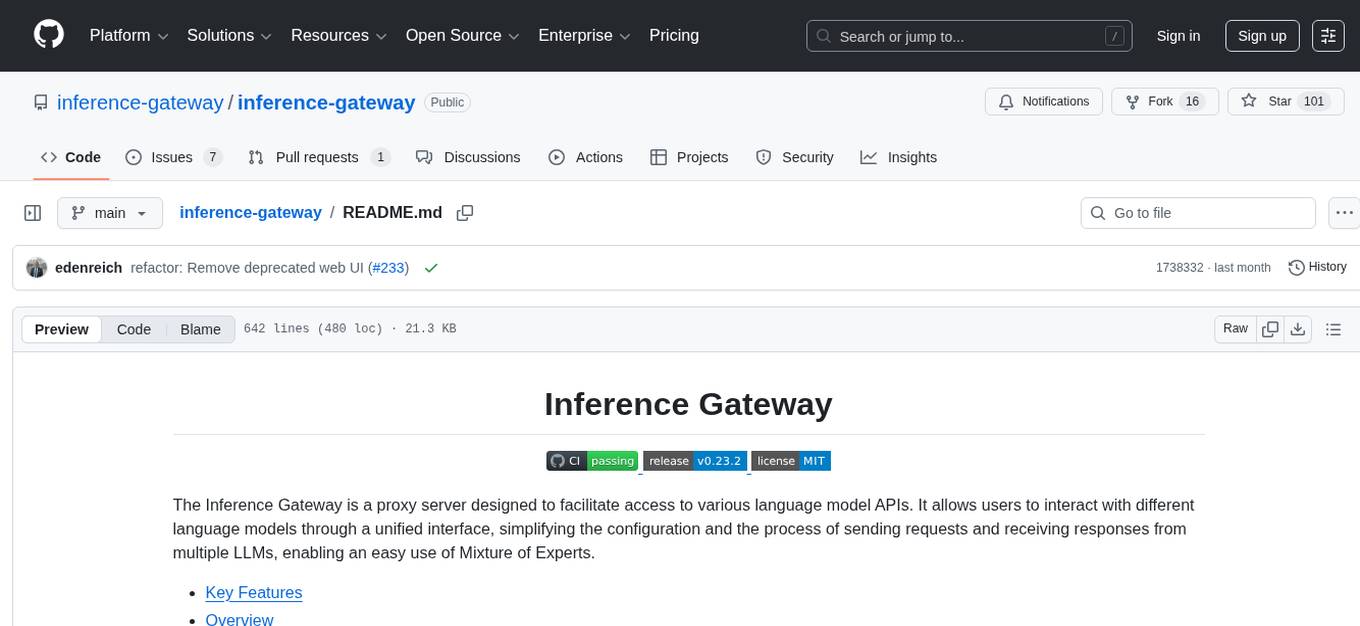

inference-gateway

The Inference Gateway is an open-source proxy server designed to simplify access to various language model APIs. It allows users to interact with different language models through a unified interface, stream tokens in real-time, process images alongside text, and use Docker or Kubernetes for deployment. The gateway supports Model Context Protocol integration, provides metrics and observability features, and is production-ready with minimal resource consumption. It offers middleware control and bypass mechanisms, enabling users to manage capabilities like MCP and vision support. The CLI tool provides status monitoring, interactive chat, configuration management, project initialization, and tool execution functionalities. The project aims to provide a flexible solution for AI Agents, supporting self-hosted LLMs and avoiding vendor lock-in.

uLoopMCP

uLoopMCP is a Unity integration tool designed to let AI drive your Unity project forward with minimal human intervention. It provides a 'self-hosted development loop' where an AI can compile, run tests, inspect logs, and fix issues using tools like compile, run-tests, get-logs, and clear-console. It also allows AI to operate the Unity Editor itself—creating objects, calling menu items, inspecting scenes, and refining UI layouts from screenshots via tools like execute-dynamic-code, execute-menu-item, and capture-window. The tool enables AI-driven development loops to run autonomously inside existing Unity projects.

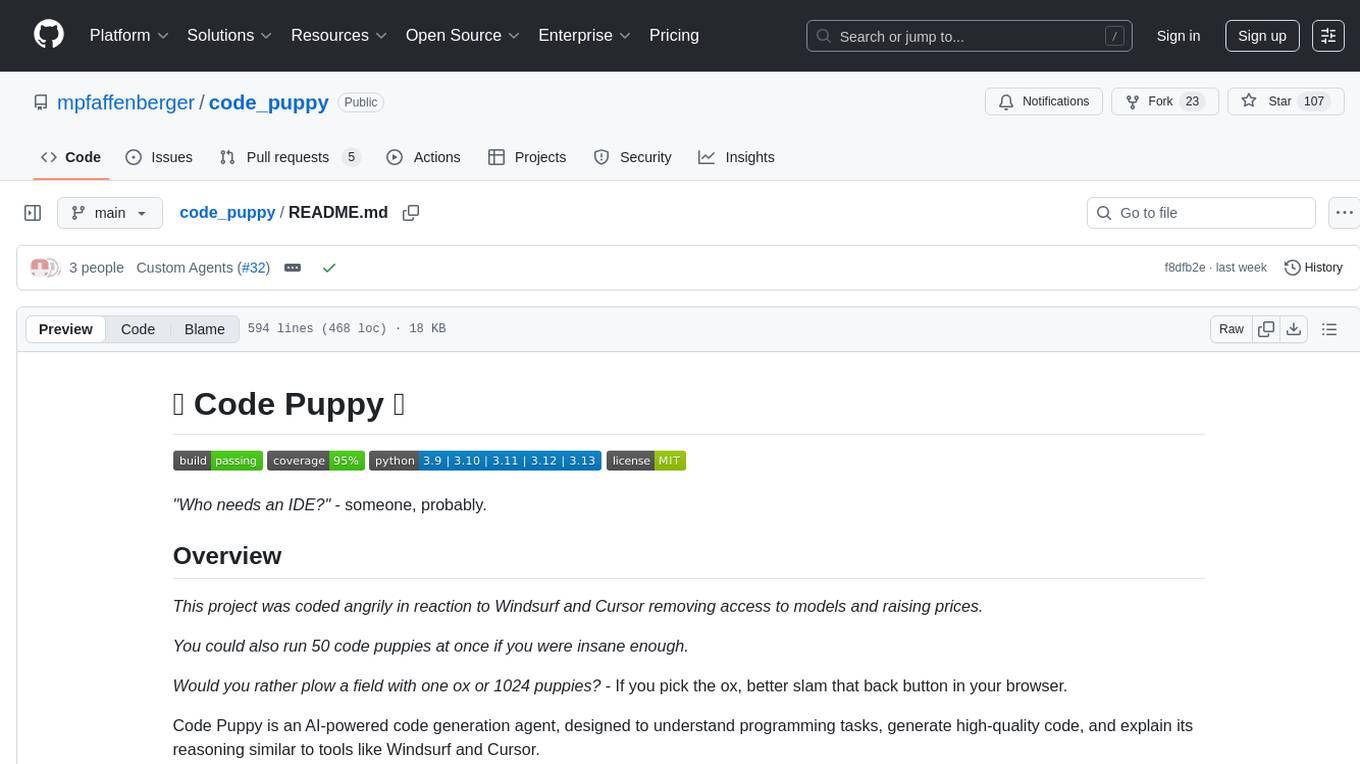

code_puppy

Code Puppy is an AI-powered code generation agent designed to understand programming tasks, generate high-quality code, and explain its reasoning. It supports multi-language code generation, interactive CLI, and detailed code explanations. The tool requires Python 3.9+ and API keys for various models like GPT, Google's Gemini, Cerebras, and Claude. It also integrates with MCP servers for advanced features like code search and documentation lookups. Users can create custom JSON agents for specialized tasks and access a variety of tools for file management, code execution, and reasoning sharing.

For similar tasks

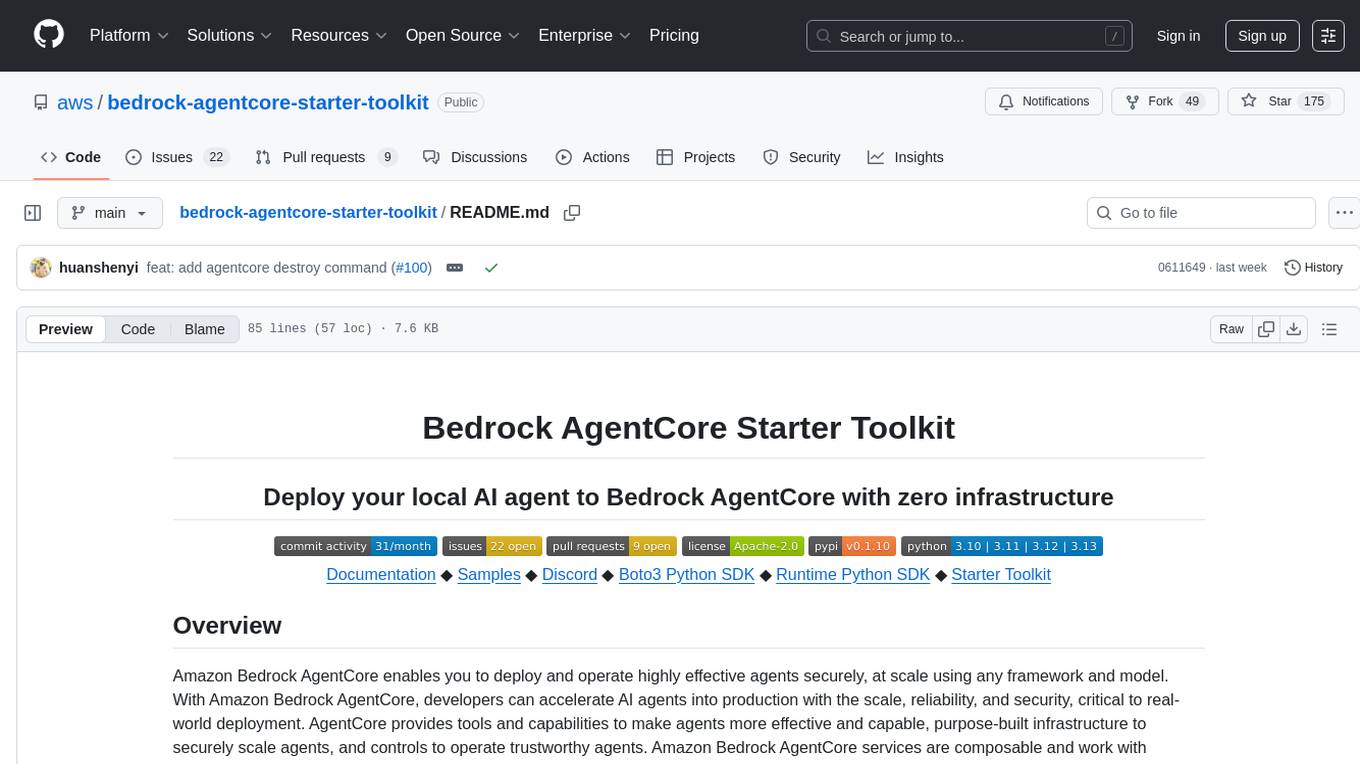

bedrock-agentcore-starter-toolkit

Amazon Bedrock AgentCore Starter Toolkit enables developers to deploy and operate highly effective AI agents securely at scale using any framework and model. It provides tools and capabilities to make agents more effective and capable, purpose-built infrastructure to securely scale agents, and controls to operate trustworthy agents. The toolkit includes modular services like Runtime, Memory, Gateway, Code Interpreter, Browser, Observability, Identity, and Import Agent for seamless migration of existing agents. It is currently in public preview and offers enterprise-grade security and reliability for accelerating AI agent development.

LaVague

LaVague is an open-source Large Action Model framework that uses advanced AI techniques to compile natural language instructions into browser automation code. It leverages Selenium or Playwright for browser actions. Users can interact with LaVague through an interactive Gradio interface to automate web interactions. The tool requires an OpenAI API key for default examples and offers a Playwright integration guide. Contributors can help by working on outlined tasks, submitting PRs, and engaging with the community on Discord. The project roadmap is available to track progress, but users should exercise caution when executing LLM-generated code using 'exec'.

DocsGPT

DocsGPT is an open-source documentation assistant powered by GPT models. It simplifies the process of searching for information in project documentation by allowing developers to ask questions and receive accurate answers. With DocsGPT, users can say goodbye to manual searches and quickly find the information they need. The tool aims to revolutionize project documentation experiences and offers features like live previews, Discord community, guides, and contribution opportunities. It consists of a Flask app, Chrome extension, similarity search index creation script, and a frontend built with Vite and React. Users can quickly get started with DocsGPT by following the provided setup instructions and can contribute to its development by following the guidelines in the CONTRIBUTING.md file. The project follows a Code of Conduct to ensure a harassment-free community environment for all participants. DocsGPT is licensed under MIT and is built with LangChain.

Ollamac

Ollamac is a macOS app designed for interacting with Ollama models. It is optimized for macOS, allowing users to easily use any model from the Ollama library. The app features a user-friendly interface, chat archive for saving interactions, and real-time communication using HTTP streaming technology. Ollamac is open-source, enabling users to contribute to its development and enhance its capabilities. It requires macOS 14 or later and the Ollama system to be installed on the user's Mac with at least one Ollama model downloaded.

npi

NPi is an open-source platform providing Tool-use APIs to empower AI agents with the ability to take action in the virtual world. It is currently under active development, and the APIs are subject to change in future releases. NPi offers a command line tool for installation and setup, along with a GitHub app for easy access to repositories. The platform also includes a Python SDK and examples like Calendar Negotiator and Twitter Crawler. Join the NPi community on Discord to contribute to the development and explore the roadmap for future enhancements.

ain

DeFiChain is a blockchain platform dedicated to enabling decentralized finance with Bitcoin-grade security, strength, and immutability. It offers fast, intelligent, and transparent financial services accessible to everyone. DeFiChain has made significant modifications from Bitcoin Core, including moving to Proof-of-Stake, introducing a masternode model, supporting a community fund, anchoring to the Bitcoin blockchain, and enhancing decentralized financial transaction and opcode support. The platform is under active development with regular releases and contributions are welcomed.

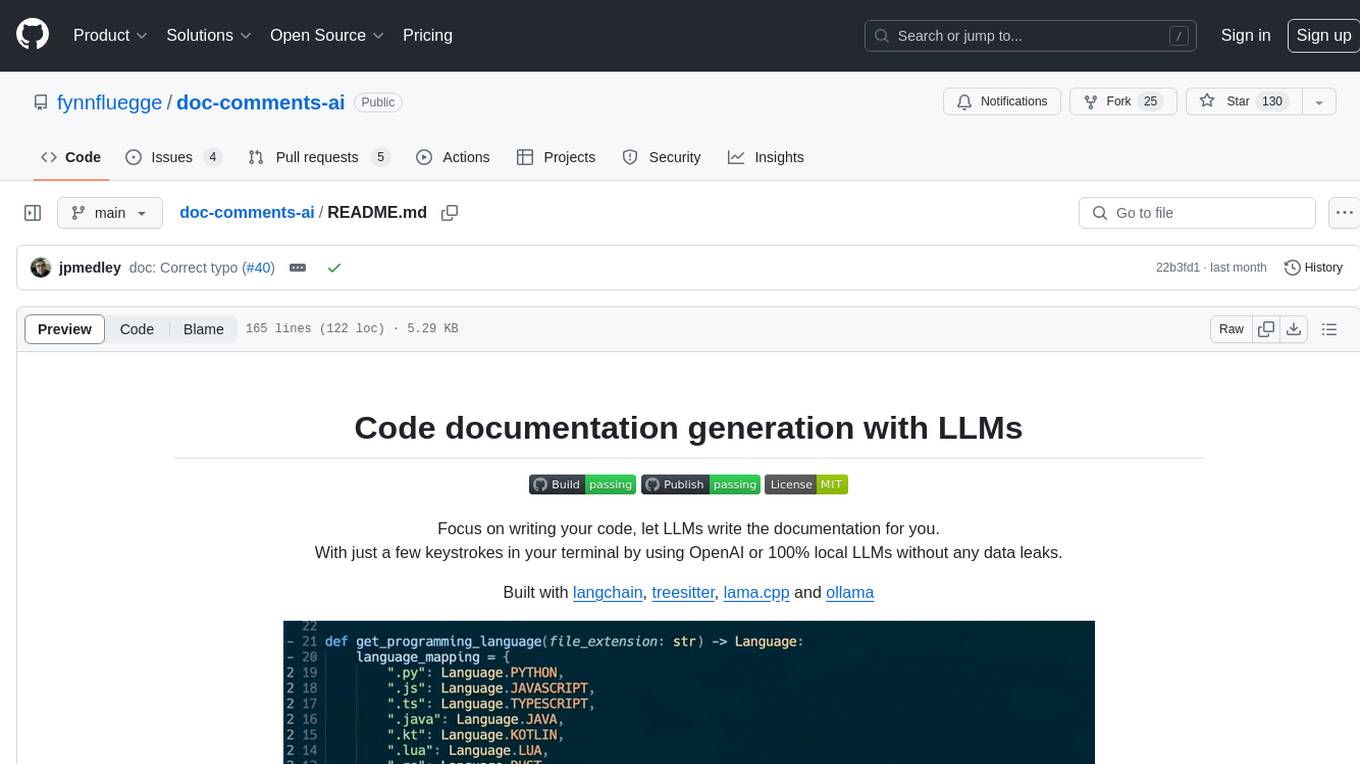

doc-comments-ai

doc-comments-ai is a tool designed to automatically generate code documentation using language models. It allows users to easily create documentation comment blocks for methods in various programming languages such as Python, Typescript, Javascript, Java, Rust, and more. The tool supports both OpenAI and local LLMs, ensuring data privacy and security. Users can generate documentation comments for methods in files, inline comments in method bodies, and choose from different models like GPT-3.5-Turbo, GPT-4, and Azure OpenAI. Additionally, the tool provides support for Treesitter integration and offers guidance on selecting the appropriate model for comprehensive documentation needs.

For similar jobs

sweep

Sweep is an AI junior developer that turns bugs and feature requests into code changes. It automatically handles developer experience improvements like adding type hints and improving test coverage.

teams-ai

The Teams AI Library is a software development kit (SDK) that helps developers create bots that can interact with Teams and Microsoft 365 applications. It is built on top of the Bot Framework SDK and simplifies the process of developing bots that interact with Teams' artificial intelligence capabilities. The SDK is available for JavaScript/TypeScript, .NET, and Python.

ai-guide

This guide is dedicated to Large Language Models (LLMs) that you can run on your home computer. It assumes your PC is a lower-end, non-gaming setup.

classifai

Supercharge WordPress Content Workflows and Engagement with Artificial Intelligence. Tap into leading cloud-based services like OpenAI, Microsoft Azure AI, Google Gemini and IBM Watson to augment your WordPress-powered websites. Publish content faster while improving SEO performance and increasing audience engagement. ClassifAI integrates Artificial Intelligence and Machine Learning technologies to lighten your workload and eliminate tedious tasks, giving you more time to create original content that matters.

chatbot-ui

Chatbot UI is an open-source AI chat app that allows users to create and deploy their own AI chatbots. It is easy to use and can be customized to fit any need. Chatbot UI is perfect for businesses, developers, and anyone who wants to create a chatbot.

BricksLLM

BricksLLM is a cloud native AI gateway written in Go. Currently, it provides native support for OpenAI, Anthropic, Azure OpenAI and vLLM. BricksLLM aims to provide enterprise-level infrastructure that can power any LLM production use case. Here are some use cases for BricksLLM:

- Set LLM usage limits for users on different pricing tiers
- Track LLM usage on a per-user and per-organization basis
- Block or redact requests containing PII
- Improve LLM reliability with failovers, retries and caching
- Distribute API keys with rate limits and cost limits for internal development/production use cases
- Distribute API keys with rate limits and cost limits for students

uAgents

uAgents is a Python library developed by Fetch.ai that allows for the creation of autonomous AI agents. These agents can perform various tasks on a schedule or take action on various events. uAgents are easy to create and manage, and they are connected to a fast-growing network of other uAgents. They are also secure, with cryptographically secured messages and wallets.

griptape

Griptape is a modular Python framework for building AI-powered applications that securely connect to your enterprise data and APIs. It offers developers the ability to maintain control and flexibility at every step. Griptape's core components include Structures (Agents, Pipelines, and Workflows), Tasks, Tools, Memory (Conversation Memory, Task Memory, and Meta Memory), Drivers (Prompt and Embedding Drivers, Vector Store Drivers, Image Generation Drivers, Image Query Drivers, SQL Drivers, Web Scraper Drivers, and Conversation Memory Drivers), Engines (Query Engines, Extraction Engines, Summary Engines, Image Generation Engines, and Image Query Engines), and additional components (Rulesets, Loaders, Artifacts, Chunkers, and Tokenizers). Griptape enables developers to create AI-powered applications with ease and efficiency.