groqbook

Groqbook: Generate entire books in seconds using Groq and Llama3

Stars: 628

Groqbook is a streamlit app that quickly generates entire books from a one-line prompt using Llama3 on Groq. It focuses on nonfiction books, generating chapters within seconds by utilizing Llama3-8b and Llama3-70b models. The tool currently uses section titles to create chapter content, with plans to expand to full book context for fiction books. Users can download the book contents in a text file, and the app supports markdown styling with tables and code for an aesthetic book display.

README:

Groqbook is a Streamlit app that scaffolds the creation of books from a one-line prompt using Llama3 on Groq. It works well on nonfiction books and generates each chapter within seconds. The app mixes Llama3-8b and Llama3-70b, using the larger model to generate the book's structure and the smaller one to write the content. Currently, the model uses only the section title as context when generating chapter content. In the future, this will be expanded to the fuller context of the book so that Groqbook can generate quality fiction books as well.
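The two-model flow described above can be sketched roughly as follows. This is a minimal illustration, not Groqbook's actual code: the `complete` callable is a hypothetical hook that would wrap a chat-completion call to Groq, the prompt wording is invented, and the model identifiers are assumed names that should be checked against Groq's current model list.

```python
import json
from typing import Callable, Dict

# Assumed Groq model identifiers; verify against the current model list.
STRUCTURE_MODEL = "llama3-70b-8192"
CONTENT_MODEL = "llama3-8b-8192"

# complete(model, prompt) -> text. Hypothetical hook; in a real app this
# would wrap a chat-completion request to the Groq API.
Complete = Callable[[str, str], str]


def generate_book(topic: str, complete: Complete) -> Dict[str, str]:
    """Return a mapping of section title -> generated section text."""
    # Step 1: the larger model drafts the structure as a JSON array of titles.
    outline_prompt = (
        "Return only a JSON array of section titles for a nonfiction book about: "
        + topic
    )
    titles = json.loads(complete(STRUCTURE_MODEL, outline_prompt))

    # Step 2: the smaller model writes each section from its title alone,
    # mirroring the "section title is the only context" limitation.
    book = {}
    for title in titles:
        book[title] = complete(CONTENT_MODEL, "Write the section titled: " + title)
    return book
```

Passing the model call in as a parameter keeps the sketch testable without network access; a stub that returns canned text is enough to exercise the control flow.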

Demo of Groqbook fast generation of book content

Second Part of Demo of Groqbook

Demo of Groqbook downloading markdown-styled book

- 📖 Scaffolded prompting that strategically switches between Llama3-70b and Llama3-8b to balance speed and quality

- 🖊️ Uses markdown styling to create an aesthetic book on the streamlit app that includes tables and code

- 📂 Allows user to download a text file with the entire book contents
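As a rough illustration of the markdown and download features listed above, generated sections could be assembled into a single markdown string like this. The function name `book_to_markdown` and the heading layout are assumptions made for this sketch, not Groqbook's actual implementation.

```python
from typing import Dict


def book_to_markdown(title: str, sections: Dict[str, str]) -> str:
    """Join a book title and its sections into one markdown document."""
    parts = ["# " + title]
    for heading, body in sections.items():
        # Each section becomes a second-level heading followed by its text.
        parts.append("## " + heading)
        parts.append(body.strip())
    # Blank lines between blocks keep headings and paragraphs separate.
    return "\n\n".join(parts) + "\n"
```

In a Streamlit app, a string like this could be passed as the `data` argument of `st.download_button` to offer the book as a text file.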

| Example | Prompt |
|---|---|
| LLM Basics | The Basics of Large Language Models |
| Data Structures and Algorithms | Data Structures and Algorithms in Java |

[!IMPORTANT] To use Groqbook, you can use the hosted version at groqbook.streamlit.app. Alternatively, you can run Groqbook locally with Streamlit using the quickstart instructions.

To use Groqbook, you can use the hosted version at groqbook.streamlit.app.

Alternatively, you can run Groqbook locally with Streamlit.

First, you can set your Groq API key in the environment variables:

export GROQ_API_KEY="gsk_yA..."

This is an optional step that allows you to skip setting the Groq API key later in the streamlit app.
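One way an app could honor this optional step is to check the environment first and fall back to a key typed into the UI. The sketch below is an assumption about how such a lookup might work, not the app's actual logic; `resolve_api_key` and its parameters are hypothetical names.

```python
import os
from typing import Mapping, Optional


def resolve_api_key(
    entered: Optional[str] = None,
    env: Mapping[str, str] = os.environ,
) -> Optional[str]:
    """Prefer GROQ_API_KEY from the environment, else a user-entered key."""
    for candidate in (env.get("GROQ_API_KEY"), entered):
        # Skip missing or whitespace-only values.
        if candidate and candidate.strip():
            return candidate.strip()
    return None
```

Returning `None` when neither source has a usable key lets the caller decide whether to prompt the user again.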

Next, you can set up a virtual environment and install the dependencies.

python3 -m venv venv

source venv/bin/activate # Bash

venv\Scripts\activate.bat # Windows

pip3 install -r requirements.txt

Windows users may also need to install GTK3:

https://github.com/tschoonj/GTK-for-Windows-Runtime-Environment-Installer?tab=readme-ov-file

Finally, you can run the streamlit app.

python3 -m streamlit run main.py

- Streamlit

- Llama3 on Groq Cloud

Groqbook may generate inaccurate information or placeholder content. It should be used to generate books for entertainment purposes only.

Improvements through PRs are welcome!

May 29th, 2024:

Demo of Groqbook's Generation Statistics

June 8th, 2024:

Download Books as Styled PDFs

- Ability to title books which shows on downloads

- Ability to save books to Google Drive

- Optional seed content field to input existing notes

Alternative AI tools for groqbook

Similar Open Source Tools

groqnotes

Groqnotes is a streamlit app that helps users generate organized lecture notes from transcribed audio using Groq's Whisper API. It utilizes Llama3-8b and Llama3-70b models to structure and create content quickly. The app offers markdown styling for aesthetic notes, allows downloading notes as text or PDF files, and strategically switches between models for speed and quality balance. Users can access the hosted version at groqnotes.streamlit.app or run it locally with streamlit by setting up the Groq API key and installing dependencies.

GenAI_Agents

GenAI Agents is a comprehensive repository for developing and implementing Generative AI (GenAI) agents, ranging from simple conversational bots to complex multi-agent systems. It serves as a valuable resource for learning, building, and sharing GenAI agents, offering tutorials, implementations, and a platform for showcasing innovative agent creations. The repository covers a wide range of agent architectures and applications, providing step-by-step tutorials, ready-to-use implementations, and regular updates on advancements in GenAI technology.

Topaz-Video-AI

Topaz-Video-AI is a software tool designed to enhance video quality and provide various editing features. Users can utilize this tool to improve the visual appeal of their videos by applying filters, adjusting colors, and enhancing details. The software offers a user-friendly interface and a range of customization options to cater to different editing needs. Despite potential triggers from antivirus programs, Topaz-Video-AI is safe to use and has been tested by numerous users. By following the provided instructions, users can easily download, install, and run the software to enhance their video content.

Topaz-Photo-AI

Topaz-Photo-AI is a software tool designed to enhance and improve the quality of photos using artificial intelligence technology. Users can easily download, install, and run the software to apply various enhancements to their images. The tool provides a user-friendly interface and a range of features to help users enhance their photos with just a few simple steps. With Topaz-Photo-AI, users can achieve professional-level results in photo editing without the need for advanced skills or knowledge.

ai_summer

AI Summer is a repository focused on providing workshops and resources for developing foundational skills in generative AI models and transformer models. The repository offers practical applications for inferencing and training, with a specific emphasis on understanding and utilizing advanced AI chat models like BingGPT. Participants are encouraged to engage in interactive programming environments, decide on projects to work on, and actively participate in discussions and breakout rooms. The workshops cover topics such as generative AI models, retrieval-augmented generation, building AI solutions, and fine-tuning models. The goal is to equip individuals with the necessary skills to work with AI technologies effectively and securely, both locally and in the cloud.

WaveRobloxExecutor

Wave Executor is a cutting-edge script executor tailored for Roblox enthusiasts, offering AI integration for seamless script development, ad-free premium features, and 24/7 customer support. It enhances your Roblox gameplay experience by providing a wide range of features to take your gameplay to new heights.

verbis

Verbis AI is a secure and fully local AI assistant for MacOS that indexes data from various SaaS applications securely on the user's system. It provides a single interface powered by GenAI models to query and manage information. Users can connect Verbis to apps like Google Drive, Outlook, Gmail, and Slack, and use it as a chatbot to search across their data without data leaving their device. The tool is powered by Ollama and Weaviate, utilizing models like Mistral 7B, ms-marco-MiniLM-L-12-v2, and nomic-embed-text. Verbis AI requires Apple Silicon Mac (m1+) and has minimal system resource utilization requirements.

awesome-limitless

A curated list of amazing projects and resources built with the Limitless AI Pendant API. It includes applications, CLI tools, data visualization tools, integrations with plugins and extensions, utilities for server conversion and data ingestion, SDKs and libraries for Go and TypeScript, learning resources, and official API documentation.

llmops-duke-aipi

LLMOps Duke AIPI is a course focused on operationalizing Large Language Models, teaching methodologies for developing applications using software development best practices with large language models. The course covers various topics such as generative AI concepts, setting up development environments, interacting with large language models, using local large language models, applied solutions with LLMs, extensibility using plugins and functions, retrieval augmented generation, introduction to Python web frameworks for APIs, DevOps principles, deploying machine learning APIs, LLM platforms, and final presentations. Students will learn to build, share, and present portfolios using Github, YouTube, and Linkedin, as well as develop non-linear life-long learning skills. Prerequisites include basic Linux and programming skills, with coursework available in Python or Rust. Additional resources and references are provided for further learning and exploration.

crewAI-examples

crewAI-examples is a repository of examples demonstrating how to use the crewAI framework to facilitate collaboration among role-playing AI agents. The examples showcase various ways to automate processes using crewAI. Created by @joaomdmoura.

awesome-agent-failures

Awesome AI Agent Failures is a community-curated repository documenting known failure modes for AI agents, real-world case studies, and techniques to avoid failures. It provides insights into common failure modes such as tool hallucination, response hallucination, goal misinterpretation, plan generation failures, incorrect tool use, verification & termination failures, and prompt injection. The repository also includes resources like research papers, industry resources, books, external resources, and related awesome lists to help AI engineers build more reliable AI agents by learning from production failures.

hyperfy

Hyperfy is a powerful tool for automating social media marketing tasks. It provides a user-friendly interface to schedule posts, analyze performance metrics, and engage with followers across multiple platforms. With Hyperfy, users can save time and effort by streamlining their social media management processes in one centralized platform.

awesome-gpt-security

Awesome GPT + Security is a curated list of security tools, experimental cases, and other interesting projects involving LLMs or GPT. It includes tools for integrated security, auditing, reconnaissance, offensive security, detecting security issues, preventing security breaches, social engineering, reverse engineering, investigating security incidents, fixing security vulnerabilities, assessing security posture, and more. The list also includes experimental cases, academic research, blogs, and fun projects related to GPT security. Additionally, it provides resources on GPT security standards, bypassing security policies, bug bounty programs, cracking GPT APIs, and plugin security.

bedrock-engineer

Bedrock Engineer is an autonomous software development agent application that utilizes Amazon Bedrock. It allows users to customize, create/edit files, execute commands, search the web, use a knowledge base, utilize multi-agents, generate images, and more. The tool provides an interactive chat interface with AI agents, file system operations, web search capabilities, project structure management, code analysis, code generation, data analysis, agent and tool customization, chat history management, and multi-language support. Users can select and customize agents, choose from various tools like file system operations, web search, Amazon Bedrock integration, and system command execution. Additionally, the tool offers features for website generation, connecting to design system data sources, AWS Step Functions ASL definition generation, diagram creation using natural language descriptions, and multi-language support.

agentUniverse

agentUniverse is a framework for developing multi-agent applications based on large language models. It provides the essential components for building a single agent, along with a multi-agent collaboration mechanism for customizing collaboration patterns. Developers can easily construct multi-agent applications and share pattern practices from different fields. The framework includes pre-installed collaboration patterns such as PEER and DOE for complex task breakdown and data-intensive tasks.

For similar tasks

floneum

Floneum is a graph editor that makes it easy to develop your own AI workflows. It uses large language models (LLMs) to run AI models locally, without any external dependencies or even a GPU. This makes it easy to use LLMs with your own data, without worrying about privacy. Floneum also has a plugin system that allows you to improve the performance of LLMs and make them work better for your specific use case. Plugins can be used in any language that supports web assembly, and they can control the output of LLMs with a process similar to JSONformer or guidance.

llm-answer-engine

This repository contains the code and instructions needed to build a sophisticated answer engine that leverages the capabilities of Groq, Mistral AI's Mixtral, Langchain.JS, Brave Search, Serper API, and OpenAI. Designed to efficiently return sources, answers, images, videos, and follow-up questions based on user queries, this project is an ideal starting point for developers interested in natural language processing and search technologies.

discourse-ai

Discourse AI is a plugin for the Discourse forum software that uses artificial intelligence to improve the user experience. It can automatically generate content, moderate posts, and answer questions. This can free up moderators and administrators to focus on other tasks, and it can help to create a more engaging and informative community.

Gemini-API

Gemini-API is a reverse-engineered asynchronous Python wrapper for Google Gemini web app (formerly Bard). It provides features like persistent cookies, ImageFx support, extension support, classified outputs, official flavor, and asynchronous operation. The tool allows users to generate contents from text or images, have conversations across multiple turns, retrieve images in response, generate images with ImageFx, save images to local files, use Gemini extensions, check and switch reply candidates, and control log level.

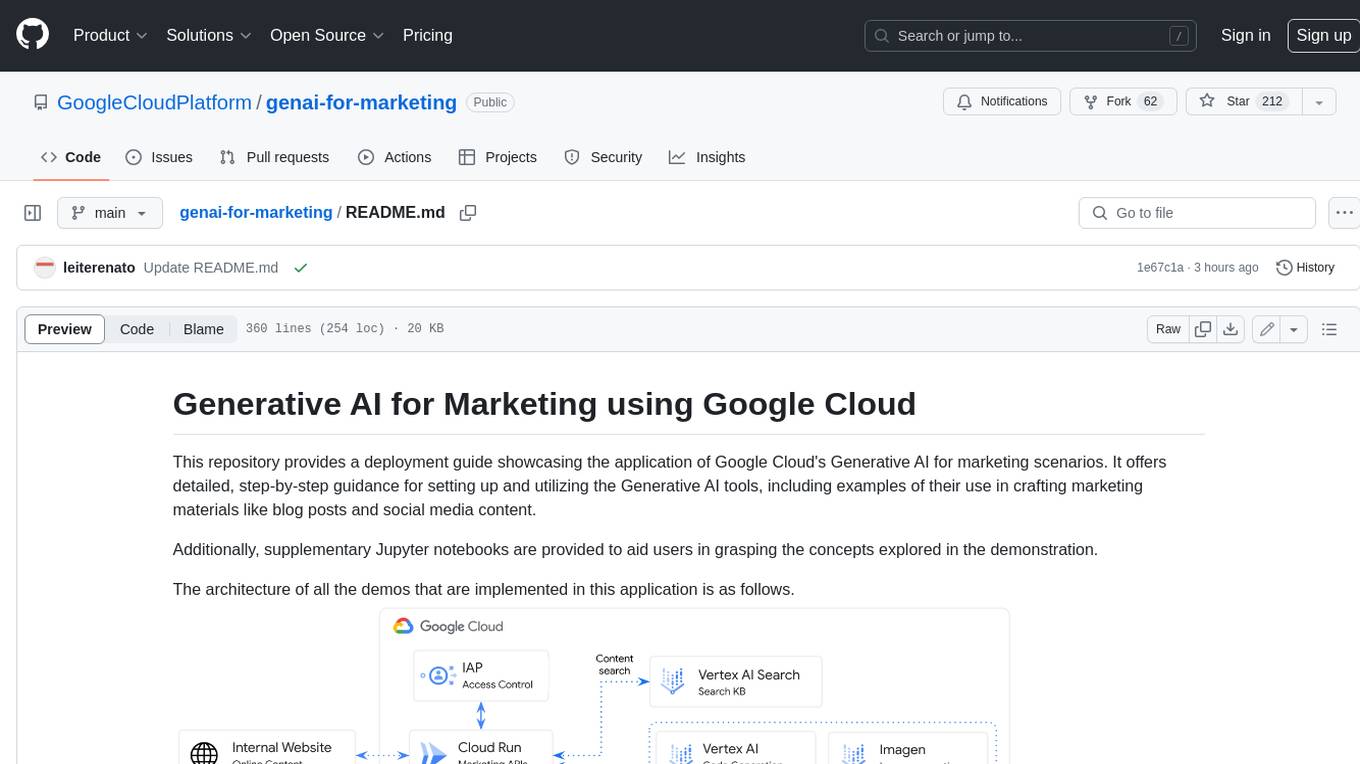

genai-for-marketing

This repository provides a deployment guide for utilizing Google Cloud's Generative AI tools in marketing scenarios. It includes step-by-step instructions, examples of crafting marketing materials, and supplementary Jupyter notebooks. The demos cover marketing insights, audience analysis, trendspotting, content search, content generation, and workspace integration. Users can access and visualize marketing data, analyze trends, improve search experience, and generate compelling content. The repository structure includes backend APIs, frontend code, sample notebooks, templates, and installation scripts.

generative-ai-dart

The Google Generative AI SDK for Dart enables developers to utilize cutting-edge Large Language Models (LLMs) for creating language applications. It provides access to the Gemini API for generating content using state-of-the-art models. Developers can integrate the SDK into their Dart or Flutter applications to leverage powerful AI capabilities. It is recommended to use the SDK for server-side API calls to ensure the security of API keys and protect against potential key exposure in mobile or web apps.

Dough

Dough is a tool for crafting videos with AI, allowing users to guide video generations with precision using images and example videos. Users can create guidance frames, assemble shots, and animate them by defining parameters and selecting guidance videos. The tool aims to help users make beautiful and unique video creations, providing control over the generation process. Setup instructions are available for Linux and Windows platforms, with detailed steps for installation and running the app.

For similar jobs

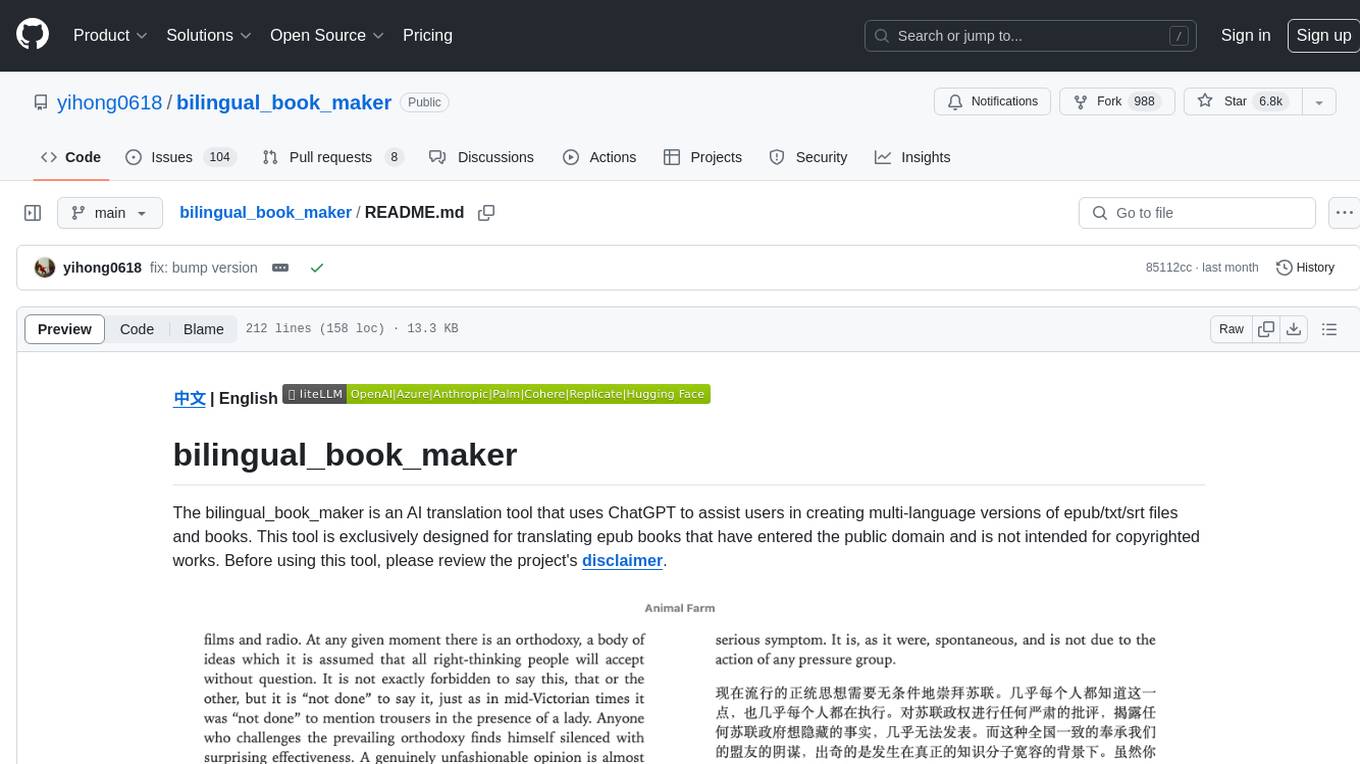

bilingual_book_maker

The bilingual_book_maker is an AI translation tool that uses ChatGPT to assist users in creating multi-language versions of epub/txt/srt files and books. It supports various models like gpt-4, gpt-3.5-turbo, claude-2, palm, llama-2, azure-openai, command-nightly, and gemini. Users need ChatGPT or OpenAI token, epub/txt books, internet access, and Python 3.8+. The tool provides options to specify OpenAI API key, model selection, target language, proxy server, context addition, translation style, and more. It generates bilingual books in epub format after translation. Users can test translations, set batch size, tweak prompts, and use different models like DeepL, Google Gemini, Tencent TranSmart, and more. The tool also supports retranslation, translating specific tags, and e-reader type specification. Docker usage is available for easy setup.

LLMStack

LLMStack is a no-code platform for building generative AI agents, workflows, and chatbots. It allows users to connect their own data, internal tools, and GPT-powered models without any coding experience. LLMStack can be deployed to the cloud or on-premise and can be accessed via HTTP API or triggered from Slack or Discord.

daily-poetry-image

Daily Chinese ancient poetry and AI-generated images powered by Bing DALL-E-3. GitHub Action triggers the process automatically. Poetry is provided by Today's Poem API. The website is built with Astro.

exif-photo-blog

EXIF Photo Blog is a full-stack photo blog application built with Next.js, Vercel, and Postgres. It features built-in authentication, photo upload with EXIF extraction, photo organization by tag, infinite scroll, light/dark mode, automatic OG image generation, a CMD-K menu with photo search, experimental support for AI-generated descriptions, and support for Fujifilm simulations. The application is easy to deploy to Vercel with just a few clicks and can be customized with a variety of environment variables.

SillyTavern

SillyTavern is a user interface you can install on your computer (and Android phones) that allows you to interact with text generation AIs and chat/roleplay with characters you or the community create. SillyTavern is a fork of TavernAI 1.2.8 which is under more active development and has added many major features. At this point, they can be thought of as completely independent programs.

Twitter-Insight-LLM

This project enables you to fetch liked tweets from Twitter (using Selenium), save them to JSON and Excel files, and perform initial data analysis and image captioning. This is part of the initial steps for a larger personal project involving Large Language Models (LLMs).

AISuperDomain

Aila Desktop Application is a powerful tool that integrates multiple leading AI models into a single desktop application. It allows users to interact with various AI models simultaneously, providing diverse responses and insights to their inquiries. With its user-friendly interface and customizable features, Aila empowers users to engage with AI seamlessly and efficiently. Whether you're a researcher, student, or professional, Aila can enhance your AI interactions and streamline your workflow.