Best AI tools for Serverless Deployment

20 - AI tool Sites

Mystic.ai

Mystic.ai is an AI tool designed to deploy and scale Machine Learning models with ease. It offers a fully managed Kubernetes platform that runs in your own cloud, allowing users to deploy ML models in their own Azure/AWS/GCP account or in a shared GPU cluster. Mystic.ai provides cost optimizations, fast inference, simpler developer experience, and performance optimizations to ensure high-performance AI model serving. With features like pay-as-you-go API, cloud integration with AWS/Azure/GCP, and a beautiful dashboard, Mystic.ai simplifies the deployment and management of ML models for data scientists and AI engineers.

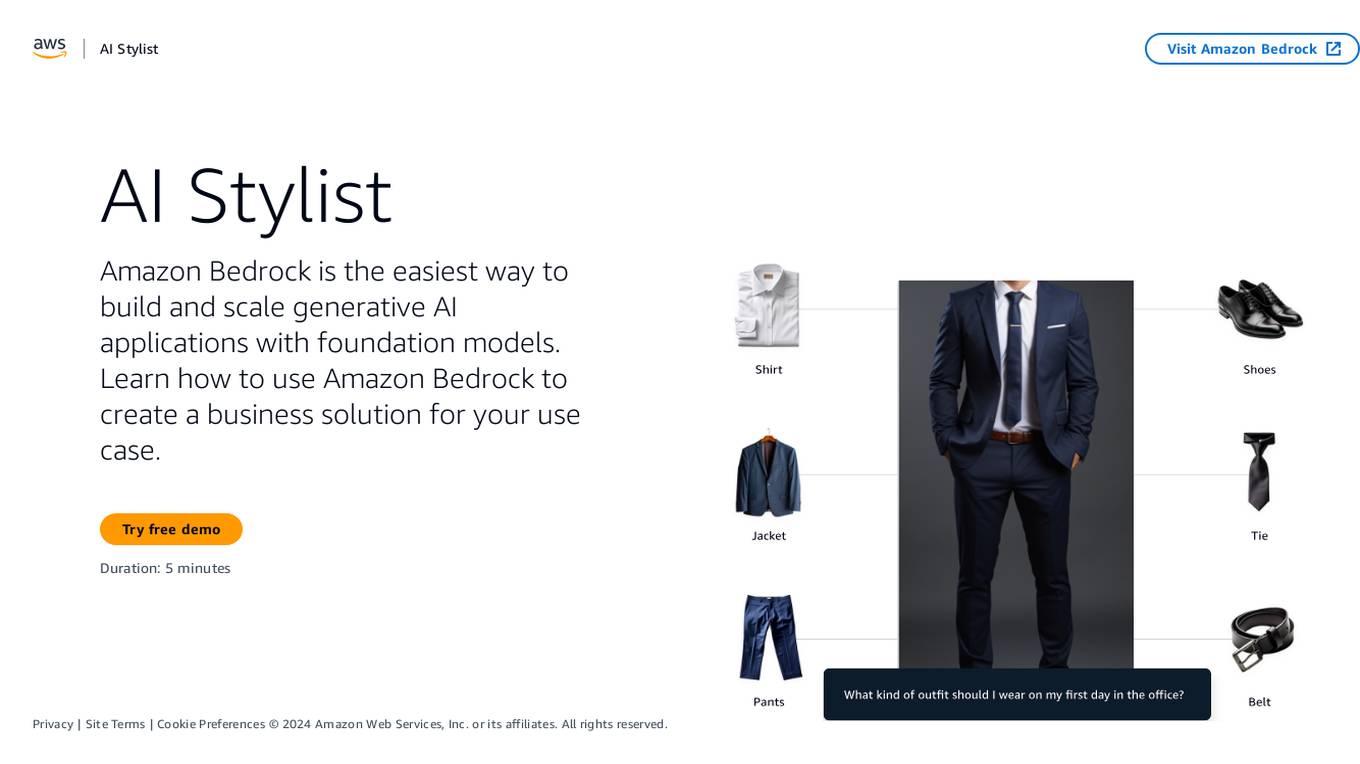

Amazon Bedrock

Amazon Bedrock is a fully managed AWS service that gives developers access to foundation models from Amazon and third-party providers through a single API. Because the service is serverless, AWS takes care of the infrastructure and operations, so developers can focus on building generative AI applications. Bedrock also lets developers customize models with their own data and integrate them into applications using other AWS services.

BackX

BackX is an AI development platform that empowers developers to quickly ship backends across various use cases, environments, and scales. It offers unparalleled accuracy, flexibility, and efficiency by overcoming the limitations of traditional AI-assisted programming. With features like one-click production-grade code, context-aware consistent code output, versioned artifacts, instant deploy, and a suite of AI-powered dev tools, BackX revolutionizes the backend development process. Developers can effortlessly design and manage databases, generate CRUD operations, implement complex business logic, and deploy serverless applications with ease. The platform aims to streamline development processes, increase cost-effectiveness, and provide more accurate outputs than traditional methods.

Volamail

Volamail is an AI-powered email platform that simplifies the email writing process for everyone. It offers AI-assisted editing to help users compose email templates effortlessly. The platform supports importing existing emails in plain HTML format and allows self-hosting for easy deployment. With Volamail, users can send transactional emails via a simple HTTP call without the need for dependencies. The platform is constantly evolving with new features like AI template generation, inline AI editing, and custom domains. Volamail provides simple and scalable pricing options, including a free plan for small projects and affordable custom plans for larger teams.

Xata

Xata is a serverless data platform for PostgreSQL that provides a range of features to make application development faster and easier. These features include schema migrations, file attachments, full-text search, branching, and generative AI. Xata is designed to be the ideal database for application development, with a focus on code simplicity and extensibility. It is also built on open source, so developers can collaborate with the community to drive innovative ideas.

TractoAI

TractoAI is an advanced AI platform that offers deep learning solutions for various industries. It provides Batch Inference with no rate limits, DeepSeek offline inference, and helps in training open source AI models. TractoAI simplifies training infrastructure setup, accelerates workflows with GPUs, and automates deployment and scaling for tasks like ML training and big data processing. The platform supports fine-tuning models, sandboxed code execution, and building custom AI models with distributed training launcher. It is developer-friendly, scalable, and efficient, offering a solution library and expert guidance for AI projects.

Azure Static Web Apps

Azure Static Web Apps is a platform provided by Microsoft Azure for building and deploying modern web applications. It allows developers to easily host static web content and serverless APIs with seamless integration to popular frameworks like React, Angular, and Vue. With Azure Static Web Apps, developers can quickly set up continuous integration and deployment workflows, enabling them to focus on building great user experiences without worrying about infrastructure management.

Cerebrium

Cerebrium is a serverless AI infrastructure platform that allows teams to build, test, and deploy AI applications quickly and efficiently. With a focus on speed, performance, and cost optimization, Cerebrium offers a range of features and tools to simplify the development and deployment of AI projects. The platform ensures high reliability, security, and compliance while providing real-time logging, cost tracking, and observability tools. Cerebrium also offers a variety of GPU types and effortless autoscaling to meet the diverse needs of developers and businesses.

AIMLAPI.com

AIMLAPI.com is an AI tool that provides access to over 200 AI models through a single API. It offers a wide range of AI features for tasks such as chat, code, image generation, music generation, video, voice, embedding, language, genomic models, and 3D generation. The platform advertises fast inference, top-tier serverless infrastructure, high data security, 99% uptime, and 24/7 support. Users can integrate AI features easily into their products and test API models in a sandbox environment before deployment.

Koxy AI

Koxy AI is an AI-powered serverless back-end platform that allows users to build globally distributed, fast, secure, and scalable back-ends with no code required. It offers features such as live logs, smart error handling, integration with over 80,000 AI models, and more. Koxy AI is designed to help users focus on building the best service possible without wasting time on security and latency concerns. It provides a JSON-based NoSQL database, real-time data synchronization, cloud functions, and a drag-and-drop builder for API flows.

SvectorDB

SvectorDB is a vector database built from the ground up for serverless applications. It is designed to be highly scalable, performant, and easy to use. SvectorDB can be used for a variety of applications, including recommendation engines, document search, and image search.

Empower

Empower is a serverless hosting and developer platform for fine-tuned LLMs. It provides prebuilt task-specific base models with GPT-4-level response quality, and claims users can save up to 80% on LLM bills by changing just 5 lines of code. Empower allows users to own their models, offers cost-effective serving with no compromise on performance, and charges on a per-token basis. The platform is designed to be user-friendly and efficient for deploying and serving fine-tuned LLMs.

EnergeticAI

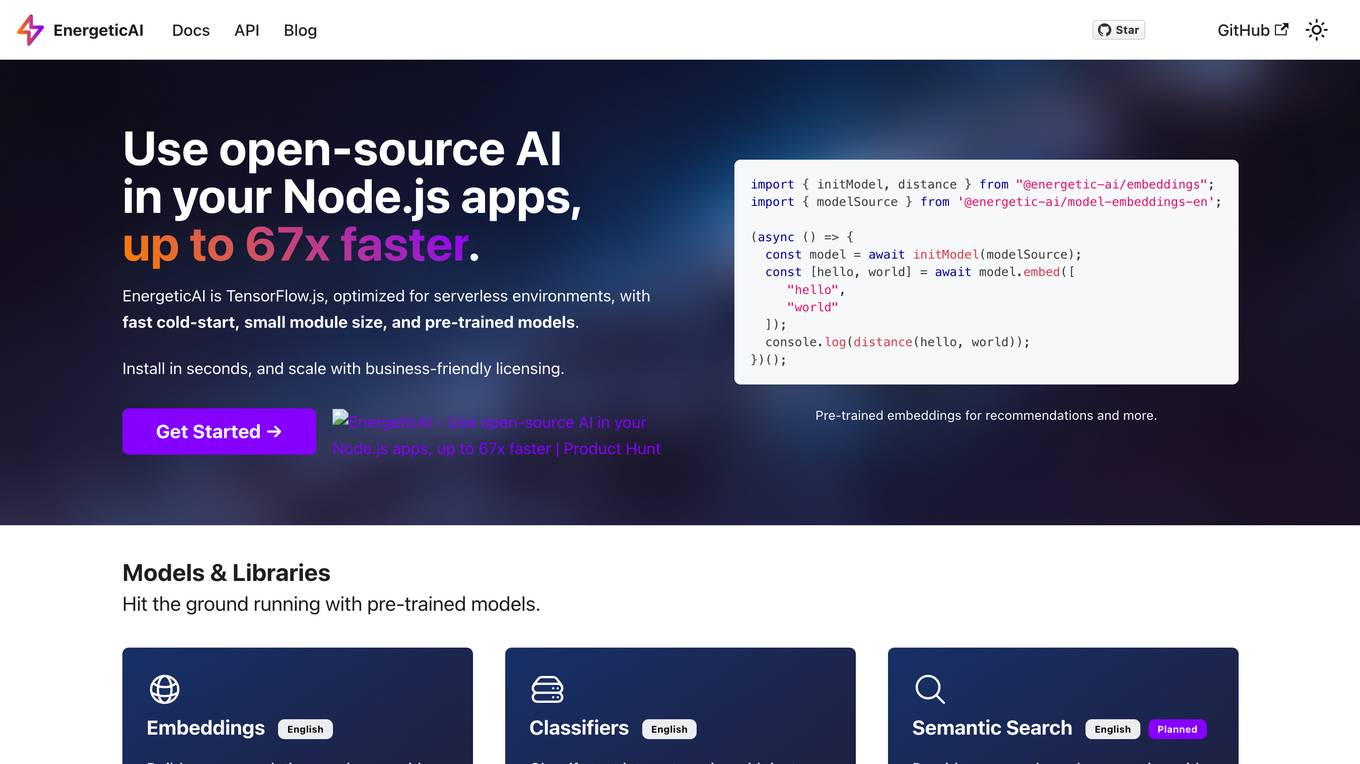

EnergeticAI is an open-source AI library that can be used in Node.js applications. It is optimized for serverless environments and provides fast cold-start, small module size, and pre-trained models. EnergeticAI can be used for a variety of tasks, including building recommendations, classifying text, and performing semantic search.

Modal

Modal is a high-performance cloud platform designed for developers on AI, data, and ML teams. It offers a serverless environment for running generative AI models, large-scale batch jobs, job queues, and more. With Modal, users can bring their own code and leverage the platform's optimized container file system for fast cold boots and seamless autoscaling. The platform is engineered for large-scale workloads, allowing users to scale to hundreds of GPUs, pay only for what they use, and deploy functions to the cloud in seconds without the need for YAML or Dockerfiles. Modal also provides features for job scheduling, web endpoints, observability, and security compliance.

Fleak AI Workflows

Fleak AI Workflows is a low-code serverless API builder designed for data teams to integrate, consolidate, and scale their data workflows. It simplifies the process of creating, connecting, and deploying workflows in minutes, offering intuitive tools to handle data transformations and integrate AI models. Fleak enables users to publish, manage, and monitor APIs without having to provision or manage infrastructure. It supports various data types like JSON, SQL, CSV, and plain text, and allows integration with large language models, databases, and modern storage technologies.

Novita AI

Novita AI is an AI cloud platform that offers Model APIs, Serverless, and GPU Instance solutions integrated into one cost-effective platform. It provides tools for building AI products, scaling with serverless architecture, and deploying with GPU instances. Novita AI caters to startups and businesses looking to leverage AI technologies without the need for extensive machine learning expertise. The platform also offers a Startup Program, 24/7 service support, and has received positive feedback for its reasonable pricing and stable API services.

Novita AI

Novita AI is an AI cloud platform offering Model APIs, Serverless, and GPU Instance services in a cost-effective and integrated manner to accelerate AI businesses. It provides optimized models for high-quality dialogue use cases, full spectrum AI APIs for image, video, audio, and LLM applications, serverless auto-scaling based on demand, and customizable GPU solutions for complex AI tasks. The platform also includes a Startup Program, 24/7 service support, and has received positive feedback for its reasonable pricing and stable services.

Pinecone

Pinecone is a vector database designed to build knowledgeable AI applications. It offers a serverless platform with high capacity and low cost, enabling users to perform low-latency vector search for various AI tasks. Pinecone is easy to start and scale, allowing users to create an account, upload vector embeddings, and retrieve relevant data quickly. The platform combines vector search with metadata filters and keyword boosting for better application performance. Pinecone is secure, reliable, and cloud-native, making it suitable for powering mission-critical AI applications.
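To make the idea of combining vector search with metadata filters more concrete, here is a minimal, library-free sketch in Python. It does not use the Pinecone client or reflect how Pinecone is implemented; the record layout (`id`, `values`, `metadata`) and the `search` helper are assumptions chosen purely for illustration, and a real vector database would use an approximate index rather than a linear scan.

```python
import math


def cosine_similarity(a, b):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        return 0.0  # treat zero vectors as dissimilar to everything
    return dot / (norm_a * norm_b)


def search(index, query, top_k=2, metadata_filter=None):
    """Brute-force top-k search restricted to records matching the filter.

    `index` is a list of dicts with hypothetical keys: id, values, metadata.
    """
    metadata_filter = metadata_filter or {}
    # Step 1: keep only records whose metadata matches every filter key.
    candidates = [
        item for item in index
        if all(item["metadata"].get(k) == v for k, v in metadata_filter.items())
    ]
    # Step 2: score the remaining records against the query vector.
    scored = [
        (cosine_similarity(item["values"], query), item["id"])
        for item in candidates
    ]
    # Step 3: return the ids of the highest-scoring records.
    scored.sort(key=lambda pair: pair[0], reverse=True)
    return [(item_id, round(score, 3)) for score, item_id in scored[:top_k]]


# Toy two-dimensional "embeddings"; real embeddings have hundreds of dimensions.
index = [
    {"id": "doc-1", "values": [1.0, 0.0], "metadata": {"lang": "en"}},
    {"id": "doc-2", "values": [0.0, 1.0], "metadata": {"lang": "en"}},
    {"id": "doc-3", "values": [1.0, 0.1], "metadata": {"lang": "de"}},
]

filtered = search(index, [1.0, 0.0], top_k=2, metadata_filter={"lang": "en"})
unfiltered = search(index, [1.0, 0.0], top_k=2)
```

With the filter applied, `doc-3` is excluded even though it is close to the query vector; without the filter it ranks second, behind `doc-1`.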

Together AI

Together AI is an AI tool that offers a variety of generative AI services, including serverless models, fine-tuning capabilities, dedicated endpoints, and GPU clusters. Users can run or fine-tune leading open source models with only a few lines of code. The platform provides a range of functionalities for tasks such as chat, vision, text-to-speech, code/language reranking, and more. Together AI aims to simplify the process of utilizing AI models for various applications.

Pinecone

Pinecone is a vector database designed to help power AI applications for various companies. It offers a serverless platform that enables users to build knowledgeable AI applications quickly and cost-effectively. With Pinecone, users can perform low-latency vector searches for tasks such as search, recommendation, detection, and more. The platform is scalable, secure, and cloud-native, making it suitable for a wide range of AI projects.

1 - Open Source AI Tools

functionary

Functionary is a language model that interprets and executes functions/plugins. It determines when to execute functions, whether in parallel or serially, and understands their outputs. Function definitions are given as JSON Schema Objects, similar to OpenAI GPT function calls. It offers documentation and examples on functionary.meetkai.com. The newest model, meetkai/functionary-medium-v3.1, is ranked 2nd in the Berkeley Function-Calling Leaderboard. Functionary supports models with different context lengths and capabilities for function calling and code interpretation. It also provides grammar sampling for accurate function and parameter names. Users can deploy Functionary models serverlessly using Modal.com.
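As a rough illustration of what a function definition given as a JSON Schema object looks like, the Python sketch below defines one tool and dispatches a model-style call to it. The `get_weather` function, its parameters, the `REGISTRY` mapping, and the `dispatch` helper are all hypothetical and are not taken from the Functionary documentation; the call shape (a function name plus a JSON-encoded arguments string) follows the OpenAI-style convention the description refers to.

```python
import json

# A function definition expressed as a JSON Schema object (hypothetical tool).
get_weather_tool = {
    "name": "get_weather",
    "description": "Get the current weather for a city",
    "parameters": {
        "type": "object",
        "properties": {
            "city": {"type": "string", "description": "City name"},
            "unit": {"type": "string", "enum": ["celsius", "fahrenheit"]},
        },
        "required": ["city"],
    },
}


def get_weather(city, unit="celsius"):
    """Stub implementation; a real one would query a weather service."""
    return {"city": city, "unit": unit, "temperature": None}


# Maps the names the model may emit to the Python callables that back them.
REGISTRY = {"get_weather": get_weather}


def dispatch(tool, call):
    """Check required arguments against the schema, then run the function."""
    args = json.loads(call["arguments"])  # arguments arrive as a JSON string
    missing = [k for k in tool["parameters"]["required"] if k not in args]
    if missing:
        raise ValueError(f"missing required arguments: {missing}")
    return REGISTRY[call["name"]](**args)


# Example of a call a function-calling model might emit for this tool.
model_call = {"name": "get_weather", "arguments": '{"city": "Hanoi"}'}
result = dispatch(get_weather_tool, model_call)
```

The schema is what the model sees when deciding whether and how to call a function; the registry and dispatcher are application-side plumbing that the application author supplies.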

4 - OpenAI GPTs

Serverless Architect Pro

Helping software engineers to architect domain-driven serverless systems on AWS