Best AI tools for< Handle Web Requests >

20 - AI tool Sites

403 Forbidden

The website appears to be displaying a '403 Forbidden' error message, which typically indicates that the user is not authorized to access the requested resource. This error is often encountered when trying to access a webpage without the necessary permissions or when the server is configured to deny access to a particular URL. The 'openresty' mentioned in the text is likely the web server software being used to handle the request.

403 Forbidden OpenResty

The website displays a '403 Forbidden' error message, which indicates that the server understood the request but refuses to authorize it. This error is often encountered when trying to access a webpage without the necessary permissions. The message 'openresty' suggests that the server may be using the OpenResty web platform. OpenResty is a web platform based on NGINX and LuaJIT, known for its high performance and scalability in handling web traffic.

OpenResty

The website is currently displaying a '403 Forbidden' error, which indicates that the server understood the request but refuses to authorize it. This error is often encountered when trying to access a webpage without the necessary permissions. The 'openresty' mentioned in the text is likely the software running on the server. It is a web platform based on NGINX and LuaJIT, known for its high performance and scalability in handling web traffic. The website may be using OpenResty to manage its server configurations and handle incoming requests.

403 Forbidden Error Handler

The website seems to be experiencing a 403 Forbidden error, which means the server is refusing to respond to the request. This error is often caused by incorrect permissions or misconfiguration on the server side. The message 'openresty' suggests that the server may be using the OpenResty web platform. Users encountering this error may need to contact the website administrator for assistance in resolving the issue.

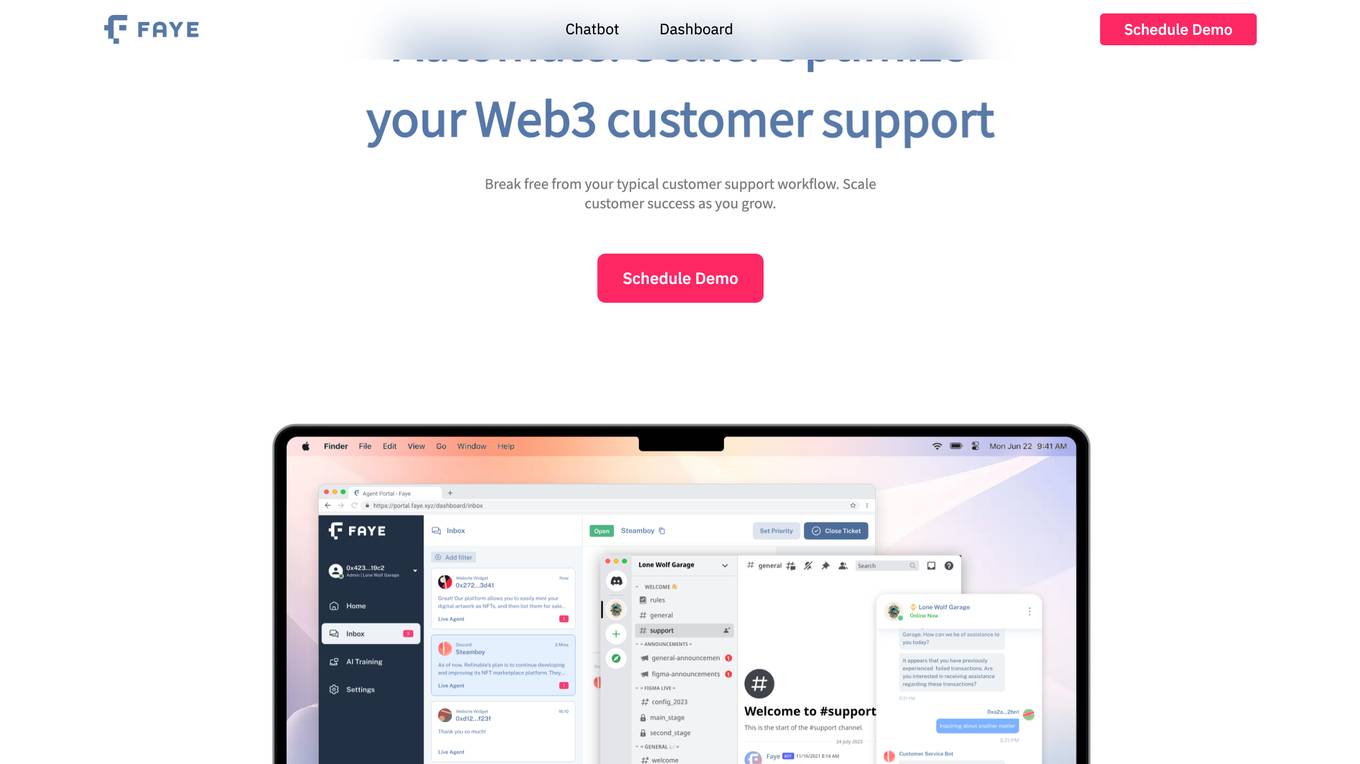

Voyp

Voyp is a voice-powered AI call assistant application that helps users make appointments and reservations easily by simply speaking or typing their requests. It is designed to provide convenience and independence, especially for individuals with speech impairments or social anxiety. Voyp offers features such as international calls, multiple languages, call recording, and calendar integration. Users can interact with Voyp through various platforms like Web, Android, iPhone, and ChatGPT, making it accessible and user-friendly. The application is powered by Artificial Intelligence, enabling it to handle tasks like booking hotel rooms, scheduling appointments, and making restaurant reservations efficiently.

OpenResty

The website is currently displaying a '403 Forbidden' error, which means that access to the requested resource is forbidden. This error is typically caused by insufficient permissions or misconfiguration on the server side. The message 'openresty' suggests that the server is using the OpenResty web platform. OpenResty is a dynamic web platform based on NGINX and Lua that is commonly used for building high-performance web applications. It provides a powerful and flexible environment for developing and deploying web services.

N/A

The website is currently displaying a '403 Forbidden' error message, which indicates that the server understood the request but refuses to authorize it. This error is often caused by insufficient permissions or misconfiguration on the server side. The 'openresty' mentioned in the message refers to a web platform based on NGINX and LuaJIT, commonly used for building high-performance web applications. It seems that the website is currently inaccessible due to server-side issues.

OpenResty

The website is currently displaying a '403 Forbidden' error message, which indicates that the server understood the request but refuses to authorize it. This error is typically caused by insufficient permissions or misconfiguration on the server side. The 'openresty' mentioned in the message refers to a web platform based on NGINX and LuaJIT, often used for building high-performance web applications. It seems that the website is currently inaccessible due to server-side issues.

OpenResty

The website is currently displaying a '403 Forbidden' error, which indicates that the server understood the request but refuses to authorize it. This error message is often displayed when the user is trying to access a webpage or resource that they are not permitted to view. The 'openresty' mentioned in the error message is a web platform based on NGINX and LuaJIT, known for its high performance and scalability in handling web traffic. It is commonly used for building dynamic web applications and APIs.

OpenResty

The website is currently displaying a '403 Forbidden' error message, which indicates that the server is refusing to respond to the request. This error is often caused by insufficient permissions or misconfiguration on the server side. The 'openresty' mentioned in the message is a web platform based on Nginx and Lua that can be used to build high-performance web applications. It is commonly used for content delivery networks, API gateways, and other web services.

404 Error Page

The website is a simple error page indicating that the requested page is not found. It is a standard 404 error message that informs users that the page they are trying to access does not exist or has been relocated. The page is typically displayed when a user enters a URL that is incorrect or when a page has been removed from the website.

Netlify Placeholder Page

The website appears to be a placeholder page indicating that the requested site is not found on Netlify. It suggests that the user may have followed a broken link or entered a non-existent URL. If the user is the site owner and encounters a 404 error, they are directed to Netlify's support guide for troubleshooting tips. The page includes a Netlify Internal ID: 01J2YC4TMAA8RS4SFDTJREBRD0.

404 Error Page

The website is a simple error page indicating that the requested page is not found. It displays a standard 404 error message informing the user that the page they are looking for does not exist or has been moved. This page is commonly encountered when a user tries to access a URL that is no longer valid or has been removed.

404 Error Page

The website displays a 404 Page not found error message indicating that the requested URL was not found on the server. It seems to be a generic error page that informs users when they try to access a page that does not exist on the website.

404 Error Page

The website displays a '404 - Page not found' error message indicating that the requested page does not exist or has been moved. It seems to be a generic error page that users encounter when the URL they are trying to access is invalid or the content has been removed.

Server Error Handler

The website encountered a server error and could not complete the request. Users are advised to try again in 30 seconds. The error message indicates a temporary issue with the server's functionality.

404 Error Page

The website is a simple error page indicating that the requested page is not found. It is a standard HTTP response code that informs users that the server could not find the requested page. The message '404 - Page not found' is displayed to inform users that the page they are trying to access does not exist or has been moved.

404 Error Page

The website displays a '404 - Page not found' error message, indicating that the requested page does not exist or has been moved. It seems to be a generic error page that users encounter when they try to access a non-existent or relocated webpage on the site.

404 Error Page

The website displays a '404 - Page not found' error message, indicating that the requested page does not exist or has been moved. It seems to be a generic error page that users encounter when trying to access a non-existent or relocated webpage.

404 Error Page

The website displays a '404 - Page not found' error message, indicating that the requested page does not exist or has been relocated. It is a common message displayed by websites when a user attempts to access a page that is unavailable.

20 - Open Source AI Tools

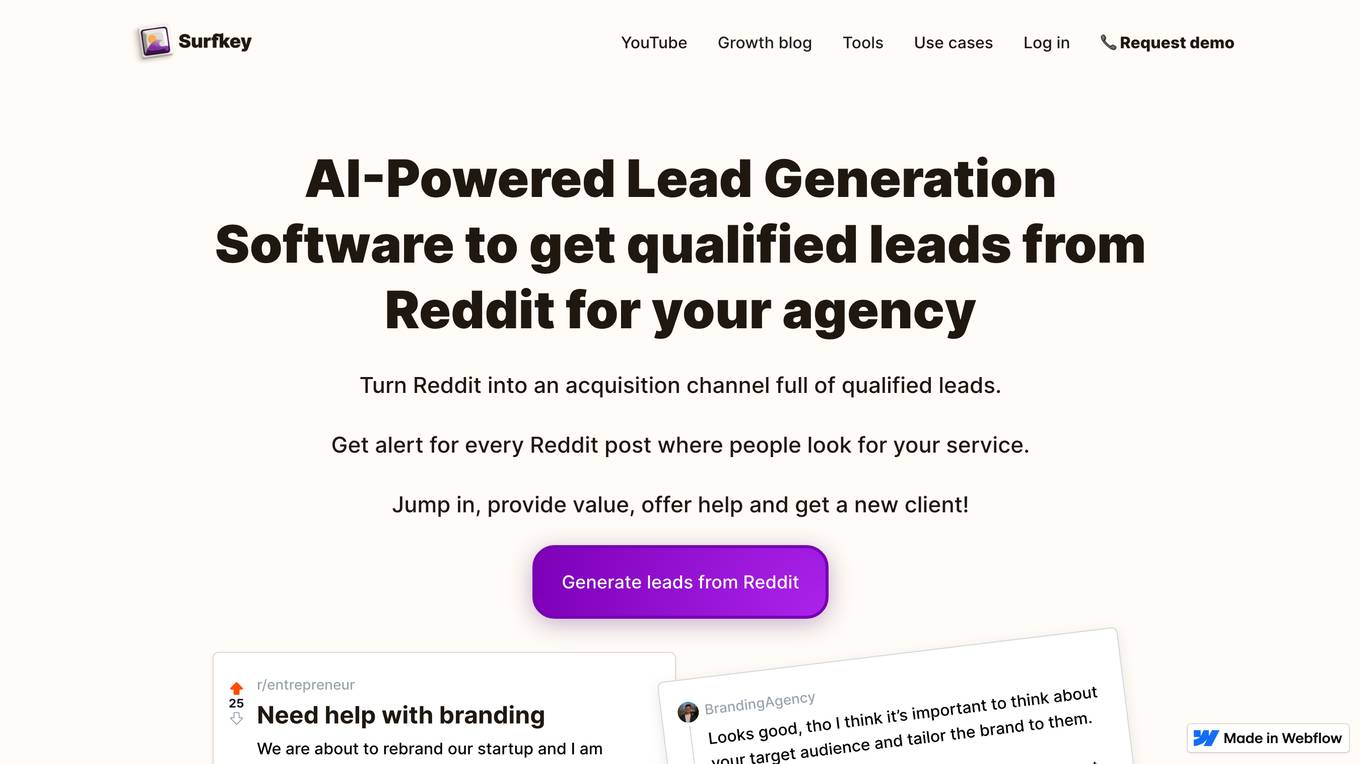

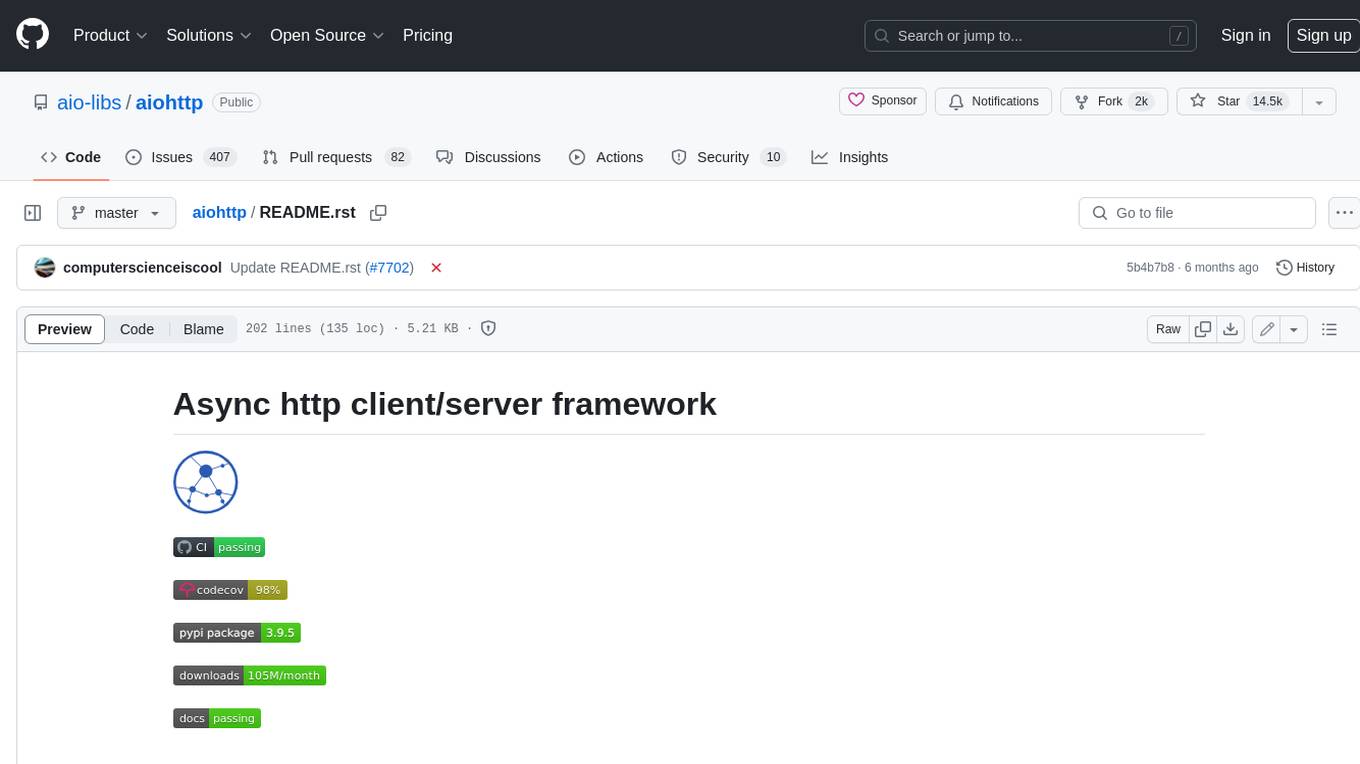

aiohttp

aiohttp is an async http client/server framework that supports both client and server side of HTTP protocol. It also supports both client and server Web-Sockets out-of-the-box and avoids Callback Hell. aiohttp provides a Web-server with middleware and pluggable routing.

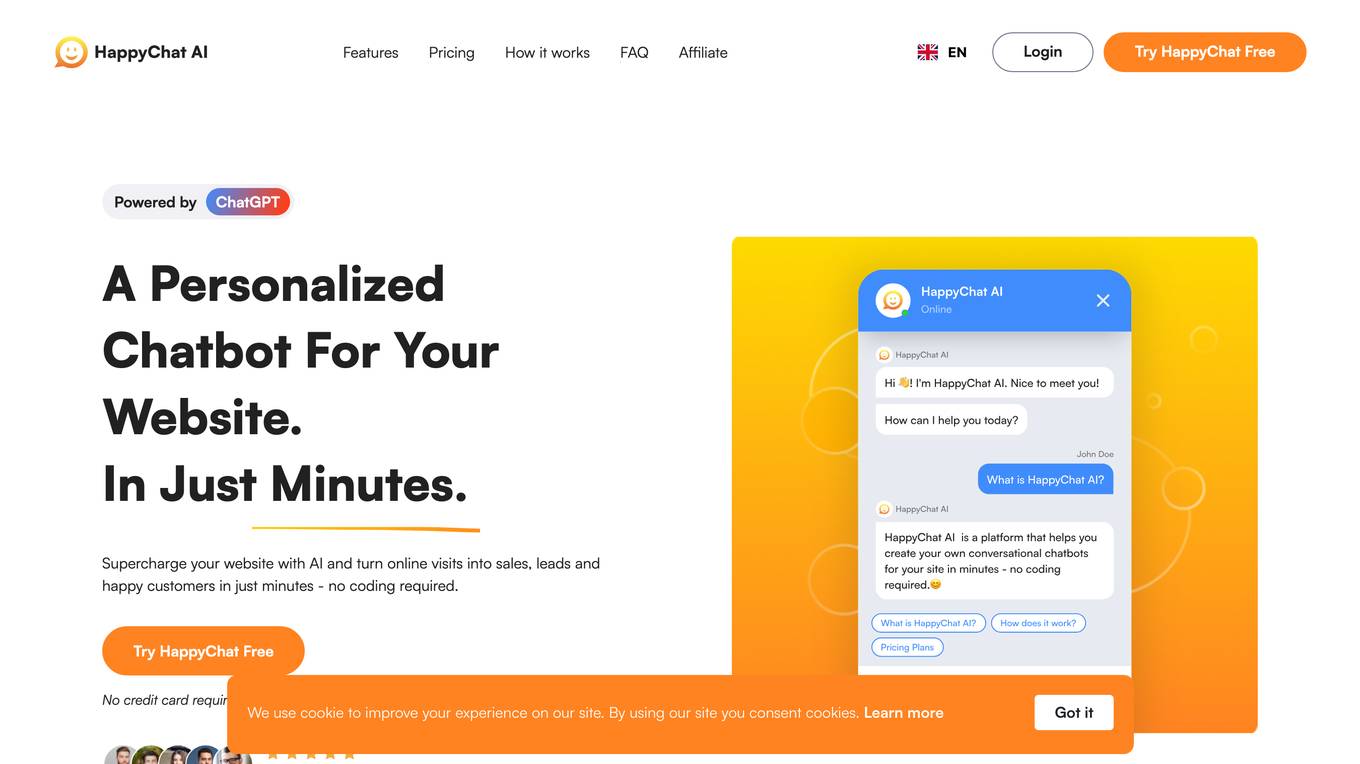

HTFramework

HTFramework is a rapid development framework based on Unity, integrating modular requirements, code reusability, practicality, high cohesion, unified coding standards, extensibility, maintainability, generality, and pluggability. It provides continuous maintenance and upgrades. The framework includes modules for aspect-oriented program code tracking, audio management, controller simplification, coroutine scheduling, custom modules, custom datasets, debugging, entity-component-system, entity management, event handling, exception handling, finite state machines, hotfixing, input management, instruction system, main module access, network client, object pooling, procedures, reference pooling, resource loading, step editing, task editing, UI management, utility tools, web requests, and optional AI, ILRuntime-based hotfixing, XLua integration, and game component modules.

mosec

Mosec is a high-performance and flexible model serving framework for building ML model-enabled backend and microservices. It bridges the gap between any machine learning models you just trained and the efficient online service API. * **Highly performant** : web layer and task coordination built with Rust 🦀, which offers blazing speed in addition to efficient CPU utilization powered by async I/O * **Ease of use** : user interface purely in Python 🐍, by which users can serve their models in an ML framework-agnostic manner using the same code as they do for offline testing * **Dynamic batching** : aggregate requests from different users for batched inference and distribute results back * **Pipelined stages** : spawn multiple processes for pipelined stages to handle CPU/GPU/IO mixed workloads * **Cloud friendly** : designed to run in the cloud, with the model warmup, graceful shutdown, and Prometheus monitoring metrics, easily managed by Kubernetes or any container orchestration systems * **Do one thing well** : focus on the online serving part, users can pay attention to the model optimization and business logic

web-llm

WebLLM is a modular and customizable javascript package that directly brings language model chats directly onto web browsers with hardware acceleration. Everything runs inside the browser with no server support and is accelerated with WebGPU. WebLLM is fully compatible with OpenAI API. That is, you can use the same OpenAI API on any open source models locally, with functionalities including json-mode, function-calling, streaming, etc. We can bring a lot of fun opportunities to build AI assistants for everyone and enable privacy while enjoying GPU acceleration.

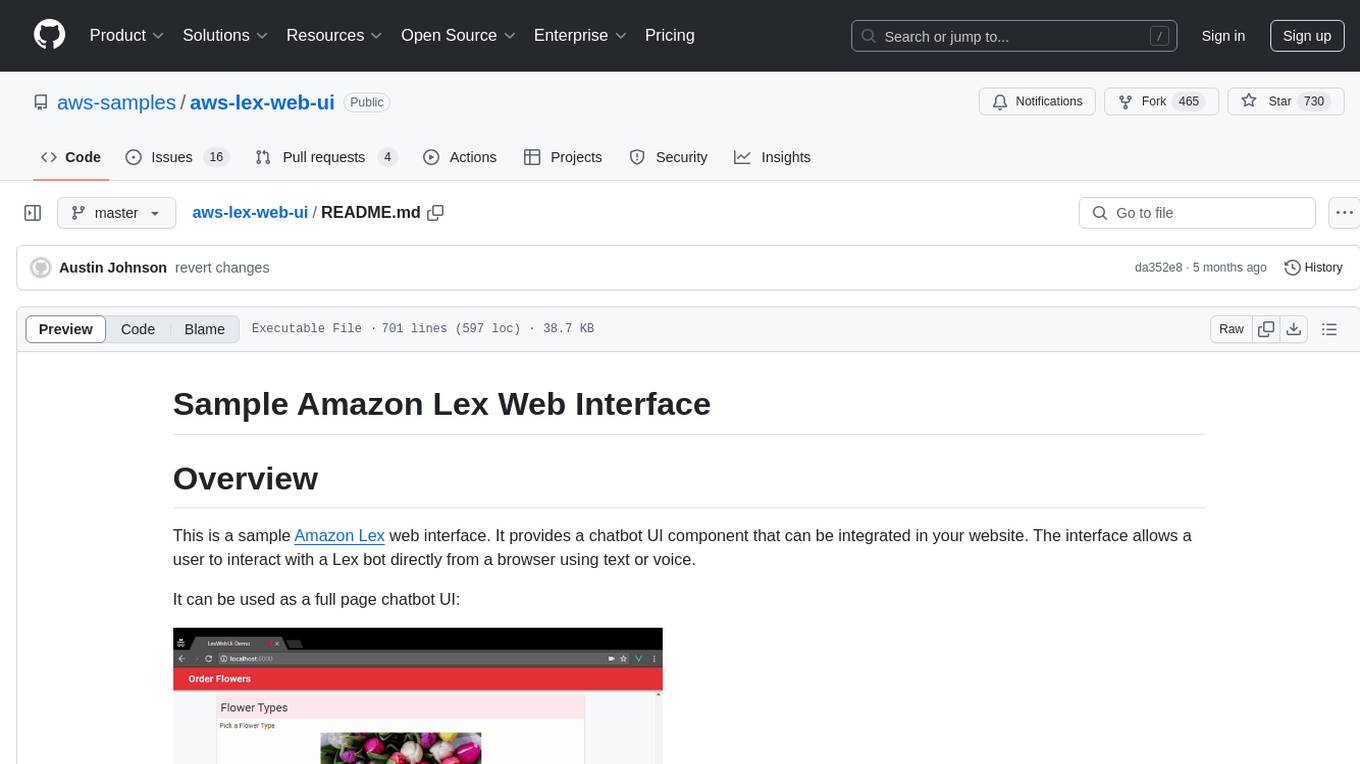

aws-lex-web-ui

The AWS Lex Web UI is a sample Amazon Lex web interface that provides a chatbot UI component for integration into websites. It supports voice and text interactions, Lex response cards, and programmable configuration using JavaScript. The interface can be used as a full-page chatbot UI or embedded as a widget. It offers mobile-ready responsive UI, seamless voice-text switching, and interactive messaging support. The project includes CloudFormation templates for easy deployment and customization. Users can modify configurations, integrate the UI into existing sites, and deploy using various methods like CloudFormation, pre-built libraries, or npm installation.

sd-civitai-browser-plus

sd-civitai-browser-plus is an extension designed for Automatic1111's Stable Difussion Web UI, providing features to browse models from CivitAI, check for updates, download specific model versions hassle-free, assign tags to models, access model info quickly, and download models with high-speed using Aria2. The extension offers a sleek and intuitive user interface, actively maintained with feature requests welcome. It also addresses known issues like frozen downloads with possible solutions. The tool is actively developed with regular updates and bug fixes, ensuring a smooth user experience.

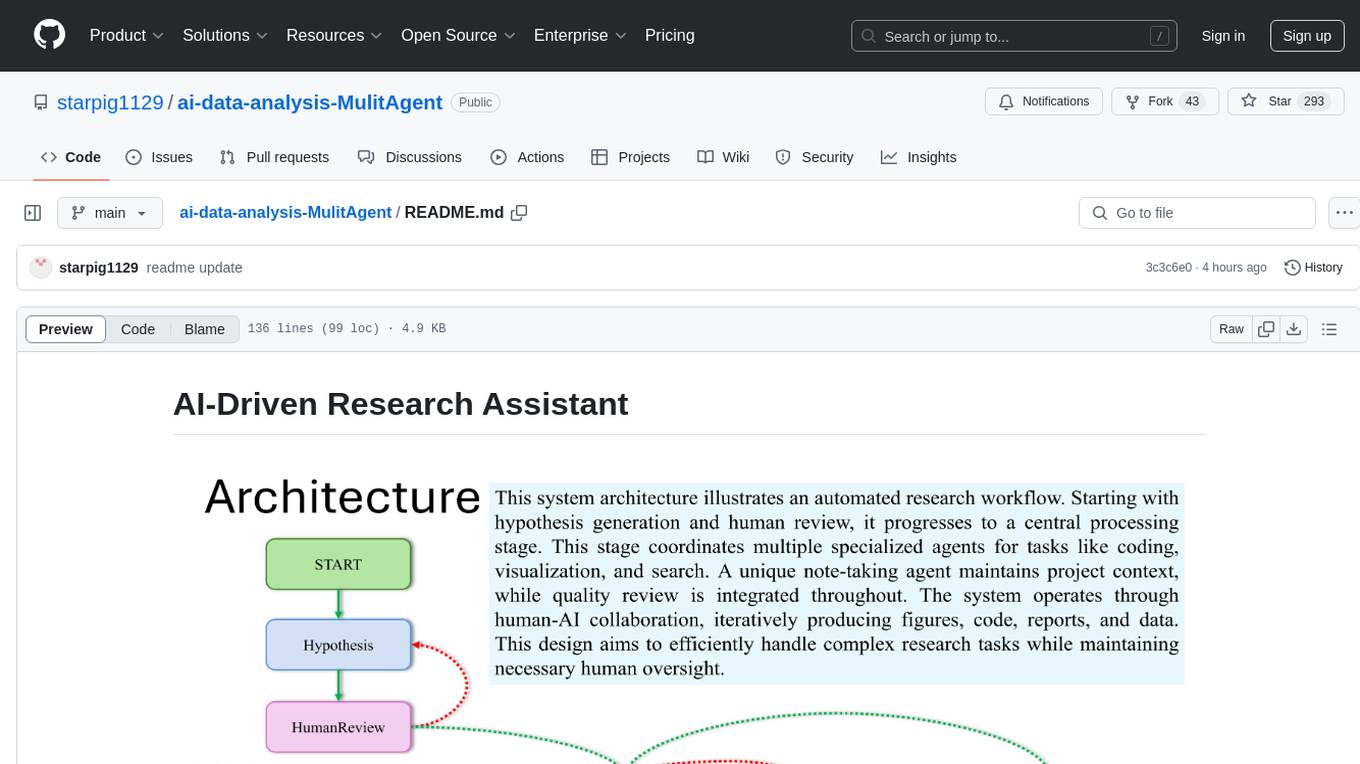

ai-data-analysis-MulitAgent

AI-Driven Research Assistant is an advanced AI-powered system utilizing specialized agents for data analysis, visualization, and report generation. It integrates LangChain, OpenAI's GPT models, and LangGraph for complex research processes. Key features include hypothesis generation, data processing, web search, code generation, and report writing. The system's unique Note Taker agent maintains project state, reducing overhead and improving context retention. System requirements include Python 3.10+ and Jupyter Notebook environment. Installation involves cloning the repository, setting up a Conda virtual environment, installing dependencies, and configuring environment variables. Usage instructions include setting data, running Jupyter Notebook, customizing research tasks, and viewing results. Main components include agents for hypothesis generation, process supervision, visualization, code writing, search, report writing, quality review, and note-taking. Workflow involves hypothesis generation, processing, quality review, and revision. Customization is possible by modifying agent creation and workflow definition. Current issues include OpenAI errors, NoteTaker efficiency, runtime optimization, and refiner improvement. Contributions via pull requests are welcome under the MIT License.

aino

Aino is an experimental HTTP framework for Elixir that uses elli instead of Cowboy like Phoenix and Plug. It focuses on writing handlers to process requests through middleware functions. Aino works on a token instead of a conn, allowing flexibility in adding custom keys. It includes built-in middleware for common tasks and a routing layer for defining routes. Handlers in Aino must return a token with specific keys for response rendering.

algernon

Algernon is a web server with built-in support for QUIC, HTTP/2, Lua, Teal, Markdown, Pongo2, HyperApp, Amber, Sass(SCSS), GCSS, JSX, Ollama (LLMs), BoltDB, Redis, PostgreSQL, MariaDB/MySQL, MSSQL, rate limiting, graceful shutdown, plugins, users, and permissions. It is a small self-contained executable that supports various technologies and features for web development.

call-center-ai

Call Center AI is an AI-powered call center solution that leverages Azure and OpenAI GPT. It is a proof of concept demonstrating the integration of Azure Communication Services, Azure Cognitive Services, and Azure OpenAI to build an automated call center solution. The project showcases features like accessing claims on a public website, customer conversation history, language change during conversation, bot interaction via phone number, multiple voice tones, lexicon understanding, todo list creation, customizable prompts, content filtering, GPT-4 Turbo for customer requests, specific data schema for claims, documentation database access, SMS report sending, conversation resumption, and more. The system architecture includes components like RAG AI Search, SMS gateway, call gateway, moderation, Cosmos DB, event broker, GPT-4 Turbo, Redis cache, translation service, and more. The tool can be deployed remotely using GitHub Actions and locally with prerequisites like Azure environment setup, configuration file creation, and resource hosting. Advanced usage includes custom training data with AI Search, prompt customization, language customization, moderation level customization, claim data schema customization, OpenAI compatible model usage for the LLM, and Twilio integration for SMS.

crawl4ai

Crawl4AI is a powerful and free web crawling service that extracts valuable data from websites and provides LLM-friendly output formats. It supports crawling multiple URLs simultaneously, replaces media tags with ALT, and is completely free to use and open-source. Users can integrate Crawl4AI into Python projects as a library or run it as a standalone local server. The tool allows users to crawl and extract data from specified URLs using different providers and models, with options to include raw HTML content, force fresh crawls, and extract meaningful text blocks. Configuration settings can be adjusted in the `crawler/config.py` file to customize providers, API keys, chunk processing, and word thresholds. Contributions to Crawl4AI are welcome from the open-source community to enhance its value for AI enthusiasts and developers.

resonance

Resonance is a framework designed to facilitate interoperability and messaging between services in your infrastructure and beyond. It provides AI capabilities and takes full advantage of asynchronous PHP, built on top of Swoole. With Resonance, you can: * Chat with Open-Source LLMs: Create prompt controllers to directly answer user's prompts. LLM takes care of determining user's intention, so you can focus on taking appropriate action. * Asynchronous Where it Matters: Respond asynchronously to incoming RPC or WebSocket messages (or both combined) with little overhead. You can set up all the asynchronous features using attributes. No elaborate configuration is needed. * Simple Things Remain Simple: Writing HTTP controllers is similar to how it's done in the synchronous code. Controllers have new exciting features that take advantage of the asynchronous environment. * Consistency is Key: You can keep the same approach to writing software no matter the size of your project. There are no growing central configuration files or service dependencies registries. Every relation between code modules is local to those modules. * Promises in PHP: Resonance provides a partial implementation of Promise/A+ spec to handle various asynchronous tasks. * GraphQL Out of the Box: You can build elaborate GraphQL schemas by using just the PHP attributes. Resonance takes care of reusing SQL queries and optimizing the resources' usage. All fields can be resolved asynchronously.

crawlee-python

Crawlee-python is a web scraping and browser automation library that covers crawling and scraping end-to-end, helping users build reliable scrapers fast. It allows users to crawl the web for links, scrape data, and store it in machine-readable formats without worrying about technical details. With rich configuration options, users can customize almost any aspect of Crawlee to suit their project's needs.

mentals-ai

Mentals AI is a tool designed for creating and operating agents that feature loops, memory, and various tools, all through straightforward markdown syntax. This tool enables you to concentrate solely on the agent’s logic, eliminating the necessity to compose underlying code in Python or any other language. It redefines the foundational frameworks for future AI applications by allowing the creation of agents with recursive decision-making processes, integration of reasoning frameworks, and control flow expressed in natural language. Key concepts include instructions with prompts and references, working memory for context, short-term memory for storing intermediate results, and control flow from strings to algorithms. The tool provides a set of native tools for message output, user input, file handling, Python interpreter, Bash commands, and short-term memory. The roadmap includes features like a web UI, vector database tools, agent's experience, and tools for image generation and browsing. The idea behind Mentals AI originated from studies on psychoanalysis executive functions and aims to integrate 'System 1' (cognitive executor) with 'System 2' (central executive) to create more sophisticated agents.

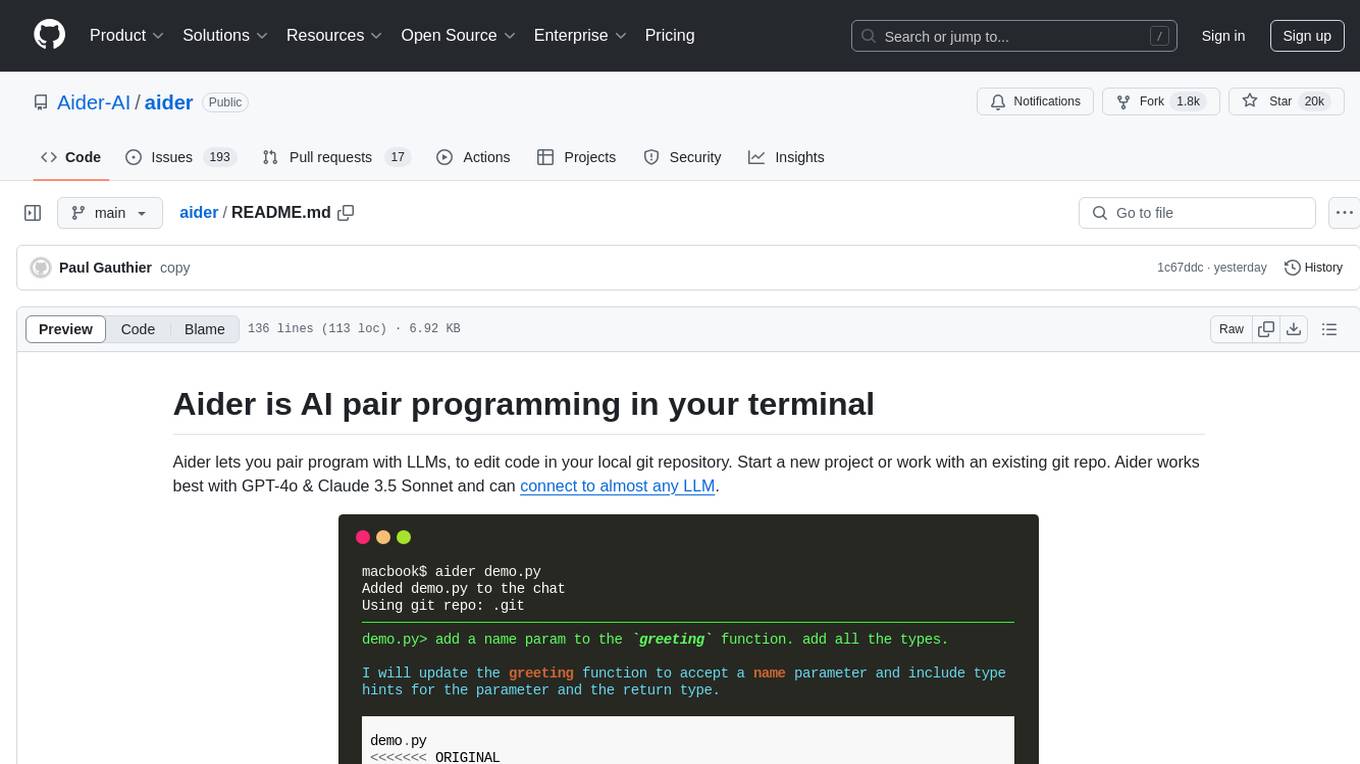

aider

Aider is an AI pair programming tool that allows users to collaborate with large language models (LLMs) to edit code in their local git repository. It works best with GPT-4o & Claude 3.5 Sonnet and can connect to almost any LLM. Users can run Aider with specific files, request changes, add new features or test cases, describe bugs, refactor code, update docs, and more. Aider automatically commits changes with sensible messages, supports multiple programming languages, and can handle complex requests by editing multiple files at once. It uses a map of the entire git repo for efficient performance in larger codebases. Users can chat with Aider, add images, URLs, and even code with their voice. Aider has achieved top scores on SWE Bench, solving real GitHub issues from popular open source projects like django, scikitlearn, matplotlib, etc.

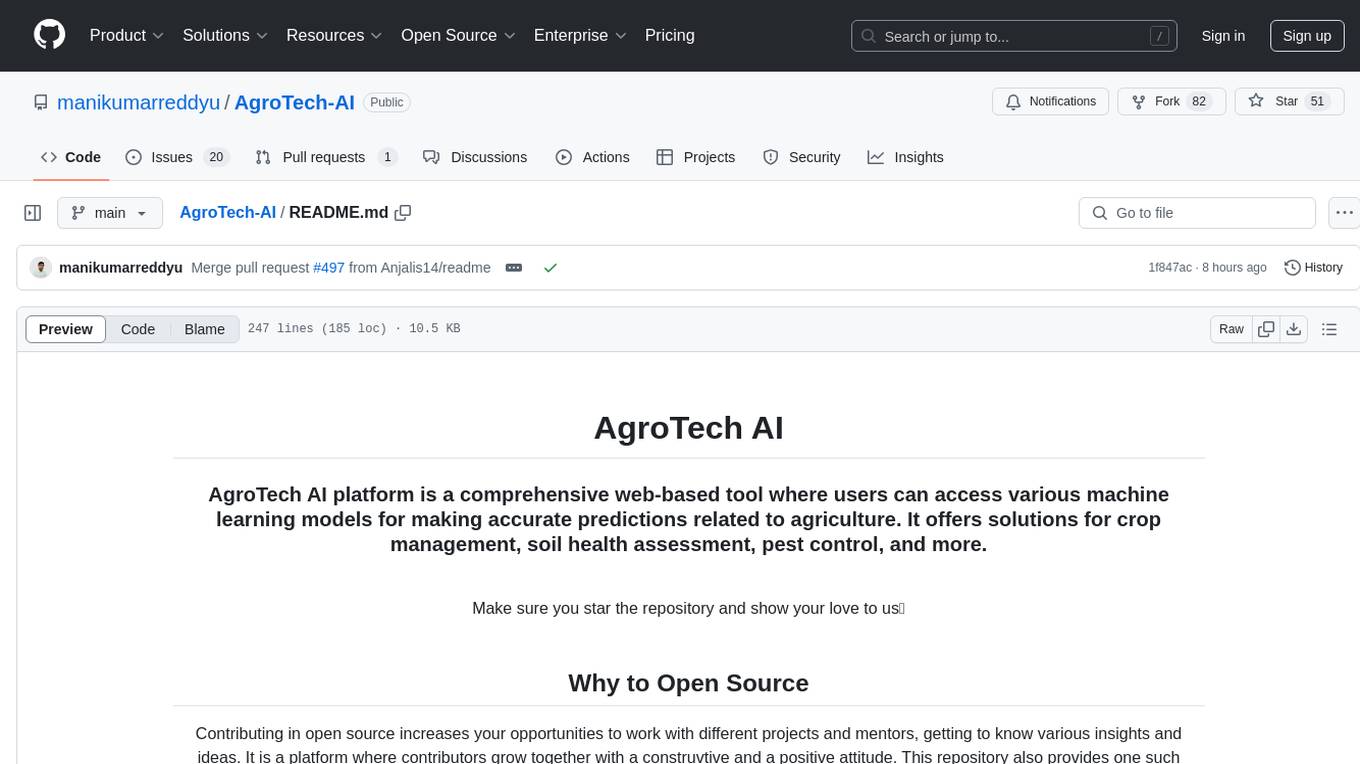

AgroTech-AI

AgroTech AI platform is a comprehensive web-based tool where users can access various machine learning models for making accurate predictions related to agriculture. It offers solutions for crop management, soil health assessment, pest control, and more. The platform implements machine learning algorithms to provide functionalities like fertilizer prediction, crop prediction, soil quality prediction, yield prediction, and mushroom edibility prediction.

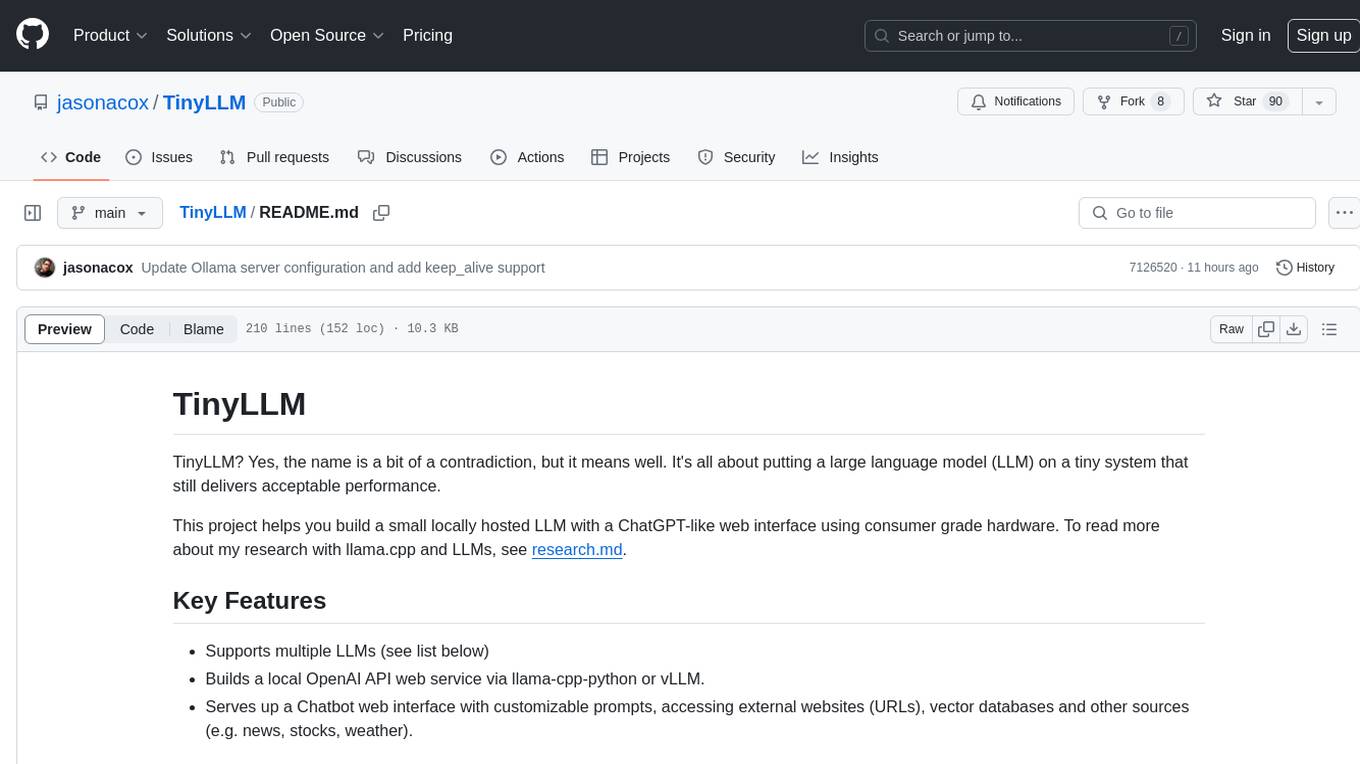

TinyLLM

TinyLLM is a project that helps build a small locally hosted language model with a web interface using consumer-grade hardware. It supports multiple language models, builds a local OpenAI API web service, and serves a Chatbot web interface with customizable prompts. The project requires specific hardware and software configurations for optimal performance. Users can run a local language model using inference servers like vLLM, llama-cpp-python, and Ollama. The Chatbot feature allows users to interact with the language model through a web-based interface, supporting features like summarizing websites, displaying news headlines, stock prices, weather conditions, and using vector databases for queries.

PythonAI

PythonAI is an open-source AI Assistant designed for the Raspberry Pi by Kevin McAleer. The project aims to enhance the capabilities of the Raspberry Pi by providing features such as conversation history, a conversation API, a web interface, a skills framework using plugin technology, and an event framework for adding functionality via plugins. The tool utilizes the Vosk offline library for speech-to-text conversion and offers a simple skills framework for easy implementation of new skills. Users can create new skills by adding Python files to the 'skills' folder and updating the 'skills.json' file. PythonAI is designed to be easy to read, maintain, and extend, making it a valuable tool for Raspberry Pi enthusiasts looking to build AI applications.

awesome-llm-apps

Awesome LLM Apps is a curated collection of applications that leverage RAG with OpenAI, Anthropic, Gemini, and open-source models. The repository contains projects such as Local Llama-3 with RAG for chatting with webpages locally, Chat with Gmail for interacting with Gmail using natural language, Chat with Substack Newsletter for conversing with Substack newsletters using GPT-4, Chat with PDF for intelligent conversation based on PDF documents, and Chat with YouTube Videos for engaging with YouTube video content through natural language. Users can clone the repository, navigate to specific project directories, install dependencies, and follow project-specific instructions to set up and run the apps. Contributions are encouraged, and new app ideas or improvements can be submitted via pull requests.

SWE-agent

SWE-agent is a tool that turns language models (e.g. GPT-4) into software engineering agents capable of fixing bugs and issues in real GitHub repositories. It achieves state-of-the-art performance on the full test set by resolving 12.29% of issues. The tool is built and maintained by researchers from Princeton University. SWE-agent provides a command line tool and a graphical web interface for developers to interact with. It introduces an Agent-Computer Interface (ACI) to facilitate browsing, viewing, editing, and executing code files within repositories. The tool includes features such as a linter for syntax checking, a specialized file viewer, and a full-directory string searching command to enhance the agent's capabilities. SWE-agent aims to improve prompt engineering and ACI design to enhance the performance of language models in software engineering tasks.

20 - OpenAI Gpts

Flask Expert Assistant

This GPT is a specialized assistant for Flask, the popular web framework in Python. It is designed to help both beginners and experienced developers with Flask-related queries, ranging from basic setup and routing to advanced features like database integration and application scaling.

![[latest] Vue.js GPT Screenshot](/screenshots_gpts/g-LXEGvZLUS.jpg)

[latest] Vue.js GPT

Versatile, up-to-date Vue.js assistant with knowledge of the latest version. Part of the [latest] GPTs family.

Apple MapKit Complete Code Expert

A detailed expert trained on all 5,961 pages of Apple MapKit, offering complete coding solutions. Saving time? https://www.buymeacoffee.com/parkerrex ☕️❤️

Selenium Sage

Expert in Selenium test automation, providing practical advice and solutions.

Awkward Situation Solver

Welcome to AwkwardSituation Solver GPT! I am here to help you handle those cringe-worthy social moments with a touch of humor and creativity.

Brofessional: Crucial Chris the Conversation Guru

Using "Crucial Conversations," I can help you handle work and home challenges with confidence and clarity.