Best AI tools for Configuring Services

2 - AI tool Sites

Shipixen

Shipixen is an AI-powered tool that generates custom Next.js codebases with an MDX blog, TypeScript, and Shadcn UI in minutes. Developers can use it to create SaaS sites, blogs, landing pages, directories, and more without manual setup. It offers a range of features, themes, and components so that users can focus on building rather than configuring. With AI content generation, customizable branding, and easy deployment options, Shipixen suits both beginners and experienced developers.

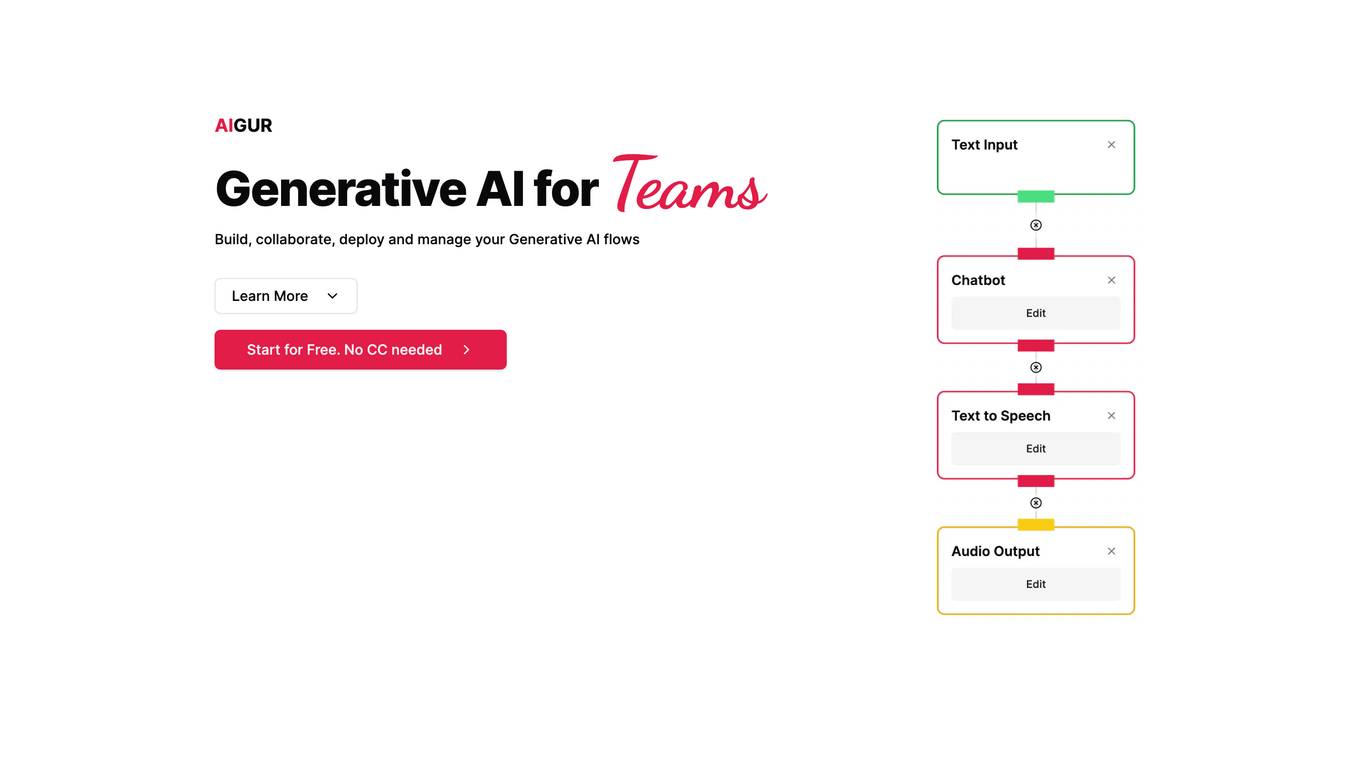

AIGUR

AIGUR is a generative AI platform that enables teams to build, collaborate on, deploy, and manage generative AI flows. In AIGUR's no-code editor, users create flows by dragging and dropping AI blocks and configuring how they interact. Collaboration tools let multiple users work on the same flow simultaneously. Once a flow is created, it can be integrated into any web or mobile application with a simple API call. Monitoring tools give users visibility into each execution of their flows, including cost, performance, and availability.

1 - Open Source AI Tools

openai-forward

OpenAI-Forward is an efficient forwarding service for large language models. Its core features include user request rate control, token rate limiting, intelligent prediction caching, log management, and API key management. It can proxy both local language models, such as LocalAI, and cloud-based ones, such as OpenAI. Built on libraries like uvicorn, aiohttp, and asyncio, OpenAI-Forward achieves excellent asynchronous performance.
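
To give a feel for what request or token rate limiting in a forwarding service can look like, here is a minimal token-bucket sketch. It is an illustration only and is not taken from OpenAI-Forward's code: the `TokenBucket` class, its parameters, and the injectable `clock` are all hypothetical names chosen for this example.

```python
import time


class TokenBucket:
    """Hypothetical token-bucket limiter (not OpenAI-Forward's actual code)."""

    def __init__(self, rate, capacity, clock=time.monotonic):
        self.rate = rate                # tokens refilled per second
        self.capacity = capacity        # maximum burst size
        self.clock = clock              # injectable time source, eases testing
        self.tokens = float(capacity)   # start with a full bucket
        self.updated = clock()

    def allow(self, cost=1.0):
        """Return True and spend `cost` tokens if the budget allows it."""
        now = self.clock()
        elapsed = now - self.updated
        self.updated = now
        # Refill in proportion to elapsed time, capped at the bucket capacity.
        self.tokens = min(self.capacity, self.tokens + elapsed * self.rate)
        if self.tokens >= cost:
            self.tokens -= cost
            return True
        return False
```

A proxy could keep one such bucket per API key and reject or delay a request whenever `allow()` returns `False`. Passing the number of model tokens in a request as `cost` would turn the same structure into a rough token rate limit rather than a request rate limit.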