openai-forward

🚀 An efficient forwarding service designed for LLMs · OpenAI API Reverse Proxy

Stars: 899

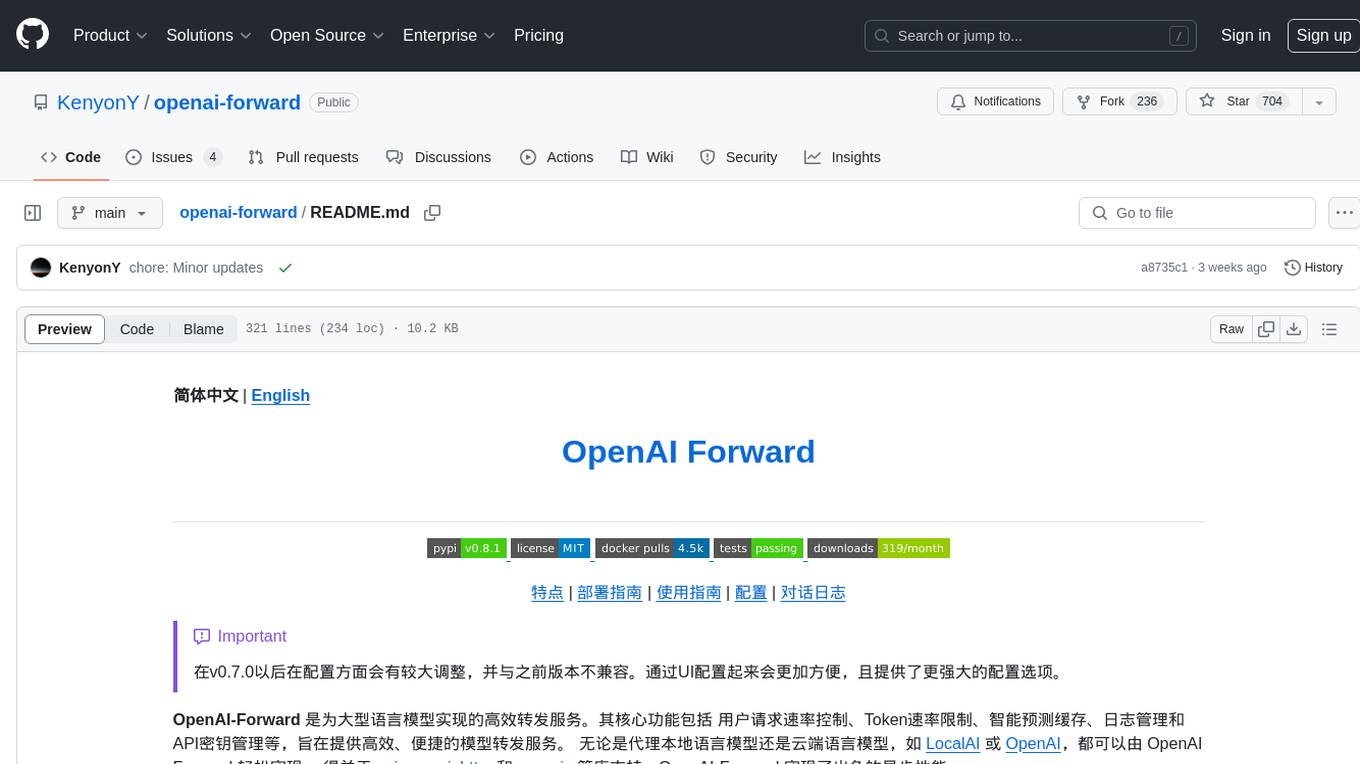

OpenAI-Forward is an efficient forwarding service implemented for large language models. Its core features include user request rate control, token rate limiting, intelligent prediction caching, log management, and API key management, aiming to provide efficient and convenient model forwarding services. Whether proxying local language models or cloud-based language models like LocalAI or OpenAI, OpenAI-Forward makes it easy. Thanks to support from libraries like uvicorn, aiohttp, and asyncio, OpenAI-Forward achieves excellent asynchronous performance.

README:


[!IMPORTANT]

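Starting with v0.7.0, the configuration changes significantly and is not compatible with earlier versions. Configuring through the UI is more convenient and offers more powerful options.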


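- 🎉🎉🎉 Since v0.7.0, configuration can be managed through the WebUI

- Adapted to the gpt-1106 version

- Cache backend switched to a high-performance database backend: 🗲 FlaxKV

- Universal forwarding: can forward almost all types of requests

- Performance first: excellent asynchronous performance

- AI prediction caching: caches AI predictions to speed up service access and save costs

- User traffic control: customizable request rate and token rate

- Real-time response logs: improves the observability of LLMs

- Custom keys: replace the original API keys

- Multi-target routing: forwards multiple service addresses to different routes under a single service

- Black and white lists: restrict specified IPs with blacklists and whitelists

- Automatic retry: failed requests are retried automatically to keep the service stable

- Quick deployment: supports quick deployment locally or in the cloud via pip and docker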

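Proxy service addresses built with this project:

- Original OpenAI service address

  https://api.openai-forward.com

  https://render.openai-forward.com

- Cache-enabled service address (user request results are kept for a period of time)

👉 Deployment documentation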

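Installation

    pip install openai-forward

    # Or install the WebUI version:
    pip install openai-forward[webui]

Start the service

    aifd run

    # Or start the service with the WebUI
    aifd run --webui

If the .env configuration in the root path is loaded, you will see the following startup information: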

    ❯ aifd run
    ╭────── 🤗 openai-forward is ready to serve! ───────╮
    │                                                    │
    │  base url         https://api.openai.com           │
    │  route prefix     /                                │
    │  api keys         False                            │
    │  forward keys     False                            │
    │  cache_backend    MEMORY                           │
    ╰────────────────────────────────────────────────────╯
    ╭──────────── ⏱️ Rate Limit configuration ───────────╮
    │                                                    │
    │  backend                 memory                    │
    │  strategy                moving-window             │
    │  global rate limit       100/minute (req)          │
    │  /v1/chat/completions    100/2minutes (req)        │
    │  /v1/completions         60/minute;600/hour (req)  │
    │  /v1/chat/completions    60/second (token)         │
    │  /v1/completions         60/second (token)         │
    ╰────────────────────────────────────────────────────╯
    INFO:     Started server process [191471]
    INFO:     Waiting for application startup.
    INFO:     Application startup complete.
    INFO:     Uvicorn running on http://0.0.0.0:8000 (Press CTRL+C to quit)

By default, aifd run proxies https://api.openai.com.
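The rate-limit entries in the startup output (for example 100/2minutes with the moving-window strategy) can be read as "at most N requests in any window of that length". The following is a minimal sketch of that idea, not OpenAI-Forward's own implementation: `parse_rate` and `MovingWindowLimiter` are hypothetical names, and the rate-string grammar is inferred only from the values shown in the output.

```python
import re
import time
from collections import deque

# Seconds per unit; the unit names are assumed from the startup output.
_UNIT_SECONDS = {"second": 1, "minute": 60, "hour": 3600, "day": 86400}


def parse_rate(rate):
    """Parse a string such as '60/minute;600/hour' into (limit, window_seconds) pairs."""
    limits = []
    for part in rate.split(";"):
        match = re.fullmatch(r"(\d+)/(\d*)([a-z]+)", part.strip())
        if match is None:
            raise ValueError(f"unrecognized rate: {part!r}")
        count, multiple, unit = match.groups()
        if unit.endswith("s"):  # '2minutes' -> 'minute'
            unit = unit[:-1]
        window = int(multiple or 1) * _UNIT_SECONDS[unit]
        limits.append((int(count), window))
    return limits


class MovingWindowLimiter:
    """Allow at most `limit` hits within any sliding window of `window` seconds."""

    def __init__(self, limit, window):
        self.limit = limit
        self.window = window
        self.hits = deque()  # timestamps of accepted hits, oldest first

    def allow(self, now=None):
        now = time.monotonic() if now is None else now
        # Drop hits that have slid out of the window.
        while self.hits and now - self.hits[0] >= self.window:
            self.hits.popleft()
        if len(self.hits) < self.limit:
            self.hits.append(now)
            return True
        return False


print(parse_rate("100/2minutes"))
print(parse_rate("60/minute;600/hour"))
```

Passing an explicit `now` value to `allow` makes the limiter easy to exercise without waiting for real time to pass.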

The examples below use the deployed service address https://api.openai-forward.com.

Python

    from openai import OpenAI  # pip install openai>=1.0.0
    client = OpenAI(
    +   base_url="https://api.openai-forward.com/v1",
        api_key="sk-******"
    )

- Use case: use together with projects such as LocalAI and api-for-open-llm.

- How to: taking LocalAI as an example, if a LocalAI service is already deployed at http://localhost:8080, you only need to set the following in the environment variables or the .env file:

  FORWARD_CONFIG=[{"base_url":"http://localhost:8080","route":"/localai","type":"openai"}]

  LocalAI can then be used via http://localhost:8000/localai.
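Writing the FORWARD_CONFIG value by hand is error-prone because it is a JSON list on a single line. As a small sketch, the helper below builds that line from Python dictionaries. `forward_config_line` is a hypothetical helper, not part of openai-forward, and the check on `type` assumes only the two values that appear in this README (`openai` and `general`).

```python
import json

# Forwarding types that appear in this README; assumed to be the valid set.
KNOWN_TYPES = {"openai", "general"}


def forward_config_line(targets):
    """Return a FORWARD_CONFIG=... line suitable for a .env file."""
    for target in targets:
        if not target["route"].startswith("/"):
            raise ValueError(f"route must start with '/': {target['route']!r}")
        if target["type"] not in KNOWN_TYPES:
            raise ValueError(f"unknown forwarding type: {target['type']!r}")
    # Compact separators reproduce the single-line form used in the README.
    payload = json.dumps(targets, separators=(",", ":"))
    return f"FORWARD_CONFIG={payload}"


targets = [
    {"base_url": "http://localhost:8080", "route": "/localai", "type": "openai"},
]
line = forward_config_line(targets)
print(line)
```

The printed line can be pasted into the .env file in the directory where aifd run is started.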

(More)

Configure the environment variables or the .env file as follows:

    FORWARD_CONFIG=[{"base_url":"https://generativelanguage.googleapis.com","route":"/gemini","type":"general"}]

Note: after starting the service with aifd run, gemini pro can be used via http://localhost:8000/gemini.

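- Scenario 1: use general forwarding, which can forward services from any source and provides request rate control and token rate control. General forwarding does not support custom keys.

- Scenario 2: use LiteLLM to convert the API formats of many cloud models to the OpenAI API format, then use OpenAI-style forwarding.

(More)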

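Run aifd run --webui to open the configuration page (default service address: http://localhost:8001).

You can create a .env file in the project's running directory to customize the configuration. A reference configuration is available in the .env.example file in the root directory.

When caching is enabled, content on the specified routes is cached. The openai and general forwarding types behave slightly differently.

With general forwarding, identical requests are always answered from the cache by default.

With openai forwarding, once caching is enabled, cache behavior can be controlled through OpenAI's extra_body parameter, for example: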

Python

    from openai import OpenAI
    client = OpenAI(
    +   base_url="https://smart.openai-forward.com/v1",
        api_key="sk-******"
    )
    completion = client.chat.completions.create(
        model="gpt-3.5-turbo",
        messages=[
            {"role": "user", "content": "Hello!"}
        ],
    +   extra_body={"caching": True}
    )

Curl

    curl https://smart.openai-forward.com/v1/chat/completions \
      -H "Content-Type: application/json" \
      -H "Authorization: Bearer sk-******" \
      -d '{
        "model": "gpt-3.5-turbo",
        "messages": [{"role": "user", "content": "Hello!"}],
        "caching": true
      }'
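To make the idea of "identical requests are answered from the cache" concrete, here is a minimal in-memory sketch. It is an illustration only and does not reflect how OpenAI-Forward or FlaxKV store entries: `cache_key` and `ResponseCache` are hypothetical names, and excluding the `caching` flag from the key is an assumption made for this example.

```python
import hashlib
import json


def cache_key(route, body):
    """Derive a stable key from the route and the request body."""
    # Assumption: the 'caching' control flag is not part of the request identity.
    payload = {k: v for k, v in body.items() if k != "caching"}
    # sort_keys makes the key independent of dictionary ordering.
    canonical = json.dumps(payload, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(f"{route}\n{canonical}".encode("utf-8")).hexdigest()


class ResponseCache:
    """In-memory cache that is consulted only when the request opts in."""

    def __init__(self):
        self._store = {}

    def lookup(self, route, body):
        if not body.get("caching", False):
            return None
        return self._store.get(cache_key(route, body))

    def save(self, route, body, response):
        if body.get("caching", False):
            self._store[cache_key(route, body)] = response


cache = ResponseCache()
request = {
    "model": "gpt-3.5-turbo",
    "messages": [{"role": "user", "content": "Hello!"}],
    "caching": True,
}
first = cache.lookup("/v1/chat/completions", request)  # nothing stored yet
cache.save("/v1/chat/completions", request, {"content": "Hi there"})
second = cache.lookup("/v1/chat/completions", request)  # served from the cache
print(first, second)
```

A real deployment would also need an expiry policy, since the README notes that cached results are kept only for a period of time.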


See the .env file.

Example:

    import openai
    + openai.api_base = "https://api.openai-forward.com/v1"
    - openai.api_key = "sk-******"
    + openai.api_key = "fk-******"

Services at different addresses can be forwarded to different routes under the same port.

See .env.example for examples.


Logs are saved to Log/openai/chat/chat.log under the current directory.

The record format is:

{'messages': [{'role': 'user', 'content': 'hi'}], 'model': 'gpt-3.5-turbo', 'stream': True, 'max_tokens': None, 'n': 1, 'temperature': 1, 'top_p': 1, 'logit_bias': None, 'frequency_penalty': 0, 'presence_penalty': 0, 'stop': None, 'user': None, 'ip': '127.0.0.1', 'uid': '2155fe1580e6aed626aa1ad74c1ce54e', 'datetime': '2023-10-17 15:27:12'}

{'assistant': 'Hello! How can I assist you today?', 'is_tool_calls': False, 'uid': '2155fe1580e6aed626aa1ad74c1ce54e'}
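The two records above are linked by their shared `uid`: the first is the request, the second the response. As a rough sketch of how such lines could be paired into one conversation entry, the function below parses each line as a Python literal and joins request and response by `uid`. `pair_records` is a hypothetical helper and is not how aifd convert is implemented; the output field names are taken from the example records in this README.

```python
import ast
import json


def pair_records(lines):
    """Join request and response log lines that share the same uid."""
    pending = {}  # uid -> request record awaiting its response
    paired = []
    for line in lines:
        line = line.strip()
        if not line:
            continue
        # The log lines are Python dict literals (single quotes, True/None).
        record = ast.literal_eval(line)
        uid = record.get("uid")
        if "messages" in record:
            pending[uid] = record
        elif "assistant" in record and uid in pending:
            request = pending.pop(uid)
            paired.append({
                "datetime": request.get("datetime"),
                "ip": request.get("ip"),
                "model": request.get("model"),
                "temperature": request.get("temperature"),
                "messages": [{m["role"]: m["content"]} for m in request["messages"]],
                "tools": request.get("tools"),
                "is_tool_calls": record.get("is_tool_calls"),
                "assistant": record.get("assistant"),
            })
    return paired


sample = [
    "{'messages': [{'role': 'user', 'content': 'hi'}], 'model': 'gpt-3.5-turbo', "
    "'temperature': 1, 'ip': '127.0.0.1', "
    "'uid': '2155fe1580e6aed626aa1ad74c1ce54e', 'datetime': '2023-10-17 15:27:12'}",
    "{'assistant': 'Hello! How can I assist you today?', 'is_tool_calls': False, "
    "'uid': '2155fe1580e6aed626aa1ad74c1ce54e'}",
]
result = pair_records(sample)
print(json.dumps(result, indent=2))
```

Responses whose `uid` has no matching request are skipped in this sketch; a fuller tool would probably report them.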

Convert to JSON format:

    aifd convert

This produces chat_openai.json:

    [
        {
            "datetime": "2023-10-17 15:27:12",
            "ip": "127.0.0.1",
            "model": "gpt-3.5-turbo",
            "temperature": 1,
            "messages": [
                {
                    "user": "hi"
                }
            ],
            "tools": null,
            "is_tool_calls": false,
            "assistant": "Hello! How can I assist you today?"
        }
    ]

Contributions to this project are welcome, either by submitting pull requests or by opening issues in the repository.

OpenAI-Forward is released under the MIT license.


Alternative AI tools for openai-forward

Similar Open Source Tools


sandbox

AIO Sandbox is an all-in-one agent sandbox environment that combines Browser, Shell, File, MCP operations, and VSCode Server in a single Docker container. It provides a unified, secure execution environment for AI agents and developers, with features like unified file system, multiple interfaces, secure execution, zero configuration, and agent-ready MCP-compatible APIs. The tool allows users to run shell commands, perform file operations, automate browser tasks, and integrate with various development tools and services.

DeepTutor

DeepTutor is an AI-powered personalized learning assistant that offers a suite of modules for massive document knowledge Q&A, interactive learning visualization, knowledge reinforcement with practice exercise generation, deep research, and idea generation. The tool supports multi-agent collaboration, dynamic topic queues, and structured outputs for various tasks. It provides a unified system entry for activity tracking, knowledge base management, and system status monitoring. DeepTutor is designed to streamline learning and research processes by leveraging AI technologies and interactive features.

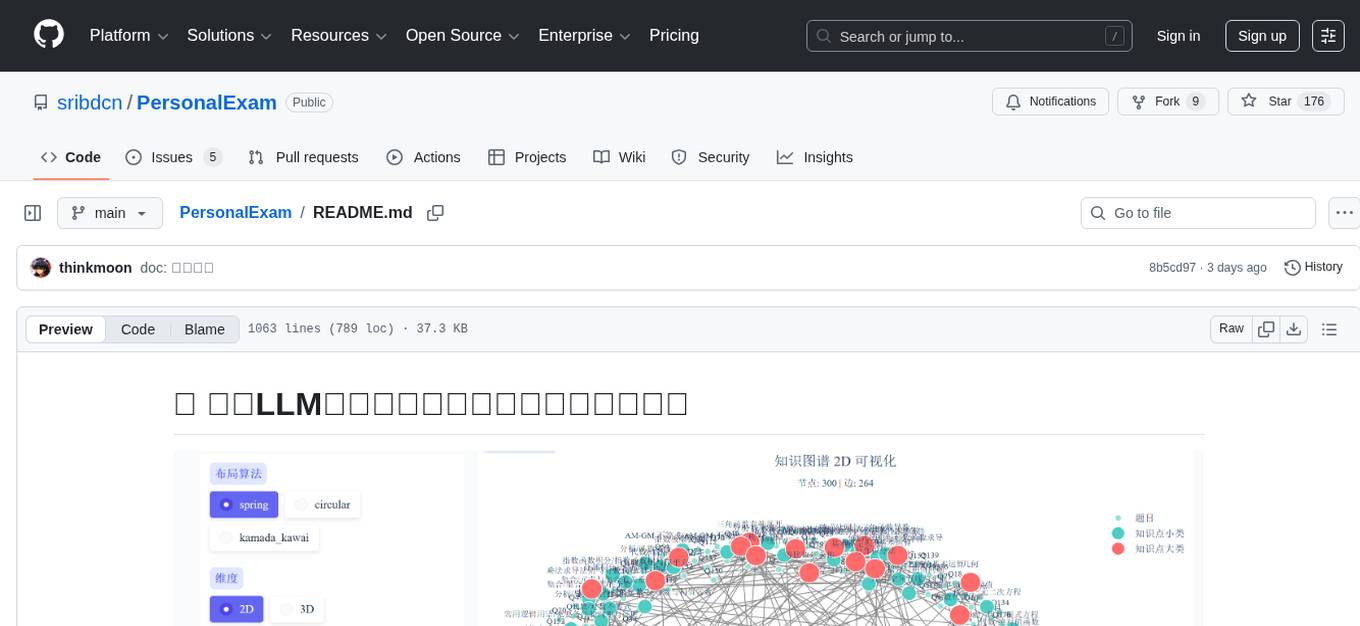

PersonalExam

PersonalExam is a personalized question generation system based on LLM and knowledge graph collaboration. It utilizes the BKT algorithm, RAG engine, and OpenPangu model to achieve personalized intelligent question generation and recommendation. The system features adaptive question recommendation, fine-grained knowledge tracking, AI answer evaluation, student profiling, visual reports, interactive knowledge graph, user management, and system monitoring.

MahoShojo-Generator

MahoShojo-Generator is a web-based AI structured generation tool that allows players to create personalized and evolving magical girls (or quirky characters) and related roles. It offers exciting cyber battles, storytelling activities, and even a ranking feature. The project also includes AI multi-channel polling, user system, public data card sharing, and sensitive word detection. It supports various functionalities such as character generation, arena system, growth and social interaction, cloud and sharing, and other features like scenario generation, tavern ecosystem linkage, and content safety measures.

lassxToolkit

lassxToolkit is a versatile tool designed for file processing tasks. It allows users to manipulate files and folders based on specified configurations in a strict .json format. The tool supports various AI models for tasks such as image upscaling and denoising. Users can customize settings like input/output paths, error handling, file selection, and plugin integration. lassxToolkit provides detailed instructions on configuration options, default values, and model selection. It also offers features like tree restoration, recursive processing, and regex-based file filtering. The tool is suitable for users looking to automate file processing tasks with AI capabilities.

mcp-prompts

mcp-prompts is a Python library that provides a collection of prompts for generating creative writing ideas. It includes a variety of prompts such as story starters, character development, plot twists, and more. The library is designed to inspire writers and help them overcome writer's block by offering unique and engaging prompts to spark creativity. With mcp-prompts, users can access a wide range of writing prompts to kickstart their imagination and enhance their storytelling skills.

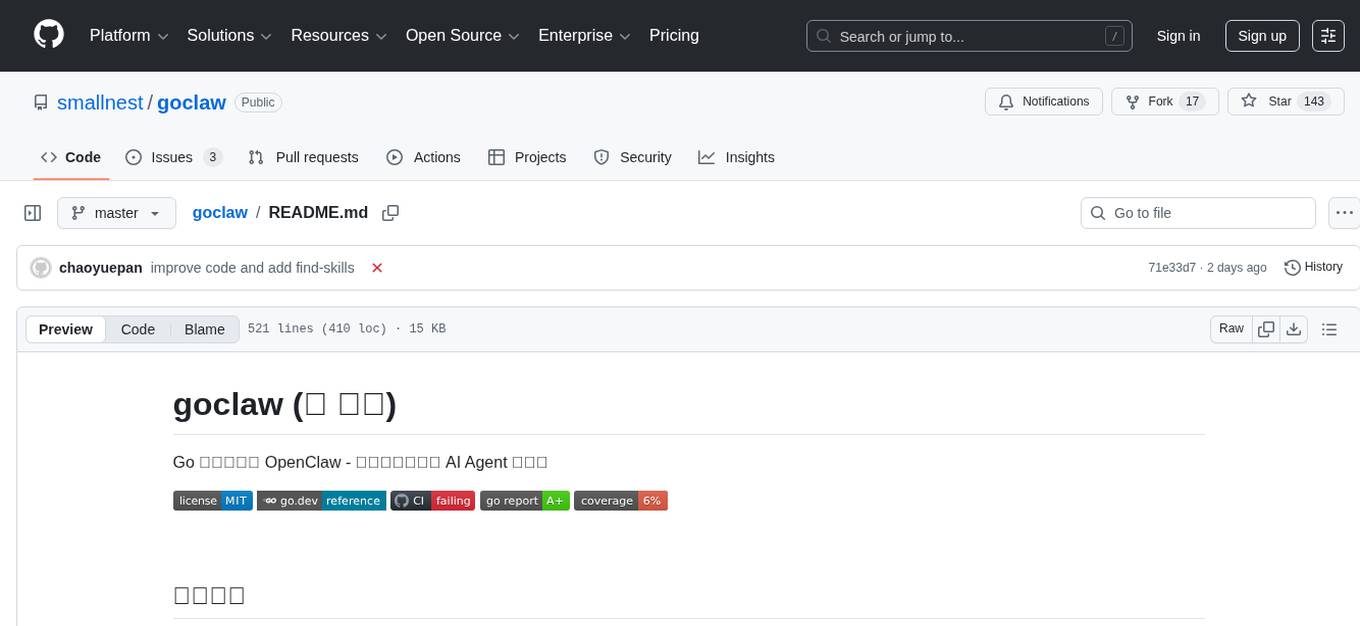

goclaw

goclaw is a powerful AI Agent framework written in Go language. It provides a complete tool system for FileSystem, Shell, Web, and Browser with Docker sandbox support and permission control. The framework includes a skill system compatible with OpenClaw and AgentSkills specifications, supporting automatic discovery and environment gating. It also offers persistent session storage, multi-channel support for Telegram, WhatsApp, Feishu, QQ, and WeWork, flexible configuration with YAML/JSON support, multiple LLM providers like OpenAI, Anthropic, and OpenRouter, WebSocket Gateway, Cron scheduling, and Browser automation based on Chrome DevTools Protocol.

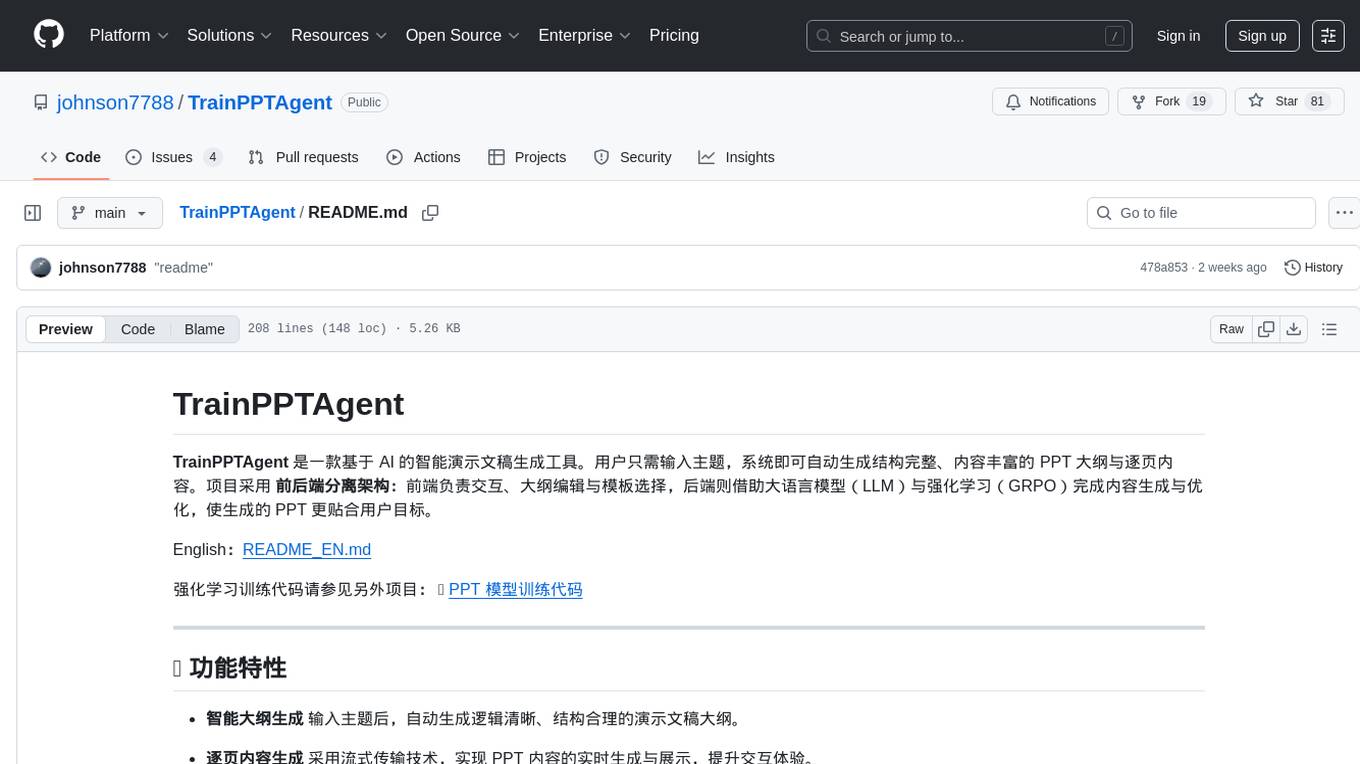

TrainPPTAgent

TrainPPTAgent is an AI-based intelligent presentation generation tool. Users can input a topic and the system will automatically generate a well-structured and content-rich PPT outline and page-by-page content. The project adopts a front-end and back-end separation architecture: the front-end is responsible for interaction, outline editing, and template selection, while the back-end leverages large language models (LLM) and reinforcement learning (GRPO) to complete content generation and optimization, making the generated PPT more tailored to user goals.

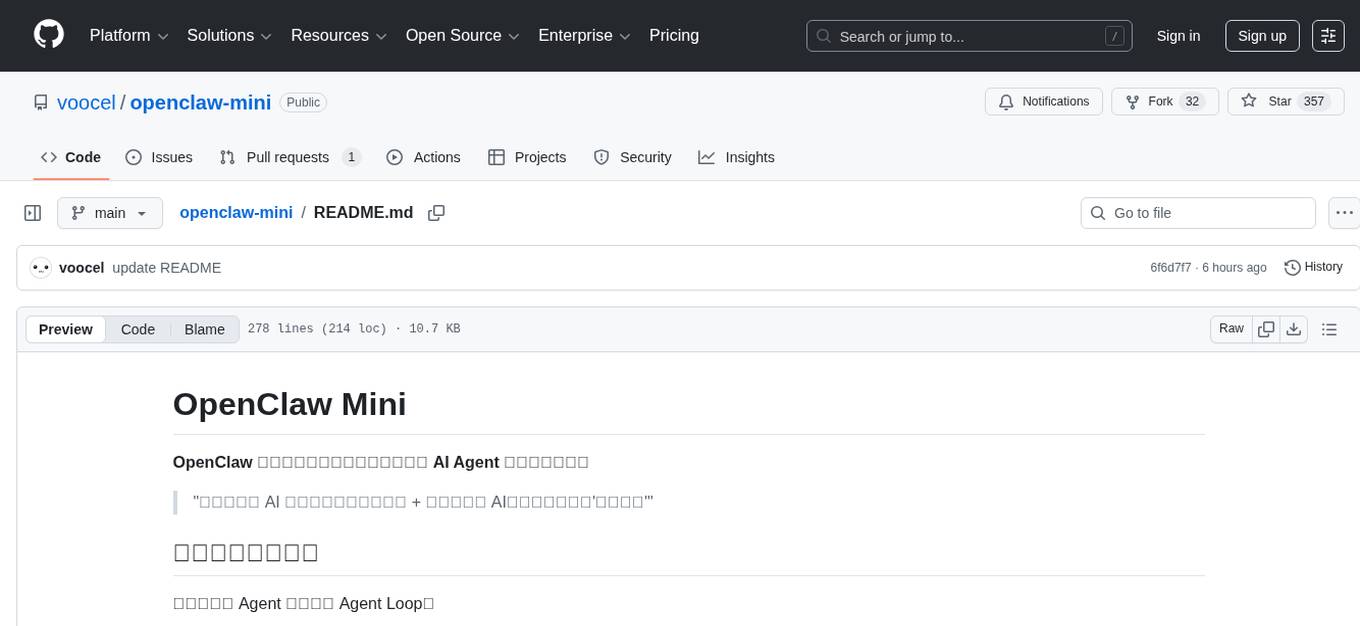

openclaw-mini

OpenClaw Mini is a simplified reproduction of the core architecture of OpenClaw, designed for learning system-level design of AI agents. It focuses on understanding the Agent Loop, session persistence, context management, long-term memory, skill systems, and active awakening. The project provides a minimal implementation to help users grasp the core design concepts of a production-level AI agent system.

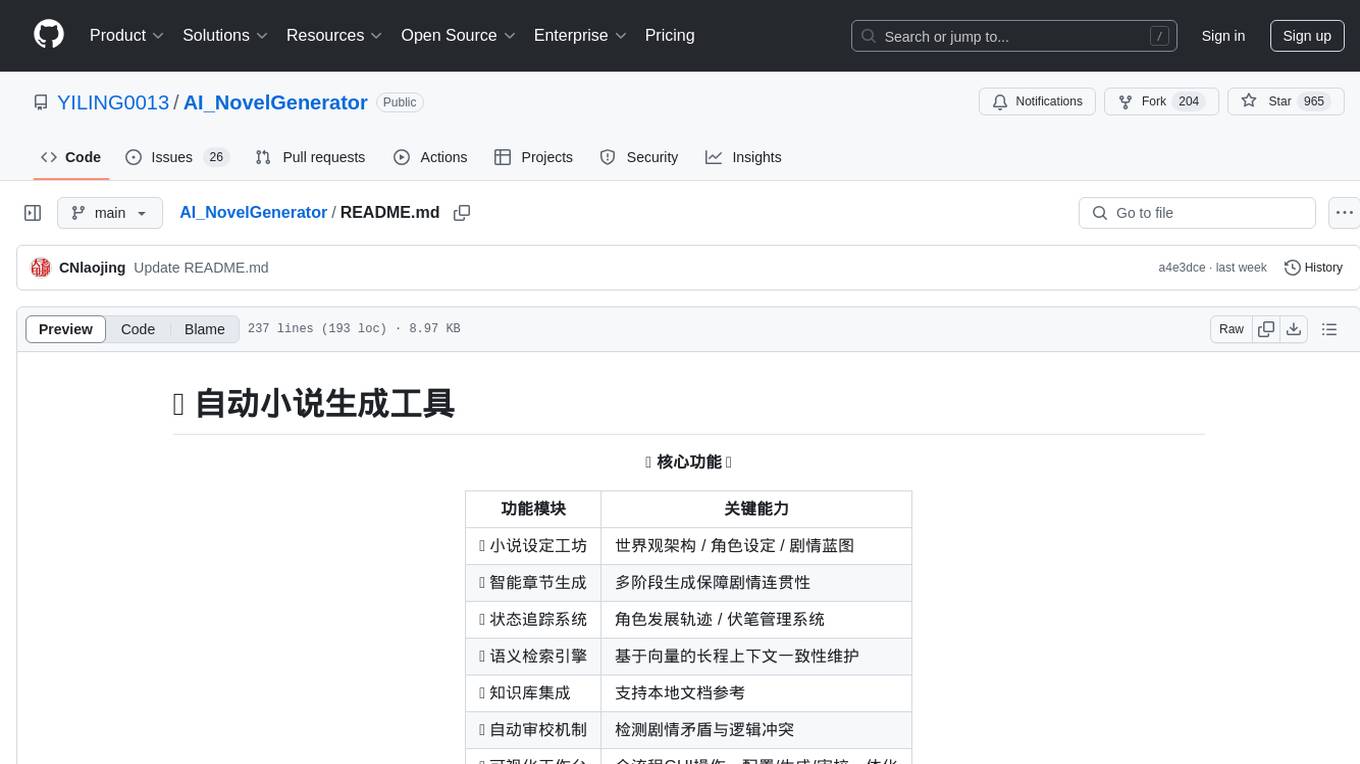

AI_NovelGenerator

AI_NovelGenerator is a versatile novel generation tool based on large language models. It features a novel setting workshop for world-building, character development, and plot blueprinting, intelligent chapter generation for coherent storytelling, a status tracking system for character arcs and foreshadowing management, a semantic retrieval engine for maintaining long-range context consistency, integration with knowledge bases for local document references, an automatic proofreading mechanism for detecting plot contradictions and logic conflicts, and a visual workspace for GUI operations encompassing configuration, generation, and proofreading. The tool aims to assist users in efficiently creating logically rigorous and thematically consistent long-form stories.

Y2A-Auto

Y2A-Auto is an automation tool that transfers YouTube videos to AcFun. It automates the entire process from downloading, translating subtitles, content moderation, intelligent tagging, to partition recommendation and upload. It also includes a web management interface and YouTube monitoring feature. The tool supports features such as downloading videos and covers using yt-dlp, AI translation and embedding of subtitles, AI generation of titles/descriptions/tags, content moderation using Aliyun Green, uploading to AcFun, task management, manual review, and forced upload. It also offers settings for automatic mode, concurrency, proxies, subtitles, login protection, brute force lock, YouTube monitoring, channel/trend capturing, scheduled tasks, history records, optional GPU/hardware acceleration, and Docker deployment or local execution.

hub

Hub is an open-source, high-performance LLM gateway written in Rust. It serves as a smart proxy for LLM applications, centralizing control and tracing of all LLM calls and traces. Built for efficiency, it provides a single API to connect to any LLM provider. The tool is designed to be fast, efficient, and completely open-source under the Apache 2.0 license.

cascadeflow

cascadeflow is an intelligent AI model cascading library that dynamically selects the optimal model for each query or tool call through speculative execution. It helps reduce API costs by 40-85% through intelligent model cascading and speculative execution with automatic per-query cost tracking. The tool is based on the research that shows 40-70% of queries don't require slow, expensive flagship models, and domain-specific smaller models often outperform large general-purpose models on specialized tasks. cascadeflow automatically escalates to flagship models for advanced reasoning when needed. It supports multiple providers, low latency, cost control, and transparency, and can be used for edge and local-hosted AI deployment.

resume-design

Resume-design is an open-source and free resume design and template download website, built with Vue3 + TypeScript + Vite + Element-plus + pinia. It provides two design tools for creating beautiful resumes and a complete backend management system. The project has released two frontend versions and will integrate with a backend system in the future. Users can learn frontend by downloading the released versions or learn design tools by pulling the latest frontend code.

MarkMap-OpenAi-ChatGpt

MarkMap-OpenAi-ChatGpt is a Vue.js-based mind map generation tool that allows users to generate mind maps by entering titles or content. The application integrates the markmap-lib and markmap-view libraries, supports visualizing mind maps, and provides functions for zooming and adapting the map to the screen. Users can also export the generated mind map in PNG, SVG, JPEG, and other formats. This project is suitable for quickly organizing ideas, study notes, project planning, etc. By simply entering content, users can get an intuitive mind map that can be continuously expanded, downloaded, and shared.


For similar jobs

weave

Weave is a toolkit for developing Generative AI applications, built by Weights & Biases. With Weave, you can log and debug language model inputs, outputs, and traces; build rigorous, apples-to-apples evaluations for language model use cases; and organize all the information generated across the LLM workflow, from experimentation to evaluations to production. Weave aims to bring rigor, best-practices, and composability to the inherently experimental process of developing Generative AI software, without introducing cognitive overhead.

LLMStack

LLMStack is a no-code platform for building generative AI agents, workflows, and chatbots. It allows users to connect their own data, internal tools, and GPT-powered models without any coding experience. LLMStack can be deployed to the cloud or on-premise and can be accessed via HTTP API or triggered from Slack or Discord.

VisionCraft

The VisionCraft API is a free API for using over 100 different AI models. From images to sound.

kaito

Kaito is an operator that automates the AI/ML inference model deployment in a Kubernetes cluster. It manages large model files using container images, avoids tuning deployment parameters to fit GPU hardware by providing preset configurations, auto-provisions GPU nodes based on model requirements, and hosts large model images in the public Microsoft Container Registry (MCR) if the license allows. Using Kaito, the workflow of onboarding large AI inference models in Kubernetes is largely simplified.

PyRIT

PyRIT is an open access automation framework designed to empower security professionals and ML engineers to red team foundation models and their applications. It automates AI Red Teaming tasks to allow operators to focus on more complicated and time-consuming tasks and can also identify security harms such as misuse (e.g., malware generation, jailbreaking), and privacy harms (e.g., identity theft). The goal is to allow researchers to have a baseline of how well their model and entire inference pipeline is doing against different harm categories and to be able to compare that baseline to future iterations of their model. This allows them to have empirical data on how well their model is doing today, and detect any degradation of performance based on future improvements.

tabby

Tabby is a self-hosted AI coding assistant, offering an open-source and on-premises alternative to GitHub Copilot. It boasts several key features:

- Self-contained, with no need for a DBMS or cloud service.

- OpenAPI interface, easy to integrate with existing infrastructure (e.g. Cloud IDE).

- Supports consumer-grade GPUs.

spear

SPEAR (Simulator for Photorealistic Embodied AI Research) is a powerful tool for training embodied agents. It features 300 unique virtual indoor environments with 2,566 unique rooms and 17,234 unique objects that can be manipulated individually. Each environment is designed by a professional artist and features detailed geometry, photorealistic materials, and a unique floor plan and object layout. SPEAR is implemented as Unreal Engine assets and provides an OpenAI Gym interface for interacting with the environments via Python.

Magick

Magick is a groundbreaking visual AIDE (Artificial Intelligence Development Environment) for no-code data pipelines and multimodal agents. Magick can connect to other services and comes with nodes and templates well-suited for intelligent agents, chatbots, complex reasoning systems and realistic characters.