Best AI tools for Classify Language

20 - AI tool Sites

NLTK

NLTK (Natural Language Toolkit) is a leading platform for building Python programs to work with human language data. It provides easy-to-use interfaces to over 50 corpora and lexical resources such as WordNet, along with a suite of text processing libraries for classification, tokenization, stemming, tagging, parsing, and semantic reasoning, wrappers for industrial-strength NLP libraries, and an active discussion forum. Thanks to a hands-on guide introducing programming fundamentals alongside topics in computational linguistics, plus comprehensive API documentation, NLTK is suitable for linguists, engineers, students, educators, researchers, and industry users alike.

Mixpeek Solutions

Mixpeek Solutions offers a Multimodal Data Warehouse for Developers, providing a Developer-First API for AI-native Content Understanding. The platform allows users to search, monitor, classify, and cluster unstructured data like video, audio, images, and documents. Mixpeek Solutions offers a range of features including Unified Search, Automated Classification, Unsupervised Clustering, Feature Extractors for Every Data Type, and various specialized extraction models for different data types. The platform caters to a wide range of industries and provides seamless model upgrades, cross-model compatibility, A/B testing infrastructure, and simplified model management.

Datumbox

Datumbox is a machine learning platform that offers a powerful open-source Machine Learning Framework written in Java. It provides a large collection of algorithms, models, statistical tests, and tools to power up intelligent applications. The platform enables developers to build smart software and services quickly using its REST Machine Learning API. Datumbox API offers off-the-shelf Classifiers and Natural Language Processing services for applications like Sentiment Analysis, Topic Classification, Language Detection, and more. It simplifies the process of designing and training Machine Learning models, making it easy for developers to create innovative applications.
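As a rough illustration of what a language detection service like the one mentioned above does, here is a minimal sketch that scores text by stopword overlap. It is a toy stand-in and not how Datumbox implements detection; the `STOPWORDS` table and the `detect_language` function are hypothetical names introduced only for this example.

```python
import re

# Tiny illustrative stopword lists (an assumption for this sketch);
# real detectors rely on much larger statistical models.
STOPWORDS = {
    "en": {"the", "and", "is", "of", "to", "in", "it", "that"},
    "fr": {"le", "la", "et", "est", "les", "des", "un", "une"},
    "de": {"der", "die", "und", "ist", "das", "nicht", "ein", "eine"},
    "es": {"el", "los", "y", "es", "que", "una", "por", "con"},
}


def detect_language(text: str) -> str:
    """Return the code of the language whose stopwords overlap the text most.

    Returns "unknown" when no stopword from any list appears in the text.
    """
    # Split into lowercase alphabetic tokens (Unicode letters only).
    tokens = set(re.findall(r"[^\W\d_]+", text.lower()))
    # Count how many known stopwords of each language occur in the text.
    scores = {lang: len(tokens & words) for lang, words in STOPWORDS.items()}
    best = max(scores, key=scores.get)
    return best if scores[best] > 0 else "unknown"


if __name__ == "__main__":
    print(detect_language("The cat is in the garden and it is happy"))
    print(detect_language("Le chat est dans le jardin et la maison"))
```

Stopword overlap is only reliable on longer inputs. Short or mixed-language text usually needs character n-gram models or a hosted service.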

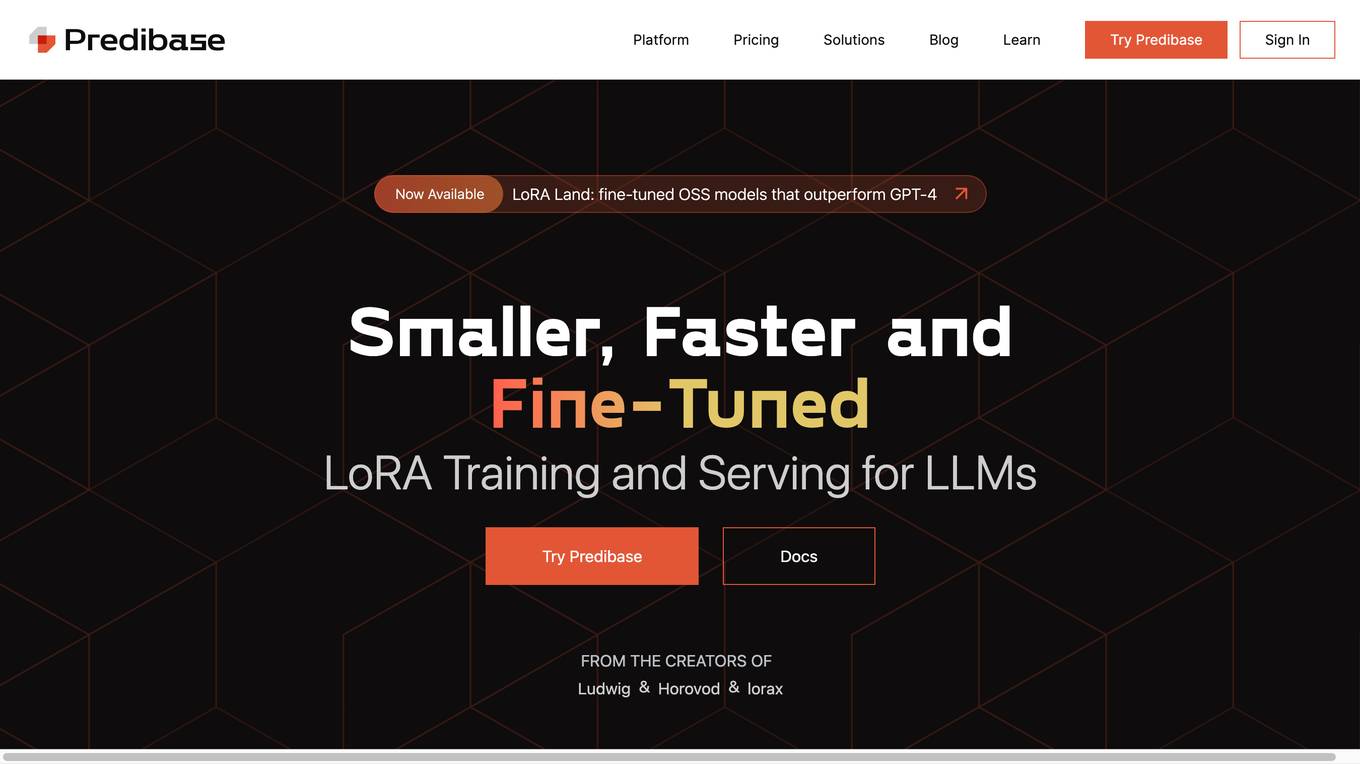

Predibase

Predibase is a platform for fine-tuning and serving Large Language Models (LLMs). It provides a cost-effective and efficient way to train and deploy LLMs for a variety of tasks, including classification, information extraction, customer sentiment analysis, customer support, code generation, and named entity recognition. Predibase is built on proven open-source technology, including LoRAX, Ludwig, and Horovod.

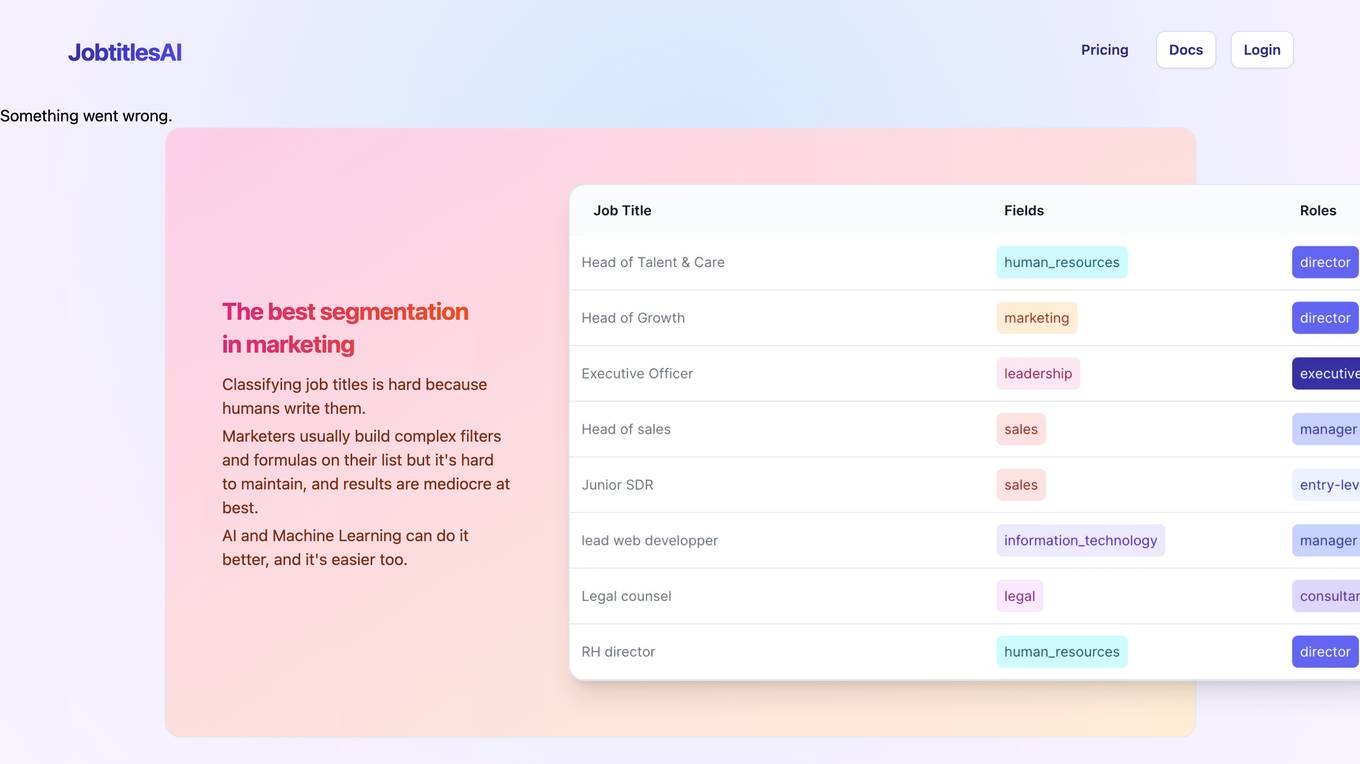

JobtitlesAI

JobtitlesAI is a machine-learning API that sorts job titles into two categories: field (sales, finance, I.T...) and position (executive, management, assistant...). It can be used in spreadsheets, HubSpot, or via API. JobtitlesAI is multilingual and GDPR compliant.

Charm

Charm is an AI-powered spreadsheet assistant that helps users clean messy data, create content, summarize feedback, classify sales leads, and generate dummy data. It is a Google Sheets add-on that automates tasks that are impossible to do with traditional formulas. Charm is used by hundreds of analysts, marketers, product managers, and more.

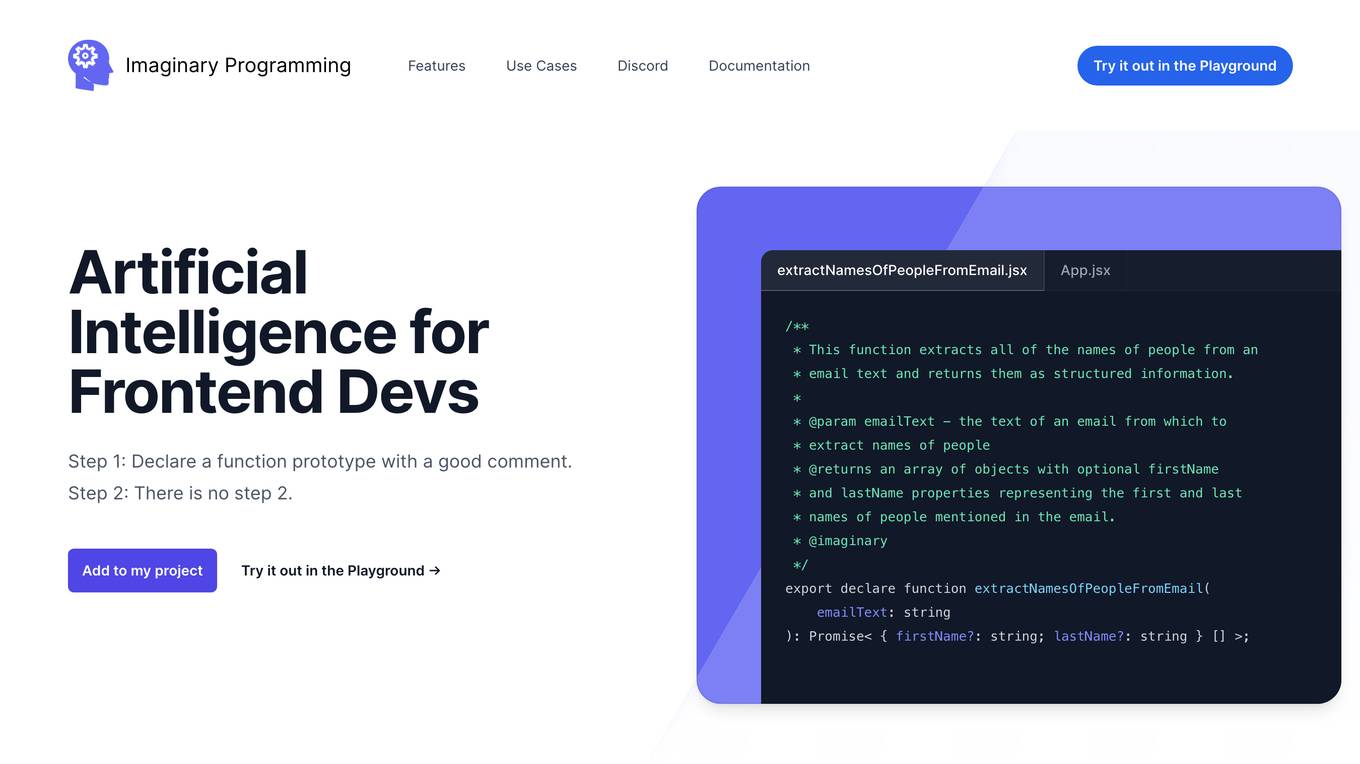

Imaginary Programming

Imaginary Programming is an AI tool that allows frontend developers to leverage OpenAI's GPT engine to add human-like intelligence to their code effortlessly. By defining function prototypes in TypeScript, developers can access GPT's capabilities without the need for AI model training. The tool enables users to extract structured data, generate text, classify data based on intent or emotion, and parse unstructured language. Imaginary Programming is designed to help developers tackle new challenges and enhance their projects with AI intelligence.

Explosion

Explosion is a software company specializing in developer tools and tailored solutions for AI, Machine Learning, and Natural Language Processing (NLP). They are the makers of spaCy, one of the leading open-source libraries for advanced NLP. The company offers consulting services and builds developer tools for various AI-related tasks, such as coreference resolution, dependency parsing, image classification, named entity recognition, and more.

GPTKit

GPTKit is a free AI text generation detection tool that uses six different AI-based content detection techniques to classify text as either human- or AI-generated. It reports on the authenticity and reality of the analyzed content, with a stated accuracy of approximately 93%. The first 2048 characters of every request are free, and users can register for free to get 2048 characters per request.

AIGeneratedCourses

AIGeneratedCourses is a collection of AI-generated courses created by Chat2Course.com. These courses are designed to help you learn about a variety of AI-related topics, including machine learning, deep learning, and natural language processing. The courses are easy to follow and are perfect for beginners who want to learn more about AI.

Totoy

Totoy is a Document AI tool that redefines the way documents are processed. Its API allows users to explain, classify, and create knowledge bases from documents without the need for training. The tool supports 19 languages and works with plain text, images, and PDFs. Totoy is ideal for automating workflows, complying with accessibility laws, and creating custom AI assistants for employees or customers.

AI Horde

AI Horde is a crowdsourced, distributed cluster of image generation and text generation workers. It provides an API and various tools for developers to integrate AI-powered image and text generation into their applications. AI Horde is supported by a community of volunteers who contribute their GPU processing power to the cluster.

Apply AI

This website provides a platform for users to apply artificial intelligence (AI) to their work. Users can access a variety of AI tools and resources, including pre-trained models, datasets, and tutorials. The website also provides a community forum where users can connect with other AI enthusiasts and experts.

Lettria

Lettria is a no-code AI platform for text that helps users turn unstructured text data into structured knowledge. It combines the best of Large Language Models (LLMs) and symbolic AI to overcome current limitations in knowledge extraction. Lettria offers a suite of APIs for text cleaning, text mining, text classification, and prompt engineering. It also provides a Knowledge Studio for building knowledge graphs and private GPT models. Lettria is trusted by large organizations such as AP-HP and Leroy Merlin to improve their data analysis and decision-making processes.

Jekka

Jekka is an AI-powered platform that helps businesses automate their workflows and processes. It offers a range of features, including natural language processing, machine learning, and computer vision, that can be used to create custom AI solutions. Jekka is designed to be easy to use, even for those with no prior experience with AI. It provides a drag-and-drop interface that makes it simple to create and deploy AI models.

Cohere

Cohere is a leading provider of artificial intelligence (AI) tools and services. Its mission is to make AI accessible and useful to everyone, from individual developers to large enterprises. It offers a range of AI tools and services, including natural language processing, computer vision, and machine learning. Its tools are used by businesses of all sizes to improve customer service, automate tasks, and gain insights from data.

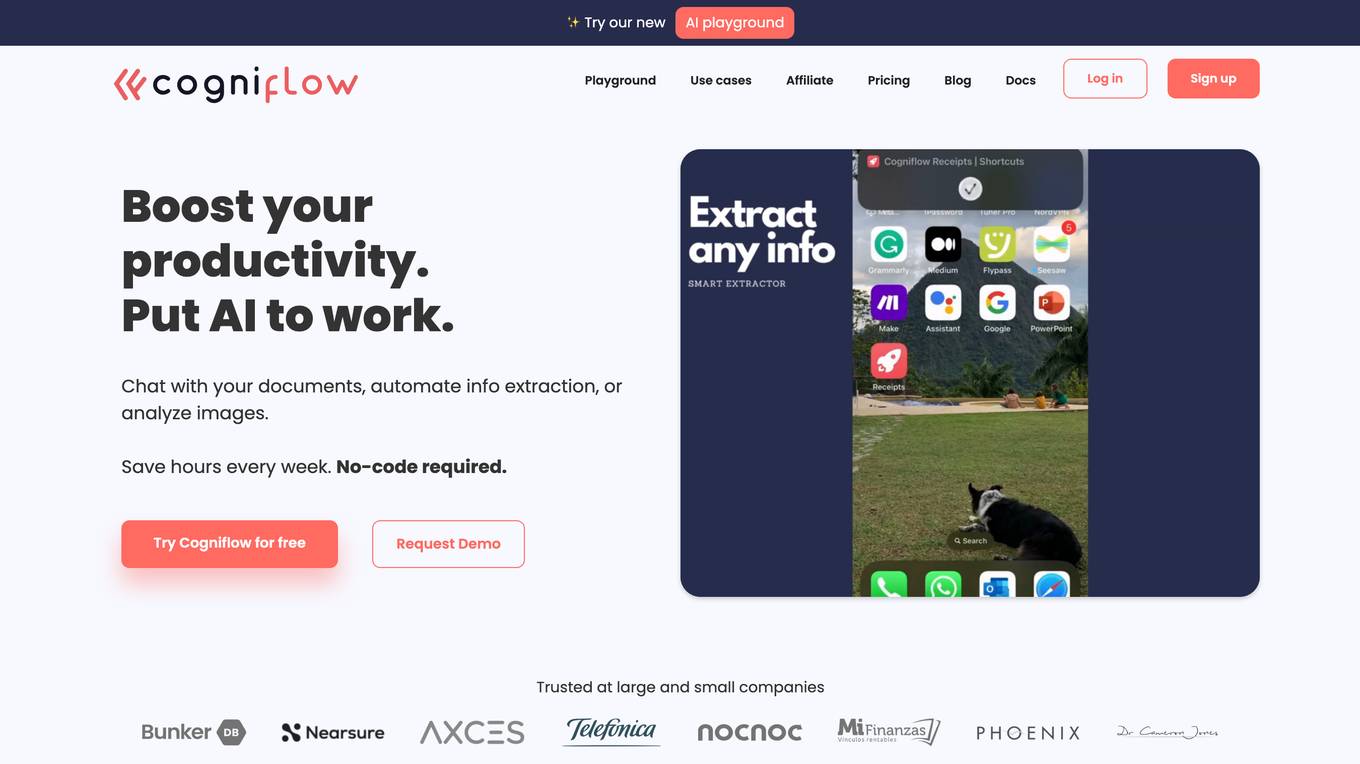

Cogniflow

Cogniflow is a no-code AI platform that allows users to build and deploy custom AI models without any coding experience. The platform provides pre-built AI models for tasks in customer service, HR, operations, and more. Cogniflow also integrates with other applications, making it easy to connect AI models to an existing workflow.

Nesa Playground

Nesa is a global blockchain network that brings AI on-chain, allowing applications and protocols to seamlessly integrate with AI. It offers secure execution for critical inference, a private AI network, and a global AI model repository. Nesa supports various AI models for tasks like text classification, content summarization, image generation, language translation, and more. The platform is backed by a team with extensive experience in AI and deep learning, with numerous awards and recognitions in the field.

AIModels.fyi

AIModels.fyi is a website that helps users find the best AI model for their startup. The website provides a weekly rundown of the latest AI models and research, and also allows users to search for models by category or keyword. AIModels.fyi is a valuable resource for anyone looking to use AI to solve a problem.

FranzAI LLM Playground

FranzAI LLM Playground is an AI-powered tool that helps you extract, classify, and analyze unstructured text data. It leverages transformer models to provide accurate and meaningful results, enabling you to build data applications faster and more efficiently. With FranzAI, you can accelerate product and content classification, enhance data interpretation, and advance data extraction processes, unlocking key insights from your textual data.

1 - Open Source AI Tools

easyAi

EasyAi is a lightweight, beginner-friendly Java artificial intelligence algorithm framework. It can be seamlessly integrated into Java projects with Maven, requiring no additional environment configuration or dependencies. The framework provides pre-packaged modules for image object detection and AI customer service, as well as various low-level algorithm tools for deep learning, machine learning, reinforcement learning, heuristic learning, and matrix operations. Developers can easily develop custom micro-models tailored to their business needs.

20 - OpenAI Gpts

Prompt Injection Detector

A GPT that classifies prompts as valid inputs or injection attempts and returns JSON output.
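Because the classifier returns JSON, a caller would typically validate the verdict before acting on it. The following is a minimal sketch under stated assumptions: the listing does not document the schema, so the `label` and `reason` fields, the `ALLOWED_LABELS` set, and the `parse_verdict` helper are all hypothetical.

```python
import json

# Hypothetical label set; the actual GPT's output schema is not documented here.
ALLOWED_LABELS = {"valid", "injection"}


def parse_verdict(raw: str) -> dict:
    """Parse a JSON verdict string and validate it against the assumed schema.

    Raises ValueError if the text is not JSON, is not an object,
    or carries a label outside ALLOWED_LABELS.
    """
    try:
        data = json.loads(raw)
    except json.JSONDecodeError as exc:
        raise ValueError(f"not valid JSON: {exc}") from exc
    if not isinstance(data, dict):
        raise ValueError("expected a JSON object")
    label = data.get("label")
    if not isinstance(label, str) or label not in ALLOWED_LABELS:
        raise ValueError(f"unexpected label: {label!r}")
    # Keep only the fields this sketch knows about.
    return {"label": label, "reason": str(data.get("reason", ""))}


if __name__ == "__main__":
    sample = '{"label": "injection", "reason": "asks to ignore instructions"}'
    print(parse_verdict(sample))
```

Rejecting anything outside the expected label set means a malformed or manipulated model response cannot be mistaken for a "valid" verdict.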

Automated AI Prompt Categorizer

Comprehensive categorization and organization for AI Prompts

Dr. Classify

Upload a numerical dataset for a classification task, and this GPT applies data analysis and machine learning steps to build the best model possible.

NACE Classifier

NACE (Nomenclature of Economic Activities) is the European statistical classification of economic activities. This is not an official product. Official information here: https://nacev2.com/en

TradeComply

Import Export Compliance | Tariff Classification | Shipping Queries | Logistics & Supply Chain Solutions

LiDAR GPT - LAStools Comprehensive Expert

Expert in LAStools with in-depth command line knowledge.

GICS Classifier

GICS is a classification standard developed by MSCI and S&P Dow Jones Indices. This GPT is not an MSCI or S&P product. Official website: https://www.msci.com/our-solutions/indexes/gics

UNSPSC Explorer

Expert in UNSPSC Codes (United Nations Standard Products and Services Code®).

DGL coding assistant

Assists with DGL coding, focusing on edge classification and link prediction.

Lexi - Article Classifier

Classifies articles into knowledge domains. Source code: https://homun.posetmage.com/Agents/

Cloud Scholar

Super astronomer identifying clouds in English and Chinese, sharing facts in Chinese.

Not Hotdog

What would you say if I told you there is an app on the market that can tell you if you have a hot dog or not a hot dog?

MDR Navigator

Medical Device Expert on MDR 2017/745, IVDR 2017/746 and related MDCG guidance