Best AI tools for Memorization Coaches


7 - AI tool Sites

Tarteel

Tarteel is an AI-powered application designed to help users memorize the Quran more effectively. It offers mistake detection, memorization planning, goal setting, and a premium tier. Users can tailor their memorization journey to their learning style and preferences, track their progress, and receive real-time alerts for missed, incorrect, and skipped words in their recitation. The application aims to provide a seamless and personalized Quran memorization experience for users of all levels.

Memgrain

Memgrain is an AI-powered study tool that offers a range of features to help users create, study, memorize, and learn through flashcards and book summaries. The platform leverages AI technology to generate interactive flashcards from various sources like notes, PDFs, and webpages. Users can utilize spaced repetition algorithms for effective memorization and personalized learning experiences. Memgrain aims to revolutionize the way knowledge is absorbed and retained by combining academic rigor with innovative technology.
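As a rough illustration of what a spaced repetition scheduler does, here is a minimal sketch. Memgrain's actual algorithm is not described here, so the doubling rule, the `Card` class, and the `review` function are all assumptions made for the example.

```python
from dataclasses import dataclass


@dataclass
class Card:
    """Hypothetical flashcard record (not Memgrain's data model)."""
    front: str
    back: str
    interval_days: int = 1  # days until the next review


def review(card: Card, remembered: bool) -> int:
    """Update and return the card's next review interval.

    Assumed rule: double the interval after a successful recall,
    reset it to one day after a failed recall.
    """
    card.interval_days = card.interval_days * 2 if remembered else 1
    return card.interval_days


card = Card(front="mitochondria", back="powerhouse of the cell")
review(card, remembered=True)   # interval grows to 2 days
review(card, remembered=False)  # interval resets to 1 day
```

Real schedulers usually also track a per-card difficulty factor; the fixed doubling here only shows the general idea of spacing reviews further apart as recall succeeds.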

EasySpeak

EasySpeak is an AI-powered teleprompter app that helps you deliver speeches and presentations with confidence. With its advanced features, you can record professional-quality videos, generate captivating scripts, and share your content seamlessly. Whether you're a public speaker, educator, or business professional, EasySpeak empowers you to connect with your audience and make a lasting impact.

Flot AI

Flot AI is an AI-powered writing, reading, and memorization tool that seamlessly integrates into your daily workflow. It is backed by OpenAI and designed to assist users across various apps and websites. With features like AI memory, grammar correction, composing drafts, and expert prompts, Flot AI aims to enhance users' productivity and creativity. The application supports over 200 languages and offers a universal solution for writing and memory tasks at a competitive price point.

Tala

Tala is an AI-powered language tutor designed for hands-on learners. It encourages free-flowing conversation early in the learning journey, focusing on natural language acquisition rather than rote memorization. With advanced speech recognition technology, Tala helps users build confidence in speaking and offers a flexible learning experience with adjustable listening speeds and easy access to look-up tools. The platform aims to make language learning engaging and immersive, allowing users to practice without fear of embarrassment and improve their pronunciation through interactive conversations.

Neural Consult

Neural Consult is a cutting-edge AI-powered medical education platform that offers personalized learning tools to empower medical students. The platform provides unlimited board questions, Anki cards, clinical simulations, and more, tailored to individual study needs. With features like question generation, lecture summaries, differential diagnosis, and AI-powered case simulations, Neural Consult revolutionizes the way medical students learn and prepare for exams. Trusted by students at top medical schools, Neural Consult aims to enhance clinical reasoning, memorization skills, and overall learning efficiency through innovative AI technology.

NewWord

NewWord is an AI-powered language learning tool designed to help users memorize and expand their vocabulary efficiently. It offers innovative features such as personalized word management solutions, AI-powered insights, and a unique traceability system. The application aims to make language learning more convenient and enjoyable by providing diversified review strategies and daily reminders. NewWord is suitable for individuals looking to enhance their linguistic skills through practical exercises and scenario-based learning modules.

20 - Open Source Tools

Awesome-LLM-Long-Context-Modeling

This repository includes papers and blogs about Efficient Transformers, Length Extrapolation, Long Term Memory, Retrieval Augmented Generation (RAG), and Evaluation for Long Context Modeling.

mimir

MIMIR is a Python package designed for measuring memorization in Large Language Models (LLMs). It provides functionalities for conducting experiments related to membership inference attacks on LLMs. The package includes implementations of various attacks such as Likelihood, Reference-based, Zlib Entropy, Neighborhood, Min-K% Prob, Min-K%++, Gradient Norm, and allows users to extend it by adding their own datasets and attacks.
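Two of the listed attacks reduce to simple statistics over a model's per-token log-probabilities. The sketch below is not MIMIR's API: the function names `min_k_prob_score` and `zlib_ratio_score` are hypothetical, and the log-probabilities are assumed to have been obtained from a model beforehand.

```python
import zlib


def min_k_prob_score(token_logprobs, k=0.2):
    """Mean of the lowest k fraction of token log-probabilities.

    A higher (less negative) score is usually read as weak evidence
    that the text was seen during training.
    """
    if not token_logprobs:
        raise ValueError("token_logprobs must not be empty")
    n = max(1, int(len(token_logprobs) * k))
    lowest = sorted(token_logprobs)[:n]
    return sum(lowest) / n


def zlib_ratio_score(text, token_logprobs):
    """Total negative log-likelihood divided by zlib-compressed size in bytes.

    Normalizing by compressed size discounts text that is easy to
    predict simply because it is repetitive.
    """
    compressed_len = len(zlib.compress(text.encode("utf-8")))
    return -sum(token_logprobs) / compressed_len


# Made-up log-probabilities for a five-token text (illustrative only).
logprobs = [-0.1, -2.0, -0.5, -3.0, -0.2]
score = min_k_prob_score(logprobs, k=0.4)
ratio = zlib_ratio_score("the quick brown fox jumps", logprobs)
```

In practice these scores are compared against a threshold calibrated on known members and non-members; the threshold choice is outside this sketch.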

llm-finetuning

llm-finetuning is a repository that adds a serverless twist to the popular axolotl fine-tuning library using Modal's serverless infrastructure. It allows users to quickly fine-tune any LLM with state-of-the-art optimizations such as DeepSpeed ZeRO, LoRA adapters, Flash Attention, and gradient checkpointing. The repository simplifies fine-tuning by not exposing all CLI arguments; instead, users specify options in a config file. It supports efficient training and scaling across multiple GPUs, making it suitable for production-ready fine-tuning jobs.

llmops-duke-aipi

LLMOps Duke AIPI is a course on operationalizing Large Language Models. It teaches methodologies for developing LLM applications using software development best practices. Topics include generative AI concepts, setting up development environments, interacting with large language models, using local large language models, applied solutions with LLMs, extensibility using plugins and functions, retrieval augmented generation, an introduction to Python web frameworks for APIs, DevOps principles, deploying machine learning APIs, LLM platforms, and final presentations. Students learn to build, share, and present portfolios using GitHub, YouTube, and LinkedIn, and to develop non-linear, life-long learning skills. Prerequisites include basic Linux and programming skills, with coursework available in Python or Rust. Additional resources and references are provided for further learning and exploration.

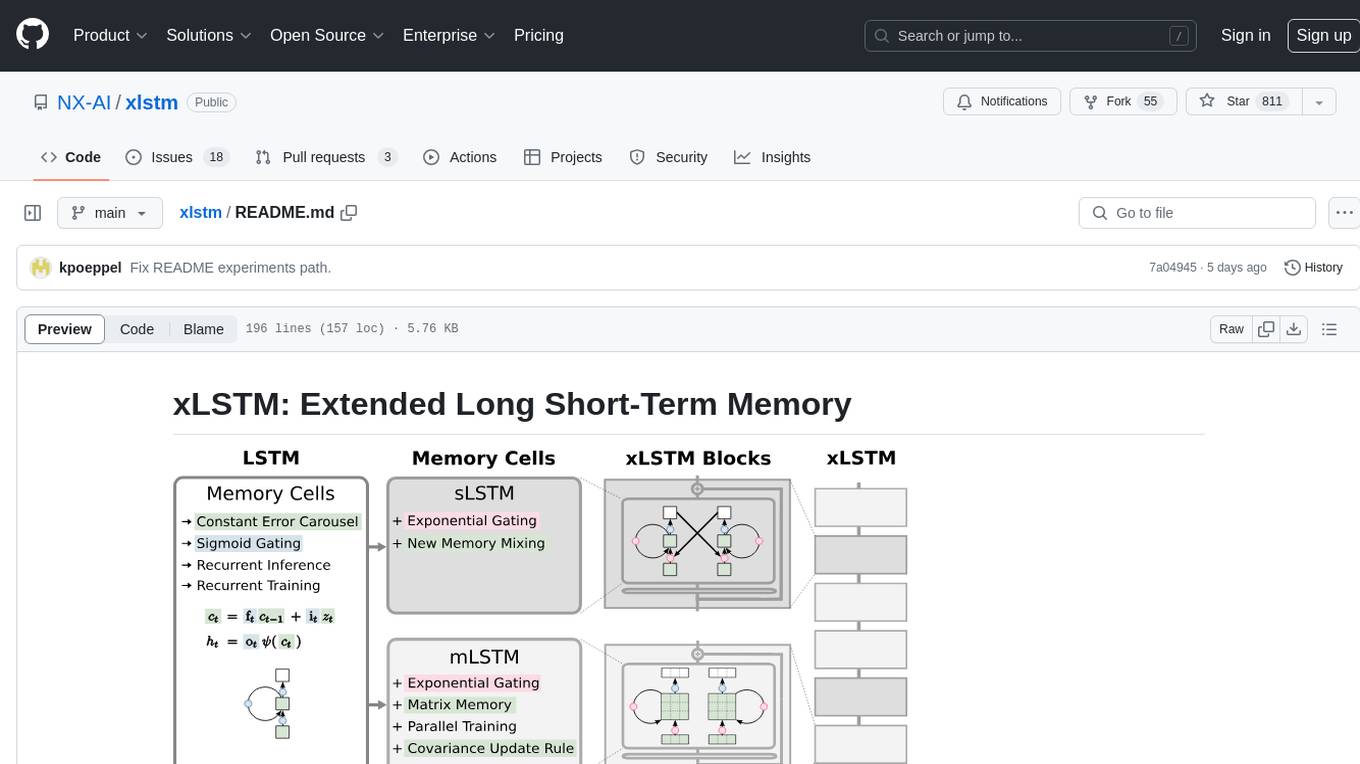

xlstm

xLSTM is a new Recurrent Neural Network architecture based on ideas of the original LSTM. Through Exponential Gating with appropriate normalization and stabilization techniques and a new Matrix Memory it overcomes the limitations of the original LSTM and shows promising performance on Language Modeling when compared to Transformers or State Space Models. The package is based on PyTorch and was tested for versions >=1.8. For the CUDA version of xLSTM, you need Compute Capability >= 8.0. The xLSTM tool provides two main components: xLSTMBlockStack for non-language applications or integrating in other architectures, and xLSTMLMModel for language modeling or other token-based applications.

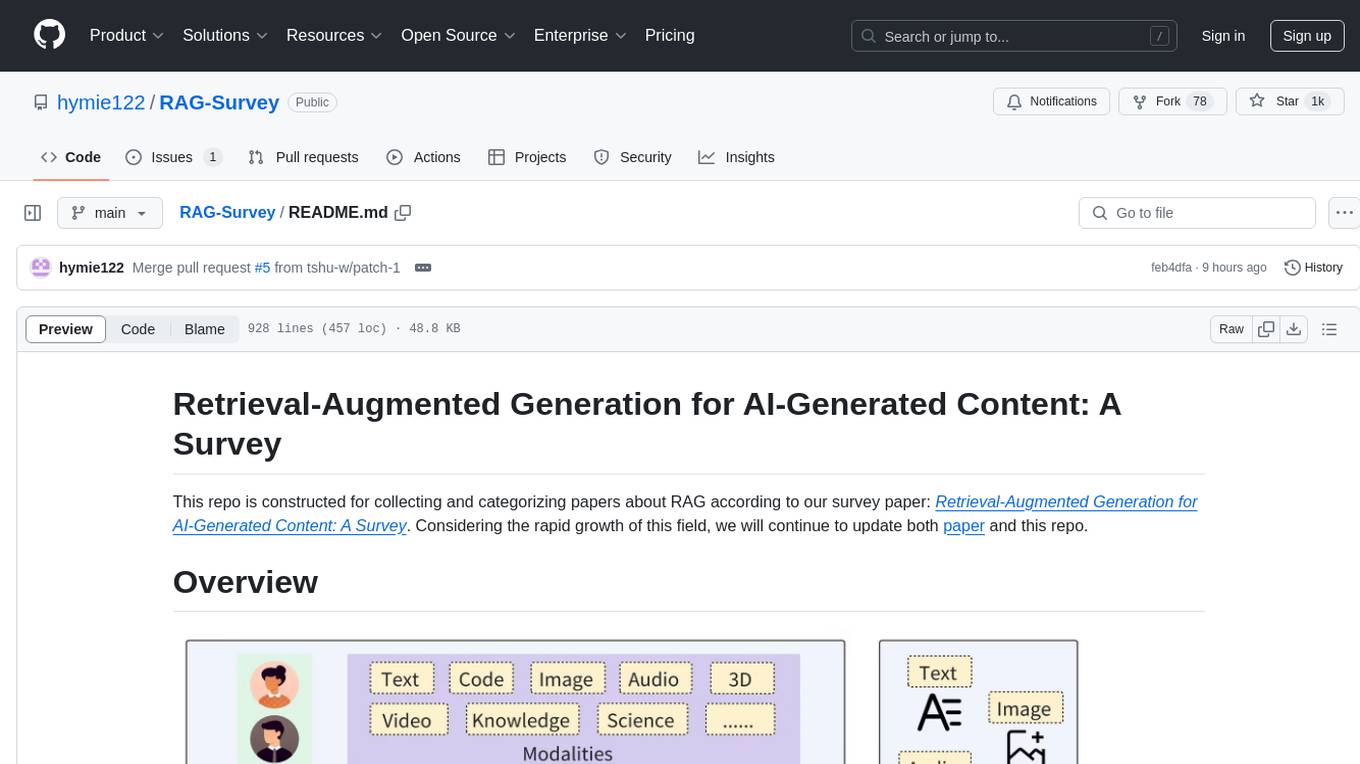

RAG-Survey

This repository is dedicated to collecting and categorizing papers related to Retrieval-Augmented Generation (RAG) for AI-generated content. It serves as a survey repository based on the paper 'Retrieval-Augmented Generation for AI-Generated Content: A Survey'. The repository is continuously updated to keep up with the rapid growth in the field of RAG.

RWKV-LM

RWKV is an RNN with Transformer-level LLM performance that can also be trained directly like a GPT transformer (parallelizable), and it is 100% attention-free. You only need the hidden state at position t to compute the state at position t+1, and you can use the "GPT" mode to quickly compute the hidden state for the "RNN" mode. It therefore combines the best of RNNs and transformers: great performance, fast inference, low VRAM use, fast training, "infinite" ctx_len, and free sentence embedding (using the final hidden state).
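The relationship between the two modes can be illustrated with a toy scalar recurrence. This is not RWKV's actual time-mixing formula: the fixed `decay` parameter and the function names `rnn_mode` and `gpt_mode` are assumptions chosen to show that a sequential update and a position-wise closed form can yield the same states.

```python
def rnn_mode(xs, decay=0.9):
    """Sequential update: each state needs only the previous state and the current input."""
    state = 0.0
    states = []
    for x in xs:
        state = decay * state + x
        states.append(state)
    return states


def gpt_mode(xs, decay=0.9):
    """Closed form: state_t = sum over j <= t of decay**(t - j) * x_j.

    Every position is computed independently from the full sequence,
    so the work could be done in parallel.
    """
    return [
        sum(decay ** (t - j) * xs[j] for j in range(t + 1))
        for t in range(len(xs))
    ]


inputs = [1.0, 2.0, 3.0]
sequential = rnn_mode(inputs)
parallel = gpt_mode(inputs)
# Both modes agree up to floating-point rounding.
assert all(abs(a - b) < 1e-9 for a, b in zip(sequential, parallel))
```

The sequential form keeps constant memory per step, which is why inference is cheap, while the closed form suits training over whole sequences at once.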

LLM4SE

The collection is actively updated with the help of an internal literature search engine.

Awesome-Code-LLM


prompt-in-context-learning

An open-source engineering guide for prompt-in-context-learning from EgoAlpha Lab, offering daily-updated resources for in-context learning and prompt engineering. The resources include: Papers, the latest papers on in-context learning, prompt engineering, agents, and foundation models; Playground, large language models (LLMs) that enable prompt experimentation; Prompt Engineering, prompt techniques for leveraging large language models; ChatGPT Prompt, prompt examples that can be applied in work and daily life; and LLMs Usage Guide, a method for getting started quickly with large language models using LangChain. The guide is motivated by the view that, as Artificial General Intelligence (AGI) approaches, there will likely be two types of people: those who enhance their abilities through the use of AIGC, and those whose jobs are replaced by AI automation.

Awesome-LLM-Safety

Welcome to our Awesome-llm-safety repository! We've curated a collection of the latest, most comprehensive, and most valuable resources on large language model safety (llm-safety). But we don't stop there; included are also relevant talks, tutorials, conferences, news, and articles. Our repository is constantly updated to ensure you have the most current information at your fingertips.

ai_all_resources

This repository is a compilation of excellent ML and DL tutorials created by various individuals and organizations. It covers a wide range of topics, including machine learning fundamentals, deep learning, computer vision, natural language processing, reinforcement learning, and more. The resources are organized into categories, making it easy to find the information you need. Whether you're a beginner or an experienced practitioner, you're sure to find something valuable in this repository.

Awesome-LLM-Tabular

This repository is a curated list of research papers that explore the integration of Large Language Model (LLM) technology with tabular data. It aims to provide a comprehensive resource for researchers and practitioners interested in this emerging field. The repository includes papers on a wide range of topics, including table-to-text generation, table question answering, and tabular data classification. It also includes a section on related datasets and resources.

awesome-generative-ai

A curated list of Generative AI projects, tools, artworks, and models

awesome-generative-information-retrieval

This repository contains a curated list of resources on generative information retrieval, including research papers, datasets, tools, and applications. Generative information retrieval is a subfield of information retrieval that uses generative models to generate new documents or passages of text that are relevant to a given query. This can be useful for a variety of tasks, such as question answering, summarization, and document generation. The resources in this repository are intended to help researchers and practitioners stay up-to-date on the latest advances in generative information retrieval.

Awesome-LLM-Interpretability

Awesome-LLM-Interpretability is a curated list of materials related to LLM (Large Language Models) interpretability, covering tutorials, code libraries, surveys, videos, papers, and blogs. It includes resources on transformer mechanistic interpretability, visualization, interventions, probing, fine-tuning, feature representation, learning dynamics, knowledge editing, hallucination detection, and redundancy analysis. The repository aims to provide a comprehensive overview of tools, techniques, and methods for understanding and interpreting the inner workings of large language models.

3 - OpenAI GPTs

MediCards Creator

Creates Anki cards for UK MBBS students, with optimized and accurate content.