AI tools for docker-aio

Related Tools:

Duckietown

Duckietown is a platform for delivering cutting-edge robotics and AI learning experiences. It offers teaching resources to instructors, hands-on activities to learners, an accessible research platform to researchers, and a state-of-the-art ecosystem for professional training. Duckietown's mission is to make robotics and AI education state-of-the-art, hands-on, and accessible to all.

Kapa.ai

Kapa.ai is an AI tool that transforms technical documentation and knowledge bases into a reliable, LLM-powered AI assistant. It provides precise, context-specific answers, identifies documentation gaps, and integrates effortlessly with various platforms. Trusted by leading companies, Kapa.ai is designed for organizations with technical products, offering accuracy, rapid deployment features, and enterprise-grade security.

GrapixAI

GrapixAI is a leading provider of low-cost cloud GPU rental services and AI server solutions. The company's focus on flexibility, scalability, and cutting-edge technology enables a variety of AI applications in both local and cloud environments. GrapixAI offers the lowest prices for on-demand GPUs such as RTX4090, RTX 3090, RTX A6000, RTX A5000, and A40. The platform provides Docker-based container ecosystem for quick software setup, powerful GPU search console, customizable pricing options, various security levels, GUI and CLI interfaces, real-time bidding system, and personalized customer support.

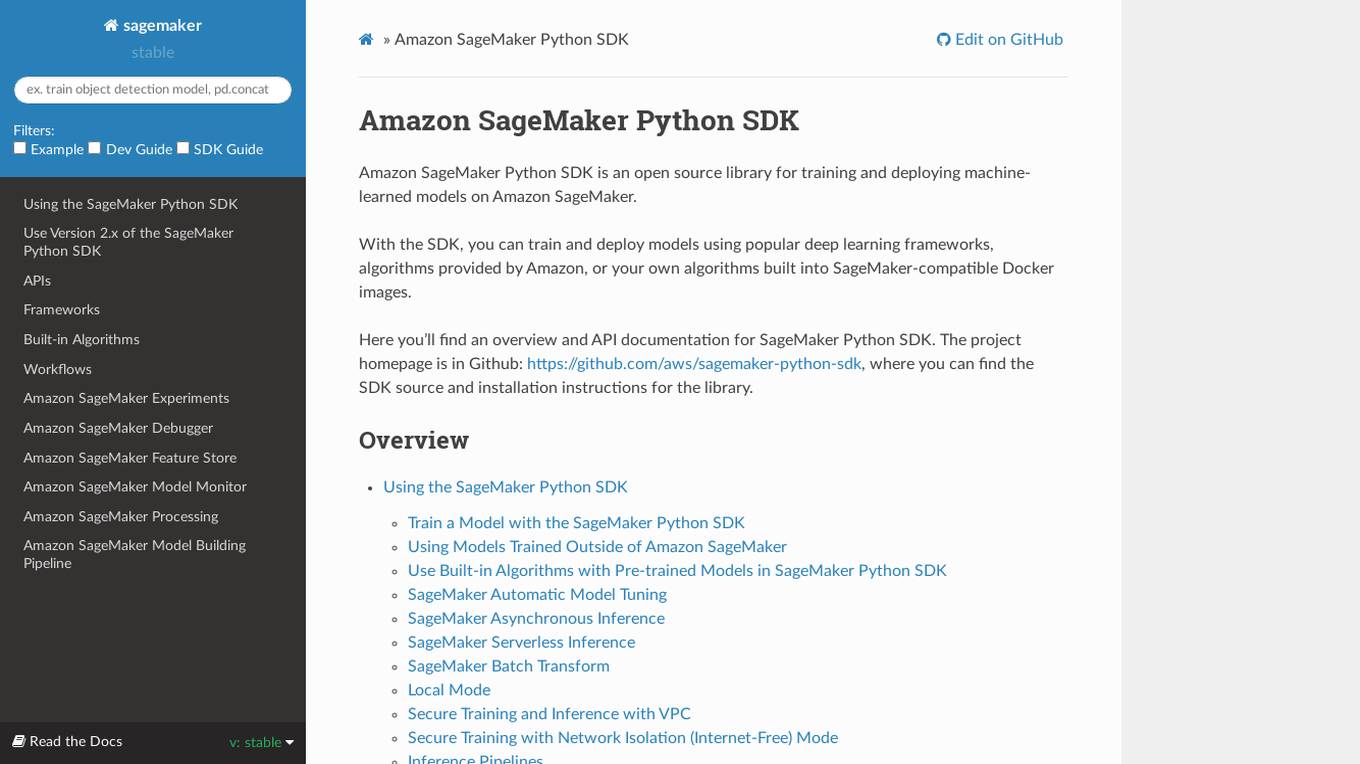

Amazon SageMaker Python SDK

Amazon SageMaker Python SDK is an open source library for training and deploying machine-learned models on Amazon SageMaker. With the SDK, you can train and deploy models using popular deep learning frameworks, algorithms provided by Amazon, or your own algorithms built into SageMaker-compatible Docker images.

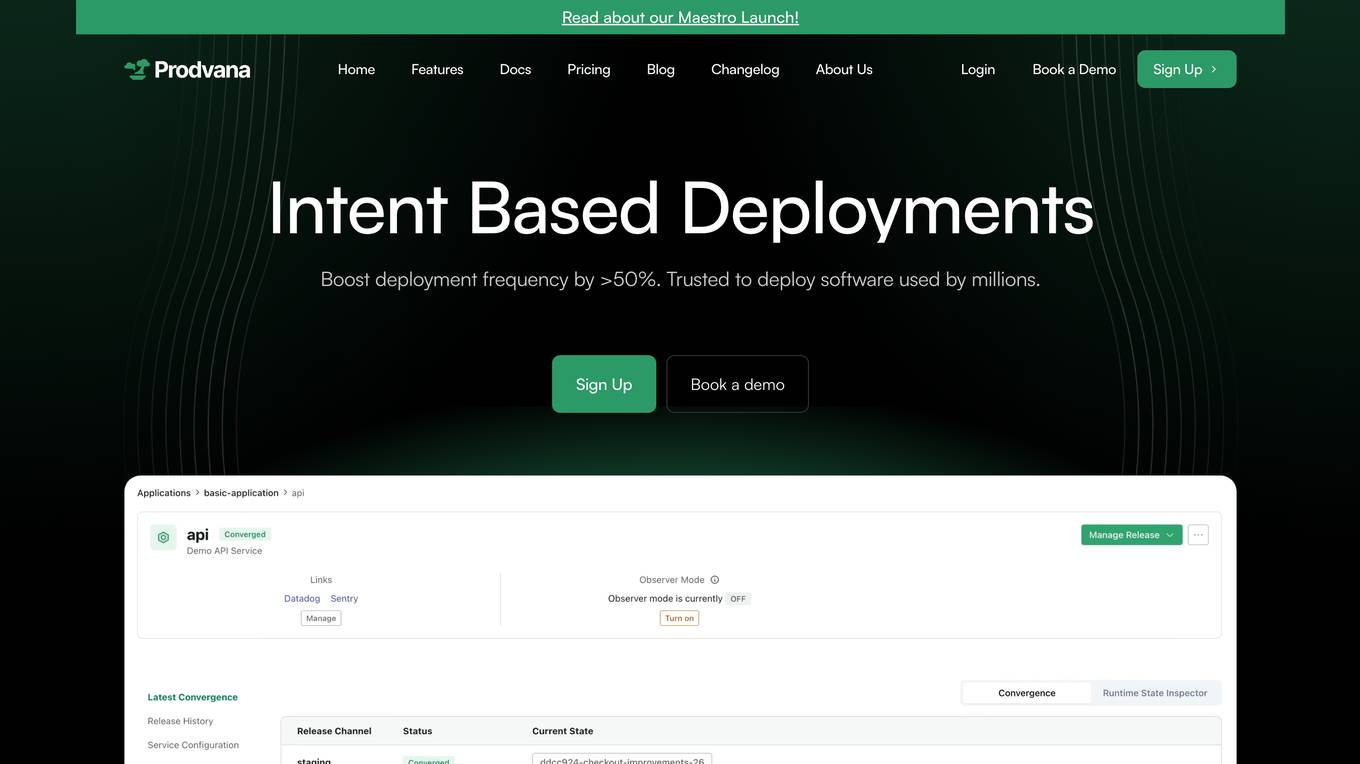

Prodvana

Prodvana is an intelligent deployment platform that helps businesses automate and streamline their software deployment process. It provides a variety of features to help businesses improve the speed, reliability, and security of their deployments. Prodvana is a cloud-based platform that can be used with any type of infrastructure, including on-premises, hybrid, and multi-cloud environments. It is also compatible with a wide range of DevOps tools and technologies. Prodvana's key features include: Intent-based deployments: Prodvana uses intent-based deployment technology to automate the deployment process. This means that businesses can simply specify their deployment goals, and Prodvana will automatically generate and execute the necessary steps to achieve those goals. This can save businesses a significant amount of time and effort. Guardrails for deployments: Prodvana provides a variety of guardrails to help businesses ensure the security and reliability of their deployments. These guardrails include approvals, database validations, automatic deployment validation, and simple interfaces to add custom guardrails. This helps businesses to prevent errors and reduce the risk of outages. Frictionless DevEx: Prodvana provides a frictionless developer experience by tracking commits through the infrastructure, ensuring complete visibility beyond just Docker images. This helps developers to quickly identify and resolve issues, and it also makes it easier to collaborate with other team members. Intelligence with Clairvoyance: Prodvana's Clairvoyance feature provides businesses with insights into the impact of their deployments before they are executed. This helps businesses to make more informed decisions about their deployments and to avoid potential problems. Easy integrations: Prodvana integrates seamlessly with a variety of DevOps tools and technologies. This makes it easy for businesses to use Prodvana with their existing workflows and processes.

Landing AI

Landing AI is a computer vision platform and AI software company that provides a cloud-based platform for building and deploying computer vision applications. The platform includes a library of pre-trained models, a set of tools for data labeling and model training, and a deployment service that allows users to deploy their models to the cloud or edge devices. Landing AI's platform is used by a variety of industries, including automotive, electronics, food and beverage, medical devices, life sciences, agriculture, manufacturing, infrastructure, and pharma.

Lokal.so

Lokal.so is an AI-powered tool designed to supercharge your localhost development experience. It offers features like sharing your localhost with the public, debugging incoming requests, and developing with the assistance of an AI assistant. With Lokal.so, you can leverage Cloudflare's network for faster site delivery, use a built-in S3 server for easy file debugging, and automatically convert JSON payloads into different programming language models. The tool aims to simplify local development by providing a self-hosted tunnel server, unlimited .local domain access, and endpoint management with memorable names.

Docker and Docker Swarm Assistant

Expert in Docker and Docker Swarm solutions and troubleshooting.

The Dock - Your Docker Assistant

Technical assistant specializing in Docker and Docker Compose. Lets Debug !

rosGPT

Learn ROS/ROS2 at any level, from beginner to expert with rosGPT - and build Docker containers to test your robot anywhere.

The Dorker

I help create precise Google Dork search strings using advanced search operators.

docker-aio

The docker-aio repository provides an accelerated mirror service for Docker users, allowing them to speed up image pulls by replacing original domains with corresponding accelerated domains. Users in Asia are advised to comply with local laws and regulations when using this service. The repository offers installation scripts and instructions on how to modify Docker configurations to utilize the accelerated mirrors effectively.

OpenDAN-Personal-AI-OS

OpenDAN is an open source Personal AI OS that consolidates various AI modules for personal use. It empowers users to create powerful AI agents like assistants, tutors, and companions. The OS allows agents to collaborate, integrate with services, and control smart devices. OpenDAN offers features like rapid installation, AI agent customization, connectivity via Telegram/Email, building a local knowledge base, distributed AI computing, and more. It aims to simplify life by putting AI in users' hands. The project is in early stages with ongoing development and future plans for user and kernel mode separation, home IoT device control, and an official OpenDAN SDK release.

aiodocker

Aiodocker is a simple Docker HTTP API wrapper written with asyncio and aiohttp. It provides asynchronous bindings for interacting with Docker containers and images. Users can easily manage Docker resources using async functions and methods. The library offers features such as listing images and containers, creating and running containers, and accessing container logs. Aiodocker is designed to work seamlessly with Python's asyncio framework, making it suitable for building asynchronous Docker management applications.

ztncui-aio

This repository contains a Docker image with ZeroTier One and ztncui to set up a standalone ZeroTier network controller with a web user interface. It provides features like Golang auto-mkworld for generating a planet file, supports local persistent storage configuration, and includes a public file server. Users can build the Docker image, set up the container with specific environment variables, and manage the ZeroTier network controller through the web interface.

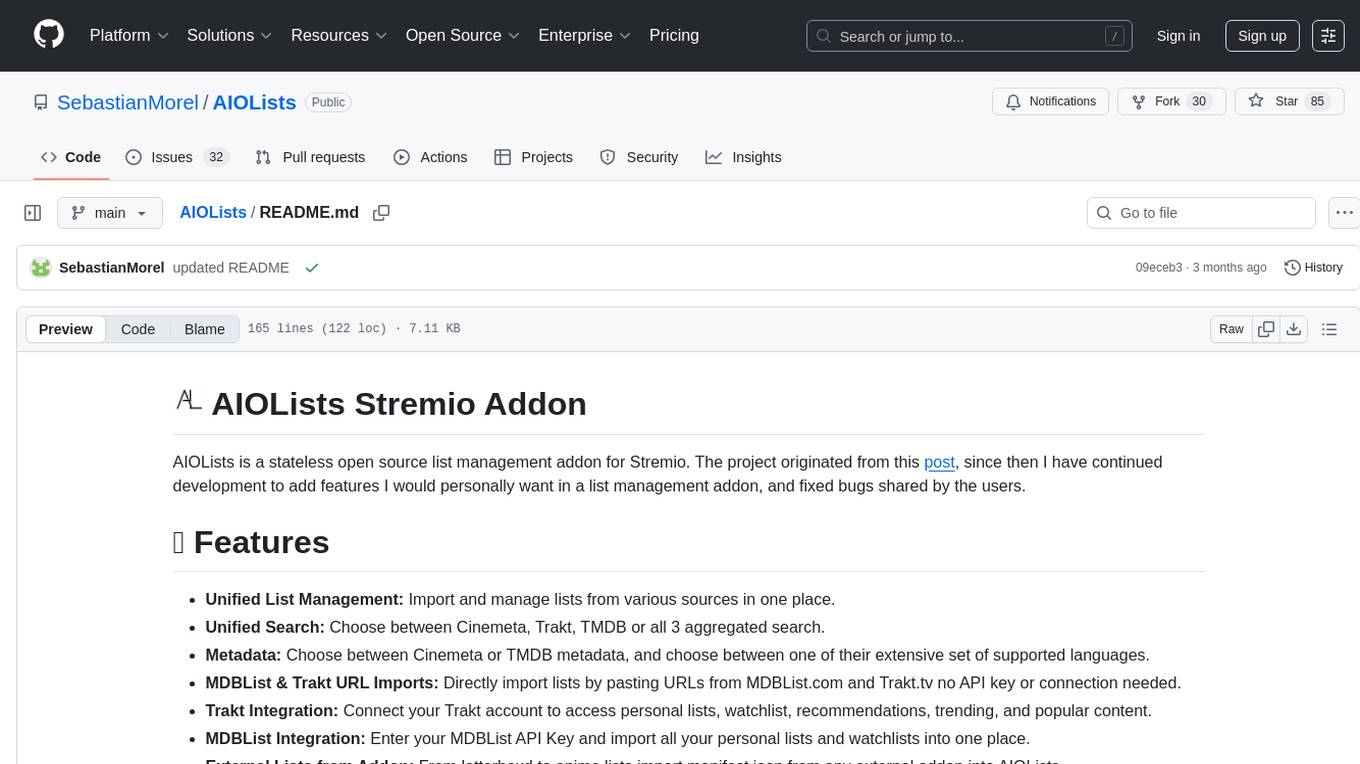

AIOStreams

AIOStreams is a versatile tool that combines streams from various addons into one platform, offering extensive customization options. Users can change result formats, filter results by various criteria, remove duplicates, prioritize services, sort results, specify size limits, and more. The tool scrapes results from selected addons, applies user configurations, and presents the results in a unified manner. It simplifies the process of finding and accessing desired content from multiple sources, enhancing user experience and efficiency.

aiogram-django-template

Aiogram & Django API Template is a robust and secure Django template with advanced features like Docker integration, Celery for asynchronous tasks, Sentry for error tracking, Django Rest Framework for building APIs, and more. It provides scalability options, up-to-date dependencies, and integration with AWS S3 for storage. The template includes configuration guides for secrets, ports, performance tuning, application settings, CORS and CSRF settings, and database configuration. Security, scalability, and monitoring are emphasized for efficient Django API development.

aiogram_bot_template

This repository provides a template for easy asynchronous bot development for Telegram using Aiogram library. It includes setup instructions using Docker Compose, migration commands for TortoiseORM, and examples for working with TortoiseORM. The template aims to simplify the process of creating Telegram bots with features like FastAPI integration and Docker Compose setup. The repository also includes a list of tasks to be completed, such as changing SQLAlchemy to TortoiseORM, adding FastAPI for webhook, and setting up CI/CD examples.

aiogram_bot_template

Aiogram bot template is a boilerplate for creating Telegram bots using Aiogram framework. It provides a solid foundation for building robust and scalable bots with a focus on code organization, database integration, and localization.

AiogramShopBot

AiogramShopBot is a software product based on Aiogram3 and SQLAlchemy that allows you to automate sales of digital goods in Telegram. One of the bot's advantages is that AiogramShopBot implements the ability to top up with Bitcoin, Litecoin, Solana and stablecoins in the TRC20 and ERC20 networks, which allows you to sell digital goods worldwide. The bot provides features for user registration, balance top-up, purchase of goods, purchase history, admin functionalities like announcements, inventory management, user management, analytics & reports, and multibot functionality. It supports encryption via SQLCipher, multiple cryptocurrencies, and offers a user-friendly interface for managing sales and transactions.

aiops-modules

AIOps Modules is a collection of reusable Infrastructure as Code (IAC) modules that work with SeedFarmer CLI. The modules are decoupled and can be aggregated using GitOps principles to achieve desired use cases, removing heavy lifting for end users. They must be generic for reuse in Machine Learning and Foundation Model Operations domain, adhering to SeedFarmer Guide structure. The repository includes deployment steps, project manifests, and various modules for SageMaker, Mlflow, FMOps/LLMOps, MWAA, Step Functions, EKS, and example use cases. It also supports Industry Data Framework (IDF) and Autonomous Driving Data Framework (ADDF) Modules.

AIO

AIO is a comprehensive guide for setting up a home All-in-One server, enabling users to create an enterprise-level virtualized environment at home. It allows running multiple operating systems simultaneously, achieving public network access, optimizing hardware performance, and reducing IT costs. The guide includes detailed documentation for setting up from scratch and requires a computer with virtualization support, VMware ESXi installation media, and basic network configuration knowledge.

sandbox

AIO Sandbox is an all-in-one agent sandbox environment that combines Browser, Shell, File, MCP operations, and VSCode Server in a single Docker container. It provides a unified, secure execution environment for AI agents and developers, with features like unified file system, multiple interfaces, secure execution, zero configuration, and agent-ready MCP-compatible APIs. The tool allows users to run shell commands, perform file operations, automate browser tasks, and integrate with various development tools and services.

ai-assisted-devops

AI-Assisted DevOps is a 10-day course focusing on integrating artificial intelligence (AI) technologies into DevOps practices. The course covers various topics such as AI for observability, incident response, CI/CD pipeline optimization, security, and FinOps. Participants will learn about running large language models (LLMs) locally, making API calls, AI-powered shell scripting, and using AI agents for self-healing infrastructure. Hands-on activities include creating GitHub repositories with bash scripts, generating Docker manifests, predicting server failures using AI, and building AI agents to monitor deployments. The course culminates in a capstone project where learners implement AI-assisted DevOps automation and receive peer feedback on their projects.

anything-llm

AnythingLLM is a full-stack application that enables you to turn any document, resource, or piece of content into context that any LLM can use as references during chatting. This application allows you to pick and choose which LLM or Vector Database you want to use as well as supporting multi-user management and permissions.

AI-CloudOps

AI+CloudOps is a cloud-native operations management platform designed for enterprises. It aims to integrate artificial intelligence technology with cloud-native practices to significantly improve the efficiency and level of operations work. The platform offers features such as AIOps for monitoring data analysis and alerts, multi-dimensional permission management, visual CMDB for resource management, efficient ticketing system, deep integration with Prometheus for real-time monitoring, and unified Kubernetes management for cluster optimization.