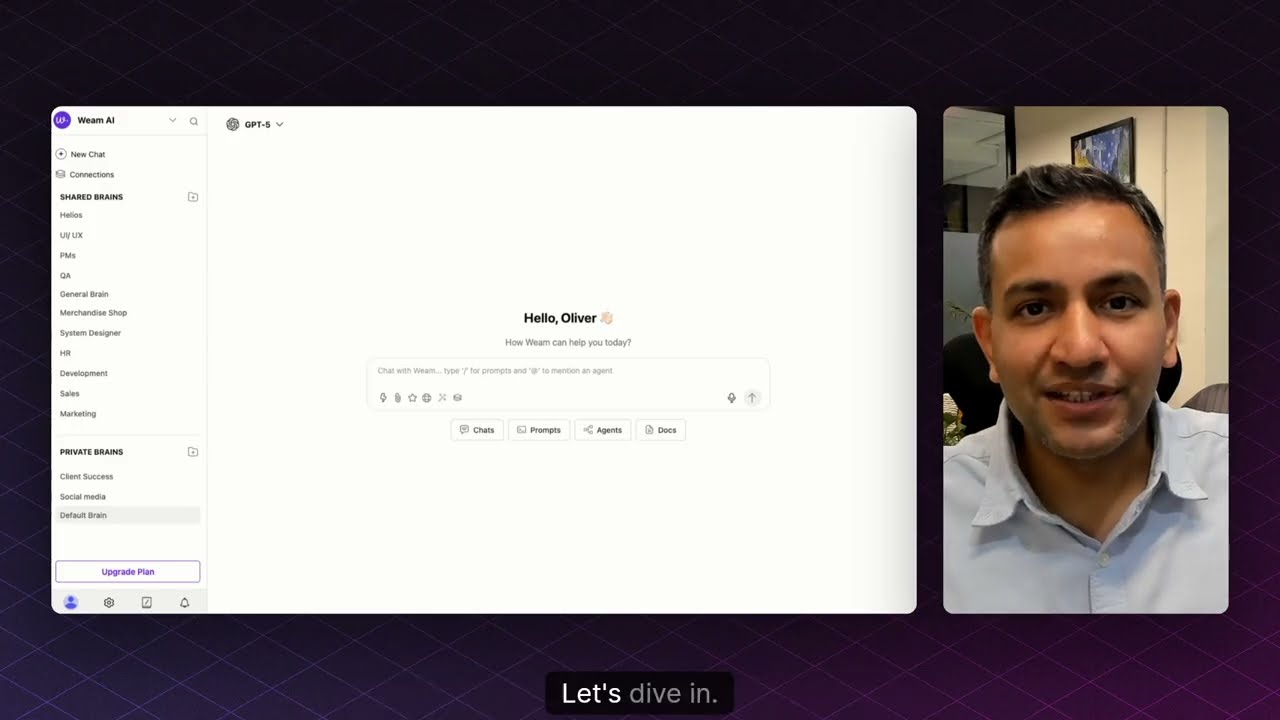

weam

Web app for teams of 20+ members. Built-in connections to major LLMs via API. Share chats, prompts, and agents in team or private folders. Modern, fully responsive stack (Next.js, Node.js). Deploy your own vibe-coded AI apps, agents, or workflows, or use ready-made solutions from the library.

Stars: 132

Weam is an open source platform designed to help teams systematically adopt AI. It provides a production-ready stack with Next.js frontend and Node.js/Python backend, allowing for immediate deployment and use. Weam connects to major LLM providers, enabling easy access to the latest AI models. The platform organizes AI interactions into 'Brains' for different departments, offering customization and expansion options. Features include chat system, productivity tools, sharing & access controls, prompt library, AI agents, RAG, MCP, enterprise features, pre-built automations, and upcoming AI app solutions. Weam is free, open source, and scalable to meet growing needs.

README:

LLMs, Agents, AI Apps, and Team—In One Place, Ready to Use and Expand

Weam is an open source platform that helps teams adopt AI systematically. It includes a complete, production-ready stack with a Next.js frontend and a Node.js backend, ready to deploy and use immediately.

System Requirements: CPU with 4+ cores, 8GB+ RAM. Professional installation support available for non-technical teams.

Getting Started: Simply download or fork the repository and self-host it on your infrastructure.

Why Weam?

Modern teams need easy access to the latest AI models. Weam connects to all major LLM providers out of the box: just add your API keys and you're ready to go. Think of it as ChatGPT built specifically for teams.

All your organization's AI interactions live in one centralized platform, organized into "Brains" (intelligent folders). These Brains contain not just chat histories, but also your custom prompts and AI agents. You can structure Brains around your departments - Marketing, Sales, Engineering, Support - whatever fits your organization.

But we didn't stop at chat. Weam is built to grow with your needs. Customize existing features or add entirely new AI applications. With the rise of AI-powered development tools like Lovable and Cursor, your team can rapidly build custom AI apps. Instead of scattering deployments, bring these apps into Weam, where your entire team already works. We're building a library of open source AI apps you can use as-is, customize, or use as inspiration for your own.

Bottom line: You get a production-grade AI platform that's ready to deploy today and can expand as your needs grow. Completely free and open source.

Visit our comprehensive documentation for:

- Step-by-step installation guides

- Video tutorials for all features

- Technical architecture details

- API references and more

Chat System

- Multi-LLM Support: OpenAI, Anthropic, Gemini, Llama, Perplexity, DeepSeek, OpenRouter, Hugging Face, Grok, and more

- Intelligent Context: Full conversation history maintains coherent, context-aware discussions

- Local Model Support: Connect to self-hosted models (coming soon)

- Conversation Management: Fork chats to explore different directions

Productivity Tools

- Voice Input: Speak instead of type with built-in voice-to-text

- Web Scraping: Extract content from any URL to provide context to your AI

- Web Search: Real-time information retrieval during conversations

Sharing & Access Controls

- Team Collaboration: Multiple team members can work together in the same chat

- Threaded Comments: Slack-style commenting for organized discussions

- Team Management: Add or remove team members from specific chats

- Public Sharing: Generate shareable links for external collaboration

Prompt Library

- Custom Prompts: Create and save prompts for repeated use

- Team Library: Build a shared repository of proven prompts

- Template Collection: Access pre-built prompts for common tasks

- Smart Generation: Auto-create prompts by scraping websites

- Organization: Tag and favorite prompts for quick access

- Quick Access: Type "/" in any chat to instantly access your prompts

- Prompt Enhancement: AI-powered feature that transforms basic queries into comprehensive prompts

- Real Example: Input a website URL and basic information → receive a complete prompt with website summary and context

AI Agents

- Custom Instructions: Define specific behaviors and knowledge for each agent

- Knowledge Base: Upload documents to create specialized agents

- Model Selection: Choose the optimal AI model for each agent's purpose

- MCP Integration: Model Context Protocol support coming soon

- Quick Deployment: Access any agent instantly by typing "@" in chat

- Persistent Sessions: Selected agents remain active throughout conversations

RAG (Retrieval-Augmented Generation)

- Complete Pipeline: Built-in document processing system

- Smart Processing: Documents are automatically chunked, embedded, and vectorized

- Intelligent Retrieval: User queries trigger semantic search across document chunks

- Context Injection: Relevant information is seamlessly provided to the LLM

- Agent Integration: RAG pipeline automatically processes documents uploaded to agents
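The pipeline described above (chunk, embed, retrieve, inject) can be illustrated with a small TypeScript sketch. This is not Weam's implementation: every function name here is hypothetical, and the `embed` function is a bag-of-words stand-in for the embedding model a real deployment would call. It only shows how the four stages fit together.

```typescript
// Toy RAG sketch: chunk -> embed -> retrieve -> inject.
// All names are hypothetical; this is not Weam's actual code.

type Vector = Record<string, number>;
type Chunk = { text: string; vector: Vector };

// Split a document into chunks of at most `size` words.
function chunkText(doc: string, size: number): string[] {
  const words = doc.split(/\s+/).filter((w) => w.length > 0);
  const chunks: string[] = [];
  for (let i = 0; i < words.length; i += size) {
    chunks.push(words.slice(i, i + size).join(" "));
  }
  return chunks;
}

// Stand-in "embedding": term counts per lowercase token.
// A real pipeline would call an embedding model here instead.
function embed(text: string): Vector {
  const vector: Vector = Object.create(null);
  const tokens = text.toLowerCase().match(/[a-z0-9]+/g) || [];
  for (let i = 0; i < tokens.length; i++) {
    vector[tokens[i]] = (vector[tokens[i]] || 0) + 1;
  }
  return vector;
}

// Euclidean length of a sparse vector.
function norm(v: Vector): number {
  let sum = 0;
  for (const key of Object.keys(v)) sum += v[key] * v[key];
  return Math.sqrt(sum);
}

// Cosine similarity between two sparse vectors (0 when either is empty).
function cosine(a: Vector, b: Vector): number {
  let dot = 0;
  for (const key of Object.keys(a)) dot += a[key] * (b[key] || 0);
  const denom = norm(a) * norm(b);
  return denom === 0 ? 0 : dot / denom;
}

// Chunk a document and store each chunk with its vector.
function indexDocument(doc: string, size: number = 8): Chunk[] {
  return chunkText(doc, size).map((text) => ({ text, vector: embed(text) }));
}

// Return the text of the k chunks most similar to the query.
function retrieve(index: Chunk[], query: string, k: number = 1): string[] {
  const q = embed(query);
  return index
    .map((chunk) => ({ chunk, score: cosine(q, chunk.vector) }))
    .sort((x, y) => y.score - x.score)
    .slice(0, k)
    .map((entry) => entry.chunk.text);
}

// Inject the retrieved chunks into the prompt sent to the LLM.
function buildPrompt(index: Chunk[], question: string, k: number = 1): string {
  const context = retrieve(index, question, k).join("\n");
  return "Context:\n" + context + "\n\nQuestion: " + question;
}
```

Calling `indexDocument` once per uploaded document and `buildPrompt` once per user query mirrors the split between the processing and retrieval steps listed above. Swapping `embed` for a real embedding call and the in-memory array for a vector store would leave the overall shape unchanged.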

MCP (Model Context Protocol)

- Growing Integrations: Gmail, Slack, Google Drive, and more being added regularly

- Developer Friendly: Follow our documentation to add your own MCP connections

Enterprise Features

- Multi-Workspace: Create separate environments for different teams or projects

- Brain Organization: Shared folders for teams with private areas for individual work

- Access Control: Granular permissions for chats, prompts, and agents

- Usage Analytics: Admin dashboard showing team member activity

- Team Groups: Organize users for efficient permission management
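One way to picture how shared Brains, private areas, and team groups could interact is the TypeScript sketch below. The data model and the `canAccess` rule are assumptions made for illustration only (a private Brain is visible to its owner, a shared Brain to members of any group it is assigned to); Weam's actual permission model may differ.

```typescript
// Hypothetical access model; field names and rules are illustrative assumptions.

type User = { id: string; groupIds: string[] };

type Brain = {
  name: string;
  visibility: "shared" | "private";
  ownerId: string;
  groupIds: string[]; // groups a shared Brain is assigned to
};

// Private Brains: owner only. Shared Brains: any user in an assigned group.
function canAccess(user: User, brain: Brain): boolean {
  if (brain.visibility === "private") return brain.ownerId === user.id;
  return brain.groupIds.some((g) => user.groupIds.indexOf(g) !== -1);
}

// Names of the Brains a user is allowed to see, in their original order.
function visibleBrains(user: User, brains: Brain[]): string[] {
  return brains.filter((b) => canAccess(user, b)).map((b) => b.name);
}

// Sample data for illustration.
const sampleBrains: Brain[] = [
  { name: "Marketing", visibility: "shared", ownerId: "u1", groupIds: ["marketing"] },
  { name: "Engineering", visibility: "shared", ownerId: "u2", groupIds: ["engineering"] },
  { name: "Ana's drafts", visibility: "private", ownerId: "u1", groupIds: [] },
  { name: "Ben's notes", visibility: "private", ownerId: "u2", groupIds: [] },
];

const ana: User = { id: "u1", groupIds: ["marketing"] };
```

Assigning permissions to groups rather than to individual users keeps the check to a single membership test, which is the usual motivation for a "team groups" feature.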

Unlock powerful AI-driven tools to streamline your business operations and enhance productivity across various domains.

The AI Document Editor allows you to quickly create and edit documents using ready-made templates or by designing your own. With AI assistance built in, writing and formatting become faster and more intuitive.

👉 Check out the repository

The AI Recruiter enables you to build custom interviewers and generate interview links that can be shared with candidates. After completion, you receive detailed analytics on interview results to help with hiring decisions.

👉 Check out the repository

With the Landing Page Generator, you can automatically create high-converting landing page content by providing a website URL, Figma design, or a PDF. It extracts and structures content tailored for web use.

👉 Check out the repository

The SEO Content Generator helps you craft SEO-optimized blog posts and articles. It also supports sitemap audits and on-page SEO improvements to enhance your website’s visibility.

👉 Check out the repository

The AI Chatbot Builder lets you create RAG (Retrieval-Augmented Generation)-based AI chatbots that can be embedded directly into your website. It also provides conversation history and usage analytics for ongoing improvement.

👉 Check out the repository

git clone https://github.com/weam-ai/weam.git

cd weam

cp .env.example .env

bash build.sh (for macOS/Linux)

or

bash winbuild.sh (for Windows)

docker-compose up --build

- Docs: Quickstart Guide

- From Source / Cloud: Coming soon

- Setup Video: Uploading soon

- Install with Docker (2 minutes)

- Add your LLM API keys (OpenAI, Claude, etc.)

- Create your first Workspace

- Add a Brain with files or data

- Start chatting with AI

- Invite your team and scale collaboration

We welcome contributions of all kinds:

- Star this repo to show your support

- Report issues you encounter

- Suggest features on our roadmap

- Submit pull requests for improvements

- Improve documentation

- Design UI/UX improvements

- Contributor Guide: CONTRIBUTING.md

- Self-hosted: Your data never leaves your infrastructure

- Role-based access: Granular permissions and workspace isolation

- Audit logs: Complete activity tracking

- API security: OAuth 2.0 and JWT authentication

Weam AI is licensed under a modified Apache License 2.0 with additional terms to:

- Protect fair use and encourage contributions

- Ensure commercial use guidelines

- Maintain open source principles

See LICENSE for full terms.

A SaaS-style deployment of our open source project requires a commercial license. Learn more about commercial licensing and pricing here.

Need help setting up?

Installation Support — Get Weam’s production-ready AI platform running fast with our professional installation services, so your team can focus on transforming workflows with AI.

- Smart Brain Memory: Turn your Brain's chat history into organizational memory that LLMs can optionally access for smarter, context-aware responses.

- Expanded MCP Ecosystem: More MCP integrations coming soon, plus the ability to connect any MCP service directly to your agents.

- Advanced Agent Capabilities: More ready-to-use Pro agents and AI apps.

Built with ❤️ by the Weam AI community

The open source AI platform that brings teams and AI together.

⭐ Star us on GitHub • Join our Discord • Visit our Website

Alternative AI tools for weam

Similar Open Source Tools

codegate

CodeGate is a local gateway that enhances the safety of AI coding assistants by ensuring AI-generated recommendations adhere to best practices, safeguarding code integrity, and protecting individual privacy. Developed by Stacklok, CodeGate allows users to confidently leverage AI in their development workflow without compromising security or productivity. It works seamlessly with coding assistants, providing real-time security analysis of AI suggestions. CodeGate is designed with privacy at its core, keeping all data on the user's machine and offering complete control over data.

nanobrowser

Nanobrowser is an open-source AI web automation tool that runs in your browser. It is a free alternative to OpenAI Operator with flexible LLM options and a multi-agent system. Nanobrowser offers premium web automation capabilities while keeping users in complete control, with features like a multi-agent system, interactive side panel, task automation, follow-up questions, and multiple LLM support. Users can easily download and install Nanobrowser as a Chrome extension, configure agent models, and accomplish tasks such as news summary, GitHub research, and shopping research with just a sentence. The tool uses a specialized multi-agent system powered by large language models to understand and execute complex web tasks. Nanobrowser is actively developed with plans to expand LLM support, implement security measures, optimize memory usage, enable session replay, and develop specialized agents for domain-specific tasks. Contributions from the community are welcome to improve Nanobrowser and build the future of web automation.

MyDeviceAI

MyDeviceAI is a personal AI assistant app for iPhone that brings the power of artificial intelligence directly to the device. It focuses on privacy, performance, and personalization by running AI models locally and integrating with privacy-focused web services. The app offers seamless user experience, web search integration, advanced reasoning capabilities, personalization features, chat history access, and broad device support. It requires macOS, Xcode, CocoaPods, Node.js, and a React Native development environment for installation. The technical stack includes React Native framework, AI models like Qwen 3 and BGE Small, SearXNG integration, Redux for state management, AsyncStorage for storage, Lucide for UI components, and tools like ESLint and Prettier for code quality.

refact

This repository contains the Refact WebUI for fine-tuning and self-hosting code models, which can be used inside Refact plugins for code completion and chat. Users can fine-tune open-source code models, self-host them, download and upload LoRAs, use models for code completion and chat inside Refact plugins, shard models, host multiple small models on one GPU, and connect GPT models for chat using OpenAI and Anthropic keys. The repository provides a Docker container for running the self-hosted server and supports various models for completion, chat, and fine-tuning. Refact is free for individuals and small teams under the BSD-3-Clause license, with custom installation options available for GPU support. Community and support channels include contributing guidelines, GitHub issues for bugs, a community forum, Discord for chatting, and Twitter for product news and updates.

lotti

Lotti is an open-source personal assistant that helps users capture, organize, and understand their work and life through AI-enhanced task management, audio recordings, and intelligent summaries. It ensures complete data ownership, configurable AI providers, privacy-first design, and no vendor lock-in. Users can pick up tasks, record voice notes, and ask for summaries. Core features include AI-powered intelligence, comprehensive tracking, and privacy & control. Lotti supports multiple AI providers, offers installation guides, beta testing options, and development instructions. It is built on Flutter with a focus on privacy, local AI, and user data ownership.

nexent

Nexent is a powerful tool for analyzing and visualizing network traffic data. It provides comprehensive insights into network behavior, helping users to identify patterns, anomalies, and potential security threats. With its user-friendly interface and advanced features, Nexent is suitable for network administrators, cybersecurity professionals, and anyone looking to gain a deeper understanding of their network infrastructure.

ChatFAQ

ChatFAQ is an open-source comprehensive platform for creating a wide variety of chatbots: generic ones, business-trained, or even capable of redirecting requests to human operators. It includes a specialized NLP/NLG engine based on a RAG architecture and customized chat widgets, ensuring a tailored experience for users and avoiding vendor lock-in.

LLMstudio

LLMstudio by TensorOps is a platform that offers prompt engineering tools for accessing models from providers like OpenAI, VertexAI, and Bedrock. It provides features such as Python Client Gateway, Prompt Editing UI, History Management, and Context Limit Adaptability. Users can track past runs, log costs and latency, and export history to CSV. The tool also supports automatic switching to larger-context models when needed. Coming soon features include side-by-side comparison of LLMs, automated testing, API key administration, project organization, and resilience against rate limits. LLMstudio aims to streamline prompt engineering, provide execution history tracking, and enable effortless data export, offering an evolving environment for teams to experiment with advanced language models.

beeai-platform

BeeAI is an open-source platform that simplifies the discovery, running, and sharing of AI agents across different frameworks. It addresses challenges such as framework fragmentation, deployment complexity, and discovery issues by providing a standardized platform for individuals and teams to access agents easily. With features like a centralized agent catalog, framework-agnostic interfaces, containerized agents, and consistent user experiences, BeeAI aims to streamline the process of working with AI agents for both developers and teams.

CodeGPT

CodeGPT is an extension for JetBrains IDEs that provides access to state-of-the-art large language models (LLMs) for coding assistance. It offers a range of features to enhance the coding experience, including code completions, a ChatGPT-like interface for instant coding advice, commit message generation, reference file support, name suggestions, and offline development support. CodeGPT is designed to keep privacy in mind, ensuring that user data remains secure and private.

TaskingAI

TaskingAI brings Firebase's simplicity to **AI-native app development**. The platform enables the creation of GPTs-like multi-tenant applications using a wide range of LLMs from various providers. It features distinct, modular functions such as Inference, Retrieval, Assistant, and Tool, seamlessly integrated to enhance the development process. TaskingAI’s cohesive design ensures an efficient, intelligent, and user-friendly experience in AI application development.

promptbook

Promptbook is a library designed to build responsible, controlled, and transparent applications on top of large language models (LLMs). It helps users overcome limitations of LLMs like hallucinations, off-topic responses, and poor quality output by offering features such as fine-tuning models, prompt-engineering, and orchestrating multiple prompts in a pipeline. The library separates concerns, establishes a common format for prompt business logic, and handles low-level details like model selection and context size. It also provides tools for pipeline execution, caching, fine-tuning, anomaly detection, and versioning. Promptbook supports advanced techniques like Retrieval-Augmented Generation (RAG) and knowledge utilization to enhance output quality.

plandex

Plandex is an open source, terminal-based AI coding engine designed for complex tasks. It uses long-running agents to break up large tasks into smaller subtasks, helping users work through backlogs, navigate unfamiliar technologies, and save time on repetitive tasks. Plandex supports various AI models, including OpenAI, Anthropic Claude, Google Gemini, and more. It allows users to manage context efficiently in the terminal, experiment with different approaches using branches, and review changes before applying them. The tool is platform-independent and runs from a single binary with no dependencies.

learnhouse

LearnHouse is an open-source platform that allows anyone to easily provide world-class educational content. It supports various content types, including dynamic pages, videos, and documents. The platform is still in early development and should not be used in production environments. However, it offers several features, such as dynamic Notion-like pages, ease of use, multi-organization support, support for uploading videos and documents, course collections, user management, quizzes, course progress tracking, and an AI-powered assistant for teachers and students. LearnHouse is built using various open-source projects, including Next.js, TailwindCSS, Radix UI, Tiptap, FastAPI, YJS, PostgreSQL, LangChain, and React.

refact-vscode

Refact.ai is an open-source AI coding assistant that boosts developers' productivity. It supports 25+ programming languages and offers features like code completion, an AI Toolbox for code explanation and refactoring, integrated in-IDE chat, and a choice of self-hosted or cloud versions. The Enterprise plan provides enhanced customization, security, fine-tuning, user statistics, efficient inference, priority support, and access to 20+ LLMs for up to 50 engineers per GPU.

For similar tasks

Azure-Analytics-and-AI-Engagement

The Azure-Analytics-and-AI-Engagement repository provides packaged Industry Scenario DREAM Demos with ARM templates (containing a demo web application, Power BI reports, Synapse resources, AML Notebooks, etc.) that can be deployed in a customer’s subscription using the CAPE tool within a few hours. Partners can also deploy DREAM Demos in their own subscriptions using DPoC.

sorrentum

Sorrentum is an open-source project that aims to combine open-source development, startups, and brilliant students to build machine learning, AI, and Web3 / DeFi protocols geared towards finance and economics. The project provides opportunities for internships, research assistantships, and development grants, as well as the chance to work on cutting-edge problems, learn about startups, write academic papers, and get internships and full-time positions at companies working on Sorrentum applications.

tidb

TiDB is an open-source distributed SQL database that supports Hybrid Transactional and Analytical Processing (HTAP) workloads. It is MySQL compatible and features horizontal scalability, strong consistency, and high availability.

zep-python

Zep is an open-source platform for building and deploying large language model (LLM) applications. It provides a suite of tools and services that make it easy to integrate LLMs into your applications, including chat history memory, embedding, vector search, and data enrichment. Zep is designed to be scalable, reliable, and easy to use, making it a great choice for developers who want to build LLM-powered applications quickly and easily.

telemetry-airflow

This repository codifies the Airflow cluster that is deployed at workflow.telemetry.mozilla.org (behind SSO) and commonly referred to as "WTMO" or simply "Airflow". Some links relevant to users and developers of WTMO:

- The `dags` directory in this repository contains some custom DAG definitions

- Many of the DAGs registered with WTMO don't live in this repository, but are instead generated from ETL task definitions in bigquery-etl

- The Data SRE team maintains a WTMO Developer Guide (behind SSO)

mojo

Mojo is a new programming language that bridges the gap between research and production by combining Python syntax and ecosystem with systems programming and metaprogramming features. Mojo is still young, but it is designed to become a superset of Python over time.

pandas-ai

PandasAI is a Python library that makes it easy to ask questions to your data in natural language. It helps you to explore, clean, and analyze your data using generative AI.

databend

Databend is an open-source cloud data warehouse that serves as a cost-effective alternative to Snowflake. With its focus on fast query execution and data ingestion, it's designed for complex analysis of the world's largest datasets.

For similar jobs

sweep

Sweep is an AI junior developer that turns bugs and feature requests into code changes. It automatically handles developer experience improvements like adding type hints and improving test coverage.

teams-ai

The Teams AI Library is a software development kit (SDK) that helps developers create bots that can interact with Teams and Microsoft 365 applications. It is built on top of the Bot Framework SDK and simplifies the process of developing bots that interact with Teams' artificial intelligence capabilities. The SDK is available for JavaScript/TypeScript, .NET, and Python.

ai-guide

This guide is dedicated to Large Language Models (LLMs) that you can run on your home computer. It assumes your PC is a lower-end, non-gaming setup.

classifai

Supercharge WordPress Content Workflows and Engagement with Artificial Intelligence. Tap into leading cloud-based services like OpenAI, Microsoft Azure AI, Google Gemini and IBM Watson to augment your WordPress-powered websites. Publish content faster while improving SEO performance and increasing audience engagement. ClassifAI integrates Artificial Intelligence and Machine Learning technologies to lighten your workload and eliminate tedious tasks, giving you more time to create original content that matters.

chatbot-ui

Chatbot UI is an open-source AI chat app that allows users to create and deploy their own AI chatbots. It is easy to use and can be customized to fit any need. Chatbot UI is perfect for businesses, developers, and anyone who wants to create a chatbot.

BricksLLM

BricksLLM is a cloud native AI gateway written in Go. Currently, it provides native support for OpenAI, Anthropic, Azure OpenAI and vLLM. BricksLLM aims to provide enterprise level infrastructure that can power any LLM production use cases. Here are some use cases for BricksLLM:

- Set LLM usage limits for users on different pricing tiers

- Track LLM usage on a per user and per organization basis

- Block or redact requests containing PII

- Improve LLM reliability with failovers, retries and caching

- Distribute API keys with rate limits and cost limits for internal development/production use cases

- Distribute API keys with rate limits and cost limits for students

uAgents

uAgents is a Python library developed by Fetch.ai that allows for the creation of autonomous AI agents. These agents can perform various tasks on a schedule or take action on various events. uAgents are easy to create and manage, and they are connected to a fast-growing network of other uAgents. They are also secure, with cryptographically secured messages and wallets.

griptape

Griptape is a modular Python framework for building AI-powered applications that securely connect to your enterprise data and APIs. It offers developers the ability to maintain control and flexibility at every step. Griptape's core components include Structures (Agents, Pipelines, and Workflows), Tasks, Tools, Memory (Conversation Memory, Task Memory, and Meta Memory), Drivers (Prompt and Embedding Drivers, Vector Store Drivers, Image Generation Drivers, Image Query Drivers, SQL Drivers, Web Scraper Drivers, and Conversation Memory Drivers), Engines (Query Engines, Extraction Engines, Summary Engines, Image Generation Engines, and Image Query Engines), and additional components (Rulesets, Loaders, Artifacts, Chunkers, and Tokenizers). Griptape enables developers to create AI-powered applications with ease and efficiency.