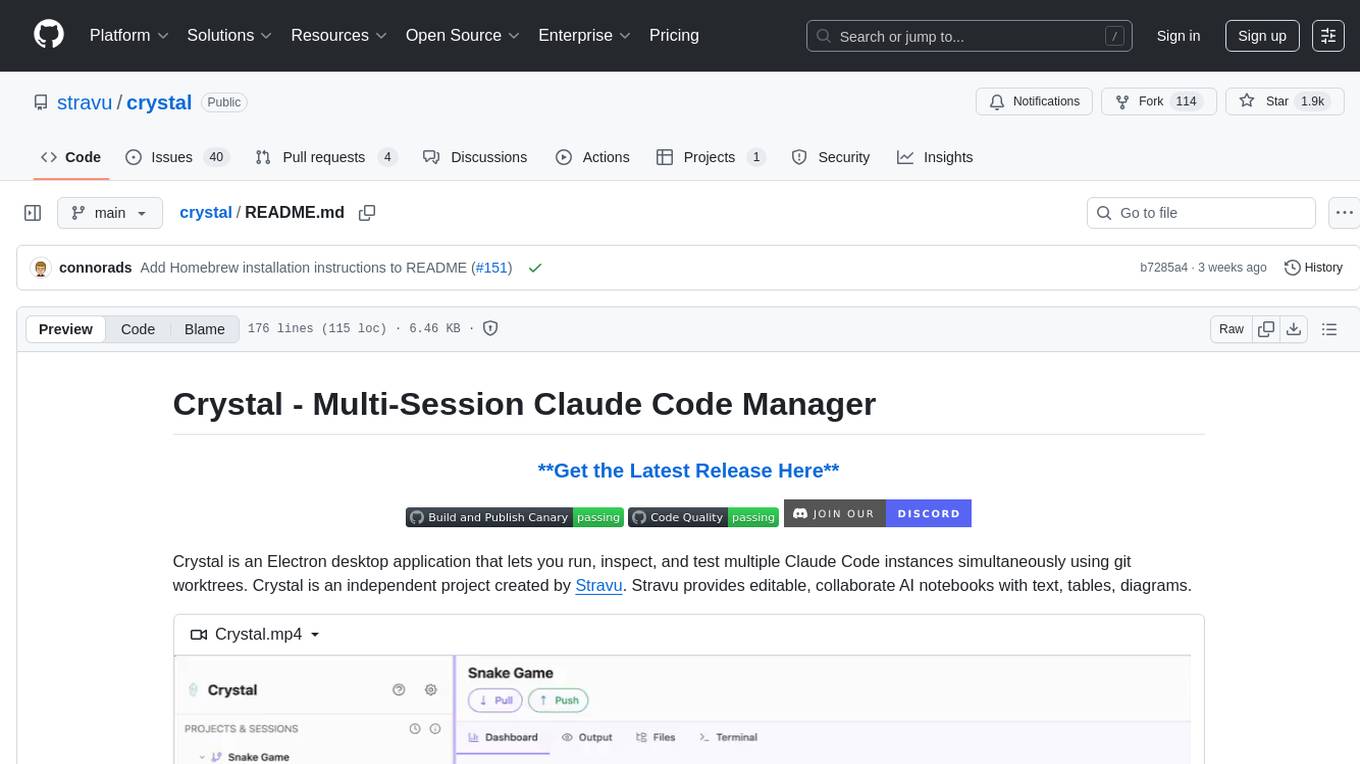

crystal

Run multiple Claude Code AI sessions in parallel git worktrees. Test, compare approaches & manage AI-assisted development workflows in one desktop app.

Stars: 1900

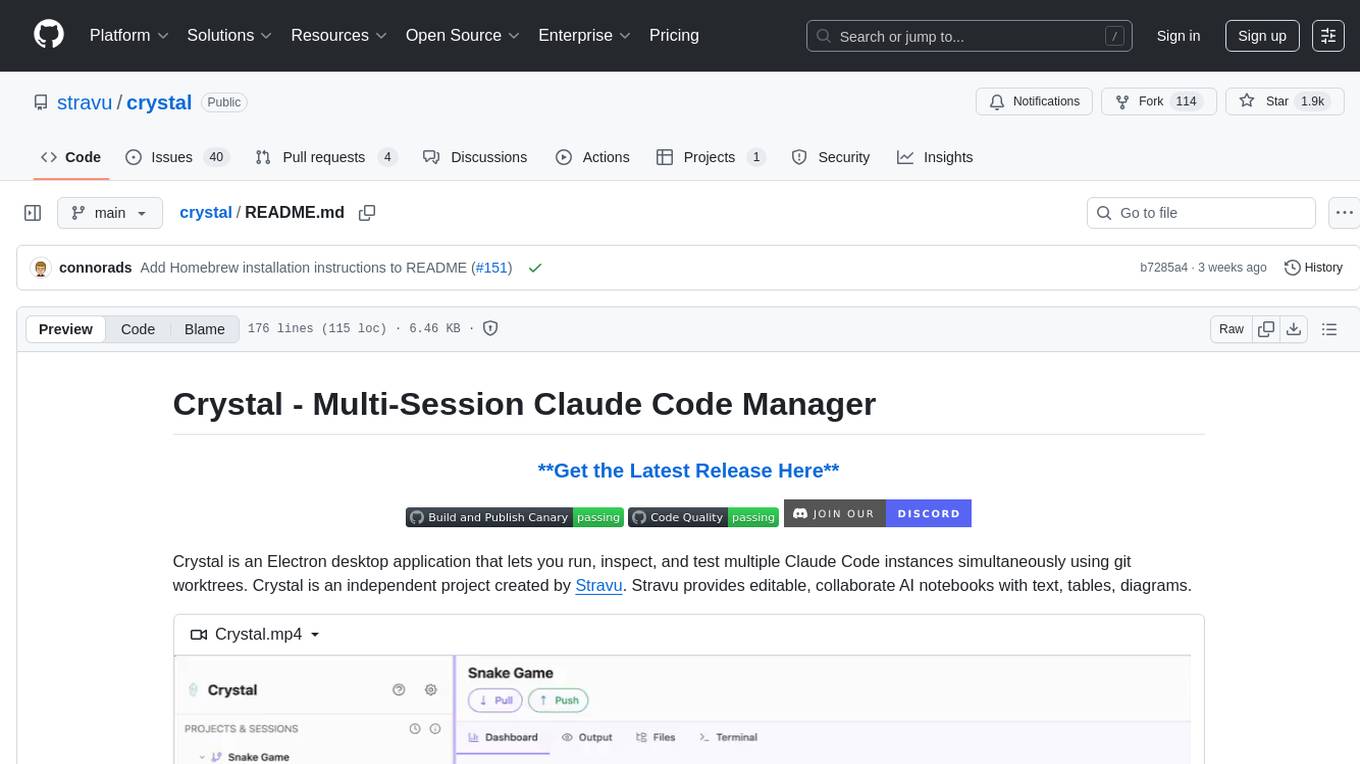

Crystal is an Electron desktop application that allows users to run, inspect, and test multiple Claude Code instances simultaneously using git worktrees. It provides parallel sessions, git worktree isolation, session persistence, git integration, change tracking, notifications, and run scripts. The workflow is to create isolated sessions, iterate with Claude Code, review the diffs, and squash commits for a clean history.

README:

Crystal is an Electron desktop application that lets you run, inspect, and test multiple Claude Code instances simultaneously using git worktrees. Crystal is an independent project created by Stravu. Stravu provides editable, collaborative AI notebooks with text, tables, and diagrams.

https://github.com/user-attachments/assets/5534f535-40fc-4de1-a5e1-896524d260c9

Workflow:

- Create sessions from prompts, each in an isolated git worktree

- Iterate with Claude Code inside your sessions. Each iteration will make a commit so you can always go back.

- Review the diff changes and make manual edits as needed

- Squash your commits together with a new message and rebase to your main branch.

Key features:

- 🚀 Parallel Sessions - Run multiple Claude Code instances at once

- 🌳 Git Worktree Isolation - Each session gets its own branch

- 💾 Session Persistence - Resume conversations anytime

- 🔧 Git Integration - Built-in rebase and squash operations

- 📊 Change Tracking - View diffs and track modifications

- 🔔 Notifications - Desktop alerts when sessions need input

- 🏗️ Run Scripts - Test changes instantly without leaving Crystal

Prerequisites:

- Claude Code installed and logged in or API key provided

- Git installed

- Git repository (Crystal will initialize one if needed)
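The prerequisites above can be checked from a terminal before launching Crystal. This is a minimal sketch, not something Crystal ships: `check_prereq` is a hypothetical helper name, and it assumes the Claude Code CLI is installed on your `PATH` as `claude`.

```bash
# Hypothetical helper: succeeds when the named tool is available on PATH.
check_prereq() {
  command -v "$1" >/dev/null 2>&1
}

# Report each prerequisite; "claude" is assumed to be the Claude Code CLI name.
for tool in git claude; do
  if check_prereq "$tool"; then
    echo "found: $tool"
  else
    echo "missing: $tool"
  fi
done
```

The check only confirms that the tools exist. It does not verify that Claude Code is logged in or that an API key is configured.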

Create a new project if you haven't already. This can be an empty folder or an existing git repository. Crystal will initialize git if needed.

For any feature you're working on, create one or multiple new sessions:

- Each session will be an isolated git worktree

As sessions complete:

- Configure run scripts in project settings to test your application without leaving Crystal

- Use the diff viewer to review all changes and make manual edits as needed

- Continue conversations with Claude Code if you need additional changes

When everything looks good:

- Click "Rebase to main" to squash all commits with a new message and rebase them to your main branch

- This creates a clean commit history on your main branch

Git operations:

- Rebase from main: Pull latest changes from main into your worktree

- Squash and rebase to main: Combine all commits and rebase onto main

- Always preview commands with tooltips before executing
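For readers who want to see what these operations roughly correspond to, here is a sketch using plain git commands in a throwaway repository. It is an illustration under assumptions, not Crystal's actual implementation: the branch name `session-1`, the paths, and the commit messages are made up, and Crystal may run different commands (preview them with the tooltips).

```bash
set -euo pipefail

# Throwaway repository so the sketch can run anywhere (all names are illustrative).
work="$(mktemp -d)"
repo="$work/project"
git init -q "$repo"
cd "$repo"
git symbolic-ref HEAD refs/heads/main   # make sure the first branch is called main
git config user.email "dev@example.com"
git config user.name "Dev"
echo "hello" > app.txt
git add app.txt
git commit -q -m "Initial commit"

# 1. One session = one new branch checked out in its own worktree.
git worktree add -b session-1 "$work/session-1" main >/dev/null 2>&1

# 2. Each iteration lands as a commit inside that worktree.
cd "$work/session-1"
echo "step one" >> app.txt
git commit -q -am "Iteration 1"
echo "step two" >> app.txt
git commit -q -am "Iteration 2"

# 3. "Rebase from main": replay the session on top of the latest main.
git rebase -q main

# 4. "Squash and rebase to main": collapse the session into one commit...
git reset -q --soft "$(git merge-base main HEAD)"
git commit -q -m "Add feature from session-1"

# ...then fast-forward main to that single commit from the main checkout.
cd "$repo"
git merge -q --ff-only session-1

git log --oneline main
```

Because the session is squashed before main moves, main gains exactly one new commit no matter how many iterations the session went through.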

macOS:

- Download `Crystal-{version}.dmg` from the latest release

- Open the DMG file and drag Crystal to your Applications folder

- On first launch, you may need to right-click and select "Open" due to macOS security settings

Or install with Homebrew:

```bash
brew install --cask stravu-crystal
```

To build from source:

```bash
# Clone the repository
git clone https://github.com/stravu/crystal.git
cd crystal

# One-time setup
pnpm run setup

# Run in development
pnpm run electron-dev
```

```bash
# Build for macOS
pnpm build:mac
```

We welcome contributions! Please see our Contributing Guidelines for details.

If you're using Crystal to develop Crystal itself, you need to use a separate data directory to avoid conflicts with your main Crystal instance:

```bash
# Set the run script in your Crystal project settings to:
pnpm run setup && pnpm run build:main && CRYSTAL_DIR=~/.crystal_test pnpm electron-dev
```

This ensures:

- Your development Crystal instance uses `~/.crystal_test` for its data

- Your main Crystal instance continues using `~/.crystal`

- Worktrees won't conflict between the two instances

- You can safely test changes without affecting your primary Crystal setup
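The separation described above comes down to an environment-variable override with a default. The sketch below shows that pattern in shell. `resolve_crystal_dir` is a hypothetical function, and the fallback to `~/.crystal` when `CRYSTAL_DIR` is unset is an assumption drawn from the description, not from Crystal's source.

```bash
# Hypothetical helper: use CRYSTAL_DIR when set, otherwise fall back to ~/.crystal.
resolve_crystal_dir() {
  printf '%s\n' "${CRYSTAL_DIR:-$HOME/.crystal}"
}

# Main instance: no override, so the default directory is used.
( unset CRYSTAL_DIR; resolve_crystal_dir )

# Development instance: the override applies to this one invocation only.
CRYSTAL_DIR="$HOME/.crystal_test" resolve_crystal_dir
```

Setting the variable inline, as the run script does, keeps the override scoped to the development process, so a main instance started normally is unaffected.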

To use Crystal with cloud providers or via corporate infrastructure, you should create a settings file with ENV values to correctly connect to the provider.

For example, here is a minimal configuration to use Amazon Bedrock via an AWS Profile:

```json
{
  "env": {
    "CLAUDE_CODE_USE_BEDROCK": "1",
    "AWS_REGION": "us-east-2",
    "AWS_PROFILE": "my-aws-profile"
  }
}
```

Replace `us-east-2` with your AWS region and `my-aws-profile` with your profile name.

Check the deployment documentation for more information on getting set up with your particular deployment.

For a full project overview, see CLAUDE.md. Additional diagrams, database schema details, release instructions, and license notes can be found in the docs directory.

Crystal is open source software licensed under the MIT License.

Crystal includes third-party software components. All third-party licenses are documented in the NOTICES file. This file is automatically generated and kept up-to-date with our dependencies.

To regenerate the NOTICES file after updating dependencies:

```bash
pnpm run generate-notices
```

Crystal is an independent project created by Stravu. Claude™ is a trademark of Anthropic, PBC. Crystal is not affiliated with, endorsed by, or sponsored by Anthropic. This tool is designed to work with Claude Code, which must be installed separately.


Alternative AI tools for crystal

Similar Open Source Tools


traceroot

TraceRoot is a tool that helps engineers debug production issues 10× faster using AI-powered analysis of traces, logs, and code context. It accelerates the debugging process with AI-powered insights, integrates seamlessly into the development workflow, provides real-time trace and log analysis, code context understanding, and intelligent assistance. Features include ease of use, LLM flexibility, distributed services, AI debugging interface, and integration support. Users can get started with TraceRoot Cloud for a 7-day trial or self-host the tool. SDKs are available for Python and JavaScript/TypeScript.

generator

ctx is a tool designed to automatically generate organized context files from code files, GitHub repositories, Git commits, web pages, and plain text. It aims to efficiently provide necessary context to AI language models like ChatGPT and Claude, enabling users to streamline code refactoring, multiple iteration development, documentation generation, and seamless AI integration. With ctx, users can create structured markdown documents, save context files, and serve context through an MCP server for real-time assistance. The tool simplifies the process of sharing project information with AI assistants, making AI conversations smarter and easier.

qwen-code

Qwen Code is an open-source AI agent optimized for Qwen3-Coder, designed to help users understand large codebases, automate tedious work, and expedite the shipping process. It offers an agentic workflow with rich built-in tools, a terminal-first approach with optional IDE integration, and supports both OpenAI-compatible API and Qwen OAuth authentication methods. Users can interact with Qwen Code in interactive mode, headless mode, IDE integration, and through a TypeScript SDK. The tool can be configured via settings.json, environment variables, and CLI flags, and offers benchmark results for performance evaluation. Qwen Code is part of an ecosystem that includes AionUi and Gemini CLI Desktop for graphical interfaces, and troubleshooting guides are available for issue resolution.

repomix

Repomix is a powerful tool that packs your entire repository into a single, AI-friendly file. It is designed to format your codebase for easy understanding by AI tools like Large Language Models (LLMs), Claude, ChatGPT, and Gemini. Repomix offers features such as AI optimization, token counting, simplicity in usage, customization options, Git awareness, and security-focused checks using Secretlint. It allows users to pack their entire repository or specific directories/files using glob patterns, and even supports processing remote Git repositories. The tool generates output in plain text, XML, or Markdown formats, with options for including/excluding files, removing comments, and performing security checks. Repomix also provides a global configuration option, custom instructions for AI context, and a security check feature to detect sensitive information in files.

langchain-google

LangChain Google is a repository containing three packages with Google integrations: langchain-google-genai for Google Generative AI models, langchain-google-vertexai for Google Cloud Generative AI on Vertex AI, and langchain-google-community for other Google product integrations. The repository is organized as a monorepo with a structure including libs for different packages, and files like pyproject.toml and Makefile for building, linting, and testing. It provides guidelines for contributing, local development dependencies installation, formatting, linting, working with optional dependencies, and testing with unit and integration tests. The focus is on maintaining unit test coverage and avoiding excessive integration tests, with annotations for GCP infrastructure-dependent tests.

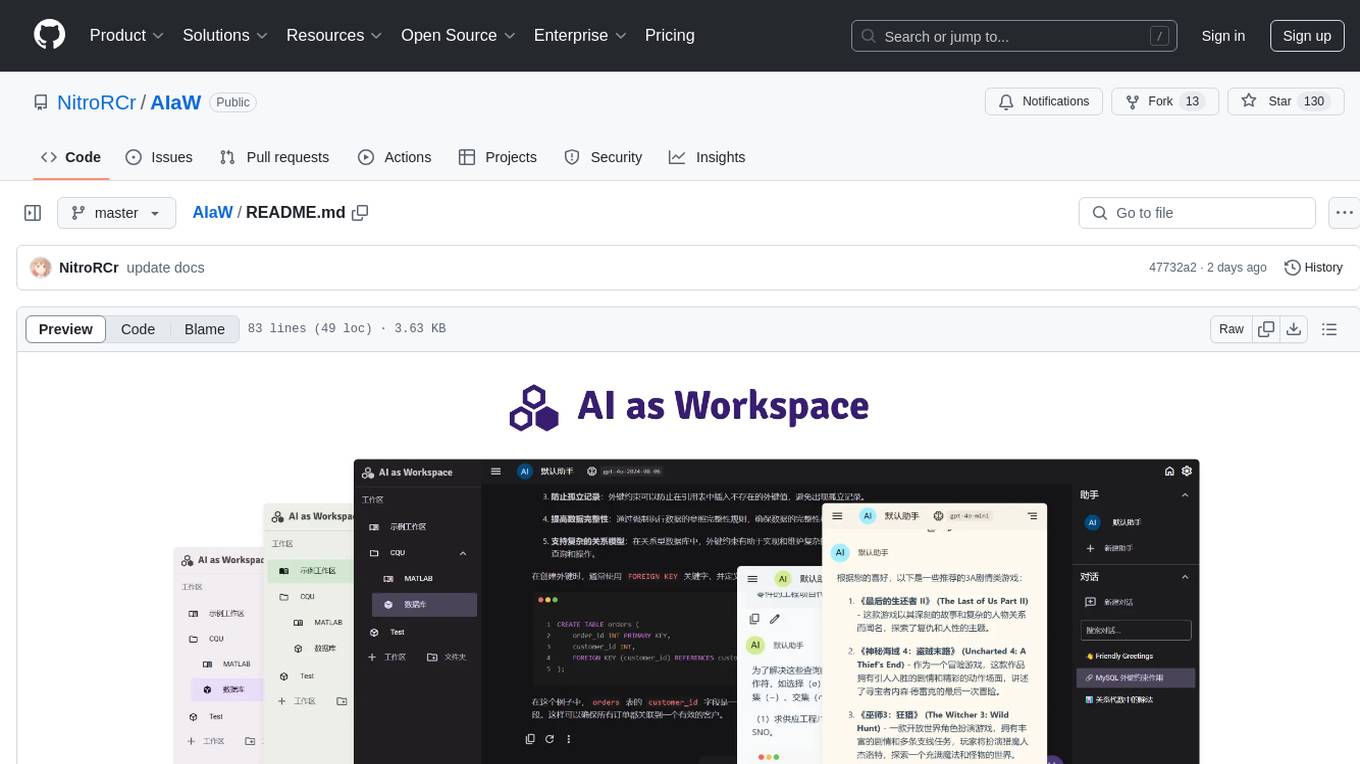

AIaW

AIaW is a full-featured, lightweight, and extensible next-generation LLM client. It supports basic functions such as streaming transfer, image uploading, and LaTeX formulas. The tool is cross-platform with a responsive interface design. It supports multiple service providers like OpenAI, Anthropic, and Google. Users can modify questions, regenerate in a forked manner, and visualize conversations in a tree structure. Additionally, it offers features like file parsing, video parsing, a plugin system, an assistant market, local storage with real-time cloud sync, and customizable interface themes. Users can create multiple workspaces, use dynamic prompt variables, extend plugins, and benefit from detailed design elements like real-time content preview, optimized code pasting, and support for various file types.

ai-manus

AI Manus is a general-purpose AI Agent system that supports running various tools and operations in a sandbox environment. It deploys with minimal dependencies, supports tools such as Terminal, Browser, File, Web Search, and messaging, allocates a separate sandbox for each task, manages session history, lets users stop and interrupt conversations, supports file upload and download, and is multilingual. The system also provides user login and authentication. The project primarily relies on Docker for development and deployment, states model capability requirements, and recommends Deepseek and GPT models.

openchamber

OpenChamber is a web and desktop interface for the OpenCode AI coding agent, designed to work alongside the OpenCode TUI. The project was built entirely with AI coding agents under supervision, serving as a proof of concept that AI agents can create usable software. It offers features like integrated terminal, Git operations with AI commit message generation, smart tool visualization, permission management, multi-agent runs, task tracker UI, model selection UX, UI scaling controls, session auto-cleanup, and memory optimizations. OpenChamber provides cross-device continuity, remote access, and a visual alternative for developers preferring GUI workflows.

Awesome-Vibe-Coding

Awesome-Vibe-Coding is a curated list of Vibe Coding open-source projects, tools, and learning resources. It includes a comprehensive collection of AI-powered development toolkits, IDEs, extensions, platforms, and cloud-based agents for modern software development. The repository also covers development standards, MCP servers, agent communication protocols, agent SDKs, supporting tools, vibe coding projects, and learning resources. The project aims to showcase the power of AI-assisted development and provide a resource hub for developers interested in Vibe Coding practices.

mosaico

Mosaico is a blazing-fast data platform designed to bridge the gap between Robotics and Physical AI. It streamlines data management, compression, and search by replacing monolithic files with a structured archive powered by Rust and Python. The platform operates on a standard client-server model, with the server daemon, mosaicod, handling heavy lifting tasks like data conversion, compression, and organized storage. The Python SDK (mosaico-sdk-py) and Rust backend (mosaicod) are included in this monorepo configuration to simplify testing and reduce compatibility issues. Mosaico enables the ingestion of standard ROS sequences, transforming them into synchronized, randomized dataframes for Physical AI applications. Efficiency is built into the architecture, with data batches streamed directly from the Mosaico data platform, eliminating the need to download massive datasets locally.

runhouse

Runhouse is a tool that allows you to build, run, and deploy production-quality AI apps and workflows on your own compute. It provides simple, powerful APIs for the full lifecycle of AI development, from research to evaluation to production to updates to scaling to management, and across any infra. By automatically packaging your apps into scalable, secure, and observable services, Runhouse can also turn otherwise redundant AI activities into common reusable components across your team or company, which improves cost, velocity, and reproducibility.

context-lens

Context Lens is a local proxy tool that captures LLM API calls from coding tools to provide a breakdown of context composition, including system prompts, tool definitions, conversation history, tool results, and thinking blocks. It helps developers understand why coding sessions may be resource-intensive without requiring any code changes. The tool works with various coding tools like Claude Code, Codex, Gemini CLI, Aider, and Pi, interacting with OpenAI, Anthropic, and Google APIs. Context Lens offers a visual treemap breakdown, cost tracking, conversation threading, agent breakdown, timeline visualization, context diff analysis, findings flags, auto-detection of coding tools, LHAR export, state persistence, and streaming support, all running locally for privacy and control.

hyper-mcp

hyper-mcp is a fast and secure MCP server that enables adding AI capabilities to applications through WebAssembly plugins. It supports writing plugins in various languages, distributing them via standard OCI registries, and running them in resource-constrained environments. The tool offers sandboxing with WASM for limiting access, cross-platform compatibility, and deployment flexibility. Security features include sandboxed plugins, memory-safe execution, secure plugin distribution, and fine-grained access control. Users can configure the tool for global or project-specific use, start the server with different transport options, and utilize available plugins for tasks like time calculations, QR code generation, hash generation, IP retrieval, and webpage fetching.

ultracontext

UltraContext is a context API for AI agents that simplifies controlling what agents see by allowing users to replace messages, compact or offload context, replay decisions, and roll back mistakes with a single API call. It provides versioned context out of the box with full history and zero complexity. The tool aims to address the issue of context rot in large language models by providing a simple API with automatic versioning, time-travel capabilities, schema-free data storage, framework-agnostic compatibility, and fast performance. UltraContext is designed to streamline the process of managing context for AI agents, enabling users to focus on solving interesting problems rather than spending time gluing context together.

llm

The 'llm' package for Emacs provides an interface for interacting with Large Language Models (LLMs). It abstracts functionality to a higher level, concealing API variations and ensuring compatibility with various LLMs. Users can set up providers like OpenAI, Gemini, Vertex, Claude, Ollama, GPT4All, and a fake client for testing. The package allows for chat interactions, embeddings, token counting, and function calling. It also offers advanced prompt creation and logging capabilities. Users can handle conversations, create prompts with placeholders, and contribute by creating providers.

For similar tasks


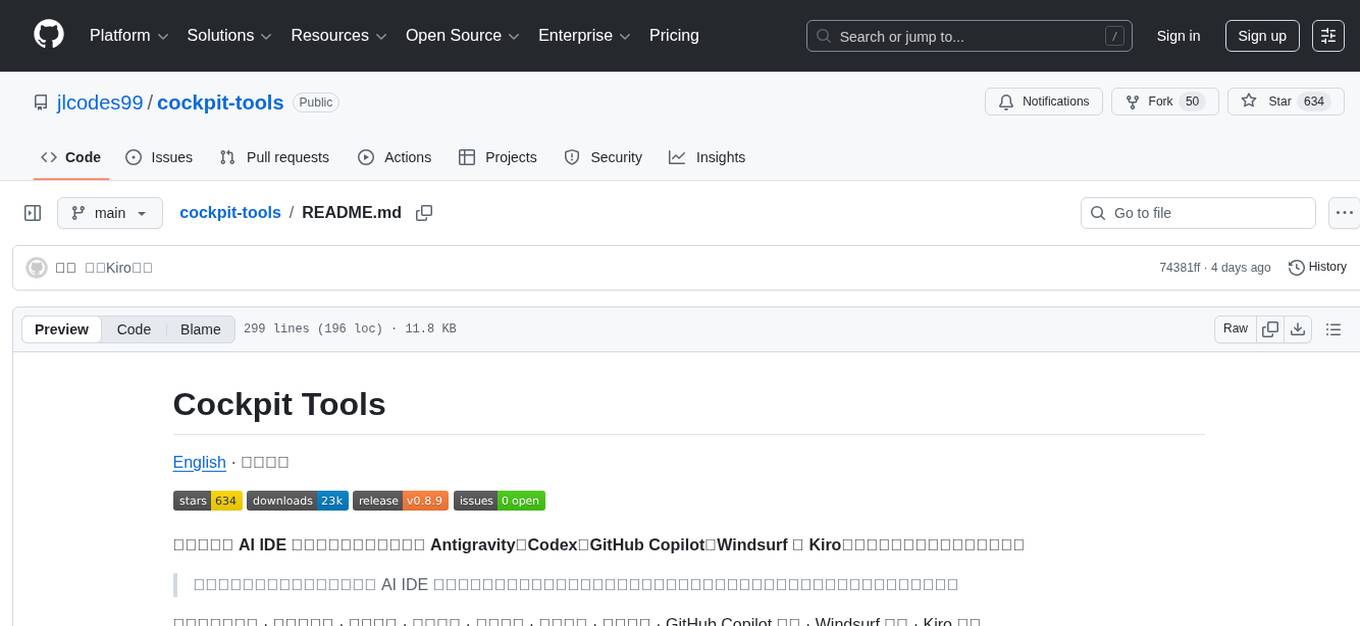

cockpit-tools

Cockpit Tools is a versatile AI IDE account management tool that supports Antigravity, Codex, GitHub Copilot, Windsurf, and Kiro. It allows efficient management of multiple AI IDE accounts with features like one-click switching, quota monitoring, automatic wake-up, and parallel running of multiple instances. The tool supports 16 languages and provides functionalities such as dashboard overview, account management for each supported platform, multiple instance management, quota monitoring, wake-up tasks, device fingerprinting, and plugin integration.

For similar jobs

sweep

Sweep is an AI junior developer that turns bugs and feature requests into code changes. It automatically handles developer experience improvements like adding type hints and improving test coverage.

teams-ai

The Teams AI Library is a software development kit (SDK) that helps developers create bots that can interact with Teams and Microsoft 365 applications. It is built on top of the Bot Framework SDK and simplifies the process of developing bots that interact with Teams' artificial intelligence capabilities. The SDK is available for JavaScript/TypeScript, .NET, and Python.

ai-guide

This guide is dedicated to Large Language Models (LLMs) that you can run on your home computer. It assumes your PC is a lower-end, non-gaming setup.

classifai

Supercharge WordPress Content Workflows and Engagement with Artificial Intelligence. Tap into leading cloud-based services like OpenAI, Microsoft Azure AI, Google Gemini and IBM Watson to augment your WordPress-powered websites. Publish content faster while improving SEO performance and increasing audience engagement. ClassifAI integrates Artificial Intelligence and Machine Learning technologies to lighten your workload and eliminate tedious tasks, giving you more time to create original content that matters.

chatbot-ui

Chatbot UI is an open-source AI chat app that allows users to create and deploy their own AI chatbots. It is easy to use and can be customized to fit any need. Chatbot UI is perfect for businesses, developers, and anyone who wants to create a chatbot.

BricksLLM

BricksLLM is a cloud native AI gateway written in Go. Currently, it provides native support for OpenAI, Anthropic, Azure OpenAI and vLLM. BricksLLM aims to provide enterprise level infrastructure that can power any LLM production use cases. Here are some use cases for BricksLLM:

- Set LLM usage limits for users on different pricing tiers

- Track LLM usage on a per user and per organization basis

- Block or redact requests containing PIIs

- Improve LLM reliability with failovers, retries and caching

- Distribute API keys with rate limits and cost limits for internal development/production use cases

- Distribute API keys with rate limits and cost limits for students

uAgents

uAgents is a Python library developed by Fetch.ai that allows for the creation of autonomous AI agents. These agents can perform various tasks on a schedule or take action on various events. uAgents are easy to create and manage, and they are connected to a fast-growing network of other uAgents. They are also secure, with cryptographically secured messages and wallets.

griptape

Griptape is a modular Python framework for building AI-powered applications that securely connect to your enterprise data and APIs. It offers developers the ability to maintain control and flexibility at every step. Griptape's core components include Structures (Agents, Pipelines, and Workflows), Tasks, Tools, Memory (Conversation Memory, Task Memory, and Meta Memory), Drivers (Prompt and Embedding Drivers, Vector Store Drivers, Image Generation Drivers, Image Query Drivers, SQL Drivers, Web Scraper Drivers, and Conversation Memory Drivers), Engines (Query Engines, Extraction Engines, Summary Engines, Image Generation Engines, and Image Query Engines), and additional components (Rulesets, Loaders, Artifacts, Chunkers, and Tokenizers). Griptape enables developers to create AI-powered applications with ease and efficiency.