autolabel

Label, clean and enrich text datasets with LLMs.

Stars: 2034

Autolabel is a Python library designed to label, clean, and enrich text datasets using Large Language Models (LLMs). It provides a simple 3-step process for labeling data, supports various NLP tasks, and offers features like confidence estimation, explanations, and state management. Users can access Refuel hosted LLMs for labeling and confidence estimation, and the library supports commercial and open source LLMs from providers like OpenAI, Anthropic, HuggingFace, and Google. Autolabel aims to streamline the labeling process for machine learning tasks by leveraging state-of-the-art LLM techniques and minimizing costs and experimentation time.

README:

pip install refuel-autolabel

Access to large, clean and diverse labeled datasets is a critical component for any machine learning effort to be successful. State-of-the-art LLMs like GPT-4 are able to automatically label data with high accuracy, and at a fraction of the cost and time compared to manual labeling.

Autolabel is a Python library to label, clean and enrich text datasets with any Large Language Models (LLM) of your choice.

Check out our technical report to learn more about the performance of RefuelLLM-v2 on our benchmark. You can replicate the benchmark yourself by following the steps below

cd autolabel/benchmark

curl https://autolabel-benchmarking.s3.us-west-2.amazonaws.com/data.zip -o data.zip

unzip data.zip

python benchmark.py --model $model --base_dir benchmark-results

python results.py --eval_dir benchmark-results

cat results.csvYou can benchmark the relevant model by replacing $model with the name of the model needed to be benchmarked. If it is an API hosted model like gpt-3.5-turbo, gpt-4-1106-preview, claude-3-opus-20240229, gemini-1.5-pro-preview-0409 or some other Autolabel supported model, just write the name of the model. If the model to be benchmarked is a vLLM supported model then pass the local path or the huggingface path corresponding to the model. This will run the benchmark along with the same prompts for all models.

The results.csv will contain a row with every model that was benchmarked as a row. Look at benchmark/results.csv for an example.

Autolabel provides a simple 3-step process for labeling data:

- Specify the labeling guidelines and LLM model to use in a JSON config.

- Dry-run to make sure the final prompt looks good.

- Kick off a labeling run for your dataset!

Let's imagine we are building an ML model to analyze sentiment analysis of movie review. We have a dataset of movie reviews that we'd like to get labeled first. For this case, here's what the example dataset and configs will look like:

{

"task_name": "MovieSentimentReview",

"task_type": "classification",

"model": {

"provider": "openai",

"name": "gpt-3.5-turbo"

},

"dataset": {

"label_column": "label",

"delimiter": ","

},

"prompt": {

"task_guidelines": "You are an expert at analyzing the sentiment of movie reviews. Your job is to classify the provided movie review into one of the following labels: {labels}",

"labels": [

"positive",

"negative",

"neutral"

],

"few_shot_examples": [

{

"example": "I got a fairly uninspired stupid film about how human industry is bad for nature.",

"label": "negative"

},

{

"example": "I loved this movie. I found it very heart warming to see Adam West, Burt Ward, Frank Gorshin, and Julie Newmar together again.",

"label": "positive"

},

{

"example": "This movie will be played next week at the Chinese theater.",

"label": "neutral"

}

],

"example_template": "Input: {example}\nOutput: {label}"

}

}Initialize the labeling agent and pass it the config:

from autolabel import LabelingAgent, AutolabelDataset

agent = LabelingAgent(config='config.json')Preview an example prompt that will be sent to the LLM:

ds = AutolabelDataset('dataset.csv', config = config)

agent.plan(ds)This prints:

━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━ 100/100 0:00:00 0:00:00

┌──────────────────────────┬─────────┐

│ Total Estimated Cost │ $0.538 │

│ Number of Examples │ 200 │

│ Average cost per example │ 0.00269 │

└──────────────────────────┴─────────┘

─────────────────────────────────────────

Prompt Example:

You are an expert at analyzing the sentiment of movie reviews. Your job is to classify the provided movie review into one of the following labels: [positive, negative, neutral]

Some examples with their output answers are provided below:

Example: I got a fairly uninspired stupid film about how human industry is bad for nature.

Output:

negative

Example: I loved this movie. I found it very heart warming to see Adam West, Burt Ward, Frank Gorshin, and Julie Newmar together again.

Output:

positive

Example: This movie will be played next week at the Chinese theater.

Output:

neutral

Now I want you to label the following example:

Input: A rare exception to the rule that great literature makes disappointing films.

Output:

─────────────────────────────────────────────────────────────────────────────────────────

Finally, we can run the labeling on a subset or entirety of the dataset:

ds = agent.run(ds)The output dataframe contains the label column:

ds.df.head()

text ... MovieSentimentReview_llm_label

0 I was very excited about seeing this film, ant... ... negative

1 Serum is about a crazy doctor that finds a ser... ... negative

4 I loved this movie. I knew it would be chocked... ... positive

...- Label data for NLP tasks such as classification, question-answering and named entity-recognition, entity matching and more.

- Use commercial or open source LLMs from providers such as OpenAI, Anthropic, HuggingFace, Google and more.

- Support for research-proven LLM techniques to boost label quality, such as few-shot learning and chain-of-thought prompting.

- Confidence estimation and explanations out of the box for every single output label

- Caching and state management to minimize costs and experimentation time

Refuel provides access to hosted open source LLMs for labeling, and for estimating confidence This is helpful, because you can calibrate a confidence threshold for your labeling task, and then route less confident labels to humans, while you still get the benefits of auto-labeling for the confident examples.

In order to use Refuel hosted LLMs, you can request access here.

Check out our public roadmap to learn more about ongoing and planned improvements to the Autolabel library.

We are always looking for suggestions and contributions from the community. Join the discussion on Discord or open a Github issue to report bugs and request features.

Autolabel is a rapidly developing project. We welcome contributions in all forms - bug reports, pull requests and ideas for improving the library.

- Join the conversation on Discord

- Open an issue on Github for bugs and request features.

- Grab an open issue, and submit a pull request.

For Tasks:

Click tags to check more tools for each tasksFor Jobs:

Alternative AI tools for autolabel

Similar Open Source Tools

autolabel

Autolabel is a Python library designed to label, clean, and enrich text datasets using Large Language Models (LLMs). It provides a simple 3-step process for labeling data, supports various NLP tasks, and offers features like confidence estimation, explanations, and state management. Users can access Refuel hosted LLMs for labeling and confidence estimation, and the library supports commercial and open source LLMs from providers like OpenAI, Anthropic, HuggingFace, and Google. Autolabel aims to streamline the labeling process for machine learning tasks by leveraging state-of-the-art LLM techniques and minimizing costs and experimentation time.

ebook-mcp

Ebook-MCP is a powerful Model Context Protocol (MCP) server designed for processing electronic books. It provides standardized APIs for seamless integration between LLM applications and e-book processing capabilities. The tool supports EPUB and PDF formats, enabling users to manage their digital library, have interactive reading experiences, support active learning, and easily navigate content through natural language queries. By bridging traditional e-books with AI capabilities, Ebook-MCP enhances the value users can extract from their digital reading materials.

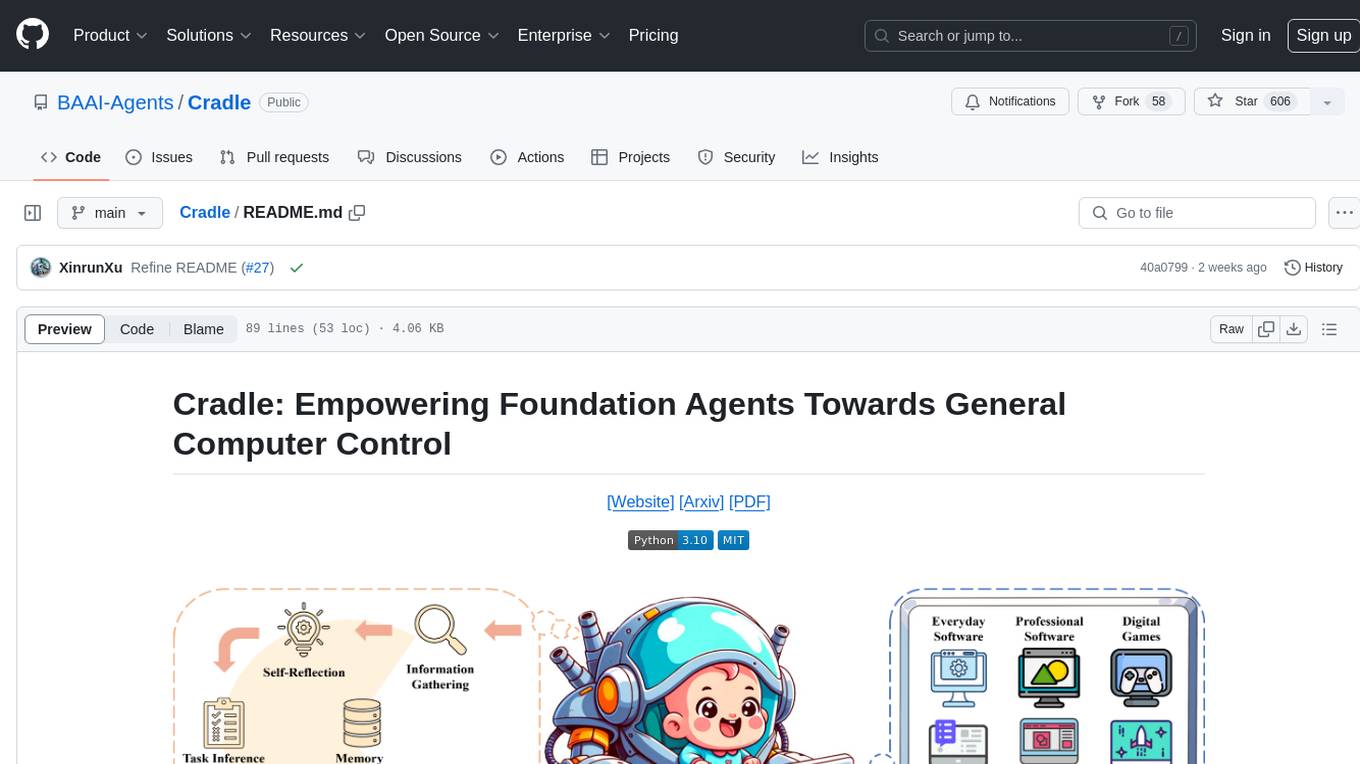

Cradle

The Cradle project is a framework designed for General Computer Control (GCC), empowering foundation agents to excel in various computer tasks through strong reasoning abilities, self-improvement, and skill curation. It provides a standardized environment with minimal requirements, constantly evolving to support more games and software. The repository includes released versions, publications, and relevant assets.

VSP-LLM

VSP-LLM (Visual Speech Processing incorporated with LLMs) is a novel framework that maximizes context modeling ability by leveraging the power of LLMs. It performs multi-tasks of visual speech recognition and translation, where given instructions control the task type. The input video is mapped to the input latent space of a LLM using a self-supervised visual speech model. To address redundant information in input frames, a deduplication method is employed using visual speech units. VSP-LLM utilizes Low Rank Adaptors (LoRA) for computationally efficient training.

all-rag-techniques

This repository provides a hands-on approach to Retrieval-Augmented Generation (RAG) techniques, simplifying advanced concepts into understandable implementations using Python libraries like openai, numpy, and matplotlib. It offers a collection of Jupyter Notebooks with concise explanations, step-by-step implementations, code examples, evaluations, and visualizations for various RAG techniques. The goal is to make RAG more accessible and demystify its workings for educational purposes.

FinAnGPT-Pro

FinAnGPT-Pro is a financial data downloader and AI query system that downloads quarterly and annual financial data for stocks from EOD Historical Data, storing it in MongoDB and Google BigQuery. It includes an AI-powered natural language interface for querying financial data. Users can set up the tool by following the prerequisites and setup instructions provided in the README. The tool allows users to download financial data for all stocks in a watchlist or for a single stock, query financial data using a natural language interface, and receive responses in a structured format. Important considerations include error handling, rate limiting, data validation, BigQuery costs, MongoDB connection, and security measures for API keys and credentials.

elmer

Elmer is a user-friendly wrapper over common APIs for calling llm’s, with support for streaming and easy registration and calling of R functions. Users can interact with Elmer in various ways, such as interactive chat console, interactive method call, programmatic chat, and streaming results. Elmer also supports async usage for running multiple chat sessions concurrently, useful for Shiny applications. The tool calling feature allows users to define external tools that Elmer can request to execute, enhancing the capabilities of the chat model.

ai-hedge-fund

AI Hedge Fund is a proof of concept for an AI-powered hedge fund that explores the use of AI to make trading decisions. The project is for educational purposes only and simulates trading decisions without actual trading. It employs agents like Market Data Analyst, Valuation Agent, Sentiment Agent, Fundamentals Agent, Technical Analyst, Risk Manager, and Portfolio Manager to gather and analyze data, calculate risk metrics, and make trading decisions.

AI-TOD

AI-TOD is a dataset for tiny object detection in aerial images, containing 700,621 object instances across 28,036 images. Objects in AI-TOD are smaller with a mean size of 12.8 pixels compared to other aerial image datasets. To use AI-TOD, download xView training set and AI-TOD_wo_xview, then generate the complete dataset using the provided synthesis tool. The dataset is publicly available for academic and research purposes under CC BY-NC-SA 4.0 license.

langgraph4j

Langgraph4j is a Java library for language processing tasks such as text classification, sentiment analysis, and named entity recognition. It provides a set of tools and algorithms for analyzing text data and extracting useful information. The library is designed to be efficient and easy to use, making it suitable for both research and production applications.

sec-parser

The `sec-parser` project simplifies extracting meaningful information from SEC EDGAR HTML documents by organizing them into semantic elements and a tree structure. It helps in parsing SEC filings for financial and regulatory analysis, analytics and data science, AI and machine learning, causal AI, and large language models. The tool is especially beneficial for AI, ML, and LLM applications by streamlining data pre-processing and feature extraction.

RooFlow

RooFlow is a VS Code extension that enhances AI-assisted development by providing persistent project context and optimized mode interactions. It reduces token consumption and streamlines workflow by integrating Architect, Code, Test, Debug, and Ask modes. The tool simplifies setup, offers real-time updates, and provides clearer instructions through YAML-based rule files. It includes components like Memory Bank, System Prompts, VS Code Integration, and Real-time Updates. Users can install RooFlow by downloading specific files, placing them in the project structure, and running an insert-variables script. They can then start a chat, select a mode, interact with Roo, and use the 'Update Memory Bank' command for synchronization. The Memory Bank structure includes files for active context, decision log, product context, progress tracking, and system patterns. RooFlow features persistent context, real-time updates, mode collaboration, and reduced token consumption.

PentestGPT

PentestGPT is a penetration testing tool empowered by ChatGPT, designed to automate the penetration testing process. It operates interactively to guide penetration testers in overall progress and specific operations. The tool supports solving easy to medium HackTheBox machines and other CTF challenges. Users can use PentestGPT to perform tasks like testing connections, using different reasoning models, discussing with the tool, searching on Google, and generating reports. It also supports local LLMs with custom parsers for advanced users.

YuE

YuE (乐) is an open-source foundation model designed for music generation, specifically transforming lyrics into full songs. It can generate complete songs in various genres and vocal styles, ensuring a polished and cohesive result. The model requires significant GPU memory for generating long sequences and recommends specific configurations for optimal performance. Users can customize the number of sessions for memory usage. The tool provides a quickstart guide for generating music using Transformers and includes tips for execution time and tag selection. The project is licensed under Creative Commons Attribution Non Commercial 4.0.

giskard

Giskard is an open-source Python library that automatically detects performance, bias & security issues in AI applications. The library covers LLM-based applications such as RAG agents, all the way to traditional ML models for tabular data.

exospherehost

Exosphere is an open source infrastructure designed to run AI agents at scale for large data and long running flows. It allows developers to define plug and playable nodes that can be run on a reliable backbone in the form of a workflow, with features like dynamic state creation at runtime, infinite parallel agents, persistent state management, and failure handling. This enables the deployment of production agents that can scale beautifully to build robust autonomous AI workflows.

For similar tasks

autolabel

Autolabel is a Python library designed to label, clean, and enrich text datasets using Large Language Models (LLMs). It provides a simple 3-step process for labeling data, supports various NLP tasks, and offers features like confidence estimation, explanations, and state management. Users can access Refuel hosted LLMs for labeling and confidence estimation, and the library supports commercial and open source LLMs from providers like OpenAI, Anthropic, HuggingFace, and Google. Autolabel aims to streamline the labeling process for machine learning tasks by leveraging state-of-the-art LLM techniques and minimizing costs and experimentation time.

awesome-open-data-annotation

At ZenML, we believe in the importance of annotation and labeling workflows in the machine learning lifecycle. This repository showcases a curated list of open-source data annotation and labeling tools that are actively maintained and fit for purpose. The tools cover various domains such as multi-modal, text, images, audio, video, time series, and other data types. Users can contribute to the list and discover tools for tasks like named entity recognition, data annotation for machine learning, image and video annotation, text classification, sequence labeling, object detection, and more. The repository aims to help users enhance their data-centric workflows by leveraging these tools.

For similar jobs

weave

Weave is a toolkit for developing Generative AI applications, built by Weights & Biases. With Weave, you can log and debug language model inputs, outputs, and traces; build rigorous, apples-to-apples evaluations for language model use cases; and organize all the information generated across the LLM workflow, from experimentation to evaluations to production. Weave aims to bring rigor, best-practices, and composability to the inherently experimental process of developing Generative AI software, without introducing cognitive overhead.

LLMStack

LLMStack is a no-code platform for building generative AI agents, workflows, and chatbots. It allows users to connect their own data, internal tools, and GPT-powered models without any coding experience. LLMStack can be deployed to the cloud or on-premise and can be accessed via HTTP API or triggered from Slack or Discord.

VisionCraft

The VisionCraft API is a free API for using over 100 different AI models. From images to sound.

kaito

Kaito is an operator that automates the AI/ML inference model deployment in a Kubernetes cluster. It manages large model files using container images, avoids tuning deployment parameters to fit GPU hardware by providing preset configurations, auto-provisions GPU nodes based on model requirements, and hosts large model images in the public Microsoft Container Registry (MCR) if the license allows. Using Kaito, the workflow of onboarding large AI inference models in Kubernetes is largely simplified.

PyRIT

PyRIT is an open access automation framework designed to empower security professionals and ML engineers to red team foundation models and their applications. It automates AI Red Teaming tasks to allow operators to focus on more complicated and time-consuming tasks and can also identify security harms such as misuse (e.g., malware generation, jailbreaking), and privacy harms (e.g., identity theft). The goal is to allow researchers to have a baseline of how well their model and entire inference pipeline is doing against different harm categories and to be able to compare that baseline to future iterations of their model. This allows them to have empirical data on how well their model is doing today, and detect any degradation of performance based on future improvements.

tabby

Tabby is a self-hosted AI coding assistant, offering an open-source and on-premises alternative to GitHub Copilot. It boasts several key features: * Self-contained, with no need for a DBMS or cloud service. * OpenAPI interface, easy to integrate with existing infrastructure (e.g Cloud IDE). * Supports consumer-grade GPUs.

spear

SPEAR (Simulator for Photorealistic Embodied AI Research) is a powerful tool for training embodied agents. It features 300 unique virtual indoor environments with 2,566 unique rooms and 17,234 unique objects that can be manipulated individually. Each environment is designed by a professional artist and features detailed geometry, photorealistic materials, and a unique floor plan and object layout. SPEAR is implemented as Unreal Engine assets and provides an OpenAI Gym interface for interacting with the environments via Python.

Magick

Magick is a groundbreaking visual AIDE (Artificial Intelligence Development Environment) for no-code data pipelines and multimodal agents. Magick can connect to other services and comes with nodes and templates well-suited for intelligent agents, chatbots, complex reasoning systems and realistic characters.