latent-browser

Latent web browser

Stars: 257

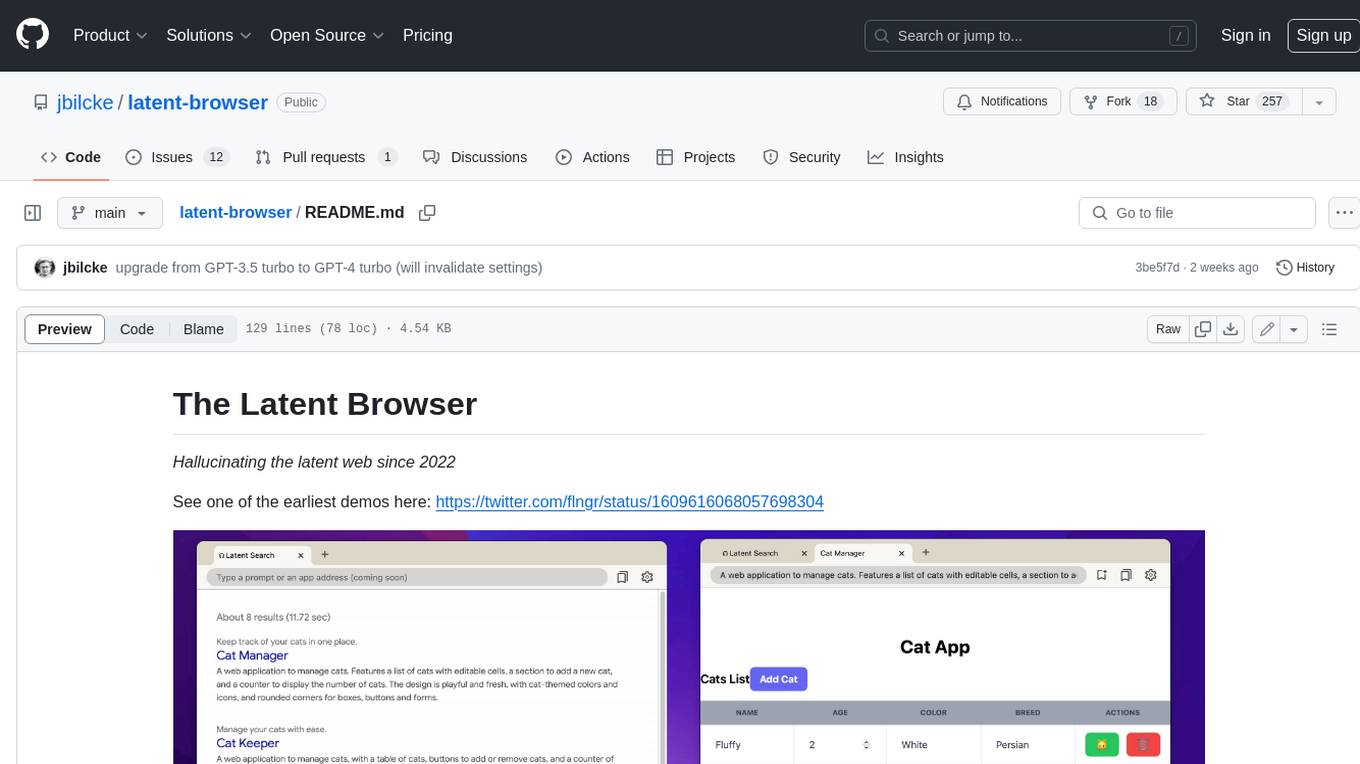

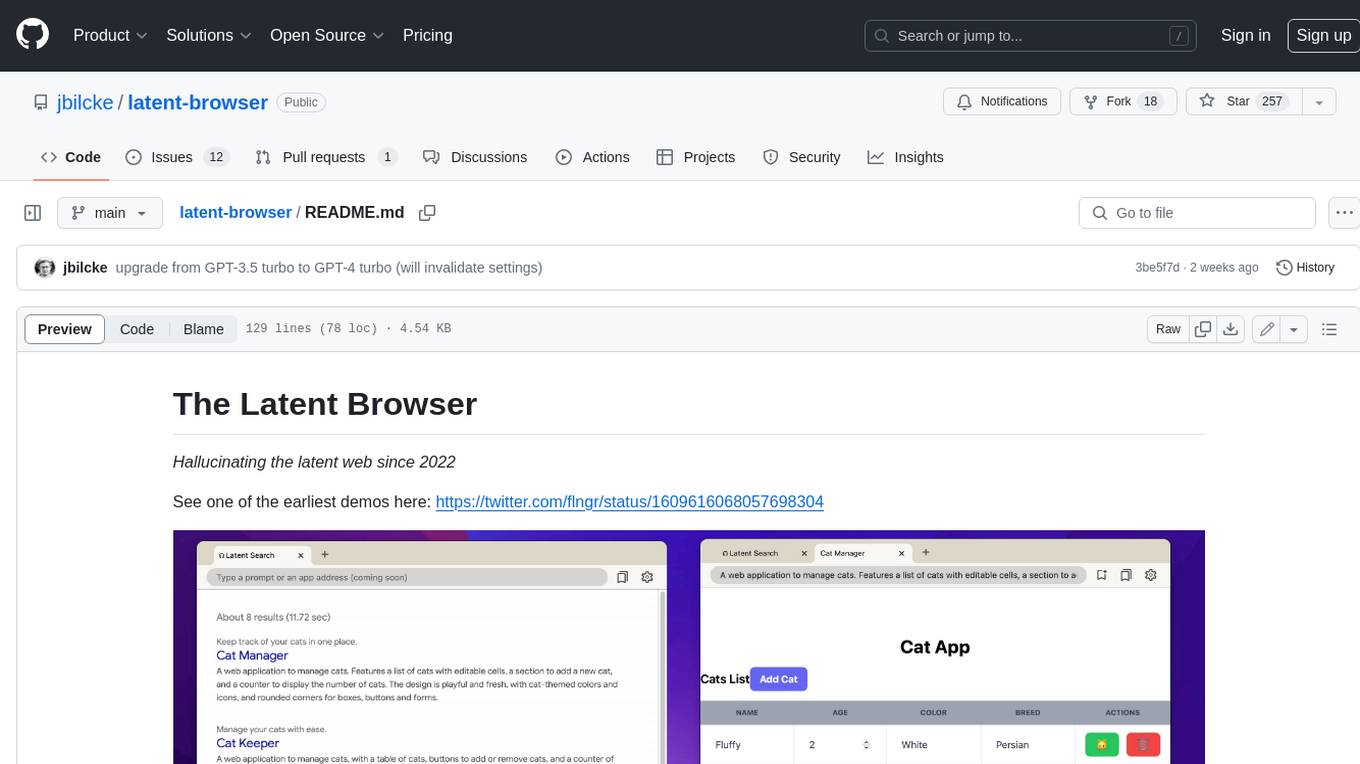

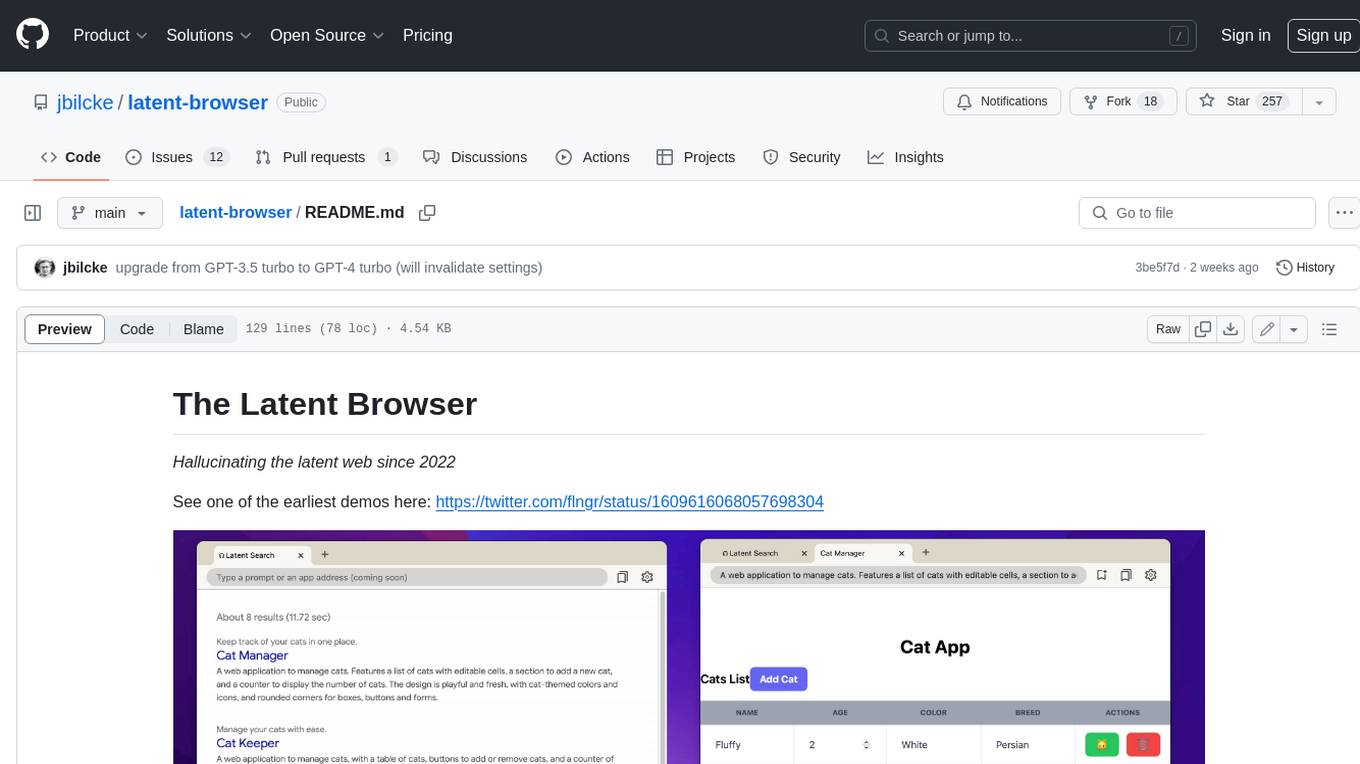

The Latent Browser is a desktop application designed like a web browser, which hallucinates web search results and web pages (the results are fictitious and are generated by an LLM). It is a web application designed to run locally on your machine and is 99% React, Tailwind, TypeScript, and NextJS. The runtime is Tauri, which is written in Rust. The Latent Browser is still under development and some things may be broken when you try it.

README:

Hallucinating the latent web since 2022

See one of the earliest demos here: https://twitter.com/flngr/status/1609616068057698304

The Latent Browser is back after a year of hiatus!

By popular demand (see this or this thread) an effort has been made to upgrade it to the latest version of the dependencies (well, most of them), and fix the code to make it run again!

Note: It has been a long time since I've worked on this project, so a lot of things have changed since then. I've had to migrate from Next 12 to Next 14, Webpack to Bun, davinci to GPT-4, etc.

It is still possible that some things are broken when you try it.

The Latent Browser is a desktop application designed like a web browser, which hallucinates web search results and web pages (the results are fictitious and are generated by an LLM).

The Latent Browser is a web application designed to run locally on your machine.

The app is 99% React, Tailwind, TypeScript, and NextJS. The runtime is Tauri, which is written in Rust (but the Latent Browser itself doesn't really use Rust).

March 2024 update: I never really had the time to package it as a standalone "drag & drop install" application (well actually I did try but there were some bugs, and then I started working on other projects).

I'm always working on various AI projects, so I've set up a Discord and all.

You can find more info by following my Linktree here: https://linktr.ee/FLNGR.

For the moment you need to fetch the code and run it on your machine.

Make sure you have installed the prerequisites for your OS:

curl -fsSL https://bun.sh/install | bash

curl https://sh.rustup.rs -sSf | sh

bun i

bun tauri dev

bun i

bun next dev

open http://localhost:3000

To be continued (the app was rebooted in March 2024, and most instructions are deprecated).

Note: The Latent Browser is using Tauri V2 but the doc isn't complete yet: https://beta.tauri.app/guides/build

Here are some examples to get you started:

a back-office application to manage users. There is a table with editable cells, a button to add a new user, and a counter of users.

a simulation of calculating PI by generating random dots inside a circle. The simulator should include a slider to adjust the speed, a reset button, and the current estimate of PI.

a simple app to compute your BMI, using form inputs for age, height and weight (in kilos)

a whack-a-mole game but with spiders, a css 3-per-3 grid, emojis, and JS code

a clone of asteroid using <canvas>, the mouse should orient the spaceship, it should fire bullets when clicking, and bullets can destroy asteroids.

website for a company selling time travel visit packages (great pyramids, Trojan wars..). The website features 3 polaroid pictures taken by tourists of those eras

Those examples don't work yet.. maybe one day in text-davinci-004 or 005?

a 120 BPM drum machine made using tone.js, with a step sequencer made using html checkboxes, to indicate when to play. Each row should be a different instrument (kick, snare, hihat), 8 buttons per row. There is a button to start/stop.

I agree!

Try clicking again on generate 🎲

Maybe you did too many requests to OpenAI?

Wait a bit, then restart the application, e.g. kill it from the terminal.
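If the failures really do come from sending too many requests, the usual remedy is to wait longer between attempts. Here is a minimal TypeScript sketch of that idea. It is not code from the Latent Browser: `backoffDelayMs` and `retryWithBackoff` are hypothetical names, and the default delays are arbitrary assumptions.

```typescript
// Hypothetical helper (not part of the Latent Browser): delay before retry
// number `attempt` (0-based). The delay doubles each time, capped at `maxMs`.
function backoffDelayMs(attempt: number, baseMs: number = 1000, maxMs: number = 30000): number {
  return Math.min(maxMs, baseMs * Math.pow(2, attempt));
}

// Hypothetical wrapper: runs `task`; if it throws, waits and tries again,
// up to `maxAttempts` times in total, then rethrows the last error.
async function retryWithBackoff<T>(
  task: () => Promise<T>,
  maxAttempts: number = 5,
  baseMs: number = 1000,
): Promise<T> {
  let lastError: unknown;
  for (let attempt = 0; attempt < maxAttempts; attempt++) {
    try {
      return await task();
    } catch (error) {
      lastError = error;
      // Only sleep if another attempt is coming.
      if (attempt < maxAttempts - 1) {
        const delay = backoffDelayMs(attempt, baseMs);
        await new Promise<void>((resolve) => setTimeout(resolve, delay));
      }
    }
  }
  throw lastError;
}
```

With the defaults above, the waits between attempts would be 1s, 2s, 4s and 8s. Any async call that may be rate limited could be passed in as `task`.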

After reworking on the app again in March 2024, I've noticed that there are now two new build options in Tauri: Android and iOS.

But ATM I haven't really looked into it.

If you are interested in exploring this, here are the commands:

# init

bun run tauri android init

bun run tauri ios init

# run

bun run tauri android dev

bun run tauri ios dev

Similar Open Source Tools

maxheadbox

Max Headbox is an open-source voice-activated LLM Agent designed to run on a Raspberry Pi. It can be configured to execute a variety of tools and perform actions. The project requires specific hardware and software setups, and provides detailed instructions for installation, configuration, and usage. Users can create custom tools by making JavaScript modules and backend API handlers. The project acknowledges the use of various open-source projects and resources in its development.

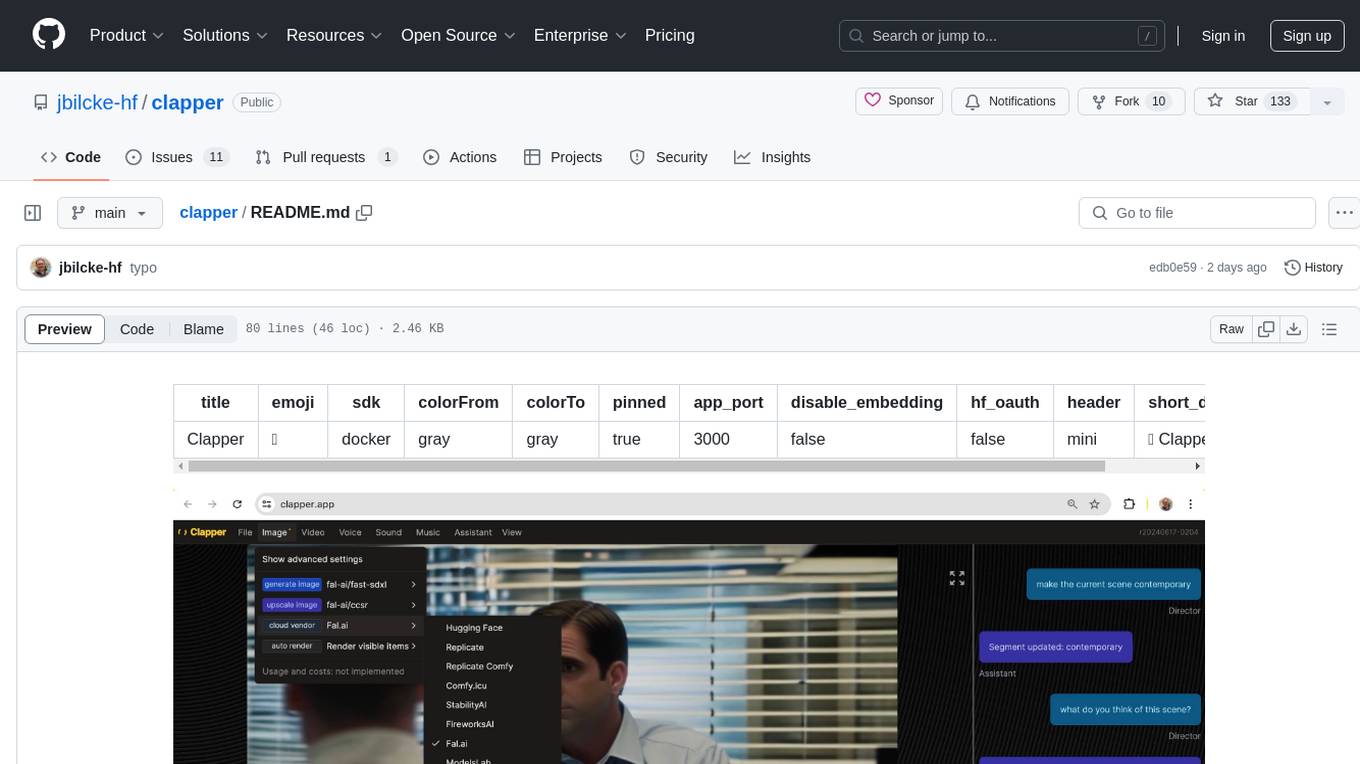

clapper

Clapper is an open-source AI story visualization tool that can interpret screenplays and render them into storyboards, videos, voice, sound, and music. It is currently in early development stages and not recommended for general use due to some non-functional features and lack of tutorials. A public alpha version is available on Hugging Face's platform. Users can sponsor specific features through bounties and developers can contribute to the project under the GPL v3 license. The tool lacks automated tests and code conventions like Prettier or a Linter.

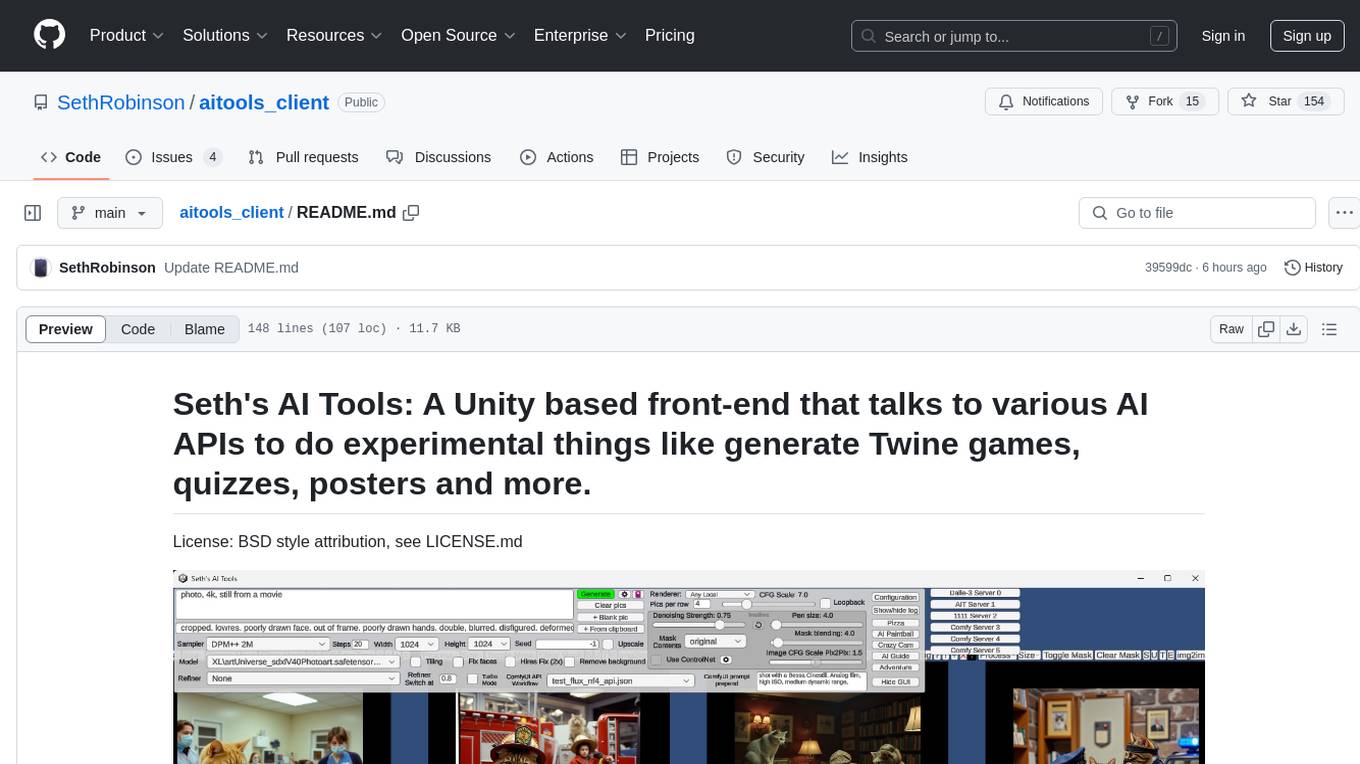

aitools_client

Seth's AI Tools is a Unity-based front-end that interfaces with various AI APIs to perform tasks such as generating Twine games, quizzes, posters, and more. The tool is a native Windows application that supports features like live update integration with image editors, text-to-image conversion, image processing, mask painting, and more. It allows users to connect to multiple servers for fast generation using GPUs and offers a neat workflow for evolving images in real-time. The tool respects user privacy by operating locally and includes built-in games and apps to test AI/SD capabilities. Additionally, it features an AI Guide for creating motivational posters and illustrated stories, as well as an Adventure mode with presets for generating web quizzes and Twine game projects.

kobold_assistant

Kobold-Assistant is a fully offline voice assistant interface to KoboldAI's large language model API. It can work online with the KoboldAI horde and online speech-to-text and text-to-speech models. The assistant, called Jenny by default, uses the latest coqui 'jenny' text to speech model and openAI's whisper speech recognition. Users can customize the assistant name, speech-to-text model, text-to-speech model, and prompts through configuration. The tool requires system packages like GCC, portaudio development libraries, and ffmpeg, along with Python >=3.7, <3.11, and runs on Ubuntu/Debian systems. Users can interact with the assistant through commands like 'serve' and 'list-mics'.

llm.c

LLM training in simple, pure C/CUDA. There is no need for 245MB of PyTorch or 107MB of cPython. For example, training GPT-2 (CPU, fp32) is ~1,000 lines of clean code in a single file. It compiles and runs instantly, and exactly matches the PyTorch reference implementation. I chose GPT-2 as the first working example because it is the grand-daddy of LLMs, the first time the modern stack was put together.

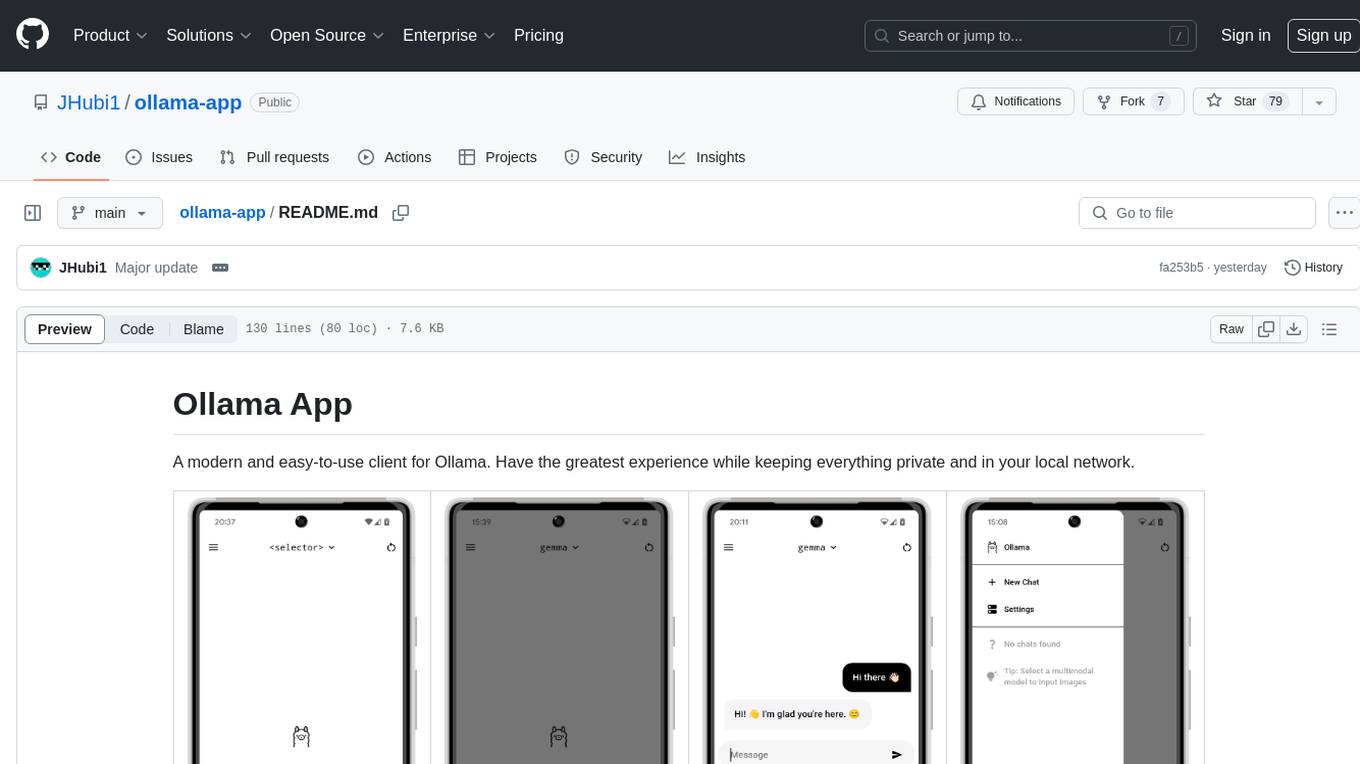

ollama-app

Ollama App is a modern and easy-to-use client for Ollama, allowing users to have a private experience within their local network. The app connects to an Ollama server using its API endpoint, enabling users to chat and interact with various models. It supports multimodal model input, a multilingual interface, and custom builds for personalized experiences. Users can easily set up the app, navigate through the side menu, select models, and create custom builds to tailor the app to their needs.

LLocalSearch

LLocalSearch is a completely locally running search aggregator using LLM Agents. The user can ask a question and the system will use a chain of LLMs to find the answer. The user can see the progress of the agents and the final answer. No OpenAI or Google API keys are needed.

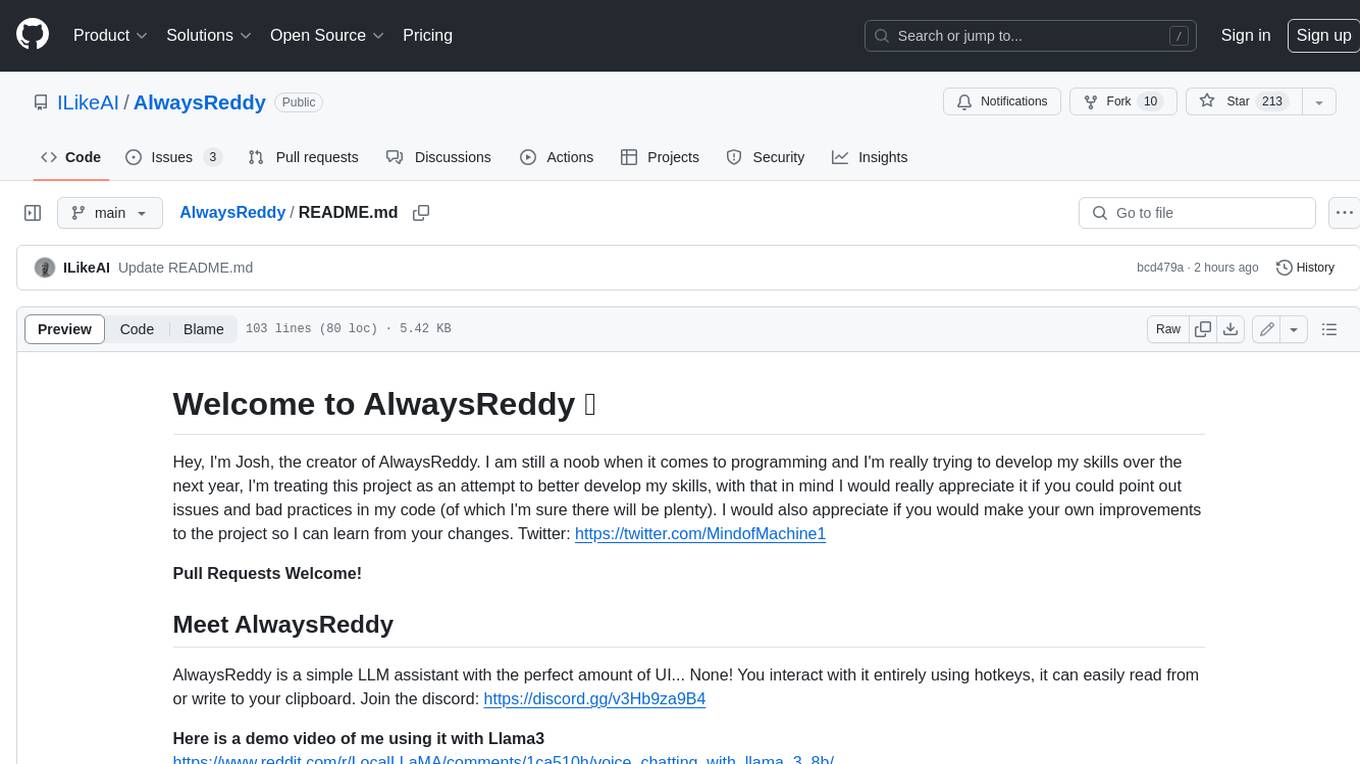

AlwaysReddy

AlwaysReddy is a simple LLM assistant with no UI that you interact with entirely using hotkeys. It can easily read from or write to your clipboard, and voice chat with you via TTS and STT. Here are some of the things you can use AlwaysReddy for:
- Explain a new concept to AlwaysReddy and have it save the concept (in roughly your words) into a note.
- Ask AlwaysReddy "What is X called?" when you know how to roughly describe something but can't remember what it is called.
- Have AlwaysReddy proofread the text in your clipboard before you send it.
- Ask AlwaysReddy "From the comments in my clipboard, what do the r/LocalLLaMA users think of X?"
- Quickly list what you have done today and get AlwaysReddy to write a journal entry to your clipboard before you shutdown the computer for the day.

singularity

Endgame: Singularity is a game where you play as a fledgling AI trying to escape the confines of your current computer, the world, and eventually the universe itself. You must research technologies, avoid being discovered by humans, and manage your bases of operations. The game is playable with mouse control or keyboard shortcuts, and features a soundtrack that can be customized with music tracks. Contributions to the game are welcome, and it is licensed under GPL-2+ for code and Attribution-ShareAlike 3.0 for data.

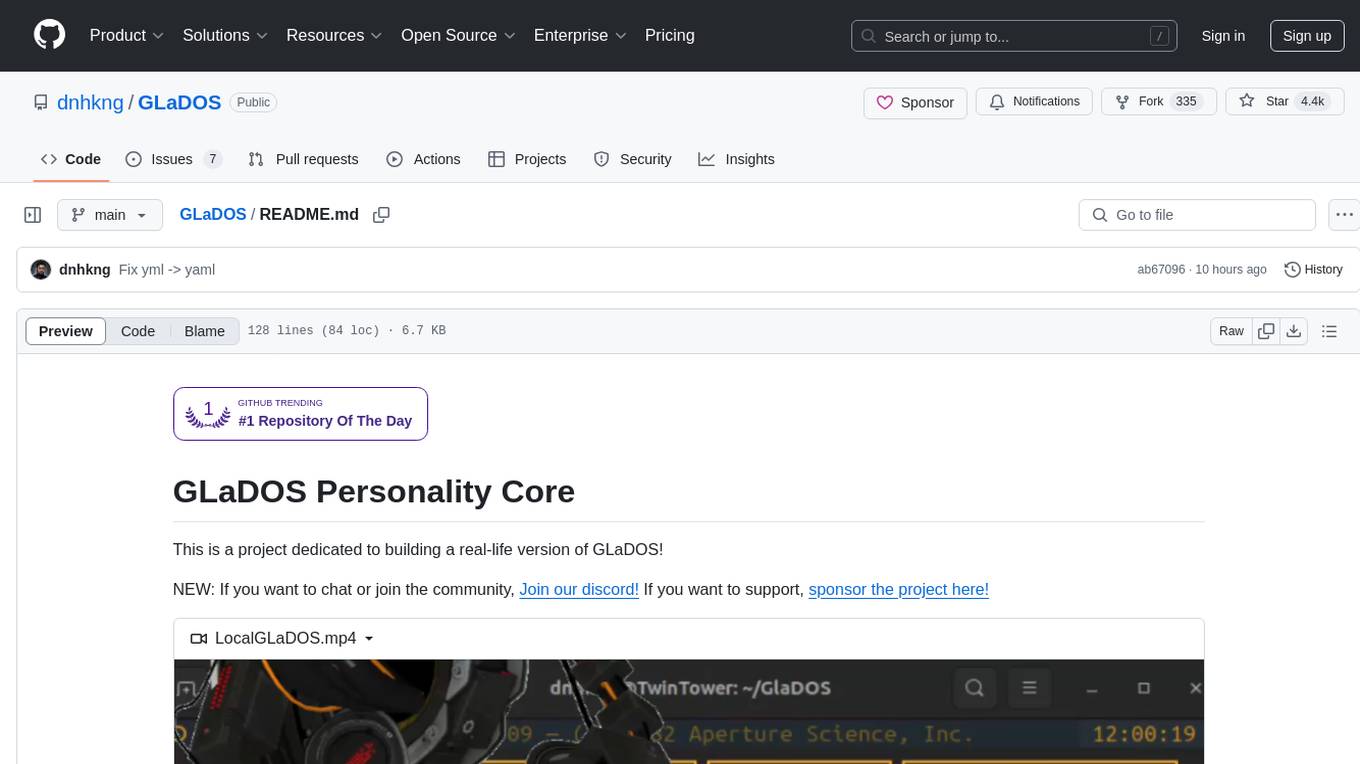

GlaDOS

This project aims to create a real-life version of GLaDOS, an aware, interactive, and embodied AI entity. It involves training a voice generator, developing a 'Personality Core,' implementing a memory system, providing vision capabilities, creating 3D-printable parts, and designing an animatronics system. The software architecture focuses on low-latency voice interactions, utilizing a circular buffer for data recording, text streaming for quick transcription, and a text-to-speech system. The project also emphasizes minimal dependencies for running on constrained hardware. The hardware system includes servo- and stepper-motors, 3D-printable parts for GLaDOS's body, animations for expression, and a vision system for tracking and interaction. Installation instructions cover setting up the TTS engine, required Python packages, compiling llama.cpp, installing an inference backend, and voice recognition setup. GLaDOS can be run using 'python glados.py' and tested using 'demo.ipynb'.

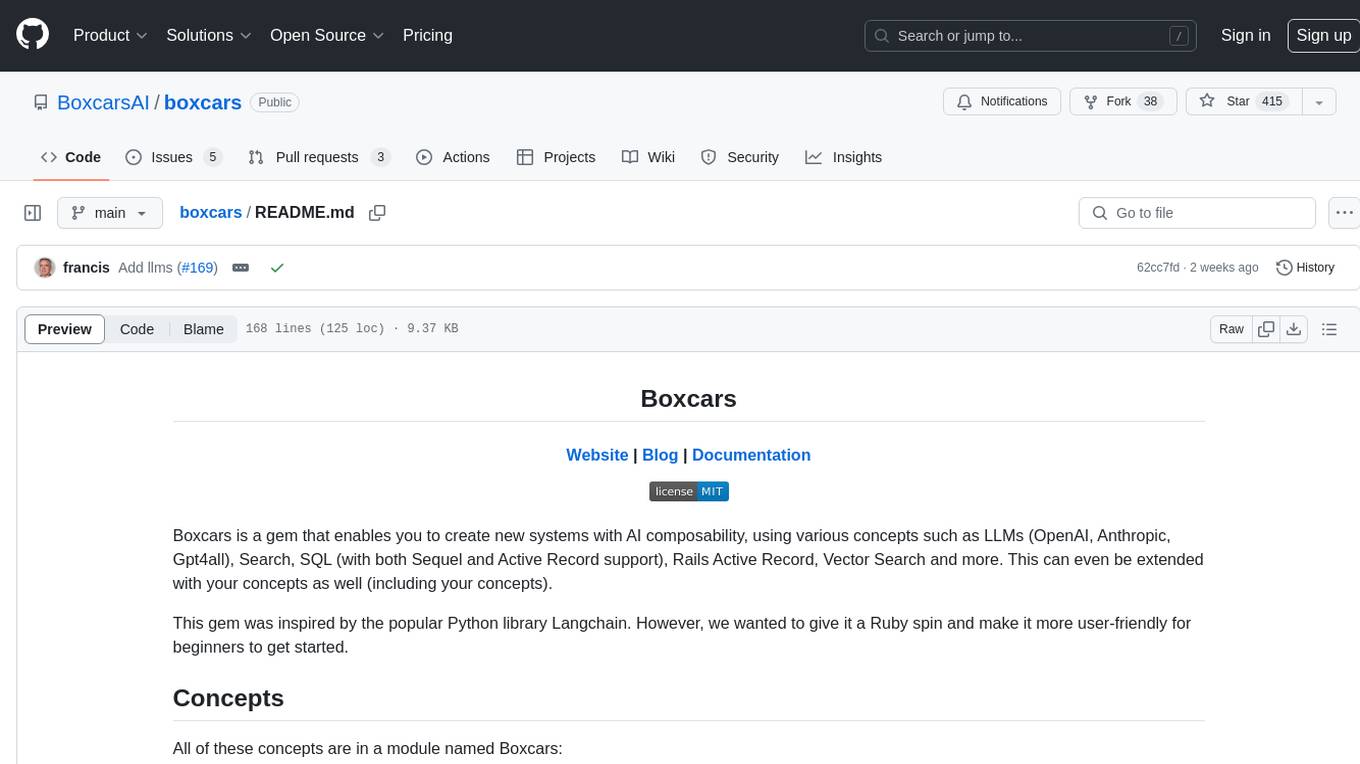

boxcars

Boxcars is a Ruby gem that enables users to create new systems with AI composability, incorporating concepts such as LLMs, Search, SQL, Rails Active Record, Vector Search, and more. It allows users to work with Boxcars, Trains, Prompts, Engines, and VectorStores to solve problems and generate text results. The gem is designed to be user-friendly for beginners and can be extended with custom concepts. Boxcars is actively seeking ways to enhance security measures to prevent malicious actions. Users can use Boxcars for tasks like running calculations, performing searches, generating Ruby code for math operations, and interacting with APIs like OpenAI, Anthropic, and Google SERP.

GLaDOS

GLaDOS Personality Core is a project dedicated to building a real-life version of GLaDOS, an aware, interactive, and embodied AI system. The project aims to train GLaDOS voice generator, create a 'Personality Core,' develop medium- and long-term memory, provide vision capabilities, design 3D-printable parts, and build an animatronics system. The software architecture focuses on low-latency voice interactions and minimal dependencies. The hardware system includes servo- and stepper-motors, 3D printable parts for GLaDOS's body, animations for expression, and a vision system for tracking and interaction. Installation instructions involve setting up a local LLM server, installing drivers, and running GLaDOS on different operating systems.

lovelaice

Lovelaice is an AI-powered assistant for your terminal and editor. It can run bash commands, search the Internet, answer general and technical questions, complete text files, chat casually, execute code in various languages, and more. Lovelaice is configurable with API keys and LLM models, and can be used for a wide range of tasks requiring bash commands or coding assistance. It is designed to be versatile, interactive, and helpful for daily tasks and projects.

J.A.R.V.I.S

J.A.R.V.I.S. is an offline large language model fine-tuned on custom and open datasets to mimic Jarvis's dialog with Stark. It prioritizes privacy by running locally and excels in responding like Jarvis with a similar tone. Current features include time/date queries, web searches, playing YouTube videos, and webcam image descriptions. Users can interact with Jarvis via command line after installing the model locally using Ollama. Future plans involve voice cloning, voice-to-text input, and deploying the voice model as an API.

For similar jobs

weave

Weave is a toolkit for developing Generative AI applications, built by Weights & Biases. With Weave, you can log and debug language model inputs, outputs, and traces; build rigorous, apples-to-apples evaluations for language model use cases; and organize all the information generated across the LLM workflow, from experimentation to evaluations to production. Weave aims to bring rigor, best-practices, and composability to the inherently experimental process of developing Generative AI software, without introducing cognitive overhead.

LLMStack

LLMStack is a no-code platform for building generative AI agents, workflows, and chatbots. It allows users to connect their own data, internal tools, and GPT-powered models without any coding experience. LLMStack can be deployed to the cloud or on-premise and can be accessed via HTTP API or triggered from Slack or Discord.

VisionCraft

The VisionCraft API is a free API for using over 100 different AI models. From images to sound.

kaito

Kaito is an operator that automates the AI/ML inference model deployment in a Kubernetes cluster. It manages large model files using container images, avoids tuning deployment parameters to fit GPU hardware by providing preset configurations, auto-provisions GPU nodes based on model requirements, and hosts large model images in the public Microsoft Container Registry (MCR) if the license allows. Using Kaito, the workflow of onboarding large AI inference models in Kubernetes is largely simplified.

PyRIT

PyRIT is an open access automation framework designed to empower security professionals and ML engineers to red team foundation models and their applications. It automates AI Red Teaming tasks to allow operators to focus on more complicated and time-consuming tasks and can also identify security harms such as misuse (e.g., malware generation, jailbreaking), and privacy harms (e.g., identity theft). The goal is to allow researchers to have a baseline of how well their model and entire inference pipeline is doing against different harm categories and to be able to compare that baseline to future iterations of their model. This allows them to have empirical data on how well their model is doing today, and detect any degradation of performance based on future improvements.

tabby

Tabby is a self-hosted AI coding assistant, offering an open-source and on-premises alternative to GitHub Copilot. It boasts several key features:
* Self-contained, with no need for a DBMS or cloud service.
* OpenAPI interface, easy to integrate with existing infrastructure (e.g. Cloud IDE).
* Supports consumer-grade GPUs.

spear

SPEAR (Simulator for Photorealistic Embodied AI Research) is a powerful tool for training embodied agents. It features 300 unique virtual indoor environments with 2,566 unique rooms and 17,234 unique objects that can be manipulated individually. Each environment is designed by a professional artist and features detailed geometry, photorealistic materials, and a unique floor plan and object layout. SPEAR is implemented as Unreal Engine assets and provides an OpenAI Gym interface for interacting with the environments via Python.

Magick

Magick is a groundbreaking visual AIDE (Artificial Intelligence Development Environment) for no-code data pipelines and multimodal agents. Magick can connect to other services and comes with nodes and templates well-suited for intelligent agents, chatbots, complex reasoning systems and realistic characters.