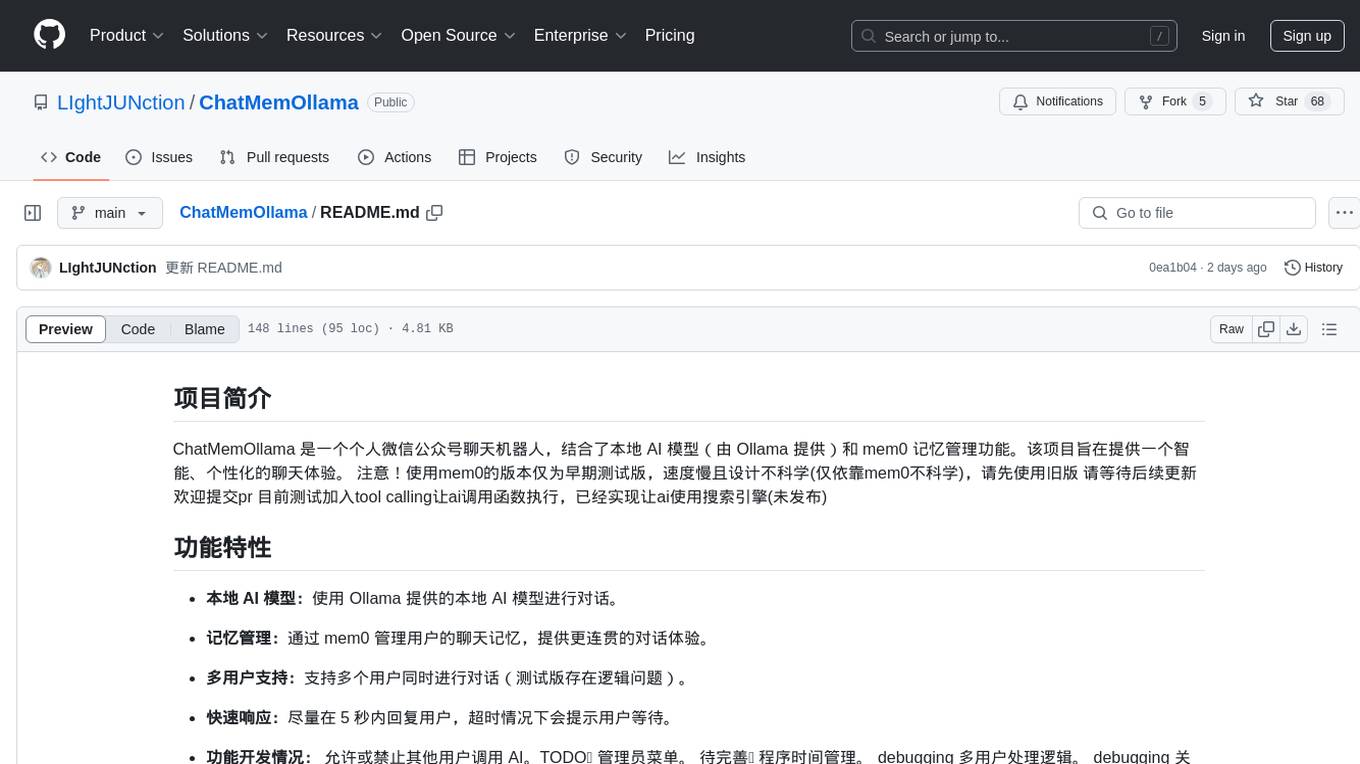

ChatMemOllama

A personal WeChat Official Account chatbot that uses a local AI model (served by Ollama) and mem0 for memory management.

Stars: 68

ChatMemOllama is a personal WeChat Official Account chatbot that combines a local AI model (served by Ollama) with mem0 memory management. The project aims to provide an intelligent, personalized chat experience. It uses the local model for conversation and mem0 to keep dialogue coherent, supports multiple simultaneous users (with known logic issues in the test version), and tries to reply within 5 seconds, prompting the user to wait on timeout. It can allow or block other users from calling the AI. Ongoing development tasks include debugging the multi-user handling logic and keyword replies; completed tasks include basic conversation and tool calling. The final goal is pending completion of prerequisite task testing.

README:

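ChatMemOllama is a personal WeChat Official Account chatbot that combines a local AI model (served by Ollama) with mem0 memory management. The project aims to provide an intelligent, personalized chat experience.

Note: the version that uses mem0 is only an early test build. It is slow and its design is unsound (relying on mem0 alone is not a sound approach), so please use the old version for now and wait for further updates. PRs are welcome. Tool calling, which lets the AI call functions, is currently being tested; the AI can already use a search engine (not yet released).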

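- Local AI model: conversations run on a local AI model served by Ollama.

- Memory management: mem0 manages each user's chat memory to keep conversations coherent.

- Multi-user support: multiple users can chat at the same time (the test version has logic issues).

- Fast response: the bot tries to reply within 5 seconds; on timeout it asks the user to wait.

Feature development status: Allow or block other users from calling the AI. TODO📌 Admin menu. Needs work📌 Program time management. debugging Multi-user handling logic. debugging Keyword replies. ✅ Basic conversation. ✅ tool calling. Final goal: waiting for prerequisite tasks to finish testing.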

- Python 3.7+

- Flask

- FastAPI

- WeChatPy

- Ollama

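- Clone the repository:

git clone https://github.com/LIghtJUNction/ChatMemOllama.git

cd ChatMemOllama

- Install dependencies:

pip install -r requirements.txt

2.1 Installing and using Qdrant: (1) install via Docker, or (2) install via WSL. If you get a "target machine actively refused the connection" error, check that both Ollama and Qdrant are running in the background.

- Configure environment variables:

export WECHAT_TOKEN='your_wechat_token'

export APPID='your_appid'

export APPSECRET='your_appsecret'

export EncodingAESKey='your_encoding_aes_key'

3.1 Fill in the configuration in config.json.

- Run the application:

python justchat.py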
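Before starting the app, it can help to confirm that the setup steps above actually took effect. The following is a minimal sketch, not part of the project: it checks that the four environment variables are set and that Ollama and Qdrant accept TCP connections. The ports 11434 (Ollama) and 6333 (Qdrant) are the usual defaults and are an assumption here; the function names are hypothetical.

```python
import os
import socket

# Environment variable names taken from the setup step above.
REQUIRED_ENV = ("WECHAT_TOKEN", "APPID", "APPSECRET", "EncodingAESKey")

# Assumed default local ports: 11434 for Ollama, 6333 for Qdrant.
SERVICES = {"ollama": ("127.0.0.1", 11434), "qdrant": ("127.0.0.1", 6333)}


def missing_env(names=REQUIRED_ENV, environ=None):
    """Return the variable names that are unset or empty."""
    environ = os.environ if environ is None else environ
    return [name for name in names if not environ.get(name)]


def port_open(host, port, timeout=1.0):
    """Return True if a TCP connection to (host, port) succeeds."""
    try:
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        return False


def preflight(services=SERVICES, environ=None):
    """Collect readable problems; an empty list means nothing was found."""
    problems = [
        f"environment variable {name} is not set"
        for name in missing_env(environ=environ)
    ]
    for name, (host, port) in services.items():
        if not port_open(host, port):
            problems.append(f"{name} is not reachable at {host}:{port}")
    return problems


if __name__ == "__main__":
    for problem in preflight():
        print("WARNING:", problem)
```

A refused connection here corresponds to the "actively refused" error mentioned in step 2.1, so an unreachable service is reported before the first WeChat message arrives.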

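Contributions of any kind are welcome! Please read the following guidelines before submitting a PR:

- Fork the repository and create a new branch.

- Commit your changes and push them to your branch.

- Open a Pull Request and describe your changes.

This project is distributed under the Apache 2.0 license. See the LICENSE file for details.

If you have any questions or suggestions, please contact me by email: [email protected]

Thank you all for liking this project! 😊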

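The author's idea is to build this AI into a personal agent with persistent memory and easy access to local files. Below are some of my takeaways and plans:

A private AI assistant lets me chat quickly from my phone anytime, anywhere 💬, and also gives the followers of my Official Account a better experience. This is entirely feasible. As a first step I explored the official WeChat API, connected it to my Official Account, and wrote the first version of the code, which was finished on the evening of the project's first day.

- mem0 speed: vector store retrieval through mem0 is very slow, slower than I expected.

- WeChat timeout limit: WeChat Official Accounts enforce a 5-second timeout, which at one point made me suspect a logic error in the server's response handling.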
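One common way to live with the 5-second limit is to run generation in a worker thread and answer with a wait notice when the deadline passes. The sketch below illustrates that idea under stated assumptions; it is not the project's code. `reply_within`, `pending`, the 4.5-second deadline, and the notice text are all hypothetical.

```python
from concurrent.futures import ThreadPoolExecutor, TimeoutError as FutureTimeout

WAIT_NOTICE = "Still thinking. Please send any message in a moment to get the answer."

executor = ThreadPoolExecutor(max_workers=4)
pending = {}  # user id -> Future of a reply that missed the deadline


def reply_within(user_id, generate, text, deadline=4.5):
    """Return the model reply if it is ready within `deadline` seconds.

    If an earlier reply for this user is still pending, wait for that one
    instead of starting a new generation (the new text is ignored).
    """
    future = pending.pop(user_id, None)
    if future is None:
        future = executor.submit(generate, text)
    try:
        return future.result(timeout=deadline)
    except FutureTimeout:
        pending[user_id] = future  # generation keeps running in the background
        return WAIT_NOTICE
```

Here `generate` stands for whatever function calls the local model. Keeping the unfinished future means a slow answer is delivered on the user's next message rather than being lost.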

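- Program startup: the code waits at `@post` for a POST request.

- Message handling: the request parameters are extracted into `msg_info` in JSON form; the message is then decrypted, a reply is generated and encrypted, and the encrypted content is returned as XML.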
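The decrypt, reply, encrypt sequence can be sketched as a single function. This is an illustration only: `handle_post` and the signatures of the injected `decrypt`, `generate_reply`, and `encrypt` callables are assumptions made for the sketch, and real code would presumably wrap the WeChatPy crypto helpers instead. The plain-text reply layout follows the standard WeChat passive text reply XML.

```python
import time
import xml.etree.ElementTree as ET

REPLY_TEMPLATE = (
    "<xml>"
    "<ToUserName><![CDATA[{to_user}]]></ToUserName>"
    "<FromUserName><![CDATA[{from_user}]]></FromUserName>"
    "<CreateTime>{created}</CreateTime>"
    "<MsgType><![CDATA[text]]></MsgType>"
    "<Content><![CDATA[{content}]]></Content>"
    "</xml>"
)


def handle_post(msg_info, body, decrypt, generate_reply, encrypt):
    """Decrypt the request, generate a reply, and return it encrypted.

    msg_info holds the request's query parameters (signature, timestamp,
    nonce). The three callables are injected and hypothetical.
    """
    plain_xml = decrypt(body, msg_info)
    incoming = ET.fromstring(plain_xml)
    user = incoming.findtext("FromUserName")
    account = incoming.findtext("ToUserName")
    text = incoming.findtext("Content", default="")

    reply_xml = REPLY_TEMPLATE.format(
        to_user=user,        # the reply goes back to the sender
        from_user=account,   # and comes from the Official Account
        created=int(time.time()),
        content=generate_reply(user, text),
    )
    return encrypt(reply_xml, msg_info)
```

Passing the crypto steps in as callables keeps the flow testable without real keys. Note that a reply containing the sequence `]]>` would need escaping before being placed in the CDATA section.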

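Basic logic of the third version:

- Requests are accepted and answered asynchronously, and AI reply generation runs in separate threads.

- Combine the strengths of the first and second versions to achieve both timely answers and long-term memory.

- Use tool calling so that the AI decides on its own whether to save a memory or retrieve one.

- Dog-language translator: let the AI decide when to call a dog-language translator to encrypt messages.

- Local file access: let the AI read local files and tell the user what they contain.

- Automatic data collection: let the AI continuously gather material from the web and save it.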
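The tool-calling item above, where the AI chooses between saving and retrieving a memory, can be sketched as a small dispatcher. Everything here is hypothetical: the tool names, the in-memory dictionary standing in for mem0, and the keyword match standing in for vector search. Each tool call is assumed to be a dict with a `function` entry holding `name` and `arguments`, similar in shape to the tool calls returned by Ollama's chat API.

```python
memories = {}  # user id -> list of remembered strings (stand-in for mem0)


def save_memory(user_id, text):
    """Store one piece of text for the user."""
    memories.setdefault(user_id, []).append(text)
    return "saved"


def search_memory(user_id, query):
    """Naive keyword match; a real memory store would use vector search."""
    words = query.lower().split()
    hits = [
        memory
        for memory in memories.get(user_id, [])
        if any(word in memory.lower() for word in words)
    ]
    return "\n".join(hits) or "no matching memory"


TOOLS = {"save_memory": save_memory, "search_memory": search_memory}


def run_tool_calls(user_id, tool_calls):
    """Execute each tool call requested by the model and collect the results."""
    results = []
    for call in tool_calls:
        function = call["function"]
        handler = TOOLS.get(function["name"])
        if handler is None:
            results.append(f"unknown tool: {function['name']}")
            continue
        results.append(handler(user_id, **function["arguments"]))
    return results
```

The results would then be sent back to the model as tool messages so that it can write the final answer. Letting the model decide when to search avoids a slow memory lookup on every single message.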

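Because a personal computer has limited compute, the multi-user experience is bound to be poor. An admin menu is therefore useful:

- Entering admin mode: send `sudo su`. If the user's OpenID matches the admin ID (after verification), admin mode is entered.

Workflow: start the program and immediately send a message to the Official Account; the sender is registered as user zero.

Look up the randomly generated key in config.json and send it to the Official Account to complete authorization.

You are now in the admin menu; send help to the Official Account to see the available commands.

Send exit to leave.

Send sudo su to re-enter.

Once authentication succeeds, the program automatically records your OpenID and sets it as the admin; no further action is needed.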
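The authorization flow above can be modeled as a small state machine. This is a sketch under assumptions, not the project's implementation: the `AdminGate` class, its reply strings, and the key format are hypothetical, and writing the key to config.json is left out.

```python
import secrets


class AdminGate:
    """Tracks user zero, the one-time key, and whether the admin menu is open."""

    def __init__(self, key=None):
        self.key = key or secrets.token_hex(8)  # the app would store this in config.json
        self.user_zero = None   # first OpenID seen after startup
        self.admin_id = None    # OpenID recorded after successful authorization
        self.in_menu = False

    def handle(self, openid, text):
        """Return an admin-flow reply, or None when the message is ordinary chat."""
        text = text.strip()
        if self.user_zero is None:
            self.user_zero = openid
        if self.admin_id is None:
            # Only user zero can claim admin rights, and only with the key.
            matches = secrets.compare_digest(text.encode(), self.key.encode())
            if openid == self.user_zero and matches:
                self.admin_id = openid
                self.in_menu = True
                return "Authorized. Send help to see the commands."
            return None
        if openid != self.admin_id:
            return None
        if not self.in_menu:
            if text == "sudo su":
                self.in_menu = True
                return "Entered admin mode."
            return None
        if text == "exit":
            self.in_menu = False
            return "Left admin mode."
        if text == "help":
            return "Commands: help, exit"
        return "Unknown command. Send help."
```

Returning `None` for anything outside the admin flow lets the caller pass the message on to the normal chat path.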

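Finally, thank you all for liking this project! ✨ The number of stars it has received surprised me; it is far more than my previous repos got. This is a huge encouragement to me. Thank you!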

2024.10.9


Alternative AI tools for ChatMemOllama

Similar Open Source Tools


LabelQuick

LabelQuick_V2.0 is a fast image annotation tool designed and developed by the AI Horizon team. This version has been optimized and improved based on the previous version. It provides an intuitive interface and powerful annotation and segmentation functions to efficiently complete dataset annotation work. The tool supports video object tracking annotation, quick annotation by clicking, and various video operations. It introduces the SAM2 model for accurate and efficient object detection in video frames, reducing manual intervention and improving annotation quality. The tool is designed for Windows systems and requires a minimum of 6GB of memory.

xhs_ai_publisher

xhs_ai_publisher is an automation tool designed for publishing articles on the Xiaohongshu platform. It combines a graphical user interface with automation scripts to generate content using large model technology. The tool simplifies the content creation and publishing process by automatically logging in and publishing articles through a web browser.

CodeAsk

CodeAsk is a code analysis tool designed to tackle complex issues such as code that seems to self-replicate, cryptic comments left by predecessors, messy and unclear code, and long-lasting temporary solutions. It offers intelligent code organization and analysis, security vulnerability detection, code quality assessment, and other interesting prompts to help users understand and work with legacy code more efficiently. The tool aims to translate 'legacy code mountains' into understandable language, creating an illusion of comprehension and facilitating knowledge transfer to new team members.

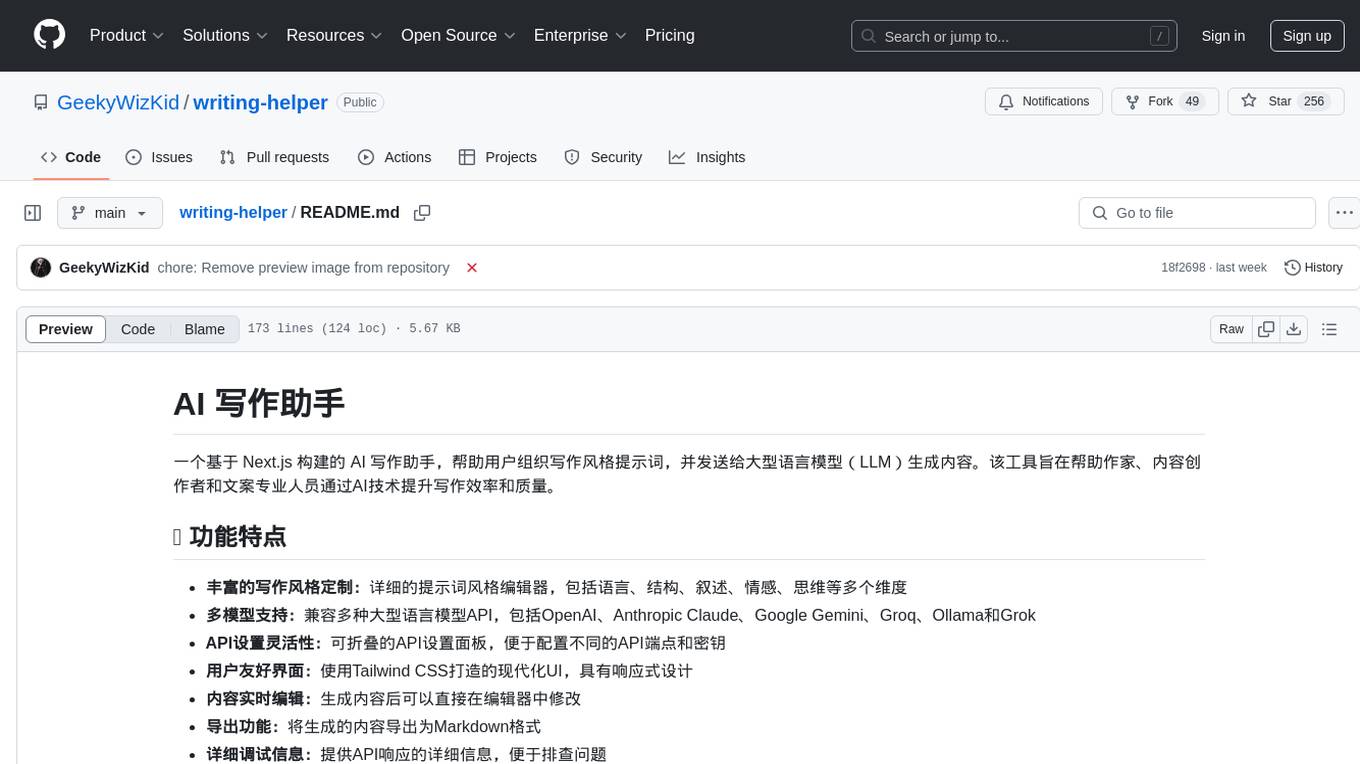

writing-helper

A Next.js-based AI writing assistant that helps users organize writing style prompts and sends them to large language models (LLMs) to generate content. The tool aims to help writers, content creators, and copywriters improve writing efficiency and quality through AI technology. It features rich writing style customization, support for multiple LLM APIs, flexible API settings, user-friendly interface, real-time content editing, export function, detailed debugging information, dark/light mode support, and more.

promptMinder

PromptMinder is a professional prompt word management platform that simplifies and enhances AI prompt word management. It features prompt word version control with support for version tracking and history viewing, diff comparison similar to Git for quick identification of prompt word updates, customizable tagging for quick categorization and retrieval, support for private and public prompt words, integration of AI models for intelligent prompt word generation, team collaboration with team creation, member management, and permission control, community contribution feature with audit and publishing process. The platform also offers a responsive design for mobile devices, internationalization support for Chinese and English languages, modern interface based on Shadcn UI, intelligent search and filtering functionality, and convenient copy and share features. It is built for high performance using Next.js 16 + React 19, with security authentication provided by Clerk, reliable storage using Supabase + PostgreSQL database, and easy deployment supporting Vercel and Zeabur one-click deployment.

Fay

Fay is an open-source digital human framework that offers different versions for various purposes. The '带货完整版' (full sales edition) is suitable for online and offline salespersons. The '助理完整版' (full assistant edition) serves as a human-machine interactive digital assistant that can also control devices upon command. The 'agent版' (agent edition) is designed to be an autonomous agent capable of making decisions and contacting its owner. The framework provides updates and improvements across its different versions, including features like emotion analysis integration, model optimizations, and compatibility enhancements. Users can access detailed documentation for each version through the provided links.

Yi-Ai

Yi-Ai is a project based on the development of nineai 2.4.2. It is for learning and reference purposes only, not for commercial use. The project includes updates to popular models like gpt-4o and claude3.5, as well as new features such as model image recognition. It also supports various functionalities like model sorting, file type extensions, and bug fixes. The project provides deployment tutorials for both integrated and compiled packages, with instructions for environment setup, configuration, dependency installation, and project startup. Additionally, it offers a management platform with different access levels and emphasizes the importance of following the steps for proper system operation.

AI-Codereview-Gitlab

AI-Codereview-Gitlab is an automated code review tool based on large models, designed to help development teams conduct intelligent code reviews quickly during code merging or submission. It supports multiple large models including DeepSeek, ZhipuAI, OpenAI, and Ollama. The tool can automatically push review results to DingTalk, WeChat Work, and Feishu, generate daily reports based on GitLab commit records, and provide a visual dashboard to display code review records. The tool works by triggering webhook events on GitLab when users submit code, calling third-party large models to review the code, and recording the review results in corresponding Merge Requests or Commit Notes.

AI-automatically-generates-novels

AI Novel Writing Assistant is an intelligent productivity tool for novel creation based on AI + prompt words. It has been used by hundreds of studios and individual authors to quickly and batch generate novels. With AI technology to enhance writing efficiency and a comprehensive prompt word management feature, it achieves 20 times efficiency improvement in intelligent book disassembly, intelligent book title and synopsis generation, text polishing, and shift+L quick term insertion, making writing easier and more professional. It has been upgraded to v5.2. The tool supports mind map construction of outlines and chapters, AI self-optimization of novels, writing knowledge base management, shift+L quick term insertion in the text input field, support for any mainstream large models integration, custom skin color, prompt word import and export, support for large text memory, right-click polishing, expansion, and de-AI flavoring of outlines, chapters, and text, multiple sets of novel prompt word library management, and book disassembly function.

MoneyPrinterTurbo

MoneyPrinterTurbo is a tool that can automatically generate video content based on a provided theme or keyword. It can create video scripts, materials, subtitles, and background music, and then compile them into a high-definition short video. The tool features a web interface and an API interface, supporting AI-generated video scripts, customizable scripts, multiple HD video sizes, batch video generation, customizable video segment duration, multilingual video scripts, multiple voice synthesis options, subtitle generation with font customization, background music selection, access to high-definition and copyright-free video materials, and integration with various AI models like OpenAI, moonshot, Azure, and more. The tool aims to simplify the video creation process and offers future plans to enhance voice synthesis, add video transition effects, provide more video material sources, offer video length options, include free network proxies, enable real-time voice and music previews, support additional voice synthesis services, and facilitate automatic uploads to YouTube platform.

ChatGPT-airport-tizi-fanqiang

This repository provides a curated list of recommended "airport" proxy services for accessing ChatGPT and other AI tools while bypassing internet restrictions. The proxies are tested and verified to ensure reliability and stability. The readme includes detailed instructions on how to set up and use the proxies with various devices and platforms. Additionally, the repository offers advanced tutorials on upgrading to GPT-4/Plus, deploying a 24/7 ChatGPT WeChat bot server, and using Claude-3 securely and for free.

chatgpt-webui

ChatGPT WebUI is a user-friendly web graphical interface for various LLMs like ChatGPT, providing simplified features such as core ChatGPT conversation and document retrieval dialogues. It has been optimized for better RAG retrieval accuracy and supports various search engines. Users can deploy local language models easily and interact with different LLMs like GPT-4, Azure OpenAI, and more. The tool offers powerful functionalities like GPT4 API configuration, system prompt setup for role-playing, and basic conversation features. It also provides a history of conversations, customization options, and a seamless user experience with themes, dark mode, and PWA installation support.

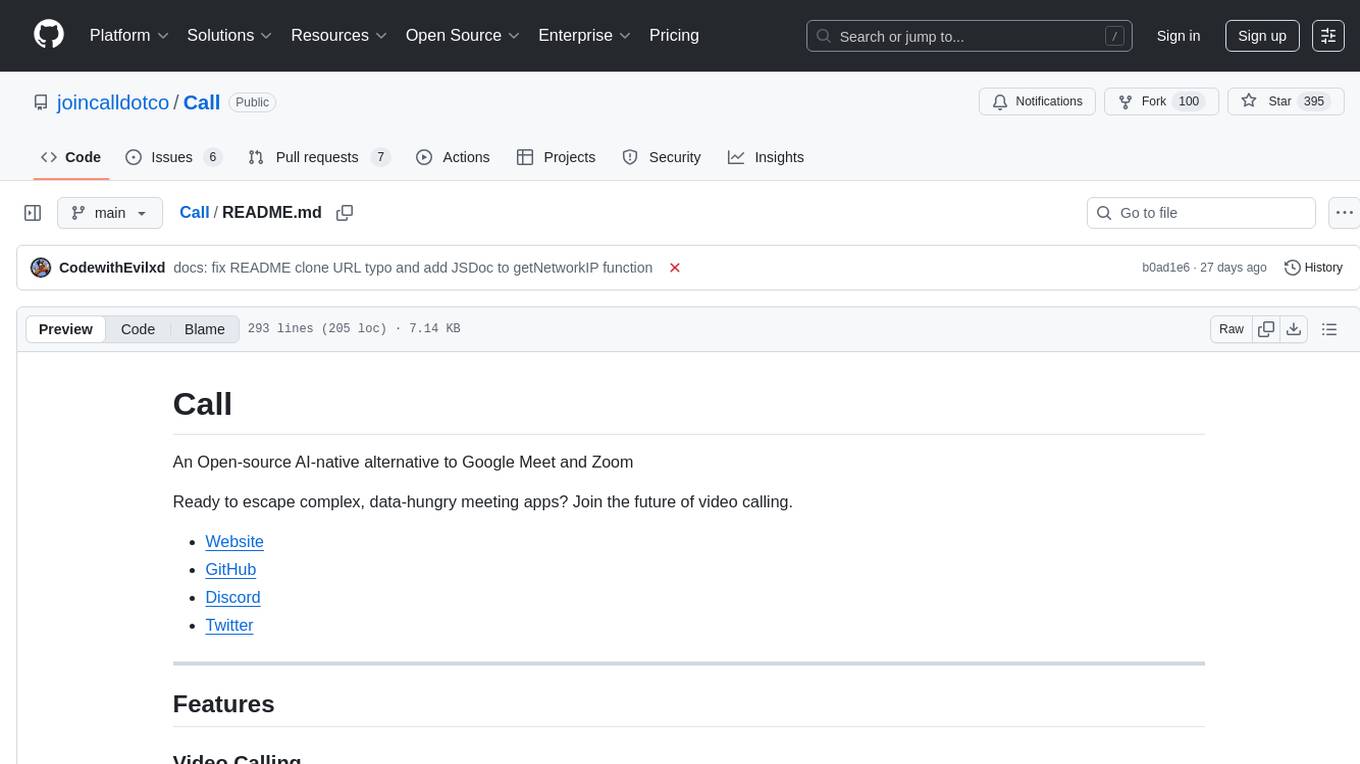

Call

Call is an open-source AI-native alternative to Google Meet and Zoom, offering video calling, team collaboration, contact management, meeting scheduling, AI-powered features, security, and privacy. It is cross-platform, web-based, mobile responsive, and supports offline capabilities. The tech stack includes Next.js, TypeScript, Tailwind CSS, Mediasoup-SFU, React Query, Zustand, Hono, PostgreSQL, Drizzle ORM, Better Auth, Turborepo, Docker, Vercel, and Rate Limiting.

aice_ps

Aice PS is a powerful web-based AI photo editor that utilizes Google aistudio's advanced capabilities to make professional image editing and creation simple and intuitive. Users can enhance images, apply creative filters, make professional adjustments, and even generate new images from scratch using simple text prompts. The tool combines various cutting-edge AI capabilities to provide a one-stop creative image and video solution, including AI image generation, intelligent editing, creative filters, professional adjustments, AI inspiration suggestions, intelligent synthesis, texture overlay, one-click cutout, time travel effects, BeatSync for music and image synchronization, NB prompt word library, basic editing toolkit, and more.

hugging-llm

HuggingLLM is a project that aims to introduce ChatGPT to a wider audience, particularly those interested in using the technology to create new products or applications. The project focuses on providing practical guidance on how to use ChatGPT-related APIs to create new features and applications. It also includes detailed background information and system design introductions for relevant tasks, as well as example code and implementation processes. The project is designed for individuals with some programming experience who are interested in using ChatGPT for practical applications, and it encourages users to experiment and create their own applications and demos.

For similar tasks


h2ogpt

h2oGPT is an Apache V2 open-source project that allows users to query and summarize documents or chat with local private GPT LLMs. It features a private offline database of any documents (PDFs, Excel, Word, Images, Video Frames, Youtube, Audio, Code, Text, MarkDown, etc.), a persistent database (Chroma, Weaviate, or in-memory FAISS) using accurate embeddings (instructor-large, all-MiniLM-L6-v2, etc.), and efficient use of context using instruct-tuned LLMs (no need for LangChain's few-shot approach). h2oGPT also offers parallel summarization and extraction, reaching an output of 80 tokens per second with the 13B LLaMa2 model, HYDE (Hypothetical Document Embeddings) for enhanced retrieval based upon LLM responses, a variety of models supported (LLaMa2, Mistral, Falcon, Vicuna, WizardLM. With AutoGPTQ, 4-bit/8-bit, LORA, etc.), GPU support from HF and LLaMa.cpp GGML models, and CPU support using HF, LLaMa.cpp, and GPT4ALL models. Additionally, h2oGPT provides Attention Sinks for arbitrarily long generation (LLaMa-2, Mistral, MPT, Pythia, Falcon, etc.), a UI or CLI with streaming of all models, the ability to upload and view documents through the UI (control multiple collaborative or personal collections), Vision Models LLaVa, Claude-3, Gemini-Pro-Vision, GPT-4-Vision, Image Generation Stable Diffusion (sdxl-turbo, sdxl) and PlaygroundAI (playv2), Voice STT using Whisper with streaming audio conversion, Voice TTS using MIT-Licensed Microsoft Speech T5 with multiple voices and Streaming audio conversion, Voice TTS using MPL2-Licensed TTS including Voice Cloning and Streaming audio conversion, AI Assistant Voice Control Mode for hands-free control of h2oGPT chat, Bake-off UI mode against many models at the same time, Easy Download of model artifacts and control over models like LLaMa.cpp through the UI, Authentication in the UI by user/password via Native or Google OAuth, State Preservation in the UI by user/password, Linux, Docker, macOS, and Windows support, Easy 
Windows Installer for Windows 10 64-bit (CPU/CUDA), Easy macOS Installer for macOS (CPU/M1/M2), Inference Servers support (oLLaMa, HF TGI server, vLLM, Gradio, ExLLaMa, Replicate, OpenAI, Azure OpenAI, Anthropic), OpenAI-compliant, Server Proxy API (h2oGPT acts as drop-in-replacement to OpenAI server), Python client API (to talk to Gradio server), JSON Mode with any model via code block extraction. Also supports MistralAI JSON mode, Claude-3 via function calling with strict Schema, OpenAI via JSON mode, and vLLM via guided_json with strict Schema, Web-Search integration with Chat and Document Q/A, Agents for Search, Document Q/A, Python Code, CSV frames (Experimental, best with OpenAI currently), Evaluate performance using reward models, and Quality maintained with over 1000 unit and integration tests taking over 4 GPU-hours.

serverless-chat-langchainjs

This sample shows how to build a serverless chat experience with Retrieval-Augmented Generation using LangChain.js and Azure. The application is hosted on Azure Static Web Apps and Azure Functions, with Azure Cosmos DB for MongoDB vCore as the vector database. You can use it as a starting point for building more complex AI applications.

react-native-vercel-ai

Run the Vercel AI package on React Native, Expo, Web, and Universal apps. Currently the React Native fetch API does not support streaming, which Vercel AI uses by default. This package lets you use the AI library on React Native, though it works best in Expo universal native apps. On mobile you get responses without streaming through the same `useChat` and `useCompletion` API, and on web it falls back to `ai/react`.

LLamaSharp

LLamaSharp is a cross-platform library to run 🦙LLaMA/LLaVA model (and others) on your local device. Based on llama.cpp, inference with LLamaSharp is efficient on both CPU and GPU. With the higher-level APIs and RAG support, it's convenient to deploy LLM (Large Language Model) in your application with LLamaSharp.

gpt4all

GPT4All is an ecosystem to run powerful and customized large language models that work locally on consumer grade CPUs and any GPU. Note that your CPU needs to support AVX or AVX2 instructions. Learn more in the documentation. A GPT4All model is a 3GB - 8GB file that you can download and plug into the GPT4All open-source ecosystem software. Nomic AI supports and maintains this software ecosystem to enforce quality and security alongside spearheading the effort to allow any person or enterprise to easily train and deploy their own on-edge large language models.

ChatGPT-Telegram-Bot

ChatGPT Telegram Bot is a Telegram bot that provides a smooth AI experience. It supports both Azure OpenAI and native OpenAI, and offers real-time (streaming) response to AI, with a faster and smoother experience. The bot also has 15 preset bot identities that can be quickly switched, and supports custom bot identities to meet personalized needs. Additionally, it supports clearing the contents of the chat with a single click, and restarting the conversation at any time. The bot also supports native Telegram bot button support, making it easy and intuitive to implement required functions. User level division is also supported, with different levels enjoying different single session token numbers, context numbers, and session frequencies. The bot supports English and Chinese on UI, and is containerized for easy deployment.

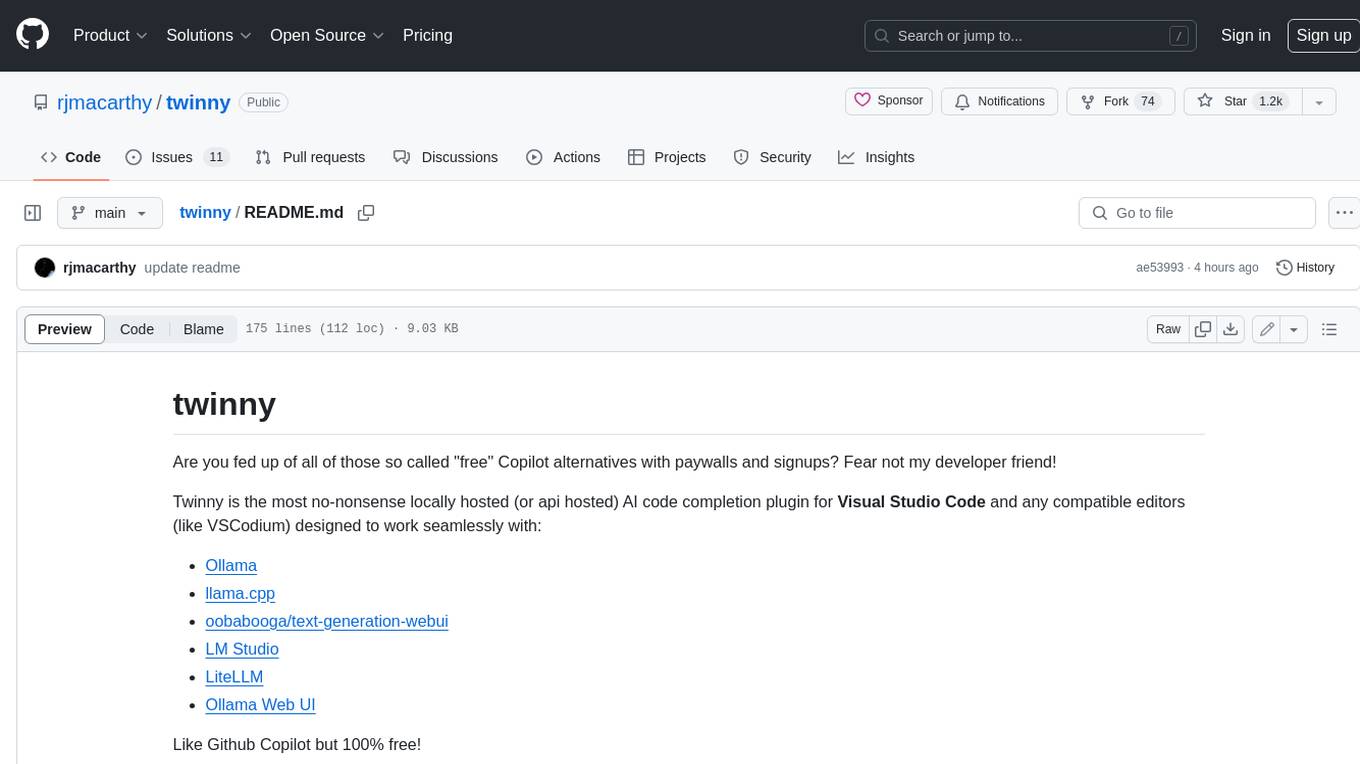

twinny

Twinny is a free and open-source AI code completion plugin for Visual Studio Code and compatible editors. It integrates with various tools and frameworks, including Ollama, llama.cpp, oobabooga/text-generation-webui, LM Studio, LiteLLM, and Open WebUI. Twinny offers features such as fill-in-the-middle code completion, chat with AI about your code, customizable API endpoints, and support for single or multiline fill-in-middle completions. It is easy to install via the Visual Studio Code extensions marketplace and provides a range of customization options. Twinny supports both online and offline operation and conforms to the OpenAI API standard.

For similar jobs

sweep

Sweep is an AI junior developer that turns bugs and feature requests into code changes. It automatically handles developer experience improvements like adding type hints and improving test coverage.

teams-ai

The Teams AI Library is a software development kit (SDK) that helps developers create bots that can interact with Teams and Microsoft 365 applications. It is built on top of the Bot Framework SDK and simplifies the process of developing bots that interact with Teams' artificial intelligence capabilities. The SDK is available for JavaScript/TypeScript, .NET, and Python.

ai-guide

This guide is dedicated to Large Language Models (LLMs) that you can run on your home computer. It assumes your PC is a lower-end, non-gaming setup.

classifai

Supercharge WordPress Content Workflows and Engagement with Artificial Intelligence. Tap into leading cloud-based services like OpenAI, Microsoft Azure AI, Google Gemini and IBM Watson to augment your WordPress-powered websites. Publish content faster while improving SEO performance and increasing audience engagement. ClassifAI integrates Artificial Intelligence and Machine Learning technologies to lighten your workload and eliminate tedious tasks, giving you more time to create original content that matters.

chatbot-ui

Chatbot UI is an open-source AI chat app that allows users to create and deploy their own AI chatbots. It is easy to use and can be customized to fit any need. Chatbot UI is perfect for businesses, developers, and anyone who wants to create a chatbot.

BricksLLM

BricksLLM is a cloud native AI gateway written in Go. Currently, it provides native support for OpenAI, Anthropic, Azure OpenAI and vLLM. BricksLLM aims to provide enterprise level infrastructure that can power any LLM production use cases. Here are some use cases for BricksLLM: * Set LLM usage limits for users on different pricing tiers * Track LLM usage on a per user and per organization basis * Block or redact requests containing PIIs * Improve LLM reliability with failovers, retries and caching * Distribute API keys with rate limits and cost limits for internal development/production use cases * Distribute API keys with rate limits and cost limits for students

uAgents

uAgents is a Python library developed by Fetch.ai that allows for the creation of autonomous AI agents. These agents can perform various tasks on a schedule or take action on various events. uAgents are easy to create and manage, and they are connected to a fast-growing network of other uAgents. They are also secure, with cryptographically secured messages and wallets.

griptape

Griptape is a modular Python framework for building AI-powered applications that securely connect to your enterprise data and APIs. It offers developers the ability to maintain control and flexibility at every step. Griptape's core components include Structures (Agents, Pipelines, and Workflows), Tasks, Tools, Memory (Conversation Memory, Task Memory, and Meta Memory), Drivers (Prompt and Embedding Drivers, Vector Store Drivers, Image Generation Drivers, Image Query Drivers, SQL Drivers, Web Scraper Drivers, and Conversation Memory Drivers), Engines (Query Engines, Extraction Engines, Summary Engines, Image Generation Engines, and Image Query Engines), and additional components (Rulesets, Loaders, Artifacts, Chunkers, and Tokenizers). Griptape enables developers to create AI-powered applications with ease and efficiency.