Rankify

🔥 Rankify: A Comprehensive Python Toolkit for Retrieval, Re-Ranking, and Retrieval-Augmented Generation 🔥. Our toolkit integrates 40 pre-retrieved benchmark datasets and supports 7+ retrieval techniques, 24+ state-of-the-art Reranking models, and multiple RAG methods.

Stars: 335

Rankify is a Python toolkit designed for unified retrieval, re-ranking, and retrieval-augmented generation (RAG) research. It integrates 40 pre-retrieved benchmark datasets and supports 7 retrieval techniques, 24 state-of-the-art re-ranking models, and multiple RAG methods. Rankify provides a modular and extensible framework, enabling seamless experimentation and benchmarking across retrieval pipelines. It offers comprehensive documentation, open-source implementation, and pre-built evaluation tools, making it a powerful resource for researchers and practitioners in the field.

README:

🔥 Rankify: A Comprehensive Python Toolkit for Retrieval, Re-Ranking, and Retrieval-Augmented Generation 🔥

If you like our framework, don't hesitate to ⭐ star this repository ⭐. This helps us make the framework better and extend it to more models and methods 🤗.

A modular and efficient retrieval, re-ranking, and RAG framework designed to work with state-of-the-art models for retrieval, ranking, and RAG tasks.

Rankify is a Python toolkit designed for unified retrieval, re-ranking, and retrieval-augmented generation (RAG) research. Our toolkit integrates 40 pre-retrieved benchmark datasets and supports 7 retrieval techniques, 24 state-of-the-art re-ranking models, and multiple RAG methods. Rankify provides a modular and extensible framework, enabling seamless experimentation and benchmarking across retrieval pipelines. Comprehensive documentation, open-source implementation, and pre-built evaluation tools make Rankify a powerful resource for researchers and practitioners in the field.

🚀 Demo

To run the demo locally:

# Make sure Rankify is installed

pip install streamlit

# Then run the demo

streamlit run demo.py

https://github.com/user-attachments/assets/13184943-55db-4f0c-b509-fde920b809bc

🔗 Navigation

- Features

- Roadmap

- Installation

- Quick Start

- Retrievers

- Re-Rankers

- Generators

- Evaluation

- Documentation

- Community Contributions

- Contributing

- License

- Acknowledgments

- Citation

🔧 Installation

Set up the virtual environment

First, create and activate a conda environment with Python 3.10:

conda create -n rankify python=3.10

conda activate rankify

Install PyTorch 2.5.1

We recommend using Rankify with PyTorch 2.5.1. Refer to the PyTorch installation page for platform-specific installation commands.

If you have access to GPUs, install a CUDA 12.4 or 12.6 build of PyTorch, as many of the evaluation metrics are optimized for GPU use.

To install PyTorch 2.5.1 built against CUDA 12.4, run:

pip install torch==2.5.1 torchvision==0.20.1 torchaudio==2.5.1 --index-url https://download.pytorch.org/whl/cu124
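After installing, it can help to confirm which PyTorch build is active before moving on. The snippet below is a small sketch for that check; the `describe_torch` helper is a hypothetical name and not part of Rankify, and it only relies on standard PyTorch attributes.

```python
import importlib.util


def describe_torch() -> str:
    """Report the installed PyTorch version and whether a GPU is usable."""
    if importlib.util.find_spec("torch") is None:
        return "PyTorch is not installed"
    import torch

    gpu = "available" if torch.cuda.is_available() else "not available"
    # torch.version.cuda is None for CPU-only builds.
    return f"PyTorch {torch.__version__}, CUDA build {torch.version.cuda}, GPU {gpu}"


print(describe_torch())
```

If the reported CUDA build is `None`, a CPU-only wheel was installed and the `--index-url` above was probably not picked up.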

Basic Installation

To install Rankify, simply use pip (requires Python 3.10+):

pip install rankify

This will install the base functionality required for retrieval, re-ranking, and retrieval-augmented generation (RAG).

Recommended Installation

For full functionality, we recommend installing Rankify with all dependencies:

pip install "rankify[all]"

This ensures you have all necessary modules, including retrieval, re-ranking, and RAG support.

Optional Dependencies

If you prefer to install only specific components, choose from the following:

# Install dependencies for retrieval only (BM25, DPR, ANCE, etc.)

pip install "rankify[retriever]"

# Install base re-ranking with vLLM support for `FirstModelReranker`, `LiT5ScoreReranker`, `LiT5DistillReranker`, `VicunaReranker`, and `ZephyrReranker`.

pip install "rankify[reranking]"

Or, to install from GitHub for the latest development version:

git clone https://github.com/DataScienceUIBK/rankify.git

cd rankify

pip install -e .

# For full functionality we recommend installing Rankify with all dependencies:

pip install -e ".[all]"

# Install dependencies for retrieval only (BM25, DPR, ANCE, etc.)

pip install -e ".[retriever]"

# Install base re-ranking with vLLM support for `FirstModelReranker`, `LiT5ScoreReranker`, `LiT5DistillReranker`, `VicunaReranker`, and `ZephyrReranker`.

pip install -e ".[reranking]"

Using ColBERT Retriever

If you want to use ColBERT Retriever, follow these additional setup steps:

# Install GCC and required libraries

conda install -c conda-forge gcc=9.4.0 gxx=9.4.0

conda install -c conda-forge libstdcxx-ng

# Export necessary environment variables

export LD_LIBRARY_PATH=$CONDA_PREFIX/lib:$LD_LIBRARY_PATH

export CC=gcc

export CXX=g++

export PATH=$CONDA_PREFIX/bin:$PATH

# Clear cached torch extensions

rm -rf ~/.cache/torch_extensions/*

🚀 Quick Start

1️⃣ Pre-retrieved Datasets

We provide 1,000 pre-retrieved documents per dataset, which you can download from:

🔗 Hugging Face Dataset Repository

Dataset Format

The pre-retrieved documents are structured as follows:

[
  {
    "question": "...",
    "answers": ["...", "...", ...],
    "ctxs": [
      {
        "id": "...",               // Passage ID from database TSV file
        "score": "...",            // Retriever score
        "has_answer": true|false   // Whether the passage contains the answer
      }
    ]
  }
]
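For readers who want to inspect a downloaded file without going through Rankify, the following is a minimal sketch that parses this layout with the standard library only. It assumes the `//` comments above are annotations and do not appear in the real files; the `Passage`, `Record`, and `parse_records` names are hypothetical and not part of Rankify.

```python
import json
from dataclasses import dataclass


@dataclass
class Passage:
    id: str
    score: float
    has_answer: bool


@dataclass
class Record:
    question: str
    answers: list[str]
    passages: list[Passage]


def parse_records(raw: str) -> list[Record]:
    """Parse a JSON string laid out like the pre-retrieved dataset files."""
    records = []
    for item in json.loads(raw):
        passages = [
            Passage(
                id=str(ctx["id"]),
                # Scores are shown as strings above, so convert defensively.
                score=float(ctx["score"]),
                has_answer=bool(ctx["has_answer"]),
            )
            for ctx in item.get("ctxs", [])
        ]
        records.append(Record(item["question"], list(item["answers"]), passages))
    return records


# Made-up sample in the documented layout.
sample = """
[
  {
    "question": "Who wrote Hamlet?",
    "answers": ["Shakespeare"],
    "ctxs": [
      {"id": "101", "score": "12.5", "has_answer": false},
      {"id": "102", "score": "11.0", "has_answer": true}
    ]
  }
]
"""

records = parse_records(sample)
first_hit = next(p.id for p in records[0].passages if p.has_answer)
print(records[0].question, "->", first_hit)
```

Keeping `has_answer` as a boolean per passage makes it straightforward to compute top-k hit rates later without re-matching answer strings.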

Access Datasets in Rankify

You can easily download and use pre-retrieved datasets through Rankify.

List Available Datasets

To see all available datasets:

from rankify.dataset.dataset import Dataset

# Display available datasets

Dataset.avaiable_dataset()

Retriever Datasets

from rankify.dataset.dataset import Dataset

# Download BM25-retrieved documents for nq-dev

dataset = Dataset(retriever="bm25", dataset_name="nq-dev", n_docs=100)

documents = dataset.download(force_download=False)

# Download BGE-retrieved documents for nq-dev

dataset = Dataset(retriever="bge", dataset_name="nq-dev", n_docs=100)

documents = dataset.download(force_download=False)

# Download ColBERT-retrieved documents for nq-dev

dataset = Dataset(retriever="colbert", dataset_name="nq-dev", n_docs=100)

documents = dataset.download(force_download=False)

# Download MSS-DPR-retrieved documents for nq-dev

dataset = Dataset(retriever="mss-dpr", dataset_name="nq-dev", n_docs=100)

documents = dataset.download(force_download=False)

# Download MSS-retrieved documents for nq-dev

dataset = Dataset(retriever="mss", dataset_name="nq-dev", n_docs=100)

documents = dataset.download(force_download=False)

# Download Contriever-retrieved documents for nq-dev

dataset = Dataset(retriever="contriever", dataset_name="nq-dev", n_docs=100)

documents = dataset.download(force_download=False)

# Download ANCE-retrieved documents for nq-dev

dataset = Dataset(retriever="ance", dataset_name="nq-dev", n_docs=100)

documents = dataset.download(force_download=False)

Load Pre-retrieved Dataset from File

If you have already downloaded a dataset, you can load it directly:

from rankify.dataset.dataset import Dataset

# Load pre-downloaded BM25 dataset for WebQuestions

documents = Dataset.load_dataset('./tests/out-datasets/bm25/web_questions/test.json', 100)

Now, you can integrate retrieved documents with re-ranking and RAG workflows! 🚀

Feature Comparison for Pre-Retrieved Datasets

The following table provides an overview of the availability of different retrieval methods (BM25, DPR, ColBERT, ANCE, BGE, Contriever) for each dataset.

✅ Completed

⏳ Partially completed (other parts pending)

🕒 Pending

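| Dataset | BM25 | DPR | ColBERT | ANCE | BGE | Contriever |
|---|---|---|---|---|---|---|
| 2WikimultihopQA | ✅ | 🕒 | 🕒 | 🕒 | 🕒 | 🕒 |
| ArchivialQA | ✅ | 🕒 | 🕒 | 🕒 | 🕒 | 🕒 |
| ChroniclingAmericaQA | ✅ | 🕒 | 🕒 | 🕒 | 🕒 | 🕒 |
| EntityQuestions | ✅ | 🕒 | 🕒 | 🕒 | 🕒 | 🕒 |
| AmbigQA | ✅ | 🕒 | ✅ | 🕒 | 🕒 | 🕒 |
| ARC | ✅ | 🕒 | 🕒 | 🕒 | 🕒 | 🕒 |
| ASQA | ✅ | 🕒 | 🕒 | 🕒 | 🕒 | 🕒 |
| MS MARCO | 🕒 | 🕒 | 🕒 | 🕒 | 🕒 | 🕒 |
| AY2 | ✅ | 🕒 | 🕒 | 🕒 | 🕒 | 🕒 |
| Bamboogle | ✅ | 🕒 | 🕒 | 🕒 | 🕒 | 🕒 |
| BoolQ | ✅ | 🕒 | ✅ | 🕒 | ✅ | 🕒 |
| CommonSenseQA | ✅ | 🕒 | ✅ | 🕒 | ✅ | 🕒 |
| CuratedTREC | ✅ | 🕒 | ✅ | ⏳ | ✅ | 🕒 |
| ELI5 | ✅ | 🕒 | 🕒 | 🕒 | 🕒 | 🕒 |
| FERMI | ✅ | 🕒 | ✅ | ⏳ | ✅ | 🕒 |
| FEVER | ✅ | 🕒 | 🕒 | 🕒 | 🕒 | 🕒 |
| HellaSwag | ✅ | 🕒 | 🕒 | 🕒 | 🕒 | 🕒 |
| HotpotQA | ✅ | 🕒 | 🕒 | 🕒 | 🕒 | 🕒 |
| MMLU | ✅ | 🕒 | 🕒 | 🕒 | 🕒 | 🕒 |
| Musique | ✅ | 🕒 | 🕒 | 🕒 | 🕒 | 🕒 |
| NarrativeQA | ✅ | 🕒 | ✅ | ⏳ | ✅ | 🕒 |
| NQ | ✅ | 🕒 | ✅ | ⏳ | ✅ | 🕒 |
| OpenbookQA | ✅ | 🕒 | 🕒 | 🕒 | 🕒 | 🕒 |
| PIQA | ✅ | 🕒 | ✅ | 🕒 | 🕒 | 🕒 |
| PopQA | ✅ | 🕒 | ✅ | ⏳ | ✅ | 🕒 |
| Quartz | ✅ | 🕒 | 🕒 | 🕒 | 🕒 | 🕒 |
| SIQA | ✅ | 🕒 | ✅ | 🕒 | ✅ | 🕒 |
| StrategyQA | ✅ | 🕒 | 🕒 | 🕒 | 🕒 | 🕒 |
| TREX | ✅ | 🕒 | 🕒 | 🕒 | 🕒 | 🕒 |
| TriviaQA | ✅ | 🕒 | ✅ | ⏳ | ✅ | 🕒 |
| TruthfulQA | ✅ | 🕒 | 🕒 | 🕒 | 🕒 | 🕒 |
| TruthfulQA | ✅ | 🕒 | 🕒 | 🕒 | 🕒 | 🕒 |
| WebQ | ✅ | 🕒 | ✅ | ⏳ | ✅ | 🕒 |
| WikiQA | ✅ | 🕒 | ✅ | ⏳ | ✅ | 🕒 |
| WikiAsp | ✅ | 🕒 | 🕒 | 🕒 | 🕒 | 🕒 |
| WikiPassageQA | ✅ | 🕒 | ✅ | ⏳ | ✅ | 🕒 |
| WNED | ✅ | 🕒 | 🕒 | 🕒 | 🕒 | 🕒 |
| WoW | ✅ | 🕒 | 🕒 | 🕒 | 🕒 | 🕒 |
| Zsre | ✅ | 🕒 | 🕒 | 🕒 | 🕒 | 🕒 |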

2️⃣ Running Retrieval

To perform retrieval using Rankify, you can choose from various retrieval methods such as BM25, DPR, ANCE, Contriever, ColBERT, and BGE.

Example: Running Retrieval on Sample Queries

from rankify.dataset.dataset import Document, Question, Answer, Context

from rankify.retrievers.retriever import Retriever

# Sample Documents

documents = [
    Document(question=Question("the cast of a good day to die hard?"), answers=Answer([
        "Jai Courtney",
        "Sebastian Koch",
        "Radivoje Bukvić",
        "Yuliya Snigir",
        "Sergei Kolesnikov",
        "Mary Elizabeth Winstead",
        "Bruce Willis"
    ]), contexts=[]),
    Document(question=Question("Who wrote Hamlet?"), answers=Answer(["Shakespeare"]), contexts=[])
]

# BM25 retrieval on Wikipedia

bm25_retriever_wiki = Retriever(method="bm25", n_docs=5, index_type="wiki")

# BM25 retrieval on MS MARCO

bm25_retriever_msmarco = Retriever(method="bm25", n_docs=5, index_type="msmarco")

# DPR (multi-encoder) retrieval on Wikipedia

dpr_multi_retriever_wiki = Retriever(method="dpr", model="dpr-multi", n_docs=5, index_type="wiki")

# DPR (multi-encoder) retrieval on MS MARCO

dpr_multi_retriever_msmarco = Retriever(method="dpr", model="dpr-multi", n_docs=5, index_type="msmarco")

# DPR (single-encoder) retrieval on Wikipedia

dpr_single_retriever_wiki = Retriever(method="dpr", model="dpr-single", n_docs=5, index_type="wiki")

# DPR (single-encoder) retrieval on MS MARCO

dpr_single_retriever_msmarco = Retriever(method="dpr", model="dpr-single", n_docs=5, index_type="msmarco")

# ANCE retrieval on Wikipedia

ance_retriever_wiki = Retriever(method="ance", model="ance-multi", n_docs=5, index_type="wiki")

# ANCE retrieval on MS MARCO

ance_retriever_msmarco = Retriever(method="ance", model="ance-multi", n_docs=5, index_type="msmarco")

# Contriever retrieval on Wikipedia

contriever_retriever_wiki = Retriever(method="contriever", model="facebook/contriever-msmarco", n_docs=5, index_type="wiki")

# Contriever retrieval on MS MARCO

contriever_retriever_msmarco = Retriever(method="contriever", model="facebook/contriever-msmarco", n_docs=5, index_type="msmarco")

# ColBERT retrieval on Wikipedia

colbert_retriever_wiki = Retriever(method="colbert", model="colbert-ir/colbertv2.0", n_docs=5, index_type="wiki")

# ColBERT retrieval on MS MARCO

colbert_retriever_msmarco = Retriever(method="colbert", model="colbert-ir/colbertv2.0", n_docs=5, index_type="msmarco")

# BGE retrieval on Wikipedia

bge_retriever_wiki = Retriever(method="bge", model="BAAI/bge-large-en-v1.5", n_docs=5, index_type="wiki")

# BGE retrieval on MS MARCO

bge_retriever_msmarco = Retriever(method="bge", model="BAAI/bge-large-en-v1.5", n_docs=5, index_type="msmarco")

# HyDE retrieval on Wikipedia (OPENAI_API_KEY must already hold your OpenAI API key)

hyde_retriever_wiki = Retriever(method="hyde", n_docs=5, index_type="wiki", api_key=OPENAI_API_KEY)

# HyDE retrieval on MS MARCO

hyde_retriever_msmarco = Retriever(method="hyde", n_docs=5, index_type="msmarco", api_key=OPENAI_API_KEY)

Running Retrieval

After defining the retriever, you can retrieve documents using:

retrieved_documents = bm25_retriever_wiki.retrieve(documents)

for i, doc in enumerate(retrieved_documents):
    print(f"\nDocument {i+1}:")
    print(doc)
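To give a feel for what a sparse retriever such as BM25 computes for each query, here is a minimal, self-contained sketch of BM25 scoring over a toy corpus. It is an illustration only and not Rankify's implementation: the function names, the three-passage corpus, the parameter values `k1=1.5` and `b=0.75`, and the Lucene-style IDF variant are all assumptions made for the example.

```python
import math
import re
from collections import Counter


def tokenize(text: str) -> list[str]:
    """Lowercase and split on non-word characters."""
    return re.findall(r"\w+", text.lower())


def bm25_rank(query: str, corpus: list[str], n_docs: int = 2,
              k1: float = 1.5, b: float = 0.75) -> list[tuple[int, float]]:
    """Return (corpus index, score) pairs for the n_docs best passages."""
    docs = [tokenize(d) for d in corpus]
    avg_len = sum(len(d) for d in docs) / len(docs)
    # Document frequency: number of passages containing each term.
    df = Counter(term for d in docs for term in set(d))
    scores = []
    for idx, doc in enumerate(docs):
        tf = Counter(doc)
        score = 0.0
        for term in tokenize(query):
            if term not in tf:
                continue
            idf = math.log(1 + (len(docs) - df[term] + 0.5) / (df[term] + 0.5))
            # Length normalization penalizes passages longer than average.
            norm = tf[term] + k1 * (1 - b + b * len(doc) / avg_len)
            score += idf * tf[term] * (k1 + 1) / norm
        scores.append((idx, score))
    return sorted(scores, key=lambda pair: pair[1], reverse=True)[:n_docs]


corpus = [
    "Hamlet is a tragedy written by William Shakespeare",
    "A Good Day to Die Hard stars Bruce Willis and Jai Courtney",
    "Berlin is the capital of Germany",
]
top = bm25_rank("Who wrote Hamlet?", corpus)
print(top)
```

Only the first passage shares a term with the query, so it is ranked first. Dense retrievers such as DPR or BGE replace this term matching with embedding similarity, which is why they can match "wrote" to "written" where this sketch cannot.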

3️⃣ Running Reranking

Rankify provides support for multiple reranking models. Below are examples of how to use each model.

Example: Reranking a Document

from rankify.dataset.dataset import Document, Question, Answer, Context

from rankify.models.reranking import Reranking

# Sample document setup

question = Question("When did Thomas Edison invent the light bulb?")

answers = Answer(["1879"])

contexts = [
    Context(text="Lightning strike at Seoul National University", id=1),
    Context(text="Thomas Edison tried to invent a device for cars but failed", id=2),
    Context(text="Coffee is good for diet", id=3),
    Context(text="Thomas Edison invented the light bulb in 1879", id=4),
    Context(text="Thomas Edison worked with electricity", id=5),
]

document = Document(question=question, answers=answers, contexts=contexts)

# Initialize the reranker

reranker = Reranking(method="monot5", model_name="monot5-base-msmarco")

# Apply reranking

reranker.rank([document])

# Print reordered contexts

for context in document.reorder_contexts:
    print(f" - {context.text}")
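Conceptually, a pointwise reranker assigns each context a relevance score for the question and then sorts the contexts by that score. The sketch below shows that control flow with a deliberately simple word-overlap scorer standing in for a neural model such as MonoT5. The `ToyContext`, `overlap_score`, and `rerank` names are hypothetical and unrelated to Rankify's internals.

```python
import re
from dataclasses import dataclass


@dataclass
class ToyContext:
    id: int
    text: str


def tokens(text: str) -> set[str]:
    return set(re.findall(r"\w+", text.lower()))


def overlap_score(question: str, context: ToyContext) -> int:
    """Toy relevance score: number of distinct words shared with the question."""
    return len(tokens(question) & tokens(context.text))


def rerank(question: str, contexts: list[ToyContext]) -> list[ToyContext]:
    """Sort contexts from most to least relevant (stable for ties)."""
    return sorted(contexts, key=lambda c: overlap_score(question, c), reverse=True)


question = "When did Thomas Edison invent the light bulb?"
contexts = [
    ToyContext(1, "Lightning strike at Seoul National University"),
    ToyContext(2, "Thomas Edison tried to invent a device for cars but failed"),
    ToyContext(3, "Coffee is good for diet"),
    ToyContext(4, "Thomas Edison invented the light bulb in 1879"),
    ToyContext(5, "Thomas Edison worked with electricity"),
]

reordered = rerank(question, contexts)
print([c.id for c in reordered])
```

The passage that actually contains the answer (id 4) moves to the top. A real reranker differs only in how the score is produced, which is where the choice of `method` and `model_name` matters.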

Examples of Using Different Reranking Models

# UPR

model = Reranking(method='upr', model_name='t5-base')

# API-Based Rerankers

model = Reranking(method='apiranker', model_name='voyage', api_key='your-api-key')

model = Reranking(method='apiranker', model_name='jina', api_key='your-api-key')

model = Reranking(method='apiranker', model_name='mixedbread.ai', api_key='your-api-key')

# Blender Reranker

model = Reranking(method='blender_reranker', model_name='PairRM')

# ColBERT Reranker

model = Reranking(method='colbert_ranker', model_name='Colbert')

# EchoRank

model = Reranking(method='echorank', model_name='flan-t5-large')

# First Ranker

model = Reranking(method='first_ranker', model_name='base')

# FlashRank

model = Reranking(method='flashrank', model_name='ms-marco-TinyBERT-L-2-v2')

# InContext Reranker

model = Reranking(method='incontext_reranker', model_name='llamav3.1-8b')

# InRanker

model = Reranking(method='inranker', model_name='inranker-small')

# ListT5

model = Reranking(method='listt5', model_name='listt5-base')

# LiT5 Distill

model = Reranking(method='lit5distill', model_name='LiT5-Distill-base')

# LiT5 Score

model = Reranking(method='lit5score', model_name='LiT5-Distill-base')

# LLM Layerwise Ranker

model = Reranking(method='llm_layerwise_ranker', model_name='bge-multilingual-gemma2')

# LLM2Vec

model = Reranking(method='llm2vec', model_name='Meta-Llama-31-8B')

# MonoBERT

model = Reranking(method='monobert', model_name='monobert-large')

# MonoT5

model = Reranking(method='monot5', model_name='monot5-base-msmarco')

# RankGPT

model = Reranking(method='rankgpt', model_name='llamav3.1-8b')

# RankGPT API

model = Reranking(method='rankgpt-api', model_name='gpt-3.5', api_key="gpt-api-key")

model = Reranking(method='rankgpt-api', model_name='gpt-4', api_key="gpt-api-key")

model = Reranking(method='rankgpt-api', model_name='llamav3.1-8b', api_key="together-api-key")

model = Reranking(method='rankgpt-api', model_name='claude-3-5', api_key="claude-api-key")

# RankT5

model = Reranking(method='rankt5', model_name='rankt5-base')

# Sentence Transformer Reranker

model = Reranking(method='sentence_transformer_reranker', model_name='all-MiniLM-L6-v2')

model = Reranking(method='sentence_transformer_reranker', model_name='gtr-t5-base')

model = Reranking(method='sentence_transformer_reranker', model_name='sentence-t5-base')

model = Reranking(method='sentence_transformer_reranker', model_name='distilbert-multilingual-nli-stsb-quora-ranking')

model = Reranking(method='sentence_transformer_reranker', model_name='msmarco-bert-co-condensor')

# SPLADE

model = Reranking(method='splade', model_name='splade-cocondenser')

# Transformer Ranker

model = Reranking(method='transformer_ranker', model_name='mxbai-rerank-xsmall')

model = Reranking(method='transformer_ranker', model_name='bge-reranker-base')

model = Reranking(method='transformer_ranker', model_name='bce-reranker-base')

model = Reranking(method='transformer_ranker', model_name='jina-reranker-tiny')

model = Reranking(method='transformer_ranker', model_name='gte-multilingual-reranker-base')

model = Reranking(method='transformer_ranker', model_name='nli-deberta-v3-large')

model = Reranking(method='transformer_ranker', model_name='ms-marco-TinyBERT-L-6')

model = Reranking(method='transformer_ranker', model_name='msmarco-MiniLM-L12-en-de-v1')

# TwoLAR

model = Reranking(method='twolar', model_name='twolar-xl')

# Vicuna Reranker

model = Reranking(method='vicuna_reranker', model_name='rank_vicuna_7b_v1')

# Zephyr Reranker

model = Reranking(method='zephyr_reranker', model_name='rank_zephyr_7b_v1_full')

4️⃣ Using Generator Module

Rankify provides a Generator Module to facilitate retrieval-augmented generation (RAG) by integrating retrieved documents into generative models for producing answers. Below is an example of how to use different generator methods.

from rankify.dataset.dataset import Document, Question, Answer, Context

from rankify.generator.generator import Generator

# Define question and answer

question = Question("What is the capital of France?")

answers = Answer(["Paris"])

contexts = [
    Context(id=1, title="France", text="The capital of France is Paris.", score=0.9),
    Context(id=2, title="Germany", text="Berlin is the capital of Germany.", score=0.5)
]

# Construct document

doc = Document(question=question, answers=answers, contexts=contexts)

# Initialize Generator (e.g., Meta Llama)

generator = Generator(method="in-context-ralm", model_name='meta-llama/Llama-3.1-8B')

# Generate answer

generated_answers = generator.generate([doc])

print(generated_answers) # Output: ["Paris"]
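In-context retrieval-augmented generation generally works by placing the retrieved passages in front of the question in the model's prompt. The sketch below shows one way such a prompt could be assembled. The template, the `build_prompt` helper, and the plain-dict contexts are assumptions for illustration and may differ from the prompt Rankify actually builds.

```python
def build_prompt(question: str, contexts: list[dict], max_contexts: int = 2) -> str:
    """Prepend the highest-scoring passages to the question."""
    ranked = sorted(contexts, key=lambda c: c["score"], reverse=True)[:max_contexts]
    blocks = [
        f"[{i}] {c['title']}: {c['text']}"
        for i, c in enumerate(ranked, start=1)
    ]
    return "\n".join(blocks) + f"\n\nQuestion: {question}\nAnswer:"


toy_contexts = [
    {"title": "Germany", "text": "Berlin is the capital of Germany.", "score": 0.5},
    {"title": "France", "text": "The capital of France is Paris.", "score": 0.9},
]

prompt = build_prompt("What is the capital of France?", toy_contexts)
print(prompt)
```

Ordering passages by score before truncating means that a better retriever or reranker directly changes what the generator sees, which is the motivation for evaluating the stages together.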

5️⃣ Evaluating with Metrics

Rankify provides built-in evaluation metrics for retrieval, re-ranking, and retrieval-augmented generation (RAG). These metrics help assess the quality of retrieved documents, the effectiveness of ranking models, and the accuracy of generated answers.

Evaluating Generated Answers

You can evaluate the quality of retrieval-augmented generation (RAG) results by comparing generated answers with ground-truth answers.

from rankify.metrics.metrics import Metrics

from rankify.dataset.dataset import Dataset

from rankify.generator.generator import Generator

# Load dataset

dataset = Dataset('bm25', 'nq-test', 100)

documents = dataset.download(force_download=False)

# Initialize Generator

generator = Generator(method="in-context-ralm", model_name='meta-llama/Llama-3.1-8B')

# Generate answers

generated_answers = generator.generate(documents)

# Evaluate generated answers

metrics = Metrics(documents)

print(metrics.calculate_generation_metrics(generated_answers))
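Exact match (EM) is one of the standard generation metrics. As a rough sketch of how such a score is commonly computed, the example below normalizes both strings (lowercasing, stripping punctuation and English articles) and checks the prediction against every gold answer. The normalization rules and the function names are assumptions; Rankify's own implementation may normalize differently.

```python
import re
import string


def normalize(text: str) -> str:
    """Lowercase, drop punctuation and articles, and collapse whitespace."""
    text = text.lower()
    text = "".join(ch for ch in text if ch not in string.punctuation)
    text = re.sub(r"\b(a|an|the)\b", " ", text)
    return " ".join(text.split())


def exact_match(prediction: str, gold_answers: list[str]) -> bool:
    """True if the prediction equals any gold answer after normalization."""
    return normalize(prediction) in {normalize(g) for g in gold_answers}


predictions = ["Paris.", "The Bard"]
gold = [["Paris"], ["Shakespeare", "William Shakespeare"]]

em = sum(exact_match(p, g) for p, g in zip(predictions, gold)) / len(predictions)
print(f"EM = {em:.2f}")
```

The first prediction matches after normalization and the second does not, so the score is 0.50. EM is strict by design, so paraphrased but correct answers count as misses.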

Evaluating Retrieval Performance

# Calculate retrieval metrics before reranking

metrics = Metrics(documents)

before_ranking_metrics = metrics.calculate_retrieval_metrics(ks=[1, 5, 10, 20, 50, 100], use_reordered=False)

print(before_ranking_metrics)

Evaluating Reranked Results

# Calculate retrieval metrics after reranking

after_ranking_metrics = metrics.calculate_retrieval_metrics(ks=[1, 5, 10, 20, 50, 100], use_reordered=True)

print(after_ranking_metrics)
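To make the before/after comparison concrete, the sketch below computes top-k accuracy from per-passage `has_answer` flags: a question counts as a hit at k if any of its first k passages contains an answer. The `top_k_accuracy` helper and the toy flag lists are hypothetical and only illustrate the idea behind the `ks` argument used above.

```python
def top_k_accuracy(has_answer_lists: list[list[bool]], ks: list[int]) -> dict[int, float]:
    """Fraction of questions with an answer-bearing passage in the top k."""
    total = len(has_answer_lists)
    return {
        k: sum(any(flags[:k]) for flags in has_answer_lists) / total
        for k in ks
    }


# One list of flags per question, in ranked order (toy data).
before = [[False, False, True], [False, True, False], [False, False, False]]
after = [[True, False, False], [True, False, False], [False, False, False]]

print("before:", top_k_accuracy(before, [1, 2, 3]))
print("after: ", top_k_accuracy(after, [1, 2, 3]))
```

Reranking cannot add passages that retrieval missed, so the score at the largest k is unchanged. The gain shows up at small k, where answer-bearing passages have moved toward the top.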

📜 Supported Models

1️⃣ Retrievers

- ✅ BM25

- ✅ DPR

- ✅ ColBERT

- ✅ ANCE

- ✅ BGE

- ✅ Contriever

- ✅ BPR

- ✅ HYDE

- 🕒 RepLlama

- 🕒 coCondenser

- 🕒 Spar

- 🕒 Dragon

- 🕒 Hybrid

2️⃣ Rerankers

- ✅ Cross-Encoders

- ✅ RankGPT

- ✅ RankGPT-API

- ✅ MonoT5

- ✅ MonoBert

- ✅ RankT5

- ✅ ListT5

- ✅ LiT5Score

- ✅ LiT5Dist

- ✅ Vicuna Reranker

- ✅ Zephyr Reranker

- ✅ Sentence Transformer-based

- ✅ FlashRank Models

- ✅ API-Based Rerankers

- ✅ ColBERT Reranker

- ✅ LLM Layerwise Ranker

- ✅ Splade Reranker

- ✅ UPR Reranker

- ✅ Inranker Reranker

- ✅ Transformer Reranker

- ✅ FIRST Reranker

- ✅ Blender Reranker

- ✅ LLM2VEC Reranker

- ✅ ECHO Reranker

- ✅ Incontext Reranker

- 🕒 DynRank

- 🕒 ASRank

- 🕒 RankLlama

3️⃣ Generators

- ✅ Fusion-in-Decoder (FiD) with T5

- ✅ In-Context Learning RALM

✨ Features

- 🔥 Unified Framework: Combines retrieval, re-ranking, and retrieval-augmented generation (RAG) into a single modular toolkit.

- 📚 Rich Dataset Support: Includes 40+ benchmark datasets with pre-retrieved documents for seamless experimentation.

- 🧲 Diverse Retrieval Methods: Supports BM25, DPR, ANCE, BPR, ColBERT, BGE, and Contriever for flexible retrieval strategies.

- 🎯 Powerful Re-Ranking: Implements 24 advanced models with 41 sub-methods to optimize ranking performance.

- 🏗️ Prebuilt Indices: Provides Wikipedia and MS MARCO corpora, eliminating indexing overhead and speeding up retrieval.

- 🔮 Seamless RAG Integration: Works with GPT, LLAMA, T5, and Fusion-in-Decoder (FiD) models for retrieval-augmented generation.

- 🛠 Extensible & Modular: Easily integrates custom datasets, retrievers, ranking models, and RAG pipelines.

- 📊 Built-in Evaluation Suite: Includes retrieval, ranking, and RAG metrics for robust benchmarking.

- 📖 User-Friendly Documentation: Access detailed 📖 online docs, example notebooks, and tutorials for easy adoption.

🔍 Roadmap

Rankify is still under development, and this is our first release (v0.1.0). While it already supports a wide range of retrieval, re-ranking, and RAG techniques, we are actively enhancing its capabilities by adding more retrievers, rankers, datasets, and features.

🛠 Planned Improvements

Retrievers

✅ Supports: BM25, DPR, ANCE, BPR, ColBERT, BGE, Contriever

✨ ⏳ Coming Soon: Spar, MSS, MSS-DPR

✨ ⏳ Custom Index Loading for user-defined retrieval corpora

Re-Rankers

✅ 24 models & 41 sub-methods

✨ ⏳ Expanding with more ranking models

Datasets

✅ 40 benchmark datasets

✨ ⏳ Adding new datasets & custom dataset integration

Retrieval-Augmented Generation (RAG)

✅ Works with: GPT, LLAMA, T5

✨ ⏳ Expanding to more generative models

Evaluation & Usability

✅ Standard metrics: Top-K, EM, Recall

✨ ⏳ Adding advanced metrics: NDCG, MAP for retrievers

Pipeline Integration

✨ ⏳ Introducing a pipeline module for end-to-end retrieval, ranking, and RAG workflows

📖 Documentation

For full API documentation, visit the Rankify Docs.

💡 Contributing

Follow these steps to get involved:

- Fork this repository to your GitHub account.

- Create a new branch for your feature or fix:

git checkout -b feature/YourFeatureName

- Make your changes and commit them:

git commit -m "Add YourFeatureName"

- Push the changes to your branch:

git push origin feature/YourFeatureName

- Submit a Pull Request to propose your changes.

Thank you for helping make this project better!

🌐 Community Contributions

Chinese community resources available!

Special thanks to Xiumao for writing two exceptional Chinese blog posts about Rankify:

These articles were crafted with high-traffic optimization in mind and are widely recommended in Chinese academic and developer circles.

We updated the 中文版本 to reflect these blog contributions while keeping original content intact—thank you Xiumao for your continued support!

🔖 License

Rankify is licensed under the Apache-2.0 License - see the LICENSE file for details.

🙏 Acknowledgments

We would like to express our gratitude to the following libraries, which have greatly contributed to the development of Rankify:

- Rerankers – A powerful Python library for integrating various reranking methods. 🔗 GitHub Repository

- Pyserini – A toolkit for supporting BM25-based retrieval and integration with sparse/dense retrievers. 🔗 GitHub Repository

- FlashRAG – A modular framework for Retrieval-Augmented Generation (RAG) research. 🔗 GitHub Repository

🌟 Citation

Please cite our paper if it helps your research:

@article{abdallah2025rankify,

title={Rankify: A Comprehensive Python Toolkit for Retrieval, Re-Ranking, and Retrieval-Augmented Generation},

author={Abdallah, Abdelrahman and Mozafari, Jamshid and Piryani, Bhawna and Ali, Mohammed and Jatowt, Adam},

journal={arXiv preprint arXiv:2502.02464},

year={2025}

}

Star History

If you like our Framework, don't hesitate to ⭐ star this repository ⭐. This helps us to make the Framework more better and scalable to different models and methods 🤗.

A modular and efficient retrieval, reranking and RAG framework designed to work with state-of-the-art models for retrieval, ranking and rag tasks.

Rankify is a Python toolkit designed for unified retrieval, re-ranking, and retrieval-augmented generation (RAG) research. Our toolkit integrates 40 pre-retrieved benchmark datasets and supports 7 retrieval techniques, 24 state-of-the-art re-ranking models, and multiple RAG methods. Rankify provides a modular and extensible framework, enabling seamless experimentation and benchmarking across retrieval pipelines. Comprehensive documentation, open-source implementation, and pre-built evaluation tools make Rankify a powerful resource for researchers and practitioners in the field.

🚀 Demo

To run the demo locally:

# Make sure Rankify is installed

pip install streamlit

# Then run the demo

streamlit run demo.pyhttps://github.com/user-attachments/assets/13184943-55db-4f0c-b509-fde920b809bc

🔗 Navigation

- Features

- Roadmap

- Installation

- Quick Start

- Retrievers

- Re-Rankers

- Generators

- Evaluation

- Documentation

- Community Contributing

- Contributing

- License

- Acknowledgments

- Citation

🔧 Installation

Set up the virtual environment

First, create and activate a conda environment with Python 3.10:

conda create -n rankify python=3.10

conda activate rankifyInstall PyTorch 2.5.1

we recommend installing Rankify with PyTorch 2.5.1 for Rankify. Refer to the PyTorch installation page for platform-specific installation commands.

If you have access to GPUs, it's recommended to install the CUDA version 12.4 or 12.6 of PyTorch, as many of the evaluation metrics are optimized for GPU use.

To install Pytorch 2.5.1 you can install it from the following cmd

pip install torch==2.5.1 torchvision==0.20.1 torchaudio==2.5.1 --index-url https://download.pytorch.org/whl/cu124Basic Installation

To install Rankify, simply use pip (requires Python 3.10+):

pip install rankify

This will install the base functionality required for retrieval, re-ranking, and retrieval-augmented generation (RAG).

Recommended Installation

For full functionality, we recommend installing Rankify with all dependencies:

pip install "rankify[all]"This ensures you have all necessary modules, including retrieval, re-ranking, and RAG support.

Optional Dependencies

If you prefer to install only specific components, choose from the following:

# Install dependencies for retrieval only (BM25, DPR, ANCE, etc.)

pip install "rankify[retriever]"

# Install base re-ranking with vLLM support for `FirstModelReranker`, `LiT5ScoreReranker`, `LiT5DistillReranker`, `VicunaReranker`, and `ZephyrReranker'.

pip install "rankify[reranking]"Or, to install from GitHub for the latest development version:

git clone https://github.com/DataScienceUIBK/rankify.git

cd rankify

pip install -e .

# For full functionality we recommend installing Rankify with all dependencies:

pip install -e ".[all]"

# Install dependencies for retrieval only (BM25, DPR, ANCE, etc.)

pip install -e ".[retriever]"

# Install base re-ranking with vLLM support for `FirstModelReranker`, `LiT5ScoreReranker`, `LiT5DistillReranker`, `VicunaReranker`, and `ZephyrReranker'.

pip install -e ".[reranking]"Using ColBERT Retriever

If you want to use ColBERT Retriever, follow these additional setup steps:

# Install GCC and required libraries

conda install -c conda-forge gcc=9.4.0 gxx=9.4.0

conda install -c conda-forge libstdcxx-ng# Export necessary environment variables

export LD_LIBRARY_PATH=$CONDA_PREFIX/lib:$LD_LIBRARY_PATH

export CC=gcc

export CXX=g++

export PATH=$CONDA_PREFIX/bin:$PATH

# Clear cached torch extensions

rm -rf ~/.cache/torch_extensions/*🚀 Quick Start

1️⃣ Pre-retrieved Datasets

We provide 1,000 pre-retrieved documents per dataset, which you can download from:

🔗 Hugging Face Dataset Repository

Dataset Format

The pre-retrieved documents are structured as follows:

[

{

"question": "...",

"answers": ["...", "...", ...],

"ctxs": [

{

"id": "...", // Passage ID from database TSV file

"score": "...", // Retriever score

"has_answer": true|false // Whether the passage contains the answer

}

]

}

]Access Datasets in Rankify

You can easily download and use pre-retrieved datasets through Rankify.

List Available Datasets

To see all available datasets:

from rankify.dataset.dataset import Dataset

# Display available datasets

Dataset.avaiable_dataset()Retriever Datasets

from rankify.dataset.dataset import Dataset

# Download BM25-retrieved documents for nq-dev

dataset = Dataset(retriever="bm25", dataset_name="nq-dev", n_docs=100)

documents = dataset.download(force_download=False)

# Download BGE-retrieved documents for nq-dev

dataset = Dataset(retriever="bge", dataset_name="nq-dev", n_docs=100)

documents = dataset.download(force_download=False)

# Download ColBERT-retrieved documents for nq-dev

dataset = Dataset(retriever="colbert", dataset_name="nq-dev", n_docs=100)

documents = dataset.download(force_download=False)

# Download MSS-DPR-retrieved documents for nq-dev

dataset = Dataset(retriever="mss-dpr", dataset_name="nq-dev", n_docs=100)

documents = dataset.download(force_download=False)

# Download MSS-retrieved documents for nq-dev

dataset = Dataset(retriever="mss", dataset_name="nq-dev", n_docs=100)

documents = dataset.download(force_download=False)

# Download MSS-retrieved documents for nq-dev

dataset = Dataset(retriever="contriever", dataset_name="nq-dev", n_docs=100)

documents = dataset.download(force_download=False)

# Download ANCE-retrieved documents for nq-dev

dataset = Dataset(retriever="ance", dataset_name="nq-dev", n_docs=100)

documents = dataset.download(force_download=False)Load Pre-retrieved Dataset from File

If you have already downloaded a dataset, you can load it directly:

from rankify.dataset.dataset import Dataset

# Load pre-downloaded BM25 dataset for WebQuestions

documents = Dataset.load_dataset('./tests/out-datasets/bm25/web_questions/test.json', 100)Now, you can integrate retrieved documents with re-ranking and RAG workflows! 🚀

Feature Comparison for Pre-Retrieved Datasets

The following table provides an overview of the availability of different retrieval methods (BM25, DPR, ColBERT, ANCE, BGE, Contriever) for each dataset.

✅ Completed ⏳ Part Completed, Pending other Parts 🕒 Pending

| Dataset | BM25 | DPR | ColBERT | ANCE | BGE | Contriever |

|---|---|---|---|---|---|---|

| 2WikimultihopQA | ✅ | 🕒 | 🕒 | 🕒 | 🕒 | 🕒 |

| ArchivialQA | ✅ | 🕒 | 🕒 | 🕒 | 🕒 | 🕒 |

| ChroniclingAmericaQA | ✅ | 🕒 | 🕒 | 🕒 | 🕒 | 🕒 |

| EntityQuestions | ✅ | 🕒 | 🕒 | 🕒 | 🕒 | 🕒 |

| AmbigQA | ✅ | 🕒 | ✅ | 🕒 | 🕒 | 🕒 |

| ARC | ✅ | 🕒 | 🕒 | 🕒 | 🕒 | 🕒 |

| ASQA | ✅ | 🕒 | 🕒 | 🕒 | 🕒 | 🕒 |

| MS MARCO | 🕒 | 🕒 | 🕒 | 🕒 | 🕒 | 🕒 |

| AY2 | ✅ | 🕒 | 🕒 | 🕒 | 🕒 | 🕒 |

| Bamboogle | ✅ | 🕒 | 🕒 | 🕒 | 🕒 | 🕒 |

| BoolQ | ✅ | 🕒 | ✅ | 🕒 | ✅ | 🕒 |

| CommonSenseQA | ✅ | 🕒 | ✅ | 🕒 | ✅ | 🕒 |

| CuratedTREC | ✅ | 🕒 | ✅ | ⏳ | ✅ | 🕒 |

| ELI5 | ✅ | 🕒 | 🕒 | 🕒 | 🕒 | 🕒 |

| FERMI | ✅ | 🕒 | ✅ | ⏳ | ✅ | 🕒 |

| FEVER | ✅ | 🕒 | 🕒 | 🕒 | 🕒 | 🕒 |

| HellaSwag | ✅ | 🕒 | 🕒 | 🕒 | 🕒 | 🕒 |

| HotpotQA | ✅ | 🕒 | 🕒 | 🕒 | 🕒 | 🕒 |

| MMLU | ✅ | 🕒 | 🕒 | 🕒 | 🕒 | 🕒 |

| Musique | ✅ | 🕒 | 🕒 | 🕒 | 🕒 | 🕒 |

| NarrativeQA | ✅ | 🕒 | ✅ | ⏳ | ✅ | 🕒 |

| NQ | ✅ | 🕒 | ✅ | ⏳ | ✅ | 🕒 |

| OpenbookQA | ✅ | 🕒 | 🕒 | 🕒 | 🕒 | 🕒 |

| PIQA | ✅ | 🕒 | ✅ | 🕒 | 🕒 | 🕒 |

| PopQA | ✅ | 🕒 | ✅ | ⏳ | ✅ | 🕒 |

| Quartz | ✅ | 🕒 | 🕒 | 🕒 | 🕒 | 🕒 |

| SIQA | ✅ | 🕒 | ✅ | 🕒 | ✅ | 🕒 |

| StrategyQA | ✅ | 🕒 | 🕒 | 🕒 | 🕒 | 🕒 |

| TREX | ✅ | 🕒 | 🕒 | 🕒 | 🕒 | 🕒 |

| TriviaQA | ✅ | 🕒 | ✅ | ⏳ | ✅ | 🕒 |

| TruthfulQA | ✅ | 🕒 | 🕒 | 🕒 | 🕒 | 🕒 |

| TruthfulQA | ✅ | 🕒 | 🕒 | 🕒 | 🕒 | 🕒 |

| WebQ | ✅ | 🕒 | ✅ | ⏳ | ✅ | 🕒 |

| WikiQA | ✅ | 🕒 | ✅ | ⏳ | ✅ | 🕒 |

| WikiAsp | ✅ | 🕒 | 🕒 | 🕒 | 🕒 | 🕒 |

| WikiPassageQA | ✅ | 🕒 | ✅ | ⏳ | ✅ | 🕒 |

| WNED | ✅ | 🕒 | 🕒 | 🕒 | 🕒 | 🕒 |

| WoW | ✅ | 🕒 | 🕒 | 🕒 | 🕒 | 🕒 |

| Zsre | ✅ | 🕒 | 🕒 | 🕒 | 🕒 | 🕒 |

2️⃣ Running Retrieval

To perform retrieval using Rankify, you can choose from various retrieval methods such as BM25, DPR, ANCE, Contriever, ColBERT, and BGE.

Example: Running Retrieval on Sample Queries

from rankify.dataset.dataset import Document, Question, Answer, Context

from rankify.retrievers.retriever import Retriever

# Sample Documents

documents = [
    Document(question=Question("the cast of a good day to die hard?"), answers=Answer([
        "Jai Courtney",
        "Sebastian Koch",
        "Radivoje Bukvić",
        "Yuliya Snigir",
        "Sergei Kolesnikov",
        "Mary Elizabeth Winstead",
        "Bruce Willis"
    ]), contexts=[]),
    Document(question=Question("Who wrote Hamlet?"), answers=Answer(["Shakespeare"]), contexts=[])
]

# BM25 retrieval on Wikipedia

bm25_retriever_wiki = Retriever(method="bm25", n_docs=5, index_type="wiki")

# BM25 retrieval on MS MARCO

bm25_retriever_msmarco = Retriever(method="bm25", n_docs=5, index_type="msmarco")

# DPR (multi-encoder) retrieval on Wikipedia

dpr_multi_retriever_wiki = Retriever(method="dpr", model="dpr-multi", n_docs=5, index_type="wiki")

# DPR (multi-encoder) retrieval on MS MARCO

dpr_multi_retriever_msmarco = Retriever(method="dpr", model="dpr-multi", n_docs=5, index_type="msmarco")

# DPR (single-encoder) retrieval on Wikipedia

dpr_single_retriever_wiki = Retriever(method="dpr", model="dpr-single", n_docs=5, index_type="wiki")

# DPR (single-encoder) retrieval on MS MARCO

dpr_single_retriever_msmarco = Retriever(method="dpr", model="dpr-single", n_docs=5, index_type="msmarco")

# ANCE retrieval on Wikipedia

ance_retriever_wiki = Retriever(method="ance", model="ance-multi", n_docs=5, index_type="wiki")

# ANCE retrieval on MS MARCO

ance_retriever_msmarco = Retriever(method="ance", model="ance-multi", n_docs=5, index_type="msmarco")

# Contriever retrieval on Wikipedia

contriever_retriever_wiki = Retriever(method="contriever", model="facebook/contriever-msmarco", n_docs=5, index_type="wiki")

# Contriever retrieval on MS MARCO

contriever_retriever_msmarco = Retriever(method="contriever", model="facebook/contriever-msmarco", n_docs=5, index_type="msmarco")

# ColBERT retrieval on Wikipedia

colbert_retriever_wiki = Retriever(method="colbert", model="colbert-ir/colbertv2.0", n_docs=5, index_type="wiki")

# ColBERT retrieval on MS MARCO

colbert_retriever_msmarco = Retriever(method="colbert", model="colbert-ir/colbertv2.0", n_docs=5, index_type="msmarco")

# BGE retrieval on Wikipedia

bge_retriever_wiki = Retriever(method="bge", model="BAAI/bge-large-en-v1.5", n_docs=5, index_type="wiki")

# BGE retrieval on MS MARCO

bge_retriever_msmarco = Retriever(method="bge", model="BAAI/bge-large-en-v1.5", n_docs=5, index_type="msmarco")

# HyDE retrieval on Wikipedia (OPENAI_API_KEY must already hold your OpenAI API key)

hyde_retriever_wiki = Retriever(method="hyde", n_docs=5, index_type="wiki", api_key=OPENAI_API_KEY)

# HyDE retrieval on MS MARCO

hyde_retriever_msmarco = Retriever(method="hyde", n_docs=5, index_type="msmarco", api_key=OPENAI_API_KEY)

Running Retrieval

After defining the retriever, you can retrieve documents using:

retrieved_documents = bm25_retriever_wiki.retrieve(documents)

for i, doc in enumerate(retrieved_documents):
    print(f"\nDocument {i+1}:")
    print(doc)

3️⃣ Running Reranking

Rankify provides support for multiple reranking models. Below are examples of how to use each model.
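As a mental model before the Rankify examples: every reranker scores each (question, context) pair and then sorts the contexts by that score. The sketch below shows only that loop with a deliberately naive token-overlap scorer. It is not one of Rankify's models; `Passage`, `overlap_score`, and `rerank` are hypothetical names introduced for illustration.

```python
from dataclasses import dataclass


@dataclass
class Passage:
    """Hypothetical stand-in for a retrieved context (not rankify's Context class)."""
    id: int
    text: str
    score: float = 0.0


def overlap_score(question, text):
    """Toy scorer: fraction of question tokens that also occur in the passage."""
    q_tokens = set(question.lower().replace("?", "").split())
    t_tokens = set(text.lower().split())
    return len(q_tokens & t_tokens) / len(q_tokens) if q_tokens else 0.0


def rerank(question, passages, scorer=overlap_score):
    """Return new Passage objects sorted by descending scorer output."""
    scored = [Passage(p.id, p.text, scorer(question, p.text)) for p in passages]
    return sorted(scored, key=lambda p: p.score, reverse=True)


passages = [
    Passage(1, "Lightning strike at Seoul National University"),
    Passage(3, "Coffee is good for diet"),
    Passage(4, "Thomas Edison invented the light bulb in 1879"),
    Passage(5, "Thomas Edison worked with electricity"),
]
for p in rerank("When did Thomas Edison invent the light bulb?", passages):
    print(f"{p.score:.2f}  {p.text}")
```

A neural reranker such as MonoT5 replaces `overlap_score` with a model forward pass; the sort step stays the same.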

Example: Reranking a Document

from rankify.dataset.dataset import Document, Question, Answer, Context

from rankify.models.reranking import Reranking

# Sample document setup

question = Question("When did Thomas Edison invent the light bulb?")

answers = Answer(["1879"])

contexts = [
    Context(text="Lightning strike at Seoul National University", id=1),
    Context(text="Thomas Edison tried to invent a device for cars but failed", id=2),
    Context(text="Coffee is good for diet", id=3),
    Context(text="Thomas Edison invented the light bulb in 1879", id=4),
    Context(text="Thomas Edison worked with electricity", id=5),
]

document = Document(question=question, answers=answers, contexts=contexts)

# Initialize the reranker

reranker = Reranking(method="monot5", model_name="monot5-base-msmarco")

# Apply reranking

reranker.rank([document])

# Print reordered contexts

for context in document.reorder_contexts:
    print(f" - {context.text}")

Examples of Using Different Reranking Models

# UPR

model = Reranking(method='upr', model_name='t5-base')

# API-Based Rerankers

model = Reranking(method='apiranker', model_name='voyage', api_key='your-api-key')

model = Reranking(method='apiranker', model_name='jina', api_key='your-api-key')

model = Reranking(method='apiranker', model_name='mixedbread.ai', api_key='your-api-key')

# Blender Reranker

model = Reranking(method='blender_reranker', model_name='PairRM')

# ColBERT Reranker

model = Reranking(method='colbert_ranker', model_name='Colbert')

# EchoRank

model = Reranking(method='echorank', model_name='flan-t5-large')

# First Ranker

model = Reranking(method='first_ranker', model_name='base')

# FlashRank

model = Reranking(method='flashrank', model_name='ms-marco-TinyBERT-L-2-v2')

# InContext Reranker

model = Reranking(method='incontext_reranker', model_name='llamav3.1-8b')

# InRanker

model = Reranking(method='inranker', model_name='inranker-small')

# ListT5

model = Reranking(method='listt5', model_name='listt5-base')

# LiT5 Distill

model = Reranking(method='lit5distill', model_name='LiT5-Distill-base')

# LiT5 Score

model = Reranking(method='lit5score', model_name='LiT5-Distill-base')

# LLM Layerwise Ranker

model = Reranking(method='llm_layerwise_ranker', model_name='bge-multilingual-gemma2')

# LLM2Vec

model = Reranking(method='llm2vec', model_name='Meta-Llama-31-8B')

# MonoBERT

model = Reranking(method='monobert', model_name='monobert-large')

# MonoT5

model = Reranking(method='monot5', model_name='monot5-base-msmarco')

# RankGPT

model = Reranking(method='rankgpt', model_name='llamav3.1-8b')

# RankGPT API

model = Reranking(method='rankgpt-api', model_name='gpt-3.5', api_key="gpt-api-key")

model = Reranking(method='rankgpt-api', model_name='gpt-4', api_key="gpt-api-key")

model = Reranking(method='rankgpt-api', model_name='llamav3.1-8b', api_key="together-api-key")

model = Reranking(method='rankgpt-api', model_name='claude-3-5', api_key="claude-api-key")

# RankT5

model = Reranking(method='rankt5', model_name='rankt5-base')

# Sentence Transformer Reranker

model = Reranking(method='sentence_transformer_reranker', model_name='all-MiniLM-L6-v2')

model = Reranking(method='sentence_transformer_reranker', model_name='gtr-t5-base')

model = Reranking(method='sentence_transformer_reranker', model_name='sentence-t5-base')

model = Reranking(method='sentence_transformer_reranker', model_name='distilbert-multilingual-nli-stsb-quora-ranking')

model = Reranking(method='sentence_transformer_reranker', model_name='msmarco-bert-co-condensor')

# SPLADE

model = Reranking(method='splade', model_name='splade-cocondenser')

# Transformer Ranker

model = Reranking(method='transformer_ranker', model_name='mxbai-rerank-xsmall')

model = Reranking(method='transformer_ranker', model_name='bge-reranker-base')

model = Reranking(method='transformer_ranker', model_name='bce-reranker-base')

model = Reranking(method='transformer_ranker', model_name='jina-reranker-tiny')

model = Reranking(method='transformer_ranker', model_name='gte-multilingual-reranker-base')

model = Reranking(method='transformer_ranker', model_name='nli-deberta-v3-large')

model = Reranking(method='transformer_ranker', model_name='ms-marco-TinyBERT-L-6')

model = Reranking(method='transformer_ranker', model_name='msmarco-MiniLM-L12-en-de-v1')

# TwoLAR

model = Reranking(method='twolar', model_name='twolar-xl')

# Vicuna Reranker

model = Reranking(method='vicuna_reranker', model_name='rank_vicuna_7b_v1')

# Zephyr Reranker

model = Reranking(method='zephyr_reranker', model_name='rank_zephyr_7b_v1_full')

4️⃣ Using Generator Module

Rankify provides a Generator Module for retrieval-augmented generation (RAG): it feeds the retrieved documents to a generative model, which then produces the answer. The example below uses the in-context RALM method.
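Before the Rankify example, it may help to see what an in-context generator does with the contexts: it places the retrieved passages ahead of the question in a single prompt. The sketch below shows that assembly step only. The `build_prompt` helper, the dictionary-based contexts, and the prompt template are assumptions for illustration and are not taken from Rankify's `Generator` class.

```python
def build_prompt(question, contexts, top_k=2):
    """Join the top_k highest-scoring contexts and the question into one prompt string."""
    ranked = sorted(contexts, key=lambda c: c["score"], reverse=True)[:top_k]
    blocks = [
        f"[{i}] {c['title']}: {c['text']}"
        for i, c in enumerate(ranked, start=1)
    ]
    return "\n".join(blocks) + f"\n\nQuestion: {question}\nAnswer:"


contexts = [
    {"title": "Germany", "text": "Berlin is the capital of Germany.", "score": 0.5},
    {"title": "France", "text": "The capital of France is Paris.", "score": 0.9},
]
prompt = build_prompt("What is the capital of France?", contexts)
print(prompt)
```

The resulting string would be passed to the language model, which continues the text after `Answer:`. How many contexts fit depends on the model's context window, which is why `top_k` is a parameter.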

from rankify.dataset.dataset import Document, Question, Answer, Context

from rankify.generator.generator import Generator

# Define question and answer

question = Question("What is the capital of France?")

answers = Answer(["Paris"])

contexts = [
    Context(id=1, title="France", text="The capital of France is Paris.", score=0.9),
    Context(id=2, title="Germany", text="Berlin is the capital of Germany.", score=0.5)
]

# Construct document

doc = Document(question=question, answers=answers, contexts=contexts)

# Initialize Generator (e.g., Meta Llama)

generator = Generator(method="in-context-ralm", model_name='meta-llama/Llama-3.1-8B')

# Generate answer

generated_answers = generator.generate([doc])

print(generated_answers) # Output: ["Paris"]

5️⃣ Evaluating with Metrics

Rankify provides built-in evaluation metrics for retrieval, re-ranking, and retrieval-augmented generation (RAG). These metrics help assess the quality of retrieved documents, the effectiveness of ranking models, and the accuracy of generated answers.
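To make the metric names concrete, here is a minimal sketch of exact match (EM) for generated answers and top-k accuracy for retrieved contexts. It is written in plain Python and is independent of Rankify's `Metrics` class. The SQuAD-style normalization (lowercasing, dropping punctuation and English articles) is an assumption, and Rankify's own normalization may differ.

```python
import re
import string


def normalize(text):
    """Lowercase, strip punctuation and English articles, collapse whitespace."""
    text = text.lower()
    text = "".join(ch for ch in text if ch not in string.punctuation)
    text = re.sub(r"\b(a|an|the)\b", " ", text)
    return " ".join(text.split())


def exact_match(prediction, gold_answers):
    """True if the normalized prediction equals any normalized gold answer."""
    return any(normalize(prediction) == normalize(gold) for gold in gold_answers)


def top_k_accuracy(ranked_contexts, gold_answers, k):
    """True if any of the first k context strings contains a gold answer."""
    return any(
        normalize(gold) in normalize(context)
        for context in ranked_contexts[:k]
        for gold in gold_answers
    )


ranked_contexts = [
    "Berlin is the capital of Germany.",
    "The capital of France is Paris.",
]
print(exact_match("The Paris.", ["Paris"]))
print(top_k_accuracy(ranked_contexts, ["Paris"], k=1))
print(top_k_accuracy(ranked_contexts, ["Paris"], k=2))
```

Averaging these per-question booleans over a dataset gives the EM and top-k figures usually reported. Comparing top-k before and after reranking shows whether the reranker moved answer-bearing contexts toward the top.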

Evaluating Generated Answers

You can evaluate the quality of retrieval-augmented generation (RAG) results by comparing generated answers with ground-truth answers.

from rankify.metrics.metrics import Metrics

from rankify.dataset.dataset import Dataset

from rankify.generator.generator import Generator

# Load dataset

dataset = Dataset('bm25', 'nq-test', 100)

documents = dataset.download(force_download=False)

# Initialize Generator

generator = Generator(method="in-context-ralm", model_name='meta-llama/Llama-3.1-8B')

# Generate answers

generated_answers = generator.generate(documents)

# Evaluate generated answers

metrics = Metrics(documents)

print(metrics.calculate_generation_metrics(generated_answers))

Evaluating Retrieval Performance

# Calculate retrieval metrics before reranking

metrics = Metrics(documents)

before_ranking_metrics = metrics.calculate_retrieval_metrics(ks=[1, 5, 10, 20, 50, 100], use_reordered=False)

print(before_ranking_metrics)

Evaluating Reranked Results

# Calculate retrieval metrics after reranking

after_ranking_metrics = metrics.calculate_retrieval_metrics(ks=[1, 5, 10, 20, 50, 100], use_reordered=True)

print(after_ranking_metrics)

📜 Supported Models

1️⃣ Retrievers

- ✅ BM25

- ✅ DPR

- ✅ ColBERT

- ✅ ANCE

- ✅ BGE

- ✅ Contriever

- ✅ BPR

- ✅ HYDE

- 🕒 RepLlama

- 🕒 coCondenser

- 🕒 Spar

- 🕒 Dragon

- 🕒 Hybrid

2️⃣ Rerankers

- ✅ Cross-Encoders

- ✅ RankGPT

- ✅ RankGPT-API

- ✅ MonoT5

- ✅ MonoBERT

- ✅ RankT5

- ✅ ListT5

- ✅ LiT5Score

- ✅ LiT5Distill

- ✅ Vicuna Reranker

- ✅ Zephyr Reranker

- ✅ Sentence Transformer-based

- ✅ FlashRank Models

- ✅ API-Based Rerankers

- ✅ ColBERT Reranker

- ✅ LLM Layerwise Ranker

- ✅ Splade Reranker

- ✅ UPR Reranker

- ✅ Inranker Reranker

- ✅ Transformer Reranker

- ✅ FIRST Reranker

- ✅ Blender Reranker

- ✅ LLM2VEC Reranker

- ✅ ECHO Reranker

- ✅ Incontext Reranker

- 🕒 DynRank

- 🕒 ASRank

- 🕒 RankLlama

3️⃣ Generators

- ✅ Fusion-in-Decoder (FiD) with T5

- ✅ In-Context Learning RALM

✨ Features

- 🔥 Unified Framework: Combines retrieval, re-ranking, and retrieval-augmented generation (RAG) into a single modular toolkit.

- 📚 Rich Dataset Support: Includes 40+ benchmark datasets with pre-retrieved documents for seamless experimentation.

- 🧲 Diverse Retrieval Methods: Supports BM25, DPR, ANCE, BPR, ColBERT, BGE, and Contriever for flexible retrieval strategies.

- 🎯 Powerful Re-Ranking: Implements 24 advanced models with 41 sub-methods to optimize ranking performance.

- 🏗️ Prebuilt Indices: Provides Wikipedia and MS MARCO corpora, eliminating indexing overhead and speeding up retrieval.

- 🔮 Seamless RAG Integration: Works with GPT, LLAMA, T5, and Fusion-in-Decoder (FiD) models for retrieval-augmented generation.

- 🛠 Extensible & Modular: Easily integrates custom datasets, retrievers, ranking models, and RAG pipelines.

- 📊 Built-in Evaluation Suite: Includes retrieval, ranking, and RAG metrics for robust benchmarking.

- 📖 User-Friendly Documentation: Access detailed 📖 online docs, example notebooks, and tutorials for easy adoption.

🔍 Roadmap

Rankify is still under development, and this is our first release (v0.1.0). While it already supports a wide range of retrieval, re-ranking, and RAG techniques, we are actively enhancing its capabilities by adding more retrievers, rankers, datasets, and features.

🛠 Planned Improvements

Retrievers

✅ Supports: BM25, DPR, ANCE, BPR, ColBERT, BGE, Contriever

✨ ⏳ Coming Soon: Spar, MSS, MSS-DPR

✨ ⏳ Custom Index Loading for user-defined retrieval corpora

Re-Rankers

✅ 24 models & 41 sub-methods

✨ ⏳ Expanding with more ranking models

Datasets

✅ 40 benchmark datasets

✨ ⏳ Adding new datasets & custom dataset integration

Retrieval-Augmented Generation (RAG)

✅ Works with: GPT, LLAMA, T5

✨ ⏳ Expanding to more generative models

Evaluation & Usability

✅ Standard metrics: Top-K, EM, Recall

✨ ⏳ Adding advanced metrics: NDCG, MAP for retrievers
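For readers unfamiliar with the planned metrics, the sketch below shows one common way to compute NDCG@k and average precision (AP) from a ranked list of relevance labels; MAP is the mean of AP over all queries. This is illustrative only and says nothing about how Rankify will implement them. The function names are hypothetical, and AP is normalized here by the number of relevant items that appear in the ranked list.

```python
import math


def dcg_at_k(relevances, k):
    """Discounted cumulative gain over the first k relevance labels."""
    return sum(
        rel / math.log2(rank + 1)
        for rank, rel in enumerate(relevances[:k], start=1)
    )


def ndcg_at_k(relevances, k):
    """DCG divided by the DCG of the ideal (descending) ordering."""
    ideal = dcg_at_k(sorted(relevances, reverse=True), k)
    return dcg_at_k(relevances, k) / ideal if ideal > 0 else 0.0


def average_precision(relevances):
    """AP for binary labels: mean of precision@rank taken at each relevant rank."""
    hits, total = 0, 0.0
    for rank, rel in enumerate(relevances, start=1):
        if rel:
            hits += 1
            total += hits / rank
    return total / hits if hits else 0.0


# 1 = the context at that rank contains an answer, 0 = it does not.
ranking = [0, 1, 0, 1]
print(round(ndcg_at_k(ranking, 4), 3), round(average_precision(ranking), 3))
```

Unlike top-k accuracy, both metrics reward placing relevant contexts higher within the top k, which makes them more sensitive to the effect of reranking.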

Pipeline Integration

✨ ⏳ Introducing a pipeline module for end-to-end retrieval, ranking, and RAG workflows

📖 Documentation

For full API documentation, visit the Rankify Docs.

💡 Contributing

Follow these steps to get involved:

- Fork this repository to your GitHub account.

- Create a new branch for your feature or fix:

  git checkout -b feature/YourFeatureName

- Make your changes and commit them:

  git commit -m "Add YourFeatureName"

- Push the changes to your branch:

  git push origin feature/YourFeatureName

- Submit a Pull Request to propose your changes.

Thank you for helping make this project better!

🌐 Community Contributions

Chinese community resources available!

Special thanks to Xiumao for writing two exceptional Chinese blog posts about Rankify:

These articles were crafted with high-traffic optimization in mind and are widely recommended in Chinese academic and developer circles.

We updated the 中文版本 to reflect these blog contributions while keeping original content intact—thank you Xiumao for your continued support!

🔖 License

Rankify is licensed under the Apache-2.0 License - see the LICENSE file for details.

🙏 Acknowledgments

We would like to express our gratitude to the following libraries, which have greatly contributed to the development of Rankify:

- Rerankers – A powerful Python library for integrating various reranking methods. 🔗 GitHub Repository

- Pyserini – A toolkit for supporting BM25-based retrieval and integration with sparse/dense retrievers. 🔗 GitHub Repository

- FlashRAG – A modular framework for Retrieval-Augmented Generation (RAG) research. 🔗 GitHub Repository

🌟 Citation

Please cite our paper if it helps your research:

@article{abdallah2025rankify,

title={Rankify: A Comprehensive Python Toolkit for Retrieval, Re-Ranking, and Retrieval-Augmented Generation},

author={Abdallah, Abdelrahman and Mozafari, Jamshid and Piryani, Bhawna and Ali, Mohammed and Jatowt, Adam},

journal={arXiv preprint arXiv:2502.02464},

year={2025}

}

For Tasks:

Click tags to check more tools for each tasksFor Jobs:

Alternative AI tools for Rankify

Similar Open Source Tools

Rankify

Rankify is a Python toolkit designed for unified retrieval, re-ranking, and retrieval-augmented generation (RAG) research. It integrates 40 pre-retrieved benchmark datasets and supports 7 retrieval techniques, 24 state-of-the-art re-ranking models, and multiple RAG methods. Rankify provides a modular and extensible framework, enabling seamless experimentation and benchmarking across retrieval pipelines. It offers comprehensive documentation, open-source implementation, and pre-built evaluation tools, making it a powerful resource for researchers and practitioners in the field.

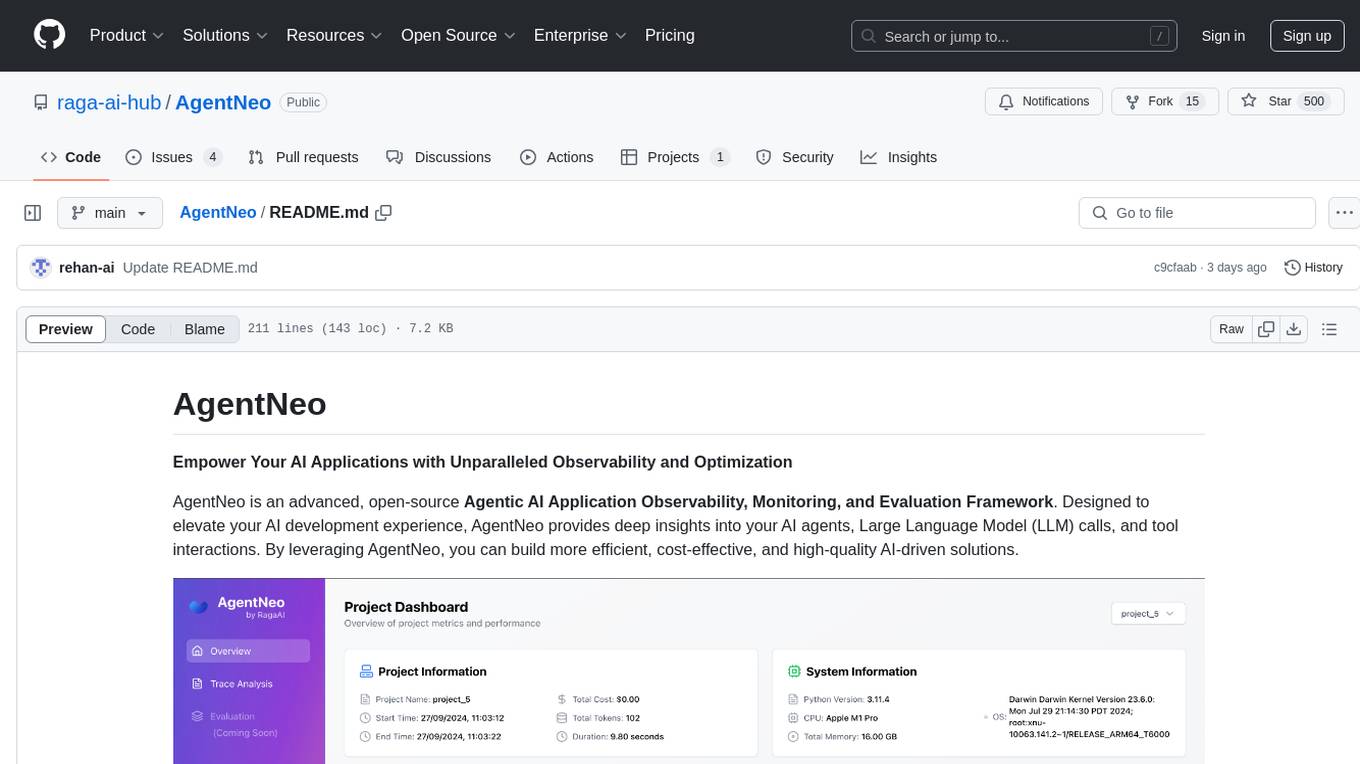

AgentNeo

AgentNeo is an advanced, open-source Agentic AI Application Observability, Monitoring, and Evaluation Framework designed to provide deep insights into AI agents, Large Language Model (LLM) calls, and tool interactions. It offers robust logging, visualization, and evaluation capabilities to help debug and optimize AI applications with ease. With features like tracing LLM calls, monitoring agents and tools, tracking interactions, detailed metrics collection, flexible data storage, simple instrumentation, interactive dashboard, project management, execution graph visualization, and evaluation tools, AgentNeo empowers users to build efficient, cost-effective, and high-quality AI-driven solutions.

AutoAgents

AutoAgents is a cutting-edge multi-agent framework built in Rust that enables the creation of intelligent, autonomous agents powered by Large Language Models (LLMs) and Ractor. Designed for performance, safety, and scalability. AutoAgents provides a robust foundation for building complex AI systems that can reason, act, and collaborate. With AutoAgents you can create Cloud Native Agents, Edge Native Agents and Hybrid Models as well. It is so extensible that other ML Models can be used to create complex pipelines using Actor Framework.

aegra

Aegra is a self-hosted AI agent backend platform that provides LangGraph power without vendor lock-in. Built with FastAPI + PostgreSQL, it offers complete control over agent orchestration for teams looking to escape vendor lock-in, meet data sovereignty requirements, enable custom deployments, and optimize costs. Aegra is Agent Protocol compliant and perfect for teams seeking a free, self-hosted alternative to LangGraph Platform with zero lock-in, full control, and compatibility with existing LangGraph Client SDK.

claude-flow

Claude-Flow is a workflow automation tool designed to streamline and optimize business processes. It provides a user-friendly interface for creating and managing workflows, allowing users to automate repetitive tasks and improve efficiency. With features such as drag-and-drop workflow builder, customizable templates, and integration with popular business tools, Claude-Flow empowers users to automate their workflows without the need for extensive coding knowledge. Whether you are a small business owner looking to streamline your operations or a project manager seeking to automate task assignments, Claude-Flow offers a flexible and scalable solution to meet your workflow automation needs.

shimmy

Shimmy is a 5.1MB single-binary local inference server providing OpenAI-compatible endpoints for GGUF models. It offers fast, reliable AI inference with sub-second responses, zero configuration, and automatic port management. Perfect for developers seeking privacy, cost-effectiveness, speed, and easy integration with popular tools like VSCode and Cursor. Shimmy is designed to be invisible infrastructure that simplifies local AI development and deployment.

claude-007-agents

Claude Code Agents is an open-source AI agent system designed to enhance development workflows by providing specialized AI agents for orchestration, resilience engineering, and organizational memory. These agents offer specialized expertise across technologies, AI system with organizational memory, and an agent orchestration system. The system includes features such as engineering excellence by design, advanced orchestration system, Task Master integration, live MCP integrations, professional-grade workflows, and organizational intelligence. It is suitable for solo developers, small teams, enterprise teams, and open-source projects. The system requires a one-time bootstrap setup for each project to analyze the tech stack, select optimal agents, create configuration files, set up Task Master integration, and validate system readiness.

anylabeling

AnyLabeling is a tool for effortless data labeling with AI support from YOLO and Segment Anything. It combines features from LabelImg and Labelme with an improved UI and auto-labeling capabilities. Users can annotate images with polygons, rectangles, circles, lines, and points, as well as perform auto-labeling using YOLOv5 and Segment Anything. The tool also supports text detection, recognition, and Key Information Extraction (KIE) labeling, with multiple language options available such as English, Vietnamese, and Chinese.

evi-run

evi-run is a powerful, production-ready multi-agent AI system built on Python using the OpenAI Agents SDK. It offers instant deployment, ultimate flexibility, built-in analytics, Telegram integration, and scalable architecture. The system features memory management, knowledge integration, task scheduling, multi-agent orchestration, custom agent creation, deep research, web intelligence, document processing, image generation, DEX analytics, and Solana token swap. It supports flexible usage modes like private, free, and pay mode, with upcoming features including NSFW mode, task scheduler, and automatic limit orders. The technology stack includes Python 3.11, OpenAI Agents SDK, Telegram Bot API, PostgreSQL, Redis, and Docker & Docker Compose for deployment.

finite-monkey-engine

FiniteMonkey is an advanced vulnerability mining engine powered purely by GPT, requiring no prior knowledge base or fine-tuning. Its effectiveness significantly surpasses most current related research approaches. The tool is task-driven, prompt-driven, and focuses on prompt design, leveraging 'deception' and hallucination as key mechanics. It has helped identify vulnerabilities worth over $60,000 in bounties. The tool requires PostgreSQL database, OpenAI API access, and Python environment for setup. It supports various languages like Solidity, Rust, Python, Move, Cairo, Tact, Func, Java, and Fake Solidity for scanning. FiniteMonkey is best suited for logic vulnerability mining in real projects, not recommended for academic vulnerability testing. GPT-4-turbo is recommended for optimal results with an average scan time of 2-3 hours for medium projects. The tool provides detailed scanning results guide and implementation tips for users.

hugging-llm

HuggingLLM is a project that aims to introduce ChatGPT to a wider audience, particularly those interested in using the technology to create new products or applications. The project focuses on providing practical guidance on how to use ChatGPT-related APIs to create new features and applications. It also includes detailed background information and system design introductions for relevant tasks, as well as example code and implementation processes. The project is designed for individuals with some programming experience who are interested in using ChatGPT for practical applications, and it encourages users to experiment and create their own applications and demos.

mcp

Model Context Protocol (MCP) is an open protocol that standardizes how applications provide context to large language models (LLMs). It allows AI applications to connect with various data sources and tools in a consistent manner, enhancing their capabilities and flexibility. This repository contains core libraries, test frameworks, engineering systems, pipelines, and tooling for Microsoft MCP Server contributors to unify engineering investments and reduce duplication and divergence. For more details, visit the official MCP website.

aigne-doc-smith

AIGNE DocSmith is a powerful AI-driven documentation generation tool that automates the creation of detailed, structured, and multi-language documentation directly from source code. It intelligently analyzes codebase to generate a comprehensive document structure, populates content with high-quality AI-powered generation, supports seamless translation into 12+ languages, integrates with AIGNE Hub for large language models, offers Discuss Kit publishing, automatically updates documentation with source code changes, and allows for individual document optimization.

RSTGameTranslation

RSTGameTranslation is a tool designed for translating game text into multiple languages efficiently. It provides a user-friendly interface for game developers to easily manage and localize their game content. With RSTGameTranslation, developers can streamline the translation process, ensuring consistency and accuracy across different language versions of their games. The tool supports various file formats commonly used in game development, making it versatile and adaptable to different project requirements. Whether you are working on a small indie game or a large-scale production, RSTGameTranslation can help you reach a global audience by making localization a seamless and hassle-free experience.

ChordMiniApp

ChordMini is an advanced music analysis platform with AI-powered chord recognition, beat detection, and synchronized lyrics. It features a clean and intuitive interface for YouTube search, chord progression visualization, interactive guitar diagrams with accurate fingering patterns, lead sheet with AI assistant for synchronized lyrics transcription, and various add-on features like Roman Numeral Analysis, Key Modulation Signals, Simplified Chord Notation, and Enhanced Chord Correction. The tool requires Node.js, Python 3.9+, and a Firebase account for setup. It offers a hybrid backend architecture for local development and production deployments, with features like beat detection, chord recognition, lyrics processing, rate limiting, and audio processing supporting MP3, WAV, and FLAC formats. ChordMini provides a comprehensive music analysis workflow from user input to visualization, including dual input support, environment-aware processing, intelligent caching, advanced ML pipeline, and rich visualization options.

Embodied-AI-Guide

Embodied-AI-Guide is a comprehensive guide for beginners to understand Embodied AI, focusing on the path of entry and useful information in the field. It covers topics such as Reinforcement Learning, Imitation Learning, Large Language Model for Robotics, 3D Vision, Control, Benchmarks, and provides resources for building cognitive understanding. The repository aims to help newcomers quickly establish knowledge in the field of Embodied AI.

For similar tasks

Rankify

Rankify is a Python toolkit designed for unified retrieval, re-ranking, and retrieval-augmented generation (RAG) research. It integrates 40 pre-retrieved benchmark datasets and supports 7 retrieval techniques, 24 state-of-the-art re-ranking models, and multiple RAG methods. Rankify provides a modular and extensible framework, enabling seamless experimentation and benchmarking across retrieval pipelines. It offers comprehensive documentation, open-source implementation, and pre-built evaluation tools, making it a powerful resource for researchers and practitioners in the field.

beyondllm

Beyond LLM offers an all-in-one toolkit for experimentation, evaluation, and deployment of Retrieval-Augmented Generation (RAG) systems. It simplifies the process with automated integration, customizable evaluation metrics, and support for various Large Language Models (LLMs) tailored to specific needs. The aim is to reduce LLM hallucination risks and enhance reliability.

llm-rag-workshop

The LLM RAG Workshop repository provides a workshop on using Large Language Models (LLMs) and Retrieval-Augmented Generation (RAG) to generate and understand text in a human-like manner. It includes instructions on setting up the environment, indexing Zoomcamp FAQ documents, creating a Q&A system, and using OpenAI for generation based on retrieved information. The repository focuses on enhancing language model responses with retrieved information from external sources, such as document databases or search engines, to improve factual accuracy and relevance of generated text.

buildel

Buildel is an AI automation platform that empowers users to create versatile workflows without writing code. It supports multiple providers and interfaces, offers pre-built use cases, and allows users to bring their own API keys. Ideal for AI-powered document retrieval, conversational interfaces, and data integration. Users can get started at app.buildel.ai or run Buildel locally with Node.js, Elixir/Erlang, Docker, Git, and JQ installed. Join the community on Discord for support and discussions.

chatgpt-webui

ChatGPT WebUI is a user-friendly web graphical interface for various LLMs like ChatGPT, providing simplified features such as core ChatGPT conversation and document retrieval dialogues. It has been optimized for better RAG retrieval accuracy and supports various search engines. Users can deploy local language models easily and interact with different LLMs like GPT-4, Azure OpenAI, and more. The tool offers powerful functionalities like GPT4 API configuration, system prompt setup for role-playing, and basic conversation features. It also provides a history of conversations, customization options, and a seamless user experience with themes, dark mode, and PWA installation support.

nttu-chatbot

NTTU Chatbot is a student support chatbot developed using LLM + Document Retriever (RAG) technology in Vietnamese. It provides assistance to students by answering their queries and retrieving relevant documents. The chatbot aims to enhance the student support system by offering quick and accurate responses to user inquiries. It utilizes advanced language models and document retrieval techniques to deliver efficient and effective support to users.

gfm-rag

The GFM-RAG is a graph foundation model-powered pipeline that combines graph neural networks to reason over knowledge graphs and retrieve relevant documents for question answering. It features a knowledge graph index, efficiency in multi-hop reasoning, generalizability to unseen datasets, transferability for fine-tuning, compatibility with agent-based frameworks, and interpretability of reasoning paths. The tool can be used for conducting retrieval and question answering tasks using pre-trained models or fine-tuning on custom datasets.

For similar jobs

weave

Weave is a toolkit for developing Generative AI applications, built by Weights & Biases. With Weave, you can log and debug language model inputs, outputs, and traces; build rigorous, apples-to-apples evaluations for language model use cases; and organize all the information generated across the LLM workflow, from experimentation to evaluations to production. Weave aims to bring rigor, best-practices, and composability to the inherently experimental process of developing Generative AI software, without introducing cognitive overhead.

LLMStack

LLMStack is a no-code platform for building generative AI agents, workflows, and chatbots. It allows users to connect their own data, internal tools, and GPT-powered models without any coding experience. LLMStack can be deployed to the cloud or on-premise and can be accessed via HTTP API or triggered from Slack or Discord.

VisionCraft

The VisionCraft API is a free API for using over 100 different AI models. From images to sound.

kaito

Kaito is an operator that automates the AI/ML inference model deployment in a Kubernetes cluster. It manages large model files using container images, avoids tuning deployment parameters to fit GPU hardware by providing preset configurations, auto-provisions GPU nodes based on model requirements, and hosts large model images in the public Microsoft Container Registry (MCR) if the license allows. Using Kaito, the workflow of onboarding large AI inference models in Kubernetes is largely simplified.

PyRIT

PyRIT is an open access automation framework designed to empower security professionals and ML engineers to red team foundation models and their applications. It automates AI Red Teaming tasks to allow operators to focus on more complicated and time-consuming tasks and can also identify security harms such as misuse (e.g., malware generation, jailbreaking), and privacy harms (e.g., identity theft). The goal is to allow researchers to have a baseline of how well their model and entire inference pipeline is doing against different harm categories and to be able to compare that baseline to future iterations of their model. This allows them to have empirical data on how well their model is doing today, and detect any degradation of performance based on future improvements.

tabby

Tabby is a self-hosted AI coding assistant, offering an open-source and on-premises alternative to GitHub Copilot. It boasts several key features: * Self-contained, with no need for a DBMS or cloud service. * OpenAPI interface, easy to integrate with existing infrastructure (e.g Cloud IDE). * Supports consumer-grade GPUs.

spear

SPEAR (Simulator for Photorealistic Embodied AI Research) is a powerful tool for training embodied agents. It features 300 unique virtual indoor environments with 2,566 unique rooms and 17,234 unique objects that can be manipulated individually. Each environment is designed by a professional artist and features detailed geometry, photorealistic materials, and a unique floor plan and object layout. SPEAR is implemented as Unreal Engine assets and provides an OpenAI Gym interface for interacting with the environments via Python.

Magick

Magick is a groundbreaking visual AIDE (Artificial Intelligence Development Environment) for no-code data pipelines and multimodal agents. Magick can connect to other services and comes with nodes and templates well-suited for intelligent agents, chatbots, complex reasoning systems and realistic characters.